Poster Presentation

CLABSI

Examining CLABSI rates by central-line type

- Lauren DiBiase, Shelley Summerlin-Long, Lisa Stancill, Emily Sickbert-Bennett Vavalle, Lisa Teal, David Weber

- Published online by Cambridge University Press: 29 September 2023, pp. s48-s49

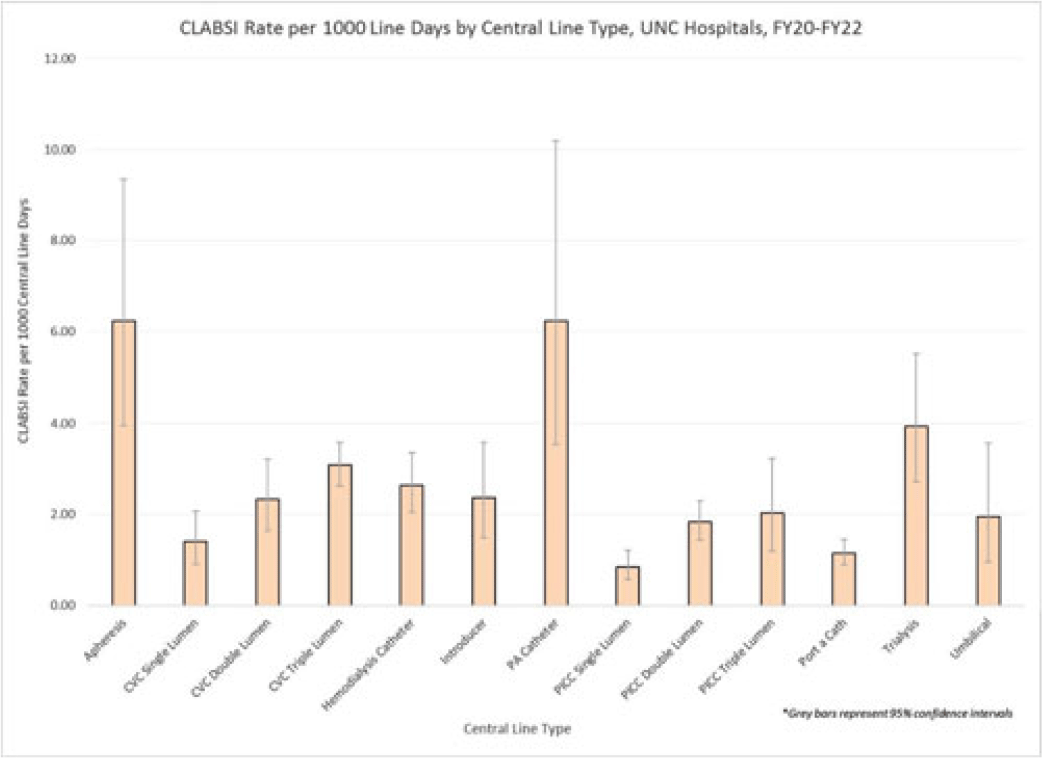

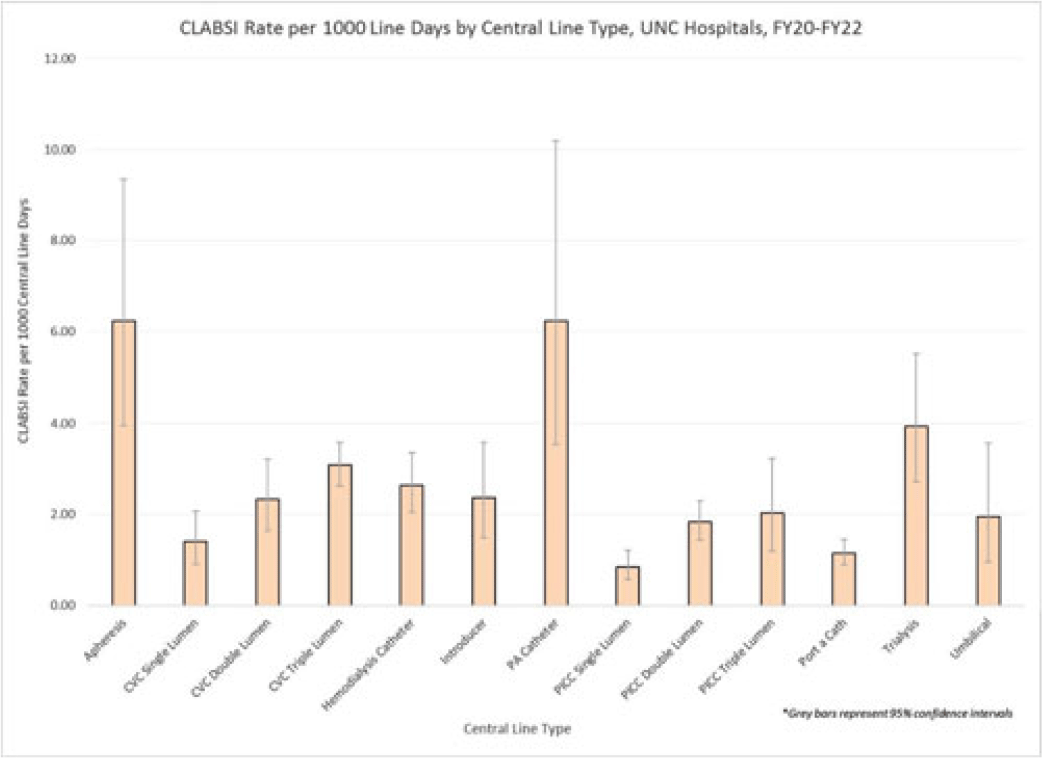

Background: Central-line–associated bloodstream infections (CLABSIs) are linked to increased morbidity and mortality, longer hospital stays, and significantly higher healthcare costs. Infection prevention guidelines recommend line placement in specific insertion locations over others because of the relative risk of infection. The purpose of this study was to assess CLABSI rates by line type to determine whether some central lines had a lower risk of infection and should be recommended over others given similar clinical indications. Methods: At UNC Hospitals, data were obtained on central lines across a 3-year period (FY20–FY22) from the EMR (Epic Systems). Central lines were categorized as apheresis catheters, CVC lines (single, double, or triple lumen), hemodialysis catheters, introducer lines, pulmonary artery (PA) catheters, PICC lines (single, double, or triple lumen), port-a-catheters, trialysis catheters, or umbilical lines. The line type(s) associated with each CLABSI during the same period were recorded, and CLABSI rates by line type per 1,000 central-line days were calculated using SAS software. If an infection had >1 central-line device type associated, the infection was counted twice when calculating the CLABSI rate by line type. We calculated 95% CIs for each point estimate to assess for statistically significant differences in rates by line type. Results: During FY20–FY22, there were 264,425 central-line days and 458 CLABSIs, for an overall CLABSI rate of 1.73 CLABSIs per 1,000 central-line days. Also, 16% of patients with a CLABSI had >1 type of central line in place. Stratified data on CLABSI rates by each central-line type are presented in the Figure. CLABSI rates were highest in patients with apheresis lines (6.22; 95% CI, 3.96–9.35) and PA catheters (6.22; 95% CI, 3.54–10.20), and the lowest CLABSI rates occurred in patients with PICC lines (1.44; 95% CI, 1.19–1.73) and port-a-catheters (1.14; 95% CI, 0.89–1.45). 
For both CVC and PICC lines, as the number of lumens increased from single to triple, CLABSI rates increased, from 0.91 to 2.63 and from 0.57 to 1.20, respectively. Conclusions: At our hospital, certain types of central lines were associated with significantly higher CLABSI rates than others. Additionally, a higher number of lumens (triple vs single) in CVC and PICC lines was associated with significantly higher CLABSI rates. These findings reinforce the importance of considering central-line type and number of lumens to minimize risk of CLABSI while ensuring that patients have the best line type based on their clinical needs.
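
The rate arithmetic in this abstract can be illustrated with a minimal sketch. It reproduces the overall rate from the reported counts and attaches an approximate 95% CI using Byar's approximation for a Poisson count. The abstract does not say which interval method was used in SAS, so the CI method, the function names, and the Poisson assumption are illustrative choices rather than the authors' procedure.

```python
import math


def rate_per_1000(events: int, line_days: int) -> float:
    """Return events per 1,000 central-line days."""
    return events / line_days * 1000


def byar_ci_per_1000(events: int, line_days: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% CI for a Poisson rate using Byar's approximation.

    Assumption: event counts are treated as Poisson; the abstract does not
    state which interval method was used.
    """
    if events == 0:
        lower_count = 0.0
    else:
        lower_count = events * (1 - 1 / (9 * events) - z / (3 * math.sqrt(events))) ** 3
    shifted = events + 1
    upper_count = shifted * (1 - 1 / (9 * shifted) + z / (3 * math.sqrt(shifted))) ** 3
    return lower_count / line_days * 1000, upper_count / line_days * 1000


# Overall counts reported in the Results: 458 CLABSIs over 264,425 line days.
overall_rate = rate_per_1000(458, 264_425)
overall_lower, overall_upper = byar_ci_per_1000(458, 264_425)
print(f"{overall_rate:.2f} per 1,000 line days "
      f"(approx. 95% CI, {overall_lower:.2f}-{overall_upper:.2f})")
```

The point estimate rounds to the reported 1.73 per 1,000 central-line days. The same two functions could be applied to each line type if the per-type counts and line days shown in the Figure were available.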

Disclosures: None

Bloodstream infection burden among cancer clinic patients with PICC Lines: A prospective, observational study

- Jessica Bethlahmy, Hiroki Saito, Bardia Bahadori, Thomas Tjoa, Shereen Nourollahi, Mohamad Alsharif, Justin Chang, Linda Armendariz, Vincent Torres, Sandra Masson, Edward Nelson, Richard Van Etten, Syma Rashid, Raheeb Saavedra, Raveena D. Singh, Shruti Gohil

- Published online by Cambridge University Press: 29 September 2023, p. s49

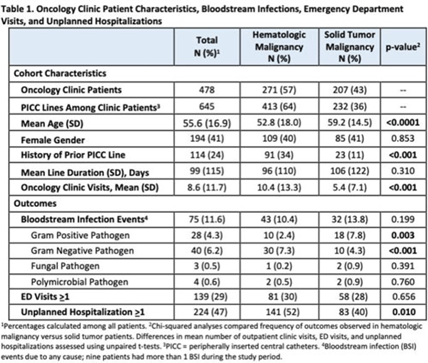

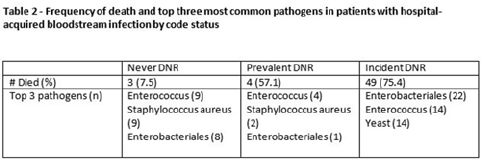

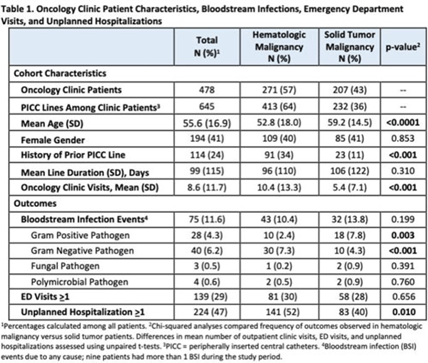

Background: Oncology patients are at high risk for bloodstream infection (BSI) due to immunosuppression and frequent use of central venous catheters. Surveillance in this population is largely relegated to inpatient settings, and limited data are available describing community burden. We evaluated rates of BSI, clinic or emergency department (ED) visits, and hospitalizations in a large cohort of oncology outpatients with peripherally inserted central catheters (PICCs). Methods: In this prospective, observational study, we followed a convenience sample of adults (age >18 years) with PICCs at a large academic outpatient oncology clinic for 35 months between July 2015 and November 2018. We assessed demographics, malignancy type, PICC insertion and removal dates, history of prior PICC, and line duration. Outcomes included BSI events (defined as >1 positive blood cultures or >2 positive blood cultures if coagulase-negative Staphylococcus), ED visits (without hospitalization), and unplanned hospitalizations (excluding scheduled chemotherapy hospitalizations). We used χ2 analyses to compare the frequency of categorical outcomes, and we used unpaired t tests to assess differences in means of continuous variables in hematologic versus solid-tumor malignancy patients. We used generalized linear mixed-effects models to assess differences in BSI (clustered by patient) separately for gram-positive and gram-negative BSI outcomes. Results: Among 478 patients with 658 unique PICC lines and 64,190 line days, 271 patients (413 lines) had hematologic malignancy and 207 patients (232 lines) had solid-tumor malignancy. Cohort characteristics and outcomes stratified by malignancy type are shown in Table 1. Compared to those with hematologic malignancy, solid-tumor patients were older, had 47% fewer clinic visits, and had 32% lower frequency of prior PICC lines. Overall, there were 75 BSI events (12%; 1.2 per 1,000 catheter days). 
We detected no significant difference in overall BSI rates when comparing solid-tumor versus hematologic malignancies (P = .20). However, BSIs with a gram-positive pathogen were 69% higher in patients with solid tumors, and gram-negative BSIs were 41% higher in patients with hematologic malignancy. Solid-tumor malignancy was associated with 4.5-fold higher odds of developing BSI with a gram-positive pathogen (OR, 4.48; 95% CI, 1.60–12.60; P = .005) compared to hematologic malignancy, after adjusting for age, sex, history of prior PICC, and line duration. Differences in gram-negative BSI were not significant on multivariate analysis. Conclusions: The burden of all-cause BSIs in cancer clinic adults with PICC lines was 12%, or 1.2 per 1,000 catheter days, as high as nationally reported inpatient BSI rates. Higher risk of gram-positive BSIs in solid-tumor patients suggests the need for targeted infection prevention activities in this population, such as improvements in central-line monitoring, outpatient care, and maintenance of lines and/or dressings, as well as chlorhexidine bathing to reduce skin bioburden.
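
As a reading aid for the adjusted odds ratio, the sketch below back-calculates a standard error and an approximate two-sided P value from the reported interval. It assumes a Wald-type interval that is symmetric on the log-odds scale, which the abstract does not confirm. The function name and the 1.96 critical value are illustrative.

```python
import math


def wald_from_ci(odds_ratio: float, lower: float, upper: float,
                 z_crit: float = 1.96) -> tuple[float, float, float]:
    """Back-calculate SE(log OR), the z statistic, and a two-sided P value.

    Assumption: the CI is a Wald interval, exp(log(OR) +/- z_crit * SE).
    """
    se = (math.log(upper) - math.log(lower)) / (2 * z_crit)
    z = math.log(odds_ratio) / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided standard normal tail
    return se, z, p


# Reported estimate for gram-positive BSI: OR, 4.48; 95% CI, 1.60-12.60.
se_log_or, z_stat, p_approx = wald_from_ci(4.48, 1.60, 12.60)

# On the log scale the point estimate should sit near the interval midpoint.
geometric_midpoint = math.sqrt(1.60 * 12.60)
print(f"SE(log OR) = {se_log_or:.3f}, z = {z_stat:.2f}, approx. P = {p_approx:.3f}")
```

The back-calculated P value is of the same order as the reported P = .005. Small differences are expected from rounding of the published interval and from the model-based test the authors actually used.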

Disclosures: None

End-of-life care and hospital-acquired bloodstream infection

- Melanie Zarnoski, Patrick Burke, Steven Gordon, Joanne Sitaras, Thomas Fraser

- Published online by Cambridge University Press: 29 September 2023, p. s50

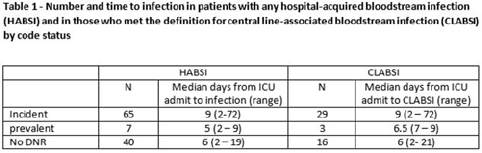

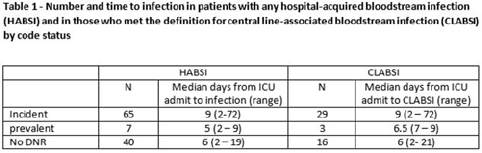

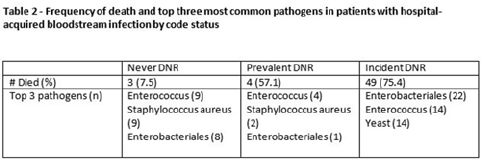

Background: All critically ill patients are at risk for hospital-acquired bloodstream infection (HABSI). At any time, however, there is heterogeneity among patients in the ICU; some patients have the added complexity of end-of-life discussions. We sought to better understand the patients in our medical intensive care unit (MICU) with HABSIs that do and do not meet the NHSN definition for a central-line–associated bloodstream infection (CLABSI) event by evaluating for the presence of a do-not-resuscitate (DNR) order. Methods: The study was conducted at our 66-bed MICU at the Cleveland Clinic Main Campus between January 2021 and September 2022. Surveillance for HABSI, including determination of CLABSI, was performed prospectively according to the NHSN definition. The electronic health record was queried for each patient with a HABSI for the presence of a DNR order. DNR orders were categorized as follows: prevalent (DNR orders present at the time of admission to the MICU), incident (orders entered after admission to the MICU), or no DNR (for patients without an order at any time during their MICU stay). For incident orders, time from order to HABSI was recorded. Time to event was calculated as days from ICU admission to HABSI. Results: During the observation period there were 36,477 MICU patient days and 4,815 admissions. There were 112 HABSIs, of which 48 (43%) were CLABSIs. Overall, 65 patients were categorized as incident DNR, 7 were categorized as prevalent DNR, and 40 were categorized as no DNR. For patients with an incident DNR order, 50 HABSIs occurred on the date of or before the order and 15 occurred after the order. In patients in whom HABSI occurred after the incident DNR order, the median number of days between DNR order and HABSI was 11 days (range, 1–69). Discussion: In our MICU, >50% of HABSIs and 60% of CLABSIs occurred in patients with a DNR order incident to their MICU stay. 
Interventions to prevent hospital-acquired bloodstream infection and the analysis of the events are inextricably linked to issues of end-of-life care for critically ill patients. Further exploration of patient characteristics easily obtainable from the EHR, such as DNR orders, is necessary to inform best practices for prevention and risk adjustment of bloodstream infection rates.
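
The DNR categories described in the Methods can be sketched as a small classification routine. This is a minimal illustration that assumes date-level granularity and treats an order dated on or before MICU admission as prevalent. The function names and example dates are hypothetical, not taken from the study's EHR query.

```python
from datetime import date
from typing import Optional


def categorize_dnr(micu_admission: date, dnr_order: Optional[date]) -> str:
    """Classify a patient's DNR status relative to MICU admission.

    Assumption: dnr_order is None when no order existed at any time during
    the MICU stay; orders on or before the admission date count as prevalent.
    """
    if dnr_order is None:
        return "no DNR"
    if dnr_order <= micu_admission:
        return "prevalent"
    return "incident"


def days_from_order_to_habsi(dnr_order: date, habsi: date) -> int:
    """Days from DNR order to HABSI; zero or negative means on or before the order."""
    return (habsi - dnr_order).days


# Hypothetical patient: admitted 1 March, DNR entered 5 March, HABSI on 16 March.
admission = date(2021, 3, 1)
order = date(2021, 3, 5)
infection = date(2021, 3, 16)
print(categorize_dnr(admission, order), days_from_order_to_habsi(order, infection))
```

A zero or negative interval corresponds to the HABSIs the abstract describes as occurring on the date of or before the order.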

Disclosures: None

Novel strategies to reduce central-line–associated blood stream infection (CLABSI) events in the neonatal intensive care unit

- Ingrid Camelo, Srilatha Neshangi, Amy Thompson

- Published online by Cambridge University Press: 29 September 2023, p. s50

Background: We describe the components of an improved and easy-to-implement strategy to reduce CLABSI events in the NICU, implemented during July–September 2021 in a tertiary-care healthcare center. These strategies were added to an existing institutional protocol created following CDC guidelines. Methods: Before implementation of the new strategies, CDC insertion-related prevention measures [ie, hand hygiene, use of personal protective equipment (PPE), catheter size selection, and standard chlorhexidine gluconate (CHG) antisepsis] and maintenance-related measures (Curos disinfecting caps and scrubbing the hub) were part of an existing protocol at our institution. We introduced the following key elements along with the previous ones: decreased duration of umbilical vein catheter (UVC) utilization from 14 days to 5–7 days, a change of dressing materials from BIOPATCH to 3M Tegaderm CHG chlorhexidine gluconate IV securement transparent dressing, enhanced compliance with an existing artificial nail policy, and restricted blood draws from central lines. Results: After optimization of the previous protocol through these additional strategies, we achieved a significant reduction in NICU CLABSIs, from 12 events between July 2020 and June 2021 to only 3 events between July 2021 and June 2022. Conclusions: CLABSI prevention bundle protocols should be revised frequently so that improvement opportunities can be added to reduce infection rates. The addition of simple and easy-to-implement key interventions to the existing CLABSI bundle had an important impact on the CLABSI rate at our institution.

Disclosures: None

Estimating racial differences in risk for CLABSI in a large urban healthcare system

- Giancarlo Licitra, Scott Fridkin, Zanthia Wiley, Lindsey Gottlieb, William Dube, Vishnu Ravi Kumar, Rachel Patzer, Radhika Prakash Asrani

- Published online by Cambridge University Press: 29 September 2023, pp. s50-s51

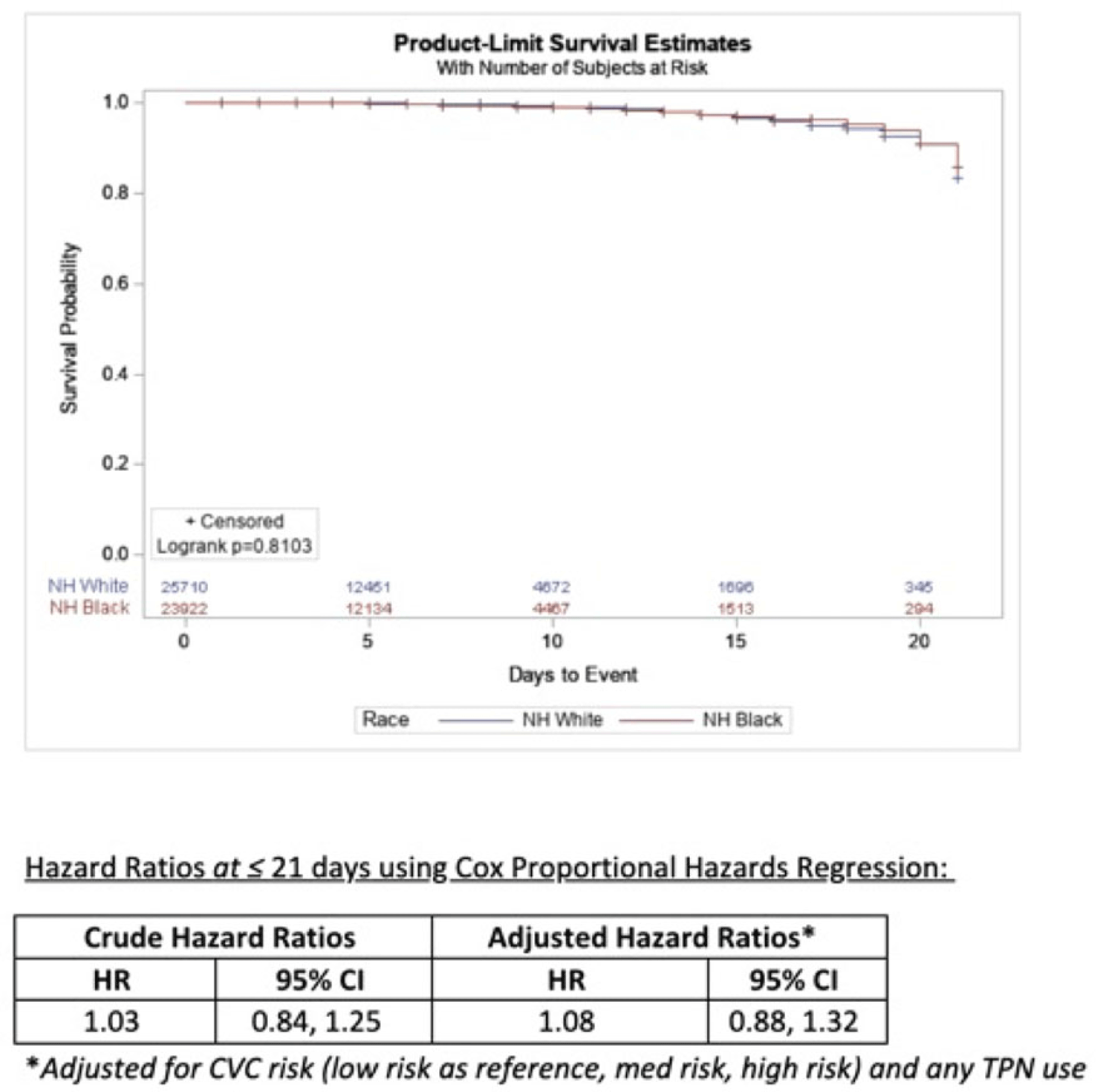

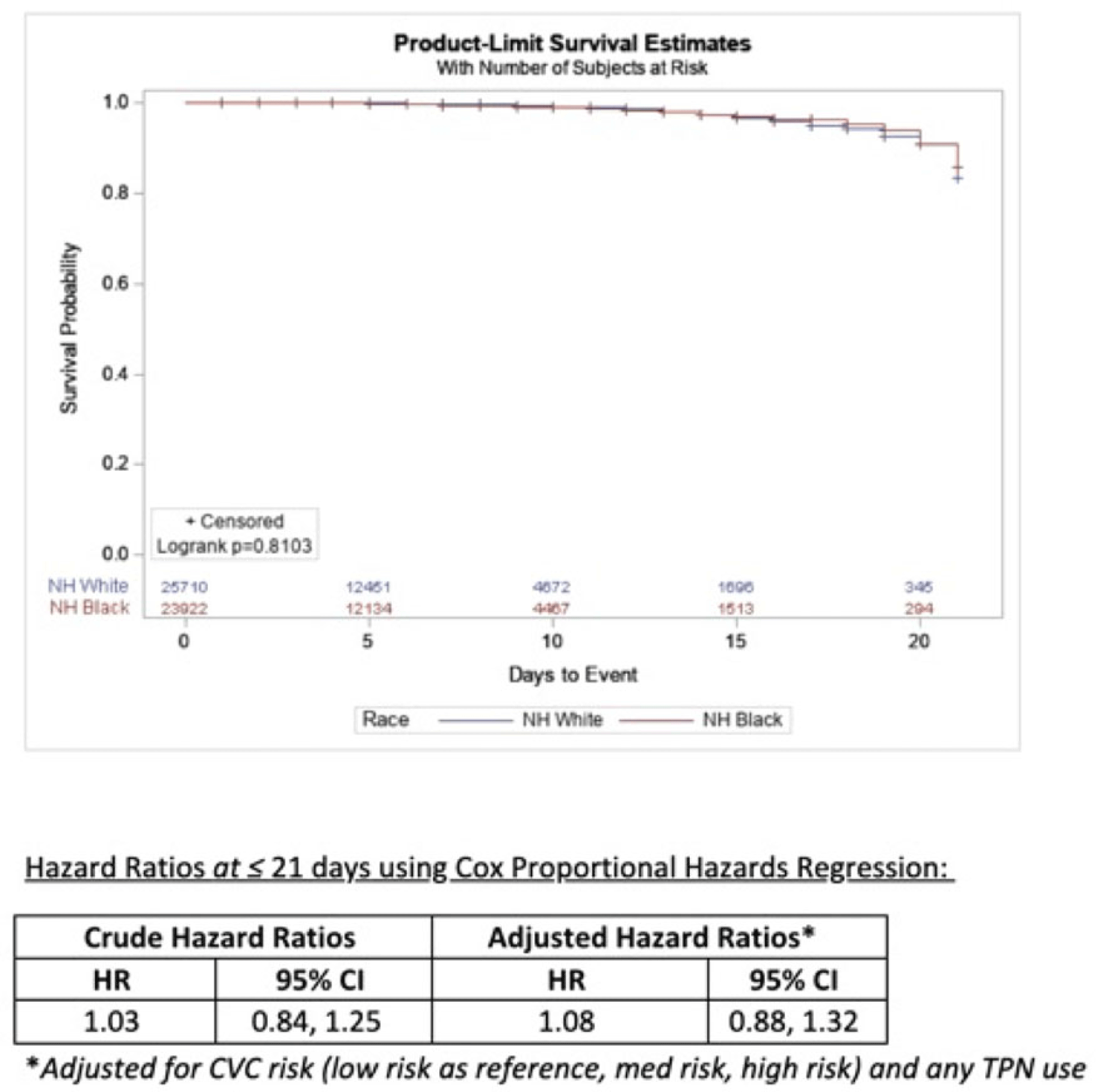

Background: Socioeconomic barriers or divergent implementation of prevention measures may impact risk of healthcare-associated infections by racial groups. We utilized a previously studied cohort of patients to quantify disparities in central-line–associated bloodstream infection (CLABSI) risk by race, accounting for inherent differences in risk related to device utilization. Methods: In a retrospective cohort of adult patients at 4 hospitals (range, 110–733 beds) from 2012 to 2017, we linked central-line data to patient encounter data: race, age, comorbidities, total parenteral nutrition (TPN), chemotherapy, and CLABSI. Analysis was limited to patients with >2 central-line days and <3 concurrent central lines. Patient exposures were calculated for each central-line episode (defined by insertion and removal dates); analysis of central-line episode-specific risk of CLABSI among Black versus White patients adjusted for clinical factors, duration of central-line episode, and central-line risk category (ie, low: single port, dialysis or PICC; medium: single temporary or nontunneled; or high: any concurrent central lines) in Cox proportional hazards regression of time to CLABSI. Results: In total, 526 CLABSIs occurred a median of 14 days after insertion among 57,642 central-line episodes in 32,925 patients. CLABSIs occurred in similar frequency across racial groups: 217 (1.7%) among Black patients, 256 (1.6%) among White patients, and 11 (1.6%) among Hispanic patients (also 42 among unknown or other race). Duration of central-line episode was similar between racial groups (median, 5 days). Black patients were less likely to have medium-risk central lines (34%) compared to White patients (RR, 0.82; 95% CI, 0.79–0.84), but they had a similar frequency of high-risk central lines (21%; RR, 1.0; 95% CI, 1.0–1.1). 
Compared with low-risk central lines, risk of CLABSI was increased among medium-risk central lines (RR, 1.3; 95% CI, 1.0–1.7) and high-risk central lines (RR, 2.2; 95% CI, 1.8–2.7). CLABSIs were more likely in TPN central lines (RR, 2.3; 95% CI, 1.9–2.7) than others, but they were not more likely among Black patients than White patients (RR, 0.9; 95% CI, 0.1–1.1). In survival analysis, there were 24,700 central-line episodes among Black patients compared to 26,648 episodes among White patients; adjusting for central-line risk and TPN, the risk of CLABSI was similar during the first 21 days of central-line use (adjusted hazard ratio, 1.08; 95% CI, 0.88–1.32) (Fig. 1). Conclusions: After accounting for central-line configuration, Black patients did not have a higher risk of CLABSI within 21 central-line days. Further evaluation is warranted to assess racial disparities in risks of other healthcare-associated infections and to determine whether a lack of CLABSI-specific racial disparities can be replicated in other regions and healthcare systems.

Disclosures: None

COVID-19

Multidrug-resistant ventilator-associated pneumonia (VAP) before and during the COVID-19 pandemic among hospitalized patients in a tertiary-care private hospital

- Alec Ann Alissa Aligui, Cybele Lara Abad

- Published online by Cambridge University Press: 29 September 2023, pp. s51-s52

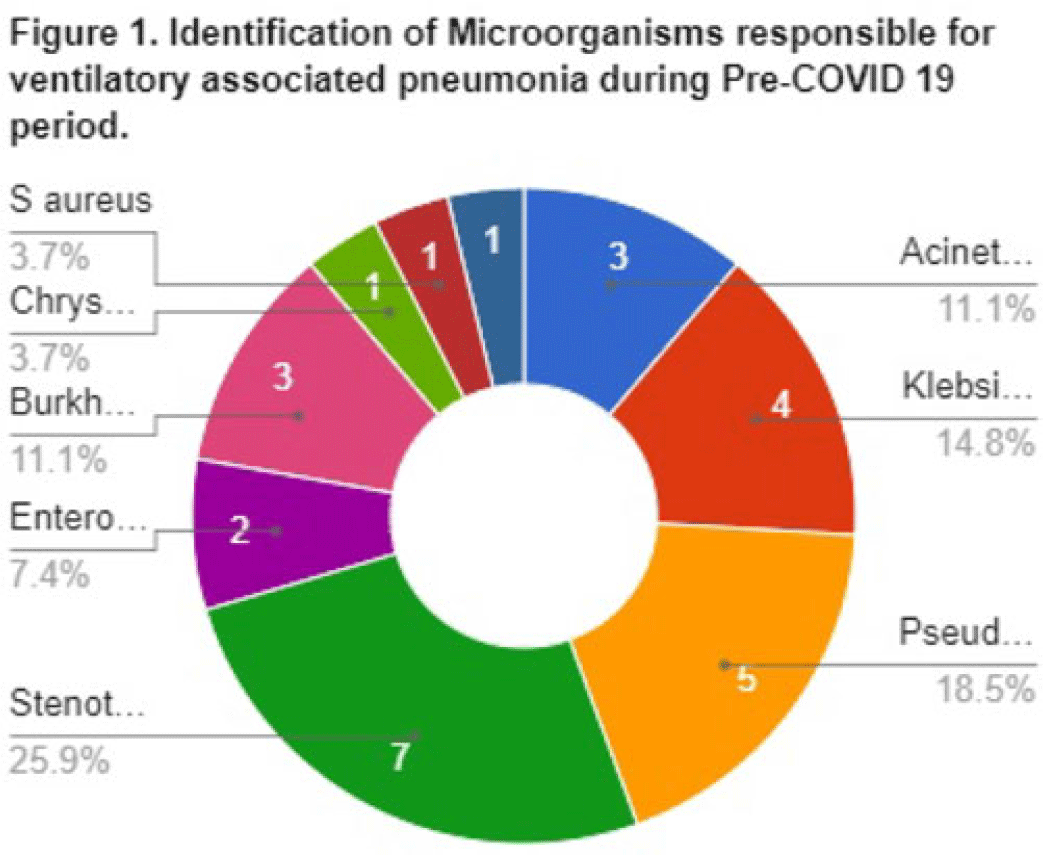

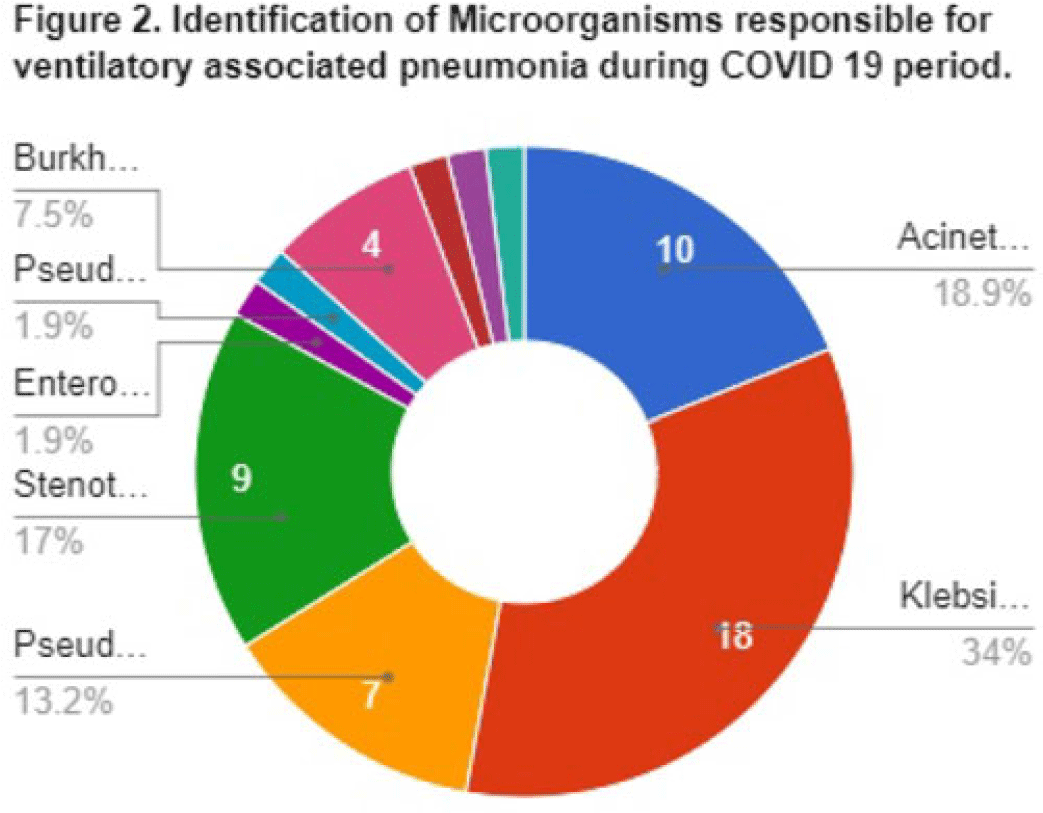

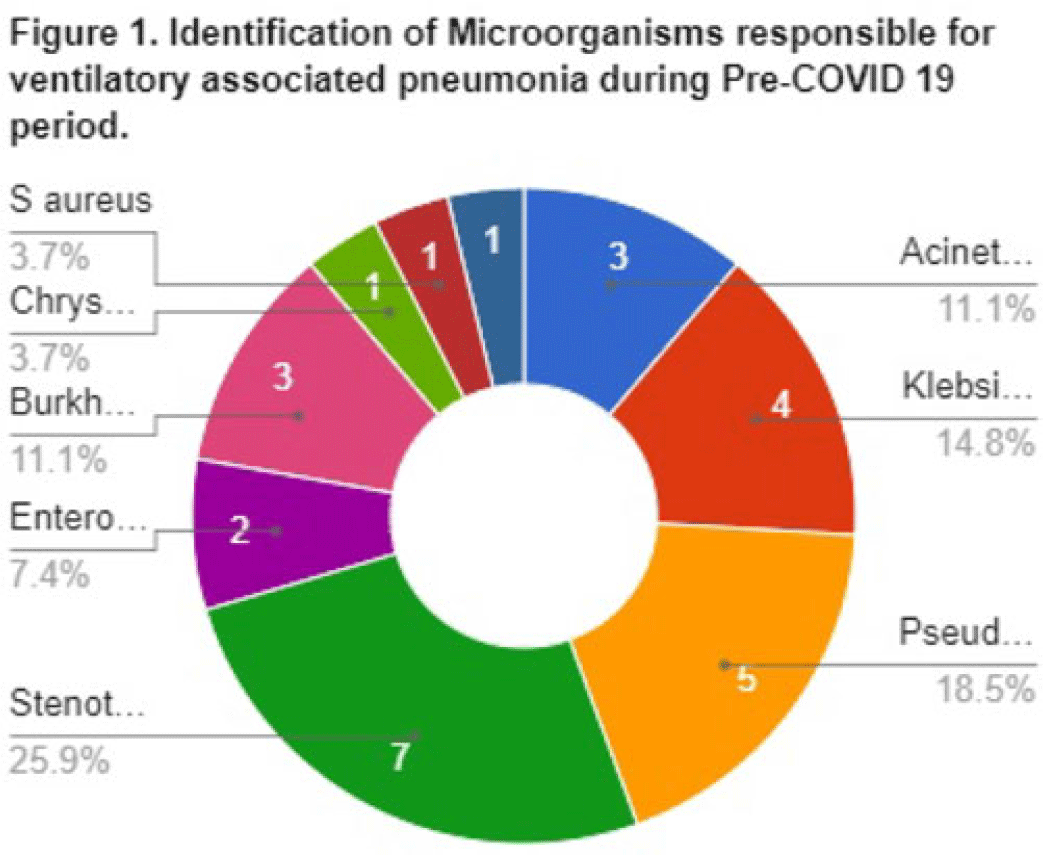

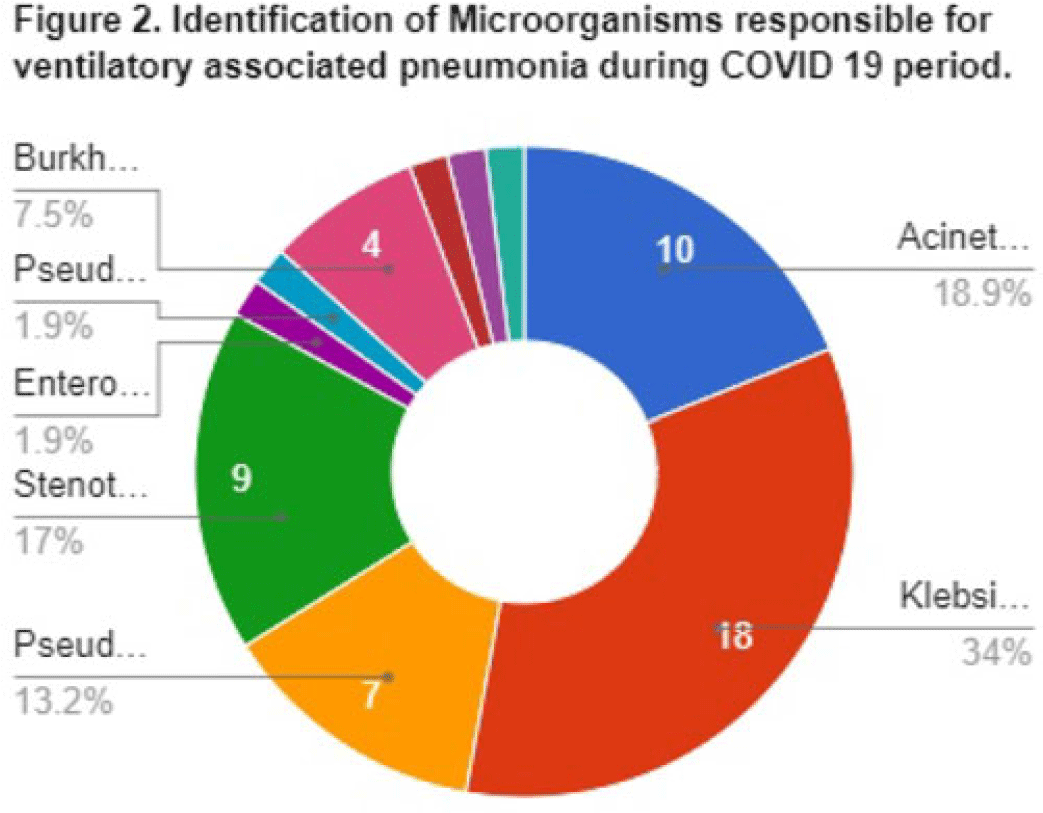

Background: Data on the incidence and outcome of ventilator-associated pneumonia (VAP) and multidrug-resistant VAP (MDR-VAP) among COVID-19 patients are limited. We compared the incidence and incidence density (ie, number of VAP cases per 1,000 ventilator days) of MDR-VAP prior to and during the COVID-19 period in an urban, tertiary-care hospital. Methods: A retrospective study was conducted to compare the incidence, profile, and outcomes of patients with MDR-VAP during the pre–COVID-19 period (2018–2019) and during the COVID-19 pandemic (2020–2021). Results: In total, 80 (22%) of 362 patients developed VAP and were included in the cohort: 27 (33.75%) from the pre–COVID-19 period and 53 (66.25%) from the COVID-19 period. Most were male [20 (74%) of 27 vs 34 (64%) of 53], with median ages of 66 years (range, 35–90) and 67 years (range, 32–92) in the pre–COVID-19 and COVID-19 periods, respectively. Comorbidities were similar between the 2 periods, except for cardiovascular disease (14 vs 11; P = .005) and chronic lung disease (14 vs 9; P = .0012), which decreased significantly from the pre–COVID-19 period to the COVID-19 period. MDR-VAP developed in 15 (56%) of 27 versus 37 (70%) of 53 patients during the pre–COVID-19 and COVID-19 periods, with incidence densities of 19.3 and 27.8 per 1,000 ventilator days (P = .0371), respectively. The median lengths of stay prior to VAP for the pre–COVID-19 and COVID-19 periods were 17 and 10 days, respectively (P < .0001). Extended-spectrum β-lactamase (ESBL) resistance increased significantly from 1 (3.7%) of 27 before COVID-19 to 15 (28.3%) of 53 during the COVID-19 period. The frequency of carbapenem-resistant Enterobacteriaceae (CRE) was higher before COVID-19 than during the COVID-19 period: 15 (56%) of 27 versus 10 (19%) of 53. In both periods, Klebsiella pneumoniae and Acinetobacter baumannii were the most common pathogens isolated. Mortality was high in both periods at 93% and 83%, respectively. 
Only female sex was associated with MDR-VAP in the COVID-19 period on multivariate analysis (OR, 3.47; 95% CI, 1.019–11.824; P < .047). Conclusions: The frequency of VAP and MDR-VAP increased during the COVID-19 period, despite a shorter median hospital stay. Mechanisms of resistance differed in the pre–COVID-19 and COVID-19 periods. Mortality with VAP was extremely high. The factors associated with increased risk of VAP and COVID-19 need to be studied further, and measures to prevent VAP should be prioritized.

Disclosures: None

Identifying COVID-19 clusters in Tennessee long-term care facilities based on weekly staff vaccination rates

- Marissa Turner, Ashley Gambrell, Erin Hitchingham, Simone Godwin

- Published online by Cambridge University Press: 29 September 2023, p. s52

Background: In September 2021, the CMS mandated that long-term care facility (LTCF) healthcare workers be vaccinated for COVID-19 unless medically or religiously exempt. Vaccinating healthcare workers reduces transmission of COVID-19 among patients and workers, reducing the risk of illness among residents and patients. We examined the relationship between COVID-19 clusters and staff vaccination rates in Tennessee LTCFs. Methods: COVID-19 cluster data were collected using REDCap from January 3, 2021, to September 25, 2022, and LTCF vaccination rates were collected from the NHSN. Clusters were identified in facilities with 2 or more cases. The staff vaccination rate 2 weeks prior to the cluster was used, accounting for the lag time between vaccination dose and reaching full immunity. We selected 75% as the critical immunization threshold. The facility case rate was calculated per 100 beds. A χ2 test was performed to determine whether reaching the critical vaccination threshold was associated with cluster occurrence. The relationship between vaccination rate and case number was tested using Pearson correlation. Statistical analyses were conducted using SAS version 9.4 software. Results: The average staff vaccination rate rose from 47% in June 2021, when NHSN first required long-term care facilities to report rates, to 83% in September 2022. In total, 806 clusters were identified, and 20,868 combined weeks from all facilities were reported after merging facilities’ weekly vaccination rates with cluster data. In most weeks across all facilities, no cluster was identified (n = 20,064, 96.15%) and the critical immunization threshold was not met (n = 11,050, 52.95%). The association between a cluster occurring and a facility meeting the threshold was significant (χ2 = 5.41; df = 1; P 95% CI, .7327–.9740). The Pearson correlation coefficient between vaccination rate and case number was 0.05560 (P = .2894). 
Conclusions: There was a significant association between facilities not reaching the immunization threshold and presence of a COVID-19 cluster. The facility case rate was not correlated with staff vaccination rate; however, a limitation of this analysis was that resident vaccination was not tested. Another limitation was that medical and religious exemptions could not be differentiated. Healthcare staff should consider getting vaccinated, if able, to reduce the risk of COVID-19 and to keep staff and residents safe from COVID-19.
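
The P value for the reported test statistic (χ2 = 5.41; df = 1) appears to be incomplete in the text. As a hedged illustration only, the sketch below shows how an upper-tail P value follows from a χ2 statistic with 1 degree of freedom, using the identity that such a variable is the square of a standard normal variable. The function name is hypothetical, and the result is a back-calculation, not the authors' reported value.

```python
import math


def chi2_upper_tail_df1(statistic: float) -> float:
    """Upper-tail P value for a chi-square statistic with 1 degree of freedom.

    With 1 df, X = Z**2 for standard normal Z, so
    P(X > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    """
    if statistic < 0:
        raise ValueError("chi-square statistic must be non-negative")
    return math.erfc(math.sqrt(statistic / 2))


# Statistic as reported in the Results; the P value here is illustrative.
p_value = chi2_upper_tail_df1(5.41)
print(f"approx. P = {p_value:.3f}")
```

Under these assumptions the statistic corresponds to a P value of roughly .02, which is consistent with the abstract's description of the association as significant.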

Disclosures: None

Utilizing data to foster equity in infection prevention outreach among skilled nursing facilities in Michigan

- Christine White, Michael David, Ruben Juarez

- Published online by Cambridge University Press: 29 September 2023, pp. s52-s53

Background: Since October 2020, the Infection Prevention Resource and Assessment Team (IPRAT) has provided infection prevention guidance and support to congregate-care settings throughout Michigan. Specifically, IPRAT conducts outreach to skilled nursing facilities (SNFs) in response to reported positive COVID-19 resident and staff cases. Case rates provide limited data and do not factor in additional variables, such as staffing shortages, geographical location, or access to supplies, which can increase the vulnerability of staff and residents to outbreaks. To facilitate equitable outreach, a risk assessment was developed using variables related to infection prevention and poor COVID-19 outcomes utilizing local, state, and federal data reporting websites. Methods: A retrospective data review of IPRAT’s electronic data repository was performed, and 2 distinct periods were identified between November 6, 2020, and December 5, 2022. Outreach method 1 involved only using case counts from November 6, 2020, to September 24, 2021. Outreach method 2 (new risk-assessment–based outreach) involved additional data points from April 12, 2021, to December 5, 2022. Data included 17 self-reported items from the NHSN, 3 characteristics regarding facilities’ COVID-19 units, and 7 community-level variables derived from county vaccine rates, social vulnerability index (SVI), and COVID-19 community transmission level. The scoring of each data point ranged from 0 to 10, and outreach was prioritized to facilities with the highest overall scores. Successful referrals (resulting in a site visit) were compared to the SVI and healthcare emergency regional maps to determine whether the new outreach method reached more facilities in vulnerable communities. Results: Of 358 outreach attempts, IPRAT had a higher success rate with method 2 (6.9%) compared to method 1 (5.3%) and improved outreach in rural Michigan regions 7 and 8 (15% vs 3%). 
With method 2, site visits in counties with a high SVI rating were 14.5% versus 10.6% with method 1. COVID-19 prevention referral success rates were higher (4.4% vs 3.1%) using method 2. Conclusions: The risk-assessment–based outreach method showed improvement in overall referral success rates among facilities in rural and higher-SVI counties. These communities tend to experience higher health disparities and poorer health outcomes. Incorporating the more nuanced data variables was associated with at-risk congregate-care settings receiving timelier outreach. The limitations of the study include sample size, period of data collected (2 years), and the complexity of objectively measuring equity.
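
The prioritization step in the Methods (each data point scored 0 to 10, outreach directed to the highest overall scores) can be sketched as follows. The abstract does not say how item scores were combined, so the simple sum used here is an assumption, and the facility names and scores are hypothetical.

```python
def prioritize_facilities(item_scores: dict[str, list[int]]) -> list[tuple[str, int]]:
    """Rank facilities by overall risk score, highest first.

    Assumption: the overall score is the unweighted sum of item scores,
    each of which must fall in the 0-10 range described in the Methods.
    """
    totals = []
    for facility, scores in item_scores.items():
        if any(score < 0 or score > 10 for score in scores):
            raise ValueError(f"item score out of range for {facility}")
        totals.append((facility, sum(scores)))
    # sorted() is stable, so tied facilities keep their input order.
    return sorted(totals, key=lambda pair: pair[1], reverse=True)


# Hypothetical facilities with three scored items each.
example_scores = {
    "Facility A": [2, 5, 1],
    "Facility B": [9, 7, 10],
    "Facility C": [4, 4, 6],
}
outreach_order = prioritize_facilities(example_scores)
print(outreach_order)
```

A weighted sum or a capped composite would be equally plausible readings of "overall score"; the ranking logic would stay the same.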

Disclosures: None

Universal COVID-19 screening at hospitals in a large Canadian health region

- Matthew Garrod, Katy Short

- Published online by Cambridge University Press: 29 September 2023, p. s53

Background: Hospitals were affected by COVID-19, with significant concern regarding transmission from unidentified cases. Fraser Health, a Canadian regional health authority, implemented universal testing along with screening questions for emergency department (ED) admissions. We sought to determine which factors were associated with a SARS-CoV-2–positive test on admission as well as patient outcome, stratified by screening question responses. Methods: This retrospective analysis included patients aged ≥6 years admitted through 12 hospital EDs between November 1, 2020, and June 30, 2022. Admission, laboratory, and screening data were extracted from electronic health records. Patients who had a first SARS-CoV-2 PCR–positive test in the prior 60 days collected within 48 hours of admission were classified as positive. Covariates included age, geographical region, and SARS-CoV-2 variant era. All questions were modeled using multinomial logistic regression, with components informed through crude analysis in RStudio software. Results: There were 88,511 unique eligible admissions, with 7,642 positive tests (8.6%). The positivity rate over the study period ranged from 0.6% to 21.8%, with a mean of 6.5%. Patients meeting screening criteria were 4.7 times (95% CI, 4.43–4.92) as likely to test positive as those who did not. Patients in the SARS-CoV-2 omicron variant era were 3.2 times (95% CI, 2.98–3.47) as likely to test positive as those in the earlier era of the pandemic. Patients later in the pandemic were less likely to be identified by screening questions than those in earlier eras, with patients in the SARS-CoV-2 omicron variant era only 14% (95% CI, 12%–17%) as likely as in the earlier stages of the pandemic to be identified by screening questions. Patients who tested positive were 1.5 (95% CI, 1.37–1.64) times as likely to die as patients who tested negative, whereas patients in later stages of the pandemic were less likely to die overall. 
Discussion: Patients who tested positive on admission were more likely to meet screening criteria; however, screening missed half of all positive cases. It is not known whether patients who tested positive without meeting screening criteria would have transmitted infection. Conclusions: Due to changes in COVID-19 epidemiology, Fraser Health has discontinued universal admission screening. Although universal testing increased resource needs, more than half of patients who tested positive during the study period would not have been identified based on screening criteria alone; identifying them through universal testing allowed precautions to be implemented to prevent possible transmission. Ultimately, the decision to conduct universal testing must be a balance of the resources required, community prevalence, and patient population.

Disclosures: None

Inpatient remdesivir versus nirmatrelvir-ritonavir in the progression of COVID-19

- Dimple Patel, Christopher Mccoy, Kendall Donohoe, Matthew Lee, Howard Gold, Ryan Chapin

- Published online by Cambridge University Press: 29 September 2023, pp. s53-s54

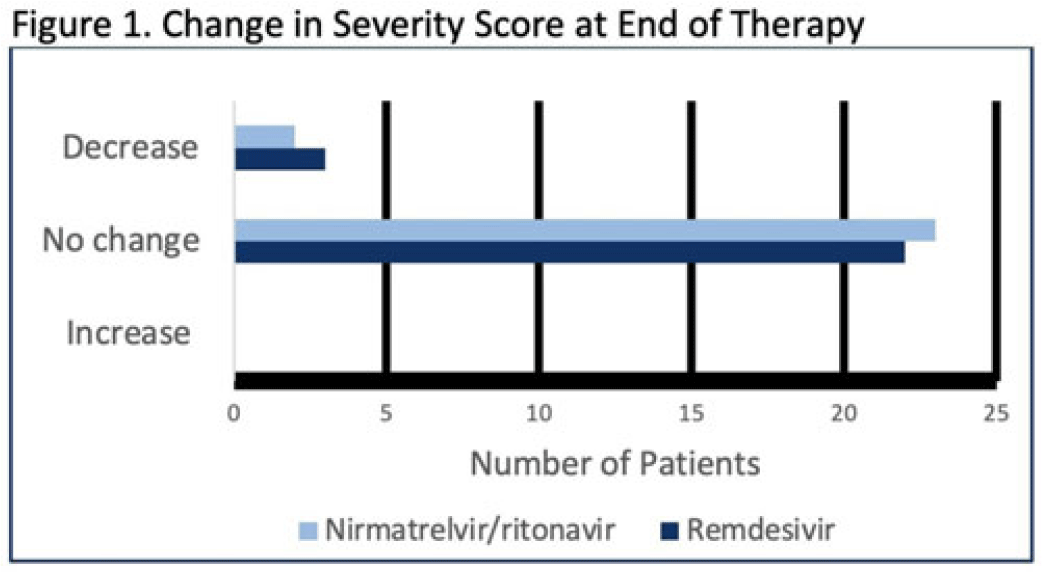

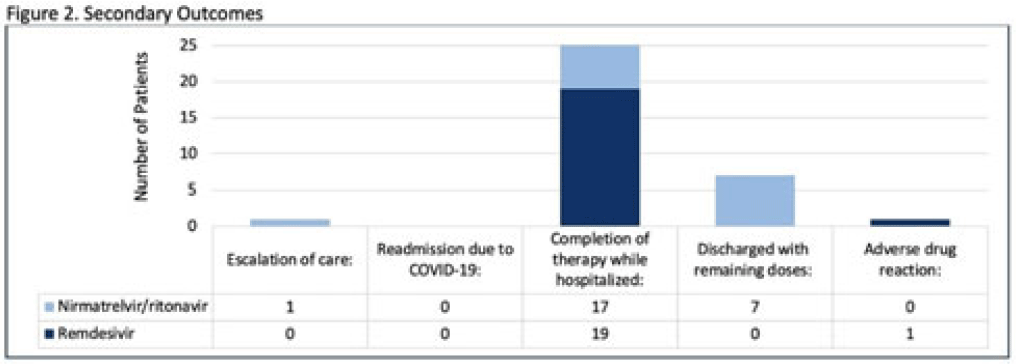

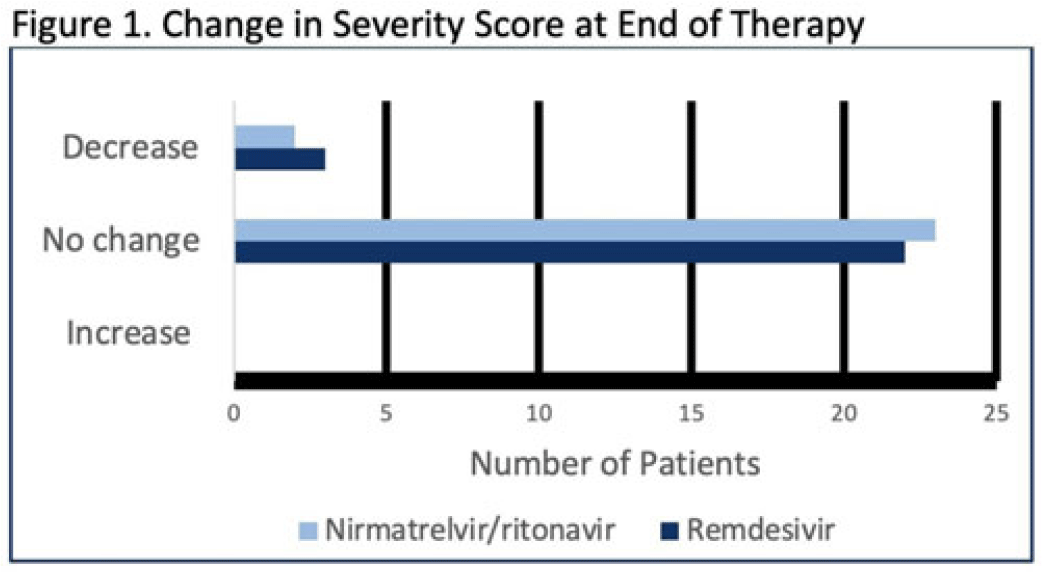

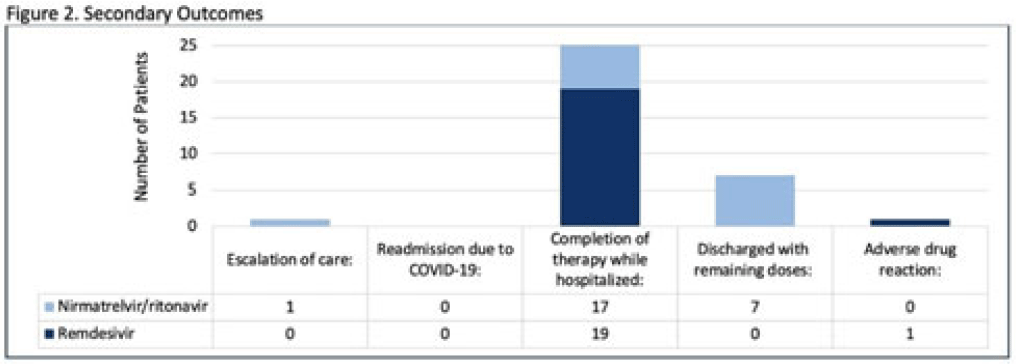

Background: Nirmatrelvir-ritonavir received emergency use authorization (EUA) for the prevention of progression of COVID-19 in December 2021. Most data supporting this authorization are limited to the outpatient setting in unvaccinated patients, and high-quality head-to-head comparisons to other antivirals such as remdesivir are lacking. Patients at high risk of disease progression, such as those of advanced age, smokers, and those with cardiovascular disease, diabetes, obesity, or cancer, continue to be admitted to acute-care settings for various indications, and some are incidentally found to have mild COVID-19. The objective of this project was to compare rates of progression of mild-to-moderate COVID-19 for inpatients treated with remdesivir versus nirmatrelvir-ritonavir. Methods: This study was a single-center, retrospective cohort study that included patients aged ≥18 years with PCR-confirmed SARS-CoV-2 infection who were initiated on nirmatrelvir-ritonavir within 5 days or remdesivir within 7 days of symptom onset between June 2022 and August 2022. The primary outcome was the worsening of symptoms via the WHO ordinal clinical severity scale for COVID-19. Secondary outcomes included escalation of care or readmission due to COVID-19, discharge prior to treatment completion, and any adverse drug reactions (ADRs). Within our institutional guidelines, prior approval is needed for COVID-19 treatment through collaboration between the primary team and antimicrobial stewards. Nirmatrelvir-ritonavir is the preferred agent for both in- and outpatients unless the patient has drug interactions or lacks enteral access, in which case remdesivir is considered. Results: In total, 58 patients were screened and 50 patients were included, 25 patients in each arm. Most were non-Hispanic, white males with at least 1 comorbidity. Compared to the remdesivir arm, the nirmatrelvir-ritonavir arm had more patients with at least a primary COVID-19 vaccine (44% vs 34%). 
Also, 88% of patients in each arm had a baseline ordinal score of 4, and 12% had a score of 5. Ordinal score changes between the start and end of therapy were similar between groups, and neither had an increase in oxygen requirements (Fig. 1). No readmissions were due to COVID-19, and both medications were well tolerated. Refer to Fig. 2 for secondary outcomes. Conclusions: Nirmatrelvir-ritonavir and remdesivir showed similar safety and efficacy in the treatment of hospitalized patients with mild-to-moderate COVID-19. Current evidence-based guidelines and treatment costs favor nirmatrelvir-ritonavir for patients who can receive this drug.

Disclosures: None

Whether or not the weather matters: A retrospective review assessing the influence of weather on SARS-CoV-2 transmission

- Melissa Colaluca, Sean Harford, Ann Palmer, Ulysses Wu, Kaelin Wu, Matthew Kulowski

- Published online by Cambridge University Press: 29 September 2023, pp. s54-s55

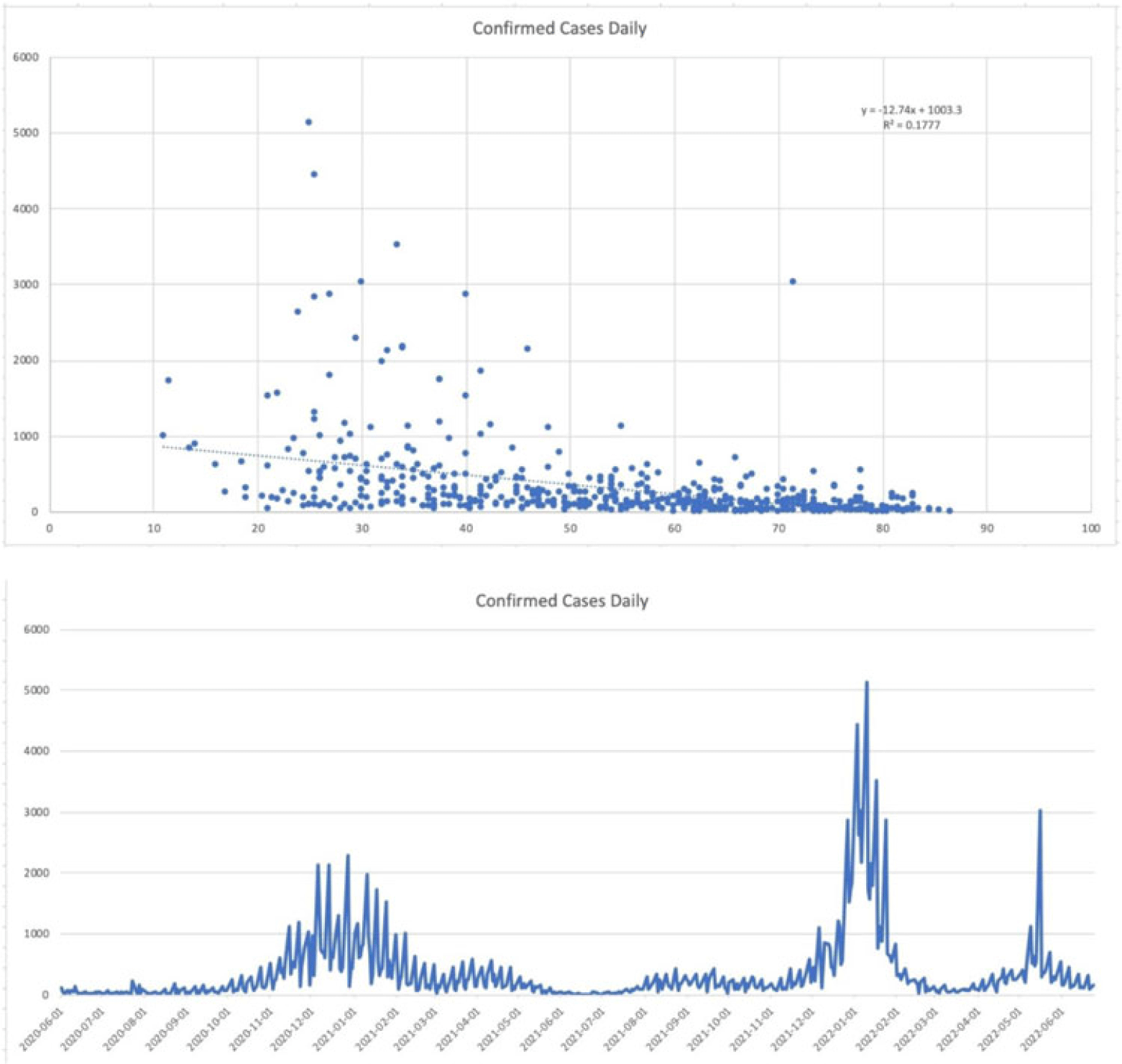

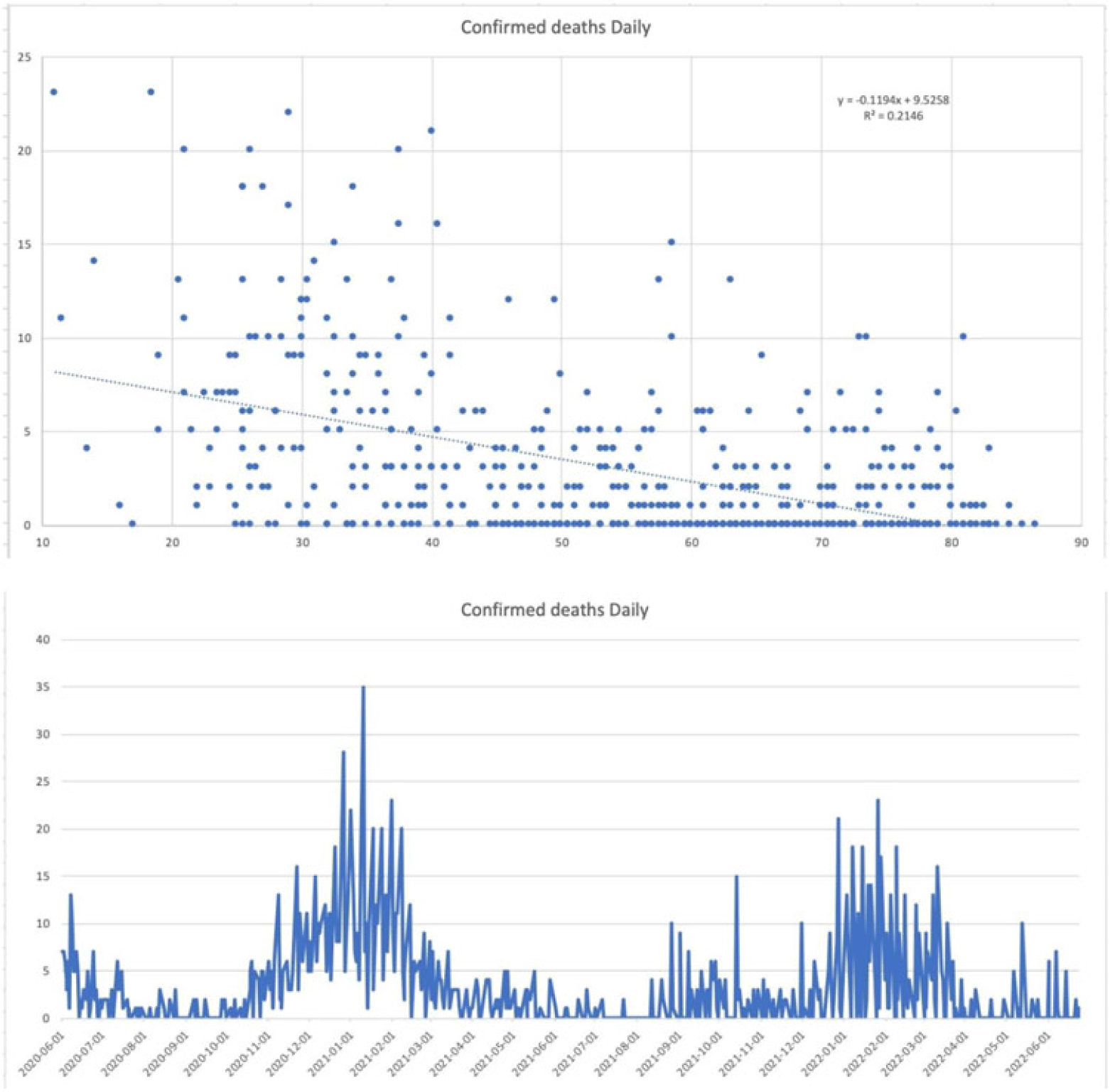

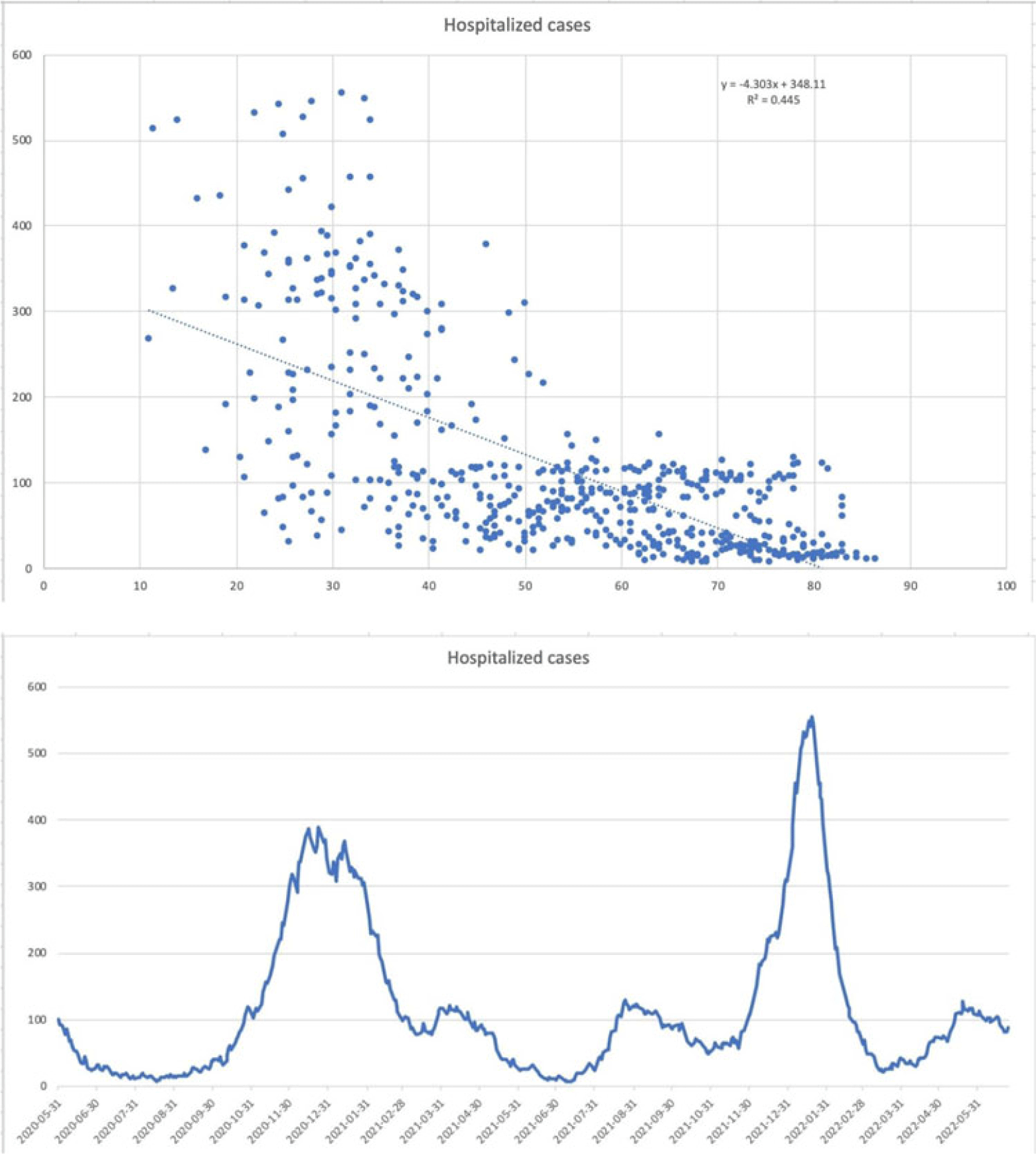

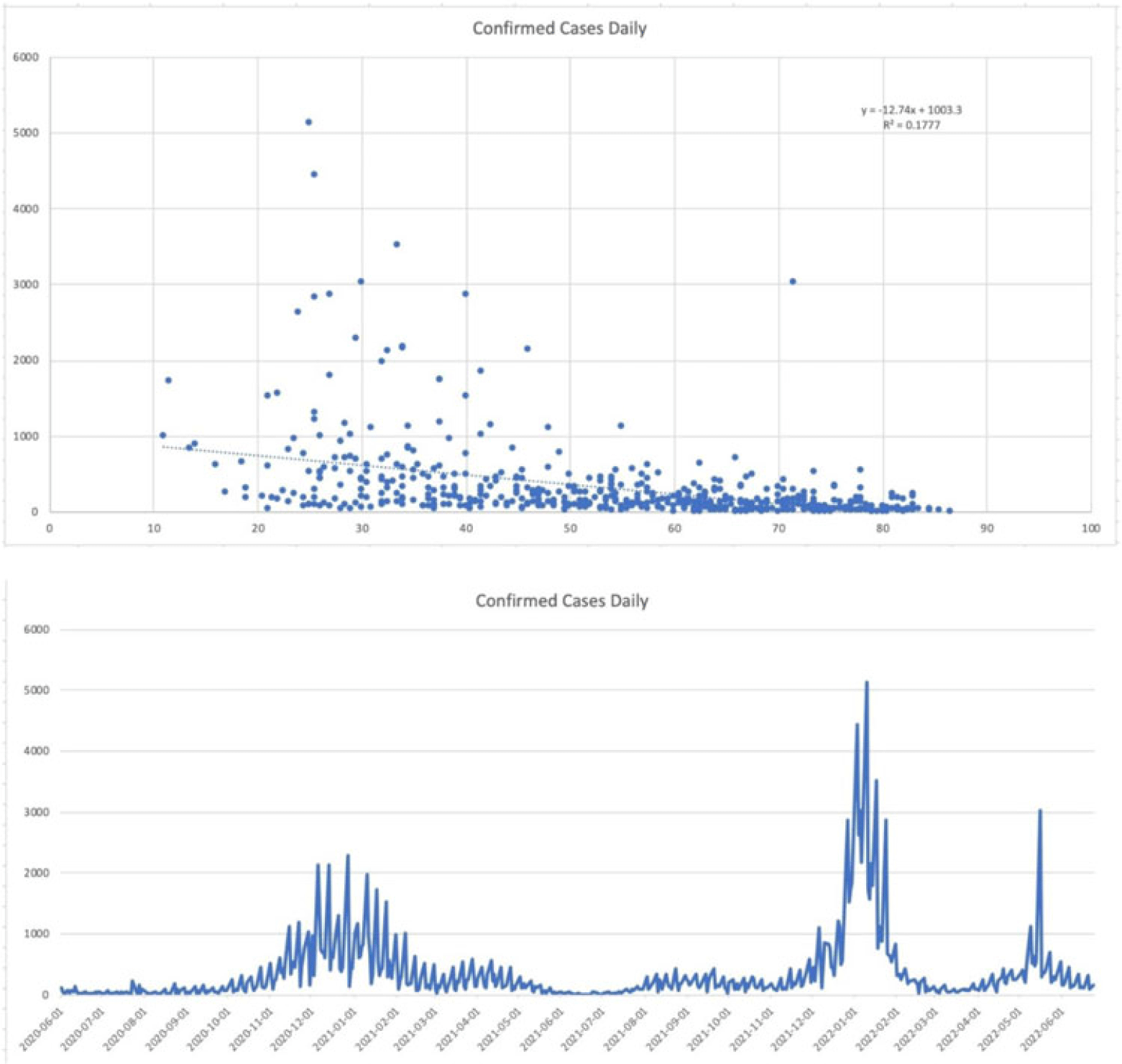

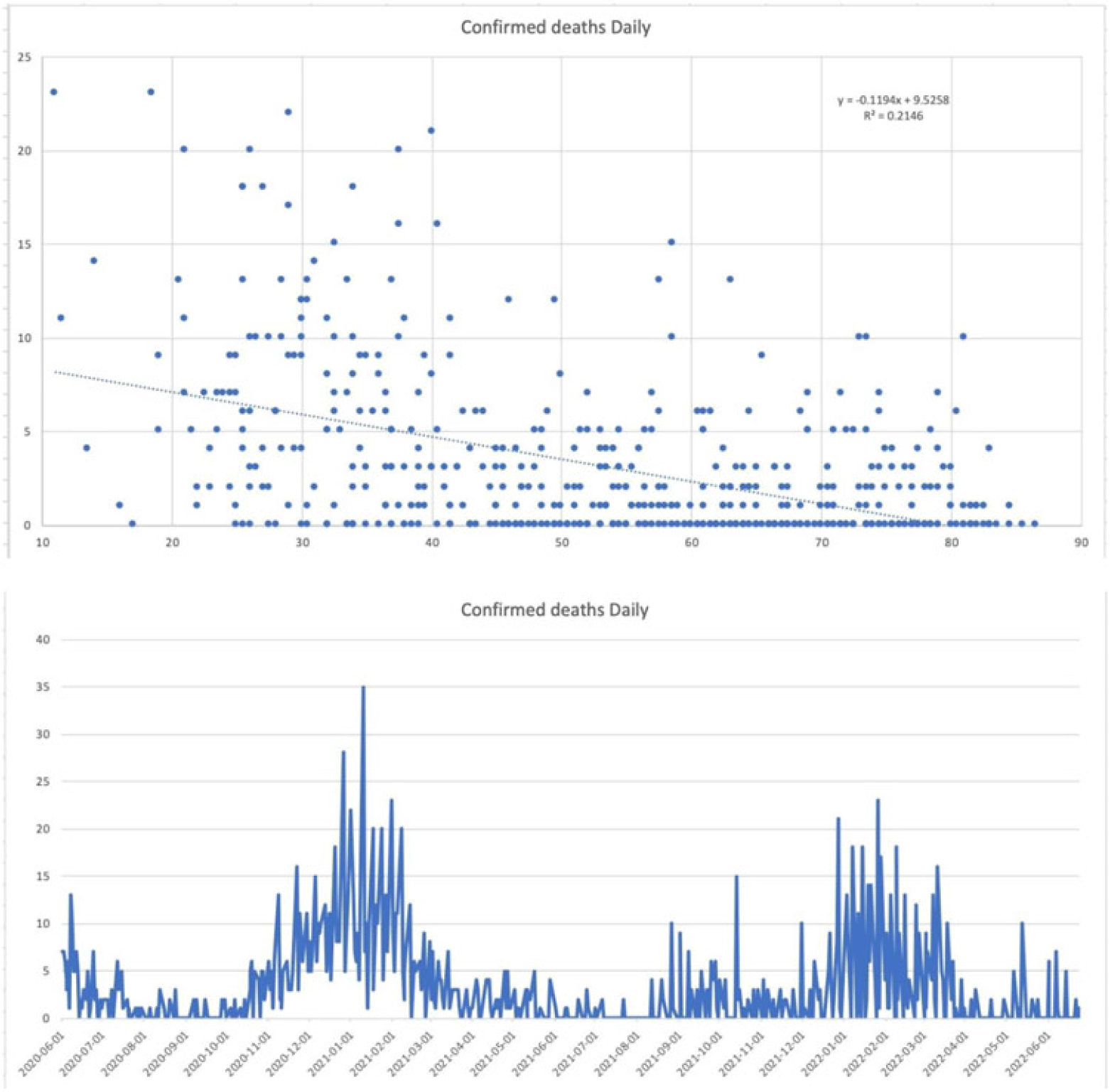

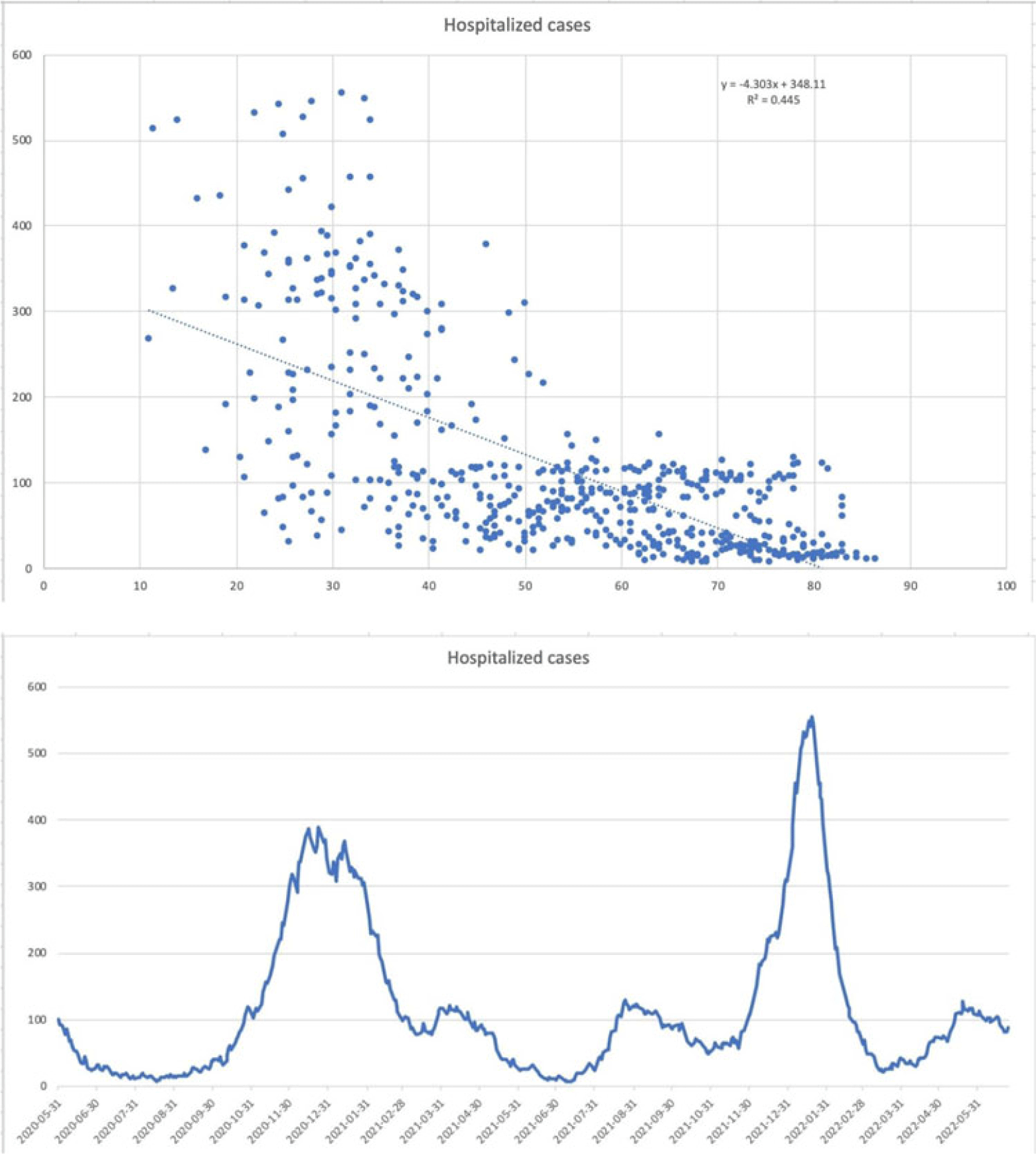

Background: Since the start of the COVID-19 pandemic, many variables have contributed to surges in cases such as the presence of variants, vaccination status, and comorbid medical conditions. However, other factors can be considered including temperature, precipitation, and periods in large congregations. The spike in SARS-CoV-2 infections during the winter has made it seem plausible that transmission may be affected by meteorological factors. A study by Birukov et al demonstrated that a 1°C increase in temperature was associated with a 3.08% reduction in daily new cases and a 1.19% decrease in daily new deaths. We propose that SARS-CoV-2 transmission will decline more rapidly when either precipitation or temperature is higher; thus, in warmer regions with less precipitation daily cases, hospitalizations and deaths will be lower. Methods: This is a retrospective study of statewide data in Hartford County, Connecticut, collected from May 2020 to June 2022 assessing percent positivity reported in daily case count, hospitalizations for COVID-19, and deaths from COVID-19 collected from the Connecticut Department of Public Health COVID-19 database. Information on weather conditions, including temperature and precipitation, were collected from the National Weather Service pertaining to Hartford County. Trends in variables related to patient outcomes were compared to weather conditions within the county of Hartford. Moreover, certain periods within the various seasons that typically involve large gatherings and public holidays (eg, New Year’s Day, Memorial Day, 4th of July, Labor Day, Thanksgiving, and Christmas Day) were further analyzed. Results: There appears to be an inverse correlation coefficient of −0.422, between confirmed daily cases and mean temperature in Hartford County, indicating that as temperature increases, confirmed cases decrease. This phenomenon is also observed with confirmed daily deaths and mean temperature, with a correlation coefficient of −0.463. 
Moreover, the relationship between hospitalizations and mean temperature was stronger still, with a correlation coefficient of −0.667. Furthermore, the year-end holidays (Christmas Day and New Year’s Day) were associated with a significant spike in confirmed daily cases, hospitalizations, and deaths. However, confirmed daily cases, hospitalized cases, and confirmed deaths showed no significant relationship with mean precipitation in Hartford County, with correlation coefficients of −0.042, −0.044, and −0.044, respectively. Conclusions: Our available COVID-19 and weather data show that temperature is inversely correlated with daily cases, hospitalizations, and deaths. However, there was no discernible relationship between precipitation and these outcomes.

Disclosures: None

Outcomes of patients hospitalized for COVID-19, secondary infections, antimicrobial use during SARS-CoV-2 delta and omicron variants

- Swetha Srialluri, Curtis Collins, Holly Murphy

- Published online by Cambridge University Press:

- 29 September 2023, pp. s55-s56

Background: The SARS-CoV-2 omicron variant has been associated with increased transmissibility and less severe disease than the SARS-CoV-2 delta variant. Low rates of secondary infections and excess empiric antimicrobial use were reported early in the pandemic. Comparisons between later variants are not as well documented. We evaluated outcomes for SARS-CoV-2 delta- and omicron-variant surges with emphases on COVID-19–related treatment, secondary infections, and antimicrobial utilization. Methods: A single-center, observational, retrospective study was conducted for SARS-CoV-2–positive patients admitted to our 548-bed community teaching hospital between November and December 2021 (SARS-CoV-2 delta-variant–predominant phase) and between January and February 2022 (SARS-CoV-2 omicron-variant–predominant phase). Demographic and outcome data were obtained from the institutional data warehouse and were compared between groups. Secondary infections were defined as positive blood and respiratory culture results during admission, with likely contaminants excluded. Mann-Whitney U tests were used to evaluate continuous variables, and t tests were used to analyze categorical variables. P ≤ .05 was considered statistically significant. Results: In total, 1,297 patients were included: 787 (60.7%) in the SARS-CoV-2 delta-variant–predominant phase and 510 (39.3%) in the SARS-CoV-2 omicron-variant–predominant phase. Patients in the SARS-CoV-2 omicron-variant–predominant phase were more often vaccinated (37.7% vs 55%; P < .001), required lower rates of ICU care (16.0% vs 11.6%; P = .025), and required less intubation (13% vs 6.3%; P < .001). Utilization of remdesivir (51.0% vs 32.2%; P < .001), dexamethasone (70.8% vs 43.3%; P < .001), and tocilizumab or baricitinib (14.5% vs 5.3%; P < .001) decreased during the SARS-CoV-2 omicron-variant–predominant phase. Length of stay (5 days vs 4 days; P < .001) and 30-day mortality (16.4% vs 9.8%; P = .001) also decreased during this period. 
Infectious diseases consultation increased during the SARS-CoV-2 omicron-variant–predominant phase (39.8% vs 45.5%; P = .042). There was no significant difference in patients with positive blood cultures (3.4% vs 1.8%; P = .074), but there was a significant decrease in positive respiratory cultures (5.8% vs 2.7%; P = .009), combining for an overall reduction (8.4% vs 4.1%; P = .003). The incidence of overall antimicrobial use increased during the omicron-predominant phase (36.1% vs 41.8%; P = .04), and duration was lower (5 days vs 4 days; P < .001). Antimicrobial class-specific duration was unchanged, with the exception of a decrease for gram-positive agents (3 days vs 2 days; P = .012). Conclusions: Our results confirm previous reports of reduced disease severity during the SARS-CoV-2 omicron-variant–predominant period. The incidence of secondary infections decreased, driven by a reduction in respiratory infections. Antimicrobials were used at increased rates and for shorter durations during the SARS-CoV-2 omicron-variant–predominant period.

Disclosures: None

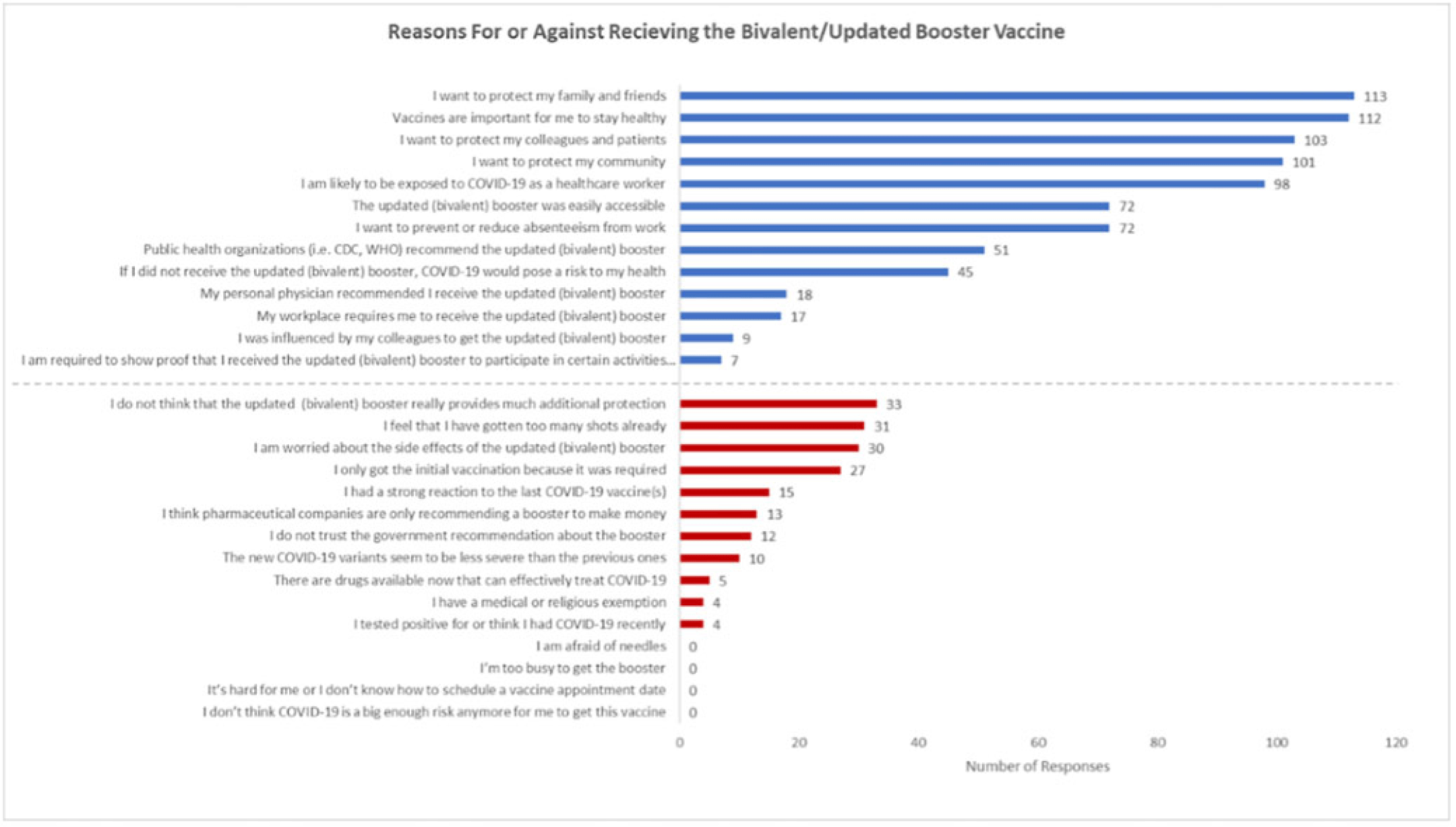

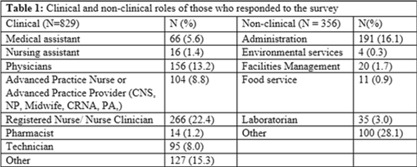

Characterizing healthcare worker attitudes toward the bivalent COVID-19 booster

- Kathryn Willebrand, Jacqueline Fredrick, Lauren Pischel, Kavin Patel, Scott Roberts, Thomas Murray, Richard Martinello

- Published online by Cambridge University Press:

- 29 September 2023, pp. s56-s57

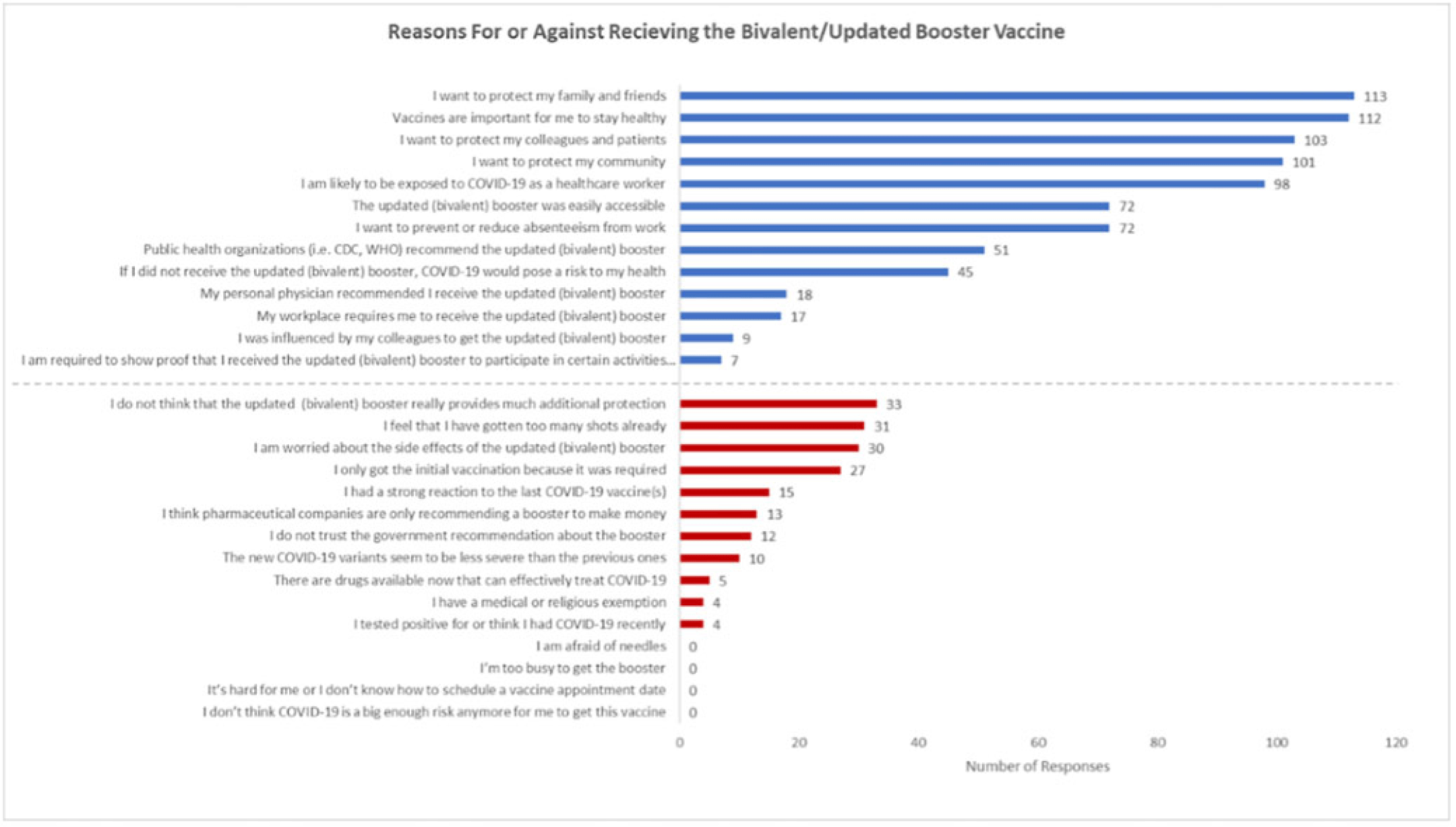

Background: Recent evidence has shown that the updated COVID-19 bivalent booster is effective in preventing COVID-19 compared with no previous vaccination and prior monovalent vaccination. Despite its effectiveness, uptake has been poor, and a minority of eligible recipients have received the booster. Understanding healthcare worker (HCW) attitudes for and against voluntary uptake of the bivalent booster dose against COVID-19 can help guide communication strategy to maximize uptake. In this survey study, we investigated attitudes toward updated and/or bivalent booster uptake in a behavioral health hospital shortly after a COVID-19 outbreak. Methods: A survey tool was developed and sent to all HCWs at the Yale New Haven Psychiatric Hospital in December 2022. The survey queried demographic data, job category, history of COVID-19, prior COVID-19 vaccinations, perception of COVID-19 exposure, and updated and/or bivalent booster doses. The survey was administered several weeks after a COVID-19 outbreak on multiple inpatient behavioral health units. Receipt of the COVID-19 primary vaccination series and the first booster dose was mandated for HCWs; however, receipt of the bivalent booster was voluntary. Results: The survey was sent to 664 HCWs with primary assignments in behavioral health settings. In total, 182 (27.4%) provided complete responses to the survey and are included in these data. Moreover, 91 HCWs (50.0%) reported previously having COVID-19 at least once. Overall, 100 HCWs (55.0%) received the bivalent booster. The most commonly identified reasons for receiving the bivalent booster were wanting to protect family and friends (n = 113), importance of staying healthy (n = 112), and protecting colleagues and patients (n = 103). The most commonly identified reasons for not wanting to receive the bivalent booster dose were not thinking it provides additional protection (n = 33), “too many” shots already received (n = 31), and concern about side effects (n = 30). 
Discussion: Bivalent booster dose uptake in HCWs on behavioral health units shortly after a COVID-19 outbreak was greater than in the general population. HCWs reported varying reasons for and against receipt of the bivalent booster dose, with the most common being protection of family and friends and perceptions of no additional protection, respectively. A limitation of this study was voluntary response bias, in which results are biased toward individuals more likely to receive a bivalent booster vaccine. It is unclear whether reasons for declining the vaccine are representative of HCWs who did not complete the survey. Assessing attitudes toward the bivalent booster dose can assist in guiding communication and outreach strategies to increase vaccine uptake by HCWs.

Disclosures: None

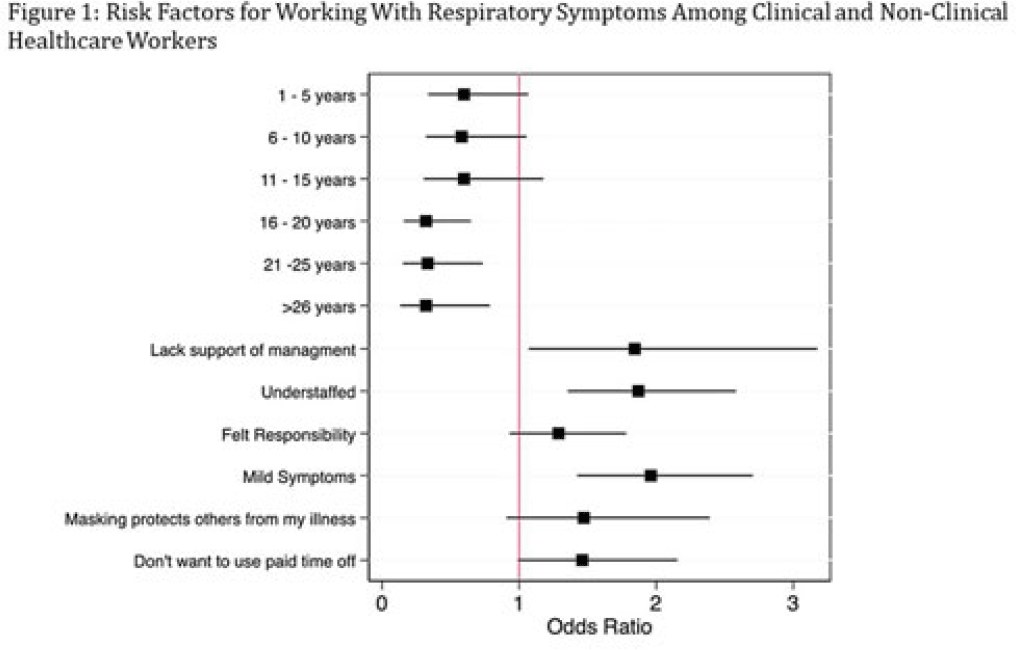

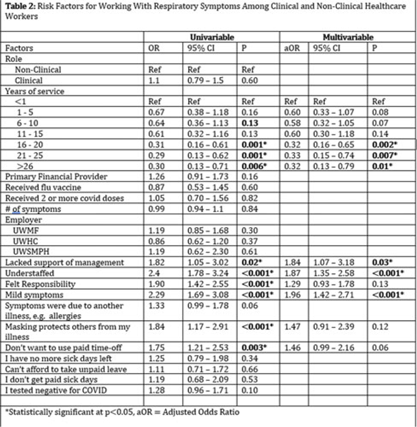

Factors influencing healthcare personnel decision making to work with respiratory symptoms during the COVID-19 pandemic

- Rachel Meyer, Michael Kessler, Daniel Shirley, Linda Stevens, Fauzia Osman, Nasia Safdar

- Published online by Cambridge University Press:

- 29 September 2023, pp. s57-s58

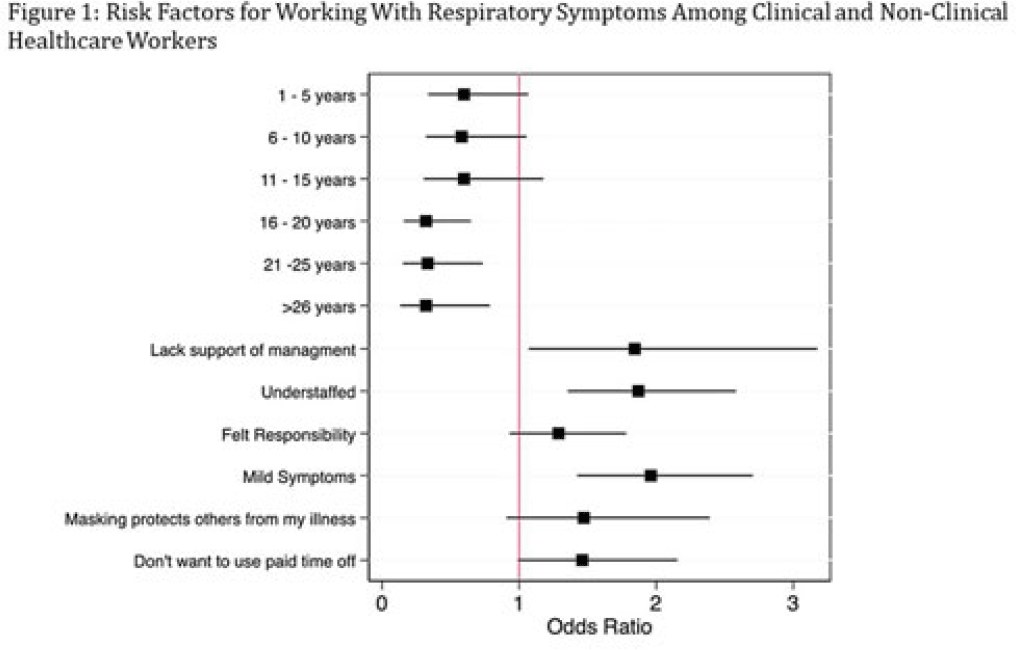

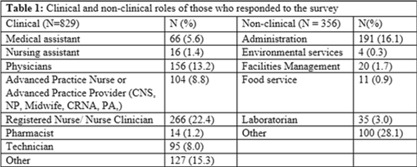

Background: Amid the COVID-19 pandemic, healthcare systems were stretched thin, with staffing shortages posing substantial challenges. Limiting spread of COVID-19 among healthcare professionals (HCP) is paramount to preventing exacerbation of such shortages, but strategies are highly dependent on HCP self-screening for symptoms and isolating when present. We examined HCP perceptions of barriers and factors that facilitate staying home when experiencing respiratory symptoms. Methods: At an academic tertiary-care referral center, in inpatient and ambulatory settings, we conducted an anonymous electronic survey between March 11, 2022, and April 12, 2022. Using logistic regression analysis, we analyzed predictors of employees reporting to work with respiratory symptoms using STATA and SAS software. Results: In total, 1,185 individuals, including 829 clinical staff and 356 nonclinical staff, responded to the survey. When excluding participants who reported working “remotely” (N = 381) and those who reported being unsure of whether they had worked with symptoms (N = 14), the prevalence of working with respiratory symptoms was 63%. There was no significant difference between clinical and nonclinical staff (OR, 1.1; 95% CI, 0.8–1.5; P = .60). Increasing number of years of service was protective against working with symptoms, achieving statistical significance in multivariable analysis after 16 years. Compared to those having worked <1 year, the odds ratios of working with symptoms were 0.32 (95% CI, 0.16–0.65; P = .002), 0.33 (95% CI, 0.15–0.74; P = .007), and 0.32 (95% CI, 0.13–0.79; P = .007) for those working 16–20 years, 21–25 years, and ≥26 years, respectively. More than half of HCP who worked with symptoms identified being understaffed (56.9%), having mild symptoms (55.3%), and sense of responsibility (55.1%) as reasons to work with respiratory symptoms. 
The following barriers, or reasons to work with symptoms, were more commonly identified as significant by those who worked with symptoms compared to those who did not: being understaffed (OR, 1.87; 95% CI, 1.35–2.58; P ≤ .001), having mild symptoms (OR, 1.96; 95% CI, 1.42–2.71; P < .001), and lack of support from management (OR, 1.84; 95% CI, 1.07–3.18; P = .03). Conclusions: Working with respiratory symptoms is prevalent in clinical and nonclinical HCP. Those with fewer years of work experience appear to be more susceptible to misconceptions and pressures to work despite respiratory symptoms. Messaging should stress support from leadership and the significance of even mild respiratory symptoms and should emphasize responsibility to patients and colleagues to stay home with respiratory symptoms. Strategies to ensure adequate staffing and sick leave may also be high yield.

Disclosures: None

Decolonization Strategies

MRSA PCR improves sensitivity of detection of colonization in neonates

- Nahid Hiermandi, Catherine Foster, Krystal Purnell, James Dunn, Judith Campbell, Lucila Marquez

- Published online by Cambridge University Press:

- 29 September 2023, pp. s58-s59

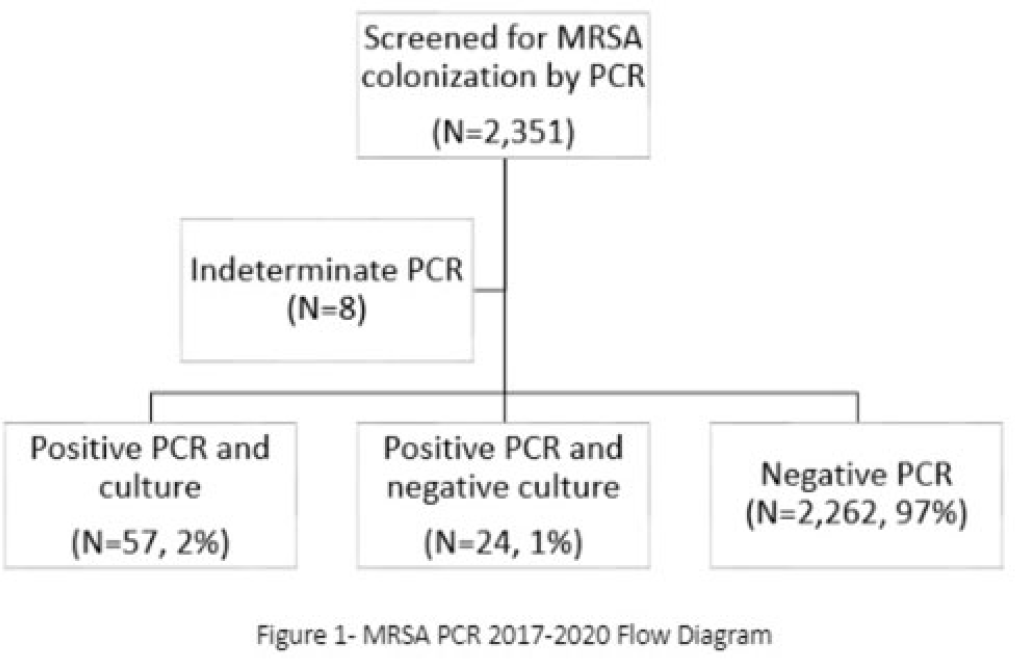

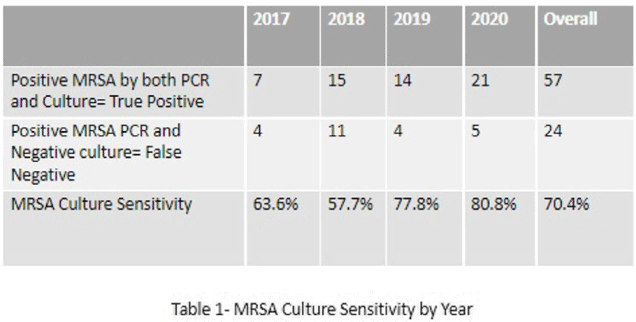

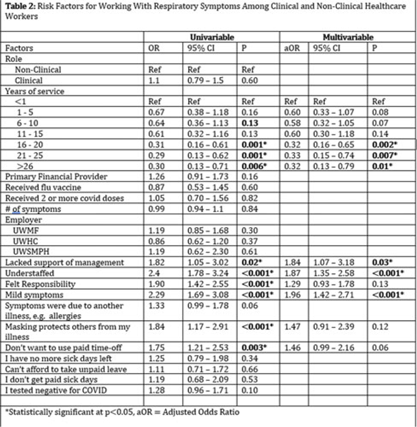

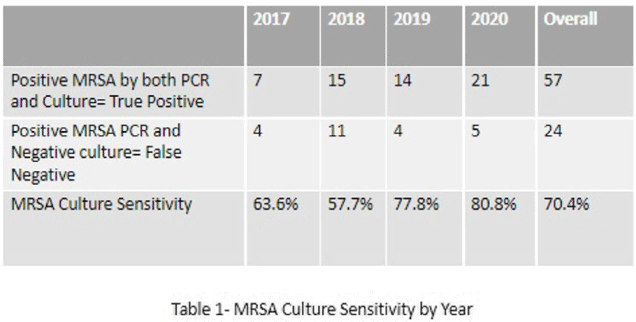

Background: Neonates colonized with methicillin-resistant Staphylococcus aureus (MRSA) are at high risk of developing life-threatening MRSA infection. Due to lack of evidence, national guidelines do not currently recommend a specific methodology for detecting MRSA colonization. We hypothesize that surveillance for MRSA colonization via polymerase chain reaction (PCR) is superior to culture for the detection of colonization. Methods: In this retrospective study, we compared results of MRSA surveillance by 2 methodologies, culture and PCR, after implementation of an MRSA surveillance and decolonization protocol in the Texas Children’s Hospital Pavilion for Women, a 42-bed neonatal intensive care unit. MRSA colonization of 3 body sites via the 2 methodologies was assessed from June 2017 through December 2020. All neonates were screened for MRSA upon admission to the NICU and weekly thereafter until MRSA-positive or discharged. Swab specimens were initially tested by PCR (Xpert MRSA NxG, Cepheid) and, when MRSA positive, were reflexed to culture to recover the organism for further characterization. This study was approved through the Baylor College of Medicine Institutional Review Board. Results: During the study period, 2,351 neonates were assessed for MRSA colonization by PCR; 81 (3.4%) infants were PCR positive (Fig. 1). Of those 81, 57 (70.4%) had concordant MRSA PCR and culture results, and 24 (29.6%) were MRSA PCR positive but no isolate was recovered in culture. Also, 8 specimens were indeterminate by PCR. However, 1 infant who was negative by culture but was PCR positive developed an MRSA orbital infection. Compared to PCR, the overall sensitivity of MRSA culture was 70.4% (range, 57.7%–80.8%, depending on the year) (Table 1). Conclusions: PCR is more sensitive than culture for detecting MRSA colonization in neonates. 
Utilizing a PCR method enhances the ability to identify MRSA-colonized infants more readily and allows for prompt initiation of infection control interventions, including isolation precautions and decolonization strategies. Reflex to culture remains important for strain characterization during outbreak investigations and for additional susceptibility testing. Resource utilization and cost–benefit analyses should be done in future studies to influence changes in national guidelines for the control of Staphylococcus aureus colonization and infection in neonatal intensive care units.
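
The culture sensitivity quoted in the Results can be reproduced from the reported counts. The Python sketch below is an illustrative check, not the authors' analysis: the variable names and the `percent` helper are assumptions introduced here, and PCR positivity is treated as the reference standard, as the abstract does.

```python
# Counts reported in the abstract's Results section.
neonates_screened = 2351     # neonates assessed by PCR
pcr_positive = 81            # infants positive by MRSA PCR
culture_concordant = 57      # PCR positive and MRSA recovered in culture
culture_negative = 24        # PCR positive, no isolate recovered in culture


def percent(numerator: int, denominator: int) -> float:
    """Return a percentage rounded to one decimal place."""
    return round(100 * numerator / denominator, 1)


# Culture sensitivity with PCR positivity as the reference standard.
culture_sensitivity = percent(culture_concordant, pcr_positive)
discordant_share = percent(culture_negative, pcr_positive)
pcr_positivity = percent(pcr_positive, neonates_screened)

print(f"PCR positivity: {pcr_positivity}%")
print(f"Culture sensitivity vs PCR: {culture_sensitivity}%")
print(f"PCR positive, culture negative: {discordant_share}%")
```

Because only PCR-positive specimens were reflexed to culture, this calculation cannot say anything about the sensitivity of PCR itself.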

Disclosures: None

Decolonization of hospital patients may aid efforts to reduce transmission of carbapenem-resistant Enterobacterales

- Brajendra K. Singh, Prabasaj Paul, Camden D. Gowler, Sujan C. Reddy, Rachel B. Slayton

- Published online by Cambridge University Press:

- 29 September 2023, pp. s59-s60

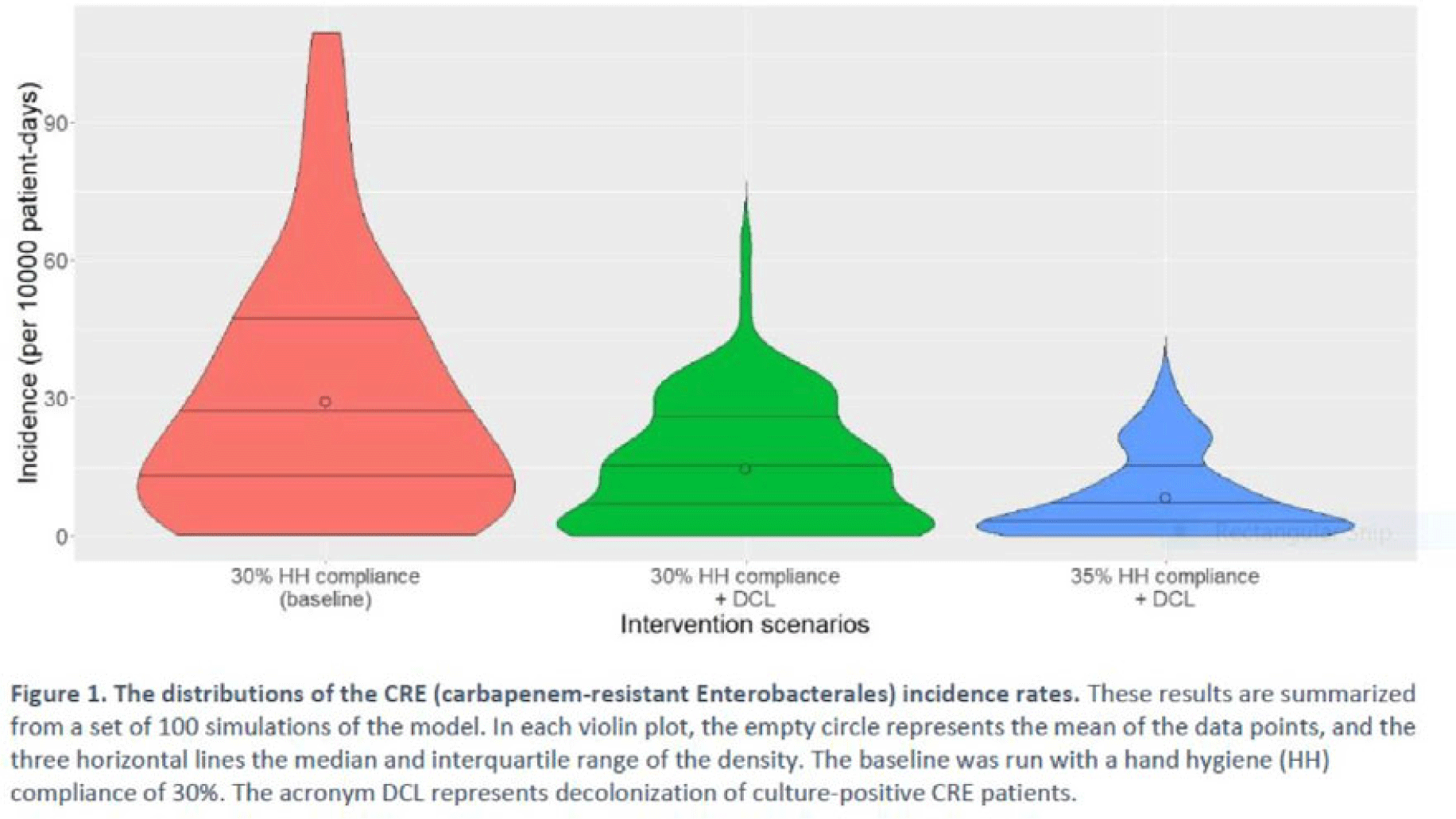

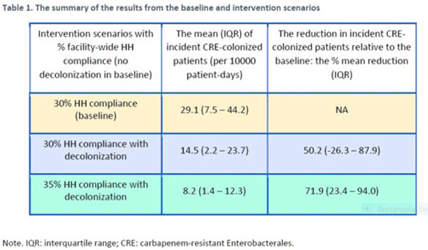

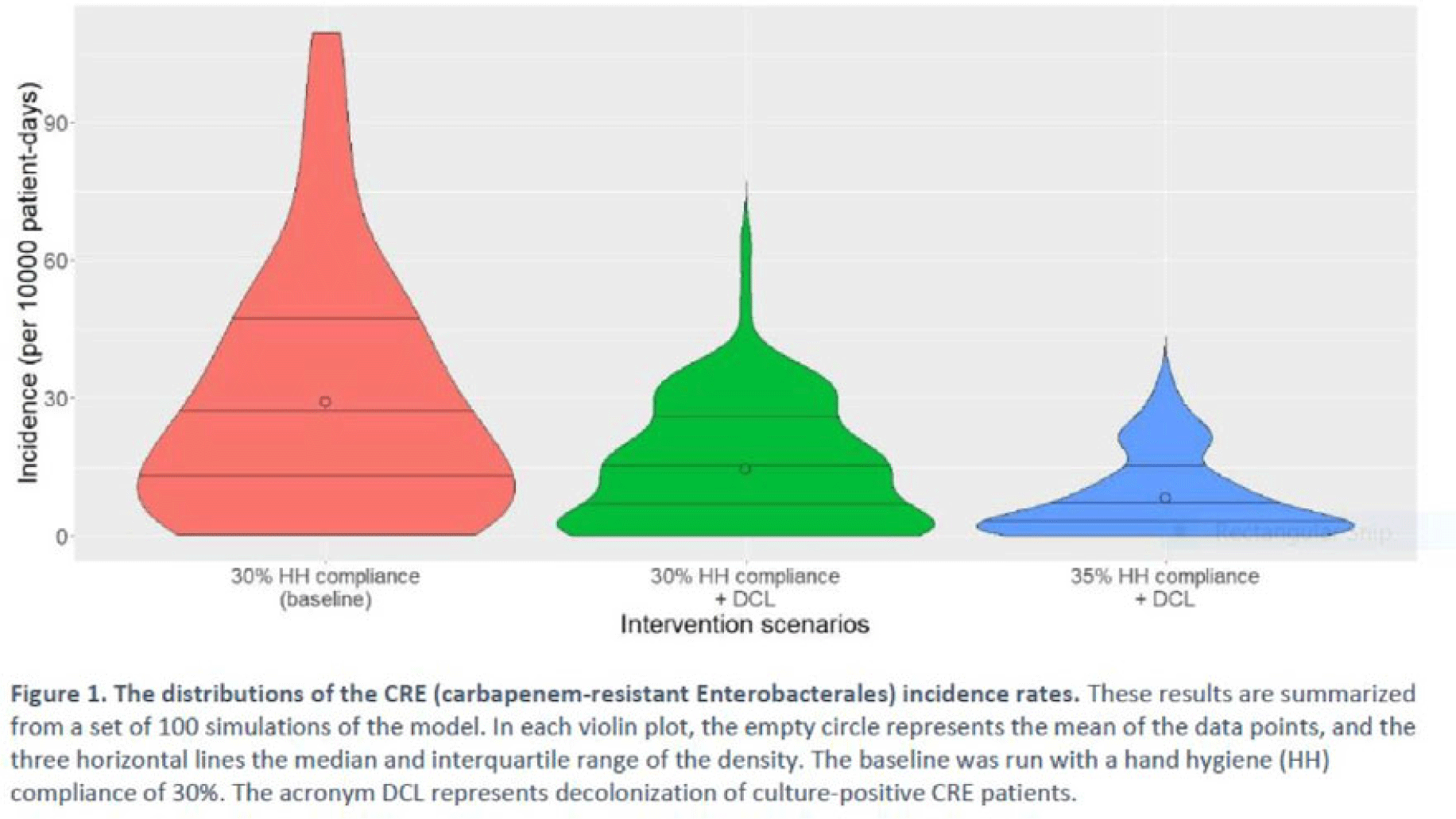

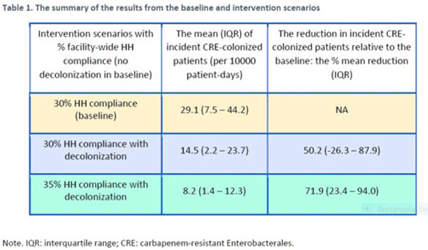

Background: Multimodal approaches are often used to prevent transmission of antimicrobial-resistant pathogens among patients in healthcare settings; understanding the effect of individual interventions is challenging. We designed a model to compare the effectiveness of hand hygiene (HH) with or without decolonization in reducing patient colonization with carbapenem-resistant Enterobacterales (CRE). Methods: We developed an agent-based model to represent transmission of CRE in an acute-care hospital comprising 3 general wards and 2 ICUs, each with 20 single-occupancy rooms, located in a community of 85,000 people. The model accounted for the movement of healthcare personnel (HCP), including their visits to patients. CRE dynamics were modeled using a susceptible–infectious–susceptible framework with transmission occurring via HCP–patient contacts. The mean time to clearance of CRE colonization without intervention was 387 days (Zimmerman et al, 2013). Our baseline included a facility-level HH compliance of 30%, with an assumed efficacy of 50%. Contact precautions were employed for patients with CRE-positive cultures with assumed adherence and efficacy of 80% and 50%, respectively. Intervention scenarios included decolonization of culture-positive CRE patients, with a mean time to decolonization of 3 days. We considered 2 hypothetical intervention scenarios: (A) decolonization of patients with the baseline HH compliance and (B) decolonization with a slightly improved HH compliance of 35%. The hospital-level CRE incidence rate was used to compare the results from these intervention scenarios. Results: CRE incidence rates were lower in intervention scenarios than the baseline scenario (Fig. 1). The baseline mean incidence rate was 29.1 per 10,000 patient days. For decolonization with the baseline HH, the mean incidence rate decreased to 14.5 per 10,000 patient days, which is a 50.2% decrease relative to the baseline incidence (Table 1). 
The decolonization scenario with a slightly improved HH compliance of 35% produced a reduction of 71.9% relative to the baseline incidence. Conclusions: Our analysis shows that decolonization, combined with modest improvement in HH compliance, could lead to large decreases in pathogen transmission. In turn, this model implies that efforts to identify and improve decolonization strategies for better patient safety in health care may be needed and are worth exploring.
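
The relative reductions in the Results follow from simple arithmetic on the mean incidence rates. The Python sketch below is illustrative only: the names are assumptions introduced here, and the scenario B incidence is back-calculated from the reported 71.9% reduction rather than taken from the abstract.

```python
# Mean CRE incidence rates per 10,000 patient days, as reported.
baseline_incidence = 29.1
scenario_a_incidence = 14.5            # decolonization, baseline hand hygiene
scenario_b_relative_reduction = 0.719  # decolonization, 35% hand hygiene compliance


def relative_reduction(baseline: float, intervention: float) -> float:
    """Fractional decrease of the intervention rate relative to baseline."""
    return (baseline - intervention) / baseline


scenario_a_reduction_pct = 100 * relative_reduction(
    baseline_incidence, scenario_a_incidence
)

# Back-calculated (not reported): incidence implied by a 71.9% reduction.
scenario_b_implied_incidence = baseline_incidence * (1 - scenario_b_relative_reduction)

print(f"Scenario A reduction: {scenario_a_reduction_pct:.1f}%")
print(f"Scenario B implied incidence: {scenario_b_implied_incidence:.1f} per 10,000 patient days")
```

The reported means are rounded, so the back-calculated scenario B rate should be read as approximate.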

Disclosures: None

Diagnostic/Microbiology

The impact of a blood-culture diagnostic stewardship intervention on utilization rates and antimicrobial stewardship

- Kelvin Zhou, Melinda Wang, Sabra Shay, James Herlihy, Muhammad Asim Siddique, Sergio Trevino Castillo, Todd Lasco, Miriam Barrett, Mayar Al Mohajer

- Published online by Cambridge University Press:

- 29 September 2023, pp. s60-s61

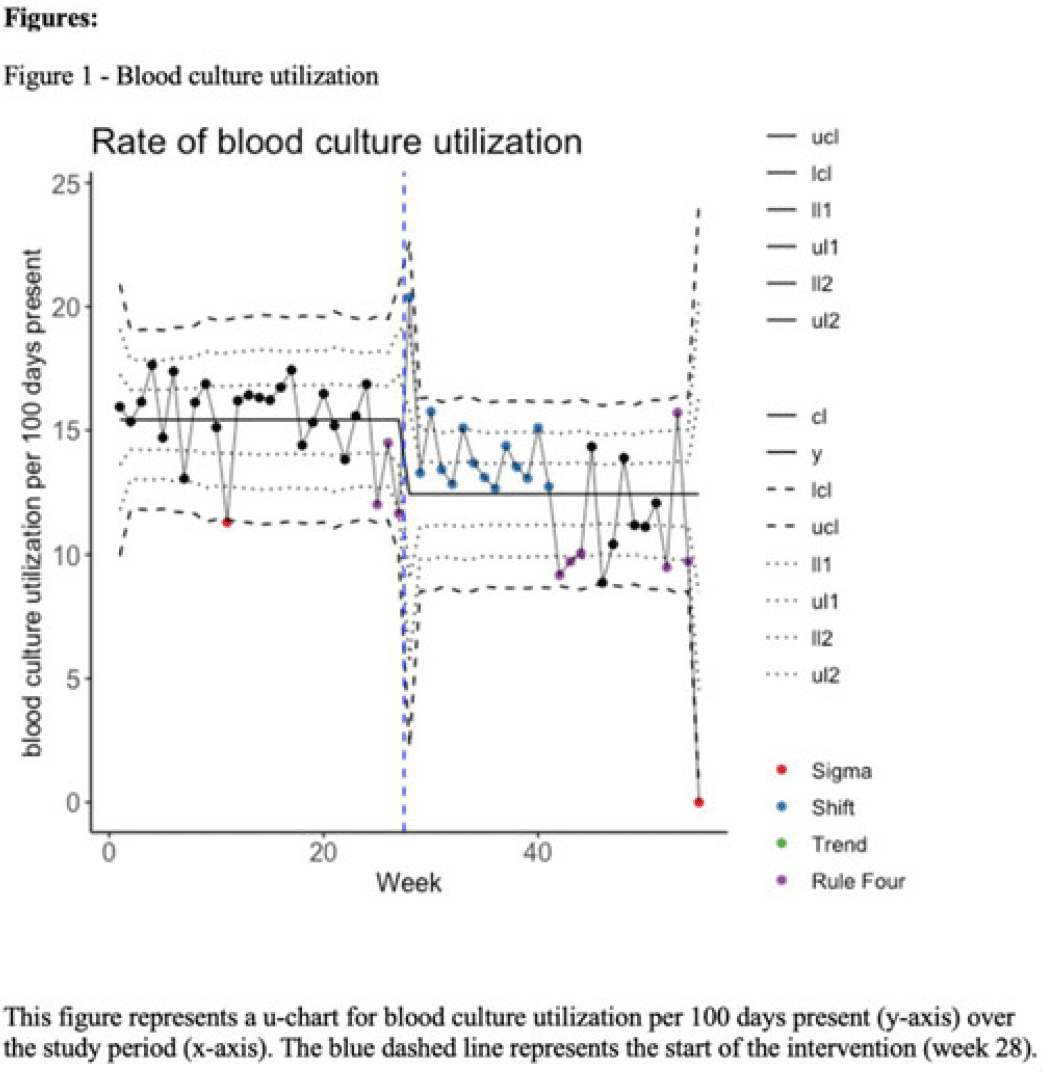

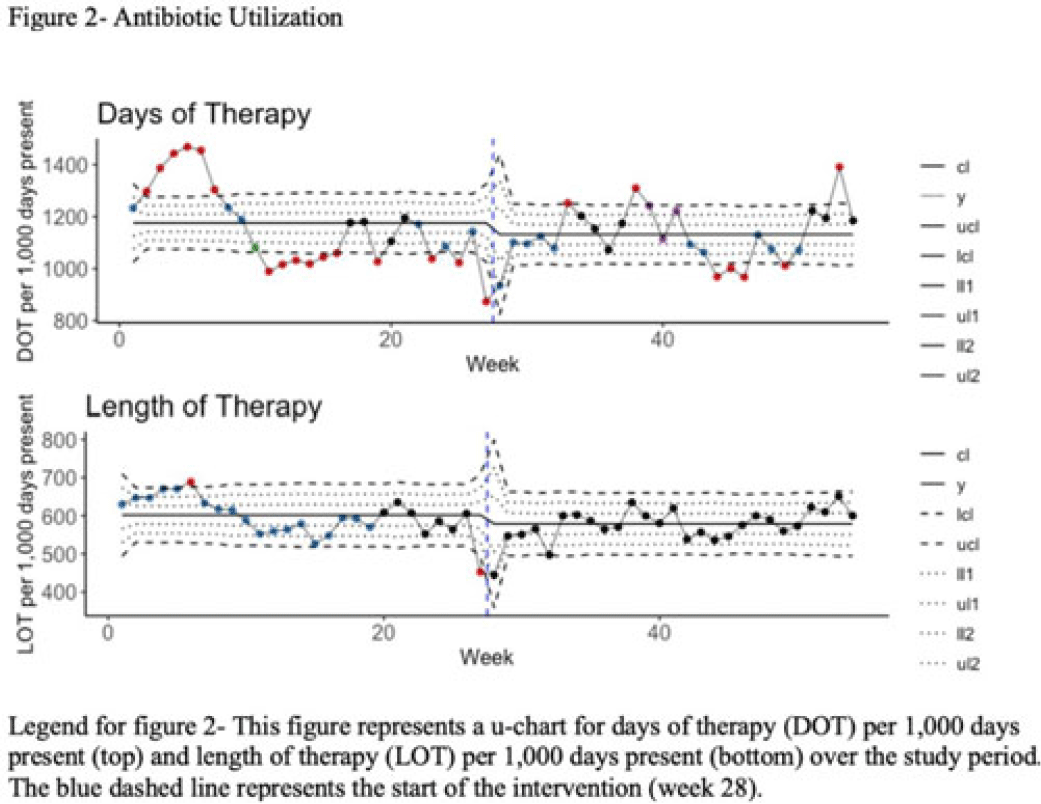

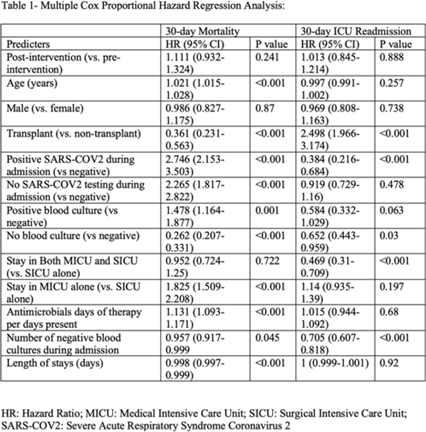

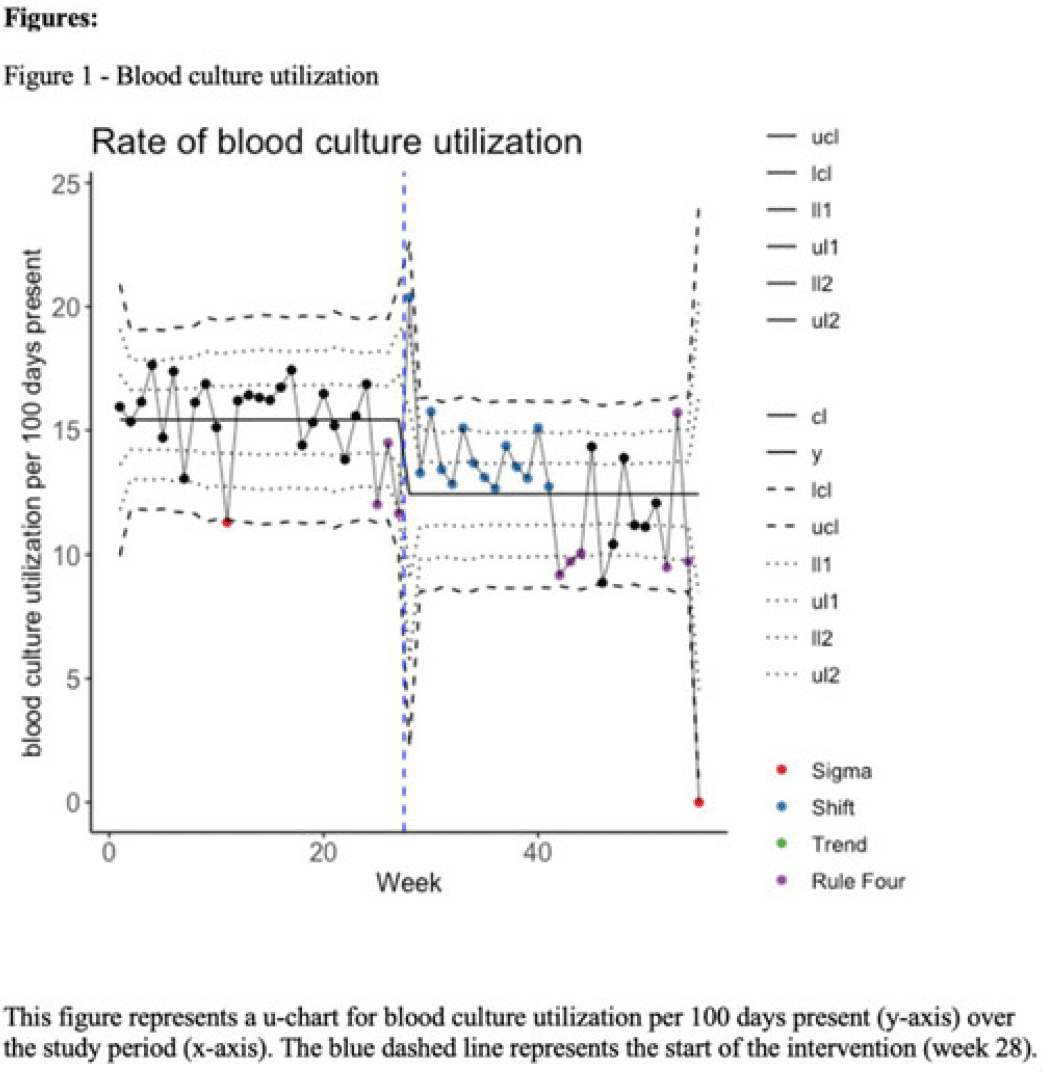

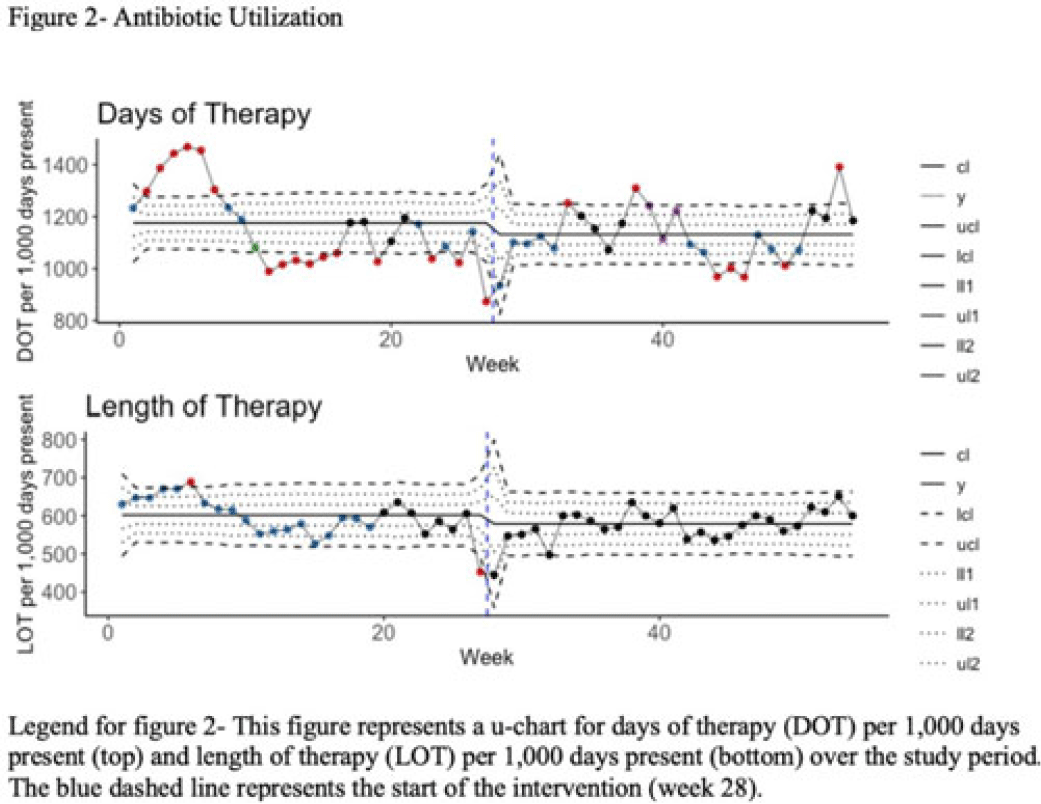

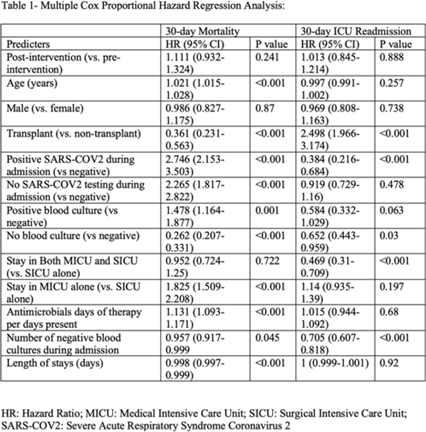

Background: Blood cultures are often ordered when an infection is suspected; however, they have a low yield in most cases. The overuse of blood cultures is associated with high contamination rates, resulting in excess diagnostics, unnecessary antibiotics, longer hospital stays, and higher hospital costs. We evaluated the safety of a multifaceted intervention, which encompassed education and blood-culture restriction, and its impact on blood-culture utilization and antibiotic use in adult intensive care unit (ICU) patients. Methods: The study was performed between October 2020 and October 2021 in the 12 general medicine and specialty ICUs of a quaternary academic care center. The intervention, implemented in April 2021, included providing education to ICU and infectious disease physicians based on an algorithm adapted from the Johns Hopkins DISTRIBUTE study in addition to restricting blood-culture ordering on these units to these providers. The month of April 2021 was excluded as a washout period. Study outcomes comprised blood-culture utilization, blood-culture positivity, days of therapy (DOT), and length of therapy (LOT), which were compared across the study periods using IRR or the Pearson χ² test, as appropriate. In addition, 30-day mortality and 30-day ICU readmission were evaluated utilizing multiple Cox regression models. Results: In total, 6,303 patients (2,087 MICU, 3,636 SICU, and 580 both) were included in the study, with a median age of 65 years (IQR, 21). Most participants were male (57.5%), with a median length of stay of 175 hours (IQR, 186). After the intervention, blood-culture utilization rates decreased from 15.4% to 12.4% (IRR, 0.80; 95% CI, 0.76–0.85) (Fig. 1). There was no difference in blood-culture positivity between the preintervention period (11.05%) and the postintervention period (11.64%; P = .459). 
Days of therapy decreased from 1,180 to 1,130 per 1,000 patient days (IRR, 0.96; 95% CI, 0.95–0.98), and the length of therapy decreased from 602 to 579 per 1,000 patient days (IRR, 0.96; 95% CI, 0.94–0.99) (Fig. 2). There was no difference in 30-day mortality (P = .241) or 30-day ICU readmission (P = .888) across the study periods after adjusting for potential confounders (Table 1). Conclusions: Our multifaceted intervention decreased blood-culture and antimicrobial utilization in the ICUs without significantly affecting the positivity rate, mortality, or readmission. This study suggests that educating providers on appropriate blood-culture use along with restriction could safely improve healthcare outcomes. Further studies are warranted to validate our results across various institutions and to evaluate the impact of blood-culture optimization in non-ICU patients.
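
As a rough plausibility check, the reported incidence rate ratios (IRRs) can be compared with crude post/pre ratios of the rounded rates. The Python sketch below is an approximation under stated assumptions: the abstract does not give the underlying event counts or denominators from which the IRRs and CIs were presumably estimated, and the dictionary and function names are introduced here for illustration.

```python
# (preintervention rate, postintervention rate, reported IRR) from the abstract.
reported = {
    "blood-culture utilization (%)": (15.4, 12.4, 0.80),
    "days of therapy per 1,000 patient days": (1180, 1130, 0.96),
    "length of therapy per 1,000 patient days": (602, 579, 0.96),
}


def crude_rate_ratio(pre: float, post: float) -> float:
    """Ratio of the postintervention rate to the preintervention rate."""
    return post / pre


crude = {
    name: crude_rate_ratio(pre, post)
    for name, (pre, post, _) in reported.items()
}

for name, (_, _, irr) in reported.items():
    print(f"{name}: crude ratio {crude[name]:.3f}, reported IRR {irr:.2f}")
```

Each crude ratio lands within 0.01 of the reported IRR; small gaps are expected because the inputs here are already rounded.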

Disclosures: None

Evaluation of interprovider consistency in interpretation of blood culture guidelines at an academic medical center

- Sherif Shoucri, Tony Li-Geng, David DiTullio, Jenny Yang, Emily Fiore, Arnold Decano, Yanina Dubrovskaya, Dana Mazo, Ioannis Zacharioudakis

- Published online by Cambridge University Press:

- 29 September 2023, pp. s61-s62

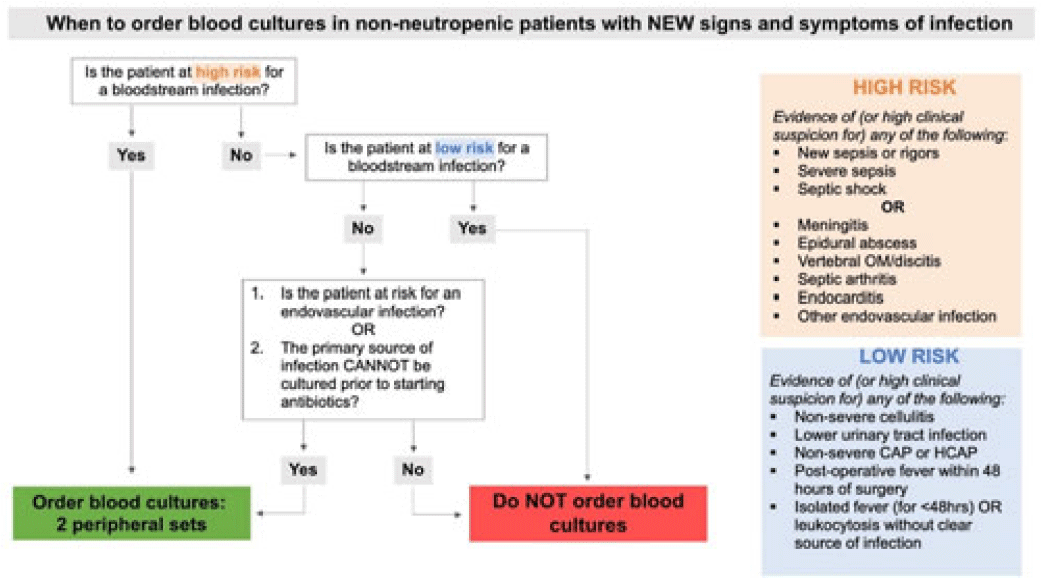

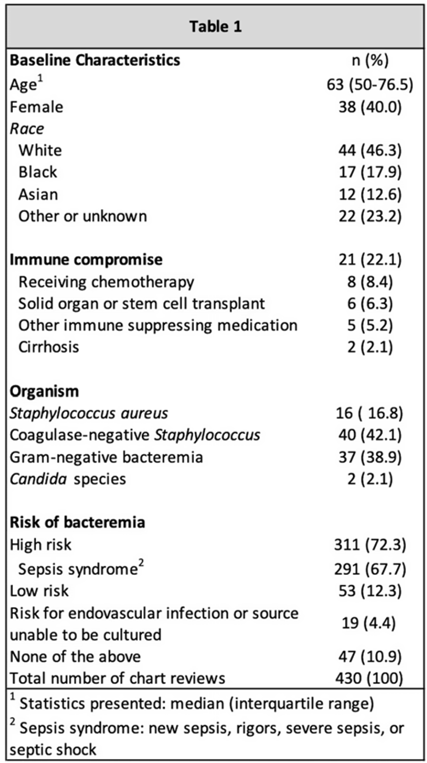

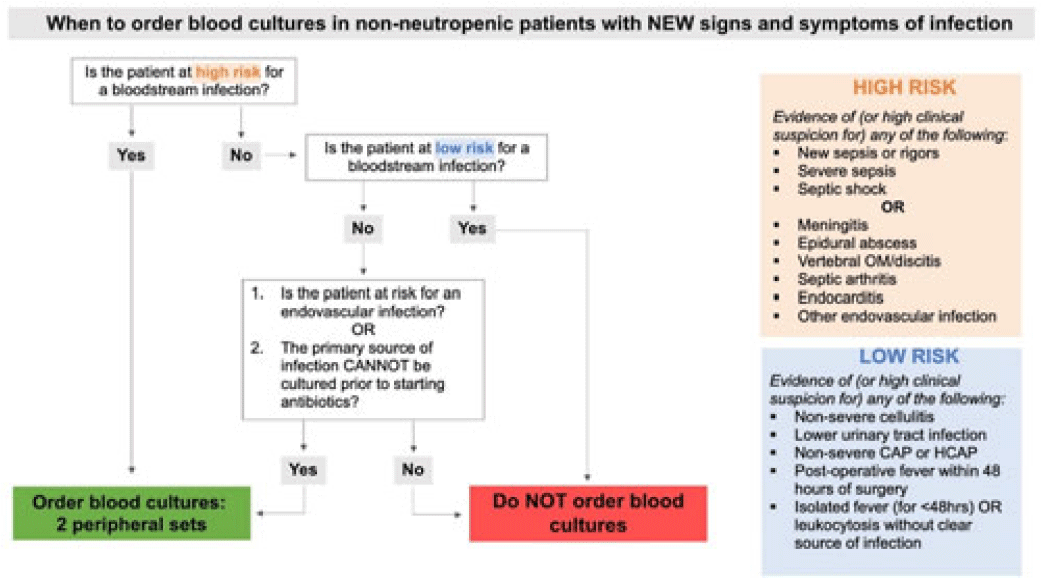

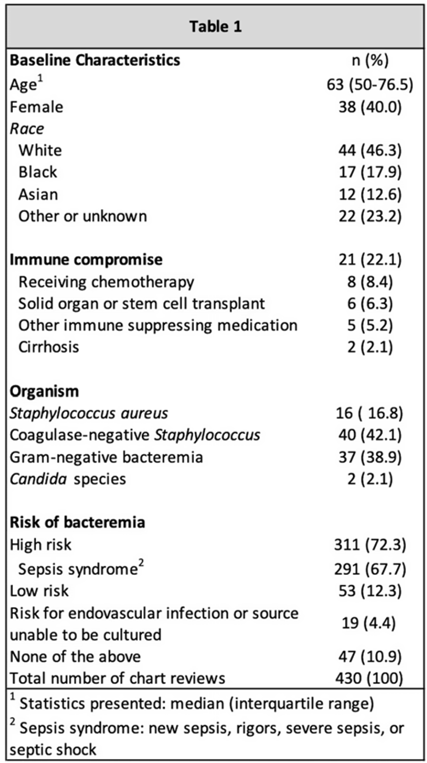

Background: Blood cultures are a fundamental tool in the diagnosis of infections, but they can lead to clinical confusion and waste resources when they yield false results. To optimize blood-culture orders at our institution, we developed an evidence-based clinical guideline (Fig. 1) to be used by frontline providers on nonneutropenic hospitalized adult inpatients. We retrospectively reviewed charts of patients with positive blood cultures to evaluate whether frontline providers and infectious diseases (ID) attending physicians were able to consistently interpret the guidelines to determine whether blood cultures were drawn appropriately. Methods: In total, 95 nonneutropenic adults with an initial positive blood culture collected while on an inpatient unit were identified through a query of the electronic medical record from January 2021 through June 2022. Patients with polymicrobial bacteremia and bacteremia due to Enterococcus, Streptococcus, and gram-positive rods were excluded. Moreover, 4 medical resident physicians reviewed all patients and 2 ID attending physicians reviewed one-quarter of cases; all were blinded to the culture results. Blood cultures were determined to be either appropriately or inappropriately performed based on our institution’s guideline. The free-marginal multirater κ statistics with 95% CIs were calculated to evaluate interrater agreement. Results: Baseline patient demographics are shown in Table 1. Immune compromise without neutropenia was noted in 21 of 95 patients. Most patients were at high risk for bacteremia (72%) per our institutional guideline, most of whom were septic (67.7%). Low risk for bacteremia was found in only 12.3% of reviews. Medical resident physicians, ID attending physicians, and all reviewers combined agreed on whether blood cultures were drawn appropriately or inappropriately (84.2%, 92%, and 86.4% agreement rates, respectively). 
The free-marginal κ statistic was highest for ID attending physicians (0.84; 95% CI, 0.62–0.78), followed by attending physicians and resident physicians combined (0.73; 95% CI, 0.56–0.90), and resident physicians alone (0.68; 95% CI, 0.58–0.78). In the 21 patients with immune compromise, agreement rates on blood-culture appropriateness remained high: 86.6%, 90.5%, and 95% among all reviewers, resident physicians, and ID attending physicians, respectively. Conclusions: In our retrospective study of nonneutropenic hospitalized adult inpatients, frontline providers and ID attending physicians interpreted blood-culture guidelines consistently, largely agreeing on which patients had cultures drawn appropriately. Agreement among ID attending physicians was excellent and remained substantial among medical resident physicians. Guidelines on the appropriate use of blood cultures are vital to limiting unnecessarily collected cultures, which can lead to extended length of stay and increased cost across hospital systems. Further analyses on the clinical impact of this guideline are ongoing.
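
The free-marginal κ point estimates can be related to the reported agreement rates through the standard free-marginal formula, κ = (Pₒ − 1/k) / (1 − 1/k), where Pₒ is the observed agreement and k is the number of rating categories. The Python sketch below assumes k = 2 (appropriate vs inappropriate) and assumes that the reported agreement rates correspond to Pₒ; the function and variable names are introduced here for illustration, and the confidence intervals are not recomputed because they require the per-case ratings.

```python
def free_marginal_kappa(observed_agreement: float, n_categories: int = 2) -> float:
    """Free-marginal kappa: chance agreement is fixed at 1 / n_categories."""
    chance_agreement = 1.0 / n_categories
    return (observed_agreement - chance_agreement) / (1.0 - chance_agreement)


# Agreement rates reported in the abstract, expressed as proportions.
agreement = {
    "resident physicians": 0.842,
    "ID attending physicians": 0.92,
    "all reviewers": 0.864,
}

kappas = {
    group: round(free_marginal_kappa(p_observed), 2)
    for group, p_observed in agreement.items()
}

for group, kappa in kappas.items():
    print(f"{group}: kappa = {kappa:.2f}")
```

Under these assumptions the sketch returns 0.68, 0.84, and 0.73, matching the point estimates in the abstract.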

Disclosures: None

Evaluation of indication in a urinalysis driven reflex urine culture protocol at an academic medical center

- Mackenzie Keintz, Jasmine Marcelin, Mark Rupp, Trevor Van Schooneveld

- Published online by Cambridge University Press:

- 29 September 2023, p. s62

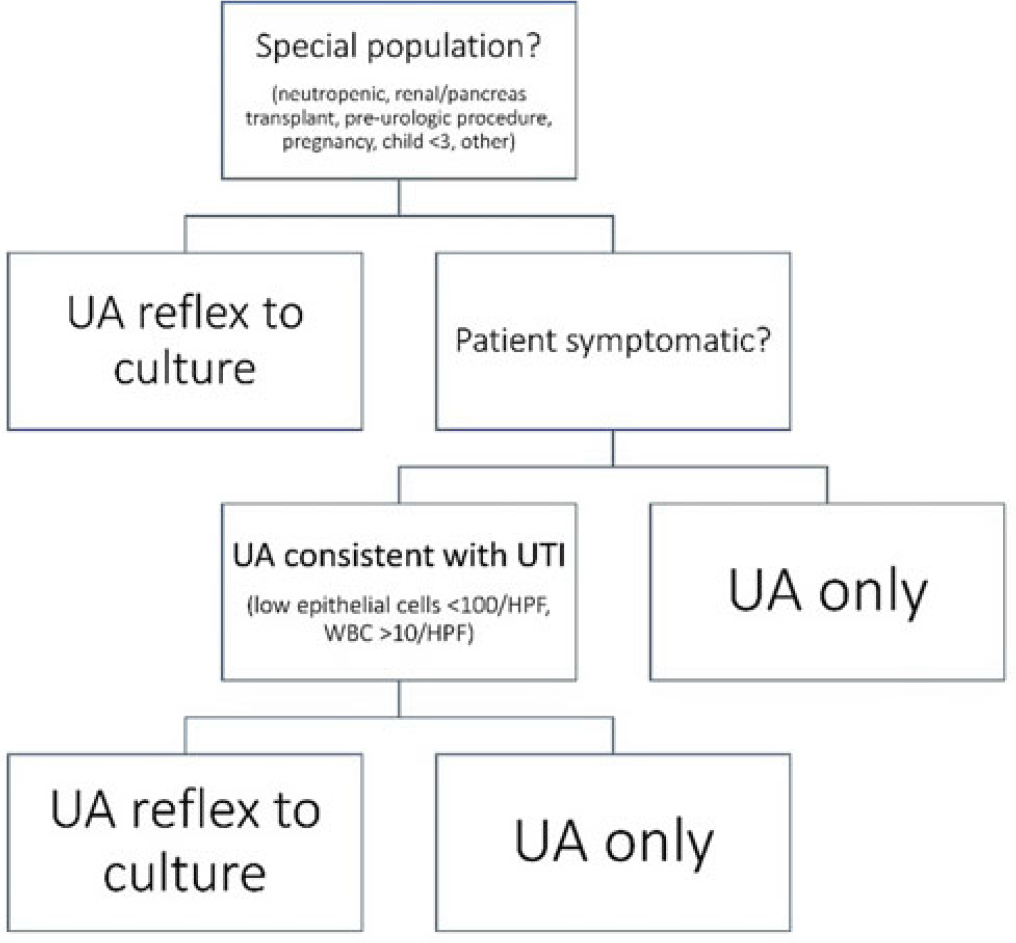

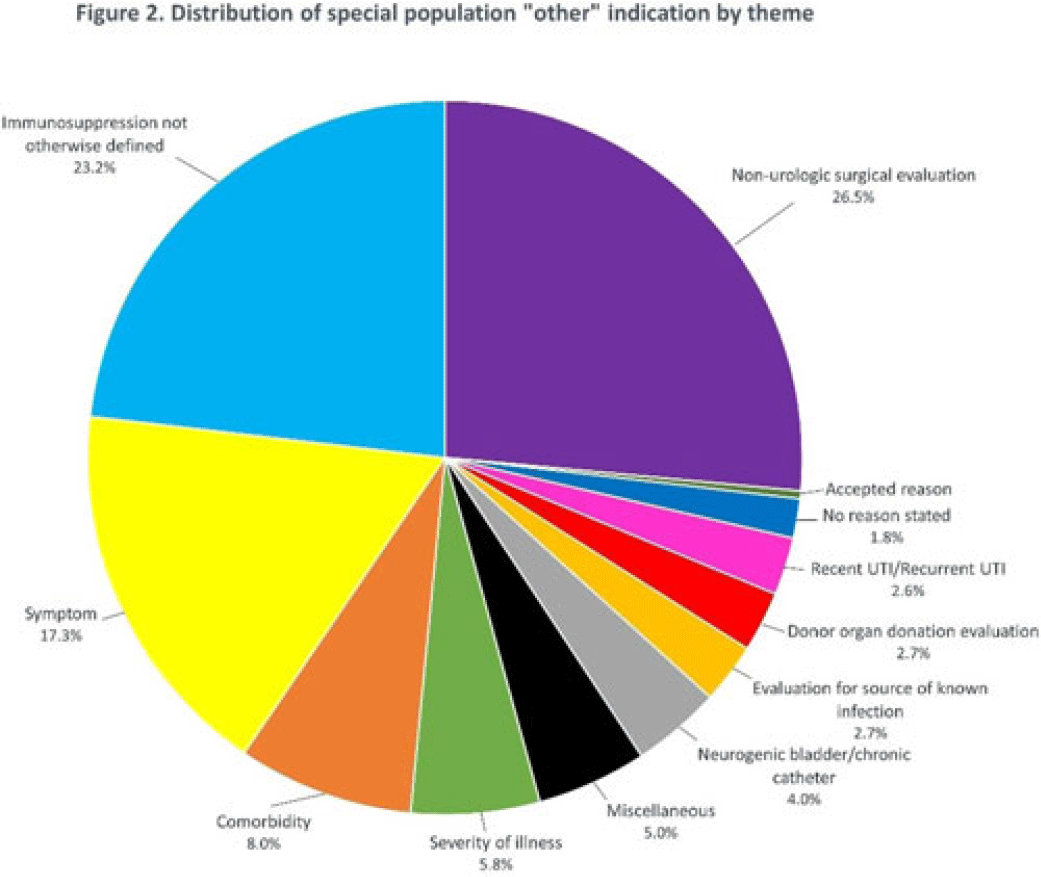

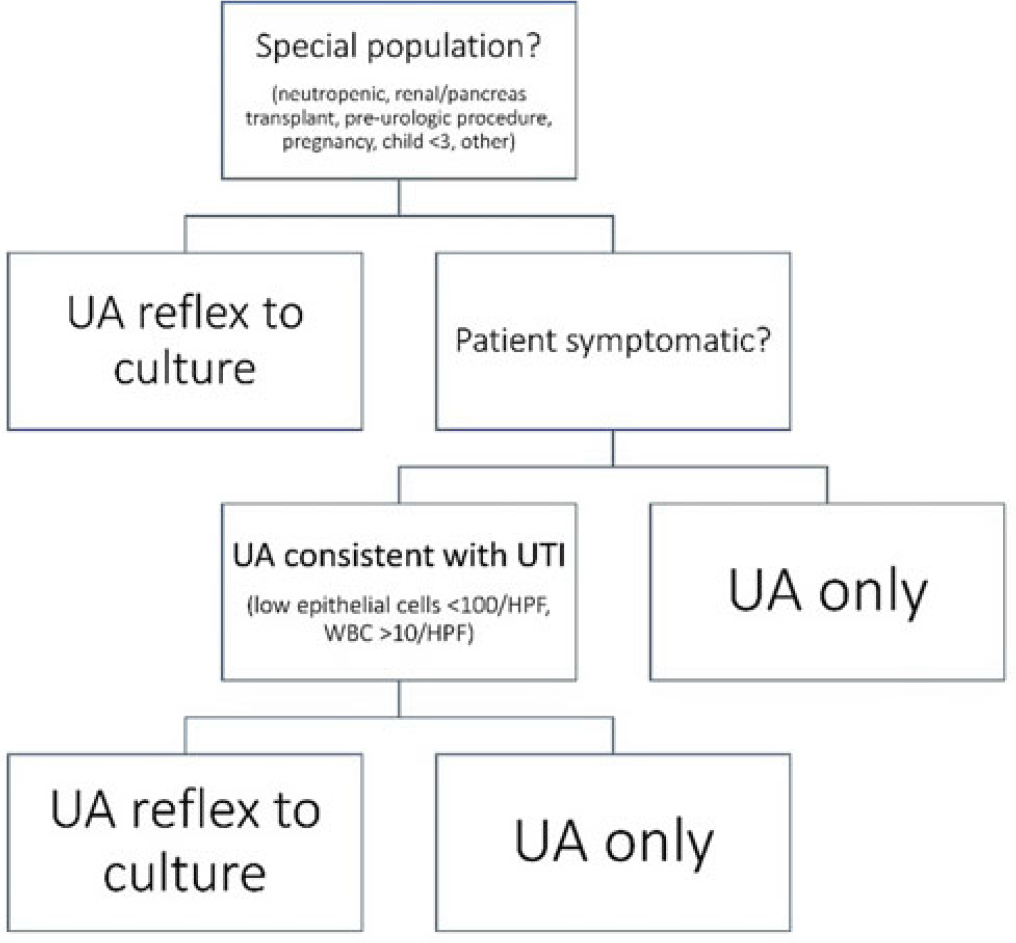

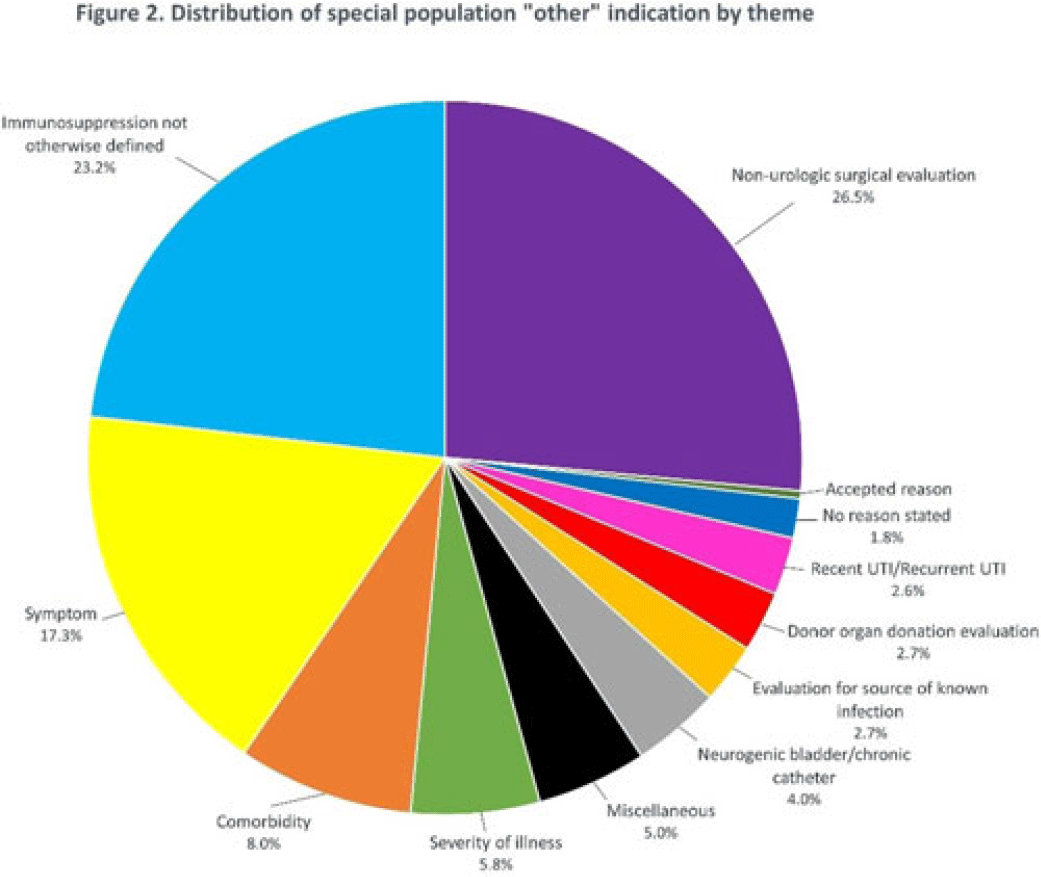

Background: Asymptomatic bacteriuria (ASB) is a widespread problem in hospitalized patients in which only a small subset of patients benefit from treatment. Other patient populations with ASB are harmed by treatment. In 2014, our institution implemented a urinalysis (UA)-driven reflex culture protocol which evaluated patient symptoms, risk factors, and the UA to determine whether bacterial culture was performed (Fig. 1). The goal of this process was to ensure that urine cultures were only performed in those patients who had symptoms of UTI and an abnormal urinalysis while allowing for exceptions in populations where treatment of ASB may be appropriate (ie, pregnancy, aged <3 years, impending urologic surgery, kidney transplant) or where the urinalysis may not be useful in determining whether infection is present (ie, neutropenia). An “other” indication with free-text documentation required was included to allow for unique situations. We evaluated the free-text option to determine whether additional indications were needed and whether data entered were medically appropriate. Methods: This retrospective review at a Midwestern, tertiary-care, academic medical center included inpatient UA with UTI evaluation order sets between July 1, 2020, and June 30, 2022. Descriptive statistics analyzed order-set utilization. Results: In total, 35,469 “urinalysis to reflex culture” order sets were submitted, of which 9,493 resulted in culture. Of these, 839 (8.8%) were ordered with an indication of “other.” “Other” was the most cited indication for special population override contributing to 40% (n = 839 of 2,085) of these indications, followed by kidney or pancreas transplant (29%) and neutropenia (13%). The write-in options fell into 1 of 11 themes (Fig. 2). 
The 3 most common reasons a urine culture was obtained using the free-text option were nonurologic surgical intervention (n = 223 of 839), immunosuppression not otherwise defined (n = 195 of 839), and symptom presence (n = 146 of 839). Based on current literature, 97% of “other” indications were inappropriate (n = 816 of 839). If the UTI protocol had been strictly followed, 696 of 839 (83%) cultures ordered with an indication of “other” would not have been obtained, due either to lack of symptoms or, if symptomatic, lack of pyuria. Conclusions: Most cultures obtained by selecting the “other” special population option on the algorithm were obtained in situations in which a urine culture was unnecessary. Removing the “other” indication from the algorithm may improve appropriateness of urine culturing with a possible decrease in CA-UTI and treatment of ASB. Although most write-in rationales were inappropriate, adding a category for deceased donor-organ evaluation would be reasonable.

Disclosures: None

Identifying opportunities for diagnostic stewardship in UTI testing in pediatrics

- Karen Acker, Michael Alfonzo, Tess Gray, Taylor Dempsey, Lisa Saiman, Lars Westblade, Sabrina Racine-Brzostek, Nicole Gerber

- Published online by Cambridge University Press:

- 29 September 2023, pp. s62-s63

Background: Reflexive urine culturing, a strategy wherein urine cultures are only performed on samples with pyuria, is increasingly being used to reduce unnecessary urine cultures, healthcare costs, and inappropriate antibiotics. To support implementation of a reflexive urine-culture order for pediatric patients aged <18 years, we assessed the proportion of urine cultures that would be avoided with reflexive urine culturing, and we calculated the sensitivity and negative predictive value (NPV) of the ≥10 white blood cells (WBC) per high-power field (HPF) threshold for diagnosing urinary tract infections (UTI) in patients aged <18 years who presented to the pediatric emergency department (ED). Methods: A retrospective review of patients aged <18 years with a urine culture performed from January to May 2022 in an urban, tertiary-care, pediatric ED was performed. A positive urine culture was defined as ≥50,000 CFU/mL for catheterized specimens and ≥100,000 CFU/mL for clean-catch or unspecified specimens. Pyuria was defined as ≥10 WBC/HPF. ‘True UTI’ was defined as a positive urine culture with a consistent clinical presentation (eg, fever or dysuria). Sensitivity, specificity, and NPV were calculated using the pyuria threshold of ≥10 WBC/HPF compared to the gold standard of a ‘true UTI.’ Results: During the study period, 658 patients aged <18 years had urine cultures sent, of which 46 (7%) were positive. In all, 407 urine cultures (61.9%) were obtained by clean catch, 233 (35.4%) were obtained by urethral catheterization, 2 (0.3%) were obtained by Foley catheter, and 16 (2.4%) were unspecified. Among the 46 positive cultures, 32 (69.6%) had ≥10 WBC/HPF, and 55 (9.0%) of 612 negative cultures had ≥10 WBC/HPF. Of the 14 patients with positive urine cultures without pyuria, 8 had a contaminated sample or asymptomatic bacteriuria, 3 had urologic abnormalities, and 3 were infants aged <3 months. 
Of the 14 patients, 3 (21.4%) had a consistent clinical presentation for UTI and were treated with antibiotics: 2 were infants aged <3 months and 1 had urologic abnormalities. Using the ≥10 WBC/HPF threshold compared to ‘true UTI,’ sensitivity was 91.4%, specificity was 91.5%, positive predictive value was 36%, and NPV was 99.5%. Sensitivity and NPV increased to 100% when infants aged <3 months and urologic patients with positive urine culture were excluded. We estimated a cost saving of ~$200,000 had reflexive testing been in place. Conclusions: A reflexive urine culture for specimens with ≥10 WBC/HPF would have reduced the number of urine cultures substantially because 571 (86.8%) of 658 urine cultures would not have been performed. To prevent missed diagnoses of UTI, infants aged <3 months and children with urologic abnormalities should be excluded from this diagnostic stewardship intervention.
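
The sensitivity, NPV, and proportion of avoided cultures can be approximately reconstructed from the counts in the Results. The Python sketch below is a hedged reconstruction, not the authors' table: it assumes that every positive culture with pyuria counted as a ‘true UTI,’ which the abstract does not state, and all variable names are introduced here for illustration.

```python
# Counts reported in the abstract.
total_cultures = 658
positive_with_pyuria = 32       # positive culture and >=10 WBC/HPF
negative_with_pyuria = 55       # negative culture and >=10 WBC/HPF
true_uti_without_pyuria = 3     # treated UTIs among the 14 positives without pyuria

# Assumption (not stated in the abstract): all 32 positive cultures
# with pyuria were 'true UTIs'.
true_positives = positive_with_pyuria
false_negatives = true_uti_without_pyuria

pyuria_total = positive_with_pyuria + negative_with_pyuria   # would reflex to culture
no_pyuria_total = total_cultures - pyuria_total              # cultures avoided
true_negatives = no_pyuria_total - false_negatives

sensitivity = 100 * true_positives / (true_positives + false_negatives)
npv = 100 * true_negatives / no_pyuria_total
cultures_avoided = 100 * no_pyuria_total / total_cultures

print(f"Sensitivity: {sensitivity:.1f}%")
print(f"NPV: {npv:.1f}%")
print(f"Cultures avoided: {no_pyuria_total} of {total_cultures} ({cultures_avoided:.1f}%)")
```

Under this assumption the sketch reproduces the reported sensitivity (91.4%), NPV (99.5%), and 571 avoided cultures (86.8%). The same reconstruction gives a positive predictive value of about 36.8% and a specificity of about 91.2%, close to but not identical with the reported 36% and 91.5%, so the authors' underlying 2 × 2 table may differ slightly.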

Disclosures: None