No CrossRef data available.

Article contents

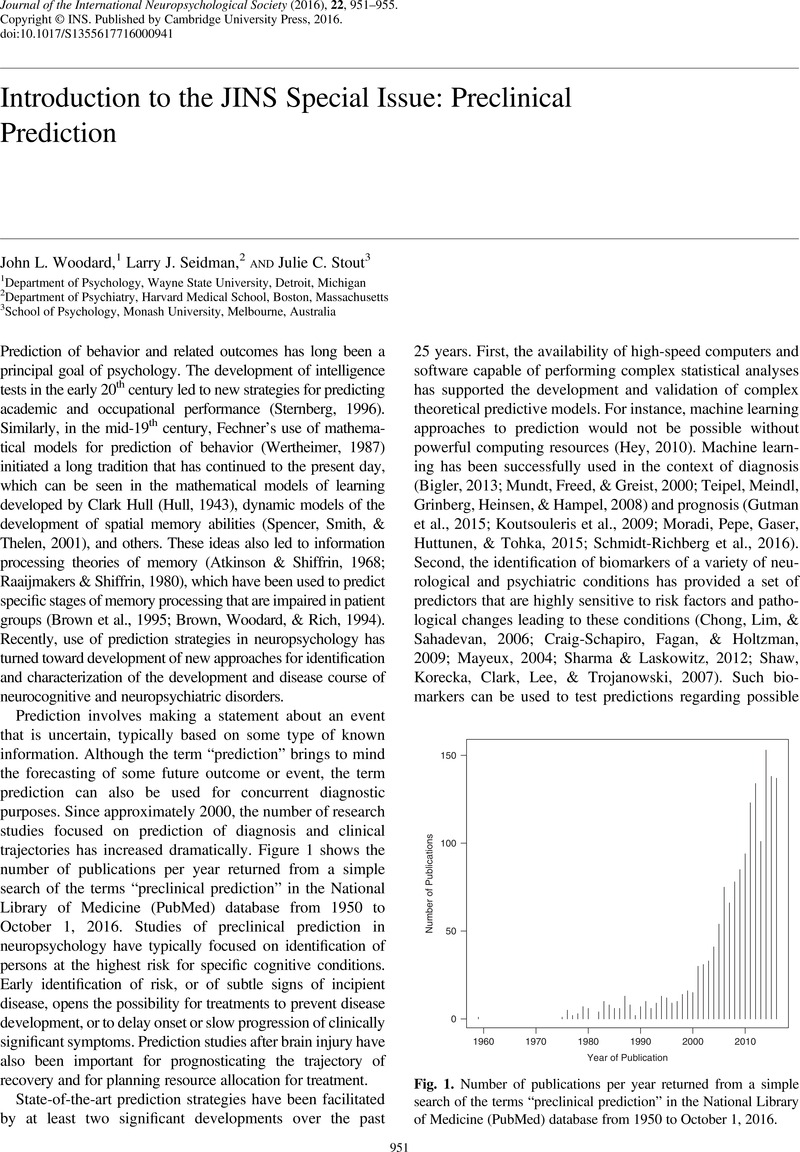

Introduction to the JINS Special Issue: Preclinical Prediction

Published online by Cambridge University Press: 01 December 2016

- Type: Introduction

- Information: Journal of the International Neuropsychological Society, Volume 22, Special Issue 10: Special Issue: Preclinical Prediction, November 2016, pp. 951–955

- Copyright: © The International Neuropsychological Society 2016

References

Agnew-Blais, J., & Seidman, L.J. (2013). Neurocognition in youth and young adults under age 30 at familial risk for schizophrenia: A quantitative and qualitative review. Cognitive Neuropsychiatry, 18(1–2), 44–82. http://doi.org/10.1080/13546805.2012.676309

Atkinson, R.C., & Shiffrin, R.M. (1968). Human memory: A proposed system and its control processes. In K.W. Spence & J.T. Spence (Eds.), The psychology of learning and motivation (pp. 89–195). New York: Academic Press.

Bigler, E.D. (2013). Neuroimaging biomarkers in mild traumatic brain injury (mTBI). Neuropsychology Review, 23(3), 169–209. http://doi.org/10.1007/s11065-013-9237-2

Brown, G.G., Simkins-Bullock, J., Woodard, J.L., Cushman, L., Malik, G.M., Greiffenstein, M., & McGillicuddy, J. (1995). Modeling the immediate free recall impairment of patients with surgical repair of anterior communicating artery aneurysm. Neuropsychology, 9(1), 27–38. http://doi.org/10.1037/0894-4105.9.1.27

Brown, G.G., Woodard, J.L., & Rich, J.B. (1994). Using a computer model to explore impairments of acquisition processes following ingestion of diazepam. Psychopharmacology (Berl), 113(3–4), 339–345.

Cannon, T.D., Cadenhead, K., Cornblatt, B., Woods, S.W., Addington, J., Walker, E., & Heinssen, R. (2008). Prediction of psychosis in youth at high clinical risk. Archives of General Psychiatry, 65(1), 28–37. http://doi.org/10.1001/archgenpsychiatry.2007.3

Cannon, T.D., Yu, C., Addington, J., Bearden, C.E., Cadenhead, K.S., Cornblatt, B.A., & Kattan, M.W. (2016). An individualized risk calculator for research in prodromal psychosis. American Journal of Psychiatry, 173, 980–988. http://doi.org/10.1176/appi.ajp.2016.15070890

Carrion, R., Cornblatt, B.A., Burton, C.Z., Tso, I.F., Auther, A.M., Adelsheim, S., & McFarlane, W.R. (2016). Personalized prediction of psychosis: External validation of the NAPLS-2 risk calculator with the EDIPPP project. American Journal of Psychiatry, 173, 989–996. http://doi.org/10.1176/appi.ajp.2016.15121565

Chong, M.S., Lim, W.S., & Sahadevan, S. (2006). Biomarkers in preclinical Alzheimer’s disease. Current Opinion in Investigational Drugs (London, England: 2000), 7(7), 600–607.

Craig-Schapiro, R., Fagan, A.M., & Holtzman, D.M. (2009). Biomarkers of Alzheimer’s disease. Neurobiology of Disease, 35, 128–140. http://doi.org/10.1016/j.nbd.2008.10.003

Cronbach, L.J., & Furby, L. (1970). How we should measure “change” - or should we? Psychological Bulletin, 74(1), 68–80. http://doi.org/10.1037/h0029382

Francis, D.J., Fletcher, J.M., Stuebing, K.K., Davidson, K.C., & Thompson, N.M. (1991). Analysis of change: Modeling individual growth. Journal of Consulting and Clinical Psychology, 59(1), 27–37.

Gabrieli, J.D.E., Ghosh, S.S., & Whitfield-Gabrieli, S. (2015). Prediction as a humanitarian and pragmatic contribution from human cognitive neuroscience. Neuron, 85, 11–26. http://doi.org/10.1016/j.neuron.2014.10.047

Giuliano, A.J., Li, H., Mesholam-Gately, R.I., Sorenson, S.M., Woodberry, K.A., & Seidman, L.J. (2012). Neurocognition in the psychosis risk syndrome: A quantitative and qualitative review. Current Pharmaceutical Design, 18(4), 399–415. http://doi.org/10.2174/138161212799316019

Gottman, J.M., & Rushe, R.H. (1993). The analysis of change: Issues, fallacies, and new ideas. Journal of Consulting and Clinical Psychology, 61(6), 907–910. http://doi.org/10.1037/0022-006X.61.6.907

Gutman, B.A., Wang, Y., Yanovsky, I., Hua, X., Toga, A.W., Jack, C.R., & Thompson, P.M. (2015). Empowering imaging biomarkers of Alzheimer’s disease. Neurobiology of Aging, 36(S1), S69–S80. http://doi.org/10.1016/j.neurobiolaging.2014.05.038

Harrell, F.E. Jr. (2015). Regression modeling strategies: With applications to linear models, logistic and ordinal regression, and survival analysis (2nd ed.). Cham, Switzerland: Springer International Publishing.

Hey, T. (2010). The next scientific revolution. Harvard Business Review, 88(11), 56–63.

Ivanoiu, A., Dricot, L., Gilis, N., Grandin, C., Lhommel, R., Quenon, L., & Hanseeuw, B. (2015). Classification of non-demented patients attending a memory clinic using the new diagnostic criteria for Alzheimer’s disease with disease-related biomarkers. Journal of Alzheimer’s Disease, 43(3), 835–847. http://doi.org/10.3233/JAD-140651

Koutsouleris, N., Meisenzahl, E.M., Davatzikos, C., Bottlender, R., Frodl, T., Scheuerecker, J., & Gaser, C. (2009). Use of neuroanatomical pattern classification to identify subjects in at-risk mental states of psychosis and predict disease transition. Archives of General Psychiatry, 66(7), 700–712. http://doi.org/10.1001/archgenpsychiatry.2009.62

Mayeux, R. (2004). Biomarkers: Potential uses and limitations. NeuroRx, 1(2), 182–188. Retrieved from http://www.ncbi.nlm.nih.gov/entrez/query.fcgi?cmd=Retrieve&db=PubMed&dopt=Citation&list_uids=15717018

McGorry, P.D., Purcell, R., Hickie, I., Yung, A.R., Pantelis, C., & Jackson, H.J. (2007). Clinical staging: A heuristic model for psychiatry and youth mental health. Medical Journal of Australia, 187, 40–42.

Miller, S.L., Fenstermacher, E., Bates, J., Blacker, D., Sperling, R.A., & Dickerson, B.C. (2008). Hippocampal activation in adults with mild cognitive impairment predicts subsequent cognitive decline. Journal of Neurology, Neurosurgery, and Psychiatry, 79(6), 630–635. http://doi.org/10.1136/jnnp.2007.124149

Moradi, E., Pepe, A., Gaser, C., Huttunen, H., & Tohka, J. (2015). Machine learning framework for early MRI-based Alzheimer’s conversion prediction in MCI subjects. Neuroimage, 104, 398–412. http://doi.org/10.1016/j.neuroimage.2014.10.002

Mundt, J.C., Freed, D.M., & Greist, J.H. (2000). Lay person-based screening for early detection of Alzheimer’s disease: Development and validation of an instrument. The Journals of Gerontology, Series B: Psychological Sciences and Social Sciences, 55(3), P163–P170.

O’Hara, R., Yesavage, J.A., Kraemer, H.C., Mauricio, M., Friedman, L.F., & Murphy, G.M. Jr. (1998). The APOE epsilon4 allele is associated with decline on delayed recall performance in community-dwelling older adults. Journal of the American Geriatrics Society, 46(12), 1493–1498.

Raaijmakers, J.G.W., & Shiffrin, R.M. (1980). SAM: A theory of probabilistic search of associative memory. In G.H. Bower (Ed.), The psychology of learning and motivation: Advances in research and theory (Vol. 14, pp. 207–262). New York: Academic Press.

Reiman, E.M., Chen, K., Alexander, G.E., Caselli, R.J., Bandy, D., Osborne, D., & Hardy, J. (2004). Functional brain abnormalities in young adults at genetic risk for late-onset Alzheimer’s dementia. Proceedings of the National Academy of Sciences of the United States of America, 101(1), 284–289.

Schmidt-Richberg, A., Ledig, C., Guerrero, R., Molina-Abril, H., Frangi, A., & Rueckert, D. (2016). Learning biomarker models for progression estimation of Alzheimer’s disease. PLoS One, 11(4), 1–27. http://doi.org/10.1371/journal.pone.0153040

Seidman, L.J., Giuliano, A.J., & Walker, E.F. (2010). Neuropsychology of the prodrome to psychosis in the NAPLS consortium: Relationship to family history and conversion to psychosis. Archives of General Psychiatry, 67(6), 578–588. http://doi.org/10.1001/archgenpsychiatry.2010.66

Seidman, L.J., Shapiro, D.I., Stone, W.S., Woodberry, K.A., Ronzio, A., Cornblatt, B.A., & Woods, S.W. (2016). Association of neurocognition and transition to psychosis: Baseline functioning in the second phase of the North American Prodrome Longitudinal Study. JAMA Psychiatry, 12(12), 1–11. http://doi.org/10.1001/jamapsychiatry.2016.2479. Published online November 2, 2016.

Sharma, R., & Laskowitz, D.T. (2012). Biomarkers in traumatic brain injury. Current Neurology and Neuroscience Reports, 12(5), 560–569. http://doi.org/10.1007/s11910-012-0301-8

Shaw, L.M., Korecka, M., Clark, C.M., Lee, V.M., & Trojanowski, J.Q. (2007). Biomarkers of neurodegeneration for diagnosis and monitoring therapeutics. Nature Reviews Drug Discovery, 6(4), 295–303. http://doi.org/10.1038/nrd2176

Singer, J.D., & Willett, J.B. (2003). Applied longitudinal data analysis: Modeling change and event occurrence. New York: Oxford University Press.

Small, G.W., Komo, S., La Rue, A., Saxena, S., Phelps, M.E., Mazziotta, J.C., & Roses, A.D. (1996). Early detection of Alzheimer’s disease by combining apolipoprotein E and neuroimaging. Annals of the New York Academy of Sciences, 802, 70–78.

Spencer, J.P., Smith, L.B., & Thelen, E. (2001). Tests of a dynamic systems account of the A-not-B error: The influence of prior experience on the spatial memory abilities of two-year-olds. Child Development, 72(5), 1327–1346. http://doi.org/10.1111/1467-8624.00351

Sternberg, R.J. (1996). Successful intelligence: How practical and creative intelligence determine success in life. New York: Simon & Schuster.

Steyerberg, E.W. (2009). Clinical prediction models: A practical approach to development, validation, and updating. New York: Springer.

Steyerberg, E.W., & Harrell, F.E. (2016). Prediction models need appropriate internal, internal-external, and external validation. Journal of Clinical Epidemiology, 69, 245–247. http://doi.org/10.1016/j.jclinepi.2015.04.005

Steyerberg, E.W., Vickers, A.J., Cook, N.R., Gerds, T., Gonen, M., Obuchowski, N., & Kattan, M.W. (2010). Assessing the performance of prediction models. Epidemiology, 21(1), 128–138. http://doi.org/10.1097/EDE.0b013e3181c30fb2

Teipel, S.J., Meindl, T., Grinberg, L., Heinsen, H., & Hampel, H. (2008). Novel MRI techniques in the assessment of dementia. European Journal of Nuclear Medicine and Molecular Imaging, 35(Suppl 1), S58–S69. http://doi.org/10.1007/s00259-007-0703-z

Temkin, N.R., Heaton, R.K., Grant, I., & Dikmen, S.S. (1999). Detecting significant change in neuropsychological test performance: A comparison of four models. Journal of the International Neuropsychological Society, 5(4), 357–369.

Tsuang, M.T., Van Os, J., Tandon, R., Barch, D.M., Bustillo, J., Gaebel, W., & Carpenter, W. (2013). Attenuated psychosis syndrome in DSM-5. Schizophrenia Research, 150(1), 31–35. http://doi.org/10.1016/j.schres.2013.05.004

Wertheimer, M. (1987). A brief history of psychology. New York: Holt, Rinehart, and Winston.

Wirth, M., Madison, C.M., Rabinovici, G.D., Oh, H., Landau, S.M., & Jagust, W.J. (2013). Alzheimer’s disease neurodegenerative biomarkers are associated with decreased cognitive function but not beta-amyloid in cognitively normal older individuals. Journal of Neuroscience, 33(13), 5553–5563. http://doi.org/10.1523/JNEUROSCI.4409-12.2013

Woodberry, K.A., Shapiro, D.I., Bryant, C., & Seidman, L.J. (2016). Progress and future directions in research on the psychosis prodrome: A review for clinicians. Harvard Review of Psychiatry, 24(2), 87–103. http://doi.org/10.1097/HRP.0000000000000109

Yung, A.R., & McGorry, P.D. (1996). The prodromal phase of first-episode psychosis: Past and current conceptualizations. Schizophrenia Bulletin, 22, 353–370. http://doi.org/10.1093/schbul/22.2.353
