
Noam Chomsky Reflections on Language. New York: Random House, 1975. 269 pp.

- Journal: Canadian Journal of Philosophy / Volume 9 / Issue 3 / September 1979
- Published online by Cambridge University Press: 01 January 2020, pp. 519-544
- Article

Copyright page

-

- Book:

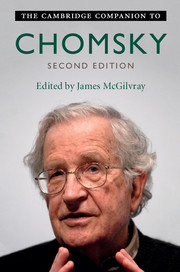

- The Cambridge Companion to Chomsky

- Published online:

- 13 July 2017

- Print publication:

- 13 April 2017, pp iv-iv

-

- Chapter

- Export citation

Dedication

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp v-vi
- Chapter

Contents

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp vii-viii
- Chapter

Part III - Chomsky on Politics and Economics

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp 255-330
- Chapter

8 - Cognitive Science: What Should It Be?

- from Part II - The Human Mind and Its Study

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp 175-195
- Chapter

Index

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp 331-342
- Chapter

The Cambridge Companion to Chomsky

- Published online: 13 July 2017
- Print publication: 13 April 2017

Introduction

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp 1-26
- Chapter

Contributors

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp x-xii
- Chapter

Figures

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp ix-ix
- Chapter

Part II - The Human Mind and Its Study

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp 153-254
- Chapter

Part I - The Science of Language: Recent Change and Progress

- Book: The Cambridge Companion to Chomsky
- Published online: 13 July 2017
- Print publication: 13 April 2017, pp 27-152
- Chapter

Contributors

- Book: The Cambridge Handbook of Biolinguistics
- Published online: 05 May 2013
- Print publication: 14 February 2013, pp xiii-xiv
- Chapter

4 - The philosophical foundations of biolinguistics

- Book: The Cambridge Handbook of Biolinguistics
- Published online: 05 May 2013
- Print publication: 14 February 2013, pp 22-46
- Chapter

20 - Language, agency, common sense, and science

- from Part II - Human nature and its study

- Book: The Science of Language
- Published online: 05 June 2012
- Print publication: 15 March 2012, pp 124-128
- Chapter

8 - Perfection and design (interview 20 January 2009)

- from Part I - The science of language and mind

- Book: The Science of Language
- Published online: 05 June 2012
- Print publication: 15 March 2012, pp 50-58
- Chapter

2 - On a formal theory of language and its accommodation to biology; the distinctive nature of human concepts

- from Part I - The science of language and mind

- Book: The Science of Language
- Published online: 05 June 2012
- Print publication: 15 March 2012, pp 21-30
- Chapter

Frontmatter

- Book: The Science of Language
- Published online: 05 June 2012
- Print publication: 15 March 2012, pp i-iv
- Chapter

23 - Epistemology and biological limits

- from Part II - Human nature and its study

- Book: The Science of Language
- Published online: 05 June 2012
- Print publication: 15 March 2012, pp 133-137
- Chapter