The language of adult foreign language learners, even those who reach advanced proficiency levels, is rarely native-like. The vocabulary range of such individuals may be impressive but still have significant gaps, both in the words they know and in their sensitivity to subtleties in meaning. Their grammatical knowledge may allow them to write and be published in their foreign language, yet their grammatical intuitions do not appear to be the same as those of native speakers (NSs) (Coppieters, Reference Coppieters1987). Nowhere is their lack of native-likeness as apparent as in their pronunciation. Foreign-accented speech is the overwhelming norm among adult learners of foreign languages, and even foreign language learners who are far advanced in their grammatical knowledge and ability to use their new language are likely to have a noticeable accent, yet be largely intelligible. By intelligibility, we mean that “a speaker’s message is actually understood by a listener” (Munro & Derwing, Reference Munro and Derwing1995, p. 76). The presence of a foreign accent is often attributed to critical period constraints on acquiring a second language phonological and phonetic system (Lenneberg, Reference Lenneberg1967; Scovel, Reference Scovel2000). The evidence for the critical period is far more uncertain than is commonly believed, with some older learners reaching native-like status with pronunciation, and some young learners never achieving a native accent (Coppetiers, Reference Coppieters1987; Flege, Frieda, & Nozawa, Reference Flege, Frieda and Nozawa1997; Moyer, Reference Moyer2014). But in general, achieving native-like pronunciation is relatively uncommon for adult language learners and may be related to how closely related the L1 and L2 are (Bongaerts, Van Summeren, Planken, & Schils, Reference Bongaerts, Van Summeren, Planken and Schils1997). Despite the unlikelihood of sounding native, many learners continue to desire such an achievement, and many teachers believe it is achievable.

This focus on reaching native levels of language achievement sometimes masks the fact that second-language speakers do not need to be native-like to be effective communicators. Their vocabulary does not have to match that of an NS to get their message across. Indeed, any two educated NSs are unlikely to have exactly the same vocabulary knowledge. Many second-language speakers can write, read, speak, listen, and effectively communicate in a wide range of contexts, despite having less than perfect grammar. Such speakers can also be understood even though their pronunciation differs markedly from what NSs expect of other NSs. Listeners are remarkably flexible in deriving messages from widely varying phonetic and phonological input, and people can communicate, and communicate well, even when their speech does not match a particular model or does not fit the stereotypical expectations of a listener. In other words, nonnative speakers can be highly intelligible even when their speech is strongly accented.

The specific aspects of such understanding, and the relationship of intelligibility to pronunciation, is the primary topic of this book. I’m using a broad definition of intelligibility here, one that includes both actual understanding (at the lexical, semantic, and pragmatic levels) and the degree of effort involved in understanding (called comprehensibility). These two types of understanding overlap, yet are distinct (Munro & Derwing, Reference Munro and Derwing1995). A speaker can be fully intelligible, but listeners may have to work hard to understand. Because the vast majority of the research on intelligibility and comprehensibility has focused on English language acquisition, this volume is about intelligibility primarily related to English and English language teaching. These principles are likely to be relevant to other languages as well, but the questions about intelligibility and teaching have been asked most often about English because of the dominance of English as a lingua franca around the world. The book’s overall emphasis is the role of pronunciation in intelligibility, even though other areas of language structure are also important to intelligibility and comprehensibility. The use of the wrong word in describing something can confuse a listener (for example, when a British English speaker talks about the boot of a car to an American English speaker, who calls the same thing a trunk). In such a case, misunderstanding, or even partial or delayed understanding, is directly related to word choice. However, some research has shown that loss of intelligibility is more closely tied to pronunciation deviations (e.g., Jenkins, Reference Jenkins2000). In the boot–trunk example, both speakers can pronounce English accurately, albeit differently, but misunderstanding occurs because boot has different meanings in the two varieties of English. In contrast, if a speaker pronounces boot as voot, the word not only may be unfamiliar to the listener, but the listener also has to decode it to fit a possible English word. Even if the listener can decode the word and guess what the speaker means, the amount of time and energy the listener must use to process the speech changes the nature of the interaction. In other words, such deviations also begin to affect comprehensibility. The effect of a phonetic deviation may be multiplied by the 200–300+ syllables that can be spoken each minute by NSs (Cruttenden, Reference Cruttenden2014), and the opportunity for misunderstanding grows exponentially with greater numbers of unexpected deviations.

Given the possibilities for misunderstanding based on pronunciation differences, it is surprising that foreign-accented speech often loses little or none of its intelligibility. Many variations in pronunciation are not hard to interpret. In this, foreign-accented speech is similar to NS speech, which is also full of variability. Speakers of different dialects can have large numbers of systematic differences in their speech yet still understand one another. Such differences in pronunciation are more likely to cause a loss of intelligibility if they are beyond the range of expected variation, but the ability of NSs to understand each other despite significant variations in their pronunciation shows that people typically demonstrate a great deal of flexibility in communication. Unlike machines, people are flexible listeners.

But listeners’ flexibility, while still operative, may be somewhat impaired when exposed to nonnative accents, in which pronunciation variations are more likely to be unexpected or outside the range of familiar NS dialects. In addition, the speech of nonnative speakers may contain other features of language structure that are unexpected, so pronunciation variations can combine with grammar and word choice differences to cause an overload that leads to a loss of intelligibility (Varonis & Gass, Reference Varonis and Gass1982). For example, speakers who pronounce the stressed vowel in bed and letter as [aI] (bide, lighter) rather than [ɛ] will be more difficult for listeners to understand than speakers who use perceptually close vowels such as [e] (bade, later) or [æ] (bad, latter). The sheer unexpectedness of the erroneous pronunciation [aI] coupled with the fact that it often sounds like another word causes a consistent loss of intelligibility.

Reasons for an Intelligibility-Based Approach to Teaching

In 1981, when pronunciation was not being widely taught during the early days of the Communicative Approach, Hinofotis and Bailey conducted research into the effectiveness of foreign teaching assistants (FTAs) in US universities. Foreign teaching assistants (now usually called ITAs, or international teaching assistants) often taught or assisted in introductory-level classes in academic fields such as chemistry or math. It was important for these FTAs to be understandable. In their research, Hinofotis and Bailey found that although some FTAs were sufficiently expert in the course content and that their overall language proficiency was advanced, they were still not easily understood. The researchers argued that their results meant that speakers must have a threshold level of pronunciation ability to communicate and that pronunciation had a special role in promoting intelligibility.

Hinofotis and Bailey (Reference Hinofotis, Bailey, Cameron Fisher, Clarke and Schachter1981) questioned the dichotomy of native and nonnative speech, suggesting instead that we need a more nuanced continuum. Foreign language learners may not be able to achieve the pronunciation abilities of NSs, but progress in pronunciation is both possible and essential. Learners must have sufficient command of pronunciation or else their speech will not be sufficiently understood. It will not matter if they speak fluently (smoothly and without unnecessary hesitations), have sufficient vocabulary, use perfect grammar, or are pragmatically appropriate in their language use.

An intelligibility-based approach to oral communication is thus the pursuit of skilled rather than native speech. While pronunciation is important in speaking (after all, one cannot speak without pronouncing), it is not the only component of effective speech. Speakers must choose appropriate vocabulary and grammar, and must speak with sufficient fluency to communicate. All elements of speech are important in getting one’s message across, and all must combine in such a way that they are within a listener’s expected range of production. None of these elements of speech needs to be perfectly native-like, but they all need to be good enough. But what is good enough?

Since 1981, research and many teaching materials have sought to define what features are important in developing a threshold level of intelligibility, typically centered on the rough dichotomy of suprasegmentals (stress, rhythm, intonation) and segmentals (consonants, vowels). Many have advocated greater emphasis on suprasegmentals than segmentals (e.g., McNerney & Mendelsohn, Reference McNerney, Mendelsohn, Avery and Ehrlich1992) to promote intelligibility because of the belief that suprasegmentals lead to faster improvement in comprehensible speech. Because time and energy for pronunciation instruction is always limited, this faster rate of improvement is important. Derwing and Rossiter (Reference Derwing and Rossiter2003) found that comprehensibility ratings for learners who were taught with a more global approach (focusing more heavily on suprasegmentals) improved significantly more than did those for learners who were taught with a focus on consonants and vowels (a segmental approach).

Research has also found a differential importance for errors. Some errors cause greater impairments to intelligibility than others, a fact that is at the heart of the suprasegmental–segmental question. The principle of differential importance also applies within categories of errors. For example, Munro and Derwing (Reference Munro and Derwing2006) examined the principle of differential importance by looking at the comprehensibility ratings of two kinds of consonant errors: /θ/–/f/ (as in think–fink) and /l/–/n/ (as in light–night). They found not only that the /l/–/n/ errors had a greater effect than /θ/–/f/ on listeners being able to understand a sentence, but also that one /l/–/n/ error had a greater effect than a combination of two or three /θ/–/f/ errors. This is attributed to the different functional load of the two types of errors. The first has few minimal pairs, and so has a low functional load, while the second has many minimal pairs, and a high functional load with greater opportunities for confusion.

This principle of differential importance applies in many contexts outside language teaching. It is known as the Pareto principle, or the 80–20 rule. The principle may suggest, for example, that 20 percent of workers create 80 percent of the wealth, or even that 20 percent of the students take up 80 percent of a teacher’s time and energy. Applied to pronunciation teaching, then, we can guess that the largest improvement in a learner’s intelligibility may come from improvement in a minority of the learner’s errors.

If we can determine which errors constitute that important minority of errors, we can target our teaching toward the few vital errors, and deemphasize or ignore the other, less important errors, either because they will not make a difference or because many of them will be remedied in the normal course of development (as with some vowel errors or consonant errors; see Munro & Derwing, Reference Munro and Derwing2008 and Munro, Derwing, & Thomson, Reference Munro, Derwing and Thomson2015, respectively). Almost all pronunciation materials focus overwhelmingly on vowel and consonant sounds, including sound contrasts that are unlikely to be a problem for any learner or listener. For example, one can find exercises teaching the difference between the interdental fricatives /θ/ and /ð/, as in ether–either, despite the lack of evidence that it is worthwhile to teach this contrast. Most learners of English have plenty of trouble with both sounds, but the two sounds are potentially confusable with only a few infrequent words, and if learners have trouble with either sound, they are likely to confuse it with other sounds such as /s, t, f/ and /z, d, v/, not with the other interdental fricative.

What Is Intelligibility?

Intelligibility is widely agreed to be the most important goal for spoken language development in a second language – both for listening and speaking – no matter the context of communication. In ESL (English as a second language) contexts, in which learners are surrounded by the target language outside the classroom, and in English as a lingua franca (ELF) contexts, where nonnative speakers communicate primarily with other nonnative speakers, researchers have called for a focus on intelligibility.

But what is intelligibility? This is not an easy question to answer, it seems, because the word has often been used with two distinct but related meanings. Munro and Derwing suggested that “intelligibility may be broadly defined as the extent to which a speaker’s message is actually understood by a listener” (Reference Munro and Derwing1999, p. 289). This broad description of intelligibility presumes that listeners understand speech in a variety of ways: that they can identify the words that are spoken, that they understand the message, and that they understand the intent behind the message (Smith & Nelson, Reference Smith and Nelson1985). Broadly or narrowly conceived, intelligibility does not include speech that is irritating or socially unacceptable. For example, the use of taboo words in some contexts may be socially unacceptable but not unintelligible. The overuse of filler words such as like or umm may be irritating, but it does not necessarily create problems with understanding. However, intelligibility may be compromised by other features of the communicative environment, such as noise (Munro, Reference Munro1998) or a speaking rate that is challenging (Munro & Derwing, Reference Munro and Derwing1998).

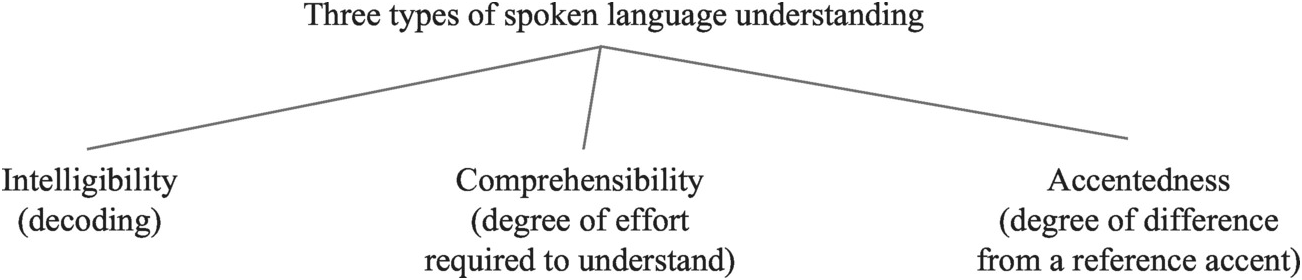

Munro and Derwing (Reference Munro and Derwing1995), whose model of spoken language understanding is based on the work of James Flege, include three distinct but partially overlapping types of judgments that listeners make about L2 speech. These are intelligibility, comprehensibility, and accentedness (Figure 1.1).

Figure 1.1 Three types of spoken language understanding

Intelligibility means both the extent to which a speaker is understandable, and whether the particular words used by a speaker are successfully decoded (the lexical level of intelligibility). In the narrow sense, intelligibility may be measured through transcription or dictation tasks, in which listeners are asked to write down exactly what they heard. If listeners cannot successfully decode particular words, the words are defined as unintelligible. In a broader sense, listeners may be asked to answer comprehension questions or provide summaries of what they understood (e.g., Hahn, Reference Hahn2004), thus demonstrating intelligibility at the semantic level.

The second type of understanding is comprehensibility, defined as the amount of work that listeners need to do in understanding a speaker. This is often measured via a Likert scale, on which 1 = extremely easy to understand and 9 = impossible to understand (Munro & Derwing, Reference Munro and Derwing1999, p. 291), or through continuous sliding scales on a computer (e.g., Crowther, Trofimovich, Saito, & Isaacs, Reference Crowther, Trofimovich, Saito and Isaacs2015). Thus comprehensibility taps into listeners’ overall sense of how easy speech is to understand and highlights difficulties in speech processing, feelings about how difficult it is to listen to a speaker, and “factors … that [do] not necessarily determine whether an utterance [is] fully understood” (Munro & Derwing, Reference Munro and Derwing1999, p. 303). Listeners are very reliable with these kinds of holistic ratings. Tapping into NSs’ intuitions also has a long history in linguistic research.

What is the relationship between intelligibility and comprehensibility? Munro and Derwing (Reference Munro and Derwing1999) found that there was a correlation between ratings of intelligibility and ratings of comprehensibility, but that the two dimensions did not measure the same things. It was not uncommon for listeners to transcribe speech perfectly (that is, to find it completely intelligible) and yet perceive it to be difficult/effortful to understand.

Finally, accentedness is important to consider because accent is commonly appealed to, both popularly and in research, for its presumed connection to whether speech is understandable and its potential usefulness in assessing spoken language (e.g., Ockey, Papageorgiou, & French, Reference Ockey, Papageorgiou and French2016). Clearly, accent can interfere with intelligibility, but research has shown that speakers can be perfectly intelligible (in that all of the words they speak are understood) while being simultaneously judged as having a very strong accent. Accentedness is also scalar, and is defined as the degree of difference between speech and a local or reference accent (Munro & Derwing, Reference Munro and Derwing1995). Because it is scalar, an assumption demonstrated by Derwing and Munro (Reference Derwing and Munro2009), it is possible to talk about listeners hearing speech as more or less accented in comparison to the reference accent or in comparison to another accent. However, describing accents as thick, heavy, or light without some kind of measured judgment task is unhelpful. Accentedness judgments of this sort seem particularly prone to bias based on stereotypes and other social judgments, perhaps leading to perceptions of accented speakers being less truthful (Lev-Ari & Keysar, Reference Lev-Ari and Keysar2010), less understandable (Rubin, Reference Rubin1992), or having poorer teaching skills (Major, Fitzmaurice, Bunta, & Balasubramanian, Reference Major, Fitzmaurice, Bunta and Balasubramanian2002).

In summary, listeners’ ability to decode words successfully does not guarantee that speech will be easy to understand. This kind of intelligibility is not only a matter of how many words are not understood, but also which words. Misunderstood function words may have less impact than misunderstood content words (Zielinski, Reference Zielinski2006). It is likely, however, that speech will be less comprehensible if words are frequently unintelligible. What we do not know is how many words can be missed by a listener before overall understanding is severely impaired. Nation (Reference Nation2002) suggested that nonnative listeners need to understand at least 95 percent (nineteen of every twenty words) or perhaps 98 percent (forty-nine of fifty words) of the content words in running speech in order to have excellent comprehension and be able to guess the meanings of unknown words. The threshold for NSs, with better guessing skills and faster processing of their first language, is likely to be lower, but we do not know how much lower.

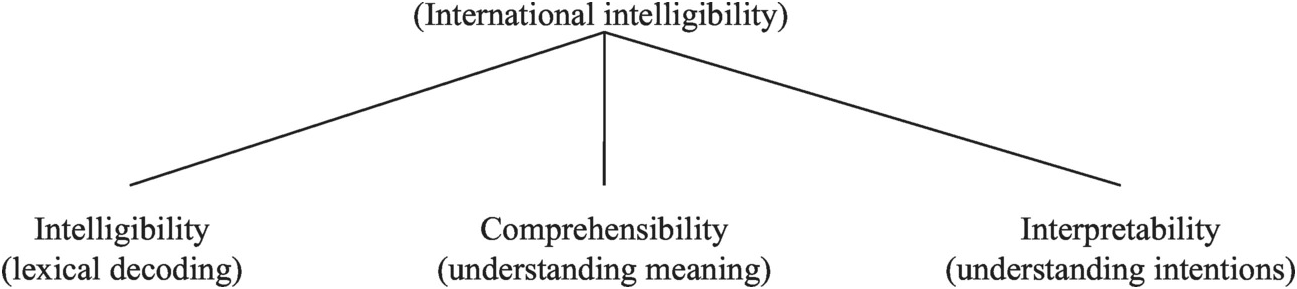

A potential confusion of intelligibility and comprehensibility comes from Smith and Nelson (Reference Smith and Nelson1985), who divided their concept of “international intelligibility” into three different types of understanding, which they named intelligibility, comprehensibility, and interpretability (Figure 1.2). International intelligibility is listed as an overarching term based on Smith and Nelson’s attempt to describe understanding across different varieties of world Englishes. It is important to note that, despite the terms used, all three types of understanding in Smith and Nelson’s work are types of intelligibility – that is, they are all types of actual understanding. None of them is the same as comprehensibility as discussed by Munro and Derwing (Reference Munro and Derwing1995).

Figure 1.2 Three types of understanding thought to constitute international intelligibility

Smith and Nelson (Reference Smith and Nelson1985) define intelligibility as a listener’s ability to decode the words that are spoken. In other words, this is lexical intelligibility. From the speaker’s point of view, this is the ability to say words in such a way that listeners can successfully decode them. For example, I once was listening to a talk given by the Australian applied linguist, Wilga Rivers. I had no difficulty understanding anything she said even though her accent was largely unfamiliar to me at the time. Then she used a word that I didn’t understand at all – [kəˈ ɹɑlɹi]. I spent almost a minute trying to decode the word, during which time I did not hear any of the rest of her talk. First, I searched my mind for any word that seemed to fit. Finding none, I spelled out the sounds I heard <c_rol_ry> and then visually was able to see a word that fit: corollary. Then, and only then, did I realize that I had heard that British-influenced varieties of English used this stress pattern for this word that is pronounced in North American English as [ˈkhɔɹəˌlєɹi]. The speaker’s unexpected stress pattern and consequent vowel and consonant pronunciations of this word caused a loss of intelligibility in that I was not able to successfully decode the word in real time.

In Smith and Nelson’s work, comprehensibility involves the ability of a listener to understand a spoken message. Again, this use of comprehensibility is not the same as that of Munro and Derwing (Reference Munro and Derwing1995). Comprehensibility in this model refers to “the meaning of a word or an utterance” (Smith & Nelson, Reference Smith and Nelson1985, p. 334). A text is comprehensible if the listener can make sense of or paraphrase it. Smith and Nelson’s (Reference Smith and Nelson1985) third type of understanding, interpretability, refers to understanding the “meaning behind the word or utterance” (p. 334). The interpretability of a spoken text is high if the listener is able to understand the speaker’s intentions in uttering the message in a particular context. Interpretability refers to another type of intelligibility, pragmatic intelligibility, but this type of intelligibility can be extremely variable. It is also difficult to determine whether a listener has misunderstood the meaning behind the word or utterance. Listeners may think that they understand, and a speaker may think that a listener has understood the meaning that the speaker intended, only to find out later that the listener’s understanding was quite different from the speaker’s intended meaning. Interpretability has not played a significant role in research involving pronunciation. In one study that suggests a role for this type of intelligibility, Low (Reference Low2006) described how given information is marked as (non)prominent by British English (BE) and Singapore English (SE) speakers. It is typical for BE speakers to make given information less prominent when it is repeated (e.g., the second use of “car” in the example below). SE speakers, rather than making car less prominent, appear to make it prominent again. Low suggested that this difference caused a loss of interpretability, in that inner-circle speakers misunderstood the communicative intent of SE speakers:

the Singapore English speaker’s reaccenting of old information causes a British interlocutor in at least a few instances to misunderstand the SE speaker’s communicative intent … Anecdotally, a compromise in interpretability makes sense. In cases where I merely reaccented repeated words, for example, I looked around for the car but there was no car; I have often been asked by foreigners whether I was angry when my communicative intent has been far from conveying the feeling of anger.

This book is primarily about intelligibility, but it is also about comprehensibility. When the word intelligibility is used, it is used to mean understanding of a spoken message, either in regard to lexical identification, meaning, or intention. When I speak of comprehensibility, I mean the degree of difficulty a listener has in understanding. Loss of intelligibility can happen between two NSs, and in fact any mismatch between a listener’s expectation and the speaker’s production can cause it (Zielinski, Reference Zielinski2008). However, mismatches do not always impair understanding. They may, however, make a difference in how easily a listener understands a speaker, and consequently how likely a listener is willing to communicate with different individuals. If it takes extra effort to understand someone, interlocutors may avoid contact.

Intelligibility and Teaching Pronunciation

In teaching pronunciation in relation to discussions of intelligibility, we often make assumptions about who the listener and the speaker are in an interaction. Typically, we assume that the listener is an NS while the speaker is a nonnative speaker (NNS). Thus, we assume that the NNS bears the burden for being understood, while the NS, who has a command of the language and is able to determine whether an NNS is intelligible, bears less of a burden. Because any interaction involves both speakers and listeners playing both roles, this need not be the only, or even the most helpful, way to understand intelligibility. As I have written elsewhere (Levis, Reference Levis2005a), intelligibility can be understood through a matrix of possible relationships between listeners and speakers.

Figure 1.3 delineates possible relationships between listeners and speakers, and each of the relationships has implications for intelligibility, and in turn for pronunciation instruction.

In Quadrant A, both the speaker and listener are NSs. Implicitly, communication in this quadrant should be easy and successful since both the listener and speaker share not only the same linguistic code but many of the same rules for using it as well as intuitions about its unspoken rules. Unfortunately, we do not know how far we can expect such success to reach. Native speakers come in many flavors, with wide varieties of dialects and ways of speaking. It is not hard to recall interactions between NSs that include a loss of intelligibility. Once, while playing golf in Scotland, I played with two other golfers, one native to Scotland and the other native to the north of England but who had lived in Scotland for decades. More than once, their speech became increasingly unintelligible to me, especially as topics became more emotionally charged. I didn’t understand many words, the meaning, or the intent of the messages being communicated.

Quadrant B, with native speakers and nonnative listeners, is often found in language classrooms and when NNSs interact with NSs in second-language contexts. This is the flip side of typical intelligibility research which focuses on nonnative speakers and native listeners. In this quadrant, NNS listeners are tasked with understanding normal NS speech, with all its variations, in real time. A lack of intelligibility in this case means that NNSs cannot understand. In other words, the NS may be unintelligible to the NNS. From the viewpoint of a pronunciation teacher, the goal must be to help learners decode and process speech as quickly and easily as possible. In real-world communicative interactions, NSs must carry a large part of the burden for intelligibility, not only in phonology but also in adjustments, speech rate, etc., if some interactions are to be successful.

Quadrant C reflects the bulk of the research on intelligibility, in which NNSs are the speakers and NSs the listeners. The artificial division between Quadrant B and Quadrant C is unfortunate, because in reality any interaction between NSs and NNSs means that NSs and NNSs will play both roles. The important thing to notice, though, is that it is possible for both NSs and NNSs to be unintelligible when communicating, and conversational adjustments are common by NSs (for an early review, see Long, Reference Long1983). In Quadrants B and C, the NS often places the responsibility for success on the NNS, but the NNS cannot be fully responsible for successful communication. Success in any communicative interaction involves the ability to speak and listen and to adjust if communication is not happening. If NSs cannot adjust, they are also partly responsible for a lack of intelligibility.

Finally, Quadrant D involves speakers and listeners who are both NNSs. This is typical of the ELF setting. This quadrant describes the widespread use of English as a medium of communication for speakers who have different non-English first languages. In a smaller way, it also reflects the need for learners in a second-language classroom setting to communicate with other learners of different L1s. I have often had students ask if they could practice with me in class and not with other learners, because they believed the other learners’ grammar and pronunciation were bad. This request presumes a kind of osmosis in that other learners’ errors will somehow rub off and ruin their progress, while my native speech will rub off and lead to the kind of improvement they desire. Such osmosis is unlikely to work – either for good input or for bad. Findings about correction show that learners don’t seem to correct fellow students’ errors, and thus presumably they don’t notice them (Long & Porter, Reference Long and Porter1985).

All four quadrants reflect different intelligibility issues. Intelligibility is always a two-way street, and both NS and NNS interlocutors carry the responsibility for successful communication. It is only by recognizing the contexts in which we talk about intelligibility issues that we can achieve greater success in our teaching.

Pronunciation and Oral Communication

Although this book is primarily about the role of pronunciation in intelligibility, it is impossible to discuss pronunciation outside the larger context of oral communication. Pronunciation, with all its intricacies and interesting features, does not exist on its own. It is a servant skill. We pronounce as a byproduct of speaking, and we pronounce within the context of the communicative acts or the speaking task we are involved in. We pronounce if we are fluent, and we pronounce if we are not fluent. We pronounce with our particular lexical and grammatical skills. Likewise, we process the pronunciation features of others’ speech, presented to us within the fluency, speed, grammar, and lexis of their speech. Sometimes the speech will be more careful, and sometimes it will be more casual. Pronunciation (or its primary correlate, accent) seems to play such an outsized role in intelligibility because it is a first stop for listeners, and many of its features are those that listeners are very good at noticing. We notice small deviations of vowel and consonant sounds even when listening to other NSs, and we often make social judgments about others because of these differences. For example, the insertion of /ɹ/ into the word wash so that it is pronounced warsh is immediately noticed by most US speakers and is almost always criticized as sounding uneducated. Likewise, the deletion of [h] in words like house and hospital in British English has historically been highly stigmatized, indicating social class distinctions (Mugglestone, Reference Mugglestone1996), despite the fact that other [h] deletions in function words (e.g., Did he give him the book?) are usually unnoticeable in normal conversational speech.

Word-Based and Discourse-Based Features

What are the features of pronunciation and how do they fit into Munro and Derwing’s (Reference Munro and Derwing1995) intelligibility/comprehensibility research? First, let’s look at the kinds of features that are usually associated with pronunciation teaching. I will divide these into word-based and discourse-based features, although it is notoriously difficult to divide English pronunciation into discrete categories. The stress and rhythm of English affect the ways that vowels and consonants are pronounced, and the clarity of a word’s pronunciation is influenced by its location in the discourse. Likewise, vowels are spoken with different pitch levels, thus influencing pitch range and intonation. Many of these variations are ignored by NSs because they are allophonic, or characteristics of different registers, or irrelevant noise, but we should not consider that such variations are heard in the same way by learners, for whom the variations are likely to affect how they process speech.

To native listeners, intelligibility and comprehensibility mean the extent to which they can understand the speech of others, or the extent to which they need to work in understanding. Both types of understanding for native listeners are by and large unconscious and noticeable only when understanding is challenging. Native listeners also tend not to recognize their own role in promoting understanding while communicating with nonnative speakers. However, when native listeners do take on the role of an active participant in the interaction, even difficult speech can become more understandable. In one study, social work students were given training on listening to Vietnamese-accented speech. The training led to increased confidence in their ability to understand immigrant clients compared to a group with cross-cultural training and a control group (Derwing, Rossiter, & Hannis, Reference Derwing, Rossiter and Hannis2000; Derwing, Rossiter, & Munro, Reference Derwing, Rossiter and Munro2002).

To nonnative listeners, especially those at lower proficiency levels, negotiating the intelligibility of speech is a conscious process, sometimes painfully so, during casual speech or in a communicative situation in which messages are partly masked by noise. Intelligibility is important for nonnative speakers in their own speech and in listening to the speech around them. The speech of NSs can sound excessively fast, a rapid fire of foreign and strangely distributed sounds that they can barely process.

Word-Based Features

Word-based features include segmentals and word stress. These features are most likely to affect intelligibility at the lexical level. In some languages (e.g., Mandarin, Vietnamese, Kikuyu), this category would also include lexical tone. First, some justification of my classification is in order. Typically, pronunciation features are described as being either segmental or suprasegmental. This classification scheme is central to teaching materials, but it creates an unnatural division in discussing intelligibility. Consonant and vowel errors primarily cause listeners to have trouble decoding individual words. This is the logic behind the use of minimal pairs in teaching pronunciation. For example, the numerous research studies that have examined the difficulty that Japanese learners of English have with /l/ and /ɹ/ all reflect the many minimal pairs that these two phonemes have in English. Brown (Reference Brown1988), extending Catford (Reference Catford and Morley1987), described one effect of the number of minimal pairs that two phonemes have as functional load. Functional load is measured by the numbers of minimal pairs that exist for a contrast (e.g., [p]/[b]) initially and finally, and the likelihood that the contrast is enforced in all varieties of English. Minimal pairs of segments with a high functional load are thought to be more likely to cause loss of intelligibility.

In addition to minimal pairs, another segmental feature that can cause difficulties in understanding words involves allophonic variations. For example, voiceless stops and affricates ([p], [t], [k]) in inner-circle English varieties are aspirated at the beginning of stressed syllables. If the sounds are not aspirated, there is a strong chance of unintelligibility because inner-circle listeners are likely to hear them as their voiced counterparts ([b], [d], [g]).

Vowel reduction is also a strong candidate for intelligibility problems, especially from the second-language learner’s point of view. Because English NS speech is full of vowel reduction (Woods, Reference Woods2005), L2 learners of English may find NS speech unintelligible when they cannot easily connect the speech signal to the written representation of the words.

Likewise, word-stress errors also cause trouble with intelligibility at the lexical level. Stressing a word incorrectly can not only mask the identity of the word (Zielinski, Reference Zielinski2008), it can also change how the vowels and consonants are pronounced. Although word stress is typically considered a suprasegmental feature, I will treat it similarly to segmentals in its effect upon intelligibility. In other words, the kinds of interpretive problems related to word stress are likely to be related primarily to intelligibility at the lexical level.

Discourse-Based Features

Most suprasegmental errors are unlikely to cause listeners to misidentify words, but may cause loss of intelligibility in understanding the message or the speaker’s intent. They may also cause decreased comprehensibility by increasing the effort listeners put forth in understanding speech. Most suprasegmental features, unlike segmental features, carry their meaning beyond the sentence level. As such, these features are part of the overall structure of spoken English, allowing it to be spoken with its characteristic rhythm and melody, and creating meaning that is pragmatic, attitudinal, and connected to the communication of information structure.

Unlike word-based features, discourse-based features in English are rarely right or wrong. If a Korean speaker who does not consistently distinguish the sounds [p] and [f] says the name Pat as fat, there is a clear sense of right or wrong. However, if the same Korean speaker asks the question, “Is Pat available to talk?” with falling rather than rising intonation, the difference in meaning is far more subtle. We know from research that both intonations are perfectly acceptable and may be equally common for yes-or-no questions (Thompson, Reference Thompson1995), so any interpretive issues are likely to be pragmatic in nature rather than related to basic speech decoding.

Intonation is thus the first suprasegmental feature that is unlikely to cause difficulties with word intelligibility. Although we do not hear differences in intonation as right or wrong as with mispronounced words or segmentals, this does not mean that intonation is unimportant since it is more likely to affect intelligibility in terms of the message or the intent. In one well-known example, Gumperz (Reference Gumperz1982) described a misunderstanding that occurred due to misperceptions of intonational meaning. British Airway employees (primarily native speakers of English) who ate at a company cafeteria thought that the servers (largely women of Indian and Pakistani descent) were rude and sullen. This perception was so strong that it caused a barely simmering revolt on both sides: The airline staff felt they were being treated badly by those they perceived as being beneath them in status, and the cafeteria workers felt those they were serving were rude and angry. Gumperz discovered that the problem had its roots in the use of intonation. When serving, the Pakistani and Indian women would offer things to the employees with a falling intonation (e.g., Gravy↘), while the employees, having English-language expectations, expected rising intonation (e.g., Gravy↗). In the Indian and Pakistani women’s L1s, the use of falling intonation was polite and appropriate, while for the native English-speaking employees it was heard as rude. In other words, the English-speaking employees did not hear the intonation as linguistically wrong, like a phoneme error; rather, they considered it to be a social failing.

In another study, Hahn (Reference Hahn2004) found that misplacement of sentence focus caused listeners difficulties in recalling information from a lecture. The study examined three conditions in which a Korean English bilingual presented the same information: correct focus placement, incorrect focus placement, and no focus placement at all. All three conditions included a text that was, technically, perfectly intelligible. However, when unexpected words were given special emphasis, or when no emphasis at all was used, listeners did not recall the information with the same degree of success. In addition, the speaker was heard as more or less likable, depending on the emphasis pattern.

Another suprasegmental feature is phrasing, or juncture, in which speakers’ pauses have more than one effect on how well listeners interpret speech. In Example (1.1), juncture plays an obvious role in differentiating between the meanings of the two sentences. There is no necessary difference in intelligibility, because both sentences use the same words. The entire difference in meaning is one of intelligibility, although phrasing differences are rarely so clear as in this example.

“Joe said his wife was away” versus “Joe, said his wife, was away”.

A third suprasegmental feature is rhythm. Although closely related to word stress in its ability to affect the pronunciation of vowels and consonants, phrase rhythm in English affects the overall structure of the discourse. Function words, such as single-syllable prepositions (e.g., in, at, on, with, by), determiners (e.g., the, a, some, an, his), and auxiliary verbs (e.g., have, can, could, would, is, did) are almost always unstressed in discourse, and thus can be difficult to process for learners of English. On the other hand, the same learners’ tendency to pronounce function words as full forms rather than reduced (e.g., can as [khæn] rather than [kən]) sometimes causes difficulties for native North American English listeners, who associate the full vowel pronunciation with the negative, can’t. Additionally, spoken language with too many stresses can overload the circuits of native listeners, causing them to pay attention to too much informational content, thus making it hard for them to select key words from the stream of speech. Interestingly, Jenkins (Reference Jenkins2000) argued that pronouncing all segments with full forms is unlikely to lead to loss of understanding in ELF communication, in which NNSs speak primarily to other NNSs. This remains an open question for research in ELF.

Rhythm also has the potential to affect intelligibility, especially for nonnative listeners. Rhythm in English leads to pronunciation adjustments both within and across word boundaries, especially in informal casual speech. The word polite is likely to lose its initial unstressed syllable, being pronounced p’lite, which in discourse can be misunderstood as plight. Native listeners are likely to pay attention to an additional cue, the length difference for the stressed vowel, but there is no guarantee that nonnative speakers will pay attention to or produce this cue consistently. Palatalization across word boundaries along with reductions can mask the identity of words completely, so that sentences like Did you eat yet? may sound like Didja eat yet? or even Jeechet?

Other Features

The flow of speech (fluency) appears to affect comprehensibility more than intelligibility. Fluency is a bundle of different features, and it is notoriously difficult to define precisely (Riggenbach, Reference Riggenbach2000). It includes, at the very least, speech rate, phrasing, grammatical grouping, and final lengthening, all mixed up in a soup pot with just the right amount of timing. Even NS speech may be judged as not fluent, since native speakers may have excessive and badly placed filled pauses (e.g., um) or pathological conditions such as stuttering. For listeners who are native speakers of English, disfluent speech interrupts their processing of the message. Likewise, overly fast speech, without appropriate pauses and emphasis, can also make a speaker’s message hard to understand. For listeners who are nonnative speakers of English, excessively fluent speech may be hard to process simply because of its speed. Slower speech, and speech that is less fluent, may be easier to process. From the point of view of speaking, fluency in nonnative speech is likely to develop along with proficiency and practice. A focus on language chunks may be helpful in developing fluency, but fluency is primarily a result of greater speech automaticity, in which all the elements of language do not have to be thought about equally during communication (Gatbonton & Segalowitz, Reference Gatbonton and Segalowitz1988, Reference Gatbonton and Segalowitz2005).

Speech rate is closely related to fluency. Although some L2 speakers talk at an excessively fast speech rate (Munro & Derwing, Reference Munro and Derwing2001), many more speak with rates that are too slow for native listeners. While it may appear to some North American English listeners that some second-language speakers speak too quickly (such as Indian speakers of English), the perception of a fast speech rate may also come from an unfamiliar rhythmic pattern in which more syllables are strongly stressed than expected.

Another global feature of pronunciation, voice quality (Esling & Wong, Reference Esling and Wong1983), may play a role in judgments of comprehensibility, either by facilitating understanding (if the voice quality is familiar and comfortable for a listener) or by exaggerating the effect of other speech differences. For example, Indian English is often spoken with a higher voice pitch than is American English. This difference in voice quality is part of a typical Indian English accent, and it is noticeable enough to add to a listener’s processing load, especially if the listener is not familiar with or favorably disposed toward the accent.

Another feature that may play a role in judgments of comprehensibility is loudness (or its acoustic correlate, intensity). Loudness can play an obvious role if a speaker is excessively soft, or excessively loud, in that listeners will have to work harder to listen effectively. Loudness, however, has little role in linguistic categories. It is, perhaps, a component of stress in English (Zhang & Francis, Reference 294Zhang and Francis2010), but it is not as important as length or pitch. After all, one can identify stressed vowels whether they are whispered or bellowed. In one situation I am familiar with, a female Chinese teaching assistant (TA) was teaching American undergraduate students who had complained about her English, even though her language appeared to be highly comprehensible. However, she spoke very quietly. Upon being questioned, she said that she believed that, as a woman, she was supposed to speak quietly in public situations. This appeared to be a cultural difference (and perhaps an idiosyncratic one at that), but undeniably her lack of loudness harmed both her intelligibility and her comprehensibility. In another situation, a male TA from Sri Lanka was easy to understand when speaking one-on-one, but did not pass a test for TAs because he spoke so quietly. In this case, the quiet speech was not cultural but idiosyncratic.

Finally, another area of pronunciation that may affect comprehensibility has to do with energy or “liveliness.” Hincks, in a series of articles (e.g., Hincks & Edlund, Reference Hincks and Edlund2009), has advocated teaching spoken language through using computer-assisted feedback that measures “liveliness” of speech, a complex feature that appears to be most heavily connected to variations in pitch range throughout extended spoken discourse. Hincks has found that speakers who have livelier voices (with more variation in their pitch range) are heard as more effective. In contrast, speech that sounds more monotone, with flatter pitch over long stretches of speech, may require more work by listeners. The effect of pitch variation does not appear to be connected to linguistic categories of intonation (e.g., final intonation or focus placement), but is rather an effect of how animated voices are.

Error Gravity and Intelligibility

Language errors of any kind are likely to affect the ways that listeners understand a message. Even if the errors are not serious, they may be salient, and thus the errors become an extra part of the message being communicated. For example, children’s speech is noted for its tendency to overgeneralize patterns to exceptions. English-speaking children may say “they flied” rather than “they flew” or “two mans” rather than “two men.” Although this process may seem ubiquitous in children’s speech production, one study that examined the speech of 83 children found that only a median of 2.5 percent of potential errors were actually regularized for all the children studied (Marcus et al., Reference Marcus, Pinker, Ullman, Hollander, Rosen, Xu and Clahsen1992). When I have asked students in introductory linguistics classes how often children make these kinds of errors, and given them options of 5, 25, 45, and 65 percent, they invariably choose 45 or 65 percent, and are shocked when they hear that 5 percent is closer to the actual occurrence. This finding regarding the perception of the frequency of L1 errors suggests a principle. When we consider our ability to understand a speaker with a foreign accent, we are likely to overstate the role of accent and to single-out errors that we know how to name, leading us to attribute to those errors an oversized role in intelligibility. Segmental errors, especially obvious ones like substitutions for /θ/, are noticeable and we can name them. While linguists know that such errors, even when they occur more than once in a sentence, rarely affect the most basic level of understanding – that is, intelligibility (Munro & Derwing, Reference Munro and Derwing2006) – the sounds continue to be taught first in many pronunciation classes. This is the equivalent of putting a bandage on a scratch when the accident victim is having trouble breathing.

Research into errors in written and spoken language has shown over and over again that certain errors are more serious than others. In an early study for English NSs, Hairston (Reference Hairston1981) asked readers in various occupations to judge the seriousness of errors in grammar and usage that are typically addressed in college writing classes and writing handbooks. A surprising number of errors were not considered serious, while those that were considered most serious included those Hairston called status markers (i.e., glaring examples of nonstandard usage, such as double negatives) and errors that caused sentences to seem incompletely formed, such as sentence fragments. In addition, Hairston found differences between the judgments of male and female respondents. Females were stricter in their judgments.

Other studies have looked at judgments of spoken nonnative errors. Fayer and Krasinski (Reference Fayer and Krasinski1987) asked English- and Spanish-speaking judges to evaluate the seriousness of errors from Puerto Rican speakers’ speech. Spanish judges were stricter and more irritated than were native judges, suggesting that the intelligibility of spoken language is also dependent on the listeners and their connection to the language of the speakers. In another study, Derwing, Rossiter, and Ehrensberger-Dow (Reference Derwing, Rossiter and Ehrensberger-Dow2002) found that NSs were better than nonnative speakers at identifying errors in aural than in written form, but that nonnative judges were stricter about the gravity of errors and were more annoyed by the errors.

Other Factors Affecting Judgments of Spoken Language

Judgments of speech may also be negatively influenced by factors unrelated to understanding. Some errors are more irritating than others, even though the message may be perfectly understood. For example, Hairston’s (Reference Hairston1981) status markers, her most negatively rated category, included errors such as “He don’t” and “We was,” both of which are well-known deviations from standard English found in nonstandard dialects. Lippi-Green (Reference Lippi-Green1997) described errors of native and nonnative speakers that are stigmatized even though the speech is perfectly understood. If speakers come from a social group that listeners consider less desirable, then the way they speak may also be considered less desirable.

There is also some evidence that accented speech may be more intelligible if the listeners become familiar with the features of the speech. Derwing, Rossiter, and Munro (Reference Derwing, Rossiter and Munro2002) provided training for social work students who needed to interact with Vietnamese learners of English. The students were given training in the typical features of Vietnamese accents before interacting with Vietnamese immigrants to Canada. The training was minimal (20-minute sessions integrated with other classroom work over eight weeks). Their confidence increased and they reported being more successful in their interactions than those who did not do the training.

Firm answers about the differential effects of errors on understanding have been elusive. While we believe it is possible to identify which errors make a difference and which ones do not, even errors that are not usually harmful to intelligibility may sometimes be more serious, and errors that might be expected to cause difficulties may not. For example, errors in the pronunciation of /θ/ have been almost uniformly considered as not having a serious effect on intelligibility. Jenkins (Reference Jenkins2000) went so far as to include all consonants as important for international intelligibility except dark [ɫ] (as in all) and the two “th” sounds. The case of the “th” sounds is particularly interesting in making recommendations about how much effect errors have on communication. Jenkins (Reference Jenkins2000) and Jenner (Reference Jenner1989) both say that these sounds are relatively unimportant. Brown (Reference Brown1988) found that errors involving “th” typically have a low functional load. However, Deterding (Reference Deterding2005) found that the actual pronunciations of /θ/ as /f/ by Estuary English speakers was very harmful to intelligibility for Singaporean English listeners who listened to British English speakers. The lack of intelligibility was especially noticeable in the words three and free.

Similarly, word-stress errors can easily mask the identity of a word and cause serious difficulties in comprehension, but Cutler, Dahan, and van Donselaar (Reference Cutler, Dahan and van Donselaar1997) found that NSs were rarely troubled by mis-stressing of words for which the stress was a key clue to lexical category. Word pairs like ˈpermit/perˈmit and ˈinsult/inˈsult differ only in stress, yet native listeners do not seem to rely on stress to interpret the words (Cutler, Reference Cutler1986).

These findings suggest that the gravity of errors in a language not only differ between categories, but that they also differ within categories. Thus, it is unlikely that suprasegmental errors are uniformly more serious than segmental errors or that consonant errors are uniformly more serious than vowel errors. Rather, it is likely that each subset of errors has members that are sometimes more serious and sometimes less so. While Gumperz’s (Reference Gumperz1982) examination of Indian and Pakistani women’s intonation shows that unexpected intonation can sometimes cause serious problems in intelligibility (as well as the consequent irritation), it is likely that the use of single-word utterances (Gravy↗ rather than Would you like gravy↘) also played a role in the depth of the misunderstanding. The same unexpected intonation in a syntactically complete question with the politeness marker “would” may be less likely to be considered rude than would the single-word utterance heard by the English NSs.

We need a nuanced approach to intelligibility, an approach that examines in detail what we know of intelligibility, an approach that specifies research priorities that will help tease apart those features that are important for those helping L2 students become more successful in communicating. While it is unlikely that we will ever know perfectly which errors always cause the greatest difficulty, we can know which are likely to do so. But we must examine pronunciation and other errors in greater detail before our language classes can truly reflect an approach that prioritizes intelligibility.

Currently there is very little debate about whether intelligibility is an appropriate goal for teaching spoken language. At least since the late 1980s, a majority of knowledgeable teachers and researchers have advocated some version of the Intelligibility Principle for teaching pronunciation. This does not always affect how pronunciation is actually taught, or how published teaching materials are constructed, but the advocacy has influenced both implicit and explicit discussions of priorities.

The opposite of the Intelligibility Principle, the Nativeness Principle (Levis, Reference Levis2005a), is now rarely put forth by teachers or researchers as a worthwhile goal, even though it remains vibrant, living on in popular beliefs about language learning, in spy novels (where effective spies always seem to become native-like in an unusually short time), and in accent-reduction advertising. The Nativeness Principle, by definition, says that learners have to completely match a native speaker’s production of all pronunciation targets, segmental and suprasegmental, in order to have achieved the target pronunciation in a foreign language. A number of pronunciation class texts appear to follow the Nativeness Principle. Such books include a nearly exhaustive collection of exercises for teaching segmentals and segmental contrasts (e.g., Orion Reference Orion2002), including sound contrasts that are rarely applicable, although often with a less than complete treatment of suprasegmentals. The advantage of such an approach to pronunciation teaching is that it defines what must be mastered and articulates a final objective – native-like speech. There is no obvious need to prioritize because the entire L2 sound system must be learned to mastery.

The power of the Nativeness Principle can be seen in the fact that it is not unusual to hear language learners themselves say they want to sound like a native speaker (NS). Every time I teach pronunciation, one or two students tell me unbidden that this is what they want. This is not surprising, as it shows that learners believe nativeness to be a desirable goal, and it shows that they understand that any deviation from that norm can mark them as being different or as being hard to understand. Their desire assumes a dichotomous state of affairs: native (and therefore unaccented and easy to understand) or nonnative (and therefore accented and harder to understand). Even though it is unlikely that they will ever reach their goal, their desire comes from a noble motive in that they want their spoken language to facilitate their communication. Not being language teachers, they do not realize that reaching a good-enough pronunciation is neither a sign of weakness nor of low standards, but is instead all that is really necessary (or all that is really possible, in most cases).

Unfortunately, few teachers or students have the time, aptitude, or the age necessary to achieve the kind of mastery needed for native-like pronunciation. An intelligibility-based approach, in contrast, requires prioritizing what is taught. Teaching to achieve intelligibility is challenging precisely because of this prioritized approach to errors. Such an approach is based on the assumption that some errors have a relatively large effect on understanding and others a relatively small effect. Judy Gilbert (personal communication) talks about priorities in terms of a battlefield medical image – triage. When faced with many people who are wounded, medics must prioritize treatment. Applied to pronunciation, the image suggests that certain errors (injuries to communication, to follow the metaphor) should be treated first because they are more likely to harm communication than are others. Other injuries to communication are far less problematic, and neither listeners nor speakers will be harmed by lack of accuracy in such cases.

What such an approach should look like in the classroom is not clear, however, partly because proposals based on the Intelligibility Principle conflict, and partly because the Nativeness Principle continues to strongly influence classroom practice and teachers’ attitudes. Also, the Intelligibility Principle must be context-sensitive and connected to both speaking and listening – speakers need to be intelligible to listeners, and listeners need to be able to understand speakers. So decisions about priorities not only involve helping learners produce speech in an accessible way for listeners, but also involve teaching those same learners to understand the speech they hear.

What Is Involved in Pronunciation?

An intelligibility-based approach to teaching pronunciation requires a clear description of the key elements of pronunciation and how they relate to one another. This description is the goal of the following section.

When we use the term pronunciation, we are talking about an interrelated system of sounds and prosody that communicates meaning through categorical contrasts (e.g., phonemes), systematic variations (e.g., allophones), and individual variations that may mark gradient differences such as gender, age, origin, etc. As such, the system of pronunciation can be divided in a variety of ways, but the divisions are merely a way to understand pieces of the system, even though all parts of the system interact with each other in ways that often make it impossible to separate their effects upon understanding.

The classification of the features that I use here is somewhat unusual (Figure 2.1). Typically, pronunciation is divided into segmentals and suprasegmentals, but from the viewpoint of spoken language understanding, word-level features are those most likely to impact intelligibility at the lexical level, and discourse-level features are likely to affect intelligibility at the semantic and pragmatic levels, and they are also more likely to impact comprehensibility. There are also spoken language elements related to pronunciation that are nonetheless not included in my categories for pronunciation. Any of the categories (word-level, discourse-level, and related areas) may impact perceptions of speech. In addition, although Figure 2.1 divides features into separate categories, this is a failure of the visual in representing the ways in which the categories interact. For example, word stress and rhythm both affect the pronunciation of vowels, with unstressed syllables strongly leaning toward schwa. Schwa is clearly a frequent segmental, but it is not a phoneme of English, but rather a variant of other vowels when they occur in unstressed syllables (Ladefoged, Reference Ladefoged1980). Stressed syllables in any part of a word are also the location for aspirated voiceless stop consonants, as in pill, repeat, till, return, kill, and acute, creating a dependency of some consonant allophones and stress. The rhythm of the phrase level and the stress patterns of words affect the ways sounds change in connected speech (e.g., the phoneme /t/ as realized in city, nature, button, and “can’t you’” are not the phonetic sound [t] in North American English). Pronunciation of the individual segments, in other words, is dependent upon where they occur in words, which are in turn affected by where they occur within a phrase. Prominence occurs on syllables that are emphasized through a combination of pitch, syllable duration (a rhythmic feature), and loudness. Prominence typically (but not always) occurs on the stressed syllable of the prominent word, connecting prominence to word stress. Prominence results in segments that are pronounced with particular clarity and precision. The prominent syllable in a phrase is often marked by pitch, and is the beginning of the final pitch contour in the phrase – that is, its intonation.

Figure 2.1 Pronunciation features related to intelligibility

Word-Level Features

Word-level features include segmentals (vowels and consonants) and word stress (Figure 2.2). They also include consonant clusters, a type of segmental which may, when mispronounced, have an effect on syllable structure. This introduction is provided to define how segmentals and word stress are related to intelligibility and comprehensibility.

Segmentals include approximately forty phonemes for most varieties of English (around twenty-four consonant phonemes and fourteen or more vowel phonemes). The phonemes mask the number of sounds that English uses, since phonemes often have multiple well-known allophones that are important for pronunciation teaching. For example, English regularly employs a glottal stop ([ʔ]) before vowel initial words (e.g., I → [ʔaɪ]) or as an allophone for /t/ before a final nasal (e.g., button –[bʌʔn̩]). Other well-known allophones include aspirated voiceless stops and affricates in pill/till/kill/chill, the dark (velarized) /l/ in all (as opposed to the light /l/ in word initial position, e.g., lap), the flapped /t/ in city, the now increasingly rare voiceless labial-velar fricative [ʍ] in which (sounds like [hw]), and many others. For vowels, allophones are almost too numerous to count, as vowel quality often shifts noticeably in the presence of nasals, before /ɹ/ or dark /l/, before /g/, and in unstressed syllables.

Regarding word stress, English is a free-stress language in that stress is not fixed to a particular syllable. Stress can occur on first, second, or third syllables, but the placement is fixed for individual words. For example, the main stress for a word may fall on the first syllable (COMfort, BEAUtiful), the second (caNOE, rePULsive), the third (referENdum, questionnAIRE), etc. Stressed syllables typically have greater segmental clarity, greater syllable duration, and greater intensity than unstressed syllables. When they are in particular discourse contexts, they may also be marked with pitch movement (Ladd & Cutler, Reference Ladd, Cutler, Cutler and Ladd1983)

Both segmentals and word stress are likely to impact intelligibility at the lexical level in that mispronunciations may lead listeners to fail to decode the intended words. This failure may come from identifying other possible words (as happens with minimal pairs) or failing to identify any word that matches the speech signal (as with segments that are distorted). Both consonants and vowels are affected by the stress patterns of words. Mis-stressed words may especially affect the ways that vowels are pronounced in English because of the ubiquity of the unstressed vowel schwa (33 percent of all vowels according to Woods, Reference Woods2005). Schwa is a key perceptual clue to lack of stress in English, and listeners tend to classify full vowels, even in unstressed syllables, as stressed (Fear, Cutler, & Butterfield, Reference Fear, Cutler and Butterfield1995). Because of these interactions, word stress and segmentals are inseparable in their impact on intelligibility (Zielinski, Reference Zielinski2008). An unexpected stress pattern on a word (e.g., FORtune → forTUNE) also may affect the ways that vowels and consonants are produced (e.g., [ˈfɔɹtʃən] versus [fɚˈtʰun]).

Discourse-Level Features

Discourse-level features (Figure 2.3) include suprasegmental features that carry categorical (i.e., phonological) meaning differences. In relation to how listeners may (mis)understand speakers, these suprasegmentals are not likely to cause listeners to misunderstand individual words (making words unintelligible) but are likely to cause listeners to process meaning with greater difficulty (making speech more effortful to understand). Another suprasegmental, word stress, as discussed above, is included as a word-level feature because it is more likely to impact intelligibility (though it may also impact comprehensibility, or ability to process speech, even without a change in vowel quality, as in Slowiaczek [Reference 290Slowiaczek1990]).

Rhythm, the first discourse-level feature, involves at the very least the relative durations of syllables and the timing of syllabic beats. The constructed sentence in (2.1), made up of all single-syllable words, varies between longer, stressed syllables (in CAPS) and shorter, unstressed syllables (in lower case). The stressed words have a pronunciation that will be closer to the citation form, while the unstressed words are prone to simplification (e.g., has is likely to have the [h] deleted or even to be contracted with John).

JOHN has CLIMBED the TREE to GET the CAT that’s been STUCK for a TIME.

In pronunciation teaching, rhythm has often been described in terms of stress timing and syllable timing (e.g., Pike, Reference Pike1945). Stress-timed languages (like English) are asserted to have large durational differences between stressed and unstressed syllables, with relatively equal timing between stresses. Syllable-timed languages are considered to have quite similar durations between syllables and thus timing that is at the level of the syllable rather than at the level of stressed syllables. This well-known formulation is overly simplistic, however, and stress timing and syllable timing are tendencies rather than absolutes (Dauer, Reference Dauer1983). The rhythmic characteristics of many languages remain of interest to researchers, and a wide variety of rhythm metrics have been tested for different L1 speakers (e.g., Low, Grabe, & Nolan, Reference Low, Grabe and Nolan2000), L2 speakers (Yang & Chu, Reference Yang and Chu2016), and L1–L2 comparisons (White & Mattys, Reference White and Mattys2007). However, there is great uncertainty about how well various rhythm metrics actually capture perceived rhythmic differences between languages (Arvaniti, Reference Arvaniti2012).

In English, rhythm and word stress are similar. The discourse level for rhythm in many ways mirrors the word-level rhythm of lexical stress. A major difference is that word stress typically is limited to multi-syllabic words, whereas rhythm includes stress for single-syllable words. In English, for example, content words (e.g., nouns, verbs, adjectives, adverbs, negatives), including those of one syllable, are normally stressed in discourse. Single-syllable function words (e.g., prepositions, auxiliary verbs, pronouns, determiners) are typically unstressed in discourse. Many single-syllable function words are also among the most frequent words in English, helping to contribute to the perception of stress timing.

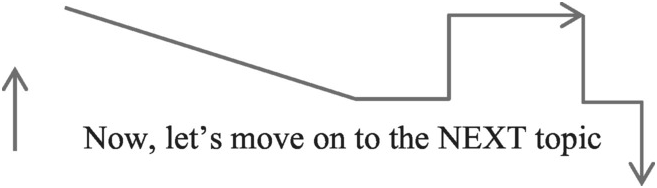

Intonation, the second suprasegmental, includes at least three distinct ways in which meaning is communicated: prominence, tune, and range. The example in (2.2) illustrates these three. For context, imagine that the sentence is spoken in the middle of a lecture.

The initial extra-high pitch range in the example is meant to signal a topic shift, or what has been called a paratone (paragraph tone, see Wichmann, Reference Wichmann2000). Pitch range may also signal gradient meanings related to emotional engagement. NEXT is a prominent syllable with a jump up in pitch to call attention to the importance of the information (in this case, NEXT is likely related to PREVIOUS, the topic(s) that came before.) The last use of pitch is the drop in pitch from NEXT to the end of the utterance. This is the tune. Each of these uses is part of how intonation works in English.

Intonation is the system that uses voice pitch changes to communicate meaning. However, intonation may include more than voice pitch. Prominent syllables are not only higher or lower in pitch than the syllables that precede them, they also have greater duration (a rhythmic feature) and more clearly enunciated segmentals. In addition, the varied intonational categories are closely related. The final prominent syllable in a phrase (the nucleus) is also the beginning of the tune, and both may be pronounced with greater or lesser pitch range.

In regard to comprehensibility, the specific contribution of these suprasegmental features is understudied and needs greater attention. Isaacs and Trofimovich (Reference Isaacs and Trofimovich2012) found that more native-like vowel reduction and pitch contours correlated with better comprehensibility ratings, while pitch range was not significantly related to comprehensibility. Kang (Reference Kang2010) found that pitch range was instead associated with accentedness, but not comprehensibility. Tune, on the other hand, has also been suggested to have an effect on comprehensibility. Pickering (Reference Pickering2001), for example, found that Koreans teaching in English used a greater number of falling tunes than would be expected, and that the relative numbers of rising and falling tunes made their speech more challenging for listeners. (This is an interpretation of Pickering, given that she worked within a model that considers tunes to communicate differences in information structure.)

Related Areas

Comprehensibility and intelligibility are not only associated with pronunciation, but also with other characteristics of spoken language (Figure 2.4) that have an indirect connection to pronunciation. These areas include fluency (Derwing, Munro, & Thomson, Reference Derwing, Munro and Thomson2007), speech rate (Kang, Reference Kang2010), loudness (not typically addressed for L2 pronunciation research), and voice quality (Esling & Wong, Reference Esling and Wong1983; Ladd, Silverman, Tolkmitt, Bergmann, & Scherer, Reference Ladd, Silverman, Tolkmitt, Bergmann and Scherer1985; Munro, Derwing, & Burgess, Reference Munro, Derwing and Burgess2010). Generally, this book will not address these characteristics in detail, because other than fluency and speech rate (which is a component of fluency), these things are more idiosyncratic than the other features.

Figure 2.4 Spoken language features sometimes associated with pronunciation

In particular, research on fluency and speech rate seems to have significant effects on judgments of comprehensibility and accentedness. Pronunciation research on voice quality and its inclusion in teaching materials has never been common, despite its seeming promise in pedagogy (Jones & Evans, Reference Jones and Evans1995; Pennington, Reference Pennington1989). Loudness is an especially important issue in regard to hearing loss, hearing in noise, and the intelligibility of speech for those with cochlear implants. Anecdotally, some L2 learners can become more intelligible simply by speaking at a volume more appropriate to the context (e.g., in a large classroom), but this has not been typically considered important for an L2 pronunciation syllabus.

Fluency is sometimes associated with general proficiency (Fillmore, Reference Fillmore, Fillmore, Kempler and Wang1979) or smoothness of speech (Lennon, Reference Lennon1990). I use fluency to refer to smoothness of speech. However, judgments of fluency are also closely tied to many discrete elements of speech, including the numbers of silent pauses and filled pauses, whether junctures in spoken phrases are grammatically logical, mean length of run, repetitions in speech, and more (Lennon, Reference Lennon1990), as well as the level of automaticity in speaking (Segalowitz, Reference Segalowitz and Riggenbach2000), phonological memory (O’Brien, Segalowitz, Freed, & Collentine, Reference O’Brien, Segalowitz, Freed and Collentine2007), and attention control (Segalowitz, Reference Segalowitz2007). Remedial attention to pronunciation is more likely to be successful when learners are relatively comfortable speaking and listening in the L2 – that is, when they are sufficiently fluent. The development of fluency is clearly an important part of the big picture of L2 speaking development and instruction (Firth, Reference Firth, Avery and Ehrlich1992), and comfortable fluency can help give a global structure to other elements of spoken language, but fluency is not, by itself, part of L2 pronunciation. The fact that it impacts comprehensibility and is critical in communicative language teaching (Rossiter, Derwing, Manimtim, & Thomson, Reference Rossiter, Derwing, Manimtim and Thomson2010) means that, like pronunciation, it should be prioritized in teaching speaking and listening.

Speech rate is predictive of fluency judgments (Cucchiarini, Strik, & Boves, Reference Cucchiarini, Strik and Boves2000; Kormos & Dénes, Reference Kormos and Dénes2004). Fluent speakers can speak at different rates, so a fluent speaker could speak at a slower rate than a speaker who is judged less fluent. Speech rate is typically measured in syllables per second or words per minute. This rate may include all silences and filled pauses, or they may be removed, providing a measure of articulation rate. L2 speakers tend to speak more slowly than L1 natives, and their comprehensibility may be helped by faster speech. However, excessively fast or slow speech is more likely to be rated as less comprehensible and more accented (Derwing & Munro, Reference 273Derwing and Munro2001; Munro & Derwing, Reference Munro and Derwing1998).

Prioritizing: A Summary and Critique of Recommendations