Sustainability of Surgical Site Infection (SSI) Prevention Bundle for Pediatric Cardiothoracic Surgery Patients

- Melissa Campbell, Jennifer Turi, Cheyenne English, Sharah Collier, Vani Sistla, Jessica Seidelman, Becky Smith, Sarah Lewis, Ibukunoluwa Kalu

- Journal: Antimicrobial Stewardship & Healthcare Epidemiology / Volume 4 / Issue S1 / July 2024

- Published online by Cambridge University Press: 16 September 2024, pp. s145-s146

- Article

- Open access

Background: Frequent use of delayed sternal closure and prolonged stays in critical care units contribute to surgical site infections (SSIs) among pediatric patients undergoing cardiothoracic (CT) procedures. Bundled interventions to prevent or reduce SSIs have shown prior success, but limited data exist on the sustainability of these efforts, especially during the Coronavirus Disease 2019 (COVID-19) pandemic. Here, we re-examine SSI rates for pediatric CT procedures after the onset of the pandemic.

Methods: In a single academic center providing regional quaternary care, we created a multidisciplinary CT-surgery SSI Prevention workgroup in response to rising CT SSI rates. Bundle elements focused on daily chlorhexidine bathing, environmental cleaning, monthly room changes, linen management, antimicrobial prophylaxis, and sterile technique for bedside and operating room procedures. CDC surveillance definitions were used to identify superficial, deep, or organ space SSIs. To assess the bundle's sustainability, we compared SSI rates during years affected by the COVID-19 pandemic (2021–2023, period 2) with pre-pandemic rates (2017–2019, period 1). Data from 2020 were excluded to account for bundle implementation, pandemic restrictions, and a minor decrease in surgical volumes. Rates were calculated as SSI cases per 100 procedures. Mean rates across the two periods were compared using paired t-tests (Stata/SE version 14.2).

Results: Excluding 2020, the average SSI rate per 100 CT procedures increased from 1.07 in period 1 to 1.56 in period 2 (p = 0.55). Concurrently, the average SSI rate per 100 CT procedures with delayed closure increased from 1.49 in period 1 to 1.97 in period 2 (p = 0.67). Figure 1 shows SSI rates and procedure counts for 2017–2023. Coagulase-negative staphylococci most frequently caused SSIs in period 1, while methicillin-susceptible Staphylococcus aureus (MSSA) was most frequently identified in period 2. During period 2, estimated compliance with the SSI prevention bundle remained stable, reaching 95% for pre-operative chlorhexidine baths and use of appropriate antimicrobial prophylaxis. Monthly room changes with dedicated environmental cleaning reached 100% compliance.

Conclusion: Despite staffing shortages and resource limitations (e.g., discontinuation of contact isolation for MRSA colonization) during the COVID-19 pandemic, SSI rates for pediatric CT surgeries showed only a slight, non-statistically significant increase in pandemic-affected years compared with pre-pandemic years. Implementation of bundled interventions and improved surveillance methods may have had a sustained impact on these SSI rates. Reinforcing bundle adherence and identifying additional prevention interventions for the pre-, intra-, and post-operative periods may improve patient outcomes.
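
A minimal sketch of the rate calculation described in the Methods. The abstract does not say whether each period mean is an unweighted average of yearly rates or a pooled rate. This sketch assumes an unweighted mean of yearly rates, and it drops 2020 simply by leaving it out of the year range. The function names and the year-to-counts dictionary are hypothetical, and the example counts are illustrative only, not study data.

```python
from statistics import mean


def ssi_rate_per_100(ssi_cases: int, procedures: int) -> float:
    """SSI cases per 100 procedures for one surveillance year."""
    if procedures <= 0:
        raise ValueError("procedures must be positive")
    return 100.0 * ssi_cases / procedures


def period_mean_rate(yearly_counts: dict, years) -> float:
    """Unweighted mean of yearly rates over the given years (an assumption).

    yearly_counts maps year -> (ssi_cases, procedures); any year not in
    `years` (for example, an excluded year) is ignored.
    """
    return mean(ssi_rate_per_100(*yearly_counts[y]) for y in years)


# Illustrative counts only, not the study's data.
counts = {2017: (2, 200), 2018: (3, 150), 2019: (1, 100), 2020: (9, 10)}
period_1 = period_mean_rate(counts, range(2017, 2020))  # 2017-2019 only
```

A pooled alternative, 100 × total cases / total procedures, would weight each year by its surgical volume. The two approaches can differ when yearly volumes vary.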

Neurosurgical Operative Cancellations in Canada: A Multicentre Retrospective Cohort Study

- Mark A. MacLean, Amit R. Persad, Nicole R. Coote, Dilakshan Srikanthan, Michael A. Rizzuto, Jonathan Chainey, Taylor Duda, Matthew E. Eagles, Shannon Hart, Jessica Jung, Michelle M. Kameda-Smith, Melissa Lannon, Eric Toyota, Nicolas Sader, Sean Christie

- Journal: Canadian Journal of Neurological Sciences, First View

- Published online by Cambridge University Press: 17 May 2024, pp. 1-7

- Article

- Open access

Introduction:

Operative cancellations adversely affect patient health and impose resource strain on the healthcare system. Here, our objective was to describe neurosurgical cancellations at five Canadian academic institutions.

Methods: The Canadian Neurosurgery Research Collaborative performed a retrospective cohort study capturing neurosurgical procedure cancellation data at five Canadian academic centres between January 1, 2014, and December 31, 2018. Demographics, procedure type, reason for cancellation, admission status and case acuity were collected. Cancellation rates were compared by demographic and procedural characteristics and between centres.

Results: Overall, 7,734 cancellations were captured across the five sites. Mean age of the aggregate cohort was 57.1 ± 17.2 years. The overall procedure cancellation rate was 18.2%. The five-year neurosurgical operative cancellation rate differed between Centres 1 and 2 (Centre 1: 25.9%; Centre 2: 13.0%; p = 0.008). Female patients experienced procedural cancellation less frequently. Elective, outpatient and spine procedures were cancelled more often. Reasons for cancellation included surgeon-related factors (28.2%), cancellation for a higher-acuity case (23.9%), patient condition (17.2%), other factors (17.0%), resource availability (7.0%), operating room running late (6.4%) and anaesthesia-related factors (0.3%). When clustered, the reason for cancellation was patient-related in 17.2%, staffing-related in 28.5% and operational or resource-related in 54.3% of cases.

Conclusions: Neurosurgical operative cancellations were common and were most often due to operational or resource-related factors. Elective, outpatient and spine procedures were cancelled more often. These findings highlight areas for optimizing efficiency and for targeted quality improvement initiatives.
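
The clustered percentages can be checked against the individual reasons. The abstract does not list which reasons fall into each cluster. The mapping below is therefore an assumption, chosen because it reproduces the reported 17.2%, 28.5% and 54.3% exactly; in particular, it places "other factors" in the operational cluster. The dictionary keys and the function name are hypothetical.

```python
# Percentages of all cancellations by reason, as reported in the Results.
REASON_PCT = {
    "surgeon-related": 28.2,
    "higher-acuity case": 23.9,
    "patient condition": 17.2,
    "other": 17.0,
    "resource availability": 7.0,
    "operating room running late": 6.4,
    "anaesthesia-related": 0.3,
}

# Assumed reason -> cluster mapping (inferred from the reported totals,
# not stated by the authors).
CLUSTER_OF = {
    "patient condition": "patient",
    "surgeon-related": "staffing",
    "anaesthesia-related": "staffing",
    "higher-acuity case": "operational/resource",
    "other": "operational/resource",
    "resource availability": "operational/resource",
    "operating room running late": "operational/resource",
}


def cluster_percentages(reason_pct: dict, cluster_of: dict) -> dict:
    """Sum reason-level percentages into clusters."""
    totals: dict = {}
    for reason, pct in reason_pct.items():
        cluster = cluster_of[reason]
        totals[cluster] = totals.get(cluster, 0.0) + pct
    # Round to one decimal, matching the precision of the reported values.
    return {cluster: round(value, 1) for cluster, value in totals.items()}
```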

A bacteriophage-based validation of a personal protective equipment doffing procedure to be used with high-consequence pathogens

- Brandon A. Berryhill, Kylie B. Burke, Andrew P. Smith, Jill S. Morgan, Jessica Tarabay, Josia Mamora, Jay B. Varkey, Joel M. Mumma, Colleen S. Kraft

- Journal: Infection Control & Hospital Epidemiology, First View

- Published online by Cambridge University Press: 06 May 2024, pp. 1-7

- Article

- Open access

Objective:

To determine whether the high-level personal protective equipment used in treating high-consequence infectious diseases is effective at stopping the spread of pathogens to healthcare personnel (HCP) during doffing.

Background: Personal protective equipment (PPE) is fundamental to the safety of HCPs. HCPs treating patients with high-consequence infectious diseases use several layers of PPE, forming complex protective ensembles. With high-containment PPE, step-by-step procedures are often used for donning and doffing to minimize contamination risk to the HCP, but these procedures are rarely empirically validated and instead rely on following infection prevention best practices.

Methods: A doffing protocol video for a high-containment PPE ensemble was evaluated to determine potential contamination pathways. These potential pathways were tested using fluorescence and genetically marked bacteriophages.

Results: The experiments revealed that existing protocols permit contamination pathways that allow transmission of bacteriophages to HCPs. Updates to the doffing protocol were generated based on the discovered contamination pathways. The updated doffing protocol eliminated the movement of viable bacteriophages from the outside of the PPE to the skin of the HCP.

Conclusions: Our results illustrate the need for quantitative, scientific investigations of infection prevention practices, such as doffing PPE.

Comparative epidemiology of hospital-onset bloodstream infections (HOBSIs) and central line-associated bloodstream infections (CLABSIs) across a three-hospital health system

- Jay Krishnan, Erin B. Gettler, Melissa Campbell, Ibukunoluwa C. Kalu, Jessica Seidelman, Becky Smith, Sarah Lewis

- Journal: Infection Control & Hospital Epidemiology, First View

- Published online by Cambridge University Press: 20 March 2024, pp. 1-7

- Article

- Open access

Objective:

To evaluate the comparative epidemiology of hospital-onset bloodstream infection (HOBSI) and central line-associated bloodstream infection (CLABSI).

Design and Setting: Retrospective observational study of HOBSI and CLABSI across a three-hospital healthcare system from January 1, 2017, to December 31, 2021.

Methods: HOBSIs were identified as any positive blood culture with a non-commensal organism on or after hospital day 3. CLABSIs were identified using National Healthcare Safety Network (NHSN) criteria. We performed a time-series analysis to compare temporal trends in HOBSI and CLABSI incidence. Because HOBSI and CLABSI are not mutually exclusive, we used univariable and multivariable regression analyses to compare demographics, risk factors, and outcomes between non-CLABSI HOBSI and CLABSI.

Results: HOBSI incidence increased over the study period (IRR 1.006 HOBSI/1,000 patient days; 95% CI 1.001–1.012; P = .03), while no change in CLABSI incidence was observed (IRR .997 CLABSIs/1,000 central line days; 95% CI .992–1.002; P = .22). Differing demographic, microbiologic, and risk factor profiles were observed between CLABSIs and non-CLABSI HOBSIs. Multivariable analysis found lower odds of mortality among patients with CLABSIs when adjusted for covariates that approximate severity of illness (OR .27; 95% CI .11–.64; P < .01).

Conclusions: HOBSI incidence increased over the study period without a concurrent increase in CLABSI in our study population. Furthermore, risk factor and outcome profiles differed between CLABSI and non-CLABSI HOBSI, suggesting that these metrics differ in important ways that should be considered if HOBSI is adopted as a quality metric.
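
The event definitions in the Methods can be expressed as a small classifier. This sketch makes three assumptions: each record is already a positive blood culture, admission day counts as hospital day 1, and NHSN CLABSI status has been determined separately. The `BloodCulture` record and its fields are hypothetical; the study's actual data pipeline is not described.

```python
from dataclasses import dataclass


@dataclass
class BloodCulture:
    """A positive blood culture event (hypothetical record layout)."""
    hospital_day: int            # assumption: admission day is day 1
    organism_is_commensal: bool
    meets_nhsn_clabsi: bool      # assumed to come from a separate NHSN review


def is_hobsi(event: BloodCulture) -> bool:
    """Non-commensal positive blood culture on or after hospital day 3."""
    return (not event.organism_is_commensal) and event.hospital_day >= 3


def comparison_group(event: BloodCulture) -> str:
    """Assign an event to the groups compared in the abstract.

    CLABSI is checked first: HOBSI and CLABSI overlap, and the comparison
    group is specifically non-CLABSI HOBSI.
    """
    if event.meets_nhsn_clabsi:
        return "CLABSI"
    if is_hobsi(event):
        return "non-CLABSI HOBSI"
    return "neither"
```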

53 2-Back Performance Does Not Differ Between Cognitive Training Groups in Older Adults Without Dementia

- Nicole D Evangelista, Jessica N Kraft, Hanna K Hausman, Andrew O’Shea, Alejandro Albizu, Emanuel M Boutzoukas, Cheshire Hardcastle, Emily J Van Etten, Pradyumna K Bharadwaj, Hyun Song, Samantha G Smith, Steven DeKosky, Georg A Hishaw, Samuel Wu, Michael Marsiske, Ronald Cohen, Gene E Alexander, Eric Porges, Adam J Woods

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 360-361

- Article

Objective:

Cognitive training is a non-pharmacological intervention aimed at improving cognitive function in one or more domains. Although the underlying mechanisms of cognitive training and transfer effects are not well characterized, cognitive training is thought to facilitate neural plasticity that enhances cognitive performance. Indeed, the Scaffolding Theory of Aging and Cognition (STAC) proposes that cognitive training may enhance the ability to engage in compensatory scaffolding to meet task demands and maintain cognitive performance. We therefore evaluated the effects of cognitive training on working memory performance in older adults without dementia. This study is a first step toward elucidating non-pharmacological intervention effects on compensatory scaffolding in older adults.

Participants and Methods: Forty-eight participants were recruited for a Phase III randomized clinical trial (Augmenting Cognitive Training in Older Adults [ACT]; NIH R01AG054077) conducted at the University of Florida and the University of Arizona. Participants across sites were randomly assigned to complete cognitive training (n=25) or an education training control condition (n=23). Both conditions were completed in 60 sessions over 12 weeks, for 40 hours total. The education training control condition involved viewing educational videos produced by the National Geographic Channel. Cognitive training was completed using the Posit Science BrainHQ training program, which included 8 cognitive training paradigms targeting attention/processing speed and working memory. All participants also completed demographic questionnaires, cognitive testing, and an fMRI 2-back task at baseline and after the 12-week intervention.

Results: Repeated measures analysis of covariance (ANCOVA), adjusted for training adherence, transcranial direct current stimulation (tDCS) condition, age, sex, years of education, and Wechsler Test of Adult Reading (WTAR) raw score, revealed a significant 2-back by training group interaction (F[1,40]=6.201, p=.017, η²=.134). Examination of simple main effects revealed baseline differences in 2-back performance (F[1,40]=.568, p=.455, η²=.014). After controlling for baseline performance, training group differences in 2-back performance were no longer statistically significant (F[1,40]=1.382, p=.247, η²=.034).

Conclusions: After adjusting for baseline performance differences, which suggest that randomization did not adequately balance participants across groups, there were no significant training group differences in 2-back performance. Results may indicate that cognitive training alone is not sufficient to significantly improve working memory performance on a near-transfer task. Additional improvement may occur in the next phase of this clinical trial, if tDCS augments the effects of cognitive training and enhances compensatory scaffolding even within this high-performing cohort. Limitations include a highly educated sample with high literacy levels and a small sample size that was not powered for transfer-effect analyses. Future analyses will evaluate the combined effects of cognitive training and tDCS on n-back performance in a larger sample of older adults without dementia.
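
Assuming the reported η² values are partial eta squared, the usual effect size for ANCOVA terms, each one can be recovered from its F statistic and degrees of freedom as F·df_effect / (F·df_effect + df_error). The sketch below applies that formula to the three reported F[1,40] values. The function name is hypothetical.

```python
def partial_eta_squared(f_stat: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared from an F statistic: F*df1 / (F*df1 + df2)."""
    numerator = f_stat * df_effect
    return numerator / (numerator + df_error)


# F values and degrees of freedom as reported in the Results paragraph.
reported = {"interaction": 6.201, "baseline": 0.568, "adjusted group": 1.382}
for label, f_stat in reported.items():
    eta = partial_eta_squared(f_stat, 1, 40)
    print(f"{label}: F[1,40] = {f_stat} -> partial eta^2 = {eta:.3f}")
```

This reproduces the reported .134 and .014. For the adjusted group effect it gives about .033, slightly below the reported .034.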

80 Implications of Body Mass Index on Executive Functioning in Clinically Diagnosed Neurodiverse Children

- Laura A Campos, Sri Vaishnavi Konagalla, Jessica Smith, Jordan Linde, Madison Berl, Chandan Vaidya, Lauren Kenworthy

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 72-73

- Article

Objective:

Childhood obesity is a serious health epidemic affecting the world today. Children who are obese early in life are more likely to remain obese and have an increased risk of poorer health outcomes later in life, such as diabetes and cardiovascular disease. Obesity is also associated with deficits in executive function. Executive function (EF) comprises several distinct but interrelated abilities, including working memory, planning, inhibition, and flexibility. Prior research suggests that obesity drives changes in brain structures that support executive function. Our aim is to examine the relationship between childhood obesity and executive function in children with neurodevelopmental disorders.

Participants and Methods: These data are from an ongoing study of neural and behavioral phenotypes of executive functioning in children with developmental disabilities, primarily Attention-Deficit/Hyperactivity Disorder (ADHD) and Autism Spectrum Disorder (ASD). Only participants with complete BMI and BRIEF data were included in these analyses (n = 184). Of these, 134 (72.8%) were male, 49 (26.6%) were female, and 1 (.5%) was gender nonconforming. Fifty (27.2%) were 8-9 years old, 55 (29.9%) were 10-11 years old, and 80 (43.0%) were 12-13 years old; average age was 11 years. Eleven (6.0%) were underweight, 115 (62.5%) were healthy weight, 29 (15.8%) were overweight, and 29 (15.8%) were obese. Average BMI was 19.0, ranging from 13.2 to 36.3. Of the participants, 106 (57.6%) identified as White, 65 (35.3%) identified as BIPOC (2 Asian, 31 Hispanic/Latinx, 32 Black), and 13 (4.4%) identified as other/unspecified. A total of 114 (61.9%) had a diagnosis of ADHD, ASD, or comorbid ASD and ADHD, and 70 (38.1%) had another diagnosis. Average FSIQ-2 score was 106.98. Parents completed the Behavior Rating Inventory of Executive Function (BRIEF-2), and the Inhibit, Shift, Working Memory (WM), Planning, and Global Executive Composite (GEC) scales were used as dependent measures. BMI (kg/m²) was classified using CDC 2000 growth charts into 4 mutually exclusive categories: underweight, healthy, overweight, and obese. We predicted that higher BMI would be associated with poorer executive function.

Results: A one-way ANOVA revealed a statistically significant difference between BMI groups in Shift scores (F(3,180) = 3.649, p = .014). A Tukey post hoc test revealed more Shift problems in the obese group (74.55 ± 11.7) than in the overweight group (65.79 ± 11.6, p = .026). Differences between the obese group and the underweight (p = .999) and healthy (p = .054) groups were not statistically significant. There was no statistically significant difference in mean T-scores for the Inhibit, WM, Planning, or GEC scales.

Conclusions: Childhood obesity and executive function deficits are significant risk factors for adverse adult health outcomes. In this group of children with neurodevelopmental disorders, obesity was related to elevated executive function T-scores for flexibility (Shift). Future investigation will explore the role of cortical thickness and medication in these data.
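
A minimal sketch of the BMI step described in the Methods. Converting a child's BMI into a BMI-for-age percentile requires the CDC 2000 reference tables for age and sex, which are not reproduced here. The percentile is therefore taken as an input. The cutoffs are the standard CDC ones: below the 5th percentile is underweight, the 5th to below the 85th is healthy weight, the 85th to below the 95th is overweight, and the 95th and above is obese. Function names are hypothetical.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index in kg/m^2."""
    if height_m <= 0:
        raise ValueError("height must be positive")
    return weight_kg / height_m ** 2


def cdc_weight_category(bmi_percentile: float) -> str:
    """Map a CDC BMI-for-age percentile to one of the 4 study categories."""
    if not 0 <= bmi_percentile <= 100:
        raise ValueError("percentile must be between 0 and 100")
    if bmi_percentile < 5:
        return "underweight"
    if bmi_percentile < 85:
        return "healthy"
    if bmi_percentile < 95:
        return "overweight"
    return "obese"
```

The categories are mutually exclusive by construction, because each percentile falls into exactly one half-open interval.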

A cluster of three extrapulmonary Mycobacterium abscessus infections linked to well-maintained water-based heater-cooler devices

- Jessica L. Seidelman, Arthur W. Baker, Sarah S. Lewis, Bobby G. Warren, Aaron Barrett, Amanda Graves, Carly King, Bonnie Taylor, Jill Engel, Desiree Bonnadonna, Carmelo Milano, Richard J. Wallace, Matthew Stiegel, Deverick J. Anderson, Becky A. Smith

- Journal: Infection Control & Hospital Epidemiology / Volume 45 / Issue 5 / May 2024

- Published online by Cambridge University Press: 21 December 2023, pp. 644-650

- Print publication: May 2024

- Article

- Open access

Background:

Various water-based heater-cooler devices (HCDs) have been implicated in nontuberculous mycobacteria (NTM) outbreaks. Rigorous ongoing surveillance for healthcare-associated M. abscessus (HA-Mab), put in place after a prior institutional M. abscessus outbreak, alerted investigators to a cluster of 3 extrapulmonary M. abscessus infections among patients who had undergone cardiothoracic surgery.

Methods: Investigators convened a multidisciplinary team and launched a comprehensive investigation to identify potential sources of M. abscessus in the healthcare setting. Adherence to tap water avoidance protocols during patient care, as well as HCD cleaning, disinfection, and maintenance practices, was reviewed. Relevant environmental samples were obtained. Patient and environmental M. abscessus isolates were compared using multilocus sequence typing and pulsed-field gel electrophoresis. Smoke testing was performed to evaluate the potential for aerosol generation and dispersion during HCD use. The entire HCD fleet was replaced to mitigate continued transmission.

Results: Clinical presentations of case patients and epidemiologic data supported intraoperative acquisition. M. abscessus was isolated from HCDs used on the case patients, and molecular comparison with patient isolates demonstrated clonality. Smoke testing simulated aerosolization of M. abscessus from HCDs during device operation. Since the HCD fleet was replaced, no additional extrapulmonary HA-Mab infections due to the unique clone identified in this cluster have been detected.

Conclusions: Despite adherence to HCD cleaning and disinfection strategies that exceeded the manufacturer's instructions for use, HCDs became colonized with M. abscessus and ultimately transmitted it to 3 patients. Design modifications that better contain aerosols or filter exhaust during device operation are needed to prevent NTM transmission events from water-based HCDs.

2 Higher White Matter Hyperintensity Load Adversely Affects Pre-Post Proximal Cognitive Training Performance in Healthy Older Adults

- Emanuel M Boutzoukas, Andrew O’Shea, Jessica N Kraft, Cheshire Hardcastle, Nicole D Evangelista, Hanna K Hausman, Alejandro Albizu, Emily J Van Etten, Pradyumna K Bharadwaj, Samantha G Smith, Hyun Song, Eric C Porges, Alex Hishaw, Steven T DeKosky, Samuel S Wu, Michael Marsiske, Gene E Alexander, Ronald Cohen, Adam J Woods

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 671-672

- Article

Objective:

Cognitive training has shown promise for improving cognition in older adults. Aging involves a variety of neuroanatomical changes that may affect response to cognitive training. White matter hyperintensities (WMH) are one common age-related brain change, visible on T2-weighted and Fluid Attenuated Inversion Recovery (FLAIR) MRI. WMH are associated with older age, are suggestive of cerebral small vessel disease, and reflect decreased white matter integrity. Higher WMH load is associated with a lower threshold for clinical expression of cognitive impairment and dementia. The effects of WMH on response to cognitive training interventions are relatively unknown. The current study assessed (a) proximal cognitive training performance following a 3-month randomized controlled trial and (b) the contribution of baseline whole-brain WMH load, defined as total lesion volume (TLV), to pre-post proximal training change.

Participants and Methods: Sixty-two healthy older adults aged 65-84 completed either adaptive cognitive training (CT; n=31) or educational training control (ET; n=31) interventions. Participants assigned to CT completed 20 hours of attention/processing speed training and 20 hours of working memory training delivered through the commercially available Posit Science BrainHQ. ET participants completed 40 hours of educational videos. All participants also underwent sham or active transcranial direct current stimulation (tDCS) as an adjunctive intervention, although tDCS was not a variable of interest in the current study. Multimodal MRI scans were acquired during the baseline visit. T1-weighted and T2-weighted FLAIR images were processed using the Lesion Segmentation Tool (LST) for SPM12. The Lesion Prediction Algorithm of LST automatically segmented brain tissue and calculated lesion maps. A lesion threshold of 0.30 was applied to calculate TLV, and TLV was log-transformed to normalize its distribution. Repeated-measures analysis of covariance (RM-ANCOVA) assessed pre/post change in proximal composite (Total Training Composite) and sub-composite (Processing Speed Training Composite, Working Memory Training Composite) measures in the CT group compared with the ET group, controlling for age, sex, years of education, and tDCS group. Linear regression assessed the effect of TLV on post-intervention proximal composite and sub-composite scores, controlling for baseline performance, intervention assignment, age, sex, years of education, multisite scanner differences, estimated total intracranial volume, and binarized cardiovascular disease risk.

Results: RM-ANCOVA revealed two-way group × time interactions: participants assigned to cognitive training showed greater improvement on the proximal composite (Total Training Composite) and sub-composite (Processing Speed Training Composite, Working Memory Training Composite) measures than their ET counterparts. Multiple linear regression showed that higher baseline TLV was associated with lower pre-post change on the Processing Speed Training sub-composite (β = -0.19, p = 0.04) but not on the other composite measures.

Conclusions: These findings demonstrate the utility of cognitive training for improving post-intervention proximal performance in older adults. Additionally, pre-post change in proximal processing speed training appears to be more sensitive to white matter hyperintensity load than working memory training change. These data suggest that TLV may be an important factor to consider when planning processing speed-based cognitive training interventions for remediation of cognitive decline in older adults.
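
LST computes lesion maps and TLV itself. The sketch below only illustrates the thresholding and log steps described in the Methods, and it rests on several assumptions not confirmed by the abstract. First, it takes a flat sequence of voxel-wise lesion probabilities, such as a flattened lesion probability map. Second, it uses a strict `> 0.30` comparison. Third, it applies `log1p`, so that a TLV of zero stays finite; the abstract does not specify which log transform was used. Function names are hypothetical.

```python
import math
from typing import Iterable


def total_lesion_volume_ml(lesion_probs: Iterable[float],
                           voxel_volume_mm3: float,
                           threshold: float = 0.30) -> float:
    """Volume (mL) of voxels whose lesion probability exceeds `threshold`."""
    n_lesion_voxels = sum(1 for p in lesion_probs if p > threshold)
    return n_lesion_voxels * voxel_volume_mm3 / 1000.0  # mm^3 -> mL


def log_tlv(tlv_ml: float) -> float:
    """Log-transform TLV; log1p is an assumption that keeps TLV = 0 finite."""
    return math.log1p(tlv_ml)
```

In this sketch, a voxel exactly at 0.30 is not counted. Whether LST treats the threshold as inclusive is not stated in the abstract.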

1 Task-Based Functional Connectivity and Network Segregation of the Useful Field of View (UFOV) fMRI task

- Jessica N Kraft, Hanna K Hausman, Cheshire Hardcastle, Alejandro Albizu, Andrew O’Shea, Nicole D Evangelista, Emanuel M Boutzoukas, Emily J Van Etten, Pradyumna K Bharadwaj, Hyun Song, Samantha G Smith, Steven T DeKosky, Georg A Hishaw, Samuel Wu, Michael Marsiske, Ronald Cohen, Eric Porges, Adam J Woods

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 606-607

- Article

Objective:

Interventions using a cognitive training paradigm called the Useful Field of View (UFOV) task have been shown to be efficacious in slowing cognitive decline. However, no studies have examined the engagement of functional networks during UFOV task completion. The current study aimed to (a) assess whether regions activated during the UFOV fMRI task were functionally connected and related to task performance (henceforth called the UFOV network), (b) compare connectivity of the UFOV network with 7 resting-state functional connectivity networks in predicting proximal (UFOV) and near-transfer (Double Decision) performance, and (c) explore the relationship between segregation of higher-order networks and UFOV performance.

Participants and Methods: A total of 336 healthy older adults (mean age = 71.6) completed the UFOV fMRI task in a Siemens 3T scanner. UFOV fMRI accuracy was calculated as the number of correct responses divided by the 56 total trials. Double Decision performance was calculated as the average presentation time of correct responses in log ms, with lower scores indicating better processing speed. Structural and functional MRI images were processed using the default preprocessing pipeline in the CONN toolbox. The Artifact Rejection Toolbox was set to a motion threshold of 0.9 mm, and participants were excluded if more than 50% of volumes were flagged as outliers. To assess connectivity of regions associated with the UFOV task, we created 10 spherical regions of interest (ROIs) a priori using the WFU PickAtlas in SPM12. These included the bilateral pars triangularis, supplementary motor area, and inferior temporal gyri, as well as the left pars opercularis, left middle occipital gyrus, right precentral gyrus, and right superior parietal lobule. We used a weighted ROI-to-ROI connectivity analysis to model task-based within-network functional connectivity of the UFOV network and its relationship to UFOV accuracy. We then used weighted ROI-to-ROI connectivity analysis to compare the UFOV network with 7 resting-state networks in predicting UFOV fMRI task performance and Double Decision performance. Finally, we calculated network segregation among higher-order resting-state networks to assess its relationship with UFOV accuracy. All functional connectivity analyses were corrected using a false discovery rate (FDR) threshold of p < 0.05.

Results: ROI-to-ROI analysis showed significant within-network functional connectivity among the 10 a priori ROIs (UFOV network) during task completion (all pFDR < .05). After controlling for covariates, greater within-network connectivity of the UFOV network was associated with better UFOV fMRI performance (pFDR = .008). Among the 7 resting-state networks, greater within-network connectivity of the cingulo-opercular network (CON; pFDR < .001) and the frontoparietal control network (FPCN; pFDR = .014) was associated with higher accuracy on the UFOV fMRI task. Furthermore, only within-network connectivity of the UFOV network was associated with performance on the Double Decision task (pFDR = .034). Finally, after controlling for covariates, no significant relationships between higher-order network segregation and UFOV performance remained (all uncorrected p > 0.05).

Conclusions: To date, this is the first study to assess task-based functional connectivity during completion of the UFOV task. We observed that coherence within 10 a priori ROIs significantly predicted UFOV performance. Additionally, enhanced within-network connectivity of the UFOV network predicted better performance on the Double Decision task, while conventional resting-state networks did not. These findings provide potential targets to optimize the efficacy of UFOV interventions.
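
The scoring, motion-exclusion, and multiple-comparison steps in the Methods can be sketched as follows. The abstract does not say which FDR procedure CONN applied. This code assumes the Benjamini-Hochberg step-up procedure, the most common FDR method, and a per-volume boolean outlier flag as the motion input. All function names are hypothetical, and this is not CONN's implementation.

```python
from typing import List, Sequence

UFOV_TOTAL_TRIALS = 56  # from the Methods


def ufov_accuracy(n_correct: int, n_trials: int = UFOV_TOTAL_TRIALS) -> float:
    """Proportion of correct responses on the UFOV fMRI task."""
    return n_correct / n_trials


def exclude_for_motion(outlier_flags: Sequence[bool]) -> bool:
    """True if more than 50% of volumes were flagged as motion outliers."""
    return sum(outlier_flags) > 0.5 * len(outlier_flags)


def benjamini_hochberg(p_values: Sequence[float], q: float = 0.05) -> List[bool]:
    """Benjamini-Hochberg step-up procedure; True marks a significant test."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Largest rank k (1-based) whose sorted p-value satisfies p_(k) <= k/m * q.
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            k_max = rank
    # Every test ranked at or below k_max is declared significant.
    significant = [False] * m
    for idx in order[:k_max]:
        significant[idx] = True
    return significant
```

The step-up rule means that a p-value above its own rank threshold can still count as significant if a larger p-value further down the sorted list meets its threshold.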

78 BVMT-R Learning Ratio Moderates Cognitive Training Gains in Useful Field of View Task in Healthy Older Adults

- Cheshire Hardcastle, Jessica N. Kraft, Hanna K. Hausman, Andrew O’Shea, Alejandro Albizu, Nicole D. Evangelista, Emanuel Boutzoukas, Emily J. Van Etten, Pradyumna K. Bharadwaj, Hyun Song, Samantha G. Smith, Eric Porges, Steven DeKosky, Georg A. Hishaw, Samuel Wu, Michael Marsiske, Ronald Cohen, Gene E. Alexander, Adam J. Woods

- Journal: Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press: 21 December 2023, pp. 180-181

- Article

Objective:

Cognitive training using a visual speed-of-processing task, called the Useful Field of View (UFOV) task, reduced dementia risk and decline in activities of daily living at a 10-year follow-up in older adults. However, the level of cognitive gains after cognitive training varies across studies. One potential explanation for this variability is moderating factors. Prior studies suggest that variables moderating cognitive training gains share features with the training task. Learning trials of the Hopkins Verbal Learning Test-Revised (HVLT-R) and Brief Visuospatial Memory Test-Revised (BVMT-R) recruit similar cognitive abilities and have overlapping neural correlates with the UFOV task and speed-of-processing/working memory tasks, and could therefore serve as potential moderators. Exploring moderators of cognitive training gains may boost the efficacy of interventions, improve rigor in the cognitive training literature, and eventually help provide tailored treatment recommendations. This study explored the association between HVLT-R and BVMT-R learning and UFOV performance, and assessed whether HVLT-R and BVMT-R learning moderated UFOV improvement after a 3-month speed-of-processing/attention and working memory cognitive training intervention in cognitively healthy older adults.

Participants and Methods: Seventy-five healthy older adults (M age = 71.11, SD = 4.61) were recruited as part of a larger clinical trial through the Universities of Florida and Arizona. Participants were randomized into a cognitive training (n=36) or education control (n=39) group and underwent a 40-hour, 12-week intervention. The cognitive training intervention consisted of practicing 4 attention/speed-of-processing tasks (including the UFOV task) and 4 working memory tasks. The education control intervention consisted of watching 40-minute educational videos. The HVLT-R and BVMT-R were administered at the pre-intervention timepoint as part of a larger neurocognitive battery. The learning ratio was calculated as (trial 3 total - trial 1 total) / (12 - trial 1 total). UFOV performance was measured at pre- and post-intervention timepoints via the POSIT BrainHQ Double Decision Assessment. Multiple linear regressions predicted baseline Double Decision performance from HVLT-R and BVMT-R learning ratios, controlling for study site, age, sex, and education. A repeated measures moderation analysis assessed whether HVLT-R and BVMT-R learning ratios moderated Double Decision change from pre- to post-intervention in the cognitive training and education control groups.

Results: Baseline Double Decision performance was significantly associated with BVMT-R learning ratio (β=-.303, p=.008) but not with HVLT-R learning ratio (β=-.142, p=.238). BVMT-R learning ratio moderated gains in Double Decision performance (p<.01): for each unit increase in BVMT-R learning ratio, training gains decreased by .6173 units. HVLT-R learning ratio did not moderate gains in Double Decision performance (p>.05). No significant moderation effects were found in the education control group.

Conclusions: Better visuospatial learning was associated with faster Double Decision performance at baseline. Those with poorer visuospatial learning improved most on the Double Decision task after training, suggesting that healthy older adults who perform below expectations may show the greatest training gains. Future cognitive training research studying visual speed-of-processing interventions should account for differing levels of visuospatial learning at baseline, as this could impact the magnitude of training outcomes.

6 Adjunctive Transcranial Direct Current Stimulation and Cognitive Training Alters Default Mode and Frontoparietal Control Network Connectivity in Older Adults

- Hanna K Hausman, Jessica N Kraft, Cheshire Hardcastle, Nicole D Evangelista, Emanuel M Boutzoukas, Andrew O’Shea, Alejandro Albizu, Emily J Van Etten, Pradyumna K Bharadwaj, Hyun Song, Samantha G Smith, Eric S Porges, Georg A Hishaw, Samuel Wu, Steven DeKosky, Gene E Alexander, Michael Marsiske, Ronald A Cohen, Adam J Woods

- Journal:

- Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press:

- 21 December 2023, pp. 675-676

- Article

Objective:

Aging is associated with disruptions in functional connectivity within the default mode (DMN), frontoparietal control (FPCN), and cingulo-opercular (CON) resting-state networks. Greater within-network connectivity predicts better cognitive performance in older adults. Therefore, strengthening network connectivity through targeted intervention strategies may help prevent age-related cognitive decline or progression to dementia. Small studies have demonstrated synergistic effects of combining transcranial direct current stimulation (tDCS) and cognitive training (CT) on strengthening network connectivity. However, this association has yet to be rigorously tested on a large scale. The current study leverages longitudinal data from the first-ever Phase III clinical trial of tDCS to examine the efficacy of an adjunctive tDCS and CT intervention in modulating network connectivity in older adults.

Participants and Methods: This sample included 209 older adults (mean age = 71.6) from the Augmenting Cognitive Training in Older Adults multisite trial. Participants completed 40 hours of CT over 12 weeks, comprising 8 attention, processing speed, and working memory tasks. Participants were randomized into active or sham stimulation groups. tDCS was administered during CT daily for 2 weeks and then weekly for 10 weeks. For both stimulation groups, two electrodes in saline-soaked 5 × 7 cm² sponges were placed at F3 (cathode) and F4 (anode) according to the 10-20 system. The active group received 2 mA of current for 20 minutes. The sham group received 2 mA for 30 seconds, then no current for the remaining 20 minutes.
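As a worked check on these stimulation parameters, the nominal current density under each electrode follows from the 2 mA current and the 5 × 7 cm² sponge area. This assumes current spreads uniformly across the sponge. That is a simplification, because the true current distribution under a sponge electrode is not uniform.

$$
J = \frac{I}{A} = \frac{2\ \text{mA}}{5\ \text{cm} \times 7\ \text{cm}} = \frac{2\ \text{mA}}{35\ \text{cm}^2} \approx 0.057\ \text{mA/cm}^2
$$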

Participants underwent resting-state fMRI at baseline and post-intervention. The CONN toolbox was used to preprocess imaging data and conduct region-of-interest-to-region-of-interest (ROI-ROI) connectivity analyses. The Artifact Detection Toolbox, using intermediate settings, identified outlier volumes. Two participants were excluded for having more than 50% of volumes flagged as outliers. ROI-ROI analyses modeled the interaction between tDCS group (active versus sham) and occasion (baseline versus post-intervention connectivity) for the DMN, FPCN, and CON, controlling for age, sex, education, site, and adherence.
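ROI-ROI connectivity of this kind is commonly computed as the Fisher z-transformed Pearson correlation between pairs of ROI time series. The sketch below illustrates that idea in Python with NumPy, together with the 50% outlier-volume exclusion rule described above. It is not the CONN toolbox's implementation. It assumes the time series are already preprocessed and denoised, and the function names are hypothetical.

```python
import numpy as np


def roi_to_roi_fisher_z(ts, outliers=None):
    """Fisher z-transformed Pearson correlations between ROI time series.

    ts: array of shape (n_volumes, n_rois), assumed already preprocessed/denoised.
    outliers: optional boolean array of shape (n_volumes,), True = flagged volume.
    """
    ts = np.asarray(ts, dtype=float)
    if outliers is not None:
        ts = ts[~np.asarray(outliers, dtype=bool)]  # drop flagged volumes
    r = np.corrcoef(ts, rowvar=False)  # columns are ROIs
    np.fill_diagonal(r, 0.0)  # self-connections are not analyzed
    # arctanh is infinite at |r| = 1, so clip before transforming.
    return np.arctanh(np.clip(r, -0.999999, 0.999999))


def too_many_outliers(outliers, max_fraction=0.5):
    """Exclusion rule from the text: more than 50% of volumes flagged."""
    return float(np.mean(np.asarray(outliers, dtype=bool))) > max_fraction
```

Dropping flagged volumes before correlating is one simple way to use outlier flags. Pipelines such as CONN typically model flagged volumes as nuisance regressors during denoising instead, so results from this sketch would differ.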

Results: Compared to sham, the active group demonstrated ROI-ROI increases in functional connectivity within the DMN following intervention (left temporal to right temporal [T(202) = 2.78, pFDR < 0.05] and left temporal to right dorsal medial prefrontal cortex [T(202) = 2.74, pFDR < 0.05]). In contrast, compared to sham, the active group demonstrated ROI-ROI decreases in functional connectivity within the FPCN following intervention (left dorsal prefrontal cortex to left temporal [T(202) = -2.96, pFDR < 0.05] and left dorsal prefrontal cortex to left lateral prefrontal cortex [T(202) = -2.77, pFDR < 0.05]). No significant interactions were detected for CON regions.
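The pFDR values above are p-values corrected for the false discovery rate. The abstract does not name the correction procedure or the family of tests corrected. Assuming the standard Benjamini-Hochberg step-up procedure, adjusted p-values can be sketched in Python as follows. The function name and example p-values are hypothetical.

```python
def benjamini_hochberg(pvals):
    """Return Benjamini-Hochberg adjusted p-values in the original order.

    The adjusted p for the test of rank k (1 = smallest p) among m tests is
    the minimum over j >= k of p_(j) * m / j, capped at 1.
    """
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])  # indices, smallest p first
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so each adjusted value is a running minimum.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical p-values for four connections; "significant" means pFDR < 0.05.
adjusted = benjamini_hochberg([0.01, 0.04, 0.03, 0.20])
significant = [q < 0.05 for q in adjusted]
```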

Conclusions:These findings (a) demonstrate the feasibility of modulating network connectivity using tDCS and CT and (b) provide important information regarding the pattern of connectivity changes occurring at these intervention parameters in older adults. Importantly, the active stimulation group showed increases in connectivity within the DMN (a network particularly vulnerable to aging and implicated in Alzheimer’s disease) but decreases in connectivity between left frontal and temporal FPCN regions. Future analyses from this trial will evaluate the association between these changes in connectivity and cognitive performance post-intervention and at a one-year timepoint.

28 Factor Structure of Conventional Neuropsychological Tests and NIH-Toolbox in Healthy Older Adults

- Kailey Langer, Cheshire Hardcastle, Hanna Hausman, Jessica Kraft, Alejandro Albizu, Nicole Evangelista, Emanuel Boutzoukas, Andrew O’Shea, Emily Van Etten, Samantha Smith, Hyun Song, Pradyumna Bharadwaj, Georg Hishaw, Samuel Wu, Steven DeKosky, Gene Alexander, Eric Porges, Michael Marsiske, Ronald Cohen, Adam Woods

- Journal:

- Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press:

- 21 December 2023, p. 710

- Article

Objective:

The National Institutes of Health Toolbox Cognition Battery (NIH-TCB) is widely used in cognitive aging studies, and prior research has evaluated the dimensional structure and psychometric properties of its measures. The present study addresses a gap in the literature by examining how the NIH-TCB integrates into a battery of traditional clinical neuropsychological measures. It assesses the dimensional structure of NIH-TCB measures together with conventional neuropsychological tests in healthy older adults.

Participants and Methods: Baseline cognitive data were obtained from 327 older adults. The following measures were collected: the NIH-Toolbox cognition battery, Controlled Oral Word Association (COWA) letter and animals tests, Wechsler Test of Adult Reading (WTAR), Stroop Color-Word Interference Test, Paced Auditory Serial Addition Test (PASAT), Brief Visuospatial Memory Test (BVMT), Letter-Number Sequencing (LNS), Hopkins Verbal Learning Test (HVLT), Trail Making Test A&B, and Digit Span. The Hmisc, psych, and GPArotation packages for R were used to conduct exploratory factor analyses (EFA). A 5-factor solution was examined first, followed by a 6-factor solution. Promax rotation was used for both EFA models.

Results: The 6-factor EFA solution is reported here. Results indicated the following 6 factors:

- working memory (Digit Span forward, backward, and sequencing; PASAT trials 1 and 2; NIH-Toolbox List Sorting; LNS)
- speed/executive function (Stroop color naming, word reading, and color-word interference; NIH-Toolbox Flanker, Dimensional Change Card Sort, and Pattern Comparison; Trail Making Test A&B)
- verbal fluency (COWA letters F-A-S)
- crystallized intelligence (WTAR; NIH-Toolbox Oral Reading Recognition and Picture Vocabulary)
- visual memory (BVMT immediate and delayed)
- verbal memory (HVLT immediate and delayed)

COWA animals and NIH-Toolbox Picture Sequencing did not adequately load onto any EFA factor and were excluded from the subsequent confirmatory factor analysis (CFA).

Conclusions:Findings indicate that in a sample of healthy older adults, these collected measures and those obtained through the NIH-Toolbox battery represent 6 domains of cognitive function. Results suggest that in this sample, picture sequencing and COWA animals did not load adequately onto the factors created from the rest of the measures collected. These findings should assist in interpreting future research using combined NIH-TCB and neuropsychological batteries to assess cognition in healthy older adults.

9 Connecting memory and functional brain networks in older adults: a resting state fMRI study

- Jori L Waner, Hanna K Hausman, Jessica N Kraft, Cheshire Hardcastle, Nicole D Evangelista, Andrew O’Shea, Alejandro Albizu, Emanuel M Boutzoukas, Emily J Van Etten, Pradyumna K Bharadwaj, Hyun Song, Samantha G Smith, Steven T DeKosky, Georg A Hishaw, Samuel S Wu, Michael Marsiske, Ronald Cohen, Gene E Alexander, Eric C Porges, Adam J Woods

- Journal:

- Journal of the International Neuropsychological Society / Volume 29 / Issue s1 / November 2023

- Published online by Cambridge University Press:

- 21 December 2023, pp. 527-528

- Article

Objective:

Nonpathological aging has been linked to decline in both verbal and visuospatial memory abilities in older adults. Disruptions in resting-state functional connectivity within well-characterized, higher-order cognitive brain networks have also been linked to poorer memory functioning, both in healthy older adults and in older adults with dementia. However, there is a paucity of research on the association between higher-order functional connectivity and verbal and visuospatial memory performance in the older adult population. The current study examines the association between resting-state functional connectivity within the cingulo-opercular network (CON), frontoparietal control network (FPCN), and default mode network (DMN) and verbal and visuospatial learning and memory in a large sample of healthy older adults. We hypothesized that greater within-network CON and FPCN functional connectivity would be associated with better immediate verbal and visuospatial memory recall. Additionally, we predicted that greater within-network DMN functional connectivity would be associated with better delayed verbal and visuospatial memory recall. This study provides insight into whether within-network CON, FPCN, or DMN functional connectivity is associated with verbal and visuospatial memory abilities in later life.

Participants and Methods: 330 healthy older adults between 65 and 89 years old (mean age = 71.6 ± 5.2) were recruited at the University of Florida (n = 222) and the University of Arizona (n = 108). Participants underwent resting-state fMRI and completed verbal memory (Hopkins Verbal Learning Test - Revised [HVLT-R]) and visuospatial memory (Brief Visuospatial Memory Test - Revised [BVMT-R]) measures. Immediate (total) and delayed recall scores on the HVLT-R and BVMT-R were calculated using each test manual’s scoring criteria. Learning ratios on the HVLT-R and BVMT-R were quantified by dividing the number of stimuli (verbal or visuospatial) learned between the first and third trials by the number of stimuli not recalled after the first learning trial. CONN Toolbox was used to extract average within-network connectivity values for CON, FPCN, and DMN. Hierarchical regressions were conducted, controlling for sex, race, ethnicity, years of education, number of invalid scans, and scanner site.

Results: Greater CON connectivity was significantly associated with better HVLT-R immediate (total) recall (ß = 0.16, p = 0.01), HVLT-R learning ratio (ß = 0.16, p = 0.01), BVMT-R immediate (total) recall (ß = 0.14, p = 0.02), and BVMT-R delayed recall performance (ß = 0.15, p = 0.01). Greater FPCN connectivity was associated with better BVMT-R learning ratio (ß = 0.13, p = 0.04). HVLT-R delayed recall performance was not associated with connectivity in any network, and DMN connectivity was not significantly related to any measure.

Conclusions: Connectivity within the CON demonstrated a robust relationship with different components of memory function, across both verbal and visuospatial domains. In contrast, FPCN connectivity was related only to visuospatial learning, and DMN connectivity was not significantly associated with any memory measure. These data suggest that the CON may be a valuable target in longitudinal studies of age-related memory change. It may also be a possible target for future non-invasive interventions to attenuate memory decline in older adults.

Implementation of a diagnostic stewardship intervention to improve blood-culture utilization in 2 surgical ICUs: Time for a blood-culture change

- Jessica L. Seidelman, Rebekah Moehring, Erin Gettler, Jay Krishnan, Lynn McGugan, Rachel Jordan, Margaret Murphy, Heather Pena, Christopher R. Polage, Diana Alame, Sarah Lewis, Becky Smith, Deverick Anderson, Nitin Mehdiratta

- Journal:

- Infection Control & Hospital Epidemiology / Volume 45 / Issue 4 / April 2024

- Published online by Cambridge University Press:

- 11 December 2023, pp. 452-458

- Print publication:

- April 2024

- Article

- Open access

Objective:

We compared the number of blood-culture events before and after the introduction of a blood-culture algorithm and provider feedback. Secondary objectives were to compare blood-culture positivity and negative safety signals before and after the intervention.

Design: Prospective cohort design.

Setting: Two surgical intensive care units (ICUs): general and trauma surgery, and cardiothoracic surgery.

Patients: Patients aged ≥18 years and admitted to the ICU at the time of the blood-culture event.

Methods: We used an interrupted time series to compare rates of blood-culture events (ie, blood-culture events per 1,000 patient days) before and after the algorithm implementation with weekly provider feedback.
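The outcome in this design is a rate per 1,000 patient days. The arithmetic behind such rates, and a crude pre/post rate ratio, can be sketched in Python as below. The function names and counts are hypothetical. A crude ratio of two period rates is not the same as the incidence rate ratio from an interrupted time-series model, which is estimated by a regression that can also account for pre-existing trends.

```python
def rate_per_1000_patient_days(events: int, patient_days: float) -> float:
    """Events per 1,000 patient days."""
    if patient_days <= 0:
        raise ValueError("patient_days must be positive")
    return 1000.0 * events / patient_days


def crude_rate_ratio(post_events: int, post_days: float,
                     pre_events: int, pre_days: float) -> float:
    """Unadjusted ratio of the postintervention rate to the preintervention rate."""
    return (rate_per_1000_patient_days(post_events, post_days)
            / rate_per_1000_patient_days(pre_events, pre_days))


# Hypothetical counts: 50 events over 500 patient days before, 30 over 600 after.
pre_rate = rate_per_1000_patient_days(50, 500)
post_rate = rate_per_1000_patient_days(30, 600)
ratio = crude_rate_ratio(30, 600, 50, 500)
percent_reduction = (1 - ratio) * 100
```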

Results: The blood-culture event rate decreased from 100 to 55 blood-culture events per 1,000 patient days in the general surgery and trauma ICU (72% reduction; incidence rate ratio [IRR], 0.38; 95% confidence interval [CI], 0.32–0.46; P < .01) and from 102 to 77 blood-culture events per 1,000 patient days in the cardiothoracic surgery ICU (55% reduction; IRR, 0.45; 95% CI, 0.39–0.52; P < .01). We did not observe any differences in average monthly antibiotic days of therapy, mortality, or readmissions between the pre- and postintervention periods.

Conclusions: We implemented a blood-culture algorithm with data feedback in 2 surgical ICUs, and we observed significant decreases in the rates of blood-culture events without an increase in negative safety signals, including ICU length of stay, mortality, antibiotic use, or readmissions.

Implementation of diagnostic stewardship in two surgical ICUs: Time for a blood-culture change

- Jessica Seidelman, Rebekah Moehring, Erin Gettler, Jay Krishnan, Christopher Polage, Margaret Murphy, Rachel Jordan, Sarah Lewis, Becky Smith, Deverick Anderson, Nitin Mehdiratta

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press:

- 29 September 2023, pp. s9-s10

- Article

- Open access

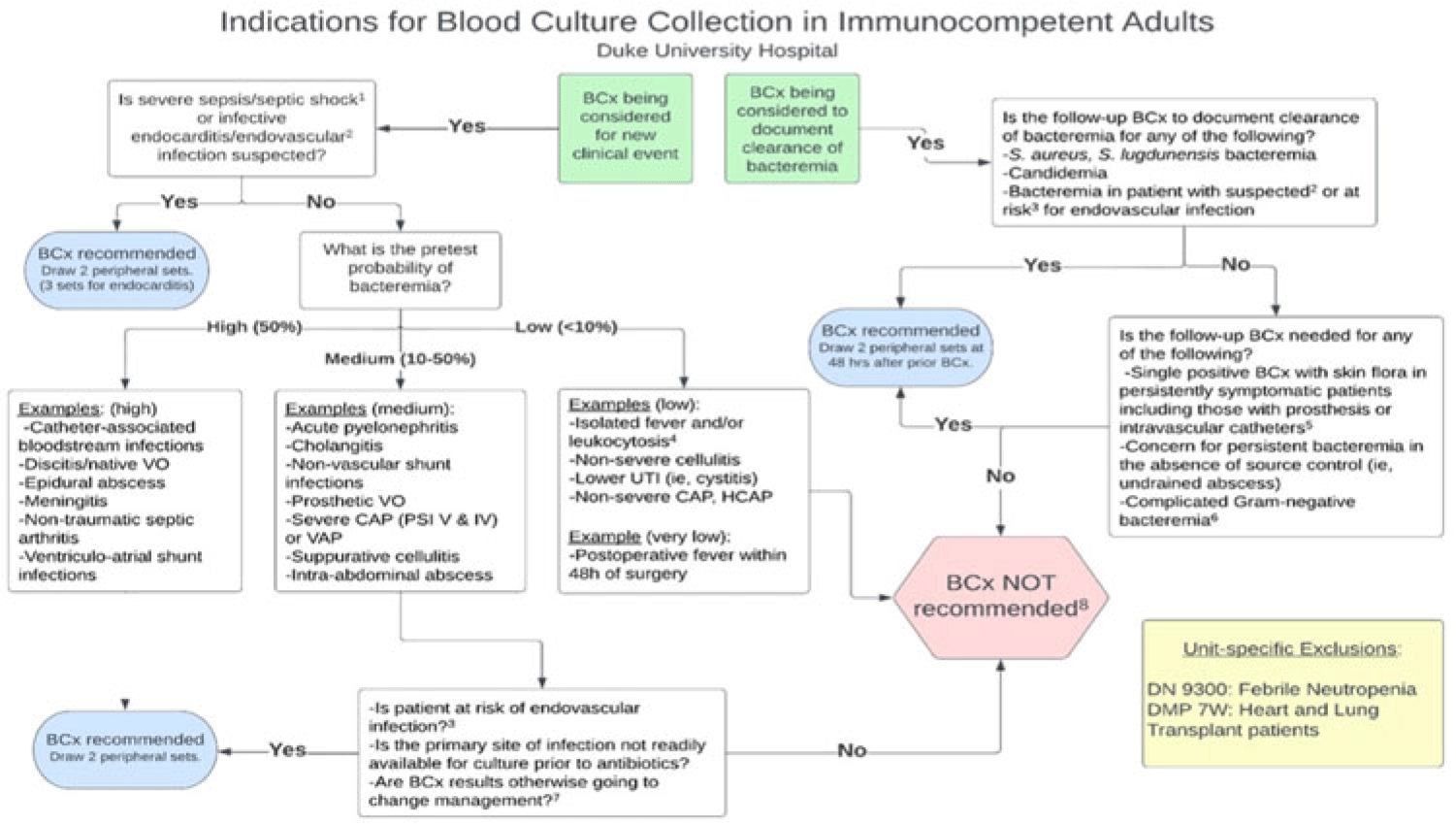

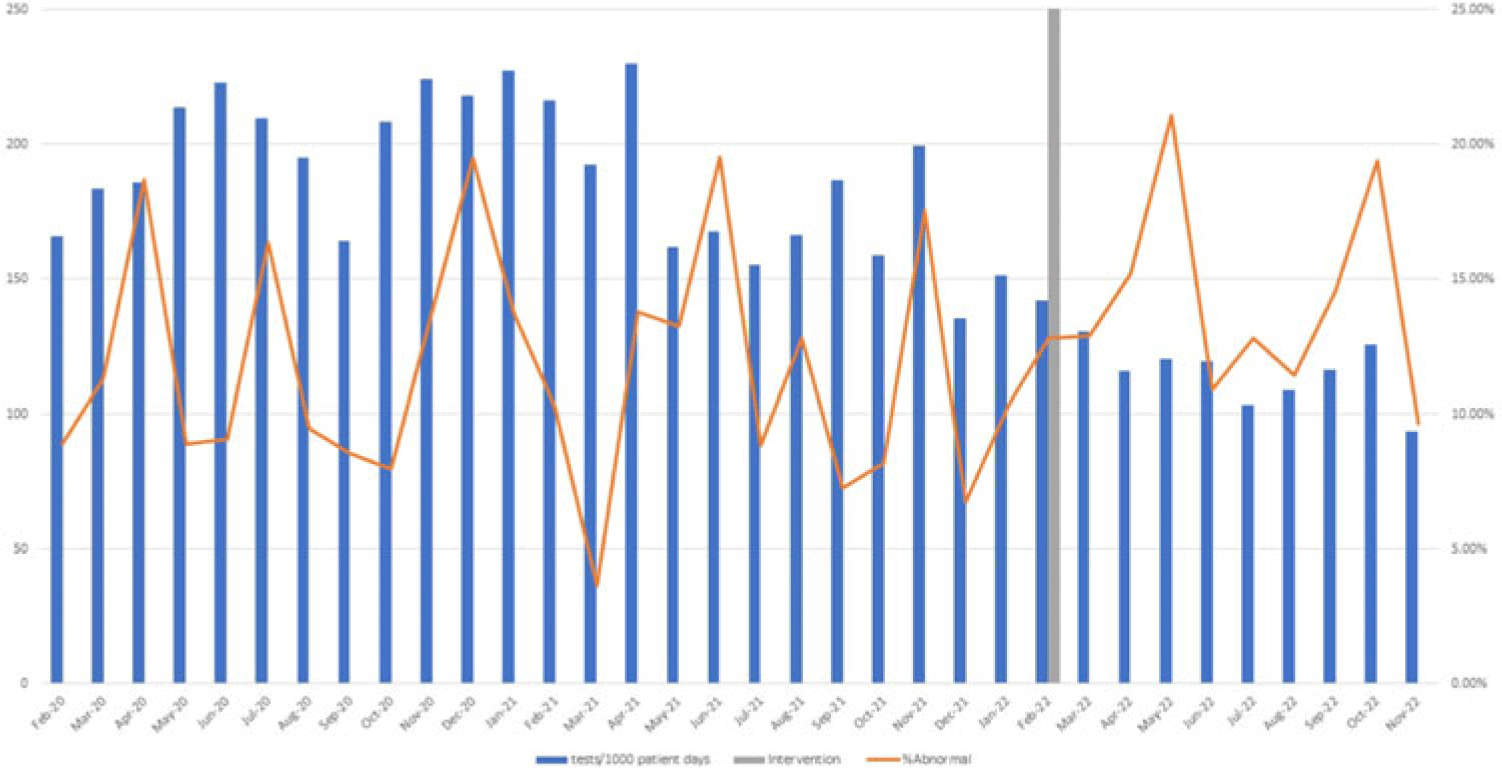

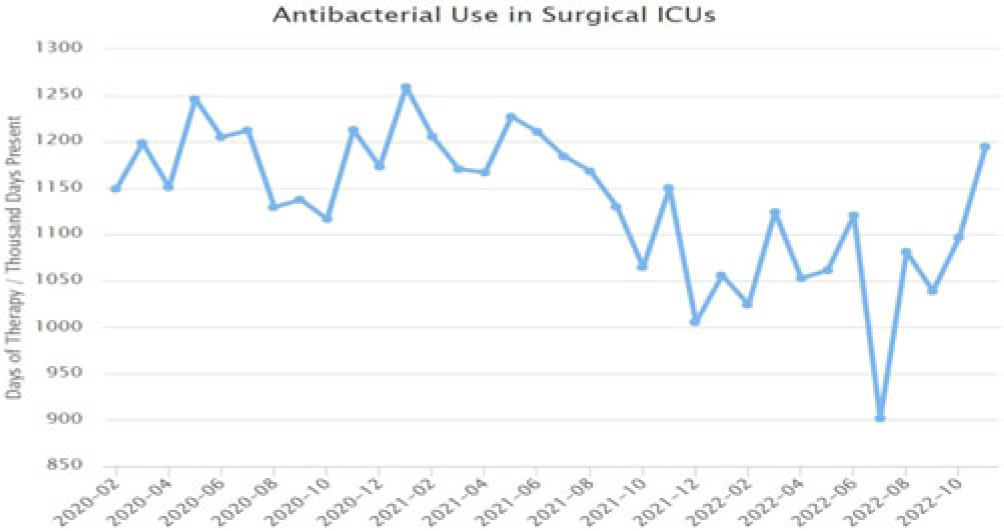

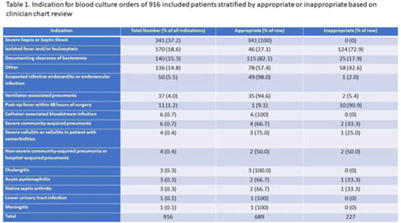

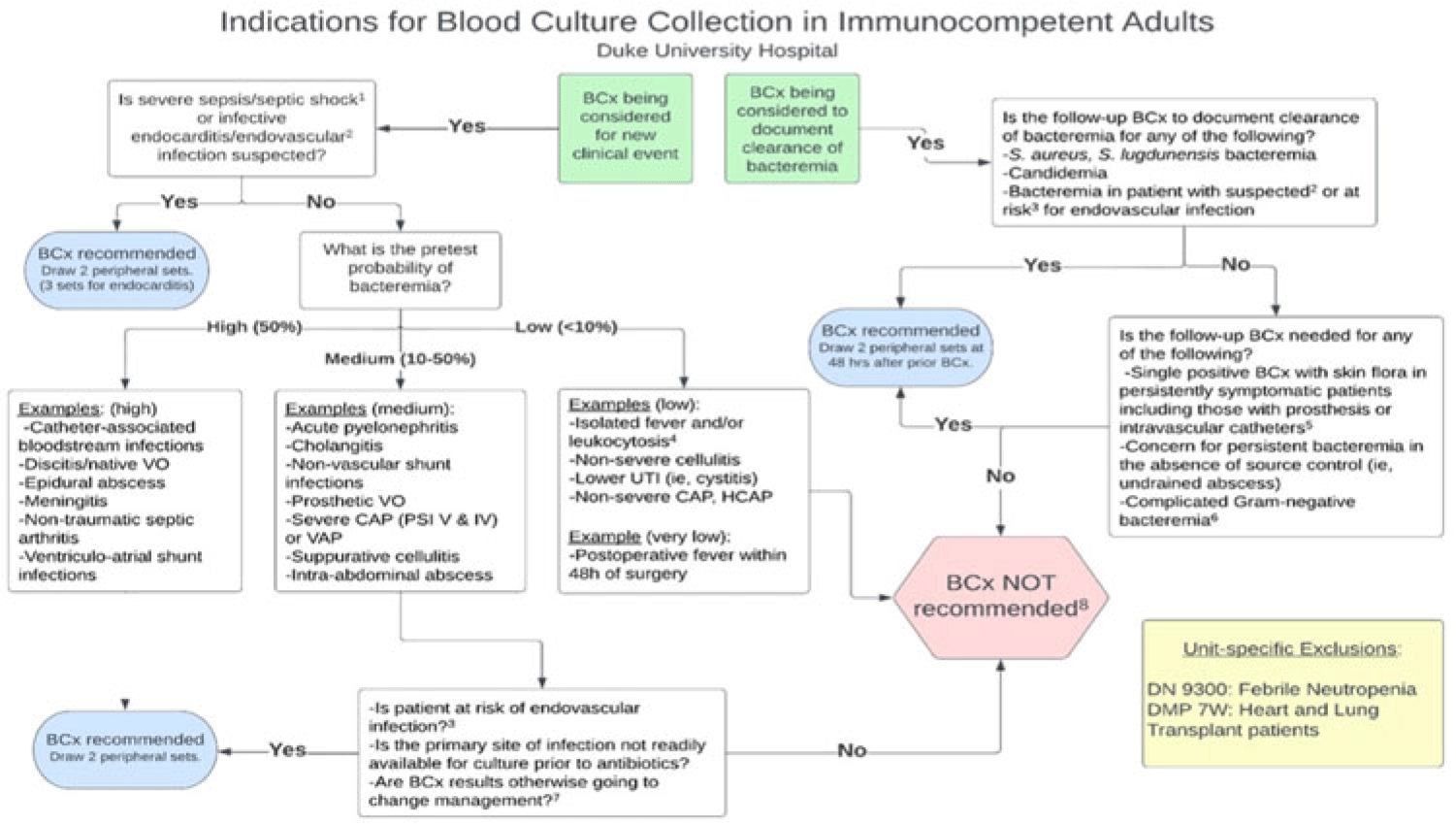

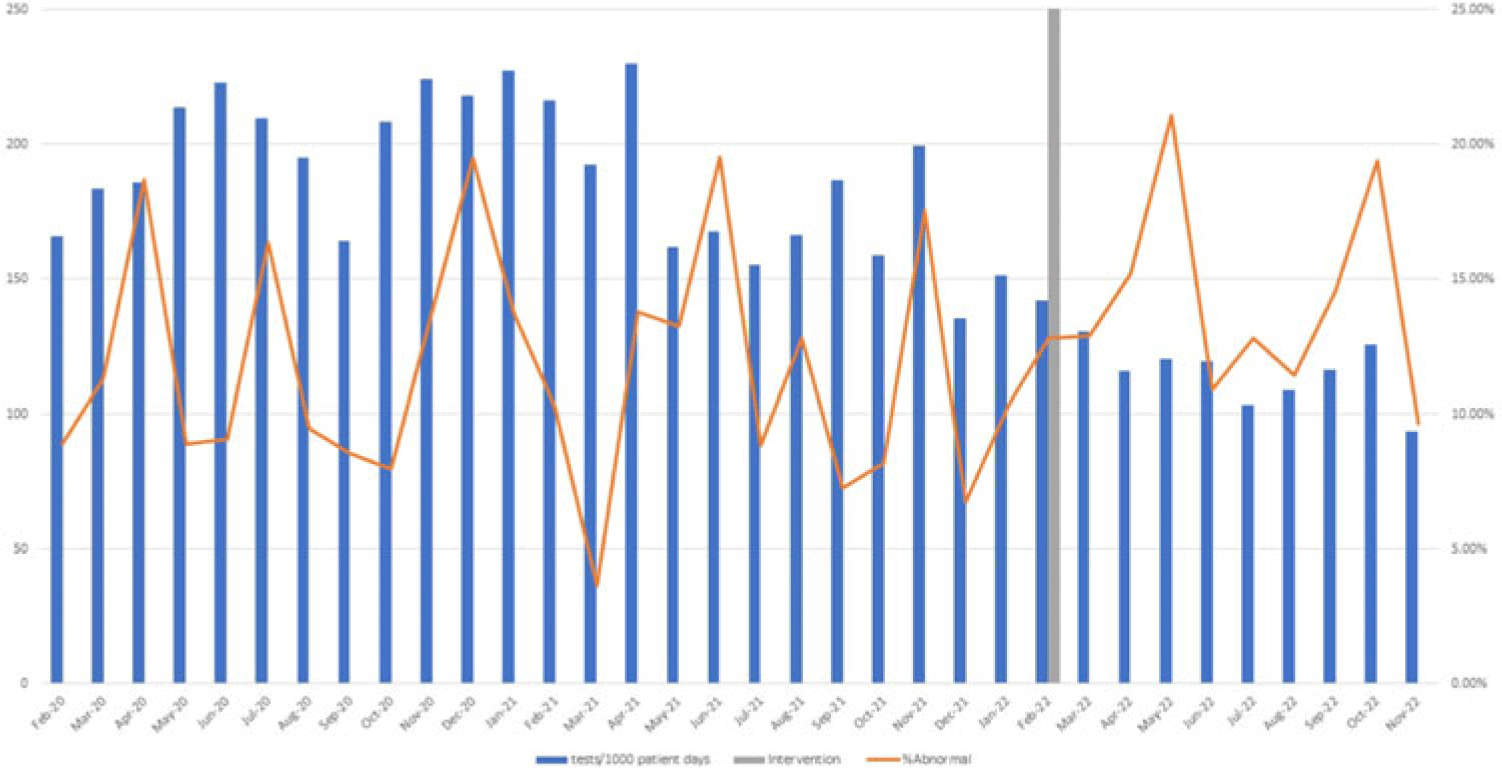

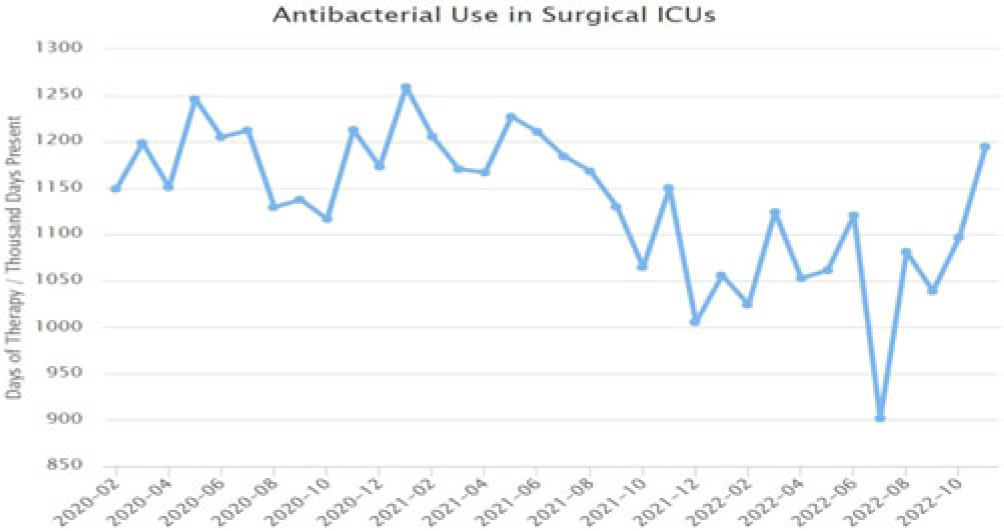

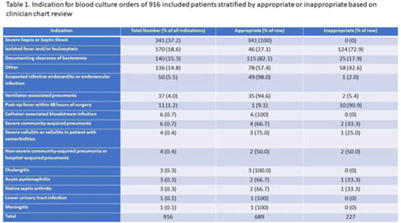

Background: Blood cultures are commonly ordered for patients with low risk of bacteremia. Liberal blood-culture ordering increases the risk of false-positive results, which can lead to increased length of stay, excess antibiotic use, and unnecessary diagnostic procedures. We implemented a blood-culture indication algorithm with data feedback and assessed its impact on ordering volume and percent positivity. Methods: We performed a prospective cohort study from February 2022 to November 2022 using historical controls from February 2020 to January 2022. We introduced the blood-culture algorithm (Fig. 1) in 2 adult surgical intensive care units (ICUs). Each week, clinicians reviewed the charts of eligible patients with blood cultures to determine whether the algorithm was followed and provided feedback to the unit medical directors. We defined a blood-culture event as ≥1 blood culture within 24 hours. We excluded patients who were aged <18 years, had an absolute neutrophil count <500, or were heart or lung transplant recipients at the time of blood-culture review. Results: In total, 7,315 blood-culture events in the preintervention group and 2,506 blood-culture events in the postintervention group met eligibility criteria. The average monthly blood-culture rate decreased from 190 to 142 blood cultures per 1,000 patient days after the algorithm was implemented (P < .01; Fig. 2). The average monthly blood-culture positivity increased from 11.7% to 14.2% (P = .13). Average monthly days of antibiotic therapy (DOT) were lower in the postintervention period than in the preintervention period (2,200 vs 1,940; P < .01; Fig. 3). ICU length of stay did not differ between the preintervention and postintervention periods: 10 days (IQR, 5–18) versus 10 days (IQR, 5–17; P = .63). The in-hospital mortality rate was lower during the postintervention period, but the difference was not statistically significant: 9.24% versus 8.34% (P = .17).
All-cause 30-day mortality was significantly lower during the intervention period: 11.9% versus 9.7% (P < .01). The unplanned 30-day readmission percentage was also significantly lower during the intervention period (10.6% vs 7.6%; P < .01). Over the 9-month intervention, we reviewed 916 blood-culture events in 452 unique patients. Overall, 74.6% of blood cultures followed the algorithm. The most common reasons for ordering blood cultures were severe sepsis or septic shock (37%), isolated fever and/or leukocytosis (19%), and documenting clearance of bacteremia (15%) (Table 1). The most common indication for inappropriate blood cultures was isolated fever and/or leukocytosis (53%). Conclusions: We introduced a blood-culture algorithm with data feedback in 2 surgical ICUs and observed decreases in blood-culture volume without a negative impact on ICU length of stay (LOS) or mortality rate.
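The abstract defines a blood-culture event as ≥1 blood culture within 24 hours but does not say how the window is anchored. One plausible reading starts a new event at the first culture not already covered, then folds in every culture drawn less than 24 hours after that start. It is sketched below in Python. Both the anchoring rule and the function name are assumptions.

```python
from datetime import datetime, timedelta


def group_blood_culture_events(draw_times, window=timedelta(hours=24)):
    """Group one patient's blood-culture draw times into events.

    Assumption: each event is anchored at its first draw, and later draws
    taken less than `window` after that anchor belong to the same event.
    """
    events = []
    for t in sorted(draw_times):
        if events and t - events[-1][0] < window:
            events[-1].append(t)  # within 24 h of the current event's first draw
        else:
            events.append([t])  # start a new event
    return events


# Hypothetical draws at 0, 2, 23, 25, and 50 hours after a reference time.
start = datetime(2022, 2, 1, 8, 0)
draws = [start + timedelta(hours=h) for h in (0, 2, 23, 25, 50)]
events = group_blood_culture_events(draws)
```

The anchoring choice changes the counts. Under a rolling-window reading, where each draw extends the window, the draw at 25 hours would join the first event instead, giving 2 events rather than 3.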

Disclosure: None

Impact of COVID-19 on healthcare-associated infections in Canadian acute-care hospitals: Interrupted time series (2018–2021)

- Anada Silva, Jessica Bartoszko, Joëlle Caye, Kelly Baekyung Choi, Robyn Mitchell, Linda Pelude, Jeannette Comeau, Susy Hota, Jennie Johnstone, Kevin Katz, Stephanie Smith, Kathryn Suh, Jocelyn Srigley

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press:

- 29 September 2023, pp. s112-s113

- Article

- Open access

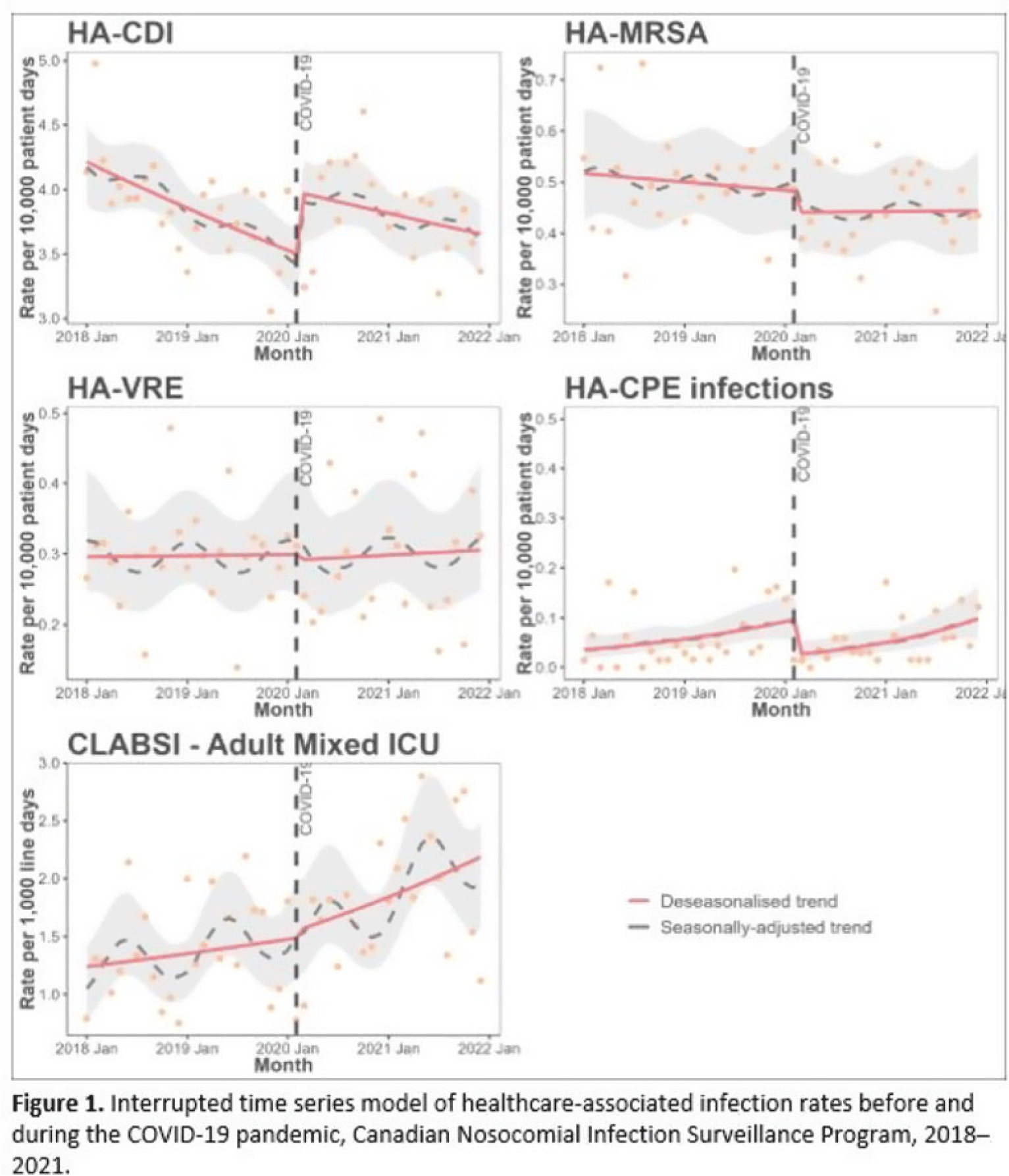

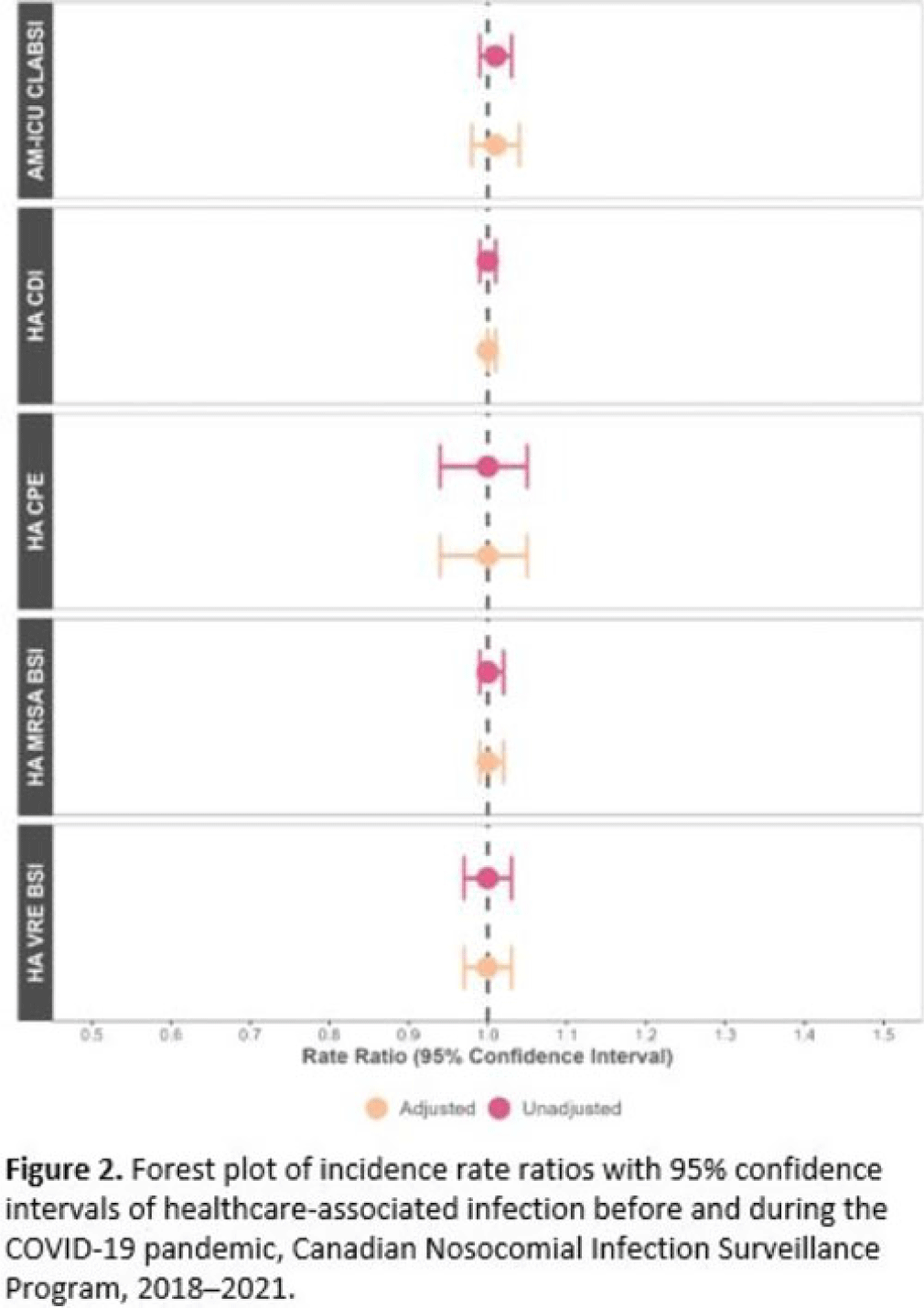

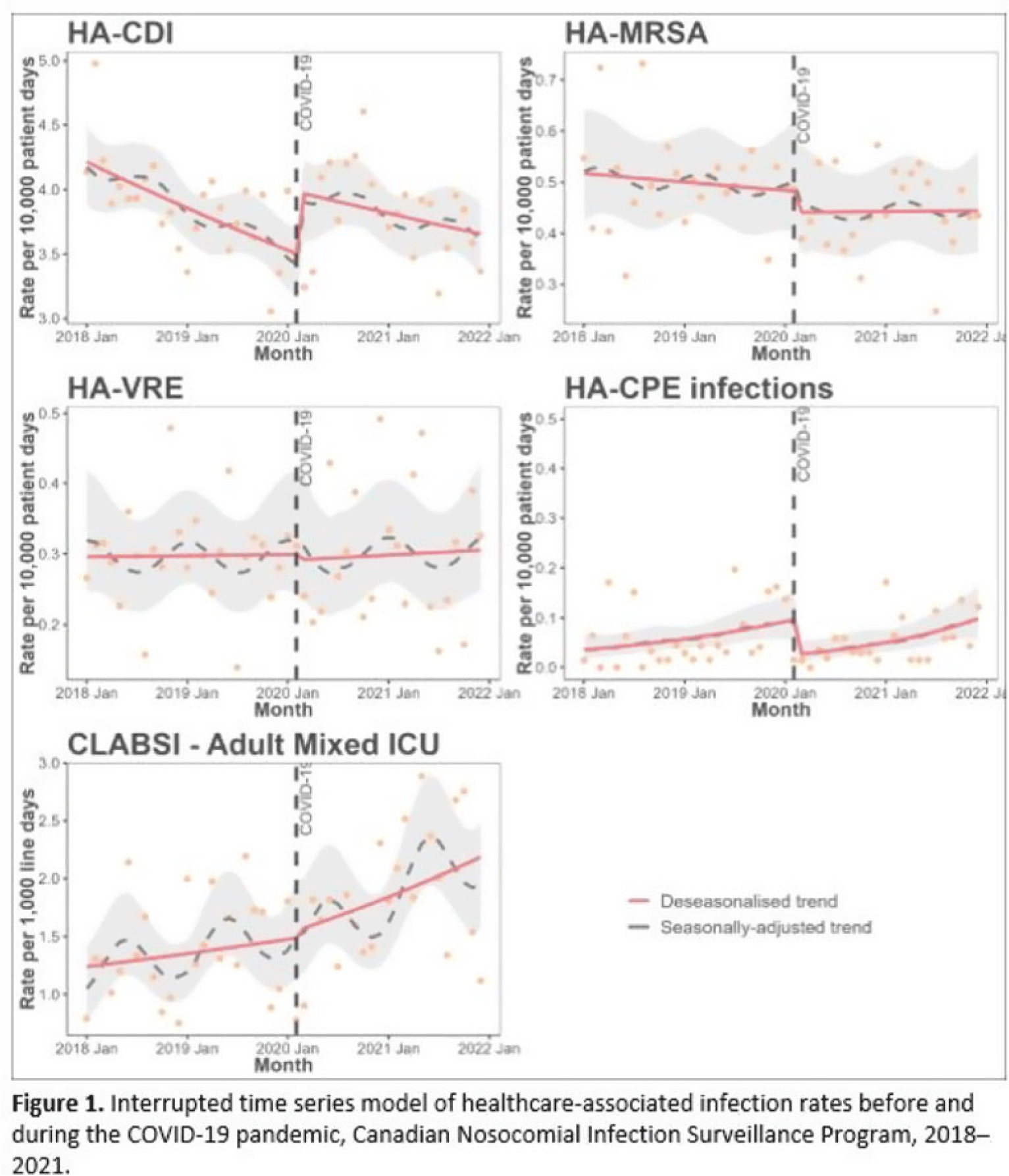

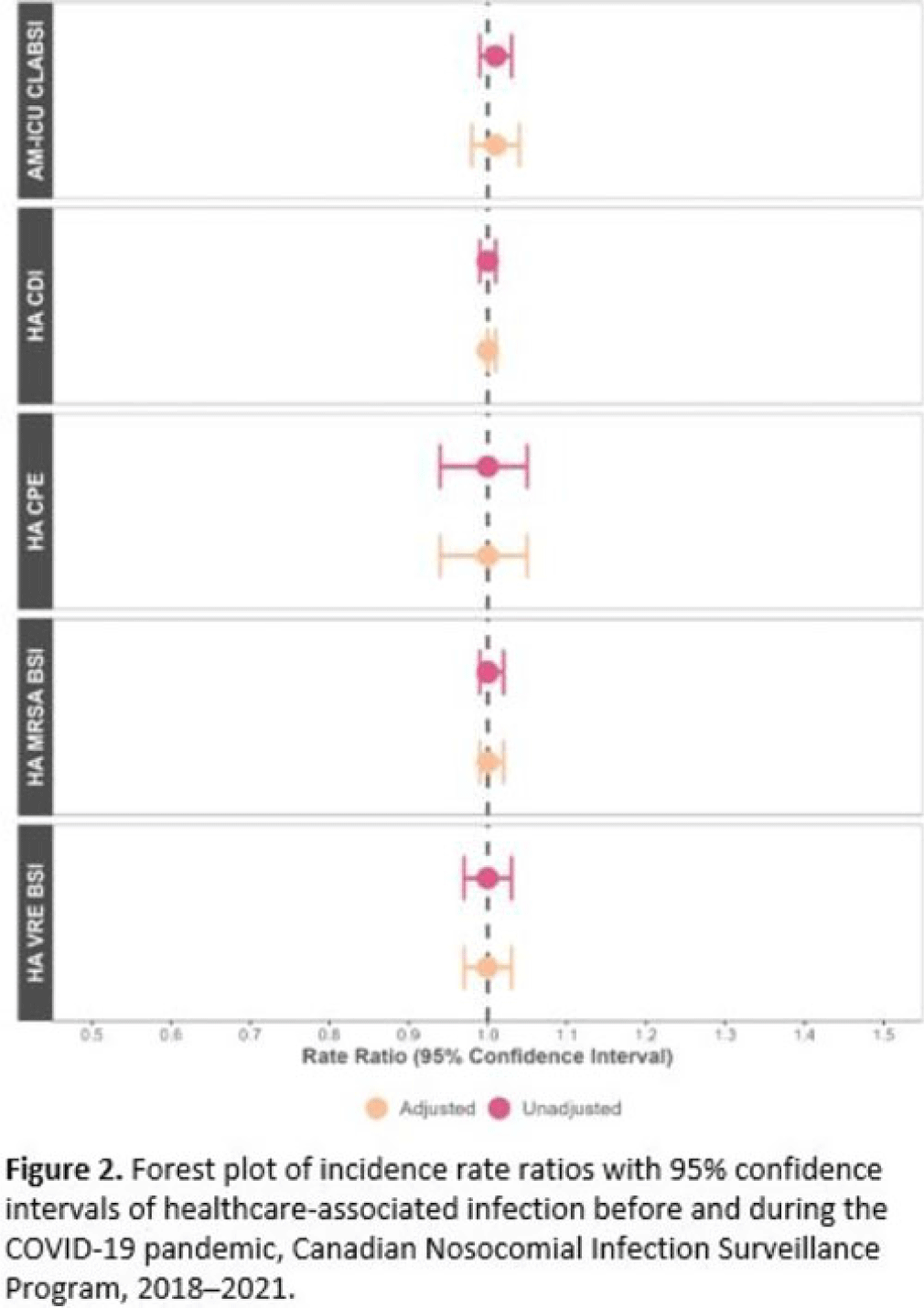

Background: Data regarding the effects of the SARS-CoV-2 (COVID-19) pandemic on healthcare-associated infections (HAIs) in Canadian acute-care hospitals are limited. We examined the impact of the COVID-19 pandemic on HAIs and antimicrobial-resistant organisms in hospitals participating in the Canadian Nosocomial Infection Surveillance Program. Methods: We analyzed 13,406 HAIs collected from January 2018 to December 2021 from 29–64 hospitals participating in the Canadian Nosocomial Infection Surveillance Program (CNISP), using standardized case definitions and questionnaires. These included adult mixed intensive care unit (ICU) central-line–associated bloodstream infections (CLABSIs) and healthcare-associated (HA) Clostridioides difficile infection (CDI), methicillin-resistant Staphylococcus aureus (MRSA) bloodstream infections (BSI), vancomycin-resistant Enterococcus (VRE) BSI, and carbapenemase-producing Enterobacterales (CPE) infections. We used a generalized linear mixed model with a quasi-Poisson distribution to assess step and slope changes in monthly HAI rates between the pre–COVID-19 pandemic period (January 1, 2018–February 29, 2020; 26 time points) and the COVID-19 pandemic period (March 1, 2020–December 31, 2021; 22 time points). Results were reported as incidence rate ratios (IRRs) with 95% confidence intervals (CIs) and adjusted for seasonality, hospital clustering, and hospital characteristics of interest. Results: In the CNISP network, 7,352 (55%) HAIs were reported in the prepandemic period and 6,054 (45%) in the pandemic period. Median patient age was significantly lower during the pandemic period than during the prepandemic period among patients with HA-CDI, HA-MRSA BSI, and adult mixed ICU CLABSIs. For every reported HAI type, more than half of patients were male (range, 52%–65%). The 30-day all-cause in-hospital mortality rate did not significantly change between the prepandemic and pandemic periods for any reported HAI and was highest among HA-VRE BSIs (34%).
Modeling results indicated that the COVID-19 pandemic was associated with an immediate increase in HA-CDI and adult mixed ICU CLABSI rates whereas HA-MRSA BSI, HA-CPE and HA-VRE BSI rates immediately decreased. However, pandemic status did not have a statistically significant lasting impact on monthly rate trends for all reported HAIs after adjusting for seasonality, clustering, and hospital covariates (Fig. 1 and 2). Adjusted IRRs for all HAIs ranged from 1.00 to 1.01 (95% CI, 0.94–0.99 to 1.01–1.05).
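The step and slope changes described in the Methods correspond to a segmented (interrupted time-series) regression. One generic form is shown below as an illustration, not the authors' exact specification. It is written for hospital $h$ and month $t = 1, \dots, 48$, where:

- $P_t = 1$ from March 2020 ($t_0 = 27$) onward and 0 before
- $s(t)$ stands for seasonal terms
- $\mathbf{x}_h$ holds hospital characteristics
- $u_h$ is a hospital random intercept
- the offset uses an assumed exposure such as patient days

$$
\log \mathbb{E}[Y_{ht}] = \beta_0 + \beta_1 t + \beta_2 P_t + \beta_3 (t - t_0) P_t + s(t) + \mathbf{x}_h^{\top}\boldsymbol{\gamma} + u_h + \log(\text{patient days}_{ht}),
\qquad \operatorname{Var}(Y_{ht}) = \phi\,\mathbb{E}[Y_{ht}]
$$

Under this form, $e^{\beta_2}$ is the IRR for the immediate (step) change at the start of the pandemic, and $e^{\beta_3}$ is the IRR for the change in monthly trend (slope). The dispersion parameter $\phi$ is what distinguishes the quasi-Poisson model from a Poisson model.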

Conclusions: Although the COVID-19 pandemic placed a significant burden on the Canadian healthcare system, the immediate impact on monthly rates of HAIs in Canadian acute-care hospitals was not sustained over time. Understanding the epidemiological effects of the COVID-19 pandemic in the context of changing patient populations and clinical and infection control practices is essential to inform the continued management and prevention of HAIs in Canadian acute-care settings.

Disclosures: None

Epidemiology of central-line–associated bloodstream infection mortality in Canadian NICUs before and after 2017

- Maria Spagnuolo, Anada Silva, Jessica Bartoszko, Linda Pelude, Blanda Chow, Jeannette Comeau, Chelsey Ellis, Charles Frenette, Lynn Johnston, Kevin Katz, Joanne Langley, Bonita Lee, Santina Lee, Marie-Astrid Lefebvre, Allison McGeer, Dorothy Moore, Senthuri Paramalingam, Jennifer Parsonage, Donna Penney, Caroline Quach, Michelle Science, Stephanie Smith, Kathryn Suh, Jocelyn Srigley

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue S2 / June 2023

- Published online by Cambridge University Press:

- 29 September 2023, p. s48

- Article

- Open access

Background: The Canadian Nosocomial Infection Surveillance Program (CNISP) observed increased mortality among neonatal intensive care unit (NICU) patients with central-line–associated bloodstream infection (CLABSI) starting in 2017. In this study, we compared NICU patients with CLABSIs before and after 2017, and quantified the impact of epidemiological factors on 30-day survival. Methods: We included 1,276 NICU patients from 8–16 participating CNISP hospitals from the pre-2017 period (2009–2016) and the post-2017 period (2017–2022) using standardized definitions and questionnaires. We used Cox regression modeling to assess the impact of age at date of positive culture, sex, birthweight, CLABSI microorganism, region of the country, and surveillance period (before 2017 vs after 2017) on time to 30-day all-cause mortality from date of positive culture. Gestational age was not available for this analysis. We reported model outputs as hazard ratios with 95% CIs. Results: In total, 769 (60%) NICU CLABSIs were reported in the pre-2017 period and 507 (40%) in the post-2017 period. The 30-day all-cause mortality rate was 8% (n = 100 of 1,276) overall, and significantly higher after 2017 (12%, n = 61 of 507) than before 2017 (5%, n = 39 of 769) (P < .001).

During the post-2017 period, cases were significantly younger: 16 days (IQR, 9–33) versus 21 days (IQR, 11–49) (P = .002). Median days from ICU admission to infection were shorter: 14 (IQR, 8–31) versus 19 (IQR, 10–41) (P < .001). More gram-negative CLABSIs were identified (29% vs 24%; P = .040) and fewer gram-positive CLABSIs were identified (64% vs 72%; P = .006) compared to the pre-2017 period. Mortality was higher in CLABSIs caused by gram-negative bacteria (15%, n = 50 of 328) than gram-positive bacteria (4.4%, n = 39 of 877) (P < .001), and mortality was higher in neonates with birthweight <1,000 g (11%, n = 71 of 673) compared to those weighing ≥1,000 g (5%, n = 28 of 560) (P < .001).

Adjusting for all other factors, survival modeling indicated that NICU CLABSIs identified in the post-2017 period had 2.12 times the hazard of 30-day all-cause mortality compared with those identified before 2017 (hazard ratio, 2.12; 95% CI, 1.23–3.66; P < .006). Patients with a gram-positive bacterium had a hazard ratio of 0.28 (95% CI, 0.12–0.65) for 30-day mortality compared with those with a gram-negative bacterium or fungus (P = .003). In the fully adjusted model, age, sex, and birthweight were not significantly associated with NICU CLABSI survival. Conclusions: NICU patients with CLABSIs had significantly higher all-cause mortality in 2017–2022 than in 2009–2016. Those who acquired gram-positive–associated CLABSIs had improved survival compared with those infected by other organisms. Further work is needed to identify and understand the factors driving the increased mortality among NICU CLABSI patients in 2017–2022.
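The Cox model described in the Methods relates each covariate to the hazard of death within 30 days of the positive culture. The generic form below abbreviates the covariate names for illustration and assumes follow-up is censored at day 30. It gives the hazard for patient $i$ and the hazard ratio for the surveillance period:

$$
h_i(t) = h_0(t)\,\exp\big(\beta_1\,\text{post2017}_i + \beta_2\,\text{age}_i + \beta_3\,\text{sex}_i + \beta_4\,\text{birthweight}_i + \boldsymbol{\beta}_{\text{org}}^{\top}\mathbf{org}_i + \boldsymbol{\beta}_{\text{reg}}^{\top}\mathbf{region}_i\big),
\qquad \text{HR}_{\text{period}} = e^{\beta_1}
$$

A hazard ratio of 2.12 corresponds to $\beta_1 = \ln 2.12 \approx 0.75$. The baseline hazard $h_0(t)$ cancels in the ratio, so the model makes no assumption about its shape. It assumes only that hazards are proportional over the 30-day follow-up.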

Disclosures: None

What Do You Do When You Can Do No More? Limited Resources, Unimaginable Environments, Personal Danger: What Have Previous Disasters Taught Us About Moral and Ethical Challenges?

- Stephanie Smith, Jessica Kuipers

- Journal:

- Disaster Medicine and Public Health Preparedness / Volume 17 / 2023

- Published online by Cambridge University Press:

- 11 April 2023, e373

- Article

Historically, natural and manmade disasters have created many victims and imposed pressures on health-care infrastructure and staff, potentially hampering the provision of patient care and overloading clinician capacity. Throughout history, clinicians have performed heroically, working well beyond their required duty despite limitations and even putting their own health and safety at risk. When clinicians have needed either to physically abandon patients or to consider abandoning active treatment, we have seen extreme hesitancy to do so. They fear that they may be giving up too soon or that undue harm may come to patients, or they feel unsure of the legal or moral burdens that may ensue. When clinicians are placed in this unimaginable position, feeling isolated and overwhelmed, it is essential that they be supported and provided with resources to standardize decision-making.

Using the COM-B model to identify barriers to and facilitators of evidence-based nurse urine-culture practices

- Sonali D. Advani, Ali Winters, Nicholas A. Turner, Becky A. Smith, Jessica Seidelman, Kenneth Schmader, Deverick J. Anderson, Staci S. Reynolds

- Journal:

- Antimicrobial Stewardship & Healthcare Epidemiology / Volume 3 / Issue 1 / 2023

- Published online by Cambridge University Press:

- 31 March 2023, e62

- Article

- Open access

Our surveys of nurses, modeled after the Capability, Opportunity, and Motivation Model of Behavior (COM-B model), revealed that opportunity and motivation factors, in addition to knowledge (capability), heavily influence urine-culture practices (behavior). Understanding these barriers is a critical step toward implementing targeted interventions to improve urine-culture practices.

Using clinical decision support to improve urine testing and antibiotic utilization

- Michael E. Yarrington, Staci S. Reynolds, Tray Dunkerson, Fabienne McClellan, Christopher R. Polage, Rebekah W. Moehring, Becky A. Smith, Jessica L. Seidelman, Sarah S. Lewis, Sonali D. Advani

- Journal:

- Infection Control & Hospital Epidemiology / Volume 44 / Issue 10 / October 2023

- Published online by Cambridge University Press:

- 29 March 2023, pp. 1582-1586

- Print publication:

- October 2023

- Article

- Open access

Objective:

Urine cultures collected from catheterized patients have a high likelihood of false-positive results due to colonization. We examined the impact of a clinical decision support (CDS) tool that includes catheter information on test utilization and patient-level outcomes.

Methods: This before-and-after intervention study was conducted at 3 hospitals in North Carolina. In March 2021, a CDS tool was incorporated into urine-culture order entry in the electronic health record. It provided education about indications for culture and suggested catheter removal or exchange prior to specimen collection for catheters present >7 days. We used an interrupted time-series analysis with Poisson regression to evaluate the impact of CDS implementation on utilization of urinalyses and urine cultures, antibiotic use, and other outcomes during the pre- and postintervention periods.
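With Poisson regression, monthly trends such as a "1.4% decrease per month" are typically obtained by exponentiating a log-scale slope coefficient. A small Python sketch of that conversion and its inverse follows. The function names are hypothetical, and the sketch does not reproduce the study's fitted model.

```python
import math


def percent_change_per_month(beta: float) -> float:
    """Convert a log-link monthly slope coefficient to a percent change per month."""
    return (math.exp(beta) - 1.0) * 100.0


def slope_for_percent_change(percent: float) -> float:
    """Inverse: the log-scale coefficient that yields a given percent change per month."""
    return math.log1p(percent / 100.0)


# A 1.4% monthly decrease corresponds to a coefficient of ln(0.986), about -0.0141.
beta = slope_for_percent_change(-1.4)
```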

Results: The CDS tool was prompted in 38,361 instances of urine-culture orders across all patients, including 2,133 catheterized patients, during the postintervention study period. There was a significant decrease in urine-culture orders (1.4% decrease per month; P < .001) and in antibiotic use for UTI indications (2.3% decrease per month; P = .006). However, there was no significant decline in CAUTI rates in the postintervention period. Clinicians opted for urinary catheter removal in 183 (8.5%) instances. Review of the safety reporting system revealed no apparent increase in safety events related to catheter removal or reinsertion.

Conclusion: CDS tools can aid in optimizing urine-culture collection practices and can serve as a reminder to remove or exchange long-term indwelling urinary catheters at the time of urine-culture collection.