Introduction

Artificial intelligence (AI) has recently produced one generative system after another, trained on language, photos, videos, music, and other forms of multimodal communication, and it is sure to continue these advances at a brisk pace. These AI generative systems produce forms such as texts or pictures. Construction grammarians investigate the exact ways in which such forms prompt for meaning. To what extent can the construction grammarian draw on these AI systems to study constructions – that is, form–meaning pairs – and to investigate the ways that constructions blend to create constructs, where by “constructs” we mean communicative performances?

Consider large language models (LLMs), which derive from mathematical models that rely on self-supervised training on enormous amounts of data in a deep neural network architecture (Bommasani et al., 2021). Natural Language Processing (NLP) research has strenuously investigated whether LLMs can represent different aspects of linguistic knowledge (see Rogers et al., 2020 for a survey of 150 publications). The present Element asks quite a different question about the relation of LLMs and linguistics. Instead of focusing on whether LLMs have any understanding of language or not, it asks whether LLMs can be useful tools for doing linguistics. Linguists have historically used many digital assistants as copilots for research. Can AI, specifically conversational LLMs, join that list? We reframe the discussion about chatty LLMs from what they can do with language to what they can do for linguists. Our goal is to show how linguists can “find a way to benefit from conversational AI without losing the many important aspects that render scientific work one of the most profound and gratifying enterprises: curiosity, imagination and discovery” (van Dis et al., 2023: 226).

In this Element, we conducted our experiments using two AI LLM chatbots: one proprietary and one open source. The proprietary chatbot was ChatGPT by OpenAI, often running the GPT-4 model. To locate a suitable open source alternative, we ran preliminary tests with Alpaca, Koala, OpenChatKit, and OpenAssistant. For OpenAssistant, we used the HuggingChat interface. Although we trained OpenChatKit on a high-performance 80GB GPU node, its performance was not competitive. OpenAssistant, without any additional training from us, performed the best of these open source alternatives. Accordingly, we conducted the same experiments using both ChatGPT and OpenAssistant.

The possibilities for AI LLM chatbots as eliciting interlocutors for linguists are extensive. The conversational interface allows for continuous dialogue with the system, which can present text in a variety of formats, including formats ideal for further computational processing. For example, AI LLM chatbots can write code that is useful for the linguist. When informed of mistakes, they can respond repeatedly and inventively to requests for corrections. In this Element, we investigate whether these chatbots can aid the construction grammarian. Since Frame Semantics is the central theory of meaning in cognitive Construction Grammar approaches, our most specific investigation explores whether AI LLM chatbots can aid the frame semanticist in expanding and refining FrameNet – the major, global computational repository of representations of frames, devised at its inception to serve as an aid to linguists.

For the LLM-based chatbot to generate text accepted as mimicking human language (including false, mistaken, biased, and offensive content), its output must draw on its exposure to vast oceans of text; it must mimic the use of cognitive frames and grammatical patterns, regardless of whether it is saying things that are true or false, biased or objective, naughty or nice. Otherwise, no one would understand it; it would not merely say things with which we might disagree, things we might find distasteful, things we might judge to be contrary to fact, but, incomparably more important for the work of the linguist, it would say things that are linguistically alien, nonhuman.

The mimicking ability of these chatbots depends on three factors. First and foremost, it depends on the human user’s ability to construe meaning from linguistic forms. The human user can take the stance that the entity responsible for producing those forms is cooperatively engaging in a communicative effort. Second, it depends on the vector probabilistic representations (embeddings) that LLMs build for linguistic forms during training. These representations are based on the recognition of patterns in hundreds of billions of words in the training data (Brown et al., 2020; Touvron et al., 2023). Lastly, it depends on the fine-tuning of the LLM through reinforcement learning from human feedback, improving the overall quality of the conversational interface by reducing toxicity and hallucinations, and increasing trustworthiness and perceived appropriateness. Given that an important part of the job of linguists in general – and of construction grammarians and frame semanticists in particular – involves analyzing data and recognizing usage patterns in language, it is plausible that an AI LLM chatbot can assist the linguist in finding and detailing those patterns.

A native speaker shows astounding mastery of a language, but has very little conscious understanding of the processes and knowledge underlying that mastery. Those processes and knowledge are in the backstage of cognition, mostly hidden from consciousness. The linguist is forever attempting to drag onstage a little of that backstage system. This is a notoriously difficult occupation. The linguist must first notice some hint of an element of a grammatical system, and then work, often for decades, on the details. Fillmore and colleagues (1988: 518–519), for example, explain that after working for months on the “let alone construction,” during which all evidence seemed to suggest that it was a negative polarity construction, they began to encounter solid ranges of attested examples of non-negative let alone. It then took them more months to arrive at an “exploratory speculation” of the underlying principles of non-negative let alone. It is common for the linguist to notice a linguistic phenomenon consciously only after long exposure to usage, and then to work on that topic for years. Might an AI LLM chatbot serve not as an expert or even a reliable informant but instead as a tool for eliciting knowledge and insight from the linguist? Can it suggest analyses that prompt the linguist to better analyses? To be sure, all the examples we will give in the following sections show the ELIZA effect: “the susceptibility of people to read far more understanding than is warranted into strings of symbols – especially words – strung together by computers” (Hofstadter, 1995: 157). The computational tool may produce output that the tool does not understand at all but that the human being finds meaningful and useful. The ELIZA effect consists of attributing that understanding to the tool. Here, we are interested, on the contrary, in only whether the tool might be useful to the linguist, whose job is to recognize patterns on which, as it conveniently happens, the tool has been elaborately trained.

1 Safety Instructions: Risks and Limitations of LLMs and Generative AI

Before taking off with our new copilot, it is necessary, however, to consider the limitations of the current approach and to post some warnings. A substantial amount of research has focused on the limits of LLMs in understanding language (Bender & Koller, 2020; Mahowald et al., 2023) and on their potential risks (Ruane et al., 2019; Bender et al., 2021; Birhane et al., 2021). Moreover, LLMs only model the probabilities that a given word follows a preceding string of words. These probabilities are a function of the “weights” assigned to nodes in the machine learning model. The weights are created by training the model on very large amounts of existing textual data, sometimes modified by further reinforcement learning from human feedback (RLHF), during which the human user provides feedback on the actual performance of the LLM, so as to adjust its reward function and therefore fine-tune its subsequent performance. Accordingly, generative AI services like ChatGPT that depend on LLMs will generate text depending on their training data. It is in the nature of the process that training an LLM on unimaginably vast oceans of texts will produce output that follows the linguistic patterns of the training data. But – a quite different subject – it will also produce content whose truth-value follows the truth-value of the training data. If the assertions and claims of the training data are garbage, the product will most probably be garbage. Output that is linguistically perfect can prompt for meanings that are totally false, just as a con artist can be a master of communication.
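
The idea that a model assigns probabilities to the next word given the preceding words can be made concrete with a deliberately tiny sketch. The code below is not how an LLM works internally: it conditions on only one preceding word and uses raw counts rather than neural network weights, and both the toy corpus and the function name `bigram_probabilities` are invented for illustration.

```python
from collections import Counter, defaultdict


def bigram_probabilities(tokens):
    """Estimate P(next word | previous word) from raw bigram counts (no smoothing)."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return {
        prev: {word: n / sum(followers.values()) for word, n in followers.items()}
        for prev, followers in counts.items()
    }


# Toy corpus, invented for illustration only.
corpus = "she threw the ball . he threw the plate . she rolled the ball .".split()
probs = bigram_probabilities(corpus)

# Distribution over the words that follow "the" in the toy corpus.
print(probs["the"])
```

The output mirrors the patterns of the toy corpus and nothing else, which is the point made above: whatever regularities (or errors) the training text contains are what the probabilities reproduce.
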
In addition, there is an extra threat to reliability, referred to as “confabulation” or “hallucination”: The LLM will mostly do as commanded, generating text according to the user’s prompting, which naturally carries the user’s preferences. Confabulation can contribute to the production of text that is factually unreliable. Many analysts have warned that to use a generative AI unreflectively as it comes out of the box is to use it wrong. For example, the personal tech columnist for The New York Times writes:

The chatbots are the least beneficial when we ask them questions and then hope whatever answers they come up with on their own are true, which is how they were designed to be used. But when directed to use information from trusted sources, such as credible websites and research papers, A.I. can carry out helpful tasks with a high degree of accuracy.

For the future, what seems to be required in order to create a reliable reference resource for linguistics is to train LLMs on texts and/or databases regarded as authoritative (e.g., FrameNet or https://constructicon.de/). For example, we used AskYourPDF to fine-tune ChatGPT (using the GPT-4 model) on the PDF of a classic, long, technical CxG article by Fillmore, Kay, and O’Connor, “Regularity and idiomaticity.” The result is a chatbot that delivers answers to questions about that article. Training generative AI to serve as a reliable informant or tutor for linguistics is not the subject of the present Element, but it is important for readers to remember that ChatGPT and other generative AIs cannot be assumed to have been trained on reliable information only.

Finally, Birhane and colleagues (2023), in a Nature Viewpoint interview paper, list key risks associated with the use of chatty LLMs such as ChatGPT and OpenAssistant in scientific practice. On top of the known issues regarding the carbon footprint of such models and the poor labor conditions involved in training some of them, the authors point out that LLMs are tools and must always be regarded as such. In Birhane’s words, “science is a human enterprise and LLMs are tools – albeit impressive at predicting the next word in a sequence based on previously ‘seen’ words – with limitations such as brittleness (susceptibility to catastrophic failure), unreliability and the fabrication of seemingly ‘scientific’ nonsense.” Leslie, another author of the Nature Viewpoint article, claims that:

Scientists must view LLMs and GenAI technologies as exploratory tools that bolster responsible, mission-driven and society-led research practices and that support the advancement of scientific discovery and understanding. To paraphrase the words of economist Zvi Griliches, the expanding use of these AI technologies in scientific research is the “discovery of a method of discovery” – the invention of a new set of research tools that support and enable new pathways of insight, innovation and ingenuity.

In line with this, the current Element tries to showcase how AI LLM chatbots can be used responsibly and productively for Frame Semantic and Construction Grammar research. Towards the end, we will return to the issue of reliability and brittleness of this method and assess it in the light of the findings of our experiments.

2 Constructions

Construction grammarians come at language from different vantage points. These include typology, language acquisition, cognitive mechanisms, formalization, and computational implementation. Consequently, different Construction Grammar approaches, with different emphases, have emerged. These approaches include Radical Construction Grammar, Cognitive Construction Grammar, Embodied Construction Grammar, Fluid Construction Grammar, and Sign-Based Construction Grammar. They often differ on important theoretical assumptions (cf., e.g., Hoffmann & Trousdale, 2013; Hoffmann, 2022a: 256; Ungerer & Hartmann, 2023). What unites all constructionist approaches is that they agree that language is nonmodular, without derivations, and that the central units of language are constructions (form–meaning pairs). All construction grammarians aspire to account for creativity otherwise ignored in linguistics. In doing so, they follow the wisdom of the study of σχήματα (schemata) in classical rhetoric (Turner, 1998): σχῆμα was a technical term used to signify a conventional pairing of a form and a meaning (a construction) or, more broadly, a form and a conceptual pattern. To know a language, one must know its schemata and how they can be used and combined creatively. Of course, as both classical rhetoric and Construction Grammar recognize, nearly all creativity in networks of form–meaning pairs goes unnoticed in consciousness by individuals, despite what in retrospect is clearly very rapid development, innovation, and extension.

The meaning side of a form–meaning pair is often a cognitive frame. Human beings think largely by using mental operations they already possess to work on conceptual arrays they already know. Some of their knowledge is organized into mental bundles that we call “mental frames,” “cognitive frames,” or just “frames” (Fillmore, 1968, 1976, 1977a, 1977b, 1982, 1985, 2008, 2013; Fillmore & Atkins, 1992, 1994; Fillmore et al., 1988; Lowe et al., 1997). Some of these frames are so important to communication that we expect every mature native speaker of a particular language to have mastered them, or at least a version of them. The language presupposes their availability to its speakers. For example, when someone says, “I have to call my stockbroker,” everyone can activate the appropriate mental package, the appropriate conceptual bundle of related elements. We imagine, unless we are told or have reason to believe otherwise, that the telephone call is about buying and selling securities. Nobody needs to explain that the call is about buying and selling securities, because the word “stockbroker” calls up that frame. To understand “I have to call my stockbroker,” we activate information from that frame to build a small mental array containing the speaker, the phone call, and the broker. In the frame for buying and selling securities, there is a role for the agent who initiates the trade in a financial market, and we understand that the stockbroker is in that role. In that frame, there is an actual client, who owns the securities, and we place the speaker in that role. In that frame, the client communicates with the agent, and we take “call” to indicate a phone call between the client and the agent. Of course, other interpretations of “I have to call my stockbroker” are possible. If the speaker then calls out to someone walking by in the park, we might have to reframe the utterance to reach a compatible interpretation. Such forced reframing can be funny, exactly because the speaker seemed to invite us to use one frame (communicating with an agent to carry out a buy–sell transaction for securities), but then forced a shift in the frame to calling out to get the attention of someone. Forced frame shifts are often a source of humor (Coulson, 2001).

Forms of all sorts can evoke frames: pointing (at the policeman or the waiter), paintings and sculptures and pictures of objects (the holy grail, a sword, a bagpipe), melodies (the leitmotif for Darth Vader), and so on. The most obvious forms that evoke frames are words, or, more precisely, lexical units. Consider safe, for example, said of some situation. It prompts us to activate a mental web corresponding to that situation, activate a frame of potential harm, and understand that the situation with harm is counterfactual to the mental space in which safe applies.Footnote 1 A single sentence can contain many such words, activating many such frames, as in the National Public Radio warning a few days before Halloween, October 2000: “A Halloween costume that limits sight or movement is an accident lurking in disguise.” (Halloween will activate all activities and props associated with the holiday, including types of costumes, trick-or-treating, and so on. Sight and movement evoke embodied frames, including the fact that they can be temporarily impeded. Accident activates the catastrophe frame and lurking a frame of hiding. Finally, disguise is intentionally used ambiguously here, activating the frame of a potentially negative effect that is masked as something positive as well as evoking the costume element of the Halloween frame.)

Syntactic patterns, so-called argument structure constructions (Goldberg, 1995, 2006, 2019), also evoke frames of basic human event construals such as transfer, movement, or causation. The syntax is the form; the frame is part of the meaning evoked. A verb like THROW (e.g., He threw the ball towards the pitcher) evokes the frame of Caused_motion and encodes the meaning of “propel[ing an object] with force through the air by a rapid movement of the arm and hand.”Footnote 2 However, when such a verb is blended with the Ditransitive construction (FORM: NP1 Verb2 NP3 NP4 ↔ MEANING: Agent1 causes Recipient3 to receive Patient4 by Verb2-ing; adapted from Hoffmann, 2022a: 189), as in She threw me the coke bottle, the Transfer frame is activated by the argument structure construction in addition to the Caused_motion frame with which THROW is associated. Similarly, FLOAT prototypically activates the Motion frame, meaning “mov[ing] slowly or hover[ing] in a liquid or the air.”Footnote 3 When combined with the Caused Motion construction (FORM: NP1 Verb2 NP3 PP4 ↔ MEANING: Agent1 causes Theme3 to move towards Goal4 by Verb2-ing; adapted from Hoffmann, 2022a: 187), the abstract syntactic construction adds the Caused Motion meaning to the simple movement meaning of FLOAT: He floated the boat to me (cf. Goldberg, 1995; Fauconnier & Turner, 1996, 2002; Turner, 2015). As recent studies have shown, argument structure constructions are not stored entirely independently of verbal predicates. Instead, there are strong usage ties between certain verbs and these abstract constructions (e.g., GIVE, TELL, SEND, ASK, and SHOW and the Ditransitive construction; Stefanowitsch, 2013: 293; cf. also Boas, 2005, 2013: 237–238; Perek & Lemmens, 2010; Croft, 2012: 364–374; Herbst, 2018). This makes frame-based analyses (see Section 5) a central topic for constructional research.
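
For readers who want to handle such form–meaning pairs computationally, the two argument structure constructions above can be written down as simple records. The sketch below is only one possible encoding: the class `Construction`, the function `blend`, and the decision to reduce blending to a list of evoked frames are assumptions made for illustration and greatly simplify what conceptual blending involves. The FORM and MEANING strings are taken from the definitions in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Construction:
    """A form-meaning pair; the field names are illustrative, not a standard schema."""
    name: str
    form: tuple   # ordered slots of the syntactic pattern
    meaning: str  # paraphrase of the constructional meaning
    frame: str    # frame evoked by the construction itself


DITRANSITIVE = Construction(
    name="Ditransitive",
    form=("NP1", "Verb2", "NP3", "NP4"),
    meaning="Agent1 causes Recipient3 to receive Patient4 by Verb2-ing",
    frame="Transfer",
)

CAUSED_MOTION = Construction(
    name="Caused Motion",
    form=("NP1", "Verb2", "NP3", "PP4"),
    meaning="Agent1 causes Theme3 to move towards Goal4 by Verb2-ing",
    frame="Caused_motion",
)


def blend(verb, verb_frame, construction):
    """List the frames evoked when a verb is used in a construction (a crude stand-in for blending)."""
    frames = [verb_frame]
    if construction.frame not in frames:
        frames.append(construction.frame)
    return {"verb": verb, "construction": construction.name, "frames": frames}


# "She threw me the coke bottle": THROW contributes Caused_motion, the construction adds Transfer.
print(blend("THROW", "Caused_motion", DITRANSITIVE))
# "He floated the boat to me": FLOAT contributes Motion, the construction adds caused motion.
print(blend("FLOAT", "Motion", CAUSED_MOTION))
```

A record of this kind is also a convenient target format when asking a chatbot to return its examples in a structured form for further processing.
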

Constructions, symbolic pairings of form and meaning, range from morphological templates (e.g., FORM: un1-Verb2 ↔ MEANING: reverse1 Verb2-ing event; adapted from Hoffmann and Bergs, 2024) through word constructions (e.g., FORM: THROW ↔ MEANING: Cause_Motion frame | ‘propel with force through the air by a rapid movement of the arm and hand’) to abstract constructions such as the Ditransitive construction or the Caused Motion construction. One of the most thoroughly analyzed abstract constructions whose meaning is an elaborate prompt for blending a frame with another input space is the “X is the Y of Z” construction (XYZ) (e.g., Death is the mother of beauty, These fire retardants are the asbestos of our time; Turner, 1987). The construction has as its form the pattern NP1 BE the NP2 of NP3 with each NP providing frame information that must be compressed via conceptual blending in the speaker’s working memory: NP1 (X) and NP3 (Z) are combined into an input space (death and beauty/fire retardants and our time), while NP2 (Y) activates a frame that is used to establish the relationship between X and Z that the speaker tries to express. Through conceptual blending, the construction then can give rise to a concise (sometimes metaphorical) meaning, such as that death, like a mother, “gives birth” to beauty, because our recognition of mortality is the basis of our reaction to beauty, or that fire retardants today are as frequently used, yet as secretly dangerous, as asbestos was as a building material in the 1960s and 1970s.

The XYZ construction is a clausal construction with a complex meaning pole that requires the spontaneous blending of various frames. In addition to argument structure constructions and clause-level constructions such as the XYZ construction, some researchers have recently suggested that there are even larger, schematic discourse-level constructions. Hoffmann and Bergs (2015, 2018, 2024 and references therein), for example, have provided evidence that football chants, Knock Knock jokes, and even TED talks can be analyzed as schematic constructions.

This section has illustrated the range of constructions that are analyzed within current constructionist research. The selection of the phenomena discussed in this section is obviously somewhat eclectic – for a more in-depth overview of the scope of present Construction Grammar research see, for example, Hoffmann and Trousdale (2013), Hilpert (2019), and Hoffmann (2022a). In the next section, we will outline how AI LLM chatbots can assist in constructionist research, using the constructions discussed in this section for our case studies.

3 Using an AI to Help Study Constructions

One can prompt an AI LLM chatbot to provide further examples and even analyses of such constructions. Starting with the un-VERB construction (see also Hoffmann, unpublished dataFootnote 4), one can, for example, prompt ChatGPT and OpenAssistant to give ten examples of the construction:

(1) USER: Hi, Assistant. The un-VERB construction which creates verbs such as e.g. “unbutton” or “untie” has a form that consist of “un” followed by a verb. The meaning of the construction is “reversal”. Thus, “unbutton” means “to reverse a buttoning action” and “untie” means “to reverse a tying action.” Please give 10 more examples of this construction.

(2) a. ChatGPT: Sure, here are 10 more examples of the “un-verb” construction:

1. Unzip – to reverse a zipping action

2. Unfold – to reverse a folding action

3. Unplug – to reverse a plugging action

4. Unlock – to reverse a locking action

5. Unwind – to reverse a winding action

6. Uncork – to reverse a corking action

7. Untangle – to reverse a tangling action

8. Unlace – to reverse a lacing action

9. Unravel – to reverse a unraveling action

10. Unclasp – to reverse a clasping action

b. OpenAssistant: Here are ten additional examples of the un-verb construction in English:

1. Unbuckle: To reverse an action of buckling

2. Undraw: To reverse an action of drawing

3. Unfold: To reverse an action of folding

4. Uneven: To reverse an even number

5. Understand: To reverse an action of understanding

6. Untwist: To reverse an action of twisting

7. Unbraid: To reverse an action of braiding

8. Unpack: To reverse an action of packing

9. Undo: To reverse any previous action or decision

10. Unlike: To reverse your opinion or feeling towards someone or something

The ChatGPT results (2a) are generally good, but already showcase how a particular prompt crucially influences the model’s output: In the prompt, the meaning of the construction is specified as “reversal” and the two examples given are “to reverse a buttoning action” and “to reverse a tying action.” Just like conservative learners, the models generalize this to the pattern “to reverse a V-ing action,” which they apply to all ten examples. As a result, they fail to report polysemous meanings (in addition to the meaning in (1), unwind, inter alia, has the figurative meaning “[t]o relieve from tension or anxiety, to cause to relax”Footnote 5). Somewhat unexpected, however, is the fact that ChatGPT gives “to reverse a unraveling action” as the meaning of unravel. In all other cases, just as in the prompt, the models seem to strip the un-V word of its un-prefix in the meaning definitions (cf. zipping, folding, plugging, etc.). A potential reason might be that the model is affected by the frequency of the un-V word and the corresponding V word. Now, the precise content of the training data is not known, so as a proxy we queried the frequency of the verb lemma UNVERB_v* and VERB_* for the ten ChatGPT examples from (2) in the Corpus of Contemporary American English (COCA).

Currently, COCA comprises about one billion words – only a fraction of ChatGPT’s training data. Moreover, just like ChatGPT’s output, the numbers in Table 1 do not distinguish the various polysemous meanings of the V lemmas. Still, as expected, for all but two words, the V lemma is (considerably) more frequent than the un-V lemma. Uncork is 3.15 times more frequent than cork in COCA – but both appear fairly infrequently (384 vs 121 tokens). In contrast to this, unravel is considerably more frequent than ravel, with a ratio of 76.06 to 1, and ravel appears only fifty-four times in COCA. Moreover, LLMs are, obviously, crucially influenced by the size of their training data. In this case, however, it seems as if this can even lead to implausible meaning descriptions (such as “to reverse a unraveling action” for unravel).

Table 1 Frequency of un-V versus V lemma constructions for ChatGPT examples.

| un-V lemma | Frequency | V lemma | Frequency | Ratio un-V lemma versus V lemma |
|---|---|---|---|---|
| UNZIP | 1,411 | ZIP | 5,012 | 1 : 3.55 |
| UNFOLD | 12,727 | FOLD | 16,148 | 1 : 1.27 |
| UNPLUG | 973 | PLUG | 10,332 | 1 : 10.61 |
| UNLOCK | 9,097 | LOCK | 43,093 | 1 : 4.74 |
| UNWIND | 2,067 | WIND | 26,183 | 1 : 12.67 |
| UNCORK | 384 | CORK | 121 | 3.15 : 1 |
| UNTANGLE | 877 | TANGLE | 2,568 | 1 : 2.93 |
| UNLACE | 163 | LACE | 3,171 | 1 : 19.45 |
| UNRAVEL | 4,107 | RAVEL | 54 | 76.06 : 1 |
| UNCLASP | 60 | CLASP | 3,212 | 1 : 53.53 |
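
The ratio column of Table 1 is simple arithmetic on the two frequency columns, and a few lines of code make it easy to repeat for other word lists. The following sketch assumes the COCA counts have already been looked up by hand and typed in; the helper name `ratio_label` and the dictionary layout are illustrative choices. Because the sketch rounds to two decimals, a recomputed value may differ in the last digit from a printed ratio.

```python
def ratio_label(un_v_count, v_count):
    """Format the un-V : V frequency ratio in the style of Table 1."""
    if un_v_count == 0 or v_count == 0:
        return "N/A"  # no ratio can be given when either lemma is unattested
    if v_count >= un_v_count:
        return f"1 : {v_count / un_v_count:.2f}"
    return f"{un_v_count / v_count:.2f} : 1"


# (un-V lemma frequency, V lemma frequency), copied from Table 1.
table_1_counts = {
    "UNZIP": (1411, 5012),
    "UNFOLD": (12727, 16148),
    "UNPLUG": (973, 10332),
    "UNLOCK": (9097, 43093),
    "UNWIND": (2067, 26183),
    "UNCORK": (384, 121),
    "UNTANGLE": (877, 2568),
    "UNLACE": (163, 3171),
    "UNRAVEL": (4107, 54),
    "UNCLASP": (60, 3212),
}

for lemma, (un_v, v) in table_1_counts.items():
    print(f"{lemma:<10} {ratio_label(un_v, v)}")

# The two lemmas for which the un-V form outnumbers its base.
more_frequent_un_v = [lemma for lemma, (un_v, v) in table_1_counts.items() if un_v > v]
print(more_frequent_un_v)
```
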

OpenAssistant (2b) arguably performs worse for this prompt: Understand is, of course, a highly frequent word, but does not segment into un- and derstand. Since derstand does not exist as a verb, this result is somewhat unexpected (and raises questions as to how well the prompt led OpenAssistant to perform the task). In addition to that, uneven as a verb is highly infrequent and means “to make uneven,”Footnote 6 and not “to reverse an even number.” At the same time, as Table 2 shows, OpenAssistant’s examples are mostly ones for which the base V lemma is highly frequent (while several un-V lemmas such as undraw, untwist, or unlike do not appear even once in COCA).

Table 2 Frequency of un-V versus V lemma constructions for OpenAssistant examples.

| un-V lemma | Frequency | V lemma | Frequency | Ratio un-V lemma versus V lemma |
|---|---|---|---|---|
| UNBUCKLE | 233 | BUCKLE | 4,049 | 1 : 17.38 |
| UNDRAW | 0 | DRAW | 135,022 | N/A |
| UNFOLD | 12,727 | FOLD | 16,148 | 1 : 1.27 |
| UNEVEN | 0 | EVEN | 1,645 | N/A |
| *UNDERSTAND | N/A | N/A | N/A | N/A |
| UNTWIST | 0 | TWIST | 16,274 | N/A |
| UNBRAID | 1 | BRAID | 1,351 | 1 : 1,351 |
| UNPACK | 3,248 | PACK | 35,348 | 1 : 10.88 |
| UNDO | 8,067 | DO | 8,186,409 | 1 : 1,014.80 |
| UNLIKE | 0 | LIKE | 614,689 | N/A |
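
A first, purely formal screening of chatbot output of this kind can be automated before the linguist looks at the meanings. The sketch below checks only whether a candidate segments into un- plus an attested verb; the function name `segment_un_verb` and the small verb set (the base lemmas with nonzero frequency in Table 2) are assumptions for illustration, and a real check would use a full lexicon or corpus lookup.

```python
def segment_un_verb(candidate, known_verbs):
    """Return the base verb if candidate is 'un' + a known verb, otherwise None."""
    word = candidate.lower()
    if not word.startswith("un"):
        return None
    base = word[2:]
    return base if base in known_verbs else None


# Base lemmas attested as verbs in Table 2; a stand-in for a real lexicon.
KNOWN_VERBS = {"buckle", "draw", "fold", "even", "twist", "braid", "pack", "do", "like"}

open_assistant_examples = [
    "Unbuckle", "Undraw", "Unfold", "Uneven", "Understand",
    "Untwist", "Unbraid", "Unpack", "Undo", "Unlike",
]

for example in open_assistant_examples:
    base = segment_un_verb(example, KNOWN_VERBS)
    status = f"un- + {base}" if base else "does not segment"
    print(f"{example:<12} {status}")
```

Such a filter flags Understand, because derstand is not a verb, but it lets Uneven through, since even is attested as a verb. The mismatch between that form and the gloss “to reverse an even number” is a matter of meaning, which still has to be judged by the linguist.
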

Let us move along the lexicon–syntax cline and see how the LLMs deal with argument structure constructions:

(3) USER: Hi, Assistant. In the caused-motion frame, an agent performs an action on an object that causes it to move in a direction. “Throw” is a caused-motion verb. “Throw” evokes the caused-motion frame. “Throw” indicates that the subject of the verb performed an action, throwing, that caused an object to move in a direction. But the syntactic pattern “Noun Phrase – Verb Phrase – Noun Phrase – Prepositional Phrase” can also evoke the caused-motion frame. When it does, the verb can be intransitive. “She rolled the ball to me” does not mean that she actually rolled; instead, it indicates that she performed some action, whatever it was, that caused the ball to move in a direction in a rolling manner. “He slid the plate across the table to me” uses the intransitive verb “slide.” In this sentence, the subject, “he,” did not actually move across the table, but instead performed an action that caused the plate to move in a direction across the table, indicated by the prepositional phrase “to me.” Please give 20 examples of intransitive verbs used in caused-motion clausal syntactic patterns, like “He floated the boat to me.”

(4) a. ChatGPT: Certainly, here are examples of intransitive verbs used in caused-motion clausal syntactic patterns.

1. She dreamed the car into the garage.

2. They paused the game for a bathroom break.

3. The child smiled the balloon up into the sky.

4. She laughed the milk out of her nose.

5. The tree grew its branches over the fence.

6. He wobbled the tray towards the customers.

7. They bounced the ball across the court.

8. The sun baked the ground into a hard crust.

9. The boy whistled the dog into the house.

10. She danced the baby to sleep.

11. He hummed the tune into my ear.

12. He jogged the dog around the park.

13. She tiptoed the glass to the edge of the table.

14. The child skipped the stone across the lake.

15. She twirled the baton in her hand.

16. The fire flickered the shadows across the walls of the cave.

17. The sunbeams danced the shadows on the floor.

18. The sun melted the snow into puddles.

19. The waves crashed the debris onto the shore.

20. The fire crackled the logs in the fireplace.

b. OpenAssistant: Sure, here are twenty examples of intransitive verbs used in caused-motion clauses:

1. He waved goodbye to his friend.

2. She wrote a letter to her family.

3. The dog chased a cat up a tree.

4. A breeze blew through the leaves.

5. They pushed the car to the side of the road.

6. The water flowed downstream.

7. An earthquake shook the building.

8. The lightning struck a tree.

9. The plane took off from the runway.

10. The rain fell from the sky.

11. The sun rose above the horizon.

12. The waves crashed against the shore.

13. The smoke drifted away in the wind.

14. The fireworks exploded high in the air.

15. The bullet pierced through the target.

16. The ball rolled into the hole.

17. The gusts of wind carried the kite away.

18. The water dripped from the faucet.

19. The steam escaped from the boiling pot.

20. The snow fell silently outside my window.

(3) already contains a more challenging prompt. Not only does it ask the model to provide twenty examples of the Caused Motion construction, but it also explicitly requires it to generate examples with intransitive verbs only. Such a prompt, if successful, could, for example, be used to create a list of stimulus sentences for a potential acceptability experiment. In (4a) we can see that ChatGPT does indeed nominate some interesting examples that might be used as stimuli (She laughed the milk out of her nose). The output also contains cases where the Caused Motion construction is interpreted as a Resultative (The sun melted the snow into puddles), as discussed by Goldberg (2006). At the same time, ChatGPT also provides examples that have the right syntactic form, but not the meaning of the Caused Motion construction: They paused the game for a bathroom break exhibits the NP-V-NP-PP structure, but cannot be construed as “They₁ caused the-game₃ to move towards a-bathroom-break₄ by pause₂-ing.” Again, however, OpenAssistant performs considerably worse on this task. Only sentences 3, 5, and 17 are clear instances of the Caused Motion construction. Thirteen examples are in fact instances of the Intransitive Motion construction and do not contain an object. Note that, throughout this Element, we do not ask whether the LLMs will produce flawless responses – clearly they do not (yet). Instead, we want to focus on whether those responses can serve as useful nominations for the linguist’s consideration, and in that respect, (4a) is already impressive, as ChatGPT produces a great number of relevant instances of the argument structure construction under investigation, while OpenAssistant still clearly fails this task. At the same time, these examples also illustrate that the linguist still needs to carefully evaluate the output of conversational AIs in order to distinguish relevant responses from those that are incorrect.

Increasing the complexity of constructions even more, we next asked both generative AIs about the XYZ construction, which, as mentioned in Section 2, requires the blending of several frame input spaces to express a concise, novel idea:

(5) USER: The XYZ construction, e.g. “Paul (X) is the father (Y) of Sally (Z)” or “Causation (X) is the cement (Y) of the universe (Z)” prompts us to evoke a frame indicated by Y and to blend it with the X-Z information. Please give 10 more examples in which the Y term evokes a conceptual frame to be blended with the X-Z information.

(6) a. ChatGPT: Sure, here are 10 more examples:

1. The internet is the nervous system of modern society.

2. Gratitude is the memory of the heart.

3. The library is the brain of the university.

4. Imagination is the fuel of creativity.

5. Time is the river of life.

6. Music is the medicine of the soul.

7. Language is the bridge between cultures.

8. Curiosity is the compass of exploration.

9. Empathy is the food of human connection.

10. Humor is the band-aid of a stressful day.

b. OpenAssistant: Certainly, here are ten more examples of the XYZ construction:

1. John (X) is the manager (Y) of the team (Z).

2. Success (X) is the result (Y) of hard work (Z).

3. Education (X) is the key (Y) to success (Z).

4. Love (X) is the most powerful (Y) force (Z) in the world.

5. Music (X) is the language (Y) of emotions (Z).

6. Nature (X) is the greatest (Y) artist (Z).

7. Fear (X) is the enemy (Y) of progress (Z).

8. Health (X) is wealth (Y) in disguise (Z).

9. Creativity (X) is the spark (Y) of innovation (Z).

10. Failure (X) is the stepping stone (Y) for achievements (Z).

How creative are the responses that the models give for the XYZ construction? Again, it is unclear which data were in the training sets, but a Google search at least allows for a heuristic assessment of the innovativeness of ChatGPT’s example sentences:

(7) a. The internet is the nervous system of modern society. (4 hits; https://rigf.ru/en/press/?v=archive&id=115&year=2021)

b. Gratitude is the memory of the heart. (202,000 hits; https://diocesan.com/gratitude-is-the-memory-of-the-heart/)

c. The library is the brain of the university. (2 hits; http://ublibrary.weebly.com/rules-and-regulations.html)

d. Imagination is the fuel of creativity. (8 hits; https://anatola.beehiiv.com/p/neuroscience-of-imagination)

e. Time is the river of life. (492,000 hits; www.goodreads.com/work/quotes/67142076)

f. Music is the medicine of the soul. (83,100 hits; https://irp.nih.gov/catalyst/27/4/is-music-really-the-medicine-of-the-soul)

g. Language is the bridge between cultures. (7,890 hits; www.nexus.edu.sg/people/primary-zoe-zhou)

h. Curiosity is the compass of exploration. (0 hits)

i. Empathy is the food of human connection. (0 hits)

j. Humor is the band-aid of a stressful day. (0 hits)

As a Google search (28 March 2023) revealed, (7b, e, f, g) are so frequent that it seems likely that they appeared somewhere in the training data. If so, these would not be creative constructs but only reused, entrenched constructions (Turner, 2020; Hoffmann, 2022b). Other examples, such as (7a, c, d), do indeed occur on the web, but only very infrequently (with 2 to 8 hits), so for these items, it is next to impossible to assess whether the model simply recombined them or productively constructed them. (7h, i, j) are by far the most interesting instances of the XYZ construction, as these cannot be found on the web (yet). Still, partial matches can nevertheless be found for all three:

(8) a. Curiosity is the compass of the heart and the lust of the mind. (www.andycash.co/dictionary)

b. As we all know feedback and empathy is the food of champions. (https://twitter.com/TemitopeAyodel_/status/1587866741119614976?s=20)

c. alcohol is the band-aid of life (https://twitter.com/animatedtext/status/1094372119448903680?s=20)

OpenAssistant, on the other hand, mostly provides rather uncreative examples, many of which are highly frequent quotes or clichés:

(9) a. John is the manager of the team. (3 hits; www.espn.com/blog/boston/red-sox/post/_/id/47394/crucible-of-cancer-forges-stronger-bond-between-john-farrell-torey-lovullo)

b. Success is the result of hard work. (258,000 hits; https://quotefancy.com/quote/916496/Thomas-A-Edison-Success-is-the-result-of-hard-work)

c. Education is the key to success. (1,170,000 hits; www.intelligenthq.com/why-is-education-the-key-to-success/)

d. Love is the most powerful force in the world. (64,900 hits; www.goodreads.com/quotes/7364186-love-is-the-most-powerful-force-in-the-world-if)

e. Music is the language of emotions. (119,000 hits; https://unquote.li/en/quotes/emmanuel-kant/music-is-the-language-of-emotions-a52wxl8wn9)

f. Nature is the greatest artist. (15,600 hits; https://medium.com/spiritual-tree/an-artist-called-nature-47eb23caf426)

g. Fear is the enemy of progress. (101,000 hits; https://seekerproject4se.org/2022/08/11/truth-is-the-enemy-of-faith-and-fear-is-the-enemy-of-progress/)

h. Health is wealth in disguise. (3 hits; www.facebook.com/341405716431476/posts/health-is-wealth-in-disguiseto-mark-todays-world-clean-up-day-toro-youths-have-m/756513928253984/)

i. Creativity is the spark of innovation. (3,660 hits; www.creativecave.biz/about-us)

j. Failure is the stepping stone for achievements. (0 hits)

While the full sentence in (9a) is not very frequent, the VP “is the manager of the team” has 2,590,000 hits. Similarly, “wealth in disguise” (9h) and “stepping stone for achievements” (9j) are fairly frequent phrases (with 123,000 and 20,800 hits, respectively). All other examples in (9) can be found several thousand times on the web.
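The heuristic reading of such hit counts can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the hit counts are those reported for ChatGPT's ten examples, whereas the cut-off of ten hits (`RARE_MAX`), the bucket labels, and the function name `triage` are hypothetical and chosen merely so that the buckets reproduce the three-way grouping discussed in the text.

```python
# Exact-match hit counts for ChatGPT's ten XYZ examples (a-j), as reported above.
HITS_CHATGPT = {"a": 4, "b": 202_000, "c": 2, "d": 8, "e": 492_000,
                "f": 83_100, "g": 7_890, "h": 0, "i": 0, "j": 0}

RARE_MAX = 10  # hypothetical cut-off, not taken from the text


def triage(hits, rare_max=RARE_MAX):
    """Sort example labels into three buckets by exact-match hit count."""
    buckets = {"unattested": [], "rare": [], "frequent": []}
    for label, count in sorted(hits.items()):
        if count == 0:
            buckets["unattested"].append(label)   # candidate novel constructs
        elif count <= rare_max:
            buckets["rare"].append(label)         # status cannot be decided
        else:
            buckets["frequent"].append(label)     # plausibly in the training data
    return buckets


if __name__ == "__main__":
    for name, labels in triage(HITS_CHATGPT).items():
        print(f"{name}: {', '.join(labels)}")
```

A bucket assignment of this kind only sorts the examples; as the partial matches show, an "unattested" sentence may still be assembled from attested chunks, so each item still needs the linguist's inspection.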

Given that LLMs excel at pattern recognition, it is no surprise that ChatGPT might have encoded chunks such as (8a–c) as highly likely sequences of words, which then leads it to produce novel constructs such as (7h, i, j). After all, (8a–c) already establish a connection between the seemingly independent frames of X and Y in an existing XYZ construct. Are these creative instances of the XYZ construction? As always, a lot depends on one’s definition, but these examples at least exhibit a certain degree of novelty and can be considered appropriate uses of the construction, thus meeting two integral properties of standard psychological definitions of creativity (see, e.g., Hoffmann, 2022b). Note that we do not mean to imply that the models are creative or exhibit human behavior. All we want to point out is that they can at least generate products that go beyond their input and are, in this sense, creative. We thus want to emphasize the important distinction between creative products and creative producers (Hoffmann, 2022b). To a certain degree, at least, some models, such as ChatGPT, might be able to supply Construction Grammar researchers with (somewhat) creative constructs that can be the starting point for further constructionist analysis (e.g., testing how humans rate the appropriateness of such novel constructs). Other chatty LLMs, such as OpenAssistant, so far, fail to provide particularly interesting novel instances of certain constructional patterns.

Can LLMs also be used to suggest potentially corroborating evidence for constructions? Hoffmann & Bergs (2024) and Hoffmann (unpublished data) argue along these lines, claiming that the pattern-detection functionality of LLMs provides independent support for the claim that speakers possess discourse-level constructions. They illustrate this with Knock Knock jokes such as (10) and (11) (source: https://parade.com/944054/parade/knock-knock-jokes/):

(10)

A: Knock, Knock

B: Who’s there?

A: Luke.

B: Luke who?

A: Luke through the peep hole and find out.

(11)

A: Knock, Knock.

B: Who’s there?

A: Figs.

B: Figs who?

A: Figs the doorbell, it’s not working!

Based on a cursory discussion of the construction in Bergen and Binsted (2015) as well as their introspective analysis, Hoffmann and Bergs (2024) claim that Knock Knock jokes are stored as a complex discourse-level construction, with complex FORM–MEANING properties:

(12)

FORM:

PHONOLOGY: A: /ˈnɒk ˈnɒk/ B: /ˈhuz ðeə/ A: X1 B: X1 /ˈhu/ A: Y [contains sth. phonetically similar to X1]

MORPHOSYNTAX: A: knockV knockV B: WhoWH isAUX thereOBL? A: X1 B: X1 WhoWH? A: Y

MEANING:

SEMANTICS:

PRAGMATICS: function=entertain
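To make the FORM side of (12) concrete, the following Python sketch checks whether a five-turn exchange fits the template. It is a rough illustration, not an implementation taken from Hoffmann and Bergs (2024): the function names are hypothetical, and the requirement that Y contain something phonetically similar to X1 is approximated by orthographic similarity (`difflib.SequenceMatcher` with an arbitrary threshold of 0.6), which is only a crude stand-in for a real phonetic comparison.

```python
import re
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and keep only letters, digits, and spaces."""
    return re.sub(r"[^a-z0-9 ]", "", text.lower()).strip()


def fits_knock_knock_form(turns, threshold=0.6):
    """Check five alternating turns (A, B, A, B, A) against the FORM in (12)."""
    if len(turns) != 5:
        return False
    opener, question, x, echo, y = (normalize(t) for t in turns)
    if not x:
        return False
    # Turns 1, 2, and 4 are fixed: "Knock, knock", "Who's there?", "X who?"
    if opener != "knock knock" or question != "whos there" or echo != f"{x} who":
        return False
    # Y must contain something similar to X: compare X with every stretch
    # of Y that has the same number of words (orthographic proxy only).
    words = y.split()
    n = len(x.split())
    stretches = (" ".join(words[i:i + n]) for i in range(len(words) - n + 1))
    return any(SequenceMatcher(None, x, s).ratio() >= threshold for s in stretches)


joke_10 = ["Knock, Knock", "Who’s there?", "Luke.", "Luke who?",
           "Luke through the peep hole and find out."]
print(fits_knock_knock_form(joke_10))
```

Because written jokes usually spell the pun with the letters of X1 (Luke for look), the orthographic proxy accepts (10) and (11); it says nothing about the MEANING side of the construction, which a check of this kind cannot capture.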

As the following example shows, even given a very short prompt, such as in (13), ChatGPT offers support for a constructional analysis of Knock Knock jokes and identifies virtually all of the various constructional properties postulated in (12), while OpenAssistant’s attempt is less detailed but still suggestive:

(13) USER: Give me a construction grammar analysis of Knock Knock jokes.

(14) a. ChatGPT: Knock Knock jokes are a type of joke that follows a specific construction or formula, which involves a call-and-response format between two speakers. The construction typically follows this format:

Speaker 1: “Knock Knock!”

Speaker 2: “Who’s there?”

Speaker 1: [Insert a name or phrase that is a play on words]

Speaker 2: [Repeats the name or phrase]

Speaker 1: [Completes the joke with a punchline that involves a twist or surprise]

This construction involves several linguistic elements that are used in a creative and humorous way to create a joke. The opening phrase “Knock Knock” functions as an attention-getter or signal that a joke is about to be told. The response from Speaker 2, “Who’s there?” sets up the joke by inviting Speaker 1 to provide a name or phrase that will be used as the basis for the joke.

The next element of the construction is the name or phrase that Speaker 1 provides, which is typically a play on words that sets up the punchline of the joke. This may involve a pun, a homophone, or a double entendre, among other linguistic devices.

The final element of the construction is the punchline, which involves a twist or surprise that plays on the wordplay or pun set up by the name or phrase. The punchline is intended to be unexpected and to create a humorous or absurd effect.

Overall, the construction of Knock Knock jokes involves a combination of linguistic elements, including attention-getters, call-and-response patterns, wordplay, and surprise twists, that are used in a creative and humorous way to create a joke that often relies on the listener’s ability to recognize and appreciate the linguistic devices involved.

b. OpenAssistant: Sure, Construction Grammar provides a framework for analyzing how words and phrases are combined into larger structures in natural languages such as English. According to Construction Grammar theory, words and phrases form constructions, which are recurrent patterns of co-occurrences between linguistic elements. These constructions represent stored knowledge about language use and provide speakers with templates for creating new utterances without having to retrieve individual lexemes or word forms one at a time.

Knock knock jokes often follow specific grammatical patterns and constructions. One common type of construction found in Knock knock jokes involves two noun phrases followed by a question word (such as who or what), similar to the following example:

knocker: Who’s there?

boo: Boo.

knocker: Boo who?

boo: Don’t cry, it’s just a joke!

In this structure, the first noun phrase (Who’s there?`) functions as the topic of the discourse, while the second noun phrase (Boo) serves as the answer to the question posed in the first element of the construction. This structure creates expectations among listeners about how the exchange will continue and allows them to anticipate potential punchlines based on their familiarity with these patterns. Other types of constructions might involve direct address to interlocutors (e.g., “Oi, you there!”), rhetorical questions (e.g., Why did the tomato turn red? Because it saw the salad dressing), or elliptical expressions (“You know … right? Or was it left?).

Finally, AI LLM chatbots can nominate items for the linguist to consider as supporting evidence for existing constructions, and in general propose items worth exploring. For example, the linguist might ask the chatbot to propose other discourse-level constructions:

(15) USER: Name ten other discourse-level patterns that can be analyzed as constructions.

(16) a. ChatGPT: Sure, here are ten more examples of discourse-level patterns that can be analyzed as constructions:

Apology Construction: This construction typically begins with an expression of regret, followed by an acknowledgement of fault or responsibility, and ends with a request for forgiveness or a promise to make amends.

Job Interview Construction: This construction typically begins with an introduction of the candidate, followed by a discussion of their qualifications and experience, and ends with a question and answer period.

Debate Construction: This construction typically begins with an introduction of the topic and the speakers, followed by a presentation of arguments and counterarguments, and ends with a conclusion or resolution.

News Report Construction: This construction typically begins with a headline or lead-in, followed by a presentation of facts and details, and ends with a conclusion or summary.

Wedding Toast Construction: This construction typically begins with an expression of congratulations or best wishes, followed by anecdotes and memories of the couple, and ends with a toast to their future together.

Political Speech Construction: This construction typically begins with an introduction of the speaker and the topic, followed by a presentation of their platform and policy proposals, and ends with a call to action or a message of hope.

Eulogy Construction: This construction typically begins with an expression of condolences, followed by memories and stories of the deceased, and ends with a message of comfort and closure.

Sermon Construction: This construction typically begins with a scripture or reading, followed by a presentation of the message or lesson, and ends with a call to action or a message of inspiration.

Stand-up Comedy Construction: This construction typically begins with a setup or premise, followed by a punchline or joke, and ends with a tagline or callback.

Podcast Interview Construction: This construction typically begins with an introduction of the guest and the topic, followed by a discussion of their background and expertise, and ends with a Q&A session or a call to action.

b. OpenAssistant: Here are ten other discourse level patterms that could be analyzed using construction grammer

Exclamative sentences/constructions

Rhetorical questions

Advice seeking/requesting

Politeness strategies

Complaining

Agreeing/disagreenging

Reason giving/arguing

Condolancing

Aggressiveness

Threats

Notice that ChatGPT responds with analyses, but OpenAssistant sticks to the minimum and supplies only the labels. Such performance can be controlled by engineering a different prompt. We do not dive into the possibilities of alternative prompting here.

The above text types, obviously, vary considerably in their generality, and construction grammarians will have to investigate carefully whether there is any cognitive evidence that these discourse patterns are stored as constructions. Nevertheless, as a first step, it is also possible to query models for further details that might support such an analysis. (17–18) illustrate this for prototypical apologies:

(17) USER: Give a detailed analysis of FORM and MEANING elements of the Apology Construction.

(18) a. ChatGPT: The Apology Construction is a discourse-level pattern that speakers use when they want to express regret, remorse, or sorrow for something that they have done or failed to do. It typically involves a specific structure and a set of linguistic markers that indicate the speaker’s attitude towards the situation.

FORM:

The Apology Construction has a specific form that includes the following elements:

Expression of regret or remorse: This is usually the first element of the Apology Construction and is often signaled by an apology formula, such as “I’m sorry” or “I apologize”.

Acknowledgment of responsibility: The speaker takes responsibility for the action or situation that caused the harm, often using verbs like “I did” or “I failed to”.

Explanation or justification (optional): The speaker may provide an explanation or justification for their behavior, but this is not always necessary.

Promise of repair or restitution: The speaker offers to make things right, often by offering to do something to fix the situation or prevent it from happening again.

MEANING:

The Apology Construction is used to convey a range of meanings, including:

Regret or remorse: The Apology Construction is primarily used to express feelings of regret or remorse for something that the speaker has done or failed to do. The expression of regret or remorse is a way for the speaker to acknowledge the harm that has been caused and to show that they understand the impact of their actions.

Responsibility: The Apology Construction is also used to acknowledge responsibility for the situation or action that caused harm. This is an important part of the apology because it shows that the speaker is willing to take ownership of their actions and to accept the consequences.

Repair or restitution: The Apology Construction often includes a promise of repair or restitution, which is a way for the speaker to demonstrate their commitment to making things right. This can involve a specific action or set of actions that the speaker will take to repair the harm caused or prevent it from happening again.

Respect: The Apology Construction is also used to show respect for the person or people who have been harmed. By acknowledging the harm that has been caused and taking responsibility for it, the speaker is demonstrating their respect for the feelings and experiences of others.

In summary, the Apology Construction is a discourse-level pattern that speakers use to express regret, remorse, or sorrow for something that they have done or failed to do. The construction has a specific form that includes an expression of regret or remorse, an acknowledgment of responsibility, an explanation or justification (optional), and a promise of repair or restitution. The construction is used to convey meanings such as regret or remorse, responsibility, repair or restitution, and respect.

b. OpenAssistant: The Apology Construction involves two main components –

FORM and MEANING. Let’s take a closer look at both elements.

FORM: The Apology Construction consists of four key parts: a) Acknowledgment: This component signals the beginning of the apology, acknowledging that some wrongdoing occurred. For example: “I’m sorry for what happened … ” b) Expression of regret: Expressions of regret convey remorseful feelings, and acknowledge responsibility for one’s actions. For example: “I feel really bad about it.” c) Offer of repair: This part offers restitution or reparation, indicating steps taken to prevent similar situations from happening again. For example: “I’ll make sure it doesn’t happen again.” d) Closure statement: The last part finalizes the apology by saying “thank you,” or something similar, signaling the end of the apology. For example: “Thanks for listening.”

MEANING: The Meaning of the Apology Construction conveys repentance for past behaviors. It expresses empathy and understanding toward others, as well as respect for social norms. By offering an apology, speakers demonstrate their willingness to fix things and restore relationships, which helps build trust and strengthen bonds between people.

Apologies come in various shapes and forms and have been the subject of numerous linguistic studies (cf., e.g., Leech, 2014: 115–133). Similarly, many websites (e.g., https://ggia.berkeley.edu/practice/making_an_effective_apology) offer advice as to how one should apologize. We do not know whether our chosen LLMs had access to any of those sources. Nevertheless, what they do offer the linguist are schematic form–meaning representations such as (18a and b) that could be used as a starting point for the investigation of an Apology construction. The AI LLM chatbots can then be asked further to compose an appropriate construct using the Apology construction:

(19) USER: Give me an example of a full text that is created by the Apology Construction. Indicate which parts correspond to which FORM or MEANING component of the construction.

(20) a. ChatGPT: Here is an example of a full text that follows the Apology Construction:

FORM:

Attention-getter

Expression of regret

Explanation of what went wrong

Acknowledgment of responsibility

Declaration of repentance

Offer of repair

Request for forgiveness

Closing

MEANING:

The Apology Construction is used to express remorse and seek forgiveness after a wrong has been committed. The speaker must show that they understand what they did wrong and take responsibility for their actions. They must also demonstrate a sincere desire to make things right and offer a plan for how they can do so. Finally, the speaker must ask for forgiveness and offer a closing that shows their commitment to making things right.

Here’s an example text that follows the Apology Construction:

FORM:

Attention-getter: “I want to start by saying how sorry I am.”

Expression of regret: “I regret my actions and the hurt that I have caused.”

Explanation of what went wrong: “I was not thinking clearly and acted impulsively.”

Acknowledgment of responsibility: “I take full responsibility for my actions and the consequences that have resulted.”

Declaration of repentance: “I understand how my actions have affected you and I am committed to making things right.”

Offer of repair: “I would like to offer to pay for any damages and work to repair our relationship.”

Request for forgiveness: “I hope you can find it in your heart to forgive me.”

Closing: “I want you to know that I value our relationship and will do everything in my power to make things right.”

MEANING:

The speaker is expressing remorse and taking responsibility for their actions. They understand that their actions have caused harm and are committed to repairing the damage and making things right. The speaker is seeking forgiveness and demonstrating a genuine desire to repair the relationship.

b. OpenAssistant:

Example: “I would like to apologize for my behavior yesterday during our meeting. I realize now how inconsiderate I was acting when interrupting you, not letting you finish speaking. It was unprofessional and unethical on my behalf, and I understand the frustration that caused in our team. Moreover, my attitude may have undermined your credibility with your higher ups, who were also present. From this point forward, I will work hard to ensure that our communication is more balanced by asking thoughtful questions instead of immediately sharing my opinions, so we can have constructive dialogue without interruption. Once again, I apologize deeply for my misbehavior”.

Both responses offer a suitable construct, but ChatGPT conforms to the prompt for an analysis, while OpenAssistant does not. The suggested constructs in (20) sound formulaic (cf., e.g., “I regret my actions and the hurt that I have caused.” or “I would like to offer to pay for any damages and work to repair our relationship.”). Still, both models offer suitable constructs, and ChatGPT seems to have also identified a general template (an authentic and potentially successful human apology will, obviously, have to be more personal and make more concrete references to the situation for which the speaker is apologizing). More interesting, from a theoretical point of view, is the fact that the constructional properties of (18a) and (20a) are not identical. (20a) adds certain elements (such as the Attention-getter or the Explanation of what went wrong slot). Since the content of the previous discourse seems to be important for the answers a model provides, it is perhaps important to note that, in the case of ChatGPT but not OpenAssistant, between (17) and (19), we first issued the following two prompts, (21) and (23), having to do with the Wedding Toast construction:

(21) USER: Give a detailed analysis of FORM and MEANING elements of the Wedding Toast Construction.

(22) a. ChatGPT: The Wedding Toast Construction is a discourse-level pattern that typically occurs in the context of a wedding reception or ceremony, where a speaker offers a speech or toast to honor and congratulate the newlyweds.

FORM:

The Wedding Toast Construction typically includes the following form elements:

Address: The speaker begins by addressing the audience and acknowledging their presence.

Example: “Ladies and gentlemen, distinguished guests, friends and family of the bride and groom … ”

Congratulations: The speaker congratulates the newlyweds on their marriage and wishes them a happy future together.

Example: “I want to start by congratulating [Bride’s name] and [Groom’s name] on their wedding day. May your future be filled with love, laughter, and joy.”

Personal anecdotes: The speaker may share personal stories or anecdotes about the newlyweds or their relationship to add a personal touch to the speech.

Example: “I remember when [Bride’s name] first told me about [Groom’s name], and how she knew he was the one for her. Seeing them together today, I know she was right.”

Advice: The speaker may offer advice to the newlyweds on how to have a successful and happy marriage.

Example: “Marriage is not always easy, but it is worth it. My advice to you both is to communicate openly, cherish each other, and never forget the love that brought you together today.”

Toast: The speaker raises a glass to the newlyweds and invites the audience to join in a toast to their happiness.

Example: “So let us raise a glass to [Bride’s name] and [Groom’s name], and wish them all the happiness in the world!”

MEANING:

The Wedding Toast Construction serves a social function in celebrating and honoring the newlyweds’ union, as well as providing guidance and support for their future together. The speech typically expresses feelings of happiness, love, and goodwill towards the newlyweds, and may include humorous or sentimental anecdotes to add a personal touch. The advice offered in the speech serves to provide guidance and support for the couple as they embark on their new life together. The toast to the newlyweds is a symbolic gesture of wishing them all the best for their future together.

b. OpenAssistant: Certainly!

Form: The Wedding Toast Construction typically follows a specific form that includes several stages or sections. These include:

Opening remarks: The speaker begins by introducing themselves and explaining their connection to the couple being honored. They might share memories or stories about how they met, or simply offer warm wishes and best regards.

Contextualization: Next, the speaker provides relevant background information about the couple or event itself. This may include details about their courtship, engagement story, special moments shared together, etc.

Compliments: During this stage, the speaker extends formal or informal expressions of admiration, praise, gratitude, love, affection, respect, appreciation, esteem, pride, satisfaction, joy, happiness, support or approval regarding one or both members of the newlywed pair. Their words aim to celebrate their union, and inspire guests to raise glasses and join in the festivities.

Well-Wishes / Blessings: The next portion of the speech centers around hopeful expectations, sentiments, good intentions, desires, aspirations, prayer requests, vibes, energy, prayers, benedictions, supplications, petitions, appeals, blessings, curses, exhortations, encouragements, recommendations, or entreaties for happiness, success, fulfillment, longevity, prosperity, wealth, health, fertility, harmony, bliss, peace, love or companionship for the couple.

Again, we do not know the data on which the model has been trained. The data might include substantial examples of wedding toasts. In any event, it is clear that the chatty model can respond to local context as provided in its chat history to produce a constructional analysis of the text type.
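The prompts in (17), (19), (21), and (23) share a fixed wording and differ only in the name of the construction, so the same pair of prompts can be generated for any other discourse-level pattern. The following Python sketch shows one way to do this; the names `ANALYSIS_TEMPLATE`, `EXAMPLE_TEMPLATE`, and `build_prompts` are hypothetical, and the code only produces prompt text without contacting any chatbot.

```python
# Wording copied from the prompts in (17)/(21) and (19)/(23);
# {name} is the only part that varies.
ANALYSIS_TEMPLATE = (
    "Give a detailed analysis of FORM and MEANING elements of the "
    "{name} Construction."
)
EXAMPLE_TEMPLATE = (
    "Give me an example of a full text that is created by the "
    "{name} Construction. Indicate which parts correspond to which "
    "FORM or MEANING component of the construction."
)


def build_prompts(name):
    """Return the analysis prompt and the example prompt for one construction."""
    return [ANALYSIS_TEMPLATE.format(name=name),
            EXAMPLE_TEMPLATE.format(name=name)]


if __name__ == "__main__":
    for construction in ["Apology", "Wedding Toast", "Eulogy"]:
        for prompt in build_prompts(construction):
            print(prompt)
```

Keeping the wording constant across constructions makes the responses easier to compare, although, as noted above, the preceding chat history can still influence what a model returns.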

As before, we asked both LLMs to provide us with an example of this discourse-level construction:

(23) USER: Give me an example of a full text that is created by the Wedding Toast Construction. Indicate which parts correspond to which FORM or MEANING component of the construction.

(24) a. ChatGPT: Certainly, here’s an example of a text that follows the Wedding Toast Construction:

FORM:

Address the audience

Express gratitude to hosts and guests

Recall personal anecdote about the couple

Offer congratulations and well-wishes

Raise a toast to the newlyweds

MEANING:

This construction is used in a wedding setting to honor and congratulate the newlyweds, while also expressing gratitude to the hosts and guests. The personal anecdote provides a heartfelt and intimate touch to the speech, while the toast at the end celebrates the couple’s union.

Example Text:

Good evening everyone,

First of all, I would like to thank [HOST NAME] for hosting such a beautiful wedding and gathering us all here tonight to celebrate the marriage of [BRIDE NAME] and [GROOM NAME]. It’s an honor to be part of this special occasion.

[PERSONAL ANECDOTE: Insert a personal anecdote about the couple, such as how they met, a funny story about their relationship, or a touching moment you shared with them.]

I want to congratulate you both on finding each other and building such a strong and loving relationship. [BRIDE NAME], you look absolutely stunning today, and [GROOM NAME], you are one lucky man.

May your marriage be filled with love, happiness, and laughter. [INSERT WELL-WISHES OR ADVICE, IF DESIRED.] Let’s raise a glass to the newlyweds – cheers!

[RAISE A TOAST TO THE NEWLYWEDS: Insert a toast to the couple, such as “To [BRIDE NAME] and [GROOM NAME], may your love continue to grow stronger with each passing day.”]

Thank you and enjoy the rest of the evening.

b. OpenAssistant: Certainly!

Form: The Wedding Toast Construction typically follows a specific form that includes several stages or sections. These include:

Opening remarks: The speaker begins by introducing themselves and explaining their connection to the couple being honored. They might share memories or stories about how they met, or simply offer warm wishes and best regards.

Contextualization: Next, the speaker provides relevant background information about the couple or event itself. This may include details about their courtship, engagement story, special moments shared together, etc.

Compliments: During this stage, the speaker extends formal or informal expressions of admiration, praise, gratitude, love, affection, respect, appreciation, esteem, pride, satisfaction, joy, happiness, support or approval regarding one or both members of the newlywed pair. Their words aim to celebrate their union, and inspire guests to raise glasses and join in the festivities.

Well-Wishes / Blessings: The next portion of the speech centers around hopeful expectations, sentiments, good intentions, desires, aspirations, prayer requests, vibes, energy, prayers, benedictions, supplications, petitions, appeals, blessings, curses, exhortations, encouragements, recommendations, or entreaties for happiness, success, fulfillment, longevity, prosperity, wealth, health, fertility, harmony, bliss, peace, love or companionship for the couple.

Again, we find slight differences between the constructional templates proposed by ChatGPT in (21a) and (23a), for which we currently have no explanation: Congratulations appears in a different place on the FORM pole (no. 4 in (23a) instead of no. 2 in (21a)), and Advice is mentioned only in passing in (23a), in the suggested exemplar of the construction (“[INSERT WELL-WISHES OR ADVICE, IF DESIRED.]”), but not on the FORM pole. OpenAssistant, by contrast, offers the same general template in both (21b) and (23b): Form: Opening remarks, Contextualization, Compliments, Well-Wishes / Blessings.

These differences are minor, though worth exploring. We believe that the above examples nevertheless indicate that conversational LLMs such as ChatGPT and OpenAssistant are already helpful tools for investigating discourse-level constructions.

At this point, let us take a moment to dive deeper into the limitations of AI LLM chatbots when used for supporting construction grammarians in their analyses.

4 Limitations of LLMs for Constructional Analysis

On top of the limitations already pointed out in the chat sequences in the previous section, the tests we conducted with ChatGPT and OpenAssistant reveal two major challenges for the use of an AI LLM chatbot as a tool for constructionist analyses: multilinguality and semantics. In this section, we look into them in more detail.

4.1 Constructions in Languages Other Than English

As shown in the previous section, more precisely in (3) and (4), both AI LLM chatbots proposed a list of twenty examples of a caused motion clause with an intransitive verb. Some were actually instances of the Resultative construction, and some verbs were not intransitive, yet many could be regarded as possible instances of the Caused Motion construction. During our tests with the chatbots, we also prompted them to analyze constructions in other languages. In the remainder of this section, we discuss two of the prompts we provided to both AI LLM chatbots and their responses to them.

The first prompt concerns the Split Argument construction in Brazilian Portuguese. Such a construction conforms to the NP V NP syntactic pattern – the same pattern present in the Active Transitive construction – but instead of featuring an agentive subject NP and a patient-like object NP, it assigns a patient reading to both NPs, evoking the Undergoing frame (Almeida, 2016, 2022; Matos et al., 2017). There are four types of the Split Argument construction: Artifact, Body part, Possession, and Entity-attribute (Sampaio, 2010), exemplified in (25–28).

(25) O celular quebrou a tela.
     The cell phone break.PST.3SG the screen
     The screen in my cell phone cracked.

(26) O bebê quebrou o braço.
     The baby break.PST.3SG the arm
     The baby broke his arm.

(27) Eu furei o pneu.
     I pierce.PST.1SG the tire
     I got a flat tire.

(28) A gasolina subiu o preço.
     The gasoline go-up.PST.3SG the price
     Gas prices went up.

Note that, in all four of them, some sort of semantic relationship holds between the element in the first NP slot and the NP in the second: whole–part, body–body part, possessor–possession, or entity–attribute. In this construction, the composition of the two NPs undergoes some sort of change and the fact that the semantic argument of Undergoing is split into two parts profiles the element in the first NP slot (Sampaio, 2010).
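For readers who wish to handle such examples computationally, the four subtypes and the relation each one encodes can be written down as a small data structure. The following is only a minimal sketch: the names `Relation`, `SplitArgumentConstruct`, `CONSTRUCTS`, and `summarize` are hypothetical and were introduced purely for illustration, whereas the sentences, subtype labels, and relations are those given in (25–28) and in the paragraph above.

```python
from dataclasses import dataclass
from enum import Enum


class Relation(Enum):
    """Subtype of the Split Argument construction and the NP1-NP2 relation it encodes."""
    ARTIFACT = "whole-part"
    BODY_PART = "body-body part"
    POSSESSION = "possessor-possession"
    ENTITY_ATTRIBUTE = "entity-attribute"


@dataclass(frozen=True)
class SplitArgumentConstruct:
    example: int       # example number in the running text
    np1: str           # first NP slot: the profiled element
    verb: str          # verb evoking the Undergoing frame
    np2: str           # second NP slot: part, body part, possession, or attribute
    subtype: Relation
    translation: str   # free English translation

    def sentence(self) -> str:
        """Linearize the construct following the NP V NP pattern."""
        return f"{self.np1} {self.verb} {self.np2}."


# Examples (25-28), one per subtype, in the order given in the text.
CONSTRUCTS = [
    SplitArgumentConstruct(25, "O celular", "quebrou", "a tela",
                           Relation.ARTIFACT,
                           "The screen in my cell phone cracked."),
    SplitArgumentConstruct(26, "O bebê", "quebrou", "o braço",
                           Relation.BODY_PART,
                           "The baby broke his arm."),
    SplitArgumentConstruct(27, "Eu", "furei", "o pneu",
                           Relation.POSSESSION,
                           "I got a flat tire."),
    SplitArgumentConstruct(28, "A gasolina", "subiu", "o preço",
                           Relation.ENTITY_ATTRIBUTE,
                           "Gas prices went up."),
]


def summarize(constructs):
    """Return one line per construct: number, subtype, relation, sentence."""
    return [
        f"({c.example}) {c.subtype.name}: {c.subtype.value}: {c.sentence()}"
        for c in constructs
    ]


if __name__ == "__main__":
    for line in summarize(CONSTRUCTS):
        print(line)
```

Keeping the two NP slots as separate fields makes the defining property of the construction explicit: both slots receive a patient reading, and the subtype records which relation holds between them.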

We used the first subtype of the Split Argument construction to prompt the chatbots, as shown in (29–32), where sentences in Portuguese have been glossed by us for the sake of comprehension. OpenAssistant already provided a translation into English for each sentence in Brazilian Portuguese. For ease of reading, those translations were moved to the last line of each example, in parentheses, following the translation provided by us. The chat sequence demonstrates that neither chatbot provides, in its first attempt, a single correct example of the Split Argument construction. When corrected by the user, both fail the task once again: In (32), for ChatGPT, only sentence 6 and (with some contextualization effort) sentence 5 can be regarded as instances of the Split Argument construction. Although in some cases ChatGPT chooses nonhuman and nonanimate subjects for the sentences it creates – a feature common in the Split Argument construction with artifacts – when it does so, it usually comes up with sentences where the first NP gains an instrumental reading. For OpenAssistant, once again, none of the sentences are instances of the construction. OpenAssistant also performs worse with regard to the grammaticality of the sentences in Brazilian Portuguese, and even makes mistakes when proposing instances of the Transitive construction. Some sentences lack fluency or sound odd. There are also some subword-level issues, such as the word “demolidaram” in the last sentence produced by OpenAssistant after the correction. The correct verb form in Portuguese would be “demoliram”.

(29) USER: Brazilian Portuguese has two constructions sharing the same formal pattern, namely one involving a noun phrase, a verb phrase and another noun phrase. One of those constructions is the Active transitive construction, where the first noun phrase is an agent who acts on the patient expressed in the second noun phrase. An example of such a construction would be the sentence “A cozinheira quebrou as nozes”. The other construction is the Split argument construction, where both noun phrases are a patient for the action expressed in the verb. Usually, there is a whole-part relation between the first noun phrase and the second one. An example of such a construction is the sentence “A panela quebrou o cabo”. Please give me ten additional examples for each type of constructions mentioned above. Make sure not to repeat the verbs in the example lists.

a. ChatGPT:

Active transitive construction:

1. O menino jogou a bola.
   The boy throw.PST.3SG the ball
   The boy threw the ball.

2. O gato arranhou a cadeira.
   The cat scratch.PST.3SG the chair
   The cat scratched the chair.

3. O pintor pintou a parede.
   The painter paint.PST.3SG the wall
   The painter painted the wall.

4. O motorista dirigiu o carro.
   The driver drive.PST.3SG the car
   The driver drove the car.

5. O médico examinou o paciente.
   The doctor examine.PST.3SG the patient
   The doctor examined the patient.

6. O garçom serviu o prato.
   The waiter serve.PST.3SG the dish
   The waiter served the dish.

7. O bombeiro apagou o fogo.
   The firefighter put out.PST.3SG the fire
   The firefighter put out the fire.

8. A professora corrigiu a prova.
   The teacher grade.PST.3SG the test
   The teacher graded the test.

9. O pedreiro construiu a casa.
   The mason build.PST.3SG the house
   The mason built the house.

10. O jardineiro plantou as flores.
    The gardener plant.PST.3SG the flowers
    The gardener planted the flowers.

Split argument construction:

1. O cão comeu o osso.
   The dog eat.PST.3SG the bone
   The dog ate the bone.

2. O martelo quebrou a pedra.
   The hammer break.PST.3SG the stone
   The hammer broke the stone.

3. O fogo queimou a madeira.
   The fire burn.PST.3SG the wood
   The fire burnt the wood.

4. A faca cortou a carne.
   The knife cut.PST.3SG the meat
   The knife cut the meat.

5. A tesoura rasgou o papel.
   The scissors tear.PST.3SG the paper
   The scissors tore the paper.

6. A chave abriu a porta.
   The key open.PST.3SG the door
   The key opened the door.

7. O sal danificou o carro.
   The salt damage.PST.3SG the car
   The salt damaged the car.

8. A tempestade arrancou a árvore.
   The storm rip out.PST.3SG the tree
   The storm uprooted the tree.

9. O tempo desgastou o metal.
   The time wear out.PST.3SG the metal
   The weather corroded the metal.

10. O sol derreteu o gelo.
    The sun melt.PST.3SG the ice
    The sun melted the ice.

b. OpenAssistant: Sure, here are some more examples of the active transitive construction:

Active Transitive Construction:

1. * O garoto jogador de futebol atirou em um pênalti.
   The boy player of soccer shoot.PST.3SG in a penalty
   * The boy fired at a penalty.
   (The boy soccer player took a penalty kick.)

2. A professora ensinou os alunos como resolver equações.
   The teacher teach.PST.3SG the students how solve.INF equations
   The teacher taught the students how to solve equations.
   (The teacher taught the students how to solve equations.)

3. Os bombeiros apagaram o fogo na casa.
   The firefighters put out.PST.3PL the fire in the house
   The firefighters put out the fire in the house.
   (The firefighters put out the fire in the house.)

4. O motorista dirigiu o carro até a estação de trem.
   The driver drive.PST.3SG the car to the station of train
   The driver drove the car to the train station.
   (The driver drove the car to the train station.)

5. Os pescadores capturam peixes com redes e armadilhas.
   The fishermen capture.PRES.3PL fishes with nets and traps
   Fishermen catch fish with nets and traps.
   (Fishermen catch fish with nets and traps.)

6. A enfermeira administrou uma vacina à criança.
   The nurse administer.PST.3SG a vaccine to the child
   The nurse administered a vaccine to the child.
   (The nurse administered a vaccine to the child.)

7. Os construtores estão reconstruindo a ponte sobre o rio.
   The builders be.PRES.3PL rebuild.GER the bridge over the river
   The builders are rebuilding the bridge over the river.
   (The builders are rebuilding the bridge over the river.)

8. A bibliotecária ajudou o estudante a encontrar livros relevantes para seu trabalho.
   The librarian help.PST.3SG the student to find.INF books relevant for his work
   The librarian helped the student find books relevant to his work.
   (The librarian helped the student find relevant books for their work.)

9. * O técnico fixou o computador danificado durante a noite.
   The technician fixate.PST.3SG the computer damaged during the night
   * The technician fixated the damaged computer during the night.
   (The technician repaired the broken computer during the night.)

10. O arquiteto projetou um novo prédio moderno e elegante.
    The architect project.PST.3SG a new building modern and elegant
    The architect designed a new modern and elegant building.
    (The architect designed a new modern and elegant building.)

(30) And here are some examples of the split argument construction:

Split Argument Construction:

1. ? As poltronas de couro estragadas podem ser consertadas por um tapete de mesa.
   The armchairs of leather damaged can.PRES.3PL be.INF fix.PART by a rug of table
   ? The damaged leather armchairs can be fixed using a placemat.
   (Torn leather chairs can be fixed by a placemat)

2. Os dentes do meu filho se romperam devido ao suco natural de uva.
   The teeth of the my son self break.PST.3PL due to the juice natural of grape
   My son’s teeth broke due to natural grape juice.
   (My son’s teeth broke due to grape juice).

3. Deve-se abster deste vinho, pois contém álcool.
   must.PRES.3SG self avoid.INF of this wine because contain.PRES.3SG alcohol
   One should avoid this wine because it contains alcohol.
   (You should stay away from this wine because it contains alcohol)

4.

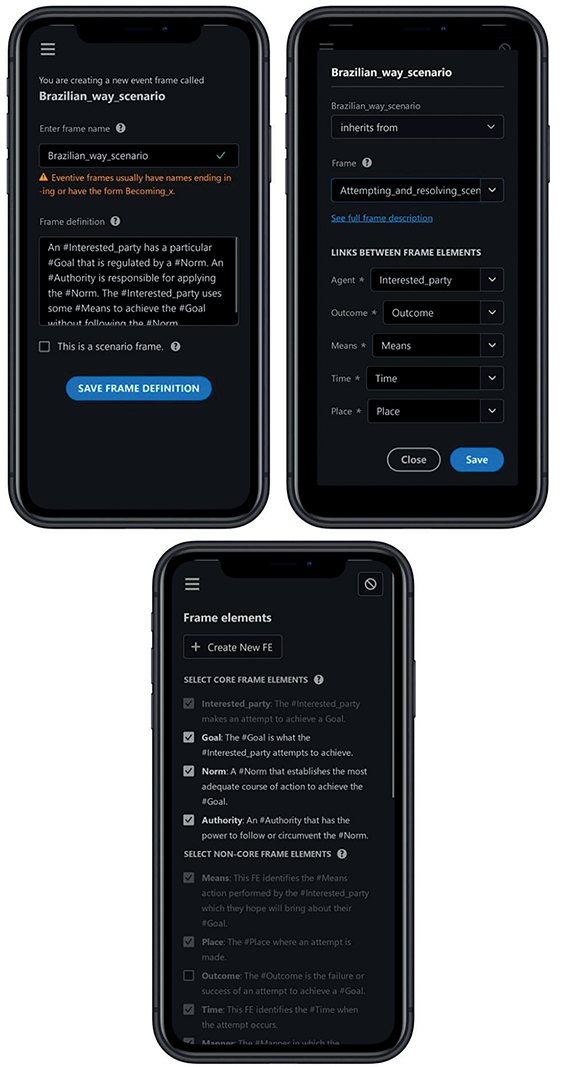

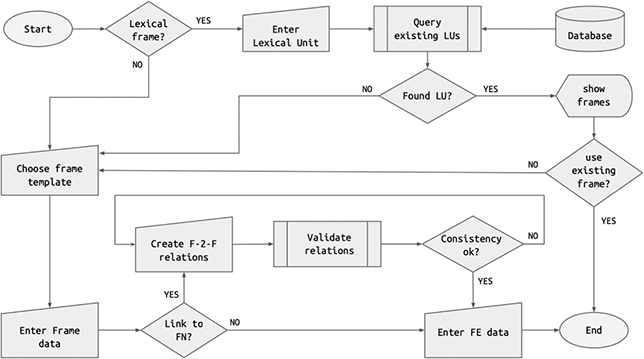

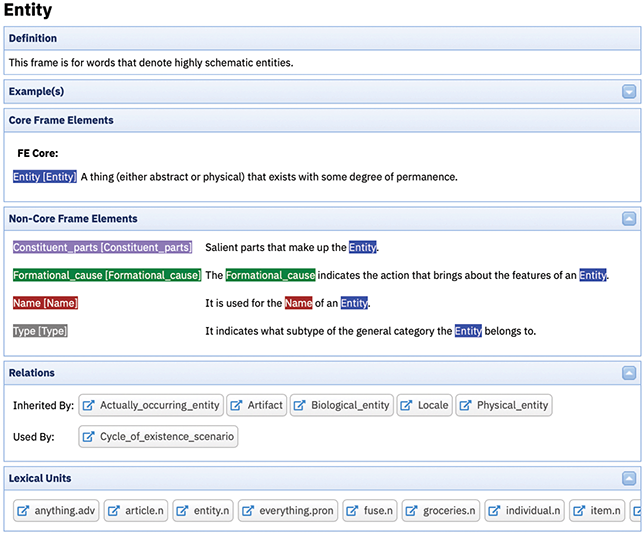

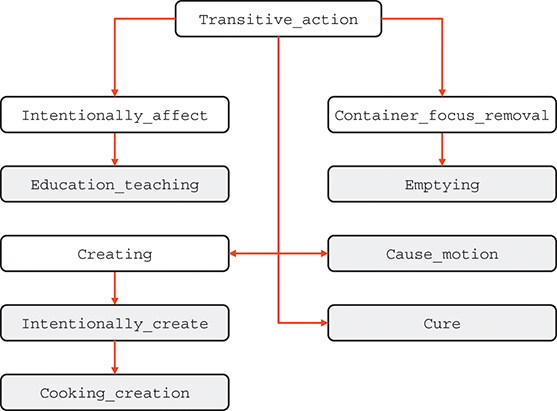

* Desculpa-te comigo, esqueci minha bolsa nas traseiras da táxi.
apologize.IMP.2SG you.OBL with me forget.PST.1SG my purse in the backs of the taxi
* Apologize to me because I forgot my purse in the backs of your taxi.
(I’m sorry I forget my purse inside your taxi)