1 Peer Review and Its Discontents

What Is Peer Review and Is It Any Good?

‘Peer review’ is the system by which manuscripts and other scholarly objects are vetted for validity, appraised for originality, and selected for publication as articles in academic journals, as academic books (‘monographs’), and in different forms.Footnote 1 Since an editor of an academic title cannot be expected to be an expert in every single area covered by a publication and since it appears undesirable to have a single person controlling the publication’s flow of scientific and humanistic knowledge, there is a need for input from more people. Manuscripts submitted for consideration are shown to external expert advisers (‘peers’) who deliver verdicts on the novelty of the work, criticisms or praise of the piece, and a judgement of whether or not to proceed to publication. A network of experts with appropriate degrees of knowledge and experience within a field are coordinated to yield a set of checks and balances for the scientific and broader research landscapes. Editors are then bound, with some caveats and to some extent, to respect these external judgements in their own decisions, regardless of how harsh the mythical ‘reviewer 2’ may be (Reference Bakanic, McPhail and SimonBakanic, McPhail, and Simon 1989; Reference Bornmann and DanielBornmann and Daniel 2008; Reference Fogg and FiskeFogg and Fiske 1993; Reference LockLock 1986; Reference Petty, Fleming and FabrigarPetty, Fleming, and Fabrigar 1999; Reference Sternberg, Hojjat, Brigockas and GrigorenkoSternberg et al. 1997; Reference Zuckerman and MertonZuckerman and Merton 1971).

The premise behind peer review may appear sound, even incontrovertible. Who could object to the best in the world appraising one another, nobly ensuring the integrity of the world’s official research record? Yet, considering the system for even a few moments leads to several questions. What is a peer and who decides? What does it mean when a peer approves somebody else’s work? How many peers are required before a manuscript can be properly vetted? What happens if peers disagree with one another? Does (or should) peer review operate in the same fashion in disciplines as distinct as neuroscience and sculpture? Particle physics and social geography? Math and literary criticism? When academics rely on publications for their job appointments and promotions, how does peer review interact with other power structures in universities? Do reviewers act with honour and integrity in their judgements within this system?

Consider, as just one example, the question of anonymity in the peer-review process. Review is meant to assess the work itself, not the authors. If the identity of the authors is available to reviewers, though, then might not they give an easy ride to people they know or allow personal disputes to affect their judgement negatively? It is also possible that radically incorrect work might be erroneously perceived as truthful when it comes from an established figure within a discipline or that bold new and correct work might be incorrectly rejected because it comes from new or unusual quarters (Reference CampanarioCampanario 2009).

Yet simply removing the names of authors is not itself necessarily a solution. When one is dealing with small pools of experts, this can provide a false sense of security (Reference Fisher, Friedman and StraussFisher, Friedman, and Strauss 1994; Reference Godlee, Gale and MartynGodlee, Gale, and Martyn 1998; Reference SandströmSandström 2009; Reference Wang and UlfWang and Sandström 2015). If a reviewer knows that the work was part of a publicised funded project, for instance, it could be possible to guess with some accuracy the authors’ identities. In niche sub-fields, researchers usually all know one another and the areas in which their colleagues are working (Reference Mom, Sandström and PeterMom, Sandström, and Besselaar 2018; Reference Sandström and MartinSandström and Hällsten 2008).

On the other side of this process, what about the identity of the reviewer? Should the authors (or even the readership of the final piece) be told who has reviewed the manuscript (Reference PullumPullum 1984; Reference van Rooyen, Delamothe and Evansvan Rooyen, Delamothe, and Evans 2010)? There are arguments for both positive and negative answers to this question. When people are anonymous, they may be more able to speak without constraint. A junior postdoctoral researcher may be capable of reviewing the work of a senior professor but might not be able to criticise extensively the work for fear of career reprisals were their identity to be revealed (this also raises the question, though, of what we mean by ‘peer’). Yet we also know that the cover of anonymity can be abused. Anonymous reviewers, it is assumed, may be more aggressive in their approach and can even write incredibly hurtful ad hominem attacks on papers (Reference Silbiger and StublerSilbiger and Stubler 2019).

Further, how can we tell the standards demanded of a publisher without knowledge of the individuals used to assess the manuscripts? As Kathleen Fitzpatrick notes, conditions of anonymity limit our ability to investigate the review process thoroughly. For ‘in using a human filtering system’, she writes, ‘the most important thing to have information about is less the data that is being filtered, than the human filter itself: who is making the decisions, and why’ (Reference FitzpatrickFitzpatrick 2011, 38). It is also clear that errors are only likely to be caught if the selection of peers is up to scratch (although as we note later, there are even some problems with this assumption). Group social dynamics may also affect decision-making in this area (Reference Olbrecht and BornmannOlbrecht and Bornmann 2010; Reference van Arensbergen, van der Weijden and van den Besselaarvan Arensbergen, van der Weijden, and van den Besselaar 2014). Gender biases also play a role (Reference van den Besselaar, Sandström and SchiffbaenkerBesselaar et al. 2018; Reference Biernat, Tocci and WilliamsBiernat, Tocci, and Williams 2012; Reference Helmer, Schottdorf, Neef and BattagliaHelmer et al. 2017; Reference Kaatz, Gutierrez and CarnesKaatz, Gutierrez, and Carnes 2014). Anonymity in the review process, just the first of many concerns, is far more complicated than it might at first appear (for more on this debate, see Reference BrownBrown 2003; Reference DeCourseyDeCoursey 2006; Reference Eve, Vincent and WickhamEve 2013; Reference GodleeGodlee 2002; Reference Ross-HellauerRoss-Hellauer 2017; Reference Seeber and BacchelliSeeber and Bacchelli 2017; R. Reference SmithSmith 1999; Reference TattersallTattersall 2015; Reference van Rooyen, Godlee, Evans, Black and Smithvan Rooyen et al. 1999).

It also seems abundantly clear that the peer-review process is far from infallible. Every year, thousands of articles are retracted (withdrawn) for containing inaccuracies (Reference Brainard and YouBrainard and You 2018), for conducting unethical research practices, and for many other reasons (for more on this, see the ‘Retraction Watch’ site; see Reference Brembs, Button and MarcusBrembs, Button, and Munafò 2013 for a study that found that impact factor correlates with retraction rate). On occasion, this has had devastating consequences in spaces such as public health. Andrew Wakefield’s notorious retracted paper claiming a link between the mumps, measles, and rubella (MMR) vaccine and the development of autism in children was published in perhaps the most prestigious medical journal in the world, The Lancet (Reference Wakefield, Murch, Anthony, Linnell, Casson, Malik and BerelowitzWakefield et al. 1998). The work was undoubtedly subject to stringent pre-publication review and was cleared for publication. Yet the article was later retracted and branded fraudulent, having caused immense and ongoing damage to public health (Reference Godlee, Smith and MarcovitchGodlee, Smith, and Marcovitch 2011). It is, alas, always easier to make an initial statement than subsequently to retract or to correct it. As a result, a worldwide anti-vaccination movement has seized upon this circumstance as evidence of a conspiracy. The logic uses the supposed initial validation of peer review and the prestige of The Lancet as evidence that Wakefield was correct and that he is the victim of a conspiratorial plot to suppress his findings. Hence, when peer review goes wrong, the general belief in its efficacy, coupled with the prestige of journals founded on the supposed expertise of peer review, has damaging real-world effects.

In other cases, peer review is problematic for the delays it introduces. Consider research around urgent health emergencies, such as the Zika virus or newly proposed treatments for the 2019 novel coronavirus. Is it appropriate and ethical to wait several days, weeks, or even months for expert opinion on whether this information should be published when people are dying during the lag period? The answer to this depends on the specific circumstances and the outcome, which can only be known after publication. On the one hand, if the information is published, without peer review, and it turns out to be correct and solid without revision, then the checks and balances of peer review would have cost lives. On the other hand, if the information published is wrong or even actively harmful, and there is even a chance that peer review could have caught this, one might feel differently. These are but a few of the problems, dilemmas, and ethical conundrums that circulate around that apparently ‘simple’ concept of peer review.

In this opening chapter, we describe the broad background histories of peer review and its study. This framing chapter is designed to give the reader the necessary surrounding context to understand the historical evolution and development of peer review. It also introduces much of the secondary criticism of peer review that has emerged in recent years, questioning the usual assumption that the objectivity (or intersubjectivity) of review is universally accepted as the best course of action to ensure standards. Finally, we address the merits of innovative new peer-review practices and disciplinary differences in their take-up (or otherwise). While there are certainly cross-disciplinary implications for our work, it has been in the natural sciences that the benefits and rewards of these new approaches have been most heavily sold.

The Study of Peer Review

Despite the aforementioned challenges, the role of peer review in improving the quality of academic publications and in predicting the impact of manuscripts through criteria of ‘excellence’ is widely seen as essential to the research endeavour. As a term that first entered critical and popular discourse around 1960 but also as a practice that only became commonplace far later than most suspect, ‘peer review’ is sometimes described as the ‘gold standard’ of quality control, and the majority of researchers consider it crucial to contemporary science (Reference Alberts, Hanson and KelnerAlberts, Hanson, and Kelner 2008; Reference BaldwinBaldwin 2017, Reference Baldwin2018; Reference Enslin and HedgeEnslin and Hedge 2018; Reference Fyfe, Squazzoni, Torny and DondioFyfe et al. 2019; Reference HamesHames 2007, 2; Reference Moore, Neylon, Eve, O’Donnell and PattinsonMoore et al. 2017; Reference Mulligan, Hall and RaphaelMulligan, Hall, and Raphael 2013, 132; Reference ShatzShatz 2004, 1). Indeed, peer review is much younger than many suspect. In 1936, for instance, Albert Einstein was outraged to learn that his unpublished submission to Physical Review had been sent out for review (Reference BaldwinBaldwin 2018, 542). Yet, despite its relative youth, peer review has nonetheless become a fixture of academic publication. This raises the question, though, of why this might be the case. For surprisingly little evidence exists to support the claim that peer review is the best way to pre-audit work, leading Michelle Lamont and others to note the importance of ensuring that ‘peer review processes … [are] themselves subject to further evaluation’ (Reference LamontLamont 2009, 247; see also Reference LaFolletteLaFollette 1992). Indeed, there are long-standing criticisms of the validity of peer review, exemplified in Franz J. Ingelfinger’s notorious statement that the process is ‘only moderately better than chance’ (Reference IngelfingerIngelfinger 1974, 686; see also Reference DanielDaniel 1993, 4; Reference Rothwell and MartynRothwell and Martyn 2000) and Drummond Rennie’s (then deputy editor of the Journal of the American Medical Association) ‘if peer review was a drug it would never be allowed onto the market’ (cited in Richard Reference SmithSmith 2006a, Reference Smith2010). However, the status function declaration, as John Reference SearleSearle (2010) puts it, of peer review is to institute a set of institutional practices that allow for the selection of quality by a group of empowered, qualified experts (see also Reference RacharRachar 2016). Peer review as conducted within universities resonates, in many senses, as a type of ‘total institution’ as defined by Christie Davies and Erving Goffman: a ‘distinctive set of organizations that are both part of and separate from modern societies’ (Reference DaviesDavies 1989, 77) and a ‘social hybrid’ that is ‘part residential community, part formal organization’ (Reference GoffmanGoffman 1968, 22).

Yet research into peer review processes can be difficult to conduct. At least one of the challenges with such studies is that there is always the risk of seeking explanations for the accepted constructions and logics of peer review, rather than recognising the contingency of their emergence. This has not, however, prevented a burgeoning field from emerging around the topic (Reference Batagelj, Ferligoj and SquazzoniBatagelj, Ferligoj, and Squazzoni 2017; Reference Tennant and Ross-Hellauer.Tennant and Ross-Hellauer 2019). Certainly, following the influential work of John Reference SwalesSwales (1990), an ever-increasing number of studies have examined the language and mood of published academic articles, grant proposals, and editorials (Reference Aktas and CortesAktas and Cortes 2008; Reference Connor and MauranenConnor and Mauranen 1999; Reference GiannoniGiannoni 2008; Reference HarwoodHarwood 2005a, Reference Harwood2005b; Reference Shehzad and FitzpatrickShehzad 2015; these examples are drawn from Reference Lillis and CurryLillis and Curry 2015, 128). This is not surprising; after all, as van den Besselaar, Sandström, and Schiffbaenker note, ‘[l]anguage embodies normative views about who/where we communicate about, and stereotypes about others are embedded and reproduced in language’ (Reference van den Besselaar, Sandström and Schiffbaenker2018, 314; see also Reference Beukeboom and BurgersBeukeboom and Burgers 2017; Reference Burgers and BeukeboomBurgers and Beukeboom 2016). Indeed, a number of existing studies have examined the linguistic properties of peer review reports written by the authors themselves (Reference ConiamConiam 2012; Reference WoodsWoods 2006, 140–6; for more, see Reference PaltridgePaltridge 2017, 49–50).

Yet, as examples of some of the difficulties that hinder the study of these documents, consider that peer-review reports are often owned and guarded by organisations that wish to protect not only the anonymity of reviewers but also the systems of review that bring them an operational advantage – anonymous review comes with several benefits for organisations that are in commercial competition with one another. In particular, and as just one instance, since reviewers often work for multiple publication outlets (that is, the same reviewers can review for more than one journal), the claim of one publisher to have a more rigorous standard of review or better quality of reviewer than other outlets could be damaged were review processes open, non-anonymous, and subject to transparent verification. (It is also clear that top presses can publish bad and incorrect work and that excellent work can appear in less prestigious venues (Reference ShatzShatz 2004, 130).) Further, these organisations often do not have conditions in place that will allow research to be conducted upon peer-review reports. The earliest studies of peer review, therefore, generally used survey methodologies rather than directly interrogating the results of the process (Reference ChaseChase 1970; Reference Lindsey and LindseyLindsey and Lindsey 1978; Reference Lodahl and GordonLodahl and Gordon 1972). As an occluded genre of writing that nonetheless underpins scientific publication, relatively little is known about the ways in which academics write and behave, at scale, in their reviewing practices.

As another example, an absence of rules and guidelines around ownership of peer-review comments certainly contributes to the challenges. The lack of financial incentives in many cases and, therefore, contracts and agreements with reviewers have meant that no clear ownership of reviews has been established (for more on the economics and financials of scholarly communications in the digital era, see Reference GansGans 2017; Reference HoughtonHoughton 2011; Reference Kahin and VarianKahin and Varian 2000; Reference McGuigan and RussellMcGuigan and Russell 2008; Reference MorrisonMorrison 2013; Reference WillinskyWillinsky 2009). In the absence of such statements, it can be assumed that copyright remains with the author of the reports in most jurisdictions. Publishers often do not wish to exert any dominance in this area for fear of dissuading future referees, who can be hard enough to persuade at the best of times. Reviews, therefore, exist in a world of grace and favour rather than one with any clear legal framework. Publishers also benefit from opacity in this domain in other ways. For example, by keeping poor-quality reviews hidden from sight, journals are able to build their reputations on other, less direct, criteria such as citation indices and editorial board celebrity (for more on the occluded nature of peer review, see Reference GosdenGosden 2003). A journal’s reviewer database can also provide competitive advantage and bring value to a publishing stable beyond the status of the title itself.

That said, in spite of these difficulties, a substantial number of studies have examined peer review (for just a selection, consider Reference Bonjean and HullumBonjean and Hullum 1978; Reference Mustaine and TewksburyMustaine and Tewksbury 2008; Reference Smigel and RossSmigel and Ross 1970; Reference Tewksbury and MustaineTewksbury and Mustaine 2012), and it would be a mistake to call the field under-researched, although the methods used are diverse and disaggregated (Reference Grimaldo, Marušić and SquazzoniGrimaldo, Marušić, and Squazzoni 2018; Reference Meruane, González Vergara and Pina-StrangerMeruane, González Vergara, and Pina-Stranger 2016, 181). Indeed, Reference Meruane, González Vergara and Pina-StrangerMeruane, González Vergara, and Pina-Stranger (2016, 183) provide a good history of the disciplinary specialisations of the study of peer-review processes (PRP) since the 1960s noting that while ‘PRP has been a prominent object of study, empirical research on PRP has not been addressed in a comprehensive way.’ The precise volume of research varies by the way that one searches, but there appears to be up to 23,000 articles on the topic between 1969 and 2013 by one count – and this does not even include the so-called grey literature of blogs (Reference Meruane, González Vergara and Pina-StrangerMeruane, González Vergara, and Pina-Stranger 2016, 181). It is, then, well beyond the scope of this book to provide a comprehensive meta-review of the secondary literature on peer review. The interested reader, though, could consult one of the many other studies that have conducted such an exercise (Reference BornmannBornmann 2011b; Reference Meruane, González Vergara and Pina-StrangerMeruane, González Vergara, and Pina-Stranger 2016; Reference Silbiger and StublerSilbiger and Stubler 2019; Reference WellerWeller 2001). Much, although by no means all, of this research has been critical of peer-review processes, finding our faith in the practice to be misplaced (Reference SquazzoniSquazzoni 2010, 19; Reference Sugimoto and CroninSugimoto and Cronin 2013, 851–2; for more positive opinions on the process, see Reference Bornmann, Shin, Toutkoushian and TeichlerBornmann 2011a; Reference GoodmanGoodman 1994; Reference Pierie, Walvoort and OverbekePierie, Walvoort, and Overbeke 1996). Critics of peer review usually point to its poor reliability and lack of predictive validity (Reference Fang, Bowen and CasadevallFang, Bowen, and Casadevall 2016; Reference Fang and CasadevallFang and Casadevall 2011; Reference HerronHerron 2012; Reference Kravitz, Franks, Feldman, Gerrity, Byrne and TierneyKravitz et al. 2010; Reference MahoneyMahoney 1977; Reference Schroter, Black, Evans, Carpenter, Godlee and SmithSchroter et al. 2004; Richard Reference SmithSmith 2006b); biases and subversion within the process (Reference BardyBardy 1998; Reference Budden, Tregenza, Aarssen, Koricheva, Leimu and LortieBudden et al. 2008; Reference Ceci and PetersCeci and Peters 1982; Reference ChawlaChawla 2019; Reference Chubin and HackettChubin and Hackett 1990; Reference CroninCronin 2009; Reference Dall’AglioDall’Aglio 2006; K. Reference Dickersin, Chan, Chalmers, Sacks and SmithDickersin et al. 1987; Reference Dickersin, Min and MeinertKay Dickersin, Min, and Meinert 1992; Reference Ernst and KienbacherErnst and Kienbacher 1991; Reference FanelliFanelli 2010, Reference Fanelli2011; Reference Gillespie, Chubin and KurzonGillespie, Chubin, and Kurzon 1985; Reference IoannidisIoannidis 1998; Reference LinkLink 1998; Reference LloydLloyd 1990; Reference MahoneyMahoney 1977; Reference Ross, Gross, Desai, Hong, Grant, Daniels, Hachinski, Gibbons, Gardner and KrumholzRoss et al. 2006; Reference ShatzShatz 2004, 35–73; Reference Travis and CollinsTravis and Collins 1991; Reference TregenzaTregenza 2002); the inefficiency of the system (Reference Ross-HellauerRoss-Hellauer 2017, 4–5); and the personally damaging nature of the process (Reference BornmannBornmann 2011b, 204; Reference Chubin and HackettChubin and Hackett 1990). For instance, and as just a sample, when Reference Rothwell and MartynRothwell and Martyn (2000, 1964) studied the reproducibility of peer-review reports, they repeated Ingelfinger’s assertion that ‘although recommendations made by reviewers have considerable influence on the fate of both papers submitted to journals and abstracts submitted to conferences, agreement between reviewers in clinical neuroscience was little greater than would be expected by chance alone.’ As another example, more recent work by Reference Okike, Hug, Kocher and LeopoldOkike et al. (2016) unveiled a strong unconscious bias among reviewers in favour of known or famous authors and institutions in the discipline of orthopaedics when using a single-blind mode of review. This casts serious doubt on claims to be able to ‘put aside’ one’s knowledge of an author or to act objectively in the face of conflicts of interest, although in other disciplinary spaces, it has been argued that the definition of merit, as defined in a discipline, is constructed by particular figures and that this identity should play a part in the evaluation of their work (Reference FishFish 1988). Additionally, Reference Murray, Siler, Lariviére, Chan, Collings, Raymond and SugimotoMurray et al. (2018, 25) explore the relationship between gender and international diversity and equity in peer review, concluding that ‘[i]ncreasing gender and international representation among scientific gatekeepers may improve fairness and equity in peer review outcomes, and accelerate scientific progress.’

Special mention should also be made of the PEERE Consortium, which has, in particular, achieved a great deal in opening up datasets for study (PEERE Consortium n.d.). Aiming, with substantial European research funding, to ‘improve [the] efficiency, transparency and accountability of peer review through a trans-disciplinary, cross-sectorial collaboration’, the consortium has been one of the most prolific centres for research into peer review in the past half decade. Publications from the group have spanned the author perspective on peer review (Reference Drvenica, Bravo, Vejmelka, Dekanski and OlgicaDrvenica et al. 2019), the reward systems of peer review (Reference Zaharie and SeeberZaharie and Seeber 2018), the links between reputation and peer review (Reference Grimaldo, Paolucci and Sabater-Mir.Grimaldo, Paolucci, and Sabater-Mir 2018), the role that artificial intelligence might play in future structures of review (Reference 97Mrowinski, Fronczak, Fronczak, Ausloos and NedicMrowinski et al. 2017), the timescales involved in review (Reference Huisman and SmitsHuisman and Smits 2017; Reference Mrowinski, Fronczak, Fronczak, Nedic and AusloosMrowinski et al. 2016), the reasons why people cite retracted papers (Reference Bar-Ilan and HaleviBar-Ilan and Halevi 2017), the fate of rejected manuscripts (Reference Casnici, Grimaldo, Gilbert and SquazzoniCasnici, Grimaldo, Gilbert, Dondio et al. 2017), and the ways in which referees act in multidisciplinary contexts (Reference Casnici, Grimaldo, Gilbert and SquazzoniCasnici, Grimaldo, Gilbert, and Squazzoni 2017).

In terms of the language used in peer review reports, work by Brian Reference PaltridgePaltridge (2015) has examined the ways in which reviewers request revisions of authors, using a mixed-methods approach. Paltridge studied review reports for the peer-reviewed journal English for Specific Purposes finding a mixture of implicit and explicit directions for revision used by reviewers, making for a confusing environment in which ‘what might seem like a suggestion is not at all a suggestion’ but ‘rather, a direction’ (Reference PaltridgePatridge 2015, 14), a view echoed by Reference GosdenGosden (2001, 16). Somewhat in contrast to this, though, Reference KourilovaKourilova (1998) found that non-native users of English often wrote with an honest, or brutal, bluntness in their reports for a range of sociocultural reasons. While this may come with its own challenges and be painful for authors, such bluntness is far less subject to misinterpretation than hedged attempts at avoiding offence. Comments with such a negative tone can also appear in published book reviews (Reference Salager-Meyer and AndrásSalager-Meyer 2001), but these are often more complimentary than behind-the-scenes peer-review reports (Reference HylandHyland 2004, 41–62). The ‘out of sight’ or occluded nature of peer review (Reference Swales and FeakSwales and Feak 2000, 229) tends, then, to lend itself to more critical judgements.

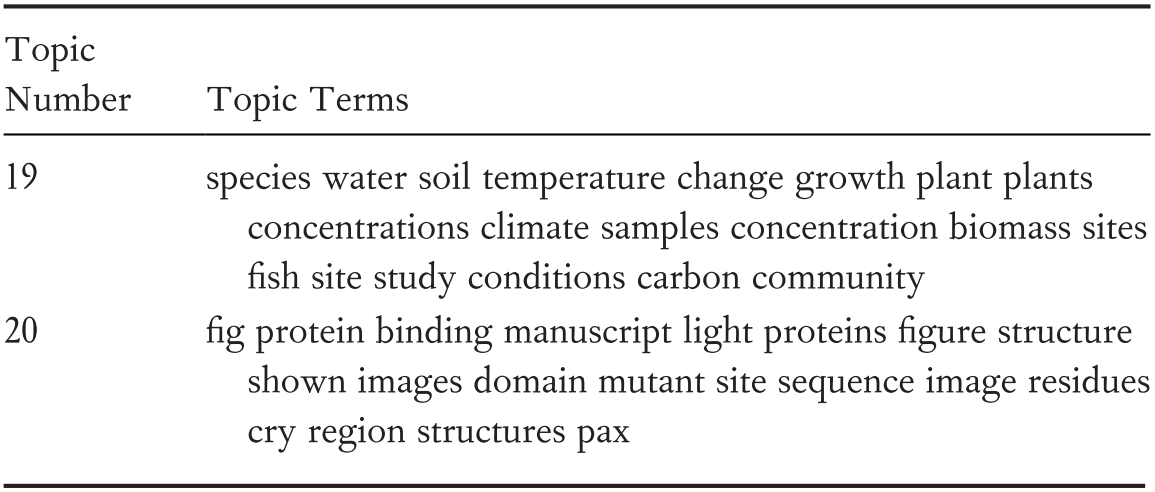

New Modalities of Peer Review

For some commentators, peer review is the least bad option, and any intervention is only likely to result in poorer outcomes. Others, though, have proposed a range of new methods and operational modes that are designed to address the perceived shortcomings in existing review protocols. Certainly, for the most part, this has taken place within the natural sciences and, as David Shatz remarks, ‘the paucity of humanities literature on peer review … [is] truly striking’, although he is unable to explain why this should be (Reference Shatz2004, 4). In this section of this chapter, we outline these new experiments, mostly within this disciplinary space.

Post-Publication Review

The twenty-first century is characterised by what Clay Shirky has famously called ‘filter failure’ (Reference Shirky2008). In the face of an ever-increasing abundance of material – be that scholarly, information, or news – it is apparent that we face severe difficulties in knowing where to spend our scarce attention (Reference BhaskarBhaskar 2016; Reference Eve and TabbiEve 2017; Reference NielsenNielsen 2011). Various solutions have been posed for how this might be remedied, most of which centre on what Michael Bhaskar calls a culture of ‘curation’, in which whether by algorithm or by human selection, the ‘wheat is sorted from the chaff’ (Reference BhaskarBhaskar 2016). What it might mean to do so appropriately is, of course, a matter of some contention. Algorithms that surface only mainstream content when we are looking for outliers represent just another problematic case of filter failure, as opposed to any viable solution.

The same problems apply to the scholarly and research literature (Reference Eve, Eve and GrayEve 2020). We exist in a world where more is published than it is possible for a person to read, even in almost every niche sub-discipline. Some solutions have taken the algorithmically curated route. Hence, there have been, on the reader side, several attempts to provide automatic summaries of articles, condensing these otherwise large artefacts down into bite-size, digestible chunks (‘Get The Research’ n.d.; Reference McKenzieMcKenzie 2019; Reference Nikolov, Pfeiffer and HahnloserNikolov, Pfeiffer, and Hahnloser 2018). Yet, we actually exist within a model of digital authorship that R. Lyle Reference SkainsSkains (2019) has dubbed ‘the attention economy’. It is a model where we only discover material that can float above the otherwise amorphous mass of scholarship. Simply shortening the material itself can help, but it does not resolve the discoverability deficit (Reference NeylonNeylon 2010).

This is problematic for scholarly research writing in a way that it is not for, say, works of fiction. While nobody enjoys a badly researched novel, the degree of comprehensiveness required remains considerably less for fiction than for science and scholarship. Writers of historical fiction, for example, conduct research to provide themselves with historically plausible details – appropriate language, modes of transportation, manners of dress, and so on. They seek evidence and descriptions, rather than resolving long-standing debates.

Scientists and scholars, on the other hand, are held to a much higher, though often more narrow, standard. While they are not responsible, as the novelist is, for the construction of an entire world, they are responsible in most cases for the entire range of professional opinion on their topic: what other researchers have found, where they have disagreed, and where the gaps are among them. To conduct new, rigorous research (or even a well-designed replication study), researchers need to have read everything that is pertinent to their topic. Domain mastery is essential and many see the mitigation of this problem as the role of peer review. If there is too much to read and too little time, perhaps too much is being published, the reasoning goes. By subdividing fields into topical journals – even if these do, then, mutate into ‘club goods’, rather than mere subject-based discoverability aids (Reference Hartley, Potts, Montgomery, Rennie and NeylonHartley et al. 2019; Reference Potts, Hartley, Montgomery, Neylon and RenniePotts et al. 2017) – and then by limiting the volume that is published, the idea is that researchers have an easy way to keep abreast of their field: simply read that journal.

This model has become untenable and problematic for many reasons. The first is that most high-status journals, which act as poor proxies for quality in institutional hiring and promotion metrics, publish across a range of fields. In addition to monitoring The Journal of Incredibly Niche Studies, authors must also regularly check Nature, Cell, Science, PMLA, New Literary History, the journals of the Royal Society and so on, depending upon their field, all of which publish across sub-disciplinary spaces. In this context, most of the content in these publications will not be relevant to a reader who aims to work on a specific project. But they also cannot be dismissed and must be checked. Hence, journal brand does not work well as a filter for subject, even if one believes that it can work as a filter for quality (that is, one might assume – although this assumption is not, in itself, non-problematic – that work published in Nature is good but it may not be relevant) (see Reference Moore, Neylon, Eve, O’Donnell and PattinsonMoore et al. 2017).

In light of this discussion, though, we can also query whether the work that is published is necessarily good. This is the second problem: given the critiques and failures of peer review to act as a reliably predictive arbiter of quality; if the review system is used as an exclusionary filter, we know that some good work will not be published and that some bad work will nonetheless appear. For although the logic of the system seems sound – the more important and rigorous a work appears to be, when appraised by experts, the more prominence it should be given through publication in a ‘top’ venue – it is not clear that expert preselection is able accurately to determine, in advance, whether work is important or even correct (Reference Brembs, Button and MarcusBrembs, Button, and Munafò 2013). In this sense, then, peer review cannot act universally well as a filter for quality, either, admitting both Type I (false positive) and Type II (false negative) errors.

What, though, if there were another way of surfacing relevant material that was also good? Given that we only seem to know whether material was accurate and true after the fact, some publications have speculated that post-publication peer review might be a sound way of ensuring that correct material is not excluded, while also seeking expert judgement on the accuracy of work. The basic premise of such systems works as follows:

1. The work is made available online, before a review is conducted;

2. Expert reviews are either solicited or volunteered on the publicly available manuscript;

3. These reviews are either made public or used as evidence to remove the piece from the public record if they are unanimously negative.

One of the challenges with point three is that retractions are less effective than work not entering the public space at all, as we have also seen in the case of the traditionally vetted, but problematic, work by Wakefield. Further, for technical and preservation reasons, retracted articles are not removed but merely marked as retracted. Post review may, if anything, make the problem of fraudulent or incorrect work entering the public record – a major reason for establishing a vetting system in the first place – worse and even more difficult to correct.

Proposals for post-publication peer review arise as a recognition of the fact that we are not very good at determining, ahead of publication, what is important and good. However, it comes with its own challenges. When a publisher seeks a traditional pre-publication review, they could argue that they are conducting a form of due diligence, in which they have done the best they could to ensure the accuracy of material. This could be important in specific biomedical sciences and the applied medical literature, even if we know that peer review has flaws. In a model that uses post-publication peer review, it is not clear that the same could be said. If material is published that then causes harm, it is not clear that a publisher could argue that they had done everything they could to prevent this, even when they do not endorse the material. Further, it is unclear who would take the legal blame here. While authors usually must sign a waiver for negligence due to inaccuracy, publishers are the ones displaying the material and claiming that they add value to the truth of the work. In addition, in many jurisdictions one cannot disclaim negligence for death or injury. Further, if one believes that peer review does do at least some good in filtering out bad material, then it is clear that this model of peer review will be one in which readers would ‘have to trek through enormous amounts of junk’ to find true material and one in which inundation frustrates truth (Reference ShatzShatz 2004, 16, 25). This is also a type of ‘post-truth’ system in which, it is claimed, all competing views are equally legitimated: ‘flat earth theories now can be said to enjoy “scholarly support”’ (Reference ShatzShatz 2004, 16; see also Reference Holmwood, Eve and GrayHolmwood 2020).

PLOS ONE, the journal that is the subject of the study to which most of this book is devoted, uses a system of post-publication review with an initial review on grounds of ‘technical soundness’. In other words, there is a filtering system of pre-review at PLOS ONE, but it is not predicated on novelty, importance, or significance (although reviewers are free to alert editors to the potential significance of work if it is proved to be correct). Rather it is supposed to examine purely whether the experiment was established in a sound fashion and executed according to that plan, whether it is technically sound. Whether or not reviewers actually assess according to these criteria is something to which we will later turn. Whether or not post-publication peer review even actually happens with any regularity is another problematic matter (Reference PoynderPoynder 2011, 17).

Most systems of post-publication peer review, such as that piloted at F1000, present a ‘tick box’ mechanism, where readers can easily see the review status on an article. This usually takes the form of marking the work as ‘approved’ (‘The paper is scientifically sound in its current form and only minor, if any, improvements are suggested’), ‘approved with reservations’ (‘Key revisions are required to address specific details and make the paper fully scientifically sound’), or ‘not approved’ (‘Fundamental flaws in the paper seriously undermine the findings and conclusions’). In this way, F1000 gives clear signals to its readership on the opinions of reviewers towards the material, while not preselecting in advance. This allows for an evaluation of both the article and the review process, especially since the reviewer names and comments are publicly available, the subject of the next section.

Open Peer Review

There is little to no standardised consensus on what is meant by the term ‘open peer review’, even within the self-identifying ‘open science’ community (Reference FordFord 2013; Reference HamesHames 2014; Reference Ross-HellauerRoss-Hellauer 2017; Reference WareWare 2011). As Tony Ross-Hellauer notes:

While the term is used by some to refer to peer review where the identities of both author and reviewer are disclosed to each other, for others it signifies systems where reviewer reports are published alongside articles. For others it signifies both of these conditions, and for yet others it describes systems where not only ‘invited experts’ are able to comment. For still others, it includes a variety of combinations of these and other novel methods.

Indeed, for Ross-Hellauer, seven types of open peer review are identified in the secondary scholarly literature on the subject:

Open identities (‘Authors and reviewers are aware of each other’s identity.’)

Open reports (‘Review reports are published alongside the relevant article.’)

Open participation (‘The wider community are [sic] able to contribute to the review process.’)

Open interaction (‘Direct reciprocal discussion between author(s) and reviewers, and/or between reviewers, is allowed and encouraged.’)

Open pre-review manuscripts (‘Manuscripts are made immediately available (e.g., via pre-print servers like arXiv) in advance of any formal peer review procedures.’)

Open final-version commenting (‘Review or commenting on final “version of record” publications.’)

Open platforms (‘Review is facilitated by a different organizational entity than the venue of publication.’) (Reference Ross-HellauerRoss-Hellauer 2017, 7; for an alternative taxonomy, see Reference Butchard, Rowberry, Squires and TaskerButchard et al. 2017, 28–32)

While ‘open pre-review manuscripts’ were covered in the earlier section on the temporality of review, any of these other areas are up for grabs under the heading ‘open review’. As such, we will treat each here in turn.

We have already given some attention to issues of anonymity in review, but one of the definitions of open peer review seeks to make public the names of reviewers, either to authors or to the readership at large. It is worth adding to the previous discussion, though, that a series of studies in the 1990s showed, counter-intuitively, that revealing the identity of reviewers makes no difference to the detection and reporting of errors in the review process (Reference Fisher, Friedman and StraussFisher, Friedman, and Strauss 1994; Reference Godlee, Gale and MartynGodlee, Gale, and Martyn 1998; Reference Justice, Cho, Winker, Berlin and RennieJustice et al. 1998; Reference McNutt, Evans, Fletcher and FletcherMcNutt et al. 1990; Reference van Rooyen, Godlee, Evans, Black and Smithvan Rooyen et al. 1999). Nonetheless, in the early 2020s many venues were experimenting with forms of open or signed reviews, most notably, again, F1000, which also provides platforms for major funders such as the Wellcome Trust. Such venues also extend into the ‘open reports’ category, as it is possible to read the reviews provided by each named reviewer. This comes with a type of reputational benefit to reviewers, surfacing an otherwise hidden form of labour for which credit is not usually forthcoming.

Models of ‘open participation’ in which anyone may review a manuscript are more contentious, for they might stretch the bounds of the term ‘peer’ to the breaking point (for more on which, see Reference FullerFuller 1999, 293–301; Reference TsangTsang 2013), although some systems such as Science Open require that a prospective referee show they are an author on at least five published articles (as demonstrated through their ORCID profile) to qualify (Reference Ross-HellauerRoss-Hellauer 2017, 10). It is also the case that such open participation efforts can see low levels of uptake when reviewers are not explicitly invited (Reference Bourke-WaiteBourke-Waite 2015). That said, there have been extensive calls for greater interactivity that would replace the judgemental and potentially exclusionary characteristics of peer review with dialogue between researchers but also with a wider community (Reference KennisonKennison 2016). Furthermore, the development of external review framework sites such as Pubpeer and Retraction Watch have fulfilled a genuine community need for scrutiny of work, after it has been published. These are community-run sites, often with anonymous commenting, and so suffer, technically, from the same drawbacks as other open participation platforms. However, several high-profile instances of misconduct have been identified in recent years through such platforms, demonstrating substantial value even as these mechanisms sit outside the mainstream practices of peer review.

Finally, sites such as Publons, RUBRIQ, Axios Review, and Peerage of Science offer or have offered independent services whereby reviews can be ‘transferred’ between journals. In theory, this makes sense; as the same reviewers often work for different publications; behind the scenes, there is a mass duplication of labour in the event of resubmission to a new venue. It is even possible that the same reviewers may be asked to appraise work at a different journal if work is resubmitted elsewhere. In practice, though, this portability poses substantial challenges for journals. For example, one might ask, what is the point of a journal if peer review is decoupled from the venue? If the work has been reviewed, why could it not appear in an informal venue, such as a blog or personal website, and merely point back to the peer-review reports? This would be particularly potent if the names of the reviewers were also available. There are also questions of versioning. Ensuring that it is clear to which version each peer review points is critical in such situations.

New Media Formats

Finally, while it might seem, at first glance, that the media form of the object under review is irrelevant to a review process, this is not the case. Despite debates over the fixity of print (Reference EisensteinEisenstein 2009; Reference HudsonHudson 2002; Reference JohnsJohns 1998), the fundamentally unfinished and even incompletable nature of interactive, collaborative, and born-digital scholarship poses new challenges for peer review (Reference RisamRisam 2014). For if work continues to go through iteration after iteration, as just one example, how are review processes to be managed, at a point when peer review already appears to be buckling beneath the burden of unsustainable time demands (Reference Bourke-WaiteBourke-Waite 2015)? Further, one might also consider how new types of output – software, data, non-linear artefacts with user-specific navigational pathways (interactive visualisations, for instance), practice-based work – would fare under conventional review mechanisms (Reference HannHann 2019).

Conclusions

Since its rise to prominence in the late twentieth century, peer review has occupied a leading place in the cultural scientific imaginary. It has been enshrined as the gold standard for the arbitration of quality and is often falsely thought to be a timeless element of scientific intersubjectivity. Yet, despite its seventeenth-century or eighteenth-century origins (Reference Butchard, Rowberry, Squires and TaskerButchard et al. 2017, 7; Reference FitzpatrickFitzpatrick 2011, 21) and its evolution to become ‘sanctified through long use’ (Reference 78BaverstockBaverstock 2016, 65), the wide-scale adoption of peer review is nowhere near so old as many people imagine and, as this chapter has shown, there is also much disquiet about its efficacy, accuracy, and negative consequences. This has led to a range of new, experimental practices that attempt to modify the peer-review workflow, changing the temporalities (pre- vs post-), visibilities (open identities vs anonymity), and positional relationalities (at-venue vs portability) of these practices. Given the speed of ascent with which peer review has moved to claim its crown, though, it would not be surprising if another modality of practice could rise as swiftly to supplant it. Surely, though, any new method would also be subject to fresh critique. For none of the mentioned methods is perfect and peer review is likely always to have its discontents.

This leads to the obvious question: in relation to process and procedure, does change actually happen? If changes to processes are mooted, does this lead to substantive change in practices or indeed broader cultural and intellectual change? What opportunities are there to test such questions?

In the remainder of this book, we turn to these questions through a focal lens on the Public Library of Science (PLOS). We present this organisation as a case study in attempted radicalism in peer-review practice and unearth the degree to which it has been successful in initiating institutional change in academia. In Chapter 2, we examine the ways in which PLOS sought to reshape review and the challenges that it faced. We give a background history of the organisation and its mission, which are crucial to understanding PLOS’s strategy for change. We also detail the sensitivities of and difficulties faced in studying a confidential peer-review report database and the protocols that we had to develop to conduct this work. We finally look at the overarching structure of the database, detailing how much reviewers write and the subjects on which they centre their analysis.

In Chapter 3 we turn to a set of specific questions around PLOS’s supposedly changed practice. In this chapter, we ask whether PLOS’s guidelines yield a new format of review; whether reviewers in PLOS ONE ignore novelty, as they are supposed to; whether reviews in PLOS ONE are more critically constructive than one might expect; how much of a role an author’s language plays in the evaluation of a manuscript; how much of a reviewer’s behaviour can be controlled or anticipated; and to what extent reviewers understand new paradigms of peer review. The last part of this chapter details our only partially successful efforts to use computational classification methods to ‘distant read’ the corpus. Finally, in Chapter 4 we turn to an appraisal of the extent to which PLOS has managed to change the standards for peer review in a broader fashion.

As a final note, the subtitle of this book makes reference to ‘institutional change in academia’ more widely. Isn’t it presumptuous to assume that PLOS and its niche crafting of new modalities of peer review can act as a synecdoche for all types of change within academia? Perhaps. Yet PLOS has been incredibly influential. It has grown to house the largest single journal on the planet, and it was founded from a position espousing radical change. We believe, therefore, that valuable lessons can be drawn from PLOS’s experience. We now move to set out the background against which these lessons can be taught.

2 The Radicalism of PLOS

A New Hope

We closed the introduction with a set of questions. These can be summarised as follows: given an agenda for new practice, what opportunities are there to observe and critique interventions intended to drive change?

The Public Library of Science (PLOS) can safely be characterised as an organisation that shared the concerns about peer review that we outlined earlier and that has sought to drive such change through a radical approach. Instigated in the year 2000 as a result of a petition by the Nobel Prize–winning scientist Harold Varmus, Patrick O. Brown of Stanford, and Michael Eisen of the University of California, Berkeley, PLOS – or ‘PLoS’ as it was initialled until 2012 (PLOS 2012) – was originally established with the support of a $9 million grant from the Gordon and Betty Moore Foundation. The vision here was one of a radically open publication platform in which PLoS Biology and PLoS Medicine, their two initial publications, would ‘allow PLoS to make all published works immediately available online, with no charges for access or restrictions on subsequent redistribution or use’ (Gordon and Betty Moore Foundation 2002).

Yet open access to published content, itself a fairly outlandish proposition at the time, was not enough for PLOS. Frustration was growing among the founders and board that peer review was returning essentially arbitrary results. As Catriona J. MacCallum put it, ‘[t]he basis for such decisions’ in peer review ‘is inevitably subjective. The higher-profile science journals are consequently often accused of “lottery reviewing,” a charge now aimed increasingly at the more specialist literature as well. Even after review, papers that are technically sound are often rejected on the basis of lack of novelty or advance’ (Reference MacCallum2006). PLOS wanted to do something different.

Specifically, in 2006 PLOS established PLOS ONE (again, originally PLoS ONE), a flagship journal that works differently from many other venues. The peer-review procedure at PLOS ONE is predicated on the idea of ‘technical soundness’ in which papers are judged according to whether or not their methods and procedures are thought to be solid, rather than on the basis of whether their contents are judged to be important (PLOS 2016b). Notably, though, even this definition is contentious: subjectivity is involved in the assessment of whether a methodology is rigorous and sound (Reference PoynderPoynder 2011, 25–26). For instance, to understand whether a methodology is suitable, one must not only know and understand its mechanics but also imagine potential problems and alternative experiments. Even while the aim of an experimental set-up is to eradicate subjectivity from observation, it takes imagination and subjectivity to create and then to appraise good experimental design. PLOS ONE was nonetheless designed to ‘initiate a radical departure from the stifling constraints of this existing system’. In this new model, it was claimed, ‘acceptance to publication [would] be a matter of days’ (Reference MacCallumMacCallum 2006).

At the time of its inception, this was a fairly radical idea (Reference GilesGiles 2007; The Editors of The New Atlantis 2006). Dubbed ‘peer-review lite’, the mechanism was nonetheless still far from the more innovative, pure post-publication approaches that we earlier described. Instead, it offered a lowered threshold for publication on the grounds that replication studies (‘not novel’), pedestrian basic science (‘not significant’, or otherwise framed as Kuhnian ‘normal science’), and even failed experiments (‘not successful’) should all have their place in the complete scientific record. Of course, one might ask how far PLOS would really be willing to take this. Would a well-designed replication study of the boiling point of water be published? It appears to fulfil the criteria of technical soundness while also replicating a well-known result. We doubt, however, that such an article would, in reality, make it through even peer-review lite.

As it was described at the time, the plan was for PLOS ONE to use ‘a two-stage assessment process starting when a paper is submitted but continuing long after it has been published’. A handling editor – of which PLOS had assembled a vast pool – could ‘make a decision to reject or accept a paper (with or without revision) on the basis of their own expertise, or in consultation with other editorial board members or following advice from reviewers in the field’ (Reference MacCallumMacCallum 2006). The fact that handling editors could make their own judgement internally was indeed particularly controversial (Reference PoynderPoynder 2011, 26). This review process was supposed to focus ‘on objective and technical concerns to determine whether the research has been sufficiently well conceived, well executed, and well described to justify inclusion in the scientific record’ (Reference MacCallumMacCallum 2006).

PLOS also asserted at the time that ‘peer review doesn’t, and shouldn’t, stop’ at publication (for more on this, see Reference Spezi, Wakeling, Pinfield, Fry, Creaser and WillettSpezi et al. 2018). Although disallowing anonymous commenting and having a code of conduct with civility criteria for reviewers, the idea for PLOS ONE was that ‘[o]nce a paper is in the public domain post-publication, open peer review will begin.’ This was explicitly driven by technological capacity. It was an area ‘where the increasing sophistication of web-based tools [could] begin to play a part’ and PLOS’s new partnership with the TOPAZ platform at the time drove the capacity for post-publication review (Reference MacCallumMacCallum 2006). In this sense, the radicalism of PLOS was pushed in part by technological capacity – a technogenetic source made ‘possible because some of the limitations and costs of traditional publishing begin to go away on the Internet’ (Reference PoynderPoynder 2006a). It was also fuelled, though, by the social concerns about peer review, its (lack of) objectivity, and its relative slowness.

Critics further pointed out, though, that the broad scope of PLOS ONE, coupled with its high acceptance rate, could be construed as a revenue model for the organisation (Reference PoynderPoynder 2011, 9). This was one of the main concerns of an economic situation that rested upon the acceptance of papers for a revenue stream: that it might introduce a perverse incentive to accept work. For those with greater faith in conventional peer review, the experiment in peer-review lite was a cynical economic means by which PLOS could cross-subsidise its selective titles, while propounding an ethical argument about the good of science.

In addition to its culture of radical experiment, PLOS ONE is, further, an interesting venue as, unlike many other journals, it has a long-standing clause that makes reviews available for research, provided that the research in question has obtained internal ethical approval (PLOS 2016a). And this is where we step in. PLOS provided us with a database consisting of 229,296 usable peer-review reports written between 2014 and 2016 from PLOS ONE. While the two-year period gap does contain potential for heterogeneity of policy to cause challenges for analysis, the relatively short time span should, we hope, provide some coherence between analysed units. There were other reports in this database, but the identifiers assigned to them made it impossible to group these reports by review round and so these data were discarded. We wanted to know the following: How have the radical propositions that led to the creation of PLOS ONE affected actual practices on the ground in the title? Do PLOS reviewers behave as one might expect given the radicalism on which PLOS ONE was premised? And what can we learn about organisational change and its drivers?

As we have already touched upon, papers in PLOS ONE span various disciplinary remits, albeit mainly in the natural sciences (where the majority of research into peer review has already found itself focused (Reference Butchard, Rowberry, Squires and TaskerButchard et al. 2017). ‘From the start’, wrote MacCallum, ‘PLoS ONE will be open to papers from all scientific disciplines.’ This was because, it was believed, ‘links’ of commonality ‘can be made between all the sciences’: an interesting argument that stops short of asserting that this might also apply to humanistic inquiry (Reference MacCallum2006). Varmus, indeed, conceived of PLOS ONE as ‘an encyclopedic, high volume publishing site’ across disciplines (Reference Varmus2009, 264). As an early staffer at PLOS ONE put it, the general view was that ‘science subjects developed in a pretty arbitrary way. The boundaries have effectively been imposed as a consequence of the way that journals work, and the way that universities are structured’, and PLOS ONE was designed, from the outset, to counter such disciplinary siloing (Reference PoynderPoynder 2006b). This multidisciplinarity yields opportunities but at the same time also creates evaluative challenges (Reference CollinsCollins 2010). Indeed, we must acknowledge early on that our study will be unable to draw discipline-specific conclusions, even while it may be able to speak generally across a relatively broad remit.

While it is unlikely, then, that a large-scale, mass review of peer-review practices across the academic funding and publishing sectors will emerge at any point in the near future, in the descriptive portions of this book, we report on our access to the PLOS ONE review corpus and thereby take the smaller step of interrogating a very large dataset that focuses only on the components of peer review concerned with technical soundness and that is ethically cleared for this type of research project. Specifically, in this chapter we describe the data-handling protocol, data-handling risks, and high-level composition of the database, our own aspects of ‘technical soundness’. We then move to outline the content types of the reports according to a coding taxonomy that we developed for this exercise and that was used to attempt to train a neural-network text classifier. Finally, we report on the stylometric properties of the reports and the correlations of different language use with recommended outcome.

The Peer Review Database and Its Sensitivities

Securing this database of peer-review reports meant accepting restrictions on its future use and on the extent to which the underlying dataset would need to remain private – a delicate matter to negotiate when one is working in a field of ‘open science’. Reviewers have an expectation that their reviews will be used in confidence within the review process and that they can remain anonymous. However, referees agree as part of the PLOS review process that their contributions may be used for research purposes:

In our efforts to improve the peer review system and scientific communication, we have an ongoing research program on the processes we use in the course of manuscript handling at the PLOS journals. If you are a reviewer, author or editor at PLOS, and you wish to opt out of this research, please contact the relevant journal office. Participation does not affect the editorial consideration of submitted manuscripts, nor PLOS’ policies relating to confidentiality of authors, reviewers or manuscripts.

Individual research projects will be subject to appropriate ethical consideration and approval and if necessary individuals will be contacted for specific consent to participate.

In order to minimise the risks of identification of referees from any analysis conducted (and associated reputational risks to PLOS, authors, and reviewers), our protocol required removal of all identifying data prior to release from PLOS to the project team. The project team never held email addresses or names of referees and all records that relate to submissions or publications in the relevant PLOS journals from any member of the project team were removed from the corpus by PLOS prior to the transfer of the dataset to the team. Where a project member was an academic or professional editor, rather than a reviewer, we did not consider that this posed a risk of reidentification of confidential information. We note that Cameron Neylon was an academic editor for PLOS ONE from 2009–12 and a PLOS staff member from 2012–15 but played no editorial role over the latter time period. Sam Moore worked at PLOS from 2009–12 and on PLOS ONE specifically from 2011–12.

The dataset was treated as sensitive and analysed only on project computers running the latest distribution of Ubuntu Linux using hard disks encrypted with the crypto-luks system and a minimum key-length of thirty characters. Backups were stored on two non-co-located computers with encrypted hard drives and data were never transferred via email, cloud storage, or other insecure online transmission mechanisms, instead using the secure copy facility (scp) using RSA key-based authentication. Data were only accessed by the researchers charged directly with actual analysis and with Martin Paul Eve or Daniel Paul O’Donnell as their direct supervisor. Other members of the project team had access to summaries that included snippets of representative text. The original dataset was destroyed at the end of the project and unfortunately cannot be shared. To obtain the original dataset, third-party researchers would need to contact PLOS directly. As a consequence, useful data sharing on this project is extremely limited in scope and relates only to compositional matters without any original citation. This, of course, causes substantial challenges for those who wish to replicate and/or verify our findings, and we regret that we are unable to be of much help here. Further, citation of the reports in this article are restricted to generic non-identifying statements and to versions of statements that are either redacted or have had their subject matter replaced or made synonymous. In this way, though, we are able to comment on the ways in which reviewers write and behave, without compromising anonymity and without linking any report to a final published or rejected article.

How Much Do Reviewers Write?

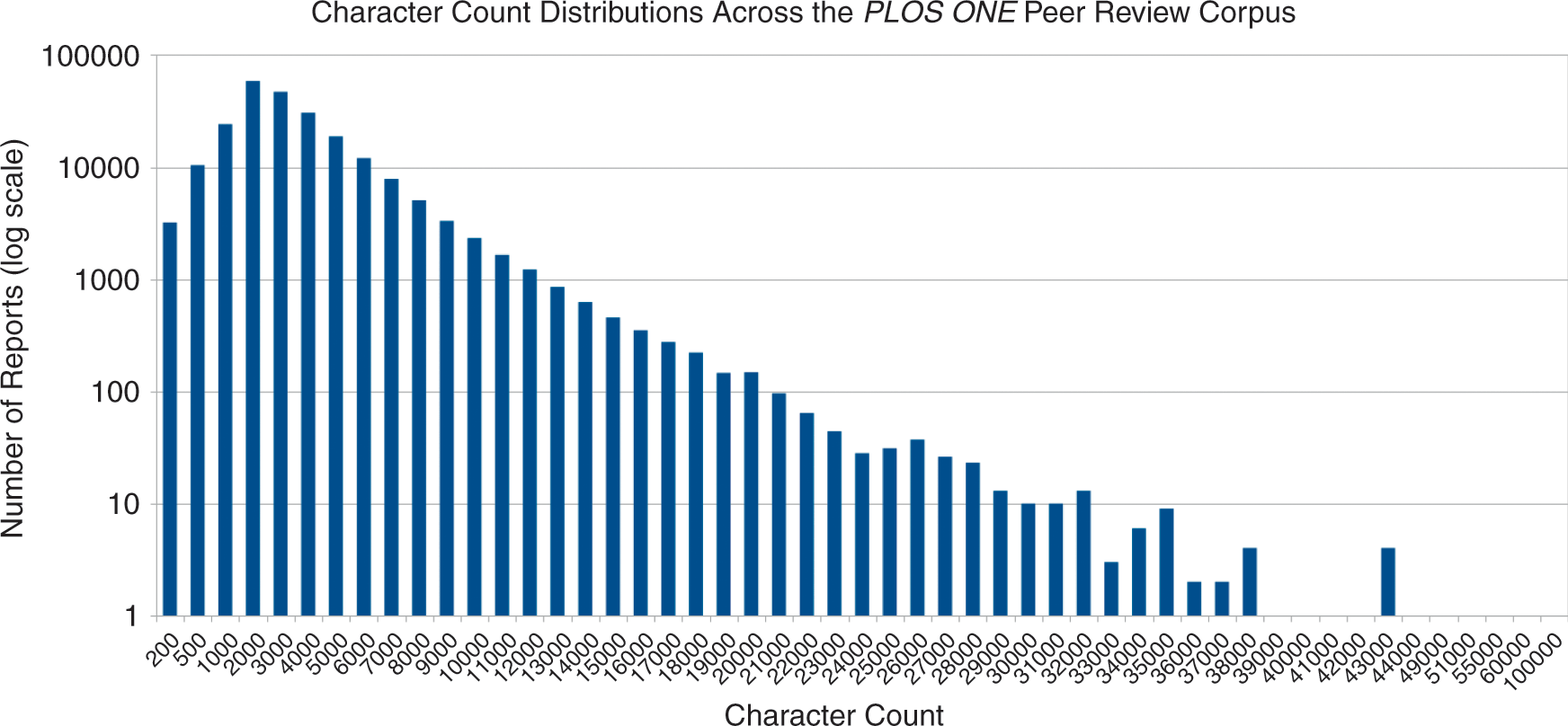

An initial fundamental quantitative question that can be asked of the database is the following: How much do peer reviewers write per review? This is an important question because it bears on the extent to which peer review is a gatekeeping mechanism as against a mechanism for constructive feedback. It takes very few words to say ‘accept’ or ‘reject’, but many more to help an author improve a paper. With PLOS’s early radicalism and belief that peer review could be a positive force in the new context, it was hard to know what to expect in terms of volume. Figure 1 plots the character counts of reviews in the database and shows a strongly positive skewed distribution peaking around the 2,000 to 4,000 character bands. This is approximately 500 words per report as the most common length (depending on the tokenisation method that is used to delimit words).

Character count frequency distributions across the PLOS ONE peer-review database

There are some significant outliers within this distribution (clear from the very long tail of Figure 1). The mean character count for reports (with an attempt to exclude conflict of interest statements) is 3,081. The median character count is 2,350. The longest report in the corpus, however, is 90,155 characters long, or just under 14,000 words; in all likelihood, this is longer than the article on which it comments (a study showed that for medical journals the mean length of article is 7.88 pages, or roughly 2,000–4,000 words at 250–500 words per page (Reference Falagas, Zarkali, Karageorgopoulos, Bardakas and MavrosFalagas et al. 2013)). The second-longest review is 59,946 characters, or approximately 5,000 words. Indeed, at the upper end of the distribution among those reviewers whose reports are voluminous, there appears to be roughly an inverse proportionality to rank in the frequency table. (That is, the first report is twice the length of the second, etc.)

However, some anomalies are masked by the apparent simplicity of this visualisation. For instance, one might assume that second-round reports, where a reviewer appraises whether or not a set of revisions has been accepted, would fall at the shorter end of the spectrum with a stronger positive skew. In our manual-tagging exercise, however, we often found substantive comments in subsequent rounds: indeed, reviewers frequently apologised for introducing additional criticisms in second- and third-round reviews that were not picked up in the original. In the overall amalgamation, we pulled together all sets of comments by the same reviewers on the same manuscript into single documents. Hence, Figure 1 gives a misleading picture of the corpus shape.

A more accurate way of classifying this is perhaps only to look at the first round of review. This distribution of first-round-only reports can be seen in Figure 2.

Character count frequency distributions across the PLOS ONE peer-review database, first-round reviews only

Plotting the first-round reviews as opposed to the general corpus makes little difference to the overall distribution pattern of the length of reviews. In general, most peer-review reports at PLOS ONE are less than 2,351 characters long and the addition of comments appraising whether revisions have been made, as in Figure 2, does not significantly affect the skew. These findings correlate with previous studies of the peer-review process, which found average report lengths to range from 304 to 1,711 words in other disciplinary spaces (Reference BolívarBolívar 2011; Reference FortanetFortanet 2008, 30; Reference GosdenGosden 2003; Reference SamrajSamraj 2016). Of course, other studies have concluded that the length of report is not proportional to its quality, when measured by the ‘level of directness of comments used in a referee report’ (Reference Fierro, Meruane, Varas Espinoza and González HerreraFierro et al. 2018, 213). An initial glance at the quantity written, though, shows no difference in practice to more conventional journals.

The higher bound for the average length of peer-review reports that we found in a multidisciplinary database has several consequences. For one, it may be indicative of an uneven distribution of length of report between disciplinary spaces. That is, some disciplines may have greater expected norms for the length of report that must be returned to be considered rigorous. At a time when peer review is thought to be growing at an unsustainable rate (Reference Bourke-WaiteBourke-Waite 2015), this may have different consequences in those disciplines where much longer reports are expected. Indeed, the labour time involved in conducting a peer review inheres not just in the length of the submitted artefact that must be appraised – although, superficially, it is quantitatively more effort to read a monograph of 80,000 words than an article of 2,000 words – but also in the expected volume of returned comments and the level of analytical detail that is anticipated by an editor both to signal that a proper and thorough review has been conducted and to ensure the usefulness of the report. To more accurately appraise the potential strains on the peer-review system, we would urge publishers with access to report databases to make public the average length of their reports by disciplinary breakdown to help disaggregate our findings.

What Do Reviewers Write?

To understand the composition of the database and to communicate these findings in a way that does not cite any material directly, we undertook a qualitative coding exercise (specifically domain and taxonomic descriptive coding (Reference McCurdy, Spradley and ShandyMcCurdy, Spradley, and Shandy 2005, 44–45)) in which three research assistants (RAs) collaboratively built a taxonomy of statements derived from the longer reviews. Two of the research assistants were native English speakers based in London in the United Kingdom (Gadie and Odeniyi), although we note that the policed boundary of ‘native’ and ‘non-native’ speakers comes with both challenges for the specific study of peer review and postcolonial overtones (Reference Lillis and CurryLillis and Curry 2015, 130; see also Reference EnglanderEnglander 2006). The third research assistant is an L2 English speaker based in Lethbridge in Canada (Parvin) with significant social scientific background experience, including with this kind of coding work. Eve and O’Donnell oversaw coordination between the research groups.

The goal of our coding exercise was to delve into the linguistic structures and semantic comment types that are used by reviewers, following previous work by Reference FortanetFortanet (2008). This would allow us to disseminate a description of some of the peer-review database without compromising the strict criteria of anonymity and privacy that the database demanded.

Qualitative coding techniques are always inherently subjective to some degree (Reference MerriamMerriam 1998, 48; Reference Sipe and GhisoSipe and Ghiso 2004, 482–3). Indeed, as Saldaña notes, there are many factors that affect the results of qualitative coding procedures:

The types of questions you ask and the types of responses you receive during interviews (Reference KvaleKvale 1996; Reference Rubin and RubinRubin and Rubin 1995), the detail and structuring of your field notes (Reference Emerson, Fretz and ShawEmerson, Fretz, and Shaw 1995), the gender and race/ethnicity of your participants – and yourself (Reference Behar and GordonBehar and Gordon 1995; Reference Stanfield and DennisStanfield and Dennis 1993), and whether you collect data from adults or children (Reference Greene and HoganGreene and Hogan 2005; Reference Zwiers and MorrissetteZwiers and Morrissette 1999).

We mitigated some of the language, race, and gender problems by ensuring diversity among the coding and research team. As just one instance of this across one identity axis, without Parvin’s input, we would have been confined to a British English anglophonic perspective, a stance that matches the profile of neither PLOS authors nor their reviewers. The triplicate process of coding that we undertook – in which each RA worked at first individually but then regrouped to build collaborative consensus among the group on both sentiment and thematic classification – also helped to construct an intersubjective agreement on the labels assigned for each term. Reference Bornmann, Weymuth and DanielBormann et al. (2010, 499) also used such a triplicate system. The downside of this approach is that, clearly, we traded accuracy for volume. It is also possible that a different group of coders would have used different terms, and part of our coding exercise involved a process of synonym checking. Such partial and subjectively situated knowledges are, however, an essential and well-recognised part of post-structuralist social-scientific studies, and we acknowledge explicitly our active involvement in the shaping and building of such knowledges (Reference CrottyCrotty 1998; Reference DenzinDenzin 2010; Reference HarawayHaraway 1988).

There are also debates as to the quantity of material that should be coded (Reference SaldañaSaldaña 2009, 15). We opted to use our RAs’ time to work initially on the richer reports, as a preliminary survey of the database did not reveal any types of statement in the shortest reviews that were not present, in some form, in the longer entries. Once the initial taxonomy had been constructed from the tagging of the longest reviews, we then took a random sample of reports from around the median character count length with the number of documents tagged determined by the funding constraints on the time of the RAs. This resulted in 78 triplicate tagged reports, consisting of 2,049 statements. The coding process resulted in the development of a taxonomy of statements found within the PLOS ONE review database. At the broadest level these pertained to the following:

the ‘field of knowledge’ to which the statement referred

‘references to data’

‘section analyses’

comments on ‘omission’

comments on ‘methodology’

comments on ‘expression/language’

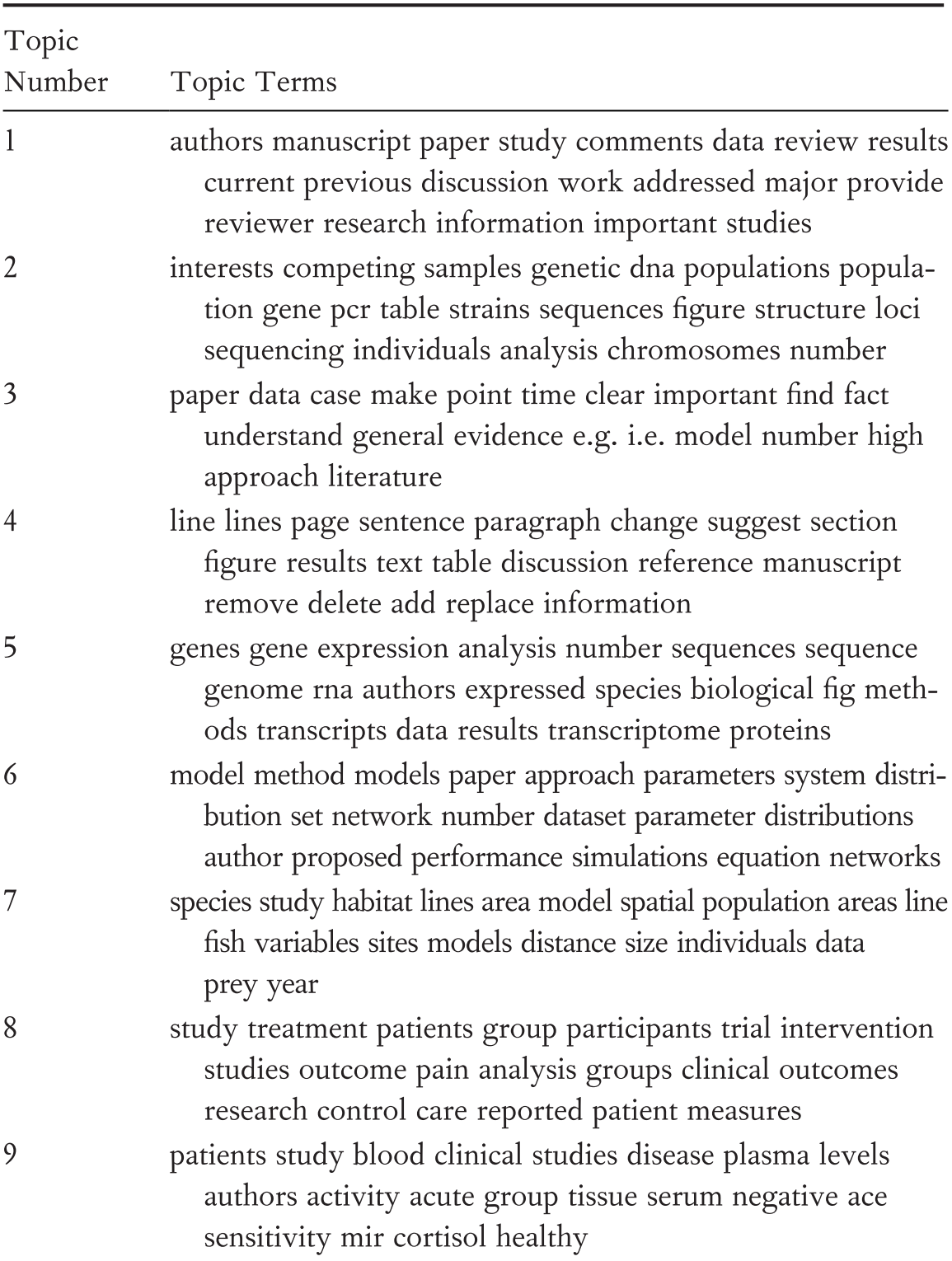

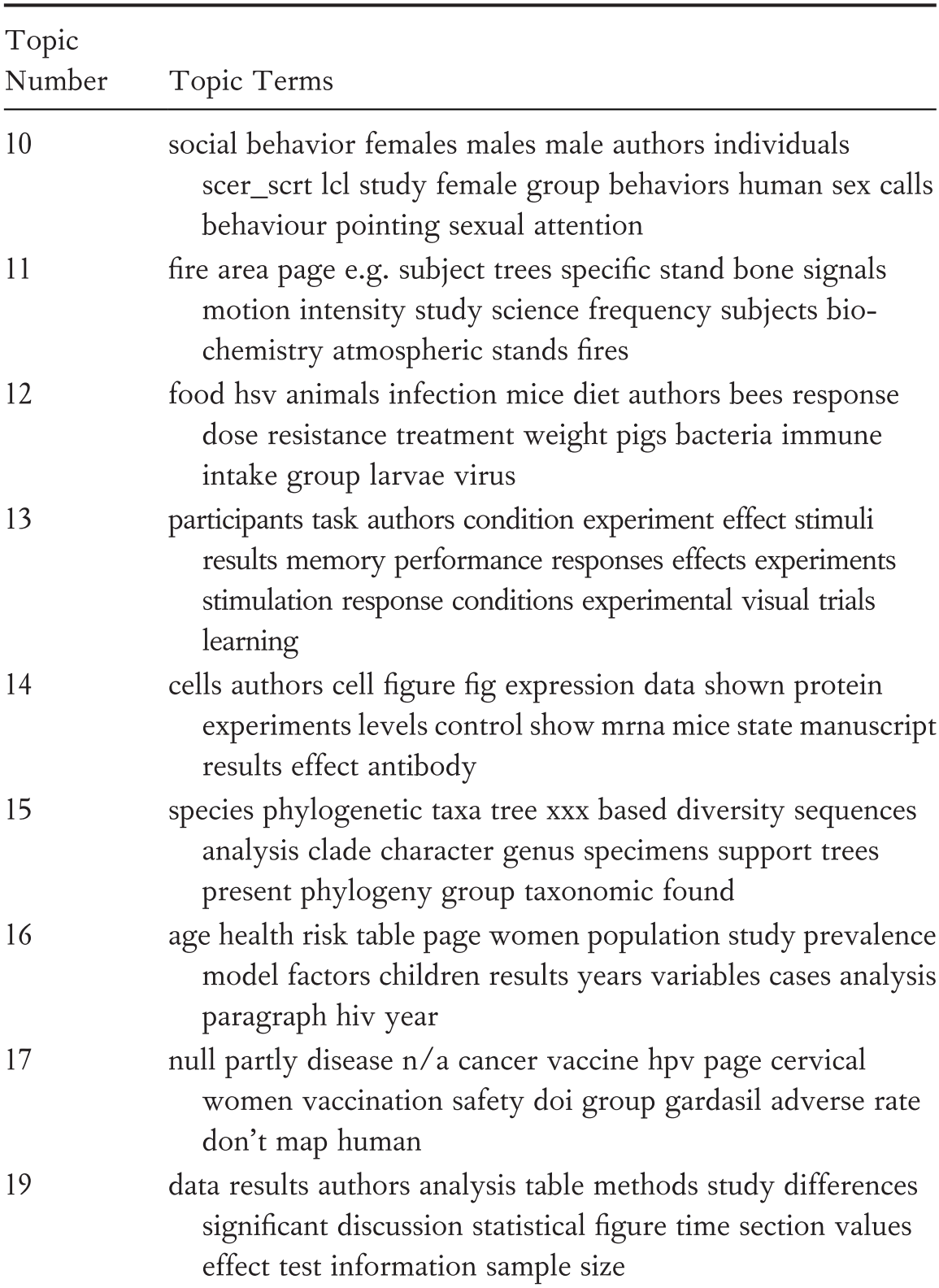

Each of these categories had a range of specific sub-tags, which are shown and explicated in Table 1.

In addition to coding against the taxonomy to categorise statements, each statement was also assigned a sentiment score (again negotiated among the three coders). The sentiment scale used ran from −10 (strongly negative) to +10 (strongly positive) with zero (0) denoting a neutral statement. Certainly, sentiment was, at first, the most contentious element of the coders’ practice with little agreement among readers. By the end of the process, reviewers found themselves broadly in agreement on sentiment markers with little negotiation required.

An interesting phenomenon that demonstrates some of the limitations of our sample size can be seen in the fact that no statements in our taxonomy pertain to ethics and Institutional Review Board procedures (rather than to the ethics of peer review itself (for more on the latter, see Reference George and Woodwardde George and Woodward 1994; reprinted in Reference ShatzShatz 2004)). This is not the case in the corpus as a whole, where reports contain notes expressing diverse sentiments such as ‘[t]he description of the human research ethics and informed consent process is confusing. Was the research protocol reviewed and approved by an institutional review board (IRB)? If yes, which one?’; ‘I have sincere reservations about the authors [sic] lack of independent review by an ethics committee’; ‘[t]hus, review by an ethics board is not needed’; and ‘an ethics statement is required in the method section.’ While these statements would have been tagged as remarks upon ‘expression’ and ‘omission’, they would also have been marked as pertaining to ethics had we encountered any such reports in our tagging exercise. This is important since some reviewers dubbed ethics problems as major concerns with certain manuscript submissions, such as a lack of ethical clearance for work upon vertebrates or cephalopods, one of PLOS ONE’s ethics criteria. There are several clear reasons for this. The first is that ethical issues in PLOS policies only apply to a subset of articles and, therefore, only a subset of the random sample. Second, PLOS ONE editorial staff screen major ethics issues before assigning papers to academic editors and so we would expect that in the subset of papers for which ethics is relevant, many issues may already have been cleared with authors before the work is sent to peer review.

On the other hand, there were occasionally also statements that merely confirmed that ethics procedures had been followed: ‘[t]he research meets all applicable standards for the ethics of experimentation and research integrity’, for instance. Although reviewers are asked, explicitly, to consider ethical issues in any paper in a separate question, it is notable that our sample did not find any statements on this matter. This could be interpreted to mean that the ‘missing’ statements on ethics in our sample indicate implicit assent that all ethical matters have been satisfactorily addressed. However, a sociology of absences means that it remains important to continue to pay attention to what might be expected but is not found (Reference SantosSantos 2002). For an important point that informs all of our analysis herein is that PLOS ONE editors attempt to screen for policy matters before sending to peer review in any case. This is meant to ensure that work that is not in line with PLOS’s publishing policy and could not be published anyway is not sent to reviewers. Such work is incredibly hard to investigate; nonetheless, it sits as an invisible substrata to the reports that are visible.

In the next chapter, we turn to what we can learn about reviewing practices under supposedly radical conditions, and the extent to which this institutional policy can be seen as a driver of academic practice.

3 New Technologies, Old Traditions?

At the end of our year-long coding project, we had tagged 2,049 statements across 78 reports in triplicate consensus. While this constitutes a small proportion of the total dataset, the specific reports were selected using a computational random number generator to ensure that our results came from across the spectrum of review types and represented accurately the underlying database. This chapter details the composition and congruence of the reports that we studied. We also attempt to show whether or not our findings match those made by others in different journal spaces.

More specifically, this chapter aims to appraise whether reviewers in PLOS ONE, a journal with radical criteria for peer review, behave in radical ways or stick to tried-and-tested norms. In other words, our investigation here is into the difficulties of organisational change in academia. What does it take, we ask, to change practices? Are policies enough? Is technology sufficient? Or are there broader and deeper social requirements?

Did PLOS’s Guidelines Yield a New Review Format?

In line with existing studies on the commonality of report structures and the ‘moves’ within them (Reference BornmannBornmann 2011b, 201; Reference GosdenGosden 2003; Reference PaltridgePaltridge 2017, 39–40), which we here confirm, most reviewers for PLOS ONE begin their reports with a summary of the manuscript on which they have been asked to comment. That is, reports open by describing the contents of the paper with statements such as ‘this manuscript investigates the relationship between t-cells and inflammatory markers in rheumatoid arthritis.’