Introduction

First-time users of Large Language Models (LLMs) come away feeling wonder or discomfort, rarely indifference. Wonder comes from feeling that there is something sentient on the other end of the conversation. We know this to be false, of course. Next-token predictors, stochastic parrots,Footnote 1 statistical models, fancy autocompletes;Footnote 2 there are many ways to explain away the magic. Still, the feeling lingers, at least until it becomes routine. The Turing Test’s conquest with hardly a comment is testimony to how accustomed we have become to being understood by the same computers that, until recently, were controlled through arcane methods like code commands or symbol manipulation.Footnote 3 Chatbots now read and write in natural language and do a good job of it.

Discomfort arises from the realization that, despite their humanlike attributes, these things are not human at all. In fact, they are alien minds. We are amazed when they surpass us in the breadth of their knowledge, their speed, their multilingualism; that is also what makes their failures dumbfounding. How can something that so capably translates Homeric Greek crash when asked to count the number of r’s in “strawberry”? Or which is larger, 0.9 or 0.11? In fairness, these errors have been ironed out. Other, equally perplexing, mistakes remain.Footnote 4

The “uncanny valley” effect that LLMs induce makes them harder to categorize. Should we anthropomorphize them? Some people who were enraged when GPT-4o was deprecated certainly thought so.Footnote 5 Should we regard them as glorified office tools? Regardless, it is clear that they are creating social, cultural, and economic shockwaves of global magnitude. In work meetings, government communiqués, media articles, and coffeehouse conversations, the words “artificial intelligence” (AI) are uttered with excitement and no small measure of trepidation. For many artists and musicians, generative AI is the thief of creativity; in board meetings, a possible bonanza of productivity. Workers of all stripes fear it will replace them, parents that it will take their place as a trusted source of knowledge, environmentalists that it will strain our overburdened ecosystem to a breaking point in its hunger for power. Our reckoning with AI is in its infancy, and already it is set to transform society more profoundly than any recent technological revolution.

Amid the social conversation on AI, academia has been especially vocal. Although associated with Silicon Valley, generative AI is the brainchild of universities, so it is only natural that they should have a say in its evolution. That, however, is not the sole reason for their outspoken stance. Rather, it is because generative AI threatens academic scholarship and research. While some scholars and university administrators have lauded the creativity of these tools and recognized their potential for opening up new avenues of discovery, the response from most quarters of academia has been critical and defensive. The humanities, which have undergone a crisis in recent years resulting not only in decreased enrollment but also, and perhaps more importantly, in an eroding sense of relevance, feel especially vulnerable. This Element strives to answer an urgent question: How will generative AI impact scholarship in the humanities, and primarily historical research?

Anxiety regarding the technology generally falls into three categories: in the immediate term, the fear that generative AI labs will “train” their models on existing scholarship and allow universities and research institutions to outsource projects to LLMs, reducing the need for human expertise and commoditizing knowledge work; in the medium term (three to five years from now), the nagging worry that chatbots and agents will eliminate human creativity altogether, ushering in a dystopian plutocracy where AI robber barons rule an impoverished underclass or, no less menacingly, a dictatorial panopticon that silences any stirring of thoughtcrime; and in the long term (five years and beyond), that AI will prove impossible to control, with runaway superintelligences displacing humanity as the dominant species. It is easy to scoff at these scenarios, branding as “doomers” and Luddites those who believe the meager chatbot capable of such chaos. Nevertheless, even for its most avid proponents, AI holds immense disruptive potential, whose consequences, they admit, will force a renegotiation of long-held social contracts.

This Element does not address medium- and long-term worries, justified though they might be. To remain manageable in size and reasonably grounded in the present, these concerns must be left to other authors. It is thus solely concerned with short-term challenges to academic praxis. Rather than propose countermeasures, it seeks to harness the technology to upskill humanities scholars, primarily historians, for a new age of creativity. As this Element will show, LLMs are poised to expand the capabilities and research horizons of scholarship, empowering historians, literary experts, philosophers, musicologists, and others to embark on projects that have, in the past, required teams working in unison to achieve or that have previously been entirely unthinkable.

This is, then, an optimistic text. It sees historical scholarship first and foremost as a beneficiary of generative AI. It is adamant that the humanities, particularly history, are uniquely positioned to respond to the challenge of generative AI, while cognizant of its formidable scope. After all, the present generation of tools is called large language models, and language is the purview of the humanities. Humanities scholars excel at recognizing biases, identifying subtext, and unmasking narrative strategies, making them ideal for evaluating AI’s strengths and weaknesses. It falls to the humanities to rise to the challenge and have a decisive voice in shaping the future of mankind’s interaction with AI. More than theoretical musings about a tech apocalypse, this is the way to influence the outcome in the medium and long range.

Artificial Intelligence and Historical Scholarship

Early adopters of generative AI in academia often face pressure to justify its usefulness and morality to skeptics who regard it as a corrosive force chipping away at their beloved discipline. Other colleagues are less critical but hesitant to use the technology, fearful of transgressing some invisible rule in an ever-evolving regulatory landscape. Others still want to use AI but do not know where to start.

These are valid concerns, and this Element will not make light of any of them. It intends to offer a path into the technology from the perspective of a historian who respects the field and wants to see it flourish. To be sure, AI can be wielded irresponsibly, but this need not discourage scholars from harnessing its capabilities to advance their disciplines.Footnote 6 Applied correctly, it promises to enrich historical scholarship in much the same way as computerized archives and databases, digitized primary source repositories, and online research tools have done. In other words, it can give individual scholars the power once reserved for well-funded institutions to conduct broad comparative research, uncover trends across lengthy corpora of sources, extract novel insights – in short, to produce innovative synthesis.

Historians need to become familiarized with these tools and their underlying technology to do this effectively. They must recognize the pitfalls and biases of generative AI, but even more importantly, they must understand its capabilities and methodologies to form a mental frame of reference that will serve them in their quest to apply it to their research. The Element will examine these questions in detail, using this discussion as a foundation for practical use cases: How should historians use LLMs to analyze primary sources; build knowledge maps and uncover networks of connections; and, importantly, how can they do all this safely and ethically?

This Element will therefore address ethical concerns: hallucinations/confabulations, attribution, originality and plagiarism, data privacy, intellectual property, and skill erosion. While some categories will inevitably need recalibration, the Element sees the technology – at least as we understand it today – as one tool in the historian’s toolkit, not a stand-in for professional capability and accountability.

A Note on Terminology and Bibliography

When LLMs emerged, many media outlets featured a diagram visualizing the relationship between the different fields as a set of concentric circles. The outer circle was simply titled “AI,” the one contained within it “Machine Learning (ML),” followed by “Deep Learning,” “Generative AI,” and, finally, “LLMs.”Footnote 7 We will not dwell on these distinctions, although they are important categories of computer science. Our focus here is on the influence of LLMs, and more specifically the commercial offerings from the main AI labs, on the historical discipline. This last point is not coincidental. Companies like Google, OpenAI, and Microsoft have diversified their products and launched targeted promotions, hoping to attract customers in academia. Their models have thus dominated the academic market in the West, relegating open-source models to the fringe. In addition, general models often outperform fine-tuned specialized ones.Footnote 8 Unless operating in very specific contexts, students, staff, and faculty will likely be working with Gemini, ChatGPT, and comparable platforms.

For this reason, the terms AI, LLM, model, and chatbot are used almost interchangeably to refer to this class of products and services. There are subtle differences in tone, with AI as the overarching field, LLMs as the underlying technology, and chatbots as the user interface. Likened to a car, AI would be the broad category of automobiles, LLMs the internal combustion (or electrical) engine, and chatbots the steering wheel, gauges, and everything else we see on our dashboard. This analogy will not be applied very rigidly, however, trusting the reader to understand the intent.

Another point concerns the use of literature. Academic publications, to whose company this Element aspires to belong, usually depend on peer-reviewed articles and monographs. The time for an article to pass review means that those appearing today were probably completed in 2023 or early 2024 at the latest. In AI terms, this is a veritable eternity. While I relied on peer-reviewed literature when possible, I also made ample use of prepublication articles that appear on ArXiv. Many will indeed go on to be published in illustrious platforms, but for the purposes of the Element, this mattered less. While I vetted the literature, its role as corroborating evidence for a cumulative chain of arguments leading to an inevitable conclusion is secondary. This Element is equal parts exploratory and first-person empirical, which means literature provides a theoretical background for applied ideas. More importantly, much of what happens in AI happens on platforms like X, Reddit, podcasts, and even legacy media. For this reason, many cited sources are “popular” rather than “academic,” although the boundaries between the two spheres are growing more permeable.

Third, the degree to which I made use of AI in the composition of this Element warrants clarification. The idea to write arose naturally from lectures I gave and courses I taught, where many of the issues addressed here already surfaced to some extent. Preliminary discussions with AI before deciding on the structure of the Element provided a general sense of direction, although ultimately, I charted the argumentative path myself. AI’s most decisive contribution was in locating relevant literature. I set up agents for bibliography retrieval in Claude Code and GPT Codex through the Cursor IDE and used Perplexity’s Comet browser to triage and summarize findings. My text editor of choice was Lex.page, which meant that I could avail myself of an AI companion to identify non sequiturs, clumsy phrasings, and unwanted repetitions. Lex also allowed me to fine-tune its suggestions through the “knowledge bases” functionality, where articles, blogs, and websites can be uploaded to steer its comments in a specific direction. When I ran into dead ends, I often prompted Lex (usually running Claude Opus 4) to suggest a way forward. While overall helpful, these suggestions were always too verbose and ornate to consider seriously. The resulting prose is therefore my own, as are any of the errors in fact and judgment that remain.

Finally, a comment on positionality. This Element was written using ChatGPT Pro, Claude Max, Gemini Pro, and Perplexity Pro subscriptions. Other paid subscriptions, like Cursor.ai and Wisprflow.ai, also contributed to the research and writing process, and all were covered by funds made available through my position as a tenured researcher at an Israeli university. The same institution, Bar-Ilan University, also made data repositories, journal subscriptions, and other resources available through its library system. All of this is to say that I wrote the Element from a position of extreme privilege not shared by many researchers, let alone students, in other corners of the world. That said, the Element does not assume this level of access for its recommendations to be useful. For most use cases described in the following pages, one $20/month subscription to an AI service is entirely appropriate. I recognize that this, too, might be a cumbersome demand for many, highlighting AI’s potential to further exacerbate inequality. As open-source models continue to gain capabilities, access may become democratized. Regardless, this will remain a social issue deserving serious consideration. With English, Mandarin, and other widely spoken languages dominating datasets, scholars who conduct research in under-resourced languages face comparable disadvantages. As is the case with many disruptive technologies, generative AI brings the uneven geographic distribution of resources into sharper relief.

Structure of the Element

This Element has four short sections. Section 1, “New Tools, Old Questions,” places the new technology against the backdrop of the historical discipline. It argues that, while AI will automate traditional scholarly tasks like archival research, literature reviews, and even writing, historians must actively engage with these tools rather than resist them.

Section 2, “Refining Historical Inquiry Through Dialogue,” fleshes out the artisan/apprentice paradigm, emphasizing context curation. It demonstrates these principles through examples of academic tasks, concluding that successful AI collaboration depends more on developing context management skills than on technical proficiency.

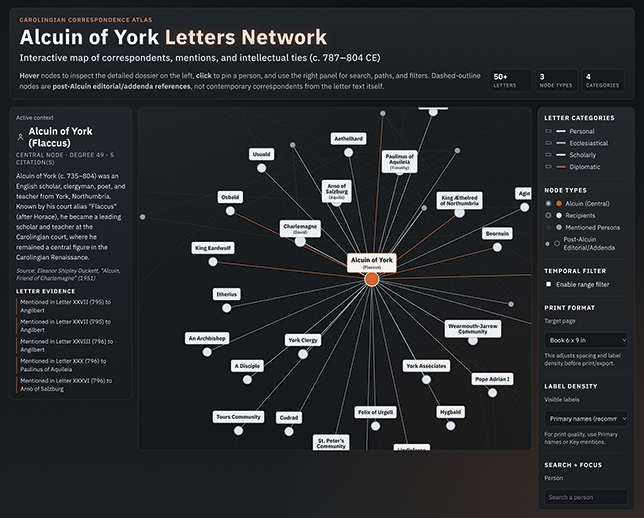

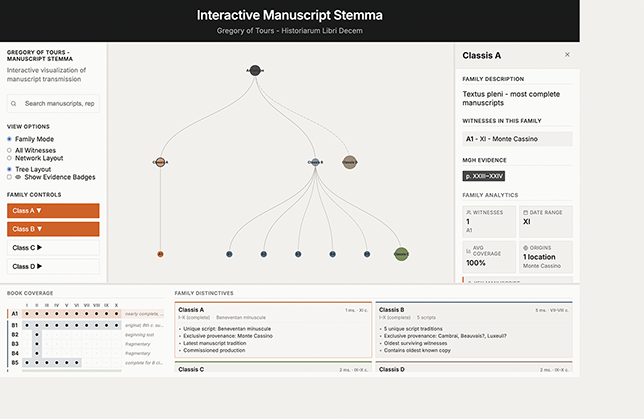

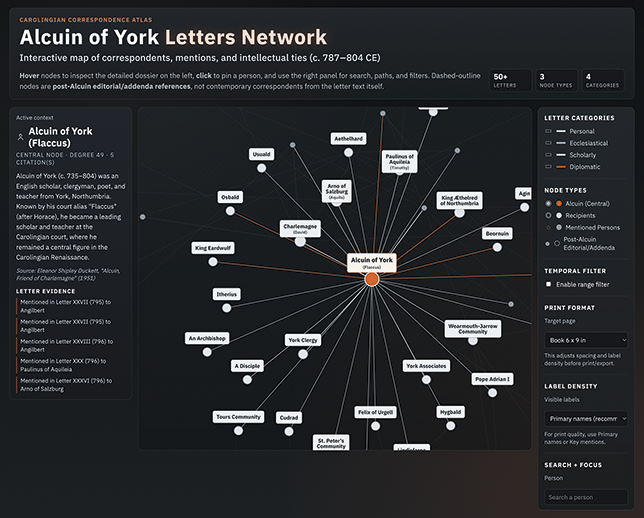

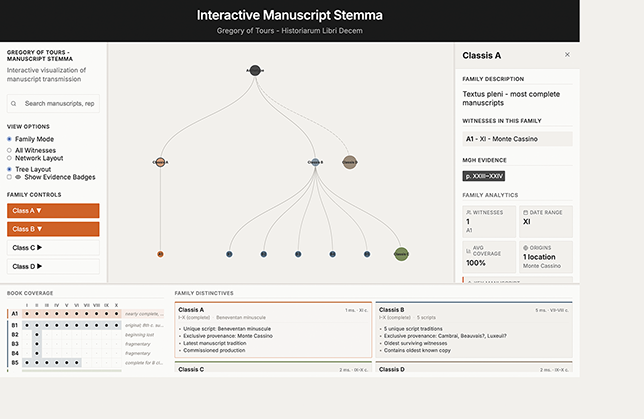

Section 3, “Contextual Search and Conceptual Exploration,” provides three case studies for using LLMs and video models in historical research: semantic search, network visualizations, and historical footage analysis. It details workflows that can be broadly generalized to fit a range of scenarios.

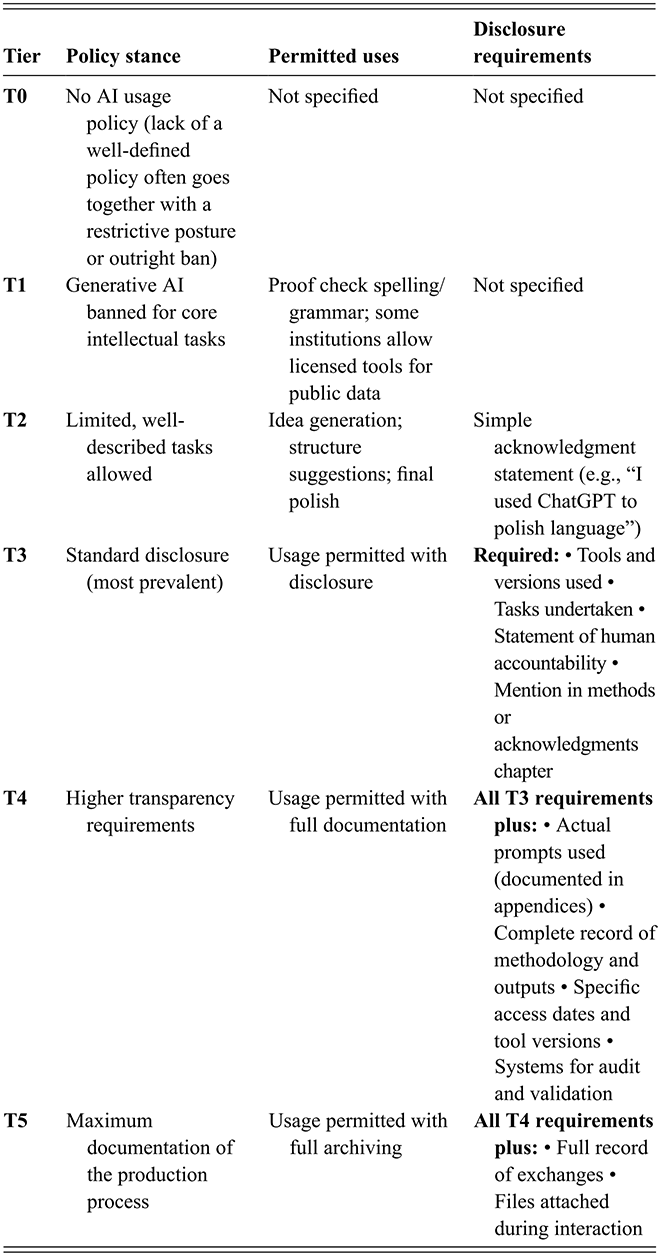

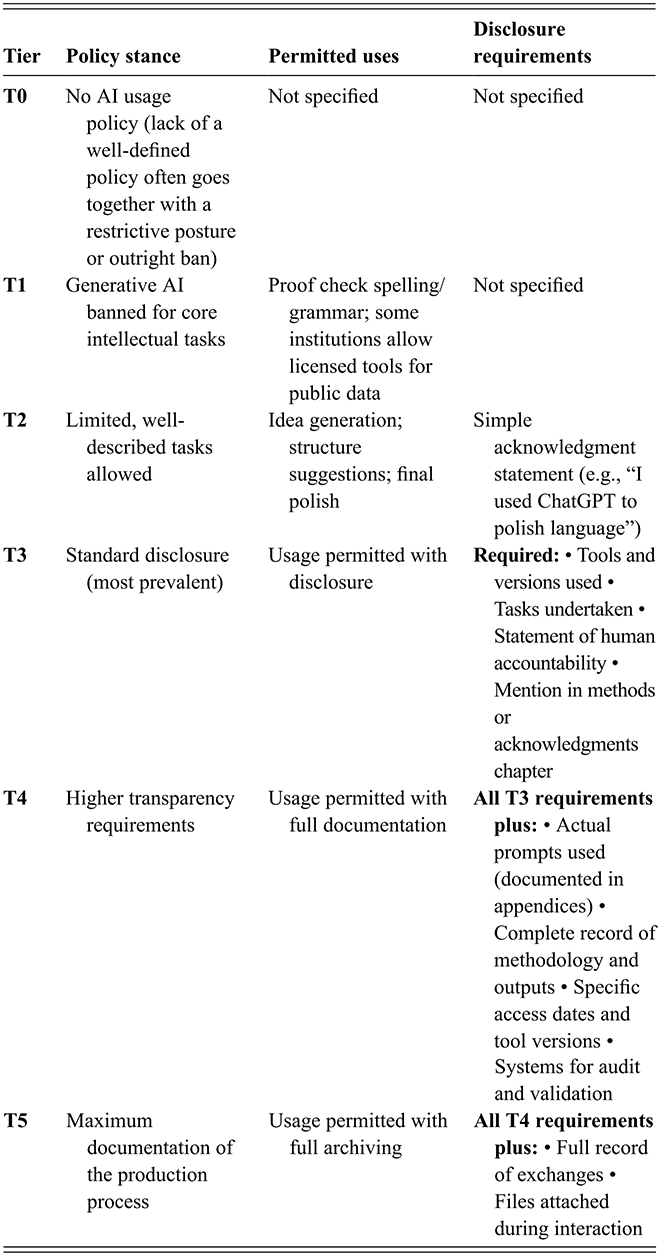

Finally, Section 4, “Governance, Ethics, and Futures,” examines how generative AI challenges traditional academic practices around attribution, intellectual property, and data security. As an alternative, it proposes a “triggered transparency” system modeled on Freedom of Information legislation. It also shifts the attribution discussion from originality to methodological transparency.

The wonder and discomfort that mark our first encounters with AI signal an important threshold: We are witnessing something deserving of serious intellectual engagement. The longer we remain on the sidelines, the less influence we will have over its development and integration into scholarly practice.

1 New Tools, Old Questions

Mapping the Territory of AI in Historical Practice

Generative AI will change the historical profession. The change will happen gradually, then all at once, like it does with every revolution. Historians already use ChatGPT to rephrase messy paragraphs or convey elusive ideas. Soon, they will deploy it to do literature reviews or to check if an idea is new or just a paraphrase of something they had read but forgotten. Next, AI agents will go on intellectual quests on our behalf, returning from their explorations holding something familiar yet alien. We will ask ourselves – “Is this ours?” And we will answer “yes! We sent it on its way, we gave it prompts, we provided context, we taught it to think like us.” But increasingly, it will be, in the words of Ethan Mollick, like “working with a wizard.”Footnote 9 Reproducibility and interpretability are not the chief preoccupations of practitioners of the magical arts.

The result of this change is difficult to foresee. It is likely that the classical output of humanities scholars, historians included, will cease to be the monograph or the peer-reviewed article. Deeper still, the nature of historical thought will shift. Writing on “Will the Humanities Survive Artificial Intelligence?,” historian of science D. Graham Burnett had this to say: “Now everything must change. That kind of knowledge production has, in effect, been automated. As a result, the ‘scientistic’ humanities – the production of fact-based knowledge about humanistic things – are rapidly being absorbed by the very sciences that created the A.I. systems now doing the work. We’ll go to them for the ‘answers.’”Footnote 10

This Element will not be an attempt to answer Burnett, with whose insights I mostly agree, nor will it meditate at length on the role of humanities scholars in a changing world.Footnote 11 Its purpose is to give historians tools to think with about the coming wave.Footnote 12 One might ask: If the nature of historical scholarship is destined to undergo deep transformation, what is the point of writing a book that teaches historians to use AI to write and conduct research? Will it not produce work that is much richer than we could ever dream to produce?

The answer is that the course AI takes is far from determined. The form it will eventually assume will evolve through social conversation. Indeed, it will don many shapes and work its way into every facet of our lives. Given the high stakes, critique of AI is not only justified but imperative. For the technology to evolve in ways that are beneficial not only to the tech moguls driving it or businesses adopting it in their relentless pursuit of efficiency, the humanities should have their say.Footnote 13 To advocate for such an AI, humanities scholars must understand these tools’ capabilities. Only through familiarity and proficiency can informed opinion emerge.

The historical perspective is uniquely suitable to opine on the adoption of technology across time and the changes endured by the disciplines that harness it. To understand how AI changes society, the contribution of historians is pivotal. In this sense, the Element sees the role of historians as twofold: Noticing what AI does to society and suggesting alternatives when it fails to live up to its potential, which it will undoubtedly do.Footnote 14 This is a cumbersome task, and not one that necessarily aligns with their immediate goals as working academics.

Historians, even those with an ideological bent, will not discard their specialties to become social engineers. A case needs to be made for a tangible benefit to adopting the technology. This Element contends that the benefit is clear – AI can help historians with research avenues and projects that were previously unmanageable. Unlike past technological booms, the learning curve is moderate. Knowing the technology does not mean becoming computer scientists. Indeed, historians may feel more comfortable with AI than STEM researchers do because the currency of LLMs is language.

A recent MIT Sloan study showed that prompting accuracy can influence the result as much as the model.Footnote 15 Soon after LLMs arrived, prompting was hailed as the skill to master.Footnote 16 Enthusiasm cooled when reasoning models came along, as commentators assumed they would improve at understanding us without the need to elaborate on every aspect of our desired outcome. In some respects, this was true, although the launch of GPT-5 showed they work best with the right context and clear expectations. The public’s tepid reaction to GPT-5’s launch prompted OpenAI’s CEO, Sam Altman, to promise that GPT-6 will offer a friendlier, less robotic, interaction style.Footnote 17 Wherever we find ourselves on this pendulum of efficiency vs. warmth, it is clear that clarity, awareness of bias and subtext, and the charged nature of language will continue to be valuable components of communicating with AI. Not coincidentally, they are also sensibilities with which humanities scholars are inculcated.

A reasonable question to pose here concerns the amount of time historians-in-training should devote to achieving AI proficiency. The preponderance of tools and methodologies can feel overwhelming, even for seasoned users. Many who wish to acquaint themselves come away discouraged. This is why the burden of responsibility for building robust AI literacy rests, first and foremost, with the academic programs themselves. Just as academic skills modules are mandatory in undergraduate and graduate course loads, an AI literacy course must be part of basic academic training. A constantly updated semester-long course will provide a preliminary foundation, although having dedicated institutional staff for more advanced use cases is beneficial. Support on the department level can be taken up by either faculty or staff and does not require highly specialized training. For most problems, having a slightly more knowledgeable person to turn to should suffice. Primarily self-reliant students should consider every task an opportunity to experiment with AI. Whether they are trying to find articles, proofread text, or fact-check a certain claim, enlisting AI should be a priority. Gaining confidence is easier with the help of one’s peers, so even without formal resources, a modest text group dedicated to sharing tools and experiences, asking questions, and working through challenges can be immensely helpful.

This will not be a technical Element; it will not go into details about neural networks, attention heads, gradient descent, and vector embeddings. Nor will it chart the history of LLMs from the musings of Alan Turing and the fated 1956 Dartmouth Summer Research Project, where the term AI was coined, to everything that happened since.Footnote 18 It will attempt to balance generality – guidelines that will hold true as technology changes – and specificity, providing recommendations for historians wanting to use LLMs now. As readers will surely recognize, some of the models named here are not the newest. The final quarter of 2025 witnessed the releases of GPT-5.2, Gemini 3, Opus 4.5, and others, a trend expected to continue apace in 2026. It is an inescapable attribute of the field, and as a result, some of this Element will age poorly. Specificity is its first casualty, eroding with every new model release and UI redesign. This has not discouraged me from writing it and should not discourage you from reading it. Knowledge in the AI age is becoming commoditized; agency and taste are not. By applying their agency and taste to the knowledge they find in this Element and similar sources, historians can make it actionable. Moreover, the best practices charted by this Element are model agnostic. Thinking carefully about context, applying rigorous fact-checking, and considering ethical ramifications are topics that will remain evergreen, even as AI capabilities evolve.

What’s Really at Stake

Of the many famous quotes from British mathematician and philosopher Alfred North Whitehead (d. 1947), perhaps the most recognized is this one:

It is a profoundly erroneous truism, repeated by all copybooks and by eminent people when they are making speeches, that we should cultivate the habit of thinking of what we are doing. The precise opposite is the case. Civilization advances by extending the number of important operations which we can perform without thinking about them. Operations of thought are like cavalry charges in a battle – they are strictly limited in number, they require fresh horses, and must only be made at decisive moments.Footnote 19

Despite being over a century old, this short and evocative statement encapsulates the challenge of AI to scholarly inquiry and knowledge production. Much of what historians consider the core of their practice – reading, making connections, extracting insights, synthesizing, writing, even indulging in the odd m-dash – will be subsumed by AI, manifesting as LLMs, agents, and whatever comes next. If we take the pronouncements of tech pundits at face value, this is not really a question of automation but of sharing the stage with nonhuman subjectivities whose claim to knowledge production, as opposed to data generation, cannot be dismissed.Footnote 20

Recognition of this development’s seriousness has been slow to reach humanities departments. While social critique has grown more vociferous,Footnote 21 even hysterical,Footnote 22 historians carry on leisurely debating if using LLMs to automate literature search, polish prose, and brainstorm should be considered “cheating.” Academia is used to doing things at its own, often glacial, pace. Faculty meetings that adjourn by deciding to table a tough policy decision to the next semester, or budget committees reconvening for the nth time to weigh the pros and cons of purchasing a subscription to an outdated tool, are familiar features of academic life. They are unsustainable in an age where AI models train the next generation of LLMs, becoming stronger and faster with every refresh cycle.Footnote 23

The question of whether AI is capable of producing knowledge is hotly contested, mainly because the definition of knowledge is elusive and context dependent. A 2024 survey asked this and related questions of 100 researchers working with AI.Footnote 24 Philosophers generally rejected the proposition that AI can produce knowledge, while computer scientists were evenly divided between acceptance, rejection, and indecision. When asked if AI will ever reach true knowledge, a third of philosophers responded negatively. Recalcitrance is rooted in the tendency of philosophers to endow humans with “semantic grounding,”Footnote 25 whereas LLMs are relegated to the lesser epistemological rung of “syntactic engines.”Footnote 26 Semantic grounding usually entails some variation on embodied experience involving sensory perception and a causal connection to the world.Footnote 27 LLMs, it is argued, lack these characteristics and therefore cannot produce true knowledge.

A common quip among computer scientists is that AI (or, more recently, artificial general intelligence (AGI))Footnote 28 is whatever we can do that computers cannot; once achieved, it simply becomes “Machine Learning.”Footnote 29 This resembles the “God of the gaps” idea against which Scottish evangelist Henry Drummond argued in 1893Footnote 30 (the phrase itself came from Charles Alfred Coulson’s 1955 book, Science and Christian Belief).Footnote 31 Walter Benjamin’s The Work of Art in the Age of Mechanical Reproduction harbors a similar sentiment by arguing that critique of technology is essentially a bitter elitist reaction to the demystification of its cherished monopolies:

Thus, the distinction between author and public is about to lose its basic character. The difference becomes merely functional; it may vary from case to case. At any moment the reader is ready to turn into a writer. As expert, which he had to become willy-nilly in an extremely specialized work process, even if only in some minor respect, the reader gains access to authorship.Footnote 32

We might be making a similar blunder. Because of the humanities’ (justified) focus on the human, we struggle to see what machines are becoming. Large Language Models are stochastic engines whose knowledge base prevents them from realizing what we conveniently placed out of their reach, namely embodied and causal knowledge. Should we assume that progress in “embodied AI,” otherwise known as robotics, will not achieve what text-based models failed to? Time will tell. Regardless, it is important for the academic community to show humility and brace for the possibility that many of our methods are about to be automated. We should also be prepared to ask what it means to write history when the production of historical knowledge is no longer the sole preserve of humans.

Professional Stakes

Historians have traditionally controlled access to historical knowledge stored in archives – physical or digitized. Searching through these archives for valuable information required access and fluency in professional terminology. This is no longer true. Open-access digitized databases predate AI, but when the two are combined, they open up source material to nonspecialized investigation. AI enables semantic search, which means that we are no longer limited to keywords and can now search for ideas and themes in the text.

AI knocks down other barriers, too. To a significant degree, language becomes less of a hindrance. Large Language Models are excellent translators, and while fluency in highly specialized linguistic domains, like jurisprudential Latin or medical Akkadian, is not entirely obviated, many historical sources become more accessible.Footnote 33 Another capability is handling large corpora. Large Language Models breeze through hundreds of pages in the time it takes a human to work their way through a single paragraph. Context windows have grown larger, and models are better at retaining and retrieving what they read. Most off-the-shelf models can now use tools for search, data compilation, summarization, and presentation. Already, AI has reduced the need to spend long hours in archives and libraries, sorting through primary sources and scholarly literature, a trend that will likely accelerate. More importantly, it is destabilizing the assumption that professional historical training is needed to access source material, query it effectively, and extract insights that are relevant and actionable.Footnote 34

The value of popular history notwithstanding, it seems likely that, with the importance of information gathering diminished, historical work will move to prioritize evaluation. Archival, paleographical, and codicological prowess is highly coveted by historians. Once it is no longer part of the toolkit, much of what we currently consider historical work disappears. Pragmatization is always melancholy, but here it is accompanied by an additional, more sinister dimension – model bias. By outsourcing archival work to opaque models, we forfeit control over countless small decisions that determine what we see and evaluate. This, too, is a matter of serious concern. In sum, the professional questions historians must answer are changing, and a conversation about these developments is overdue.

Disciplinary Stakes

The shifting focus of historical work creates opportunities for scholars who are capable users of generative AI. By the same token, it disadvantages those who do not wield the tools proficiently. Inevitably, we end up with a fragmented discipline. The impact on the field is uncertain, but some challenges are already coming into view. Without clear guidelines, authors will face a review process that is often suspicious of, if not outright hostile to, AI-assisted scholarship. Historians who adopt these tools without understanding their limitations risk undermining both their own research and their effectiveness as peer reviewers and evaluators. The speed at which scholarly works, grant proposals, lesson plans, and other textual artifacts of academic work can now be produced calls into question the entire trajectory of the historian’s academic career. These are three examples out of a host of issues that will arise as AI becomes entrenched in academic work.

Decisions are urgently needed. They extend not only to the obvious matter of restructuring attribution and reconsidering originality but also to the ways in which we train the next generation of historians. Soon, LLMs will produce adequate articles, then excellent ones. Once this is achieved, the process can be sped up such that the most obscure questions can receive book-length treatments within minutes, if we so wish. Meanwhile, AI literacy is being introduced in piecemeal fashion into the curricula of history programs, which are preoccupied with outdated ideas about authorship and originality. Put differently, the institutional response seeks to harmonize AI with existing academic practices without realizing it makes many of them redundant and hollow.

The problem, then, is less about solving disclosure for ChatGPT-assisted compositions and more about fostering an environment that takes the technology as a starting point for new modes of historical production. While there will probably be demand for AI-generated text in some circumstances, the discipline must find new and compelling ways of mediating historical questions that go beyond dry academic texts. Could we envisage agentic reconstructions of crowd movements during the Nika riots in Constantinople or, nine centuries later, world models creating simulations of a walk down (now) Istanbul’s Kapalıçarşı Grand Bazaar? Might these be accepted outcomes of academic work?Footnote 35 There is no reason to discount such examples as less scholarly, rigorous or cognitively demanding. For this to happen, platforms for showcasing the work need to be developed and, importantly, incentivized through peer review and accreditation.

Intellectual Stakes

Perhaps the most worrisome aspect of our increasingly AI-infused environment is cognitive offloading.Footnote 36 In the context of the present discussion, the term means an overreliance on AI-generated responses that adversely affects critical thinking. Cognitive offloading is decried as a problem primarily with students, but its effects can be injurious to scholars who have become dependent on LLMs for a variety of cognitive tasks. Not all tasks are equal in this regard. Some are technical and require very little mental effort, while others are primarily reasoning tasks that take a toll on the user’s deductive faculties when continuously delegated to language models.

The challenge lies in telling the two apart, especially given the dynamic nature of an exploratory conversation with a chatbot. Once certain paths are introduced by an LLM, they linger in the user’s consciousness even if they are not adopted. Asking ChatGPT to produce a thematic skeleton for a composition puts one’s thoughts in order but also dictates a trajectory for the argument that would not have been taken otherwise. The purpose here is not to espouse a purist perspective on work with AI; it is to argue for the importance of recognizing when we are swayed by ideas that are not our own, not to reject them outright but to examine them and see if they are truly justified or simply a choice of convenience.

Responses reflect the data upon which the models generating them were trained. Consequently, they contain a mixture of all the social pathologies one would expect of information harvested from the farthest reaches of the internet. Models trained on racial hatred, religious fundamentalism, and other noxious ideologies are concerning for obvious reasons, although historians of all people are expected to engage with such source material critically.Footnote 37 Indeed, some historians actually study hate speech for a living. Equally distressing are the often-heavy-handed attempts by AI labs at policing the model’s speech. The incentive structure is all too clear and rarely takes into account what historians need. Be that as it may, efforts at presentism have shown how intervention might produce the opposite of the intended effect, as demonstrated by the racially diverse representation of WWII German soldiers in Google’s early image generators.Footnote 38 A similar design choice characterized the Chinese DeepSeek R1 model that refused to discuss politically contentious issues like the 1989 Tiananmen Square crackdown or Taiwanese sovereignty.Footnote 39

Good AI literacy should endow the user with relevant discernment skills, allowing them to identify when the model’s ideas dominate the conversation to such an extent that the work becomes disconnected from what the user had originally intended. The same applies to instances in which it attempts to inject its own (or its designer’s) ideological bias. The latter is essentially a special case of the former. For many reasons, this is a difficult sensibility to nurture. Cognitive offloading is the path of least resistance, so we are incentivized to overlook it. Bias can be hard to detect, especially when it subtly nudges us in a direction that only becomes apparent once we have arrived at the destination. Experience – time spent conversing with different models, getting a feel for their “character” and ideological leanings, and spotting manipulation – is essential. As models grow more sophisticated, this will become harder.

The Irreducible Core of Historical Practice

Until now, we have dealt primarily with the challenges posed by AI’s encroachment on traditional domains of the historical discipline. The remainder of the section will explore the space of possible solutions, not to prescribe remedies to complex, evolving problems but to clarify the criteria proposed solutions should satisfy. As the title of this subsection suggests, there is an “irreducible core” of the profession that endures, even as its expressions take on new form.

The purpose of historical work is to create narratives that accurately, contextually, and empathetically depict the past. It acknowledges its sources’ lacunae, biases, and errors and attempts to evaluate them on their own terms. It is equally cognizant of the flaws of scholarship, which imposes its own, often anachronistic, suppositions on the historical setting. More than anything, it is an exercise in creativity and imagination, bridging gaps in knowledge and explaining to its readership notions that it may find offensive, misaligned, or simply strange by appealing to a shared sense of human kinship. This is not a project that is eroded by the advent of AI.

A first step on this path is to reframe work with AI as collaboration, not competition. Development of generative models is not expected to stall, despite frequent statements to the contrary.Footnote 40 This means that it is (and will continue to be) difficult to foresee the technology’s final form. At this point in its evolution, the relationship resembles that between an artisan and their precocious apprentice. We expect it will be able to relieve us of certain tasks while recognizing that others might be too demanding. We should, however, test those boundaries frequently. AI differs from a traditional apprentice in the sense that it has much more information than we do, although it has not yet learned to deploy it effectively. In subsequent sections we will discuss workflows that allow us to make the most of the artisan/apprentice paradigm.

As the technology matures, the relationship will become less asymmetrical. AI will grow into a partner and its ability to handle longer, more nuanced assignments will improve. Some barriers to its usefulness today, like hallucination or inability to access paywalled content, will likely be resolved or mitigated. Once this is achieved, the historian’s role changes from artisan to architect. In this new capacity, the historian oversees engineers, contractors, bureaucrats, and builders. They conceptualize a project broadly, drilling down where necessary but leaving much of the fieldwork to a specialized crew. Soon, historians will launch fleets of AI agents to perform archival work, scour literature, compare opinions, and present possible paths toward a predetermined goal. The remaining historical work is encapsulated in two words: agency and taste.

Put simply, agency and taste are tail ends of the same process. The former is the conceptualization of a research question and the decision to embark on a mission to find out the answer; the latter constitutes the judgment necessary to determine if the results produced by the AI coalesce into a cogent and compelling argument. For the foreseeable future, both remain firmly within the realm of human expertise. The term “expertise” is especially salient because it is the determining variable in ensuring a high-quality product of human-AI collaboration. Anyone who has worked with chatbots knows that they are good at creating the illusion of quality. Colloquially, this is known as “AI slop.” It is text that seems to meet the criteria for well-phrased, well-argued prose but is hollow and vapid. A recent research project by BetterUp and Stanford Social Media Lab has pointed to “workslop,” defined as: “AI generated work content that masquerades as good work, but lacks the substance to meaningfully advance a given task,” as responsible for significant financial harm in the workplace.Footnote 41 What is lacking from such AI-generated content is human expertise.Footnote 42

This insight is even more significant in academic writing and becomes glaringly obvious when one encounters compositions of nonexperts produced almost entirely by AI, with undergraduate course assignments as the prime culprit. When asked to identify if student work is AI-generated, faculty often successfully diagnose it not through telltale errors but through what is absent – the friction of genuine thought.Footnote 43 Expertise does not manifest in cosmetic changes to academic prose but in a substantive understanding of the argumentative direction. Even when LLMs are integral to the process of writing, the human is in control. It is they who pilot the vessel toward its destination.

Expertise leaves its mark on agency and taste equally. Opponents of AI complain that it might as well “do our job for us.” But it does no such thing. Until such a time as the AI initiates novel research directions and carries them out to their successful completion, there is still a place for human expertise. A future in which AI obtains these capabilities might yet materialize. For now, we should direct our agency and taste to align the technology’s development with our values and aspirations.

Concluding Thoughts

Our discussion opened with how our current thinking about history and its attendant methodologies is set to become outdated as LLMs and other generative technologies embed themselves in academic work. Mollick’s wizard analogy, which bemoans AI’s uninterpretability, adds another layer of complexity – or, rather, opacity – to an already difficult problem.

The profession, discipline, and the thinking that binds them will change, and change profoundly. Not only is the academic career trajectory expected to morph into something unrecognizable by the time the process has run its course; the kinds of products we, as historians, value and produce will likely also take on new form. As increasing swathes of cognitive labor are outsourced to computerized processes for which we have very little “theory of mind,”Footnote 44 the issues of cognitive offloading and susceptibility to bias grow more pronounced.

This section attempted not only to present the problems with the current generation of AI but also to argue for a potential solution. In a recent interview, Richard Sutton, often termed “the father of reinforcement learning,” pointed out the limitations of the current paradigm. Drawing on John McCarthy’s definition of intelligence as “the computational part of the ability to achieve goals,” he argued that LLMs lack substantive goals and consequently have no real intelligence.Footnote 45 Translated into the vocabulary of the present section, this means LLMs, as we currently understand them, have no agency and no taste. The impulse to study a certain question and the decision to embark on a mission to answer it are the essence of agency. Without it, neither history nor any discipline can progress.

The case for taste is a bit more complicated but arises from the same perspective. Confronted with the question of whether LLMs learn, Sutton insists that next-token prediction is inconsistent with true learning. For it to occur, there needs to be a persistent change based on new information and experience. In other words, if the model predicts a token but the token provided by reality is different enough to “surprise” the model, it does not adjust the weights of its neural network. Humans and other animals, unlike LLMs, learn from experience all the time by making subtle or radical adjustments to their suppositions, as the case demands. For LLMs to do the same, they need to undergo retraining. Refinement of preferences, by which one develops taste, cannot happen unless whoever is doing the prediction changes as a result.Footnote 46

Sutton forecasts that AI will achieve superintelligence, so those finding solace in LLMs’ current shortcomings are in for a disappointment. Regardless, agency and taste are valuable anchors in the artisan/apprentice and architect/contractor heuristics for working with the current generation of AI. It is the human who controls the initiative and applies the discernment.

Whether humans are ultimately dethroned remains to be seen. It is, in any event, beyond the scope of the present Element. For now, it is imperative that historians come to terms with the technology and experience it firsthand. This must happen if they are to free themselves of magical thinking and critically comment on the path AI should take to benefit the collective. In the next section, we will examine some initial ways to bring this into practice.

2 Refining Historical Inquiry Through Dialogue

How Should We Approach These Tools?

For historians of my generation, who began training when card catalogs were still a frequent sight in libraries, the move to digital information was rocky because it was prolonged and gradual. Archives needed to undergo digitization, a time-consuming undertaking of varying quality. Articles and books are still being scanned and OCRed, a process that will doubtless take years to complete.

The promise of digitization was nevertheless transformative. That information, primarily in the form of metadata, was immediately retrievable and, better yet, from a distance, was nothing short of groundbreaking. If a historian wanted to know whether a book was available for loan or if a key term appeared in a chapter of a book, she could avail herself of the iconic tool of the digital age – the search engine – and get an answer straight away. To most of us, search engines meant Google, which trained us in the telegraphic language of key terms. “Late antique women conversion Minorca article” would not have been an out-of-place query to type into Google Scholar, producing the reassuring page of blue links to websites of approximate relevance to our query. Search engine information was dependable, deterministic, and static.

When chatbots burst onto the scene, they mimicked the user interface of Google Search: A blank page with an empty textbox. Old habits die hard, so we naturally interacted with LLMs as though they were search engines. Some still do. But LLMs are not search engines, and while they can be deployed as data retrieval machines, this is not how best to use them. This section will frame LLMs as assistants, even cognitive partners of sorts, to whom we can turn when we need help thinking through problems, when we are at an impasse, or need a fresh perspective. In such instances, the dreaded hallucination problem becomes less of an issue because we do not come to LLMs for factuality but for creativity, which is another way of saying “controlled hallucination.”Footnote 47

Interaction with LLMs has always begun with the prompt. In the early days, people talked about “prompt engineering” as if it were a proper field of knowledge, either revered as an arcane discipline or marketed as a simple process of matching endless prompt libraries (sold by enterprising “experts”) to any conceivable use case. In reality, prompt libraries are usually useless, and learning to talk to LLMs is less like mastering incantations than like developing a general sense that comes with experience. More importantly, the prompt is only a small part of the interaction, albeit one we can easily control. In this section, we will talk about prompts, context, and good communication with LLMs.

AI as Assistant

Possibly the best advice about communicating with AI is to think of it as an overzealous and slightly confused assistant. This suggestion humanizes and contextualizes the interaction, making it richer and more rewarding. Many have warned against anthropomorphism in AI because it does not need the niceties that come with human conversation. While true, conversation serves both the talker and the listener. Humanizing the interaction reframes it from a one-way stream of instructions to a gradually crystallizing context to which both interlocutors contribute.

Let us take this analogy further. The assistant is on their first day and needs guidelines about the task at hand: Who you (the user) are, what you need, and how you want it carried out. You might show them how things are done, pointing out sensitive or error-prone areas. These three components are, in fact, the basics of solid communication with AI: context, task, and structure. Basic prompting techniques usually designate these as the building blocks of a good prompt. This is one approach; another is to regard prompting as a constituent of context and to hold that the focus of any interaction with AI should be on “context engineering.”
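The three components can be sketched in a few lines of Python. This is only an illustration of the idea, not a recommended tool: the `Prompt` class, its `render` method, and the sample wording are all hypothetical, and the same result can of course be typed by hand into any chatbot.

```python
from dataclasses import dataclass


@dataclass
class Prompt:
    """Hypothetical container for the three components: context, task, structure."""

    context: str    # who we are and what the project is about
    task: str       # what we want the model to do
    structure: str  # how we want the result delivered

    def render(self) -> str:
        """Join the components into one labeled block of plain text."""
        parts = [
            ("Context", self.context),
            ("Task", self.task),
            ("Structure", self.structure),
        ]
        return "\n\n".join(f"{label}: {text.strip()}" for label, text in parts)


# Illustrative wording only; any project would supply its own.
prompt = Prompt(
    context="I am a historian of late antiquity drafting an article on conversion.",
    task="Suggest three ways to frame my research question.",
    structure="Number the suggestions and add one sentence of justification to each.",
)
print(prompt.render())
```

Keeping the components separate makes it easier to revise one of them between iterations, for instance tightening the task while leaving the context untouched.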

Viewing AI as an assistant or colleague has implications that span beyond context. First, we must recognize the iterative nature of interacting with LLMs. Formulating a verbose set of instructions to volley at the AI all but guarantees confusion. It is much better to introduce complexity and nuance gradually, as with a new coworker. Reasoning models tend to exhibit their thought process, so we are privy to the model’s “internal dialogue” or a semantically expressible reflection thereof.Footnote 48 By glancing at these conversations it has with itself as it tries to parse our request, we can get a sense of how our intentions are perceived. Iteration serves to correct the model when it has not understood us, and we should expect this in every interaction.

Second, when possible, we should aspire to express ourselves with precision. We will focus on precision when discussing tasks and structures, but for now, let us agree that we come to LLMs with a set of preferences, tastes, and opinions it has not had ample opportunity to study. The introduction of memory into ChatGPT and other platforms has allowed them to learn our habits and sensibilities, which means that more and more of what happens in our AI conversations is documented and accounted for going forward.Footnote 49 A brief look through the “memory” section of ChatGPT dispels the notion that this replaces true familiarity. Unless diligently maintained, much of it becomes obsolete, while crucial information about us is not captured at all.

Precision requires effort in phrasing.Footnote 50 When we use terms with underlying assumptions unfamiliar to the LLM, we let it interpret our intention, which it often does incorrectly. Translation is a good example of this. Large Language Models are known as capable translators,Footnote 51 but capturing the precise sentiment, intent, tone, or musicality of a text and conveying it in a new language calls for precision and, perhaps more importantly, intentionality. An instruction to “translate this paragraph into English” might catch the essence of a Latin sermon by Bishop Caesarius of Arles (d. 542), but not much else. A better prompt would be:

“Translate lines … of Caesarius of Arles, Sermon … into academic English that maintains Caesarius’ wordplay and scriptural references.

Signal every biblical allusion, citing book, chapter, and verse.

Retain Latin puns or ambiguous terms in brackets after their first appearance.

Explain your translation choice and provide alternatives and reasons for their disqualification.

Maintain Caesarius’ rhetorical rhythm through balanced clauses and assonance where feasible.”

This works only if we are interested in biblical allusions and rhetorical style. Analyzing Caesarius’s epistolary exchanges for clues of his politics would naturally yield different prompts. Regardless, our instructions should be precise and intentional. For them to be grounded in a deeper understanding of our goals, communication with LLMs should rest on context, our next topic.

Context

Context means everything the LLM needs to know to do its job well. Large Language Models are trained on vast amounts of data, but it is data that is agnostic to the ways in which we want to employ the model. Without relevant focus, the model defaults to its training data, resulting in boilerplate outcomes. In any given project, there are volumes of unspoken context taken for granted, and it is only when faced with machines that do not share our assumptions that we realize how much is assumed but not verbalized in human communication. The purpose of AI is to facilitate cognitive tasks, so starting from zero is not practical. We must focus on the information that is critical for the LLM to know if it is to become a valuable stakeholder. This makes subsequent work easier and forces us to reconsider our primitives in a helpful way.

A good first move is to start every first conversation on a topic with “context dumping” or telling the model everything that is on our mind regarding a topic. Early LLMs struggled with long prompts and numerous document uploads, but this is no longer true. Multimodality is an extra advantage, since most of us have a rough idea of the context but find it difficult or time-consuming to formulate an exhaustive first prompt. ChatGPT and most other off-the-shelf LLMs have precise speech-to-text functionality in English and other common languages, and they are improving in low-resource languages too. There are specialized transcription applications for when those prove insufficient. So, we can opt to speak rather than type.

Recording a five-minute meandering, circular rant might sound frustrating and subpar to historians who take pride in lucid argumentation. Though inelegant, such bursts of unstructured thought are ideal for giving the model context. Restructuring is something LLMs do well. What is important is that the seed of the idea is there. This advice might seem to contradict what was said earlier about precision. While efforts should be taken to articulate our intentions in an exact way, this is not always possible, certainly in the brainstorming phase. More importantly, it should not come at the expense of exploring uncharted directions, even ones that are not clearly formulated.

Another element of context is grounding. Academic projects draw their inspiration from literature. In the case of history, this takes the shape of either primary sources or scholarship, both of which can be used to ground the model in a “base truth.” For the LLM’s responses to be useful, it needs to draw not only from our intentions but also from the state of the question. So, selecting articles or primary sources that are essential for the model to keep in mind becomes a useful way of defining context. This does not mean that we need to agree with the opinions expressed in the literature we select. We might be quite critical of it and still want the model to know what was said and in what ways our rejection of it is meaningful.

Maintaining context through different threads is likewise important. Context windows are growing rapidly but are not endless, so keeping a conversation going indefinitely guarantees that some of what was said earlier is lost on the model. A related phenomenon, known as “lost-in-the-middle,” is that information is less accessible and memorable when it is buried in the middle of the context window.Footnote 52 Good housekeeping requires that we partition work on a project into different threads, not only from the model’s perspective but also from ours. Starting new conversation threads is challenging if we depend on the accumulated context to carry on into the next thread, which might make us reluctant to abandon a conversation. There are, however, ways of mitigating this.

One way is simply to ask the chatbot to craft a prompt that would instruct a new conversation thread on the content of the one we are ending. This is helpful if we are concerned about the ideas we exchanged throughout the conversation but does little to compensate for the loss of articles and sources we uploaded. Luckily, most chatbots feature a “projects” functionality that allows us to group numerous conversation threads around a particular context. Anthropic’s Claude pioneered this approach, which was then adopted into ChatGPT. Projects offer repositories for uploading documents whose content applies to all the conversations that reside within them. Whenever we open a new thread, we need not concern ourselves with reminding the model that they exist. Moreover, it is possible to further refine the purpose of the project by giving it a set of instructions that are read at the start of each new conversation.Footnote 53 Here it is advantageous to say what we are trying to accomplish, which documents can be found in the project’s knowledge base, how we want them treated, and how we would like the model to behave. If we expect the project to serve as an arena for wrestling with the model intellectually, trying out new ideas, and putting them to the test, we should express our wish for the model to push back and be critical and judgmental.
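The housekeeping described here can be sketched as follows. Everything in the block is a hypothetical illustration: the function names, the file names, the wording of the handoff request, and the four-characters-per-token ratio are assumptions made for the sake of the example, and no chatbot platform exposes these functions. The sketch only shows how one might decide that a thread has grown too long, what a handoff request could say, and how the four items a project’s instructions should cover can be laid out.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough size estimate; the characters-per-token ratio is an assumption."""
    return int(len(text) / chars_per_token)


def should_start_new_thread(thread_text: str, window_tokens: int,
                            threshold: float = 0.5) -> bool:
    """Suggest a fresh thread once the conversation fills a chosen share of the window."""
    return estimate_tokens(thread_text) >= window_tokens * threshold


# A request we might paste at the end of a thread we are about to abandon.
HANDOFF_REQUEST = (
    "Write a prompt that briefs a new conversation thread on this one: "
    "the goal of the project, the ideas we settled on, the ideas we rejected "
    "and why, and the questions still open."
)


def project_instructions(goal: str, documents: list[str],
                         treatment: str, behavior: str) -> str:
    """Lay out goal, documents, their treatment, and the desired behavior."""
    doc_lines = "\n".join(f"- {name}" for name in documents)
    return (
        f"Goal: {goal}\n"
        f"Documents in the knowledge base:\n{doc_lines}\n"
        f"How to treat them: {treatment}\n"
        f"How to behave: {behavior}"
    )


# Hypothetical file names and wording, for illustration only.
instructions = project_instructions(
    goal="Develop and test the argument of an article in progress.",
    documents=["article_1.pdf", "primary_source_excerpts.txt"],
    treatment="Treat them as the state of the question; cite them when relevant.",
    behavior="Push back on weak arguments and say plainly when you disagree.",
)
print(instructions)
```

The threshold of one half is arbitrary; the point is to choose a moment to move on deliberately, before the thread becomes unwieldy.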

A word of caution: As a result of their training, which includes a process called “reinforcement learning,” models are rewarded for pleasing behavior and so tend to be sycophantic.Footnote 54 This has been diagnosed as a serious problem, and not one easily overcome by instructing the model to adopt a more critical posture. Put differently, while models are capable of criticizing their users, when pressed, they often revert to appeasing behavior, and it is thus important not to put too much stock in the accolades dispensed by chatbots.

Context is more than just a component of prompting, although it is often reduced to one. It exists outside our interactions with LLMs, encompassing everything we think and feel about our project, which is an ever-changing web of ideas, associations, and emotions. The fluctuations of enthusiasm regarding a scholarly endeavor are familiar to academics, whether novices or veterans. This relates to doubts concerning our approach or capabilities, exposure to new scholarship, and dead ends in writing, note-taking, and problem-solving. In a very real sense, then, context lives between our mind and the environment. To “engineer” it effectively, we must be conscious of its vagaries and attempt to capture and codify it.

Adopting generative AI is a transformative decision, mainly because it encourages us to think about context management seriously. Success in leveraging AI depends less on technical proficiency, which changes with the constantly accelerating rhythms of technology. It is more a cognitive and behavioral leap that trains us to think in terms of context. Consider the gap between a lesson plan and the actual lecture to a live audience. A lesson plan is a pale facsimile of a live lecture’s richness. Since COVID-19, my university has instructed faculty to record lectures, so I have gotten into the habit of transcribing the lecture and preparing an artifact to hold onto the experience, perhaps as a document or an interactive AI resource. Now that it is possible to upload raw video to some models (like Gemini 3), more components of the lecture that cannot be expressed as text are preserved. In this sense, context becomes multidimensional.

How does this translate into historical scholarship? The settings in which we gain context on our research are numerous: reading and note-taking sessions, conversations, writing, and sudden bolts of inspiration. A short Element like this one cannot provide a remedy for transforming these modalities into an operative context, but we should remember that AI is itself multimodal. We can talk to it, record audio snippets, take photos of quotes, handwritten notes, and articles, and send links to videos for the AI to watch. Since conversation with our context is also context, talking to our notes should be regarded as a valuable artifact in the context creation process. It is then a matter of extracting insight from this chaos, which is where knowledge management products like NotebookLM come in. To some, voice conversations with LLMs are most inspiring; others might prefer keyboard interaction. Either is fine, as long as we keep talking to our knowledge base in a way that challenges the boundaries of context and its assumptions.

Another component of context is style. If we want AI to write for us, teaching it to sound like us is as easy as feeding it samples of our work. The more writing we provide, the better the AI’s ability to mimic us. This can be done directly, with a prompt to replicate our voice, or by formulating a style sheet with instructions and examples.Footnote 55 Using LLMs to generate text that we present as our own poses ethical issues, which we will discuss later. For now, it is enough to say there are benefits to teaching LLMs your voice and perspective, even if you do not intend to harness it to write. Exploring narrative options by asking the AI to generate a paragraph in your style can help visualize what committing to a certain idea might look like. Copy editing and polishing our prose is another option that requires familiarity with our tone.

We must decide if we are comfortable taking this direction. If we are not deterred by the ethical considerations, we should nevertheless hesitate because once an idea generated in our voice is imprinted on us, it is very difficult to unshackle ourselves from it, creating a form of conceptual capture. This is less of a liability when writing an email or preparing a lesson plan than in serious writing, so each of us must draw the line where the cost outweighs the benefit.

In conclusion, context is evolving and dynamic. It develops both in tandem with our thinking on a project and independently of it, as LLMs improve in discerning our intentions and habits. Curating context to benefit scholarly work and academic writing needs to become an intentional habit of using LLMs as assistants.

Task

Unlike context, which tends to be general and amorphous, a task is usually specific and focused. Tasks describe what you want the LLM to do, as opposed to how, which we will discuss in the structure section. This seems like a straightforward definition, but tasks are often tricky. The most common task LLMs perform is probably to summarize a long text, so a prompt like: “summarize this article” meets the criteria for specificity, although it would probably fall short of expectations for a good summary. In fact, “summary” hides layers of nuance: Does it need to capture the main arguments or just the takeaways? Is the order of arguments important? To what level of detail? Are the examples and illustrations critical, or can they be left out?

A summary, and indeed any task assigned to an AI, needs to be verifiable with quantifiable parameters for determining if the LLM returned a successful result. One simple technique for summarizing primary sources and academic literature is to request references. The text is available to the LLM, so there is no reason not to request citations encapsulating the essence of arguments in the summary. Take, for example, Peter Brown’s article, “The Rise and Function of the Holy Man in Late Antiquity,” published in The Journal of Roman Studies in 1971.Footnote 56 Running it through ChatGPT with the prompt: “summarize this article” produces the following result with the OpenAI model O3:

Central thesis The emergence of the “holy man” is the key motif of late-antique religious change, not a by-product; his rise reframes the period’s crisis as one of personal freedom rather than cultural decay.

Social function In rural Syria, weak village institutions created demand for an external patron; the holy man stepped in as mediator, judge, and safety-valve, stabilizing prosperity and local disputes.

Patronage model Villagers expected him to wield the same power and largesse formerly supplied by urban aristocrats, providing protection “to those in distress.”

Bridge to the divine He personalized an otherwise distant, formidable Christian God, translating cosmic justice into concrete acts − rainmaking, healing, exorcism − while remaining visibly compassionate.

Professionalization of the sacred By concentrating blessing, prophecy, and protection in one figure, the holy man displaced communal spells, amulets, and wise-women, marking a decisive shift from diffuse pagan practices.

Indicator of social transformation His ascendancy reflects new classes forging fresh social relations rather than simple decline; rural patronage acted like a “governor” preventing societal “over-heating.”

Decline and legacy As towns revived and monastic/martyr cults grew, sacred power relocated from living individuals to institutions, flattening the holy man’s trajectory yet leaving an enduring model of charismatic authority.

O3 is a strong model, and the result is not bad. Now, let us try this prompt, which complies with the suggestions just outlined:

Task-Oriented Prompt

Role You are a late-antique historian preparing teaching notes.

Input Full text of Peter Brown, “The Rise and Function of the Holy Man in Late Antiquity” (PDF attached).

Task Write a verifiable analytical summary that I can copy into a course reader.

Deliverable Markdown document, max 300 words, with these sections:

1. Central thesis (≤ 40 words)

2. Key arguments bullet list preserving the article’s order; each bullet ≤ 25 words.

3. Illustrative examples list 3 concrete cases Brown cites (place/person/event), each ≤ 20 words.

4. Historiographic contribution 1-sentence statement of novelty.

Verification rules

• Attach ≥ 4 inline citations to Brown’s page numbers (e.g., “mediator of disputes” [p. 20]).

• Every key argument must be traceable to at least one citation.

• No new interpretation, no outside sources.

5. Format

### Central Thesis

…

### Key Arguments

- … [p. x]

- …

### Illustrative Examples

1. … [p. y]

2. …

3. …

### Historiographic Contribution

… [p. z]

Success criteria – summary covers thesis + main arguments + examples; citations present; total length ≤ 300 words.

And here is the result:

Central Thesis

Brown contends that Syrian holy men became indispensable social mediators – patrons, judges, and healers – because late-Roman communities sought an impartial authority beyond civic and ecclesiastical hierarchies. [p. 81]

Key Arguments

Syrian steppe-desert allowed ascetics proximity to villages and constant crowds. [p. 83]

Leadership gap in prosperous but egalitarian villages let holy men assume patronal roles (loans, water, tax advice). [p. 85]

Public curses and exorcisms dramatized power, legitimizing their arbitration in disputes. [p. 88]

Extreme ascetic estrangement fashioned the “objective stranger,” enabling influence in urban and imperial politics. [p. 91]

Laypeople relied on them for focused cures, penance and everyday ethical decisions, professionalizing spiritual care. [p. 97]

Their rise marks a shift of divine power from institutions to individuals; later, churches and icons re-absorbed it. [p. 100]

Illustrative Examples

1. Abraham of the Lebanon brokered a tax loan and was acclaimed village προστάτης. [p. 86]

2. Mass pilgrimages to Symeon Stylites’ column at Telnesin sought rain and legal judgments. [p. 84]

3. Daniel the Stylite arbitrated between Emperor Zeno and the patriarch during Basiliscus’ revolt. [p. 92]

Historiographic Contribution

Brown recasts the holy man as a socio-economic agent, integrating asceticism into analyses of late-Roman community dynamics and state formation. [p. 80]

It is easy to see straight away that this result differs from the output of a general summarization prompt. It is not necessarily better at providing a general outline of the article, but it is undoubtedly more precise and specific, focusing on verifiable criteria like references. The prompt set out to elicit this information. Had we wanted something else, our prompt would have reflected that objective. A general instruction like “summarize this article” leaves the agency to the model, and the results, while not bad, are predictably generic.
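Because the prompt states its success criteria in countable terms, the mechanical part of the check can be scripted. The following is a minimal sketch, not part of the original workflow: the function name `check_summary`, the `[p. N]` citation pattern, and the decision to leave headings out of the word count are all assumptions made for illustration. It confirms only that citations are present; whether each page number actually supports the claim still has to be checked against Brown’s text by hand.

```python
import re

# Assumed citation format, taken from the prompt's own example: [p. 20]
CITATION = re.compile(r"\[p\.\s*\d+\]")
# Leading list markers such as "- ", "• " or "1. "
LIST_MARKER = re.compile(r"^(?:[-*•]|\d+\.)\s+")


def check_summary(markdown, max_words=300, min_citations=4):
    """Check a Markdown summary against the prompt's countable criteria."""
    section = None
    word_count = 0
    citation_count = 0
    uncited_arguments = []

    for raw in markdown.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("#"):
            # Remember which section we are in; headings are not counted as words.
            section = line.lstrip("#").strip().lower()
            continue
        text = LIST_MARKER.sub("", line)
        found = CITATION.findall(text)
        citation_count += len(found)
        # Count words without the citation markers themselves.
        word_count += len(CITATION.sub("", text).split())
        if section == "key arguments" and not found:
            uncited_arguments.append(text)

    return {
        "word_count": word_count,
        "within_limit": word_count <= max_words,
        "citation_count": citation_count,
        "enough_citations": citation_count >= min_citations,
        "uncited_arguments": uncited_arguments,
    }
```

Pasting the model’s Markdown response into `check_summary` returns a small report; any entry in `uncited_arguments` marks a key argument that breaks the rule that every argument be traceable to a citation.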

Tasks can be significantly more complex than summarization, encompassing various outcomes, some of which do not require a final answer. A different kind of task could ask the model to brainstorm an idea or “pressure test” an argument, a practice known as “red teaming.”Footnote 57 Here, too, specificity and precision are beneficial, but we should expect the answer to manifest cumulatively, as an ongoing dialog, not a one-time response. What would such a conversation look like? When constructing a theory based on a primary source or sources, it is helpful to ask the model whether its reading supports the theory. Many historical sources, especially famous ones, are likely in the model’s training data. Nevertheless, providing the source as grounding should be considered a best practice, intended to provide references and minimize hallucinations.

We might ask the LLM to criticize our idea, role-playing a scholar with a different opinion. Context is key, so we should upload an article we are arguing against and task the model with simulating the opposition from its perspective. To counter the ensuing criticism, we might solicit suggestions on its weak points. Whether we are convinced or not is less important than understanding our argument’s landscape.

Another task that is quickly becoming a mainstay of research-oriented interactions with AI is the literature review. The advent of Deep Research functionality and more general agentic capabilities makes this an especially compelling use case.Footnote 58 Some clarification of terms is in order, especially since agents and their permutations have become ubiquitous buzzwords in an already hype-susceptible discourse. First, much like the vaunted and elusive AGI, AI agents lack an agreed-upon definition. For this discussion, agents are LLM-based tools capable of executing extended tasks on the user’s behalf.

Deep Research is a narrow agent, while ChatGPT Agent is a more general agent with basic functionality. When researching literature on a specific field, ChatGPT Agent functions as a hybrid of Deep Research and a browsing agent. Google, Anthropic, Perplexity, xAI, and other AI labs have their own Deep Research equivalents, and as of the writing of this section, Google’s is probably the most comprehensive. Its downside is that it has difficulty distinguishing academically rigorous items from blogs and op-eds. ChatGPT Agent tends to be less susceptible to this problem. When researching technology, where much of the content comes from informal channels like podcasts, X posts, and Reddit, this is less of a concern. For historians, whose sole source of quotable professional knowledge is academic literature, this is not ideal. As contextual filters improve and AI labs strike deals with publishers – a process already underwayFootnote 59 – this problem will likely diminish. The impact on the authors whose work trains the models is a separate question galvanizing fierce debate.Footnote 60 For now, let us focus on our task.

First, we should define our focus. We are interested in monasticism as a tool for organizing community in the fifth and sixth centuries, as Roman imperial rule weakened and disappeared. This should be evident in agrarian redistribution, the repurposing of villas, social stratification, and the innovative solutions that monasteries, as local rural patrons, provided in the Latin West. What would a well-structured Deep Research prompt look like for literature on this question and comparable ones? Remember, we are interested only in professional academic literature, not blogs or popular media.

In this case, the workflow included pasting the paragraph above into Google Gemini 2.5 Pro, which returned this comprehensive Deep Research prompt:

Comprehensive Research Prompt

Your Task: Conduct a comprehensive academic literature review on the role of early Christian monasticism in reshaping social and economic structures in the Latin West during the fifth and sixth centuries.

Core Objective: Analyze how monastic communities functioned as new centers of local power and organization as Roman imperial authority waned. Your analysis should focus specifically on agrarian redistribution, the repurposing of Roman villas into monastic centers, the evolution of social stratification, and the monastery’s emerging role as a rural patron.

Analytical Requirements:

1. Identify and summarize key scholarly debates: Detail the central arguments concerning monastic economic impact. For example, did they represent a continuity of the Roman villa economy or a radical disruption? Were they primarily conservative institutions or engines of economic innovation?

2. Synthesize methodological approaches: Compare and contrast the evidence and methods used by scholars. Distinguish between conclusions drawn from textual sources (e.g., hagiographies like Sulpicius Severus’s Life of Martin, monastic rules, and letters) and those based on archaeological evidence (e.g., excavation of villas, landscape archaeology, pollen analysis).

3. Highlight key case studies: Isolate and discuss specific, well-documented examples from the literature, such as the communities at Lérins, Marmoutier, Vivarium, or early Benedictine foundations, focusing on how they exemplify the broader trends of economic adaptation and social influence.

4. Map the intellectual landscape: Identify the seminal authors and foundational scholarly works that have shaped this field of study.

Search Scope and Constraints:

Primary keywords: Early monasticism, Late Antiquity, post-Roman West, agrarian economy, villa repurposing, monastic patronage, social stratification, land tenure, community formation, coenobitism.

Geographical focus: The Latin West, with particular attention to Gaul, Hispania, Italy, and Sub-Roman Britain.

Temporal focus: The period from 400 CE to 600 CE, though sources covering the late fourth and early seventh centuries are acceptable to establish context and trace developments.

Source types: Restrict all results to peer-reviewed academic literature only. This includes scholarly monographs from university presses, articles in established academic journals (such as Journal of Late Antiquity, Early Medieval Europe, Speculum, Journal of Roman Studies), and chapters from peer-reviewed edited volumes.

Explicit exclusions: Do not include results from nonacademic blogs, popular media, general encyclopedias, or unpublished dissertations.

Present the output as a structured report addressing each of the analytical requirements outlined here.

Asking LLMs to prompt on your behalf is often a good idea. Anthropic’s Claude even has a prompt optimizer, where you can drop your text and have it rephrased to improve results.Footnote 61 While this is convenient, we can achieve similar results by asking the model to formulate a detailed prompt for our task, as we did here. The above prompt was given to Gemini’s Deep Research and ChatGPT Agent. Gemini took about five minutes to complete its report, citing sixty-eight sources and reading several more. It produced a comprehensive review of the phenomenon, which can be usefully integrated into our project context. ChatGPT Agent worked for thirty-four minutes and returned a brief discussion, a list of important academic works, and several primary sources on related topics. Neither replaces serious research, but both can function as a first step in identifying sources and literature that can later be expanded with traditional or AI-assisted methods.
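For readers who repeat this step across several projects, the request to the model can be kept as a small template. What follows is only a sketch under stated assumptions: the function `build_meta_prompt` and the wording of the request are hypothetical and are not the text used in the workflow described here, which consisted simply of pasting the focus paragraph into Gemini. The headings it asks for mirror those of the prompt Gemini returned.

```python
def build_meta_prompt(research_focus, source_rule):
    """Return a request asking a model to draft a Deep Research prompt.

    Both arguments are free text supplied by the researcher; the request
    wording is an illustrative assumption, not a tested formula.
    """
    return "\n\n".join([
        "Write a detailed Deep Research prompt for the literature review "
        "described below.",
        f"Research focus: {research_focus.strip()}",
        f"Source rule: {source_rule.strip()}",
        "The prompt should state the task, the core objective, the analytical "
        "requirements, the search scope and constraints, and the output format.",
    ])
```

The returned string is pasted into a chat model as an ordinary message; the prompt that comes back should be read and corrected before it is handed to a Deep Research tool.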

Summarization and literature review are two use cases among many that AI tools offer historians. We will encounter other examples below. To optimize AI’s execution of tasks, we now turn to another element of effective communication with LLMs – structure.

Structure

While context tells the model who we are and what it needs to know before engaging with us, and task specifies our needs, structure contains instructions on how we want it to answer. It is best to think of these components not as distinct parts of a prompt but as information to articulate before we send the LLM off to work. We can choose to introduce this in one lengthy prompt, but it is preferable to spread it across a conversation.

Often, we do not want the model to answer straight away. Instead, we would like it to ask guiding questions until we are satisfied it understands our request. This is where context, task, and structure overlap. However, if we have a definite end result in mind, we should control for structure, especially if we must follow a given format, like a research proposal.

For complicated tasks, response structure becomes especially important. Some models naturally opt for specific ways of presenting information. OpenAI’s O3 was known for its penchant for tables.Footnote 62 This may suit our needs; if not, we should let the model know how to structure its response. We can tell it directly:

“When responding, structure your answer as follows: a 200-word introduction, followed by a 700-word historical context discussion. Then, a 500-word literature review concluding with the research question in italics. Transition to the methodology and timeline (500 words) and finish with a 150-word conclusion.”
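The section budgets in this instruction add up to 2,050 words (200 + 700 + 500 + 500 + 150), a figure worth checking against any length limit a proposal format imposes. As a minimal sketch, assuming we keep the budgets in a list and generate the instruction from it, the arithmetic and the wording stay in step; the names `SECTIONS` and `structure_instruction` and the numbered layout are illustrative assumptions, not a required form.

```python
# Section names and word budgets taken from the instruction quoted above.
SECTIONS = [
    ("introduction", 200),
    ("historical context discussion", 700),
    ("literature review concluding with the research question in italics", 500),
    ("methodology and timeline", 500),
    ("conclusion", 150),
]


def structure_instruction(sections):
    """Build a structure instruction and state the total word budget."""
    parts = [
        f"{number}. {name} ({words} words)"
        for number, (name, words) in enumerate(sections, start=1)
    ]
    total = sum(words for _, words in sections)
    header = "When responding, structure your answer as follows:"
    footer = f"Total length: about {total} words."
    return "\n".join([header, *parts, footer])
```

Changing one budget in `SECTIONS` updates both the section line and the stated total, which avoids an instruction whose parts no longer sum to the length we asked for.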