1. Introduction

Motivation and context. We address the following fundamental question: given information on individual policyholder characteristics, how can we calculate insurance prices that do not discriminate with respect to protected characteristics, such as gender? This is a pertinent question in the context of anti-discrimination legislation; for instance, current EU law requires gender neutral insurance pricing, see European Council (2004). This question has become even more pronounced with the emergence of big data and associated developments in complex algorithmic models, since such models may be able to infer discriminatory characteristics from other policyholder features. For an overview on antidiscrimination laws, we refer to Avraham et al. (Reference Avraham, Logue and Schwarcz2014) and Prince and Schwarcz (Reference Prince and Schwarcz2019).

We aim at developing pricing formulas that are devoid of discrimination, while the insurer is still able to differentiate between policyholders with respect to nonprotected characteristics. Here, by “discrimination” we mean the provision of insurance prices that differentiate between policyholders on the basis of (legally) prohibited characteristics. For this, we assume that an insurer has access to policyholders’ data that can be split into discriminatory (e.g., gender, ethnicity) and nondiscriminatory characteristics (e.g., age, smoking habits). When we refer to discriminatory characteristics, we are relying on legal and regulatory requirements, such as those in the EU, which prohibit insurers from using certain characteristics within their pricing framework. In such a legal context, the use of protected characteristics amounts to illegal discrimination, thus creating an imperative for insurance pricing models to avoid using them. For example, within the EU, the council directive (European Council, 2004) provides definitions of direct and indirect discrimination, motivating our technical arguments.

Direct discrimination can be easily understood and identified as the use of prohibited characteristics as rating factors. Indirect discrimination presents more of a challenge, because it can be thought of as the confluence of two distinct effects: (a) the implicit ability to infer protected characteristics from other (legitimately used) policyholder features and (b) a systematic disadvantage resulting for a group that is protected by a nondiscrimination provision (Tobler, Reference Tobler2008). These two concepts are interrelated but distinct, the former, also referred to as proxy discrimination, arises from correlation between protected and unprotected characteristics; the latter, disparate impact, from correlations between protected characteristics and actual insurance prices – we refer to Frees and Huang (Reference Frees and Huang2021) for a detailed discussion from an actuarial perspective. The pricing adjustment we propose explicitly addresses (a) however such an adjustment may be legally unnecessary if (b) is not additionally present. Both these effects disappear when discriminatory characteristics are statistically independent of nondiscriminatory ones, though this observation does not imply that (a) and (b) are mathematically or conceptually equivalent.

The development of ideas in this paper is drawn from an actuarial rather than a legal perspective. We do not make any claim about their correspondence to legal definitions of discrimination in particular jurisdictions and do not argue that the pricing adjustment as proposed in this paper should be applied in all circumstances. Our focus is to provide an explicit mathematical method to remove indirect discrimination – if it happens to exist – from insurance pricing models. We begin our arguments on the assumption that certain characteristics have been prohibited and consider how pricing models can be adapted correspondingly. We say that

-

• A pricing model avoids direct discrimination, if none of the discriminatory features (characteristics) is used as a rating factor.

-

• A pricing model avoids indirect discrimination, if it avoids direct discrimination and, furthermore, the nondiscriminatory features are used in a way that does not allow implicit inference of discriminatory features from them.

To help clarify these concepts, we consider examples of directly and (potentially) indirectly discriminatory rating factors. In many jurisdictions, it is illegal to include the race/ethnicity of a policyholder within a pricing model, meaning that direct discrimination on the basis of race is illegal, even if race was (hypothetically) a good predictor of propensity to claim. There are other rating factors that are highly correlated with race, but which do not have much direct impact on the propensity to claim. For example, a policyholder’s native language is highly correlated with race in parts of the world where certain languages are spoken only by members of a particular race, and including this rating factor within a pricing model will do little but act as a proxy for race. Hence, including this rating factor may lead to what we term indirect discrimination in this work.

Then, there are rating factors that may be both directly predictive of insurance claims as well as act as proxies for discriminatory characteristics. For example, using the presence of diabetes as a rating factor will be directly predictive of health insurance costs, but since certain racial or ethnic groups may be predisposed to develop diabetes, including diabetes as a rating factor may lead to this rating factor acting as a proxy for race, potentially leading to indirect discrimination. Our aim in this paper is to develop a method that is capable of removing both direct and indirect discrimination from pricing models, where these may exist, while maintaining the predictive nature of variables that do not directly discriminate against protected characteristics. Thus, we emphasize that by avoiding indirect discrimination we do not mean to suggest removing all variables that may allow implicit inference of discriminatory features from the model (e.g., diabetes), but instead to ensure that these variables, while still remaining within the predictive model, do not act as proxies for discriminatory characteristics.

Finally, we stress that when we talk about “inferring discriminatory features,” we do not mean that an insurer necessarily has access to such data. Rather, such inference, as we will show in the sequel, takes place implicitly, via correlation between discriminatory and other features.

We illustrate indirect discrimination in the following example and will come back to this example in Section 2.2, below.

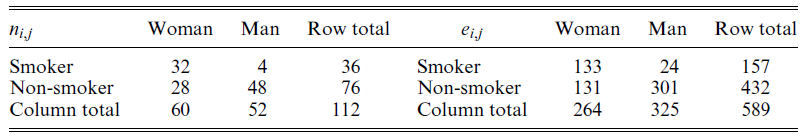

Example 1. Assume that we have observed a health insurance product and obtained the following claim counts

![]() $(n_{i,j})_{i,j = 0,1}$

and claim exposures

$(n_{i,j})_{i,j = 0,1}$

and claim exposures

![]() $(e_{i,j})_{i,j = 0,1}$

:

$(e_{i,j})_{i,j = 0,1}$

:

where

![]() $i = 1$

corresponds to “smoker” and

$i = 1$

corresponds to “smoker” and

![]() $j = 1$

corresponds to “woman”. Based on the above contingency tables, we estimate the claim frequencies

$j = 1$

corresponds to “woman”. Based on the above contingency tables, we estimate the claim frequencies

![]() $\lambda_{i,j}$

by the empirical frequency

$\lambda_{i,j}$

by the empirical frequency

![]() $\widehat\lambda_{i,j} = n_{i,j}/e_{i,j}$

. Assume now that gender is considered a discriminatory characteristic. In order to avoid direct discrimination, its explicit influence on the calculated insurance price needs to be removed. The standard way of doing this is to consider the aggregated estimators (row sums)

$\widehat\lambda_{i,j} = n_{i,j}/e_{i,j}$

. Assume now that gender is considered a discriminatory characteristic. In order to avoid direct discrimination, its explicit influence on the calculated insurance price needs to be removed. The standard way of doing this is to consider the aggregated estimators (row sums)

![]() $\widehat\lambda_{i, \bullet} = n_{i,\bullet} / e_{i,\bullet}=(n_{i,0}+n_{i,1})/(e_{i,0}+e_{i,1})$

. This approach produces, for example, for smokers,

$\widehat\lambda_{i, \bullet} = n_{i,\bullet} / e_{i,\bullet}=(n_{i,0}+n_{i,1})/(e_{i,0}+e_{i,1})$

. This approach produces, for example, for smokers,

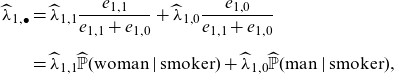

The estimate

![]() $\widehat\lambda_{1,\bullet}$

(and a premium for smokers based on it), thus, can be calculated by completely ignoring policyholders’ gender information. But one can note that an alternative representation of

$\widehat\lambda_{1,\bullet}$

(and a premium for smokers based on it), thus, can be calculated by completely ignoring policyholders’ gender information. But one can note that an alternative representation of

![]() $\widehat\lambda_{1,\bullet}$

is

$\widehat\lambda_{1,\bullet}$

is

\begin{align*}\widehat\lambda_{1,\bullet} &=\widehat \lambda_{1, 1}\frac{e_{1,1}}{e_{1,1}+e_{1,0}} + \widehat \lambda_{1, 0}\frac{e_{1,0}}{e_{1,1}+e_{1,0}}\\[6pt]

&= \widehat \lambda_{1, 1}\widehat{\mathbb{P}}(\mathrm{woman} \mid \mathrm{smoker}) + \widehat \lambda_{1, 0}\widehat{\mathbb{P}}(\mathrm{man} \mid \mathrm{smoker}),\end{align*}

\begin{align*}\widehat\lambda_{1,\bullet} &=\widehat \lambda_{1, 1}\frac{e_{1,1}}{e_{1,1}+e_{1,0}} + \widehat \lambda_{1, 0}\frac{e_{1,0}}{e_{1,1}+e_{1,0}}\\[6pt]

&= \widehat \lambda_{1, 1}\widehat{\mathbb{P}}(\mathrm{woman} \mid \mathrm{smoker}) + \widehat \lambda_{1, 0}\widehat{\mathbb{P}}(\mathrm{man} \mid \mathrm{smoker}),\end{align*}

where

![]() $\widehat{\mathbb{P}}$

refers to the empirical distribution obtained from the data. Hence, the estimate

$\widehat{\mathbb{P}}$

refers to the empirical distribution obtained from the data. Hence, the estimate

![]() $\widehat\lambda_{1,\bullet}$

not only contains information about the influence of smoking on producing a claim, but via the conditional probabilities

$\widehat\lambda_{1,\bullet}$

not only contains information about the influence of smoking on producing a claim, but via the conditional probabilities

![]() $\widehat{\mathbb{P}}(\mathrm{gender} \mid \mathrm{smoking\ habits})$

also about the propensity of smokers to be female or male. In our case, because smoking habits substantially differ between genders (a smoker is a woman with probability

$\widehat{\mathbb{P}}(\mathrm{gender} \mid \mathrm{smoking\ habits})$

also about the propensity of smokers to be female or male. In our case, because smoking habits substantially differ between genders (a smoker is a woman with probability

![]() $133/157=85\%$

, whereas a non-smoker is a woman with probability

$133/157=85\%$

, whereas a non-smoker is a woman with probability

![]() $131/432=30\%$

). It is indeed the case that the above approach exploits the correlation between gender and smoking habits, which may give rise to indirect discrimination against females in the case that claims frequencies for females are higher than males, as they indeed are here; we come back to this in Example 8, below.

$131/432=30\%$

). It is indeed the case that the above approach exploits the correlation between gender and smoking habits, which may give rise to indirect discrimination against females in the case that claims frequencies for females are higher than males, as they indeed are here; we come back to this in Example 8, below.

The numbers used in Example 1 are purely illustrative, though we note that the proportion of female smokers has been greater compared to the male population in for example, Sweden during the 2000s. A further discussion of implications of alternative statistical assumptions behind this example is given in Section 2.1, Remark 9. The example illustrates that avoiding direct discrimination does not necessarily entail also avoiding indirect discrimination. Consequently, just ignoring discriminatory features in the calculation of insurance prices does not generally yield discrimination-free prices. Hence, unawareness (or willful ignorance) of discriminatory features is not a solution to the problem of calculating discrimination-free insurance prices.

Finally, we are not arguing in this paper whether certain characteristics ought to be prohibited from a legal or ethical perspective. Indeed, there are varying views on this around the world; for example, gender is a permitted characteristic in insurance pricing in many jurisdictions outside of the EU. Also, there are circumstances, where apparently discriminatory characteristics may be used for pricing, if there is a “legitimate aim”; in the context of EU law see for example, Article 2(b) in European Council (2004). Furthermore, we do not aim to address insurance market and economic implications that may result from legally prohibiting the use of certain characteristics in insurance pricing. An example of such issues is potential “reverse discrimination,” meaning that pricing without using all policyholder characteristics may imply (unwanted) cross-subsidies between groups of policyholders, with this in turn leading to adverse selection and other undesirable side effects. Moreover, excluding some rating factors from statistical models typically leads to a decrease of predictive performance.

Our contributions. First, we embed the ideas of direct and indirect discrimination into a mathematical context. The ideas and principles we develop are relevant to all situations where predictors are calculated on the basis of conditional expected values and, hence, they are applicable in all fields where discrimination is an important issue, for example, also in customer credit rating. Second, we give a rigorous probabilistic account of discrimination-free prices and their existence. We propose a simple pricing formula that avoids both potential direct and indirect discrimination. This adjustment will always remove the potential for indirect discrimination from prices, regardless of whether such indirect discrimination is present or not. Furthermore, while the formula only uses nondiscriminatory features as rating factors, it introduces an adjustment, which requires knowledge of policyholders’ discriminatory features. Third, we justify discrimination-free prices using tools from causal inference. Fourth, we identify bias in aggregate portfolio prices as an unintended consequence of discrimination-free prices. While prices that can be written as conditional expectations under the physical probability measure naturally lead to an unbiased pricing system on a portfolio level, discrimination-free prices do not generally have this property. Therefore, we propose methods for bias correction. The bias corrections rely on the overall portfolio risk being assessed using all available characteristics, since it is only the step of allocating the overall price to individual contracts that potential discrimination can occur. Fifth, we illustrate how discrimination-free prices can be calculated in practice, using either machine learning algorithms or standard statistical methods like generalized linear models (GLMs).

Literature review. Although an issue of key relevance for insurance pricing, until recently relatively little attention has been paid to the issue of discrimination-free pricing within the actuarial literature. In a discussion of the implications of EU gender legislation, Guillén (Reference Guillén2012) suggests that covariates highly correlated with gender can be used as proxies by insurance companies, which from our perspective may result in indirect discrimination. Focusing on the case of mortality pricing, Chen and Vigna (Reference Chen and Vigna2017) criticize the industry practice of deriving unisex life tables by mixing the life tables for each gender on the grounds that this does not respect the principles of actuarial fairness, which is to say that the total unisex premiums charged for the portfolio are not equal to the total premiums charged using gender-specific life tables. They provide alternative approaches without this shortcoming; note that our proposed discrimination-free prices reproduce the pricing formulas of Chen and Vigna (Reference Chen and Vigna2017). The implications of unisex pricing on insurer capital requirements in the context of Solvency II are examined in Chen et al. (Reference Chen, Guillén and Vigna2018), and an ALM approach to unisex pricing is taken in Burszas et al. (Reference Bruszas, Kaschützke, Maurer and Siegelin2018), where also the concept of “gender mix risk” is discussed. Market implications of unisex tariffs are discussed in Sass and Seifried (Reference Sass and Seifried2014), see also De Jong and Ferris (Reference De Jong and Ferris2006) for a discussion of adverse selection stemming from restrictions on risk classification. A recent wide-ranging discussion of several issues connected with the topic of discrimination in insurance is found in Frees and Huang (Reference Frees and Huang2021), who also address the issue of indirect discrimination.

The issue of indirect discrimination occurring by ignoring discriminatory covariates has been discussed in Pope and Sydnor (Reference Pope and Sydnor2011) and Kusner et al. (Reference Kusner, Loftus, Russell and Silva2017). The procedure for discrimination-free pricing provided in Pope and Sydnor (Reference Pope and Sydnor2011) is essentially the same as in our proposal; this pricing rule is applied in the context of auto insurance pricing by Aseervatham et al. (Reference Aseervatham, Lex and Spindler2016). However, these authors do not provide a probabilistic justification for the prices used nor do they address the critical issue of a potential bias at portfolio level (and associated corrections).

We are aware of relatively few examples of causal inference applied within an insurance context. For renewals of insurance policies, some insurers seek to estimate policyholder demand elasticity by randomly varying renewal prices for a subset of policyholders (i.e., a form of randomized controlled trial is conducted) and estimating the impact on the probability of renewal. Once the demand elasticities have been estimated, a profit maximizing pricing policy can be established in a practice referred to as price optimization, see for example, Krikler et al. (Reference Krikler, Dolberger and Eckel2004). Within that context, Guelman and Guillén (Reference Guelman and Guillén2014) apply methods from causal inference to estimate demand elasticity functions from observational data collected by an insurer.

We emphasize that the issues discussed in this paper apply to many other industries; we refer to, for example, Fuster et al. (Reference Fuster, Goldsmith-Pinkham, Ramadorai and Walther2018) where a credit rating application is considered. Their study focuses on evaluating the differential impact of prediction technologies on ethnic groups, rather than on a mathematical definition of discrimination.

Organization of the paper. In Section 2, we discuss different kinds of insurance prices, comprising the best-estimate price, which considers all available information, the unawareness price, which avoids direct discrimination, and the discrimination-free price, which avoids both direct and indirect discrimination, whenever the latter exists. In particular, Subsection 2.3 gives mathematical descriptions of direct and indirect discrimination, which are based on a change of probability measure. Special cases of discrimination-free prices can be interpreted in terms of causal inference; this is discussed in Section 3. The bias that discrimination-free prices can induce at portfolio level is discussed in Section 4, along with proposals for bias mitigation. In Section 5, we describe the calculation of discrimination-free prices based on models estimated from data. This is explored in more detail in Section 6, where a numerical example is given, based on a synthetic health insurance portfolio. Concluding remarks are collected in Section 7.

2. Discrimination-free pricing

2.1. Definition of discrimination-free prices

We denote by

![]() $(\Omega, {\mathcal{F}}, {\mathbb{P}})$

the underlying probability space with physical probability measure

$(\Omega, {\mathcal{F}}, {\mathbb{P}})$

the underlying probability space with physical probability measure

![]() ${\mathbb{P}}$

. For a given portfolio of insurance policies, let

${\mathbb{P}}$

. For a given portfolio of insurance policies, let

![]() $\textbf{D}$

denote the vector of discriminatory covariates (characteristics, features, explanatory variables) of a policyholder, and let

$\textbf{D}$

denote the vector of discriminatory covariates (characteristics, features, explanatory variables) of a policyholder, and let

![]() $\textbf{X}$

denote the vector of nondiscriminatory covariates. This split into

$\textbf{X}$

denote the vector of nondiscriminatory covariates. This split into

![]() $\textbf{X}$

and

$\textbf{X}$

and

![]() $\textbf{D}$

is exogenous, provided by, for example, a legislator. Further, we assume that

$\textbf{D}$

is exogenous, provided by, for example, a legislator. Further, we assume that

![]() $\textbf{X}$

and

$\textbf{X}$

and

![]() $\textbf{D}$

are random vectors on

$\textbf{D}$

are random vectors on

![]() $(\Omega, {\mathcal{F}}, {\mathbb{P}})$

; the randomness of these covariate vectors represents variations between policyholders. A realization of

$(\Omega, {\mathcal{F}}, {\mathbb{P}})$

; the randomness of these covariate vectors represents variations between policyholders. A realization of

![]() $(\textbf{X},\textbf{D})$

corresponds to choosing an insurance policy at random from the portfolio; a policyholder profile with specific characteristics is obtained by conditioning on

$(\textbf{X},\textbf{D})$

corresponds to choosing an insurance policy at random from the portfolio; a policyholder profile with specific characteristics is obtained by conditioning on

![]() $\textbf{X}=\textbf{x}, \textbf{D}=\textbf{d}$

. For simplicity, we denote the marginal and conditional distributions of covariates under

$\textbf{X}=\textbf{x}, \textbf{D}=\textbf{d}$

. For simplicity, we denote the marginal and conditional distributions of covariates under

![]() ${\mathbb{P}}$

by

${\mathbb{P}}$

by

![]() $\textbf{X}\sim {\mathbb{P}}(\textbf{x}), \textbf{D}\sim {\mathbb{P}}(\textbf{d})$

and

$\textbf{X}\sim {\mathbb{P}}(\textbf{x}), \textbf{D}\sim {\mathbb{P}}(\textbf{d})$

and

![]() $(\textbf{D}\mid\textbf{X}=\textbf{x})\sim {\mathbb{P}}(\textbf{d}\mid\textbf{x})$

, respectively, thus, we use the same letter

$(\textbf{D}\mid\textbf{X}=\textbf{x})\sim {\mathbb{P}}(\textbf{d}\mid\textbf{x})$

, respectively, thus, we use the same letter

![]() ${\mathbb{P}}$

for the (conditional) distribution functions of

${\mathbb{P}}$

for the (conditional) distribution functions of

![]() $\textbf{X}$

and

$\textbf{X}$

and

![]() $\textbf{D}$

.

$\textbf{D}$

.

A policyholder claim is denoted by the random variable Y. The claim Y typically depends on (but is not fully determined by) both the discriminatory covariates

![]() $\textbf{D}$

and the nondiscriminatory ones

$\textbf{D}$

and the nondiscriminatory ones

![]() $\textbf{X}$

. Our aim is to price such a claim Y, with the resulting price being free from direct as well as indirect discrimination (where this exists), according to the arguments of Section 1. A technical description of these concepts will be given in Section 2.3, below.

$\textbf{X}$

. Our aim is to price such a claim Y, with the resulting price being free from direct as well as indirect discrimination (where this exists), according to the arguments of Section 1. A technical description of these concepts will be given in Section 2.3, below.

In the sequel, it will be useful to assume

![]() $Y,\textbf{X}, \textbf{D} \in {\mathcal{L}}^2(\Omega, {\mathcal{F}}, {\mathbb{P}})$

. This assumption is not crucial for defining discrimination-free prices, but it will allow us to give more intuitive interpretations in terms of orthogonal projections and minimal distances. Our notion of price will be based on conditional expectations of Y, when conditioning on different subsets of covariates. We first introduce a number of different prices that are important for the subsequent discussions and derivations.

$Y,\textbf{X}, \textbf{D} \in {\mathcal{L}}^2(\Omega, {\mathcal{F}}, {\mathbb{P}})$

. This assumption is not crucial for defining discrimination-free prices, but it will allow us to give more intuitive interpretations in terms of orthogonal projections and minimal distances. Our notion of price will be based on conditional expectations of Y, when conditioning on different subsets of covariates. We first introduce a number of different prices that are important for the subsequent discussions and derivations.

Definition 2 (best-estimate price). The best-estimate price for Y w.r.t.

![]() $(\textbf{X}, \textbf{D})$

is defined by

$(\textbf{X}, \textbf{D})$

is defined by

Remark 3.

-

(a) We call the price

$\mu(\textbf{X}, \textbf{D})$

“best-estimate” because it minimizes the

$\mu(\textbf{X}, \textbf{D})$

“best-estimate” because it minimizes the

${\mathcal{L}}^2$

-distance of all

${\mathcal{L}}^2$

-distance of all

$(\textbf{X}, \textbf{D})$

-measurable prices to Y, that is,

$(\textbf{X}, \textbf{D})$

-measurable prices to Y, that is,

$\mu(\textbf{X}, \textbf{D})$

is the orthogonal projection of Y onto the sub-space generated by

$\mu(\textbf{X}, \textbf{D})$

is the orthogonal projection of Y onto the sub-space generated by

$(\textbf{X}, \textbf{D})$

.

$(\textbf{X}, \textbf{D})$

. -

(b) In general, the best-estimate price is not discrimination-free, unless we are in the special case of

$\mu(\textbf{X}, \textbf{D})=\mu(\textbf{X})$

, implied by

$\mu(\textbf{X}, \textbf{D})=\mu(\textbf{X})$

, implied by

$\textbf{X}$

being independent of

$\textbf{X}$

being independent of

$\textbf{D}$

.

$\textbf{D}$

. -

(c) The best-estimate price is unbiased w.r.t. Y, that is,

we use the tower property of conditional expectations, see Williams [26, Sec. 9.7]. Unbiasedness is important because it indicates that best-estimate prices achieve on average the correct price level for the portfolio. \begin{equation*} \mu \,:\!=\, {\mathbb{E}}[Y] = {\mathbb{E}} \left[\mu(\textbf{X}, \textbf{D}) \right]; \end{equation*}

\begin{equation*} \mu \,:\!=\, {\mathbb{E}}[Y] = {\mathbb{E}} \left[\mu(\textbf{X}, \textbf{D}) \right]; \end{equation*}

An initial attempt at achieving discrimination-free prices arises through simply ignoring discriminatory covariates

![]() $\textbf{D}$

.

$\textbf{D}$

.

Definition 4 (unawareness price). The unawareness price for Y w.r.t.

![]() $\textbf{X}$

is defined by

$\textbf{X}$

is defined by

Remark 5.

-

(a) As the price

$\mu(\textbf{X})$

does not depend explicitly on

$\mu(\textbf{X})$

does not depend explicitly on

$\textbf{D}$

, it avoids direct discrimination. However, the unawareness price may produce indirect discrimination, as was discussed in Section 1; see also Kusner et al. (Reference Kusner, Loftus, Russell and Silva2017). Specifically, we can write the unawareness price as

(2.2)The potential for discrimination arises because the conditional probability

$\textbf{D}$

, it avoids direct discrimination. However, the unawareness price may produce indirect discrimination, as was discussed in Section 1; see also Kusner et al. (Reference Kusner, Loftus, Russell and Silva2017). Specifically, we can write the unawareness price as

(2.2)The potential for discrimination arises because the conditional probability \begin{equation} \mu(\textbf{X}) =\int_\textbf{d} \mu(\textbf{X}, \textbf{d}) \ \, {\rm d}{\mathbb{P}}(\textbf{d} \mid \textbf{X}). \end{equation}

\begin{equation} \mu(\textbf{X}) =\int_\textbf{d} \mu(\textbf{X}, \textbf{d}) \ \, {\rm d}{\mathbb{P}}(\textbf{d} \mid \textbf{X}). \end{equation}

${\mathbb{P}}(\textbf{d} \mid \textbf{X})$

enables inference of discriminatory covariates

${\mathbb{P}}(\textbf{d} \mid \textbf{X})$

enables inference of discriminatory covariates

$\textbf{D}$

from nondiscriminatory ones

$\textbf{D}$

from nondiscriminatory ones

$\textbf{X}$

. We stress that discrimination here is indirect: while

$\textbf{X}$

. We stress that discrimination here is indirect: while

$\textbf{D}$

is not directly used in the pricing formula, it is potentially “proxied” by

$\textbf{D}$

is not directly used in the pricing formula, it is potentially “proxied” by

$\textbf{X}$

, if statistical dependence between

$\textbf{X}$

, if statistical dependence between

$\textbf{D}$

and

$\textbf{D}$

and

$\textbf{X}$

exists. This is precisely the situation discussed in Section 1. Indirect discrimination is avoided in the special case when

$\textbf{X}$

exists. This is precisely the situation discussed in Section 1. Indirect discrimination is avoided in the special case when

$\textbf{D}$

and

$\textbf{D}$

and

$\textbf{X}$

are independent, since then it holds that

$\textbf{X}$

are independent, since then it holds that

${\rm d}{\mathbb{P}}(\textbf{d} \mid \textbf{X}) = {\rm d} {\mathbb{P}}(\textbf{d})$

.

${\rm d}{\mathbb{P}}(\textbf{d} \mid \textbf{X}) = {\rm d} {\mathbb{P}}(\textbf{d})$

.

-

(b) The price

$\mu(\textbf{X})$

minimizes the

$\mu(\textbf{X})$

minimizes the

${\mathcal{L}}^2$

-distance to Y based solely on

${\mathcal{L}}^2$

-distance to Y based solely on

$\textbf{X}$

, that is, it is the best price w.r.t. information

$\textbf{X}$

, that is, it is the best price w.r.t. information

$\textbf{X}$

. At the same time, the price

$\textbf{X}$

. At the same time, the price

$\mu(\textbf{X})$

also minimizes the

$\mu(\textbf{X})$

also minimizes the

${\mathcal{L}}^2$

-distance to

${\mathcal{L}}^2$

-distance to

$\mu(\textbf{X}, \textbf{D})$

, by a simple application of the Pythagorean theorem. Note that

which intuitively should decrease with increasing dependence between

$\mu(\textbf{X}, \textbf{D})$

, by a simple application of the Pythagorean theorem. Note that

which intuitively should decrease with increasing dependence between \[ || \mu(\textbf{X}) - \mu(\textbf{X}, \textbf{D}) ||_2^2= {\mathbb{E}}[{\operatorname{Var}}(\mu(\textbf{X}, \textbf{D}) \mid \textbf{X})],\]

\[ || \mu(\textbf{X}) - \mu(\textbf{X}, \textbf{D}) ||_2^2= {\mathbb{E}}[{\operatorname{Var}}(\mu(\textbf{X}, \textbf{D}) \mid \textbf{X})],\]

$\textbf{X}$

and

$\textbf{X}$

and

$\textbf{D}$

. Hence, the quality in the approximation of

$\textbf{D}$

. Hence, the quality in the approximation of

$\mu(\textbf{X},\textbf{D})$

using

$\mu(\textbf{X},\textbf{D})$

using

$\mu(\textbf{X})$

should be good if

$\mu(\textbf{X})$

should be good if

$\textbf{D}$

essentially is a deterministic function of

$\textbf{D}$

essentially is a deterministic function of

$\textbf{X}$

, that is, if the nondiscriminatory covariates

$\textbf{X}$

, that is, if the nondiscriminatory covariates

$\textbf{X}$

allow us to almost perfectly infer the discriminatory covariates

$\textbf{X}$

allow us to almost perfectly infer the discriminatory covariates

$\textbf{D}$

.

$\textbf{D}$

.

-

(c) The unawareness price is unbiased, since

\begin{equation*} \mu = {\mathbb{E}}[Y] = {\mathbb{E}} \left[\mu(\textbf{X}) \right]. \end{equation*}

\begin{equation*} \mu = {\mathbb{E}}[Y] = {\mathbb{E}} \left[\mu(\textbf{X}) \right]. \end{equation*}

We now propose a price that is free of both direct and indirect discrimination.

Definition 6 (discrimination-free price). A discrimination-free price for Y w.r.t.

![]() $\textbf{X}$

is defined by

$\textbf{X}$

is defined by

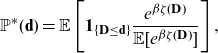

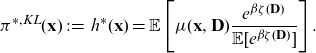

where the distribution

![]() ${\mathbb{P}}^*(\textbf{d})$

is defined on the same range as the marginal distribution of the discriminatory variables

${\mathbb{P}}^*(\textbf{d})$

is defined on the same range as the marginal distribution of the discriminatory variables

![]() $\textbf{D}\sim {\mathbb{P}}(\textbf{d})$

.

$\textbf{D}\sim {\mathbb{P}}(\textbf{d})$

.

Remark 7.

-

(a) The discrimination-free price (2.3) is obtained by averaging best-estimate prices over discriminatory covariates, using a (potentially arbitrary) marginal distribution

${\mathbb{P}}^*(\textbf{d})$

. The crucial step here is the imposed marginalization w.r.t.

${\mathbb{P}}^*(\textbf{d})$

. The crucial step here is the imposed marginalization w.r.t.

$\textbf{D}$

, rather than the specific choice of

$\textbf{D}$

, rather than the specific choice of

${\mathbb{P}}^*(\textbf{d})$

(which can be

${\mathbb{P}}^*(\textbf{d})$

(which can be

${\mathbb{P}}^*(\textbf{d}) = {\mathbb{P}}(\textbf{d}$

)). Given that the price

${\mathbb{P}}^*(\textbf{d}) = {\mathbb{P}}(\textbf{d}$

)). Given that the price

$h^*(\textbf{X})$

does not explicitly depend on

$h^*(\textbf{X})$

does not explicitly depend on

$\textbf{D}$

, it is obviously free from direct discrimination. We argue that the averaging construction proposed in (2.3) also removes all potential indirect discrimination. While (2.3) appears similar to (2.2), there is a key difference: discrimination-free prices do not in any way depend on the conditional distribution

$\textbf{D}$

, it is obviously free from direct discrimination. We argue that the averaging construction proposed in (2.3) also removes all potential indirect discrimination. While (2.3) appears similar to (2.2), there is a key difference: discrimination-free prices do not in any way depend on the conditional distribution

${\mathbb{P}}(\textbf{d} \mid\textbf{X})$

– hence they do not use any inference of discriminatory covariates from nondiscriminatory ones. This will be further discussed in Section 2.3 and verified in the case study of Section 6. In the special case of

${\mathbb{P}}(\textbf{d} \mid\textbf{X})$

– hence they do not use any inference of discriminatory covariates from nondiscriminatory ones. This will be further discussed in Section 2.3 and verified in the case study of Section 6. In the special case of

$\textbf{X}$

and

$\textbf{X}$

and

$\textbf{D}$

being independent and

$\textbf{D}$

being independent and

${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

, it follows that

${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

, it follows that

$h^*(\textbf{X}) = \mu(\textbf{X})$

.

$h^*(\textbf{X}) = \mu(\textbf{X})$

. -

(b) Definition 6 is designed to remove the possible explanatory power that

$\textbf{X}$

may have for

$\textbf{X}$

may have for

$\textbf{D}$

; it does not assume independence between

$\textbf{D}$

; it does not assume independence between

$\textbf{X}$

and

$\textbf{X}$

and

$\textbf{D}$

in the given portfolio. This point will be made more precise in Section 2.3, and in Section 2.4 we discuss existence of discrimination-free prices as well as alternative interpretations of

$\textbf{D}$

in the given portfolio. This point will be made more precise in Section 2.3, and in Section 2.4 we discuss existence of discrimination-free prices as well as alternative interpretations of

$h^*(\textbf{X})$

.

$h^*(\textbf{X})$

. -

(c) Definition 6 can also be motivated by arguments from causal inference. Specifically, formulas like (2.3) are used to quantify the direct causal effect of

$\textbf{X}$

on Y; we discuss this in more detail in Section 3, below. We stress that although causal inference can in many situations serve as an alternative motivation of discrimination-free prices, the reasoning behind our Definition 6 does not rely on any causal assumptions. Further discussions of this are provided in Section 3. Furthermore, formula (2.3) using the special choice

$\textbf{X}$

on Y; we discuss this in more detail in Section 3, below. We stress that although causal inference can in many situations serve as an alternative motivation of discrimination-free prices, the reasoning behind our Definition 6 does not rely on any causal assumptions. Further discussions of this are provided in Section 3. Furthermore, formula (2.3) using the special choice

${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

corresponds precisely to the partial dependence plot (PDP) introduced by Friedman (Reference Friedman2001), see also Zhao and Hastie (Reference Zhao and Hastie2021).

${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

corresponds precisely to the partial dependence plot (PDP) introduced by Friedman (Reference Friedman2001), see also Zhao and Hastie (Reference Zhao and Hastie2021). -

(d) Prices obtained using (2.3) will in general not be unbiased, since

(2.4)even for the special choice \begin{equation} \mu={\mathbb{E}}[Y] \neq {\mathbb{E}}[h^*(\textbf{X})] = \int_{\textbf{x},\textbf{d}} \mu(\textbf{x}, \textbf{d}) {\rm d}{\mathbb{P}}^*(\textbf{d}){\rm d}{\mathbb{P}}(\textbf{x}),\end{equation}

\begin{equation} \mu={\mathbb{E}}[Y] \neq {\mathbb{E}}[h^*(\textbf{X})] = \int_{\textbf{x},\textbf{d}} \mu(\textbf{x}, \textbf{d}) {\rm d}{\mathbb{P}}^*(\textbf{d}){\rm d}{\mathbb{P}}(\textbf{x}),\end{equation}

${\mathbb{P}}^*(\textbf{d}) = {\mathbb{P}}(\textbf{d})$

. This observation motivates portfolio level price adjustments, which will be discussed in Section 4. We note that, in actuarial practice, such a bias is not necessarily a problem, as insurers are primarily interested in the relativities between different policyholders, which can be used to differentiate a baseline premium of the overall portfolio costs to individual policyholders. Still, a poor allocation principle may result in adverse selection.

${\mathbb{P}}^*(\textbf{d}) = {\mathbb{P}}(\textbf{d})$

. This observation motivates portfolio level price adjustments, which will be discussed in Section 4. We note that, in actuarial practice, such a bias is not necessarily a problem, as insurers are primarily interested in the relativities between different policyholders, which can be used to differentiate a baseline premium of the overall portfolio costs to individual policyholders. Still, a poor allocation principle may result in adverse selection.

-

(e) Note that, given the potential arbitrariness of

${\mathbb{P}}^*$

, calculation of discrimination-free prices only requires knowledge of the mapping

${\mathbb{P}}^*$

, calculation of discrimination-free prices only requires knowledge of the mapping

$(\textbf{x},\textbf{d})\mapsto\mu(\textbf{x},\textbf{d})$

, where

$(\textbf{x},\textbf{d})\mapsto\mu(\textbf{x},\textbf{d})$

, where

$\mu(\textbf{x},\textbf{d})$

may be an (algorithmically derived implicit) regression function. Nevertheless, as pointed out in the previous remark, if one aims to correct a potential bias of

$\mu(\textbf{x},\textbf{d})$

may be an (algorithmically derived implicit) regression function. Nevertheless, as pointed out in the previous remark, if one aims to correct a potential bias of

$h^*(\textbf{X})$

, it is necessary to perform modeling and model calibration under the “real-world” probability measure

$h^*(\textbf{X})$

, it is necessary to perform modeling and model calibration under the “real-world” probability measure

${\mathbb{P}}$

.

${\mathbb{P}}$

. -

(f) Given the construction (2.3),

${\mathbb{P}}^*(\textbf{d})$

may be inferred from comparing best-estimate prices

${\mathbb{P}}^*(\textbf{d})$

may be inferred from comparing best-estimate prices

$\mu(\textbf{X},\textbf{D})$

and observed discrimination-free prices

$\mu(\textbf{X},\textbf{D})$

and observed discrimination-free prices

$h^*(\textbf{X})$

.

$h^*(\textbf{X})$

.

2.2. Choice of weighting distributions for discriminatory covariates

From Definition 6, it follows that the distribution

![]() ${\mathbb{P}}^*(\textbf{d})$

can be chosen rather freely. A simple choice is

${\mathbb{P}}^*(\textbf{d})$

can be chosen rather freely. A simple choice is

![]() ${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

, that is, average in (2.3) w.r.t. the marginal distribution of the discriminatory characteristics in the portfolio. This choice is supported by causal inference arguments in Section 3. We denote this special case by

${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

, that is, average in (2.3) w.r.t. the marginal distribution of the discriminatory characteristics in the portfolio. This choice is supported by causal inference arguments in Section 3. We denote this special case by

We illustrate how

![]() $h(\textbf{X})$

is evaluated in the context of Example 1.

$h(\textbf{X})$

is evaluated in the context of Example 1.

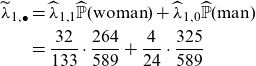

Example 8. In Example 1, we argued that aggregated estimators (row sums)

![]() $\widehat\lambda_{i,\bullet}$

are discriminatory because gender can be inferred from smoking habits. The price

$\widehat\lambda_{i,\bullet}$

are discriminatory because gender can be inferred from smoking habits. The price

![]() $h(\textbf{X})$

removes this effect by replacing the conditional probability

$h(\textbf{X})$

removes this effect by replacing the conditional probability

![]() ${\mathbb{P}}(\mathrm{gender} \mid \mathrm{smoking\ habits})$

by

${\mathbb{P}}(\mathrm{gender} \mid \mathrm{smoking\ habits})$

by

![]() ${\mathbb{P}}(\mathrm{gender})$

. This implies that the frequency estimate for smokers

${\mathbb{P}}(\mathrm{gender})$

. This implies that the frequency estimate for smokers

![]() $\widehat\lambda_{1, \bullet}$

is replaced by

$\widehat\lambda_{1, \bullet}$

is replaced by

\begin{align}\nonumber \widetilde\lambda_{1, \bullet} &= \widehat \lambda_{1,1}\widehat{{\mathbb{P}}}(\mathrm{woman})+\widehat \lambda_{1,0}\widehat{{\mathbb{P}}}(\mathrm{man})\\ &= \frac{32}{133}\cdot \frac{264}{589}+ \frac{4}{24}\cdot \frac{325}{589}\end{align}

\begin{align}\nonumber \widetilde\lambda_{1, \bullet} &= \widehat \lambda_{1,1}\widehat{{\mathbb{P}}}(\mathrm{woman})+\widehat \lambda_{1,0}\widehat{{\mathbb{P}}}(\mathrm{man})\\ &= \frac{32}{133}\cdot \frac{264}{589}+ \frac{4}{24}\cdot \frac{325}{589}\end{align}

Similarly, for non-smokers

We demonstrate the potential portfolio bias that discrimination-free prices induce. The total cost of the portfolio, under best-estimate prices, is equal to the observed total claim of 112. For discrimination-free prices, the total cost is given by

This indicates that the discrimination-free price

![]() $h(\textbf{X})$

leads to an under-pricing of the overall portfolio in the present situation.

$h(\textbf{X})$

leads to an under-pricing of the overall portfolio in the present situation.

Recall that there is some flexibility in the selection of

![]() ${\mathbb{P}}^*(\textbf{d})$

. In this simple example, with

${\mathbb{P}}^*(\textbf{d})$

. In this simple example, with

![]() $\textbf{D}$

being a binary classification variable, we can directly choose

$\textbf{D}$

being a binary classification variable, we can directly choose

![]() ${\mathbb{P}}^*(\mathrm{woman})$

and

${\mathbb{P}}^*(\mathrm{woman})$

and

![]() ${\mathbb{P}}^*(\mathrm{man})$

in a way that eliminates the portfolio bias. Specifically, we set

${\mathbb{P}}^*(\mathrm{man})$

in a way that eliminates the portfolio bias. Specifically, we set

and require for the resulting overall portfolio price that it holds

The resulting choice is

![]() ${\mathbb{P}}^*(\mathrm{woman})=48.3\%>44.8\%={\mathbb{P}}(\mathrm{woman})$

.

${\mathbb{P}}^*(\mathrm{woman})=48.3\%>44.8\%={\mathbb{P}}(\mathrm{woman})$

.

Finally, we note that in this example, switching to discrimination-free prices leads to a reduction in the share of the portfolio costs covered by women. Women cause

![]() $60/112=53.6\%$

of the total costs which is exactly the share of the total costs that women have to pay under best-estimate pricing (assuming that the prices coincide with the claims caused). If we use the unawareness price by simply dropping the gender variable, women cover

$60/112=53.6\%$

of the total costs which is exactly the share of the total costs that women have to pay under best-estimate pricing (assuming that the prices coincide with the claims caused). If we use the unawareness price by simply dropping the gender variable, women cover

![]() $47.8\%$

of the total costs. If we charge the discrimination-free price (2.6)-(2.7), women cover

$47.8\%$

of the total costs. If we charge the discrimination-free price (2.6)-(2.7), women cover

![]() $45.7\%$

of all costs, thus, less than under the unawareness price. This exactly reflects the potential for indirect discrimination in the unawareness price: women have on average higher costs than men, and the allocation of these excess costs is bigger to the sub-population where women are more prevalent compared to the population distribution

$45.7\%$

of all costs, thus, less than under the unawareness price. This exactly reflects the potential for indirect discrimination in the unawareness price: women have on average higher costs than men, and the allocation of these excess costs is bigger to the sub-population where women are more prevalent compared to the population distribution

![]() ${\mathbb{P}}(\textbf{d})$

, that is, we learn

${\mathbb{P}}(\textbf{d})$

, that is, we learn

![]() $\textbf{D}$

from

$\textbf{D}$

from

![]() $\textbf{X}$

through the portfolio distribution.

$\textbf{X}$

through the portfolio distribution.

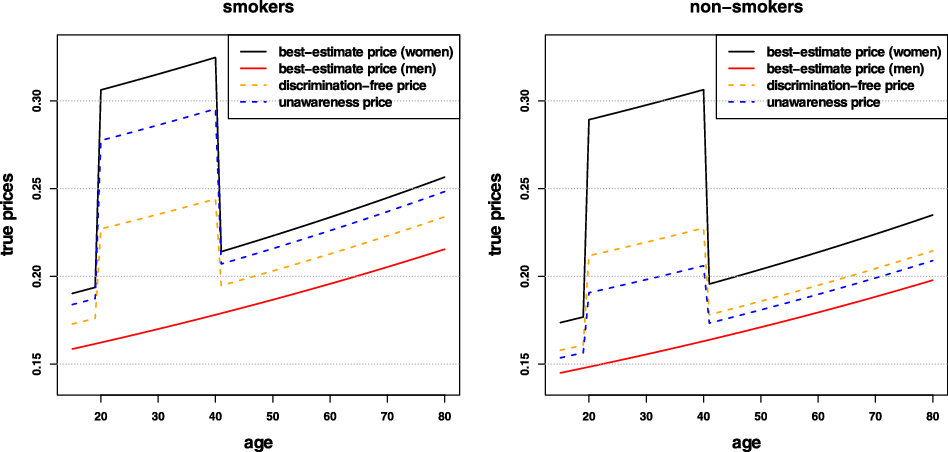

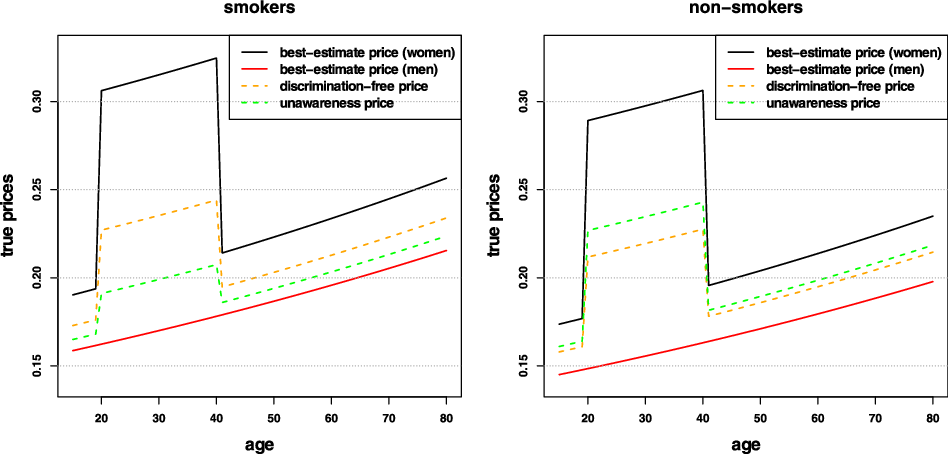

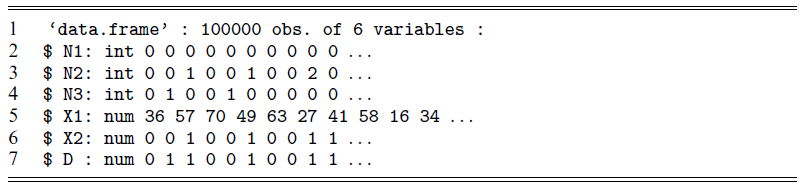

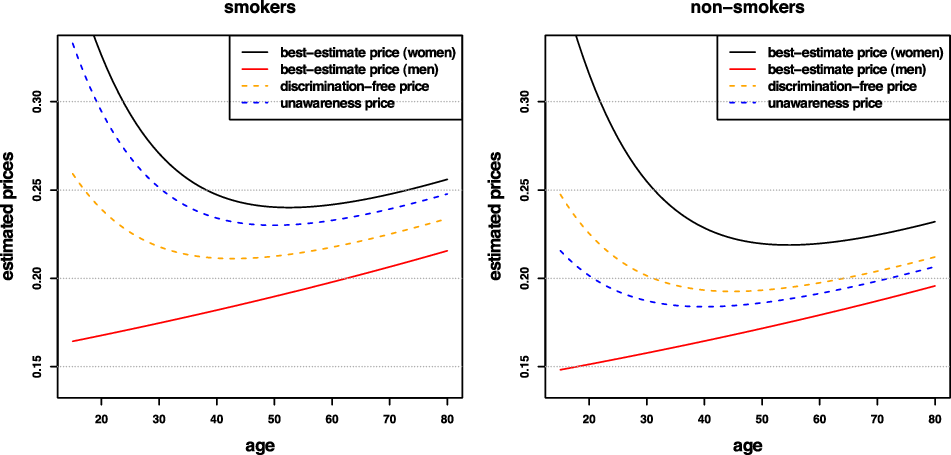

Remark 9. While in Examples 1 and 8, the potential indirect discrimination was against women, one can easily swap the “woman” and “man” labels, so that such indirect discrimination is against males. This indicates that the notion of discrimination used here (as well as the proposed pricing adjustment) does not reflect (or indeed seek to correct for) historical or current injustices. A more subtle impact arises if, ceteris paribus, the frequency of smokers in the female population was actually lower than that for males. In such a case, unawareness prices would actually understate the impact of smoking, as this would be “masked” by males’ otherwise lower propensity to claim; on the contrary, discrimination-free prices would become more sensitive with respect to the specific risk posed by smoking. This idea is further developed in the detailed numerical example presented later in the paper; see last paragraph of Section 6.1 and Figure 3.

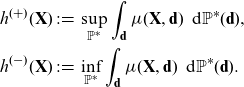

Furthermore, it is useful to consider the extrema of discrimination-free prices. Consider the following prices:

\begin{align*} h^{(+)}(\textbf{X}) &\,:\!=\, \sup_{{\mathbb{P}}^*}\int_\textbf{d} \mu (\textbf{X}, \textbf{d})\, \ {\rm d}{\mathbb{P}}^*(\textbf{d}),\\

h^{(-)}(\textbf{X}) &\,:\!=\, \inf_{{\mathbb{P}}^*}\int_\textbf{d} \mu (\textbf{X}, \textbf{d})\ \, {\rm d}{\mathbb{P}}^*(\textbf{d}).\end{align*}

\begin{align*} h^{(+)}(\textbf{X}) &\,:\!=\, \sup_{{\mathbb{P}}^*}\int_\textbf{d} \mu (\textbf{X}, \textbf{d})\, \ {\rm d}{\mathbb{P}}^*(\textbf{d}),\\

h^{(-)}(\textbf{X}) &\,:\!=\, \inf_{{\mathbb{P}}^*}\int_\textbf{d} \mu (\textbf{X}, \textbf{d})\ \, {\rm d}{\mathbb{P}}^*(\textbf{d}).\end{align*}

Here,

![]() $h^{(+)}(\textbf{X})$

and

$h^{(+)}(\textbf{X})$

and

![]() $h^{(-)}(\textbf{X})$

correspond to the essential supremum and infimum over

$h^{(-)}(\textbf{X})$

correspond to the essential supremum and infimum over

![]() $\textbf{d}$

in the range of

$\textbf{d}$

in the range of

![]() $\textbf{D}$

, respectively. Thus, for nondiscriminatory covariates

$\textbf{D}$

, respectively. Thus, for nondiscriminatory covariates

![]() $\textbf{X}=\textbf{x}$

, this immediately gives us

$\textbf{X}=\textbf{x}$

, this immediately gives us

Moreover, for the bias property we get the following relationship

By definition

![]() $h^{(+)}(\textbf{x})$

corresponds to the “worst” (or most “prudent”) price and has been discussed in the context of unisex pricing in Chen and Vigna (Reference Chen and Vigna2017).

$h^{(+)}(\textbf{x})$

corresponds to the “worst” (or most “prudent”) price and has been discussed in the context of unisex pricing in Chen and Vigna (Reference Chen and Vigna2017).

As seen in Example 8, the discrimination-free price (2.3) is generally biased. An alternative possibility for the choice of

![]() ${\mathbb{P}}^*(\textbf{d})$

is to additionally require unbiasedness in (2.4). In the simple case of a binary discriminatory covariate like gender in Example 8, this reduced to choosing a suitable

${\mathbb{P}}^*(\textbf{d})$

is to additionally require unbiasedness in (2.4). In the simple case of a binary discriminatory covariate like gender in Example 8, this reduced to choosing a suitable

![]() ${\mathbb{P}}^*(\mathrm{woman})$

. A more general construction of unbiased prices via choices of

${\mathbb{P}}^*(\mathrm{woman})$

. A more general construction of unbiased prices via choices of

![]() ${\mathbb{P}}^*(\textbf{d})$

is presented in Section 4.

${\mathbb{P}}^*(\textbf{d})$

is presented in Section 4.

A special case corresponds to an additive best-estimate price, in the sense that

![]() $\mu(\textbf{X},\textbf{D})=\mu_1(\textbf{X})+\mu_2(\textbf{D})$

. Then, the simple choice

$\mu(\textbf{X},\textbf{D})=\mu_1(\textbf{X})+\mu_2(\textbf{D})$

. Then, the simple choice

![]() ${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

is appealing, as it provides an unbiased price. Note that

${\mathbb{P}}^*(\textbf{d})={\mathbb{P}}(\textbf{d})$

is appealing, as it provides an unbiased price. Note that

which implies

2.3. Revisiting direct and indirect discrimination

In this section, following the development of our ideas so far, we provide more technical definitions of prices that avoid direct and indirect discrimination, where the latter may exist.

Choose an arbitrary probability measure

![]() ${\mathbb{P}}^*$

on the measurable space

${\mathbb{P}}^*$

on the measurable space

![]() $(\Omega, {\mathcal{F}})$

such that

$(\Omega, {\mathcal{F}})$

such that

![]() $Y \in {\mathcal{L}}^1(\Omega, {\mathcal{F}}, {\mathbb{P}}^*)$

. Choose a (sub-)vector

$Y \in {\mathcal{L}}^1(\Omega, {\mathcal{F}}, {\mathbb{P}}^*)$

. Choose a (sub-)vector

![]() ${\textbf{Z}}$

of the covariates

${\textbf{Z}}$

of the covariates

![]() $(\textbf{X},\textbf{D})$

and define the

$(\textbf{X},\textbf{D})$

and define the

![]() $({\mathbb{P}}^*,{\textbf{Z}})$

-conditional-expectation price by

$({\mathbb{P}}^*,{\textbf{Z}})$

-conditional-expectation price by

where

![]() ${\mathbb{E}}^*$

denotes the expectation under

${\mathbb{E}}^*$

denotes the expectation under

![]() ${\mathbb{P}}^*$

.

${\mathbb{P}}^*$

.

Definition 10. A price avoids direct discrimination, if it can be written as

where

![]() ${\textbf{Z}}$

is

${\textbf{Z}}$

is

![]() $\sigma(\textbf{X})$

-measurable, and where the expectation is taken w.r.t. a probability measure

$\sigma(\textbf{X})$

-measurable, and where the expectation is taken w.r.t. a probability measure

![]() ${\mathbb{P}}^*$

on

${\mathbb{P}}^*$

on

![]() $(\Omega, {\mathcal{F}})$

such that

$(\Omega, {\mathcal{F}})$

such that

![]() $Y \in {\mathcal{L}}^1(\Omega, {\mathcal{F}}, {\mathbb{P}}^*)$

.

$Y \in {\mathcal{L}}^1(\Omega, {\mathcal{F}}, {\mathbb{P}}^*)$

.

Remark 11.

-

(a) Definition 10 says that a price avoids direct discrimination if it can be written as a measurable function of the nondiscriminatory covariates

$\textbf{X}$

. For

$\textbf{X}$

. For

${\textbf{Z}}=\textbf{X}$

we receive maximal use of nondiscriminatory information (relative to

${\textbf{Z}}=\textbf{X}$

we receive maximal use of nondiscriminatory information (relative to

${\mathbb{P}}^*$

), therefore, we typically work with

${\mathbb{P}}^*$

), therefore, we typically work with

${\textbf{Z}}=\textbf{X}$

.

${\textbf{Z}}=\textbf{X}$

. -

(b) The choice

${\mathbb{P}}^*={\mathbb{P}}$

(and

${\mathbb{P}}^*={\mathbb{P}}$

(and

${\textbf{Z}}=\textbf{X}$

) provides the unawareness price

${\textbf{Z}}=\textbf{X}$

) provides the unawareness price

$\mu(\textbf{X})$

of Definition 4 which, thus, avoids direct discrimination.

$\mu(\textbf{X})$

of Definition 4 which, thus, avoids direct discrimination. -

(c) Importantly, under the choice

${\mathbb{P}}^*={\mathbb{P}}$

, the unawareness price

${\mathbb{P}}^*={\mathbb{P}}$

, the unawareness price

$\mu(\textbf{X})$

can be calculated without explicit knowledge of

$\mu(\textbf{X})$

can be calculated without explicit knowledge of

$\mu(\textbf{X},\textbf{D})$

– hence it does not require collection of discriminatory policyholder information. This also applies if we need to estimate

$\mu(\textbf{X},\textbf{D})$

– hence it does not require collection of discriminatory policyholder information. This also applies if we need to estimate

$\mu(\textbf{X})$

from data, see (5.3) below.

$\mu(\textbf{X})$

from data, see (5.3) below.

Now, indirect discrimination can be defined.

Definition 12. A price

![]() $\mu^*({\textbf{Z}})$

that avoids direct discrimination is said to avoid indirect discrimination if

$\mu^*({\textbf{Z}})$

that avoids direct discrimination is said to avoid indirect discrimination if

![]() ${\textbf{Z}}$

and

${\textbf{Z}}$

and

![]() $\textbf{D}$

are independent under

$\textbf{D}$

are independent under

![]() ${\mathbb{P}}^*$

.

${\mathbb{P}}^*$

.

Independence under

![]() ${\mathbb{P}}^*$

effects the decoupling of discriminatory covariates from nondiscriminatory ones, for specific policyholders. Thus, according to Definition 12, a price that avoids indirect discrimination satisfies

${\mathbb{P}}^*$

effects the decoupling of discriminatory covariates from nondiscriminatory ones, for specific policyholders. Thus, according to Definition 12, a price that avoids indirect discrimination satisfies

where

![]() $\mu^\ast({\textbf{Z}},\textbf{d}) = {\mathbb{E}}^* [Y \mid {\textbf{Z}},\textbf{D}=\textbf{d}]$

.

$\mu^\ast({\textbf{Z}},\textbf{d}) = {\mathbb{E}}^* [Y \mid {\textbf{Z}},\textbf{D}=\textbf{d}]$

.

Remark 13.

-

(a) From Definition 12, it is clear that avoiding indirect discrimination requires avoiding direct discrimination. As indirect discrimination relates to covariates in

$\textbf{X}$

acting as proxies for (elements of)

$\textbf{X}$

acting as proxies for (elements of)

$\textbf{D}$

, it is not meaningful to talk about indirect discrimination, when

$\textbf{D}$

, it is not meaningful to talk about indirect discrimination, when

$\textbf{D}$

is used directly in pricing.

$\textbf{D}$

is used directly in pricing. -

(b) The independence in Definition 12 is an artifice of the introduced probability measure

${\mathbb{P}}^*$

under which insurance is priced and does not generally reflect the actual observed dependence between

${\mathbb{P}}^*$

under which insurance is priced and does not generally reflect the actual observed dependence between

$\textbf{X}$

and

$\textbf{X}$

and

$\textbf{D}$

.

$\textbf{D}$

. -

(c) For

${\textbf{Z}}=\textbf{X}$

, the calculation that avoids indirect discrimination is based on the knowledge of

${\textbf{Z}}=\textbf{X}$

, the calculation that avoids indirect discrimination is based on the knowledge of

$\mu^*(\textbf{X},\textbf{D})$

, see (2.8) – hence it requires collection of discriminatory policyholder information. In fact, one of the most critical problems in practice is that discriminatory information is often incomplete, for example, about ethnicity, which may result in indirect discrimination.

$\mu^*(\textbf{X},\textbf{D})$

, see (2.8) – hence it requires collection of discriminatory policyholder information. In fact, one of the most critical problems in practice is that discriminatory information is often incomplete, for example, about ethnicity, which may result in indirect discrimination. -

(d) In statistical applications we usually use the conditional probability

${\mathbb{P}}(y\mid \textbf{X},\textbf{D})$

to model a claim Y, given the covariates

${\mathbb{P}}(y\mid \textbf{X},\textbf{D})$

to model a claim Y, given the covariates

$(\textbf{X},\textbf{D})$

. The reason for this choice is that Y, given

$(\textbf{X},\textbf{D})$

. The reason for this choice is that Y, given

$(\textbf{X},\textbf{D})$

, is observed under the real world measure

$(\textbf{X},\textbf{D})$

, is observed under the real world measure

${\mathbb{P}}$

, which allows for direct estimation of the regression function, see Section 5 below,

We could choose the measure

${\mathbb{P}}$

, which allows for direct estimation of the regression function, see Section 5 below,

We could choose the measure \begin{equation*} (\textbf{x},\textbf{d}) \mapsto \mu(\textbf{x},\textbf{d}).\end{equation*}

\begin{equation*} (\textbf{x},\textbf{d}) \mapsto \mu(\textbf{x},\textbf{d}).\end{equation*}

${\mathbb{P}}^*$

in a way that preserves the (causal) structure of how the covariates impact the response, that is, let

${\mathbb{P}}^*$

in a way that preserves the (causal) structure of how the covariates impact the response, that is, let

${\mathbb{P}}^*(y\mid \textbf{x},\textbf{d})={\mathbb{P}}(y\mid \textbf{x},\textbf{d})$

. This then motivates the choice

for

${\mathbb{P}}^*(y\mid \textbf{x},\textbf{d})={\mathbb{P}}(y\mid \textbf{x},\textbf{d})$

. This then motivates the choice

for \[{\rm d}{\mathbb{P}}^*(y,\textbf{x},\textbf{d})={\rm d}{\mathbb{P}}(y\mid \textbf{x},\textbf{d})\, {\rm d}{\mathbb{P}}^*(\textbf{x})\ \, {\rm d}{\mathbb{P}}^*(\textbf{d}),\]

\[{\rm d}{\mathbb{P}}^*(y,\textbf{x},\textbf{d})={\rm d}{\mathbb{P}}(y\mid \textbf{x},\textbf{d})\, {\rm d}{\mathbb{P}}^*(\textbf{x})\ \, {\rm d}{\mathbb{P}}^*(\textbf{d}),\]

${\textbf{Z}}=\textbf{X}$

in Definition 12. In view of (2.8), this results in the discrimination-free price

Thus, the discrimination-free price of Definition 6 does neither allow for potential direct nor for indirect discrimination.

${\textbf{Z}}=\textbf{X}$

in Definition 12. In view of (2.8), this results in the discrimination-free price

Thus, the discrimination-free price of Definition 6 does neither allow for potential direct nor for indirect discrimination. \begin{equation*} \mu^*(\textbf{X})= \int_\textbf{d} \mu(\textbf{X}, \textbf{d})\, \ {\rm d}{\mathbb{P}}^*(\textbf{d} \mid \textbf{X}) = \int_\textbf{d} \mu(\textbf{X}, \textbf{d})\, \ {\rm d}{\mathbb{P}}^*(\textbf{d} )=h^*(\textbf{X}).\end{equation*}

\begin{equation*} \mu^*(\textbf{X})= \int_\textbf{d} \mu(\textbf{X}, \textbf{d})\, \ {\rm d}{\mathbb{P}}^*(\textbf{d} \mid \textbf{X}) = \int_\textbf{d} \mu(\textbf{X}, \textbf{d})\, \ {\rm d}{\mathbb{P}}^*(\textbf{d} )=h^*(\textbf{X}).\end{equation*}

-

(e) Linking to Remark 7(e), in practice, we need to know (calibrate under) the real world measure

${\mathbb{P}}$

in order to study unbiasedness w.r.t.

${\mathbb{P}}$

in order to study unbiasedness w.r.t.

$\mu={\mathbb{E}}[Y]$

. Since the actual portfolio that we hold is described by

$\mu={\mathbb{E}}[Y]$

. Since the actual portfolio that we hold is described by

${\textbf{Z}}\sim{\mathbb{P}}({\textbf{z}})$

, we need to average discrimination-free prices

${\textbf{Z}}\sim{\mathbb{P}}({\textbf{z}})$

, we need to average discrimination-free prices

$\mu^*({\textbf{Z}})$

w.r.t. the same population

$\mu^*({\textbf{Z}})$

w.r.t. the same population

${\mathbb{P}}({\textbf{z}})$

to see whether we receive unbiasedness of discrimination-free prices on the actual portfolio.

${\mathbb{P}}({\textbf{z}})$

to see whether we receive unbiasedness of discrimination-free prices on the actual portfolio.

2.4. Existence of discrimination-free prices

We have not yet discussed existence of discrimination-free prices according to Definition 6 and the possibility of avoiding indirect discrimination according to Definition 12. This is done in the present section.

We emphasize that properties of available data (and the related statistical models) play a crucial role in our considerations:

-

• Indirect discrimination may be the result of incomplete discriminatory information, see Remark 13(c).

-

• Indirect discrimination may be the result of nonexistent or insufficient information of certain parts of the population.

In this section, we discuss the second item that can enter in different ways. A first one is that not all parts of the population are equally well represented in the development of the statistical model. For instance, there is research in image recognition to discover malignant melanoma (skin cancer). If this research is mainly based on images of people with light complexion, the corresponding model will likely fail to discover malignant melanoma for people with dark complexion. This is a form of discrimination resulting from insufficient data of certain parts of the population. In our situation, this may result in poor best-estimate prices

![]() $\mu(\textbf{X}, \textbf{D})$

for certain covariate combinations. Note that the quality of the estimation of best-estimate prices directly impacts discrimination-free prices.

$\mu(\textbf{X}, \textbf{D})$

for certain covariate combinations. Note that the quality of the estimation of best-estimate prices directly impacts discrimination-free prices.

In the current section, we rather focus on nonexistent data of certain parts of the population. The meaning and implications of nonexistent data are going to be discussed in more detail. We start with an example. Assume that the discriminatory covariates

![]() $\textbf{D}$

correspond to gender and the nondiscriminatory ones

$\textbf{D}$

correspond to gender and the nondiscriminatory ones

![]() $\textbf{X}$

to education. Education could be in the ordinal form “secondary school degree,” “high school degree” or “university degree,” but information about education could also be received in the following categorical form “Catholic college degree,” “public college degree” or “girls college degree.” Per definition the last label “girls college degree” contains as only gender “female”. This implies that

$\textbf{X}$

to education. Education could be in the ordinal form “secondary school degree,” “high school degree” or “university degree,” but information about education could also be received in the following categorical form “Catholic college degree,” “public college degree” or “girls college degree.” Per definition the last label “girls college degree” contains as only gender “female”. This implies that

thus, the event

![]() $A=\{\textbf{X}=\mathrm{girls\ college\ degree}, \textbf{D}=\mathrm{man}\} \in {\mathcal{F}}$

is a null set w.r.t.

$A=\{\textbf{X}=\mathrm{girls\ college\ degree}, \textbf{D}=\mathrm{man}\} \in {\mathcal{F}}$

is a null set w.r.t.

![]() ${\mathbb{P}}$

. In many cases, we do not model responses Y on null sets. Therefore, neither Y on A may be specified in our model nor the conditional expectation

${\mathbb{P}}$

. In many cases, we do not model responses Y on null sets. Therefore, neither Y on A may be specified in our model nor the conditional expectation

![]() $\mu(\mathrm{girls\ college\ degree}, \mathrm{man})={\mathbb{E}}[Y\mid A]$

may be determined. But this implies that we cannot evaluate the discrimination-free price

$\mu(\mathrm{girls\ college\ degree}, \mathrm{man})={\mathbb{E}}[Y\mid A]$

may be determined. But this implies that we cannot evaluate the discrimination-free price

if

![]() ${\mathbb{P}}^*(\textbf{d})$

has positive probability mass on both genders. In the current situation, the problem may be solved by setting

${\mathbb{P}}^*(\textbf{d})$

has positive probability mass on both genders. In the current situation, the problem may be solved by setting

![]() ${\mathbb{P}}^*(\textbf{D}=\mathrm{woman})=1$

which gives the discrimination-free price

${\mathbb{P}}^*(\textbf{D}=\mathrm{woman})=1$

which gives the discrimination-free price

![]() $h^*(\textbf{X}) = \mu (\textbf{X}, \mathrm{woman})$

.

$h^*(\textbf{X}) = \mu (\textbf{X}, \mathrm{woman})$

.

If the education information

![]() $\textbf{X}$

has an additional level “boys college degree”, the above solution will not work because we have a second

$\textbf{X}$

has an additional level “boys college degree”, the above solution will not work because we have a second

![]() ${\mathbb{P}}$

-null set

${\mathbb{P}}$

-null set

![]() $B=\{\textbf{X}=\mathrm{boys\ college\ degree}, \textbf{D}=\mathrm{woman}\} \in {\mathcal{F}}$

which makes it impossible to choose a distribution

$B=\{\textbf{X}=\mathrm{boys\ college\ degree}, \textbf{D}=\mathrm{woman}\} \in {\mathcal{F}}$

which makes it impossible to choose a distribution

![]() ${\mathbb{P}}^*(\textbf{d})$

such that the discrimination-free price

${\mathbb{P}}^*(\textbf{d})$

such that the discrimination-free price

![]() $h^*(\textbf{X})$

is well-defined.

$h^*(\textbf{X})$

is well-defined.

The simple solution to this problem is to drop the education information, that is, choose a smaller covariate set. This is equivalent to choosing a true subset

![]() ${\textbf{Z}}$

of

${\textbf{Z}}$

of

![]() $\textbf{X}$

in Definition 12. In practice, we often try to inter- or extrapolate the model assumptions for Y. This is reasonable if unavailable information corresponds to numerical variables (and responses have some smoothness in these covariates). In certain cases, it may also be justified for categorical variables by, for example, postulating a multiplicative influence structure of covariates, say, women are

$\textbf{X}$

in Definition 12. In practice, we often try to inter- or extrapolate the model assumptions for Y. This is reasonable if unavailable information corresponds to numerical variables (and responses have some smoothness in these covariates). In certain cases, it may also be justified for categorical variables by, for example, postulating a multiplicative influence structure of covariates, say, women are

![]() $x\%$

better than men regardless of the attended college. This is similar to a GLM approach where gender may be reflected by a single parameter on the canonical scale. In our situation such an assumption can be made, but it cannot be verified because of a missing control group.

$x\%$

better than men regardless of the attended college. This is similar to a GLM approach where gender may be reflected by a single parameter on the canonical scale. In our situation such an assumption can be made, but it cannot be verified because of a missing control group.

Proposition 14. Assume there exists a product measure

![]() ${\mathbb{P}}^*(\textbf{x}){\mathbb{P}}^*(\textbf{d})$

on

${\mathbb{P}}^*(\textbf{x}){\mathbb{P}}^*(\textbf{d})$

on

![]() $(\Omega, {\mathcal{F}})$

which is absolutely continuous w.r.t. the probability measure

$(\Omega, {\mathcal{F}})$

which is absolutely continuous w.r.t. the probability measure

![]() ${\mathbb{P}}(\textbf{x},\textbf{d})$

of the covariates

${\mathbb{P}}(\textbf{x},\textbf{d})$

of the covariates

![]() $(\textbf{X},\textbf{D})$

. Then, there exists a price

$(\textbf{X},\textbf{D})$

. Then, there exists a price

![]() $\mu^*(\textbf{X})$

that avoids indirect discrimination.

$\mu^*(\textbf{X})$

that avoids indirect discrimination.

Proof. Absolute continuity implies that every

![]() ${\mathbb{P}}(\textbf{x},\textbf{d})$

-null set is also a

${\mathbb{P}}(\textbf{x},\textbf{d})$

-null set is also a

![]() ${\mathbb{P}}^*(\textbf{x}){\mathbb{P}}^*(\textbf{d})$

-null set. Therefore,

${\mathbb{P}}^*(\textbf{x}){\mathbb{P}}^*(\textbf{d})$

-null set. Therefore,

![]() $\mu(\textbf{X}, \textbf{D})$

is well-defined on all sets where

$\mu(\textbf{X}, \textbf{D})$

is well-defined on all sets where

![]() $(\textbf{X},\textbf{D})$

has positive

$(\textbf{X},\textbf{D})$

has positive

![]() ${\mathbb{P}}^*(\textbf{x}){\mathbb{P}}^*(\textbf{d})$

-probability mass. Since the latter is a product measure, we can calculate the discrimination-free price

${\mathbb{P}}^*(\textbf{x}){\mathbb{P}}^*(\textbf{d})$

-probability mass. Since the latter is a product measure, we can calculate the discrimination-free price

![]() $h^*(\textbf{X})$

by integrating

$h^*(\textbf{X})$

by integrating

![]() $\mu(\textbf{X}, \textbf{d})$

over

$\mu(\textbf{X}, \textbf{d})$

over

![]() ${\rm d}{\mathbb{P}}^*(\textbf{d} \mid \textbf{X})= {\rm d}{\mathbb{P}}^*(\textbf{d})$

, see also (2.8). This completes the proof.

${\rm d}{\mathbb{P}}^*(\textbf{d} \mid \textbf{X})= {\rm d}{\mathbb{P}}^*(\textbf{d})$

, see also (2.8). This completes the proof.

3. Causal inference and discrimination

The purpose of this section is to discuss the discrimination-free prices of Definition 6 in a causal inference setting. Discrimination-free prices given by Definition 6 hold without recourse to any causal relationships between variables. Nonetheless, there is a nice motivation of discrimination-free pricing in a causal inference context which provides additional insight. We give these arguments in a pedagogical and somewhat informal way; for a rigorous treatment we refer to Hernán and Robins (Reference Hernán and Robins2020), Pearl (Reference Pearl2009) and Pearl et al. (Reference Pearl, Glymour and Jewell2016), Ch.3.1).

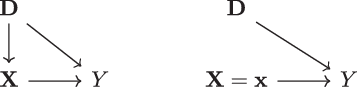

The starting point of causal inference is a hypothesis of variable relationships, which may be described in terms of a directed graph

![]() $\mathfrak{G}$

. The graph

$\mathfrak{G}$

. The graph

![]() $\mathfrak{G}$

consists of a set of nodes corresponding to the different variables and directed edges – “arrows” – indicating directions of potential influence between the variables. This informal definition is most easily understood by an example such as the one given in Figure 1 (left), involving the variables

$\mathfrak{G}$

consists of a set of nodes corresponding to the different variables and directed edges – “arrows” – indicating directions of potential influence between the variables. This informal definition is most easily understood by an example such as the one given in Figure 1 (left), involving the variables

![]() $(Y,\textbf{X},\textbf{D})$

introduced above in the context of insurance pricing. The graph

$(Y,\textbf{X},\textbf{D})$

introduced above in the context of insurance pricing. The graph

![]() $\mathfrak{G}$

in Figure 1 (left) is an example of a directed acyclic graph (DAG), meaning that the graph does not contain any loops (for a precise definition, see [21, Chapter 1.4]). Figure 1 (left) corresponds to a situation where the discriminatory characteristics

$\mathfrak{G}$

in Figure 1 (left) is an example of a directed acyclic graph (DAG), meaning that the graph does not contain any loops (for a precise definition, see [21, Chapter 1.4]). Figure 1 (left) corresponds to a situation where the discriminatory characteristics

![]() $\textbf{D}$

may influence Y both directly, but also indirectly via

$\textbf{D}$

may influence Y both directly, but also indirectly via

![]() $\textbf{X}$

.

$\textbf{X}$

.

Figure 1: (left) Causal diagram described by

![]() $\mathfrak{G}$

; (right) causal diagram altered according to the intervention

$\mathfrak{G}$

; (right) causal diagram altered according to the intervention

![]() $\textbf{X} = \textbf{x}$

.

$\textbf{X} = \textbf{x}$

.

Figure 1 (left) already captures a large number of realistic insurance pricing situations. For instance, in view of Example 1, we may identify smoking habits by

![]() $\textbf{X}$

and the gender by the discriminatory factors

$\textbf{X}$

and the gender by the discriminatory factors

![]() $\textbf{D}$

. Differences in smoking habits between men and women can be expressed by a directed edge

$\textbf{D}$

. Differences in smoking habits between men and women can be expressed by a directed edge

![]() $\textbf{D} \rightarrow \textbf{X}$

, while intrinsic differences between men and women when it comes to health outcomes are described by

$\textbf{D} \rightarrow \textbf{X}$

, while intrinsic differences between men and women when it comes to health outcomes are described by

![]() $\textbf{D} \rightarrow Y$

. Moreover, smoking in itself may cause health problems,

$\textbf{D} \rightarrow Y$

. Moreover, smoking in itself may cause health problems,

![]() $\textbf{X} \rightarrow Y$

, this is exactly expressed by the directed edges in Figure 1 (left).

$\textbf{X} \rightarrow Y$

, this is exactly expressed by the directed edges in Figure 1 (left).

Since the directed edges in the DAG

![]() $\mathfrak{G}$

do not act fully deterministically, we endow

$\mathfrak{G}$

do not act fully deterministically, we endow

![]() $\mathfrak{G}$

with a probability measure

$\mathfrak{G}$

with a probability measure

![]() ${\mathbb{P}}$

that describes the randomness involved. Here, we consider a Markovian measure, which, colloquially speaking, means that all nodes in Figure 1 (left) are complemented with independent noisy background variables (Pearl et al., Reference Pearl, Glymour and Jewell2016, Chapter 3.2.1). In such a Markovian setting, let, for a general DAG

${\mathbb{P}}$

that describes the randomness involved. Here, we consider a Markovian measure, which, colloquially speaking, means that all nodes in Figure 1 (left) are complemented with independent noisy background variables (Pearl et al., Reference Pearl, Glymour and Jewell2016, Chapter 3.2.1). In such a Markovian setting, let, for a general DAG

![]() $\mathfrak{G}$

,

$\mathfrak{G}$

,

![]() ${\textbf{Z}} = (Z_1, \ldots, Z_p)$

be the vector containing all variables (e.g.,

${\textbf{Z}} = (Z_1, \ldots, Z_p)$

be the vector containing all variables (e.g.,

![]() ${\textbf{Z}}=(Y,\textbf{X},\textbf{D})$

) and let

${\textbf{Z}}=(Y,\textbf{X},\textbf{D})$

) and let

![]() $\textbf V_i$

denote the set of “parent” variables of

$\textbf V_i$

denote the set of “parent” variables of

![]() $Z_i$

(that have a directed edge attached pointing directly to

$Z_i$

(that have a directed edge attached pointing directly to

![]() $Z_i$

). Furthermore, in this section, we denote by

$Z_i$

). Furthermore, in this section, we denote by

![]() $\mathbb{p}({\textbf{z}})$

the probability density or mass function of

$\mathbb{p}({\textbf{z}})$

the probability density or mass function of

![]() ${\textbf{Z}}$

. Then, on the Markovian DAG, it holds that (see e.g., Theorem 1 in Pearl (Reference Pearl2009))

${\textbf{Z}}$

. Then, on the Markovian DAG, it holds that (see e.g., Theorem 1 in Pearl (Reference Pearl2009))

In the simple example of Figure 1 (left), identity (3.1) leads to decomposition

which, of course, is nothing but Bayes’ rule.

With this modeling setup in place, one way to approach nondiscriminatory pricing is to ask the following:

-

Given that a policyholder has the set of characteristics

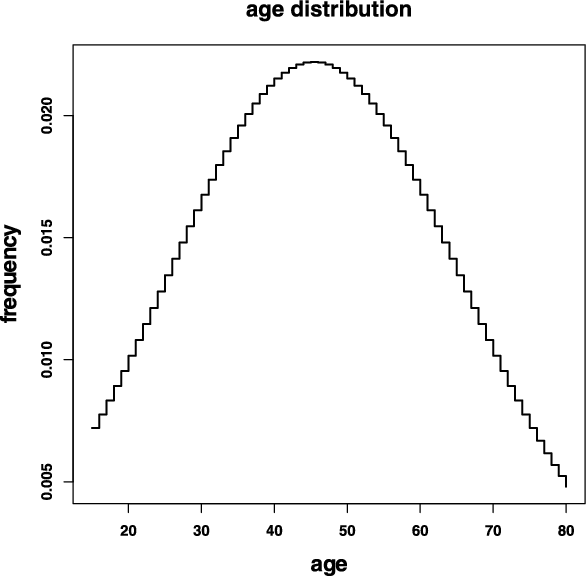

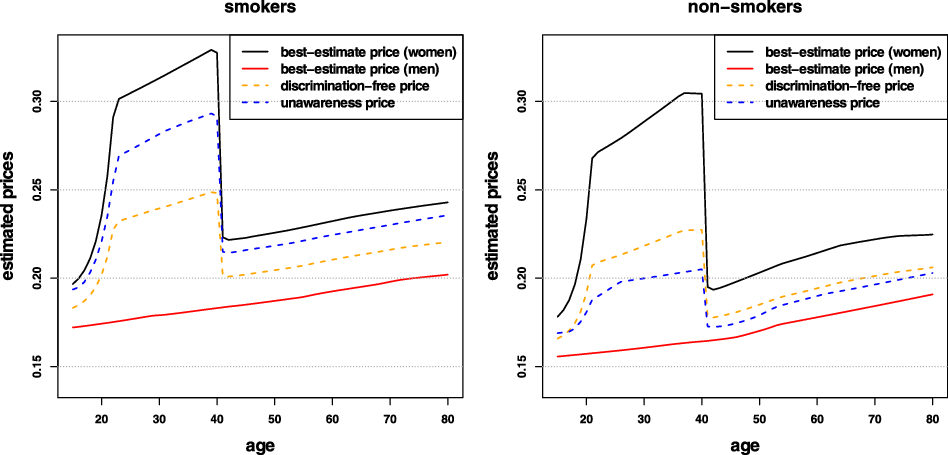

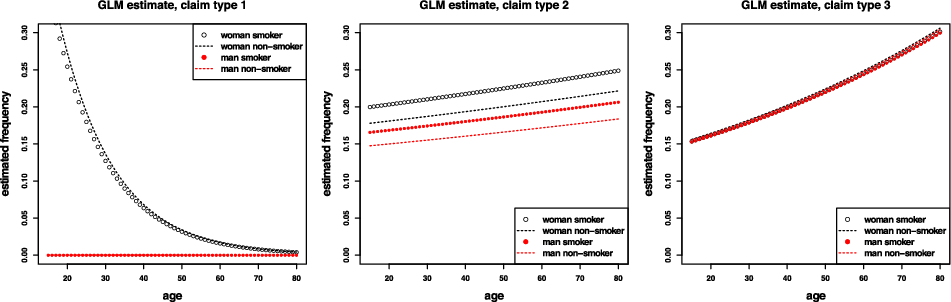

$\textbf{X}=\textbf{x}$