Is an outcome where many people are saved and one person dies better than an outcome where the one is saved and the many die? Most of us judge that the former is better. But what justifies this evaluation? The standard utilitarian answer is that it would be better if the many were saved, because the combined gain in well-being for the many if they were saved would be greater than the gain in well-being for the one if he or she were saved.Footnote 1 This form of utilitarianism justifies evaluations by

The Total Principle: Outcome X is at least as good as outcome Y if and only if the sum total of well-being is at least as great in X as in Y.
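
As a minimal illustration (ours, not part of the original argument), the Total Principle can be sketched in Python under two assumptions: an outcome is represented as a tuple of numerical well-being levels, one per person, and the convention from section 1 that being saved corresponds to 4 units of well-being and not being saved to 0 is borrowed for the example. The function name `at_least_as_good_total` is hypothetical.

```python
# Hypothetical representation: an outcome is a tuple of numerical well-being
# levels, one entry per person who exists in it.
Outcome = tuple[float, ...]


def at_least_as_good_total(x: Outcome, y: Outcome) -> bool:
    """Total Principle: X is at least as good as Y iff X's sum total of
    well-being is at least as great as Y's."""
    return sum(x) >= sum(y)


save_many = (0, 4, 4)  # the one (first person) dies; the many are saved
save_one = (4, 0, 0)   # the one is saved; the many die

print(at_least_as_good_total(save_many, save_one))  # True
print(at_least_as_good_total(save_one, save_many))  # False
```

Each call compares two sums, and each sum aggregates several people's well-being; this is the kind of relatum that the moral-aggregation critics object to.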

The justification by the Total Principle is an example of moral aggregation, which some people, such as John M. Taurek and T. M. Scanlon, find problematic. Taurek, for example, complains that

It is not my way to think of [the people who need help] as each having a certain objective value, determined however it is we determine the objective value of things, and then to make some estimate of the combined value of the [many] as against the one. (Taurek 1977, 307)Footnote 2

Scanlon is somewhat less clear, demanding that

the justifiability of a moral principle depends only on various individuals’ reasons for objecting to that principle and alternatives to it. (Scanlon 1998, 229)

An aggregate or sum of several individuals’ reasons, however, still depends on those individual reasons. Yet, since Scanlon takes his demand to rule out justifications that appeal to a ‘sum of a certain sort of value’ (230), he seems to have in mind a requirement that is, more or less, equivalent to Taurek’s requirement.

These moral-aggregation critics object that moral justifications should not be based on comparisons between aggregates of people’s claims or well-being.Footnote 3 Unfortunately, this objection, which we may call the Objection from Moral Aggregation, is rarely put forward in a precise manner. Still, a plausible explication is that the objection rejects justifications that involve moral aggregation in the following sense:Footnote 4

A justification of a moral evaluation involves moral aggregation if and only if the justification is fundamentally based in part on a comparison where at least one of the relata is an aggregate of the claims or well-being of more than one individual.

Rejecting moral aggregation means accepting

The Individualist Restriction: The only comparisons that a justification of a moral evaluation may be fundamentally based on are comparisons where no relatum is an aggregate of the claims or well-being of more than one individual.

The Objection from Moral Aggregation is not that moral evaluations of aggregates of claims are necessarily problematic. What is supposed to be problematic is that comparisons of such aggregates are part of the justifications of moral evaluations. So the evaluation that it’s better to save the many than to save the one needn’t be problematic. The target of the Objection from Moral Aggregation is the justification of this evaluation by the Total Principle or by some other form of moral aggregation.Footnote 5 In fact, many moral-aggregation critics believe that there is an adequate justification of its being better to save the many than to save the one.Footnote 6 They believe that, while the standard utilitarian justification involves moral aggregation, there is an alternative justification that does not—namely, the Argument for Best Outcomes.Footnote 7

In this paper, I will extend the Argument for Best Outcomes with a further principle to show that any utilitarian evaluation can be justified without relying on the Total Principle or any other form of moral aggregation.

1. The Argument for Best Outcomes

The Argument for Best Outcomes relies on three principles.Footnote 8 The first is based on the idea that morality demands impartiality between people, other things being equal (Sen 1974, 391 and Blackorby, Bossert, and Donaldson 2005, 49):

Anonymity: If outcomes X and Y only differ in that the identities of some people who exist in these outcomes have been permuted, then X and Y are equally good.

This principle is sometimes called ‘Impartiality’.Footnote 9 But the principle requires more than mere impartiality between outcomes that are alike except for a permutation of identities; it requires that the outcomes are equally good. It wouldn’t be any less impartial if the outcomes were incomparable in value than if they were equally good, because incomparability, just like equality, is symmetric: it doesn’t favour either of the relata.

While Anonymity is compelling, it isn’t beyond dispute: Anonymity rules out partiality, and partiality is part of common-sense morality (specifically, the idea that you may give extra weight to your own well-being and the well-being of your friends and family).Footnote 10 Yet, for the purposes of our current discussion, the key feature of Anonymity is not that it’s self-evident or undeniable but that it’s free from moral aggregation—that is, Anonymity does not involve any comparisons of aggregates of people’s claims or well-being. This feature is still clearer for the following weakened variant, which suffices for the argument:

Pairwise Anonymity: If outcomes X and Y only differ in that the identities of two people who exist in these outcomes have been permuted, then X and Y are equally good.

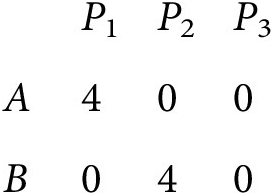

Consider the following outcomes A and B, which only differ in that the identities of two people (P 1 and P 2) have been permuted (a third person, P 3, is unaffected):

Since A and B only differ in that the identities of P₁ and P₂ have been permuted, Pairwise Anonymity entails that A and B are equally good. If two outcomes only differ in that the identities of two people have been permuted, then no further person is affected and any loss for one of the two is perfectly matched by a gain for the other.Footnote 11 In a choice between A and B, for instance, any loss for one of P₁ and P₂ is perfectly matched by a gain for the other. So, by only making one-to-one comparisons between individuals, we can establish an equivalence of gains and losses between A and B. Even though this justification balances gains against losses, it only balances the gain for one individual against the loss for another individual. Hence the justification avoids moral aggregation, and it conforms to the Individualist Restriction.Footnote 12
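
The condition in Pairwise Anonymity can be checked mechanically. The sketch below is our own illustration: it assumes outcomes are tuples of well-being levels indexed by person (P₁, P₂, P₃ in order) and uses the values A = (4, 0, 0) and B = (0, 4, 0) that section 1 later assigns to these outcomes; `is_pairwise_permutation` is a hypothetical helper. It tries every exchange of two people's well-being and never adds anything up.

```python
from itertools import combinations


def is_pairwise_permutation(x, y):
    """True if y is x with the well-being levels of exactly two people exchanged.

    Tuple equality compares the outcomes person by person; nothing is summed.
    """
    if len(x) != len(y):
        return False
    for i, j in combinations(range(len(x)), 2):
        swapped = list(x)
        swapped[i], swapped[j] = swapped[j], swapped[i]
        if tuple(swapped) == tuple(y):
            return True
    return False


A = (4, 0, 0)  # well-being of (P1, P2, P3)
B = (0, 4, 0)
print(is_pairwise_permutation(A, B))  # True: P1 and P2 exchanged
```

The helper also returns True when two people with equal well-being are exchanged, since the resulting profile is unchanged, and it returns False for one-person outcomes, where there is no pair to exchange.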

The second principle is based on the idea that, if one outcome dominates another outcome in terms of individual well-being, then it is better (Broome 1987, 410; 1991, 165):Footnote 13

The Strong Principle of Dominance: If (i) the same people exist in outcomes X and Y, (ii) each of these people has at least as high well-being in X as in Y, and (iii) some person has higher well-being in X than in Y, then X is better than Y.

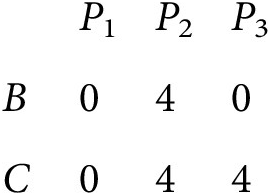

Consider the following outcomes B and C, where everyone is equally well off in B as in C except P 3 who is better off in C than in B:

By comparing each person’s well-being in B with their well-being in C, we can conclude that each person has at least as high well-being in C as in B and that P₃ has higher well-being in C than in B. Based on these intrapersonal comparisons, the Strong Principle of Dominance entails that C is better than B. This justification does not involve moral aggregation because it doesn’t balance claims or well-being between different people.
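
A sketch of the dominance test, assuming tuple-encoded outcomes with the same people in the same order and, for illustration, B = (0, 4, 0) and C = (0, 4, 4) as in the outcomes of section 1's argument. `strongly_dominates` is a hypothetical name. Each comparison pairs a person's well-being in one outcome with that same person's well-being in the other.

```python
def strongly_dominates(x, y):
    """Antecedent of the Strong Principle of Dominance for same-population outcomes."""
    if len(x) != len(y):
        raise ValueError("outcomes must contain the same people")
    pairs = list(zip(x, y))  # (this person's level in X, same person's level in Y)
    no_one_worse = all(a >= b for a, b in pairs)
    someone_better = any(a > b for a, b in pairs)
    return no_one_worse and someone_better


B = (0, 4, 0)
C = (0, 4, 4)
print(strongly_dominates(C, B))  # True
print(strongly_dominates(B, C))  # False
```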

The third principle is the following principle of the logic of value (Arrow 1951, 13; Sen 1970, 2; 2017, 47; and Quinn 1977, 77):

Transitivity: If outcome X is at least as good as outcome Y and Y is at least as good as outcome Z, then X is at least as good as Z.

From (i) that A and B are equally good and (ii) that C is better than B, it follows by Transitivity that C is better than A. As long as the first two evaluations—(i) and (ii)—have been justified without moral aggregation, Transitivity provides a justification of C’s being better than A which does not involve moral aggregation (because, for this justification, Transitivity does not rely on any comparisons other than the first two).

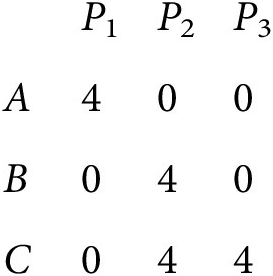

With these principles, we can state the Argument for Best Outcomes. Suppose that getting 4 units of well-being in outcomes A, B, and C corresponds to getting saved and that getting 0 units corresponds to not being saved. In A, only P 1 is saved. In B, only P 2 is saved. And, in C, both P 2 and P 3 are saved but P 1 is not. Hence we have the following outcomes:Footnote 14

We can then argue as follows:

The Argument for Best Outcomes

(1) A and B are equally good. [Pairwise Anonymity]

(2) C is better than B. [The Strong Principle of Dominance]

(3) C is better than A. [(1), (2), Transitivity]
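
To make the structure of the argument explicit, the sketch below (ours, not the author's) encodes A = (4, 0, 0), B = (0, 4, 0), and C = (0, 4, 4) as described above, takes as base evaluations only those licensed by Pairwise Anonymity and the Strong Principle of Dominance, and closes them under Transitivity. It assumes that 'better than' is read as 'at least as good as, and not vice versa'; on that reading, a chain of at-least-as-good links containing one strict link yields a strict conclusion. All function names are hypothetical.

```python
from itertools import combinations, product


def is_pairwise_permutation(x, y):
    """y is x with two people's well-being exchanged (Pairwise Anonymity)."""
    for i, j in combinations(range(len(x)), 2):
        swapped = list(x)
        swapped[i], swapped[j] = swapped[j], swapped[i]
        if tuple(swapped) == tuple(y):
            return True
    return False


def strongly_dominates(x, y):
    """No one worse off in x than in y, someone better off (Strong Dominance)."""
    pairs = list(zip(x, y))
    return all(a >= b for a, b in pairs) and any(a > b for a, b in pairs)


def derive(outcomes):
    """Return (weak, strict): sets of (p, q) meaning 'p is at least as good
    as q' and 'p is better than q', closed under Transitivity."""
    names = list(outcomes)
    weak, strict = set(), set()
    for p, q in product(names, repeat=2):
        x, y = outcomes[p], outcomes[q]
        if p != q and is_pairwise_permutation(x, y):
            weak.add((p, q))  # equally good; (q, p) is added on its own turn
        if strongly_dominates(x, y):
            weak.add((p, q))
            strict.add((p, q))
    changed = True
    while changed:
        changed = False
        for p, q, s in product(names, repeat=3):
            if (p, q) in weak and (q, s) in weak and (p, s) not in weak:
                weak.add((p, s))  # Transitivity
                changed = True
            via_strict = ((p, q) in strict and (q, s) in weak) or (
                (p, q) in weak and (q, s) in strict
            )
            if via_strict and (p, s) not in strict:
                strict.add((p, s))
                changed = True
    return weak, strict


outcomes = {"A": (4, 0, 0), "B": (0, 4, 0), "C": (0, 4, 4)}
weak, strict = derive(outcomes)
print(("C", "A") in strict)  # True: step (3)
print(("A", "C") in weak)    # False
```

No sum is computed anywhere: the only inputs to the closure are the pairwise-permutation and dominance verdicts.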

We have argued, without relying on moral aggregation, that C is better than A. The difference between A and C is that, if A were chosen over C, only one person (P₁) would be saved but, if C were chosen, two other people (P₂ and P₃) would be saved. Therefore, we have an argument for its being better that a greater number of people are saved, and this argument does not rely on moral aggregation.Footnote 15

It may be objected that the Argument for Best Outcomes relies on moral aggregation in the move from (1) and (2) to (3). The evaluation in (3) is justified by (1), (2), and Transitivity. So C’s being better than A is justified in part by A’s being equally as good as B and in part by C’s being better than B. But A’s being equally as good as B is a comparison of the whole of outcome A with the whole of outcome B. And C’s being better than B is a comparison of the whole of outcome C with the whole of outcome B. Each of these compared outcomes includes the well-being of three people. Hence the justification of the evaluation in (3) is based in part on comparisons where at least one of the relata is an aggregate of (among other things) the well-being of more than one individual.

Even so, this does not show that the Argument for Best Outcomes involves moral aggregation, because these comparisons that the justification of (3) is based on—that is, (1) and (2)—can in turn be justified without moral aggregation. So the justification of (3) by (1), (2), and Transitivity is not fundamentally based on a comparison where at least one of the relata is an aggregate of the well-being of more than one individual.Footnote 16

2. The Extended Argument for Best Outcomes

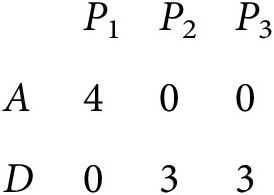

The Argument for Best Outcomes can support the utilitarian evaluation that saving the greater number is better if the competing claims have equal strength. Still, the three principles that the argument relies on are too weak to allow us to derive all utilitarian evaluations. For instance, these principles are too weak to show that saving the many is better than saving the one if the benefit for the one is greater than the benefit for each of the many. Consider an outcome D where P 2 and P 3 are saved but their well-being is slightly lower than P 1’s well-being in outcome A:

To see that no valid argument based on just Anonymity, the Strong Principle of Dominance, and Transitivity could show that D is better than A, consider the Leximax Equity Criterion—a variant of the Leximin Equity Criterion which prioritizes the better off rather than the worse off.

The Leximax Equity Criterion evaluates outcomes with the same population as follows: If the best off in a first outcome are better off than the best off in a second outcome, then the first outcome is better than the second outcome. If the best off in the outcomes are equally well-off, remove one of the best off in each outcome and repeat the test until one outcome emerges as better than the other or there is no one left in the outcomes. If there is no one left in the outcomes, then the outcomes are equally good.

The Leximax Equity Criterion satisfies Anonymity, the Strong Principle of Dominance, and Transitivity, but it entails that A is better than D (and thus that D is not better than A), because the best-off person in A is better off than each of the best-off people in D (d’Aspremont and Gevers 1977, 204). Therefore, since utilitarianism entails that D is better than A, there is at least one utilitarian evaluation that cannot be derived with just Anonymity, the Strong Principle of Dominance, and Transitivity.
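
The counterexample can be checked with a short sketch of the Leximax Equity Criterion for same-population outcomes. Two inputs are our own assumptions: the text says only that D's well-being for P₂ and P₃ is 'slightly lower' than P₁'s 4 units in A, so D = (0, 3, 3) below is a hypothetical choice; and `leximax_better` is a hypothetical name. Comparing the lists sorted from best off to worst off, position by position, is equivalent to repeatedly removing one best-off person from each outcome.

```python
def leximax_better(x, y):
    """True if x is better than y by the Leximax Equity Criterion
    (same population assumed). Sorting ignores identities, so the
    criterion satisfies Anonymity."""
    xs = sorted(x, reverse=True)  # best off first
    ys = sorted(y, reverse=True)
    for a, b in zip(xs, ys):
        if a != b:
            return a > b  # the first difference among the best off decides
    return False  # everyone matched: equally good, so neither is better


A = (4, 0, 0)
D = (0, 3, 3)  # hypothetical values: "slightly lower" than 4 for P2 and P3

print(leximax_better(A, D))  # True: Leximax ranks A above D
print(leximax_better(D, A))  # False
print(sum(D) > sum(A))       # True: the Total Principle ranks D above A
```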

So, in order to justify the evaluation that D is better than A, we need an additional principle. And, if we want to justify this evaluation without moral aggregation, the additional principle cannot rely on moral aggregation. Even so, there is a principle that fits the bill. Consider

Supervenience on Individual Stakes: If the same people exist in outcomes X, Y, U, and V and, for each person P who exists in these outcomes, P’s well-being in X minus P’s well-being in Y is equal to P’s well-being in U minus P’s well-being in V, then X and Y are equally good if and only if U and V are equally good.

This principle says that, if everyone stands to gain or lose the same amount if X were chosen over Y as they would if U were chosen over V, then the evaluation of these pairs should be the same (in the sense that, if the outcomes in one pair are equally good, the outcomes in the other pair should be so too). Note that the consequent of Supervenience on Individual Stakes is biconditional; it only lets us derive that X and Y are equally good conditional on U and V’s being equally good (and vice versa). If the evaluation that U and V are equally good is justified without violating the Individualist Restriction, then Supervenience on Individual Stakes can justify that X and Y are equally good without violating the Individualist Restriction, because, in addition to the evaluation of U and V, Supervenience on Individual Stakes only relies on intrapersonal comparisons of gains and losses between pairs of outcomes. Hence, if U’s being equally as good as V can be justified without moral aggregation, then X’s being equally as good as Y can be justified by Supervenience on Individual Stakes without relying on moral aggregation.

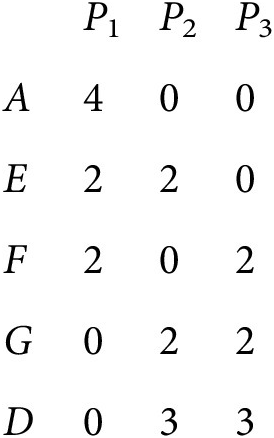

For an example illustrating the application of Supervenience on Individual Stakes, consider the following pairs of outcomes:

If outcome A were chosen over outcome E, then P₁ would be 2 units better off, P₂ would be 2 units worse off, and P₃ would be neither better nor worse off. And, if outcome F were chosen over outcome G, we get the same result: P₁ would be 2 units better off, P₂ would be 2 units worse off, and P₃ would be neither better nor worse off. Since, in this manner, each individual stands to gain or lose the same amount if A were chosen over E as they would if F were chosen over G, Supervenience on Individual Stakes entails that A and E are equally good if F and G are equally good. Suppose that the evaluation that F and G are equally good is justified by Pairwise Anonymity (a justification that doesn’t rely on moral aggregation). Then the evaluation that A and E are equally good can be justified by Supervenience on Individual Stakes without relying on moral aggregation.
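
The intrapersonal differences that Supervenience on Individual Stakes looks at can be computed person by person. In the sketch below, A = (4, 0, 0) comes from section 1; E, F, and G are not given in the text (they appear only in the figure), so the values used are our reconstruction from the stated differences together with steps (1), (3), and (4) of the Extended Argument, and the figure may present them differently. `stakes` is a hypothetical helper.

```python
def stakes(x, y):
    """Each person's gain (+) or loss (-) if x were chosen over y.

    Every entry compares one person's well-being with that same
    person's well-being; no entries are added together.
    """
    if len(x) != len(y):
        raise ValueError("outcomes must contain the same people")
    return tuple(a - b for a, b in zip(x, y))


A = (4, 0, 0)  # (P1, P2, P3)
E = (2, 2, 0)  # reconstructed, see lead-in
F = (2, 0, 2)  # reconstructed
G = (0, 2, 2)  # reconstructed

print(stakes(A, E))  # (2, -2, 0)
print(stakes(F, G))  # (2, -2, 0)
print(stakes(A, E) == stakes(F, G))  # True, so A ~ E iff F ~ G
```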

The point here is not that Supervenience on Individual Stakes is self-evident or uncontroversial. The principle reflects utilitarianism’s insensitivity to whether the distribution of well-being is equal, which is controversial from the perspective of some egalitarian theories.Footnote 17 While there’s no difference with respect to inequality between F and G, there is more inequality in A than in E.Footnote 18 For the purposes of our discussion, however, the key feature of Supervenience on Individual Stakes is that it satisfies the Individualist Restriction and hence that it doesn’t involve moral aggregation.Footnote 19

We have that each one of Pairwise Anonymity, the Strong Principle of Dominance, Supervenience on Individual Stakes, and Transitivity satisfies the Individualist Restriction. And, with these four principles, we can derive that D is better than A. Hence we can justify D’s being better than A without resorting to moral aggregation in any step. To see this, consider once more the following outcomes:

We then argue as follows:

The Extended Argument for Best Outcomes

(1) F and G are equally good. [Pairwise Anonymity]

(2) A and E are equally good. [(1), Supervenience on Individual Stakes]

(3) E and G are equally good. [Pairwise Anonymity]

(4) D is better than G. [The Strong Principle of Dominance]

(5) D is better than A. [(2), (3), (4), Transitivity]
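
Each step of the Extended Argument can be checked against a tuple encoding of the outcomes. The sketch uses A = (4, 0, 0) from section 1, a hypothetical D = (0, 3, 3), and E = (2, 2, 0), F = (2, 0, 2), and G = (0, 2, 2), which we reconstruct from the differences stated in the text and from the requirements of steps (1), (3), and (4) (the figure is not reproduced here). All helper names are our own. Step (5) is not computed; it is the Transitivity chain recorded as a comment.

```python
from itertools import combinations


def is_pairwise_permutation(x, y):
    """y is x with two people's well-being exchanged."""
    for i, j in combinations(range(len(x)), 2):
        swapped = list(x)
        swapped[i], swapped[j] = swapped[j], swapped[i]
        if tuple(swapped) == tuple(y):
            return True
    return False


def strongly_dominates(x, y):
    """No one worse off in x than in y, and someone better off."""
    pairs = list(zip(x, y))
    return all(a >= b for a, b in pairs) and any(a > b for a, b in pairs)


def stakes(x, y):
    """Each person's gain or loss if x were chosen over y."""
    return tuple(a - b for a, b in zip(x, y))


A, D = (4, 0, 0), (0, 3, 3)                 # D is hypothetical
E, F, G = (2, 2, 0), (2, 0, 2), (0, 2, 2)   # reconstructed

step1 = is_pairwise_permutation(F, G)           # (1) F and G equally good
step2 = step1 and stakes(A, E) == stakes(F, G)  # (2) A and E equally good
step3 = is_pairwise_permutation(E, G)           # (3) E and G equally good
step4 = strongly_dominates(D, G)                # (4) D better than G
# (5) By Transitivity: A ~ E, E ~ G, and D > G together give D > A.
print(step1, step2, step3, step4)  # True True True True
print(sum(D) > sum(A))             # True: agrees with the Total Principle
```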

Hence we have an argument that it can be better that two people each get a smaller benefit than that one person gets a larger benefit. And, crucially, this argument does not rely on moral aggregation.

3. A justification of utilitarianism without moral aggregation

The Extended Argument for Best Outcomes can be used to defend utilitarianism against the Objection from Moral Aggregation. The argument’s four principles jointly entail, as we shall see, the same evaluations as utilitarianism given a fixed population of two or more people. In other words, the four principles of the Extended Argument for Best Outcomes jointly entail a value ranking of any pair of outcomes in which the same (two or more) people exist, and this ranking will coincide with the utilitarian value ranking of these outcomes. So, given that there are at least two people, these principles entail a version of utilitarianism which is restricted to evaluations with a fixed population, namely,

Fixed-Population Utilitarianism: If the same people exist in outcomes X and Y, then X is at least as good as Y if and only if the sum total of well-being is at least as great in X as in Y.

Moreover, two of the principles in the Extended Argument for Best Outcomes are stronger than necessary. We can weaken both Transitivity and the Strong Principle of Dominance and still derive Fixed-Population Utilitarianism. Consider the following weakening of Transitivity:Footnote 20

Fixed-Population Transitivity: If (i) the same people exist in outcomes X, Y, and Z, (ii) X is at least as good as Y, and (iii) Y is at least as good as Z, then X is at least as good as Z.

And consider the following weakening of the Strong Principle of Dominance:Footnote 21

The Weak Principle of Dominance: If (i) some person exists in outcomes X and Y, (ii) the same people exist in X and Y, and (iii) each of these people has higher well-being in X than in Y, then X is better than Y.
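
The sense in which the Weak Principle of Dominance is weaker can be seen by comparing the two antecedents on the outcomes B = (0, 4, 0) and C = (0, 4, 4) from section 1: the strong antecedent holds, but the weak one, which requires everyone to be strictly better off, does not. The function names are hypothetical, and outcomes are again assumed to be tuples with the same people in the same order.

```python
def strong_antecedent(x, y):
    """No one is worse off in x than in y, and someone is better off."""
    pairs = list(zip(x, y))
    return (len(x) == len(y)
            and all(a >= b for a, b in pairs)
            and any(a > b for a, b in pairs))


def weak_antecedent(x, y):
    """Someone exists, and everyone is strictly better off in x than in y."""
    return len(x) > 0 and len(x) == len(y) and all(a > b for a, b in zip(x, y))


B, C = (0, 4, 0), (0, 4, 4)
print(strong_antecedent(C, B), weak_antecedent(C, B))  # True False

X, Y = (3, 3, 3), (2, 2, 2)  # hypothetical perfectly equal outcomes
print(strong_antecedent(X, Y), weak_antecedent(X, Y))  # True True
```

Whenever the weak antecedent holds, the strong one does too, so the Weak Principle licenses a subset of the Strong Principle's verdicts.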

These weakened principles along with Pairwise Anonymity and Supervenience on Individual Stakes jointly entail the same evaluations as Fixed-Population Utilitarianism for finite populations of at least two people. We can prove the following theorem:Footnote 22

Given that the total number of people is finite and greater than one, Fixed-Population Utilitarianism is true if and only if the following principles are all true:

- Fixed-Population Transitivity,
- Pairwise Anonymity,
- Supervenience on Individual Stakes, and
- The Weak Principle of Dominance.

From this theorem, we have that each one of Fixed-Population Utilitarianism’s evaluations of outcomes with at least two people can be justified by Fixed-Population Transitivity, Pairwise Anonymity, Supervenience on Individual Stakes, and the Weak Principle of Dominance. And, since none of these principles involves moral aggregation, this justification of Fixed-Population Utilitarianism does not violate the Individualist Restriction.Footnote 23 So utilitarianism can sidestep the Objection from Moral Aggregation.Footnote 24

To derive the same evaluations as utilitarianism for fixed populations with fewer than two people, we also need the following principle of the logic of value (Arrow 1951, 14; Chisholm and Sosa 1966, 248; and Sen 1970, 2; 2017, 47):Footnote 25

Reflexivity: Outcome X is at least as good as X.

Reflexivity does not involve moral aggregation. It just compares an outcome with itself. So there are no relevant claims of any individual. We can prove the following corollary:Footnote 26

Given that the total number of people is finite, Fixed-Population Utilitarianism is true if and only if the following principles are all true:

- Fixed-Population Transitivity,
- Pairwise Anonymity,
- Reflexivity,
- Supervenience on Individual Stakes, and
- The Weak Principle of Dominance.

But, since we only need Reflexivity to evaluate outcomes with fewer than two people, this corollary won’t matter for our discussion of moral aggregation. Moral aggregation requires at least two people.

It may be objected that, if we were to justify utilitarian evaluations with these non-aggregative principles, we would still end up with extensionally the same evaluations as if we evaluated outcomes on the basis of their sum total of well-being. So we would still evaluate as if we evaluated on the basis of moral aggregation. But, first, note that we would also evaluate as if we didn’t evaluate on the basis of moral aggregation, since we would also evaluate as if we merely applied the above principles. And, second, note that, however we evaluate outcomes, there will always be a way of justifying an extensionally equivalent evaluation of outcomes on the basis of some (perhaps convoluted) form of moral aggregation. Hence, on the one hand, if the Objection from Moral Aggregation is that we shouldn’t evaluate as if we evaluated on the basis of moral aggregation, it seems to prove too much, since it would rule out any way of evaluating outcomes. On the other hand, if the objection is merely that moral aggregation shouldn’t figure in the justification of evaluations, then it shouldn’t cause concern about utilitarianism, since, by way of the above principles, the utilitarian evaluations can be justified without moral aggregation.

Acknowledgments

I wish to thank Gustaf Arrhenius, Krister Bykvist, Bruce Chapman, Richard Yetter Chappell, Tomi Francis, Bernward Gesang, Christopher Jay, Kacper Kowalczyk, Mary Leng, Alastair Norcross, Martin Peterson, Christian Piller, Mozaffar Qizilbash, Wlodek Rabinowicz, Daniel Ramöller, Korbinian Rueger, Dean Spears, Tom Stoneham, Frans Svensson, Elliott Thornley, John A. Weymark, the audience at ISUS 2018, Karlsruhe Institute of Technology (26 July 2018), and two anonymous referees for valuable comments. Financial support from the Swedish Foundation for Humanities and Social Sciences is gratefully acknowledged.

Johan E. Gustafsson is an associate professor at University of Gothenburg and Institute for Futures Studies and a senior researcher at University of York. He is currently at work on the book Money-Pump Arguments, which is forthcoming from Cambridge University Press.

Appendices

A. Proof of the theorem

We shall prove the theorem that, given that the total number of people is finite and greater than one, Fixed-Population Utilitarianism is true if and only if Fixed-Population Transitivity, Pairwise Anonymity, Supervenience on Individual Stakes, and the Weak Principle of Dominance are all true.Footnote 27

We begin with the right-to-left direction of the biconditional. Suppose that X and Y are outcomes with the same people P₁, …, Pₙ. Consider, first, the case where X and Y have the same sum total of well-being. Starting with this pair of outcomes, we will consider a sequence of pairs of outcomes where, in each pair, the outcomes are equally good if the outcomes in the next pair are equally as good as each other, until we get to a pair of outcomes that we can show are equally as good as each other.

(sort): Perform the following sorting procedure on each outcome in the pair: as long as it is not the case, for each i = 1, …, n − 1, that Pᵢ has at least as high well-being as Pᵢ₊₁ in the outcome, find the smallest integer j such that Pⱼ₊₁ has higher well-being than Pⱼ in the outcome and replace the outcome with an outcome that only differs in that the identities of Pⱼ and Pⱼ₊₁ have been permuted. It follows, from Pairwise Anonymity, that each new outcome is equally as good as the outcome it replaces. And we have, from Fixed-Population Transitivity, that the resulting sorted outcome is equally as good as the one we started with. Since there are only a finite number of people in the outcomes, this procedure will provide, in a finite number of iterations, a new pair of outcomes with people ordered (P₁, …, Pₙ) by decreasing well-being. And the outcomes in this new pair are equally good if and only if the outcomes in the previous pair are equally good.
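
The (sort) step can be sketched as follows, with people indexed from P₁ in tuple order. This is our illustration, not part of the proof: `sort_step` is a hypothetical name, and it records each exchange of adjacent people, since each such exchange is what Pairwise Anonymity licenses. Each exchange removes exactly one pair of people who are out of order, which is why the loop terminates for a finite population.

```python
def sort_step(outcome):
    """Apply (sort): while some P(j+1) is better off than P(j), exchange the
    first such adjacent pair. Returns the sorted outcome and the exchanges,
    given as 1-based person indices."""
    w = list(outcome)
    swaps = []
    while True:
        # 0-based index k corresponds to people P(k+1) and P(k+2)
        k = next((i for i in range(len(w) - 1) if w[i + 1] > w[i]), None)
        if k is None:
            return tuple(w), swaps  # non-increasing order reached
        w[k], w[k + 1] = w[k + 1], w[k]
        swaps.append((k + 1, k + 2))


print(sort_step((0, 4, 4)))  # ((4, 4, 0), [(1, 2), (2, 3)])
```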

(decrease): Then, with the resulting pair of outcomes with people ordered by decreasing well-being, replace those outcomes by two new outcomes that only differ from the old two respectively in that each person’s well-being is decreased by whichever is lower of that person’s well-being levels in the old pair of outcomes. We have, by Supervenience on Individual Stakes, that the outcomes in the new pair are equally good if and only if the outcomes in the old pair are equally good.

Repeat step sort followed by step decrease until, after a finite number of iterations of these steps, we have a pair of outcomes in which everyone has zero well-being. To see that this is what we’ll end up with, note that we started with two outcomes with an equal sum total of well-being and, after sort or decrease, we still have two outcomes with an equal sum total of well-being since sort leaves the sum totals of well-being unchanged and decrease subtracts the same amount of well-being from both outcomes. After the first iteration of decrease, any negative well-being has been cancelled out. From then on, since the outcomes have the same sum total of well-being, there are people with positive well-being in one of the outcomes if and only if there are people with positive well-being in the other outcome. Hence, as long as not everyone has zero well-being, after each further iteration of sort there must be at least one person (specifically, P₁) who has positive well-being in both outcomes. In the next iteration of decrease, this person will then get their well-being decreased by the lower of their well-being levels in the two outcomes and thereby end up with zero well-being in at least one of the outcomes. So, with each iteration of decrease after the first one, at least one further person ends up with zero well-being in one of the outcomes. Moreover, since all negative well-being has been cancelled out, decrease leaves all people with zero well-being as they are. And sort leaves the number of people with zero well-being unchanged. Hence, with each further iteration of decrease, we’ll get more and more people with zero well-being in the outcomes. So, after a finite number of iterations of sort and decrease, we end up with a pair of outcomes X′ and Y′ where everyone has zero well-being.
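
The whole reduction can be simulated to see the termination argument at work. The sketch assumes exact arithmetic (integers here; `fractions.Fraction` would also do), uses Python's `sorted` in place of the pairwise exchanges of (sort) since it yields the same non-increasing ordering, and stops once everyone has zero well-being in both outcomes. `reduce_pair` is a hypothetical name, and the example pair is our own.

```python
def reduce_pair(x, y, max_rounds=10_000):
    """Repeat (sort) then (decrease) on two same-population outcomes with equal
    sum totals; return every pair visited, ending with the all-zero pair."""
    if len(x) != len(y) or sum(x) != sum(y):
        raise ValueError("need the same people and equal sum totals")
    x, y = tuple(x), tuple(y)
    history = [(x, y)]
    for _ in range(max_rounds):
        if all(v == 0 for v in x + y):
            return history
        # (sort): order each outcome by non-increasing well-being
        x = tuple(sorted(x, reverse=True))
        y = tuple(sorted(y, reverse=True))
        # (decrease): lower each person by the lower of their two levels
        lows = [min(a, b) for a, b in zip(x, y)]
        x = tuple(a - m for a, m in zip(x, lows))
        y = tuple(b - m for b, m in zip(y, lows))
        history.append((x, y))
    raise RuntimeError("did not reach the all-zero pair")


for pair in reduce_pair((-1, 3), (1, 1)):
    print(pair)
# ((-1, 3), (1, 1))
# ((2, 0), (0, 2))
# ((0, 0), (0, 0))
```

The second line shows the first (decrease) cancelling the negative level, and every pair visited keeps equal sum totals.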

Then, let X′′ be an outcome that is just like X′ except that the identities of P₁ and P₂ have been permuted. By Pairwise Anonymity, we have that X′ and X′′ are equally good. For each person in these outcomes, the difference in their well-being between X′ and Y′ is the same as the difference in their well-being between X′ and X′′—namely, no difference at all. So, by Supervenience on Individual Stakes, we have that X′ and Y′ are equally good, since X′ and X′′ are equally good.

Since the outcomes in the final pair are equally good (that is, X′ and Y′ are equally good), we have that, in each pair in the sequence of pairs we have considered, the outcomes are equally good. Thus we can conclude that the outcomes in the pair we started with are equally good—that is, X and Y are equally good. So we have that, if the sum total of well-being is the same in X and Y, then X and Y are equally good.

We now turn to the case where the sum total of well-being is greater in one of the outcomes. So suppose now that the sum total of well-being is greater in X than in Y. And, as before, suppose that the same people exist in X and Y. Let X′ and Y′ be two outcomes such that (i) the same people exist in X, Y, X′, and Y′, (ii) X′ has the same sum total of well-being as X, (iii) Y′ has the same sum total of well-being as Y, and (iv) each of X′ and Y′ is perfectly equal—that is, in each of these outcomes, everyone has the same level of well-being. Hence we have that the same people exist in X′ and Y′ and that each of these people has higher well-being in X′ than in Y′. Then, from the Weak Principle of Dominance, we have that X′ is better than Y′. Since X and X′ have the same sum total of well-being, we have, by our previous result, that X and X′ are equally good. And, since Y and Y′ have the same sum total of well-being, we similarly have that Y and Y′ are equally good. Then, from Fixed-Population Transitivity, we have that X is better than Y.
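
The construction of X′ and Y′ can be sketched by giving everyone the population's average well-being, which keeps the sum total fixed. Exact fractions are used so that the sums match exactly. `equalize` is a hypothetical helper, and the example outcomes (A = (4, 0, 0) from section 1 and a hypothetical D = (0, 3, 3)) are illustrative choices of ours.

```python
from fractions import Fraction


def equalize(outcome):
    """A perfectly equal outcome with the same people and the same sum total."""
    n = len(outcome)
    level = Fraction(sum(outcome), n)  # average well-being, computed exactly
    return tuple([level] * n)


def weak_antecedent(x, y):
    """Everyone (and at least one person) is strictly better off in x than in y."""
    return len(x) > 0 and len(x) == len(y) and all(a > b for a, b in zip(x, y))


X = (0, 3, 3)  # greater sum total (6)
Y = (4, 0, 0)  # smaller sum total (4)
X_eq, Y_eq = equalize(X), equalize(Y)

print(sum(X_eq) == sum(X), sum(Y_eq) == sum(Y))  # True True
print(weak_antecedent(X_eq, Y_eq))               # True: 2 > 4/3 for everyone
```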

So, combining these results, we have that X is at least as good as Y if and only if the sum total of well-being is at least as great in X as in Y. Hence, if Fixed-Population Transitivity, Pairwise Anonymity, Supervenience on Individual Stakes, and the Weak Principle of Dominance are all true, then Fixed-Population Utilitarianism is true given a finite population of at least two people. The second part of the proof—the proof of the biconditional’s left-to-right direction—is trivial.

B. Proof of the corollary

We shall prove the corollary that, given that the total number of people is finite, Fixed-Population Utilitarianism is true if and only if Fixed-Population Transitivity, Pairwise Anonymity, Reflexivity, Supervenience on Individual Stakes, and the Weak Principle of Dominance are all true.

We begin with the right-to-left direction of the biconditional. Given the theorem we proved in appendix A, we only need to cover outcomes with fewer than two people. First, we will consider the case where X and Y have the same sum total of well-being.

Suppose that only one person exists in X and Y. We have, by Reflexivity, that X is equally as good as X. And, for the person in X and Y, the difference in their well-being between X and Y is the same as the difference in their well-being between X and X—namely, no difference at all. So, by Supervenience on Individual Stakes, we have that X is equally as good as Y since X is equally as good as X.

Next, suppose that no people exist in X and Y. We have, by Reflexivity, that X is equally as good as X. Since no people exist in X and Y, we (trivially) have that the same people exist in these outcomes.Footnote 28 And the condition on each person’s well-being differences holds vacuously. Then, by Supervenience on Individual Stakes, we have that X and Y are equally good, since X is equally as good as X.

Finally, we turn to the case where the sum total of well-being is greater in X than in Y. In this case, there has to be one person who exists in X and Y and who has higher well-being in X than in Y. Therefore, since there is only one person in X and Y and this person has higher well-being in X than in Y, we have, from the Weak Principle of Dominance, that X is better than Y.

Hence, if Fixed-Population Transitivity, Pairwise Anonymity, Reflexivity, Supervenience on Individual Stakes, and the Weak Principle of Dominance are all true, then Fixed-Population Utilitarianism is true given a finite population. As before, the proof of the biconditional’s left-to-right direction is trivial.