Impact Statement

Traditional Bayesian model calibration techniques can underestimate predictive uncertainty when the computational model cannot fully represent the observed data. This paper investigates how different likelihood formulations within an embedded model inadequacy framework influence the handling of measurement noise and model form uncertainties. The results highlight that the likelihood specification influences the posterior distribution, which subsequently governs the uncertainty propagated to predictions and unobserved QoIs. This provides valuable guidance for practitioners employing embedded model inadequacy in simulation-based calibration. By providing a more robust framework for managing uncertainties, this work advances the integration of simulations with measurement data, supporting practical applications such as digital twins.

1. Introduction

In the last few decades, advances in sensor technology have made available large quantities of affordable data that reflect the current state of physical systems and processes. In parallel, simulation models have become ubiquitous in science and engineering for generating predictions on the behavior of a given system based on physical laws. With the ongoing transition to an Industry 4.0 paradigm and the proliferation of Digital Twins, the challenge of calibrating simulation models based on data from physical sensors is becoming increasingly relevant (Vaidya et al., Reference Vaidya, Ambad and Bhosle2018). In the specific case of Digital Twins of physical systems that use simulations to predict the behavior of their real counterpart and make decisions on their behalf, the accuracy and reliability of such predictions are essential (Andrés Arcones et al., Reference Andrés Arcones, Weiser, Koutsourelakis and Unger2023). Therefore, their uncertainty must be adequately quantified to achieve such trustworthy predictions.

In most cases, the calibration of the computational models is achieved by estimating a set of parameters that control their behavior based on available observations from the modeled system. Although more uncertainty sources can be identified (Walker et al., Reference Walker, Harremoës, Rotmans, van der Sluijs, van Asselt, Janssen and Krayer von Krauss2003), this process introduces two main ones: the error attributed to noise or uncontrolled variations in the data and the discrepancy created by the assumptions used to generate the computational model. They can be related to aleatoric and epistemic uncertainty sources, respectively. While noise errors can be satisfactorily estimated from the observations, the so-called model discrepancy or model inadequacy presents further challenges. All models are based on assumptions and simplifications that are unavoidable when tackling an infinitely complex reality. Systematic reviews on different approaches for dealing with this model inadequacy exist (Campbell, Reference Campbell2006; Bojke et al., Reference Bojke, Claxton, Sculpher and Palmer2009; Gupta et al., Reference Gupta, Clark, Vrugt, Abramowitz and Ye2012; Pernot and Cailliez, Reference Pernot and Cailliez2017; Sung and Tuo, Reference Sung and Tuo2024), but they all coincide in the need for further research to achieve reliable methods for quantifying the model form uncertainty.

Bayesian approaches are usually implemented to infer the model parameters (Robert, Reference Robert2007; Gelman et al., Reference Gelman, Carlin, Stern, Dunson, Vehtari and Rubin2013), which allows for obtaining a probability distribution for those parameters based on the observed data. This distribution will narrow down to the optimal parameter value, which may not be able to represent the observations in the presence of model inadequacy (Kaipio and Somersalo, Reference Kaipio and Somersalo2006). One classical solution to this problem is the framework proposed by Kennedy and O’Hagan (Reference Kennedy and O’Hagan2001), which extends the model output with a flexible term that corrects the predictions to better reflect the observations. Since its conception, several extensions have been proposed within the framework (Bayarri et al., Reference Bayarri, Berger and Liu2009; Brynjarsdóttir and O’Hagan, Reference Brynjarsdóttir and O’Hagan2014; Plumlee, Reference Plumlee2017; Barbillon et al., Reference Barbillon, Forte and Paulo2024; Leoni et al., Reference Leoni, Maître, Rodio and Congedo2024). However, one key disadvantage of such implementations is the impossibility of transferring the inferred estimation of the model inadequacy term to other derived quantities of interest (QoI) computed with the same calibrated model (Andrés Arcones et al., Reference Andrés Arcones, Weiser, Koutsourelakis and Unger2024). In contrast to these external correction approaches that associate the uncertainty with the predictions from the model, internal corrections arise as an alternative by attributing the uncertainty to its components instead (Wu et al., Reference Wu, Levine, Schneider and Stuart2024). In this way, the inferred uncertainty can be pushed forward to any QoI that uses the same model parameters for its computation.

This family of approaches, also called parameter uncertainty inflation (PUI) methods (Pernot, Reference Pernot2017; Pernot and Cailliez, Reference Pernot and Cailliez2017) have been gaining traction in the last years. The most prominent approach is Sargsyan’s parameter embedding (Sargsyan et al., Reference Sargsyan, Najm and Ghanem2015, Reference Sargsyan, Huan and Najm2019), which adds a stochastic dimension to the variables to be inferred and fits it together with the other parameters. This methodology has been successfully implemented in the context of ignition reaction (Huan et al., Reference Huan, Safta, Sargsyan, Geraci, Eldred, Vane, Lacazek, Oefelein and Najm2017). As alternatives to this methodology, Mortensen et al. (Reference Mortensen, Kaasbjerg, Frederiksen, Nørskov, Sethna and Jacobsen2005) proposes the statistical manipulation of the inferred parameter variance, and Wu et al. (Reference Wu, Levine, Schneider and Stuart2024) suggests inferring the internal model error structure through Kalman filters.

Alternatively, and related to these internal correction strategies, Stochastic Model Updating (SMU) methods have been developed to jointly address aleatory and epistemic uncertainties by updating the probabilistic descriptions of model parameters based on discrepancies between simulations and experimental data. These methods typically require multiple observations per prediction point to separate the two uncertainty sources and to estimate probability distributions directly. For example, Bi et al. (Reference Bi, Broggi and Beer2019) proposed an SMU framework based on the Bhattacharyya distance within an Approximate Bayesian Computation (ABC) setting, while Lee et al. (Reference Lee, Yaoyama, Kitahara and Itoi2025) introduced a latent-space-based SMU formulation that leverages variational autoencoders to handle high-dimensional data efficiently. In contrast, the embedded model inadequacy framework adopted in this work does not require multiple observations to account for the epistemic uncertainty, as the aleatoric part is prescribed or inferred as a parameter. This distinction makes the embedded approach particularly suited to simulation-based model calibration problems where repeated experimental measurements are rarely feasible.

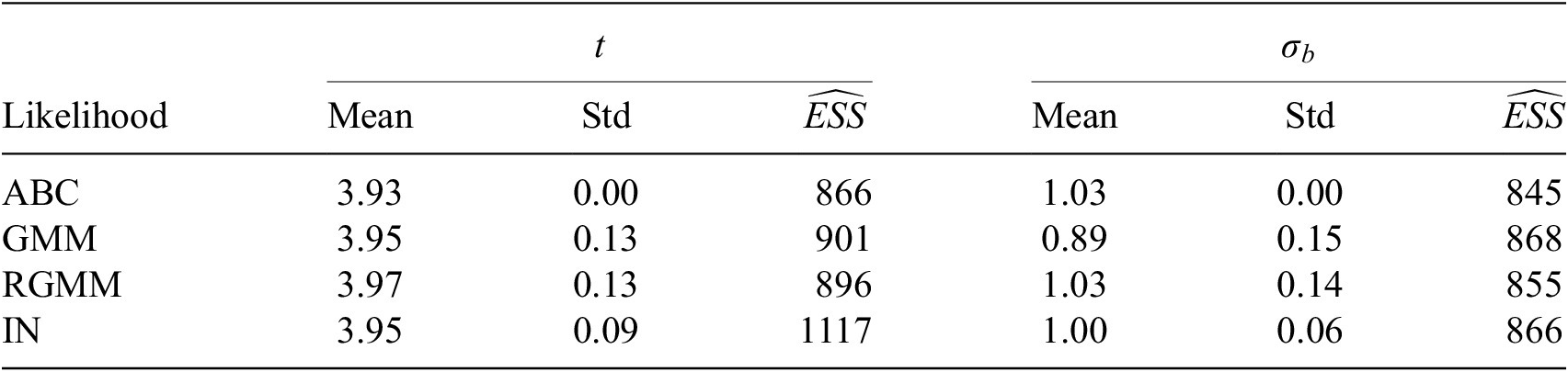

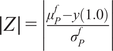

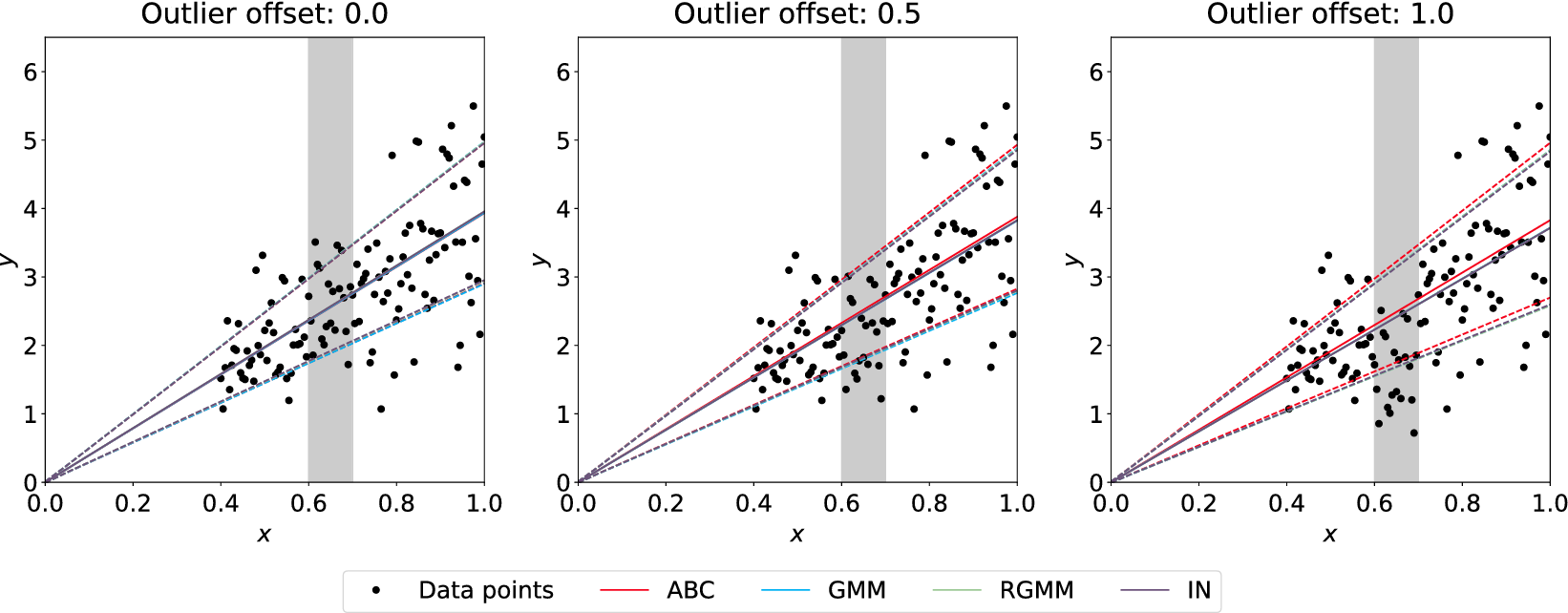

The aforementioned formulations have the advantage of offering a flexible implementation that can be adapted to the requirements of the problem. Despite being the most promising approach for dealing with model error through an internal correction, Sargsyan’s proposal requires further refinement for complex inference cases (Pernot, Reference Pernot2017). This paper aims to address the challenges that arise when using the embedded approach for the calibration of simulation models from sensor data, and in particular a) the impact of misspecified noise models, b) the presence of observations that cannot be explained with modifications of the estimated parameters, and c) how the choice of likelihood affects the uncertainty propagation to other QoIs. A modification in how the prescribed noise is handled is introduced in the two main likelihood formulations proposed in Sargsyan et al. (Reference Sargsyan, Najm and Ghanem2015) for the embedded problem—the Independent Normal (IN) and Approximate Bayesian Computation (ABC) likelihoods. The IN likelihood tends to overemphasize low variance locations (Sargsyan et al., Reference Sargsyan, Najm and Ghanem2015) and it will be shown that ABC is specially sensitive to misspecified noise and locations where the model discrepancy cannot be reduced by variations in the parameters. Two new formulations based on the statistical convergence of the residual distribution aimed to alleviate the flaws of IN and ABC are proposed in this paper: the Global Moment-matching (GMM) and Relative Global Moment-matching (RGMM) likelihoods. Further insight is provided for the choice in the control parameters of the likelihoods and their potential shortcomings. Finally, an analysis of the propagation of the uncertainties through QoI is developed. The QoIs obtained from the computational model are observed by virtual sensors (Andrés Arcones et al., Reference Andrés Arcones, Weiser, Koutsourelakis and Unger2023). In contrast with real sensors that are installed in the physical system, virtual sensors observe values provided by the computational model. They are not limited to observable quantities as the real sensors, but can represent any QoI. Quantifying the uncertainty in the output of virtual sensors is key for an assessment of the reliability of the predictions of QoIs.

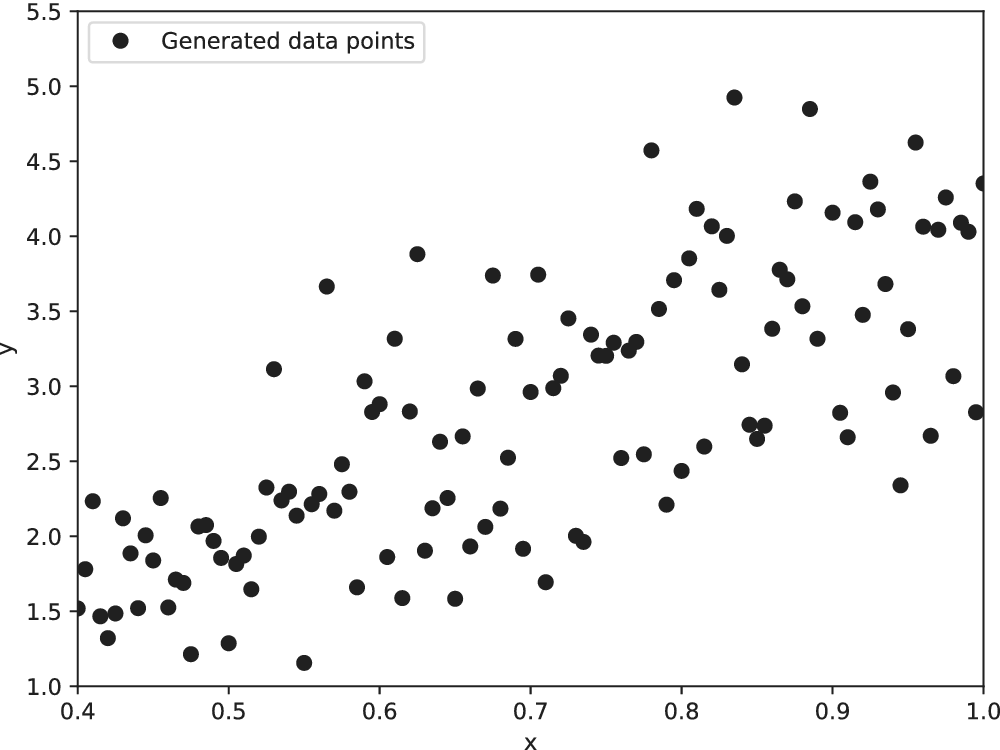

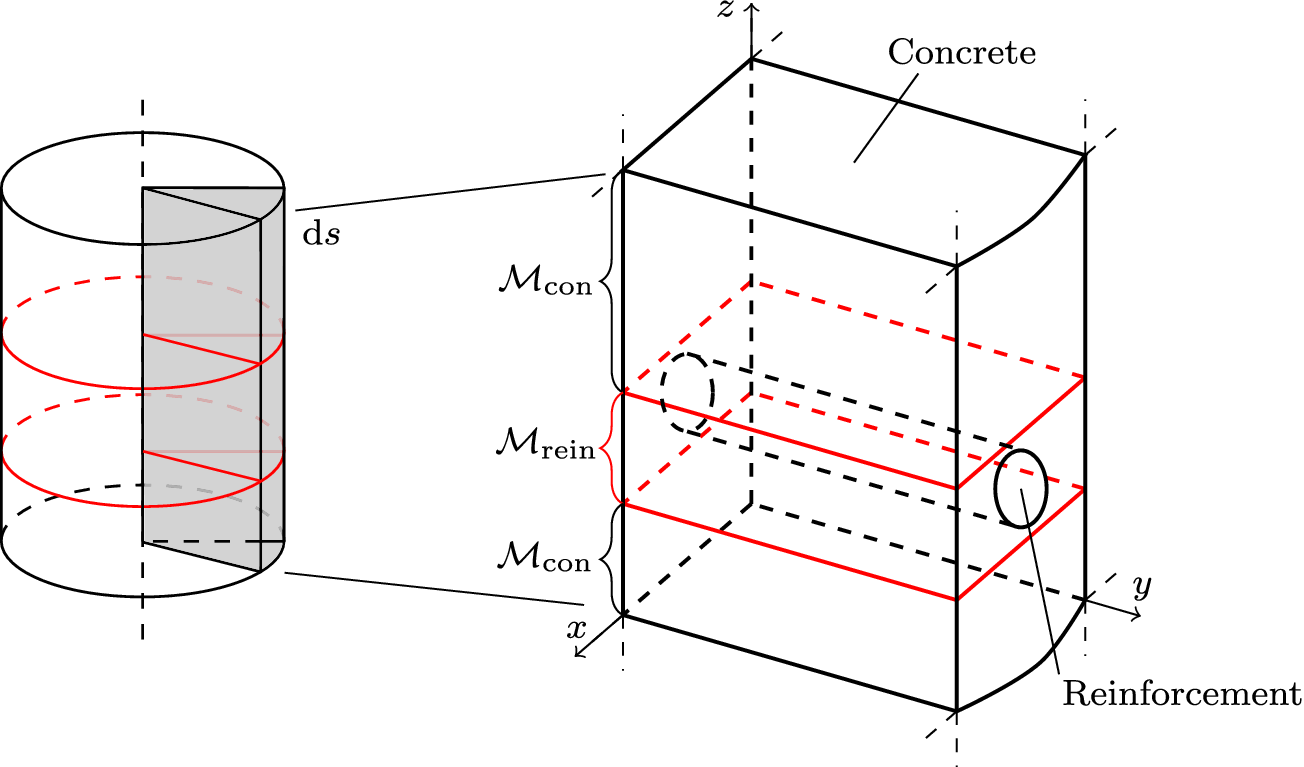

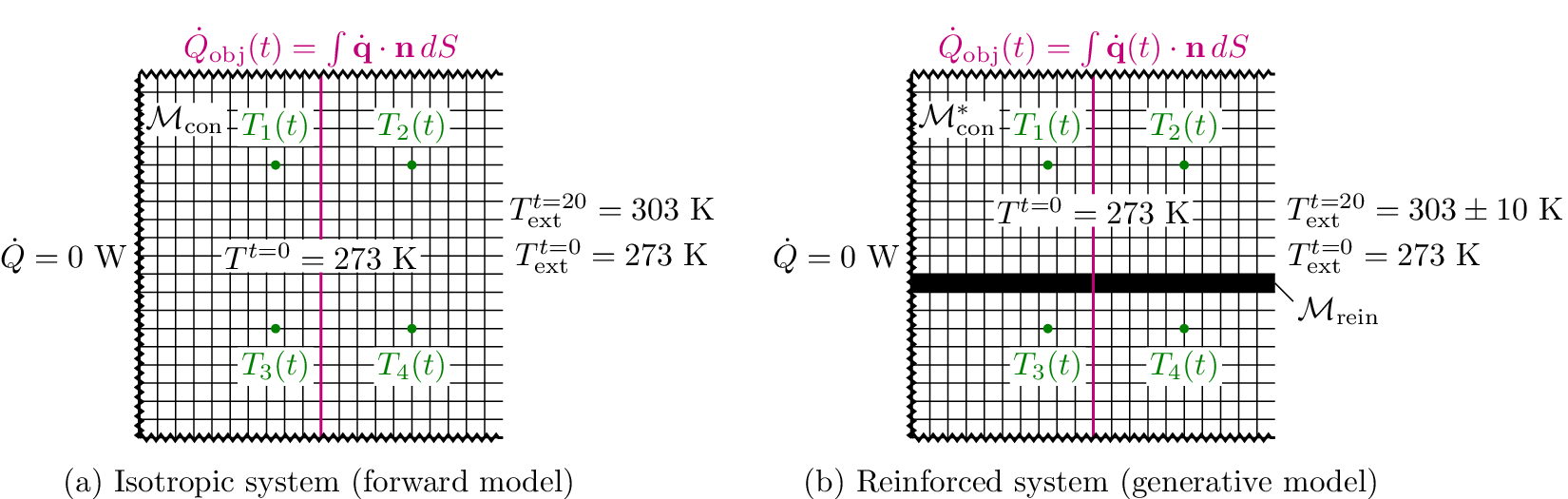

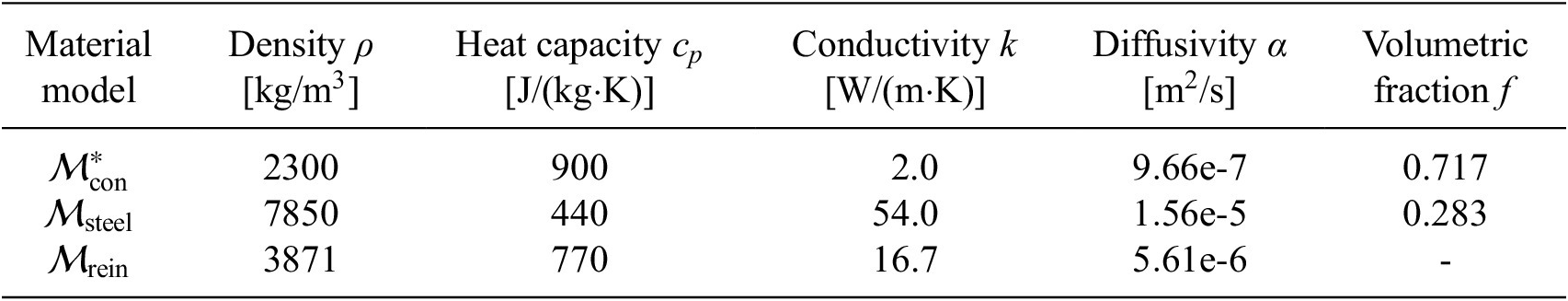

The advantages and disadvantages of each formulation will first be illustrated on a one-dimensional, linear example with different variations that reflect the possible errors in the observations. Afterwards, a more complex transient thermal model simulating the heat transfer through a reinforced concrete structure is investigated. The objective of the more complex case is to test the propagation of the inferred uncertainty using each different likelihood to a non-related QoI. The structure of the rest of the paper is as follows: Section 2 presents the embedded approach, the likelihood functions and the proposed extensions, Section 3 includes the simple, linear model with its variations (Section 3.1) and the complex transient thermal one (Section 3.2), and conclusions and possible extensions are provided in Section 4. The methods here implemented are included in the Python package probeye ([dataset] BAMResearch, 2024).

2. Methodology

2.1. Model form uncertainty framework

Let

![]() $ f $

be a computable, deterministic function

$ f $

be a computable, deterministic function

![]() $ f:\Theta \times {\mathrm{\mathbb{R}}}^{n_x}\to {\mathrm{\mathbb{R}}}^{n_z} $

for a

$ f:\Theta \times {\mathrm{\mathbb{R}}}^{n_x}\to {\mathrm{\mathbb{R}}}^{n_z} $

for a

![]() $ {n}_x $

-dimensional input

$ {n}_x $

-dimensional input

![]() $ x\in {\mathrm{\mathbb{R}}}^{n_x} $

, parametrized through a set of

$ x\in {\mathrm{\mathbb{R}}}^{n_x} $

, parametrized through a set of

![]() $ {n}_{\theta } $

parameters

$ {n}_{\theta } $

parameters

![]() $ \boldsymbol{\theta} \in \Theta \subseteq {\mathrm{\mathbb{R}}}^{n_{\theta }} $

, which have been restricted to real values for simplicity. This function

$ \boldsymbol{\theta} \in \Theta \subseteq {\mathrm{\mathbb{R}}}^{n_{\theta }} $

, which have been restricted to real values for simplicity. This function

![]() $ f $

models the real response

$ f $

models the real response

![]() $ z:{\mathrm{\mathbb{R}}}^{n_x}\to {\mathrm{\mathbb{R}}}^{n_z} $

, defined as a real-valued map that generates the

$ z:{\mathrm{\mathbb{R}}}^{n_x}\to {\mathrm{\mathbb{R}}}^{n_z} $

, defined as a real-valued map that generates the

![]() $ {n}_z $

-dimensional response of a real system. In that case, an additional error term

$ {n}_z $

-dimensional response of a real system. In that case, an additional error term

![]() $ {\varepsilon}_{\mathrm{model}}=z-f $

rooted in the inability of

$ {\varepsilon}_{\mathrm{model}}=z-f $

rooted in the inability of

![]() $ f $

to exactly reproduce

$ f $

to exactly reproduce

![]() $ z $

is introduced. This model inadequacy term

$ z $

is introduced. This model inadequacy term

![]() $ {\varepsilon}_{\mathrm{model}} $

will be referred to as model form uncertainty. If the same response is measured through sensor observations

$ {\varepsilon}_{\mathrm{model}} $

will be referred to as model form uncertainty. If the same response is measured through sensor observations

![]() $ y $

, a noise

$ y $

, a noise

![]() $ {\varepsilon}_{\mathrm{noise}} $

will introduce an additional model inadequacy with

$ {\varepsilon}_{\mathrm{noise}} $

will introduce an additional model inadequacy with

![]() $ z $

. The measurement noise

$ z $

. The measurement noise

![]() $ {\varepsilon}_{\mathrm{noise}} $

is typically prescribed by the sensor manufacturer, often assuming an additive, homoscedastic Gaussian error model with known variance

$ {\varepsilon}_{\mathrm{noise}} $

is typically prescribed by the sensor manufacturer, often assuming an additive, homoscedastic Gaussian error model with known variance

![]() $ {\left({\sigma}_N\right)}^2 $

, whereas in other situations, its statistical properties are estimated directly from repeated or reference data observations. In this work, we generally adopt the former case unless explicitly stated otherwise, as it enables a tractable analytical treatment of the noise contribution consistent with the objectives of this study. The relation between observations and computational model predictions can be expressed as

$ {\left({\sigma}_N\right)}^2 $

, whereas in other situations, its statistical properties are estimated directly from repeated or reference data observations. In this work, we generally adopt the former case unless explicitly stated otherwise, as it enables a tractable analytical treatment of the noise contribution consistent with the objectives of this study. The relation between observations and computational model predictions can be expressed as

modeling the model inadequacy terms additively. This formulation was introduced in the seminal paper of Kennedy and O’Hagan (Reference Kennedy and O’Hagan2001), where they state the need for including the model error in the calibration of computational models. Alternative formulations are possible, such as a multiplicative relation between model inadequacy terms and model predictions.

The objective of the so-called inverse problem is to estimate the values for the parameters

![]() $ {\boldsymbol{\theta}}^{\ast} $

such that

$ {\boldsymbol{\theta}}^{\ast} $

such that

![]() $ f $

best approximates

$ f $

best approximates

![]() $ z $

as

$ z $

as

where

![]() $ \parallel \cdot \parallel $

is a distance to be defined later. As

$ \parallel \cdot \parallel $

is a distance to be defined later. As

![]() $ z $

is not generally known, it is commonly substituted by the set of

$ z $

is not generally known, it is commonly substituted by the set of

![]() $ {n}_y $

sensor observations

$ {n}_y $

sensor observations

![]() $ \boldsymbol{y} $

for known input parameters

$ \boldsymbol{y} $

for known input parameters

![]() $ \boldsymbol{x} $

, where bold notation will be used to denote vector quantities. The inverse problem then transforms to

$ \boldsymbol{x} $

, where bold notation will be used to denote vector quantities. The inverse problem then transforms to

Bayesian approaches are a popular choice to solve the inverse problem (Kaipio and Somersalo, Reference Kaipio and Somersalo2006). They provide a posterior probability density

![]() $ \pi \left(\boldsymbol{\theta} |\boldsymbol{y}\right) $

on the parameters

$ \pi \left(\boldsymbol{\theta} |\boldsymbol{y}\right) $

on the parameters

![]() $ \boldsymbol{\theta} $

given the observations

$ \boldsymbol{\theta} $

given the observations

![]() $ \boldsymbol{y} $

and the computational model

$ \boldsymbol{y} $

and the computational model

![]() $ \boldsymbol{f}\left(\boldsymbol{\theta}, \boldsymbol{x}\right) $

. The application of Bayes’ theorem yields

$ \boldsymbol{f}\left(\boldsymbol{\theta}, \boldsymbol{x}\right) $

. The application of Bayes’ theorem yields

where

![]() $ \pi \left(\boldsymbol{\theta} |\boldsymbol{y}\right) $

is the posterior probability distribution,

$ \pi \left(\boldsymbol{\theta} |\boldsymbol{y}\right) $

is the posterior probability distribution,

![]() $ \pi \left(\boldsymbol{\theta} \right) $

is the prior probability distribution that encompasses the previous knowledge on the unknown parameters,

$ \pi \left(\boldsymbol{\theta} \right) $

is the prior probability distribution that encompasses the previous knowledge on the unknown parameters,

![]() $ \pi \left(\boldsymbol{y}|\boldsymbol{\theta} \right) $

is the likelihood of the observations

$ \pi \left(\boldsymbol{y}|\boldsymbol{\theta} \right) $

is the likelihood of the observations

![]() $ \boldsymbol{y} $

having been generated by

$ \boldsymbol{y} $

having been generated by

![]() $ \boldsymbol{\theta} $

and

$ \boldsymbol{\theta} $

and

![]() $ \pi \left(\boldsymbol{y}\right) $

is the marginal probability distribution of the observations. We assume the prior distribution

$ \pi \left(\boldsymbol{y}\right) $

is the marginal probability distribution of the observations. We assume the prior distribution

![]() $ \pi \left(\boldsymbol{\theta} \right) $

to be given, such that the remaining key challenge is the choice and evaluation of the likelihood function

$ \pi \left(\boldsymbol{\theta} \right) $

to be given, such that the remaining key challenge is the choice and evaluation of the likelihood function

![]() $ \mathcal{L}\left(\boldsymbol{\theta} \right)=\pi \left(\boldsymbol{y}|\boldsymbol{\theta} \right) $

for the given set of observations

$ \mathcal{L}\left(\boldsymbol{\theta} \right)=\pi \left(\boldsymbol{y}|\boldsymbol{\theta} \right) $

for the given set of observations

![]() $ \boldsymbol{y} $

. Bayesian inference approaches are based on the evaluation of the relationship from Equation 4 to obtain the posterior distribution of the unknown parameters

$ \boldsymbol{y} $

. Bayesian inference approaches are based on the evaluation of the relationship from Equation 4 to obtain the posterior distribution of the unknown parameters

![]() $ \boldsymbol{\theta} $

.

$ \boldsymbol{\theta} $

.

Once the posterior distribution of the unknown parameters is obtained, it can be pushed forward to generate predictions

![]() $ {\boldsymbol{f}}_P\left(\boldsymbol{\theta}, \boldsymbol{x}|\boldsymbol{y}\right) $

using the same forward model

$ {\boldsymbol{f}}_P\left(\boldsymbol{\theta}, \boldsymbol{x}|\boldsymbol{y}\right) $

using the same forward model

![]() $ f $

or they can be used in a different model

$ f $

or they can be used in a different model

![]() $ g\left(\boldsymbol{\theta} \right):\Theta \to \mathrm{\mathbb{R}} $

, for example, that computes a QoI using the same model parameters but evaluating a different quantity. In the first case

$ g\left(\boldsymbol{\theta} \right):\Theta \to \mathrm{\mathbb{R}} $

, for example, that computes a QoI using the same model parameters but evaluating a different quantity. In the first case

![]() $ {\boldsymbol{f}}_P\left(\boldsymbol{\theta}, \boldsymbol{x}|\boldsymbol{y}\right) $

can usually be compared with

$ {\boldsymbol{f}}_P\left(\boldsymbol{\theta}, \boldsymbol{x}|\boldsymbol{y}\right) $

can usually be compared with

![]() $ \boldsymbol{y} $

and indirectly

$ \boldsymbol{y} $

and indirectly

![]() $ \boldsymbol{z}\left(\boldsymbol{x}\right) $

through

$ \boldsymbol{z}\left(\boldsymbol{x}\right) $

through

![]() $ {\varepsilon}_{\mathrm{noise}} $

.

$ {\varepsilon}_{\mathrm{noise}} $

.

The calculation of

![]() $ \mathcal{L}\left(\boldsymbol{\theta} \right) $

requires the evaluation of the computational model

$ \mathcal{L}\left(\boldsymbol{\theta} \right) $

requires the evaluation of the computational model

![]() $ f $

under the assumption that it generates the real system’s response

$ f $

under the assumption that it generates the real system’s response

![]() $ z $

. However, that is not generally the case and the model error must be considered. Kennedy and O’Hagan (Reference Kennedy and O’Hagan2001) propose to include the model form uncertainty

$ z $

. However, that is not generally the case and the model error must be considered. Kennedy and O’Hagan (Reference Kennedy and O’Hagan2001) propose to include the model form uncertainty

![]() $ {\varepsilon}_{\mathrm{model}} $

in the inference procedure, formulated as a Gaussian Process added to the predicted response as in Equation 1. Despite its potential applications, this model’s inadequacy term lacks physical meaning and cannot be employed outside of the use case with which it is inferred. Therefore, the uncertainty quantified by

$ {\varepsilon}_{\mathrm{model}} $

in the inference procedure, formulated as a Gaussian Process added to the predicted response as in Equation 1. Despite its potential applications, this model’s inadequacy term lacks physical meaning and cannot be employed outside of the use case with which it is inferred. Therefore, the uncertainty quantified by

![]() $ {\varepsilon}_{\mathrm{model}} $

cannot be propagated to other QoI through the model

$ {\varepsilon}_{\mathrm{model}} $

cannot be propagated to other QoI through the model

![]() $ g $

. Notice that this model inadequacy term does not aim to identify the deficiencies in the model and correct them, but to introduce a term that envelops their effects, such that the response is corrected.

$ g $

. Notice that this model inadequacy term does not aim to identify the deficiencies in the model and correct them, but to introduce a term that envelops their effects, such that the response is corrected.

More importantly, adding the model inadequacy term to the predicted outputs does not address one of the main challenges of using classical Bayesian inference approaches with imperfect models: the posterior distributions of the unknown parameters collapse to Dirac-delta distributions, which produce model responses that do not correspond with the system that generated the observation. This is commonly known as the problem of misspecification. The Bernstein–von Mises theorem states that under a set of conditions of continuity, differentiability and non-singularity, the posterior distribution

![]() $ \pi \left(\boldsymbol{\theta} |\boldsymbol{y}\right) $

converges in total variation (TV) distance with the true generating process

$ \pi \left(\boldsymbol{\theta} |\boldsymbol{y}\right) $

converges in total variation (TV) distance with the true generating process

![]() $ {\pi}_{{\boldsymbol{\theta}}_0} $

to a multivariate normal distribution centered at the maximum likelihood estimator (MLE)

$ {\pi}_{{\boldsymbol{\theta}}_0} $

to a multivariate normal distribution centered at the maximum likelihood estimator (MLE)

![]() $ \hat{\boldsymbol{\theta}} $

and covariance matrix

$ \hat{\boldsymbol{\theta}} $

and covariance matrix

![]() $ {\left({n}_y\right)}^{-1}\mathcal{I}{\left({\boldsymbol{\theta}}_0\right)}^{-1} $

. Here,

$ {\left({n}_y\right)}^{-1}\mathcal{I}{\left({\boldsymbol{\theta}}_0\right)}^{-1} $

. Here,

![]() $ {n}_y $

is the number of observations in

$ {n}_y $

is the number of observations in

![]() $ \boldsymbol{y} $

and

$ \boldsymbol{y} $

and

![]() $ \mathcal{I}\left({\boldsymbol{\theta}}_0\right) $

is Fisher’s information matrix at the true values

$ \mathcal{I}\left({\boldsymbol{\theta}}_0\right) $

is Fisher’s information matrix at the true values

![]() $ {\boldsymbol{\theta}}_0 $

of the unknown parameters (van der Vaart, Reference van der Vaart2000). Formally, this can be formulated as

$ {\boldsymbol{\theta}}_0 $

of the unknown parameters (van der Vaart, Reference van der Vaart2000). Formally, this can be formulated as

This result is crucial for constructing confidence intervals for the parameters and predictive responses, linking Bayesian and frequentist statistics. For large values of

![]() $ {n}_y $

, the posterior distribution becomes concentrated around the MLE

$ {n}_y $

, the posterior distribution becomes concentrated around the MLE

![]() $ \hat{\boldsymbol{\theta}} $

. However, Kleijn and van der Vaart (Reference Kleijn and van der Vaart2012) demonstrated that the confidence intervals derived from Bernstein–von Mises theorem application only reflect the real credibility of the predictions in the case of perfect models that can reproduce the observations exactly.

$ \hat{\boldsymbol{\theta}} $

. However, Kleijn and van der Vaart (Reference Kleijn and van der Vaart2012) demonstrated that the confidence intervals derived from Bernstein–von Mises theorem application only reflect the real credibility of the predictions in the case of perfect models that can reproduce the observations exactly.

In the case of imperfect models, where discrepancies exist between the model and the data, the posterior distribution still converges to a multivariate normal distribution that concentrates around the MLE

![]() $ \hat{\boldsymbol{\theta}} $

for large

$ \hat{\boldsymbol{\theta}} $

for large

![]() $ {n}_y $

. However,

$ {n}_y $

. However,

![]() $ \hat{\boldsymbol{\theta}} $

may not generate the observations due to the model inadequacy, even for large

$ \hat{\boldsymbol{\theta}} $

may not generate the observations due to the model inadequacy, even for large

![]() $ {n}_y $

, and therefore the associated confidence intervals for the predictive response cannot be interpreted as credible intervals with respect to the true system, as demonstrated by Kleijn and van der Vaart (Reference Kleijn and van der Vaart2012). This implies that the predicted distributions do not adequately capture the variability of the true system, making them unsuitable for quantifying system uncertainty.

$ {n}_y $

, and therefore the associated confidence intervals for the predictive response cannot be interpreted as credible intervals with respect to the true system, as demonstrated by Kleijn and van der Vaart (Reference Kleijn and van der Vaart2012). This implies that the predicted distributions do not adequately capture the variability of the true system, making them unsuitable for quantifying system uncertainty.

When the model inadequacy vector

![]() $ {\delta}_{\mathrm{model}} $

, that models

$ {\delta}_{\mathrm{model}} $

, that models

![]() $ {\varepsilon}_{\mathrm{model}} $

at the observation points, is implemented as a correction to the model response, the combined model

$ {\varepsilon}_{\mathrm{model}} $

at the observation points, is implemented as a correction to the model response, the combined model

![]() $ {\boldsymbol{f}}_P+{\delta}_{\mathrm{model}} $

can reproduce exactly the observations

$ {\boldsymbol{f}}_P+{\delta}_{\mathrm{model}} $

can reproduce exactly the observations

![]() $ \mathbf{y} $

. As a result, the Bernstein–von Mises theorem holds within the domain of the parameters updated during this process. However, the model

$ \mathbf{y} $

. As a result, the Bernstein–von Mises theorem holds within the domain of the parameters updated during this process. However, the model

![]() $ {\boldsymbol{f}}_P $

alone cannot reliably quantify uncertainty without correcting the

$ {\boldsymbol{f}}_P $

alone cannot reliably quantify uncertainty without correcting the

![]() $ {\varepsilon}_{\mathrm{model}} $

, as it is limited to the observations’ domain. Similar to the scenario where no model inadequacy term is used, the model produces overly concentrated posterior distributions for both the parameters and the response, which may fail to represent the observations accurately. As the computation of QoIs through

$ {\varepsilon}_{\mathrm{model}} $

, as it is limited to the observations’ domain. Similar to the scenario where no model inadequacy term is used, the model produces overly concentrated posterior distributions for both the parameters and the response, which may fail to represent the observations accurately. As the computation of QoIs through

![]() $ g\left(\boldsymbol{\theta} \right) $

usually depends only on the parameters and not on the corrected model response

$ g\left(\boldsymbol{\theta} \right) $

usually depends only on the parameters and not on the corrected model response

![]() $ {\boldsymbol{f}}_P+{\varepsilon}_{\mathrm{model}} $

, the uncertainty in the QoI will not be representative of the credibility of the model.

$ {\boldsymbol{f}}_P+{\varepsilon}_{\mathrm{model}} $

, the uncertainty in the QoI will not be representative of the credibility of the model.

A promising solution to these challenges is the use of an embedded formulation where the inadequacy is added to the model through the unknown parameters. The objective is to augment those parameters with an additional stochastic variable that introduces random variations in the pre-existing model parameters. It is the introduction of this variability what prevents the unknown parameters from presenting a concentrated posterior for large

![]() $ {n}_y $

. Following the same philosophy as Kennedy and O’Hagan’s (KOH) framework, no corrections are introduced in the structure of the physical model which allows the approach to be used non-intrusively with parametrized black-box models. This embedding is presented through Sections 2.2 to 2.4, the likelihood definition and evaluation in Sections 2.5 and 2.6, and the calculation of predicted variables through

$ {n}_y $

. Following the same philosophy as Kennedy and O’Hagan’s (KOH) framework, no corrections are introduced in the structure of the physical model which allows the approach to be used non-intrusively with parametrized black-box models. This embedding is presented through Sections 2.2 to 2.4, the likelihood definition and evaluation in Sections 2.5 and 2.6, and the calculation of predicted variables through

![]() $ {\boldsymbol{f}}_P $

and QoIs through

$ {\boldsymbol{f}}_P $

and QoIs through

![]() $ g $

are presented in Section 2.7.

$ g $

are presented in Section 2.7.

2.2. Embedding of model form uncertainty

The objective of the embedding is that, despite the unavoidable collapse of the posterior distributions of the unknown parameters to Dirac-deltas, the distribution of the response should preserve the variance present in the observations. This is achieved by treating the unknown parameters

![]() $ \boldsymbol{\theta} $

as random variables with known distributions. The objective is now to infer the values of the parameters of the distribution. We will denote these random variables as

$ \boldsymbol{\theta} $

as random variables with known distributions. The objective is now to infer the values of the parameters of the distribution. We will denote these random variables as

![]() $ \tilde{\boldsymbol{\theta}} $

. This idea is similar to the one employed in hierarchical Bayesian approaches, where the prior distribution of

$ \tilde{\boldsymbol{\theta}} $

. This idea is similar to the one employed in hierarchical Bayesian approaches, where the prior distribution of

![]() $ \boldsymbol{\theta} $

is unknown. This turns the originally deterministic model into a stochastic one. With this approach, the extended model’s response

$ \boldsymbol{\theta} $

is unknown. This turns the originally deterministic model into a stochastic one. With this approach, the extended model’s response

![]() $ f\left(\tilde{\boldsymbol{\theta}}\right) $

does not necessarily collapse to a Dirac-delta distribution, as a given sample of the inferred parameters still generates a non-concentrated distribution of the random variable

$ f\left(\tilde{\boldsymbol{\theta}}\right) $

does not necessarily collapse to a Dirac-delta distribution, as a given sample of the inferred parameters still generates a non-concentrated distribution of the random variable

![]() $ \tilde{\boldsymbol{\theta}} $

.

$ \tilde{\boldsymbol{\theta}} $

.

There are different ways to model the random vector

![]() $ \tilde{\boldsymbol{\theta}} $

. One well-known approach, employed in Sargsyan et al. (Reference Sargsyan, Najm and Ghanem2015, Reference Sargsyan, Huan and Najm2019), uses Polynomial Chaos Expansions (PCE) to represent the stochastic, unknown parameters. Specifically, the parameter vector

$ \tilde{\boldsymbol{\theta}} $

. One well-known approach, employed in Sargsyan et al. (Reference Sargsyan, Najm and Ghanem2015, Reference Sargsyan, Huan and Najm2019), uses Polynomial Chaos Expansions (PCE) to represent the stochastic, unknown parameters. Specifically, the parameter vector

![]() $ \tilde{\boldsymbol{\theta}} $

is expressed as a series expansion as

$ \tilde{\boldsymbol{\theta}} $

is expressed as a series expansion as

where

![]() $ {\alpha}_j\in {\mathrm{\mathbb{R}}}^{n_{\theta }} $

are the expansion’s coefficients (included in the unknown parameter space

$ {\alpha}_j\in {\mathrm{\mathbb{R}}}^{n_{\theta }} $

are the expansion’s coefficients (included in the unknown parameter space

![]() $ \Theta $

),

$ \Theta $

),

![]() $ {\Psi}_j:{\mathrm{\mathbb{R}}}^{n_{\xi }}\to \mathrm{\mathbb{R}} $

are orthogonal polynomials, and

$ {\Psi}_j:{\mathrm{\mathbb{R}}}^{n_{\xi }}\to \mathrm{\mathbb{R}} $

are orthogonal polynomials, and

![]() $ \boldsymbol{\xi} $

is a set of stochastic variables (also called the stochastic germ) with

$ \boldsymbol{\xi} $

is a set of stochastic variables (also called the stochastic germ) with

![]() $ \boldsymbol{\xi} \in {\mathrm{\mathbb{R}}}_{\xi}^n $

where

$ \boldsymbol{\xi} \in {\mathrm{\mathbb{R}}}_{\xi}^n $

where

![]() $ {n}_{\xi } $

is the number of input random variables considered. This PCE-based approach allows for a flexible representation of the distribution of

$ {n}_{\xi } $

is the number of input random variables considered. This PCE-based approach allows for a flexible representation of the distribution of

![]() $ \tilde{\boldsymbol{\theta}} $

.

$ \tilde{\boldsymbol{\theta}} $

.

In this paper, instead of representing

![]() $ \tilde{\boldsymbol{\theta}} $

as a polynomial expansion, we adopt an explicit representation where the stochasticity is introduced through an additive model inadequacy term. This approach is in line with the proposal of Oliver et al. (Reference Oliver, Terejanu, Simmons and Moser2015) for model error characterization and the work of Strong and Oakley (Reference Strong and Oakley2014) on internal model discrepancies decomposition. Specifically, we model the random vector

$ \tilde{\boldsymbol{\theta}} $

as a polynomial expansion, we adopt an explicit representation where the stochasticity is introduced through an additive model inadequacy term. This approach is in line with the proposal of Oliver et al. (Reference Oliver, Terejanu, Simmons and Moser2015) for model error characterization and the work of Strong and Oakley (Reference Strong and Oakley2014) on internal model discrepancies decomposition. Specifically, we model the random vector

![]() $ \tilde{\boldsymbol{\theta}} $

as

$ \tilde{\boldsymbol{\theta}} $

as

where

![]() $ {\boldsymbol{\theta}}^m $

represents the deterministic part, and

$ {\boldsymbol{\theta}}^m $

represents the deterministic part, and

![]() $ \delta \left({\boldsymbol{\theta}}^b\right) $

is a parameterized zero-mean random vector that captures the stochastic deviation from

$ \delta \left({\boldsymbol{\theta}}^b\right) $

is a parameterized zero-mean random vector that captures the stochastic deviation from

![]() $ {\boldsymbol{\theta}}^m $

. The parameters

$ {\boldsymbol{\theta}}^m $

. The parameters

![]() $ {\boldsymbol{\theta}}^b $

control the magnitude of the stochasticity, and the explicit form of

$ {\boldsymbol{\theta}}^b $

control the magnitude of the stochasticity, and the explicit form of

![]() $ \delta \left({\boldsymbol{\theta}}^b\right) $

is chosen based on prior assumptions about the model inadequacy structure. In practice, this is equivalent to a first degree PCE using Sargsyan’s approach. We advocate, however, for explicitly defining the structure of the additional variables associated with the uncertainty and their relation to the original variables, instead of using the coefficients of the PCE directly.

$ \delta \left({\boldsymbol{\theta}}^b\right) $

is chosen based on prior assumptions about the model inadequacy structure. In practice, this is equivalent to a first degree PCE using Sargsyan’s approach. We advocate, however, for explicitly defining the structure of the additional variables associated with the uncertainty and their relation to the original variables, instead of using the coefficients of the PCE directly.

For simplicity, and because the exact shape of the distribution is often unknown, we assume that the model inadequacy

![]() $ \delta $

is a random variable that depends on

$ \delta $

is a random variable that depends on

![]() $ {\boldsymbol{\theta}}^b $

, following a joint normal distribution. Specifically, we assume

$ {\boldsymbol{\theta}}^b $

, following a joint normal distribution. Specifically, we assume

where

![]() $ {\boldsymbol{\theta}}^b\in {\mathrm{\mathbb{R}}}^{n_{\theta }} $

controls the variance of each parameter in

$ {\boldsymbol{\theta}}^b\in {\mathrm{\mathbb{R}}}^{n_{\theta }} $

controls the variance of each parameter in

![]() $ \tilde{\boldsymbol{\theta}} $

. This formulation offers a clear interpretation of the model inadequacy associated with each parameter, making it easy to identify the sources of uncertainty and to calibrate the model accordingly. If the parameters are constrained to be positive or known to follow a different distribution, this assumption may need to be revisited. Nevertheless, it is often possible to transform other distributions to a normal through isoprobabilistic transformations, e.g. the Nataf transform. In particular, multi-modality in the parameter distributions must be supported explicitly in the formulation of the stochastic extension if it is expected. The need to explicitly define a joint distribution for the additional uncertainty parameters is one of the main limitations compared with the PCE approximation. However, it allows more control over the structure of the uncertainty, which may be valuable for complex scenarios with interacting variables, such as those described in Strong and Oakley (Reference Strong and Oakley2014).

$ \tilde{\boldsymbol{\theta}} $

. This formulation offers a clear interpretation of the model inadequacy associated with each parameter, making it easy to identify the sources of uncertainty and to calibrate the model accordingly. If the parameters are constrained to be positive or known to follow a different distribution, this assumption may need to be revisited. Nevertheless, it is often possible to transform other distributions to a normal through isoprobabilistic transformations, e.g. the Nataf transform. In particular, multi-modality in the parameter distributions must be supported explicitly in the formulation of the stochastic extension if it is expected. The need to explicitly define a joint distribution for the additional uncertainty parameters is one of the main limitations compared with the PCE approximation. However, it allows more control over the structure of the uncertainty, which may be valuable for complex scenarios with interacting variables, such as those described in Strong and Oakley (Reference Strong and Oakley2014).

Unlike the PCE approach, which implicitly incorporates model inadequacy through a series expansion and introduces many unknown parameters for the PCE coefficients, an explicit model inadequacy representation offers a more direct and interpretable description of uncertainty. This is particularly valuable for model calibration, where identifying the sources of model inadequacy is crucial. Additionally, since the calculation of QoIs may only require some of the calibrated parameters, defining the embedded model inadequacy

![]() $ {\boldsymbol{\theta}}^b $

independently for each parameter ensures that only the uncertainty associated with the relevant parameter is transferred. Separating the model inadequacy representation from the inference process also enables a more transparent treatment of uncertainty, reducing the identifiability issues that can arise when simultaneously fitting both the inadequacy and model parameters.

$ {\boldsymbol{\theta}}^b $

independently for each parameter ensures that only the uncertainty associated with the relevant parameter is transferred. Separating the model inadequacy representation from the inference process also enables a more transparent treatment of uncertainty, reducing the identifiability issues that can arise when simultaneously fitting both the inadequacy and model parameters.

For the remainder of this paper, we will use the formulation in Equation 7, assuming that

![]() $ \delta \left({\boldsymbol{\theta}}^b\right)\sim \mathcal{N}\left(0,\operatorname{diag}\left({\boldsymbol{\theta}}^b\right)\right) $

. Non-additive inadequacy terms or other probability distributions are possible, but they do not affect the generality of the method presented here. Variance structures with correlation can analogously be considered for

$ \delta \left({\boldsymbol{\theta}}^b\right)\sim \mathcal{N}\left(0,\operatorname{diag}\left({\boldsymbol{\theta}}^b\right)\right) $

. Non-additive inadequacy terms or other probability distributions are possible, but they do not affect the generality of the method presented here. Variance structures with correlation can analogously be considered for

![]() $ \delta $

. To generate a stochastic response from the computational model, the probability distribution of

$ \delta $

. To generate a stochastic response from the computational model, the probability distribution of

![]() $ \tilde{\boldsymbol{\theta}} $

must be pushed through the model. This presents computational challenges, as the forward model must be evaluated for each sample of

$ \tilde{\boldsymbol{\theta}} $

must be pushed through the model. This presents computational challenges, as the forward model must be evaluated for each sample of

![]() $ \tilde{\boldsymbol{\theta}} $

. To address this, we implement a PCE approximation of the model’s response while keeping the prescribed stochastic structure of the embedding, which is detailed in Section 2.3.

$ \tilde{\boldsymbol{\theta}} $

. To address this, we implement a PCE approximation of the model’s response while keeping the prescribed stochastic structure of the embedding, which is detailed in Section 2.3.

Finally, because the model outputs are now stochastic, traditional inference methods that rely on deterministic outputs are no longer applicable. In Section 2.5, we introduce likelihood formulations inspired by Approximate Bayesian Computation (ABC) methods, which leverage summary statistics to infer the unknown parameters of the model. These methods provide a robust framework for handling stochastic models, enabling a direct comparison between the stochastic model response and the available data. Instead of replicating individual observations exactly, the approach focuses on fitting their statistical moments, resulting in more reliable predictions and avoiding overfitted posterior distributions.

In Section 3, we demonstrate how introducing the stochastic extension significantly improves the probability of the observations being generated by the posterior-predicted model. This improvement is measured through the Mahalanobis distance between the predicted and observed data and is shown to outperform standard Bayesian approaches.

2.3. Forward model evaluation and polynomial chaos expansion (PCE)

The evaluation of the stochastic response of the forward model requires the propagation of the uncertainty introduced by the embedding. A closed-form representation of the response is generally not possible, therefore sampling-based methods are required. A very popular approach for uncertainty propagation in recent years has been the use of generalized polynomial chaos expansions (PCE) (Xiu and Karniadakis, Reference Xiu and Karniadakis2002) to approximate the stochastic response once the uncertainty has been propagated. This approach consists of approximating the response

![]() $ f $

by a linear combination of a basis of orthonormal polynomials

$ f $

by a linear combination of a basis of orthonormal polynomials

![]() $ {\left\{{\Psi}_j\right\}}_{j=0}^D $

truncated at degree

$ {\left\{{\Psi}_j\right\}}_{j=0}^D $

truncated at degree

![]() $ d $

.

$ d $

.

Let

![]() $ {\Psi}_j:{\mathrm{\mathbb{R}}}^{n_{\xi }}\to \mathrm{\mathbb{R}} $

be the number of input random variables, where

$ {\Psi}_j:{\mathrm{\mathbb{R}}}^{n_{\xi }}\to \mathrm{\mathbb{R}} $

be the number of input random variables, where

![]() $ \xi \in {\mathrm{\mathbb{R}}}^{n_{\xi }} $

is the stochastic germ that determines the realization of a given random variable with probability distribution function

$ \xi \in {\mathrm{\mathbb{R}}}^{n_{\xi }} $

is the stochastic germ that determines the realization of a given random variable with probability distribution function

![]() $ {\pi}_{\xi } $

and

$ {\pi}_{\xi } $

and

![]() $ {n}_{\xi }={n}_b $

. These stochastic germs must follow an independent distribution inherited from

$ {n}_{\xi }={n}_b $

. These stochastic germs must follow an independent distribution inherited from

![]() $ \theta $

. For example, if

$ \theta $

. For example, if

![]() $ \theta $

is normal,

$ \theta $

is normal,

![]() $ \xi $

must also follow a normal distribution. The assumption of independent input random variables, which can be enforced through the use of isoprobabilistic transformations such as Nataf or Rosenblatt transforms (Jakeman et al., Reference Jakeman, Franzelin, Narayan, Eldred and Plfüger2019). The number of polynomial bases

$ \xi $

must also follow a normal distribution. The assumption of independent input random variables, which can be enforced through the use of isoprobabilistic transformations such as Nataf or Rosenblatt transforms (Jakeman et al., Reference Jakeman, Franzelin, Narayan, Eldred and Plfüger2019). The number of polynomial bases

![]() $ D+1 $

is computed as

$ D+1 $

is computed as

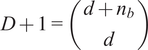

$ D+1=\left(\begin{array}{c}d+{n}_b\\ {}d\end{array}\right) $

, where

$ D+1=\left(\begin{array}{c}d+{n}_b\\ {}d\end{array}\right) $

, where

![]() $ {n}_b $

is the number of input random variables to be considered.

$ {n}_b $

is the number of input random variables to be considered.

Then, for each output

![]() $ {f}_i\left(\theta \right)=f\left(\theta, {x}_i\right) $

, which refers to the model response evaluated at the

$ {f}_i\left(\theta \right)=f\left(\theta, {x}_i\right) $

, which refers to the model response evaluated at the

![]() $ i $

th point

$ i $

th point

![]() $ {x}_i $

of the domain

$ {x}_i $

of the domain

![]() $ x $

(i.e.,

$ x $

(i.e.,

![]() $ {f}_i $

is

$ {f}_i $

is

![]() $ f $

evaluated at

$ f $

evaluated at

![]() $ {x}_i $

with fixed

$ {x}_i $

with fixed

![]() $ \theta $

), its approximation via PCE

$ \theta $

), its approximation via PCE

![]() $ {\tilde{f}}_i\left(\theta \right) $

is

$ {\tilde{f}}_i\left(\theta \right) $

is

$$ {f}_i\left(\theta \right)\approx {\tilde{f}}_i\left(\theta \right)=\sum \limits_{j=0}^D{\alpha}_{ij}{\Psi}_j\left(\xi \right). $$

$$ {f}_i\left(\theta \right)\approx {\tilde{f}}_i\left(\theta \right)=\sum \limits_{j=0}^D{\alpha}_{ij}{\Psi}_j\left(\xi \right). $$

As it can be observed,

![]() $ \theta $

generates a single approximation for each

$ \theta $

generates a single approximation for each

![]() $ {\tilde{f}}_i\left(\theta \right) $

, which are random variables that can be sampled by evaluating the PCE for different values of

$ {\tilde{f}}_i\left(\theta \right) $

, which are random variables that can be sampled by evaluating the PCE for different values of

![]() $ \xi $

. To express this more concisely, the approximation can be written in vector form

$ \xi $

. To express this more concisely, the approximation can be written in vector form

![]() $ \mathbf{f}\left(\theta \right)=\left[{f}_1\left(\theta \right),{f}_2\left(\theta \right),\dots, {f}_N\left(\theta \right)\right] $

as

$ \mathbf{f}\left(\theta \right)=\left[{f}_1\left(\theta \right),{f}_2\left(\theta \right),\dots, {f}_N\left(\theta \right)\right] $

as

$$ \mathbf{f}\left(\theta \right)\approx \tilde{\mathbf{f}}\left(\theta \right)=\sum \limits_{j=0}^D{\boldsymbol{\alpha}}_j{\Psi}_j\left(\xi \right), $$

$$ \mathbf{f}\left(\theta \right)\approx \tilde{\mathbf{f}}\left(\theta \right)=\sum \limits_{j=0}^D{\boldsymbol{\alpha}}_j{\Psi}_j\left(\xi \right), $$

where

![]() $ {\alpha}_{ij} $

are the PCE coefficients associated with each polynomial

$ {\alpha}_{ij} $

are the PCE coefficients associated with each polynomial

![]() $ {\Psi}_j $

that fit the approximation to

$ {\Psi}_j $

that fit the approximation to

![]() $ {f}_i $

(Sudret, Reference Sudret2021). The choice of the base of orthonormal polynomials

$ {f}_i $

(Sudret, Reference Sudret2021). The choice of the base of orthonormal polynomials

![]() $ \Psi $

depends on the distribution of the input random variables to be propagated. Askey’s scheme (Xiu and Karniadakis, Reference Xiu and Karniadakis2002) assigns popular distributions with their corresponding polynomial basis that ensures orthonormality and numerical stability.

$ \Psi $

depends on the distribution of the input random variables to be propagated. Askey’s scheme (Xiu and Karniadakis, Reference Xiu and Karniadakis2002) assigns popular distributions with their corresponding polynomial basis that ensures orthonormality and numerical stability.

Computing the

![]() $ {\alpha}_{ij} $

coefficients requires solving the problem of minimizing the

$ {\alpha}_{ij} $

coefficients requires solving the problem of minimizing the

![]() $ {L}_2 $

-distance between

$ {L}_2 $

-distance between

![]() $ \mathbf{f}\left(\theta, x\right) $

and

$ \mathbf{f}\left(\theta, x\right) $

and

![]() $ \tilde{\mathbf{f}}\left(\theta, x\right) $

as in

$ \tilde{\mathbf{f}}\left(\theta, x\right) $

as in

Here, the

![]() $ \alpha $

coefficients depend on

$ \alpha $

coefficients depend on

![]() $ x $

, and the norm

$ x $

, and the norm

![]() $ \parallel \cdot {\parallel}_2 $

is defined as the

$ \parallel \cdot {\parallel}_2 $

is defined as the

![]() $ {L}_2 $

norm, with the inner product given by

$ {L}_2 $

norm, with the inner product given by

where

![]() $ {\pi}_{\xi}\left(\xi \right) $

is the probability density function associated with

$ {\pi}_{\xi}\left(\xi \right) $

is the probability density function associated with

![]() $ \xi $

. As

$ \xi $

. As

![]() $ \left\langle {\Psi}_i,{\Psi}_j\right\rangle ={\delta}_{ij} $

due to the orthonormality of the polynomial basis, where

$ \left\langle {\Psi}_i,{\Psi}_j\right\rangle ={\delta}_{ij} $

due to the orthonormality of the polynomial basis, where

![]() $ {\delta}_{ij} $

is Dirac’s delta function, the PCE coefficients that minimize the

$ {\delta}_{ij} $

is Dirac’s delta function, the PCE coefficients that minimize the

![]() $ {L}_2\hbox{-} \mathrm{distance} $

are given by

$ {L}_2\hbox{-} \mathrm{distance} $

are given by

where

![]() $ {\pi}_{\xi}\left(\xi \right) $

is the probability density associated with the random variable

$ {\pi}_{\xi}\left(\xi \right) $

is the probability density associated with the random variable

![]() $ \xi $

. This integral can be computed using a Gauss quadrature scheme with weights

$ \xi $

. This integral can be computed using a Gauss quadrature scheme with weights

![]() $ {w}_1,{w}_2,\dots, {w}_P $

and nodes

$ {w}_1,{w}_2,\dots, {w}_P $

and nodes

![]() $ {\xi}_1,{\xi}_2,\dots, {\xi}_P $

, where

$ {\xi}_1,{\xi}_2,\dots, {\xi}_P $

, where

![]() $ P={p}^{n_b} $

is the number of quadrature points. The expansion coefficients are then computed as

$ P={p}^{n_b} $

is the number of quadrature points. The expansion coefficients are then computed as

$$ {\alpha}_{ij}=\sum \limits_{k=1}^P{w}_k{f}_i\left(\theta \left({\xi}_k\right)\right){\Psi}_j\left({\xi}_k\right), $$

$$ {\alpha}_{ij}=\sum \limits_{k=1}^P{w}_k{f}_i\left(\theta \left({\xi}_k\right)\right){\Psi}_j\left({\xi}_k\right), $$

where the factor

![]() $ {\pi}_{\xi}\left({\xi}_k\right) $

is already included in the quadrature weights

$ {\pi}_{\xi}\left({\xi}_k\right) $

is already included in the quadrature weights

![]() $ {w}_k $

.

$ {w}_k $

.

The full process to evaluate a sample through the forward model is summarized in Algorithm 1. The Python package chaospy (Feinberg and Langtangen, Reference Feinberg and Langtangen2015) has been used for the PCE implementation in this paper. The result of such evaluation is the PCE of the forward model’s response, which is stochastic and requires post-processing. The polynomial formulation provides direct access to the statistical moments of the response. Let

![]() $ {\mu}^f $

be the vector of means of the response

$ {\mu}^f $

be the vector of means of the response

![]() $ \tilde{\mathbf{f}}\left(\theta, x\right) $

. Then, each of its entries can be obtained as

$ \tilde{\mathbf{f}}\left(\theta, x\right) $

. Then, each of its entries can be obtained as

which holds under the assumption that

![]() $ {\Psi}_0=1 $

. Analogously, the vector of variances

$ {\Psi}_0=1 $

. Analogously, the vector of variances

![]() $ {\sigma}^f $

is computed as

$ {\sigma}^f $

is computed as

$$ {\left({\sigma}_i^f\right)}^2=\mathrm{Var}\left[{\tilde{f}}_i\left(\theta \right)\right]=\sum \limits_{j=1}^D{\left({\alpha}_{ij}\right)}^2, $$

$$ {\left({\sigma}_i^f\right)}^2=\mathrm{Var}\left[{\tilde{f}}_i\left(\theta \right)\right]=\sum \limits_{j=1}^D{\left({\alpha}_{ij}\right)}^2, $$

because the variance is the sum of squares of the non-constant coefficients.

Algorithm 1 Forward model evaluation with polynomial chaos expansion (PCE) and pseudo-spectral projection

Given a sample

![]() $ \theta $

:

$ \theta $

:

Step 1. Build a joint distribution

![]() $ \mathcal{J} $

of the stochastic unknown parameters

$ \mathcal{J} $

of the stochastic unknown parameters

![]() $ {\theta}^b $

.

$ {\theta}^b $

.

Step 2. If

![]() $ \mathcal{J} $

is not composed of independent variables, perform an isoprobabilistic transformation to make them independent, e.g., Nataf or Rosenblatt transform.

$ \mathcal{J} $

is not composed of independent variables, perform an isoprobabilistic transformation to make them independent, e.g., Nataf or Rosenblatt transform.

Step 3. Compute the weights

![]() $ {w}_1,{w}_2,\dots, {w}_P $

and nodes

$ {w}_1,{w}_2,\dots, {w}_P $

and nodes

![]() $ {\xi}_1,{\xi}_2,\dots, {\xi}_P $

for the Gauss quadrature scheme of degree

$ {\xi}_1,{\xi}_2,\dots, {\xi}_P $

for the Gauss quadrature scheme of degree

![]() $ p $

given

$ p $

given

![]() $ \mathcal{J} $

.

$ \mathcal{J} $

.

Step 4. Generate the orthonormal polynomial basis

![]() $ \Psi $

of degree

$ \Psi $

of degree

![]() $ d $

using Askey’s scheme.

$ d $

using Askey’s scheme.

Step 5. Evaluate the forward model at the nodes

![]() $ \xi $

for the sampled

$ \xi $

for the sampled

![]() $ \theta $

.

$ \theta $

.

Step 6. For each entry in

![]() $ \mathbf{f} $

, compute the PCE coefficients

$ \mathbf{f} $

, compute the PCE coefficients

![]() $ {\alpha}_j $

using Gauss integration with weights

$ {\alpha}_j $

using Gauss integration with weights

![]() $ w $

, nodes

$ w $

, nodes

![]() $ \xi $

, and the corresponding model evaluations.

$ \xi $

, and the corresponding model evaluations.

2.4. Embedded model form uncertainty as a hierarchical Bayesian problem

The inverse problem with an embedded model form uncertainty can be interpreted within a hierarchical Bayesian framework. First, a set of hyperpriors is prescribed for

![]() $ {\boldsymbol{\theta}}^m $

and

$ {\boldsymbol{\theta}}^m $

and

![]() $ {\boldsymbol{\theta}}^b $

, from which they are sampled. Then, a prior distribution for

$ {\boldsymbol{\theta}}^b $

, from which they are sampled. Then, a prior distribution for

![]() $ \boldsymbol{\theta} $

is defined through Equation 7. Finally, the forward model is evaluated based on

$ \boldsymbol{\theta} $

is defined through Equation 7. Finally, the forward model is evaluated based on

![]() $ \boldsymbol{\theta} $

. This structure follows the sequential definition of unknown variables that typically appears in hierarchical Bayesian models (Robert, Reference Robert2007). This formulation can also be extended to use Approximate Bayesian Computation (ABC) (Turner and Van Zandt, Reference Turner and Van Zandt2013), which has been applied to inferring the model inadequacy in industrial electric motor simulations (John et al., Reference John, Stohrer, Schillings, Schick and Heuveline2021; John, Reference John2021).

$ \boldsymbol{\theta} $

. This structure follows the sequential definition of unknown variables that typically appears in hierarchical Bayesian models (Robert, Reference Robert2007). This formulation can also be extended to use Approximate Bayesian Computation (ABC) (Turner and Van Zandt, Reference Turner and Van Zandt2013), which has been applied to inferring the model inadequacy in industrial electric motor simulations (John et al., Reference John, Stohrer, Schillings, Schick and Heuveline2021; John, Reference John2021).

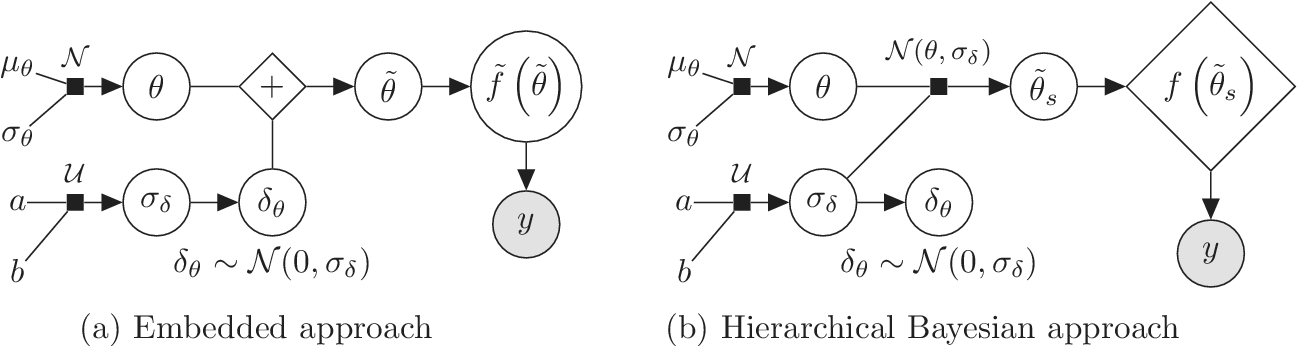

We highlight the key difference between embedded and most hierarchical formulations: while hierarchical approaches factorize the parameters, sampling them and inputting these samples into the model to generate a deterministic response, the embedded formulation directly propagates the distributions of the parameters, resulting in a stochastic response. These distinctions are illustrated in Figure 1, where the inadequacy in a single parameter

![]() $ \tilde{\theta} $

is modeled such that

$ \tilde{\theta} $

is modeled such that

![]() $ \tilde{\theta}={\theta}^m+\delta \left({\theta}^b\right) $

, where

$ \tilde{\theta}={\theta}^m+\delta \left({\theta}^b\right) $

, where

![]() $ \delta \sim \mathcal{N}\left(0,{\sigma}_{\delta}\right) $

. Equivalently,

$ \delta \sim \mathcal{N}\left(0,{\sigma}_{\delta}\right) $

. Equivalently,

![]() $ \tilde{\theta}\sim \mathcal{N}\left({\theta}^m,{\sigma}_{\delta}\right) $

for this simple case. This comparison highlights that both the embedded and hierarchical formulations share similar modeling principles but differ fundamentally in how the forward model response is computed. The embedded approach handles the forward model stochastically, leveraging polynomial chaos expansion (PCE) to propagate uncertainty efficiently, while the hierarchical Bayesian approach results in a deterministic forward model after sampling

$ \tilde{\theta}\sim \mathcal{N}\left({\theta}^m,{\sigma}_{\delta}\right) $

for this simple case. This comparison highlights that both the embedded and hierarchical formulations share similar modeling principles but differ fundamentally in how the forward model response is computed. The embedded approach handles the forward model stochastically, leveraging polynomial chaos expansion (PCE) to propagate uncertainty efficiently, while the hierarchical Bayesian approach results in a deterministic forward model after sampling

![]() $ \tilde{\theta} $

.

$ \tilde{\theta} $

.

Bayesian graph for the inference of the parameters involved in the embedded formulation of the model form uncertainty. (a) Embedded approach. (b) Classical hierarchical Bayesian approach with Gibbs’ sampling. Following usual notation (Dietz, Reference Dietz2010; Obermeyer et al., Reference Obermeyer, Bingham, Jankowiak, Pradhan, Chiu, Rush, Goodman, Chaudhuri and Salakhutdinov2019), circled values with white background represent unknown variables, circled shaded values represent observations, rhomboids represent deterministic operations, and black squares represent drawing a sample from the indicated distribution.

While both methodologies have practical applications, an exhaustive comparison between them is beyond the scope of this paper. Pernot and Cailliez (Reference Pernot and Cailliez2017) compared, among others, an ABC implementation of the embedded approach and a hierarchical one, concluding that the former is a better fit for the estimation of parameters under model form uncertainty. For improved results, the hierarchical formulation required a local modification of the parameters, effectively increasing their number. For a more comprehensive discussion on hierarchical Bayesian approaches and their computational challenges, refer to Robert and Casella (Reference Robert and Casella2004); Robert (Reference Robert2007); Turner and Van Zandt (Reference Turner and Van Zandt2013); Li et al. (Reference Li, Du, Ni, Han, Xu and Bai2024).

2.5. Likelihood formulation

Due to the uncertainty propagation, the output

![]() $ \boldsymbol{f}\left(\boldsymbol{\theta} \right) $

of evaluating the forward model with a given sample

$ \boldsymbol{f}\left(\boldsymbol{\theta} \right) $

of evaluating the forward model with a given sample

![]() $ \boldsymbol{\theta} $

or its PCE approximation

$ \boldsymbol{\theta} $

or its PCE approximation

![]() $ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

is a stochastic random variable that follows a probability distribution described by the PCE approximation. While a modified approach that utilizes the statistical moments of the distribution is feasible, directly evaluating a classical Gaussian likelihood function based on the residuals between the predictions,

$ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

is a stochastic random variable that follows a probability distribution described by the PCE approximation. While a modified approach that utilizes the statistical moments of the distribution is feasible, directly evaluating a classical Gaussian likelihood function based on the residuals between the predictions,

![]() $ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

, and the observations,

$ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

, and the observations,

![]() $ \mathbf{y} $

, is not possible because their domains are not directly comparable,

$ \mathbf{y} $

, is not possible because their domains are not directly comparable,

![]() $ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

being a random variable and

$ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

being a random variable and

![]() $ \mathbf{y} $

being deterministic observations. Approximate Bayesian Computation (ABC) approaches provide a methodology to obtain the likelihood

$ \mathbf{y} $

being deterministic observations. Approximate Bayesian Computation (ABC) approaches provide a methodology to obtain the likelihood

![]() $ {\pi}_{\mathrm{ABC}}\left(\mathbf{y}|\boldsymbol{\theta} \right) $

from the statistical moments of

$ {\pi}_{\mathrm{ABC}}\left(\mathbf{y}|\boldsymbol{\theta} \right) $

from the statistical moments of

![]() $ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

, which are readily available from the PCE, enabling the formulation of a likelihood function.

$ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

, which are readily available from the PCE, enabling the formulation of a likelihood function.

Throughout this paper, an ensemble-based Monte Carlo-Markov Chain (MCMC) sampler using a stretch move (Goodman and Weare, Reference Goodman and Weare2010) from the package emcee (Foreman-Mackey et al., Reference Foreman-Mackey, Hogg, Lang and Goodman2013) is used. A threshold on the effective sample size (

![]() $ ESS $

) for each of the unknown parameter chains as described in Appendix A is set as stopping criteria for the MCMC algorithm.

$ ESS $

) for each of the unknown parameter chains as described in Appendix A is set as stopping criteria for the MCMC algorithm.

2.5.1. ABC-likelihood without noise

Classical ABC approaches usually include two assumptions: (1) measurements are free of noise, where

![]() $ {\varepsilon}_{\mathrm{noise}} $

is assumed

$ {\varepsilon}_{\mathrm{noise}} $

is assumed

![]() $ 0 $

, and (2) the model response can fully represent the system, where

$ 0 $

, and (2) the model response can fully represent the system, where

![]() $ {\varepsilon}_{\mathrm{model}} $

is assumed

$ {\varepsilon}_{\mathrm{model}} $

is assumed

![]() $ 0 $

. Therefore, the model response is expected to fully represent the system’s real behavior as

$ 0 $

. Therefore, the model response is expected to fully represent the system’s real behavior as

![]() $ \mathbf{z}=\boldsymbol{f}\left(\boldsymbol{\theta} \right) $

. Those approaches define a residual function

$ \mathbf{z}=\boldsymbol{f}\left(\boldsymbol{\theta} \right) $

. Those approaches define a residual function

![]() $ \rho \left(\mathbf{y},\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right):{\mathrm{\mathbb{R}}}^{n_y}\times {\mathrm{\mathbb{R}}}^{n_z}\to \mathrm{\mathbb{R}} $

between observations

$ \rho \left(\mathbf{y},\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right):{\mathrm{\mathbb{R}}}^{n_y}\times {\mathrm{\mathbb{R}}}^{n_z}\to \mathrm{\mathbb{R}} $

between observations

![]() $ \mathbf{y} $

and predictions

$ \mathbf{y} $

and predictions

![]() $ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

, which usually defines a distance, and aim at reducing it below a tolerance value

$ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

, which usually defines a distance, and aim at reducing it below a tolerance value

![]() $ \varepsilon $

by the following steps (Sisson et al., Reference Sisson, Fan and Beaumont2018):

$ \varepsilon $

by the following steps (Sisson et al., Reference Sisson, Fan and Beaumont2018):

-

1. Sample

$ \boldsymbol{\theta} $

from

$ \boldsymbol{\theta} $

from

$ p\left(\boldsymbol{\theta} \right) $

.

$ p\left(\boldsymbol{\theta} \right) $

. -

2. Evaluate the forward model

$ \mathbf{z}=\boldsymbol{f}\left(\boldsymbol{\theta} \right)\sim p\left(\mathbf{z}|\boldsymbol{\theta} \right) $

.

$ \mathbf{z}=\boldsymbol{f}\left(\boldsymbol{\theta} \right)\sim p\left(\mathbf{z}|\boldsymbol{\theta} \right) $

. -

3. Accept or reject

$ \boldsymbol{\theta} $

if

$ \boldsymbol{\theta} $

if

$ \rho \left(\mathbf{y},\boldsymbol{f}\left(\boldsymbol{\theta} \right)\right)<\epsilon $

.

$ \rho \left(\mathbf{y},\boldsymbol{f}\left(\boldsymbol{\theta} \right)\right)<\epsilon $

.

If the model is approximated, such as the forward model built in Section 2.3, its evaluation would correspond to

![]() $ \tilde{\mathbf{z}}=\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\sim p\left(\tilde{\mathbf{z}}|\boldsymbol{\theta} \right) $

, where the model form uncertainty is expected to be fully represented in the stochastic response of the computational model, preserving assumption (2). When this tolerance

$ \tilde{\mathbf{z}}=\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\sim p\left(\tilde{\mathbf{z}}|\boldsymbol{\theta} \right) $

, where the model form uncertainty is expected to be fully represented in the stochastic response of the computational model, preserving assumption (2). When this tolerance

![]() $ \epsilon \to 0 $

, the predictions are expected to reproduce exactly the observations, and therefore the samples of

$ \epsilon \to 0 $

, the predictions are expected to reproduce exactly the observations, and therefore the samples of

![]() $ \theta $

would belong to the associated posterior distribution. As

$ \theta $

would belong to the associated posterior distribution. As

![]() $ \mathbf{y} $

is deterministic and

$ \mathbf{y} $

is deterministic and

![]() $ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

stochastic, the residual function

$ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

stochastic, the residual function

![]() $ \rho $

is evaluated on its statistical moments

$ \rho $

is evaluated on its statistical moments

![]() $ t $

such that

$ t $

such that

-

3a. Accept or reject

$ \boldsymbol{\theta} $

if

$ \boldsymbol{\theta} $

if

$ \rho \left(t\left(\mathbf{y}\right),t\left(\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right)\right)<\epsilon $

.

$ \rho \left(t\left(\mathbf{y}\right),t\left(\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right)\right)<\epsilon $

.

A possibility is to use the mean and standard deviation as summary statistics under the assumption of them being statistically sufficient. The predictions

![]() $ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

are approximated at each output dimension by

$ \tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

are approximated at each output dimension by

![]() $ t\left(\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right)=\left[{\boldsymbol{\mu}}^f,{\boldsymbol{\sigma}}^f\right] $

. The output observations

$ t\left(\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right)=\left[{\boldsymbol{\mu}}^f,{\boldsymbol{\sigma}}^f\right] $

. The output observations

![]() $ \mathbf{y} $

are approximated by its statistical moments as

$ \mathbf{y} $

are approximated by its statistical moments as

![]() $ t\left(\mathbf{y}\right)=\left[\boldsymbol{\mu}, \boldsymbol{\sigma} \right] $

, where

$ t\left(\mathbf{y}\right)=\left[\boldsymbol{\mu}, \boldsymbol{\sigma} \right] $

, where

![]() $ \boldsymbol{\mu} \approx \mathbf{y} $

and

$ \boldsymbol{\mu} \approx \mathbf{y} $

and

![]() $ \boldsymbol{\sigma} \approx \gamma \left|{\boldsymbol{\mu}}^f-\mathbf{y}\right| $

with

$ \boldsymbol{\sigma} \approx \gamma \left|{\boldsymbol{\mu}}^f-\mathbf{y}\right| $

with

![]() $ \gamma $

an additional factor to be specified. The approximation

$ \gamma $

an additional factor to be specified. The approximation

![]() $ \boldsymbol{\sigma} \approx \gamma \left|{\boldsymbol{\mu}}^f-\mathbf{y}\right| $

is necessary due to only one observation being available. A reasonable assumption would be that on, average, the observations

$ \boldsymbol{\sigma} \approx \gamma \left|{\boldsymbol{\mu}}^f-\mathbf{y}\right| $

is necessary due to only one observation being available. A reasonable assumption would be that on, average, the observations

![]() $ \mathbf{y} $

are located a given distance from the mean

$ \mathbf{y} $

are located a given distance from the mean

![]() $ {\boldsymbol{\mu}}^f $

controlled by their standard deviations

$ {\boldsymbol{\mu}}^f $

controlled by their standard deviations

![]() $ \boldsymbol{\sigma} $

, leading to the previous approximation. The choice of summary statistics is not unique and introduces a potential model inadequacy rooted in the approximation that is not present in the formulation (Fearnhead and Prangle, Reference Fearnhead and Prangle2012).

$ \boldsymbol{\sigma} $

, leading to the previous approximation. The choice of summary statistics is not unique and introduces a potential model inadequacy rooted in the approximation that is not present in the formulation (Fearnhead and Prangle, Reference Fearnhead and Prangle2012).

The acceptance criterion defines the indicator function or kernel that governs the sampling procedure. Following the criteria from Step 3, the uniform kernel

![]() $ I $

indicates if a sample is accepted and can be defined as

$ I $

indicates if a sample is accepted and can be defined as

$$ I\left(\boldsymbol{\theta}, \boldsymbol{y}\right)=\left\{\begin{array}{ll}1& \mathrm{if}\rho \left(t\left(\boldsymbol{y}\right),t\left(\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right)\right)<\epsilon \\ {}0& \mathrm{else}\end{array}\right.. $$

$$ I\left(\boldsymbol{\theta}, \boldsymbol{y}\right)=\left\{\begin{array}{ll}1& \mathrm{if}\rho \left(t\left(\boldsymbol{y}\right),t\left(\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right)\right)\right)<\epsilon \\ {}0& \mathrm{else}\end{array}\right.. $$

Applying Bayes’ theorem with the chosen kernel, it is possible to express the approximate posterior distribution of the parameters as

where

![]() $ \pi \left(\boldsymbol{\theta} \right) $

is the prior distribution for the unknown parameters

$ \pi \left(\boldsymbol{\theta} \right) $

is the prior distribution for the unknown parameters

![]() $ \boldsymbol{\theta} $

and

$ \boldsymbol{\theta} $

and

![]() $ \pi \left(\boldsymbol{z}|\boldsymbol{\theta} \right) $

the probability of the model output for a given

$ \pi \left(\boldsymbol{z}|\boldsymbol{\theta} \right) $

the probability of the model output for a given

![]() $ \boldsymbol{\theta} $

. For a deterministic model

$ \boldsymbol{\theta} $

. For a deterministic model

![]() $ f $

, the probability

$ f $

, the probability

![]() $ \pi \left(\boldsymbol{z}|\boldsymbol{\theta} \right) $

follows a Dirac’s delta distribution located at the value for

$ \pi \left(\boldsymbol{z}|\boldsymbol{\theta} \right) $

follows a Dirac’s delta distribution located at the value for

![]() $ \boldsymbol{f}\left(\boldsymbol{\theta} \right) $

. Analogously, the summary statistics

$ \boldsymbol{f}\left(\boldsymbol{\theta} \right) $

. Analogously, the summary statistics

![]() $ t\left(\tilde{\boldsymbol{z}}\right) $

of the approximated output

$ t\left(\tilde{\boldsymbol{z}}\right) $

of the approximated output

![]() $ \tilde{\boldsymbol{z}}=\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

are deterministic as well, therefore

$ \tilde{\boldsymbol{z}}=\tilde{\boldsymbol{f}}\left(\boldsymbol{\theta} \right) $

are deterministic as well, therefore

![]() $ \pi \left(t\left(\tilde{\boldsymbol{z}}\right)|\boldsymbol{\theta} \right) $

follows the same Dirac’s delta distribution when they are used as a lower-dimensional representation of the model output. Under this consideration, it can be observed that the kernel acts as the likelihood function that is commonly defined in the classical Bayesian approaches. In particular, the likelihood defined by the aforementioned uniform kernel assigns probability 1 to those samples that fulfil

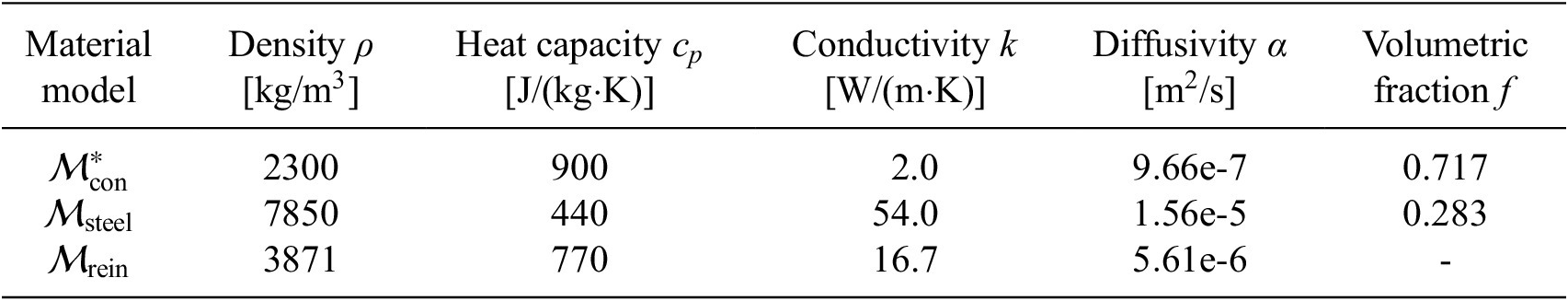

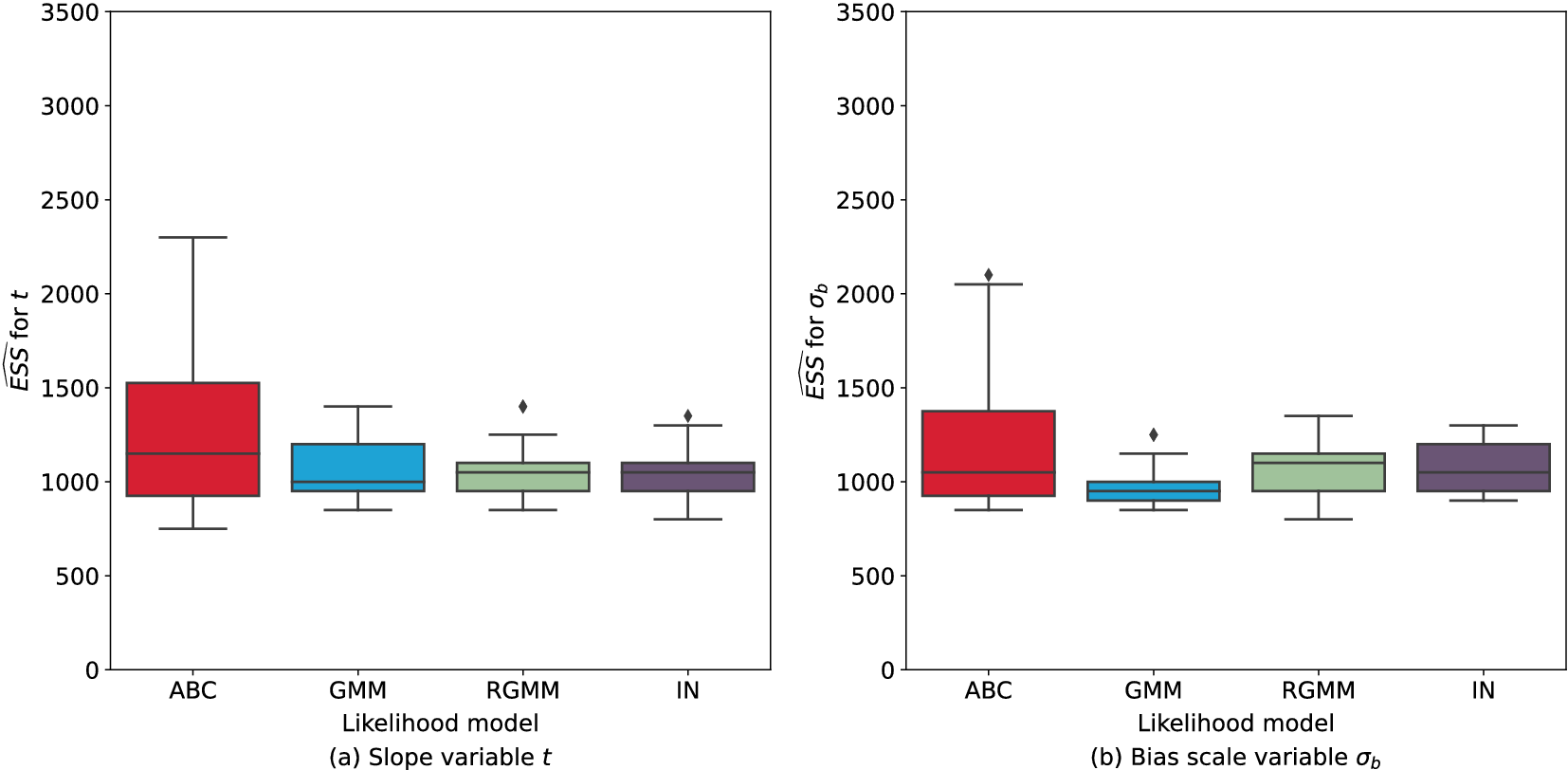

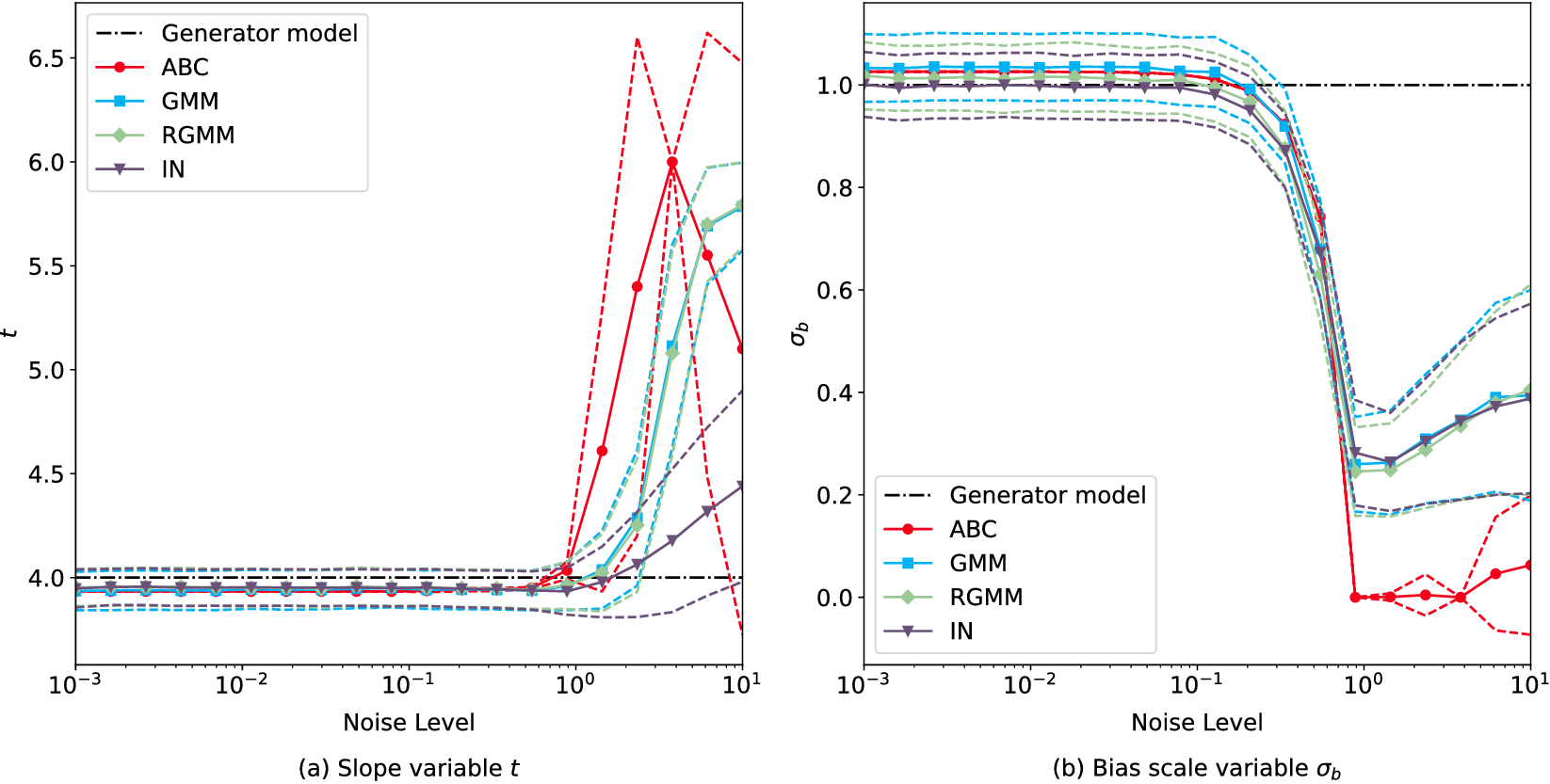

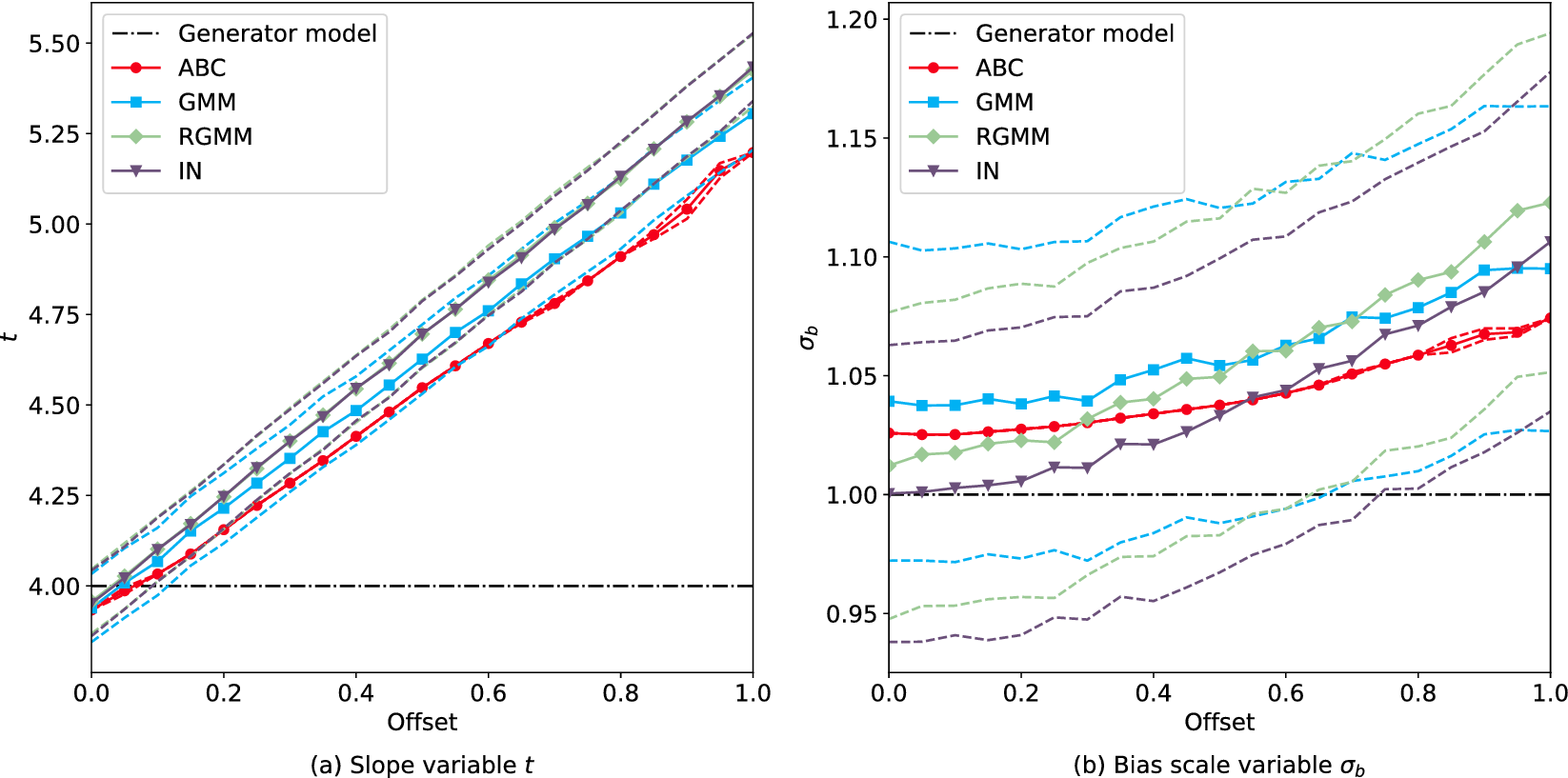

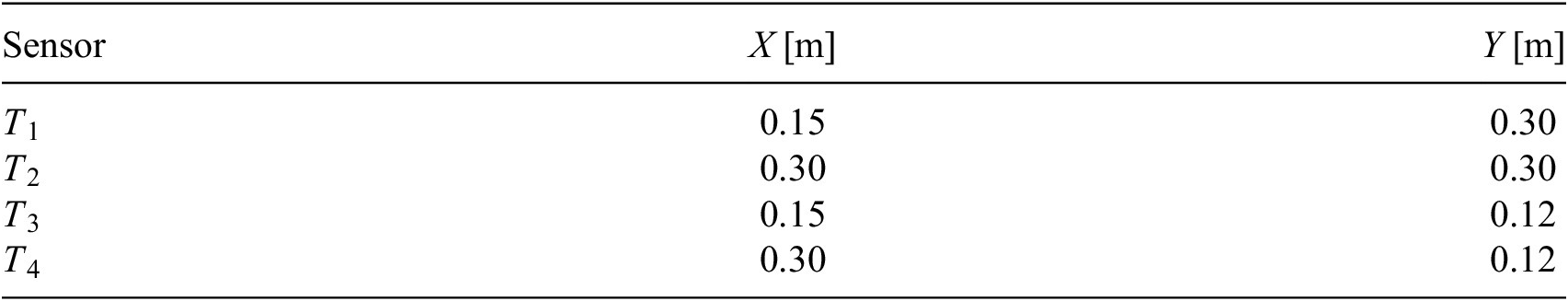

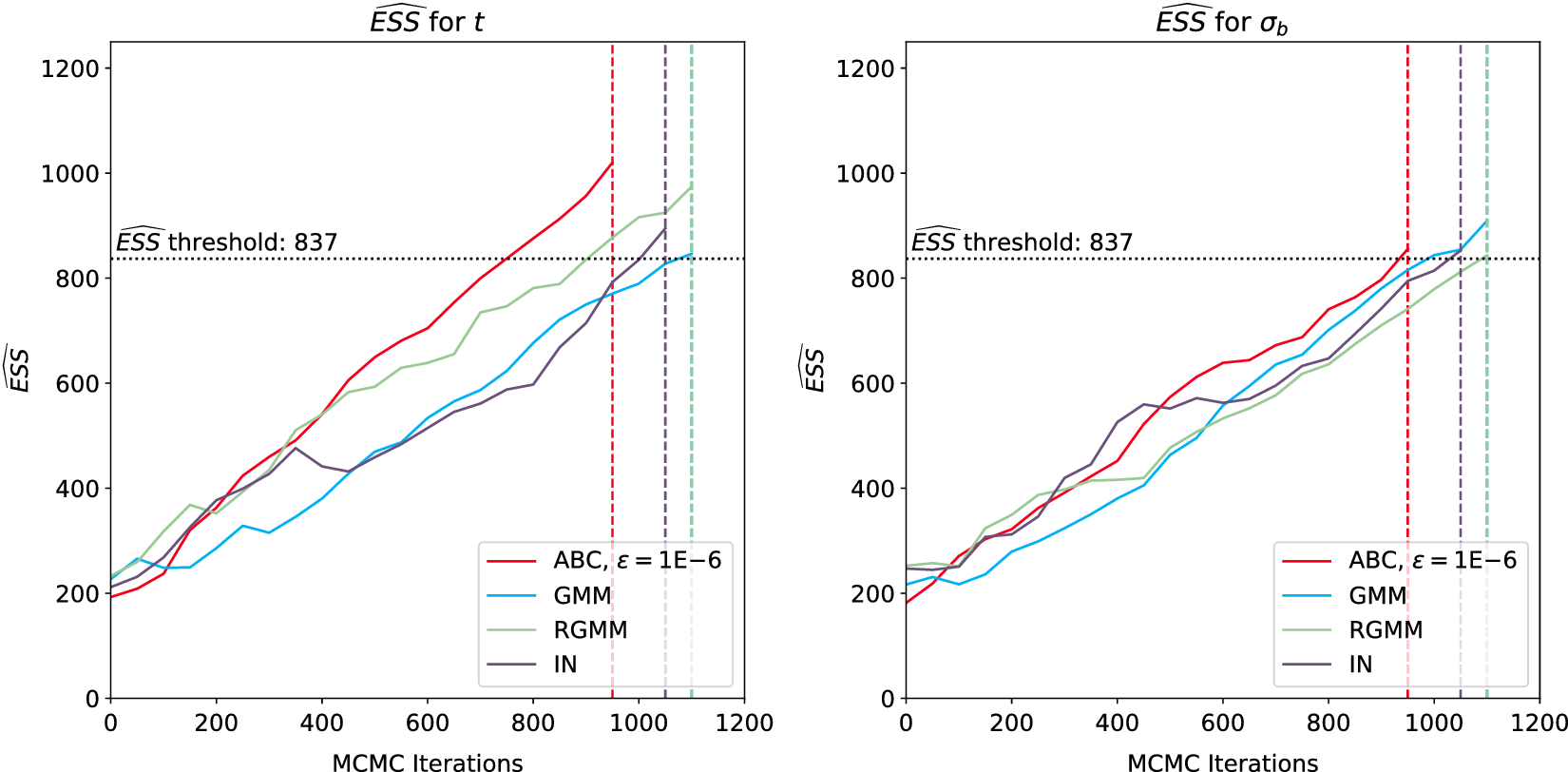

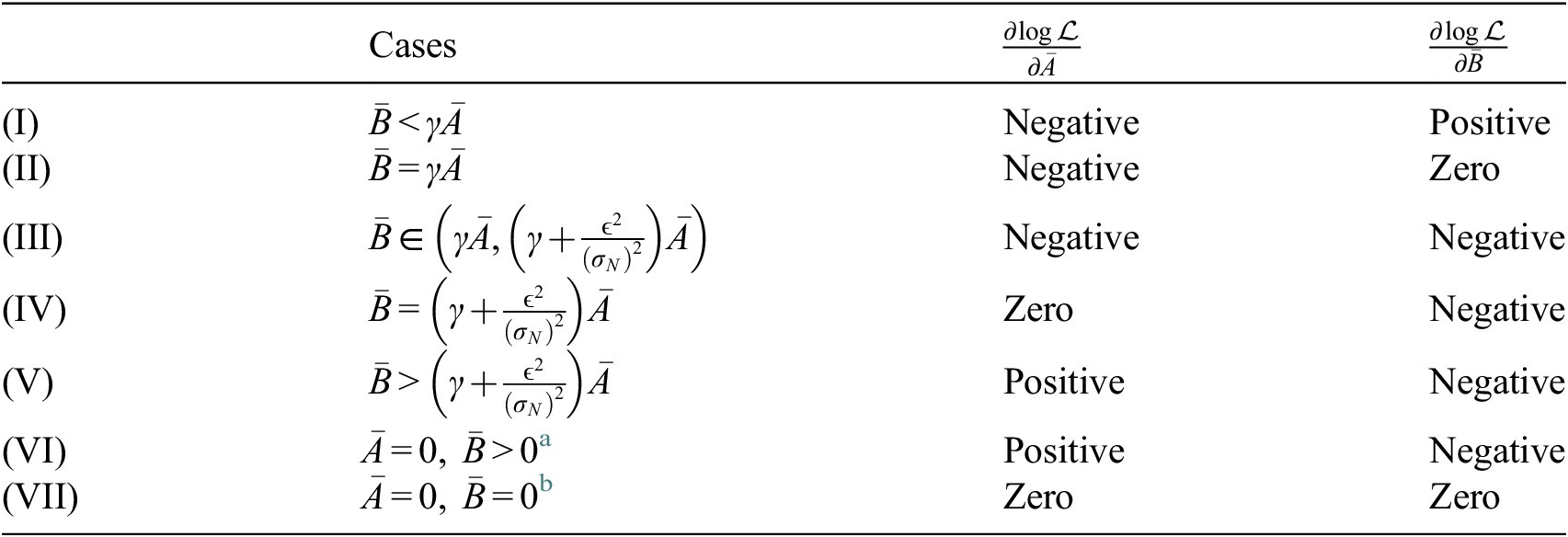

$ \pi \left(t\left(\tilde{\boldsymbol{z}}\right)|\boldsymbol{\theta} \right) $