Multinomial processing tree (MPT) models are stochastic models for categorical data frequently used in different branches of behavioral science, primarily in cognitive psychology and social cognition research (for overviews and a recent tutorial, see Batchelder & Riefer, 1999; Erdfelder et al., 2009, 2020; Hütter & Klauer, 2016; Schmidt et al., 2023). They have been applied to a wide range of phenomena, including, for example, recognition memory (e.g., Riefer & Batchelder, 1995; Xu & Bellezza, 2001), source monitoring (e.g., Meiser & Broder, 2002), recall memory (Batchelder & Riefer, 1986), and judgmental illusions, such as the hindsight bias (Erdfelder & Buchner, 1998; Nestler & Egloff, 2009; Nestler et al., 2012). Specifically, MPT models are cognitive process models that refer to a particular experimental task or paradigm in which participants’ judgments are categorized into a well-defined set of responses. It is assumed that the observed frequencies of responses that fall into these categories follow a multinomial distribution and that the probabilities underlying these frequencies are determined by latent cognitive processes that drive observed response behavior.

The primary goal of fitting MPT models to observed response frequencies is to estimate the cognitive process parameters, that is, the latent probabilities that certain cognitive processes were successful or not (e.g., memory processes, such as encoding, storage, or retrieval). Furthermore, by estimating models that impose psychologically motivated restrictions on these parameters (e.g., equality constraints or parameter fixations), model comparisons can be used to statistically test psychological assumptions and cognitive hypotheses that are linked to the model.

In most past applications, MPT models have been estimated by aggregating observed category frequencies across participants. This approach presumes that individual differences in cognitive process parameters can be neglected. However, when there are substantial individual differences, parameter estimates may be biased, and the results of inferential statistical procedures might not be optimal (e.g., the standard errors of the parameter estimates may be misleading; Batchelder & Riefer, 1999; Erdfelder et al., 2009; Klauer, 2006, 2010; Smith & Batchelder, 2010). In addition to these statistical problems, the assumption that there are no between-person differences in relevant cognitive processes seems rather implausible (Lee & Webb, 2005; Smith & Batchelder, 2010). Such an assumption also precludes the exploration of interesting research questions about the origin of individual differences in process parameters and about their relationships with other model parameters as well as covariates (e.g., Coolin et al., 2015, 2016). Hence, it is desirable to quantify potential interindividual differences and to investigate which person variables can explain them.

A number of extensions have therefore been proposed to model and predict the heterogeneity of parameters between individuals. First, Klauer (2006) suggested a latent class MPT framework in which a single person is assumed to be a member of one latent class, and model parameters may differ between latent classes. Second, Smith and Batchelder (2010) proposed a hierarchical MPT model in which participants’ model parameters are assumed to be sampled from independent beta distributions (i.e., a beta distribution for each MPT model parameter; hence the name “beta-MPT model”). More recently, Klauer (2010) introduced another hierarchical extension of the standard MPT model that allows researchers to capture not only the variability in the (probit-transformed) parameters across individuals but also the covariances or correlations between these parameters. This latent-trait MPT model is based on the assumption that the person parameters stem from a multivariate normal distribution, and their expectations and covariance matrix are estimated from the observed frequency data.

Klauer (2010) suggested a Bayesian approach for parameter estimation and inference in which the posterior distribution is approximated using Markov chain Monte Carlo methods (see Heck et al., 2018a, for an implementation in R). In the present article, we show how the parameters of the latent-trait MPT model can be obtained through marginal maximum likelihood (ML) estimation. Our implementation of the ML method introduces a frequentist approach for hierarchical MPT models that is perhaps more familiar to researchers because standard errors, confidence intervals, and goodness-of-fit tests can be computed on the basis of well-known asymptotic properties of ML estimates. Moreover, in addition to the asymptotic optimality of ML estimates (Reed and Cressie, 1988), the subtleties of specifying appropriate prior distributions for Bayesian estimation can be avoided when using the ML method. In particular, one does not have to worry about whether and how the obtained estimates and model comparisons are affected by the prior (for a recent discussion, see Sarafoglou et al., 2022) or whether de facto equivalent models may become nonequivalent as a consequence of the choice of the prior (Kellen & Klauer, 2020). Importantly, the most efficient numerical ML algorithm is also typically faster than a Bayesian estimator, and the convergence of the ML algorithm is simpler to determine.

1. The Pair-Clustering Model

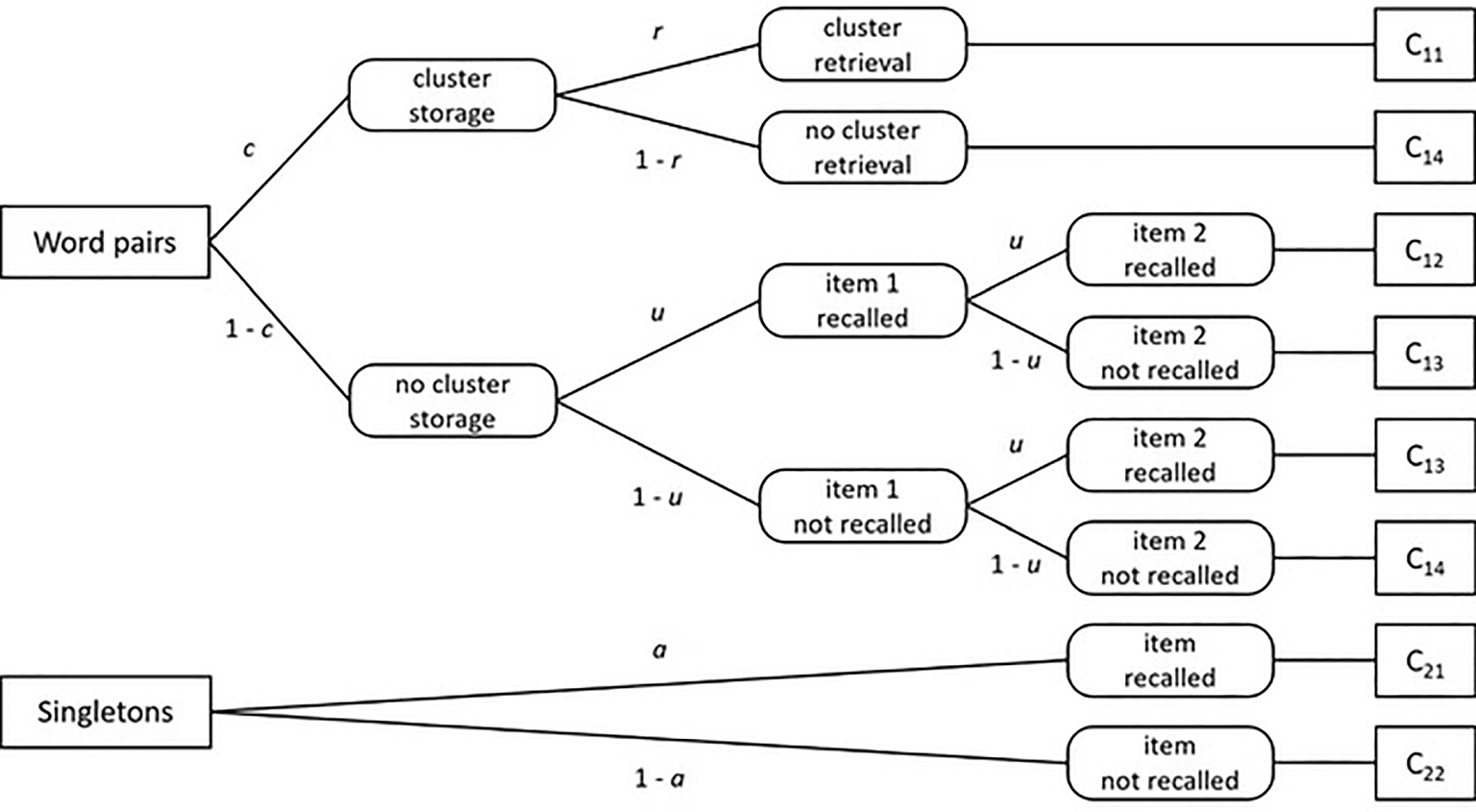

Before we introduce MPT models and the ML estimation methods in more detail, we briefly describe the pair-clustering model (e.g., Batchelder & Riefer, 1980, 1986) that we use throughout the article to illustrate the proposed methods. The pair-clustering model can be used to analyze data from a free-recall experiment in which participants are presented with a list of word pairs plus a number of singletons. All words are preselected to be equally difficult in terms of memorizability. Each word pair consists of semantically related words (e.g., rose–tulip), whereas singletons are unrelated to other words in the list. These pairs and the singletons are presented one word at a time, and participants are later asked to recall the items from the list in any order. On the basis of participants’ free recall performance, the studied word pairs and singletons are then assigned to one of six response categories. It is assumed that the probability of each response category can be modeled by two processing trees that include a total of four parameters (see Fig. 1 for a graphical illustration of the model).

Figure 1. The pair-clustering MPT model (adapted from Riefer & Batchelder, 1988, p. 330, Figure 2). Rectangles indicate stimulus classes (left) and observable responses (right). Rectangles with rounded corners represent latent cognitive states. Parameters attached to the branches indicate transition probabilities from left to right, specifically, storing a word pair as a cluster ($c$), retrieving a stored cluster in free recall ($r$), storing and retrieving a word from a non-clustered pair in free recall ($u$), and storing and retrieving a singleton in free recall ($a$).

Specifically, the model defines four response categories for the word pairs: Category $C_{11}$ includes all cases where both words in a pair are recalled adjacently, $C_{12}$ represents cases in which both words are recalled nonadjacently, $C_{13}$ corresponds to cases where exactly one word is recalled, and finally $C_{14}$ represents cases in which neither word from the pair is recalled. In addition, the singletons are scored in two categories: singletons recalled successfully ($C_{21}$) versus not successfully ($C_{22}$).
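The scoring rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the scoring procedure of any particular study: it assumes that "adjacent" means consecutive positions in the recall protocol and that no word is recalled twice. The function name `score_recall` and all example words other than rose–tulip are hypothetical.

```python
def score_recall(pairs, singletons, recall):
    """Count the six response categories for one participant.

    pairs      -- list of (word, word) tuples from the study list
    singletons -- list of unpaired study words
    recall     -- words in the order the participant produced them
    """
    # Output position of every recalled word (assumes no repetitions).
    position = {word: i for i, word in enumerate(recall)}
    counts = {c: 0 for c in ("C11", "C12", "C13", "C14", "C21", "C22")}

    for first, second in pairs:
        i, j = position.get(first), position.get(second)
        if i is not None and j is not None:
            # Both recalled: adjacent (C11) if output positions are consecutive.
            counts["C11" if abs(i - j) == 1 else "C12"] += 1
        elif i is not None or j is not None:
            counts["C13"] += 1  # exactly one word of the pair recalled
        else:
            counts["C14"] += 1  # neither word recalled

    for word in singletons:
        counts["C21" if word in position else "C22"] += 1
    return counts


# Hypothetical study list and recall protocol.
pairs = [("rose", "tulip"), ("dog", "cat"), ("iron", "gold"), ("bus", "train")]
singletons = ["lamp", "cloud"]
recall = ["rose", "tulip", "lamp", "dog", "gold", "cat"]
frequencies = score_recall(pairs, singletons, recall)
```

The resulting dictionary holds the six category frequencies for one participant, which is the form of data the model is fitted to.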

The pair-clustering model proposes four cognitive process parameters that jointly determine the response probabilities: (1) the probability $c$ that a word pair is stored as a cluster in memory, (2) the probability $r$ that a stored cluster is successfully retrieved in free recall, (3) the probability $u$ that a word from a pair that is not stored as a cluster is successfully stored and retrieved, and finally, (4) the probability $a$ that a singleton is successfully stored and retrieved. These parameters can be used to derive response probabilities for each of the six categories by calculating the product of all parameters along a branch and then summing up all branch probabilities that refer to the same category. For example, because there is only one branch that terminates in category $C_{11}$ (see Fig. 1), the probability of this category (i.e., both words recalled adjacently) is just the product $c \cdot r$ (i.e., successful storage as a cluster followed by successful retrieval of this cluster). Applying the same logic to all branches in Fig. 1 yields six model equations, one for each response category.
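Written out from the parameter definitions above, the six equations take the following standard form. This is a reconstruction rather than a verbatim copy of the original display: the expression for $C_{14}$ agrees with the one given in Section 2, and the factor 2 in $P(C_{13})$ reflects that either word of an unclustered pair may be the one recalled.

```latex
\begin{align*}
P(C_{11}) &= c \cdot r \\
P(C_{12}) &= (1 - c) \cdot u^2 \\
P(C_{13}) &= 2 \cdot (1 - c) \cdot u \cdot (1 - u) \\
P(C_{14}) &= c \cdot (1 - r) + (1 - c) \cdot (1 - u)^2 \\
P(C_{21}) &= a \\
P(C_{22}) &= 1 - a
\end{align*}
```

The first four probabilities sum to 1, as do the last two, so each processing tree defines a proper probability distribution over its own categories.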

The goal of MPT modeling, applied to the pair-clustering model, is to estimate the four cognitive process parameters and to test reasonable hypotheses about these parameters. One example of such a hypothesis would be that unclustered words from a pair behave like singletons in memory, that is, they are stored and retrieved with the same probability (i.e., $u = a$).
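To make the estimation goal concrete, the following sketch maps parameters to category probabilities and back. It assumes the standard pair-clustering equations and aggregated frequencies. Because the unrestricted model has four parameters and four independent category probabilities, the equations can be inverted in closed form. The inverted values are ML estimates only if they fall inside $[0, 1]$. The function names are hypothetical, and this is not the estimation method developed in this article.

```python
def category_probs(c, r, u, a):
    """Category probabilities of the pair-clustering model."""
    return {
        "C11": c * r,                                  # cluster stored and retrieved
        "C12": (1 - c) * u ** 2,                       # no cluster, both words recalled
        "C13": 2 * (1 - c) * u * (1 - u),              # no cluster, exactly one recalled
        "C14": c * (1 - r) + (1 - c) * (1 - u) ** 2,   # nothing recalled
        "C21": a,
        "C22": 1 - a,
    }


def invert_frequencies(n_pairs, n_singletons):
    """Closed-form parameter values from aggregated frequencies.

    n_pairs      -- (n11, n12, n13, n14)
    n_singletons -- (n21, n22)
    Assumes n12 > 0 and an implied c > 0; results outside [0, 1] signal
    that the inversion is not admissible and numerical ML is needed instead.
    """
    total = sum(n_pairs)
    p11, p12, p13 = (n / total for n in n_pairs[:3])
    u = 2 * p12 / (2 * p12 + p13)   # from P(C13) / P(C12) = 2 (1 - u) / u
    c = 1 - p12 / u ** 2            # from P(C12) = (1 - c) u^2
    r = p11 / c                     # from P(C11) = c r
    a = n_singletons[0] / sum(n_singletons)
    return {"c": c, "r": r, "u": u, "a": a}


# Hypothetical aggregated frequencies for 100 pairs and 100 singletons.
estimates = invert_frequencies((30, 8, 24, 38), (30, 70))
```

Comparing the resulting values of `u` and `a` is only descriptive. A formal test of $u = a$ requires fitting the restricted model and comparing its fit with that of the unrestricted model.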

In the next section, we begin with a formal introduction of a typical MPT model without random effects. This is followed by an outline of the latent-trait MPT model with some extensions. In the third section, we present three methods of marginal ML estimation for the latent-trait model. Next, we describe the results of a small simulation study in which we compare the different methods with respect to accuracy and speed. In Sect. 3.2, we present an application of our method to an illustrative example using real data. In the final section, we discuss possible extensions and open questions for future research.

2. MPT Models Without Person-Level Random Effects

The pair-clustering model we just described is a specific instance of an MPT model. On a more general level, MPT models are statistical models of response frequencies of mutually exclusive, independent categories. To define the model, we assume that there are $k = 1, \ldots, K$ category systems and that each category system consists of $j = 1, \ldots, J_k$ categories $C_{kj}$. In the pair-clustering model, there are $K = 2$ category systems, one for the word pairs and one for the singletons. The first system consists of four categories, $C_{11}$, $C_{12}$, $C_{13}$, and $C_{14}$, and the singleton system contains two categories, $C_{21}$ and $C_{22}$. We further assume that data from $t = 1, \ldots, T$ individuals are available. For each single individual $t$, the data for category system $k$ are given as a vector of frequencies, $\boldsymbol{n}_{kt} = (n_{k1t}, \ldots, n_{kJ_{k}t})$. For instance, $\boldsymbol{n}_{1t}$ contains the four frequencies of person $t$ for the four word pair categories (i.e., $k = 1$). These frequencies are assumed to stem from a multinomial distribution with category probabilities $p_{k1t}, \ldots, p_{kJ_{k}t}$, where $N_{kt} = n_{k1t} + \cdots + n_{kJ_{k}t}$ is the total number of observations and $p_{k1t} + \cdots + p_{kJ_{k}t} = 1$. This definition requires the vector of all frequencies across all $K$ category systems of person $t$, that is, $\boldsymbol{n}_{t} = (\boldsymbol{n}_{1t}, \ldots, \boldsymbol{n}_{Kt})$, to follow a product multinomial distribution, that is, a product of $K$ independent multinomial distributions, one per category system.
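As a concrete reading of these definitions, the sketch below evaluates the log-probability of one person's data. For each category system it computes the standard multinomial term $\log N_{kt}! - \sum_j \log n_{kjt}! + \sum_j n_{kjt} \log p_{kjt}$ and then sums over the $K$ systems, which is what the product multinomial assumption amounts to on the log scale. The function names are hypothetical, and the category probabilities are taken as given rather than derived from a processing tree.

```python
from math import lgamma, log


def multinomial_logpmf(counts, probs):
    """Log-probability of one frequency vector n_kt given probabilities p_kt."""
    total = sum(counts)
    value = lgamma(total + 1)          # log N_kt!
    for n, p in zip(counts, probs):
        value -= lgamma(n + 1)         # minus log n_kjt!
        if n > 0:
            # n_kjt * log p_kjt; empty categories contribute nothing.
            # A positive count with p == 0 raises ValueError (log of zero).
            value += n * log(p)
    return value


def product_multinomial_logpmf(counts_by_system, probs_by_system):
    """Sum of the K per-system multinomial log-probabilities for one person."""
    return sum(
        multinomial_logpmf(n, p)
        for n, p in zip(counts_by_system, probs_by_system)
    )


# Hypothetical data for one person: four pair categories, two singleton categories.
n_t = [(3, 1, 2, 4), (6, 4)]
p_t = [(0.30, 0.08, 0.24, 0.38), (0.60, 0.40)]
log_prob = product_multinomial_logpmf(n_t, p_t)
```

Working on the log scale with `lgamma` avoids overflow in the factorials when the number of observations per person is large.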

The idea behind MPT models is that the probability of the occurrence of an event category can be modeled as a function of the cognitive process parameters. In the standard model (i.e., without person-level random effects), these process parameters $\boldsymbol{\theta}$ are unknown constants that do not vary between individuals. Thus, $p_{kjt}$ is written as $p_{kjt} = P(C_{kj} \mid \boldsymbol{\theta})$, where $\boldsymbol{\theta}$ contains the $S$ cognitive process parameters, indexed by $s = 1, \ldots, S$, and is thus an element of $[0, 1]^S$. In the example, $\boldsymbol{\theta} = (c, r, u, a)$, and the probability of $C_{14}$ (see above) is $p_{14t} = P(C_{14} \mid \boldsymbol{\theta}) = c(1-r) + (1-c)(1-u)^2$. To write $P(C_{kj} \mid \boldsymbol{\theta})$ more generally, we define

\documentclass[12pt]{minimal}

\usepackage{amsmath}

\usepackage{wasysym}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{amsbsy}

\usepackage{mathrsfs}

\usepackage{upgreek}

\setlength{\oddsidemargin}{-69pt}

\begin{document}$$ I_{kj}$$\end{document}

![]() to be the number of branches of the tree that terminate in

\documentclass[12pt]{minimal}

\usepackage{amsmath}

\usepackage{wasysym}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{amsbsy}

\usepackage{mathrsfs}

\usepackage{upgreek}

\setlength{\oddsidemargin}{-69pt}

\begin{document}$$C_{kj}$$\end{document}

to be the number of branches of the tree that terminate in

\documentclass[12pt]{minimal}

\usepackage{amsmath}

\usepackage{wasysym}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{amsbsy}

\usepackage{mathrsfs}

\usepackage{upgreek}

\setlength{\oddsidemargin}{-69pt}

\begin{document}$$C_{kj}$$\end{document}

![]() , with

\documentclass[12pt]{minimal}

\usepackage{amsmath}

\usepackage{wasysym}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{amsbsy}

\usepackage{mathrsfs}

\usepackage{upgreek}

\setlength{\oddsidemargin}{-69pt}

\begin{document}$$i = 1,..., I_{kj}$$\end{document}

, with

\documentclass[12pt]{minimal}

\usepackage{amsmath}

\usepackage{wasysym}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{amsbsy}

\usepackage{mathrsfs}

\usepackage{upgreek}

\setlength{\oddsidemargin}{-69pt}

\begin{document}$$i = 1,..., I_{kj}$$\end{document}

![]() indexing a specific branch

\documentclass[12pt]{minimal}

\usepackage{amsmath}

\usepackage{wasysym}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{amsbsy}

\usepackage{mathrsfs}

\usepackage{upgreek}

\setlength{\oddsidemargin}{-69pt}

\begin{document}$$B_{kji}$$\end{document}

indexing a specific branch

\documentclass[12pt]{minimal}

\usepackage{amsmath}

\usepackage{wasysym}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{amsbsy}

\usepackage{mathrsfs}

\usepackage{upgreek}

\setlength{\oddsidemargin}{-69pt}

\begin{document}$$B_{kji}$$\end{document}

![]() that terminates in category j of category system k. As described by Hu and Batchelder (Reference Hu and Batchelder1994), we define:

that terminates in category j of category system k. As described by Hu and Batchelder (Reference Hu and Batchelder1994), we define:

where $a_{skji}$ and $b_{skji}$ indicate how often a process parameter $\theta_{s}$ and its complement $1-\theta_{s}$, respectively, appear on the branch $B_{kji}$. For instance, there are two paths that terminate in $C_{14}$. To illustrate, we just consider the first of these branches, $B_{141}$:

According to these definitions, the probability of $C_{kj}$ is

and the multinomial probability of the data for a single individual t is

Equation 8 can be used to obtain ML estimates of the process parameters $\boldsymbol{\theta}$. For instance, Hu and Batchelder (1994) showed how the parameters can be estimated with an EM algorithm. Alternatively, methods based on the analytical gradient or on finite-difference approximations of the gradient can be used for ML parameter estimation. Both approaches are available in R, in the package MPTinR (Singmann and Kellen, 2013; Singmann et al., 2020) or the package mpt (Wickelmaier and Zeileis, 2011, 2020). Also, a Bayesian approach can be used to estimate the parameters (e.g., Lee & Wagenmakers, 2014).
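The branch and category probabilities described above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used by MPTinR or mpt. It assumes that a branch probability is the product of $\theta_s^{a_{skji}}(1-\theta_s)^{b_{skji}}$ over all parameters, with no additional fixed constants, and that a category probability is the sum over the branches ending in that category. The parameter values, the function names, and the two-category frequency example are hypothetical; only the two branches of $C_{14}$ are taken from the text.

```python
import math
import numpy as np

def branch_prob(theta, a, b):
    """Product over parameters of theta_s^a_s * (1 - theta_s)^b_s for one branch."""
    theta = np.asarray(theta, dtype=float)
    return float(np.prod(theta ** a * (1.0 - theta) ** b))

def category_prob(theta, branches):
    """Sum of the branch probabilities over all branches ending in one category."""
    return sum(branch_prob(theta, a, b) for a, b in branches)

def multinomial_loglik(n, p):
    """Log multinomial probability of frequencies n given category probabilities p."""
    n = np.asarray(n, dtype=float)
    log_coef = math.lgamma(n.sum() + 1.0) - sum(math.lgamma(k + 1.0) for k in n)
    return log_coef + float(np.sum(n * np.log(p)))

# theta = (c, r, u, a); the numeric values are hypothetical.
theta = np.array([0.6, 0.7, 0.4, 0.5])
c, r, u, _ = theta

# Exponent counts (a, b) for the two branches ending in C_14.
B_141 = (np.array([1, 0, 0, 0]), np.array([0, 1, 0, 0]))  # c * (1 - r)
B_142 = (np.array([0, 0, 0, 0]), np.array([1, 0, 2, 0]))  # (1 - c) * (1 - u)^2

p_14 = category_prob(theta, [B_141, B_142])
closed_form = c * (1 - r) + (1 - c) * (1 - u) ** 2
print(p_14, closed_form)

# Hypothetical two-category data, only to show the likelihood call.
loglik = multinomial_loglik([1, 1], [0.5, 0.5])
print(loglik)
```

Storing each branch as a pair of count vectors keeps the code close to the $a_{skji}$ and $b_{skji}$ notation, so a full model is just a list of such pairs per category.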

3. MPT Models with Person-Level Random Effects

In the previous section, the process parameters contained in $\boldsymbol{\theta}$ were assumed not to differ between individuals. In the latent-trait MPT model, this assumption is relaxed because $R \le S$ elements of $\boldsymbol{\theta}$ are written as a function of person-specific random effects that stem from a multivariate normal distribution

where $\boldsymbol{\mu}$ is an $R \times 1$ vector of expectations and $\boldsymbol{\Sigma}$ is an $R \times R$ covariance matrix. Because each single $\theta_{st}$ is a probability parameter, we need to make sure that the resulting coefficient remains in the unit interval $[0, 1]$. On the basis of the literature on the Generalized Linear Model (GLM), we use the logit-link function (see Coolin et al., 2015)

or the probit-link function (see Klauer, 2010)

to map a real-valued person parameter $b_{st}$ onto a probability parameter $\theta_{st}$, with $\beta_s$ serving as a parameter-specific intercept constant (in Eq. 11, $\Phi$ denotes the cumulative distribution function of the standard normal distribution). The two approaches typically result in very similar parameter estimates (at least in the GLM framework). To allow for comparisons in the framework of random effects MPT models, we have implemented both link functions in the R package that implements the statistical methods proposed in this article.
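To make the two mappings concrete, the following Python sketch applies an inverse logit and an inverse probit transformation to the same real-valued inputs. It assumes that the linear predictor has the form $\beta_s + b_{st}$, which is how the intercept and the person parameter are described above; the function names and the numeric values are hypothetical and are not taken from the authors' R package.

```python
import math

def inv_logit(x):
    """Logistic function: maps a real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def inv_probit(x):
    """Standard normal CDF, written with the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

beta_s = 0.0  # hypothetical intercept
for b_st in (-2.0, 0.0, 1.5):  # hypothetical person parameters
    eta = beta_s + b_st
    print(f"b_st={b_st:+.1f}  logit: {inv_logit(eta):.3f}  probit: {inv_probit(eta):.3f}")
```

Both functions are strictly increasing and return 0.5 at zero, so either one keeps $\theta_{st}$ inside the unit interval for any real value of the linear predictor.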

We can now define the conditional distribution of the category frequencies of person $t$ given the random effects $\boldsymbol{b}_t$:

where $\theta_{st}$ is defined as in Eq. 10 or Eq. 11. Along with the multivariate normal density of the random effects $\boldsymbol{b}_t$, Eq. 12 can then be used to obtain ML estimates of the model parameters. We refer to this model as the random effects MPT model.
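Combining the conditional multinomial distribution with the normal density of the random effects means averaging the conditional likelihood over $\boldsymbol{b}_t$. The sketch below approximates this average for one person by plain Monte Carlo sampling. This is only one possible way to evaluate the integral and is an assumption made here for illustration; this section does not state which integration method the authors use. The two-parameter tree in `toy_category_probs`, the logit link with zero intercepts, and all numeric values are hypothetical.

```python
import math
import numpy as np

def inv_logit(x):
    return 1.0 / (1.0 + np.exp(-x))

def multinomial_logpmf(n, p):
    """Log multinomial probability of frequencies n given category probabilities p."""
    n = np.asarray(n, dtype=float)
    log_coef = math.lgamma(n.sum() + 1.0) - sum(math.lgamma(k + 1.0) for k in n)
    return log_coef + float(np.sum(n * np.log(p)))

def toy_category_probs(theta):
    """Hypothetical tree with two parameters and two categories (not the article's model)."""
    d, g = theta
    return np.array([d + (1.0 - d) * g, (1.0 - d) * (1.0 - g)])

def marginal_loglik_mc(n, mu, Sigma, n_draws=5000, seed=0):
    """Monte Carlo estimate of log E_b[ P(n | b) ] with b ~ N(mu, Sigma)."""
    rng = np.random.default_rng(seed)
    L = np.linalg.cholesky(Sigma)            # Sigma must be positive definite
    z = rng.standard_normal((n_draws, len(mu)))
    b = mu + z @ L.T                         # draws of the random effects
    log_cond = np.array(
        [multinomial_logpmf(n, toy_category_probs(inv_logit(bt))) for bt in b]
    )
    m = log_cond.max()                       # log-mean-exp for numerical stability
    return m + math.log(np.mean(np.exp(log_cond - m)))

n_t = [12, 8]                                # hypothetical frequencies for one person
mu = np.array([0.3, -0.2])
Sigma = np.array([[0.5, 0.1], [0.1, 0.4]])
ll = marginal_loglik_mc(n_t, mu, Sigma)
print(ll)
```

Summing such per-person terms over all individuals would give a sample log-likelihood that an optimizer could maximize over $\boldsymbol{\mu}$ and $\boldsymbol{\Sigma}$; deterministic quadrature would be a natural alternative to random draws.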

Before we proceed, we would like to point out that the mean structure in our model is not identified because the expectation of the random effects is $\boldsymbol{\mu}$ and the conditional distribution also contains the vector of intercept terms $\boldsymbol{\beta}$, so that the two cannot be separated for a random parameter. To identify the mean structure, we define all elements of $\boldsymbol{\beta}$ to be zero when all process parameters are assumed to differ between individuals (i.e., $R = S$, where $R$ denotes the number of random effects parameters). In this case, our random effects model corresponds to the model described by Klauer (2010). However, when only some (but not all) of the process parameters are assumed to be random, we estimate the respective entries in $\boldsymbol{\beta}$ for the $(S-R)$ fixed parameters while setting the remaining $R$ entries (corresponding to random parameters) to zero and reducing the dimensions of $\boldsymbol{\mu}$ and $\boldsymbol{\Sigma}$ accordingly. Thus, by writing the model as proposed here, we achieve a high degree of flexibility to estimate MPT models with both random and fixed effects. This can be very useful, for example, when MPT models include guessing parameters that need to be estimated and are assumed to be constant across individuals.
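The mix of fixed and random parameters described above can be sketched as follows. The code builds the person-specific parameter vector from an intercept vector whose entries are zero for random parameters and from a random-effects vector of reduced dimension $R$. The logit link, the choice of which parameters are random, the function name `person_theta`, and all numeric values are assumptions made for illustration only.

```python
import numpy as np

def person_theta(beta, b_t, random_idx):
    """Person-specific probabilities under a logit link.

    beta       : length-S intercepts; entries at random_idx are zero by construction
    b_t        : length-R random effects of person t
    random_idx : positions of the R random parameters within theta
    """
    eta = np.array(beta, dtype=float)   # copy, so beta itself is not modified
    eta[random_idx] = b_t               # intercept is zero there, so eta = b_t
    return 1.0 / (1.0 + np.exp(-eta))

# theta = (c, r, u, a); hypothetically, c and r are random, u and a are fixed.
random_idx = [0, 1]
beta = np.array([0.0, 0.0, -0.4, 0.2])  # zeros for the random parameters

theta_1 = person_theta(beta, np.array([0.8, -0.3]), random_idx)
theta_2 = person_theta(beta, np.array([-0.5, 1.1]), random_idx)
print(theta_1)
print(theta_2)
```

In this layout the fixed parameters take the same value for every person, while the random ones vary with $\boldsymbol{b}_t$, whose mean and covariance have dimension $R$ rather than $S$.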

Furthermore, we can also extend the model to include person-level covariates contained in the person-specific column vectors $\boldsymbol{X}_{t}$. This can be achieved in two ways: First, when the person-level covariates are used to predict $R$ process parameters that are assumed to be random, $\boldsymbol{\mu}$ is person-specific, and we write it as a function of the predictor values:

where $\boldsymbol{\Gamma}$ is an $R \times p$ matrix of weights, and $p$ is the number of person-level predictors used to predict the random process parameters. Note that when a process parameter is assumed not to be affected by a predictor in $\boldsymbol{X}_{t}$, the respective entry in $\boldsymbol{\Gamma}$ is simply set to zero. Furthermore, $\boldsymbol{\mu}$ represents the values of the person parameters when the covariates are zero. Second, when a fixed process parameter $\theta_s$ is assumed to vary as a function of person-level covariates so that the intercept $\beta_{st}$ may differ between individuals $t$, we follow the procedure outlined by Coolin et al. (2015) and write

where $\beta _{s}$ is the value of the parameter-specific intercept when all person-level covariates are zero and $\varvec{\gamma _{s}}$ is a row vector containing the weights of the $p$ covariates used to predict $\theta _s$.
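Under the definitions just given, the person-specific intercept can be sketched as a linear function of the covariates. The symbol $\mathbf{x}_{t}$, a column vector holding the $p$ covariate values of person $t$, is an assumption introduced here for illustration and may differ from the notation used elsewhere in the article:

$$\beta _{st} = \beta _{s} + \varvec{\gamma _{s}}\,\mathbf{x}_{t}$$

When all covariates are zero, the second term vanishes and $\beta _{st}$ reduces to $\beta _{s}$, which matches the interpretation of $\beta _{s}$ stated above. With purely illustrative values $p = 2$, $\beta _{s} = 0.5$, $\varvec{\gamma _{s}} = (0.2, -0.1)$, and $\mathbf{x}_{t} = (1, 1)^{\top }$, this form would give $\beta _{st} = 0.5 + 0.2 - 0.1 = 0.6$.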

3.1. Marginal Maximum Likelihood Estimation

The goal is to estimate the free parameters contained in $\varvec{\beta }$, $\varvec{\gamma }$, $\varvec{\mu }$, $\varvec{\Gamma }$, and $\varvec{\Sigma }$, where $\varvec{\beta }$ and

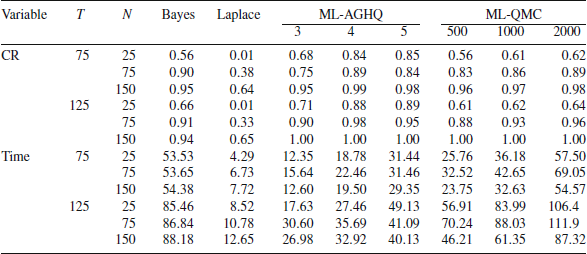

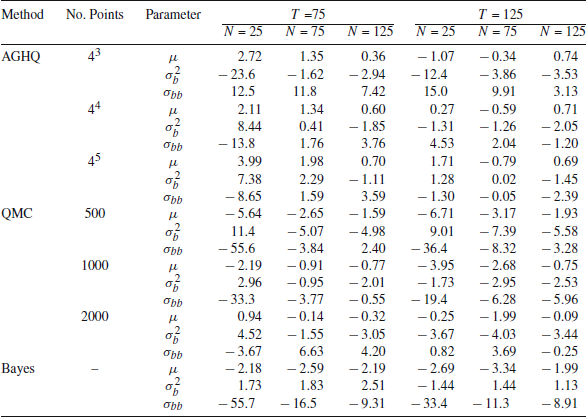

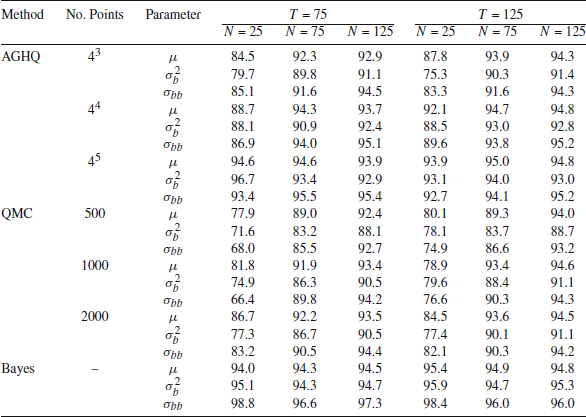

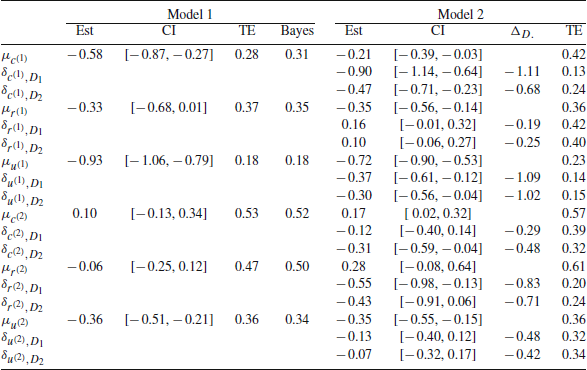

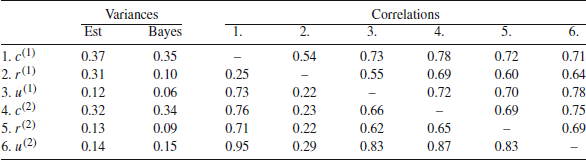
