Highlights

What is already known?

-

• Moderator analyses are a crucial step in a meta-analysis, although they are often underpowered.

-

• In the presence of multiple effect sizes within studies, various methods are available for analyzing moderator variables through meta-regression, including multivariate models, multilevel models, robust variance estimation, and combinations of the first two methods with robust variance estimation.

-

• Some simulation studies have compared the performance of these methods. The results indicate that all of them achieve adequate Type I error rates with a large number of studies and that a posterior robust variance correction addresses model mis-specification.

-

• Despite this, some questions remain: The statistical power of these methods has never been directly compared, nor have Type I error rates or power been evaluated when the moderator variable pertains to study characteristics.

What is new?

-

• This article provides a comprehensive comparison of the statistical properties of methods for conducting meta-regressions when multiple effect sizes are present within studies and binary variables are analyzed, related to either study-level or within-study characteristics.

-

• We focus on a common scenario in this context: studies or effect sizes are often highly imbalanced across the categories of the categorical moderator variable.

-

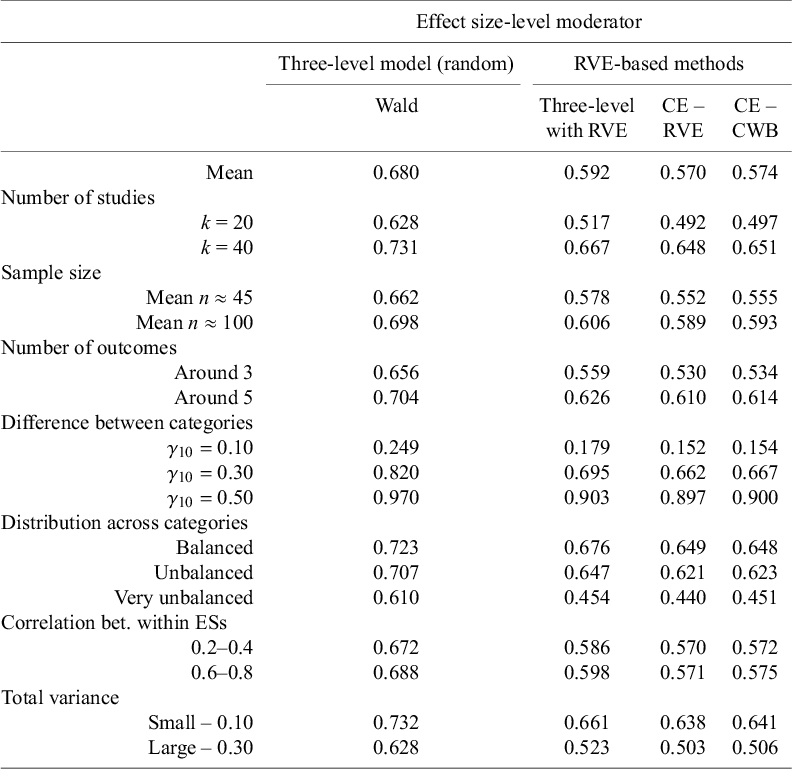

• The results show that the properties of these methods vary depending on whether moderator variables refer to study-level or effect size-level characteristics: Statistical power is negatively affected by the imbalanced distribution of effect sizes, and it is generally higher when the moderator variable pertains to within-study characteristics.

-

• Multilevel models alone can lead to inflated Type I error rates when moderator variables refer to within-study characteristics, but this inflation may be corrected using robust variance estimation.

Potential impact for RSM readers

-

• The results of this study will help applied meta-analysts select the most suitable methods for conducting meta-regression.

In meta-analysis, findings from studies investigating the same research question are aggregated quantitatively to provide a comprehensive overview of the available evidence pertaining to that specific topic. The unit of analysis is typically an effect size, which is a number that summarizes the strength of the association between the variables of interest. Following the compilation of this evidence summary, the next step commonly involves checking whether there is statistical heterogeneity among the effect sizes, and if that is the case, exploring whether the characteristics of the studies have an impact on the observed effect sizes.Reference Thompson 1 For example, in Yan and colleagues’ meta-analysisReference Yan, Lao, Panadero, Fernández-Castilla, Yang and Yang 2 examining the influence of peer assessment on academic outcomes, the researchers explored whether studies that incorporated online technology in their procedures yielded larger effect sizes compared to those that did not. Their findings revealed that, indeed, the overall effect size was greater for the former (g = 0.78) than for the latter (g = 0.44). This procedure of using study characteristics to predict study results is known as moderator analyses and is commonly carried out through the implementation of a mixed-effects model, also known as meta-regression.Reference Thompson and Higgins 3

Moderator analyses play an important role in advancing scientific understanding by uncovering how different study characteristics might affect the results obtained. These analyses are crucial because they direct researchers’ attention to factors that might have been previously overlooked. When significant moderator effects are observed, these previously ignored factors become salient, leading to a more refined picture of the phenomena being studied. This process enriches the overall understanding and interpretation of research findings, ultimately contributing to more robust and comprehensive scientific insights.

Moderator analyses are commonly conducted through statistical regression models known as meta-regressions. In these models, a study characteristic either categorical (e.g., type of design) or quantitative (e.g., mean age of the sample) is used as a predictor variable in a regression where the dependent variables are the effect sizes extracted from primary studies. Given the important role of moderator analyses in scientific advancement, it is crucial to ensure that the estimates derived from meta-regression models are unbiased, effectively control Type I errors, and maintain robust statistical power to detect an effect.

Numerous studies have focused on these aspects of meta-regression analysis in situations where only one effect size could be extracted per study.Reference Berkey, Hoaglin, Mosteller and Colditz 4 – Reference Viechtbauer, López-López, Sánchez-Meca and Marín-Martínez 13 However, studies incorporated into meta-analyses often report multiple results, frequently supplying data that allows the calculation of several effect sizes.Reference López-López, Page, Lipsey and Higgins 14 For example, when different questionnaires are used to measure different aspects of a psychological construct (e.g., to measure anxiety, different facets of the construct can be measured, such as motor, cognitive, or physiological anxiety), measurements are made on different subsamples, or the same sample is measured at different time points. These effect sizes are more closely related to each other because they stem from the same study and therefore share underlying methodological characteristics, or because they are derived from the same individuals, making them statistically dependent. This dependency must be considered in the statistical model of the meta-analysis to avoid a Type I error rate higher than the nominal value.Reference Becker, Tinsley and Brown 15

Three primary methods are used to model the dependence between effect sizes: the multivariate method,Reference Kalaian and Raudenbush 16 , Reference Raudenbush, Becker and Kalaian 17 three-level models,Reference Van den Noortgate, López-López, Marín-Martínez and Sánchez-Meca 18 , Reference Van den Noortgate, López-López, Marín-Martínez and Sánchez-Meca 19 and correlated-effects models using the robust variance estimation (RVE) inference method.Reference Hedges, Tipton and Johnson 20 , Reference Tipton 21 Recently, a combination of three-level models with RVE method has been proposed to ensure nominal Type I errors.Reference Fernandez-Castilla, Aloe and Declercq 22 – Reference Tipton, Pustejovsky and Ahmadi 25 Last, to enhance the power to detect moderator effects, a new inferential technique called cluster wild bootstrapping (CWB) has been proposed within the correlated-effects model with RVE framework.Reference Joshi, Pustejovsky and Beretvas 26 The technical details of these methods will be introduced in the upcoming sections.

All these methods can accommodate moderator variables to explain the variability observed in effect sizes both across studies and within studies. Moderator variables can reflect study characteristics (e.g., the country where the study is conducted) or within-study characteristics (e.g., instruments used to measure multiple dependent variables reported within studies). Throughout this text, we will refer to these two types of characteristics as study-level variables and effect size-level variables, respectively. Although not addressed in the present manuscript, it is important to consider centering these moderator variables (both continuous and categorical) to disentangle level-specific effects.Reference Yaremych, Preacher and Hedeker 27 , Footnote 1

Several studies have investigated or compared the effectiveness of the aforementioned methods in the context of meta-regression, examining both quantitative and categorical study-level and effect size-level characteristics.Reference López-López, Van den Noortgate, Tanner-Smith, Wilson and Lipsey 9 , Reference Tipton 21 , Reference Fernandez-Castilla, Aloe and Declercq 22 , Reference Joshi, Pustejovsky and Beretvas 26 , Reference Park and Beretvas 28 López-López et al.Reference López-López, Van den Noortgate, Tanner-Smith, Wilson and Lipsey 9 compared the power and Type I error of three-level models and correlated-effects models with RVE inference method in meta-regressions with a single quantitative moderator variable that could describe a study or an effect size characteristic. Results showed that the three-level multilevel model had higher power but poorer control of Type I error, while the correlated-effects models with RVE method provided correct Type I error rates but had low statistical power. Unfortunately, this research focused exclusively on quantitative moderator variables, whereas many times moderator variables are categorical, such as the educational level of the sample (primary, secondary, and university) or the type of design (experimental, quasi-experimental, and observational).

Regarding the performance of meta-regressions with categorical variables, TiptonReference Tipton 21 found that applying the RVE inference method to a correlated-effects model with different small-sample adjustments, such as the bias-reduced linearization correction proposed by Bell and McCaffreyReference Bell and McCaffrey 29 combined with the Satterthwaite approximation for estimating degrees of freedom,Reference Satterthwaite 30 provided the best tradeoff between Type I error and statistical power when analyzing unbalanced dichotomous variables. This was particularly true when the number of studies was 40, the between-study heterogeneity was small or moderate, and the effect size difference between categories was large (i.e., 0.40). However, in other simulated conditions, statistical power was very low. A limitation of this study is that the performance of the correlated-effects model with RVE and small-sample adjustments was not compared to other methods, such as three-level models or three-level models with RVE.

This limitation is also present in the simulation study conducted by Joshi et al.,Reference Joshi, Pustejovsky and Beretvas 26 who examined the Type I error and power of the CWB method applied to a correlated-effects model with RVE using various types of variables (i.e., quantitative and categorical, both at study and at effect size level). This method is very promising, as it was found to adequately control Type I error while showing higher statistical power than the correlated-effects model with RVE and small-sample correction, particularly when hypothesis tests involved multiple contrasts, with as few as 10 studies. However, the authors did not compare the performance of CWB with other alternative methods.

Two existing simulation studies have compared the performance of the three-level model and the correlated-effects model with RVE inference in meta-regressions with categorical moderator variables.Reference Fernandez-Castilla, Aloe and Declercq 22 , Reference Park and Beretvas 28 Park and BeretvasReference Park and Beretvas 28 found that the three-level model had convergence issues and underestimated standard errors under unfavorable conditions (i.e., a small number of studies, high heterogeneity, and an uneven distribution of effect sizes across moderator categories). In contrast, the correlated-effects model with RVE showed better performance. In a subsequent study, Fernández-Castilla et al.Reference Fernandez-Castilla, Aloe and Declercq 22 extended this research by incorporating additional simulation conditions, testing alternative three-level model specifications (i.e., a three-level model that allows estimating different between-studies residual variance estimates for each category of the moderator) and evaluating hybrid approaches that combined three-level models with RVE. They concluded that all methods provided adequate estimates when at least 40 studies are available. Additionally, they found that applying three-level models with RVE generally maintained appropriate Type I error rates, even when the three-level model was mis-specified and the number of studies was as low as 20. Lastly, they observed that an imbalance in the number of effect sizes across categorical moderator categories negatively impacted results.

Despite the advancements made by these studies, a critical gap remains. Neither of these studies examined or compared the statistical power and Type I error ratesFootnote 2 of the methods for detecting differences in overall effects across categorical moderator categories. Additionally, both studies focused on moderator variables that represented effect size-level characteristics, but not on study-level characteristics. Addressing these gaps is the primary motivation for the present study.

1 The present study

Categorical variables are of special interest from a methodological perspective because, in meta-analysis, effect sizes are often disproportionately distributed across categories (see example provided in Table 1). This imbalanced distribution is known to affect the properties of methods commonly used in meta-regressions.Reference Tipton 21 , Reference Fernandez-Castilla, Aloe and Declercq 22 Beyond the type of variable used in a meta-regression, when a variable describes an effect size-level characteristic, different three-level model specifications can be applied, and choosing an incorrect specification can significantly underestimate standard errors associated with the moderator effect,Reference Fernandez-Castilla, Aloe and Declercq 22 leading to inflated Type I error rates.

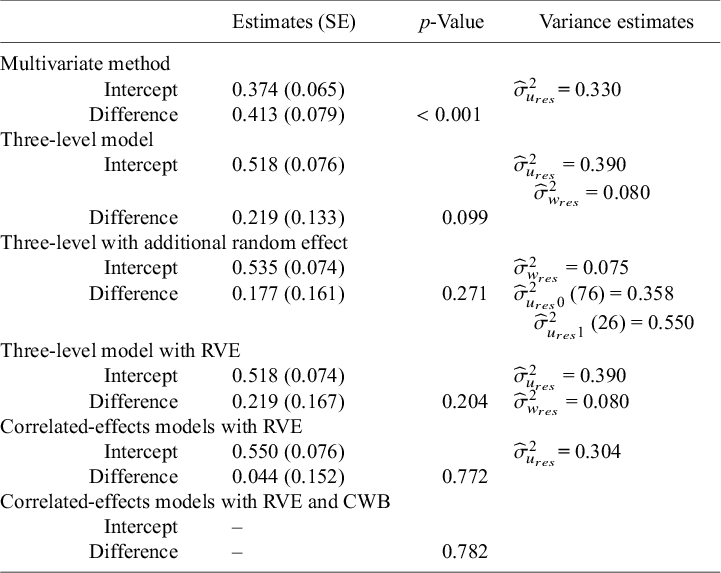

Results from meta-regressions carried out with different methods

Note:

![]() ${\widehat{\sigma}}_{u_{res}}^2$

= residual between-studies variance;

${\widehat{\sigma}}_{u_{res}}^2$

= residual between-studies variance;

![]() ${\widehat{\sigma}}_{w_{res}}^2$

= residual within-study variance; RVE = robust variance estimation; CWB = cluster wild bootstrapping; SE = standard error. To fit the multivariate model, an imputed variance–covariance matrix was used, using a correlation of 0.6.

${\widehat{\sigma}}_{w_{res}}^2$

= residual within-study variance; RVE = robust variance estimation; CWB = cluster wild bootstrapping; SE = standard error. To fit the multivariate model, an imputed variance–covariance matrix was used, using a correlation of 0.6.

To date, no study has conducted an integrative analysis comparing Type I error rates and statistical power across various methods (and their specifications) for meta-regression with binary variables, which can represent study- or effect size-level characteristics, in the presence of dependent effect sizes. This research gap serves as the primary motivation for the present simulation study. The results of this study will help meta-analysts determine which method exhibits better properties under different conditions when analyzing categorical moderator variables. Additionally, it will enable them to make more informed decisions regarding the inclusion (or exclusion) of meta-regression analyses involving binary variables with a high imbalance across categories. Finally, this article aims to clarify the different three-level model specifications that can be implemented when effect size-level moderators are present, along with their respective pros and cons.

2 Methods for meta-regression

In this section, we introduce the meta-regression models that will be tested in the present simulation study: three-level models, correlated-effects model with RVE inference, their combination (three-level models with RVE), and correlated-effects models with RVE and CWB as inferential methods. For clarity, we will explain the different terms in the equations using an applied example. Yan et al.Reference Yan, Lao, Panadero, Fernández-Castilla, Yang and Yang 2 published a meta-analysis on the effect of peer assessment (compared to teacher assessment) on academic outcomes. A relevant (binary) moderator variable was whether students received feedback from their peer assessments (coded as 0 – No and 1 – Yes).

2.1 Three-level models

A traditional random-effects model can be seen as a two-level model, where effect sizes are nested within studies. In the presence of multiple effect sizes, an additional level (or random effect) can be added to account for the dependency among these effects, which are often extracted from the same sample or following identical procedures.

The three-level model employed to estimate an overall effect size as well as the variability within and across studies, along with its advantages and disadvantages, has been thoroughly described in previous manuscripts.Reference Van den Noortgate, López-López, Marín-Martínez and Sánchez-Meca 18 , Reference Van den Noortgate, López-López, Marín-Martínez and Sánchez-Meca 19 , Reference Moeyaert, Ugille, Beretvas, Ferron, Bunuan and Van den Noortgate 31 In general, the primary advantage of this approach is that it does not require knowledge of the covariances among effect sizes, unlike the multivariate approach. Additionally, it treats different outcomes (i.e., various effect sizes within studies) as a random sample from a population of outcomes, rather than a fixed set of outcomes identical from study to study, which might not be realistic in many contexts.

In the following lines, we delineate two types of three-level mixed-effects models applicable for conducting moderator analyses. Initially, we elaborate on the mixed-effects model integrating a binary moderator variable denoting a characteristic of the studies. Subsequently, we discuss a mixed-effects model encompassing a categorical moderator variable reflecting characteristics of the outcomes within studies (e.g., at the effect size level). These models are expounded separately due to the latter’s potential specification in two distinct manners, but in practice, it is possible to incorporate moderator variables at both levels at the same time.

Study-level characteristics. When a meta-analyst aims to test the impact of study characteristics on the observed effect sizes, they can implement the following three-level model. At Level 1, the term

![]() ${t}_{ij}$

refers to the ith effect size observed in study j:

${t}_{ij}$

refers to the ith effect size observed in study j:

Following the example introduced before,

![]() ${t}_{ij}$

would be the standardized mean difference that indicates the difference between the group that received peer assessment and the group that received teacher assessment in academic performance, measured with outcome i (e.g., math exam, science exam, English assignment, etc.) in study j. This observed effect size is not exactly equal to its population effect,

${t}_{ij}$

would be the standardized mean difference that indicates the difference between the group that received peer assessment and the group that received teacher assessment in academic performance, measured with outcome i (e.g., math exam, science exam, English assignment, etc.) in study j. This observed effect size is not exactly equal to its population effect,

![]() ${\beta}_{ij}$

, due to a random deviation,

${\beta}_{ij}$

, due to a random deviation,

![]() ${e}_{ij}$

, that emerges due to the use of different samples across outcomesFootnote

3

and studies. These deviations are assumed to be normally distributed with mean 0 and variance

${e}_{ij}$

, that emerges due to the use of different samples across outcomesFootnote

3

and studies. These deviations are assumed to be normally distributed with mean 0 and variance

![]() ${\sigma}_{e_{ij}}^2$

, typically known as the sampling variance, which is often estimated before conducting the meta-analysis.

${\sigma}_{e_{ij}}^2$

, typically known as the sampling variance, which is often estimated before conducting the meta-analysis.

At Level 2, the population effect

![]() ${\beta}_{ij}$

equals the population mean for study j,

${\beta}_{ij}$

equals the population mean for study j,

![]() ${\theta}_{0j}$

, plus a random deviation,

${\theta}_{0j}$

, plus a random deviation,

![]() ${w}_{ij}$

, whose variance (

${w}_{ij}$

, whose variance (

![]() ${\sigma}_w^2$

) would be interpreted as the within-study variance:

${\sigma}_w^2$

) would be interpreted as the within-study variance:

Following the example, the population effect size reflecting the difference between the peer-assessment group and the teacher-assessment group in outcome i within study j (

![]() ${\beta}_{ij}$

) equals the mean of the population effect of study j,

${\beta}_{ij}$

) equals the mean of the population effect of study j,

![]() ${\theta}_{0j}$

, plus a random deviation

${\theta}_{0j}$

, plus a random deviation

![]() ${w}_{ij}$

that emerges due to the fact that the outcomes used to measure academic performance may differ within studies: maybe authors used both a math and a reading assignment. The variance of

${w}_{ij}$

that emerges due to the fact that the outcomes used to measure academic performance may differ within studies: maybe authors used both a math and a reading assignment. The variance of

![]() ${w}_{ij}$

,

${w}_{ij}$

,

![]() ${\sigma}_w^2$

, reflects the variability in the population effects around the study-specific mean

${\sigma}_w^2$

, reflects the variability in the population effects around the study-specific mean

![]() ${\theta}_{0j},$

that is, the within-study variance.

${\theta}_{0j},$

that is, the within-study variance.

Lastly, at Level 3, the mean population effect for study j,

![]() ${\theta}_{0j}$

, may vary either randomly or in relation to the characteristics of the study:

${\theta}_{0j}$

, may vary either randomly or in relation to the characteristics of the study:

The term

![]() ${Z}_{1j}$

of Equation (3) refers to any characteristic of study j. If this characteristic is categorical such as study design (e.g., experimental or quasi-experimental), or whether the students received feedback from their assessments (e.g., yes or no), the term

${Z}_{1j}$

of Equation (3) refers to any characteristic of study j. If this characteristic is categorical such as study design (e.g., experimental or quasi-experimental), or whether the students received feedback from their assessments (e.g., yes or no), the term

![]() ${\gamma}_{00}$

would refer to the overall effect size for the reference category. For instance, if the “feedback” variable was dummy coded in 1s and 0s,

${\gamma}_{00}$

would refer to the overall effect size for the reference category. For instance, if the “feedback” variable was dummy coded in 1s and 0s,

![]() ${\gamma}_{00}$

would represent the overall standard mean difference for those studies where the students did not receive feedback on their assessments. The term

${\gamma}_{00}$

would represent the overall standard mean difference for those studies where the students did not receive feedback on their assessments. The term

![]() ${\gamma}_{01}$

would represent the difference between the pooled standardized mean differences of both categories, and therefore,

${\gamma}_{01}$

would represent the difference between the pooled standardized mean differences of both categories, and therefore,

![]() ${\gamma}_{00}$

+

${\gamma}_{00}$

+

![]() ${\gamma}_{01}$

would equal the pooled standardized mean difference for those studies where the students did receive feedback of their assessments. Finally, the random effect

${\gamma}_{01}$

would equal the pooled standardized mean difference for those studies where the students did receive feedback of their assessments. Finally, the random effect

![]() ${u}_{0j}$

, assumed to be normally distributed with mean 0 and variance

${u}_{0j}$

, assumed to be normally distributed with mean 0 and variance

![]() ${\sigma}_{u_{res}}^2$

, is the residual between-studies variance after accounting for the effect of

${\sigma}_{u_{res}}^2$

, is the residual between-studies variance after accounting for the effect of

![]() ${Z}_{1j}$

.

${Z}_{1j}$

.

Effect size-level characteristics. Moderator variables can also pertain to characteristics of effect sizes within studies. For example, if some studies include two conditions: one where feedback was not provided to students and another where feedback was provided, different effect sizes could emerge within a study depending on the presence or absence of feedback. Therefore, this variable now describes an effect size-level characteristic. If this categorical moderator was analyzed with a three-level meta-regression model, the first level (Equation (1)) would remain the same. However, at Level 2, the population outcome-specific effect

![]() ${\beta}_{ij}$

would exhibit variability, stemming from both random factors and the attributes of the outcomes:

${\beta}_{ij}$

would exhibit variability, stemming from both random factors and the attributes of the outcomes:

The term

![]() ${X}_{1 ij}$

represents a characteristic of outcome i within study j (e.g., absence or presence of feedback), and the term

${X}_{1 ij}$

represents a characteristic of outcome i within study j (e.g., absence or presence of feedback), and the term

![]() ${\theta}_{1j}$

is the effect of

${\theta}_{1j}$

is the effect of

![]() ${X}_{1 ij}$

on the population outcome effects

${X}_{1 ij}$

on the population outcome effects

![]() ${\beta}_{ij}$

within study j. Importantly, at Level 3, we can make two assumptions regarding the within-study effect

${\beta}_{ij}$

within study j. Importantly, at Level 3, we can make two assumptions regarding the within-study effect

![]() ${\theta}_{1j}$

: either it remains constant across studies (e.g., the presence of feedback systematically improves or worsens the assessment of academic performance to the same extent across studies) or it varies across studies (e.g., the presence of feedback on academic performance differs between studies). In practice, meta-analysts often apply the first model specification, where at the study level (Level 3), the effect

${\theta}_{1j}$

: either it remains constant across studies (e.g., the presence of feedback systematically improves or worsens the assessment of academic performance to the same extent across studies) or it varies across studies (e.g., the presence of feedback on academic performance differs between studies). In practice, meta-analysts often apply the first model specification, where at the study level (Level 3), the effect

![]() ${\theta}_{1j}$

remains constant across studies (

${\theta}_{1j}$

remains constant across studies (

![]() ${\gamma}_{10}$

):

${\gamma}_{10}$

):

The term

![]() ${\gamma}_{00}$

represents the overall effect size in the reference category. Following our example,

${\gamma}_{00}$

represents the overall effect size in the reference category. Following our example,

![]() ${\gamma}_{00}$

would denote the overall standardized mean difference for those outcomes where feedback was absent. Unlike the moderator effect

${\gamma}_{00}$

would denote the overall standardized mean difference for those outcomes where feedback was absent. Unlike the moderator effect

![]() ${\gamma}_{10}$

, the effect

${\gamma}_{10}$

, the effect

![]() ${\gamma}_{00}$

is typically allowed to vary across studies (the random effect

${\gamma}_{00}$

is typically allowed to vary across studies (the random effect

![]() ${u}_{0j}$

is assumed to be normally distributed with mean 0 and variance

${u}_{0j}$

is assumed to be normally distributed with mean 0 and variance

![]() ${\sigma}_{u_0}^2$

, which is the between-studies variance of the overall effect size for the reference category). An important implication of the model is precisely the assumption that the effect

${\sigma}_{u_0}^2$

, which is the between-studies variance of the overall effect size for the reference category). An important implication of the model is precisely the assumption that the effect

![]() ${\gamma}_{10}$

(i.e., the difference between the overall effect across categories) is the same or stable across studies. If

${\gamma}_{10}$

(i.e., the difference between the overall effect across categories) is the same or stable across studies. If

![]() ${\gamma}_{10}$

did vary across studies, that is, if the effect of feedback on academic performance differed between studies, the model in Equation (5) would be mis-specified, and that can have important implications on the parameter estimates.Reference Fernandez-Castilla, Aloe and Declercq

22

Alternatively,

${\gamma}_{10}$

did vary across studies, that is, if the effect of feedback on academic performance differed between studies, the model in Equation (5) would be mis-specified, and that can have important implications on the parameter estimates.Reference Fernandez-Castilla, Aloe and Declercq

22

Alternatively,

![]() ${\gamma}_{10}$

could be allowed to vary across studies:

${\gamma}_{10}$

could be allowed to vary across studies:

Under this model, the moderator effect of variable

![]() ${X}_{1 ij}$

on the outcome effects within study j (

${X}_{1 ij}$

on the outcome effects within study j (

![]() ${\theta}_{1j})$

would equal an overall moderator effect,

${\theta}_{1j})$

would equal an overall moderator effect,

![]() ${\gamma}_{10}$

, plus a random deviation,

${\gamma}_{10}$

, plus a random deviation,

![]() ${u}_{1j}.$

The variance of

${u}_{1j}.$

The variance of

![]() ${u}_{1j}$

is

${u}_{1j}$

is

![]() ${\sigma}_{u_1}^2$

, which would represent the heterogeneity of

${\sigma}_{u_1}^2$

, which would represent the heterogeneity of

![]() ${\gamma}_{10}$

across studies.

${\gamma}_{10}$

across studies.

To assess the statistical significance of the moderator effect (

![]() ${\gamma}_{01}$

or

${\gamma}_{01}$

or

![]() ${\gamma}_{10}$

) which represents the difference between categories, Wald tests are commonly employed, with a significance level of 0.05. Alternatively, likelihood ratio tests (LRTs) can be used to compare the fit of a null model to that of a model incorporating the moderator variable. If the model with the moderator variable shows a significantly better fit (

${\gamma}_{10}$

) which represents the difference between categories, Wald tests are commonly employed, with a significance level of 0.05. Alternatively, likelihood ratio tests (LRTs) can be used to compare the fit of a null model to that of a model incorporating the moderator variable. If the model with the moderator variable shows a significantly better fit (

![]() $\alpha$

= 0.05), it can be concluded that the moderator variable is statistically significant.

$\alpha$

= 0.05), it can be concluded that the moderator variable is statistically significant.

Recently, a method using three-level models in combination with RVE inference method has been proposed. This method consists of applying first a three-level meta-regression, then extracting the variance–covariance matrix from the three-level model results, and then correcting this matrix using a sandwich estimator, including the bias-reduced linearization adjustment of Bell and McCaffrey.Reference Bell and McCaffrey 29 Also, hypothesis tests using Satterthwaite degrees of freedom are conducted. This posterior application of RVE ensures that inferences are correct in terms of Type I error, even if the three-level model is mis-specified.Reference Fernandez-Castilla, Aloe and Declercq 22 , Reference Pustejovsky and Tipton 24

2.2 Correlated-effects model with RVE inference

This method involves the specification of a working model that accounts for the correlation between effect sizes within studies. It builds on the random-effects model but includes a sandwich-type adjustment to the standard errors of the regression coefficients. This adjustment helps to prevent underestimation of standard errors due to statistical dependence among effect sizes.Reference Hedges, Tipton and Johnson 20 A great advantage of this method is that there is no need to estimate covariances between effect sizes, because it relies on a working model that involves making general, approximate assumptions about the dependence structure. Additionally, this method does not make distribution assumptions, as opposed to the three-level model which requires the sampling distribution of the effect sizes to be normal.

The statistical model for meta-regression is the following:

where

![]() $\mathbf{T}$

is a stacked vector containing the i effect sizes within the m studies, and X is a design matrix constructed so that its rows correspond to the total number of effect sizes, while its columns represent the number of covariates examined in the meta-regression analysis (plus the intercept, if desired). These covariates can refer to characteristics of the studies or of the outcomes. Moreover,

$\mathbf{T}$

is a stacked vector containing the i effect sizes within the m studies, and X is a design matrix constructed so that its rows correspond to the total number of effect sizes, while its columns represent the number of covariates examined in the meta-regression analysis (plus the intercept, if desired). These covariates can refer to characteristics of the studies or of the outcomes. Moreover,

![]() $\boldsymbol{\unicode{x3b3}}$

represents a vector containing the regression coefficients (i.e., moderator effects) that need to be estimated, and

$\boldsymbol{\unicode{x3b3}}$

represents a vector containing the regression coefficients (i.e., moderator effects) that need to be estimated, and ![]() is a stacked vector with the residuals of each of the k effect sizes within the m studies. To estimate the vector

is a stacked vector with the residuals of each of the k effect sizes within the m studies. To estimate the vector

![]() $\boldsymbol{\unicode{x3b3}}$

of regression coefficients, weighted least squares estimation method is used:

$\boldsymbol{\unicode{x3b3}}$

of regression coefficients, weighted least squares estimation method is used:

$$\begin{align}\widehat{\boldsymbol{\unicode{x3b3}}}={\left(\sum_{j=1}^m\mathbf{X}^{\prime}_{\boldsymbol{j}}{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{X}}_{\boldsymbol{j}}\right)}^{-\mathbf{1}}\left(\sum_{j=1}^m\mathbf{X}^{\prime}_{\boldsymbol{j}}{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{T}}_{\boldsymbol{j}}\right),\end{align}$$

$$\begin{align}\widehat{\boldsymbol{\unicode{x3b3}}}={\left(\sum_{j=1}^m\mathbf{X}^{\prime}_{\boldsymbol{j}}{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{X}}_{\boldsymbol{j}}\right)}^{-\mathbf{1}}\left(\sum_{j=1}^m\mathbf{X}^{\prime}_{\boldsymbol{j}}{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{T}}_{\boldsymbol{j}}\right),\end{align}$$

where

![]() ${\mathbf{W}}_{\boldsymbol{j}}$

is a diagonal matrix containing the weights assigned to the effects of study j. In the classical application of the RVE inference method, weights (

${\mathbf{W}}_{\boldsymbol{j}}$

is a diagonal matrix containing the weights assigned to the effects of study j. In the classical application of the RVE inference method, weights (

![]() ${\mathbf{W}}_{\boldsymbol{j}}$

) can be approximated by either assuming that effect sizes are correlated because they have been calculated on the same sample (i.e., correlated effects) or assuming that effect sizes covary because they have been obtained under similar conditions: similar research groups, similar instruments and procedures, etc. (i.e., hierarchical effects). Formulas to obtain each of these types of weights can be found elsewhere.Reference Hedges, Tipton and Johnson

20

It should be noted that new approaches have been recently proposed where both types of dependency can be simultaneously modeled.Reference Pustejovsky and Tipton

24

${\mathbf{W}}_{\boldsymbol{j}}$

) can be approximated by either assuming that effect sizes are correlated because they have been calculated on the same sample (i.e., correlated effects) or assuming that effect sizes covary because they have been obtained under similar conditions: similar research groups, similar instruments and procedures, etc. (i.e., hierarchical effects). Formulas to obtain each of these types of weights can be found elsewhere.Reference Hedges, Tipton and Johnson

20

It should be noted that new approaches have been recently proposed where both types of dependency can be simultaneously modeled.Reference Pustejovsky and Tipton

24

After estimating the vector of moderator effects

![]() $\widehat{\boldsymbol{\unicode{x3b3}}}$

, their sampling variances (i.e., standard errors) are given by

$\widehat{\boldsymbol{\unicode{x3b3}}}$

, their sampling variances (i.e., standard errors) are given by

$$\begin{align}\mathbf{V}\left(\widehat{\unicode{x3b3}}\right)={\left(\sum_{j=1}^m{\mathbf{X}}_{\boldsymbol{j}}^{\prime }{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{X}}_{\boldsymbol{j}}\right)}^{-\mathbf{1}}\left(\sum_{j=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{e}}_j{\mathbf{e}}_j^{\prime }{\mathbf{W}}_j{\mathbf{X}}_j\right){\left(\sum_{\boldsymbol{j}=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{X}}_j\right)}^{-\mathbf{1}},\end{align}$$

$$\begin{align}\mathbf{V}\left(\widehat{\unicode{x3b3}}\right)={\left(\sum_{j=1}^m{\mathbf{X}}_{\boldsymbol{j}}^{\prime }{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{X}}_{\boldsymbol{j}}\right)}^{-\mathbf{1}}\left(\sum_{j=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{e}}_j{\mathbf{e}}_j^{\prime }{\mathbf{W}}_j{\mathbf{X}}_j\right){\left(\sum_{\boldsymbol{j}=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{X}}_j\right)}^{-\mathbf{1}},\end{align}$$

where

![]() ${\mathbf{e}}_j$

is the observed vector of residuals of the jth study. In subsequent developments of this approach, TiptonReference Tipton

21

and Pustejovsky and TiptonReference Pustejovsky and Tipton

23

explored ways of further adjusting the estimated variances of the regression coefficients,

${\mathbf{e}}_j$

is the observed vector of residuals of the jth study. In subsequent developments of this approach, TiptonReference Tipton

21

and Pustejovsky and TiptonReference Pustejovsky and Tipton

23

explored ways of further adjusting the estimated variances of the regression coefficients,

![]() $\mathbf{V}\left(\widehat{\unicode{x3b3}}\right),$

to keep the Type I error at the nominal level, especially when the number of studies synthesized is small (i.e., less than 40). In this regard, TiptonReference Tipton

21

found a good performance for a combination of two small-sample corrections, namely the bias-reduced linearization adjustment proposed by Bell and McCaffreyReference Bell and McCaffrey

29

applied on

$\mathbf{V}\left(\widehat{\unicode{x3b3}}\right),$

to keep the Type I error at the nominal level, especially when the number of studies synthesized is small (i.e., less than 40). In this regard, TiptonReference Tipton

21

found a good performance for a combination of two small-sample corrections, namely the bias-reduced linearization adjustment proposed by Bell and McCaffreyReference Bell and McCaffrey

29

applied on

![]() $\mathbf{V}\left(\widehat{\unicode{x3b3}}\right)$

, along with the Satterthwaite correction to the degrees of freedom of the t-distribution used to test the estimated regression coefficient.Reference Satterthwaite

30

Specifically, TiptonReference Tipton

21

proposed adding an adjustment matrix

$\mathbf{V}\left(\widehat{\unicode{x3b3}}\right)$

, along with the Satterthwaite correction to the degrees of freedom of the t-distribution used to test the estimated regression coefficient.Reference Satterthwaite

30

Specifically, TiptonReference Tipton

21

proposed adding an adjustment matrix

![]() ${\mathbf{A}}_j$

next to the cross-product of residuals:

${\mathbf{A}}_j$

next to the cross-product of residuals:

$$\begin{align}\mathbf{V}\left(\widehat{\unicode{x3b3}}\right)={\left(\sum_{j=1}^m{\mathbf{X}}_{\boldsymbol{j}}^{\prime }{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{X}}_{\boldsymbol{j}}\right)}^{-\mathbf{1}}\left(\sum_{j=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{A}}_j{\mathbf{e}}_j{\mathbf{e}}_j^{\prime }{\mathbf{A}}_j{\mathbf{W}}_j{\mathbf{X}}_j\right){\left(\sum_{\boldsymbol{j}=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{X}}_j\right)}^{-\mathbf{1}}.\end{align}$$

$$\begin{align}\mathbf{V}\left(\widehat{\unicode{x3b3}}\right)={\left(\sum_{j=1}^m{\mathbf{X}}_{\boldsymbol{j}}^{\prime }{\mathbf{W}}_{\boldsymbol{j}}{\mathbf{X}}_{\boldsymbol{j}}\right)}^{-\mathbf{1}}\left(\sum_{j=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{A}}_j{\mathbf{e}}_j{\mathbf{e}}_j^{\prime }{\mathbf{A}}_j{\mathbf{W}}_j{\mathbf{X}}_j\right){\left(\sum_{\boldsymbol{j}=1}^m{\mathbf{X}}_j^{\prime }{\mathbf{W}}_j{\mathbf{X}}_j\right)}^{-\mathbf{1}}.\end{align}$$

For a full description of the bias-reduced linearization adjustment (i.e., matrix

![]() ${\mathbf{A}}_j$

) and the Satterthwaite correlation to the degrees of freedom, we refer to the works of Tipton,Reference Tipton

21

Tipton and Pustejovsky,Reference Tipton and Pustejovsky

32

and Pustejovsky and Tipton.Reference Pustejovsky and Tipton

23

It should be noted that the Satterthwaite degrees of freedom are particularly sensitive to the unbalanced distribution of effect sizes across the categories of a categorical variable, especially in study-level covariates. Specifically, TiptonReference Tipton

21

found that when the Satterthwaite degrees of freedom are smaller than 4, the Type I error rate can be inflated, making p-values unreliable.

${\mathbf{A}}_j$

) and the Satterthwaite correlation to the degrees of freedom, we refer to the works of Tipton,Reference Tipton

21

Tipton and Pustejovsky,Reference Tipton and Pustejovsky

32

and Pustejovsky and Tipton.Reference Pustejovsky and Tipton

23

It should be noted that the Satterthwaite degrees of freedom are particularly sensitive to the unbalanced distribution of effect sizes across the categories of a categorical variable, especially in study-level covariates. Specifically, TiptonReference Tipton

21

found that when the Satterthwaite degrees of freedom are smaller than 4, the Type I error rate can be inflated, making p-values unreliable.

Recently, Joshi et al.Reference Joshi, Pustejovsky and Beretvas 26 proposed combining the correlated-effects models with RVE method with bootstrapping techniques to enhance the power for detecting moderator effects while maintaining the Type I error rate at the nominal level. Among the various bootstrapping methods, Joshi et al.Reference Joshi, Pustejovsky and Beretvas 26 recommend using CWB,Reference Cameron, Gelbach and Miller 33 which accounts for the clustered structure of effect sizes within studies. This approach begins by fitting two models to the meta-analytic data: a null model, typically a standard random-effects model excluding moderator variables, and a full model that incorporates a moderator variable. A test statistic is then derived from the full model. Residual vectors from the null model are subsequently obtained, clustered by study. Then, for each bootstrap iteration, a random value (with mean 0 and variance 1) is generated for each study, and the corresponding residual vector is multiplied by this value. These modified residuals are added to the predicted values from the null model to create a new set of bootstrapped outcome scores. The full model is subsequently refitted using these scores, and a new test statistic is computed.

This process yields a p-value, calculated as the proportion of bootstrap test statistics that exceed the test statistic obtained from the original data analysis. This value is then used to determine whether the effect of the moderator variable is statistically different from zero. Technical details of this method, as well as relevant R code for its implementation, can be found in Joshi et al.Reference Joshi, Pustejovsky and Beretvas 26

3 Empirical example

To illustrate the properties of the methods discussed above, we use real meta-analytic data from the previously mentioned study by Yan et al.Reference Yan, Lao, Panadero, Fernández-Castilla, Yang and Yang 2 In this meta-analysis, the authors compared peer assessment with teacher assessment in enhancing academic performance. As previously presented, one potential moderator explored was whether students received feedback following their self-assessments. The results presented in Table 1 are based on a meta-regression in which the binary variable “feedback” (coded as 0 = no feedback; 1 = feedback) was included as a predictor of the standardized mean differences, which represent the relative efficacy of peer assessment compared to teacher assessment. It is important to note that this variable reflects a characteristic at the effect size level; that is, within a single study, some effect sizes were drawn from contexts where students received feedback on their peer assessments, while others came from contexts where no feedback was provided. Additionally, the distribution of effect sizes across the two categories was highly unbalanced: 259 effect sizes were drawn from scenarios where no feedback was provided to students, while only 78 effect sizes fell into the category where feedback was given.

The differences in p-values and estimates across methods are noteworthy. The multivariate approach indicates a statistically significant difference between categories, while the three-level model yields results approaching significance. In contrast, the three-level model with RVE and the version including an additional random effect both produce non-significant results. Moreover, the correlated-effects models with RVE and with RVE and CWB appear more conservative in this example. Overall, this brief illustration highlights how the choice of analytical method can influence meta-regression outcomes, with different approaches potentially leading to different conclusions. The data for this example and the R code used to generate the results in Table 1 are available at https://osf.io/czwtp/overview.

4 Method

4.1 Data generation

The R code to run the simulation studies and supplementary results are available at https://osf.io/czwtp/overview.

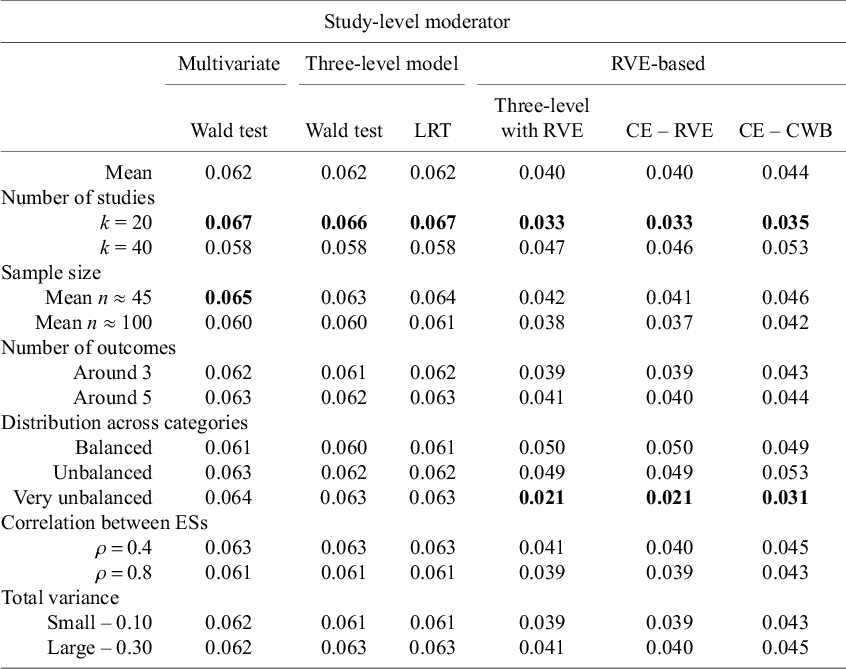

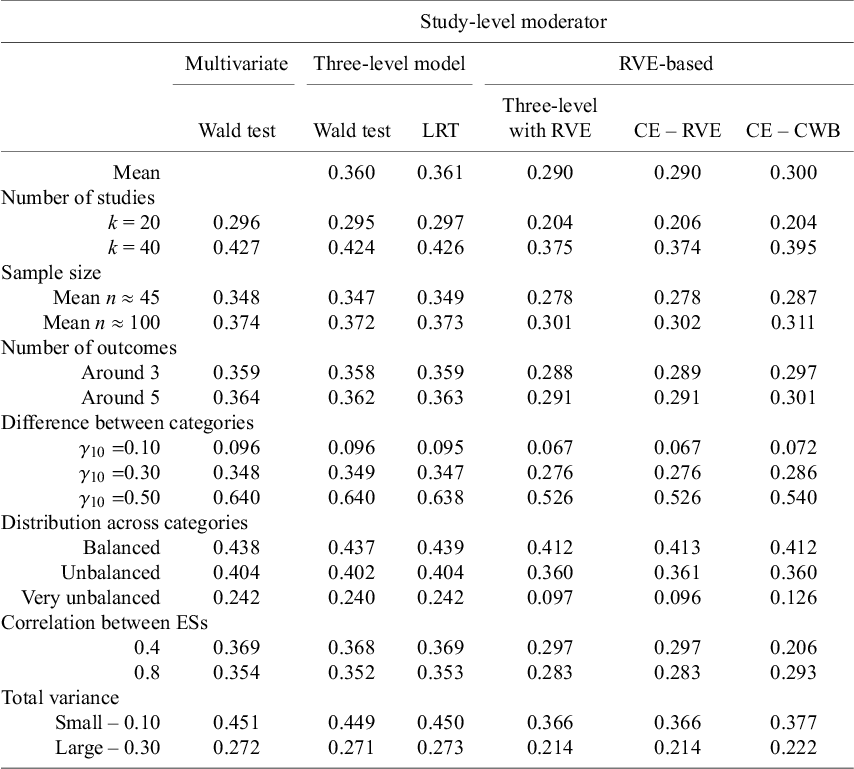

4.1.1 Study-level moderator

Cohen’s d effect sizes were directly generated from a multivariate two-level model:

where

![]() ${d}_{ij}$

refers to the observed effect size i reported in study j, and

${d}_{ij}$

refers to the observed effect size i reported in study j, and

![]() ${\beta}_{0j}$

represents the overall population effect size of study j. The term

${\beta}_{0j}$

represents the overall population effect size of study j. The term

![]() ${e}_{ij}$

represents the random deviation of

${e}_{ij}$

represents the random deviation of

![]() ${d}_{ij}$

from

${d}_{ij}$

from

![]() ${\beta}_{0j}$

due to the use of a sample of participants (e.g., random sampling error). The estimation procedure typically used in this model, namely ML or REML, assumes that the vector of residuals

${\beta}_{0j}$

due to the use of a sample of participants (e.g., random sampling error). The estimation procedure typically used in this model, namely ML or REML, assumes that the vector of residuals

![]() $\mathbf{e}$

within study j follows a multivariate normal distribution with mean 0 and with the following I × I variance–covariance matrix (V), with I being the total number of effect sizes within a study, so that

$\mathbf{e}$

within study j follows a multivariate normal distribution with mean 0 and with the following I × I variance–covariance matrix (V), with I being the total number of effect sizes within a study, so that

$$\begin{align*}\left[\begin{array}{c}{e}_{1j}\\ {}\begin{array}{c}{e}_{2j}\\ {}\begin{array}{c}\vdots \\ {}{e}_{Ij}\end{array}\end{array}\end{array}\right]\sim MVN\left(\left[\begin{array}{c}0\\ {}\begin{array}{c}0\\ {}\begin{array}{c}\vdots \\ {}0\end{array}\end{array}\end{array}\right],\left[\begin{array}{ccc}{\sigma^2}_{e_1}& & \kern0.5em \\ {}{\sigma}_{e_1{e}_2}& {\sigma^2}_{e_2}& \begin{array}{cc}& \end{array}\\ {}\begin{array}{c}\vdots \\ {}{\sigma}_{e_1{e}_I}\end{array}& \begin{array}{c}\vdots \\ {}{\sigma}_{e_2{e}_I}\end{array}& \begin{array}{c}\begin{array}{cc}\ddots & \end{array}\\ {}\begin{array}{cc}\dots & {\sigma^2}_{e_I}\end{array}\end{array}\end{array}\right]\right).\end{align*}$$

$$\begin{align*}\left[\begin{array}{c}{e}_{1j}\\ {}\begin{array}{c}{e}_{2j}\\ {}\begin{array}{c}\vdots \\ {}{e}_{Ij}\end{array}\end{array}\end{array}\right]\sim MVN\left(\left[\begin{array}{c}0\\ {}\begin{array}{c}0\\ {}\begin{array}{c}\vdots \\ {}0\end{array}\end{array}\end{array}\right],\left[\begin{array}{ccc}{\sigma^2}_{e_1}& & \kern0.5em \\ {}{\sigma}_{e_1{e}_2}& {\sigma^2}_{e_2}& \begin{array}{cc}& \end{array}\\ {}\begin{array}{c}\vdots \\ {}{\sigma}_{e_1{e}_I}\end{array}& \begin{array}{c}\vdots \\ {}{\sigma}_{e_2{e}_I}\end{array}& \begin{array}{c}\begin{array}{cc}\ddots & \end{array}\\ {}\begin{array}{cc}\dots & {\sigma^2}_{e_I}\end{array}\end{array}\end{array}\right]\right).\end{align*}$$

In the main diagonal of the variance–covariance matrix, we find the sampling variance of each effect size, which was calculated following the formula provided by Gleser and OlkinReference Gleser, Olkin, Cooper and Hedges 34 :

$$\begin{align}{\sigma}_{e_{ij}}^2=\frac{n_1+{n}_2}{n_1{n}_2}+\frac{\delta_{ij}^2}{2\left({n}_1+{n}_2\right)},\end{align}$$

$$\begin{align}{\sigma}_{e_{ij}}^2=\frac{n_1+{n}_2}{n_1{n}_2}+\frac{\delta_{ij}^2}{2\left({n}_1+{n}_2\right)},\end{align}$$

where

![]() ${n}_1$

and

${n}_1$

and

![]() ${n}_2$

represent the sample sizes of the experimental and control groups, and

${n}_2$

represent the sample sizes of the experimental and control groups, and

![]() ${\delta}_{ij}$

refers to the population effect of effect size i within study j. Moreover, covariances between effect sizes (e.g.,

${\delta}_{ij}$

refers to the population effect of effect size i within study j. Moreover, covariances between effect sizes (e.g.,

![]() ${\sigma}_{e_1{e}_2}$

) are specified outside the main diagonal. For this study, we have assumed that effect sizes reported in the same study referred to different types of outcomes. Therefore, the covariances were computed using the formulas established by Gleser and OlkinReference Gleser, Olkin, Cooper and Hedges

34

for Cohen’s d effect sizes for this scenario:

${\sigma}_{e_1{e}_2}$

) are specified outside the main diagonal. For this study, we have assumed that effect sizes reported in the same study referred to different types of outcomes. Therefore, the covariances were computed using the formulas established by Gleser and OlkinReference Gleser, Olkin, Cooper and Hedges

34

for Cohen’s d effect sizes for this scenario:

$$\begin{align}{\sigma}_{e_{ij}{e}_{ij\prime }}=\frac{n_1+{n}_2}{n_1{n}_2}{\rho}_{ij ij\prime }+\frac{\delta_{ij}{\delta}_{ij\prime }{\rho}_{ij\; ij\prime}^2}{2\;\left({n}_1+{n}_2\right)}\end{align}$$

$$\begin{align}{\sigma}_{e_{ij}{e}_{ij\prime }}=\frac{n_1+{n}_2}{n_1{n}_2}{\rho}_{ij ij\prime }+\frac{\delta_{ij}{\delta}_{ij\prime }{\rho}_{ij\; ij\prime}^2}{2\;\left({n}_1+{n}_2\right)}\end{align}$$

where

![]() ${\delta}_{ij}$

and

${\delta}_{ij}$

and

![]() ${\delta}_{ij\prime }$

refer to the population effects of two different effect sizes reported within study j, and

${\delta}_{ij\prime }$

refer to the population effects of two different effect sizes reported within study j, and

![]() ${\rho}_{ij\; ij\prime }$

refers to the correlation between outcome variables.

${\rho}_{ij\; ij\prime }$

refers to the correlation between outcome variables.

At Level 2, the study-specific population effects

![]() ${\beta}_{0j}$

is a function of a study-dummy (moderator) variable

${\beta}_{0j}$

is a function of a study-dummy (moderator) variable

![]() ${X}_{1j}$

:

${X}_{1j}$

:

where

![]() ${\gamma}_{00}$

represents the overall effect size for category 0 of the moderator variable

${\gamma}_{00}$

represents the overall effect size for category 0 of the moderator variable

![]() ${X}_{1j}$

,

${X}_{1j}$

,

![]() ${\gamma}_{01}$

is the difference between the overall effects from both categories, and

${\gamma}_{01}$

is the difference between the overall effects from both categories, and

![]() ${\gamma}_{00}+{\gamma}_{01}$

is the overall effect size for category

${\gamma}_{00}+{\gamma}_{01}$

is the overall effect size for category

![]() ${X}_{1j}$

= 1. The study-specific random effects,

${X}_{1j}$

= 1. The study-specific random effects,

![]() ${u}_{0j}$

, represent the random deviation of

${u}_{0j}$

, represent the random deviation of

![]() ${\beta}_{0j}$

from the overall effect size

${\beta}_{0j}$

from the overall effect size

![]() ${\gamma}_{00}$

due to between-studies variation. The random effect

${\gamma}_{00}$

due to between-studies variation. The random effect

![]() ${u}_{0j}$

is normally distributed with mean zero and variance

${u}_{0j}$

is normally distributed with mean zero and variance

![]() ${\sigma}_{u_0}^2$

, which stands for the (residual) between-studies variance around the effect of

${\sigma}_{u_0}^2$

, which stands for the (residual) between-studies variance around the effect of

![]() ${\gamma}_{00}$

once the effect of

${\gamma}_{00}$

once the effect of

![]() ${X}_{1j}$

has been taken into account. Note that in this model, it is assumed that outcomes of the same type share a common population effect size.

${X}_{1j}$

has been taken into account. Note that in this model, it is assumed that outcomes of the same type share a common population effect size.

Cohen’s d was not corrected to Hedges’ g, as Lin and AloeReference Lin and Aloe

35

found that using Cohen’s d as an estimator of population effect delta generally leads to less bias in the meta-analytic results than Hedges’ g. Also, following the recommendations of Lin and Aloe,Reference Lin and Aloe

35

the formula to calculate the sampling variance of Cohen’s d was the one in Equation (3) but replacing the population term

![]() ${\delta}_{ij}^2$

with

${\delta}_{ij}^2$

with

![]() ${d}_{ij}^2$

. Finally, it should be noted that by generating Cohen’s d directly, the results of this simulation can be generalized to effect sizes that are approximately normally distributed (e.g., Fisher’s Z or log-transformed odds ratio).

${d}_{ij}^2$

. Finally, it should be noted that by generating Cohen’s d directly, the results of this simulation can be generalized to effect sizes that are approximately normally distributed (e.g., Fisher’s Z or log-transformed odds ratio).

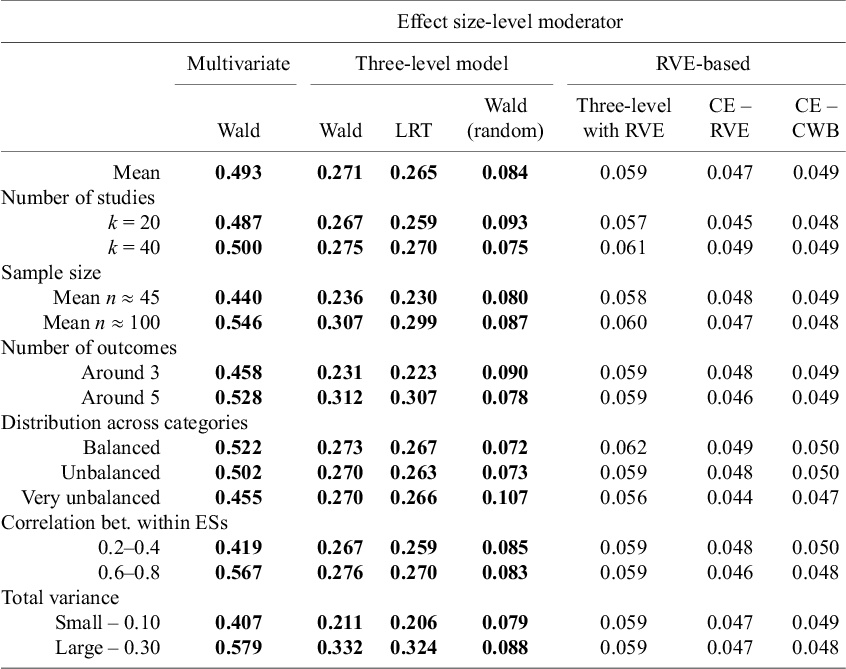

4.1.2 Effect size-level moderator

Cohen’s d effect sizes were directly generated from a multivariate two-level model:

where

![]() ${X}_{0 ij}$

and

${X}_{0 ij}$

and

![]() ${X}_{1 ij}$

are dummy-coded variables indicating the category of the moderator variable to which the effect size pertains (coded as 1), and

${X}_{1 ij}$

are dummy-coded variables indicating the category of the moderator variable to which the effect size pertains (coded as 1), and

![]() ${e}_{ij}$

is a vector of residuals with I × I variance–covariance matrix. At the second level:

${e}_{ij}$

is a vector of residuals with I × I variance–covariance matrix. At the second level:

$$\begin{align}\begin{cases}\beta_{0j} = \gamma_{00} + u_{0j} \\\beta_{1j} = \gamma_{10} + u_{1j},\end{cases}\end{align}$$

$$\begin{align}\begin{cases}\beta_{0j} = \gamma_{00} + u_{0j} \\\beta_{1j} = \gamma_{10} + u_{1j},\end{cases}\end{align}$$

where

![]() ${\gamma}_{00}$

and

${\gamma}_{00}$

and

![]() ${\gamma}_{10}$

represent, respectively, the overall effect for category 0 and the overall effect for category 1. The study-specific random effects,

${\gamma}_{10}$

represent, respectively, the overall effect for category 0 and the overall effect for category 1. The study-specific random effects,

![]() ${u}_{0j}$

and

${u}_{0j}$

and

![]() ${u}_{1j}$

, are assumed to follow a bivariate normal distribution:

${u}_{1j}$

, are assumed to follow a bivariate normal distribution:

$$\begin{align*}\left[\begin{array}{c}{u}_{0j}\\ {}{u}_{1j}\end{array}\right]\sim MVN\;\left(\left[\begin{array}{c}0\\ {}0\end{array}\right],\left[\begin{array}{cc}{\sigma}_{u_0}^2& \\ {}{\sigma}_{u_0{u}_1}& {\sigma}_{u_1}^2\end{array}\right]\right)\nonumber,\end{align*}$$

$$\begin{align*}\left[\begin{array}{c}{u}_{0j}\\ {}{u}_{1j}\end{array}\right]\sim MVN\;\left(\left[\begin{array}{c}0\\ {}0\end{array}\right],\left[\begin{array}{cc}{\sigma}_{u_0}^2& \\ {}{\sigma}_{u_0{u}_1}& {\sigma}_{u_1}^2\end{array}\right]\right)\nonumber,\end{align*}$$

where the variances

![]() ${\sigma}_{u_0}^2$

and

${\sigma}_{u_0}^2$

and

![]() ${\sigma}_{u_1}^2$

stand for the between-studies variances of the population effect sizes

${\sigma}_{u_1}^2$

stand for the between-studies variances of the population effect sizes

![]() ${\gamma}_{00}$

and of the differences between effects,

${\gamma}_{00}$

and of the differences between effects,

![]() ${\gamma}_{10}$

, respectively. These between-studies variances were always equal, meaning that the assumption of homoscedasticity was satisfied. Additionally, it is important to note that this model operates under the assumption that differences between categories may vary across studies. This implies that while one study may show no differences between categories, another may indeed reveal variations. We adopt this approach because there is no compelling reason to assume that overall effects within categories of a moderator remain consistent across studies. Similar to how a random-effects model allows the overall effect to randomly vary across studies, the effects within a moderator category can also exhibit variability across studies. In cases where such variation occurs, we must acknowledge that the differences between effects, denoted as

${\gamma}_{10}$

, respectively. These between-studies variances were always equal, meaning that the assumption of homoscedasticity was satisfied. Additionally, it is important to note that this model operates under the assumption that differences between categories may vary across studies. This implies that while one study may show no differences between categories, another may indeed reveal variations. We adopt this approach because there is no compelling reason to assume that overall effects within categories of a moderator remain consistent across studies. Similar to how a random-effects model allows the overall effect to randomly vary across studies, the effects within a moderator category can also exhibit variability across studies. In cases where such variation occurs, we must acknowledge that the differences between effects, denoted as

![]() ${\gamma}_{10}$

, can also vary across studies.

${\gamma}_{10}$

, can also vary across studies.

The correlation selected to calculate the covariances for between-study residuals (

![]() ${\sigma}_{u_0{u}_1}$

) was set to 0.4, which is an intermediate value.Reference Fernandez-Castilla, Aloe and Declercq

22

,

Reference Park and Beretvas

28

This correlation was not varied in the present simulation because these two previous studies have found no effect of this factor on simulation results.

${\sigma}_{u_0{u}_1}$

) was set to 0.4, which is an intermediate value.Reference Fernandez-Castilla, Aloe and Declercq

22

,

Reference Park and Beretvas

28

This correlation was not varied in the present simulation because these two previous studies have found no effect of this factor on simulation results.

The difference in overall effects across categories is represented by

![]() ${\gamma}_{01}$

when the moderator effect is at the study level, and by

${\gamma}_{01}$

when the moderator effect is at the study level, and by

![]() $\left({\gamma}_{10}-{\gamma}_{00}\right)$

when it operates at the effect size level. To avoid confusion, for the remainder of the article, we will refer to the overall effect of the first category as

$\left({\gamma}_{10}-{\gamma}_{00}\right)$

when it operates at the effect size level. To avoid confusion, for the remainder of the article, we will refer to the overall effect of the first category as

![]() ${\gamma}_0$

, and to the moderator effect of the second category as

${\gamma}_0$

, and to the moderator effect of the second category as

![]() ${\gamma}_1$

, regardless of whether it pertains to a study-level or effect size-level characteristic.

${\gamma}_1$

, regardless of whether it pertains to a study-level or effect size-level characteristic.

4.2 Simulation factors

4.2.1 Number of studies (k)

In the review of multilevel meta-analyses carried out by Fernández-Castilla et al.,Reference Fernández-Castilla, Jamshidi and Declercq 36 the Q1, Q2, and Q3 number of studies in multilevel meta-analysis of different fields were around 18, 35, and 53. Previous simulation studies have found that 40 studies are normally enough to get unbiased estimates.Reference López-López, Van den Noortgate, Tanner-Smith, Wilson and Lipsey 9 , Reference Fernandez-Castilla, Aloe and Declercq 22 Therefore, also following the procedure of TiptonReference Tipton 21 and López-López et al.,Reference López-López, Van den Noortgate, Tanner-Smith, Wilson and Lipsey 9 we generated meta-analyses of 20 and 40 studies, corresponding to (approximately) Q1 and Q2.

4.2.2 Number of effect sizes within studies (m)

According to Fernández-Castilla et al.Reference Fernández-Castilla, Jamshidi and Declercq

36

primary studies reporting multiple results rarely include the same number of outcomes, and, on average, they include around 3 effect sizes. Tipton et al.Reference Tipton, Pustejovsky and Ahmadi

25

found that the average number of effect sizes per study was around 4.5 in meta-analysis in the psychology and education areas. These unbalanced distributions of effect sizes across studies were taken into account in the simulation by generating different number of effect sizes across studies. First, the average number of effect sizes per study was set to be 3 or 5 (

![]() $\overline{m}$

= {3, 5}). Then, a number was extracted from a Poisson distribution as

$\overline{m}$

= {3, 5}). Then, a number was extracted from a Poisson distribution as

This approach is similar to the one Joshi et al.Reference Joshi, Pustejovsky and Beretvas

26

used to generate their data. When

![]() $\overline{m}=3$

, the number of effect sizes per study ranged between 1 and 6, and when

$\overline{m}=3$

, the number of effect sizes per study ranged between 1 and 6, and when

![]() $\overline{m}=5$

, the number of effect sizes per study ranged between 1 and 9.

$\overline{m}=5$

, the number of effect sizes per study ranged between 1 and 9.

4.2.3

Correlation between effect sizes (

$\boldsymbol{\rho}$

)

$\boldsymbol{\rho}$

)

Effect sizes from the same study could be moderately (

![]() $\rho =0$

.4) or largely correlated (

$\rho =0$

.4) or largely correlated (

![]() $\rho =0$

.8). These values are consistent with those selected in previous simulation studies. For instance, López-López et al.Reference López-López, Van den Noortgate, Tanner-Smith, Wilson and Lipsey

9

chose an average correlation of 0.3 (moderate) or 0.7 (large), whereas Joshi et al.Reference Joshi, Pustejovsky and Beretvas

26

and TiptonReference Tipton

21

chose correlations of 0.5 and 0.8 for moderate and large correlations, respectively. Following the procedure of Joshi et al.,Reference Joshi, Pustejovsky and Beretvas

26

correlations were varied across studies by extracting a correlation value from a beta distribution as follows:

$\rho =0$

.8). These values are consistent with those selected in previous simulation studies. For instance, López-López et al.Reference López-López, Van den Noortgate, Tanner-Smith, Wilson and Lipsey

9

chose an average correlation of 0.3 (moderate) or 0.7 (large), whereas Joshi et al.Reference Joshi, Pustejovsky and Beretvas

26

and TiptonReference Tipton

21

chose correlations of 0.5 and 0.8 for moderate and large correlations, respectively. Following the procedure of Joshi et al.,Reference Joshi, Pustejovsky and Beretvas

26

correlations were varied across studies by extracting a correlation value from a beta distribution as follows:

where

![]() $\upsilon$

was always set to 50 to generate moderate heterogeneity across correlations.

$\upsilon$

was always set to 50 to generate moderate heterogeneity across correlations.

4.2.4 Sample size (n)

In the review of multilevel meta-analyses carried out by Fernández-Castilla et al.,Reference Fernández-Castilla, Jamshidi and Declercq 36 the median sample size (n) in primary studies was around 100, with the first quartile being around 35.Footnote 4 As sample sizes tend to vary significantly among primary studies, we generated random numbers from a lognormal distribution, either LnN(3.91, 0.7) or LnN(4.61, 0.7), reproducing the sample sizes observed in the review. When the mean of the lognormal distribution was 3.91, the average total sample size was 45, and this value ranged from approximately 15 to 200. When the mean of the lognormal distribution was 4.61, the average total sample size was 100, and this value approximately ranged from 52 to 500.

4.2.5 Type of moderator variable

The binary moderator variable could explain characteristics of the studies (study-level moderator) or characteristics of the effect sizes within study (effect size-level moderator).

4.2.6 Overall effect of each category

The overall effect for the reference category (

![]() ${\gamma}_0$

) was always 0, and the difference between categories,

${\gamma}_0$

) was always 0, and the difference between categories,

![]() ${\gamma}_1$

, could be 0 as well (this condition serves to study Type I error), or 0.10, 0.30, and 0.50 (these conditions serve to study power). These values are similar to those selected in previous simulations; for instance, Rubio-Aparicio et al.Reference Rubio-Aparicio, López-López, Viechtbauer, Marín-Martínez, Botella and Sánchez-Meca

10

generated differences of 0.2, 0.4, and 0.6, whereas Joshi et al.Reference Joshi, Pustejovsky and Beretvas

26

generated differences of 0.1, 0.3, and 0.5. Also, these values represent a realistic range of Cohen’s d indices typically observed in meta-analyses from different fields.Reference Fernández-Castilla, Jamshidi and Declercq

36

Additionally, as described by Cafri et al.Reference Cafri, Kromrey and Brannick

5

according to a review of 81 meta-analysis, the median difference between overall effects of the two categories of a moderator is 0.16 (r = 0.08).

${\gamma}_1$

, could be 0 as well (this condition serves to study Type I error), or 0.10, 0.30, and 0.50 (these conditions serve to study power). These values are similar to those selected in previous simulations; for instance, Rubio-Aparicio et al.Reference Rubio-Aparicio, López-López, Viechtbauer, Marín-Martínez, Botella and Sánchez-Meca

10

generated differences of 0.2, 0.4, and 0.6, whereas Joshi et al.Reference Joshi, Pustejovsky and Beretvas

26

generated differences of 0.1, 0.3, and 0.5. Also, these values represent a realistic range of Cohen’s d indices typically observed in meta-analyses from different fields.Reference Fernández-Castilla, Jamshidi and Declercq

36

Additionally, as described by Cafri et al.Reference Cafri, Kromrey and Brannick

5

according to a review of 81 meta-analysis, the median difference between overall effects of the two categories of a moderator is 0.16 (r = 0.08).

4.2.7 Distribution of effects across the categories of the dummy moderator variable

A key focus of this study was to investigate how an uneven distribution of effect sizes across the categories of the moderator variable impacts parameter estimates, the Type I error rate, and statistical power. Three scenarios were examined: balanced (50%–50%), unbalanced (30%–70%), and very unbalanced (10%–90%) distributions of effect sizes across categories of the binary moderator variable. When the moderator variable was at the study level, entire studies were assigned to one category or the other based on the specified percentages. In contrast, when the moderator was at the effect size level, individual effect sizes within studies were randomly assigned to each category according to the same proportions.

4.2.8

(Residual) between-studies variance (

${\widehat{\boldsymbol{\sigma}}}_{{\boldsymbol{u}}_{\boldsymbol{res}}}^{\mathbf{2}}$

)

${\widehat{\boldsymbol{\sigma}}}_{{\boldsymbol{u}}_{\boldsymbol{res}}}^{\mathbf{2}}$

)

To simplify the analysis, we selected two values for between-studies variance representing moderate and high variability: 0.10 for moderate and 0.30 for high variability. These values align with those reported by Fernández-Castilla et al.Reference Fernández-Castilla, Jamshidi and Declercq

36

in multilevel meta-analyses across various fields and match conditions used in previous simulations.Reference López-López, Van den Noortgate, Tanner-Smith, Wilson and Lipsey

9

,

Reference Joshi, Pustejovsky and Beretvas

26

When the moderator variable was at the effect size level, the overall effects for each category could vary across studies (

![]() ${\sigma}_{u_{0j}}^2$

and

${\sigma}_{u_{0j}}^2$

and

![]() ${\sigma}_{u_{1j}}^2$

), with both variances set to 0.05 or 0.15 under an assumption of homoskedasticity. The correlation between random study effects was fixed at 0.4, based on prior simulationsReference Fernandez-Castilla, Aloe and Declercq

22

,

Reference Park and Beretvas

28

showing no significant impact of this correlation. We chose this intermediate value as representative of earlier studies. Please note that in the scenario where the moderator variable referred to an effect size-level characteristic, the total variance became slightly smaller, 0.24 and 0.08, respectively, due to the 0.4 correlation between study effects.

${\sigma}_{u_{1j}}^2$

), with both variances set to 0.05 or 0.15 under an assumption of homoskedasticity. The correlation between random study effects was fixed at 0.4, based on prior simulationsReference Fernandez-Castilla, Aloe and Declercq

22

,

Reference Park and Beretvas

28

showing no significant impact of this correlation. We chose this intermediate value as representative of earlier studies. Please note that in the scenario where the moderator variable referred to an effect size-level characteristic, the total variance became slightly smaller, 0.24 and 0.08, respectively, due to the 0.4 correlation between study effects.

All these settings resulted in a total of 768 scenarios, with 1,000 replications conducted for each of them.

4.3 Data analysis

In this section, we outline the statistical techniques that were applied to run the meta-regression models.

4.3.1 Multivariate method

Although this method is not central to the present study, primarily due to the frequent unavailability of the information needed to calculate the covariance among effect sizes within studies, we still find it valuable to explore its performance, as this approach has been increasingly implemented following the approximation of a variance–covariance matrix.Reference Assink and Wibbelink

37

When the moderator variable referred to study-level characteristics, the multivariate model implemented was the one specified in Equations (11) and (14), consistent with the data generation process. In this case, the variance–covariance matrix of the observed effect sizes was approximated using the same correlation value specified during data generation (either 0.4 or 0.8, depending on the simulation condition), with the data function impute_covariance_matrix() from the clubSandwich package.Reference Pustejovsky

38

When the moderator variable referred to effect size-level characteristics, we implemented a multivariate model similar to that specified in Equations (15) and (16), with a simplification applied to Equation 16. Specifically, we removed the term

![]() ${u}_{1j}$

, implying that the difference between moderator categories was assumed to be constant across studies. In this scenario, the approximate variance–covariance matrix was constructed using the average correlation between effect sizes within studies, which varied depending on whether the effect sizes belonged to the same moderator category. This modified multivariate model was used because, in practice, it is uncommon to specify a model that includes a random effect for the difference between moderator categories. It is also important to note that Assink and WibbelinkReference Assink and Wibbelink

37

proposed a more complex multivariate model with an additional (third) level. The significance of the moderator effect (

${u}_{1j}$

, implying that the difference between moderator categories was assumed to be constant across studies. In this scenario, the approximate variance–covariance matrix was constructed using the average correlation between effect sizes within studies, which varied depending on whether the effect sizes belonged to the same moderator category. This modified multivariate model was used because, in practice, it is uncommon to specify a model that includes a random effect for the difference between moderator categories. It is also important to note that Assink and WibbelinkReference Assink and Wibbelink

37

proposed a more complex multivariate model with an additional (third) level. The significance of the moderator effect (

![]() ${\unicode{x3b3}}_1$

) was evaluated using a Wald test.

${\unicode{x3b3}}_1$

) was evaluated using a Wald test.

4.3.2 Three-level models

When the moderator variable reflected study-level characteristics, we fitted the three-level model specified in Equations (1)–(3). For moderator variables that represented effect size-level characteristics, two versions of the three-level models were applied. The first corresponds to the model defined in Equations (1), (4), and (5), where the moderator effect is assumed to be constant across studies (a specification commonly used in published meta-analyses). The second model, defined in Equations (1), (4), and (6), allows the moderator effect to vary across studies, which more accurately reflects the data generation process.

In all these models, the statistical significance of the moderator effect

![]() ${\unicode{x3b3}}_1$

was evaluated using Wald tests (based on Z statistics) with a nominal level of 0.05. LRTs were only used for the traditional three-level model (using Equations (1)–(3) for study-level moderator, and Equations (1), (4), and (5) for effect size-level moderator) by comparing the deviance of the null model with that of the model including the moderator.

${\unicode{x3b3}}_1$

was evaluated using Wald tests (based on Z statistics) with a nominal level of 0.05. LRTs were only used for the traditional three-level model (using Equations (1)–(3) for study-level moderator, and Equations (1), (4), and (5) for effect size-level moderator) by comparing the deviance of the null model with that of the model including the moderator.

However, for the extended three-level model including an additional study-level random effect for the moderator, the significance of the moderator effect was assessed using only Wald tests. This is because comparing the null model to a model that includes both the moderator and its study-level random effect involves estimating three additional parameters: the fixed moderator effect, its variance, and the covariance between study-level random effects. As a result, a significant LRT result could reflect the influence of any of these components, making it difficult to isolate the specific contribution of the moderator effect. The metafor packageReference Viechtbauer 39 was used for these analyses.