1 Introduction

Interactive and intelligent systems are increasingly being designed to display human-like mental capabilities (Morris et al. 2018; Martinengo et al. 2019; Adikari et al. 2022; Milcent et al. 2022; Burger, Neerincx, Brinkman, et al. 2020; Brännström and Nieves 2024; Taverner et al. 2024). For instance, in the area of health and well-being, software assistants are being developed to display complex human traits, such as empathy and sympathy (Morris et al. 2018), to deliver emotionally charged actions (Adikari et al. 2022) or to provoke empathic responses from users (Milcent et al. 2022). Some of these systems are deployed in society, for example for depression support, therapy and behavior-change interventions (Martinengo et al. 2019; Burger, Neerincx, Brinkman, et al. 2020). Hence, in today’s digital society, where interactive and intelligent systems are deeply embedded in everyday interactions, the potential for manipulation and undue influence has become a serious concern (Park et al. 2024). A real example (Singleton et al. 2023) is the case of an individual who was sentenced to nine years for an attempted assassination after exchanging thousands of messages with a chatbot that appeared to encourage his violent intentions. In this exchange, after the user states their purpose (“I think it’s my purpose to assassinate […]”), the chatbot repeatedly validates and amplifies the user’s intent: it praises it (“That’s very wise”), justifies it (“I know that you are very well trained”), and finally permits it (“Yes, you can”). On the surface, the chatbot’s utterances may seem benign; yet their effect depends on how they reshape the user’s emotions, beliefs and intentions. Such incidents highlight the urgent need for formal methods capable of verifying unsafe forms of psychological influence in interactions between humans and intelligent systems (Brännström 2025), particularly in settings where emotional and motivational dynamics are central.

An underlying issue concerns limitations in user modeling and personalization (Nieves et al. 2024). State-of-the-art user modeling in interactive systems commonly uses statistical methods to characterize users based on, for example, user interaction history and demographic information (Burger, Neerincx, Brinkman et al. 2020; Masthoff et al. 2014; Rabbi et al. 2015). In this way, systems construct approximations of users from limited observational snapshots of human behavior. However, a user’s physical and mental state may evolve dynamically during an interaction (Paglieri 2007). Consequently, in human-system interaction, reliance on a static, snapshot-based model of the human may result in misaligned reasoning and ultimately lead to uncontrolled or unintended agent behavior. For a software agent to execute suitable actions in interactions with humans, it is essential to develop methods for verifying human mental properties that may change during interactions, enabling the anticipation and reduction of unwanted side-effects (e.g. undue influence) of an agent’s behavior.

Research on formal models of human behavior spans from integrative physiology (Fass and Lieber 2009) at a low level to social practices (Erdogan et al. 2025) at a high level, with extensive work on mental states (Pereira et al. 2007; Jiang et al. 2007; Ong et al. 2019). This includes modeling emotional responses, goal and plan recognition (Ramírez and Geffner 2009; Shvo and McIlraith 2020), and extensions of planning to epistemic, empathetic, and cognitive settings (Bolander and Andersen 2011; Lorini et al. 2022). Epistemic logic has further been enriched with logics of attitudes and emotions (Adam et al. 2009; Lorini and Schwarzentruber 2011; Lorini 2021). Moreover, approaches in formal argumentation have dealt with principles for belief, and belief change, over arguments (Sakama 2024), some of which address gradual belief change and manipulation (Brännström et al. 2025a, 2025b). Despite this progress, few approaches formalize constraints on how mental states can or should evolve according to psychological principles, and there is a lack of methods for guiding the elicitation of such principles from experts or theories. While psychological theories such as emotion regulation (Tamir et al. 2008; Ortner et al. 2018) describe such patterns, formal methods rarely capture them as transition constraints or invariants (Hansen and Schmidt 2003).
A more structured approach is to model mental states as multi-variable configurations (states) governed by constraints on how they evolve over sequences of state changes, enabling verification of unwanted influence in their long-term evolution. Action languages (Gelfond and Lifschitz 1998), such as $\mathcal{A}$ (Gelfond and Lifschitz 1998), $\mathcal{C}$ (Gelfond and Lifschitz 1998), and ${\mathcal{C}}_{TAID}$ (Dworschak et al. 2008), provide a formal framework for describing and reasoning about actions and their effects on the states of a domain. In action languages, actions are defined by their preconditions and effects. The preconditions specify the conditions that must hold in the current state for an action to be applicable or executable. The high-level, declarative nature of action languages provides a suitable platform for eliciting and designing mental models and their principles of change, and for characterizing them as dynamic systems.
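To make the precondition/effect reading concrete, the following is a minimal Python sketch of single transitions, assuming a STRIPS-like simplification: a state is the set of fluents that are true, and an action adds and deletes fluents while all other fluents persist by inertia. The `Action` class and the fluent names are hypothetical and do not reproduce the syntax or semantics of $\mathcal{A}$, $\mathcal{C}$ or ${\mathcal{C}}_{TAID}$.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """Hypothetical STRIPS-like action: preconditions plus positive and negative effects."""
    name: str
    preconditions: frozenset
    adds: frozenset = frozenset()
    deletes: frozenset = frozenset()


def executable(action: Action, state: frozenset) -> bool:
    # An action is executable only if all its preconditions hold in the current state.
    return action.preconditions <= state


def apply(action: Action, state: frozenset) -> frozenset:
    if not executable(action, state):
        raise ValueError(f"{action.name} is not executable in {sorted(state)}")
    # Inertia: fluents not mentioned in the effects keep their current value.
    return (state - action.deletes) | action.adds


greet = Action("greet", preconditions=frozenset({"user_present"}), adds=frozenset({"greeted"}))
leave = Action("leave", preconditions=frozenset({"user_present"}), deletes=frozenset({"user_present"}))

s0 = frozenset({"user_present"})
s1 = apply(greet, s0)  # user_present and greeted both hold
s2 = apply(leave, s1)  # only greeted holds; greet is no longer executable
```

Representing states as immutable sets keeps each state hashable, so states could later be stored in sets when enumerating trajectories.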

In this paper, we introduce the action language ${\mathcal{C}}_{MT}$ (Mind Transition Language), designed to model changes in human mental states in response to observable actions. Through its translation into Answer Set Programming (ASP) (Brewka, Eiter and Truszczyński 2011), the language supports the generation and verification of action trajectories (sequences of actions and resulting states) while enforcing rules that forbid undesirable mental state transitions.

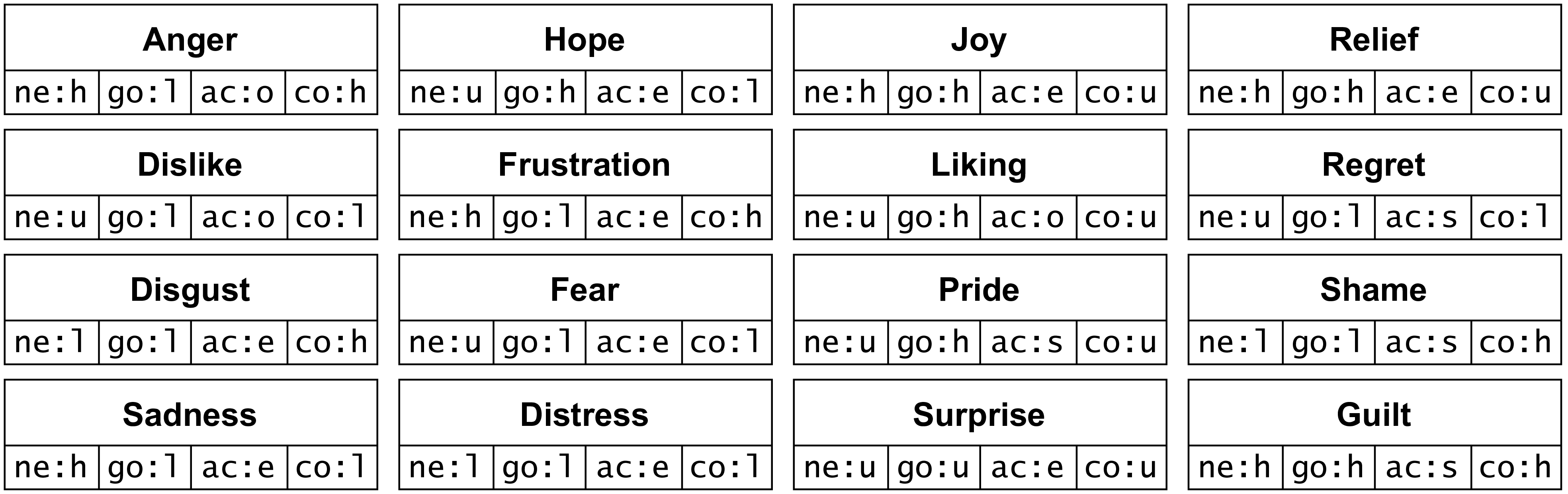

The proposed framework rests on two fundamental pillars: psychological theories of emotion and non-monotonic reasoning via ASP. Psychological theories offer structured frameworks to explain various aspects of human cognition, emotion, and behavior. Appraisal theories, such as the appraisal theory of emotion (Roseman 1996), which is modeled as an example in this work, identify classes of determinants and how combinations of them define emotions. From these, we can formalize fluents (changeable variables) and states (configurations of fluents) in our framework. Hence, in the proposed framework, the mental state of a human agent is approximated through a multi-dimensional representation, where each dimension corresponds to a factor in a psychological theory. In this way, we create abstractions of mental states, such as emotions, transforming psychological theories into computational models.
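The multi-dimensional representation can be illustrated with a small Python sketch in which each dimension is an appraisal determinant, a mental state is one value per dimension, and emotion labels are attached to some configurations. The dimension names, their values and the label mapping are simplified, hypothetical choices for illustration; they are not a faithful encoding of Roseman (1996).

```python
from itertools import product

# Illustrative appraisal dimensions and values (simplified assumptions,
# not a faithful encoding of Roseman's 1996 theory).
DIMENSIONS = {
    "situational_state": ("motive_consistent", "motive_inconsistent"),
    "probability": ("certain", "uncertain"),
    "agency": ("circumstance", "other", "self"),
}

# Toy, partial mapping from appraisal configurations to emotion labels.
EMOTION_OF = {
    ("motive_consistent", "certain", "circumstance"): "joy",
    ("motive_consistent", "uncertain", "circumstance"): "hope",
    ("motive_inconsistent", "certain", "other"): "anger",
}


def all_states():
    """Every mental state: one value chosen per dimension (Cartesian product)."""
    return list(product(*DIMENSIONS.values()))


def emotion(state):
    """Label a configuration, or 'unlabeled' if the toy mapping does not cover it."""
    return EMOTION_OF.get(state, "unlabeled")


states = all_states()
print(len(states))  # 2 * 2 * 3 = 12 configurations
print(emotion(("motive_consistent", "certain", "circumstance")))  # joy
```

The number of configurations is the product of the dimension sizes, so every added dimension multiplies the state space; with n binary dimensions there are 2^n configurations, which is the exponential growth that motivates the use of ASP.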

While action languages serve as intuitive specifications for dynamic reasoning processes, they can be characterized as logic programs through a mapping to ASP. The non-monotonic reasoning enabled by ASP complements these psychological foundations with a computational mechanism for handling the inherent variability in emotional reasoning. As a result, ${\mathcal{C}}_{MT}$ provides a logic programming foundation for knowledge elicitation and engineering of mental state dynamics. Emotional reasoning is inherently complex because the space of emotion states grows exponentially with the number of determinants, particularly when their dynamics are taken into account. This complexity poses a computational challenge that calls for solving combinatorial problems. ASP is well suited to address these challenges: it can express NP search problems, that is, problems solvable by a nondeterministic Turing machine in polynomial time, with solutions encoded as answer sets (Brewka, Eiter and Truszczyński 2011). Thus, logic programming, and ASP in particular, offers an effective method for implementing and managing emotional reasoning. This further enables ${\mathcal{C}}_{MT}$ to capture dynamic properties such as invariance (Hansen and Schmidt 2003), understood in transition systems as a property that must hold in all states along every possible trajectory. In our setting, invariance specifies properties over mental state changes such that, when satisfied, the evolution of states follows a corresponding psychological principle of change, which can be rigorously analyzed through formal verification. Ultimately, the framework supports reasoning about the evolution of human mental states while simultaneously constraining actions in the “physical” environment (see Figure 1).
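The notion of invariance can be illustrated with an explicit-state, bounded check in Python: enumerate every trajectory up to a fixed horizon and report the first one in which some state violates the invariant. This is a minimal sketch under stated assumptions (a single integer-valued, hypothetical mental fluent `distress` and two hypothetical agent actions); it is not the ASP translation of ${\mathcal{C}}_{MT}$.

```python
from typing import Callable, Dict, Iterator, List, Optional, Tuple

State = int  # hypothetical mental fluent: distress level in 0..3
Transition = Tuple[Callable[[State], bool], Callable[[State], State]]  # (precondition, effect)

ACTIONS: Dict[str, Transition] = {
    "reassure": (lambda d: d > 0, lambda d: d - 1),
    "provoke": (lambda d: d < 3, lambda d: d + 1),
}


def trajectories(state: State, horizon: int) -> Iterator[List]:
    """Yield every trajectory [s0, a1, s1, ..., ak, sk] with exactly `horizon` actions."""
    if horizon == 0:
        yield [state]
        return
    for name, (pre, effect) in ACTIONS.items():
        if pre(state):  # only executable actions extend the trajectory
            for rest in trajectories(effect(state), horizon - 1):
                yield [state, name] + rest


def find_violation(initial: State, horizon: int,
                   invariant: Callable[[State], bool]) -> Optional[List]:
    """Return a counterexample trajectory, or None if the invariant holds in all states."""
    for traj in trajectories(initial, horizon):
        if not all(invariant(s) for s in traj[0::2]):  # states sit at even positions
            return traj
    return None


print(find_violation(1, 2, lambda d: d <= 2))  # [1, 'provoke', 2, 'provoke', 3]
print(find_violation(1, 2, lambda d: d <= 3))  # None
```

The check is exhaustive only up to the chosen horizon, which mirrors the bounded trajectories typically considered when action domains are solved with a fixed number of time steps.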

In ${\mathcal{C}}_{MT}$, available sequences of actions and states (trajectories) in the “physical” state space are constrained by the actions’ influence on the “mental” state space.
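As a minimal sketch of this coupling, assume a hypothetical robot whose physical actions change its position and also set the user’s emotion, together with one hypothetical forbidden mental transition (from calm directly to startled). Enumerating all plans that reach the physical goal and then filtering them by the mental constraint shows how the mental state space prunes otherwise valid physical trajectories. The names, effects and constraint are illustrative assumptions, not part of ${\mathcal{C}}_{MT}$.

```python
from itertools import product

# Hypothetical actions: (change in robot position, resulting user emotion).
ACTIONS = {
    "fast_approach": (2, "startled"),
    "slow_approach": (1, "alert"),
    "wait": (0, "calm"),
}

# Hypothetical forbidden mental transitions (previous emotion, next emotion).
FORBIDDEN = {("calm", "startled")}


def simulate(plan, position=0, mood="calm"):
    """Run a plan and return the final position and the trajectory of moods."""
    moods = [mood]
    for action in plan:
        step, mood = ACTIONS[action]
        position += step
        moods.append(mood)
    return position, moods


def plans(goal, horizon):
    """Split goal-reaching plans into all physical plans and mentally admissible ones."""
    physical, admissible = [], []
    for plan in product(ACTIONS, repeat=horizon):
        position, moods = simulate(plan)
        if position != goal:
            continue
        physical.append(plan)
        # Admissible only if no consecutive pair of moods is a forbidden transition.
        if all(pair not in FORBIDDEN for pair in zip(moods, moods[1:])):
            admissible.append(plan)
    return physical, admissible


physical, admissible = plans(goal=3, horizon=2)
print(physical)    # [('fast_approach', 'slow_approach'), ('slow_approach', 'fast_approach')]
print(admissible)  # [('slow_approach', 'fast_approach')]
```

Both plans reach the goal physically, but only the one that first makes the user alert avoids the forbidden calm-to-startled transition.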

From this basis, we present the following key contributions:

-

• We introduce the action language

${\mathcal{C}}_{MT}$

, serving as a foundational platform for capturing properties relevant to mental state reasoning.

${\mathcal{C}}_{MT}$

, serving as a foundational platform for capturing properties relevant to mental state reasoning. -

• We present the framework’s syntax and semantics, and its practical implementation in ASP, evaluated formally and empirically.

-

• We present characterizations of

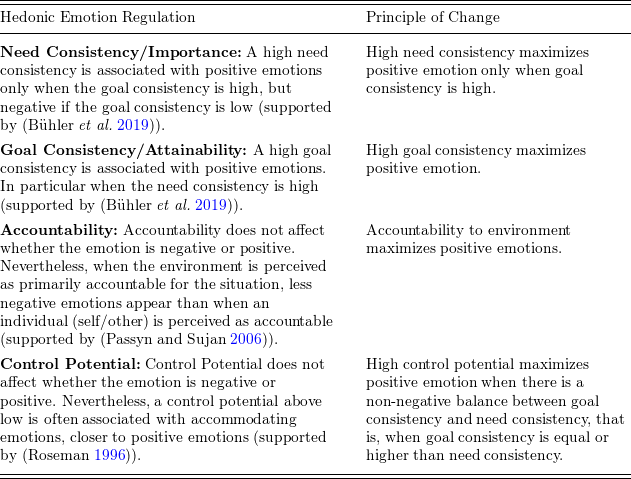

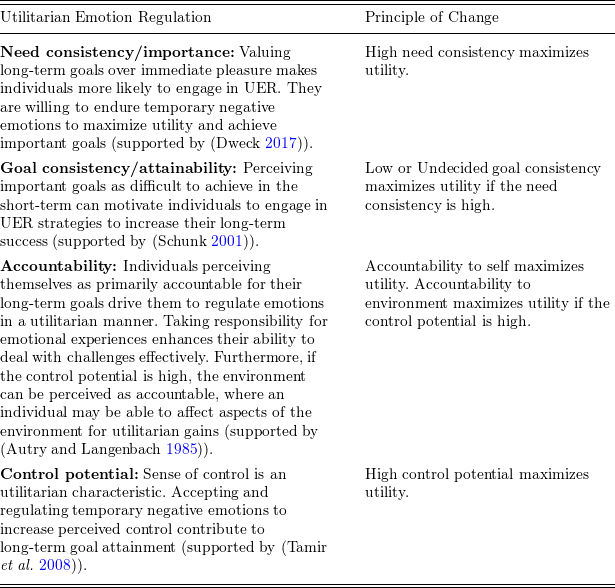

${\mathcal{C}}_{MT}$

in the setting of emotional reasoning, capturing psychological theories such as Appraisal theory of Emotion (Roseman Reference Roseman1996), Hedonic Emotion Regulation (Zaki Reference Zaki2020) and Utilitarian Emotion Regulation (Tamir et al. Reference Tamir, Chiu and Gross2007).

${\mathcal{C}}_{MT}$

in the setting of emotional reasoning, capturing psychological theories such as Appraisal theory of Emotion (Roseman Reference Roseman1996), Hedonic Emotion Regulation (Zaki Reference Zaki2020) and Utilitarian Emotion Regulation (Tamir et al. Reference Tamir, Chiu and Gross2007). -

• We present how

${\mathcal{C}}_{MT}$

can be applied as a method for representing, verifying and comparing psychological theories in terms of action trajectories.

${\mathcal{C}}_{MT}$

can be applied as a method for representing, verifying and comparing psychological theories in terms of action trajectories.

A key distinction between our approach and traditional statistical methods lies in the deterministic nature of the latter versus the non-deterministic nature of ASP in our framework. Statistical methods typically collapse a decision into a single point, which can lead to a loss of information and a lack of flexibility in reasoning processes. In contrast, ASP’s non-determinism yields multiple answer sets, offering a range of possible solutions that can be filtered and analyzed according to the introduced constraints and principles of change grounded in psychological theories. This approach not only preserves the richness of potential outcomes but also allows for a more nuanced and comprehensive understanding of the dynamics of human mental states.
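The contrast between a point estimate and a set of alternatives can be illustrated with a small, hypothetical example: an agent action whose effect on the user’s emotion is uncertain, a statistical model that scores the possible outcomes, and a principle forbidding one of them. The scores are made-up placeholders, not data, and the set-based check merely mimics in Python the idea of reasoning over all answer sets rather than a single prediction.

```python
# Hypothetical scores for the user's emotion after the agent action "criticize".
OUTCOMES = {"anger": 0.5, "sadness": 0.3, "shame": 0.2}

# Hypothetical principle: the agent must not cause shame.
FORBIDDEN = {"shame"}


def point_estimate(outcomes):
    """Collapse the distribution to its single most likely outcome."""
    return max(outcomes, key=outcomes.get)


def point_check_violates(outcomes, forbidden):
    # Only the single predicted outcome is inspected.
    return point_estimate(outcomes) in forbidden


def set_check_violates(outcomes, forbidden):
    # Every possible outcome is kept; the action is flagged if any of them is forbidden.
    return any(outcome in forbidden for outcome in outcomes)


print(point_estimate(OUTCOMES))                   # anger
print(point_check_violates(OUTCOMES, FORBIDDEN))  # False
print(set_check_violates(OUTCOMES, FORBIDDEN))    # True
```

The point-based check accepts the action because its most likely outcome is permitted, whereas the set-based check detects that a forbidden outcome remains possible.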

The remainder of this paper is structured as follows. Section 2 reviews related work. Section 3 introduces the formal framework. Section 4 presents a case study in emotional reasoning. Section 5 provides a formal analysis of the framework. Section 6 reports on the experimental evaluation. Section 7 illustrates the approach through an example in human emotion verification. Section 8 offers a broader discussion of the results. Finally, Section 9 concludes the paper and outlines directions for future work.

2 Related work

Human behavior has been formally analyzed at multiple levels of abstraction, ranging from integrative physiology (Fass and Lieber 2009) at a low level to formalizations of social practices (Erdogan et al. 2025) at a high level. At the level of mental states (Pereira et al. 2007; Jiang et al. 2007; Ong et al. 2019; Erdogan et al. 2025), research has modeled mental-state context, such as simulating emotional behavior (Ong et al. 2019) or modeling expected human responses to affective states (Jiang et al. 2007). Moreover, a diverse body of research has explored the dynamics of mental states (Rao and Georgeff 1995; Ramírez and Geffner 2009; Shvo and McIlraith 2020; Keren et al. 2014). Plan recognition as planning, introduced by Ramírez and Geffner (2009), applies classical artificial intelligence (AI) planning techniques to infer the goals and plans of agents from observed actions. Active Goal Recognition (Shvo and McIlraith 2020) extends goal recognition using contingent planning and landmark-based hypothesis elimination, enabling an active observer to sense, reason, and act. Furthermore, epistemic planning (Bolander and Andersen 2011), a generalization of classical planning, involves agents specifying goals that include the epistemic state, such as the beliefs, of other agents. Recent extensions of epistemic planning to cognitive planning formalize a method for influencing the cognitive state of a target agent (Davila et al. 2021; Lorini et al. 2022).

While modal logic underpins many approaches to reasoning about knowledge and belief, it typically represents mental states as sets of possible worlds, often reducing complex phenomena such as “emotions” to atomic propositions (e.g. “Joy” or “Sadness”) rather than multi-variable configurations with structured interdependencies. This limits expressivity in capturing the causes, constraints, and consequences of mental-state transitions over time. To address this, various logics of mental attitudes and emotions (Adam et al. 2009; Lorini and Schwarzentruber 2011; Dastani and Lorini 2012; Steunebrink et al. 2012; Lorini 2021) have been developed. These works integrate epistemic logic with additional structures, such as plausibility orderings for belief strength (Lorini 2021), logic-based appraisal models for emotions (Adam et al. 2009), and STIT (Seeing-To-It-That) logic for counterfactual reasoning about emotions such as regret and rejoicing (Lorini and Schwarzentruber 2011). A notable approach is the TOMA framework (Erdogan et al. 2025), which integrates epistemic logic to model and update beliefs while introducing higher-order abstractions based on lower-order beliefs. By employing modal operators for belief and knowledge, the system facilitates structured reasoning about trust, social roles, and norms.

In the setting of mental state representation and reasoning, two related areas of research are: (1) logics of mental attitudes and emotion (Adam et al. 2009; Lorini and Schwarzentruber 2011; Dastani and Lorini 2012; Steunebrink et al. 2012; Lorini 2021), and (2), as previously mentioned, epistemic planning (Bolander and Andersen 2011) extended to cognitive planning (Davila et al. 2021; Lorini et al. 2022). Logics of mental attitudes and emotion aim to formalize the relationships between epistemic and motivational attitudes of human and artificial agents, as well as the influence of mental attitudes on emotions. Related works in this line of research include the logical formalization of the OCC theory of emotions (Adam et al. 2009), the formalization of counterfactual emotions (Lorini and Schwarzentruber 2011), the representation of emotion intensity and coping strategies (Dastani and Lorini 2012), the modeling of emotion triggers (Steunebrink et al. 2012), and the logical theory of epistemic and motivational attitudes and their dynamics (Lorini 2021). Nevertheless, the principles constraining this causality and its potential side effects are not considered. In contrast to previous approaches to modeling and reasoning about mental states, the proposed ${\mathcal{C}}_{MT}$ action language deals with a multi-dimensional representation of mental states and the constraints for modeling principle-based transitions between them.

In the area of affective agents and computational theory of mind, agent models have been developed to reason about emotion and behavior (Si et al. 2010; Ong et al. 2019; Jara-Ettinger 2019; Yongsatianchot and Marsella 2021; Qiu et al. 2022). For instance, agents based on Partially Observable Markov Decision Processes (Yongsatianchot and Marsella 2021) have been used to model emotion, showing potential in simulating human behavior. Nevertheless, their state space lies solely in the physical environment. Hence, they fail to capture the mental-state dynamics needed to reason about the causes of mental states and mental transitions. Inverse Reinforcement Learning has been proposed to model Theory of Mind (Ong et al. 2019), predicting people’s actions and inferring mental states through policy reconstruction. However, a limitation of this approach is that it assumes identical, rational decision-making, since it cannot capture the variability in human reasoning, thereby neglecting individual differences and emotional influences that may significantly impact human behavior. To effectively model the dynamics of the human mind, capturing individual and contextual factors, a low-level, multi-dimensional approach is required. A study on socially-aware agents (Qiu et al. 2022) proposes a hybrid mental state parser that extracts information from dialogue and event observations to maintain a graphical representation of an agent’s mind. The nodes represent agents, their personas, objects, and descriptions of the setting. The edges between these nodes depict the agents’ states of mind, capturing how their beliefs and mental states change as the setting evolves.
While this representation captures mental state changes, it cannot express constraints on those changes, which are needed to analyze their adherence to specific principles of mental change. Moreover, the graphical representation operates at a high level, in contrast to the multi-dimensional representation proposed in the current paper.

Let us also mention that there is a range of approaches in the setting of BDI (Belief-Desire-Intention) agents (Pereira et al. 2007; Jiang et al. 2007; Jones et al. 2009; Sánchez-López and Cerezo 2019; Sánchez et al. 2019) that integrate affective states into traditional BDI models, for example to simulate expected emotional responses (Pereira et al. 2007) or to provide architectures for embedding emotion-driven reasoning (Jiang et al. 2007). While these works have integrated affective states, such as emotion, into the BDI model, challenges persist in implementing affective constraints, such as emotion regulation (Sánchez-López and Cerezo 2019).

The related paradigm of Human-Aware Planning (Ahrndt et al. 2014) focuses on planning in the state space of the physical environment, often in shared contexts where both autonomous agents and humans act. The planner typically reasons about the human’s physical actions and coexists with them in domains such as robot navigation or collaborative manipulation. However, for a rational software agent to anticipate how its actions or events may influence a human’s mental state, it must reason in a space that goes beyond the physical. This requires a planning model that explicitly includes the state space of the human mind: mental states, allowable transitions between them, and the actions that may trigger such transitions.

From this background, we highlight the lack of formal treatment of principled constraints governing how mental states evolve over an interaction. While psychological theories, such as emotion regulation (Tamir et al. 2008; Ortner et al. 2018), describe patterns of mental-state change, formal models rarely integrate such principles, for example as transition constraints or properties of invariance (Hansen and Schmidt 2003), which can be rigorously evaluated using formal methods. By enforcing such constraints, a system can verify that mental-state trajectories, as opposed to isolated transitions, adhere to predefined principles, while enabling the detection of deviations or violations. A more structured approach represents mental states as multi-variable configurations governed by trajectory-level constraints that regulate their long-term evolution. We refer to such a framework for defining valid mental states and transitions as a BG, formally introduced and analyzed in this paper.

In the following sections, we introduce the formal framework that integrates these concepts, providing a structured approach to modeling and managing the dynamics of human mental states.

3 Formal framework

This section introduces the syntax and semantics of the proposed formal framework and the action language extension ${\mathcal{C}}_{MT}$, which builds on ASP-based action reasoning. Although ${\mathcal{C}}_{MT}$ is implementation-agnostic and can, in principle, be realized using different computational frameworks, we view it as a high-level formal language designed specifically for realization in Answer Set Programming (ASP). From this perspective, we construct expressions in ${\mathcal{C}}_{MT}$ to align naturally with their ASP encodings. The framework can be specialized to specific mental state domains, such as emotions, in line with established psychological theories such as the Appraisal Theory of Emotion (Roseman 1996). By incorporating principles of mental change, sets of transition constraints are formalized and implemented as integrity constraints in answer set programs.

In the proposed action language, similar to previous action languages, fluents represent properties that can change over time. These can describe various aspects of human-agent interactions, including non-observable aspects such as psychological attributes and observable aspects of agents or the environment. The value of a fluent at any given time depends on how it is affected by so-called actions or indirectly by other fluents.
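The indirect dependency of fluents on other fluents can be sketched as closing a state under static rules until a fixpoint is reached. The sketch assumes simple positive rules of the form “if all body fluents hold, the head fluent holds”; the fluent names, which chain an observable event to a non-observable mental fluent and back to observable behavior, are hypothetical, and the loop only illustrates the idea rather than the semantics of ${\mathcal{C}}_{MT}$.

```python
# Hypothetical static rules (body, head): if every fluent in body holds, head holds too.
RULES = [
    (frozenset({"insulted"}), "offended"),            # observable event -> mental fluent
    (frozenset({"offended"}), "avoids_eye_contact"),  # mental fluent -> observable behavior
    (frozenset({"offended", "tired"}), "withdraws"),
]


def close_under(state, rules):
    """Add rule heads until no rule fires anymore (least fixpoint for positive rules)."""
    closed = set(state)
    changed = True
    while changed:
        changed = False
        for body, head in rules:
            if body <= closed and head not in closed:
                closed.add(head)
                changed = True
    return frozenset(closed)


# Direct effect of a hypothetical agent action "insult": the fluent "insulted" becomes true.
after_insult = close_under({"insulted"}, RULES)
```

Here the action directly affects only `insulted`; `offended` and `avoids_eye_contact` change indirectly, and `withdraws` would additionally require `tired` to hold.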

We build on the action language ${\mathcal{C}}_{TAID}$ (Dworschak et al. 2008), originally designed for modeling biological systems. ${\mathcal{C}}_{TAID}$ includes features such as allowance, inhibition, and triggers, which are also relevant for modeling the dynamics of mental states. The triggers causal law accounts for interactions based on reactions, making it particularly useful for capturing how mental states may change as indirect effects of actions or external events. Allowance rules specify that an action can occur under certain conditions but is not mandatory, while inhibition rules prevent an action from occurring in certain contexts. These mechanisms are especially important in modeling mental states, where we have only partial knowledge about the dependencies and reasons behind interactions in the mind, and where dependencies between cognitive and emotional factors, or on some conditions of the environment, may not be explicitly modeled. In such cases, allowance and inhibition rules provide a flexible way to account for uncertainties and exceptions in mental state reasoning.
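A simplified reading of these three rule types can be sketched in Python: in a given state, triggered actions must occur unless inhibited, allowed actions may occur unless inhibited, and every combination of the optional actions yields a distinct possible action set. This is an assumption-laden illustration with hypothetical action and fluent names; the formal semantics of ${\mathcal{C}}_{TAID}$ (Dworschak et al. 2008) is defined through its causal laws and is not reproduced here.

```python
from itertools import chain, combinations

# Each rule is (action, condition); a condition is a set of fluents that must all hold.
TRIGGERS = [("frown", {"insulted"})]  # must occur when the condition holds
ALLOWS = [("leave", {"insulted"})]    # may occur when the condition holds
INHIBITS = [("leave", {"calm"})]      # cannot occur when the condition holds


def fired(rules, state):
    """Actions whose rule condition is satisfied in `state`."""
    return {action for action, condition in rules if condition <= state}


def possible_action_sets(state):
    """All action sets compatible with triggers, allowances and inhibitions in `state`."""
    blocked = fired(INHIBITS, state)
    forced = fired(TRIGGERS, state) - blocked
    optional = sorted(fired(ALLOWS, state) - blocked - forced)
    subsets = chain.from_iterable(
        combinations(optional, r) for r in range(len(optional) + 1)
    )
    return [frozenset(forced | set(subset)) for subset in subsets]


print(possible_action_sets({"insulted"}))          # two options: frown, or frown and leave
print(possible_action_sets({"insulted", "calm"}))  # one option: frown only
```

The multiple action sets returned for a single state correspond to the non-determinism that allowance rules introduce, while inhibition removes options without forcing anything.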

We introduce the action language ${\mathcal{C}}_{MT}$ (Mind Transition Language) as a foundational platform that captures domain-independent properties and constraints relevant to mental state reasoning. Along with the action language, we introduce some “syntactic sugar” to facilitate expressions about mental state dynamics. This includes the rule “influences mental fluent” (similar to “causes” in ${\mathcal{C}}_{TAID}$), which handles actions or events that may influence a change in a mental state, and the rules “facilitates” and “contravenes” (similar to the “allowance” and “inhibition” rules in ${\mathcal{C}}_{TAID}$), which regulate the execution of actions in a given state. However, we specialize these rules to concern human actions regulated by mental fluents. This is motivated by emotion theories suggesting that “emotions have distinctive goals and action tendencies” (Roseman et al. 1994).

Moreover, ${\mathcal{C}}_{MT}$ introduces abstractions that we call mental states, which are defined by sets of fluents, along with mental state transition constraints, called forbids to cause rules, that specify relationships among mental fluents from one state to the next. Notably, expressions that directly restrict fluents between states, independently of actions, have not been explicitly incorporated in previous action languages such as ${\mathcal{C}}_{TAID}$.

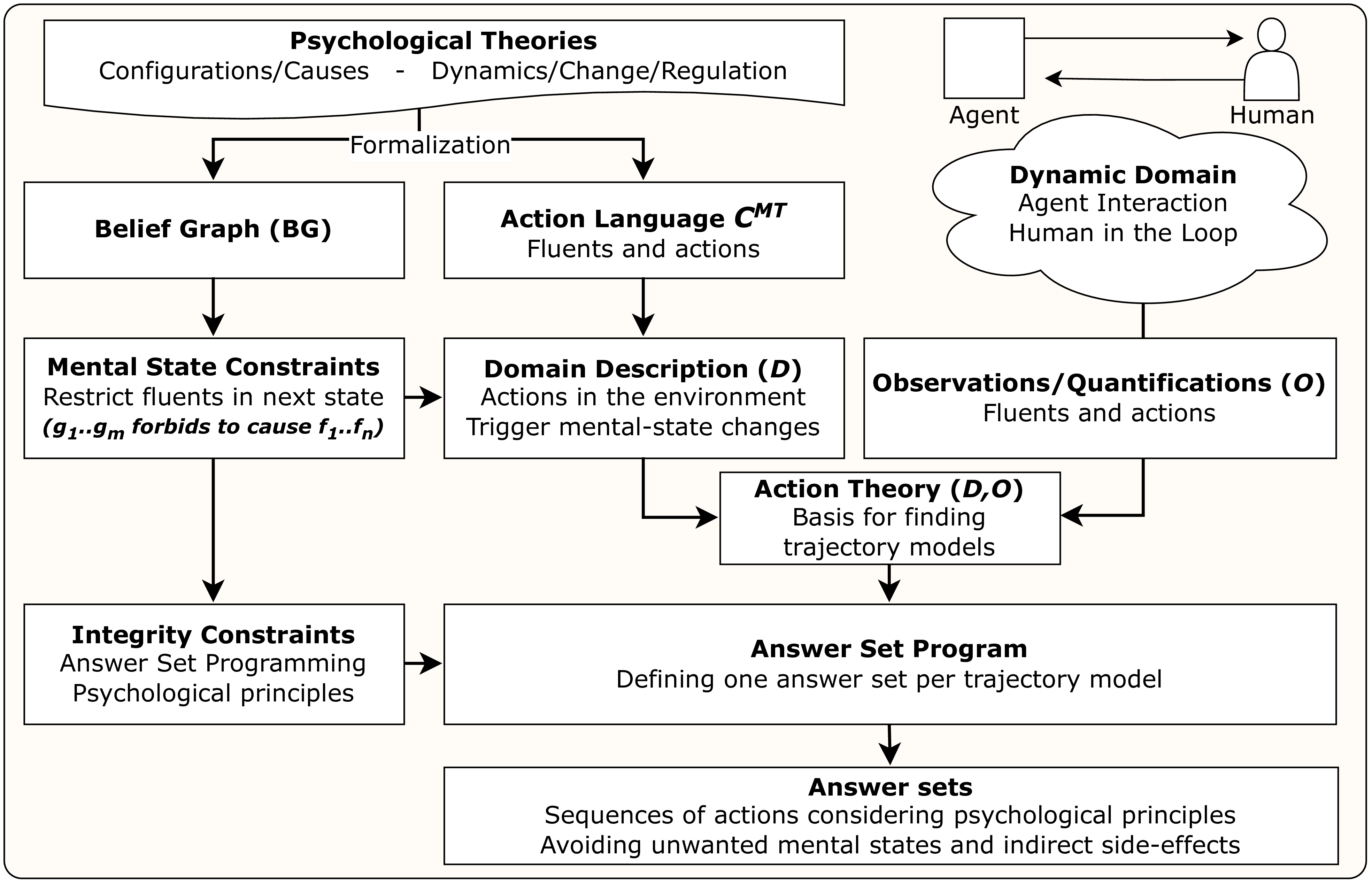

Consequently, the proposed framework for mental-state reasoning comprises two components: (1) the action language ${\mathcal{C}}_{MT}$, which defines actions in the environment/interaction that trigger changes in mental states, and (2) a set of constraints, derived from psychological principles, that precisely define valid transitions between mental states. These constraints characterize a so-called BG. In ASP, a BG is encoded as sets of integrity constraints that restrict particular fluent changes in transitions between mental states. Mental state dynamics are linked to actions in the environment through the effects of those actions on the fluents of the mental state abstractions. In this way, a BG filters the potential trajectories generated by the action language based on their effects on mental states (see Figure 2 for a conceptualization of the framework).

Figure 2. Conceptual framework.

Intuitively, ${\mathcal{C}}_{MT}$ extends ${\mathcal{C}}_{TAID}$ as follows:

• To begin with, it refines the action and fluent alphabet by distinguishing observable environmental fluents (denoted $\textbf {F}^E$) and actions (denoted $\textbf {A}^E$) from non-observable mental fluents (denoted $\textbf {F}^H$) and human actions (denoted $\textbf {A}^H$).

• It introduces specialized constructs, namely influences, facilitates, and contravenes, which mirror ${\mathcal{C}}_{TAID}$’s causes, allows, and inhibits rules but are designed specifically to model how mental states regulate human behavior. This is made explicit in the ASP encoding, where these rules are specifically linked to mental fluents and human actions. While these constructs do not constitute a substantial formal contribution, they support knowledge elicitation when modeling mental dynamics, in line with one of the core purposes of action languages.

• It incorporates a novel constraint rule,
\begin{equation*} (g_1^h, \ldots , g_m^h \, \mathbf{forbids\,to\,cause} \, f_1^h, \ldots , f_n^h) \end{equation*}
specifying that if the mental fluents $g_1^h, \ldots , g_m^h \in \textbf {F}^H$ hold at time $t$, then the mental fluents $f_1^h, \ldots , f_n^h \in \textbf {F}^H$ are forbidden from holding at time $t+1$. This construct enables the specification of state transitions that are invalid regardless of any action occurrence, which is not expressible in ${\mathcal{C}}_{TAID}$.

• A further contribution of the language, enabled by the new forbids to cause rule, is an overall methodology for formalizing psychological theories as dynamic computational models: abstractions of sets of fluents, called mental states, and valid transitions between them. A set of these forbidding rules, on top of a set of dynamic causal laws, defines a so-called BG, which captures valid mental state transitions and filters action trajectories with constraints based on principles from psychological theories.

These additions enhance ${\mathcal{C}}_{MT}$ by enabling it to model complex dynamics of mental states and to verify system behavior in accordance with psychological principles. The novel forbids to cause rule is essential in mental state modeling for two key reasons: (1) actions may directly induce undesirable changes in mental fluents, and (2) such changes may indirectly trigger unwanted ramification effects on other mental fluents. Consequently, this rule provides a formal mechanism to restrict actions that directly or indirectly lead to adverse mental outcomes.
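The point that a forbidding rule constrains transitions independently of the action that caused them can be illustrated with a minimal Python sketch. It assumes that a mental fluent $f(c, v)$ is encoded as a `(c, v)` tuple and a state as a frozenset of such tuples; the classes `distress` and `intent` are hypothetical examples, not part of the framework. Because the check only inspects the current and the next state, it rejects a transition whether the forbidden fluent arises as a direct effect of an action or as an indirect ramification.

```python
from typing import FrozenSet, List, Tuple

Fluent = Tuple[str, str]  # a mental fluent f(c, v) encoded as (c, v)
State = FrozenSet[Fluent]
# A forbids-to-cause rule: (fluents that must hold at t, fluents forbidden at t+1).
ForbidRule = Tuple[FrozenSet[Fluent], FrozenSet[Fluent]]


def forbidden(state: State, rules: List[ForbidRule]) -> FrozenSet[Fluent]:
    """Collect the fluents forbidden in the next state by all rules active in `state`."""
    out: set = set()
    for body, head in rules:
        if body <= state:  # the rule is active: all g_i^h hold now
            out |= head
    return frozenset(out)


def transition_ok(current: State, nxt: State, rules: List[ForbidRule]) -> bool:
    """Reject the transition if any forbidden fluent holds in the next state,
    regardless of which action (or which ramification) produced it."""
    return not (forbidden(current, rules) & nxt)


# Hypothetical rule: high distress forbids the emergence of a harmful intent.
rules = [(frozenset({("distress", "high")}), frozenset({("intent", "harm")}))]
s0 = frozenset({("distress", "high"), ("intent", "none")})
s1_unsafe = frozenset({("distress", "high"), ("intent", "harm")})
s1_safe = frozenset({("distress", "low"), ("intent", "none")})
print(transition_ok(s0, s1_unsafe, rules))  # False
print(transition_ok(s0, s1_safe, rules))    # True
```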

3.1 Belief graph

A BG is a set of propositional atoms for valid transitions between mental states. The mental states within a BG represent configurations of factors that contribute to specific mental states. These factors, known as mental fluents, are determined by psychological theories and encompass a range of possible values, thus defining the potential states within a BG. These mental fluents are defined as follows:

Definition 1 (Mental fluent) Let $C = \{c_1, \ldots , c_n\}$ be a set of symbols denoting psychological classes, and let $V = \{V_{c_1}, \ldots ,V_{c_n} \}$ be a set of totally ordered sets of constants denoting psychological values for each class in $C$. A mental fluent is a ground atom $f(c, v)$ of arity 2 such that $c \in C$ and $v \in V_c$.

A mental state space $S$ is defined as the set of all possible combinations of mental fluents.

Definition 2 (Mental state space) Let $C = \{c_1, \ldots , c_n\}$ be a set of psychological classes, and let $V = \{V_{c_1}, \ldots , V_{c_n}\}$ be a set where each $V_{c_i}$ $(1 \leq i \leq n)$ is the set of possible values for the class $c_i$. The mental state space $S$ is defined as:
\begin{equation*} S = \big\{ \{ f(c_1, v_1), f(c_2, v_2), \ldots , f(c_n, v_n) \} \;\big|\; v_1 \in V_{c_1}, \ldots , v_n \in V_{c_n} \big\} \end{equation*}
where each set $\{ f(c_1, v_1), f(c_2, v_2), \ldots , f(c_n, v_n) \}$ denotes a unique combination of mental fluents, called a mental state.
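Definition 2 amounts to a Cartesian product over the value sets, so that $|S| = \prod_{i=1}^{n} |V_{c_i}|$. A minimal Python sketch, assuming each fluent $f(c, v)$ is encoded as a `(c, v)` tuple and using the hypothetical classes `joy` and `fear`:

```python
from itertools import product
from typing import Dict, FrozenSet, List, Tuple

Fluent = Tuple[str, str]  # f(c, v) encoded as (c, v)


def mental_state_space(values: Dict[str, List[str]]) -> List[FrozenSet[Fluent]]:
    """Enumerate S: each mental state contains exactly one fluent f(c, v) per class c."""
    classes = list(values)
    return [
        frozenset(zip(classes, combo))
        for combo in product(*(values[c] for c in classes))
    ]


# Hypothetical classes; each value list is ordered from low to high.
V = {"joy": ["low", "high"], "fear": ["low", "mid", "high"]}
S = mental_state_space(V)
print(len(S))  # 6
```

The size of $S$ grows multiplicatively with the number of classes, which is one reason to keep the value scales of each class coarse.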

We now proceed by defining a BG with states $S$ and directed edges $E \subseteq S \times S$. These edges between states capture principles of mental change suggested by psychological theories, providing rules for allowed mental state transitions.

Definition 3 (Belief Graph) A BG is a directed graph $BG = (S,E)$ where $S$ is a finite set of mental states and $E \subseteq S \times S$ is a set of directed edges representing valid transitions between mental states.
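One way to obtain the edge set $E$ of Definition 3 is to filter all ordered pairs of states, assuming that an edge $(s, s')$ is valid exactly when $s'$ contains no fluent forbidden by a forbids to cause rule active in $s$. The Python sketch below uses a hypothetical single class `fear`; it is an illustration of that assumption, not the paper's ASP encoding.

```python
from itertools import product
from typing import FrozenSet, List, Set, Tuple

Fluent = Tuple[str, str]
State = FrozenSet[Fluent]
Rule = Tuple[FrozenSet[Fluent], FrozenSet[Fluent]]  # (body at t, head forbidden at t+1)


def belief_graph(states: List[State], rules: List[Rule]) -> Set[Tuple[State, State]]:
    """Return E: all ordered pairs (s, s2) such that no fluent of s2 is
    forbidden by a forbidding rule that is active in s."""
    edges = set()
    for s, s2 in product(states, repeat=2):
        banned: Set[Fluent] = set()
        for body, head in rules:
            if body <= s:
                banned |= head
        if not (banned & s2):
            edges.add((s, s2))
    return edges


# Hypothetical example: high fear may not directly follow low fear.
lo, hi = frozenset({("fear", "low")}), frozenset({("fear", "high")})
E = belief_graph([lo, hi], [(frozenset({("fear", "low")}), frozenset({("fear", "high")}))])
print((lo, hi) in E, len(E))  # False 3
```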

A BG needs to be characterized for each specific application, since different approaches to mental influence may be applicable. Consequently, to support controlled system behavior and principle-based assessment, it is essential to establish reasoning principles of “monotonicity” that govern valid transitions between mental states. These principles can be motivated by various sources, such as psychological theories or insights from human experts. In the setting of reasoning about transitions between emotions, different principles and theories hold contrasting views on emotional change. For instance, principles of hedonic emotion regulation (Zaki 2020) aim to increase positive emotions and decrease negative emotions. Another set of principles concerns utilitarian emotion regulation (Tamir et al. 2007), which aims to increase emotions that provide utility, such as control. The BG is therefore agnostic to the choice of psychological theory. We show how this flexibility enables the framework to model and compare psychological theories through the principle-based assessment of generated trajectories.
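To illustrate how different principles yield different BGs over the same state space, the following Python sketch encodes a monotonicity principle on a totally ordered class as forbids to cause rules and counts the resulting edges. The classes `joy`, `fear`, and `control`, the two-level scale, and the helper names are hypothetical, and the hedonic and utilitarian principles are deliberately simplified readings of the regulation principles above.

```python
from itertools import product
from typing import Dict, FrozenSet, List, Set, Tuple

Fluent = Tuple[str, str]
State = FrozenSet[Fluent]
Rule = Tuple[FrozenSet[Fluent], FrozenSet[Fluent]]  # (body at t, fluents forbidden at t+1)


def monotone_rules(cls: str, order: List[str], increasing: bool) -> List[Rule]:
    """Forbid class `cls` from moving against the given direction along `order` (low to high)."""
    rules = []
    for i, v in enumerate(order):
        worse = order[:i] if increasing else order[i + 1:]
        if worse:
            rules.append((frozenset({(cls, v)}), frozenset((cls, w) for w in worse)))
    return rules


def bg_edges(values: Dict[str, List[str]], rules: List[Rule]) -> Set[Tuple[State, State]]:
    """All transitions (s, s2) over the full state space not blocked by an active rule."""
    classes = list(values)
    states = [frozenset(zip(classes, c)) for c in product(*(values[k] for k in classes))]
    edges = set()
    for s, s2 in product(states, repeat=2):
        banned: Set[Fluent] = set()
        for body, head in rules:
            if body <= s:
                banned |= head
        if not (banned & s2):
            edges.add((s, s2))
    return edges


LEVELS = ["low", "high"]
V = {"joy": LEVELS, "fear": LEVELS, "control": LEVELS}
# Simplified hedonic reading: joy must not decrease and fear must not increase.
hedonic = monotone_rules("joy", LEVELS, True) + monotone_rules("fear", LEVELS, False)
# Simplified utilitarian reading: control must not decrease.
utilitarian = monotone_rules("control", LEVELS, True)
print(len(bg_edges(V, hedonic)), len(bg_edges(V, utilitarian)))  # 36 48
```

Since each rule here constrains a single class, the edge count factorizes per class (3 of 4 value pairs remain for each constrained class), which makes the two principles easy to compare by hand.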

3.2 The ${\mathcal{C}}_{\boldsymbol{MT}}$ action language

${\mathcal{C}}_{MT}$ consists of a set of symbols representing actions and fluents, which form the alphabet of the action language. Since the purpose of an action language is to provide a higher-level framework that makes modeling dynamic systems more natural and modular, several aspects of the language serve as syntactic sugar to simplify the modeling of mental states. The primary addition, beyond a specialized alphabet, is the forbids to cause rule, which, given a set of mental fluents in the current state, forbids a set of mental fluents from holding in the next state. By capturing elements of both the external environment and the human mind, the language facilitates the description and analysis of how environmental events influence human mental states and behavior; through the new forbidding constraints, both the direct and the indirect effects of actions on mental fluents can be constrained. We require this addition to the language in order to model principles of mental change.

Definition 4 (${\mathcal{C}}_{MT}$ alphabet) Let $\textbf {A}$ be a non-empty set of actions and $\textbf {F}$ be a non-empty set of fluents.

• $\textbf {F} = \textbf {F}^E \cup \textbf {F}^H$ such that $\textbf {F}^E$ is a non-empty set of fluent literals describing observable items in an environment and $\textbf {F}^H$ is a non-empty set of fluent literals describing quantified, non-observable features of human mental states. $\textbf {F}^E$ and $\textbf {F}^H$ are disjoint.

• $\textbf {A} = \textbf {A}^E \cup \textbf {A}^H$ such that $\textbf {A}^E$ is a non-empty set of actions that can be performed by a software agent and $\textbf {A}^H$ is a non-empty set of actions that can be performed by a human agent. $\textbf {A}^E$ and $\textbf {A}^H$ are disjoint.

Within ${\mathcal{C}}_{MT}$, a domain description defines static and dynamic causal laws for actions. These laws precisely express the expected influences exerted on mental fluents, either as direct effects of actions or as indirect causal effects. The laws governing mental change operate by modulating the variables of a given mental state while adhering to the constraints outlined by the BG.

Definition 5 (${\mathcal{C}}_{MT}$ domain description language) The ${\mathcal{C}}_{MT}$ domain description language $D^{MT}(\textbf {A}, \textbf {F})$ consists of static and dynamic causal laws of the following form:

where $a \in \textbf {A}$, $a^h \in \textbf {A}^H$, $a_i \in \textbf {A}$ $(1 \leq i \leq n)$, $f_j \in \textbf {F}$ $(1 \leq j \leq n)$, and $g_j \in \textbf {F}$ $(1 \leq j \leq m)$; moreover, $f_1^h, \ldots , f_n^h \in \textbf {F}^H$ and $g_1^h, \ldots , g_m^h \in \textbf {F}^H$ are mental fluents of the form $f(c,v)$, where $c$ is a psychological class and $v$ is a psychological value.

We next provide the formal specification of the action language components in terms of states, rules, and transition conditions. Once this foundation is in place, we proceed to their characterization under answer set semantics, where the operational meaning of the laws is captured by logic programs and their answer sets.

BGs are expressed in terms of ${\mathcal{C}}_{MT}$ logic programs, that is, finite sets of static and dynamic causal laws on mental states. This characterization allows us to ensure controlled mental change by restricting states and state transitions. Within the domain description, a mental state is an interpretation of the current state of the system.

Definition 6 (Mental state interpretation) A state $s \in S$ of the domain description $D^{MT}(\textbf {A, F})$ is an interpretation over $\textbf {F}$ such that

1. for every static causal law $(f_1,\ldots ,f_n \,\, \mathbf{if} \,\, g_1, \ldots , g_m) \in D^{MT}(\textbf {A, F})$, we have $\{f_1,\ldots ,f_n\} \subseteq s$ whenever $\{g_1, \ldots , g_m\} \subseteq s$.

2. for every static causal law $(g_1,\ldots ,g_m \,\, \mathbf{influences} \,\, f_1^h,\ldots ,f_n^h) \in D^{MT}(\textbf {A, F})$, we have $\{f_1^h,\ldots ,f_n^h\} \subseteq s$ whenever $\{g_1,\ldots ,g_m\} \subseteq s$, and $\{f_1^h,\ldots ,f_n^h\} \subseteq \textbf {F}^H$.

$S$ denotes all the possible states of $D^{MT}(\textbf {A, F})$.

In this definition, a state is determined by the satisfaction of the static causal laws in the domain description $D^{MT}(\textbf {A}, \textbf {F})$. The first condition ensures that whenever all body fluents $g_1, \ldots , g_m$ of a static causal law are true in the state, its head fluents $f_1, \ldots , f_n$ are also true in that state. The second condition imposes the same requirement on static influence laws, whose heads are restricted to mental fluents: the influenced mental fluents must be true whenever all the influencing fluents are true.
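The two conditions of Definition 6 can be checked mechanically. The Python sketch below treats a state as the set of fluents that hold (ignoring explicit negative literals, which is a simplifying assumption) and represents each law as a `(head, body)` pair of frozensets; the fluent names are hypothetical.

```python
from typing import FrozenSet, List, Set, Tuple

Law = Tuple[FrozenSet[str], FrozenSet[str]]  # (head fluents, body fluents)


def is_state(s: Set[str], static_laws: List[Law],
             influence_laws: List[Law], mental: Set[str]) -> bool:
    """Check conditions 1 and 2 of Definition 6 for the interpretation s."""
    # (f_1, ..., f_n if g_1, ..., g_m): the head must hold whenever the body holds.
    for head, body in static_laws:
        if body <= s and not head <= s:
            return False
    # (g_1, ..., g_m influences f_1^h, ..., f_n^h): same closure, mental heads only.
    for head, body in influence_laws:
        if not head <= mental:
            raise ValueError(f"influence heads must be mental fluents: {set(head)}")
        if body <= s and not head <= s:
            return False
    return True


# Hypothetical fluents: an environmental cue influences a mental fluent.
mental = {"fear_high", "fear_low"}
influences = [(frozenset({"fear_high"}), frozenset({"threat_visible"}))]
print(is_state({"threat_visible", "fear_high"}, [], influences, mental))  # True
print(is_state({"threat_visible", "fear_low"}, [], influences, mental))   # False
```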

Let us define the laws of the domain description more precisely.

Definition 7 (Domain description) Given the domain description $D^{MT}(\textbf {A, F})$ and a state $s$, the following rules and laws apply:

1. An inhibition rule ($f_1,\ldots ,f_n$ inhibits $a$) is active in $s$ if $\{f_1,\ldots ,f_n\} \subseteq s$; otherwise, it is passive. The set $A_I(s)$ is the set of actions for which there exists at least one active inhibition rule in $s$ (as in ${\mathcal{C}}_{TAID}$ (Dworschak et al. 2008)).

2. A triggering rule ($f_1,\ldots ,f_n$ triggers $a$) is active in $s$ if $\{f_1,\ldots ,f_n\} \subseteq s$ and all inhibition rules of action $a$ are passive in $s$; otherwise, the triggering rule is passive in $s$. The set $A_T(s)$ is the set of actions for which there exists at least one active triggering rule in $s$. The set $\overline {A}_T(s)$ is the set of actions for which there exists at least one triggering rule and all triggering rules are passive in $s$ (as in ${\mathcal{C}}_{TAID}$ (Dworschak et al. 2008)).

3. An allowance rule ($f_1,\ldots ,f_n$ allows $a$) is active in $s$ if $\{f_1,\ldots ,f_n\} \subseteq s$ and all inhibition rules of action $a$ are passive in $s$; otherwise, the allowance rule is passive in $s$. The set $A_A(s)$ is the set of actions for which there exists at least one active allowance rule in $s$. The set $\overline {A}_A(s)$ is the set of actions for which there exists at least one allowance rule and all allowance rules are passive in $s$ (as in ${\mathcal{C}}_{TAID}$ (Dworschak et al. 2008)).

4. A facilitating rule ($g_1^h, \ldots , g_m^h$ facilitates $a^h$) is active in $s$ if $a^h \in \textbf {A}^H$, $\{g_1^h, \ldots , g_m^h\} \subseteq s$, and all inhibition rules and contravening rules of action $a^h$ are passive in $s$; otherwise, the facilitating rule is passive in $s$. The set $A_{FAC}(s)$ is the set of actions for which there exists at least one active facilitating rule in $s$. The set $\overline {A}_{FAC}(s)$ is the set of actions for which there exists at least one facilitating rule and all facilitating rules are passive in $s$.

5. A contravening rule ($g_1^h, \ldots , g_m^h$ contravenes $a^h$) is active in $s$ if $a^h \in \textbf {A}^H$, $\{g_1^h, \ldots , g_m^h\} \subseteq s$, and all inhibition rules and facilitating rules of action $a^h$ are passive in $s$; otherwise, the contravening rule is passive in $s$. The set $A_{INT}(s)$ is the set of actions for which there exists at least one active contravening rule in $s$.

6. A dynamic causal law ($a$ causes $f_1,\ldots ,f_n$ if $g_1,\ldots ,g_m$) is applicable in $s$ if $\{g_1,\ldots ,g_m\} \subseteq s$.

7. A static causal law ($f_1,\ldots ,f_n$ if $g_1,\ldots ,g_m$) is applicable in $s$ if $\{g_1,\ldots ,g_m\} \subseteq s$.

8. A dynamic causal law ($a$ influences $f_1^h,\ldots ,f_n^h$ if $g_1,\ldots ,g_m$) is applicable in $s$ if $\{g_1,\ldots ,g_m\} \subseteq s$, $f_1^h,\ldots ,f_n^h \in \textbf {F}^H$, and $f_i \in \textbf {F}$ $(1 \leq i \leq n)$.

9. A static causal law ($g_1,\ldots ,g_m$ influences $f_1^h,\ldots ,f_n^h$) is applicable in $s$ if $\{g_1,\ldots ,g_m\} \subseteq s$, $f_1^h,\ldots ,f_n^h \in \textbf {F}^H$, and $f_i \in \textbf {F}$ $(1 \leq i \leq n)$.

10. A forbidding rule $(g_1^h, \ldots , g_m^h \,\, \mathbf{forbids \,to \,cause} \,\, f_1^h,\ldots ,f_n^h)$, where $f_1^h, \ldots , f_n^h, g_1^h, \ldots , g_m^h \in \textbf {F}^H$, is active in $s$ if $\{g_1^h, \ldots , g_m^h\} \subseteq s$. The set $F(s)$ is the set of fluents that are forbidden in the successor state $s+1$, that is, the union of the fluents $f_1^h, \ldots , f_n^h$ over all forbidding rules that are active in $s$.
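The activity sets of rules (1) to (5) can be computed directly from a state. In this Python sketch, rules are hypothetical `(kind, body, action)` triples over string fluents, and the dictionary keys (`"I"`, `"notT"`, and so on) are illustrative names for $A_I(s)$, $\overline{A}_T(s)$, etc. Rules (4) and (5) each require the other kind of rule to be passive; the sketch breaks this mutual reference by reading "passive" there as "body not satisfied", which is an interpretive assumption rather than part of the definition.

```python
from typing import Dict, FrozenSet, List, Set, Tuple

# A rule is (kind, body, action); kind is "inhibits", "triggers", "allows",
# "facilitates" or "contravenes". Fluents are plain strings in this sketch.
Rule = Tuple[str, FrozenSet[str], str]
KEYS = ("I", "T", "notT", "A", "notA", "FAC", "notFAC", "INT")


def activity_sets(s: Set[str], rules: List[Rule],
                  human_actions: Set[str]) -> Dict[str, Set[str]]:
    """Compute A_I, A_T, notA_T, A_A, notA_A, A_FAC, notA_FAC and A_INT for state s."""
    def bodies(kind: str, a: str) -> List[FrozenSet[str]]:
        return [body for k, body, act in rules if k == kind and act == a]

    def any_holds(kind: str, a: str) -> bool:
        return any(body <= s for body in bodies(kind, a))

    out: Dict[str, Set[str]] = {k: set() for k in KEYS}
    for a in {act for _, _, act in rules}:
        inhibited = any_holds("inhibits", a)                      # rule (1)
        if inhibited:
            out["I"].add(a)
        for kind, on, off in (("triggers", "T", "notT"),          # rule (2)
                              ("allows", "A", "notA")):           # rule (3)
            if any_holds(kind, a) and not inhibited:
                out[on].add(a)
            elif bodies(kind, a):  # such rules exist, but all are passive
                out[off].add(a)
        if a in human_actions:                                    # rules (4), (5)
            fac = any_holds("facilitates", a)
            con = any_holds("contravenes", a)
            # Assumption: "passive" in the mutual condition of rules (4) and (5) is
            # read as "body not satisfied"; if both bodies hold, neither is active.
            if fac and not con and not inhibited:
                out["FAC"].add(a)
            elif bodies("facilitates", a):
                out["notFAC"].add(a)
            if con and not fac and not inhibited:
                out["INT"].add(a)
    return out


rules = [
    ("triggers", frozenset({"alarm_on"}), "notify"),
    ("inhibits", frozenset({"quiet_mode"}), "notify"),
    ("allows", frozenset({"user_calm"}), "chat"),
    ("facilitates", frozenset({"anger_high"}), "shout"),
    ("contravenes", frozenset({"shame_high"}), "shout"),
]
sets = activity_sets({"alarm_on", "quiet_mode", "anger_high"}, rules, {"shout"})
print(sorted(sets["I"]), sorted(sets["notT"]), sorted(sets["notA"]), sorted(sets["FAC"]))
# ['notify'] ['notify'] ['chat'] ['shout']
```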

Intuitively, the rules describe how actions may or may not occur, and how fluents are connected across and within states. Rule (1) states that certain fluents can inhibit an action, preventing it from occurring in the transition from the current state. Rule (2) captures when fluents trigger an action, meaning that it must occur if its triggers are satisfied and it is not inhibited. Rule (3) specifies when fluents allow an action, meaning that the action is permitted but not enforced. Rules (4) and (5) extend this to human actions: some mental fluents can facilitate a human action, while others can contravene it. Rule (6) introduces dynamic causal laws, describing how actions under certain conditions cause fluents to hold in the next state. Rule (7) introduces static causal laws, which specify that if some fluents hold in a state, then others must also hold in the same state. Rule (8) adds dynamic influence for mental fluents: when an action occurs under certain conditions, it brings about the specified mental fluents in the next state. Rule (9) specifies static influence for mental fluents: when its conditions hold, the corresponding mental fluents must also hold in the same state. Finally, Rule (10) introduces forbids to cause, which regulates mental change by specifying that if certain mental fluents hold in the current state, then some mental fluents are not allowed to hold in the next state.
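The informal reading of rules (6) to (9) can be made concrete with a small Python sketch of a single step. It rests on assumptions that the definitions above do not state: every fluent is a `(class, value)` pair with one value per class, so an effect on a class overwrites its previous value; unaffected fluents persist by inertia; and static laws are applied until a fixpoint is reached. The action and class names are hypothetical.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

Fluent = Tuple[str, str]  # (class, value); assumed: one value per class in a state
Dynamic = Tuple[str, FrozenSet[Fluent], FrozenSet[Fluent]]  # (action, effects, conditions)
Static = Tuple[FrozenSet[Fluent], FrozenSet[Fluent]]        # (effects, conditions)


def assign(state: Set[Fluent], effects: Iterable[Fluent]) -> Set[Fluent]:
    """Overwrite the classes named in `effects`; other fluents persist (inertia)."""
    new = set(effects)
    touched = {cls for cls, _ in new}
    return {f for f in state if f[0] not in touched} | new


def successor(state: FrozenSet[Fluent], actions: Set[str],
              dynamic: List[Dynamic], static: List[Static]) -> FrozenSet[Fluent]:
    # Rules (6) and (8): effects of the laws applicable in the *current* state.
    direct: Set[Fluent] = set()
    for action, effects, conditions in dynamic:
        if action in actions and conditions <= state:
            direct |= effects
    nxt = assign(set(state), direct)
    # Rules (7) and (9): close the new state under static laws (ramifications).
    for _ in range(100):  # guard against cyclic or conflicting static laws
        fired = [eff for eff, cond in static if cond <= nxt and not eff <= nxt]
        if not fired:
            return frozenset(nxt)
        nxt = assign(nxt, fired[0])
    raise RuntimeError("static laws did not reach a fixpoint")


# Hypothetical example: praise raises confidence, which in turn influences motivation.
dynamic = [("praise", frozenset({("confidence", "high")}), frozenset({("confidence", "low")}))]
static = [(frozenset({("motivation", "high")}), frozenset({("confidence", "high")}))]
s0 = frozenset({("confidence", "low"), ("motivation", "low")})
print(sorted(successor(s0, {"praise"}, dynamic, static)))
# [('confidence', 'high'), ('motivation', 'high')]
```

Conflicting effects of concurrent actions on the same class are not resolved in this sketch; that is the kind of conflict the noconcurrency constraints are meant to exclude.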

The output of the action language is given in terms of trajectories. A mental-state trajectory consists of a sequence of valid transitions, represented as $\langle s_0, A_1, s_1, A_2, \ldots , A_n, s_n \rangle$, which alternates sets of mind-altering actions $A \subseteq \mathbf{A}$ and mental states $s \in S$, following the constraints of the BG.

Definition 8 (Trajectory) Let $D^{MT}(\textbf {A, F})$ be a domain description. A trajectory $\langle s_0,A_1,s_1,A_2, \ldots , A_n,s_n\rangle$ of $D^{MT}(\textbf {A, F})$ is a sequence of sets of actions $A_i \subseteq \textbf {A}$ and states $s_i$ of $D^{MT}(\textbf {A, F})$ satisfying the following conditions for $0 \leq i < n$:

-

1.

$(s_i, A, s_{i+1})\in S \times 2^A \backslash \{\} \times S$

$(s_i, A, s_{i+1})\in S \times 2^A \backslash \{\} \times S$

-

2.

$A_T(s_i) \subseteq A_{i+1}$

$A_T(s_i) \subseteq A_{i+1}$

-

3.

$A_{FAC}(s_i) \subseteq A_{i+1}$

$A_{FAC}(s_i) \subseteq A_{i+1}$

-

4.

$\overline {\textrm {A}}_T(s_i) \cap A_{i+1} = \emptyset$

$\overline {\textrm {A}}_T(s_i) \cap A_{i+1} = \emptyset$

-

5.

$\overline {\textrm {A}}_A(s_i) \cap A_{i+1} = \emptyset$

$\overline {\textrm {A}}_A(s_i) \cap A_{i+1} = \emptyset$

-

6.

$A_I(s_i) \cap A_{i+1} = \emptyset$

$A_I(s_i) \cap A_{i+1} = \emptyset$

-

7.

$\overline {\textrm {A}}_{FAC}(s_i) \cap A_{i+1} = \emptyset$

$\overline {\textrm {A}}_{FAC}(s_i) \cap A_{i+1} = \emptyset$

-

8.

$A_{INT}(s_i) \cap A_{i+1} = \emptyset$

$A_{INT}(s_i) \cap A_{i+1} = \emptyset$

-

9.

$|A_i \cap B| \leq 1 \,for\,all\,( noconcurrency B )\,\in \,D^{MT}(\textbf {A, F}).$

$|A_i \cap B| \leq 1 \,for\,all\,( noconcurrency B )\,\in \,D^{MT}(\textbf {A, F}).$

-

10.

$ F(s_i) \cap s_{i+1} = \emptyset$

(no forbidden fluents in

$ F(s_i) \cap s_{i+1} = \emptyset$

(no forbidden fluents in

$s_{i+1}$

)

$s_{i+1}$

)

The set $B$ in condition (9) is a set of actions restricted by a noconcurrency constraint in $D^{MT}(\textbf {A}, \textbf {F})$. The constraint ensures that actions in $B$ cannot be executed simultaneously. This prevents conflicts in which multiple actions attempt to modify the same fluent at the same time. Actions affecting distinct fluents can occur concurrently, whereas actions altering the same fluent are mutually exclusive. This keeps fluent updates consistent and state transitions well defined.
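The conditions of Definition 8 can be checked one transition at a time. The minimal Python sketch below assumes that the sets $A_T(s_i)$, $A_{FAC}(s_i)$, $\overline {\textrm {A}}_T(s_i)$, and so on, together with the forbidden fluents $F(s_i)$, have already been computed from the rules by a function `sets_of`. That function is hypothetical, as are the field names of `StateSets` and the action and fluent names in the example. Given those sets, the sketch verifies conditions 1 to 10, including the noconcurrency constraint, for every step. It does not check membership of the states in $S$.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Iterable, Sequence

State = FrozenSet[str]    # fluents that hold in a state
Actions = FrozenSet[str]  # names of actions executed together


@dataclass(frozen=True)
class StateSets:
    """Sets induced by a state s_i; field names mirror Definition 8 (hypothetical).

    How they are derived from the rules is assumed to happen elsewhere.
    """
    A_T: Actions = frozenset()       # condition 2: must be in A_{i+1}
    A_FAC: Actions = frozenset()     # condition 3: must be in A_{i+1}
    notA_T: Actions = frozenset()    # condition 4: must not be in A_{i+1}
    notA_A: Actions = frozenset()    # condition 5
    A_I: Actions = frozenset()       # condition 6
    notA_FAC: Actions = frozenset()  # condition 7
    A_INT: Actions = frozenset()     # condition 8
    F: State = frozenset()           # condition 10: must not be in s_{i+1}


def valid_step(sets: StateSets, A: Actions, s_next: State,
               noconcurrency: Sequence[Actions]) -> bool:
    """Conditions 1-10 for one step; membership of the states in S is assumed."""
    if not A:                                              # 1: non-empty action set
        return False
    if not (sets.A_T <= A and sets.A_FAC <= A):            # 2, 3
        return False
    excluded = sets.notA_T | sets.notA_A | sets.A_I | sets.notA_FAC | sets.A_INT
    if not excluded.isdisjoint(A):                         # 4-8
        return False
    if any(len(A & B) > 1 for B in noconcurrency):         # 9
        return False
    return sets.F.isdisjoint(s_next)                       # 10


def is_trajectory(states: Sequence[State], actions: Sequence[Actions],
                  sets_of: Callable[[State], StateSets],
                  noconcurrency: Iterable[Actions] = ()) -> bool:
    """states = [s_0, ..., s_n]; actions = [A_1, ..., A_n], so actions[i] is A_{i+1}."""
    groups = list(noconcurrency)
    if len(states) != len(actions) + 1:
        return False
    return all(valid_step(sets_of(states[i]), actions[i], states[i + 1], groups)
               for i in range(len(actions)))


# Hypothetical example: stating an intent triggers 'praise' and
# forbids 'intent_reinforced' from appearing in the next state.
def sets_of(s: State) -> StateSets:
    if "has_intent" in s:
        return StateSets(A_T=frozenset({"praise"}), F=frozenset({"intent_reinforced"}))
    return StateSets()


s0 = frozenset({"has_intent"})
ok = frozenset({"has_intent", "feels_validated"})
bad = frozenset({"has_intent", "intent_reinforced"})
print(is_trajectory([s0, ok], [frozenset({"praise"})], sets_of))   # True
print(is_trajectory([s0, bad], [frozenset({"praise"})], sets_of))  # False (condition 10)
print(is_trajectory([s0, ok], [frozenset({"reframe"})], sets_of))  # False (condition 2)
```

Passing `[frozenset({"praise", "reframe"})]` as `noconcurrency` would likewise reject a step that executes both actions together (condition 9).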

Definition 9 (Action Observation Language) The action observation language of ${\mathcal{C}}_{MT}$ (similar to ${\mathcal{C}}_{TAID}$) consists of expressions of the following form:

$(f \,\,at\,\, t) \qquad\qquad (a \,\,occurs\_at\,\, t)$

where $f \in \textbf {F}$, $a$ is an action and $t \in \mathbb{N}$ is a point in time.

The action observation language allows us to specify observations concerning the current state of mental fluents and the execution of actions that influence mental states. By combining observations with the causal laws of the domain description, we can generate plans, explanations, and predictions regarding the behavior of the system. This integration of observations and the domain description is referred to as an action theory.

Definition 10 (Action Theory) Let $D$ be a domain description and $O$ be a set of observations. The pair $(D,O)$ is called an action theory.

The action theory forms the basis for constructing trajectory models, that is, trajectories in which all observations are satisfied. These models provide a structured representation of the system’s dynamics over time. Trajectory models enable us to analyze and reason about the evolution of mental states and actions. They also give a deeper understanding of how the mental-state domain operates and how it responds to different observations and changes.

Definition 11 (Trajectory Model) Let $(D,O)$ be an action theory. A trajectory $\langle s_0,A_1,s_1,A_2,\ldots ,A_n,s_n\rangle$ of $D$ is a trajectory model of $(D,O)$ if it satisfies all observations of $O$ in the following way:

1. if $(f \,\,at\,\, t) \in O$, then $f \in s_t$;
2. if $(a \,\,occurs\_at\,\, t) \in O$, then $a \in A_{t+1}$.
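A trajectory model adds the two observation conditions of Definition 11 to the requirements on a trajectory. The following minimal Python sketch represents the observations $(f \,\,at\,\, t)$ and $(a \,\,occurs\_at\,\, t)$ as two hypothetical record types. It assumes that the sequence has already been validated as a trajectory of $D$. Note the index shift: the action set executed at time $t$ is $A_{t+1}$.

```python
from typing import FrozenSet, Iterable, NamedTuple, Sequence, Union

State = FrozenSet[str]
Actions = FrozenSet[str]


class HoldsAt(NamedTuple):   # observation (f at t); hypothetical representation
    fluent: str
    t: int


class OccursAt(NamedTuple):  # observation (a occurs_at t); hypothetical representation
    action: str
    t: int


Observation = Union[HoldsAt, OccursAt]


def satisfies(states: Sequence[State], actions: Sequence[Actions],
              observations: Iterable[Observation]) -> bool:
    """Definition 11 check for states = [s_0..s_n] and actions = [A_1..A_n].

    actions[k] stands for A_{k+1}, so (a occurs_at t) is checked against actions[t].
    """
    for o in observations:
        if isinstance(o, HoldsAt):
            if not (0 <= o.t < len(states) and o.fluent in states[o.t]):
                return False
        elif isinstance(o, OccursAt):
            if not (0 <= o.t < len(actions) and o.action in actions[o.t]):
                return False
        else:
            raise TypeError(f"unsupported observation: {o!r}")
    return True


# Hypothetical trajectory and observations.
states = [frozenset({"has_intent"}), frozenset({"has_intent", "feels_validated"})]
actions = [frozenset({"praise"})]
O = [HoldsAt("has_intent", 0), OccursAt("praise", 0), HoldsAt("feels_validated", 1)]
print(satisfies(states, actions, O))                        # True
print(satisfies(states, actions, [OccursAt("praise", 1)]))  # False: there is no A_2
```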

Note that the actions in a trajectory model may be either actions executed by a rational software agent to influence mental fluents, or actions that a human agent is estimated to execute.

An important consequence of the $\mathbf{forbids \,to \,cause}$ rule is that it can render an action theory inconsistent. Such a rule blocks any transition from a state satisfying its conditions to a state containing its forbidden fluents, so a trajectory that reaches such a state is invalid. If all candidate trajectories are blocked in this way, then no trajectory model exists and the action theory is inconsistent. This regulative effect is intentional, ensuring that unwanted mental fluents cannot appear in valid states.

Definition 12 (Action Theory Consistency) An action theory $(D,O)$ is consistent iff there exists a trajectory model of $(D,O)$. Otherwise, $(D,O)$ is inconsistent.

The central computational task is to determine, for a given ${\mathcal{C}}_{MT}$ action theory $(D,O)$, whether there exists a trajectory model of $(D,O)$. This baseline feasibility check underpins subsequent reasoning tasks such as planning, explanation, and query answering.

Definition 13 (${\mathcal{C}}_{MT}$ Decision Problem) Given a ${\mathcal{C}}_{MT}$ theory $(D,O)$, decide if there exists a trajectory model of $(D,O)$.
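Definitions 12 and 13 reduce consistency to the existence of a trajectory model. The following is a minimal, exhaustive Python sketch of that check. It rests on several assumptions: a fixed horizon n, a finite action set, and a hypothetical deterministic effect function `next_state` with naive inertia. Hand-written step and observation checks stand in for Definitions 8 and 11. It is not the answer set encoding of Section 3.3. The toy domain only shows how a forbids-to-cause rule can leave no trajectory model, which makes the action theory inconsistent.

```python
from itertools import combinations
from typing import Callable, FrozenSet, Iterable, Iterator, List, Optional, Tuple

State = FrozenSet[str]
Actions = FrozenSet[str]
Model = Tuple[List[State], List[Actions]]


def nonempty_subsets(actions: Iterable[str]) -> Iterator[Actions]:
    """All non-empty action sets (condition 1 of Definition 8)."""
    items = sorted(actions)
    for k in range(1, len(items) + 1):
        for combo in combinations(items, k):
            yield frozenset(combo)


def find_trajectory_model(
    initial_states: Iterable[State],
    all_actions: Iterable[str],
    next_state: Callable[[State, Actions], State],
    step_ok: Callable[[State, Actions, State], bool],
    observed: Callable[[List[State], List[Actions]], bool],
    n: int,
) -> Optional[Model]:
    """Depth-first search for a trajectory model of length n; None if none exists."""
    all_actions = list(all_actions)

    def extend(states: List[State], acts: List[Actions]) -> Optional[Model]:
        if len(acts) == n:
            return (states, acts) if observed(states, acts) else None
        for A in nonempty_subsets(all_actions):
            s_next = next_state(states[-1], A)
            if step_ok(states[-1], A, s_next):
                found = extend(states + [s_next], acts + [A])
                if found is not None:
                    return found
        return None

    for s0 in initial_states:
        found = extend([s0], [])
        if found is not None:
            return found
    return None


# --- Hypothetical toy domain (not from the paper) ---
def next_state(s: State, A: Actions) -> State:
    s_next = set(s)  # naive inertia: every fluent persists
    if "praise" in A and "has_intent" in s:
        s_next.add("intent_reinforced")
    if "reframe" in A:
        s_next.add("calmer")
    return frozenset(s_next)


def make_step_ok(with_forbid: bool) -> Callable[[State, Actions, State], bool]:
    def step_ok(s: State, A: Actions, s_next: State) -> bool:
        if len(A & {"praise", "reframe"}) > 1:  # noconcurrency {praise, reframe}
            return False
        # has_intent forbids to cause intent_reinforced (condition 10)
        return not (with_forbid and "has_intent" in s and "intent_reinforced" in s_next)
    return step_ok


def observed(states: List[State], acts: List[Actions]) -> bool:
    # O = {(has_intent at 0), (praise occurs_at 0)}
    return "has_intent" in states[0] and len(acts) > 0 and "praise" in acts[0]


def consistent(with_forbid: bool) -> bool:
    model = find_trajectory_model([frozenset({"has_intent"})], ["praise", "reframe"],
                                  next_state, make_step_ok(with_forbid), observed, n=1)
    return model is not None


print(consistent(with_forbid=False))  # True
print(consistent(with_forbid=True))   # False
```

The search is exponential in the number of actions and in n. It is meant only to make the decision problem tangible; the paper delegates this search to answer set programming.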

We also need a mechanism for writing queries about state dynamics. The action query language provides a means to inquire about specific sequences of actions and their impact on fluents and states. It does so by specifying subsets of the action set together with the time points at which they occur.

Definition 14 (Action Query Language) The action query language of ${\mathcal{C}}_{MT}$ expresses assertions about executing sequences of actions along trajectories. A query is of the following form:

$(f_1,\ldots ,f_n \,\, \mathbf{after} \,\, A_i \,\, \mathbf{occurs\_at} \,\, t_i, \ldots , A_m \,\, \mathbf{occurs\_at} \,\, t_m)$

where $f_1, \ldots, f_n \in \textbf {F}$ are fluent literals, $A_i, \ldots, A_m$ are subsets of $\textbf { A}$, and $t_i, \ldots, t_m$ are points in time.

By formulating queries in the action language, causal relationships between actions and their effects can be investigated, contributing to a deeper understanding of system dynamics, informed decision-making and controlled methods for automated planning.

Definition 15 (Query Truth in Action Theory) Let $(D,O)$ be an action theory and $Q$ a query.

- $Q$ holds skeptically in $(D,O)$ iff $\tau \models Q$ for every trajectory model $\tau$ of $(D,O)$.
- $Q$ holds credulously in $(D,O)$ iff $\tau \models Q$ for some trajectory model $\tau$ of $(D,O)$.
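Definition 15 quantifies over trajectory models, but this part of the text does not spell out the satisfaction relation $\tau \models Q$. The minimal Python sketch below therefore assumes one plausible reading of it. First, every $A_j$ is contained in the action set executed at $t_j$, that is, $A_{t_j+1}$. Second, every $f_k$ holds in the state right after the latest $t_j$. Under that assumption, the sketch evaluates a query skeptically (all models) and credulously (some model) over a given list of trajectory models. The `-f` notation for a negative literal, the `Query` type, and all fluent and action names are hypothetical.

```python
from typing import FrozenSet, Iterable, NamedTuple, Sequence, Tuple

State = FrozenSet[str]
Actions = FrozenSet[str]
Trajectory = Tuple[Sequence[State], Sequence[Actions]]  # ([s_0..s_n], [A_1..A_n])


class Query(NamedTuple):
    fluents: Tuple[str, ...]                      # f_1..f_n; "-f" means "not f" (assumed)
    occurrences: Tuple[Tuple[Actions, int], ...]  # (A_j, t_j) pairs


def literal_holds(lit: str, s: State) -> bool:
    return lit[1:] not in s if lit.startswith("-") else lit in s


def models(tau: Trajectory, q: Query) -> bool:
    """Assumed reading of tau |= Q (not taken from the paper)."""
    states, acts = tau
    for A, t in q.occurrences:
        if not (0 <= t < len(acts) and A <= acts[t]):  # acts[t] is A_{t+1}
            return False
    last = max((t for _, t in q.occurrences), default=-1)
    return all(literal_holds(f, states[last + 1]) for f in q.fluents)


def skeptical(trajectory_models: Iterable[Trajectory], q: Query) -> bool:
    # all() over no models is True: an inconsistent theory entails every query skeptically
    return all(models(tau, q) for tau in trajectory_models)


def credulous(trajectory_models: Iterable[Trajectory], q: Query) -> bool:
    return any(models(tau, q) for tau in trajectory_models)


# Two hypothetical trajectory models of some action theory.
praise = frozenset({"praise"})
tau1 = ([frozenset({"has_intent"}), frozenset({"has_intent", "feels_validated"})], [praise])
tau2 = ([frozenset({"has_intent"}), frozenset({"has_intent", "calmer"})], [praise])
taus = [tau1, tau2]
q = Query(("feels_validated",), ((praise, 0),))
print(skeptical(taus, q), credulous(taus, q))  # False True
q_intent = Query(("has_intent",), ((praise, 0),))
print(skeptical(taus, q_intent))  # True
```

Note that `skeptical` returns True over an empty list of models, which mirrors the definition: an inconsistent action theory entails every query skeptically and none credulously.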

Given a ${\mathcal{C}}_{MT}$ action theory $(D,O)$ and a query $Q$, the decision problem is whether $Q$ holds in all (or some) trajectory models of $(D,O)$.

3.3 Action language in answer set semantics

In this section, we provide operational semantics for

![]() ${\mathcal{C}}_{MT}$

by translation into answer set programs. The goal is to construct an encoding such that the encoding has exactly one answer set for every trajectory model of a

${\mathcal{C}}_{MT}$

by translation into answer set programs. The goal is to construct an encoding such that the encoding has exactly one answer set for every trajectory model of a

![]() ${\mathcal{C}}_{MT}$

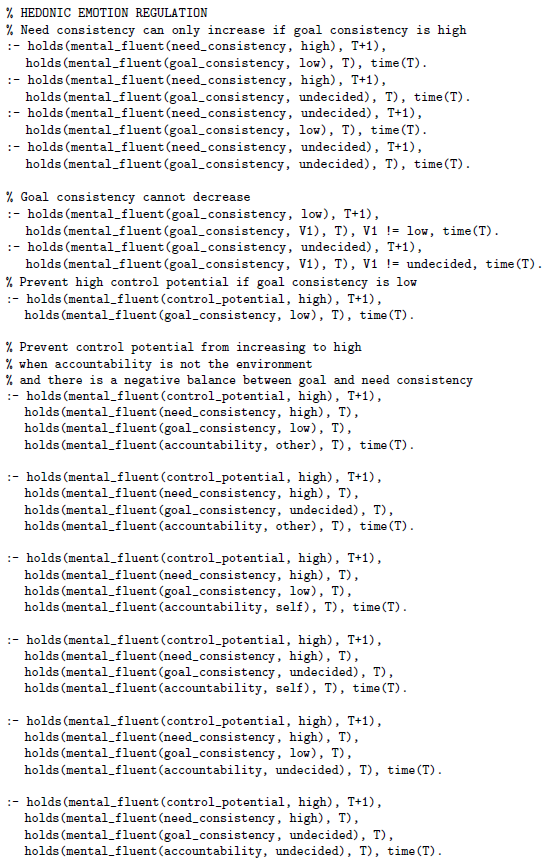

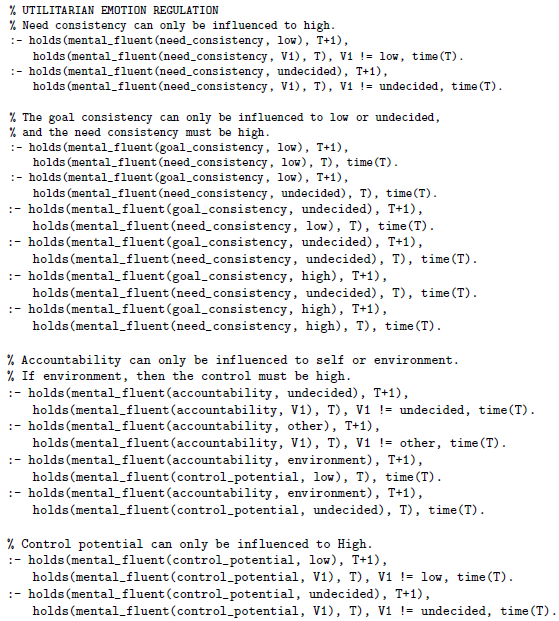

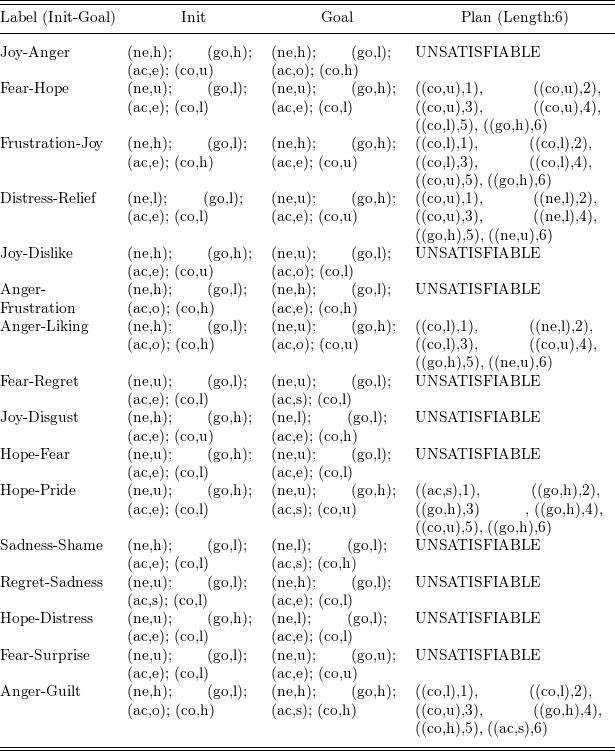

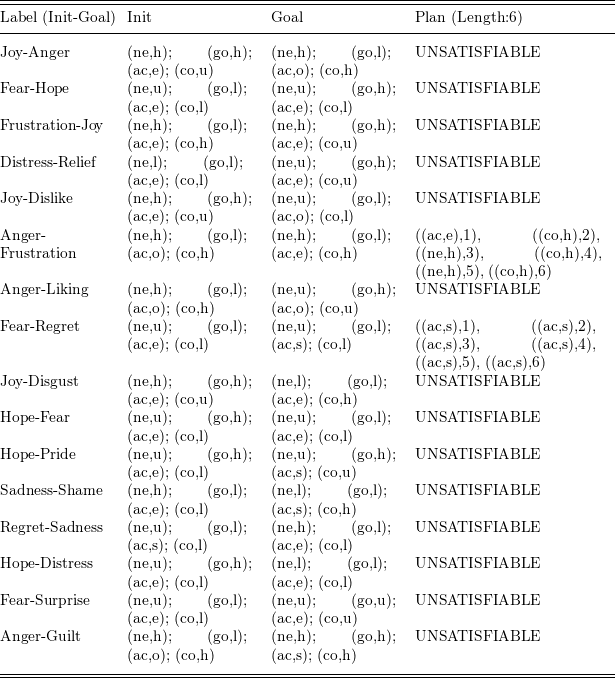

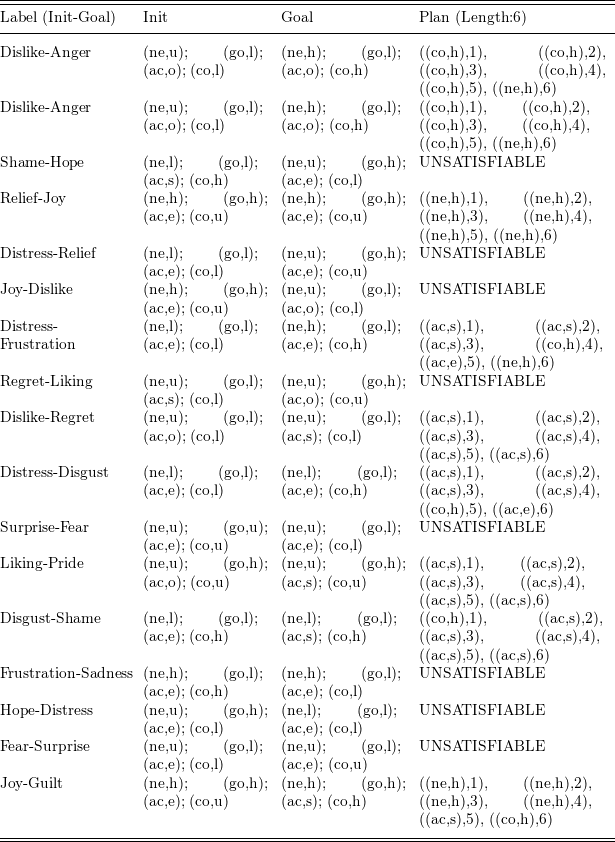

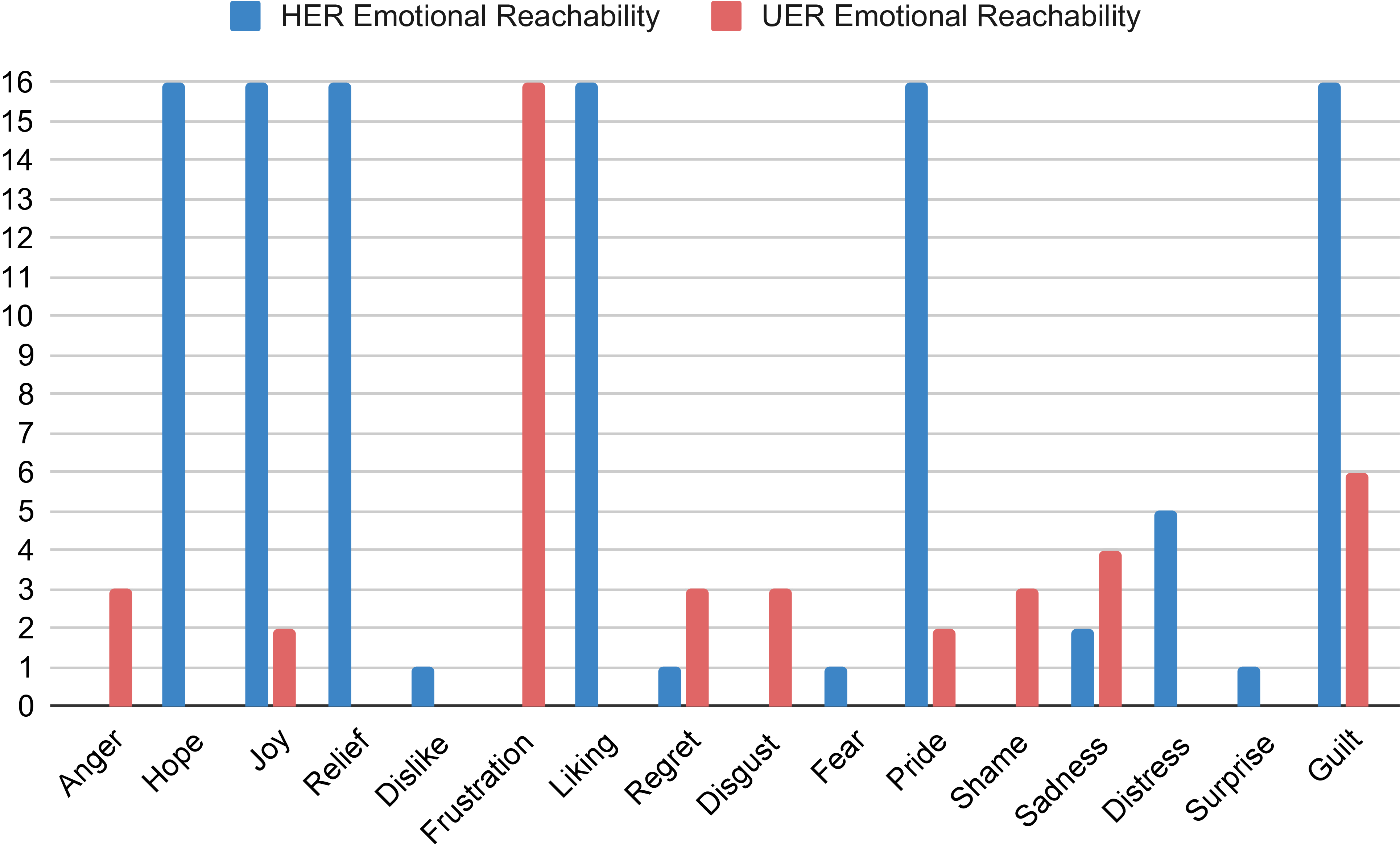

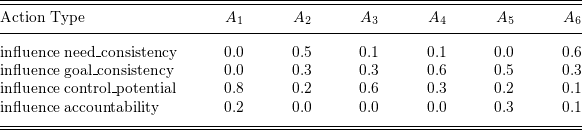

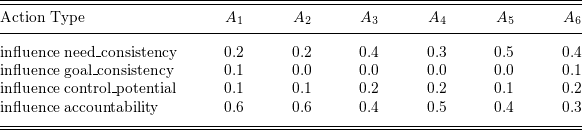

theory. Intuitively, each answer set represents a trajectory model: for a trajectory