1. Introduction

For a wireless transceiver pair with multiple antennas, optimizing the transmit covariance matrix can achieve high data-rate communication over the multiple-input multiple-output (MIMO) channel. Meanwhile, the radiated radio frequency (RF) energy can be acquired by the nearby RF energy harvesters to charge the electronic devices [Reference Zhang and Ho1].

The problem of simultaneous wireless information and power transfer (SWIPT) has been widely discussed in recent years. SWIPT systems are divided into two categories: (1) the receiver splits the received signals for information decoding and energy harvesting [Reference Lee, Liu and Zhang2, Reference Zong, Feng, Yu, Zhao, Yang and Hu3]; (2) separate, dedicated information decoders (ID) and RF energy harvesters (EH) exist in the system [Reference Xing, Qian and Dong4]. For the second type of system, different transmission strategies have been proposed to achieve good operating points in the rate-energy region [Reference Zhang and Ho1, Reference Lee, Liu and Zhang2, Reference Park and Clerckx5]. When multiple RF energy harvesters are in the vicinity of the wireless transmitter, the covariance matrix at the transmitter is designed either to maximize the net energy harvesting rate or to distribute the radiated RF energy fairly among the harvesters [Reference Wu, Zhang, Wang and Wang6, Reference Thudugalage, Atapattu and Evans7], while the achievable information rate of the wireless transmitter-receiver pair is kept above a minimum requirement for reliable communication. Most existing works assume that the channel state information (CSI) is completely known. Given complete CSI, the transmitter designs the transmit covariance matrix to achieve the maximum information rate while satisfying the RF energy harvesting requirement [Reference Xing, Qian and Dong4, Reference Xing and Dong8].

However, in practice, it is difficult for the transmitter to obtain the channel state information of the nearby RF energy harvesters, because the scattered distribution of the hardware-limited energy harvesters makes channel estimation at the RF energy harvesters challenging [Reference Mekikis, Antonopoulos, Kartsakli, Lalos, Alonso and Verikoukis9, Reference Xu and Zhang10]. The analytic center cutting plane method (ACCPM) was proposed for the transmitter to iteratively approximate the channel information using a few bits of feedback from the RF energy receiver [Reference Xu and Zhang10]. Since this method requires solving a convex optimization problem, it has high computational complexity. To reduce complexity, channel estimation based on Kalman filtering was proposed [Reference Choi, Kim and Chung11]. Nevertheless, the disadvantage of this approach is its slow convergence rate. To deal effectively with the CSI acquisition problem, in this paper we use a deep reinforcement learning algorithm to solve the optimization problem in the SWIPT system with only partial channel information. The partial CSI is easy to acquire and is already enough to achieve superior system performance using the deep Q-network. To the best of our knowledge, we are the first to use the deep Q-network to optimize SWIPT system performance and validate its superiority.

In our model, the transmitter intends to fully charge the energy buffers of all surrounding energy harvesters in the shortest time while maintaining a target information rate toward the receiver. The communication link is assumed to be a strong line-of-sight (LOS) link, which is taken to be invariant, whereas the energy harvesting channel conditions vary over time. Due to current hardware limitations, we assume that the energy harvesting channel vectors cannot be estimated under fast-varying channel conditions. As a result, the wireless charging problem can be modeled as a high-complexity discrete-time stochastic control process with unknown system dynamics [Reference Mnih, Kavukcuoglu and Silver12]. A similar problem has been explored in [Reference Xing, Qian and Dong13], where a multiarmed bandit algorithm is used to determine the optimal transmission strategy. In this paper, we apply a deep Q-network to solve the optimization problem, and the simulation results demonstrate that the deep Q-network algorithm outperforms the multiarmed bandit algorithm. Historically, the deep Q-network has a strongly proven record of attaining mastery over complex games with a very large number of system states and unknown state transition probabilities [Reference Mnih, Kavukcuoglu and Silver12]. More recently, deep Q-networks have been applied to complex communication problems and have been shown to achieve good performance [Reference He, Zhang and Yu14–Reference Xu, Wang, Tang, Wang and Gursoy16]. For this reason, we consider the deep Q-network well suited to our model. In our model, we consider the accumulated energy of the energy harvesters as the system state, while we define the action as the transmit power allocation. At the beginning of each time slot, each energy harvester feeds back its accumulated energy level to the wireless transmitter, and the transmitter collects this information to generate the system state and inputs it into a well-trained deep Q-network. The deep Q-network outputs the Q values corresponding to all possible actions. The action with the maximum Q value is selected as the beam pattern to be used for transmission during the current time slot.

Building on the traditional deep Q-network, the double deep Q-network and dueling deep Q-network algorithms are applied in order to reduce the observed overestimations [Reference Van Hasselt, Guez and Silver17] and improve the learning efficiency [Reference Wang, Schaul, Hessel, Van Hasselt, Lanctot and De Freitas18]. Hence, we apply a dueling double deep Q-network to solve the wireless charging problem with varying channels and multiple energy harvesters.

The novelties of this paper are summarized as follows:

(i) The simultaneous wireless information and power transfer problem is formulated as a Markov decision process (MDP) under unknown, varying channel conditions for the first time.

(ii) The deep Q-network algorithm is applied to solve the proposed optimization problem for the first time. We demonstrate that, compared to other existing algorithms, the deep Q-network is superior in efficient and stable wireless power transfer.

(iii) Multiple experimental scenarios are explored. By varying the number of transmit antennas and the number of energy harvesters in the system, the performance of the deep Q-network and the other algorithms is compared and analyzed.

(iv) The evaluation of the algorithms is based on real experimental data, which validates the effectiveness of the proposed deep Q-network in real-time simultaneous wireless information and power transfer systems.

The rest of the paper is organized as follows. In Section 2, we describe the simultaneous wireless information and power transfer system model. In Section 3, we model the optimization problem as a Markov decision process and present a deep Q-network algorithm to determine the optimal transmission strategy. In Section 4, we present our simulation results for different experimental environments. Section 5 concludes the paper.

2. System Model

As shown in Figure 1, an information transmitter communicates with its receiver while being perceived by K nearby RF energy harvesters [Reference Xing and Dong8]. Both the transmitter and the receiver are equipped with M antennas, while each RF energy harvester is equipped with one receive antenna. The baseband received signal at the receiver can be represented as

$$y = Hx + z, \tag{1}$$

where $H \in \mathbb{C}^{M \times M}$ denotes the normalized baseband equivalent channel from the information transmitter to its receiver, $x \in \mathbb{C}^{M \times 1}$ represents the transmitted signal, and $z \in \mathbb{C}^{M \times 1}$ is the zero-mean circularly symmetric complex Gaussian noise with $z \sim \mathcal{CN}(0, \rho^2 I)$.

Figure 1: Wireless information transmitter and receiver surrounded by multiple RF energy harvesters.

The transmit covariance matrix is denoted by Q, i.e.,

![]() . The covariance matrix is Hermitian positive semidefinite, i.e.,

. The covariance matrix is Hermitian positive semidefinite, i.e.,

![]() . The transmit power is restricted by the transmitter’s power constraint P, i.e.,

. The transmit power is restricted by the transmitter’s power constraint P, i.e.,

![]() . For the information transmission, we assume that a Gaussian codebook with infinitely many code words is used for the symbols and the expectation of the transmit covariance matrix is taken over the entire codebook. Therefore, x is the zero-mean circularly symmetric complex Gaussian with

. For the information transmission, we assume that a Gaussian codebook with infinitely many code words is used for the symbols and the expectation of the transmit covariance matrix is taken over the entire codebook. Therefore, x is the zero-mean circularly symmetric complex Gaussian with

![]() . With transmitter precoding and receiver filtering, the capacity of the MIMO channel is the sum of the capacities of the parallel noninterfering single-input single-output (SISO) channels (eigenmodes of channel H) [Reference Biglieri, Calderbank, Constantinides, Goldsmith, Paulraj and Poor19]. We convert the MIMO channel to M eigenchannels for information and energy transfer [Reference Timotheou, Krikidis, Karachontzitis and Berberidis20, Reference Mishra and Alexandropoulos21]. A singular value decomposition (SVD) on H gives

. With transmitter precoding and receiver filtering, the capacity of the MIMO channel is the sum of the capacities of the parallel noninterfering single-input single-output (SISO) channels (eigenmodes of channel H) [Reference Biglieri, Calderbank, Constantinides, Goldsmith, Paulraj and Poor19]. We convert the MIMO channel to M eigenchannels for information and energy transfer [Reference Timotheou, Krikidis, Karachontzitis and Berberidis20, Reference Mishra and Alexandropoulos21]. A singular value decomposition (SVD) on H gives

![]() , where

, where

![]() contains the M singular values of H. Since the MIMO channel is decomposed into M parallel SISO channels, the information rate can be given by

contains the M singular values of H. Since the MIMO channel is decomposed into M parallel SISO channels, the information rate can be given by

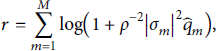

where

![]() are the diagonal elements of

are the diagonal elements of

![]() with

with

![]() .

.

The RF energy harvester received power specifies the harvested energy normalized by the baseband symbol period and scaled by the energy conversion efficiency. The received power at the ith energy harvester is

where

![]() is the channel vector from the transmitter to the ith energy harvester. With MIMO channel decomposition, the received power at energy harvester i is denoted as

is the channel vector from the transmitter to the ith energy harvester. With MIMO channel decomposition, the received power at energy harvester i is denoted as

where

![]() are the elements of vector

are the elements of vector

![]() with

with

![]() .

.

We define the simplified channel vector from the transmitter to the ith RF energy harvester as

for each

![]() . The simplified channel vector contains no phase information. The K simplified channel vectors compose matrix

. The simplified channel vector contains no phase information. The K simplified channel vectors compose matrix

![]() as

as
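As a rough numerical illustration of the eigenchannel model above, the following Python sketch evaluates the information rate and the harvested power for one power allocation. It assumes that the rate takes the usual parallel-channel form, a sum over eigenchannels of log2(1 + gain × power / noise power), which may differ in normalization from the rate expression above. The function names, the `noise_power` parameter, and all numeric values are hypothetical; only the energy conversion efficiency of 0.1 is taken from the simulation settings of this paper.

```python
import math

def information_rate(h, q, noise_power=1.0):
    """Sum rate in bits/s/Hz over parallel eigenchannels.

    h: eigenchannel power gains (squared singular values of H)
    q: power allocated to each eigenchannel
    noise_power: receiver noise power (assumed normalization)
    """
    return sum(math.log2(1.0 + hm * qm / noise_power) for hm, qm in zip(h, q))

def harvested_power(c_i, q, efficiency=0.1):
    """Received power at one harvester: efficiency * sum_m c_im * q_m.

    c_i: simplified channel vector (squared magnitudes, no phase)
    """
    return efficiency * sum(cm * qm for cm, qm in zip(c_i, q))

def is_feasible(h, q, P, R, noise_power=1.0):
    """Check the transmit power and minimum rate constraints for one allocation."""
    return sum(q) <= P and information_rate(h, q, noise_power) >= R

# Hypothetical numbers, chosen only for illustration.
h = [3.0, 1.0, 0.5]          # eigenchannel gains
q = [1.0, 1.0, 1.0]          # power allocation
C = [[0.2, 0.1, 0.4],        # simplified channel vector of harvester 1
     [0.3, 0.3, 0.0]]        # simplified channel vector of harvester 2
powers = [harvested_power(c_i, q) for c_i in C]
```

Because the simplified channel vectors carry no phase, the harvested power is linear in the power allocation, which is what makes a finite set of candidate allocations easy to compare.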

In what follows, we assume that time is slotted, each time slot has a duration T, and that each energy harvester is equipped with an energy buffer of size $B_{\max}$. Without loss of generality, we assume that, at t = 0, all harvesters’ buffers are empty, which corresponds to system state $s_0 = [0, \dots, 0]$. At a generic time slot t, the transmitter transmits with one of the designed beam patterns. Each harvester i can harvest the specific amount of power $p_i$, and its energy buffer value increases to $b_i(t+1) = \min\{ b_i(t) + p_i T,\ B_{\max} \}$. Therefore, each state of the system includes the accumulated harvested energy information of all K harvesters, i.e.,

$$s_t = [\, b_1(t), \dots, b_K(t) \,], \tag{7}$$

where $b_i(t)$ denotes the ith energy harvester’s accumulated energy up to time slot t.

Once all harvesters are fully charged, we assume that the system arrives at a final goal state denoted as $s_G = [B_{\max}, \dots, B_{\max}]$. We note that the energy buffer level $B_{\max}$ also accounts for situations in which ![]().
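The state evolution described above can be sketched in a few lines of Python. This is only an illustration under the assumption that each buffer saturates at its capacity; the function names and the numeric values are hypothetical.

```python
def next_state(state, powers, T, b_max):
    """Advance every harvester's buffer by one slot, saturating at b_max.

    state:  tuple of accumulated energies, one entry per harvester
    powers: harvested power of each harvester during this slot
    T:      slot duration
    """
    return tuple(min(b + p * T, b_max) for b, p in zip(state, powers))

def is_goal(state, b_max):
    """The goal state is reached once every buffer is full."""
    return all(b >= b_max for b in state)

# Two harvesters starting from empty buffers (illustrative numbers).
state = (0.0, 0.0)
for _ in range(3):
    state = next_state(state, powers=(0.5, 0.25), T=1.0, b_max=1.0)
```

In this example the first harvester is full after two slots while the second is not, so the system has not yet reached the goal state.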

3. Problem Formulation for Time-Varying Channel Conditions

In this section, we suppose that the communication link is characterized by strong LOS transmission, which results in an invariant channel matrix H, while the energy harvesting channel vector g varies over time slots. We model the wireless charging problem as a Markov decision process (MDP) and show how to solve the optimization problem using reinforcement learning (RL). When the number of system states is very large, we apply a deep Q-network algorithm to acquire the optimal strategy at each particular system state.

3.1. Problem Formulation

In order to model our optimization problem as an RL problem, we define the beam pattern chosen in a particular time slot t as the action $a_t$. The set of possible actions A is determined by equally generating L different beam patterns with power allocation vector $q = [q_1, \dots, q_M]$ that satisfies the power and information rate constraints, i.e., $\sum_{m=1}^{M} q_m \le P$, $r \ge R$, where r is the information rate achieved with q and R is the rate requirement. Each beam pattern corresponds to a particular harvested power level $p_i$, which depends not only on the action $a_t$ but also on the channel condition experienced by the harvester during time slot t.

Given the above, the simultaneous wireless information and energy transfer problem for a time-varying channel can be formulated as minimizing the time consumption n to fully charge all the energy harvesters while maintaining the information rate between the information transceivers:

$$\mathrm{P1}: \quad \min_{\{a_t\}} \; n \quad \text{s.t.} \quad s_n = s_G, \quad a_t \in A, \;\; t = 0, \dots, n-1, \tag{8}$$

where $s_n$ is the system state after n time slots and $s_G$ is the state in which all harvesters are fully charged.

In general, the action selected at each time slot will be different to adapt to the current channel conditions and the current energy buffer state of the harvesters. Therefore, the evolution of our system can be described by a Markov chain, where the generic state s is identified by the current buffer levels of the harvesters, i.e., $s = [b_1, \dots, b_K]$. The set of all states is denoted by S. Among all states, we are interested in the state in which all harvesters’ buffers are empty, namely, $s_0 = [0, \dots, 0]$, and the state $s_G$ in which all the harvesters are fully charged, i.e., $s_G = [B_{\max}, \dots, B_{\max}]$. If we suppose that we know all the channel coefficients at each time slot, problem P1 can be seen as a stochastic shortest path (SSP) problem from state $s_0$ to state $s_G$. At each time slot, the system is in a generic state s, the transmitter selects a beam pattern or action $a \in A$, and the system moves to a new state s′. The dynamics of the system are captured by transition probabilities $p_{s,s'}(a)$, $s, s' \in S$, and $a \in A$, describing the probability that the harvesters’ energy buffers reach the levels in s′ after a transmission with beam pattern a. We note that the goal state $s_G$ is absorbing, i.e., $p_{s_G, s_G}(a) = 1$ for all $a \in A$.

Each transition also has an associated reward, $w(s, a, s')$, that denotes the reward when the current state is $s \in S$, action $a \in A$ is selected, and the system moves to state $s' \in S$. Since we aim at reaching $s_G$ in the fewest transmission time slots, we consider that the action entails a positive reward related to the difference between the current energy buffer level and the full energy buffer level of all harvesters. When the system reaches state $s_G$, we set the reward as 0. In this way, the system not only tries to fully charge all harvesters in the shortest time but will also uniformly charge all the harvesters. In detail, we define the reward function as

where

and λ denotes the unit price of the harvested energy.

Different reward functions can also be selected. As an example, it is possible to set a constant negative reward (e.g., a unitary cost) for each transmission after which the system has not reached the goal state and a large positive reward only for the states and actions that bring the system to the goal state $s_G$. Denoting by $w(s, a, s')$ the reward of moving from state s to state s′ under action a, this can be expressed as follows:

$$w(s, a, s') = \begin{cases} -1, & s' \neq s_G, \\ W, & s' = s_G, \end{cases} \tag{11}$$

where $W > 0$ is a large constant.

We note that the reward formulation of equation (11) is actually equivalent to minimizing the number of time slots required to reach state $s_G$ starting from state $s_0$.
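To make the equivalence with the shortest-path objective concrete, the sketch below implements the alternative reward just described: a unit cost per slot and a bonus on reaching the goal state. The bonus value of 100 and the function names are hypothetical choices for illustration only.

```python
def sparse_reward(next_state, goal_state, goal_bonus=100.0, step_cost=1.0):
    """Unit cost for every slot that does not reach the goal, bonus when it does."""
    return goal_bonus if next_state == goal_state else -step_cost

def episode_return(trajectory, goal_state):
    """Total reward collected along a list of visited next-states."""
    return sum(sparse_reward(s, goal_state) for s in trajectory)

goal = (1.0, 1.0)
short_path = [(0.5, 0.5), (1.0, 0.5), goal]                        # 3 slots
long_path = [(0.25, 0.25), (0.5, 0.5), (0.75, 0.75), (1.0, 0.75), goal]  # 5 slots
```

Since every extra slot subtracts the same cost, the path with the larger return is always the one that reaches the goal in fewer slots.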

Using the above formulation, the optimization problem P1 can then be seen as a stochastic shortest path search from state $s_0$ to state $s_G$ on the Markov chain with states S and probabilities $p_{s,s'}(a)$, actions $a \in A$, and rewards $w(s, a, s')$. Our objective is to find, for each possible state $s \in S$, an optimal action $a^*(s)$ so that the system will reach the goal state following the path with maximum average reward. A generic policy can be written as $\pi = \{ a(s) : s \in S \}$.

Different techniques can be applied to solve problem P1, as it represents a particular class of MDPs. In this paper, however, we assume that the channel conditions at each time slot are unknown, which corresponds to not knowing the transition probabilities $p_{s,s'}(a)$. Therefore, in the next section, we describe how to solve the above problem using reinforcement learning.

3.2. Optimal Power Allocation with Reinforcement Learning

Reinforcement learning is suitable for solving optimization problems in which the system dynamics follow a particular transition probability function but the probabilities $p_{s,s'}(a)$ are unknown. In what follows, we first show how to apply the Q-learning algorithm [Reference Barto, Bradtke and Singh22] to solve the optimization problem and then show how we can combine the reinforcement learning approach with a neural network to approximate the system model in the case of large state and action sets, using a deep Q-network [Reference Mnih, Kavukcuoglu and Silver12].

3.2.1. Q-Learning Method

If the number of system states is small, we can depend on the traditional Q-learning method to find the optimal strategy at each system state, as defined in the previous section.

To this end, we define the cost function of action a on system state s as $Q(s, a)$, with $s \in S$ and $a \in A$. The algorithm initializes with $Q(s, a) = 0$ and then updates the Q values using the following equation:

$$Q(s, a) \leftarrow (1 - \sigma)\, Q(s, a) + \sigma \left[ w(s, a, s') + f(s') \right], \tag{12}$$

where

$$f(s') = \max_{a' \in A} Q(s', a') \tag{13}$$

and $\sigma \in (0, 1]$ denotes the learning rate. In each time slot, only one Q value is updated, and hence, all the other Q values remain the same.

At the beginning of the learning iterations, since the Q-table does not have enough information to choose the best action at each system state, the algorithm randomly explores new actions. Hence, we first define threshold $\epsilon_c \in [0, 1]$, and we then randomly generate a probability $p \in [0, 1]$. In the case that $p \ge \epsilon_c$, we choose the action a as

$$a = \arg\max_{a \in A} Q(s, a). \tag{14}$$

On the contrary, if $p < \epsilon_c$, we randomly select one action from the action set A.

When $Q^*$ converges, the optimal strategy at each state is determined as

$$\pi^*(s) = \arg\max_{a \in A} Q^*(s, a), \tag{15}$$

which corresponds to finding the optimal beam pattern for each system state during the charging process.
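The tabular procedure above can be sketched as follows. This is a minimal illustration, assuming an undiscounted update in which the new estimate blends the old Q value with the reward plus the best Q value of the next state; the learning rate of 0.5, the dictionary-based Q-table, and the function names are hypothetical.

```python
import random

def epsilon_greedy(Q, state, n_actions, eps, rng=random):
    """Pick a random action with probability eps, otherwise the greedy one."""
    if rng.random() < eps:
        return rng.randrange(n_actions)
    row = Q.setdefault(state, [0.0] * n_actions)
    return max(range(n_actions), key=row.__getitem__)

def q_update(Q, s, a, reward, s_next, n_actions, lr=0.5, terminal=False):
    """One tabular update; only the entry Q[s][a] changes."""
    row = Q.setdefault(s, [0.0] * n_actions)
    # The goal state is absorbing, so no future value is added after reaching it.
    future = 0.0 if terminal else max(Q.setdefault(s_next, [0.0] * n_actions))
    row[a] = (1.0 - lr) * row[a] + lr * (reward + future)
    return row[a]

# Usage: one update followed by a purely greedy action choice.
Q = {}
q_update(Q, "s0", 1, reward=-1.0, s_next="s1", n_actions=2)
greedy_action = epsilon_greedy(Q, "s0", n_actions=2, eps=0.0)
```

A dictionary keyed by state keeps the table sparse, which helps only while the number of visited states stays small; this is the limitation that motivates the neural network approximation discussed next.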

3.2.2. Deep Q-Network

When considering a complex system with multiple harvesters, large energy buffers, and time-varying channel conditions, the number of system states dramatically increases. In order to learn the optimal transmit strategy at each system state, the Q-learning algorithm described before requires a Q-table with a large number of elements, making it very difficult for all the values in the Q-table to converge. Therefore, in what follows, we describe how to apply the deep Q-network (DQN) approach to find the optimal transmission policy.

The main idea of DQN is to train a neural network to find the Q function of a particular system state and action combination. When the system is in state s, and action a is selected, the Q function is denoted as $Q(s, a; \theta)$, where θ denotes the parameters of the Q-network. The purpose of training the neural network is to make

$$Q(s, a; \theta) \approx Q^*(s, a). \tag{16}$$

According to the DQN algorithm [Reference Van Hasselt, Guez and Silver17], two neural networks are used to solve the problem: the evaluation network and the target network, which are denoted as eval_net and target_net, respectively. Both the eval_net and the target_net are set up with several hidden layers. The inputs of the eval_net and the target_net are denoted as s and s′, which describe the current system state and the next system state, respectively. The outputs of eval_net and target_net are denoted as $Q(s, a; \theta)$ and $Q(s', a'; \theta')$, respectively. The evaluation network is continuously trained to update the value of θ; however, the target network only copies the weight parameters from the evaluation network intermittently (i.e., $\theta' = \theta$). In each neural network learning epoch, the loss function is defined as

$$\mathrm{Loss}(\theta) = \mathbb{E}\left[ \left( y - Q(s, a; \theta) \right)^2 \right], \tag{17}$$

where y represents the real Q value and is calculated as

$$y = w(s, a, s') + \epsilon \max_{a' \in A} Q(s', a'; \theta'), \tag{18}$$

where $w(s, a, s')$ is the reward and ε is the learning rate. As the loss function updates, the values are backpropagated to the neural network to update the weights of the eval_net.

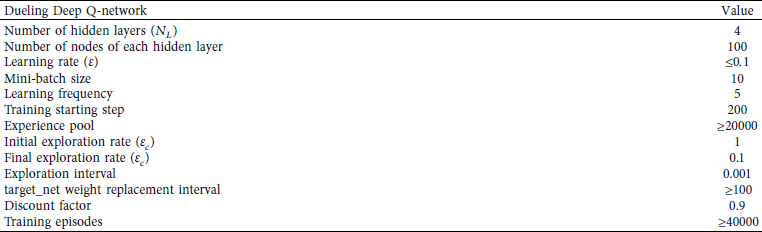

Algorithm 1: Deep Q-network algorithm training process.

In order to better train the neural network, we apply the experience replay method to remove the correlation between different training data. Each experience consists of the current system state s, the action a, the next system state s′, and the corresponding reward $w(s, a, s')$. The experience is denoted by the set $ep = \{ s, a, w(s, a, s'), s' \}$. The algorithm records D experiences and randomly selects $D_s$ (with $D_s < D$) experiences from D for training. After the training is finished, target_net clones all the weight parameters from the eval_net (i.e., $\theta' = \theta$).
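The experience pool and the target value used in the loss can be sketched without any deep learning framework. The following is an illustration only: the class and function names are hypothetical, the Q values of the target network are passed in as a plain list, and the coefficient 0.9 that multiplies the next-state Q value is an assumed number.

```python
import random
from collections import deque

class ReplayBuffer:
    """Fixed-capacity experience pool; the oldest experiences are dropped first."""

    def __init__(self, capacity):
        self.pool = deque(maxlen=capacity)

    def store(self, s, a, reward, s_next):
        self.pool.append((s, a, reward, s_next))

    def sample(self, batch_size, rng=random):
        """Sample uniformly without replacement to break temporal correlation."""
        return rng.sample(list(self.pool), min(batch_size, len(self.pool)))

def dqn_target(reward, q_next_target, eps=0.9, terminal=False):
    """Target y = reward + eps * max over actions of the target_net output for s'."""
    return reward if terminal else reward + eps * max(q_next_target)

# Usage: record five experiences in a pool of size three, then draw a mini-batch.
buffer = ReplayBuffer(capacity=3)
for t in range(5):
    buffer.store(s=t, a=0, reward=-1.0, s_next=t + 1)
batch = buffer.sample(2, rng=random.Random(0))
```

Drawing the mini-batch at random, rather than using the most recent transitions, is what removes the correlation between consecutive training samples.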

The algorithm used for the DQN training process is presented in Algorithm 1. In each training iteration, we generate D usable experiences ep and select $D_s$ of them for training the eval_net. In total, we suppose there are U training iterations. We consider that, for both the eval_net and the target_net, there are $N_l$ layers in the neural network. In the learning process, we use C to denote all energy harvesters’ channel conditions in a particular time slot.

3.2.3. Dueling Double Deep Q-Network

Since more harvesters and time-varying channel conditions lead to more system states, it is hard to learn the transmit rules for the transmitter even with the original DQN. Therefore, we apply a dueling double DQN in order to deal with the overestimation problem during the training process and improve the learning efficiency of the neural network. Double DQN is a technique that strengthens the traditional DQN algorithm by preventing overestimation [Reference Van Hasselt, Guez and Silver17]. In traditional DQN, as shown in equation (18), we utilize the target_net to predict the maximum Q value of the next state. However, the target_net is not updated at every training episode, which may lead to an increase in the training error and therefore complicate the training process. In double DQN, we utilize both the target_net and the eval_net to predict the Q value. The eval_net is used to determine the optimal action to be taken for the system state s′ as follows:

$$y = w(s, a, s') + \epsilon\, Q\!\left( s', \arg\max_{a' \in A} Q(s', a'; \theta);\ \theta' \right), \tag{19}$$

where θ and θ′ are the parameters of the eval_net and the target_net, respectively, and $w(s, a, s')$ is the reward.

It can be shown that, following this approach, the training error considerably decreases [Reference Van Hasselt, Guez and Silver17].
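The difference between the two targets is easiest to see on a small numerical example. In the sketch below, the outputs of the two networks for the next state are given as plain lists; the function name, the coefficient value, and the numbers are hypothetical.

```python
def double_dqn_target(reward, q_next_eval, q_next_target, eps=0.9, terminal=False):
    """Select the next action with eval_net, then evaluate it with target_net."""
    if terminal:
        return reward
    best_action = max(range(len(q_next_eval)), key=q_next_eval.__getitem__)
    return reward + eps * q_next_target[best_action]

# Illustrative network outputs for the next state s'.
q_eval = [1.0, 3.0, 2.0]      # eval_net prefers action 1
q_target = [5.0, 0.5, 4.0]    # target_net overrates action 0

vanilla = 0.0 + 1.0 * max(q_target)                          # uses the target_net maximum
double = double_dqn_target(0.0, q_eval, q_target, eps=1.0)   # uses eval_net's choice
```

Here the plain target takes the largest target_net value, whereas the double DQN target uses the value of the action that eval_net actually prefers, which is the mechanism that limits overestimation.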

In traditional DQN, the neural network only has the Q value as the output. In order to speed up the convergence, we apply dueling DQN by setting up two output streams from the neural network. The first stream is the value output $V(s; \theta, \beta)$ of the neural network, which represents the value of each system state. The second stream is called the advantage output $A(s, a; \theta, \alpha)$ and describes the advantage of applying each particular action to the current system state [Reference Wang, Schaul, Hessel, Van Hasselt, Lanctot and De Freitas18]. α and β are parameters that relate the two streams and the neural network output, which is denoted as

$$Q(s, a; \theta, \alpha, \beta) = V(s; \theta, \beta) + \left( A(s, a; \theta, \alpha) - \frac{1}{L} \sum_{a'} A(s, a'; \theta, \alpha) \right), \tag{20}$$

where L is the number of actions.

Dueling DQN can efficiently eliminate the extra training freedom, which speeds up the training [Reference Wang, Schaul, Hessel, Van Hasselt, Lanctot and De Freitas18].
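The way the two streams are combined can be illustrated with a short sketch. It assumes the mean-subtracted aggregation of Wang et al., which is one of the variants proposed in that work and may not be the exact form used here; the function name and numbers are hypothetical.

```python
def dueling_q_values(state_value, advantages):
    """Combine a scalar state value with per-action advantages.

    Subtracting the mean advantage removes the extra degree of freedom:
    adding a constant to every advantage leaves the Q values unchanged.
    """
    mean_advantage = sum(advantages) / len(advantages)
    return [state_value + (adv - mean_advantage) for adv in advantages]

q_a = dueling_q_values(2.0, [1.0, 3.0, 2.0])
q_b = dueling_q_values(2.0, [11.0, 13.0, 12.0])   # same advantages shifted by 10
```

Both calls give the same Q values, which shows that only the relative advantages matter once the mean is removed.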

4. Simulation Results

We simulate a MIMO wireless communication system with nearby RF energy harvesters. The wireless transmitter has at most M = 4 antennas. The 4 × 4 communication MIMO channel matrix H is measured by two Wireless Open-Access Research Platform (WARP) v3 boards. Both WARP boards are mounted with the FMC-RF-2X245 dual-radio module, which operates in the 5.805 GHz frequency band. The Xilinx Virtex-6 FPGA operates as the central processing system, and the MATLAB-based WARPLab framework is used for rapid physical layer prototyping [Reference Dong and Liu23]. The two transceivers are deployed with a line-of-sight link. The maximum transmitted power is P = 12 W. ![]() dBm. The information rate requirement R is 53 bps/Hz. The average channel gain from the transmitter to the energy harvester is −30 dB. The energy conversion efficiency is 0.1. The duration of one time slot is defined as T = 100 ms.

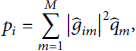

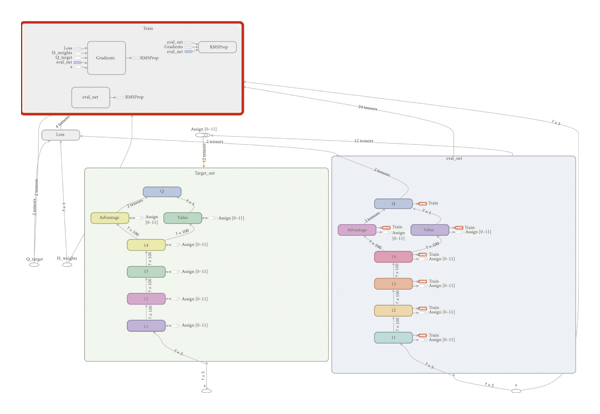

DQN is trained to solve for the optimal transmit strategies for each system state. The simulation parameters used for DQN are presented in Table 1.

As described in Section 3.2, the exploration rate determines the probability that the network selects an action randomly rather than following the learned Q values. Initially, we set ![]() because the experience pool has to accumulate a reasonable amount of data to train the neural network. The exploration rate decreases by 0.001 at each training interval and finally stops at ![](), since by then the experience pool has collected enough training data.
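A linear decay of this kind can be written as a one-line schedule. In the sketch below, only the step of 0.001 per training interval comes from the text; the starting value of 1.0 and the floor of 0.1 are hypothetical placeholders, as is the function name.

```python
def exploration_rate(interval, start=1.0, floor=0.1, step=0.001):
    """Linearly decayed exploration rate, clipped at a lower bound.

    start and floor are assumed values for illustration only.
    """
    return max(floor, start - step * interval)

rates = [exploration_rate(k) for k in (0, 100, 10**6)]
```

With these assumed end points, the rate reaches its floor after 900 training intervals and stays there.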

The dueling double DQN used in this paper is shown in Figure 2; see also [Reference Xing, Pan, Xu, Zhao, Tapparello and Qian24]. The software environment for simulation is TensorFlow 0.12.1 with Python 3.6 in Jupyter Notebook 5.6.0.

Figure 2: Dueling double deep Q-network structure.

For the energy harvesters’ channel, to show an example of the performance achievable by the proposed algorithm, we consider the Rician channel fading model [Reference Cavers25]. We suppose that the channel is invariant within each time slot t and varies across time slots [Reference Wu and Yang26]. At the end of each time slot, the energy harvester feeds its current energy level back to the transmitter. For the Rician fading channel model, the total gain of the signal is denoted as ![](), where gs is the invariant LOS component and gd denotes a zero-mean Gaussian diffuse component. The channel between the transmit antenna m and the energy harvester i can be denoted as ![](). The magnitude of the faded envelope can be modeled using the Rice factor Kr such that ![](), where ![]() denotes the average power of the main LOS component between the transmit antenna m and energy harvester i and ![]() denotes the variance of the scatter component. We can derive the magnitude of the main LOS component as ![]() since ![](). The mean and the variance of gim are denoted as ![]() and ![](), respectively. In polar coordinates, ![]().
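As an illustration of the Rician model, the sketch below draws one complex channel gain as the sum of a fixed LOS term and a zero-mean Gaussian diffuse term. It assumes the common definition of the Rice factor, K = ρ²/(2σ²), with ρ the LOS magnitude and σ² the variance of each diffuse quadrature component; the normalization used in this paper may differ. The function name and the numerical values are hypothetical.

```python
import cmath
import math
import random

def sample_rician_gain(k_factor, sigma, los_phase, rng):
    """Return one sample g = g_s + g_d of a Rician-faded gain."""
    # LOS magnitude from the assumed definition K = rho^2 / (2 sigma^2)
    rho = math.sqrt(2.0 * k_factor) * sigma
    g_s = cmath.rect(rho, los_phase)                              # invariant LOS part
    g_d = complex(rng.gauss(0.0, sigma), rng.gauss(0.0, sigma))   # diffuse part
    return g_s + g_d

rng = random.Random(0)  # fixed seed so the sketch is reproducible
samples = [sample_rician_gain(4.0, 0.5, 0.3, rng) for _ in range(5000)]
mean_gain = sum(samples) / len(samples)
```

Because the diffuse term has zero mean, the sample mean approaches the LOS component as the number of samples grows.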

First, we explore the optimal deep Q-network structure under fading channels. We suppose the number of antennas is M = 3 and the number of energy harvesters is K = 2. The channel between each antenna of the transmitter and each harvester is individually Rician distributed. The action set A contains 13 actions satisfying the information rate requirement: ![](), ![](), ![](), ![](), ![](), ![](), ![](), ![](), ![](), ![](), ![](), ![](), and ![]().

The LOS amplitude components of all channel links are defined as ![](), with i = 1, 2 and m = 1, 2, 3. The LOS phase components of all channel links are defined as ![](), ![](), ![](), ![](), ![](), and ![](). The standard deviations of the gim amplitude and phase are denoted as ![]() and ![](), respectively. We suppose ![](). Hence, ![]().
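The polar-coordinate description above suggests a simple way to draw one channel realization per time slot: the amplitude and the phase of each link are sampled from Gaussian distributions centered on the LOS amplitude and LOS phase. The sketch below follows that reading. The function name `sample_channel` and all numerical values are placeholders chosen for illustration and are not the parameters of the simulation.

```python
import cmath
import random

def sample_channel(los_amp, los_phase, sigma_amp, sigma_phase, rng):
    """Draw a K x M matrix of complex gains, one row per energy harvester.

    Amplitude ~ N(los_amp[i][m], sigma_amp^2),
    phase     ~ N(los_phase[i][m], sigma_phase^2).
    """
    return [
        [cmath.rect(rng.gauss(a, sigma_amp), rng.gauss(p, sigma_phase))
         for a, p in zip(amp_row, phase_row)]
        for amp_row, phase_row in zip(los_amp, los_phase)
    ]

# Placeholder LOS parameters for K = 2 harvesters and M = 3 antennas
los_amp = [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]
los_phase = [[0.0, 0.5, 1.0], [1.0, 0.5, 0.0]]
channel = sample_channel(los_amp, los_phase, 0.05, 0.05, random.Random(0))
```

Setting both standard deviations to zero reproduces the deterministic LOS channel.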

Using the fading channel model above, Figure 3 shows how the structure of the neural network, together with the learning rate, affects the performance of the DQN for a fixed number of training episodes (40000). The performance of the DQN is measured by the average number of time slots required to fully charge the two harvesters, where the average is taken over 1000 test samples. Figure 3 shows that if the deep Q-network has more hidden layers, a smaller learning rate is necessary to achieve better performance. When the learning rate is 0.1, the DQN with 4 hidden layers performs worse than a neural network with 2 or 3 hidden layers. On the other hand, as the learning rate decreases, the neural network with 4 hidden layers and a learning rate of 0.00005 achieves the best overall performance. The average number of time slots does not decrease monotonically because the stochastic nature of the channel causes some fluctuations in the DQN optimization. After an initial improvement, decreasing the learning rate further results in a slight increase in the average number of charging steps for all three neural network structures. This is due to the fixed number of training episodes. As a result, for all the simulations presented in this section, we consider a DQN algorithm using a deep neural network with 4 hidden layers, 100 nodes in each layer, and a learning rate of 0.00005.

Deep Q-network performance on different learning rates and number of hidden layers for the neural network.

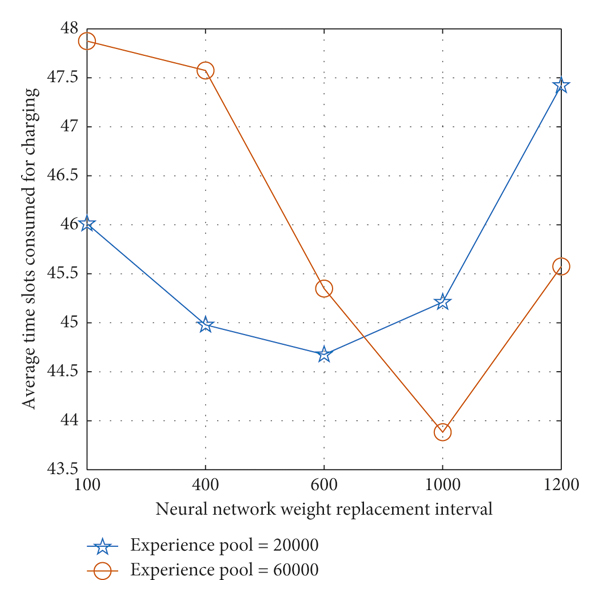

Figure 4 shows that the size of the experience pool also affects the performance of the DQN (40000 training episodes). To reduce the correlation between the training data, we select only part of the experience pool for each training step. In our simulation, the size of this subset, called the mini-batch, is set to 10. A larger experience pool contains more training data; hence, drawing the mini-batch from it reduces the correlation between the training samples. However, we need to balance the size of the experience pool against the target_net weight replacement interval. If the experience pool is large but the replacement interval is small, then even though the correlation problem is addressed, the neural network does not have enough training episodes to reduce the training error before the weights of the target_net are replaced. Figure 4 also shows that a very large replacement interval may not be the best choice either. Therefore, we determine that, for our problem, the DQN achieves the best performance when the size of the experience pool and the neural network replacement iteration interval are 60000 and 1000, respectively.

Deep Q-network performance for different values of neural network replacement iteration interval and experience pool.

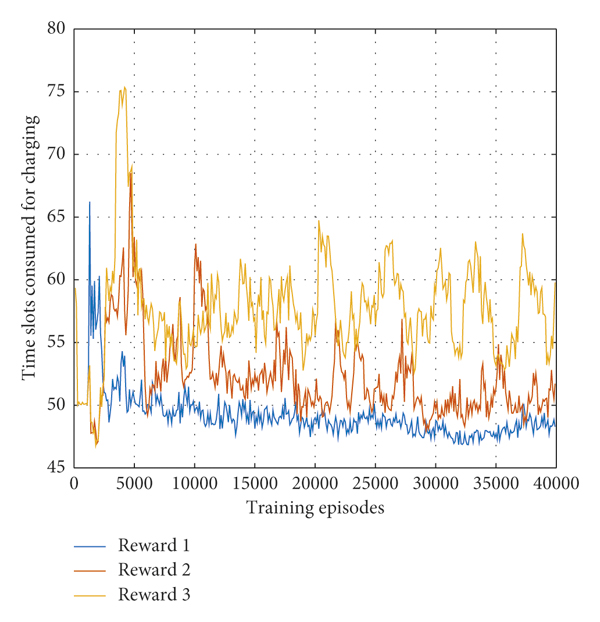
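The interplay between the experience pool, the mini-batch, and the target_net replacement interval can be sketched with a small replay buffer. The pool size of 60000, the mini-batch of 10, and the interval of 1000 are the values reported above; the class `ExperiencePool`, the helper `should_replace_target`, and the dummy transitions are hypothetical names and data used only for illustration.

```python
import random
from collections import deque

class ExperiencePool:
    """Fixed-size pool; the oldest transitions are dropped when it is full."""

    def __init__(self, capacity, rng=None):
        self.buffer = deque(maxlen=capacity)
        self.rng = rng or random.Random()

    def store(self, transition):
        self.buffer.append(transition)

    def sample(self, batch_size):
        """Uniformly draw a mini-batch without replacement."""
        size = min(batch_size, len(self.buffer))
        return self.rng.sample(list(self.buffer), size)

def should_replace_target(learn_step, replace_interval):
    """True when the target_net weights are due to be copied from the eval net."""
    return learn_step % replace_interval == 0

pool = ExperiencePool(capacity=60000, rng=random.Random(0))
for t in range(100):
    pool.store((t, 0, -1.0, t + 1))   # dummy (state, action, reward, next_state)
batch = pool.sample(10)               # mini-batch of 10 transitions
```

Sampling uniformly from a large pool mixes transitions from many different time slots, which is what weakens the correlation within each mini-batch.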

Figure 5 shows the impact of the reward function (see Section 3.1) on the DQN performance. In this figure, we consider the following reward functions: Reward1: ![]() if ![]() and ![]() otherwise; Reward2: ![]() if ![]() and ![]() otherwise; Reward3: ![]() if ![]() and ![]() otherwise. Here, K = 2 and λ = 0.25. All three reward functions are designed to minimize the number of time slots required to fully charge all the harvesters. However, Figure 5 shows that the best performance is obtained using Reward1. In this case, the energy level accumulated by each harvester increases uniformly, which makes the DQN converge faster to the optimal policy. Reward2 and Reward3, in contrast, do not penalize states that charge the harvesters unevenly and therefore require more iterations to converge to the optimal solution (not shown in the figure) because of the large number of system states to explore. Therefore, in the following simulations, we use the reward function Reward1. In both Figures 5 and 6, we average the 40000 training steps every 100 steps in order to better show the convergence of the algorithm.

The deep Q-network performance for different reward functions.

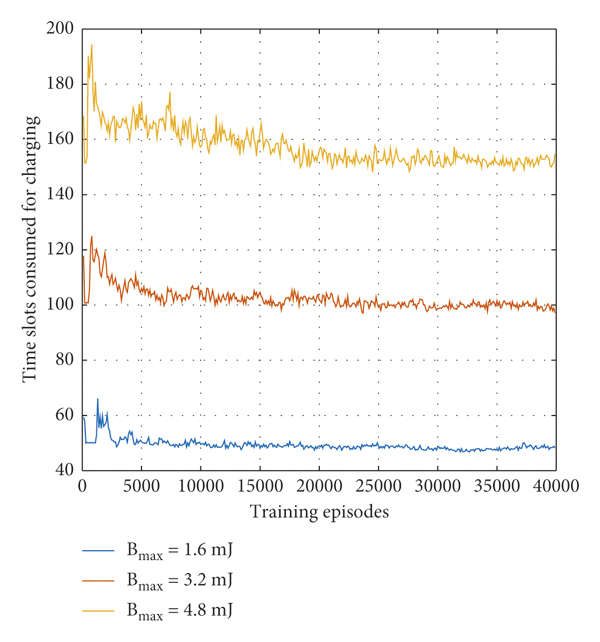

The deep Q-network performance for different energy buffer size B max.

Figure 6 shows that when each energy harvester in the system is equipped with a larger energy buffer, the number of system states increases, and therefore, the DQN requires a longer training period to converge to a steady transmit strategy for each system state. When B max = 1.6 mJ, the system needs fewer than 5000 training episodes to converge to the optimal strategy, and when B max = 3.2 mJ, it needs around 12000 training episodes. For B max = 4.8 mJ, however, the system needs as many as 20000 training episodes to converge to the optimal strategy.

In the following simulations, we explore the impact of the channel model on optimization problem P 1. For the Rician fading channel model, we consider ![]() and to be the same for all i, m. In this way, we can approximate the Rician distribution as a Gaussian distribution. We fix ![](), but allow the standard deviation of both the amplitude and the phase of the channel to change in order to evaluate the performance of the system under different channel conditions. Since ![]() and ![](), ![](). We define ![]() to guarantee ![]().

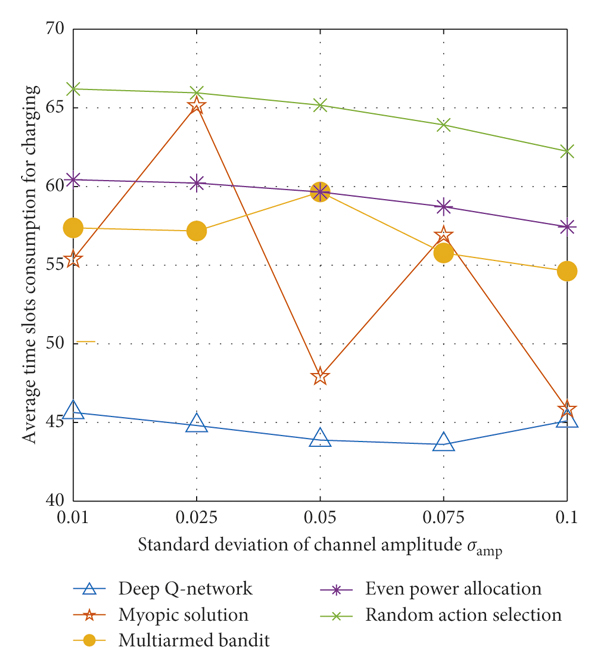

In Figure 7, the results are expressed in terms of the standard deviation ![]() of the phase and amplitude of the channel, and we compare the performance attained by the optimal policy with that of several other algorithms. The multiarmed bandit (MAB) algorithm is also implemented for comparison with the DQN. In MAB, each bandit arm represents a particular transmission pattern. The upper confidence bound (UCB) algorithm [Reference Slivkins27] is implemented to maximize the reward ![]() and determine the optimal action. Once the action is selected from the action space A, it is used for transmission continuously. The myopic algorithm is another machine learning algorithm that can be compared with the DQN. The myopic solution has the same structure as the DQN; however, the reward discount is set to γ = 0. As a result, the strategy is determined only by the current observation, without considering future consequences. The myopic solution has been widely used to solve complex optimization problems in wireless communication and achieves good system performance [Reference Wang, Liu, Gomes and Krishnamachari28]. Besides these two machine learning algorithms, two heuristic algorithms are also used for system performance comparison. For even power allocation, the transmit power P is evenly allocated over the parallel channels for transmission. Random action selection is also applied for performance comparison. Random action selection has the worst performance, while the DQN performs best. Compared to the optimal existing algorithm, the multiarmed bandit, the DQN consumes 20% fewer time slots to complete charging. In some channel conditions, the myopic solution achieves performance similar to that of the DQN. However, the myopic solution does not perform stably. For example, when the standard deviation of the channel amplitude is ![](), the DQN outperforms the myopic solution by 45%. This instability arises because the myopic solution makes its decision based only on the current system state and the current reward, without considering future consequences. Hence, its training outcome cannot be guaranteed. Overall, the DQN is superior in both charging time consumption and stability across different channel conditions.

The comparison of average time consumption between DQN and other algorithms (myopic solution, multiarmed bandit, even power allocation, and random action selection) in the Rician fading channel model. The number of transmit antennas is M = 3. The number of energy harvesters is N = 2.
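To make the multiarmed bandit baseline concrete, the sketch below shows arm selection with the classical UCB1 rule, in which each arm (here, a transmission pattern) is scored by its empirical mean reward plus an exploration bonus. The text does not state which UCB variant is used, so UCB1 and the constant `c = 2.0` are assumptions, and the function name is hypothetical.

```python
import math

def ucb1_select(counts, mean_rewards, total_pulls, c=2.0):
    """Return the index of the arm to play next under UCB1.

    counts[a]       : number of times arm a has been played
    mean_rewards[a] : empirical mean reward of arm a
    total_pulls     : total number of plays so far
    """
    # Play every arm once before applying the confidence bound
    for arm, n in enumerate(counts):
        if n == 0:
            return arm
    scores = [
        m + math.sqrt(c * math.log(total_pulls) / n)
        for m, n in zip(mean_rewards, counts)
    ]
    return max(range(len(scores)), key=lambda a: scores[a])

next_arm = ucb1_select([10, 10], [0.2, 0.5], 20)
```

Unlike the DQN, this rule ignores the system state, which is why the bandit baseline settles on a single fixed action.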

To better explain the performance of the optimal policy, in Figure 8, we plot the action selected by the DQN at a particular system state when ![](). When ![](), the optimal action selected by the multiarmed bandit is the third action ![](), which finishes charging both harvesters in around 60 time slots. Meanwhile, the optimal policy determined by the DQN finishes charging in around 43 time slots. Figure 8 shows that the charging process can be divided into two parts: before harvester 1 accumulates 1.2 mJ of energy and harvester 2 accumulates 0.8 mJ of energy, mostly action 4 ![]() is selected. After that, mostly action 1 ![]() is selected. As defined above, both the amplitude and the phase of the channel are Gaussian distributed with zero standard deviation, ![]() and ![](). So when both the amplitude and the phase of the channel change, the simplified channel state information will be distributed around ![]() and ![](). As a result, a policy that selects either action 1 or action 4 with different probabilities can perform better than the policy that only selects action 3. Hence, the multiarmed bandit needs about 40% more time slots than the DQN (around 60 versus around 43) to fully charge the two energy harvesters.

The action selection process in the two-harvester scenario when ![]().

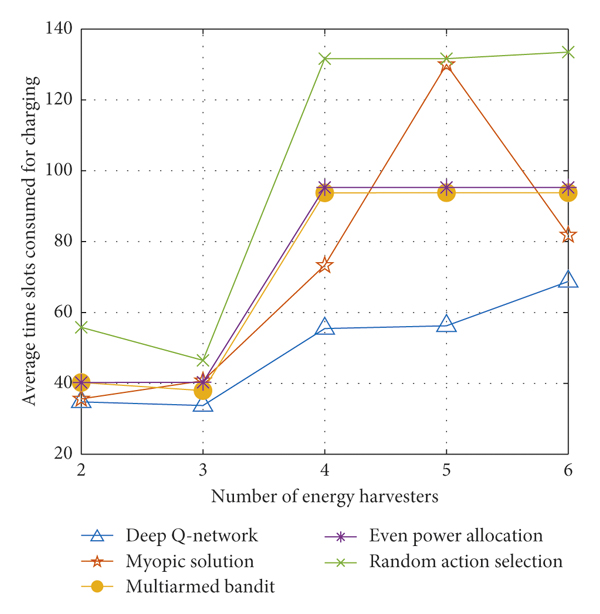

In Figure 9, the performance of the DQN is compared with that of the other four algorithms as the number of energy harvesters in the system varies. In general, as the number of energy harvesters increases, all algorithms consume more time slots to complete the wireless charging process. Compared to random action selection, the DQN consumes at least 58% fewer time slots to complete the charging. The performance of the multiarmed bandit and the even power allocation is very similar, because the optimal action determined by the multiarmed bandit algorithm is close to the even power allocation strategy. Compared with the two fixed action selection strategies (multiarmed bandit and even power allocation), the DQN reduces the time consumption by up to 72% (when the number of energy harvesters is N = 3). The myopic solution is still not the optimal strategy, although it outperforms the two fixed action selection algorithms. Even though in some conditions (N = 6) the performance difference between the DQN and the myopic solution is very small, the myopic solution consumes over 15% more time slots than the DQN on average. Overall, the DQN consumes the fewest time slots to fully charge all the energy harvesters regardless of the number of energy harvesters.

The comparison of average time consumption between DQN and other algorithms (myopic solution, multiarmed bandit, even power allocation, and random action selection) when the number of energy harvesters is N = 2, 3, 4, 5, 6. The number of transmit antennas is M = 3. The standard deviation of the channel amplitude is ![]().

In Figure 10, the number of transmit antennas is increased from M = 3 to M = 4. The number of energy harvesters varies from N = 2 to N = 6. Although the channel conditions between the transmitter and the energy harvesters become more complicated as the number of antennas increases, the DQN still outperforms all the other four algorithms. Compared with the myopic solution, multiarmed bandit, even power allocation, and random action selection, the DQN consumes up to 27%, 54%, 55%, and 76% fewer time slots to complete the wireless charging, respectively. As the number of energy harvesters increases, the superiority of the DQN over the two fixed action selection algorithms becomes more obvious, because selecting one fixed action is increasingly inefficient in a more complicated, varying channel environment. Even though in some conditions the performance of the myopic solution and the DQN is similar, the myopic solution is not stable across different numbers of energy harvesters. The results from both Figures 9 and 10 demonstrate the superiority of the DQN in optimizing the time consumption for wireless power transfer.

The comparison of average time consumption between DQN and other algorithms (myopic solution, multiarmed bandit, even power allocation, and random action selection) when the number of energy harvesters is N = 2, 3, 4, 5, 6. The number of transmit antennas is M = 4. The standard deviation of the channel amplitude is ![]().

5. Conclusions

In this paper, we design the optimal wireless power transfer system for multiple RF energy harvesters. Deep learning methods are used to enable the wireless transmitter to fully charge the energy buffers of all energy harvesters in the shortest time while meeting the information rate requirement of the communication system.

As the channel conditions between the transmitter and the energy harvesters are time-varying and unknown, we model the problem as a Markov decision process. Due to the large number of system states in the model and the difficulty of training, we adopt a deep Q-network approach to find the best transmit strategy for each system state. In the simulation section, multiple experimental environments are explored. The measured real-time data are used to run the simulation. The deep Q-network is compared with four other existing algorithms. The simulation results validate that the deep Q-network is superior to all the other algorithms in terms of the time consumption for completing wireless power transfer.

Data Availability

The simulation data used to support the findings of this study are available from the corresponding author upon request.

Conflicts of Interest

The authors declare that they have no conflicts of interest.