Introduction

Scholarly editions of texts are an integral part of the academic world of literary critics and historians, so much so that we take them for granted. The modern critical edition, the one referenced in footnotes, is broadly speaking an invention of the twentieth century: in the English-speaking world, modern theories of editing were developed intensively from the 1920s, in response to the particular challenges of editing Shakespeare, while in France, it was Gustave Lanson who pioneered the idea of critical editing at the Sorbonne in the early years of the century, with his exemplary edition of Voltaire’s Lettres philosophiques (1909). University presses continue to invest significant sums in new critical editions: Edinburgh University Press, for example, having completed the Edinburgh Edition of the Waverley Novels in thirty volumes, has now embarked on the ten-volume Edinburgh Edition of Walter Scott’s Poetry. Such landmark editions play a dynamic role in our research culture.

How do these publishing initiatives figure in the present context of the turn to digital publishing? Digital editions of one sort or another have existed now for almost forty years, though quality and functionality vary widely, and simply digitised products, which are little more than scanned versions of print books, are at times confused with editions that are truly ‘born digital’. Some publishers produce digital versions of their existing print critical editions, the most ambitious example being Oxford Scholarly Editions Online, which brings together on one platform over 1,750 print critical editions published by Oxford University Press. Then there are stand-alone scholarly editions, of widely ranging ambition: Patrick Sahle’s Catalogue of Digital Scholarly Editions gives an overview of what has already been achieved, listing some 714 editions in six different languages, on topics ranging from history and literature to music and history of art.[1] Likewise, Greta Franzini’s Catalogue of Digital Editions has been gathering digital editions in an attempt to survey and identify best practices in the field of digital scholarly editing since 2012 (Franzini et al. 2016). Some of these editions, like The Blake Digital Text Project, are remarkable for the large number of high-quality images that they contain; others are particularly attractive because they include large numbers of manuscript images, for example, The Walt Whitman Archive, the Samuel Beckett Digital Manuscript Project, Jane Austen’s Fiction Manuscripts, or Nietzsche Source. It is entirely understandable that digital scholarly editions (or DSEs), in the early phase of digital publication, chose to focus on what we might term ‘documentary’ editions (Pierazzo 2015, pp. 74–83). Clearly, however, we have not yet exploited anything like the full potential of the digital medium for producing scholarly editions more generally. The situation has not changed radically since Peter Shillingsburg, in 2006, remarked of the digital editions then available that, ‘Yes they are better. No, they are not good enough’ (Shillingsburg 2006, p. 111).

The challenge is clear. Rather than regard DSEs as derivatives of a print object, how might we rethink from first principles what a digital edition might look like, exploiting to the full the potential for critical editing in the digital medium? This raises many questions, beginning with the business model. There are strong pressures now for academic work to be available on open access, but scholarly editing in particular is a costly business, and financial sustainability is crucial, in particular for work that needs to be available over the long term, serving as a reference resource. We also have to consider the views and habits of the users. Even when researchers use digital editions, they are sometimes reluctant to quote them as the authoritative source of reference, so we should find ways of presenting digital editions as authoritative and easily referenceable. A print edition, with all its merits, is a static work of reference; digital editions, on the other hand, have the potential to be much more than that, to engage their readers by being tools of research and even to serve as platforms on which research can be published. These are exciting possibilities, and the creators of new digital resources need to enthuse and engage their users, and even encourage them to be demanding of the resource presented to them.

Faced by this ambitious agenda, the aim of the present volume is modest, taking as a case study one particular project that has been grappling with these questions. The three authors have all played roles in the planning and building of Oxford University Voltaire, a digital edition of the complete writings of Voltaire, launched commercially in January 2026 and distributed by Liverpool University Press [see Fig. 1].

Figure 1 Oxford University Voltaire logo

This resource has been (re)created from the authoritative print edition of the Complete Works of Voltaire, produced in 205 volumes over nearly half a century, and published by the Voltaire Foundation in Oxford. We tell the story of that transformation here, in the hope that this case study will interest other creators and users of scholarly digital editions, and perhaps even influence future thinking about their development.

1 The State of the Art in Digital Scholarly Editing

1.1 Introduction

The constant evolution of scholarly editing reflects the profound transformations that have over the centuries affected the ways in which texts are produced, preserved, analysed, and disseminated. Rooted in the philological traditions of textual criticism, scholarly editions have long served as essential tools for understanding the cultural, historical, and linguistic contexts of written works. From the painstakingly crafted manuscript copies of antiquity to the authoritative critical editions of the print era, these endeavours have continuously adapted to address the challenges of textual preservation and interpretation. Today, we stand at the threshold of a new paradigm, shaped by the integration of digital technologies into the field.

Digital scholarly editions (DSEs) represent a convergence of traditional critical editing methodologies and contemporary technological innovation. These editions harness the power of digital tools to expand the possibilities of textual scholarship, enabling the representation of texts in new and previously unimaginable ways. By incorporating dynamic features such as hypertextual linking, multimedia integration, and other interactive functionalities, DSEs transcend the static limitations of print and have the capacity to foster deeper engagement with textual materials and their historical contexts.[2]

The significance of DSEs extends well beyond their technical capabilities. They address enduring challenges in the field, such as the accessibility and preservation of texts. In doing so, they democratise access to cultural heritage, enabling scholars, students, and the general public to engage with primary sources and scholarly commentary from virtually anywhere in the world. Furthermore, DSEs facilitate collaborative and interdisciplinary approaches to text analysis, forming a core element of the digital humanities research community (Sahle 2016).

This chapter aims to provide a comprehensive examination of the state of the art in digital scholarly editing. It begins with an exploration of the historical foundations of scholarly editions, tracing their evolution from manuscript traditions to the advent of digital methodologies. Following Paul Eggert’s recent work, we also highlight the dual impulses guiding today’s scholarly editions: the archival, which focuses on preserving the historical integrity of texts, and the editorial, which curates and interprets texts for modern audiences (Eggert 2019). The combination of these perspectives in the development of the Oxford University Voltaire allows us to examine how DSEs might negotiate the balance between historical fidelity and contemporary relevance in the future, addressing both the practical and theoretical dimensions of their creation.

1.2 A Brief History of Scholarly Editions

1.2.1 Origins and Early Practices

Scholarly editions have played a pivotal role in the preservation and dissemination of our cultural and textual heritage throughout history. The primary purpose of these editions is to provide an authoritative and reliable version of a text, often accompanied by critical apparatus, annotations, and commentary. These elements enable scholars to engage with texts in a way that contextualises their historical, cultural, and linguistic significance. The history of scholarly editions is thus deeply intertwined with the development of written culture itself. In antiquity, the first ‘scholarly’ efforts were devoted to preserving oral traditions and sacred texts, often through laborious manuscript copying. The first textual scholars, belonging to the Alexandrian school, included figures such as Zenodotus and Aristarchus, who were notable for their early editorial endeavours in standardising the Homeric epics (Nagy 1994). These editors sought not only to preserve the text in its ‘original’ form, but also to interpret and resolve inconsistencies in the manuscript tradition, thus laying the theoretical and practical groundwork for textual criticism as a discipline for the next two millennia.

The modern need for scholarly editions arose from the inherent variability of textual witnesses, especially before the advent of the printing press. Medieval manuscripts were copied by hand, often introducing errors, omissions, and variations (Love 2012). These differences necessitated a systematic approach to collating and evaluating textual evidence to produce editions that reflected the closest approximation of the original text. As the field of textual criticism developed in the early-modern period, scholarly editions evolved to incorporate methodologies that addressed these challenges. Editors began to rely on comparative analysis, using multiple manuscript sources to identify and correct errors, while also documenting significant variants (D’Amico 1988). This approach became a hallmark of scholarly philology, ensuring that texts could be studied and interpreted with confidence both in terms of authenticity and fidelity.

The emergence of the printing press in the fifteenth century marked a significant turning point in the history of scholarly editions. For the first time, texts could be reproduced on a large scale with consistent quality, allowing for broader dissemination and access (Eisenstein 1980). This technological advancement also facilitated the development of proto-scholarly editions, such as those by the Renaissance publisher Aldus Manutius, which emphasised textual accuracy and often included paratextual elements like annotations and commentaries (Margolis 2023). These works represent an early intersection of editorial labour and intellectual interpretation, reflecting the dual objectives of preserving and elucidating texts.

1.2.2 Scholarly Editions in the Modern Era

By the nineteenth century, scholarly editions had become central to academic inquiry, especially in the context of literary and historical studies. The rise of philology as a scientific discipline, exemplified by figures such as Karl Lachmann, emphasised the rigorous comparison of manuscript variants to reconstruct authorial intent (Fornaro 2011). This period also marked the ascendancy of what Paul Eggert terms the ‘capital-R Romantic author’, whose intentions were seen as the definitive guide to textual authority (Eggert 2019, p. 5). Scholarly editions were imbued with a sense of finality, presenting a single, authoritative version of a text that sought to encapsulate its essential meaning as that intended by its author. However, this approach often sidelined the material and social dimensions of textual production. The literary work was treated as an idealised entity, detached from its historical and material contexts. This limitation would later be challenged by the emergence of book history and social-text theories, which emphasised the collaborative and contingent nature of textual production.

The latter half of the twentieth century witnessed significant shifts in the theory and practice of scholarly editing. Influenced by post-structuralist critiques, scholars began to question the privileging of authorial intent and the notion of a definitive text. Key contributions from Jerome McGann and D. F. McKenzie, who argued for a ‘social-text’ approach that acknowledged the multiple agencies involved in textual production, broadened the interests and actors of scholarly editions to include publishers, typesetters, and readers, among others (McGann 1983, 1991; McKenzie 1986). This perspective redefined the scholarly edition as a dynamic interplay of textual, material, and social elements, rather than a fixed repository of meaning. Paul Eggert’s earlier work advances this dialogue by proposing the concept of ‘textual agency’, which encompasses the intentions and actions of all contributors to a text’s life cycle, from authors to readers (Eggert 2009). Eggert, following Peter Robinson (2013a), further emphasises the need for scholarly editions to address these complexities, presenting the text as both a historical artifact and a living work that continues to evolve through its interactions with readers (2019).

The transition to DSEs represents a continuation of these theoretical developments, while also introducing new possibilities and challenges. The digital medium enables editions to function simultaneously as archives and arguments, integrating vast amounts of data while foregrounding editorial decisions (Eggert 2016). This dual role aligns with Eggert’s broader vision of the scholarly edition as an active mediator between past and present, materiality and meaning.

Digital editions also offer unprecedented opportunities for interactivity and accessibility. By incorporating tools for searching, linking, and annotating, they invite users to engage with texts in ways that transcend the linear constraints of print. Yet, the digital turn also demands a renewed commitment to editorial rigour and sustainability, ensuring that these innovations serve the enduring goals of textual scholarship (Robinson 2013b; Pierazzo 2015). In this evolving landscape, the history of scholarly editions serves as both a foundation and a guide. By understanding the principles and practices that have shaped the field, we can better navigate the complexities of its digital future, preserving the richness of textual heritage while embracing the transformative potential of new technologies.

1.2.3 Methodologies

The methodologies underpinning traditional scholarly editions have been shaped by centuries of practice and refinement, with textual criticism at their core. One key approach is stemmatics, developed by Karl Lachmann in the nineteenth century, which aims to reconstruct the ‘archetype’ or the earliest recoverable form of a text by analysing patterns of variation across surviving manuscripts. This involves constructing a ‘stemma codicum’, a family tree that traces the relationships between different manuscript witnesses (Roelli 2020).
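
The family-tree structure of a stemma codicum can be made concrete with a small data structure. The following is a minimal sketch in Python and not a method of reconstruction: the sigla `A` to `D`, the lost intermediaries `alpha` and `beta`, and the archetype `omega` are all invented for the illustration.

```python
# Hypothetical stemma: each witness maps to the exemplar it was copied from.
# "omega" stands for the archetype; "alpha" and "beta" are lost intermediaries.
STEMMA = {
    "alpha": "omega",
    "beta": "omega",
    "A": "alpha",
    "B": "alpha",
    "C": "beta",
    "D": "beta",
}


def ancestors(witness, stemma):
    """Return the chain of exemplars from a witness back to the archetype."""
    chain = []
    while witness in stemma:
        witness = stemma[witness]
        chain.append(witness)
    return chain


def common_ancestor(first, second, stemma):
    """Return the most recent exemplar shared by two witnesses, if any."""
    seen = set(ancestors(first, stemma))
    for exemplar in ancestors(second, stemma):
        if exemplar in seen:
            return exemplar
    return None


print(common_ancestor("A", "B", STEMMA))
print(common_ancestor("A", "C", STEMMA))
```

In this toy tree, witnesses from the same branch meet at their shared intermediary, while witnesses from different branches meet only at the archetype. That is the kind of relationship an editor weighs when deciding how much authority an agreement between witnesses carries.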

Another approach is the creation of diplomatic editions, which faithfully reproduce a specific manuscript, including its idiosyncrasies such as spelling variations, marginalia, and scribal errors. These editions prioritise historical authenticity over editorial correction and are often used for manuscripts with significant historical or cultural value. On the other hand, so-called ‘eclectic’ editing represents a more interpretive methodology, where editors select the ‘best’ readings from various manuscript sources to construct an ideal text. This method is common in critical editions of literary and religious texts, where the goal is to present a version that aligns with the editor’s judgment of authorial intent (Sahle 2016).

A critical apparatus, integral to many traditional editions, documents the editorial decisions and variant readings across sources. This allows readers to trace the editor’s reasoning and engage with the textual evidence directly. The apparatus serves as both a record of textual variants and a tool for scholarly debate.
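
As a rough illustration of how an apparatus records variants, the sketch below encodes one point of variation using the TEI elements `<app>`, `<lem>`, and `<rdg>`, and prints it in the conventional ‘lemma] variant sigla’ form. The sample line and the witness sigla A, B, and C are invented, and the Python helpers are hypothetical names introduced only for this example.

```python
import xml.etree.ElementTree as ET

TEI = "{http://www.tei-c.org/ns/1.0}"

# Invented sample: one line of text with a single point of variation.
SAMPLE = """
<l xmlns="http://www.tei-c.org/ns/1.0">The quick
  <app>
    <lem wit="#A">brown</lem>
    <rdg wit="#B #C">browne</rdg>
  </app>
  fox</l>
"""


def sigla(element):
    """Turn a wit attribute such as '#B #C' into ['B', 'C']."""
    return [pointer.lstrip("#") for pointer in element.get("wit", "").split()]


def apparatus_entries(xml_text):
    """Collect the lemma and variant readings of every <app> element."""
    root = ET.fromstring(xml_text.strip())
    entries = []
    for app in root.iter(TEI + "app"):
        lem = app.find(TEI + "lem")
        variants = [(rdg.text or "", sigla(rdg)) for rdg in app.findall(TEI + "rdg")]
        entries.append({"lemma": lem.text or "", "variants": variants})
    return entries


def format_entry(entry):
    """Render one entry in the traditional 'lemma] variant sigla' form."""
    variants = "; ".join(f"{text} {' '.join(wits)}" for text, wits in entry["variants"])
    return f"{entry['lemma']}] {variants}"


for entry in apparatus_entries(SAMPLE):
    print(format_entry(entry))
```

The encoding keeps the editor’s chosen reading and the rejected readings side by side, so the reader can see what was decided and on what evidence.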

Traditional methodologies have also been shaped by the goals of the editions themselves. For instance, variorum editions compile a comprehensive record of commentary, interpretations, and textual variants, making them valuable resources for understanding the reception history of a text. Meanwhile, facsimile editions provide high-quality reproductions of manuscripts, offering visual access to their original form.

While these methodologies laid the foundation for modern scholarly practice, they were not without limitations. Editors often grappled with incomplete or conflicting evidence, and their subjective decisions could introduce biases into the editions. Moreover, the constraints of print technologies meant that the rich interconnections between textual variants and commentary often had to be simplified, resulting in a loss of nuance. These foundational methodologies continue to inform the development of DSEs, which aim to overcome the limitations of print by leveraging the dynamic capabilities of the digital medium.

1.2.4 Challenges

Traditional scholarly editions, while invaluable, encountered significant challenges that hindered their effectiveness in representing and preserving complex texts. One of the foremost limitations was the linear format of print media. Texts with extensive variations, interlinear glosses, or marginal annotations were often simplified or truncated to fit the constraints of a printed page. This reduction compromised the ability of editions to fully represent the richness and complexity of source materials.

The reliance on physical archives created additional barriers to reproducibility and comparison. Scholars frequently had to travel to specific locations to consult rare manuscripts or editions, which not only required significant resources but also introduced geographical inequalities in research opportunities. Moreover, physical editions were vulnerable to environmental degradation, loss, and damage, further jeopardising the longevity of scholarly efforts (Baillot & Busch 2021).

Another persistent issue was the subjectivity of editorial decisions. Constructing a critical edition is an interpretative act: editors had to choose which variants to include, how to resolve discrepancies, and which annotations to prioritise. These decisions, while necessary, introduced biases that could skew the representation of a text and its historical context. The lack of transparency in some editorial processes exacerbated this problem, leaving readers without a clear understanding of the rationale behind key decisions.

Accessibility and dissemination posed another major challenge. High-quality scholarly editions were often produced in limited print runs, making them accessible only to researchers with access to specialised libraries. This exclusivity limited broader engagement with the texts, curbing their potential influence and scholarly utility. Furthermore, the prohibitive costs of production and acquisition meant that such editions were often financially inaccessible to smaller institutions and independent scholars.

Finally, traditional editions struggled with the fragmentation of resources. Related materials, such as commentary, facsimiles, and critical analyses, were often dispersed across multiple publications, requiring scholars to piece together information from disparate sources. This disjointed approach hindered comprehensive study and made it challenging to engage with a text holistically. These challenges underscored the need for innovative methodologies that could address the limitations of traditional practices. The advent of digital technologies promised solutions to many of these issues, paving the way for more accessible, dynamic, and integrated approaches to scholarly editing.

1.3 Current Trends in Digital Scholarly Editions

1.3.1 Towards the Digital

The transition from traditional scholarly editions to their digital counterparts began in the late twentieth century, fuelled by advancements in computing and information technology. One of the earliest steps in this evolution was the use of computer-assisted collation tools, which automated the labour-intensive process of comparing textual variants. This innovation allowed scholars to analyse large corpora of manuscripts more efficiently and with greater precision (Gilbert 1973).

Digital repositories emerged as another critical development in this transition. Projects like the Thesaurus Linguae Graecae (TLG) and the Perseus Digital Library provided scholars with centralised platforms for accessing and studying digitised texts.[3] These repositories not only enhanced accessibility but also facilitated comparative research by integrating tools for textual analysis.

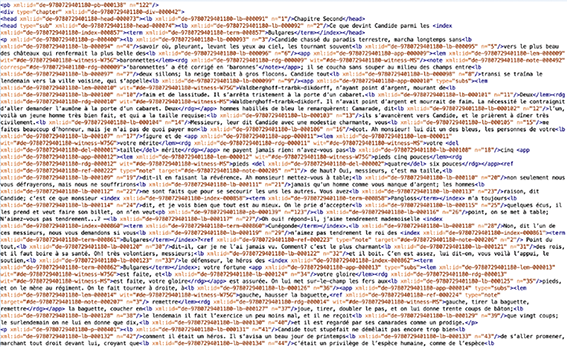

The rise of the Text Encoding Initiative (TEI) in the 1980s marked a watershed moment in the evolution of DSEs.[4] The TEI provided a standardised framework for encoding textual data (first in SGML markup and then, from 2002, in XML), enabling the consistent and interoperable representation of texts. This standardisation was crucial for ensuring that digital editions could be preserved, shared, and utilised across different platforms and research contexts (Burnard 2014).

Multimedia integration further distinguished digital editions from their print predecessors. High-resolution images of manuscripts, interactive annotations, and hypertextual links allowed users to engage with texts in new and dynamic ways. These features made it possible to represent complex textual relationships, such as parallel narratives or intertextual references, with greater clarity and depth (Van Mierlo 2022).

The shift towards digital editions was not without challenges, however. Ensuring the long-term preservation of digital resources such as DSEs required robust archival strategies, including the use of redundant storage systems and migration to newer formats as technology evolved. Additionally, the reliance on proprietary software and platforms raised concerns about sustainability and accessibility (Oltmanns et al. 2019). Despite these issues, the evolution towards the digital has fundamentally reshaped the landscape of textual scholarship and publishing.

1.3.2 Digital Scholarly Editions Today

Over the past two decades, DSEs have become central to contemporary textual scholarship, presenting significant opportunities and challenges as they adapt to both analogue and born-digital materials. Drawing on the foundational work of Peter Shillingsburg (1996), Hans Walter Gabler (2010), Amy Earhart (2012), Peter Robinson (2013), Elena Pierazzo (2016), Patrick Sahle (2016), and Paul Eggert (2019), as well as recent discussions by James O’Sullivan and Michael Pidd (2023), this section examines how DSEs can address current cultural, technical, and methodological demands while exploring future-oriented possibilities.

Sahle’s assertion that a true digital edition must utilise the unique affordances of the digital medium remains a cornerstone of scholarly discourse. A merely ‘digitised’ edition, that is, a static reproduction of a print artefact, is insufficient to capture the dynamism of digital scholarship. Instead, DSEs must go beyond remediation, becoming tools for interactivity, collaboration, and interpretation. As Sahle notes, a digital edition should be unprintable without losing significant functionality, underscoring its reliance on digital technology to reveal multiple textual dimensions (2017). Digital editions should also embrace the possibilities of hypertextuality, multimedia integration, and algorithmic manipulation, creating interactive environments that transcend the limitations of paper editions. This paradigm shift moves away from static representations towards dynamic, modular structures that facilitate continuous revision and reinterpretation (Earhart 2012).

According to Sahle, good DSEs often navigate between two editorial roles that exist in tension, one of representation and the other of critical engagement. Representation involves reproducing documents visually or textually, while critical engagement entails applying scholarly methods to enhance accessibility and usability. Historically, as we have seen, these practices were rooted in philological traditions, focusing on reconstructing authorial intention or ‘original’ versions of texts. Paul Eggert’s distinction between the ‘archival’ and ‘editorial’ impulses in scholarly editing functions in much the same way but provides perhaps a more nuanced framework for understanding DSEs today. Archival activities focus on the faithful recording and preservation of materials (Sahle’s ‘representation’), while editorial efforts mediate these materials for audience consumption (i.e., ‘critical engagement’). Eggert’s proposed ‘slider’ model for DSEs emphasises the interconnectedness of these roles, suggesting that DSEs must balance fidelity to source materials with accessibility and usability for diverse audiences on a continuum rather than in a linear fashion (2019, pp. 83–89).

The interplay between the archival and editorial impulses highlights the complexity of DSEs and their intended readerships. Archival editions are document-facing, ensuring a faithful transcription of source materials and unhindered access to originals. Editorial texts, in contrast, are audience-facing, seeking to interpret and contextualise materials for usability and clarity. This distinction is fluid, with projects often integrating both impulses to varying degrees. For example, the Rossetti Archive and the Walt Whitman Archive demonstrate how archival fidelity can coexist with reader-oriented interactivity, creating hybrid models that enrich scholarly engagement.[5]

Another critical characteristic of DSEs is their customisability. Users can interact with the text in ways that suit their specific research needs. For instance, scholars can toggle between different textual layers, such as diplomatic transcriptions and critical editions, or view textual variants and annotations in parallel (Ohge 2021). This flexibility makes DSEs versatile tools for a wide range of academic inquiries. Certain DSEs can furthermore expand this interactivity to emphasise collaboration and crowdsourcing, leveraging digital platforms to involve broader communities in the editorial process (Terras 2016). Tools such as MediaWiki, Zooniverse, and GitHub, or bespoke platforms for crowdsourced transcription, allow researchers, students, and even non-specialist enthusiasts to contribute to the creation and enhancement of digital editions.[6] This participatory approach not only democratises access to scholarly work but also accelerates the completion of large-scale editorial projects.
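
Toggling between textual layers can be sketched in a few lines of Python. The example assumes a transcription in which each regularised spelling is paired with the original by means of the TEI `<choice>`, `<orig>`, and `<reg>` elements; the sample sentence is invented, and `render` is a hypothetical helper rather than part of any existing edition platform.

```python
import xml.etree.ElementTree as ET

TEI = "{http://www.tei-c.org/ns/1.0}"

# Invented sample: original spellings paired with regularised forms.
SAMPLE = (
    '<p xmlns="http://www.tei-c.org/ns/1.0">He '
    "<choice><orig>shew'd</orig><reg>showed</reg></choice>"
    " the <choice><orig>publick</orig><reg>public</reg></choice> his work.</p>"
)


def render(element, layer):
    """Flatten an element to text, keeping one child ('orig' or 'reg') per <choice>."""
    parts = [element.text or ""]
    for child in element:
        if child.tag == TEI + "choice":
            chosen = child.find(TEI + layer)
            if chosen is not None:
                parts.append(render(chosen, layer))
        else:
            parts.append(render(child, layer))
        # Text that follows the child element belongs to the parent's flow.
        parts.append(child.tail or "")
    return "".join(parts)


paragraph = ET.fromstring(SAMPLE)
print(render(paragraph, "orig"))  # diplomatic layer
print(render(paragraph, "reg"))   # regularised layer
```

Both views are generated from the same encoded source, which is what allows an interface to offer the switch without maintaining two separate texts.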

Finally, DSEs should prioritise accessibility and sustainability. By making texts available online, they reach a global audience, breaking down geographical and financial barriers to scholarly resources. Many digital editions are open access, aligning with the growing emphasis on equitable access to knowledge and publicly funded research outputs. Furthermore, efforts to ensure the long-term preservation of DSEs, such as adherence to open standards like TEI-XML and partnerships with digital archives, seek to address concerns about the ephemeral nature of digital technologies.[7]

1.3.3 Technological Foundations

The backbone of DSEs is built upon robust technological frameworks that facilitate their dynamic and interactive nature. Among the most important of these is the TEI, which has become the lingua franca for encoding texts in a structured and machine-readable format, enabling both interoperability and preservation. The TEI schemas capture the physical, logical, and semantic structures of texts, accommodating various editorial approaches (Burnard 2014). However, the reliance on TEI has also reinforced book-oriented tropes, limiting the exploration of born-digital materials that challenge traditional textual hierarchies (O’Sullivan & Pidd 2023).

Automated collation tools, such as Collate, CollateX, and HyperCollate, have become essential components in the modern editorial workflow.[8] These tools facilitate the comparison of multiple textual witnesses, automating the process of identifying textual variants and generating alignment visualisations (Haentjens Dekker et al. 2015). This not only saves scholars considerable time compared to traditional manual collation but also ensures precision and repeatability in results. As highlighted by Robinson (2013), such tools represent a shift in scholarly editing towards computational efficiency, enabling editors to focus on interpretative tasks rather than repetitive technical labour.
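
The basic operation that such tools automate, aligning two witnesses and reporting where they differ, can be illustrated with Python’s standard `difflib` module. This is a deliberately simple stand-in and not the algorithm used by Collate, CollateX, or HyperCollate; the two witness texts are invented, and `collate` is a hypothetical helper.

```python
from difflib import SequenceMatcher


def collate(base, other):
    """Align two witnesses word by word and return the points of variation."""
    base_tokens = base.split()
    other_tokens = other.split()
    matcher = SequenceMatcher(a=base_tokens, b=other_tokens, autojunk=False)
    variants = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            # tag is 'replace', 'delete', or 'insert', relative to the base text.
            variants.append(
                (tag, " ".join(base_tokens[i1:i2]), " ".join(other_tokens[j1:j2]))
            )
    return variants


# Invented witnesses differing in spelling, wording, and an omission.
witness_a = "the quick brown fox jumps over the lazy dog"
witness_b = "the quick browne fox leaps over the dog"

for variant in collate(witness_a, witness_b):
    print(variant)
```

A dedicated collation tool goes well beyond this: it handles more than two witnesses, normalises spelling before comparison, and detects transposed passages. The principle of repeatable, machine-produced alignment is nevertheless the same.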

More recently, the International Image Interoperability Framework (IIIF) has become indispensable for DSEs incorporating high-resolution manuscript images.[9] The IIIF provides a standardised protocol for accessing, annotating, and manipulating visual materials, ensuring compatibility across platforms. Features such as deep zooming, annotation layers, and comparative views allow users to closely examine the intricate details of digitised artefacts.
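
To give a sense of how this standardisation works in practice, the sketch below builds request addresses following the URI pattern of the IIIF Image API, in which the identifier is followed by region, size, rotation, quality, and format. The server address and the image identifier are placeholders invented for the example, and `iiif_image_url` is a hypothetical helper.

```python
from urllib.parse import quote


def iiif_image_url(base, identifier, region="full", size="max",
                   rotation="0", quality="default", fmt="jpg"):
    """Build a URL of the form {base}/{identifier}/{region}/{size}/{rotation}/{quality}.{format}."""
    # The identifier must be percent-encoded, including any slashes it contains.
    encoded = quote(identifier, safe="")
    return f"{base.rstrip('/')}/{encoded}/{region}/{size}/{rotation}/{quality}.{fmt}"


# Placeholder server and identifier, assumed for illustration only.
BASE = "https://iiif.example.org/image/3"
PAGE = "ms-042/f12r"

# The whole page at the largest size the server offers.
print(iiif_image_url(BASE, PAGE))

# A detail: the region x=1000, y=800, width=600, height=400 in pixels.
print(iiif_image_url(BASE, PAGE, region="1000,800,600,400"))
```

Because any compliant server answers the same pattern of request, a viewer can zoom into or compare images held by different institutions without custom code for each one.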

Open-source platforms such as Omeka, Scalar, EVT (Edition Visualisation Technology), and TEI-Publisher empower scholars to develop customised digital editions without requiring extensive technical expertise.[10] These platforms offer modular features for embedding multimedia, linking external datasets, and designing user-centric interfaces. Their adaptability allows projects to cater to diverse audiences, from academic researchers to public users, ensuring that DSEs remain inclusive and accessible. By democratising the technical aspects of digital editing, these tools have expanded the range of participants who can contribute to the creation of scholarly editions.

High-performance computing solutions have further transformed the scalability and reach of DSEs over the past several years. These technologies enable projects to handle large datasets and to implement advanced features such as machine learning-driven text analysis. As the demand for interactivity, responsiveness, and data-intensive features grows, computationally intensive infrastructure will be needed to support cutting-edge digital editions. Moreover, advances in data visualisation and user interaction have introduced new dimensions to DSEs. From dynamic mapping tools to interactive timelines, these innovations enhance the interpretative possibilities of digital texts. They also address the above-mentioned tension between the archival and editorial impulses by presenting data in ways that serve both preservationist and interpretative goals.

Digital scholarly editions are also beginning to explore the potential of augmented reality (AR) and virtual reality (VR) to create immersive experiences. These technologies allow users to engage with texts and historical contexts in entirely new ways. For example, a virtual reconstruction of a medieval scriptorium might enable users to ‘walk through’ the environment where manuscripts were created, while VR annotations provide a spatial and interactive approach to textual commentary. These applications are still emerging, but they represent an exciting frontier for experiential and contextual scholarship (O’Sullivan & Pidd Reference O’Sullivan and Pidd2023).

Digital editions are increasingly prioritising inclusive and accessible design, ensuring that their resources reach diverse audiences. Features such as text-to-speech functionality, multilingual interfaces, colour-contrast adjustments, and keyboard navigability ensure that digital editions accommodate users with varying needs, including those with disabilities. By adopting inclusive practices, DSEs extend the impact and usability of their work to a global audience (Martinez et al. Reference Martinez, Dillen, Bleeker, Sichani and Kelly2019).Footnote 11 These innovative practices highlight how DSEs are not merely digital reproductions of print editions but complex scholarly objects in their own right.

1.3.4 Challenges and Critiques

Despite the significant advancements in DSEs, several challenges and critiques have emerged, reflecting both technical and conceptual issues. These challenges underscore the need for thoughtful innovation, critical reflection, and interdisciplinary collaboration. For example, while many DSEs have successfully reimagined their analogue sources, they have largely retained book-like structures and methodologies. O’Sullivan and Pidd argue that this conservatism limits DSEs’ potential, particularly when dealing with born-digital materials such as social media posts, digital fiction, and video games (2023). These materials demand a rethinking of editorial practices to reflect their non-linear, multimodal, and platform-dependent characteristics (Bekius & Van Hulle Reference Bekius, Van Hulle, Beloborodova and Van Hulle2024). Born-digital texts often lack fixed boundaries, existing instead as fluid and interactive entities that challenge traditional notions of textual stability.

The integration of these born-digital materials – content created and consumed entirely within digital ecosystems – is one of the most pressing challenges for DSEs. Tweets, blogs, and e-literature defy conventional editorial frameworks, requiring tools and methods that can address temporality, interactivity, and contextuality. Projects like Digital Fiction Curios and Pathfinders exemplify innovative approaches to editing born-digital texts.Footnote 12 Digital Fiction Curios creates immersive virtual environments to showcase early e-literature, while Pathfinders records the traversal of hypertext works on legacy systems. These models highlight the necessity of embracing impermanence and fluidity, acknowledging that some aspects of digital materials – such as their original interactive platforms – may be irretrievable (O’Sullivan & Pidd Reference O’Sullivan and Pidd2023).

Digital scholarly editions, as with all digital resources, face the persistent challenge of long-term preservation. Unlike printed editions, digital formats are vulnerable to technological obsolescence, requiring continuous updates and migrations to new platforms. The lack of standardised strategies for archiving DSEs exacerbates this problem, as projects often rely on temporary funding and institution-specific infrastructures. Ensuring the longevity of DSEs demands robust and scalable preservation strategies, including partnerships with digital repositories and adherence to open standards such as TEI-XML.Footnote 13 Similarly, IIIF protocols have been instrumental in addressing some of these issues, but broader institutional and financial commitments are necessary to secure our growing digital heritage (Orlandi & Marsili Reference Orlandi and Marsili2019).

While DSEs aim to enhance access to scholarly materials, global disparities persist in technological infrastructure and digital literacy. Scholars and institutions in resource-limited settings often struggle to access high-resolution images or advanced functionalities due to bandwidth limitations or lack of access to modern devices (Ragnedda & Gladkova Reference Ragnedda and Gladkova2020). Furthermore, the dominance of English-language interfaces and metadata tends to marginalise non-English-speaking users, restricting global engagement with DSEs. Addressing these inequities requires multilingual interfaces, localised resources, and open-access policies that can further democratise digital scholarship.Footnote 14

The reliance on advanced technical skills, such as XML encoding, digital collation, and data visualisation, also creates barriers for scholars trained in traditional methodologies. Many researchers lack the resources or time to acquire these skills, limiting their ability to contribute to or benefit from DSEs fully. This issue underscores the importance of developing user-friendly tools and platforms that minimise the technical burden (Franzini et al. Reference Franzini, Terras and Mahony2019). For instance, platforms like TEI-Publisher have made strides in enabling non-technical users to create and manage digital editions, but more accessible solutions are needed to bridge the gap further.

The massive digitisation of texts raises complex questions about intellectual property rights, particularly for modern works or texts under copyright. Navigating these legal frameworks can be cumbersome and often restricts the scope of what can be included in a DSE. Open-access initiatives help alleviate some of these issues, but they also face resistance from publishers and other stakeholders. Crowdsourcing transcription and annotation has also introduced ethical questions about labour and attribution (Terras Reference Terras, Schreibman, Siemens and Unsworth2016). Contributors, particularly non-specialists, may not receive adequate recognition or compensation for their work. Furthermore, digitising sensitive or culturally significant texts demands careful consideration of the communities and traditions associated with those materials to avoid exploitation or misrepresentation (Risam Reference Risam2018).

Finally, some critics argue that DSEs, despite their technological advantages, often fail to capture the tactile and material qualities of physical texts. Elements such as paper texture, ink composition, and marginal annotations carry significant scholarly value that digital representations may overlook or inadequately replicate. While high-resolution imaging and interactive tools can approximate these qualities, the sensory experience of engaging with a physical artefact remains difficult to reproduce. These various challenges highlight the need for ongoing innovation and critical reflection within the field of digital scholarly editing. Addressing these issues will ensure that DSEs continue to serve as transformative tools for textual scholarship, balancing the preservation of cultural heritage with the demands of a rapidly evolving digital landscape.

1.4 Future Directions in Digital Scholarly Editions

1.4.1 AI and Machine Learning

Artificial intelligence (AI) and machine learning (ML) are increasingly central to the future of the digital humanities and, by extension, DSEs, offering new techniques and approaches for the digitisation, analysis, and dissemination of texts and text traditions. These technologies have begun to revolutionise the way scholars approach traditional problems in textual studies, including transcription, collation, and annotation (Jannidis Reference Jannidis, Cohen, Price and Bernardini2025).

One of the most impactful applications of AI lies in automating the transcription of historical documents. Tools such as Transkribus leverage neural networks trained on diverse handwriting styles to convert manuscripts into digital text with remarkable accuracy.Footnote 15 These tools dramatically reduce the time and labour required to transcribe large collections, while their adaptive algorithms improve continuously as they process more data. For instance, early trials with Transkribus demonstrated its ability to transcribe seventeenth-century handwritten texts with over 90 per cent accuracy, a result that has since been refined with larger datasets and more sophisticated models (Nockels et al. Reference Nockels, Gooding and Terras2024). Such advancements make it feasible to work with previously inaccessible or underexplored archives.

AI also plays a critical role in the collation of textual variants. Machine learning algorithms can identify and align variations across multiple manuscript witnesses, providing comprehensive visualisations of textual relationships. This capability supports more precise reconstructions of original texts and offers new insights into the evolution of works over time (Camps et al. Reference Camps, Clérice and Pinche2021). CollateX, for example, an advanced collation tool mentioned earlier, has been integrated with some rudimentary machine-learning capacities to improve its ability to handle complex textual traditions, such as those found in medieval European literature, early biblical manuscripts, or even modern genetic editions (Van Hulle Reference Van Hulle2016; Whittle et al. Reference Whittle, O’Sullivan, Pidd and Hegland2023).
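The core operation behind collation, aligning two witnesses and isolating where they diverge, can be sketched in a few lines. The example below uses `difflib.SequenceMatcher` from the Python standard library as a stand-in. It is not the algorithm used by CollateX or by any machine-learning system, it handles only two witnesses, and the sample readings are invented for illustration.

```python
from difflib import SequenceMatcher


def collate(witness_a, witness_b):
    """Align two witnesses word by word.

    Returns a list of (tag, reading_a, reading_b) tuples, where tag is
    one of 'equal', 'replace', 'delete' or 'insert'.
    """
    a, b = witness_a.split(), witness_b.split()
    # autojunk=False switches off a heuristic that can skip frequent words.
    matcher = SequenceMatcher(a=a, b=b, autojunk=False)
    return [
        (tag, " ".join(a[i1:i2]), " ".join(b[j1:j2]))
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
    ]


def variants(rows):
    """Keep only the places where the two witnesses differ."""
    return [row for row in rows if row[0] != "equal"]


# Invented readings from two hypothetical witnesses.
rows = collate("the quick brown fox jumps", "the quick red fox leaps high")
for tag, reading_a, reading_b in variants(rows):
    print(f"{tag}: {reading_a!r} / {reading_b!r}")
```

Real collation tools add what this sketch leaves out: normalisation of spelling and punctuation, detection of transposed passages, and alignment across many witnesses at once.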

Beyond transcription and collation, AI enhances the semantic analysis of texts through natural language processing (NLP). NLP techniques allow researchers to extract themes, map relationships, and analyse stylistic changes across extensive corpora (Jänicke et al. Reference Jänicke, Franzini, Cheema and Scheuermann2017). For instance, sentiment analysis and topic modelling have been applied to large datasets, revealing patterns of cultural and linguistic evolution that would be difficult to detect manually (Galleron et al. Reference Galleron, Patras, Arias and Tanasescu2024). NLP also facilitates multilingual studies, enabling the alignment and comparison of texts across different languages and scripts (Levchenko Reference Levchenko2024).

The annotation of texts has also benefited significantly from AI-driven tools. Automated systems can identify and tag entities such as names, dates, and places, linking them to relevant databases or external resources (Humbel et al. Reference Humbel, Nyhan, Vlachidis, Sloan and Ortolja-Baird2021). This not only streamlines the editorial process but also enriches the contextual layers of DSEs, offering users a deeper understanding of the material. For example, projects such as the Recogito platform have employed AI to annotate geographical and historical references in ancient texts, making them accessible to both scholars and the general public.Footnote 16

Another frontier for AI in DSEs is predictive restoration. By training algorithms on extensive datasets of similar texts, AI can reconstruct missing or damaged sections of manuscripts with a high degree of probability (Humbel et al. Reference Humbel, Nyhan, Vlachidis, Sloan and Ortolja-Baird2021). This technique has already been applied to fragmentary papyri and inscriptions, where it has provided reconstructions that align closely with scholarly expectations (Sommerschield et al. Reference Sommerschield, Assael and Pavlopoulos2023). The implications for archaeology and palaeography are profound, as these tools extend the reach of traditional methods.

Artificial intelligence and ML also support collaborative and participatory models in digital scholarship. Tools that validate crowdsourced data or assist non-experts in contributing to DSE projects are becoming more common. For example, AI-driven quality control systems ensure the accuracy of community-generated transcriptions and annotations, allowing large-scale projects to harness the collective efforts of diverse participants without compromising scholarly standards (Mahotra Reference Mahotra and Majchrzak2024).

As AI and ML continue to evolve, their integration into DSEs promises to redefine the landscape of textual scholarship. These technologies not only accelerate traditional workflows but also open entirely new avenues for exploration and interpretation, offering tools that were unimaginable even a decade ago. The ethical and methodological implications of these advancements, however, warrant careful consideration, ensuring that the use of AI aligns with the principles of transparency, inclusivity, and scholarly rigour.

1.4.2 Interdisciplinary Collaboration

The future of scholarly editing thus lies in fostering interdisciplinary collaborations that bridge the gap between textual studies, computer science, design, and the wider digital humanities. Such collaborations enable the integration of expertise from diverse fields to address the multifaceted challenges of creating and sustaining the next generation of DSEs. Textual criticism can benefit from advanced computational methods such as machine learning algorithms for textual analysis, while computer scientists can gain access to complex, real-world datasets to refine their tools and techniques (Van Hulle Reference Van Hulle, Eliot and Rose2019). This reciprocal relationship not only enhances the capabilities of DSEs but also drives research in computational linguistics and AI (Van Zundert Reference Van Zundert, Driscoll and Pierazzo2016).

Another crucial collaboration needs to be established between digital designers and humanities scholars. User experience (UX) design and interface development play an essential role in ensuring that DSEs are accessible and intuitive for a broad audience (Andrews & Van Zundert Reference Andrews, Van Zundert, Bleier, Bürgermeister, Klug, Neuber and Schneider2018). By integrating feedback from textual scholars and end-users, designers can create platforms that balance aesthetic appeal with functional precision (Wheeles Reference Wheeles2010). Such designs encourage engagement and enable users to explore texts in innovative ways (Schofield et al. Reference Schofield, Whitelaw and Kirk2017). The involvement of historians and cultural theorists further enriches the development of DSEs. These experts provide critical insights into the context and interpretation of historical texts, ensuring that digital representations honour the cultural significance of the originals. Collaborative projects often benefit from ethnographic and historiographic perspectives, which guide the ethical curation of sensitive materials and marginalised voices.

Moreover, interdisciplinary collaborations extend to the preservation and dissemination of digital editions. Archivists, librarians, and information scientists contribute essential expertise in metadata standards, cataloguing, and digital preservation strategies. Their involvement ensures that DSEs remain accessible and viable for future generations, addressing concerns about technological obsolescence and data decay. The success of interdisciplinary collaboration also depends on effective project management and communication strategies. Shared digital platforms, regular cross-disciplinary workshops, and open-access publications foster a culture of transparency and inclusivity. Funding agencies increasingly recognise the value of interdisciplinary research, encouraging projects that bring together diverse perspectives to tackle complex problems.

1.4.3 Sustainability

As mentioned, the sustainability of DSEs is one of the most pressing challenges facing the field. Unlike traditional print editions, DSEs require continuous technical maintenance, software updates, and server hosting to remain accessible over time. Ensuring that these projects are not only initiated but also maintained for future generations demands innovative funding models and institutional support (Barats et al. Reference Barats, Schafer and Fickers2020).

One key approach to sustainability is the adoption of open standards and interoperable formats, such as TEI-XML for text encoding and IIIF for image delivery. These standards ensure that DSEs are not tied to proprietary systems, reducing the risk of obsolescence as technologies evolve. Collaborative efforts to establish and adhere to such standards across institutions can help create a more sustainable ecosystem for digital scholarship. Notably, tools like EditionCrafter combine IIIF and static site generation to create lightweight, flexible, and maintainable digital editions, reflecting broader interest in minimal computing strategies.Footnote 17
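One way to see why an open format such as TEI-XML lowers the risk of lock-in is that its content can be read with nothing more than a general-purpose XML parser. The sketch below uses Python's standard library to extract readings from a small critical-apparatus fragment built from the TEI `app`, `lem`, and `rdg` elements. The sentence, its variant, and the witness sigla `#A` and `#B` are all invented for illustration, and the `readings` helper is an assumption of this example, not part of any TEI toolkit.

```python
import xml.etree.ElementTree as ET

# The TEI namespace, mapped to a prefix for use in search paths.
TEI_NS = {"tei": "http://www.tei-c.org/ns/1.0"}

# Invented fragment: one variation point recorded for two hypothetical witnesses.
SAMPLE = """<p xmlns="http://www.tei-c.org/ns/1.0">The garden was
<app><lem wit="#A">small</lem><rdg wit="#B">modest</rdg></app> but well kept.</p>"""


def readings(xml_text):
    """Return one dict per <app> element, mapping each witness siglum
    to a (kind, text) pair, where kind is 'lem' or 'rdg'."""
    root = ET.fromstring(xml_text)
    result = []
    for app in root.iterfind(".//tei:app", TEI_NS):
        entry = {}
        for child in app:
            # Tags are stored as "{namespace}name"; keep only the name.
            kind = child.tag.rpartition("}")[2]
            if kind in ("lem", "rdg"):
                entry[child.get("wit")] = (kind, (child.text or "").strip())
        result.append(entry)
    return result


print(readings(SAMPLE))
```

The same file could equally be processed by XSLT, by a static site generator, or by software not yet written, which is the practical sense in which an open standard outlives any single platform.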

Minimal computing has emerged as a particularly relevant trend, emphasising low-tech, sustainable approaches to digital scholarship that minimise environmental impact and dependence on complex infrastructure. This movement, promoted by groups like the Digital Humanities Climate Coalition and the Software Sustainability Institute, highlights the ethical dimensions of digital production and maintenance, encouraging projects to adopt efficient, resource-conscious methods.Footnote 18

Institutional partnerships are another critical component of sustainability. Universities, libraries, and cultural heritage organisations play a vital role in providing the infrastructure and expertise needed to support DSEs. Long-term agreements between these institutions and project teams can ensure the continuity of resources and knowledge transfer. In some cases, digital preservation initiatives, such as LOCKSS (Lots of Copies Keep Stuff Safe), provide distributed storage solutions that safeguard against data loss.Footnote 19

Funding models for DSEs must also evolve to address their unique requirements. Traditional grants, often limited in duration, are insufficient for the ongoing maintenance of digital projects (VandeCreek Reference VandeCreek2022). Instead, hybrid funding models that combine public grants, private sponsorships, and crowdfunding initiatives offer a more sustainable solution. Public–private partnerships, where corporations provide financial or technical support in exchange for visibility or collaboration opportunities, have also proven effective in sustaining large-scale projects.

Open-access publishing models have emerged as both an ethical imperative and a practical strategy for sustainability. By making DSEs freely available, creators can attract a larger user base, which in turn generates more opportunities for collaborative input and potential funding. However, open access requires alternative revenue streams, such as institutional support or subscription-based premium features for advanced tools. Ethical considerations also extend to issues of data governance and representation. The CARE principles, for example, advocate for collective benefit, authority to control, responsibility, and ethics in relation to Indigenous data – principles that may be productively applied to DSE design and management.Footnote 20

Finally, integrating sustainability planning into the early stages of project design is essential. Grant applications and project proposals should include detailed plans for long-term funding, preservation strategies, and scalability. By proactively addressing these issues, project teams can mitigate the risks associated with funding gaps or technological obsolescence. The sustainability of DSEs ultimately depends on the collective efforts of scholars, institutions, funders, and users. By embracing innovative funding models and prioritising open standards and collaborative practices, the field can ensure that DSEs remain vibrant and accessible resources well into the future.

With these issues and future directions in mind, we turn now from the general to the particular, exploring how the Oxford University Voltaire project encapsulates and addresses many of the methodological and technical challenges outlined above.

2 Making the Corpus: From Print to Digital

If we want a ‘complete’ Voltaire, it seems obvious that we should gather together everything he wrote. But the task is by no means as simple as it might at first appear. To begin with, how do we define the corpus of any writer? Are there items that should be excluded? Then in the case of Voltaire in particular, there are two major complicating factors. Firstly, Voltaire wrote an enormous amount: he was a literary celebrity who held his reading public for a period of sixty years, from his first play Œdipe, first performed in 1718, until his death in 1778. His 2,000 or so published works total around fifteen million words. The sheer quantity of his writings presents a challenge. Secondly, Voltaire was constantly embroiled in controversy and subject to political and religious censorship: it is much harder to pin down a corpus that has become slippery and fragmented through being argued about over a lengthy period. In the face of such an enormous body of controversial writings, it is difficult not to be influenced by the weight of tradition, and difficult also to imagine how it might be shaped differently.

2.1 Collecting Voltaire’s Works in Print

Voltaire published a number of ‘collected’ editions of his writings, but these were never complete in the modern sense of aiming to include every single work: it was normal practice in the eighteenth century for a well-known author to put together an authorised edition that established the corpus that they wished to pass on to posterity; and this might well exclude so-called minor works, or works that for one reason or another were deemed to be compromising for the author’s posthumous reputation (Cronk Reference Cronk, Didier, Neefs and Rolet2012b; Cronk Reference Cronk2023).

The first serious attempt to bring together all of Voltaire’s works took place a few years after his death. This edition, managed under the editorial direction of the philosopher Condorcet and financed by the playwright Beaumarchais, was published between 1784 and 1789 in seventy volumes at Kehl, a small German town just across the Rhine from Strasbourg – for political reasons, the books could not be printed on French soil. The so-called ‘Kehl edition’ was an ambitious undertaking, and it constituted a serious attempt to establish a ‘canonical’ Voltaire. It grouped the works by genre, and for the first time collected a corpus of some 4,500 letters, in effect inventing Voltaire’s correspondence (he had never in his lifetime systematically published his letters together). The result of enormous labour and conceived deliberately as a monument to the celebrity author, this is still not quite a complete edition in the modern sense: certain works, such as an early poem ‘tainted’ with Christian belief, are omitted because they do not fit the ideological narrative that the editors wished to present. More importantly, the very large number of short prose ‘chapters’ published in various collections – in many ways Voltaire’s most characteristic polemical works – are grouped together in one long alphabetical sequence, obscuring the integrity of their original publication in different forms and at different times. Some texts are seriously deformed in the Kehl edition, while others are simply missing; works from different periods are mixed together; many texts are undated or are dated inaccurately. Most importantly, this is not a critical or scholarly edition in the modern sense: the text is not established on the basis of a specific edition, explanatory notes are minimal, and very few variants to the text are recorded (and in the case of Voltaire, whose works regularly appeared in multiple editions, variants are often numerous, long and important).

The Kehl edition is a remarkable cultural object and an extraordinary publishing achievement. The edition necessarily reflects the editing practices of its period, so it would be pointless to complain that it lacks the qualities of a modern critical edition. It proved to be hugely influential, for good and for ill. The Kehl edition in effect created the Voltaire that was known to the generation of the French Revolution. Later, in the nineteenth century, there was huge demand for editions of Voltaire, yet publishers understandably recoiled at the cost of reorganising such an enormous corpus, and inevitably they preferred to follow the Kehl edition, in the process recycling its assumptions without questioning them. During the period of the Restoration (1815–1830), the name of Voltaire enjoyed iconic importance in the new political landscape, and there were several dozen complete editions of Voltaire. These editions each added newly found letters, along with a few other minor texts that had turned up in manuscript, but fundamentally they all followed the Kehl template. The one editor of this period to try to impose a new order on the inherited Voltairean corpus was Adrien Beuchot, who made some modest improvements to the Kehl edition, for example, by establishing the Lettres philosophiques as a free-standing text.

After the collective editions of Voltaire’s lifetime, which were deliberately not complete, the collective editions that appeared following his death, from Kehl onwards, were ever-expanding, as more manuscripts, in particular letters, came to light. The last complete edition of the nineteenth century was edited by Louis Moland and appeared in fifty-two volumes in the early years of the Third Republic (1877–1885), at a time when Voltairean values were key to the fledgling French Republic, which had come into being following the defeat at the hands of the Prussians in 1870. The Moland edition significantly increased the number of known Voltaire letters, but in other respects, it again largely followed the pattern of the Kehl edition as revised by Beuchot in the 1830s. Throughout the twentieth century, the Moland edition remained, faute de mieux, the standard edition of reference.

2.2 The Complete Works of Voltaire/Œuvres complètes de Voltaire (1968–2022)

There were many oddities and eccentricities about the way in which Voltaire’s texts were handled, and mishandled, over the years in these collective editions. From the early twentieth century, when Voltaire began to be taught in universities, there began to appear scholarly editions of individual texts, such as the Lettres philosophiques (edited by Gustave Lanson, 1909) and Candide (edited by André Morize, 1913). But Voltaire scholars who wanted to venture beyond this narrow selection were still obliged to use the Moland edition, and given the imposing bulk of the corpus, it was immensely difficult to avoid earlier editorial choices, and all but impossible to challenge the traditional Voltairean canon, which in its essentials had remained unchanged since the Kehl edition in the late eighteenth century.

What was needed was a completely new edition, built on first principles, but this posed formidable challenges. Firstly, the work involved, and therefore the cost, would be considerable; and secondly, even in the twentieth century, Voltaire remained an awkward and controversial figure in France, especially for Catholics. It is not surprising then that when finally, in the 1960s, plans were laid to produce a completely new edition of the totality of Voltaire’s writings, the initiative did not originate in France. It was two English academics, W. H. Barber and O. R. Taylor, both distinguished specialists of Voltaire in the University of London, who in 1967 approached Theodore Besterman (1904–1976), a Voltaire scholar of independent means, and asked for his support in launching a new edition. Besterman himself had recently produced a 118-volume edition of Voltaire’s correspondence (1953–1964), a pioneering achievement that had been widely acclaimed, and he agreed to finance a new Complete Works of Voltaire and to become its general editor. The volumes of the new edition began to appear from 1968, and when Besterman died in 1976, he left a bequest enabling the project to continue at the Voltaire Foundation at the University of Oxford. Work on the so-called Oxford edition, now usually referred to by its acronym OCV, involved over 200 collaborators and was finally brought to completion in 2022, in 205 volumes.

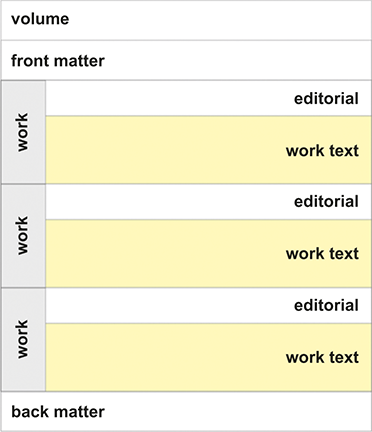

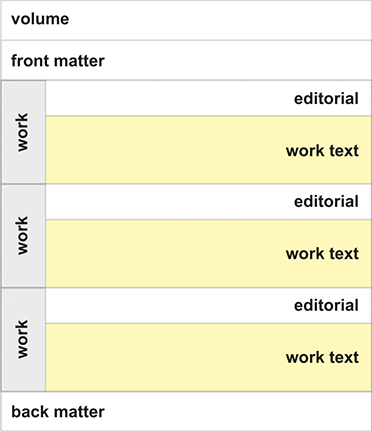

The Oxford edition was the first to go back to the drawing board and to construct Voltaire’s collected works from scratch, without being influenced either by what Voltaire had done in his lifetime, or by the powerful posthumous editorial tradition created by the Kehl editors. It was also the first complete full-scale critical edition, and this is important, given that Voltaire repeatedly revised and rewrote his texts, so that many works exist in multiple versions. For each work, it was necessary to draw up, in so far as possible, a full list of known manuscripts, and a full description of all known print editions dating from Voltaire’s lifetime, identifying those that seem to have been published with his approval (although this is by no means always clear). Given that no library in the world has anything approaching all the editions of Voltaire’s writings, this was an enormous undertaking; the work became somewhat easier in later years, as digitised library catalogues became increasingly common. A copy text was selected for each work, that is to say, one of the versions thought to best represent the work in question, and then all the significant variants drawn from the other witnesses supposedly overseen by Voltaire were carefully noted. This gives readers access to the full extent of a work in all its permutations and allows them to understand how a given text evolved over time. In addition, the editor often provides extensive explanatory notes to the text. Preceding the text, there is a scholarly introduction to the work, placing it in its broader literary and cultural context, and also a full list of manuscripts and printed editions, including full bibliographical descriptions and appendices. Following the text, there is typically a list of works cited and a detailed index of names.

With such a large corpus, the question of how to order the texts is a challenging one. In all the complete editions of Voltaire from Kehl to Moland, the practice had been the same: to group the works by genre. The editions would routinely begin with volumes dedicated to theatre and to epic poetry, then followed the histories, other miscellaneous prose works, the shorter verse and finally, always at the end, the correspondence. This arrangement had the advantage of familiarity and apparent simplicity, but the disadvantage of rather downplaying the importance of the shorter prose works, which do not easily fit into preconceived generic categories (which is one reason of course why we now admire them). Any classification that ends up with so many of the most significant works clustered in some sort of ill-defined ‘miscellany’ looks suspiciously inadequate. The founding editors of the Oxford edition decided to try something different, and to arrange the texts, regardless of genre, by date; to be precise, by the date when the work was thought to have been substantially composed, rather than by the date of first publication (which can be stated with greater reliability). This bold innovation had important practical consequences: because a single volume now included works of different genres, with numerous editors contributing to it, the preparation of the volumes turned out to be slower than might otherwise have been the case. Academically, however, this was a significant advance: the arrangement of works within the Oxford edition shows clearly how Voltaire worked on different projects simultaneously, a phenomenon that has been called ‘creative concurrence’ (Van Hulle Reference Van Hulle2021); and such an arrangement further avoids the trap of separating writings into ‘major’ and ‘minor’ categories, especially important with this author, where it is the seemingly lesser works, like Candide, that posterity has judged to be among the most important in his œuvre.

The Oxford editors resolved, of course, to include in the edition everything that Voltaire wrote, and that list of works was largely established before they began their work. There could no longer be any question, for example, of quietly suppressing writings that were for whatever reason found to be embarrassing, as had happened in the early editions. In his edition of the correspondence, Besterman had preferred maximal inclusion: he rightly included letters addressed to Voltaire (often essential for understanding replies) and even letters written by third parties containing significant information about Voltaire. There are many quasi-legal documents and financial papers that are not strictly letters but still of interest in charting Voltaire’s life, and these Besterman includes in a long series of appendices to the correspondence.

In English studies, the term ‘unediting’ is sometimes used to describe how editorial choices and procedures in the early-modern period that result from ideological or cultural prejudice can often stifle significant or innovative aspects of the text (Marcus 1996); we can find clear parallels to this phenomenon in early editions of Voltaire. In one key respect, the new Oxford corpus differs from its predecessors, for the simple reason that a number of works published in Voltaire’s lifetime – and significant works at that – ‘disappeared’ in the Kehl edition, including individual works such as the Lettres philosophiques (1733/1734) or the Questions sur l’Encyclopédie (1770–1772), both of which were dismantled by the Kehl editors and broken down into their constituent chapters, which were then absorbed into a larger whole. The Commentaire historique sur les œuvres de l’auteur de La Henriade, published in 1776, is another striking example of this phenomenon. The work has a curious structure, being made up of three unequal parts: an oddly flat and highly selective third-person account of Voltaire’s life, then a dossier of letters, and in conclusion a short poem. This is in fact a rather creative experiment in autobiography, or what we would now term life-writing, but it was not understood as such when it first appeared; the Kehl editors broke up the work, publishing its constituent parts in different volumes of the edition – with the result that the work in effect vanished from sight, even if its various parts were still in print. Thus the edition of the Commentaire historique in the Oxford edition is the first integral publication of the text since 1777, and it is a revelation of an aspect of Voltaire’s writing that was long neglected and misunderstood. 
Simply by dint of producing the first ever critical edition, therefore, whereby each text is traced back to its first printing, the corpus of the Oxford edition looks radically different from the corpus put together by the Kehl editors.

There were a small number of manuscripts, such as the ‘Chapitre des arts’, an incomplete draft chapter describing the history of the arts, originally destined for the Essai sur les mœurs but subsequently abandoned, that were included in the corpus for the first time. And while the editors wished to be as complete as possible, it was also necessary to draw the line somewhere: what to do, for example, with the household accounts of the château de Ferney? These manuscript accounts provide an interesting resource for research into material culture, telling us, for example, what Voltaire ate, and how much he spent on his servants, and Besterman did publish a facsimile printing of the manuscript. This is obviously not a work by Voltaire, however, even though the manuscript does contain traces of his hand, and it was decided not to include it in the Complete Works. In the case of a corpus as large and complex as Voltaire’s, there is certainly a temptation to keep adding, a temptation that has sometimes to be resisted.

Many eighteenth-century editions of Voltaire contain prefaces and the like, and these paratexts pose an interesting challenge to the editors of a complete edition. If such prefaces are signed by Voltaire, there is no argument, but mostly they are anonymous, and sometimes they are signed with a pseudonym. We may very often surmise that these prefaces were in all probability written either by Voltaire or under his instruction; and whether composed directly by him or not, these are crucial texts for understanding the publishing strategy of a given publication. The Oxford editors tended, and increasingly as the years went on, to include these paratexts. An interesting example of the phenomenon is the subscribers’ list which prefaces the 1728 quarto edition of La Henriade published in London: the list of names of the great and the good who subscribed to the edition is clearly not a work ‘by’ Voltaire, though it is an intrinsic part of the edition which Voltaire corrected in proof, and the list is of course fascinating testimony to Voltaire’s ruthless networking on behalf of his poem. In this case, the original editor of La Henriade in 1970 chose not to include the list of subscribers in his edition, but the text was later included as an appendix to another edition published in 2022:Footnote 21 in the intervening half century, book historians had changed the way we use this type of document for studying the social networks that underlie the subscription sales of books in Britain and Ireland.

One significant addition to the OCV corpus, agreed only in the course of publication, was the inclusion of the marginalia found in the books of Voltaire’s library, now in Russia. The project to edit what was called the Corpus des notes marginales was initiated by the National Library of Russia in St Petersburg, but it sadly came to a halt in 1994, when it had reached the halfway point at volume 5. In 2002 the Voltaire Foundation negotiated with the library in St Petersburg to take over the publication of the Corpus and to reprint the earlier volumes; it was then decided, rather than publish these as a stand-alone collection, as had been initially intended, to incorporate the volumes of marginalia within the Complete Works,Footnote 22 and it was later decided to devote a further volume to the marginalia found in books outside the National Library of Russia.Footnote 23 It seemed a bold decision at the time to include such seemingly ephemeral writing in the corpus (though the Bollingen edition of Coleridge, which includes six volumes of marginalia, provided a distinguished precedent), but with hindsight, this was a sound move. The publication of Voltaire’s marginalia has stimulated renewed interest in the subject and opened up a fresh field of research (Pink 2018); furthermore, its inclusion within the corpus had a dynamic effect on the edition itself, as editors were increasingly able to use the evidence of Voltaire’s library and the marginalia within its books to better understand Voltaire’s use of sources and the genesis of certain of his works.

2.3 From Paper to Digital: From OCV to OV

The publication of the Complete Works of Voltaire has been described by the book historian Robert Darnton as ‘a great trek, the greatest ever in the history of scholarly publishing’.Footnote 24 It now stands beyond doubt as the edition of reference for Voltaire, and that in itself poses something of a challenge: how can the edition maintain that standing as scholarship advances and as new discoveries are made? The sheer time and expense of producing a 205-volume paper edition means that we can say with certainty that there will never be a new edition of the complete works published in print. Everything points to a digital edition, but a digital edition of what sort?

As we have seen, digital editions can be archival (derived from print) or born-digital, and it would be possible, in theory at least, to imagine a wholly new born-digital edition of the complete works of Voltaire, one that would be conceived specifically for the digital medium and that would be entirely unconstrained by the necessities of print. But even if this might in theory be our ideal solution, it would be unworkable and unaffordable in practice. Given the enormity of the undertaking, given the vast quantity of information, much of it new research, already contained in OCV, it would not make practical sense to ignore the OCV print edition. So, if OCV is necessarily our starting point, the question becomes a different one: how should we digitise the OCV paper edition to achieve the best possible results? Here, the distinction between a digitised and a digital edition is crucial. The individual print volumes have already been digitised (and are sold both as print-on-demand volumes and as e-books); it would be a relatively simple operation to make a digitised edition by combining the volume files and making them cross-searchable. Elena Pierazzo makes a good argument against over-complication: texts can be simply digitised, and tools are increasingly available to search those texts (Pierazzo 2015, p. 2). The result of such an undertaking would be hugely convenient and would save on shelf-space, but it would not in the end be radically different from the collection of printed books. Such digitised texts, containing the electronic reproductions of paper pages, are not true digital editions.

The Voltaire Foundation has a world-wide reputation as an academic publisher of ‘definitive’ scholarly editions; and it is a timely endeavour to seek to transfer that know-how from paper to the realm of the digital, and so rethink the critical edition in a new medium. Scholars of literature have long been familiar with critical editions in the form of print volumes, so it requires quite a leap to wonder how they might be conceived differently. How might we imagine the modern digital equivalent of what we used to call a ‘definitive’ edition? The very term ‘definitive’ is anachronistic, anchored to the age of print and of texts that were fixed (Shillingsburg 2025). In truth, no scholarly edition ever says the last word, and we should rather speak of an ‘authoritative’ edition, one that is also ‘referenceable’, the edition of reference that scholars are expected to quote in their footnotes. The new digital edition that we are imagining will in addition be expandable and updatable, aiming to include and digest the latest critical thinking.

Voltaire provides an excellent subject for this experiment, firstly on account of the sheer size of the corpus of writings, and secondly because of their unparalleled variety. Voltaire composes in just about every known literary genre, and in addition there is his correspondence (over 21,000 letters), his working notebooks, and the marginalia: few writers offer more complex challenges. The aim to produce an authoritative digital edition of Voltaire’s collected writings is nothing if not ambitious.

An important question before we go any further: the name! What do we call the digital reinvention of the Complete Works of Voltaire? During the early development of the project, the research team referred to it as Digital Voltaire. However, in discussion with two British university presses who were both potential distributors of the product, we were told the same thing: if we wished the name of the digital product to convey a sense of heft and authority, it would be best to avoid altogether the word ‘digital’ and synonyms like ‘electronic’. To use the name of the publisher, Voltaire Foundation, would have created a clumsy and repetitive title, so in the end the research team chose Oxford University Voltaire, to be known also by its abbreviation, OV.

The literary corpus of Voltaire, once it is transformed into a digital object, becomes something different. Print is static, where digital is (or should be) dynamic, and this transforms how we think about the corpus, and about the scholarly edition generally. Elena Pierazzo asks an important question: ‘Are digital editions texts to be read or objects to be used?’ (Pierazzo 2015, p. 147). The OCV is principally designed to be consulted; its digital successor OV will be, in addition, an object to be used. So the digital version must not (only) be an enhanced and revised version of the print edition, though it will be that; it must be rethought from the inside out, and try not to be confined by the limitations of the paper edition. The modelling of the content has the power to generate new knowledge, a subject to which we return in the next chapter.

It is a nice paradox that after spending half a century producing a ‘definitive’ print edition of Voltaire, our central aim now is that the digital edition should replace the print version as the authoritative source of reference for this author. But where the print edition was designed primarily as an object to be read, the digital successor will have a triple function:

(1) to be read, and to be cited as the version of reference;

(2) to be used as a research tool, enabling scholars to conduct research; and

(3) to be a platform enabling the publication of that research.

2.3.1 Updating Material in the Digital Edition

The first challenge then is to think about how the material contained in the print volumes might be supplemented, adapted or reworked. At the simplest level, the digital edition provides an opportunity to correct mistakes, make revisions, add recent discoveries, and so forth. Firstly, we can carry out simple updating, such as replacing references to other works of Voltaire, currently a mixture of Moland and OCV references, with hyperlinks. Secondly, we can make minor corrections: there are typographical errors to be corrected, and more importantly, omissions in lists of editions or in sources in the text which can be rectified. In recent years, the availability of online texts has greatly facilitated research into, for example, rare print editions, the location of manuscripts, or the source of quotations. Such resources were not available to earlier editors in the 1970s and 80s, and useful complementary information can and should now be added to the editions published in earlier years. Thirdly, we can make minor additions, and the edition can be updated, to take account of new research as it is published. For example, the edition of the opera libretto Le Temple de la gloire records that we do not know the music of the first (1745) performance; a manuscript of this score was subsequently unearthed in the university library at Berkeley, and it is clearly desirable to include in the edition a reference to this new discovery.