Impact statement

Global sea level is rising because of human-induced climate change. Projections of future sea level are published every few years in Assessment Reports (ARs) from the Intergovernmental Panel on Climate Change. These projections provide valuable information for coastal management around the world, as measures to adapt to sea-level change need to be planned well in advance. It is therefore important that the projections are reliable, which can be assessed in hindsight by comparing them to observations once enough time has passed. In this study, we compare the sea-level projections from AR5, which start in 2007, to satellite observations for the period 2007–2022. Whereas previous studies have done similar comparisons for global mean sea level, we perform this comparison for regional sea level. The observations have inherently higher variability than the projections, and that variability differs in timing, which particularly complicates comparisons over short time periods. Before comparing, we therefore reduce the variability in the observations with three different methods, inspired by previous research. We identify low-frequency component analysis as the best-performing method. We assess the projections of total sea-level change and its separate components: the sterodynamic (thermal expansion, halosteric variations, plus regional changes in oceanic and atmospheric dynamics) and mass (melting land ice) components. We show that the regional projections are in good agreement with the observations, as their 90% confidence intervals overlap in most of the ocean area, giving confidence in sea-level projections for the instrumental era.

Introduction

Present-day sea level is changing as a result of human-induced climate change (Fox-Kemper et al., 2021), impacting low-lying coastal areas around the world. Sea-level change is primarily driven by changes in ocean density and dynamics, by variations in the amount of water stored on land (land ice and land water) and by vertical land motion (for instance, from tectonics, fluid extraction or glacial isostatic adjustment). As a result of these different drivers, sea-level change strongly varies in space and time.

Because of their relevance to coastal communities and decision-makers, projections of future (global and regional) sea-level change are among the most sought-after outcomes of Intergovernmental Panel on Climate Change (IPCC) reports. Building on previous IPCC reports, the IPCC Fifth Assessment (AR5) report was the first IPCC report to assess regional sea-level projections, including the mass change contributions from land ice and land water. These were based on phase 5 of the Coupled Model Intercomparison Project (hereafter CMIP5; Taylor et al., 2012) global climate models for the ocean density and dynamics contribution in combination with dedicated models and parameterizations for ice sheets, glaciers, land water and glacial isostatic adjustment (Church et al., 2013a). This development arose from research into regional sea-level projections using climate models (e.g., Slangen et al., 2012; Perrette et al., 2013) and was followed by intensive research on the topic in the period following AR5 (see, e.g., Garner et al., 2018, for an overview). In particular, the development of probabilistic approaches (e.g., Kopp et al., 2014), the use of physics-based emulators (e.g., Palmer et al., 2020; Yuan and Kopp, 2021) and a strong research community focus on the cryospheric contributions to sea-level change (IMBIE, Otosaka et al., 2023; ISMIP6, Nowicki et al., 2016; and GlacierMIP, Hock et al., 2019) have led to significant advances in sea-level projections since AR5, which could be assessed in the IPCC AR6 report (Fox-Kemper et al., 2021).

Ideally, any model simulation is validated by observations to assess confidence in the results, to improve the models and to enhance process understanding. However, for projections of the future, such a validation is, of course, not possible at the time of publication. What can be done instead is to (i) compare model simulations of the historical period to observations (for sea level, this is done in, e.g., Church et al., 2013c; Meyssignac et al., 2017; Slangen et al., 2017) or to (ii) compare projections that have been made in the past – for instance, IPCC AR5 projections, which are available from 2007 onwards – against observed changes since that time (e.g., Lyu et al., 2021; Wang et al., 2021; Slangen et al., 2023).

The latter comparison is not trivial, because the overlapping time period is relatively short and, in particular, the regional observations contain significant internal variability, with a different regional distribution (both in timing and in magnitude) than the model projections (Figure 1). Using different approaches, projection-observation comparisons have successfully been carried out for global mean sea-level (GMSL) change (Wang et al., 2021; Slangen et al., 2023), for tide gauge locations (Wang et al., 2021) and for the ocean warming component (Lyu et al., 2021). Here, for the first time, we compare the regional sea-level projections (total and contributions) from AR5 to satellite altimetry observations for the period 2007–2022.

Figure 1. Trends from observed (a) regional sea-level change, (c) sterodynamic sea-level (SDSL) change and (e) mass redistribution, for the period 2007–2022 (see Methods section for observation details). The corresponding IPCC AR5 projections under the RCP4.5 scenario are shown in (b), (d) and (f), respectively.

To facilitate the comparison to the regional sea-level projections from the CMIP5 climate model ensemble, we present and compare three different methods to reduce the internal variability, which impacts regional observations of sea-level change over shorter time periods: low-frequency component analysis (LFCA), multiple variable linear regression (MVLR) and self-organizing maps (SOMs) (see Methods). We then compare the AR5 model projections to the filtered observations. In addition, we break down regional sea-level change into its two main components: (1) sterodynamic sea level (SDSL), which represents changes due to density and circulation variations, and (2) the mass contribution, where water and ice mass exchanges between land and ocean lead to a gravitational, rotational and viscoelastic deformation response of the solid Earth.

Data

Observations

Absolute sea-level change is obtained from satellite altimetry observations, using the MEaSUREs gridded sea-surface height anomalies product, version 2205 (Fournier et al., 2022). The original data are provided every 5 days and at a 0.17 × 0.17 degrees resolution. Since the sea-level projections represent relative sea-level change and satellite altimetry represents absolute sea level, we convert the altimetry data by applying corrections for ocean bottom deformation (Frederikse et al., 2017) and glacial isostatic adjustment with the ICE 6G VM5a model (Argus et al., 2014; Peltier et al., 2015). For both corrections, we linearly extrapolate the available data until 2022.

Observed SDSL is based on the ensemble mean of three ocean reanalyses from the Global Ocean Ensemble Physics Reanalysis product (Copernicus Marine Service Information [CMEMS], 2024). This product provides the output for three different simulations, namely GLORYS2V4, ORAS5 and C-GLORSv7, at monthly intervals and at 0.25 × 0.25 degrees resolution. To obtain the SDSL change, we first remove the time-varying global mean from the sea-surface height of each of the reanalysis products. We then add the global mean steric change of each respective reanalysis, computed from the temperature and salinity data, following Camargo et al. (2020), and compute the average of the three products.
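The SDSL construction described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the processing code used for this study: the `(time, lat, lon)` array layout, the function names and the cosine-latitude area weights are all choices made for the example.

```python
import numpy as np

def area_weighted_mean(field, lat):
    """Global mean of a (time, lat, lon) field with cos(latitude) weights.

    NaN cells (e.g. land) are ignored. The weighting is an assumption.
    """
    w = np.cos(np.deg2rad(lat))[None, :, None] * np.ones_like(field)
    w = np.where(np.isnan(field), 0.0, w)
    return np.nansum(field * w, axis=(1, 2)) / w.sum(axis=(1, 2))

def sterodynamic_sea_level(ssh, global_steric, lat):
    """Remove the time-varying global mean from SSH, then add global-mean steric change."""
    dynamic = ssh - area_weighted_mean(ssh, lat)[:, None, None]
    return dynamic + global_steric[:, None, None]

def ensemble_sdsl(ssh_list, steric_list, lat):
    """Average the SDSL fields of several reanalyses that share one grid."""
    fields = [sterodynamic_sea_level(s, g, lat) for s, g in zip(ssh_list, steric_list)]
    return np.mean(fields, axis=0)
```

By construction, the area-weighted global mean of each SDSL field equals the global mean steric series that was added back.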

For the observed mass-driven sea-level change, we use relative sea-level fingerprints from Camargo et al. (2022) based on satellite gravimetry from GRACE and GRACE-FO JPL mascons (Watkins et al., 2015; Wiese et al., 2023), updated to 2022. The fingerprints are computed individually for the Antarctic ice sheet, the Greenland ice sheet, glaciers and terrestrial water storage by solving the sea-level equation (Farrell and Clark, 1976).

For the filtering, we use monthly data for all observational products (see Methods section), and the filtered data are then averaged into annual means and regridded to a common 1 × 1 degree resolution using bilinear interpolation, to facilitate comparison with the projections. Both the total and SDSL observational estimates are available from January 1993 until December 2022, whereas the mass-driven component spans the period January 2002 to December 2022. Due to low-quality altimetry measurements in high latitudes, we only consider the ocean area between latitudes 66 degrees north and south.
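Two of the post-processing steps above, annual averaging and the latitude cut-off, are simple enough to sketch. The snippet below is illustrative only: the function names and array layout are assumptions, and the bilinear regridding to 1 × 1 degree is not shown.

```python
import numpy as np

def monthly_to_annual(monthly):
    """Average a (months, lat, lon) array into (years, lat, lon); expects whole years."""
    n_months = monthly.shape[0]
    if n_months % 12 != 0:
        raise ValueError("expected a whole number of years of monthly data")
    return monthly.reshape(n_months // 12, 12, *monthly.shape[1:]).mean(axis=1)

def mask_high_latitudes(field, lat, max_abs_lat=66.0):
    """Return a copy of a (..., lat, lon) field with cells poleward of max_abs_lat set to NaN."""
    masked = np.array(field, dtype=float, copy=True)
    masked[..., np.abs(lat) > max_abs_lat, :] = np.nan
    return masked
```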

IPCC AR5 sea-level projections

We use the IPCC AR5 regional relative sea-level projections (Church et al., 2013a), which are based on climate model output from 21 CMIP5 models and use Representative Concentration Pathway (RCP) scenarios. These sea-level projections include contributions from (dedrifted) ocean thermal expansion and ocean dynamics, the inverse barometer effect, glacier mass changes, ice sheet surface mass balance and ice dynamics, land water storage changes (from groundwater depletion and reservoir impoundment) and glacial isostatic adjustment. We remove the glacial isostatic adjustment contribution from the projections before comparing them to the observations. For this study, we use the ensemble mean and the 5th and 95th percentiles of the IPCC AR5 projections under the RCP4.5 scenario (with a radiative forcing of 4.5 W/m² by 2100), but we note that the choice of scenario is not crucial, as for sea-level change the scenarios have not yet diverged by 2022.

Methods

To reduce the internal variability of the observations (total and SDSL), we test and compare three different methods, namely: (1) LFCA, (2) MVLR and (3) SOM. All methods are applied to monthly observational data from 1993 to 2022, to increase the degrees of freedom. The filtered data are then averaged to annual means.

To obtain trends, we compute ordinary least-squares fits, and the reported uncertainties represent the standard error of the linear regression. We compute the root mean square error (RMSE) between the observations and the AR5 projection and use it as a metric to identify the best filtering method.
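For reference, an ordinary least-squares trend and its standard error can be computed as in the sketch below. It assumes independent residuals (no correction for serial correlation), which is an assumption of the example rather than a statement about the analysis in this study; the function name is hypothetical.

```python
import numpy as np

def linear_trend(t, y):
    """OLS slope of y against t and the standard error of that slope.

    Assumes uncorrelated residuals; at least three data points are required.
    """
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    n = t.size
    t_c = t - t.mean()
    slope = (t_c @ (y - y.mean())) / (t_c @ t_c)
    intercept = y.mean() - slope * t.mean()
    resid = y - (intercept + slope * t)
    # Residual variance with n - 2 degrees of freedom, scaled by the spread of t.
    se = np.sqrt((resid @ resid) / (n - 2) / (t_c @ t_c))
    return slope, se
```

With annual means in mm and time in years, `slope` and `se` are both in mm/yr.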

Low-frequency component analysis (LFCA)

Pattern filtering techniques based on Empirical Orthogonal Functions (EOFs) have proven to effectively reduce internal climate variability by exploiting spatial covariance information contained in a dataset (e.g., Marcos and Amores, 2014; Wills et al., 2018; Wills et al., 2020; Malagón-Santos et al., 2023). Some of these techniques rely on other realizations to compute an ensemble mean (Wills et al., 2020) or on a pre-industrial control simulation (DelSole et al., 2011; Marcos and Amores, 2014) to separate internal natural variability from the forced response. This makes these methods suitable for modeling experiments featuring such simulations but not for observations. However, LFCA can reduce internal variability using just one single realization (Schneider and Held, 2001; Wills et al., 2018), making it an ideal candidate to be applied to regional observations. Although not strictly necessary, we removed the seasonal cycle before applying LFCA to the observational dataset, so the EOF analysis can focus on other types of variability. We decomposed the signal into 100 EOFs, selecting this value as a trade-off between degrees of freedom to decompose the signal and computational burden. LFCA sorts the 100 spatial patterns (EOFs) and their corresponding time series by the ratio $ {r}_k $ (Eq. [1]), so that the leading anomaly patterns are those that maximize the ratio of low-frequency to total variance:

$$ {r}_k=\frac{{\tilde{t}}_k^T{\tilde{t}}_k}{t_k^T{t}_k}, \tag{1} $$

where $ {t}_k $ is the time series of the $ k $-th EOF pattern, $ {t}_k^T $ its transpose and $ {\tilde{t}}_k $ denotes $ {t}_k $ after applying a low-pass filter, for which we use a linear Lanczos filter (Duchon, 1979) with a 5-year cutoff. Using a longer cutoff period did not lead to significantly different results, indicating that 30 years of observational data is probably not sufficient to accurately characterize modes of variability longer than 5 years. This implies that the forced signal may leak into internal variability modes, which makes it ambiguous whether the leading mode truly represents the forced response or realized internal variability.
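To make the ratio in Eq. (1) concrete, the sketch below computes it for a set of time series using Lanczos low-pass weights in the form given by Duchon (1979). It illustrates the ratio only and is not a full LFCA implementation: the window length of 121 months, the zero-padded convolution at the series ends and all function names are assumptions of this example.

```python
import numpy as np

def lanczos_lowpass_weights(window, cutoff):
    """Lanczos low-pass weights; window is an odd number of points, cutoff in cycles per step."""
    n = (window - 1) // 2
    k = np.arange(-n, n + 1)
    nz = k != 0
    w = np.empty(k.size)
    w[~nz] = 2.0 * cutoff
    sigma = np.sin(np.pi * k[nz] / n) / (np.pi * k[nz] / n)  # Lanczos sigma factor
    w[nz] = np.sin(2.0 * np.pi * cutoff * k[nz]) / (np.pi * k[nz]) * sigma
    return w / w.sum()  # normalize so a constant passes unchanged

def low_frequency_ratio(t_k, weights):
    """r_k = (t~_k^T t~_k) / (t_k^T t_k) for one mean-removed time series."""
    t = np.asarray(t_k, dtype=float)
    t = t - t.mean()
    t_low = np.convolve(t, weights, mode="same")  # zero-padded at the ends
    return float(t_low @ t_low) / float(t @ t)

def rank_by_low_frequency_ratio(pcs, weights):
    """Return mode indices sorted by decreasing r_k, plus the ratios; pcs is (n_modes, n_time)."""
    r = np.array([low_frequency_ratio(pc, weights) for pc in pcs])
    return np.argsort(r)[::-1], r
```

For monthly data, a 5-year cutoff corresponds to `cutoff=1/60`. A slowly varying series then ranks ahead of a rapidly oscillating one, which is the ordering that Eq. (1) is designed to produce.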

A forced response with reduced internal climate variability can then be constructed by linearly combining the leading anomaly patterns (Wills et al., 2020; Malagón-Santos et al., 2023). To determine the number of leading anomaly patterns that make up the forced response, we built a set of different forced responses by combining distinct leading EOFs. To confirm which forced response did not contain variability associated with internal climate variability modes (Multivariate El Niño Southern Oscillation Index [MEI], Pacific Decadal Oscillation [PDO], Indian Ocean Dipole [IOD] and Southern Annular Mode [SAM]; see MVLR section), we performed a grid point regression analysis between the filtered results and individual climate indices. We observed that only the forced response made of the first leading anomaly pattern led to nonsignificant regression coefficients, indicating the absence of variability driven by internal climate oscillations. Thus, we use only the first leading EOF, which explains around 15% of the total variance of the data set (after removing the seasonal cycle), to represent the forced response. We compare these LFCA-filtered observations with the AR5 projections.

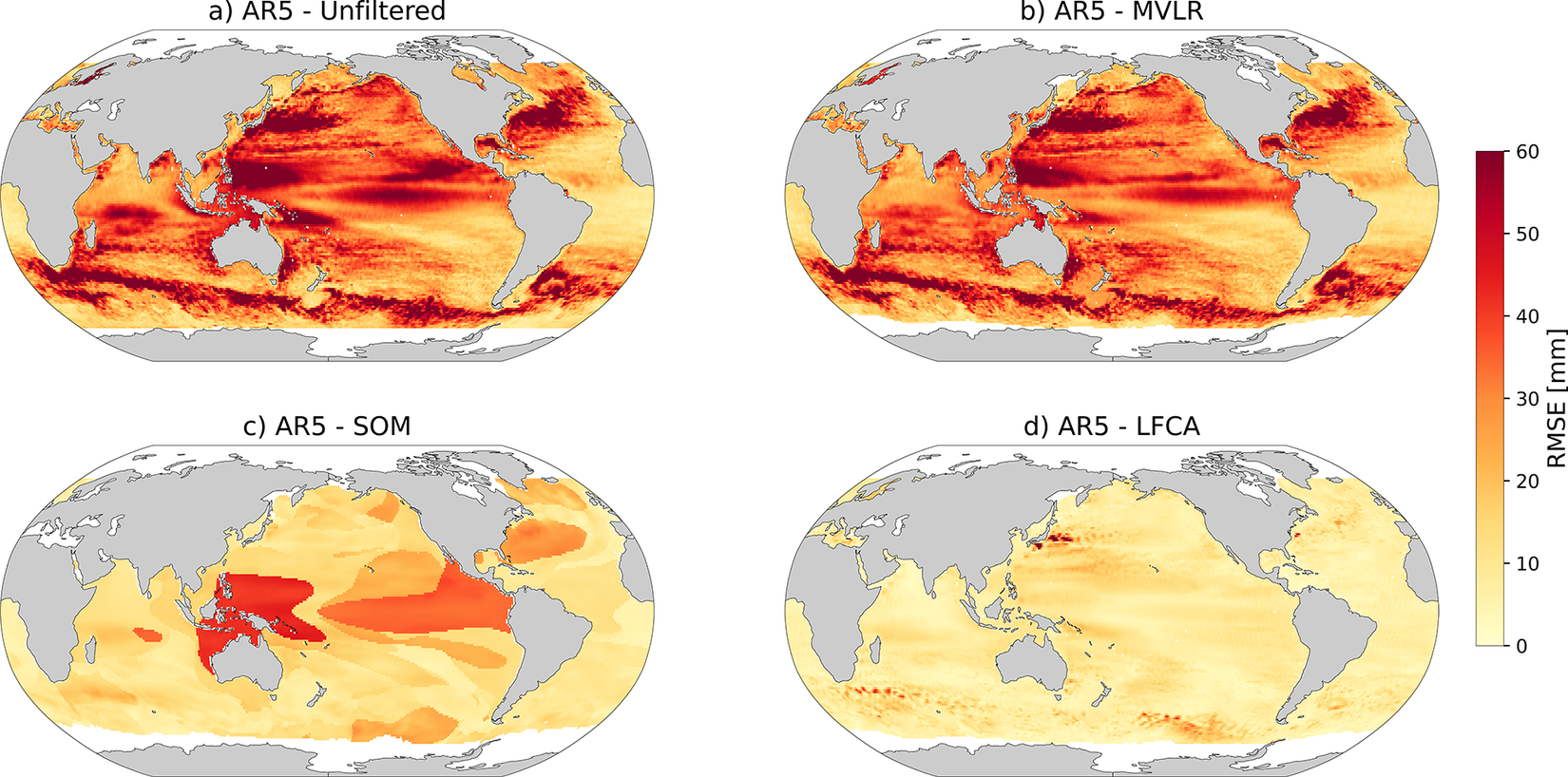

Multiple variable linear regression (MVLR)

As a second method to reduce the internal variability in satellite altimetry, we perform an MVLR analysis following Wang et al. (2021). Five climate indices are used as physical indicators (‘variables’) for modes of internal climate variability: the PDO (Mantua et al., 1997), IOD (Saji et al., 1999), SAM (Marshall, 2003), North Atlantic Oscillation (NAO; Hurrell, 1995), and the MEI (Wolter and Timlin, 1998), which is a combination of multiple indices available for the El Niño Southern Oscillation (ENSO). All climate index time series range from at least 1979 to 2022 and are smoothed and filtered following Zhang and Church (2012) and Wang et al. (2021). We apply the following choice of MVLR to each grid cell:

$$ \hat{\mathrm{SL}}={b}_0+{b}_1t+{b}_{\mathrm{MEI}}\cdot \mathrm{MEI}+{b}_{\mathrm{PDO}}\cdot \mathrm{PDO}+{b}_{\mathrm{IOD}}\cdot \mathrm{IOD}+{b}_{\mathrm{SAM}}\cdot \mathrm{SAM}+{b}_{\mathrm{NAO}}\cdot \mathrm{NAO}+\varepsilon, \tag{2} $$

where $ \hat{\mathrm{SL}}\left(t,\theta, \phi \right) $ are the sea-level observations under consideration (either total relative sea level from satellite altimetry or SDSL from reanalyses) and $ {b}_{\mathrm{CI}}\left(\theta, \phi \right) $ is the coefficient for the climate index $ \mathrm{CI}(t) $, for which we use the regression coefficient between $ \mathrm{CI}(t) $ and $ \hat{\mathrm{SL}}\left(t,\theta, \phi \right) $ over their common time period. A least-squares linear fit is used to find the intercept $ {b}_0\left(\theta, \phi \right) $ and linear trend $ {b}_1\left(\theta, \phi \right) $; $ \varepsilon \left(t,\theta, \phi \right) $ is the residual term. Eq. (2) differs from Wang et al. (2021) in that we use all five climate indices everywhere, and we fit a linear trend instead of a quadratic one, because of our shorter observational record. We then remove the regressed climate indices from the observations using

$$ \hat{\mathrm{SL}}-{b}_{\mathrm{MEI}}\cdot \mathrm{MEI}-{b}_{\mathrm{PDO}}\cdot \mathrm{PDO}-{b}_{\mathrm{IOD}}\cdot \mathrm{IOD}-{b}_{\mathrm{SAM}}\cdot \mathrm{SAM}-{b}_{\mathrm{NAO}}\cdot \mathrm{NAO}={b}_0+{b}_1\cdot t+\varepsilon . \tag{3} $$

With Eq. (3), we obtain the MVLR-filtered sea level, which represents the sea-level observations without the influence of the climate variability. We note that the result of the MVLR filtering is sensitive to the choice of climate index time series that are included, in particular the PDO, likely because of a combination of the factors above (a short record, all five indices fitted together and a linear trend). Sensitivity tests excluding the PDO term did not yield better results.
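Eqs. (2) and (3) can be illustrated for a single grid cell with the sketch below. It assumes that the intercept, trend and index coefficients are estimated jointly in one least-squares fit and that the climate indices are already smoothed and aligned in time with the sea-level series; both points are assumptions of the example, and the function name is hypothetical.

```python
import numpy as np

def mvlr_filter(sea_level, time, indices):
    """Fit SL = b0 + b1*t + sum_i b_i*CI_i + eps (Eq. 2) and remove the index terms (Eq. 3).

    sea_level: (n_time,); time: (n_time,); indices: (n_time, n_indices).
    Returns the filtered series b0 + b1*t + eps and the coefficients [b0, b1, b_CI...].
    """
    sea_level = np.asarray(sea_level, dtype=float)
    time = np.asarray(time, dtype=float)
    indices = np.asarray(indices, dtype=float)
    design = np.column_stack([np.ones_like(time), time, indices])
    coeffs, *_ = np.linalg.lstsq(design, sea_level, rcond=None)
    index_part = indices @ coeffs[2:]  # regressed climate-index contribution
    return sea_level - index_part, coeffs
```

Applying the function to every grid cell yields the filtered field. Because the trend and the index terms share one design matrix, strongly correlated indices can trade off against each other, which is one way the sensitivity noted above could arise.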

Self-organizing maps (SOM)

SOM is an unsupervised neural network method of feature extraction and classification (Kohonen, 1982; Liu et al., 2006). When applied in the time domain, the method identifies clusters with coherent temporal variability. We use the clusters identified by Camargo et al. (2023), obtained by applying SOM on satellite altimetry sea surface height fields from 1993 until 2019, where the clusters represent regions with similar sea-level variability. Using a slightly different time span does not result in significantly different clusters, as shown in Camargo et al. (2023). The clustering is based on a 3 × 3 neural network applied to the Atlantic Ocean, and a 3 × 3 network applied to the Indo-Pacific Ocean, which yields 18 clusters in total. The separation between basins prevents the strong sea-level variability in the Equatorial Pacific from hindering pattern recognition in the Atlantic Ocean. Further detailed information on the method and parameters used can be found in Camargo et al. (2023). In this study, we compute the spatial average of the total observed sea-level change and the SDSL contribution in each of the 18 clusters to reduce the internal variability from the observations.
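The cluster-averaging step can be sketched as follows, taking the cluster map as given (the SOM training itself is not shown). The cosine-latitude area weighting, the use of negative labels for cells outside any cluster and the function name are assumptions of this example.

```python
import numpy as np

def cluster_average(field, labels, lat):
    """Replace each cell of a (lat, lon) field by the area-weighted mean of its cluster.

    labels: integer (lat, lon) map of cluster ids; cells with a negative id stay NaN.
    """
    field = np.asarray(field, dtype=float)
    weights = np.cos(np.deg2rad(lat))[:, None] * np.ones_like(field)
    out = np.full(field.shape, np.nan)
    for cid in np.unique(labels[labels >= 0]):
        sel = (labels == cid) & ~np.isnan(field)
        if sel.any():
            out[sel] = np.sum(field[sel] * weights[sel]) / np.sum(weights[sel])
    return out
```

For a time-dependent field, the function can be applied to each time step in turn.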

Results

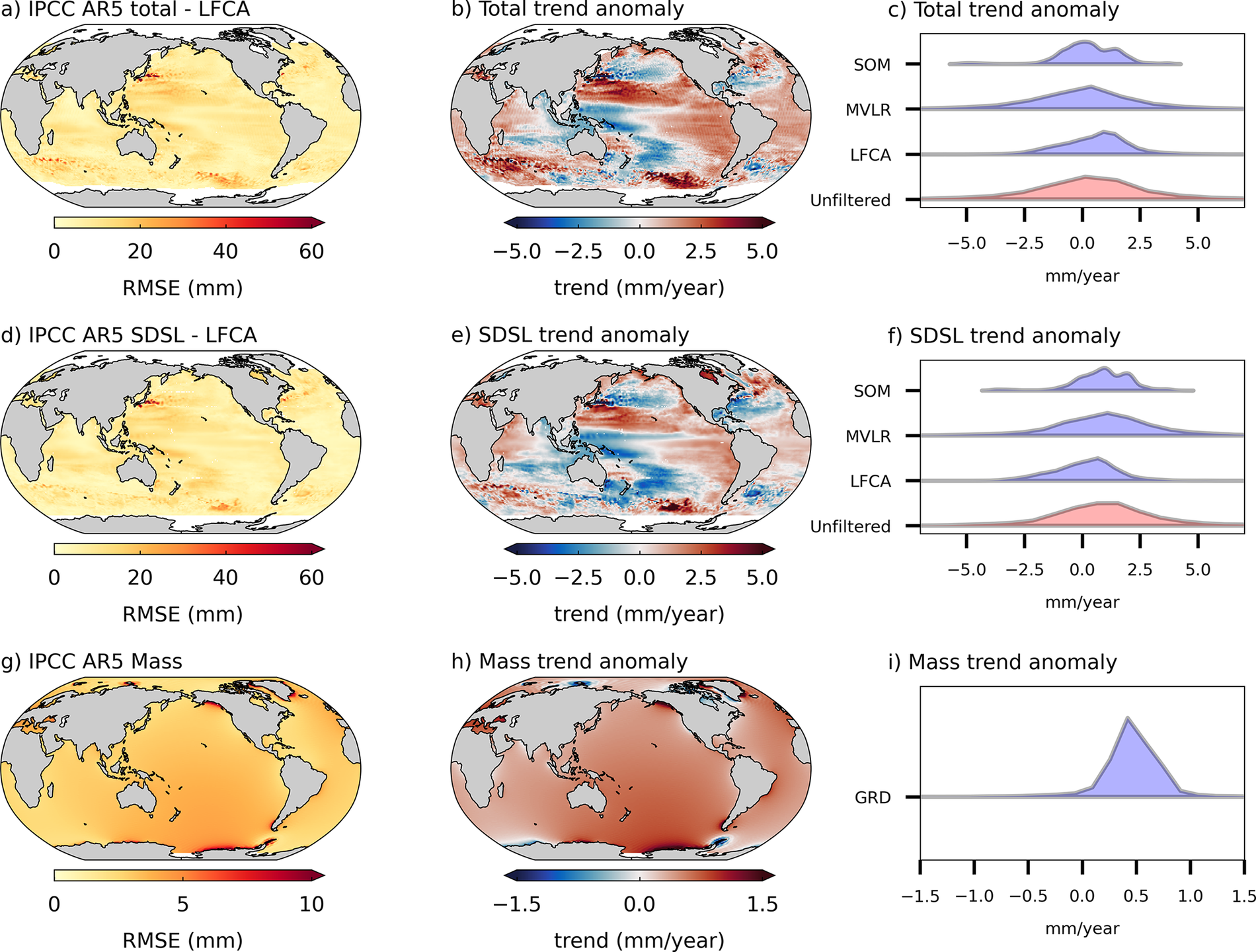

The observed regional sea-level trends of total sea-level change clearly differ from the AR5 projections (Figure 1a,b), with a slightly lower global mean ($ 3.74\pm 0.12 $ mm/yr observed compared to $ 4\pm 0.02 $ mm/yr projected) and much stronger spatial variability than the smoother fields of the projections. Likewise, the observed SDSL component shows a larger spatial variability (Figure 1c,d) and has a global average of $ 0.70\pm 0.10 $ mm/yr, contrasting with higher and smoother projected trends, with a global average of $ 1.59\pm 0.02 $ mm/yr. The projected global mean mass-driven sea-level trends are much higher than the observations ($ 2.29\pm 0.01 $ mm/yr for projections compared to $ 1.81\pm 0.11 $ mm/yr for observations), but the regional patterns are broadly in agreement: negative and positive mass trends are present in similar regions for projections and observations (Figure 1e,f). Exceptions are some coastal regions in western Canada (only negative in the observations) and Antarctica, where in the observations the mass-driven regional sea-level trends are mainly negative close to the West Antarctic coast, but in the projections this occurs mainly around the Antarctic Peninsula. These differences are probably related to the simplified ice mass loss estimates used in the AR5 projections, which did not feature detailed spatial variability in the fields of mass loss on the ice sheets (Church et al., 2013a). Additionally, groundwater extraction is the only terrestrial water storage component included in the projections, and other components, such as reservoir impoundment or changes in natural water storage, could explain some of the differences between observations and projections. As the regional mass-driven sea-level trends do not show a large spatial variability, we do not apply spatial filtering to this component.

Total and SDSL observations (Figure 1a,c) exhibit high variability due to internal climate variability and mesoscale processes (Carson et al., 2019; Jain et al., 2023). This is, for instance, visible in the Western Equatorial Pacific (with negative trends) and western boundary currents (with positive trends). The observations also show negative trends associated with small to mesoscale eddies in the Antarctic Circumpolar Current, and negative trends south of Greenland related to subpolar gyre oscillations (e.g., Chafik et al., 2019). On the other hand, the IPCC AR5 projections (Figure 1b,d) display smoother spatial patterns in the trends, partly due to the coarse model resolution, which does not allow for an accurate representation of small-scale ocean dynamics (Shevchenko and Berloff, 2022). Furthermore, since the AR5 sea-level projections are a multi-model mean over 21 climate models (Church et al., 2013b), the majority of internal climate variability is averaged out in the ensemble mean (Jain et al., 2023), which can explain part of the difference between the observations and projections: climate model ensembles consist of multiple realizations of the climate state, whereas the observations are only one ‘realization’. In addition, the internal variability of model simulations is not in phase with observations (e.g., Carson et al., 2019). These issues can all be addressed by filtering the observational data to improve the comparison to projections.

Removing climate variability from observations

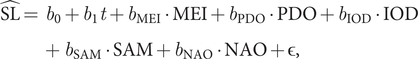

To filter the internal variability from the observations, we tested three different methods (Figure 2): (1) the LFCA method, which separates the components related to high-frequency variability (internal variability) from those related to low-frequency variability (forcing); (2) the MVLR method, which identifies and removes the impact of internal climate variability using climate indices; and (3) the SOM method, which identifies regions with similar sea-level behavior and averages over these regions (see Methods section for details on the three approaches). LFCA (Figure 2a) captures the major forced trends and effectively smooths out variability, with only some remnants of strong eddy variability related to the circumpolar and western boundary currents. The MVLR method (Figure 2b) removes Pacific-centered variability (e.g., ENSO), but it is less effective for the NAO and in regions with intense ocean dynamics (e.g., western boundary currents). Like LFCA, the SOM method (Figure 2c) also averages out most of the internal variability, except for the area of large trends in the Northwest Atlantic, indicating that these trends are present across a larger area.

Figure 2. (a–c) Altimetry-observed regional sea-level trends (mm/yr) for the period 2007–2022 after removing internal variability with (a) low-frequency component analysis (LFCA), (b) multiple variable linear regression (MVLR) and (c) self-organizing maps (SOM). (d) Global mean sea-level change with respect to the year 2007, showing the altimetry-observed changes before and after removing variability, and the IPCC AR5 model projections for the RCP4.5 scenario. The shaded area represents the interval between the 5th and 95th percentiles of the ensemble members of the AR5 RCP4.5 scenario projections.
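The idea behind the regression-based filtering can be sketched as follows: a sea-level time series is regressed on a linear trend plus one or more climate indices, and only the index-related part is subtracted. This is a minimal illustration, not the implementation described in the Methods section; the function name `remove_index_variability`, the synthetic ENSO-like index and all numerical values are assumptions made for the example, and choices such as lags or seasonal terms are left out.

```python
import numpy as np

def remove_index_variability(sea_level, indices):
    """Subtract the part of a sea-level series explained by climate indices.

    sea_level : (n_time,) array of sea-level anomalies (e.g., mm)
    indices   : (n_time, n_indices) array of climate indices
    Returns the filtered series and the fitted regression coefficients
    (intercept, trend, then one coefficient per index).
    """
    n_time = sea_level.shape[0]
    t = np.arange(n_time, dtype=float)
    # Design matrix: intercept, linear trend, one column per climate index.
    X = np.column_stack([np.ones(n_time), t, indices])
    coeffs, *_ = np.linalg.lstsq(X, sea_level, rcond=None)
    index_part = indices @ coeffs[2:]
    return sea_level - index_part, coeffs

# Synthetic example: trend + index-driven variability + noise (hypothetical).
rng = np.random.default_rng(1)
n_months = 192                                  # 16 years of monthly values
t = np.arange(n_months, dtype=float)
enso_like = np.sin(2.0 * np.pi * t / 40.0)      # stand-in for a climate index
sea_level = 0.3 * t + 15.0 * enso_like + rng.normal(0.0, 2.0, n_months)

filtered, coeffs = remove_index_variability(sea_level, enso_like[:, None])
print(f"fitted trend: {coeffs[1]:.3f} mm/month, index coefficient: {coeffs[2]:.2f}")
```

Because the trend is fitted jointly with the indices, the filtered series keeps the trend while losing the index-related oscillation. With a short record, trend and indices can be partly collinear, which is one way the limited record length can reduce the effectiveness of this kind of filter.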

The global mean changes of the projections and filtered observations have similar trends (Table 1), and are mostly within the uncertainty envelope of the projections, except for MVLR (Figure 2d). We note that, while the filtering methods reduce the regional discrepancies between projections and observations (as discussed in the next paragraph), they can increase the global mean trend difference by 0.5 mm/yr, with the LFCA total sea-level trend on the lower end with $ 3.5\pm 0.05 $ mm/yr, and the MVLR trend on the upper end with $ 4.1\pm 0.54 $ mm/yr (Table 1).

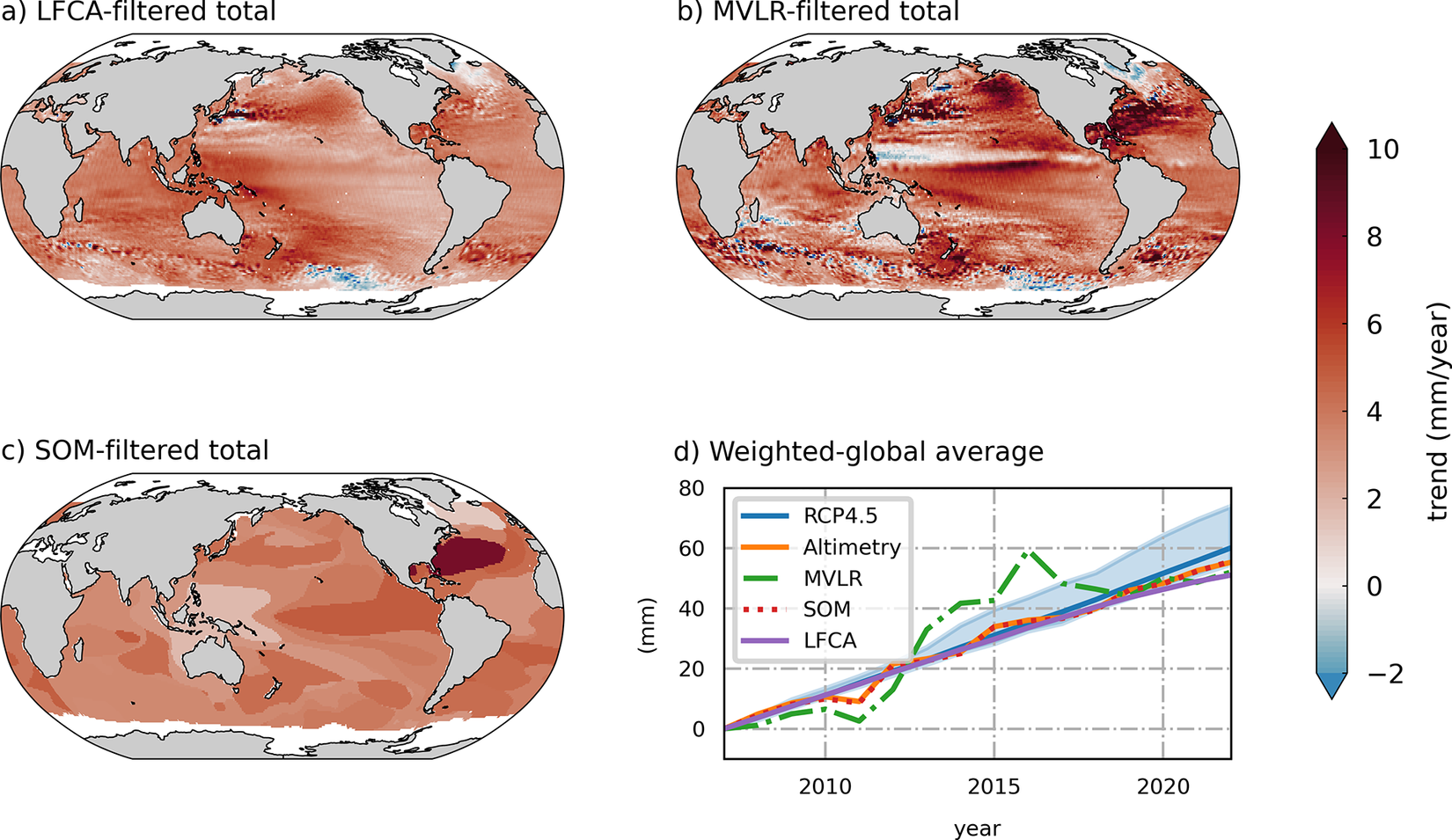

Table 1. Area-weighted global mean trends of total sea level from IPCC AR5 projections, satellite altimetry observations and altimetry (alt.) observations after filtering using MVLR, SOM and LFCA, over the period 2007–2022

Note: Standard deviation (SD) (mm), RMSE with regard to the AR5 projection (mm) and reduction of RMSE due to filtering (%). Uncertainties represent the standard error of the linear regression.
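An area-weighted global mean trend with a regression standard error, as reported in Table 1, could be computed along the following lines. This is a sketch under assumptions: the grid is taken to be regular in latitude and longitude so that cosine-of-latitude weights apply, the standard error comes from ordinary least squares without any correction for serial correlation, and the function names and synthetic field are hypothetical.

```python
import numpy as np

def area_weighted_mean(field, lat):
    """Global mean of field (n_time, n_lat, n_lon); NaN marks land cells."""
    w = np.cos(np.deg2rad(lat))[None, :, None] * np.ones_like(field)
    w = np.where(np.isnan(field), 0.0, w)        # no weight on missing cells
    return np.nansum(field * w, axis=(1, 2)) / w.sum(axis=(1, 2))

def trend_with_standard_error(t, y):
    """Ordinary least-squares slope of y against t and its standard error."""
    X = np.column_stack([np.ones_like(t), t])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (len(y) - 2)        # residual variance
    cov = sigma2 * np.linalg.inv(X.T @ X)        # coefficient covariance
    return beta[1], np.sqrt(cov[1, 1])

# Synthetic field: every ocean cell rises by 3.5 mm/yr with a constant offset.
t = np.arange(16, dtype=float)                   # years since 2007
lat = np.array([-60.0, 0.0, 60.0])
offsets = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
field = 3.5 * t[:, None, None] + offsets[None, :, :]
field[:, 0, 0] = np.nan                          # one hypothetical land cell

gmsl = area_weighted_mean(field, lat)
trend, stderr = trend_with_standard_error(t, gmsl)
print(f"global mean trend: {trend:.2f} +/- {stderr:.2f} mm/yr")
```

The weights matter because grid cells on a regular latitude-longitude grid cover less ocean area towards the poles; an unweighted mean would overrepresent high latitudes.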

To quantify the efficiency of the filtering methods on regional sea-level change, we compute the RMSE between regional observations (unfiltered and filtered) and projections. We then compute how much, on average, the RMSE of the filtered observations is reduced relative to the RMSE of the unfiltered observations against projections (Table 1). While all methods reduce the RMSE of the observations relative to the projections, the LFCA filtering causes the strongest reduction. As already indicated in Figure 2, filtering with SOM and LFCA brings the observations closest to the projections. We also look at the standard deviation (SD) of the time series to see how much of the variability the filtering methods remove. As for the RMSE, all methods reduce the SD, with LFCA being the most effective. For SDSL, we also find that LFCA is the most effective method in reducing the spatial variability (Fig. 1 in the Supplementary Appendix).
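The RMSE-based metric described above can be sketched as a per-grid-cell RMSE over time, followed by the mean relative reduction after filtering. The function names and the synthetic data are assumptions for illustration; in particular, whether the spatial average of the reduction is area-weighted is not stated here, and the sketch uses a plain mean. The noise in the "filtered" field is scaled by a factor of 0.25 by construction, so the resulting 75% reduction is a property of the example and not a reproduction of Table 1.

```python
import numpy as np

def rmse_over_time(obs, proj):
    """RMSE along the time axis for fields shaped (n_time, n_lat, n_lon)."""
    return np.sqrt(np.mean((obs - proj) ** 2, axis=0))

def mean_rmse_reduction(obs, obs_filtered, proj):
    """Mean percentage reduction of the RMSE due to filtering."""
    rmse_raw = rmse_over_time(obs, proj)
    rmse_filt = rmse_over_time(obs_filtered, proj)
    return float(np.nanmean(100.0 * (1.0 - rmse_filt / rmse_raw)))

# Synthetic example: projections are a smooth trend, observations add noise.
rng = np.random.default_rng(2)
t = np.arange(16, dtype=float)
proj = 3.0 * t[:, None, None] * np.ones((16, 2, 2))
noise = rng.normal(0.0, 10.0, size=proj.shape)
obs = proj + noise
obs_filtered = proj + 0.25 * noise               # idealized filter output

reduction = mean_rmse_reduction(obs, obs_filtered, proj)
print(f"mean RMSE reduction: {reduction:.1f}%")
```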

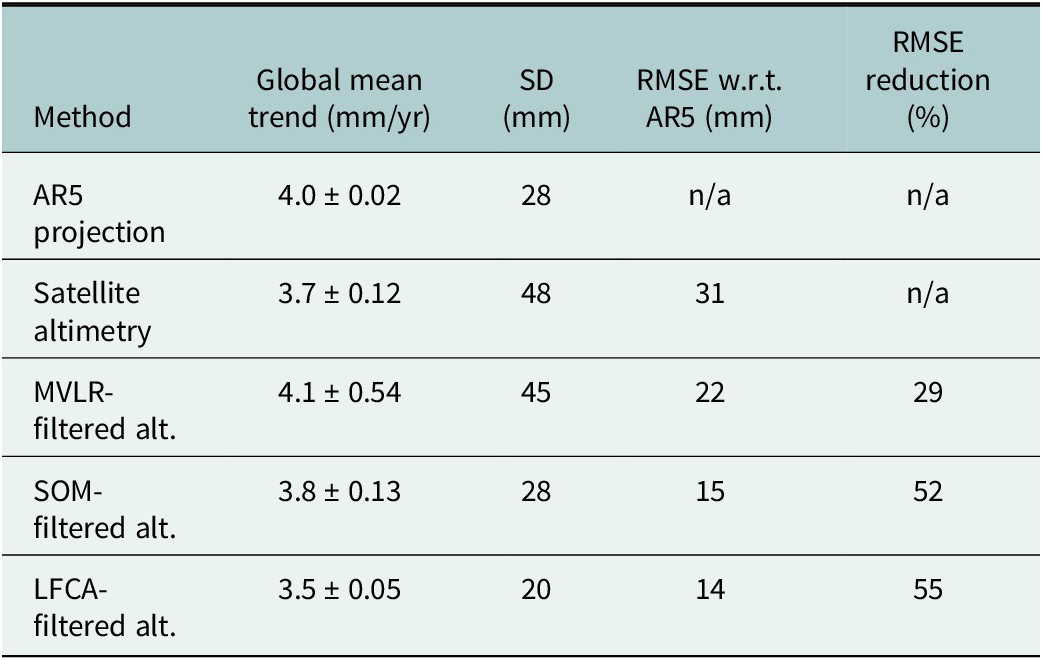

Comparing regional AR5 projections to observations

As a result of the ensemble averaging of the projections, the majority of the internal variability in the models is removed (Monselesan et al., Reference Monselesan, O’Kane, Risbey and Church2015; Slangen et al., Reference Slangen, Church, Zhang and Monselesan2015). When we compare the AR5 projections to the unfiltered observations (Figure 3a), we see large RMSE values, especially in highly dynamic oceanic regions, such as the Indo-Pacific region, the western boundary currents and the Antarctic Circumpolar Current. These high RMSE values in the unfiltered comparison (Figure 3a) are not evidence of model failure, but rather the expected result of comparing a forced-only signal (i.e., the projections) with a dataset containing substantial internal variability (i.e., the unfiltered observations). When we compare the projections with the MVLR-filtered observations (Figure 3b), we see a pattern of RMSE that strongly resembles the RMSE of AR5 versus unfiltered observations. Although the MVLR method should, in principle, remove variability linked to climate modes by incorporating them as predictors, it does not seem effective in this case, possibly because of the limited length of the record. This is in contrast to the SOM and the LFCA methods (Figure 3c and d), which lead to large reductions in the RMSE. The LFCA method is the most successful in isolating the forced response, yielding a small RMSE in most of the regions, with a few exceptions in highly dynamic regions. Thus, we focus on the LFCA method for the remainder of the analysis to highlight the regional agreements and discrepancies between the AR5 and filtered observations. We note, however, that while the LFCA method is the most successful in reducing the RMSE, the LFCA-filtered data might still contain minor remaining internal variability, which cannot be distinguished from the forced signal due to the relatively short time period available for the analysis.

Figure 3. RMSE between IPCC AR5 total sea-level projections and (a) unfiltered, (b) MVLR-filtered, (c) SOM-filtered and (d) LFCA-filtered satellite altimetry observations.

For the total trend (Figure 4b), as well as the SDSL component (Figure 4e), we see that the projections can under- or overestimate the observations depending on the region. For example, most of the equatorial Atlantic is marked by positive anomalies, that is, projections are higher than observations, while in the Eastern Equatorial Pacific, the projections are lower than the observations. The symmetry of the distributions highlights how the projections are both under- and overestimating the observations (Figure 4c,f). The histograms also highlight the efficiency of the different filtering methods in bringing the distribution means closer to zero (i.e., better aligning observations with the projections). The similarities between the anomalies of the total and SDSL components suggest that the differences between the observations and projections are due to sterodynamic processes. The closest agreements between projections and observations are seen for the mass component (Figure 4g–i), with trend anomalies around 0.5 mm/yr, and mainly positive, that is, the mass-driven sea-level projections tend to overestimate the observations. Trend anomalies for the subcomponents of mass-driven sea-level change are presented in Supplementary Appendix Fig. 2.

Figure 4. Difference between IPCC AR5 total, SDSL and mass-driven projections (RCP4.5) and (LFCA-filtered) observations, expressed as RMSE (a, d, g), trend anomalies (projections minus observations; b, e, h) and trend histograms (c, f, i).
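The trend anomalies shown in the middle panels (projections minus observations) amount to fitting a linear trend in every grid cell and differencing the two trend maps. A minimal sketch, assuming gridded fields without missing values and using a hypothetical helper `gridded_trend`, could look like this:

```python
import numpy as np

def gridded_trend(t, field):
    """Least-squares slope along axis 0 for every grid cell.

    field has shape (n_time, n_lat, n_lon) and must not contain NaNs.
    """
    n_time = field.shape[0]
    X = np.column_stack([np.ones(n_time), t])
    flat = field.reshape(n_time, -1)             # one column per grid cell
    beta, *_ = np.linalg.lstsq(X, flat, rcond=None)
    return beta[1].reshape(field.shape[1:])

# Synthetic example with known slopes (mm/yr) in two grid cells.
t = np.arange(16, dtype=float)
obs_slopes = np.array([[3.0, 5.0]])
proj_slopes = np.full((1, 2), 4.0)
obs = t[:, None, None] * obs_slopes
proj = t[:, None, None] * proj_slopes

# Positive anomaly: projection trend exceeds the observed trend.
anomaly = gridded_trend(t, proj) - gridded_trend(t, obs)
print(anomaly)
```

With this sign convention, a positive value means the projections overestimate the observed trend, and a distribution of anomalies centered near zero indicates that over- and underestimates balance out across the grid.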

Discussion

Each filtering method targets the spatiotemporal variability in the observations differently. The LFCA method isolates long-term trends by focusing on low-frequency variability, bringing the observed sea-level trends closer to projections across the Pacific, Indian and Southern Oceans (Figure 3). The MVLR method removes internal climate variability modes like ENSO and NAO, aligning observed trends more closely with projections in areas like the central Pacific, where these oscillations most strongly influence regional sea levels. The SOM method smooths regional variability by clustering areas with similar sea-level trends, helping observations align with projections in broader, less variable regions. However, it retains higher trends in more dynamic regions such as the Northwest Atlantic. Here, we used the method that removed most of the variability across the ocean (LFCA), but if one were interested in a specific area like the Central Pacific and wanted to see what the sea-level trend would be without ENSO, the MVLR method might be better suited to remove the local variability there. The choice of method is therefore dependent on the location and timeframe of interest. Furthermore, the limited record length constrains the robustness of all filtering methods.

In the IPCC AR6 report (Fox-Kemper et al., Reference Fox-Kemper, Hewitt, Xiao, Aðalgeirsdóttir, Drijfhout, Edwards, Golledge, Hemer, Kopp, Krinner, Mix, Notz, Nowicki, Nurhati, Ruiz, Sallée, Slangen, Yu, Masson-Delmotte, Zhai, Pirani, Connors, Péan, Berger, Caud, Chen, Goldfarb, Gomis, Huang, Leitzell, Lonnoy, Matthews, Maycock, Waterfield, Yelekçi, Yu and Zhou2021), the observed global mean sea-level trend was assessed to be 3.69 (3.21–4.17) mm/yr for the period 2006–2018, which is in line with the observed GMSL in Table 1 (which is for a slightly different period). Wang et al. (Reference Wang, Church, Zhang and Chen2021) and Slangen et al. (Reference Slangen, Palmer, Camargo, Church, Edwards, Hermans, Hewitt, Garner, Gregory, Kopp, Malagon-Santos and van de Wal2023) found that global mean trends of the 2007–2018 observations and AR5 projections were similar, which agrees with our findings for the period 2007–2022. We note that the LFCA filtering does reduce the GMSL trend (by 0.5 mm/yr), suggesting that part of the observed trend could have been increased by internal variability. On a regional scale, however, we see that in some places the (filtered) observations show a larger trend, while in other locations the projections are larger.

Compared with AR5, the more recent IPCC AR6 report (i) presents a slightly lower observed GMSL rate for the period in which they overlap (2006–2018, see Slangen et al. [Reference Slangen, Palmer, Camargo, Church, Edwards, Hermans, Hewitt, Garner, Gregory, Kopp, Malagon-Santos and van de Wal2023]) and (ii) projects a slightly larger GMSL rise by the end of the twenty-first century, mainly due to a larger projected contribution from the Antarctic ice sheet (see Table 9.8 of Fox-Kemper et al. [Reference Fox-Kemper, Hewitt, Xiao, Aðalgeirsdóttir, Drijfhout, Edwards, Golledge, Hemer, Kopp, Krinner, Mix, Notz, Nowicki, Nurhati, Ruiz, Sallée, Slangen, Yu, Masson-Delmotte, Zhai, Pirani, Connors, Péan, Berger, Caud, Chen, Goldfarb, Gomis, Huang, Leitzell, Lonnoy, Matthews, Maycock, Waterfield, Yelekçi, Yu and Zhou2021]). It is, however, too soon to say how the AR6 projections compare against the observations, as the projections are only available from 2020 onwards, so the overlapping period is still too short for a meaningful analysis.

The alignment between filtered observations and projections in the global mean, along with regional matches in many areas, suggests that AR5 projections capture externally forced trends reasonably well. However, some observed regional variability is unaccounted for, particularly in highly dynamic areas. The need for filtering emphasizes that while projections are robust for longer-term, large-scale trends, caution is needed when applying them to regions dominated by large internal climate variability or strong dynamic processes (e.g., boundary currents and eddies), particularly on shorter time scales. The discrepancies between projections and observations, especially the ones related to the SDSL anomalies, could potentially be reduced by using higher spatial resolution models (e.g., Hermans et al., Reference Hermans, Tinker, Palmer, Katsman, Vermeersen and Slangen2020) and by improving the representation of regional ocean dynamics by explicitly modeling mesoscale eddies (Morrison et al., Reference Morrison, Griffies, Winton, Anderson and Sarmiento2016). Regional downscaling could, for instance, better resolve local dynamic effects, and probabilistic approaches could address the variability induced by internal climate oscillations such as ENSO or NAO.

Conclusion

The AR5 projections closely match the observations on a regional scale, especially after filtering is applied to the total sea-level change and the SDSL contribution. For total sea level and SDSL, we find that the AR5 projections both under- and overestimate the observations, depending on the region (see Figure 4b,e). For the mass-driven sea-level component, the projections overestimate the observations almost everywhere, but its impact on the observation-projection discrepancies is smaller than for the SDSL component. The use of filtering methods brings the observed regional trends closer to AR5 projections (reducing the RMSE by $ \sim 50\% $), suggesting that a substantial part of the discrepancies arises from internal variability in the observations, whereas this variability is averaged out in the model ensemble, and suggesting that the trends remaining in filtered observations across all methods may be at least partially due to external forcings. Our comparison demonstrates that filtering enhances compatibility between observations and projections, validating the AR5 models for large-scale sea-level trends and highlighting that internal variability affects sea-level observations most in the western boundary currents, Equatorial Pacific and Antarctic Circumpolar Current. These results provide confidence in using projections for assessing forced sea-level changes, particularly over longer periods and larger regions.

Open peer review

To view the open peer review materials for this article, please visit http://doi.org/10.1017/cft.2026.10023.

Supplementary material

The supplementary material for this article can be found at http://doi.org/10.1017/cft.2026.10023.

Data availability statement

Satellite altimetry can be downloaded at https://podaac.jpl.nasa.gov/dataset/MERGED_TP_J1_OSTM_OST_GMSL_ASCII_V52 (last accessed March/2025), and regional sea level data from IPCC AR5 is distributed in netCDF format by the Integrated Climate Data Center (ICDC), CEN, University of Hamburg, Hamburg, Germany, at https://www.cen.uni-hamburg.de/en/icdc/data/ocean/ar5-slr.html (last accessed March/2025). Updated steric and mass-driven sea-level change fields, from 2002 to 2022, as well as the altimetry data used, can be found at https://doi.org/10.5281/zenodo.16993112. Filtered altimetry and sterodynamic data can be found at https://doi.org/10.5281/zenodo.16987128. Climate indices were found at NOAA Physical Sciences Laboratory (2023a) for IOD; NOAA Physical Sciences Laboratory (2023b) for MEI; NOAA Physical Sciences Laboratory (2023c) for PDO; NCAR Climate Data (2003) for NAO; and NCAR Climate Data (2023) for SAM. The code to reproduce the analysis can be found at https://github.com/NIOZ-SL/AR5xOBS (https://doi.org/10.5281/zenodo.18620467).

Acknowledgments

Regional sea-level data from IPCC AR5 were distributed in netCDF format by the Integrated Climate Data Center ICDC, CEN, University of Hamburg, Hamburg, Germany. The authors would like to acknowledge the World Climate Research Programme’s Working Group on Coupled Modeling, which is responsible for CMIP, and also thank the climate modeling groups for producing and making available their model output.

Author contribution

VMS, CC and JS contributed equally to this manuscript. Conceptualization: CC and AS. Methodology: CC, VMS, JS and AS. Data curation: CC. Data analysis LFCA: VMS. Data analysis MVLR: JS. Data analysis SOM: CC. Data analysis mass-driven estimates: BO. Data visualization: VMS. Supervision: AS. Writing – original draft: CC, JS, VMS and AS. Writing – reviewing and editing: CC, JS, VMS, AS and BO.

Financial support

This publication is part of the project DARSea, awarded to AS (file number VI.Vidi.223.058 of the research program ENW Vidi and Aspasia file number 015.022.013), which is financed by the Dutch Research Council (NWO).

Competing interests

The authors declare none.
