1 Contextualising Online Harms in the Metaverse

1.1 Aims

Heraclitus, writing around 500 BCE, famously declared πάντα ῥεῖ (‘everything flows’), capturing the essence of a world in constant motion. He further illustrated this idea with the observation that ‘no man ever steps in the same river twice, for it is not the same river and he is not the same man’. This philosophy of perpetual change serves as a guiding principle for this Element, which explores the dynamic and evolving nature of cyberspace, particularly the metaverse, and how transformation shapes our understanding and experience of virtual worlds. Over the past three decades, communication and computing technologies have rapidly converged, reshaping how individuals interact and engage with the world. The internet, the World Wide Web (WWW), and mobile communication have become embedded in modern society, influencing nearly every aspect of daily life (Castells et al., 2009). This digital transformation has created a new reality fundamentally different from the way humans have lived for millennia (Levin et al., 2021).

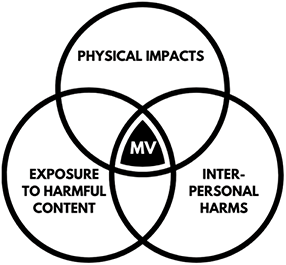

As digital spaces become ever more pervasive, the emergence of the metaverse marks a shift towards deeply immersive and interactive virtual environments that amplify both positive and negative experiences (Patel, 2025). Its heightened sense of presence can enrich learning (Damaševičius et al., 2024), foster social connections (Hennig-Thurau et al., 2023), and create new opportunities for engagement (Rane et al., 2023). However, the same intensity can also magnify the impact of harmful encounters (Effing et al., 2024), potentially contributing to loneliness (Oh et al., 2023) and to long-lasting psychological and emotional distress (Zakaria, 2025). Despite the rapid expansion of these virtual spaces, their broader implications for individuals and for society remain largely unexplored. This uncertainty presents critical challenges for safeguarding children, as risks and vulnerabilities may emerge in novel and, at times, unpredictable ways.

The metaverse remains underrepresented in the cybercrime literature, and this Element sets out to explore this emerging topic critically, focusing on the nature of harm experienced by children in virtual reality environments. Drawing on recent research (Davidson et al., 2024), it examines these risks through a criminological lens, assessing how immersive digital spaces may reshape victimisation, offending behaviour, and safeguarding strategies. In doing so, it aims to contribute to a more nuanced understanding of child safety in the metaverse and to inform the development of effective protective measures.

1.2 Rationale

Virtual reality technology has been intentionally designed to blur the boundaries between digital and physical experiences, and some researchers suggest that the mind and body often struggle to distinguish between the two (Sabry, 2022). As the metaverse evolves, particularly with the integration of haptic devices that provide sensory feedback, it is increasingly positioned to become a central part of everyday life. However, this immersive digital frontier also presents new risks, including the emergence of sexual violence in virtual environments (McGlynn et al., 2025). Research shows that individuals can experience psychological and physiological responses to virtual events as though they had occurred in the physical world (Cheng et al., 2022; Patel, 2021), raising urgent questions about harm, consent, and protection in immersive spaces. Drawing on data gathered through the Virtual Reality Risks Against Children (VIRRAC) project, funded by the National Research Centre on Privacy, Harm Reduction and Adversarial Influence Online (REPHRAIN), this Element presents the perspectives of industry and practice experts as well as those of children and young people themselves.

This Element seeks to provide a comprehensive examination of child safety in the metaverse, offering a multidisciplinary perspective that integrates criminological and psychological theory, technology, and policy. As virtual and immersive environments become increasingly embedded in the everyday lives of children and young people, it is important to understand the opportunities and the risks they present.

Another key objective is to address the existing knowledge gaps and resource limitations among professionals and practitioners responsible for protecting children from online harms, including child sexual exploitation and abuse (CSEA) in the metaverse. The Element identifies areas where safeguarding measures, legal frameworks, and technological solutions remain inadequate, and it offers practical recommendations for improving child protection efforts.

Crucially, this Element centres the voices of children, young people, and professionals (see Sections 4 and 5), engaging with them directly to understand their lived experiences, concerns, and expectations regarding online safety in the metaverse. Rather than relying solely on adult perspectives, it incorporates the insights of young users to inform more effective, child-centred policy, safeguarding practice, and strategy.

This Element seeks to address critical gaps in knowledge, support evidence-based interventions, and inform future digital safety strategies by bringing together research, policy discussions, and the voices of young people. It is intended for academics, policymakers, child protection professionals, law enforcement, and technology developers committed to creating safer digital environments for children and young people.

1.3 Defining the Metaverse and Its Relevance to Child Safeguarding

The metaverse, despite its popularity, lacks a universally agreed definition, and its multidimensional nature has given rise to a wide range of interpretations. Academics have described it as a three-dimensional or spatial internet, an immersive virtual society with its own functioning economy, and a shared world where avatars participate in political, economic, social, and cultural life. It has also been characterised as an interoperable network, a medium for simulation and immersion, and a hypothetical synthetic environment intricately linked to the physical world (Dolata & Schwabe, 2023; Dwivedi et al., 2022; Lee et al., 2021; Park & Kim, 2022). Dolata and Schwabe (2023) found that the notion of the metaverse is subject to interpretative flexibility: various actors shape its meaning according to their interests, which affects how it is presented and which components or technologies are associated with it. As a result, it remains unclear whether the metaverse already exists, is currently emerging, or is only possible in the future.

For the purposes of this Element, we adopt a pragmatic approach: the metaverse is used as an umbrella term for immersive digital environments enabled by Extended Reality (XR) technologies, including virtual reality (VR), augmented reality (AR), and mixed reality (MR) (Rauschnabel et al., 2022). These environments are typically accessed through headsets or AR-enabled devices and allow users to interact with others via avatars in shared, persistent spaces.

In this Element, we use XR technologies to refer to the range of tools that generate artificial or enhanced environments, and XR environments to describe any digitally modified setting. VR is particularly central to our analysis, as it fully replaces the user’s physical surroundings with a 3D digital world that is often experienced as immersive, interactive, and lifelike, sometimes including sensory feedback through haptic technologies. These affordances raise distinct safeguarding challenges, especially for children.

Unlike traditional social platforms such as Facebook or Instagram, the metaverse offers offenders enhanced opportunities to manipulate, coerce, or groom others in highly immersive, anonymous settings. The embodied nature of VR interactions (including voice, gesture, eye contact, and even touch via haptics) can intensify the sense of presence and emotional realism. This immersive quality, while a technical achievement, also complicates a child’s ability to distinguish safe from unsafe encounters and may increase the psychological impact of abusive or inappropriate behaviour (Fry et al., 2023).

While some scholars have proposed terms such as Extended Verse (XV) to account for the evolving and hybridised nature of these environments (Jagatheesaperumal et al., 2024), we have opted to use metaverse throughout this Element for clarity and accessibility. The term is now broadly recognised and captures the technologies, environments, and user experiences most relevant to this Element. We have purposely avoided extensive definitional debates in order to focus on the practical implications of immersive technologies for child safety.

The term ‘metaverse’ was coined by the author Neal Stephenson in his science fiction novel Snow Crash (1992) and has since gained considerable traction in both academic circles and the popular media. It denotes a collective, shared virtual space created by the convergence of virtually enhanced physical reality and persistent digital environments. Within the metaverse, users engage through avatars and interact with one another in a simulated environment that is both immersive and persistent. While the term ‘metaverse’ remains widely accepted, terminology in this field is not fixed, and alternative expressions such as ‘Extended Verse’ (XV) have emerged. This more recent term can be seen as an expansion or modification of the traditional metaverse concept, suggesting an even broader scope that incorporates virtual worlds and enhanced interactions with the physical world. It could include immersive hybrid environments that merge the virtual and the real, offering users a more dynamic, cross-medium experience (Jagatheesaperumal et al., 2024). In this sense, the ‘XV’ label evokes an evolution beyond the metaverse, incorporating technologies such as AR, holography, and photorealism that augment the real world rather than replace it altogether.

Though ‘Extended Verse’ (XV) presents an exciting possibility, the decision to adopt ‘metaverse’ in this Element stems from several considerations. First and foremost, the metaverse has become a widely accepted and easily recognisable term within both academic and public discussions. It encapsulates the digital spaces most people envision when considering virtual environments, encompassing VR worlds, digital avatars, and the various platforms facilitating these experiences. The term ‘Extended Verse’ is, in contrast, less familiar. While it offers an expanded conceptualisation, it may confuse audiences who are more familiar with the established notion of the metaverse. Given that this Element aims to reach a broad audience, including those not necessarily immersed in the cutting-edge developments of digital technology, using the term ‘metaverse’ allows for easier understanding and greater engagement.

Moreover, the term ‘metaverse’ is flexible in its application. As the metaverse continues to evolve and expand, the term itself has become a catch-all for a range of technologies and digital experiences, and this breadth allows future advancements, such as the incorporation of AR, AI, and other innovations, to fall under the metaverse umbrella (Purdy, 2022). For these reasons, the decision to retain ‘metaverse’ in this Element is grounded in both accessibility and relevance, ensuring that readers can connect with the material while recognising the broad scope and potential of digital worlds.

At the same time, we acknowledge that the field of virtual and augmented realities is fluid and rapidly changing. As new technologies and innovations continue to emerge, the term ‘metaverse’ may one day give way to other expressions such as ‘Extended Verse’ (Anderson et al., 2022). For the purposes of this Element, however, prioritising the familiar term ensures that the content remains engaging and relevant to a wide range of readers.

1.3.1 How the Metaverse Is Reshaping Our World

The metaverse is increasingly shaping various aspects of society, driving transformations in education (Bokyung et al., 2021), industry, entertainment, and digital economies (Dwivedi et al., 2022). VR and AR technologies are revolutionising medical education by providing realistic, risk-free simulations for surgical training, allowing future professionals to refine their skills before performing procedures on real patients (Shahrezaei et al., 2024).

Beyond medical training, the metaverse is redefining education across a variety of disciplines, offering immersive and interactive learning environments that enhance engagement and knowledge retention (Lindgren & Johnson-Glenberg, 2013). In corporate and industrial training, VR-based simulations are increasingly used in fields such as aerospace, automotive, and energy, providing employees with risk-free, experiential learning opportunities that improve skill acquisition and workplace safety (Freina & Ott, 2015). Similar practices are emerging within policing; although still at an early stage, they have great potential to transform law enforcement training and operations. Unlike traditional methods, which rely on classroom instruction and physical training exercises, the metaverse can enable interactive, dynamic environments in which officers engage in realistic simulations without real-world risks (Podoletz et al., 2024). For example, police forces across the UK are already adopting VR technology to provide more situation-based learning, in which officers experience realistic simulations of scenarios such as domestic abuse or knife crime (Kleygrewe et al., 2024). Within these scenarios, response officers attend incidents, talk to victims and witnesses, work with colleagues from specialist units, and are observed and supported by trainers. They can also build knowledge and skills, learning about the forensic qualities of different materials and objects as they encounter them (Kye et al., 2021). Team debriefings can be held, and a scenario could be carried through the whole investigation cycle, ending with the presentation of evidence in court (Hull, n.d.).

Other potential uses could include enabling police officers and staff to practise conversations that are relatively infrequent in the workplace but carry very high stakes when they occur, such as delivering a death message, talking to a victim of domestic abuse, or conducting a disclosure briefing with a defence solicitor in a custody suite. Learners could also study psychology and criminology by walking through crime case studies. Constructivist and experiential learning theories suggest that students learn more effectively when actively engaged with their environment, and metaverse-based learning platforms align with these pedagogical models by enabling hands-on experiences that promote deeper understanding (Kolb, 1984).

When focusing on children and the associated risks, it is important to examine the gaming industry, which remains at the forefront of metaverse innovation. VR headsets are enabling unprecedented levels of immersion, allowing players to step into fully interactive digital environments and engage more deeply with both the game world and other participants (Cummings & Bailenson, 2016). While platforms such as Second Life, Minecraft, Roblox, and Fortnite are not yet fully realised 3D metaverses, they exemplify the potential of persistent virtual worlds in which users, through a digital avatar, can connect, socialise, explore, and experience virtual spaces with others who are not physically present (University of Cambridge, 2022, December 14).

The future of the metaverse remains uncertain, but Kristensson (2022) argues that it holds potential for creating new opportunities for individuals marginalised by disability. He is investigating how the metaverse could provide life-changing benefits to people of all ages with impairments, including those related to vision, hearing, mobility, dexterity, and mental health. The metaverse also provides increased opportunities for socialisation for neurodivergent people, such as those with a diagnosis of autism spectrum disorder, because social interaction through headsets can ease the difficulties often associated with eye contact and verbal communication (Lee et al., 2023).

As the metaverse continues to evolve, its societal impact is likely to extend further, influencing education, governance, and human interaction in a variety of ways. This Element engages, in part, with the implications of widespread metaverse adoption, particularly for the safety of children and young people.

1.4 Children and the Metaverse: Overview of Online Harms

This Element explores the types of harm children may encounter in the metaverse, examining how curiosity, peer influence, and social engagement can draw them into virtual spaces that are neither intended nor designed for their age group. Although platforms set a minimum age of thirteen, weak age verification often allows underage access to adult-oriented environments (Patel, 2025). As a result, children may be exposed to various risks, including data privacy violations, inappropriate content, grooming, and cyberbullying. Gaming and social interaction within the metaverse have become increasingly appealing to children, facilitated by ‘social VR’ applications that offer spatial and relational experiences akin to everyday interactions, irrespective of physical location. Online social gaming attracts children and young people globally, and the estimated 2.7 billion gamers worldwide have been identified as a key consumer demographic (UNICEF, 2023). Platforms such as VRChat, Meta Horizon Worlds, and Rec Room enable users to connect with friends and strangers. Notably, Roblox, a user-generated gaming platform, is considered one of the most frequented metaverses, with over thirty-two million daily active users under the age of thirteen as of the fourth quarter of 2024 (Statista, 2024). This demographic represents a substantial portion of its user base, highlighting the platform’s strong engagement among younger audiences.

Children are not only participants but also creators within these platforms, developing virtual worlds, designing products, and offering services such as tutorials and entertainment content. This is especially popular on Roblox, where it has prompted concerning reports of financial exploitation and accusations of child labour (The Guardian, 2022). Some content creators and game developers have achieved notable financial success and global recognition. A particularly lucrative aspect of the metaverse experience is avatar creation. Avatars, digital representations of users or virtual bodies (Han et al., 2023), allow children to explore self-expression and identity formation. Synchronising avatars’ movements and gestures with users’ real-time actions through VR headsets and controllers enables immersive, first-person experiences. Research indicates that approximately half of young people perceive their virtual identity as an extension of their physical selves, with 41 per cent finding it easier to express themselves online than in real life (McKinsey & Company, 2022). However, their experiences, such as the sense of presence or enjoyment, can depend on the amount of time spent in the metaverse and the realism of their avatars, with studies suggesting that uniform avatars may provide greater enjoyment than customised self-avatars (Han et al., 2023).

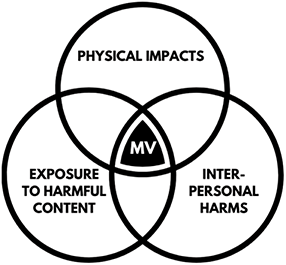

Positive opportunities for children within the metaverse have been identified, including accessible education, fitness and creativity enhancement, social interaction, workplace readiness training, and the promotion of participatory and digital rights (UNICEF, 2023). Through immersive technologies, children are introduced to interactive and engaging educational environments, often leading to enhanced learning outcomes in formal and non-formal settings (Dede, 2019). However, the metaverse also presents a range of potential harms to children, including exposure to explicit content, data privacy concerns, and harmful interactions. A study by the Center for Countering Digital Hate (CCDH) found that users, including minors, encounter abusive behaviour approximately every seven minutes in virtual environments (CCDH, 2023). These harmful interactions include harassment based on race, sexuality, and gender; exposure to graphic sexual content; bullying; and threats of violence. The immersive nature of these platforms can intensify the impact of such negative experiences, and inadequate monitoring and moderation exacerbate the problem. The work of Thomas Holt (e.g., Holt et al., 2013; Holt et al., 2015) laid the foundations for understanding the dynamics of cybercrime and online child exploitation, and this Element builds upon that legacy by examining how immersive affordances, such as embodied presence, spatial audio, and avatar realism, create new and intensified vectors for harm in the metaverse.

The metaverse’s immersive environments also facilitate grooming, allowing offenders to exploit technology to coerce and abuse children (Gómez-Quintero et al., 2024). In 2023, UK police investigated at least eight instances in which VR devices were used to store and view child sexual abuse material (CSAM). A BBC investigation also revealed alarming instances of online harm within VR environments: a researcher posing as a thirteen-year-old girl encountered grooming behaviours, exposure to sexual content, racist abuse, and even a rape threat while navigating VRChat, a widely accessible online virtual world (BBC News, 2022, February 22).

Bullying within metaverse platforms is another major concern. A study conducted in the United States (Hinduja et al., 2024) found that minors engage in abusive behaviours such as following, insulting, and shouting at others. The absence of capable guardians and adequate safety mechanisms can allow such behaviour to escalate.

The metaverse presents significant risks related to online radicalisation. Its immersive nature, combined with the ability of users to interact in real time through lifelike avatars, creates an environment that extremist groups can exploit for recruitment, training, and the dissemination of harmful ideologies (UK Parliamentary Office of Science and Technology, 2023). Security agencies have raised concerns that these groups may use online gaming and VR platforms to target vulnerable minors, expose them to propaganda, and draw them into extremist networks (Radicalisation Awareness Network, n.d.). The deeply engaging, interactive nature of these spaces can reinforce in-group beliefs, facilitate indoctrination, and even serve as a training ground for violent behaviour (Bhatt et al., 2023).

Data privacy is another critical issue: data collection in the metaverse can infringe upon children’s rights and allow the extraction of sensitive information about their habits and emotional states (UNICEF Innocenti, 2023). Such data could be misused for malicious purposes or for targeted advertising (Information Commissioner’s Office, n.d.).

This Element addresses these challenges and offers a critical evaluation of why a comprehensive approach is required, including robust regulatory frameworks, technological safeguards, and educational initiatives to protect young users in these evolving digital spaces.

1.5 Structure

This Element explores how contemporary criminological theories can enhance our understanding of children’s online experiences as victims (see Section 2), addressing a significant gap in the literature. It also highlights the critical knowledge gaps and resource limitations faced by professionals and practitioners responsible for safeguarding children at risk of abuse and exploitation within metaverse environments. Furthermore, it investigates the complexities professionals encounter in detecting and disrupting CSEA activities and protecting children within metaverse platforms, shedding light on the multifaceted nature of professional practice in this domain (see Section 5). It also amplifies the voices of children, highlighting the need for enhanced support and online safety measures. Through a blend of research findings and practical insights, this Element contributes to a deeper understanding of the challenges and opportunities inherent in safeguarding children within metaverse platforms, providing a foundation for informed policy decisions and targeted interventions aimed at protecting the most vulnerable members of society.

Section 2 applies criminological theories to digital offending and victimisation in the metaverse. It explores frameworks such as anomie theory, routine activity theory, and social learning theory. These theories help explain how offenders operate in virtual environments and the impact on victims. The section highlights the intersection of digital exploitation and broader online abuse patterns, contributing to an evidence-based approach to understanding criminal behaviour in the metaverse.

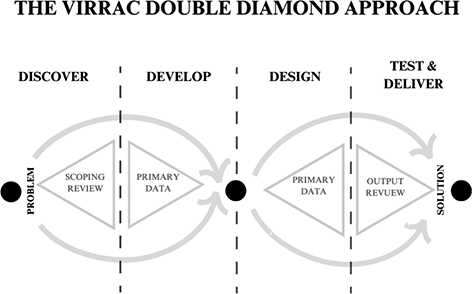

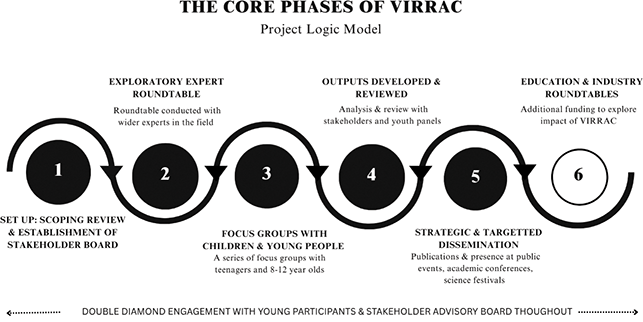

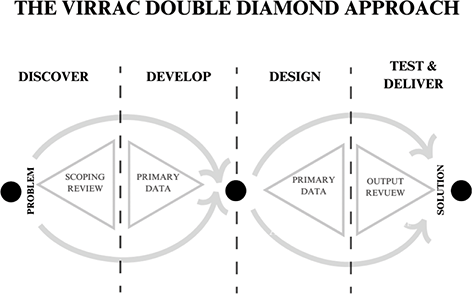

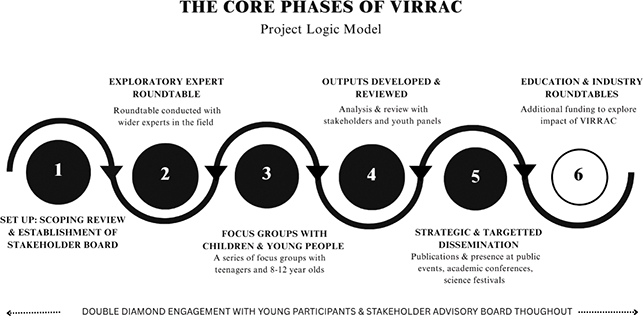

Section 3 discusses research challenges and methodologies for studying CSEA in the metaverse. Given the anonymity and fluidity of virtual spaces, traditional research methods may be inadequate. The Element draws on the VIRRAC project, which takes an interdisciplinary approach spanning criminology, psychology, and sociology to examine the dynamics of online harm. Its mixed-methods design, combining VR interactions with qualitative focus groups with children and young people, thematic data analysis, stakeholder roundtables, and primary survey data, aims to offer a comprehensive understanding of risks in these immersive spaces.

Children’s perspectives are at the heart of Section 4, which examines their experiences within metaverse platforms. A youth panel (ages 13–18) provides insights into the use of 2D platforms for gaming and socialising, while younger children (ages 8–12) from different countries reflect on their experiences in VR spaces. Although many enjoy online interactions, they also report challenges such as exposure to racism, harassment, and grooming. Notably, children express greater concern about grooming and doxing than about online hate crimes, emphasising the need for tailored safety measures. Trust in people met online is also a key theme, with most children preferring connections with people they know offline.

Section 5 examines the practical challenges of safeguarding children in the metaverse, drawing on findings from this and other research. It also explores broader online safety policy issues in the context of child protection and VR environments, incorporating insights from focus groups with expert stakeholders in education and industry (Stage 6 of the VIRRAC Study), and considers the implications of these findings for policing. Respondents highlighted the metaverse’s potential benefits for children and young people, such as increased inclusivity and therapeutic applications, but also expressed concerns about risks including cyberbullying, harassment, online hate, and grooming.

The Element concludes with Section 6, which calls for a recalibration of regulatory, theoretical, and ethical approaches. It argues that traditional criminological theories must evolve to account for digital embodiment, interactivity, and immersive spatial-temporal dynamics, and that policy responses need to move beyond fragmented, reactive strategies towards child-centred, proactive design frameworks. Children’s voices, central throughout the Element, are positioned not only as sources of insight but also as essential partners in developing safer, more empowering digital futures.

Its final message is an ethical one: protecting children in the metaverse requires dynamic, adaptive, and child-informed strategies. Safeguarding is no longer just about mitigating harm; it is about reimagining digital life in ways that centre children’s rights, agency, and well-being. It is a challenge not only of regulation or technology but of collective responsibility in an ever-evolving digital world.

This Element serves as a critical resource for understanding CSEA in digital spaces and shaping policies to protect children in the evolving metaverse landscape.

2 Offending and Victimisation: An Application of Criminological Theory to a Virtual Reality Paradigm

2.1 Introduction

The emergence of virtual reality (VR) technology and the proliferation of metaverse platforms have created new avenues for investigating the dynamics of offending and victimisation through a criminological lens, particularly in the context of cyber offences against children (Van Gelder, 2023). Instances of interpersonal violence targeting users, and children’s experiences of hate crimes and harassment within these virtual realms, underscore the need for social scientists to examine critically this unexplored terrain, where legal boundaries, ethics, and cognitive vulnerability intersect. The potential impact of such research is significant (Miah, 2022): above all, it can provide pivotal insight into ways of detecting, disrupting, and criminalising offending, and inform measures to support victims.

In recent years, there has been significant momentum in the study of cyber offending and victimisation, leading to a reassessment of criminological frameworks (Bradbury et al., 2024; Goldsmith & Brewer, 2015; Phillips et al., 2022). However, applying traditional social science theories to virtual environments requires further adaptation, given the distinctive characteristics of the digital sphere. This novel application distinguishes the present Element, which explores offending and victimisation through various theoretical lenses in the context of the VIRRAC research.

This section focuses on three primary actors within virtual reality platforms: offenders exploiting children, children engaging in risky online behaviours, and the virtual reality environment itself. It begins by examining what makes the virtual reality environment distinctive as a facilitator of online behaviour, before considering how such environments can lead to the proliferation of deviancy and victimisation.

Thomas Holt’s body of work provides a valuable theoretical and empirical foundation for understanding criminal behaviour within metaverses, even though he has not focused on these virtual environments directly. His research on cybercrime, digital deviance, and offender decision-making offers frameworks that are particularly adaptable to immersive and decentralised platforms (Holt et al., 2013; Holt & Bossler, 2015).

Central to Holt’s criminological approach is the application of social learning theory, which posits that individuals acquire deviant behaviour through interactions and shared norms within online communities. This theory is relevant to metaverses, where socialisation among avatars facilitates the transmission of criminal techniques, rationalisations, and affiliations. Additionally, his incorporation of routine activity theory illuminates how virtual spaces inadvertently foster opportunities for crime. In metaverses, the convergence of motivated offenders, suitable targets, and absent or ineffective guardianship mirrors the conditions Holt identifies in more traditional online settings. Building upon Holt’s work, several theories drawn from functionalist, positivist, and cultural perspectives, as well as from the Chicago School, are integral to this discussion.

While numerous theoretical frameworks could be applied to online virtual reality behaviours to enhance our understanding of offending and victimisation, this section focuses on a select few that are most relevant to the topic: Durkheim’s (1893) theory of anomie, Shaw and McKay’s (1942) Social Disorganisation Theory, Suler’s (2004) Online Disinhibition Theory, Lyng’s (1990) Edgework Theory, Hirschi’s (2004) Self-Control Theory, and finally Sykes and Matza’s (1957) Neutralisation Theory. Critically examining these theories enables a deeper understanding of virtual reality behaviours and the various factors that shape individual decision-making and actions within virtual environments.

2.2 Virtual Reality Environments, Offending, and Victimisation

The VIRRAC Study and other research suggest that offending in VR spans a broad spectrum of behaviours, from verbal harassment, hate crime, and trolling to more complex actions such as virtual sexual assault, stalking, theft of virtual property, grooming, and exploitation (Davidson et al., 2024; Porta et al., 2024). The immersive nature of VR, in contrast to web2 platforms, has been argued to intensify the psychological and emotional impact of offences, rendering users’ experiences more immediate and personal than comparable behaviours in non-immersive online environments (George, 2024). Moreover, the anonymity afforded by VR and the malleability of virtual identities often embolden individuals to act in ways they would not in the physical world (XinYing et al., 2024). This disinhibition, combined with the perception that actions in VR are ‘not real’, poses unique challenges for understanding and addressing deviance in these spaces.

Criminological theories can help to address these challenges. Routine Activity Theory (Cohen & Felson, 1979), for instance, can explain how offenders exploit the convergence of suitable targets and a lack of capable guardianship in poorly moderated VR spaces. Similarly, Neutralisation Theory (Sykes & Matza, 1957) sheds light on how offenders justify their actions by minimising harm or denying responsibility, often framing VR offences as harmless entertainment. Other frameworks, such as Edgework Theory (Lyng, 1990) and Social Disorganisation Theory (Shaw & McKay, 1942), provide insight into the social and psychological dynamics that foster deviant behaviour in VR, including the thrill of pushing boundaries and the absence of cohesive societal norms.

2.3 Societal Norms and Social Disorganisation

Émile Durkheim’s (1893) theory of anomie refers to a state of normlessness, or a breakdown in the social norms and values that guide individual behaviour within a society. This concept can be applied to understanding online offending in virtual reality environments from a social constructionist perspective, as online environments form a complex paradigm of diverse users with their own moral and ethical belief systems and ambiguous jurisdictional boundaries (Mincewicz, 2023; Thierer & Crews, 2003). Compared with offline society, however, the online world is arguably more fractured: no singular societal norm governs users’ behaviour; instead, multiple societal ideologies and norms coexist in the same space and time. Manuel Castells argues that digital environments are not monolithic but rather comprise multiple, overlapping societies and groups, each shaped by distinct cultural, ideological, and normative frameworks (Castells, 2000).

It can be argued that the exponential growth of the internet and digital technologies has outpaced the development of ethical guidelines, legal frameworks, and social norms governing online behaviour (Schoentgen & Wilkinson, 2021). The web3 environment is, in essence, a new world, not built on the customs and values of any single society that governs action. This can leave individuals in a moral vacuum, where they may engage in online offences without fully recognising or respecting the consequences (Hu & Zhou, 2013). The disconnected and transient nature of online spaces does not require the establishment of social bonds or norms for people to operate within the environment. It could be argued that people use online spaces to escape the bonds and social controls that heavily restrict their behaviours, attitudes, and self-representation in offline spaces. However, criminological theories have argued that the absence of such bonds and controls can increase deviancy, as there is no social pressure to conform (Hirschi, 1969).

Durkheim (1893) and Hirschi (1969) emphasised the importance of social bonds in preventing deviance. They believed that an environment lacking clear guidelines on behaviour resulted in an increased sense of disconnection and a diminished desire to conform.

In close alignment with the preceding discussion of virtual reality behaviour from Durkheim’s perspective, social disorganisation theory, developed by Shaw and McKay (1942), offers valuable insights. Although initially applied to physical neighbourhoods, its principles can readily be applied to cyberspace, including the metaverse, to elucidate why individuals may engage in risky or deviant behaviours. Research has indicated that social disorganisation in cyberspace, characterised by a lack of structure, moderation, and supervision, catalyses risk-taking and deviant behaviours (Suarez, 2015). Furthermore, in conjunction with factors such as anonymity, the absence of rules and regulations, and inadequate content moderation, diverse user populations contribute to a digital environment where social norms are ambiguous and regulatory mechanisms are less effective. One of the challenges in regulating harmful behaviour within the metaverse lies in the content moderation process and the complexity of detecting abusive language conveyed through audio. The decentralised nature of many VR platforms limits the ability of moderators or administrators to establish effective control, paralleling the lack of law enforcement in socially disorganised neighbourhoods.

In virtual spaces, relationships can be superficial, lacking the emotional depth found in real-world interactions. This weakens social attachments, reducing the internalised pressure to conform to accepted behaviours. Cyberbullying, harassment, and even digital fraud thrive in such environments, where empathy is diminished. The metaverse provides an alternate reality in which individuals can construct different identities and engage in behaviours that might be deemed unacceptable in physical society. Some users, detached from real-world consequences, may engage in hacking, virtual theft (e.g., stealing digital assets or NFTs), or other unethical activities without fear of legal repercussions. The immersive nature of the metaverse can lead individuals to spend excessive time in virtual worlds, decreasing their participation in conventional activities such as work, education, or family life. This disconnection can foster antisocial behaviour, including online extremism or illegal activities conducted in digital spaces. Without real-world regulatory frameworks, moral values in the metaverse become fluid. Some users may develop alternative moral codes that justify deviant behaviours such as virtual violence, exploitation, or illicit trade (e.g., digital black markets). If the virtual community normalises such behaviours, individuals may feel less compelled to adhere to conventional ethical standards. This fluidity can also contribute to lapses in self-control, as the environment, together with users’ perceptions of impact and consequences, can dissociate individuals from the real-world harms their actions cause.

VR creates an immersive digital environment in which users can interact in ways that may differ from real-world behaviour, or even from behaviour on 2D social or gaming platforms. By lowering the perceived real-world consequences of actions and increasing impulsivity, VR can amplify the relationship between low self-control (Kumar et al., 2023) and deviant behaviour, offering immediate sensory feedback and gratification that reinforces impulsive behaviours. In physical reality, actions often have social, legal, or moral repercussions. In VR, the perceived consequences are weaker, making it easier for users with low self-control to engage in deviant behaviour without fear of punishment. There is a risk that VR environments reinforce impulsive behaviours until they become habitual. These behaviours are amplified by the absence of real-world consequences, as interactions are not face-to-face. Instead, a veil of anonymity allows any user to hide behind an avatar and distance themselves from their actions and from feelings of accountability, especially when engaging in harmful or deviant behaviours (Suler, 2004).

Avatars in 3D VR spaces offer a fundamentally different and more immersive experience than those in 2D environments because of the depth, embodiment, and interactivity they enable. Avatars are not just visual representations; they become extensions of the user’s body, often tracked in real time through headsets, hand controllers, and even full-body sensors. This allows users to move naturally, gesture, and interact with virtual objects and other avatars in ways that mimic real-world behaviour. The result is a heightened sense of presence, the psychological feeling of ‘being there’, which is far more difficult to achieve or replicate in non-immersive, screen-based settings such as Facebook and Roblox.

By contrast, 2D environments typically rely on flat, static, or minimally animated avatars, often viewed from a fixed perspective. These avatars may convey identity or emotion through text or limited visuals, but they lack spatial depth and embodied interaction. Users in 2D spaces are observers; in 3D VR, they are participants. Studies show that users often experience a phenomenon called the Proteus Effect (Yee & Bailenson, 2007), whereby their behaviour changes to align with the characteristics of their avatar. This effect is amplified in VR by the stronger sense of embodiment, presence, and interconnectivity.

Whilst cyberspace can enhance interconnectivity and mitigate loneliness and social isolation, online spaces, particularly immersive spaces such as the metaverse, can paradoxically foster fragmented interactions, weakening meaningful social integration (Yasuda, 2024). Just as individuals seek out online communities for mental health, lifestyle, or well-being support (Kim et al., 2023), those who feel disconnected from traditional social structures may turn to virtual communities that provide a sense of belonging and validation; this has been evidenced in the context of youth cybercrime (Aiken et al., 2016). However, as Copes and Williams (2007) note, certain online spaces, such as incel groups, can promote ideological radicalisation, often supporting misogynistic discourse (Powell & Henry, 2017) and, in some cases, extremist beliefs or violent ideation.

2.4 Deviant Behaviour in VR Environments

In any new technological environment, there will always be those who wish to engage in harmful acts towards others, whether intentional or unintentional. The online world, especially in its developing state of regulation, can often provide opportunities for unregulated behaviours. Research has shown that individuals intent on using online spaces to commit acts of deviancy will take advantage of technological and legal weaknesses (Curtis & Oxburgh, 2023). Criminological theory has somewhat neglected this area but has an important contribution to make to online safety: by applying criminological and sociological theory, it is possible to develop a greater understanding of how these behaviours manifest and how they are unique to VR spaces. Whilst many theoretical constructs can be used, those that relate to anonymity, neutralisation, and self-control are examined here.

The role of online anonymity as a determinant of online behaviour is integral to understanding human behaviour in online spaces. Anonymity often reduces the perception of social control and accountability (Nitschinsk et al., 2023). This aligns with Durkheim’s idea of anomie, as individuals may feel less restrained by societal norms, resulting in behaviours such as trolling, spreading misinformation, or exploiting others, because anonymity separates individuals further from their actions and consequences.

The immersive, augmented environments of metaverse domains blur the line between virtual experience and the ‘real world’ more than web2 platforms do. Web3 environments can give users a sense of reality confusion, which can affect a person’s willingness to engage in harmful behaviours. Behaviours can also become augmented and disinhibited, involving harmful and abusive acts that users may not act out so easily in the ‘real world’ (Suler, 2004). Metaverse offenders may view their predatory behaviours as harmless because the acts take place in virtual spaces.

Suler’s online disinhibition effect (ODE) describes how individuals tend to behave with less restraint in online environments because of anonymity, invisibility, and the lack of immediate real-world consequences. Applying this theory to offending behaviours in VR reveals a significant relationship between VR’s immersive characteristics and the disinhibition that can lead to deviant or harmful actions. Suler identified six areas of influence on human behaviour online:

2.4.1 Dissociative Anonymity

In VR, users often interact through avatars, which can mask their real-world identity. This sense of anonymity can encourage behaviours that individuals would not exhibit in real life, such as harassment, verbal abuse, or sexually inappropriate actions (Kim et al., 2023). Offenders may dissociate their actions from their real-world selves, justifying their behaviour as unrelated to their true character. This can be further understood through the Proteus Effect (Yee & Bailenson, 2007), whereby individuals adopt the characteristics of their avatars and their self-perception and actions change to reflect the avatar’s persona, creating a greater degree of dissociation.

2.4.2 Invisibility

Although VR creates a sense of presence, the offender is not physically visible in the traditional sense. Only the user’s avatar is visible, and it can be changed any number of times. This degree of invisibility can embolden individuals to engage in actions they might avoid in face-to-face interactions. This phenomenon is amplified in VR spaces with limited consequences or enforcement mechanisms for deviant behaviour. The sense of presence is likely to change as advances in photorealistic VR are introduced.

2.4.3 Asynchronicity

While many VR interactions occur in real time, the perception of delayed or minimal consequences remains. Offenders may feel they have more time to ‘get away’ with harmful behaviours before being held accountable, reducing their inhibitions. Communications with other users, including forms of harassment, can also unfold over extended periods, being paused and resumed whenever users re-engage.

2.4.4 Solipsistic Introjection

In VR, communication is often filtered through avatars and virtual environments, making interactions feel less ‘real’. Offenders might project their fantasies or assumptions onto others, leading to a lack of empathy for, or understanding of, the harm they cause. Users may also misinterpret the behaviours of others and even form impressions of others that are far removed from who they are in the real world. This dynamic can be exploited by individuals intent on sexually abusing and exploiting children (Odudu, 2024).

2.4.5 Dissociative Imagination

VR environments are often perceived as separate from the real world, a ‘game-like’ space where social norms and rules do not apply. This can encourage individuals to behave as if their actions in VR have no real consequences, fostering harmful behaviour such as theft, assault, or harassment within the virtual space.

2.4.6 Minimisation of Authority

VR environments are often decentralised or lack clear authority figures, making offenders feel less restrained by social or institutional controls. This diminishes the perceived risk of punishment and increases disinhibition. The level of power or affluence offline is immaterial in many online environments.

Suler’s (2004) concept of online disinhibition outlines some of the potential mechanisms behind online deviance in VR spaces, as it explains how the online environment reduces social constraints and inhibitions, leading individuals to engage in behaviour they might avoid in face-to-face interactions. This can be benign disinhibition (e.g., self-disclosure, openness) or toxic disinhibition (e.g., cyberbullying, trolling, hate speech). Building upon this, it is important to consider how individuals use cognitive justifications to neutralise feelings of guilt when engaging in deviant behaviour, which allows them to break social norms while maintaining a self-image of morality. Whilst it has been argued that individuals can experience heightened senses of reality confusion whilst using VR, to date no research has determined whether this sense of realism affects disinhibition. It is possible that the extended sense of presence, together with an individual’s evaluation of the costs and rewards of actions, could reduce an individual’s willingness to take risks in a way not too dissimilar to offline behaviour. However, the reverse could also occur, whereby the thrill of breaking societal constructs amplifies the desire to engage in deviant behaviours.

Once individuals engage in deviant online behaviours due to disinhibition, they may use neutralisation techniques (Sykes & Matza, 1957) or denial (Marshall, 1994) to justify their actions. VR users may neutralise guilt or responsibility by downplaying the significance of their actions, the harm caused, or their culpability, on the grounds that the environment they are in is fantasy-based.

The process of neutralisation centres on denying the harm caused and the legitimacy of victimisation, and on placing accountability on others. Sykes and Matza identified five techniques of neutralisation, each of which can be applied to VR:

2.4.7 Denial of Responsibility

Offenders claim they have no control over their actions because of the nature of VR environments. This can include blaming the technology being used, such as system glitches, or holding the avatar, rather than themselves, accountable for the actions (as previously discussed in relation to online disinhibition). Research has shown this to occur when adults engage sexually with children on online platforms, with the adults blaming the features of the platform rather than their own actions (Bradbury et al., 2024). This dissociation is amplified in VR because users interact through avatars and virtual personas, creating a psychological distance between the individual and their actions. Offenders may also blame the behaviours of others, diffusing responsibility on the grounds that they are only doing what everyone else is doing.

2.4.8 Denial of Injury

For this form of neutralisation, individuals argue that their actions caused no real harm because the VR space is not ‘real’; it is only a fantasy or just a game in which there are ample opportunities for others to leave the environment should they wish. This justification minimises the emotional or psychological harm experienced by victims, especially in cases like sexual harassment, verbal abuse, or griefing (deliberately ruining another user’s experience).

2.4.9 Denial of the Victim

Offenders suggest that the victim deserved the behaviour or is not truly a victim. This can occur where one individual feels aggrieved by another and seeks retribution or retaliation, which they view as justified, or where an individual believes that behaviours towards others online have less harmful impacts on victims. Whilst the denial of a person’s status as a victim has been extensively discussed and debated over the last century, in a VR context it becomes even more ambiguous, particularly for sexual interactions, because no physical contact occurs unless haptic suits are used.

2.4.10 Condemnation of the Condemners

This can occur when offenders shift blame onto those who criticise or enforce rules. On online platforms, the condemners are content moderators responsible for identifying harmful behaviours through observation, user reports, or proactive algorithmic detection. Research by Bradbury et al. (2024) has revealed that adults who engage sexually with children online neutralise their behaviours by blaming site features for facilitating the interaction. Content moderators, in turn, have been blamed for being overzealous in intervening in interactions that users regard as harmless. This deflection undermines the legitimacy of rules and discourages accountability.

2.4.11 Appeal to Higher Loyalties

Offenders justify their actions by claiming that loyalty to a group or cause overrides the norms of the VR environment. This can take the form of a defence that their actions were for the good of others, such as helping teammates win a game. This technique is especially prevalent in group dynamics where collective behaviour supports deviance, such as in trolling communities or hacker groups.

As discussed in relation to Suler’s solipsistic introjection, many users may perceive VR spaces as ‘not real’, which facilitates neutralisation techniques such as denial of injury or of responsibility. Changing cultural attitudes about the impact of VR interactions is therefore crucial to reducing offending.

Although Stephen Lyng was not the first academic to explore the notion of edgework within sociology and criminology, his Edgework Theory (Lyng, 1990) offers a perspective that examines the appeal of risky and deviant behaviour. The theory suggests that individuals are drawn to deviant behaviours for the excitement and adrenaline rush they provide, and for the unique experience of navigating the boundaries between risk and safety, control and danger. Edgework Theory makes an important contribution to understanding adults’ and children’s online behaviours, especially in metaverses, which are designed to stimulate and thrill through augmented environments. These feelings can be directly linked to a desire to push boundaries and take risks to intensify these sensations, which, in conjunction with a sense of dissociation and disorganisation, can contribute to deviant behaviours (Shaw & McKay, 1942; Suler, 2004).

Lyng’s Edgework Theory explores why individuals engage in risky or deviant behaviours for the thrill of pushing boundaries and experiencing control in chaotic or uncertain situations. When applied to offending in virtual reality (VR), the theory highlights how VR environments provide a unique space for users to test boundaries, take risks, and engage in deviant behaviours that might not be possible or permissible in real life. As discussed in Section 1, VR offers a space where individuals can take risks without experiencing immediate real-world consequences, making it fertile ground for edgework behaviours. Offenders might see their actions as a test of skill, such as outsmarting security systems, manipulating environments, or navigating social boundaries within VR. The rules and norms in virtual environments are often less clear, and less consistently enforced, than in the physical world, providing a liminal space for individuals to explore deviant behaviours. In other words, VR environments give users greater opportunities to engage in behaviours they might avoid in real life, such as theft, violence, or identity manipulation, as a means of exploring the boundaries of acceptable conduct.

This perception encourages edgework that might involve offending others, like stalking, harassment, or sabotaging virtual assets. Again, as emphasised by Suler’s work, using avatars makes offenders feel distanced from their actions, making it easier to justify boundary-pushing behaviours. The sense of thrill and excitement may be drawn from an increased sense of power and mastery by bending or breaking the rules, particularly in unmoderated or highly customisable environments. The unique environment may also magnify the appeal of edgework as offenders seek peer recognition and approval.

Edgework theory can be directly supported by self-control theory, proposed by Gottfredson and Hirschi (1990), which suggests that individuals with low self-control are more likely to engage in deviant behaviour because they seek immediate gratification, lack foresight, and struggle with impulse regulation. This theory argues that deviant acts occur when an opportunity presents itself and the individual lacks the self-discipline to resist.

It is reasonable to suggest that VR environments enhance sensory experiences, which can directly diminish an individual’s capacity for self-control (Kumar et al., 2025; Zhang, 2022). Furthermore, research indicates that heightened states of arousal are strongly correlated with increased risk-taking behaviours (Icenogle & Cauffman, 2021), which may not only contribute to greater deviant conduct but also increase vulnerability to victimisation.

2.5 Victimisation in Virtual Reality Environments

Victimisation in the metaverse is an emerging issue that challenges traditional criminological theories. The development of the metaverse creates new opportunities for victimisation that parallel but also diverge from real-world experiences. Theories of victimisation, including Routine Activity Theory (Cohen & Felson, 1979), Lifestyle Theory (Walters, 1990), and Victim Precipitation Theory (Wolfgang, 2002), provide robust frameworks for understanding how individuals become victims in this digital space.

Routine Activity Theory (Cohen & Felson, 1979) suggests that victimisation occurs when three elements converge: a motivated offender, a suitable target, and the absence of capable guardians. In the metaverse, all three components are frequently present. Motivated offenders include cybercriminals, hackers, and digital predators who exploit the anonymity and unregulated nature of virtual spaces. Suitable targets range from new or unsuspecting users to children and individuals who possess valuable digital assets, such as non-fungible tokens (NFTs), cryptocurrency, or the ability to share images. The absence of capable guardians is particularly significant in the metaverse, as many platforms lack effective moderation, legal enforcement, or technical protections against cybercrimes such as hacking, harassment, and virtual sexual assault. Capable guardians can also extend beyond parents or carers to a wide range of societal members who have a safeguarding responsibility for children, such as doctors, nurses, teachers, and community workers. It is vital that conversations with children about online safety are not limited to prevention and intervention but extend to post-abuse care and support. Research has shown that after engaging with adults sexually online, children often seek advice and support online to determine whether they are at fault and at risk of criminalisation for their actions (Bradbury, 2025). The risk in this approach lies in the quality of the responses they receive and the further harm that mis/disinformation could cause. To fully support children, there need to be spaces where they can access reliable, informative resources and therapeutic support without the risk of criminalisation or further exploitation.

This combination creates an environment where victimisation is both easy to commit and difficult to prevent. Whilst metaverse users may not define themselves as vulnerable, all users are vulnerable when navigating technological spaces. It can be argued that VR metaverses amplify that vulnerability: because sensory experience is maximised, harmful interactions with others can seem more real and feel more threatening. Hearing other users’ voices in acts of sexual harassment, misogyny, and hate speech, for example, increases experiences of intimidation, anxiety, and fear (Freeman et al., 2022).

While Routine Activity Theory traditionally emphasises situational factors over offender motivation, targeted offender interventions can nonetheless play a critical role in disrupting this convergence and mitigating the associated risks.

Interventions aimed at offenders can reduce the likelihood of criminal events by altering either their motivation or their capacity to act. Cognitive-behavioural programmes, for instance, seek to reshape decision-making processes and challenge distorted thinking patterns, thereby reducing the propensity to offend. These programmes can be particularly effective in addressing impulsiveness, entitlement, or antisocial attitudes that contribute to criminal behaviour.

Moreover, interventions that enhance social bonds, such as employment support, educational opportunities, and community reintegration, can reduce exposure to criminogenic environments and diminish the appeal of suitable targets. By stabilising offenders’ routines and embedding them in prosocial networks, these measures indirectly increase guardianship and reduce the spatial-temporal conditions conducive to crime (Nolet et al., 2022).

In digital or metaverse environments, offender interventions might include behavioural nudges, real-time moderation, or algorithmic deterrents that reduce anonymity and increase accountability. Such measures can recalibrate the offender’s perception of risk and opportunity, even in decentralised or avatar-based spaces. In this way, they may disrupt harmful behaviour while it is still developing into a lifestyle choice.

Lifestyle theory posits that individuals’ behaviours and choices influence their risk of victimisation. In the metaverse, various behaviours increase the risk to users, such as frequenting unmoderated virtual spaces, sharing personal information, or engaging in financial transactions. This phenomenon may be partly driven by the immersive nature of the metaverse, which enhances users’ sense of freedom and security. The heightened sense of presence and embodiment in virtual spaces may lead users to lower their guard, perceiving the environment as safe and controlled while unknowingly exposing themselves to potential risks (Slater & Sanchez-Vives, 2016). This openness to risk can ultimately result in victims of online exploitation and abuse being blamed for their active involvement in risk-taking behaviours.

Victim precipitation theory (Wolfgang, 2002) examines the role of victims in their own victimisation, either through active participation or by unknowingly provoking an offender. The theory has been criticised as controversial because it shifts blame onto victims (Dhanani et al., 2020). It is particularly relevant to online harassment, cyberbullying, and digital conflicts in the metaverse. Some users may unintentionally provoke hostility by expressing controversial opinions, challenging online hierarchies, or engaging in competitive gaming environments, where hostile responses may reflect what Suler (2004) terms toxic disinhibition. Others may actively engage in risky behaviour, such as clicking on suspicious links, participating in unregulated digital economies, or sharing sensitive data, inadvertently enabling their own victimisation. As discussed earlier, the anonymity of metaverse users and the presence of children in apps designed for adults could increase neutralisation responses that draw parallels with victim precipitation theory. Adults who engage with children in a sexual manner could argue that the child should not be present in such apps and that the blame therefore lies with the child, not with the adult (Bradbury et al., 2024).

The concept of secondary victimisation is also significant in the metaverse. When victims report abuse, harassment, or fraud, they may face disbelief, victim-blaming, or inadequate support from platform moderators or law enforcement. Given the evolving nature of virtual crimes, legal frameworks often do not address the unique forms of victimisation in digital spaces, and policing such behaviour is problematic (see Section 5). Whilst there has been a considerable uptake in the use of VR to train police in how to respond to crimes such as domestic abuse and public order offences (Al Ali & Laib, 2024), there is a significant lack of training for law enforcement agencies on the nature of offending and victimisation specific to the metaverse (Haber, 2024). Without such training, limited awareness can directly undermine police investigations into reported cases of abuse, which can discourage victims from seeking help and may even embolden offenders who realise there are few repercussions for their actions (McIntosh & Allen, 2024).

Another key consideration is the psychological impact of metaverse victimisation. Unlike traditional cybercrimes that occur on social media or through messaging platforms, the immersive nature of the metaverse heightens emotional and psychological effects. For instance, virtual sexual harassment, stalking, and simulated assault may not result in physical harm, but they can have severe emotional consequences due to the embodied experience of the metaverse. Victims may experience anxiety, post-traumatic stress disorder (PTSD), and social withdrawal, with effects similar to those reported by people who experience real-world victimisation (Zakaria, 2025). This reinforces the need for metaverse platforms to implement stronger safeguarding measures, such as AI-driven moderation, verified identities, and stricter enforcement of virtual conduct policies.

2.6 Conclusion

This section has examined the complexities of offending and victimisation within virtual reality (VR) environments, applying established criminological theories to an evolving digital landscape that defies traditional social structures. The rise of the metaverse has introduced immersive spaces that fundamentally alter how individuals perceive and engage in deviant or harmful behaviours. As technology has advanced, so too have the opportunities for new forms of criminality, moral disengagement, and vulnerability. In these immersive and often anonymised environments, VR not only facilitates deviance but also challenges existing frameworks of accountability, control, and justice.

Through an interdisciplinary application of theoretical perspectives, ranging from Durkheim’s Anomie (1893) and Hirschi’s Self-Control Theory (2004) to Suler’s online disinhibition effect (2004) and Lyng’s Edgework Theory (1990), this section has demonstrated that offending and victimisation in VR are not only technologically mediated phenomena but are deeply embedded in the psychosocial dynamics of the user. Individuals in VR environments are often liberated from real-world constraints: their identities are masked, their actions are less visible, and their behaviours are frequently removed from traditional consequences. These conditions foster a unique psychological space where transgressive actions can feel acceptable, trivial, or even justified.

Criminological theories help to illuminate the mechanisms by which these behaviours are enacted and normalised. Sykes and Matza’s Neutralisation Theory (1957) reveals how individuals rationalise their harmful conduct in VR, often claiming that their actions are not real, not harmful, or not their responsibility. Edgework Theory (1990) further highlights the attraction of virtual risk-taking, where thrill-seeking becomes entangled with digital dissociation (Suler, 2004). This intersection of arousal motivation (Murray, 1938) and anonymity reflects a profound shift in how agency and accountability are experienced online. Moreover, VR enables users to explore boundaries in ways that are often inaccessible in the physical world, creating new thresholds of risk and deviance that must be understood within both psychological and sociological contexts.

Victimisation within VR is equally complex. Immersive environments heighten users’ emotional vulnerability, creating more visceral experiences of harm even in the absence of physical contact. Theories of victimisation – particularly Routine Activity Theory (Cohen & Felson, 1979) and Lifestyle-Exposure Theory (Walter, 1957) – reveal how metaverse users can become targets through a convergence of risk factors, such as unmoderated spaces, inadequate digital guardianship, and the deceptive nature of avatars. The ambiguous status of avatars in VR – neither fully real nor entirely fictional – adds an additional layer of complexity to identifying and supporting victims. These challenges are further exacerbated by the current limitations in platform governance, moderation systems, and legal frameworks, which remain ill-equipped to address the nuanced forms of harm emerging in these digital territories.

Children, in particular, represent a demographic that is disproportionately at risk. As digital natives (Kincl & Štrach, 2021), they are drawn to the gamified and social features of metaverse platforms, often without a full understanding of the potential dangers. Yet the systems designed to protect them, such as age verification, moderation protocols, or parental controls, are often inadequate or easily bypassed. As highlighted throughout this section, there is an urgent need for research, policy, and design approaches that treat children not only as vulnerable users but as active participants in shaping the norms of virtual societies. Without such a shift, the metaverse risks becoming a space where the exploitation of children is not only possible but also largely untraceable.

The role of law enforcement and regulatory agencies in these environments remains unclear and underdeveloped. As virtual crimes become increasingly sophisticated, reactive approaches will fall short. Instead, preventative frameworks, grounded in digital ethics and user-centred design and informed by criminological insight, must guide platform development. This includes the implementation of AI-driven moderation tools, robust identity verification systems, and embedded educational interventions that reinforce digital empathy and ethical engagement.

As criminology begins to adapt to technological shifts in social interactions and offending, it must continue to integrate theoretical frameworks with empirical research to respond effectively to the realities of virtual harm. Offenders’ behaviours, victims’ vulnerabilities, and the limitations of existing governance structures all converge in the metaverse in ways that mirror, distort, and reimagine traditional social interactions.

Having established the theoretical framework that underpins this Element, the next section explores the methods used throughout this project, the ethical issues and challenges encountered, and how risk was minimised.

3 Methods, Ethics, and Challenges

3.1 Introduction

This section explores the methodological approaches used during the VIRRAC project, which was conducted over a twelve-month period from February 2023 to 2024. VIRRAC was funded by the National Research Centre on Privacy, Harm Reduction, and Adversarial Influence Online (REPHRAIN) and UKRI and adopted a mixed-methods approach (Creswell, 2018) that combined qualitative focus groups with stakeholder engagement, observations, a snapshot survey, and desktop research. This section discusses the participatory approach adopted throughout the conception, design, and delivery phases of the VIRRAC project. In addition, it reviews and reflects on the research techniques employed to produce tangible project outputs collaboratively and considers some of the key challenges faced by the researchers in navigating this novel and interdisciplinary study. Crucially, this section evidences the importance of placing the voices of children at the heart of any methodological approach that seeks to better safeguard children (Schelbe et al., 2015; UNICEF Innocenti, 2025), ensuring that children’s perceptions of risk and personal safety are gathered, heard, and included in the subsequent shaping of online safety.

3.2 Background and Context of Study

At present, little is known about the potential repercussions for children and young people participating in the immersive metaverse ecosystem. This gap leaves several important questions unanswered, namely: (1) what children are experiencing across metaverse platforms, (2) what impact these experiences may have, and (3) how children feel they can be better empowered and protected in these spaces.

To answer these questions, children’s voices needed to be at the heart of the methodological design, underpinning the foundations of all project outputs, tools, and evidence-led recommendations. Therefore, conducting innovative primary research with children and young people to explore experiences and perceptions of the metaverse was a vital strand of the VIRRAC approach, as was learning from various multidisciplinary stakeholders with first-hand experience and expertise.

VIRRAC adopted a holistic and multidisciplinary approach, drawing not on a single discipline, theory, or perspective but on several, as discussed in Sections 1 and 2. By doing so, it is possible to develop a greater understanding of the idiosyncratic nature of cyberspace and the metaverse. Determining how to approach safety for children in these online environments more effectively is a complex and multifaceted undertaking.

3.3 Ethics and Integrity of VIRRAC

All aspects of VIRRAC received full ethical approval from the University Ethics Committees and the REPHRAIN Research Centre Ethics Committee, adhering strictly to the ethical standards for research involving human participants as set out in the 2006 British Society of Criminology Code of Ethics. Ensuring transparency in the project outputs and the safety and well-being of all participants in VIRRAC was crucial. Implementing rigorous data handling and storage procedures ensured participants’ anonymity and confidentiality. Informed consent was obtained from all participants, with a clear explanation of the study’s objectives, the voluntary nature of participation, and the right to withdraw. Data protection measures complied with UK GDPR, including the secure storage of data on encrypted university servers, access limited to authorised research personnel, and the removal of all identifiable information during data analysis and reporting. Due to the complex nature of conducting qualitative research and navigating the nuanced dynamics of participatory research with children and young people (Morrow, 2008), the VIRRAC project followed multiple layers of ethical reflection (Stapleton & Mayock, 2022). Three separate ethical approval applications were granted. The project stakeholder board reviewed all research tools, contributing their interdisciplinary advice and expertise.