1 Introduction

Multidimensional computerized adaptive testing (MCAT) has revolutionized the field of educational and psychological assessments by dynamically selecting tailored items from a large test pool, thereby enhancing the efficiency and precision of latent ability estimates (van der Linden & Glas, 2010). Powered by multidimensional item response theory (MIRT; Bock & Gibbons, 2021), MCAT leverages multidimensional statistical inference to evaluate respondents’ multidimensional latent traits and allows for more comprehensive and efficient assessments compared to unidimensional approaches. MCAT’s adaptability and accuracy are also particularly crucial in high-stakes diagnostic assessments, such as in clinical psychology and psychiatry, where it can be substituted for in-person assessments by clinical professionals, especially in areas with limited medical resources (Gibbons et al., 2016).

A substantial body of research in MCAT has focused on item-selection strategies derived from experimental design principles. One prominent strategy is to select items that maximize the determinant of the Fisher information matrix evaluated at the current estimates of the latent traits (Segall, 1996, 2000), known as the D-optimality criterion. An alternative, the A-optimality criterion, aims to minimize the trace of the asymptotic covariance matrix, thereby reducing overall estimation variance (van der Linden, 1999). In the absence of nuisance latent abilities, both A-optimality and D-optimality have demonstrated superior accuracy relative to other common experimental design criteria (Mulder & van der Linden, 2009).

Another prominent line of MCAT item-selection algorithms leverages Kullback–Leibler (KL) information, often within a Bayesian framework. A common approach is to select items whose response distributions at the true latent trait value, $\boldsymbol {\theta }_0$, differ maximally from the response distributions generated at other values of $\theta $ (Chang & Ying, 1996; Veldkamp & van der Linden, 2002). Moving beyond response distributions alone, some researchers propose maximizing the KL divergence between the current posterior distribution and the posterior distribution at the next selection step, thereby enhancing adaptation through updated trait estimates (Mulder & van der Linden, 2010). Another promising strategy is the mutual information (MI) criterion, which aims to maximize entropy reduction of the current posterior distribution, encouraging increasingly accurate posterior estimates (Weissman, 2007). In particular, Wang and Chang (2011) demonstrate both theoretical and empirical advantages of the Bayesian MI item-selection rule over common experimental criteria such as D-optimality. A more detailed theoretical comparison of KL information and Fisher information criteria is presented by Wang et al. (2011).

Although numerous effective item-selection rules have been proposed in the MCAT literature, they all rely on one-step lookahead optimization of an information-theoretic criterion. Despite their ease of implementation and attractive theoretical properties, these selection rules are inherently myopic: they select items based solely on immediate information gain, ignoring how current choices influence future decisions, which can lead to suboptimal policies. For example, existing methods tend to favor items with high loading parameters (Chang, 2015). However, CAT researchers also recommend reserving such items for the later stages of testing to improve efficiency (Chang & Ying, 1999). Integrating heuristic guidance into existing selection rules remains challenging.

Addressing these limitations, we propose a novel deep CAT system that integrates a flexible Bayesian MIRT model with a non-myopic online item-selection policy, guided by reinforcement learning (RL) principles (Sutton & Barto, 2018). Leveraging recent advancements in Bayesian sparse MIRT (Li et al., 2025), our framework seamlessly accommodates multiple latent factors with complex loading structures, while maintaining scalability in both the number of items and factors. To learn the optimal item-selection policy that prioritizes the assessment of target factors, we draw on contemporary RL methodologies and introduce a general double deep Q-learning algorithm (Mnih et al., 2015; van Hasselt et al., 2016). This algorithm efficiently trains a Q-network offline using only item parameter estimates; the learned network can then be deployed online to select optimal items based on the current multivariate latent factor posterior distribution. When the test terminates, our framework robustly characterizes the full latent factor posterior distributions rather than providing only a point estimate.

A primary contribution of our work is to show how the identification of the latent factor posterior distribution leads to substantial computational gains in online item selection. Because this posterior distribution is generally regarded as non-Gaussian and unknown, traditional Bayesian methods typically rely on expensive Markov chain Monte Carlo (MCMC) simulations (Béguin & Glas, 2001), combined with additional data augmentation to handle the categorical likelihood (Albert & Chib, 1993; Polson et al., 2013). Our approach achieves substantial acceleration by directly sampling latent factor posterior distributions (Botev, 2016; Durante, 2019; Li et al., 2025). Notably, this improvement not only increases the efficiency of existing Bayesian item-selection procedures but also provides a computational foundation for training our proposed Q-learning algorithm through rapid, large-scale simulations of testing sessions.

Another critical advancement in our work is the integration of CAT within an RL framework. This approach addresses the practical need to prioritize accurate estimation and to overcome known limitations of greedy item-selection methods in sequential decision-making (Bertsekas & Tsitsiklis, 1996). Building on the Bayesian MIRT foundation, our neural-network architecture incorporates the identified posterior parameters as state variables, allowing the model to learn optimal item-selection policies through a large number of simulated testing sessions. This formulation bridges the two paradigms: the Bayesian component provides statistically grounded representations of examinee uncertainty, while the RL component leverages these representations to optimize sequential decisions. The trained neural network is deployable on standard laptops without GPU acceleration, making it suitable for online adaptive testing applications. The sequential nature of CAT aligns naturally with deep Q-learning methods, which have demonstrated remarkable success across diverse application domains (Kalashnikov et al., 2018; Silver et al., 2016). Notably, RL has been successfully employed in educational measurement settings to design personalized learning plans (Li et al., 2023; Tan et al., 2020).

Finally, our work aligns with the emerging research trend of framing traditional statistical sequential decision-making problems as optimal policy learning tasks (Rainforth et al., 2024). This perspective has appeared in Bayesian adaptive design (Chaloner & Verdinelli, 1995; Foster et al., 2021; Sebastiani & Wynn, 2000) and Bayesian optimization (Lam et al., 2016; Srinivas et al., 2010), where recent methods aim to move beyond one-step criteria toward policy learning that accounts for long-term consequences. Our contribution fits within this broader trend by providing a principled approach to cognitive and behavioral assessment.

The remainder of the article is organized as follows. Section 2 motivates our deep CAT framework using a cognitively complex item bank from a recent clinical study (Gibbons et al., 2024). Section 3 reviews existing information-theoretic item-selection methods and outlines the necessity to reformulate CAT as an RL task. Section 4 introduces a general Bayesian framework that accelerates existing CAT item-selection rules and serves as the foundation for our Q-learning algorithm. Section 5 details our RL approach, including the neural network architecture and double Q-learning algorithm. Finally, Sections 6 and 7 evaluate our method through both simulations and real data experiments.

2 Adaptive high-dimensional cognitive assessment

Our proposed deep CAT system is motivated by the growing need for adaptive cognitive assessment in high-dimensional latent spaces. Cognitive impairment, particularly Alzheimer’s dementia (AD), is a major public health challenge, affecting 6.9 million individuals in the United States in 2024 (Alzheimer’s Association, 2024). Early detection of mild cognitive impairment (MCI), a precursor to AD, is crucial for slowing disease progression and improving patient outcomes (Huang et al., 2018). However, traditional neuropsychological assessments are costly, time-consuming, and impractical for frequent use, underscoring the need for more efficient and scalable assessment methods.

Recently, pCAT-COG, the first computerized adaptive test (CAT) item bank based on MIRT for cognitive assessment, demonstrated its potential as an alternative to clinician-administered evaluations (Gibbons et al., 2024). The data were collected from $730$ participants. After careful item calibration and model selection, the final item bank consisted of $J=57$ items covering five cognitive subdomains: episodic memory, working memory, executive function, semantic memory, and processing speed. Since each item comprised three related tasks, we used a binary score for our analysis, where $1$ indicates correct answers on all tasks.

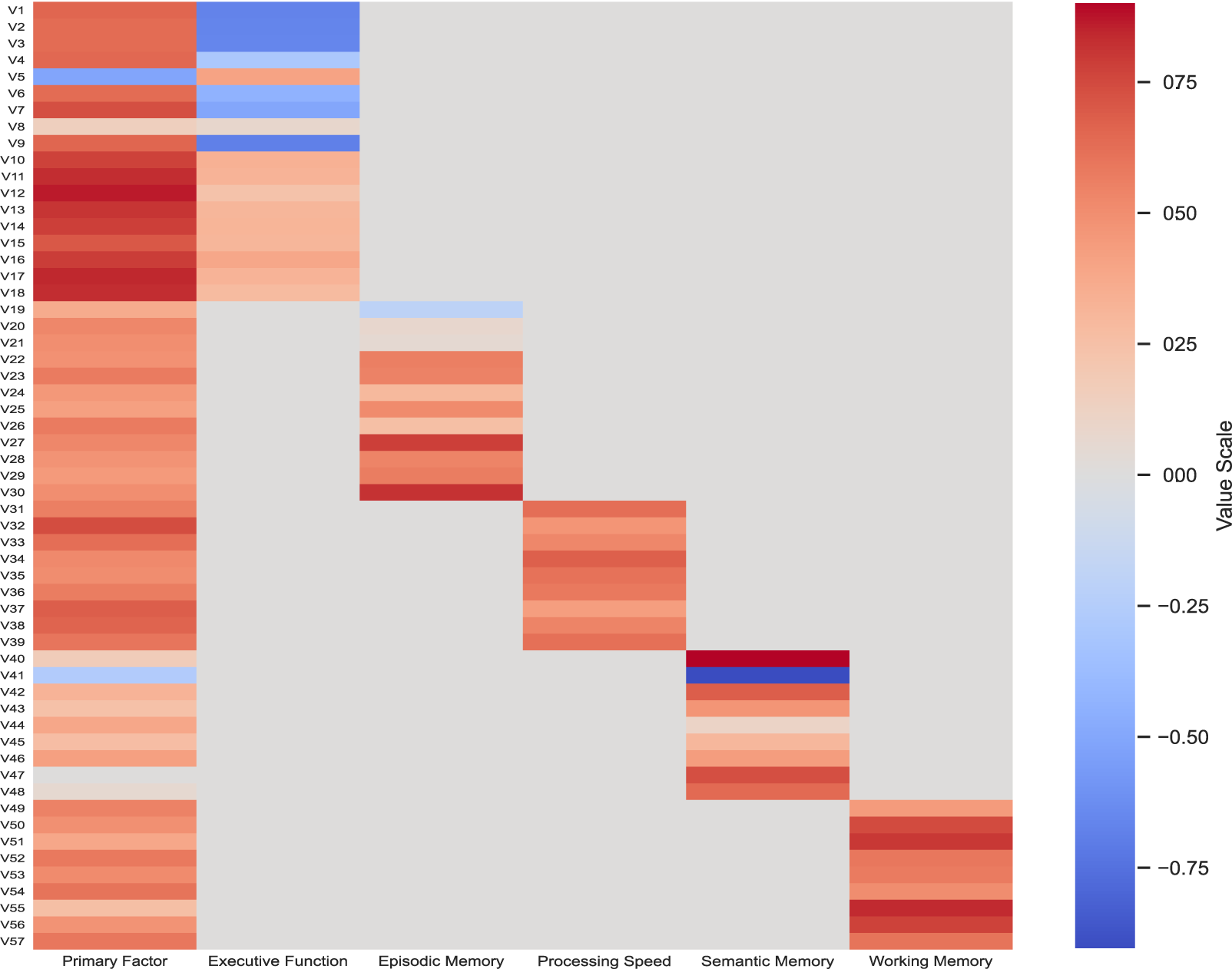

Following Gibbons et al. (2024), we fit a six-factor bifactor model (Gibbons & Hedeker, 1992) to the $730$ by $57$ binary response matrix, with one primary factor representing the global cognitive ability and five secondary factors. This yields a $57$ by $6$ factor loading matrix, visualized in Figure 1, where rows correspond to pCAT-COG items and columns represent distinct factors.

Figure 1. Estimated bifactor factor loading matrix for pCAT-COG.

Since items in pCAT-COG are primarily designed to measure the general cognitive factor and only partially capture subdomain information, our goal is to develop an item-selection strategy that efficiently estimates the primary factor (first column) using as few items as possible while maintaining robustness to subdomain influences. Additionally, the selection algorithm must be computationally efficient to navigate a six-dimensional latent space in real time for seamless interactive testing. Online item selection is critical, as learning an optimal sequence from $57$ items offline presents an intractable combinatorial problem, even in a binary response setting.

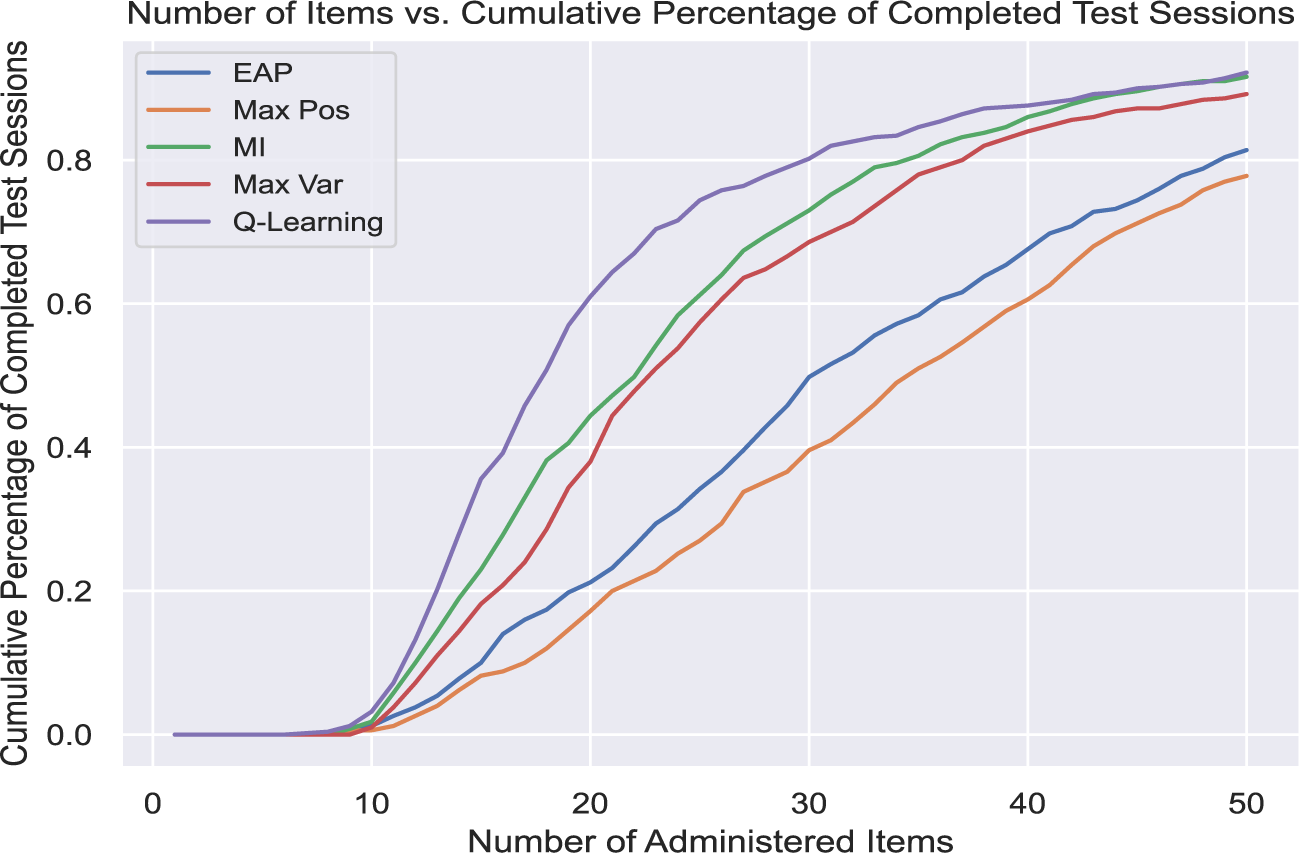

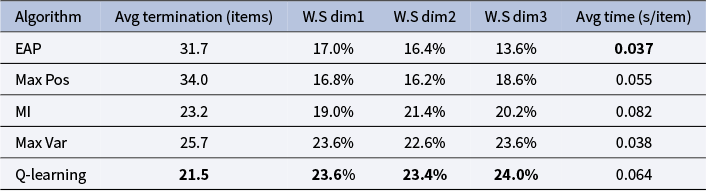

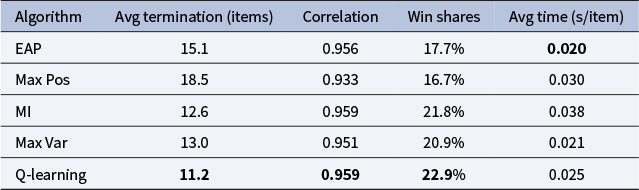

Adaptive testing is essential for cognitive assessment, as designing items is costly and administering all items is time-consuming. Our deep CAT system requires on average $11.2$ items to reduce the posterior variance of the primary factor from $1$ to $0.16$ (posterior s.d. $= 0.4$), whereas the next-best MI method requires $12.6$ items to achieve the same precision. The item bank is currently expanding to $500$ items with a more nationally representative sample. Success on this prototype dataset paves the way for broader deployment in clinical research.

3 From one-step optimization to policy learning

We formally define the problem of designing a CAT system from an RL perspective. Typical CAT systems consist of two components:

• Offline calibration: An MIRT model is fitted to a calibration dataset $\boldsymbol {Y} \in \mathbb R^{N \times J}$ to estimate the item characteristic parameters, where N represents the number of examinees and J represents the number of items in the item bank.

• Online deployment: Given the estimated item parameters, an item-selection algorithm is deployed online to adaptively select items for future examinees.

The performance of CAT can be measured by the number of items required to estimate an examinee’s latent traits with sufficient precision.

3.1 Notation and problem formulation

For the entirety of the article, we assume that the calibration dataset $\boldsymbol {Y}$ is binary, where each element $y_{ij}$ represents whether subject i answered item j correctly. We consider a general two-parameter MIRT framework with K latent factors (Bock & Gibbons, 2021). Let $\boldsymbol {B} \in \mathbb R^{J \times K}$ denote the factor loading matrix, and $\boldsymbol {D} \in \mathbb R^J$ denote the intercept vector. Throughout the article, boldface notation is used to denote vectors and matrices (e.g., $\boldsymbol {\theta }, \boldsymbol {B}$, and $\boldsymbol {D}$), while scalar quantities, such as $D_{j}$, are written in standard font. For each examinee i with multivariate latent trait $\boldsymbol {\theta }^{(i)} \in \mathbb R^K$, the data-generating process for $y_{ij}$ is given by

$$ \begin{align} P(y_{ij} = 1 | \boldsymbol{\theta}^{(i)}, \boldsymbol{\xi}_j) = \Phi(\boldsymbol{B}_j' \boldsymbol{\theta}^{(i)} + D_j), \end{align} $$

where $\boldsymbol {B}_j$ is the jth row of $\boldsymbol {B}$, $D_j$ is the jth entry of $\boldsymbol {D}$, and $\Phi (\cdot )$ denotes the standard normal cumulative distribution function. The item parameters can be compactly expressed as $\{\boldsymbol {\xi }_j\}_{j=1}^J := \{(\boldsymbol {B}_j, D_j)\}_{j=1}^J$. This two-parameter MIRT framework is highly general, imposing no structural constraints on the loading matrix and requiring no specific estimation algorithms for item parameter calibration.
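
To make the data-generating process concrete, the following minimal sketch simulates binary responses for one examinee. It assumes the probit form $P(y_{ij}=1 | \boldsymbol{\theta}^{(i)}) = \Phi(\boldsymbol{B}_j' \boldsymbol{\theta}^{(i)} + D_j)$ suggested by the definitions of $\boldsymbol{B}_j$, $D_j$, and $\Phi(\cdot)$. The function names and the three-item bank are hypothetical and chosen only for illustration.

```python
import math
import random


def normal_cdf(z):
    """Standard normal CDF, Phi(z), via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def response_probability(theta, loadings, intercept):
    """P(y = 1 | theta) = Phi(B_j' theta + D_j) for a single item (assumed form)."""
    z = sum(b * t for b, t in zip(loadings, theta)) + intercept
    return normal_cdf(z)


def simulate_responses(theta, B, D, rng):
    """Draw one Bernoulli response per item for a single examinee."""
    return [
        int(rng.random() < response_probability(theta, b_j, d_j))
        for b_j, d_j in zip(B, D)
    ]


# Hypothetical item bank: J = 3 items, K = 2 factors (values are made up).
B = [[1.2, 0.0], [0.8, 0.5], [0.6, -0.4]]
D = [0.0, -0.5, 0.3]

rng = random.Random(0)
theta = [rng.gauss(0.0, 1.0) for _ in range(2)]  # theta ~ N(0, I_K)
y = simulate_responses(theta, B, D, rng)
```

Each row of `B` together with the matching entry of `D` plays the role of $\boldsymbol{\xi}_j$, so no structural constraint on the loading matrix is needed for the simulation.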

Given the estimated item parameters, we need to design an item-selection algorithm that efficiently tests a future examinee with unobserved latent ability $\boldsymbol {\theta } \in \mathbb R^K$. The sequential nature of the CAT problem makes Bayesian approaches particularly appealing. Without loss of generality, we assume a standard multivariate Gaussian prior $\boldsymbol {\theta } \sim \mathcal {N}(0, \mathbb I_K)$ and introduce the following notation:

• For any positive integer n, let $[n]$ denote the set of all positive integers no greater than n. Let $j\in [J]$ be the index for the items in the item bank.

• Let $j_t$ denote the index of the item selected at step t based on an arbitrary item-selection rule. Define $\mathcal {I}_{t}$ as the set of the first t administered items, and let $R_{t} := [J] \setminus \mathcal {I}_{t-1} $ be the set of available items before the tth item is picked.

• We use the shorthand notation $f(\boldsymbol {\theta } | \boldsymbol {Y}_{1:T})$ to represent the latent factor posterior distribution $f(\boldsymbol {\theta }| \boldsymbol {Y}_{1:T}, \boldsymbol {\xi }_{1:T})$ after T items have been selected. Here, the response history is denoted by $\boldsymbol {Y}_{1:T}:= [y_{j_1}, \dots , y_{j_T}]'$, and the item parameters are given by $\boldsymbol {\xi }_{1:T} := (\boldsymbol {B}_{1:T}, \boldsymbol {D}_{1:T})$, where $\boldsymbol {B}_{1:T}:=[\boldsymbol {B}_{j_1}, \dots , \boldsymbol {B}_{j_T}]'$ and $\boldsymbol {D}_{1:T}:=[D_{j_1}, \dots , D_{j_T}]'$.

At time $(t+1)$, the algorithm takes the current posterior $f(\boldsymbol {\theta }|\boldsymbol {Y}_{1:t})$ as input and outputs the next item selection $j_{t+1}$. This process continues until a stopping time $T'$, reached either when the posterior variance of $f(\boldsymbol {\theta }|\boldsymbol {Y}_{1:T'})$ falls below a predefined threshold $\tau ^2$ or when $T'=H$, where $H \leq J$ is the maximum number of items that can be administered. Hence, the goal of CAT is to minimize $T'$ given the estimated item parameters of the item bank.
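
The stopping rule can be illustrated with a short sketch of the testing loop. The posterior is approximated here by reweighting draws from the $\mathcal{N}(0, \mathbb{I}_K)$ prior, which is a simple stand-in and not the direct sampling scheme developed in this article. The probit response model, the function names, the `select` and `answer` callbacks, and the toy item bank are all assumptions made for illustration.

```python
import math
import random


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def item_prob(theta, b, d):
    """Assumed probit response model: P(y = 1 | theta) = Phi(b' theta + d)."""
    return normal_cdf(sum(bk * tk for bk, tk in zip(b, theta)) + d)


def posterior_variance(particles, weights, k):
    """Weighted variance of factor k under the particle approximation."""
    total = sum(weights)
    mean = sum(w * p[k] for p, w in zip(particles, weights)) / total
    return sum(w * (p[k] - mean) ** 2 for p, w in zip(particles, weights)) / total


def run_cat(B, D, answer, select, tau2, H, target=0, n_particles=2000, seed=0):
    """Administer items until Var(theta_target | Y) < tau2 or H items are used."""
    rng = random.Random(seed)
    K = len(B[0])
    particles = [[rng.gauss(0.0, 1.0) for _ in range(K)] for _ in range(n_particles)]
    weights = [1.0] * n_particles      # prior draws start with equal weight
    remaining = list(range(len(B)))    # R_1 = [J]
    administered = []
    while len(administered) < H and remaining:
        if posterior_variance(particles, weights, target) < tau2:
            break                      # precision threshold reached
        j = select(remaining, particles, weights)  # any item-selection rule
        y = answer(j)                  # observed binary response
        # Bayes update: multiply each weight by the response likelihood.
        for i, p in enumerate(particles):
            pr = item_prob(p, B[j], D[j])
            weights[i] *= pr if y == 1 else 1.0 - pr
        remaining.remove(j)            # an item is never selected twice
        administered.append(j)
    return administered, posterior_variance(particles, weights, target)


# Hypothetical 3-item, 2-factor bank; the examinee answers every item correctly.
B = [[1.2, 0.0], [0.8, 0.5], [0.6, -0.4]]
D = [0.0, -0.5, 0.3]
items, final_var = run_cat(
    B, D, answer=lambda j: 1, select=lambda R, p, w: R[0], tau2=0.0, H=2
)
```

With `tau2=0.0` the variance threshold can never be met, so the loop stops only because the cap $H$ is reached; `len(items)` then plays the role of $T'$.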

3.2 Reviews of KL information item-selection rules

Given that our proposed deep CAT system is built on a general Bayesian MIRT framework (Li et al., 2025), we briefly revisit common Bayesian item-selection rules and show in Section 4.1 how our framework can be used to accelerate these baseline methods. The popular KL expected a posteriori (EAP) rule selects the tth item based on the average KL information between the response distributions on the candidate item at the EAP estimate $\hat {\boldsymbol {\theta }}_{t-1} = \int _{\boldsymbol {\theta }} \boldsymbol {\theta } f(\boldsymbol {\theta } | \boldsymbol {Y}_{1:(t-1)}) d\boldsymbol {\theta }$ and at a random factor $\boldsymbol {\theta }$ sampled from the posterior distribution $f(\boldsymbol {\theta }| \boldsymbol {Y}_{1:(t-1)})$ (Veldkamp & van der Linden, 2002):

$$ \begin{align} \arg\max_{j_t \in R_t} \int_{\boldsymbol{\theta}} \bigg\{ \sum_{l=0}^{1} P(y_{j_t}=l | \hat{\boldsymbol{\theta}}_{t-1}) \log \frac{ P(y_{j_t}=l | \hat{\boldsymbol{\theta}}_{t-1})}{ P(y_{j_t}=l | \boldsymbol{\theta})} \bigg\}\ f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:(t-1)}) d\boldsymbol{\theta}. \end{align} $$
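
As a rough illustration of the KL EAP rule, the sketch below replaces the integral over $\boldsymbol{\theta}$ with a weighted average over posterior draws. It assumes a probit response model and a particle representation of the posterior; `kl_eap_score`, `select_kl_eap`, and the toy particles are hypothetical names and values, not part of the original method description.

```python
import math


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def item_prob(theta, b, d, eps=1e-12):
    """Assumed probit model, clamped away from 0 and 1 to keep logs finite."""
    p = normal_cdf(sum(bk * tk for bk, tk in zip(b, theta)) + d)
    return min(max(p, eps), 1.0 - eps)


def bernoulli_kl(p, q):
    """KL( Bernoulli(p) || Bernoulli(q) )."""
    return p * math.log(p / q) + (1.0 - p) * math.log((1.0 - p) / (1.0 - q))


def kl_eap_score(b, d, particles, weights):
    """Posterior-averaged KL between responses at the EAP estimate and at theta."""
    total = sum(weights)
    eap = [
        sum(w * p[k] for p, w in zip(particles, weights)) / total
        for k in range(len(b))
    ]
    p_hat = item_prob(eap, b, d)
    return sum(
        w * bernoulli_kl(p_hat, item_prob(p, b, d))
        for p, w in zip(particles, weights)
    ) / total


def select_kl_eap(remaining, B, D, particles, weights):
    """Pick the available item with the largest KL EAP score."""
    return max(remaining, key=lambda j: kl_eap_score(B[j], D[j], particles, weights))


# Toy posterior draws (K = 2) and a two-item bank; item 0 has zero loadings.
particles = [[-1.0, 0.0], [0.0, 0.0], [1.0, 0.0]]
weights = [1.0, 1.0, 1.0]
B = [[0.0, 0.0], [1.0, 0.0]]
D = [0.0, 0.0]
best = select_kl_eap([0, 1], B, D, particles, weights)
```

An item with zero loadings produces the same response distribution at every $\boldsymbol{\theta}$, so its score is zero and the rule prefers the item that loads on the uncertain factor.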

Rather than focusing on the KL information based on the response distributions, Mulder and van der Linden (2010) propose the MAX Pos approach by maximizing the KL information between two subsequent latent factor posteriors $f(\boldsymbol {\theta }| \boldsymbol {Y}_{1:(t-1)})$ and $f(\boldsymbol {\theta } | \boldsymbol {Y}_{1:t})$. Intuitively, this approach prioritizes items that induce the largest shift in the posterior, formalized as

$$ \begin{align} \arg\max_{j_t \in R_t} \sum_{y_{j_t}=0}^{1} f(y_{j_t}| \boldsymbol{Y}_{1:(t-1)}) \int_{\boldsymbol{\theta}} f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)}) \log \frac{f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)})}{f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:t})} d\boldsymbol{\theta}, \end{align} $$

where $f(y_{j_t}| \boldsymbol {Y}_{1:(t-1)})$ represents the posterior predictive probability:

$$ \begin{align} f(y_{j_t}| \boldsymbol{Y}_{1:(t-1)}) = \int_{\boldsymbol{\theta}} \Phi(\boldsymbol{B}_{j_t}' \boldsymbol{\theta} + D_{j_t})^{y_{j_t}} \big\{1 - \Phi(\boldsymbol{B}_{j_t}' \boldsymbol{\theta} + D_{j_t})\big\}^{1 - y_{j_t}} f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)}) d\boldsymbol{\theta}. \end{align} $$

A third Bayesian strategy, the MI approach, maximizes the MI between the current posterior distribution and the response distribution of the new item $y_{j_{t}}$ (Weissman, 2007). Rooted in experimental design theory (Rényi, 1961), MI can also be interpreted as the entropy reduction of the current posterior distribution of $\boldsymbol {\theta }$ after observing the new response $y_{j_t}$. More formally,

$$ \begin{align} \arg\max_{j_t \in R_t} I_M (\boldsymbol{\theta}, y_{j_t}) = \arg\max_{j_t \in R_t} \sum_{y_{j_t}=0}^{1} \int_{\boldsymbol{\theta}} f(\boldsymbol{\theta}, y_{j_t} | \boldsymbol{Y}_{1:(t-1)}) \log \frac{f(\boldsymbol{\theta}, y_{j_t} | \boldsymbol{Y}_{1:(t-1)})}{f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}) f(y_{j_t} | \boldsymbol{Y}_{1:(t-1)})} d\boldsymbol{\theta}. \end{align} $$
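
For a binary response, the MI criterion can be computed through the standard identity $I(\boldsymbol{\theta}; y) = H(y) - \mathbb{E}_{\boldsymbol{\theta}}[H(y | \boldsymbol{\theta})]$, where $H$ is the entropy of a Bernoulli variable. The sketch below applies this identity to a weighted set of posterior draws. It assumes a probit response model; the function names and toy values are hypothetical.

```python
import math


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def item_prob(theta, b, d):
    """Assumed probit model: P(y = 1 | theta) = Phi(b' theta + d)."""
    return normal_cdf(sum(bk * tk for bk, tk in zip(b, theta)) + d)


def binary_entropy(p):
    """Entropy (in nats) of a Bernoulli(p) variable."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log(p) - (1.0 - p) * math.log(1.0 - p)


def mutual_information(b, d, particles, weights):
    """I(theta; y) = H(y) - E_theta[H(y | theta)] under the particle posterior."""
    total = sum(weights)
    probs = [item_prob(p, b, d) for p in particles]
    # Posterior predictive probability of a correct response.
    c = sum(w * q for w, q in zip(weights, probs)) / total
    # Expected entropy of the response given theta.
    conditional = sum(w * binary_entropy(q) for w, q in zip(weights, probs)) / total
    return binary_entropy(c) - conditional


# Toy posterior draws (K = 2) and a two-item bank; item 0 has zero loadings.
particles = [[-1.0, 0.0], [0.0, 0.0], [1.0, 0.0]]
weights = [1.0, 1.0, 1.0]
B = [[0.0, 0.0], [1.0, 0.0]]
D = [0.0, 0.0]
mi_flat = mutual_information(B[0], D[0], particles, weights)
mi_informative = mutual_information(B[1], D[1], particles, weights)
```

The item whose success probability does not vary with $\boldsymbol{\theta}$ carries no information, while the second item yields a strictly positive value bounded above by $\log 2$.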

Given the impressive empirical success of the MI method (Wang & Chang, 2011), we also introduce a competitive heuristic item-selection rule that chooses items with the highest predictive variance under the current posterior estimates, serving as an additional benchmark method. Formally, write $c_{j_t} := f(y_{j_t} | \boldsymbol {Y}_{1:(t-1)})$ as defined in (3.4), and consider the following criterion:

$$ \begin{align} \arg\max_{j_t \in R_t} \int_{\boldsymbol{\theta}} \big\{ \Phi(\boldsymbol{B}_{j_t}' \boldsymbol{\theta} + D_{j_t}) - c_{j_t} \big\}^2 f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:(t-1)}) d\boldsymbol{\theta}. \end{align} $$

This rule selects the item $j_t$ that maximizes the variance of the predictive means, weighted by the current posterior $f(\boldsymbol {\theta }|\boldsymbol {Y}_{1:(t-1)})$. In Appendix A of the Supplementary Material, we establish a connection between this selection rule and the MI method, showing that both favor items with high prediction uncertainty.
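
The predictive-variance heuristic can be sketched in the same particle setting. The code assumes that the criterion is the posterior variance of the success probability $\Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta} + D_{j_t})$ around its posterior mean $c_{j_t}$; this reading, the function names, and the toy values are assumptions for illustration.

```python
import math


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def item_prob(theta, b, d):
    """Assumed probit model: P(y = 1 | theta) = Phi(b' theta + d)."""
    return normal_cdf(sum(bk * tk for bk, tk in zip(b, theta)) + d)


def predictive_variance(b, d, particles, weights):
    """Posterior variance of the predictive mean Phi(b' theta + d)."""
    total = sum(weights)
    probs = [item_prob(p, b, d) for p in particles]
    c = sum(w * q for w, q in zip(weights, probs)) / total  # predictive mean
    return sum(w * (q - c) ** 2 for w, q in zip(weights, probs)) / total


def select_max_variance(remaining, B, D, particles, weights):
    """Pick the available item with the largest predictive variance."""
    return max(
        remaining, key=lambda j: predictive_variance(B[j], D[j], particles, weights)
    )


# Toy posterior draws (K = 2) and a two-item bank; item 0 has zero loadings.
particles = [[-1.0, 0.0], [0.0, 0.0], [1.0, 0.0]]
weights = [1.0, 1.0, 1.0]
B = [[0.0, 0.0], [1.0, 0.0]]
D = [0.0, 0.0]
chosen = select_max_variance([0, 1], B, D, particles, weights)
```

Because a probability lies in $[0, 1]$, this score never exceeds $0.25$, and like MI it is zero for an item whose success probability does not depend on $\boldsymbol{\theta}$.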

3.3 Reinforcement learning formulation

Existing item-selection rules face several limitations. First, these methods rely on one-step-lookahead selection, choosing items that provide the most immediate information without considering their impact on future selections, which can result in suboptimal policies (Sutton & Barto, 2018). Second, they are heuristically designed to balance information across all latent factors, rather than emphasizing the most essential factors of interest. Finally, these rules do not directly minimize test length, potentially increasing test duration without proportional gains in accuracy.

A more principled approach is to formulate item selection as an RL problem, where the optimal policy is learned using Bellman optimality principles rather than relying on heuristics that ignore long-term planning. Beyond addressing myopia, an RL-based formulation provides a direct mechanism to minimize the number of items required to reach a predefined posterior variance reduction threshold, explicitly guiding item selection toward accurately measuring the primary factors of interest.

More generally, consider a finite-horizon setting where each examinee can answer at most $H \leq J$ items, and the CAT algorithm terminates whenever the posterior variance of all factors of interest is smaller than a certain threshold $\tau ^2$, or when H is reached. Since H can be set sufficiently large, it serves as a practical secondary stopping criterion to prevent excessively long tests. Formally:

• State space $\mathcal{S}$: The space of all possible latent factor posteriors $\mathcal{S} := \{f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:t}): t \in \{1, \ldots, H\}\}.$ The state variable in CAT represents the Bayesian estimate of the examinee’s latent traits as a multivariate distribution at time t. We may write $s_t := f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:t})$, with $s_0 := \mathcal{N}(0, \mathbb{I}_K)$. The state space is discrete and can be exponentially large. Since there are J items in the item bank and the responses are binary, the total number of possible states is $\sum_{t=1}^H \binom{J}{t} \cdot 2^t$.

• Action space $\mathcal{A}$: The item bank $[J] := \{1, \ldots, J\}$. However, at the tth selection time, the action space is the remaining items in the test bank that have not yet been selected $R_t := [J] \setminus \mathcal{I}_{t-1}$, as we do not select the same item twice.

• Transition kernel $\mathcal{P}: \mathcal{S} \times \mathcal{A} \rightarrow \Delta(\mathcal{S})$, where $\Delta(\mathcal{S})$ denotes the space of probability distributions over $\mathcal{S}$. The transition kernel $\mathcal{P}$ specifies the stochastic rule that governs how the state evolves after an action is taken. In the CAT context, the kernel maps the current latent-trait estimate $s_t$ and the selected item $a_t$ to the next posterior state $s_{t+1}$. Conditional on $(s_t, a_t)$, the next estimate $s_{t+1}$ depends on the examinee’s binary response $y_t \in \{0,1\}$ to item $a_t$, which yields only two possible posterior updates. Because the true ability of the examinee and thus the response probability $p(y_t = 1 \mid s_t, a_t)$ are unknown, the transition kernel in CAT is also unknown and must be approximated.

• Reward function: To directly minimize the test length, we assign a simple 0–1 reward structure, where we assign negative rewards whenever more items are needed to reduce posterior variance to a given threshold. In a K-factor MIRT model, we often prioritize a subset of factors $\mathcal{K} \subset [K]$ (e.g., $\mathcal{K} = \{1\}$ for pCAT-COG), with the test terminating once the posterior variances of all factors in $\mathcal{K}$ fall below the predefined threshold $\tau^2$:

$$ \begin{align} R^{(t)}(s_t, a, s_{t+1}) = \begin{cases} -1 & \text{if } V_{t+1}> \tau^2, \\ 0 & \text{otherwise}, \end{cases} \tag{3.7} \end{align} $$

where $V_{t+1} = \max_{k \in \mathcal{K}} \operatorname{Var}(\boldsymbol{\theta}_k \mid \boldsymbol{Y}_{1:(t+1)})$ is the maximum marginal posterior variance among the prioritized factors $\mathcal{K}$. This also simplifies the learning of the value function, as the rewards are always bounded integers within $[-H, -1]$. We further illustrate the advantages of adopting this reward structure in Appendix E of the Supplementary Material.

Rather than following a heuristic rule, we learn a policy $\pi$ that maps the current state (latent factor estimates) to a distribution over potential items:

$$ \begin{align*} \pi: \mathcal{S} \rightarrow \Delta(\mathcal{A}). \end{align*} $$

Given an initial state $S_0=s_0$ and the discount factor $\gamma \in (0,1]$, the value function is

$$ \begin{align} v_{\pi}(s_0) := \mathbb{E}_{\pi} \left[\sum_{t=0}^{H-1} \gamma^t R^{(t)}(s_t, \pi(s_t), s_{t+1}) \,\Big|\, S_0 = s_0 \right]. \tag{3.8} \end{align} $$

The expectation $\mathbb{E}_\pi[\cdot]$ is taken over trajectories generated by drawing $a_t$ from $\pi(\cdot \mid s_t)$ and then $s_{t+1}$ from the transition kernel $\mathcal{P}(\cdot \mid s_t,a_t)$. Because direct evaluation of (3.8) is challenging, it is more convenient to consider the action-value function

$$ \begin{align} Q_{\pi}(s_0, a) := \mathbb{E}_{\pi} \left[\sum_{t=0}^{H-1} \gamma^{t}R^{(t)} (s_t, \pi(s_t), s_{t+1}) \,\Big|\, S_0=s_0, A_0= a \right], \tag{3.9} \end{align} $$

with $v_\pi(s)=\mathbb{E}_{a\sim \pi(\cdot \mid s)}Q_\pi(s,a)$. The optimal policy $\pi^{\star}$ satisfies the Bellman equation (Bertsekas & Tsitsiklis, 1996):

$$ \begin{align} Q_{\pi^\star}(s, a) = \mathbb{E}_{s' \sim \mathcal{P}(\cdot \mid s, a)} \left[ R^{(t)}(s, a, s') + \gamma\, v_{\pi^\star}(s') \right], \tag{3.10} \end{align} $$

where $s'$ is the next posterior estimate after applying action a to the current posterior s, and $v_{\pi^\star}(s)=\max_{a} Q_{\pi^\star}(s,a)$. Since the state space grows exponentially and the transition kernel is unknown, solving for $\pi^\star$ using traditional dynamic programming approaches becomes intractable (Sutton & Barto, 2018).

In practice, our deep Q-learning approach (Mnih et al., 2013) does not evaluate these expectations analytically. Let the transition $(s,a,r,s')$ represent one testing step, where item a is selected under state s, the reward r is observed, and the posterior is updated to $s'$ through the Bayesian MIRT model. We approximate the Bellman fixed point in (3.10) by fitting a parametric function $Q_w$ that minimizes the squared temporal-difference loss:

$$ \begin{align*} \mathcal{L}(w) = \mathbb{E}_{(s,a,r,s') \sim \mathcal{D}} \left[ \left( y - Q_w(s,a) \right)^2 \right], \end{align*} $$

where

$$ \begin{align*} y = r + \gamma \max_{a'} Q_{\bar{w}}(s', a'). \end{align*} $$

Here, $\mathcal{D}$ (replay buffer) denotes a collection of previously observed testing steps $(s,a,r,s')$ generated during simulation, which is used to approximate the expectation above by empirical averaging. The parameter $\bar{w}$ corresponds to a delayed copy of the model parameters w, updated less frequently to stabilize the numerical optimization. We describe the full Q-learning algorithm in Section 5.
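As a rough illustration of the temporal-difference objective described above, the following NumPy sketch computes bootstrapped targets from a delayed (target) network and the resulting squared loss on a mini-batch. The array layout, the `done` flag for terminal steps, and the convention of masking already-administered items with `-inf` are assumptions introduced for this example; they follow common deep Q-learning practice rather than the specific implementation of Section 5.

```python
import numpy as np


def td_targets(rewards, q_next_target, done, gamma=1.0):
    """Targets r + gamma * max_a' Q_wbar(s', a'), with no bootstrapping at terminal steps.

    rewards:       (batch,) observed rewards r
    q_next_target: (batch, n_items) values Q_wbar(s', .) from the delayed network;
                   items already administered are assumed to be set to -inf
    done:          (batch,) booleans, True if the test terminated at s'
    """
    best_next = np.max(q_next_target, axis=1)
    best_next = np.where(done, 0.0, best_next)  # terminal states contribute no future value
    return rewards + gamma * best_next


def td_loss(q_pred, targets):
    """Mean squared temporal-difference error over the sampled replay-buffer batch."""
    return float(np.mean((targets - q_pred) ** 2))


# Two transitions: one mid-test, one that ended the test.
r = np.array([-1.0, 0.0])
q_next = np.array([[-2.0, -3.0], [-1.0, -5.0]])
done = np.array([False, True])
y = td_targets(r, q_next, done)
loss = td_loss(np.array([-2.5, 0.0]), y)
```

In an actual training loop the gradient of this loss would be taken with respect to w only, with the targets treated as constants.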

4 Accelerating item selection via posterior identification

The central insight of our deep CAT framework is that, by iteratively applying the E-step of the PXL-EM algorithm (Li et al., 2025), the latent factor posteriors admit tractable posterior updates. This result is critical for RL, as the posterior distribution is regarded as non-Gaussian and analytically intractable in the traditional MIRT literature. By obtaining a tractable representation of this posterior, we can parameterize the examinee’s evolving ability and uncertainty as a well-defined state variable, which is an essential prerequisite for applying Q-learning to adaptive testing. The Bayesian MIRT formulation thus not only replaces costly MCMC procedures with efficient posterior updates under a probit link for existing Bayesian item-selection rules discussed in Section 3.2, but also supplies the statistical foundation that makes the subsequent RL framework feasible.

Specifically, we show that the latent factor posterior updates during CAT are instances of the unified skew-normal distribution (Arellano-Valle & Azzalini, 2006), defined as follows.

Definition 4.1. Let $\Phi_{T}\left\{\boldsymbol{V}; \boldsymbol{\Sigma}\right\}$ represent the cumulative distribution function of a T-dimensional multivariate Gaussian distribution $N_{T}\left(0_{T}, \boldsymbol{\Sigma}\right)$ evaluated at vector $\boldsymbol{V}$. A K-dimensional random vector $\boldsymbol{\theta} \sim \operatorname{SUN}_{K, T}(\boldsymbol{\mu}, \boldsymbol{\Omega}, \boldsymbol{\Delta}, \boldsymbol{\gamma}, \boldsymbol{\Gamma})$ has the unified skew-normal distribution if it has the probability density function:

$$ \begin{align*}\phi_{K}(\boldsymbol{\theta}; \boldsymbol{\mu}, \boldsymbol{\Omega}) \frac{\Phi_{T}\left\{\boldsymbol{\gamma}+\boldsymbol{\Delta}' \bar{\boldsymbol{\Omega}}^{-1} \boldsymbol{\omega}^{-1}(\boldsymbol{\theta}-\boldsymbol{\mu}); \boldsymbol{\Gamma}-\boldsymbol{\Delta}' \bar{\boldsymbol{\Omega}}^{-1} \boldsymbol{\Delta}\right\}}{\Phi_{T}(\boldsymbol{\gamma}; \boldsymbol{\Gamma})}. \end{align*} $$

Here, $\phi_{K}(\boldsymbol{\theta}; \boldsymbol{\mu}, \boldsymbol{\Omega})$ is the density of a K-dimensional multivariate Gaussian with expectation $\boldsymbol{\mu}=\left(\mu_{1}, \ldots, \mu_{K}\right)'$, and a K by K covariance matrix $\boldsymbol{\Omega} = \boldsymbol{\omega} \bar{\boldsymbol{\Omega}} \boldsymbol{\omega}$, where $\bar{\boldsymbol{\Omega}}$ is the correlation matrix and $\boldsymbol{\omega}$ is a diagonal matrix with the square roots of the diagonal elements of $\boldsymbol{\Omega}$ on its diagonal. $\boldsymbol{\Delta}$ is a K by T matrix that determines the skewness of the distribution, and $\boldsymbol{\gamma} \in \mathbb{R}^T$ controls the flexibility in departures from normality.

In addition, the $(K+T) \times (K+T)$ matrix $\boldsymbol{\Omega}^{*}$, having blocks $\boldsymbol{\Omega}_{[11]}^{*}=\boldsymbol{\Gamma}, \boldsymbol{\Omega}_{[22]}^{*}=\bar{\boldsymbol{\Omega}}$, and $\boldsymbol{\Omega}_{[21]}^{*}=\boldsymbol{\Omega}_{[12]}^{*'}=\boldsymbol{\Delta}$, needs to be a full-rank correlation matrix.

Suppose an arbitrary CAT item-selection algorithm has already selected T items with item parameters $\boldsymbol{\xi}_{1:T} = (\boldsymbol{B}_{1:T}, \boldsymbol{D}_{1:T})$, where $\boldsymbol{B}_{1:T}:=[\boldsymbol{B}_{j_1}, \dots, \boldsymbol{B}_{j_T}]'$ and $\boldsymbol{D}_{1:T}:=[D_{j_1}, \dots, D_{j_T}]'$. Then it is possible to show the following result.

Theorem 4.1. Consider a K-factor CAT item-selection procedure after selecting T items, with $\mathcal{N}(\boldsymbol{0}_K, \mathbb{I}_K)$ prior placed on the test taker’s latent trait $\boldsymbol{\theta}$. If $\boldsymbol{Y}_{1:T}=\left(y_{j_1}, \ldots, y_{j_T}\right)'$ is conditionally independent binary response data from the two-parameter probit MIRT model defined in (3.1), then

$$ \begin{align*} (\boldsymbol{\theta} \mid \boldsymbol{Y}_{1:T}) \sim \operatorname{SUN}_{K, T}\left(\boldsymbol{\mu}_{\mathrm{post}}, \boldsymbol{\Omega}_{\mathrm{post}}, \boldsymbol{\Delta}_{\mathrm{post}}, \boldsymbol{\gamma}_{\mathrm{post}}, \boldsymbol{\Gamma}_{\mathrm{post}}\right), \end{align*} $$

with posterior parameters

$$ \begin{gather*} \boldsymbol{\mu}_{\mathrm{post}} = \boldsymbol{0}_K, \quad \boldsymbol{\Omega}_{\mathrm{post}}= \mathbb{I}_K, \quad \boldsymbol{\Delta}_{\mathrm{post}}= \boldsymbol{C}_1'\boldsymbol{C}_3^{-1}, \\ \boldsymbol{\gamma}_{\mathrm{post}} = \boldsymbol{C}_3^{-1} \boldsymbol{C}_2, \quad \boldsymbol{\Gamma}_{\mathrm{post}} =\boldsymbol{C}_3^{-1}\left(\boldsymbol{C}_1 \boldsymbol{C}_1'+\mathbb{I}_{T}\right) \boldsymbol{C}_3^{-1}, \end{gather*} $$

where $\boldsymbol{C}_1 = \text{diag}(2y_{j_1}-1, \ldots, 2y_{j_T}-1) \boldsymbol{B}_{1:T}$ and $\boldsymbol{C}_2 = \text{diag}(2y_{j_1}-1, \ldots, 2y_{j_T}-1)\boldsymbol{D}_{1:T}$. The matrix $\boldsymbol{C}_3$ is a T by T diagonal matrix, where the $(t,t)$th entry is $(\|\boldsymbol{B}_{t,T}\|_2^2 +1)^{\frac{1}{2}}$, with $\boldsymbol{B}_{t, T}$ representing the tth row of $\boldsymbol{B}_{1:T}$.
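The closed-form parameters in Theorem 4.1 translate almost line by line into array operations. The sketch below is a minimal NumPy rendering under the notation of the theorem; the function name `sun_posterior_params` and the dictionary return format are hypothetical choices for illustration.

```python
import numpy as np


def sun_posterior_params(B, D, y):
    """SUN posterior parameters of Theorem 4.1 for T administered items.

    B: (T, K) loading matrix B_{1:T}; D: (T,) intercepts D_{1:T}; y: (T,) binary responses.
    """
    B = np.asarray(B, dtype=float)
    D = np.asarray(D, dtype=float)
    sign = 2.0 * np.asarray(y, dtype=float) - 1.0   # entries of diag(2y - 1)
    T, K = B.shape

    C1 = sign[:, None] * B                          # diag(2y - 1) B, shape (T, K)
    C2 = sign * D                                   # diag(2y - 1) D, shape (T,)
    c3 = np.sqrt(np.sum(B**2, axis=1) + 1.0)        # diagonal of C3

    Delta = C1.T / c3                               # C1' C3^{-1}, shape (K, T)
    gamma = C2 / c3                                 # C3^{-1} C2
    Gamma = (C1 @ C1.T + np.eye(T)) / np.outer(c3, c3)  # C3^{-1}(C1 C1' + I_T)C3^{-1}
    return {"mu": np.zeros(K), "Omega": np.eye(K),
            "Delta": Delta, "gamma": gamma, "Gamma": Gamma}


# Two items, two factors; the first item answered correctly, the second incorrectly.
params = sun_posterior_params(B=[[1.0, 0.0], [0.5, 0.5]], D=[0.2, -0.1], y=[1, 0])
```

Because the diagonal of $\boldsymbol{C}_1\boldsymbol{C}_1' + \mathbb{I}_T$ equals the squared diagonal of $\boldsymbol{C}_3$, the resulting $\boldsymbol{\Gamma}_{\mathrm{post}}$ has unit diagonal, consistent with the correlation-matrix requirement in Definition 4.1.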

Theorem 4.1 provides an exact finite-sample Bayesian characterization of the latent factor posterior. With a multivariate normal prior on $\boldsymbol{\theta}$ and a probit MIRT likelihood, the posterior $f(\boldsymbol{\theta}\mid \boldsymbol{Y}_{1:T})$ belongs to the unified skew-normal (SUN) family. This does not conflict with the empirical Bayes result of Chang and Stout (1993), which establishes asymptotic posterior normality as the number of items J grows; related discussion of Bayesian latent-trait estimation and asymptotic covariance properties in MIRT is given in Wang (2015). The finite-sample form is particularly relevant for CAT, where only a small number of items have been administered and large-sample normal approximations may be unreliable. This representation enables exact posterior calculations, improving uncertainty quantification and item selection in short tests. For ordinal responses under a probit link, closely related SUN-based approximations can also be developed as discussed in Li et al. (2025). In that setting, the latent factor posterior can often be well approximated within the SUN family, although the representation is generally not exact.

According to Arellano-Valle and Azzalini (2006), an arbitrary unified skew-normal distribution $\boldsymbol{\theta} \sim \operatorname{SUN}_{K, T}(\boldsymbol{\mu}, \boldsymbol{\Omega}, \boldsymbol{\Delta}, \boldsymbol{\gamma}, \boldsymbol{\Gamma})$ has a stochastic representation as a linear combination of a K-dimensional multivariate normal random variable $\boldsymbol{V}_0$, and a T-dimensional truncated multivariate normal random variable $\boldsymbol{V}_{1, -\gamma}$ as follows:

$$ \begin{align} \boldsymbol{\theta} \stackrel{d}{=} \boldsymbol{\mu} + \boldsymbol{\omega}\left(\boldsymbol{V}_0 + \boldsymbol{\Delta} \boldsymbol{\Gamma}^{-1} \boldsymbol{V}_{1, -\gamma}\right), \tag{4.1} \end{align} $$

where $\boldsymbol{V}_0 \sim \mathcal{N}(0, \bar{\boldsymbol{\Omega}} - \boldsymbol{\Delta} \boldsymbol{\Gamma}^{-1} \boldsymbol{\Delta}') \in \mathbb{R}^{K}$, and $\boldsymbol{V}_{1, -\gamma}$ follows a multivariate normal distribution $\mathcal{N}(0, \boldsymbol{\Gamma})$ truncated to $\{\boldsymbol{V}_1 \in \mathbb{R}^{T}: V_{1i} \geq -\gamma_i, \ \forall i\}$.

Based on Theorem 4.1, we have a closed-form expression for the posterior parameters $(\boldsymbol{\mu}, \boldsymbol{\Omega}, \boldsymbol{\Delta}, \boldsymbol{\gamma}, \boldsymbol{\Gamma})$ for $\boldsymbol{\theta}$. Recall that $\boldsymbol{\Omega} = \boldsymbol{\omega} \bar{\boldsymbol{\Omega}} \boldsymbol{\omega}$ is the standard covariance-correlation matrix decomposition from Definition 4.1, and hence the only unrealized stochastic terms in Equation (4.1) are $\boldsymbol{V}_0$ and $\boldsymbol{V}_{1, -\boldsymbol{\gamma}}$. This suggests that sampling from the latent factor posterior distribution $f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:T})$ requires two independent steps: sampling from a K-dimensional multivariate normal distribution $\boldsymbol{V}_0$ and a T-dimensional multivariate truncated normal distribution $\boldsymbol{V}_{1, -\boldsymbol{\gamma}}$. As a result, the direct sampling approach scales efficiently with the number of factors K, since generating samples from K-dimensional multivariate normal distributions is trivial. Moreover, in CAT settings, the test is typically terminated early, meaning T remains relatively small. When T is moderate (e.g., $T < 1{,}000$), sampling from the truncated multivariate normal distribution remains computationally efficient using the minimax tilting method (Botev, 2016). The exact proof of Theorem 4.1 and the sampling details can be found in Appendix B of the Supplementary Material.
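The two-step sampler implied by the stochastic representation can be sketched as follows for the posterior of Theorem 4.1, where $\boldsymbol{\mu} = \boldsymbol{0}_K$ and $\boldsymbol{\Omega} = \mathbb{I}_K$. To keep the example self-contained, the truncated normal draw uses naive rejection sampling, which is an assumption of this sketch and only a stand-in for the minimax tilting method of Botev (2016); it becomes inefficient as T grows. The function name `sample_sun` and its arguments are hypothetical.

```python
import numpy as np


def sample_sun(Delta, gamma, Gamma, n, rng, max_batches=1000):
    """Draw n samples from SUN_{K,T}(0, I_K, Delta, gamma, Gamma) via theta = V0 + Delta Gamma^{-1} V1."""
    K, T = Delta.shape
    A = Delta @ np.linalg.inv(Gamma)            # K x T coefficient of the truncated part
    cov0 = np.eye(K) - A @ Delta.T              # covariance of V0 (Omega_bar = I_K here)
    cov0 = (cov0 + cov0.T) / 2.0                # symmetrize against round-off
    v0 = rng.multivariate_normal(np.zeros(K), cov0, size=n)

    # V1 ~ N(0, Gamma) truncated to {v >= -gamma}; naive rejection (illustrative only).
    accepted, count = [], 0
    for _ in range(max_batches):
        cand = rng.multivariate_normal(np.zeros(T), Gamma, size=4 * n)
        keep = cand[np.all(cand >= -gamma, axis=1)]
        accepted.append(keep)
        count += len(keep)
        if count >= n:
            break
    else:
        raise RuntimeError("rejection sampler did not accept enough draws")
    v1 = np.concatenate(accepted)[:n]

    return v0 + v1 @ A.T                        # shape (n, K)


# One factor, one item with loading 1 and intercept 0, answered correctly.
rng = np.random.default_rng(0)
draws = sample_sun(np.array([[1 / np.sqrt(2)]]), np.array([0.0]), np.array([[1.0]]), 20000, rng)
```

In this one-item case the posterior is proportional to $\phi(\theta)\Phi(\theta)$, whose mean is $1/\sqrt{\pi} \approx 0.564$, which gives a simple check on the draws. The two components are independent, so they can be generated in parallel.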

The direct sampling approach provides substantial gains in both computational efficiency and numerical precision compared with traditional MCMC methods commonly used in the MIRT literature (Béguin & Glas, 2001; Jiang & Templin, 2019). In standard MIRT settings, the posterior distribution of the latent factors is typically regarded as intractable and non-Gaussian, requiring a Markov chain to be constructed via data-augmentation techniques (Albert & Chib, 1993; Polson et al., 2013) so that its stationary distribution approximates the posterior. This procedure entails repeated simulation of augmented data and is inherently sequential, which limits opportunities for parallelization. Moreover, it demands additional tuning, burn-in, and convergence diagnostics to ensure that the chain adequately converges to the posterior distribution. In contrast, Theorem 4.1 establishes that the posterior distribution can be expressed exactly as a unified skew-normal distribution, allowing direct and parallel sampling without the need for iterative convergence procedures.

Theorem 4.1 also plays a central role in our proposed deep Q-learning algorithm, as it fully characterizes the latent factor posterior distribution $f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:T})$, enabling the parameterization of the state variable $s_T$, which serves as an input to the Q-network illustrated in Section 5.1. We next illustrate how Theorem 4.1 can be used to accelerate existing item-selection rules, and the same idea can be extended to fully Bayesian item-selection settings as considered by van der Linden and Ren (2020).

4.1 Accelerating existing rules

While Bayesian CAT criteria require multidimensional integration, these quantities are typically evaluated in practice via Monte Carlo approximation rather than analytic quadrature in moderate to high dimensions. In such Bayesian CAT implementations, the dominant computational cost lies in obtaining valid samples from the latent factor posterior. By providing exact posterior samples without Markov chain construction, our approach removes this primary bottleneck and renders the remaining Monte Carlo integration computationally efficient and easily parallelizable. For example, in computing the KL-EAP item-selection rule (3.2), we directly sample from $f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)})$ instead of resorting to MCMC and evaluate the integral via Monte Carlo integration. Since the EAP estimate $\hat{\boldsymbol{\theta}}_{t-1}$ remains fixed at time step t, evaluating the KL information term is straightforward. For the Max Pos item-selection rule in Equation (3.3), we again obtain i.i.d. samples from $f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)})$ and compute the posterior predictive probabilities in (3.4). Although the density ratio $\frac{f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)})}{f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:t})}$ is difficult to evaluate, we can leverage the conditional independence assumption of the MIRT model. In particular, we can express the joint distribution of $(\boldsymbol{\theta}, y_{j_t})$ as

$$ \begin{align} f(\boldsymbol{\theta}, y_{j_t} | \boldsymbol{Y}_{1:(t-1)}) = f(y_{j_t} | \boldsymbol{\theta})\, f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:(t-1)}) = f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:(t-1)}, y_{j_t})\, f(y_{j_t} | \boldsymbol{Y}_{1:(t-1)}). \tag{4.2} \end{align} $$

Using Equation (4.2), we rewrite the KL information term in Equation (3.3) as

$$ \begin{align*} \int f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}) \log \frac{f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)})}{f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}, y_{j_t})} d\boldsymbol{\theta} &\ = \int f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}) \log \frac{f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}) f(y_{j_t}| \boldsymbol{Y}_{1:(t-1)})}{f(\boldsymbol{\theta}, y_{j_t} | \boldsymbol{Y}_{1:(t-1)})} d\boldsymbol{\theta} \\ &\ = \int f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}) \log \frac{f(y_{j_t}| \boldsymbol{Y}_{1:(t-1)})}{f(y_{j_t} | \boldsymbol{\theta})} d\boldsymbol{\theta}. \end{align*} $$

Since $f(y_{j_t}|\boldsymbol{\theta})$ can be easily computed for each $\boldsymbol{\theta} \sim f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)})$, the online computation of Max Pos remains efficient.
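The last line of the derivation above can be approximated by a plain Monte Carlo average over draws from the current posterior. The following is a minimal sketch under stated assumptions, not the authors' implementation: the helper names (`normal_cdf`, `response_prob`, `kl_term`) are hypothetical, the item response function is taken to be the probit form $\Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta} + D_{j_t})$, and the predictive probability $f(y_{j_t}|\boldsymbol{Y}_{1:(t-1)})$ is itself estimated by averaging $f(y_{j_t}|\boldsymbol{\theta})$ over the same draws.

```python
import math
import random


def normal_cdf(x):
    """Standard normal CDF, written with math.erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def response_prob(theta, b, d, y):
    """f(y | theta) for a probit item with loadings b and intercept d."""
    p = normal_cdf(sum(bk * tk for bk, tk in zip(b, theta)) + d)
    p = min(max(p, 1e-12), 1.0 - 1e-12)  # guard the logarithm below
    return p if y == 1 else 1.0 - p


def kl_term(samples, b, d, y):
    """Monte Carlo estimate of
    int f(theta | Y) log[ f(y | Y) / f(y | theta) ] dtheta
    from draws `samples` of the current posterior f(theta | Y)."""
    liks = [response_prob(th, b, d, y) for th in samples]
    predictive = sum(liks) / len(liks)  # estimate of f(y | Y)
    return sum(math.log(predictive / lik) for lik in liks) / len(liks)


if __name__ == "__main__":
    rng = random.Random(0)
    # Stand-in draws; in practice these come from the posterior sampler.
    draws = [[rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(2000)]
    print(kl_term(draws, [1.2, 0.5], -0.3, 1))
```

Only one set of posterior draws is needed for all candidate items, which is why the per-item cost reduces to evaluating the response function.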

Although the MI selection rule in Equation (3.5) has demonstrated strong empirical performance (Wang & Chang, 2011), its computational complexity remains a significant challenge. By applying Equation (4.2), we can rewrite MI as

$$ \begin{align} \arg\max_{j_t \in R_t} \sum_{y_{j_t}=0}^{1} f(y_{j_t}| \boldsymbol{Y}_{1:(t-1)}) \int_{\boldsymbol{\theta}} f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}, y_{j_t} ) \log \frac{f(y_{j_t} | \boldsymbol{\theta})}{ f(y_{j_t} | \boldsymbol{Y}_{1:(t-1)})} d\boldsymbol{\theta}. \end{align} $$


This formulation reveals that maximizing MI is structurally similar to Max Pos but requires much more computational effort. Unlike Max Pos, where sampling is performed from the current posterior $f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)})$, MI requires sampling from future posteriors $f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}, y_{j_t})$. Since each candidate item $j_t \in R_t$ has two possible outcomes ($y_{j_t} = 0$ or $y_{j_t} = 1$), evaluating Equation (4.3) requires obtaining samples from $|R_t| \times 2$ distinct posterior distributions. Even if sampling each individual posterior is computationally efficient, this approach becomes impractical for large item banks.

We hence propose a new approach that dramatically accelerates the computation of the MI quantity, building on the ideas of sampling-importance resampling and the bootstrap filter (Gordon et al., 1993; Smith & Gelfand, 1992). Rather than explicitly sampling the future posterior $f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}, y_{j_t})$ for each $j_t \in R_t$ and $y_{j_t} \in \{0, 1\}$, we can simply sample from the current posterior $f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:(t-1)})$ once, and then perform proper posterior reweighting. Under Equation (3.1), let $p(\boldsymbol{\theta})$ be the prior on the latent factors $\boldsymbol{\theta}$, $l_1(\boldsymbol{\theta})$ be the current data likelihood, and $l_2(\boldsymbol{\theta})$ denote the future data likelihood after observing $y_{j_t}$. We have the future posterior density as follows:

$$ \begin{align} f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}, y_{j_t}) & \propto l_2(\boldsymbol{\theta}) p(\boldsymbol{\theta}) \propto \frac{l_2(\boldsymbol{\theta})p(\boldsymbol{\theta})}{l_1(\boldsymbol{\theta})p(\boldsymbol{\theta})} f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:(t-1)}) \notag \\ & = \Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta} + D_{j_t})^{y_{j_t}}(1- \Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta} + D_{j_t}))^{1- y_{j_t}} f(\boldsymbol{\theta} | \boldsymbol{Y}_{1:(t-1)}). \end{align} $$


Equation (4.4) suggests that we can generate samples from $f(\boldsymbol{\theta}| \boldsymbol{Y}_{1:(t-1)}, y_{j_t})$ by reweighting and resampling draws from the current posterior $f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)})$. Specifically, given a sufficiently large set of posterior samples $(\boldsymbol{\theta}_1, \ldots, \boldsymbol{\theta}_M) \sim f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)})$, we assign a distinct weight to each sample $m \in \{1, \ldots, M\}$ for each future item $j_t \in R_t$:
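In line with Equation (4.4) and the weighted bootstrap of Smith & Gelfand (1992), the normalized weights can be taken proportional to the likelihood of the hypothetical response at each draw. The display below is a reconstruction under that reading, with $q_m$ matching the notation used next:

$$ \begin{align*} q_m = \frac{\Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta}_m + D_{j_t})^{y_{j_t}}(1- \Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta}_m + D_{j_t}))^{1- y_{j_t}}}{\sum_{m'=1}^{M} \Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta}_{m'} + D_{j_t})^{y_{j_t}}(1- \Phi(\boldsymbol{B}_{j_t}'\boldsymbol{\theta}_{m'} + D_{j_t}))^{1- y_{j_t}}}, \qquad m = 1, \ldots, M. \end{align*} $$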

To sample from $f(\boldsymbol{\theta}|\boldsymbol{Y}_{1:(t-1)}, y_{j_t})$, we then draw from the discrete distribution over $(\boldsymbol{\theta}_1, \ldots, \boldsymbol{\theta}_M)$, placing weight $q_m$ on $\boldsymbol{\theta}_m$. This approach eliminates the need to sample from $|R_t| \times 2$ distinct posteriors directly, further accelerating MI computation and making it scalable for large item banks.
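The reweighting step can be sketched in a few lines. This is an illustration under stated assumptions rather than the authors' code: all function names are hypothetical, the item bank is assumed to be a list of `(b, d)` pairs holding the loadings $\boldsymbol{B}_j$ and intercept $D_j$, and weights are taken proportional to the probit likelihood of the hypothetical response, as Equation (4.4) suggests. The function `resample_future_posterior` performs the resampling described above, while `mutual_information` uses the weights $q_m$ directly as a weighted average. That is a swapped-in variant that targets the same integral in Equation (4.3) without the extra resampling noise.

```python
import math
import random


def normal_cdf(x):
    """Standard normal CDF, written with math.erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def response_prob(theta, b, d, y):
    """f(y | theta) for a probit item with loadings b and intercept d."""
    p = normal_cdf(sum(bk * tk for bk, tk in zip(b, theta)) + d)
    p = min(max(p, 1e-12), 1.0 - 1e-12)  # guard the logarithm below
    return p if y == 1 else 1.0 - p


def future_weights(samples, b, d, y):
    """Return (q, predictive, liks): normalized weights q_m, the estimate
    of f(y | Y_{1:(t-1)}), and the per-draw likelihoods f(y | theta_m)."""
    liks = [response_prob(th, b, d, y) for th in samples]
    total = sum(liks)
    return [lik / total for lik in liks], total / len(liks), liks


def resample_future_posterior(samples, b, d, y, rng):
    """Draw from the discrete distribution placing weight q_m on theta_m."""
    q, _, _ = future_weights(samples, b, d, y)
    return rng.choices(samples, weights=q, k=len(samples))


def mutual_information(samples, b, d):
    """Estimate the MI objective for one item from current-posterior draws."""
    mi = 0.0
    for y in (0, 1):
        q, predictive, liks = future_weights(samples, b, d, y)
        inner = sum(qm * math.log(lik / predictive) for qm, lik in zip(q, liks))
        mi += predictive * inner
    return mi


def select_item_by_mi(samples, item_bank, remaining):
    """Index of the remaining item with the largest estimated MI."""
    return max(remaining, key=lambda j: mutual_information(samples, *item_bank[j]))


if __name__ == "__main__":
    rng = random.Random(0)
    # Stand-in draws; in practice these come from the current posterior.
    draws = [[rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(5000)]
    bank = [([0.0, 0.0], 0.2), ([1.5, 0.0], 0.0), ([0.3, 0.2], 0.5)]
    print(select_item_by_mi(draws, bank, [0, 1, 2]))
```

A single set of $M$ draws is reused across all $|R_t| \times 2$ item-outcome pairs, so the cost per selection step is $M$ likelihood evaluations per pair instead of a fresh sampling run.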

5 Learning optimal item-selection policy

Building on the Bayesian MIRT foundation established in the previous section, we now formulate the problem of CAT as an RL task. The Bayesian framework provides two key advantages that make this integration both principled and computationally feasible. First, the identified latent factor posterior distributions offer a well-defined representation of examinee knowledge, allowing their corresponding posterior parameters to be parameterized directly as state variables. Without such identification, encoding an unknown and analytically intractable posterior would be ambiguous. Second, because RL typically requires extensive simulations to learn an optimal policy, the acceleration achieved in online item selection enables rapid simulation of testing sessions, thereby substantially improving the efficiency of policy training.

As illustrated in Section 3.3, an RL approach addresses the myopic nature of traditional CAT selection rules, enables a more flexible reward structure, and directly minimizes the number of items required for performing online adaptive testing. Specifically, we propose a novel double Q-learning algorithm (Mnih et al., 2015; van Hasselt et al., 2016) for online item selection in CAT. The algorithm trains a deep neural network offline using only the item parameters estimated from an arbitrary two-parameter MIRT model. The offline training phase is completed before any live CAT administration. After training, the network weights are fixed, and online CAT only requires sequentially updating the posterior state and applying the learned network to select the next item. During online item selection, the neural network takes the current posterior distribution $s_t := f(\boldsymbol{\theta} \mid \boldsymbol{Y}_{1:t})$ as input and outputs the next item selection for step $(t+1)$. The neural network architecture is described in Section 5.1, while the double deep Q-learning algorithm is detailed in Section 5.2.

5.1 Deep Q-learning neural network design

A potential reason that a deep RL approach has not been proposed in the CAT literature is the ambiguity arising from unidentified latent factor distributions. However, by Theorem 4.1, it is straightforward to compactly parameterize the posterior distribution at each time step $t$ using the parameters $\boldsymbol{C}_1 \in \mathbb{R}^{t \times K}$, $\boldsymbol{C}_2 \in \mathbb{R}^t$, and $\text{diag}(\boldsymbol{C}_3) \in \mathbb{R}^t$, where $\text{diag}(\boldsymbol{C}_3)$ represents the diagonal vector of $\boldsymbol{C}_3$. This structured representation of the posterior enables item-selection policy learning via a deep neural network, which takes the posterior parameters as input and outputs the item selection.

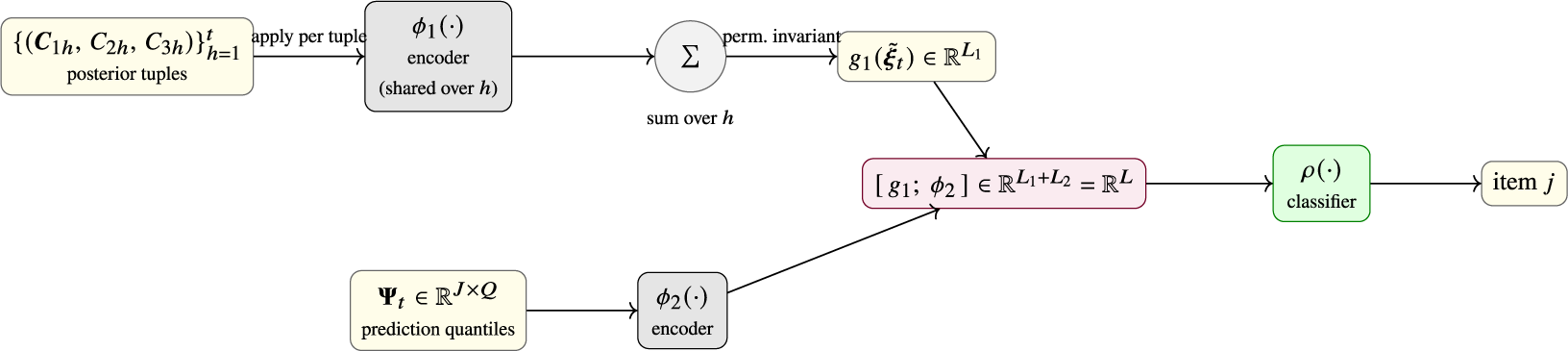

Neural networks can be regarded as flexible function approximators that learn nonlinear mappings between inputs and outputs through a composition of simple transformations (Goodfellow et al., Reference Goodfellow, Bengio and Courville2016). In our framework, the deep neural network consists of two key components: an encoder and a classifier. At each time step t, the encoder maps the collection of posterior parameters to a latent representation in

![]() $\mathbb {R}^{L}$

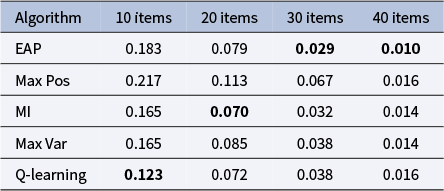

, where L is a hyperparameter and represents the dimension of the latent feature space. The classifier then outputs a J-dimensional vector of estimated Q-values and selects the next item from the item bank that is expected to yield the highest Q-value.
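To make the encoder and classifier roles concrete, here is a toy forward pass in plain Python. It is a sketch under several assumptions that the text above does not specify: the encoder is a single shared linear layer with ReLU applied to each row $(\boldsymbol{C}_1[i,:], \boldsymbol{C}_2[i], \text{diag}(\boldsymbol{C}_3)[i])$ followed by mean pooling over the $t$ rows, the classifier is a single linear layer, weights are random rather than trained, and already administered items are excluded before taking the maximum. All function names are hypothetical.

```python
import random


def init_layer(n_out, n_in, rng):
    """Random weights and zero biases for a linear layer (untrained)."""
    weights = [[rng.gauss(0, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def linear(layer, x):
    """Apply y = W x + b."""
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def relu(v):
    return [max(0.0, x) for x in v]


def encode(c1, c2, c3_diag, enc_layer):
    """Map posterior parameters (t rows) to a vector in R^L.
    Row-wise layer plus mean pooling is an assumption of this sketch."""
    rows = [relu(linear(enc_layer, list(c1_row) + [c2_i, c3_i]))
            for c1_row, c2_i, c3_i in zip(c1, c2, c3_diag)]
    latent_dim = len(enc_layer[1])
    return [sum(r[l] for r in rows) / len(rows) for l in range(latent_dim)]


def q_values(latent, clf_layer):
    """Classifier: one estimated Q-value per item in the bank (length J)."""
    return linear(clf_layer, latent)


def select_item(q, administered):
    """Pick the not-yet-administered item with the highest Q-value."""
    candidates = [j for j in range(len(q)) if j not in administered]
    return max(candidates, key=lambda j: q[j])


if __name__ == "__main__":
    rng = random.Random(1)
    K, L, J, t = 2, 4, 5, 3  # traits, latent size, bank size, items so far
    enc = init_layer(L, K + 2, rng)
    clf = init_layer(J, L, rng)
    c1 = [[rng.gauss(0, 1) for _ in range(K)] for _ in range(t)]
    c2 = [rng.gauss(0, 1) for _ in range(t)]
    c3 = [1.0] * t
    q = q_values(encode(c1, c2, c3, enc), clf)
    print(select_item(q, administered={0, 3}))
```

Pooling over rows is one simple way to obtain a fixed-size representation while the number of rows $t$ grows during the test; other choices are possible.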

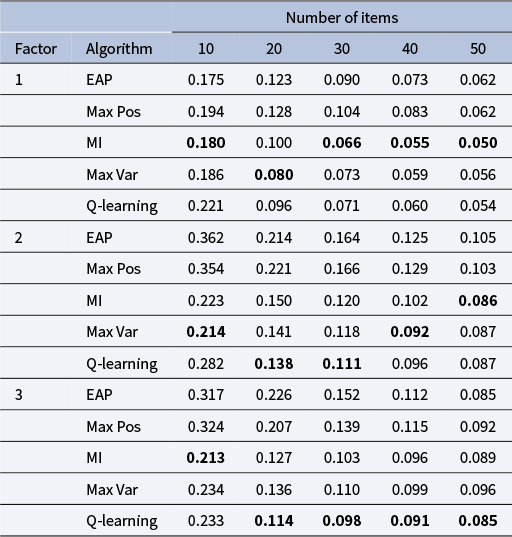

$\mathbb {R}^{L}$