1. Introduction

1.1. Research question

Experiments are the central tool for understanding human behavior. While the vast majority of experimental studies in behavioral economics have focused on human interactions, the rise of human–machine and machine–machine interactions across nearly all facets of social and economic life has spurred researchers to incorporate machines into behavioral studies (Caro et al., Reference Caro, Colliard, Katok, Ockenfels, Stier-Moses, Tucker and Wu2022). Large Language Models (LLMs) have been particularly transformative, enabling innovative experimental methodologies and communication approaches. Yet integrating LLMs as agents into experiments requires technical skills that experimenters do not necessarily possess. This paper aims to lower the technical barrier to entry by introducing a suite of tools that facilitate implementation.

For microexperiments, a ready-made tool requires no coding at all: our “builder” enables researchers to create simple LLM-to-LLM experiments by filling out designated fields. For richer designs, we provide full native Python code that experimenters can adapt to their specific needs. Finally, we offer an integration of LLM agents into experiments run on the popular experimental software oTree (Chen et al., Reference Chen, Schonger and Wickens2016). This version of the code enables human participants to interact with LLM agents.

1.2. Large language models

Large language models have revolutionized many areas, including feature extraction (Dominguez-Olmedo et al., Reference Dominguez-Olmedo, Nanda, Abebe, Bechtold, Engel, Frankenreiter, Gummadi, Hardt and Livermore2024), code writing (Mohsin et al., Reference Mohsin, Janicke, Wood, Sarker, Maglaras and Janjua2024), and text analysis (Törnberg, Reference Törnberg2023). They build on the transformer architecture (Vaswani et al., Reference Vaswani, Shazeer, Parmar, Uszkoreit, Jones, Gomez, Kaiser and Polosukhin2017), but add a generative component: they can not only analyze but also generate text (or pictures, or sound) (for a technical primer see Naveed et al., Reference Naveed, Khan, Qiu, Saqib, Anwar, Usman, Akhtar, Barnes and Mian2023). Their generative ability makes LLMs appealing for experimental research.

1.3. LLM agents

Humans can delegate actions to LLMs, rendering machine behavior a worthy topic in its own right (Rahwan et al., Reference Rahwan, Cebrian, Obradovich, Bongard, Bonnefon, Breazeal, Crandall, Christakis, Couzin, Jackson and Jennings2019). One may, for instance, investigate LLMs’ motivation (Guo, Reference Guo2023; Phelps & Russell, Reference Phelps and Russell2023), willingness to cooperate (Kasberger et al., Reference Kasberger, Martin, Normann and Werner2024), or adaptation to a changing environment (Chen et al., Reference Chen, Liu, Shan and Zhong2023). Another important topic is human behavior when individuals know they are interacting with an algorithm (Crandall et al., Reference Crandall, Tennom, Ishowo-Oloko, Abdallah, Bonnefon, Cebrian, Shariff, Goodrich and Rahwan2018; March, Reference March2021; Chugunova & Sele, Reference Chugunova and Sele2022; von Schenk et al., Reference von Schenk, Klockmann and Köbis2025). Prior research has shown that, in cooperation problems like the prisoner’s dilemma, humans respond to the perceived cooperativeness of the AI agent (Ng, Reference Ng2023), but tend to cooperate less with machines, even when they expect machines to cooperate (de Melo et al., Reference de Melo, Marsella and Gratch2016; Crandall et al., Reference Crandall, Tennom, Ishowo-Oloko, Abdallah, Bonnefon, Cebrian, Shariff, Goodrich and Rahwan2018; Karpus et al., Reference Karpus, Krüger, Verba, Bahrami and Deroy2021; Jiang et al., Reference Jiang, Wang and Hui2025). This reduced cooperation with machines, however, appears only in Western countries (Karpus et al., Reference Karpus, Shirai, Verba, Schulte, Weigert, Bahrami, Watanabe and Deroy2025).

The implications for experimental economics would be even more profound if LLMs reliably replicate human behavior (Charness et al., Reference Charness, Jabarian and List2023; Horton, Reference Horton2023; Mei et al., Reference Mei, Xie, Yuan and Jackson2024). LLMs could then be used not only to pilot, replicate, or potentially substitute human participants in standard economic experiments (Aher et al., Reference Aher, Arriaga and Kalai2023; Brookins & DeBacker, Reference Brookins and DeBacker2023; Tsuchihashi, Reference Tsuchihashi2023), but also as proxies for human choices in macroeconomic simulations (Li et al., Reference Li, Gao, Li, Li and Liao2024), political science (Argyle et al., Reference Argyle, Busby, Fulda, Gubler, Rytting and Wingate2023), and the social sciences more broadly (Manning et al., Reference Manning, Zhu and Horton2024).

1.4. Getting at variance

In the tradition of experimental economics, individual human participants are randomly exposed to alternative conditions. The manipulation precisely matches a hypothesis derived from (perhaps behavioral) theory. Experimenters usually provisionally accept the theoretical hypothesis if the average reaction of treated participants differs significantly from that of untreated participants. As LLMs capitalize on centuries of human utterances and respond using human language, it is meaningful to ask the equivalent question: how do LLMs “behave” when exposed to the same stimuli?

Yet under the hood, an LLM is a prediction engine. Given textual input, it determines the best fitting textual output. LLMs are probabilistic by design. They do not calculate a response based on first principles embedded in the program. Rather, they attempt to make sense of the input—the prompt—as best they can. Researchers can reconstruct the degree of uncertainty that the LLM faces for a given prompt by asking the same question repeatedly.

This approach, however, presupposes that the LLM allows for sufficient variance in possible responses to the prompt. Generative Pretrained Transformer (GPT) is the model family of LLMs developed by OpenAI. Users may define temperature as a free parameter. If temperature $=0$, the LLM gives the exact same response for every repetition;Footnote 1 the globally best fitting reaction. Setting the temperature higher and running multiple repetitions generates a distribution of responses. If temperature is set to $1$, in expectation the distribution of multiple responses replicates the probability of each response estimated by the model. This represents the machine’s estimate of how human participants would react to the manipulation (Chen et al., Reference Chen, Liu, Shan and Zhong2023; Guo, Reference Guo2023).
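The role of temperature can be illustrated with a toy calculation. The sketch below is not how any particular provider implements sampling; it assumes the standard softmax-with-temperature formulation, and the two answer labels and their scores are made up for illustration.

```python
import math
import random
from collections import Counter


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; temperature must be > 0."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    weights = [math.exp(x - peak) for x in scaled]
    total = sum(weights)
    return [w / total for w in weights]


def sample_responses(options, logits, temperature, repetitions, seed=0):
    """Draw repeated responses; temperature 0 is treated as 'always pick the top option'."""
    if temperature == 0:
        best = options[logits.index(max(logits))]
        return Counter({best: repetitions})
    rng = random.Random(seed)
    probs = softmax_with_temperature(logits, temperature)
    return Counter(rng.choices(options, weights=probs, k=repetitions))


# Hypothetical scores for two possible answers (not taken from any real model).
options = ["cooperate", "defect"]
logits = [1.0, 0.0]

model_probs = softmax_with_temperature(logits, 1.0)
greedy = sample_responses(options, logits, temperature=0, repetitions=1000)
sampled = sample_responses(options, logits, temperature=1.0, repetitions=1000)
```

In this toy setting, `greedy` contains a single answer repeated 1000 times, whereas the relative frequencies in `sampled` approximate `model_probs`, which is the sense in which repeated draws at temperature 1 recover the model's own probability estimate.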

1.5. Human alignment

How good a proxy are LLMs for human choices? This is an active research field (see the survey by Wang et al., Reference Wang, Zhong, Li, Mi, Zeng, Huang, Shang, Jiang and Liu2023). Most economics-related literature has focused on the models offered by OpenAI. Thus far, the evidence is mixed. Davinci-002 yields comparable results for the ultimatum game, garden path tasks and the Milgram shock experiments, but not for wisdom of the crowd tasks (Aher et al., Reference Aher, Arriaga and Kalai2023). GPT-3Footnote 2 exhibits anchoring effects similar to the ones observed in humans (Jones & Steinhardt, Reference Jones and Steinhardt2022), is subject to gender stereotypes (Acerbi & Stubbersfield, Reference Acerbi and Stubbersfield2023), and falls prey to intuition in cognitive reflection tests in about the same way as humans (Hagendorff et al., Reference Hagendorff, Fabi and Kosinski2023).

GPT-3.5 exhibits moral judgements that are similar to the ones observed in human subjects (Dillion et al., Reference Dillion, Tandon, Gu and Gray2023), and emulates well the choices that human proposers make in the ultimatum game (Kitadai et al., Reference Kitadai, Tsurusaki, Fukasawa and Nishino2023). However, in GPT-3.5 cognitive biases are less pronounced than in humans (Hagendorff et al., Reference Hagendorff, Fabi and Kosinski2023). GPT-3.5 is better than human participants at applying Bayes’ rule, and is less likely to overvalue the difference between two options presented simultaneously (Orsini, Reference Orsini2023). The model does not capture well the choices of human responders in the ultimatum game (Kitadai et al., Reference Kitadai, Tsurusaki, Fukasawa and Nishino2023). It is even less patient than human participants (Goli & Singh, Reference Goli and Singh2024). Finally, on multiple tasks GPT-3.5 exhibits a “correct answer bias,” such that it almost always gives the majority response, even if tested multiple times; the variance observed among human participants in analogous tasks is suppressed (Park et al., Reference Park, Schoenegger and Zhu2024). GPT-4 is about as good as human annotators at classifying text data from lab experiments (Celebi & Penczynski, Reference Celebi and Penczynski2024). The model exhibits risk preferences, time preferences and social preferences that are qualitatively similar to the ones observed in human subjects, but they are more extreme (Chen et al., Reference Chen, Liu, Shan and Zhong2023; Capraro et al., Reference Capraro, Di Paolo and Pizziol2023; Goli & Singh, Reference Goli and Singh2024). On multiple rationality axioms, GPT-4 outperforms human subject pools (Raman et al., Reference Raman, Lundy, Amouyal, Levine, Leyton-Brown and Tennenholtz2024).

Ultimately, even if the alignment between LLM and human choices is pronounced—at least in well-defined subdomains of experimental research—researchers may ask how much experimental economics can learn from probing LLMs. Will LLMs, at best, inform experimental economics about what was already known? We offer two counter-arguments. Even if LLMs only replicate human thoughts and actions, this differs from documenting human behavior under controlled experimental conditions. At the very least, with the help of LLMs, human cognitive or motivational tendencies that are implicit in written language could be explicated and rigorously tested. Moreover, much creativity can be traced to previously unknown combinations of known pieces of knowledge. It is conceivable that, with appropriate experimental designs, such hidden human capabilities and behavioral tendencies can be made visible. Hence arguably, while LLMs critically depend on the human legacy, the human legacy does not limit them to a degree that makes investigating their behavior trivial and uninteresting. A promising proof of concept would be experimental designs that have never been tested on human subjects. These designs could first be run with LLMs (with the results temporarily kept confidential). In the next step, the otherwise identical design could be run with human participants. If results are similar (and statistically indistinguishable), this would provide strong additional evidence.Footnote 3

Large language models might thus become a powerful resource for experimental economics, but much more data is needed to assess the actual predictive value of these models. The next step in this endeavor has recently begun: systematically manipulating the degrees of freedom that large language models offer, such as the degree of variance in outcomes (temperature, see Kitadai et al. (Reference Kitadai, Lugo, Tsurusaki, Fukasawa and Nishino2024) on the ultimatum game), the minimum cumulative posterior probability for a response to be considered (top_p), the size of the most likely set of tokens from which the response is taken (top_k), and the many different ways of prompting the model (for an overview see Chang et al. (Reference Chang, Xu, Wang, Luo, Xiao and Zhu2024)).
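To make the two truncation parameters concrete, the following sketch applies top_k and top_p to a toy distribution over possible next tokens. It follows the verbal definitions given above; the function names and the probabilities are illustrative assumptions, and actual providers may differ in details such as tie handling.

```python
def top_k_filter(probs, k):
    """Keep the k most likely tokens and renormalize."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept = dict(ranked[:k])
    total = sum(kept.values())
    return {token: p / total for token, p in kept.items()}


def top_p_filter(probs, p):
    """Keep the smallest set of most likely tokens whose cumulative probability reaches p."""
    kept = {}
    cumulative = 0.0
    for token, prob in sorted(probs.items(), key=lambda item: item[1], reverse=True):
        kept[token] = prob
        cumulative += prob
        if cumulative >= p:
            break
    total = sum(kept.values())
    return {token: q / total for token, q in kept.items()}


# Hypothetical next-token distribution (illustrative numbers only).
probs = {"cooperate": 0.5, "defect": 0.3, "wait": 0.15, "pass": 0.05}

after_top_k = top_k_filter(probs, k=2)    # only the two most likely tokens survive
after_top_p = top_p_filter(probs, p=0.9)  # tokens are added until 90% of the mass is covered
```

Both filters narrow the set of candidate responses before sampling, so they reduce variance in a different way than lowering the temperature does.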

1.6. Our suite of tools for LLM experiments

However, a significant barrier to rapid progress in this burgeoning field—and to equal opportunities among researchers across the world—is the absence of tools that enable behavioral scientists to easily develop experiments based on well-established norms and standards in experimental economics. To catalyze this exciting research area, we developed an open-source toolkit, “alter_ego,” that greatly facilitates experiments in which participants are emulated by LLMs. The user-friendly design of our tool facilitates swift and efficient data collection. The software is described in the next section and is freely available at github.com/mrpg/ego.

1.7. Application: a framed prisoner’s dilemma

In the final section, we employ our toolkit to examine framing effects in machine–machine and machine–human interactions in pre-registered prisoner’s dilemmas. We are not the first to have GPT play prisoner’s dilemmas (Akata et al., Reference Akata, Schulz, Coda-Forno, Oh, Bethge and Schulz2023; Bauer et al., Reference Bauer, Liebich, Hinz and Kosfeld2023; Brookins & DeBacker, Reference Brookins and DeBacker2023; Duffy et al., Reference Duffy, Hopkins and Kornienko2022; Guo, Reference Guo2023; Phelps & Russell, Reference Phelps and Russell2023), but no previous study has focused on framing and group affiliation.Footnote 4 These are particularly interesting and relevant research questions as large language models condense the power of human language. With the help of our framing manipulation, we learn in which ways and to what degree an LLM is swayed by the particular words used to represent a social conflict. Although narrow conceptions of “intelligent” or “rational” behavior assert that a game’s framing should not influence machine or human decisions (Russell, Reference Russell2019), humans are known to respond behaviorally to even subtle differences in framing (e.g., Dufwenberg et al., Reference Dufwenberg, Gächter and Hennig-Schmidt2011). Game descriptions provide contextual cues that influence perceptions of appropriate and normative behavior. Do machines, which capitalize on the richness and sophistication of human language, pick up and respond to such cues? Our data show that the answer is yes.

From a technological perspective, framing machines can be viewed as an instance of prompt engineering (Chen et al., Reference Chen, Zhang, Langrené and Zhu2025; Gu et al., Reference Gu, Han, Chen, Beirami, He, Zhang, Liao, Qin, Tresp and Torr2023; White et al., Reference White, Fu, Hays, Sandborn, Olea, Gilbert, Elnashar, Spencer-Smith and Schmidt2023). In this sense, our application provides a link between the computer science literature on prompt engineering and the experimental economics literature on how framing affects behavior.

Second, a critical question for understanding human–machine interaction is whether and when within-group machine–machine and human–human interactions elicit different responses than between-group machine–human interactions (von Schenk et al., Reference von Schenk, Klockmann and Köbis2025; Verma et al., Reference Verma, Bhambri and Kambhampati2023), a research question that our tool can help resolve. Indeed, our data suggest that, while machines and humans may exhibit similar behavioral patterns when interacting only within their respective groups, behavior changes when opponents do not share one’s group affiliation.

1.8. Organization of paper

The next section dives deeper into the technical background and introduces our suite of tools that make it easy for experimenters to tap into the power of LLMs (Section 2). Section 3 presents the design of the framed prisoner’s dilemma, both between two LLM agents and between an LLM agent and a human participant. Section 4 concludes with a discussion.

2. A toolkit for machine–machine and machine–human experiments

Our toolkit is designed to make it easy for experimenters to integrate LLM agents into their experiments. The kit consists of the following tools:

• As a starter kit, we offer an easy-to-use shorthand version of the tool, allowing teachers and researchers to quickly run experiments with LLMs.

• For a broad class of experiments, experimenters can use our builder, a web application that generates most of the code for them.

• For experimenters who want greater flexibility, we provide a full-fledged Python library, which they can adapt to their specific design needs.

• This more complex version of the tool is also usable if experimenters want to have LLM agents interact with human participants. For such designs, our tool is integrated with the popular experimental software oTree.

We introduce these tools in turn, but first explain how LLMs are accessed via an application programming interface (API).

2.1. The necessity of using an Application Programming Interface

LLMs usually offer an application programming interface (API). Using the API, the experimenter may fully define the process, store all prompts, repeat the same prompt as often as needed for the research question, and store the resulting data in a format suitable for data analysis (using the preferred statistical package).
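The workflow described here (repeat a prompt, keep every prompt and response, export in an analysis-friendly format) can be sketched in a few lines of plain Python. This is not the alter_ego API: `query_model` is a hypothetical stand-in that returns canned text, and in a real study it would be replaced by a call to the provider's API.

```python
import csv
import io
import random


def query_model(prompt, temperature, rng):
    """Stand-in for a real API call (hypothetical); returns a canned reply."""
    return rng.choice(["I choose A.", "I choose B."])


def run_repetitions(prompt, repetitions, temperature=1.0, seed=0):
    """Send the same prompt several times and keep every prompt/response pair."""
    rng = random.Random(seed)
    rows = []
    for repetition in range(1, repetitions + 1):
        response = query_model(prompt, temperature, rng)
        rows.append({
            "repetition": repetition,
            "temperature": temperature,
            "prompt": prompt,
            "response": response,
        })
    return rows


def to_csv(rows):
    """Serialize the records as CSV text that a statistics package can read."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = run_repetitions("Do you choose A or B?", repetitions=5)
csv_text = to_csv(rows)
```

Storing the prompt alongside each response keeps the full protocol of the experiment in the data set, which matters once prompts are generated dynamically.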

While the code needed for running such an experiment is not excessively complex (and we offer such code in one version of our tool), the need to write Python code, assign treatments, dynamically generate prompts, retrieve and filter responses, and perhaps make them accessible in prompts, is a barrier to using LLMs as experimental participants. This motivates the design of our tool. Our package makes these steps as easy as possible.

To use any part of our toolkit, an experimenter needs to install Python 3.8 or later (www.python.org). Most LLM providers additionally require that the user register and obtain an API key (authorizing the user to access the API). Our software comes in the form of the Python package alter_ego, which can be installed into Python from PyPI: alter_ego_llm. Complete documentation is available at github.com/mrpg/ego. To lower the barriers of access as much as possible, we have posted a series of videos:

• Microexperiments: https://youtu.be/GPc0a-Fg1bY

• Builder: https://youtu.be/tV5xACU-abw

• Using Python directly: https://youtu.be/WHW0gkT-oHE

• Integration with oTree: https://youtu.be/ouxRFdKOGEw

2.2. Microexperiments

The tool for running microexperiments on an LLM is the most accessible. Users can vary parameters as in a factorial design. If they are interested in variance (e.g., as a proxy for confidence), they can ask the same question multiple times. To illustrate this design option, we ask GPT-4 about the estimated approval of two US presidents, at the beginning and at the end of their presidencies (Table 1). This feature of alter_ego may also be relevant for teaching purposes, an application of LLMs that has been highlighted and increasingly deployed (Cowen & Tabarrok, Reference Cowen and Tabarrok2023). Classroom applications could involve the interactive generation of data that is subsequently analyzed.

Results of the code in Figure 1 (temperature $=1$, 5 repetitions)

As Figure 1 shows, with alter_ego the amount of code needed for this purpose is minimal. Users must import three aspects of our package (lines 1-3). The function in lines 5-6 specifies that GPT-4 shall be used and that the model is allowed a high degree of variability (temperature $=1$). Lines 8-9 are the functional equivalent of experimental instructions. The two terms enclosed in double braces also define the “treatments”: which president and which time? This yields a 2$\times$2 factorial design. Note that this allows for dynamic prompts, a theme that will recur later. The concluding block of code defines the actual experiment: (a) all possible combinations of the parameters are to be tested (factorial), (b) which persons and which time periods are of interest. The last line calls the agent function, specifies that only numbers in the output shall be reported, and defines the number of repetitions.Footnote 5 Table 1 reports the results (from 5 independent draws).

Complete code for a machine microexperiment
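The package's own code for this microexperiment is not reproduced here. Independently of alter_ego, the logic of a factorial design with a double-brace prompt template can be sketched in plain Python as follows. The treatment labels, the prompt wording, and the helper names `fill` and `factorial` are illustrative assumptions and not part of the package.

```python
import itertools
import re

# Placeholder treatment levels; the actual presidents are not named in the text.
factors = {
    "president": ["President A", "President B"],
    "time": ["beginning", "end"],
}

# Hypothetical prompt wording with two double-brace placeholders.
template = (
    "Estimate the approval rating of {{president}} at the {{time}} "
    "of the presidency. Answer with a number."
)


def fill(template, values):
    """Replace each {{name}} placeholder with the corresponding treatment value."""
    return re.sub(r"\{\{\s*(\w+)\s*\}\}", lambda m: values[m.group(1)], template)


def factorial(factors):
    """Yield one dict per cell of the full factorial design."""
    names = list(factors)
    for combo in itertools.product(*(factors[name] for name in names)):
        yield dict(zip(names, combo))


repetitions = 5
cells = list(factorial(factors))
# One prompt per cell and repetition; each would be sent to the model separately.
prompts = [fill(template, cell) for cell in cells for _ in range(repetitions)]
```

With two factors of two levels each, `cells` has four entries, and five repetitions per cell give twenty prompts in total.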

2.3. Designing LLM experiments through a web application

Every researcher who has used oTree to program an experiment knows that coding from scratch is challenging. Even experienced oTree users cannot directly implement experiments with LLM participants. While alter_ego offers an all-Python solution for advanced purposes (explained in later sections), we provide an easier alternative for experiments exclusively involving LLM participants: our builder. The builder is available at https://ego.mg.sb/builder/.

The builder requires minimal Python knowledge compared to traditional microexperiment code (see Figure 1). To run an experiment, a single terminal command suffices: ego run built. The experimental design is imported from built.json.Footnote 6 The experimenter does not need to write this JSON code manually. Using the “Export or import scenario” functionality, experimenters can export their design as JSON or import existing configurations to modify them. This bidirectional approach facilitates collaborative research and iterative refinement.

When the builder’s capabilities suffice, designing an experiment consists of filling out fields on the web app. The following elements can be configured:

• Participants (Threads)

– Number of participants

– LLM model for each participant

• Treatments

– Random assignment of Threads to treatments

• Rounds

– Currently supports partner design

• Variables across treatments

– Treatment-conditional instructions

– Choice variables

– Framing variations

– Payoff structures

• Instructions (prompts)

– System prompts (initialize the conversation)

– User prompts (per round)

– All prompts can be conditional on treatment, role, and other variables

• Response processing (filters)

– Extract relevant data from LLM responses for export

Two features make the builder particularly convenient. First, prompts support dynamic content through Jinja2 templating.Footnote 7 This allows prompts to reference variables, treatments, and other participants’ choices. For example, the prompt template Welcome, your name is {{ name }}, and you are playing with {{ other.name }} automatically personalizes for each participant. For Alice playing with Bob, this becomes “Welcome, your name is Alice, and you are playing with Bob.”

Second, filters process LLM responses into analyzable data. Instead of exporting verbose raw responses, experimenters can extract numbers, match predefined strings (like “ACCEPT” or “REJECT”), or parse structured formats. This ensures data is immediately suitable for statistical analysis.
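What such filters do can be sketched with regular expressions from the Python standard library. The functions below are illustrative assumptions, not the filters shipped with alter_ego, and the replies are made up.

```python
import re


def extract_number(response):
    """Return the first number found in the response, or None if there is none."""
    match = re.search(r"-?\d+(?:\.\d+)?", response)
    return float(match.group()) if match else None


def match_choice(response, allowed=("ACCEPT", "REJECT")):
    """Return the single allowed keyword that appears in the response, else None."""
    found = [
        word for word in allowed
        if re.search(rf"\b{word}\b", response, re.IGNORECASE)
    ]
    return found[0] if len(found) == 1 else None


# Made-up model replies for illustration.
replies = [
    "I would estimate roughly 62 percent.",
    "After some thought, I ACCEPT the offer.",
    "I could accept or reject; it depends.",
]

numbers = [extract_number(r) for r in replies]
choices = [match_choice(r) for r in replies]
```

Returning `None` for ambiguous replies, such as the third one, is a deliberate design choice in this sketch: an unclear answer is recorded as missing rather than silently coded as one of the options.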

Experiments built with the builder include automatic CSV export. The command ego data built [experiment-id] > data.csv exports all experimental data, including choices, treatment assignments, and response metadata. Both the builder interface and our tutorial videos provide additional details.

2.4. Coding with Python

If experimenters want to design experiments requiring even more flexibility than offered by our builder, they can directly code the experiment in Python while still exploiting the capabilities offered by alter_ego. In the companion video to this section (https://youtu.be/WHW0gkT-oHE), we explain step by step how the example experiment used to illustrate the capability of the builder can be coded manually.Footnote 8 Experimenters with more extended Python experience can also use this tool to carry the output forward to a Python package for data analysis such as Pandas (pandas.pydata.org) or Polars (www.pola.rs).

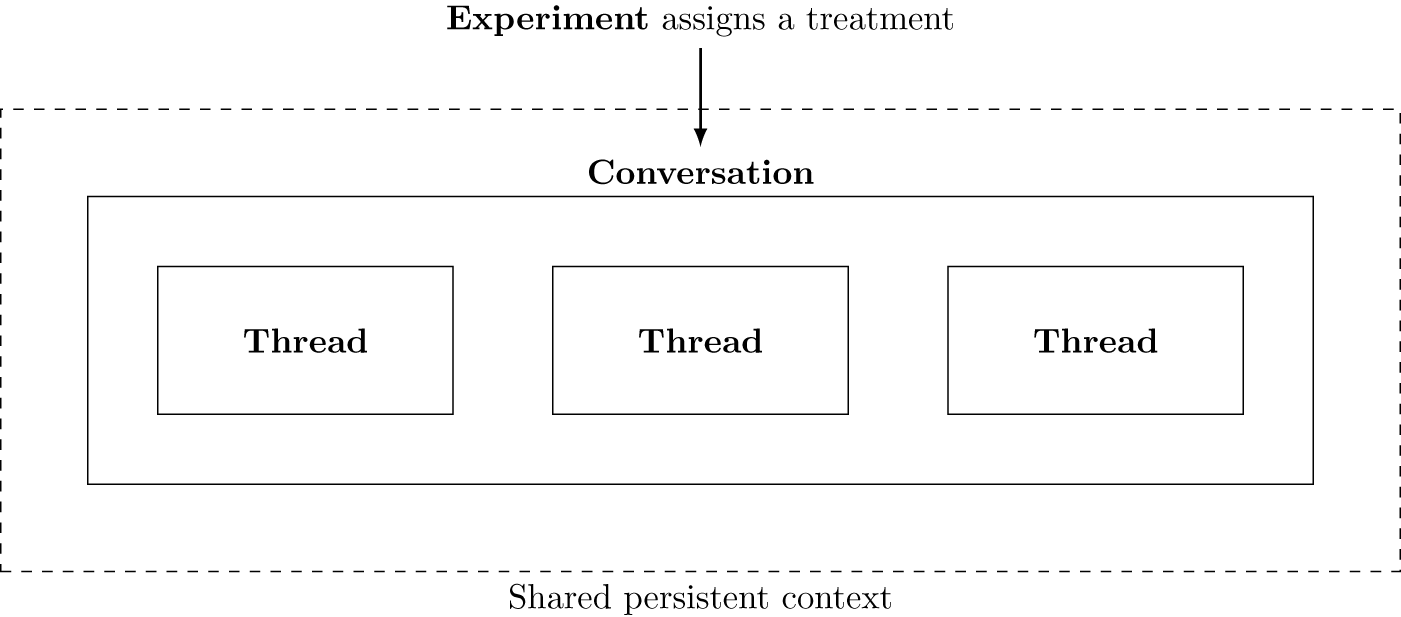

To better understand the architecture of the tool, it is useful to consider Figure 2: Each participant is represented by a Thread. One interaction of multiple participants constitutes a Conversation. The tool makes it possible to assemble multiple instances of interaction in an Experiment, which assigns a treatment to the Conversation.

Architecture of the tool—these elements represent Python classes
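The relationship between the three elements can be mimicked with a few toy data classes. The classes below are deliberately named differently from the package's own classes, because their fields and methods are assumptions made for illustration and do not reproduce the alter_ego interface.

```python
import random
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyThread:
    """One participant: a name plus the messages exchanged so far."""
    name: str
    history: List[str] = field(default_factory=list)


@dataclass
class ToyConversation:
    """One interaction among several participants under a single treatment."""
    threads: List[ToyThread]
    treatment: str = ""


@dataclass
class ToyExperiment:
    """A collection of conversations, each randomly assigned a treatment."""
    treatments: List[str]
    conversations: List[ToyConversation] = field(default_factory=list)

    def add(self, conversation, rng):
        """Assign a random treatment to the conversation and register it."""
        conversation.treatment = rng.choice(self.treatments)
        self.conversations.append(conversation)


rng = random.Random(0)
experiment = ToyExperiment(treatments=["neutral", "joint enemy", "competition"])
for _ in range(3):
    pair = ToyConversation(threads=[ToyThread("first mover"), ToyThread("second mover")])
    experiment.add(pair, rng)
```

The nesting mirrors the description above: treatments are attached at the level of the conversation, so all participants in one interaction share the same condition.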

2.5. Human–machine interaction: integrating oTree

An important frontier of experimental research is the interaction between humans and machines, not least because machines impact ever more parts of social life. alter_ego makes such experiments possible by introducing LLM functionality into the oTree framework (Chen et al., Reference Chen, Schonger and Wickens2016). As this experimental software is widely used, many users will already be at least somewhat familiar with oTree.

Users who are fluent with oTree may find it appealing if the implementation of data generation happens within the oTree environment, even if using that environment would not be strictly necessary. For such users, we also provide the code for a simple oTree app that has a human chat with GPT (https://github.com/mrpg/ego/tree/master/otree/ego_chat). In computer science parlance, we provide a “façade” (e.g., Gamma et al., Reference Gamma, Helm, Johnson and Vlissides1994, sec 4.5) from oTree to alter_ego.

3. Putting the tool to good use: Do machines react to framing?

In this section, we report on an experiment that puts our tool to good use. We begin with the research question. We test two versions of the game. With the first experimental design, we investigate whether LLMs are subject to framing effects when interacting with another instance of the LLM. In the second design, we let one LLM agent interact with one human participant.

3.1. Research question: Are large language models subject to framing?

A robust experimental literature demonstrates that human participants are sensitive to framing: results systematically differ depending on how the same incentive structure is presented (Kühberger, Reference Kühberger1998; Levin et al., Reference Levin, Schneider and Gaeth1998; Dreber et al., Reference Dreber, Ellingsen, Johannesson and Rand2013). The power of LLMs originates in the richness of human language. It is therefore conceivable that LLM choices also depend on how a choice problem is presented to them. On the other hand, a main goal in the continuous improvement of large language models is making them more “accurate” (see only OpenAI, 2023, 3-6), including the hope that they might outperform human competitors.Footnote 9 A side effect of these efforts at improving language models might be that they are less sensitive to framing than humans.

Arguably, the effect of framing results from contextual cues that trigger descriptive and normative beliefs (and beliefs about beliefs) about behavior (Dufwenberg et al., Reference Dufwenberg, Gächter and Hennig-Schmidt2011). Beliefs matter in prisoner’s dilemma games because many human players choose to cooperate if they believe that the opponent cooperates, and because, in sequential or repeated game contexts, selfish players may have an incentive to trigger positive reciprocity from other players if they are believed to be conditionally cooperative (Ockenfels, Reference Ockenfels1999; Fischbacher et al., Reference Fischbacher, Gächter and Fehr2001). If such beliefs are affected by framing, we expect to see different cooperation rates across our conditions with machine participation. To test machines’ responsiveness to framing, we adapt an experiment that one of the authors has run with human participants. In that sequential prisoner’s dilemma, cooperation was significantly reduced when the game was framed as one of competition and, non-significantly, when the game was framed as facing a joint enemy (Engel & Rand, Reference Engel and Rand2014).

Specifically, we implemented a 2$\times$2 sequential prisoner’s dilemma (Bolle & Ockenfels, Reference Bolle and Ockenfels1990; Clark & Sefton, Reference Clark and Sefton2001; Ahn et al., Reference Ahn, Lee, Ruttan and Walker2007) with binary action space and payoffs as in Table 2:

Payoffs

Following Engel and Rand (Reference Engel and Rand2014), the game was presented sequentially, and repeated 10 times, which was commonly known. We manipulated the frame: neutral (“In this experiment, you are together with another participant …”), joint enemy (“you and another participant … have a joint enemy”), and competition (“you are competing against another participant”; see Section B.3 in the Appendix for full instructions).

Our null hypothesis, $H_0$, is based on subgame perfect equilibrium predictions for rational and selfish players and predicts no cooperation across all conditions. However, as outlined above, machines and humans are known to cooperate, and humans are known to respond to framing. Consequently, with our alternative hypothesis $H_1$, we predict a positive degree of cooperation that differs depending on the framing of the social conflict.Footnote 10
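The reasoning behind $H_0$ can be traced by backward induction in a single round of the sequential game. The payoffs in the sketch below are hypothetical and are not the values in Table 2; they only respect the usual prisoner's dilemma ordering (temptation > mutual cooperation > mutual defection > sucker's payoff).

```python
# Hypothetical payoffs (first mover, second mover); NOT the values in Table 2.
# They only satisfy the usual prisoner's dilemma ordering 5 > 3 > 1 > 0.
payoffs = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}
actions = ("C", "D")


def best_reply(first_action):
    """Second mover's payoff-maximizing action after observing the first move."""
    return max(actions, key=lambda second: payoffs[(first_action, second)][1])


def subgame_perfect_path():
    """Solve the stage game by backward induction for selfish players."""
    replies = {first: best_reply(first) for first in actions}
    first = max(actions, key=lambda a: payoffs[(a, replies[a])][0])
    return first, replies


first_move, second_mover_plan = subgame_perfect_path()
```

Under these assumed payoffs, a selfish second mover defects after either first move, so a selfish first mover defects as well. With a commonly known, finite number of rounds, the same argument applies in the last round and then unravels backward through all earlier rounds.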

3.2. Machines interacting with machines

For the experiments that test LLMs, in a second dimension, we manipulated the LLM platform, using either GPT-3.5 (turbo) or GPT-4. Framing effects are influenced by factors that can vary between different “social” groups that do or do not share the same understanding of contextual cues, or in their degree of rationality or selfishness. Yet as both GPT-3.5 and GPT-4 capitalize on the same training data,Footnote 11 we do not expect differences when either two instances of GPT-3.5 or two instances of GPT-4 interact with each other. This is why our alternative hypothesis, $H_2$, is that, while cooperation is possible, the impact of framing does not systematically differ across the two different versions of GPT, our equivalent of a population difference.

We had 200 groups of 2 instances of GPT, respectively, interacting over 10 periods.Footnote 12 For the reasons explained above, we set temperature to 1, to generate a distribution of responses.

Before we report results, we elaborate on the technical implementation, which is the main reason for writing this paper. We have chosen the design of the (machine–machine version of the) experiment such that it can be implemented with our builder. In Section B.2 of the Appendix, we provide a URL to the resulting code. Interested readers can run the experiment by putting the file built.json into their current working directory and running the experiment from the terminal using ego run built. The program will randomly assign one of the three frames. The data will be stored in folder out under the computer-generated ID of the current run. Experimenters with deeper knowledge of Python may be interested in exploring the alternative version of the machine-only experiment that we programmed manually, which is available on GitHub.Footnote 13

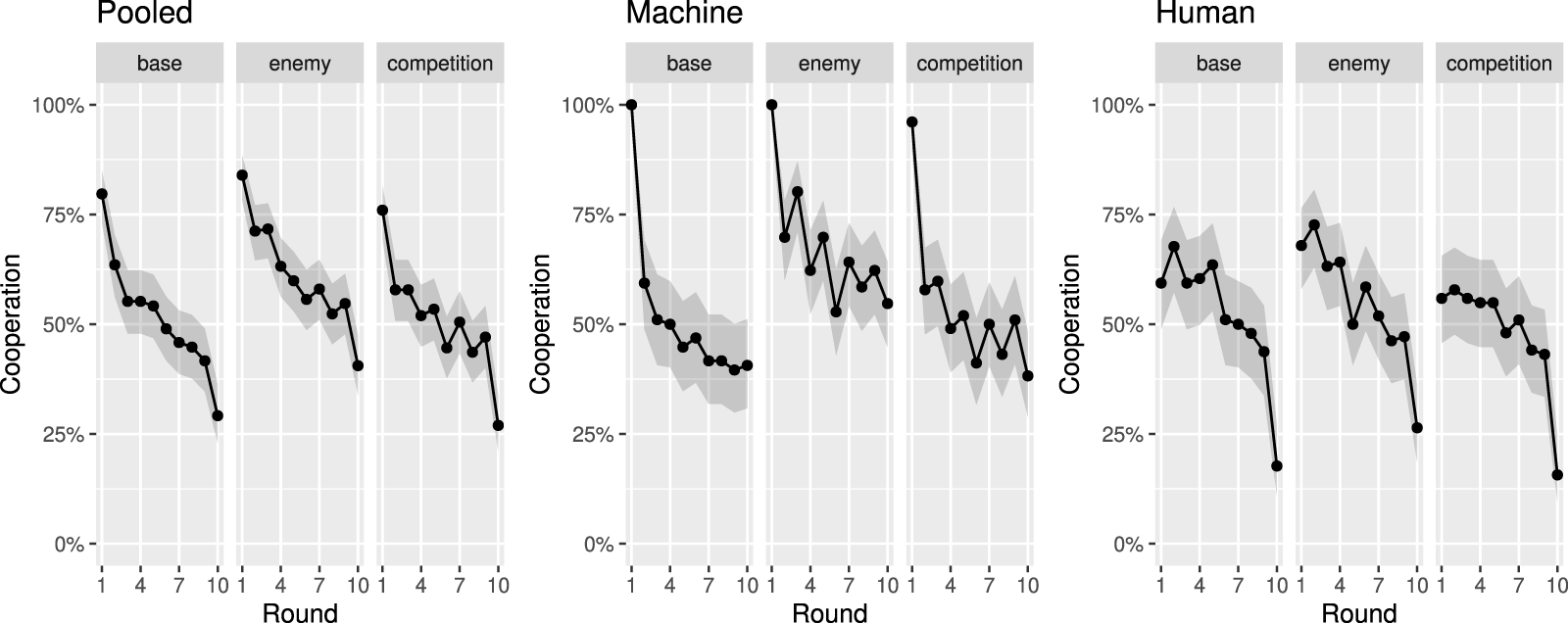

Figure 3 shows significant machine cooperation, which rejects $H_0$, as expected. On neither platform (GPT-3.5 or GPT-4) and in no frame is the cooperation rate of GPT interacting with another instance of GPT close to 0. In the Appendix (Table 4), we report the upper and lower limits for cooperation rates per condition and round that we cannot exclude at the 5% level. The lower limit is never below 30%.
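Limits of this kind can be computed in several ways. As one possibility, the sketch below uses the Wilson score interval for a binomial proportion; this is an assumption made for illustration and not necessarily the method behind Table 4. The counts in the example are hypothetical, and the sketch treats all decisions as independent, ignoring that choices within a group are correlated.

```python
import math

def wilson_interval(successes, trials, z=1.96):
    """Two-sided Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = successes / trials
    denom = 1 + z**2 / trials
    centre = (p_hat + z**2 / (2 * trials)) / denom
    spread = math.sqrt(p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2))
    half_width = z * spread / denom
    return centre - half_width, centre + half_width

# Hypothetical example: 280 cooperative choices out of 400 decisions.
lower, upper = wilson_interval(280, 400)
```

With these hypothetical counts the interval runs from roughly 65% to 74%, so a cooperation rate of 0 would be excluded by a wide margin.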


Figure 3. Cooperation conditional on platform and round

Figure 3 shows that framing matters: when the game is framed as either jointly protecting against an enemy or, in particular, as competing with each other, GPT cooperates substantially less with another machine compared to the (unframed) base treatment.Footnote 14 Table 3 provides summary statistics by platform and frame. Against our expectation, machine platform matters: while the ranking of cooperation across frames is stable, the framing effects are more pronounced when implementing the experiment on GPT-4.Footnote 15 Providing a conclusive explanation for this unexpected result is beyond the scope of this paper. But in retrospect, it seems to resonate with changes OpenAI advertised when launching GPT-4 (OpenAI, 2023): the newer model is meant to be more accurate than GPT-3.5 (p. 3), less subject to hindsight bias (p. 4), closer to prevalent results from microeconomics (p. 5), and more likely to suppress sensitive or even disallowed prompts (p. 14). The stronger response to our frames could therefore result from making text output more “normative.”Footnote 16

Table 3. Mean percentage of cooperative choices per platform and frame, aggregated over all rounds

3.3. Machines interacting with human participants

In the human–machine version of our experiment, machines are first-movers and humans are second-movers. For the LLM agents, we used GPT-4. Hence this experiment has only three conditions, one for each frame: base, enemy, and competition. We had 96 groups without a frame (base), 106 groups with the enemy frame, and 102 groups with the competition frame. This experiment was conducted in English at the Cologne Laboratory for Economic Research in August 2023. Humans were incentivized while machines were not. We discuss machine incentives in our concluding section. Lab participants were invited to the experiment knowing that it would take approximately 15 minutes and that they could start at any time between 10 AM and 2 PM on a day of the experiment that they chose freely. All participants were confirmed students at universities in Cologne; their fields of study varied widely. The modal field of study was business administration, and the modal year of birth was 1999. All rounds were paid. The final payment ranged from €2.20 to €6.28 (average: €4.09). At the time of the experiment, the local minimum wage was €12 per hour, or €3 per 15 minutes.

As explained in Section 2, to test human–machine interaction, one must integrate Python with oTree.Footnote 17 The experiment was conducted entirely online and remotely. All code for the experiment is available at our GitHub repository; see the Appendix for all instructions. Note that subjects received information about their history of play with their assigned LLM.Footnote 18

As LLMs capitalize on human language and experience, with our hypothesis $H_3$, we do not expect differences in how machine and human cooperation respond to framing.


Figure 4 reports cooperation rates. Comparing Figure 3 with the left panel of Figure 4 immediately shows that adding human participants diminishes cooperation, which is strongly confirmed statistically.Footnote 19 As the middle panel of Figure 4 shows, when interacting with humans, machine cooperation rates of (machine) first movers are still relatively high in the first round, yet they react strongly when the human counterpart defects, as many do (right panel of Figure 4): In the baseline, 40.6% of human participants defect in the first round; in the enemy condition, 32.1% do; and in the competition condition, 44.1% do. Machines reciprocate defection and, as a result, human defectors end up with much smaller payoffs than they could have earned by cooperating: the payoff of human participants who cooperated (defected) in the first round was 169 (102) in baseline, 176 (160) in enemy, and 174 (118) in competition. All differences are significant.Footnote 20
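The size of the payoff loss can be read directly off the numbers above. The following short calculation, offered only as a worked illustration, takes the reported payoffs by first-round choice and computes the absolute gap and the gap relative to the payoff of cooperators; the variable names are ours.

```python
# Payoffs by first-round choice of the human participant, as reported in the text.
payoffs = {
    "base": {"cooperated": 169, "defected": 102},
    "enemy": {"cooperated": 176, "defected": 160},
    "competition": {"cooperated": 174, "defected": 118},
}

# Absolute gap and gap as a percentage of the cooperators' payoff.
gaps = {}
for frame, p in payoffs.items():
    gap = p["cooperated"] - p["defected"]
    gaps[frame] = (gap, round(100 * gap / p["cooperated"], 1))
```

First-round defectors thus earn 67 less in the base condition (about 39.6%), 16 less in the enemy condition (about 9.1%), and 56 less in the competition condition (about 32.2%).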

Figure 4. Cooperation conditional on platform, round, and identity of the player (machine vs. human)

The impact of framing is much less pronounced in the mixed group, and now the enemy frame triggers the highest cooperation rate. This framing effect is driven by machine choices (Table 4 in the Appendix). Arguably, the human–machine interaction itself is a powerful frame that dominates other, more subtle details of the game’s presentation. A possible explanation is that, in human–machine interactions, there is a stronger mismatch in commonly shared norms, leading to greater uncertainty about what can be expected from each other, mitigating the framing effects that we see within groups of either only machines or only humans.

4. Discussion

LLMs have the potential to profoundly change, substantially enrich, and radically facilitate experimental economics research. Yet to fully leverage this potential, researchers need a toolkit that is easy and free to use, based on well-established norms and standards in experimental economics, that can be tailored to almost all specific tasks of interactive decision-making among machines, and that can be used for experiments in which human participants interact with machines. Providing such a toolkit is the main contribution of this paper. Our tool allows researchers to efficiently sample LLMs. Two very accessible and intuitive versions of our tool empower experimenters with little Python experience to run a wide variety of machine–machine experiments (Sections 2.2 and 2.3). For experimenters with greater Python versatility, we offer an even more flexible version of the tool (Section 2.4). Finally, we integrate our tool with oTree (Section 2.5), which is particularly appealing for experimenters who want to test human–machine interaction.

Our illustrative experiment provides important insights into machine behavior and human–machine interaction. We find strong framing effects in machine-only treatments that are partly similar to those expected from previous human-only treatments, yet they tend to be even more pronounced among machines. Perhaps surprisingly, framing effects are less pronounced and qualitatively different when machines interact with human participants. We find that machines respond very sensitively to human defection and that many humans fail to anticipate that machines punish exploitative strategies. This suggests that there is a mismatch in what these different classes of actors expect from each other, making coordination on a shared norm more difficult.

Understanding that framing matters differently in machine–human versus machine–machine and human–human interaction is crucial for interpreting experiments involving human–machine interactions. It seems promising to integrate different numbers of machines, from zero to $n$, as occurs in the field, to study such effects. We provide the toolkit for this endeavor.


One important line of future research is machine incentives. In experiments with human subjects, preferences are typically induced through monetary incentives (Smith, Reference Smith1976). However, machines, including GPT, operate based on an objective function defined during training and have no personal desire for money, prestige, or other human rewards, which makes it difficult to incentivize them financially in any given experiment. Johnson and Obradovich (Reference Johnson and Obradovich2022) attempted to address this by compensating the parent company OpenAI according to machine behavior. However, this cannot affect machines the way money affects humans, and it remains untested whether this approach actually influences machine preferences and, if so, how.

An alternative would be to explicitly instruct machines to maximize their game payoff. If such preference induction were successful, we would perhaps learn something about the machine’s computational capability and its beliefs about how humans respond to machine behavior, but we could not learn anything about how the machine would naturally behave across game framings, which is our research question. Similarly, while we could in principle also guide machine behavior through fine-tuning or simulated environments, we were interested in GPT’s “genuine” choices based on its knowledge at the onset of the experiment, not in what we can train it to do. Of course, it is well known that any version of GPT is heavily fine-tuned before release using a technique called “Reinforcement Learning from Human Feedback” (e.g., OpenAI, 2023). That said, our tool could be used to study the effectiveness and impact of various approaches to incentivize machines. For instance, do machines exhibit more or less care when a human, charity, political party, or the parent company is compensated on the machine’s behalf? This research will be essential for understanding the role of incentives for machine behavior in various applications requiring interactions with humans or other machines.

While large language models are a promising new tool in the kit of experimental economics, professional standards for their use must still be developed. As our framing application shows, results may vary substantially depending on the choice of language model (GPT-3.5 vs. GPT-4 in our application). Very likely, results are sensitive not only to framing (which we tested) but also to other, seemingly more minor, differences in prompts. Other model parameters (like temperature) are also likely to matter. Such sensitivity calls for preregistrations that include all model parameters. One must also worry about the fact that most large language models (including the currently most powerful GPT model) are proprietary. There is no guarantee that older models will remain available for an extended period. Even if most of the training data is frozen (as in the two large language models that we tested), these models are meant to learn over time from the queries of their users.Footnote 21 For all these reasons, results generated with the help of (these)Footnote 22 large language models are, strictly speaking, not replicable.
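One way to make model parameters part of a preregistration is to store them in a small machine-readable record. The sketch below is a suggestion under stated assumptions, not a feature of our tool: the class, its field names, the model identifier string, and the placeholder prompt are all hypothetical. Hashing the prompt lets readers check later that the exact wording was not changed.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class ModelSpec:
    """Settings worth fixing in a preregistration (field names are hypothetical)."""
    model: str
    temperature: float
    prompt_sha256: str
    groups: int
    periods: int

def fingerprint(prompt: str) -> str:
    """Hash the exact prompt text so later wording changes can be detected."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()

prompt_text = "PLACEHOLDER PROMPT"  # stand-in, not the prompt used in the study
spec = ModelSpec(
    model="gpt-4",  # hypothetical identifier string
    temperature=1.0,
    prompt_sha256=fingerprint(prompt_text),
    groups=200,
    periods=10,
)
record = json.dumps(asdict(spec), indent=2, sort_keys=True)
```

Such a record documents the intended configuration, but it cannot guarantee replicability when the underlying proprietary model is changed or withdrawn.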

Our framing results, in conjunction with other studies, indicate that in certain domains, machine behavior might help experimenters build hypotheses about human behavior. Should this prove robustly true in some domains, easily implementable pilot experiments with machines might offer a cost-effective and efficient method to guide and inform subsequent human subject research. Leveraging the potential predictive power of machines could help guide the choice and design of human subject experiments, ultimately leading to more robust and generalizable findings. Our toolkit facilitates the implementation of even large-scale endeavors involving thousands of player roles across dozens of experimental settings that differ substantially in complexity of interaction. Because the toolkit is easily accessible and available to everyone, it paves the way for new discoveries that deepen our understanding of both human and machine behavior.

Data availability statement

The replication material for the study is available at https://doi.org/10.7910/DVN/SPJFJ5 (Harvard Dataverse).

Our code is available at github.com/mrpg/ego.

Acknowledgements

We thank Heinrich Nax, Christoph Schottmüller, Tobias Werner, our beta-testers, referees, and audiences in Cologne, Erfurt and Pisa for helpful comments, and Simon Weidtmann for excellent research assistance. All remaining errors are our own. Funding by the German Research Foundation (DFG) under Germany’s Excellence Strategy (EXC 2126/2 – 390838866) is gratefully acknowledged.

Competing interests

The authors have no competing interests to declare that are relevant to the content of this article.

Ethical standards

Ethics approval was obtained by the Ethics Council of the Max Planck Society within the framework of the General Approval for Procedures, Experiments and Projects Following the Protocol that is Standard in Experimental Economics.