Creativity, or the capacity to generate novel and useful ideas, solutions, or products (Runco & Jaeger, 2012; Stein, 1953), is a fundamental psychoeducational concept. Much effort has been dedicated to its assessment to identify and foster creative talent (Kaufman et al., 2008; Runco & Acar, 2024b). Divergent thinking (DT) tasks have been the center of these efforts because they resemble key aspects of challenges that require creative thinking: they are ill-defined, open-ended, and devoid of a single correct solution. Although creativity is clearly broader than DT (Cropley, 2006), these tasks have been popular measures of creativity because they are more objective than self-reports and, on average, more strongly associated with measures of creative achievement than intelligence tests are (Gerwig et al., 2021; Kim, 2008).

DT tasks are either verbal or nonverbal (Plucker et al., 2024; Runco & Acar, 2024a). Examples of verbal DT tests include Alternate Uses (Guilford et al., 1958; “List different uses for a brick”), Instances (Wallach & Kogan, 1965; “List things that move on wheels”), Similarities (Wallach & Kogan, 1965; “How are carrots and refrigerator alike?”), and Consequences (Guilford et al., 1958; “What would happen if people had a sixth finger?”). These tasks present one or several verbal, open-ended prompts, instructing participants to generate many, different, and/or creative responses (see Acar et al. (2020) for various instructions used). These responses are then often scored for fluency (number of responses), flexibility (variety of responses), originality (unusualness, cleverness, and remoteness of responses), and elaboration (degree of detail and elegance of the responses), and sometimes also for quality and appropriateness (usefulness).

Researchers frequently select DT items without considering the nature of the prompts. Because DT tasks are open-ended, the nature of the responses, which are used to estimate creative potential, may depend on the prompts. In other words, the responses may differ when “cup” rather than “brick” is selected as the Alternate Uses test prompt. If this is true, item selection should be made deliberately, and factors influencing the scoring outcomes should be considered. Although this problem applies to any kind of DT test item, verbal DT tests allow prompt-related factors to be examined objectively because psycholinguistic metrics of test prompts, such as word frequency and age of acquisition, are available (Forthmann et al., 2016; Ogurlu et al., 2023). This information is important because it allows tasks to be selected more deliberately. Some psychometric outcomes can be estimated before collecting the data, such as the reliability of the scores and the extent to which items are at the same difficulty level. This knowledge can also be used to determine the tasks used in pre- and post-assessments to ensure that at least some test prompts’ qualities are constant (Kaya & Acar, 2019). In this study, we focus on elementary school children’s responses to a DT test and examine whether psycholinguistic characteristics of the verbal DT prompts can predict the originality of the responses.

Literature review

Extant literature has documented evidence linking psycholinguistic characteristics to aspects of creativity, such as ideational fluency. In one early study, Cofer and Shevitz (1952) used word association tests (similar to DT tests in that both are open-ended) and found that the number of associations made was positively related to the presented prompts’ word frequency count within the given language. In other words, prompts with greater frequency are linked to a greater number of responses. Adapting the Remote Associates Test, Vitrano et al. (2021) tested the same effect by considering the stimuli’s word frequency and concreteness (vs. abstractness). They found a positive relationship between word frequency and the number of associations made, but there was no difference in terms of concreteness. Notably, these two tasks allow associations and thus provide a fluency score, but they are not true DT tasks.

Past research with fluency scores from DT tests provided somewhat mixed evidence. Forthmann et al. (2016) found that high-frequency objects generated more ideas than low-frequency ones. Additionally, they investigated the effect of explicit instructions (to be fluent versus to be creative) on fluency, revealing that high-frequency words led to significantly more ideas in the be-fluent condition than in the be-creative condition, whereas no difference in fluency was observed for low-frequency words, regardless of the instructions. In contrast, Forster (2008) reported no statistically significant relation between word frequency metrics and fluency in the Alternate Uses Test, although words with more common uses were found to have lower originality. The inconsistent findings in Forthmann et al. (2016) and Forster (2008) on originality may be explained by different factors, such as how the DT tests were administered (explicit instructions, time; Acar et al., 2020; Paek et al., 2021), how word frequency was operationalized (lemma frequencies vs. word form frequencies; Brysbaert & New, 2009), and linguistic and cultural differences (German vs. English).

Later, Ogurlu et al. (2023) conducted a meta-analysis of studies using Alternate Uses Test items and found that fluency is positively related to word frequencies when Alternate Uses Test items are administered with shorter time limits. In other words, using Alternate Uses Test items with higher word frequencies allows for more responses when these items are given with strict time limits. Ogurlu et al. (2023) explored some other psycholinguistic characteristics besides word frequency, such as word length, bigram, semantic diversity, lexical decision latency, and age of acquisition, and none of them were significant. Importantly, these psycholinguistic characteristics may predict performance on other aspects of DT, such as originality or flexibility, and such predictions can be made for different kinds of DT tests, such as Instances rather than Alternate Uses Test alone.

In the present work, we focus on originality and examine whether the originality of produced responses is related to the psycholinguistic characteristics of their prompts. Wilson et al. (1953) defined original ideas as those that are unusual, clever, and remote. Such ideation can be effectively captured by DT tests, allowing for response generation with varying levels of originality. The importance of originality has been underlined in both empirical and conceptual work. Among different aspects of DT (e.g., fluency, flexibility, originality, and elaboration), originality (which is sometimes interchangeably used with novelty) is the one that is most strongly tied to creativity in empirical work (Acar et al., 2017; Diedrich et al., 2015), and it is also considered the backbone of creativity (Rothenberg & Hausman, 1976; Runco, 1988). Runco et al. (2005) stated that “originality is a necessary part of creativity, but creative things are more than just original” (p. 137).

Conceptual framework

Psycholinguistic characteristics of verbal DT prompts can predict the originality of the responses for various reasons. One key concept linking originality to psycholinguistic characteristics is prompt openness. Ogurlu et al. (2023) defined openness as “the potential of an open-ended test prompt to lead to a greater or lower ideational fluency, originality, and flexibility due to the item characteristics” (p. 3). Runco and Albert (1985) argued that the level of openness of a DT item can enhance ideational originality due to a greater level of divergence and associative pathways. Openness can be seen in figural DT test items as the degree to which the prompts resemble something specific (Runco et al., 2016). Figural prompts may be considered more open when they do not resemble anything specific (e.g., an object). Witczak et al. (2024) compared performance on verbal Alternate Uses Test prompts with performance when an image was added to the verbal prompt. This disambiguation led to higher fluency and lower originality, which is consistent with Runco and Albert’s (1985) prediction regarding originality. Verbal DT prompts may be considered more open depending on the degree to which the verbal prompt involves words or concepts that are easier to retrieve from memory and one’s familiarity with the prompt. Psycholinguistic characteristics can reflect and capture these characteristics of the prompts. Using words with greater word frequency or semantic diversity may then allow for more associative pathways to follow. According to this view, word frequency is a positive predictor of originality, like ideational fluency, as the verbal prompts provide more freedom for ideational exploration.
This is also consistent with the extended effort hypothesis (Parnes, 1961) that views originality as a direct outcome of fluency. Past work has shown that word frequency is a positive predictor of fluency, and the factors that enhance fluency could also enhance originality. In other words, selecting a highly open verbal DT item will likely trigger more responses (higher fluency), and having more ideas supplies more original responses.

Another relevant concept is the path of least resistance (Finke et al., 1992). This theory suggests that individuals generate ideas that come to mind easily and often stop when generating ideas becomes more challenging. However, the most straightforward and most accessible ideas are usually the least creative, which also explains why responses generated for DT tasks are progressively more creative (Christensen et al., 1957). One could expect a negative relationship between word frequency and originality because prompts with a higher word frequency value may indicate lower “resistance” (higher accessibility), resulting in the generation of quickly accessible and ordinary responses. Supporting this point, Forster (2008) found that when prompt objects were familiar and lacked distinctive features, the originality of the responses decreased. Other factors, such as explicit instructions and respondents’ task commitment, can also play a major role. The potential negative impact of the path of least resistance on originality may be mitigated by explicit instructions or specific strategies such as brainstorming (Rietzschel et al., 2014), especially if the respondents aim to show their maximal performance.

Memory functions and experiences are at the nexus of the relationship between psycholinguistics and originality. On the one hand, memory is integral to forming psycholinguistic processes such as comprehension, production, and language acquisition (Harley, 2013; Menn & Dronkers, 2016). Many psycholinguistic metrics, such as the age of acquisition and lexical decision latency, rely on memory functions or their formation and development. On the other hand, overall, memory functions are positively related to creativity (Gerver et al., 2023; Madore et al., 2015). Research showed that performance on the Alternate Uses Test is influenced by retrieval from episodic and semantic memory (Hass & Beaty, 2018) and broad retrieval ability (Miroshnik et al., 2023; Silvia et al., 2013). Accordingly, Runco and Acar (2010) found that original ideation benefits from both personal and social (vicarious) experiences. Conversely, Forster (2008) found a negative relationship between familiar prompt objects without distinctive features and the originality of the responses. Mixed findings regarding the impact of psycholinguistic characteristics on originality require further investigation. For example, Runco and Acar (2010) counted the number of original responses rather than assessing the average originality of the responses, which controls for fluency confound (Clark & Mirels, 1970). Thus, one key consideration is an assessment of originality that is not confounded by fluency. Likewise, Forster’s (2008) findings relied on subjectively rated familiarity with the objects rather than a standard word frequency metric, although these two were correlated.

Psycholinguistic indicators

Psycholinguistic characteristics are broad, and some are more relevant to our investigation. One key characteristic is word frequency, which is defined as a word’s frequency of occurrence in language. This is valuable because the frequency with which a word appears is one of the most reliable indicators of its processing efficiency (Brysbaert et al., 2018). Frequently occurring words within a language are more familiar; therefore, they are processed more quickly and recalled faster, while they may not be as easy to recognize as low-frequency words (Monsell et al., 1989; Yonelinas, 2002). Because DT items are open-ended and involve memory processes, word frequency metrics (e.g., British National Corpus Consortium, 2007; Brysbaert & New, 2009; Davies, 2008) may influence the quantity and quality of the responses (Dumas et al., 2021; Forthmann et al., 2016; Ogurlu et al., 2023).

Another key psycholinguistic factor is semantic diversity (Hoffman et al., 2013), which indicates the range of contexts in which a word can be used. Words with greater semantic diversity tend to have more meanings than those with lower diversity. This variability can enhance word recognition (Hoffman & Woollams, 2015), even when frequency, document count, and age of acquisition are accounted for. Words with high semantic diversity are more straightforward to process than those with low diversity, even after controlling for frequency (Pagán et al., 2020). The semantic diversity of a verbal prompt indicates the number of potential associative pathways available during ideation and can influence the diversity or uniformity of the responses. Theoretically, using words with lower semantic diversity will restrict the ideational pathways, leading to response similarity and lower originality. It is also possible that the semantic diversity of the prompt may influence whether the respondents utilize flexibility or persistence pathways more effectively (Nijstad et al., 2010). The flexibility pathway may be more often used with verbal prompts with a high semantic diversity, whereas the persistence pathway may be more important with verbal prompts with a lower semantic diversity.

Age of acquisition is another indicator showing the age at which a word is typically learned (Morrison & Ellis, 2000). Low-frequency words are typically acquired later in life; therefore, there is a negative correlation between the age of acquisition and word frequency (Johnston & Barry, 2006). The age of acquisition of a word influences reaction times in word association and semantic categorization tasks (Brysbaert et al., 2000; Catling & Johnston, 2005) and performance on lexical decision latency and naming tasks (Cortese & Khanna, 2007). Because the responses were produced by elementary-aged children (grades 3 through 5), the age of acquisition was included in the variable set.

Related to word frequency, we also included bigram (frequency of a letter pair in a word), item mean reaction time (mean reaction time to a word prompt), and word length (number of letters in a word) metrics in our model. Research shows that word frequency is negatively related to mean reaction time (Brysbaert et al., 2018) and word length (Gerth & Festman, 2021). Essentially, words that are longer and/or prompt a slower reaction time occur less frequently within language and, by extension, could lead to restricted prompt openness. Similarly, bigrams serve as another metric of word frequency and have been shown to aid the accurate identification of low-frequency words (Biederman, 1966; Chetail, 2015). Specifically, when words were shown tachistoscopically, low-frequency words with low-frequency bigrams in general had a lower threshold of word identification (Chetail, 2015), which could result in ease of recall and a correspondingly broader prompt openness. Lastly, prior research has found a significant interaction between time spent on task and word frequency on participant fluency scores (Ogurlu et al., 2023): when time on the DT task was constrained, word frequency was positively related to fluency. Due to the interconnected nature of fluency and originality within DT assessments (Parnes, 1961), further examination is warranted of how these psycholinguistic indicators affect response originality.

The present study

Past research has indicated that word frequency predicts fluency in Alternate Uses Test performance. In the present study, we explore the impact of specific psycholinguistic characteristics of two different verbal DT tests (Alternate Uses and Instances) on the originality of the responses produced by elementary school children. Specifically, we analyzed the following psycholinguistic variables as independent variables: word frequency, semantic diversity, word length, bigram, item reaction time, and age of acquisition. Because children responded to multiple DT prompts, we conducted multilevel analyses to account for dependency in the dataset, where responses (Level 1) are nested within individuals (Level 2).

Methods

Sample

The study sample comprised 387 elementary-aged student participants who completed the Measure of Original Thinking in Elementary School (MOTES). Participants were recruited from six branches of the same public school network located in a major southwestern metropolitan area in the United States. Data were collected during school hours in groups (n < 20) using computers and headsets. Groups were formed based on student schedules and parental consent. A single Qualtrics survey link, which included all MOTES prompts and instructions, was used to administer the assessment.

Approximately 386 third-, fourth-, and fifth-grade elementary school students (M_age = 9.36, SD = .97) completed the MOTES. Of the total sample, 137 (35.4%) were third graders, 132 (34.1%) fourth graders, and 118 (30.5%) fifth graders. The total sample consisted of 182 (47.1%) male and 205 (52.9%) female students. Concerning race and ethnicity, 125 (32.5%) were Hispanic or Latinx, 114 (29.6%) Black/African American, 60 (15.6%) White/European American, three (1%) American Indian/Indigenous identity, and eight (2.1%) with multiple ethnic identities. A total of 132 (34.1%) students were considered to be English language learners (ELLs), 60 (15.5%) were identified as gifted and talented (GT), and 49 (12.7%) received special education services. As compensation for participating, students received a toy gift.

Measure

The MOTES consists of 27 prompts across three consecutive tests: Test 1: How Do You Use It? (Alternate Uses); Test 2: What Is an Example? (Instances); and Test 3: Complete the Sentence. Each test consisted of nine items, one of which was a practice item to introduce participants to the test. For the purposes of this study, only responses from Tests 1 and 2 were analyzed because Test 3 involved prompts with multiple words, whereas Test 1 and Test 2 prompts had one root word. Additionally, one item from MOTES Test 1 (light bulb) was removed from further analysis because it is two words and thus did not have associated psycholinguistic metrics. With that in mind, this dataset’s possible number of responses was 15 × 386, or 5790. However, due to time limitations on children’s responses, there were 450 missing responses (7.8%), leaving a total of 5340 responses, all of which were rated for originality using automated originality scoring.

Variables

The variance of response originality scores was distinguished at two levels to examine the effects of psycholinguistics on originality scores. Level 1 refers to the individual responses and their corresponding originality scores. Level 2 refers to the participants’ overall originality performance. In essence, we seek to employ multilevel modeling to measure psycholinguistics’ relative effect on response originality while simultaneously accounting for individual differences in originality.

All of the predictors tested were Level-1 variables. They refer to variables that directly relate to the prompts themselves and, by extension, the resulting originality of responses to said prompts. These variables consist of psycholinguistic metrics collected for all 15 of the included MOTES prompts. These metrics include SubtlWF (a standardized form of word frequency), word length, semantic diversity, age of acquisition, and bigram mean. SubtlWF, which indicates word frequency per million, was collected using archival data from Brysbaert and New (2009) and Brysbaert et al. (2018). Semantic diversity (SemD) scores were collected from Hoffman et al. (2013). SemD represents the log-transformed and sign-reversed average cosine similarity between all the contexts of a given word (Hoffman et al., 2013). Therefore, words that appear in a greater number of dissimilar contexts have a correspondingly larger SemD. The age of acquisition (i.e., the average age at which a word is learned) data for included prompts were collected from Kuperman et al. (2012), which include ratings for 30,121 English content words. Bigram mean, or the sum of the frequencies of the two-letter sequences contained within an included prompt (e.g., “BALL”) divided by the number of successive two-letter sequences (i.e., BA, AL, and LL), was collected from the English Lexicon Project (Balota et al., 2007) and included as another metric of word frequency. Word length was calculated by counting the number of letters within each of the included MOTES item prompts.
In addition to psycholinguistic metrics, the type of question (i.e., DT prompt type) was included as a Level-1 dummy-coded variable, with “Uses” prompts coded as 0 and “Instances” as 1. Finally, individual response originality served as the outcome variable at Level 1, and participants’ average response originality served as the outcome variable at Level 2. Originality scores were computed using automatic scoring following Acar et al. (2024) and Organisciak et al. (2023). All variables except prompt type were treated as continuous variables.
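
The bigram mean described above can be illustrated with a minimal sketch. The function names and the frequency values below are hypothetical and chosen only for illustration; the study itself used the values published by the English Lexicon Project.

```python
def bigrams(word):
    """Return the successive two-letter sequences of a word, e.g. BALL -> BA, AL, LL."""
    w = word.upper()
    return [w[i:i + 2] for i in range(len(w) - 1)]


def bigram_mean(word, freq):
    """Sum of the word's bigram frequencies divided by the number of bigrams."""
    pairs = bigrams(word)
    if not pairs:
        raise ValueError("word must contain at least two letters")
    return sum(freq[p] for p in pairs) / len(pairs)


# Hypothetical bigram frequency counts (NOT taken from the English Lexicon Project).
HYPOTHETICAL_FREQ = {"BA": 1200, "AL": 3000, "LL": 1800}

example = bigram_mean("ball", HYPOTHETICAL_FREQ)  # (1200 + 3000 + 1800) / 3
```

With these made-up counts, the three bigrams of “BALL” average to 2000; real values would differ.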

Procedure

Participants were administered the MOTES electronically using a single survey link to Qualtrics. To prepare text responses for scoring, all responses were reviewed by one of the lead researchers, who removed double spaces and periods and flagged possible spelling errors. To best preserve student responses, no other edits were made to participant responses during preprocessing. Participants’ responses to the MOTES were judged by a panel of five human raters and an automated originality scoring system. Raters were instructed to rate responses individually, one prompt at a time, on a scale from 1 (not original) to 5 (very original). They were instructed to follow a normal distribution for their ratings, with most scores being 2, 3, and 4 rather than the extremes of 1 or 5. Raters showed a high level of agreement (α = .844). In the current study, we employed automated originality scoring through the Ocsai platform (Organisciak et al., 2023), which fine-tuned deep neural network-based large language models by training them on human-evaluated responses. Beyond scoring, large language models are also used for test item generation (Belzak et al., 2023). Our findings here can be used to extend the automation to the generation of test items by providing specificity around the nature of the items to select based on psycholinguistics. Automated scores correlated highly with the human ratings for How Do You Use It? (r = .79) and What Is an Example? (r = .91). Due to these high correlations and the recent shift in DT assessment toward automated scoring methods, only automated originality scores were used for further analysis.

Data analysis overview

The present study employs a 2-level hierarchical linear model (HLM) approach with individuals (i.e., student participants) at Level 2 and responses (i.e., ideas generated for MOTES Games 1 and 2) at Level 1. This allowed for the accounting of personal DT ability, which likely differs among study participants. It is also necessary because of the possibility of variability between item responses by the same individual and between individuals. The details of the model and the study variables are presented below. Analyses were conducted using JASP 0.19.0.0 and SPSS Statistics 29.0.1.0. SPSS Statistics 29.0.1.0 was used to compute an unconditional model and test whether HLM was appropriate for data analysis. Subsequent models were analyzed using JASP 0.19.3.0.

First, an Unconditional Model (Model 1) was analyzed to test for differences in prompt automated originality at baseline (intercept) and students’ average automated originality scores (slope). The model’s residual variances were then used to calculate an intraclass correlation coefficient (ICC), quantifying automated originality variance at the item and student levels.
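
For reference, an unconditional two-level model and the ICC derived from it are commonly written as follows. This is a sketch in standard multilevel notation; the symbols are assumptions and are not taken from the original analysis output.

```latex
% Level 1: originality of response i from student j
O_{ij} = \beta_{0j} + r_{ij}, \qquad r_{ij} \sim N(0, \sigma^{2})

% Level 2: student-specific intercept around the grand mean
\beta_{0j} = \gamma_{00} + u_{0j}, \qquad u_{0j} \sim N(0, \tau_{00})

% Intraclass correlation: share of variance lying between students
\mathrm{ICC} = \frac{\tau_{00}}{\tau_{00} + \sigma^{2}}
```

Here $\sigma^{2}$ is the within-student (response-level) residual variance and $\tau_{00}$ is the between-student variance of the intercepts.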

After testing Model 1, bivariate correlations between predictors were assessed for high degrees of multicollinearity. All predictors of interest were put into Model 2 to test their statistical significance simultaneously with associated random effects. Then, all predictors with non-statistically significant fixed effects (i.e., p > .05) were removed to form Model 3. Following that, Model 3 was assessed for its omnibus predictive capability compared to Model 1.

The retained predictors’ random effects were then individually tested by examining the changes in model deviance relative to changes in model degrees of freedom with the assistance of a χ² goodness-of-fit table. To aid in this process, Model 3 was rerun using restricted maximum likelihood to provide an appropriate deviance score to assess the effect of individual random effects being removed. This comparison model was then deemed Model 3a. Random effects were removed with replacement for each predictor until all retained predictors’ random effects could be assessed. This procedure generated seven sub-models (Models 3b, 3c, 3d, 3e, etc.), which were compared against Model 3a. The sub-model with the lowest model deviance relative to the comparison model (i.e., Model 3a) was then selected as the new comparison model (Model 4a) and retained for further analysis. This was done to limit the possibility of nonsignificant random effects overinflating model deviance and masking the significance of other random effects. As a result, the single random effect with the largest decrease in model deviance associated with its removal was considered nonsignificant and therefore removed from further analysis. For the purpose of transparency and replicability, all associated data and relevant syntax have been posted to the Open Science Framework (OSF) and can be reached using the following link: https://osf.io/e3gr8/.

Results

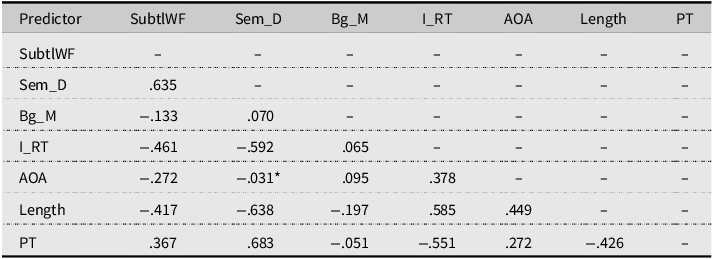

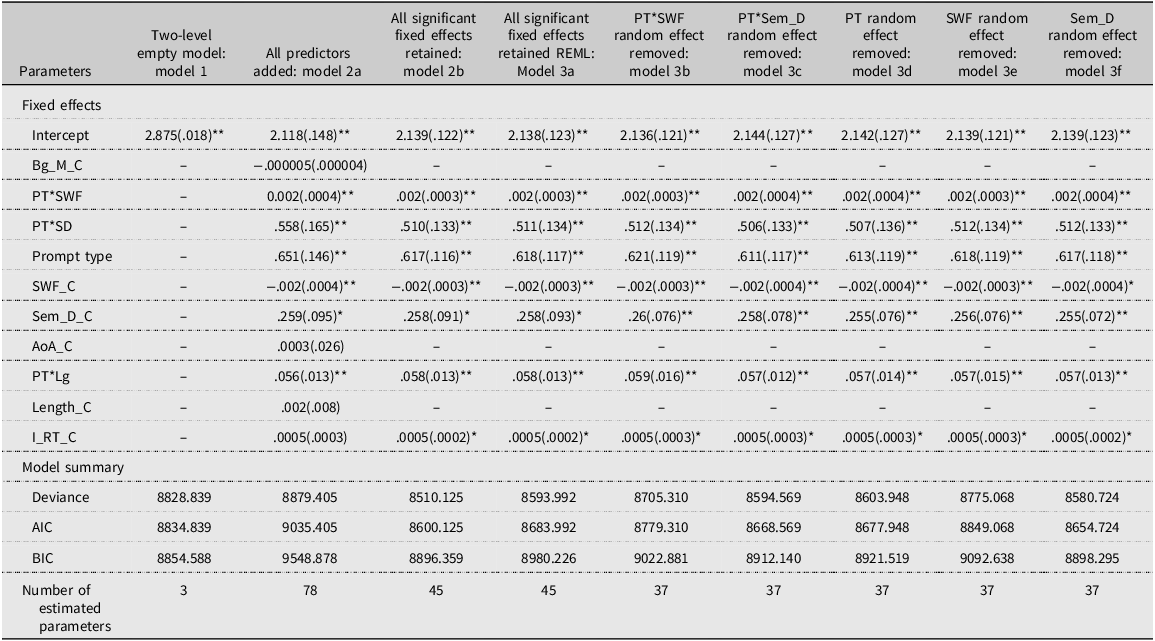

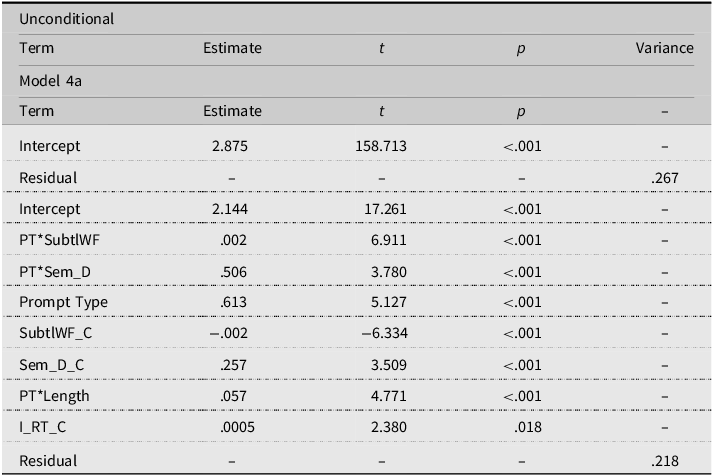

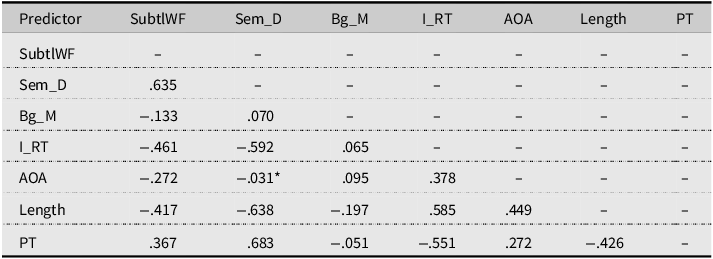

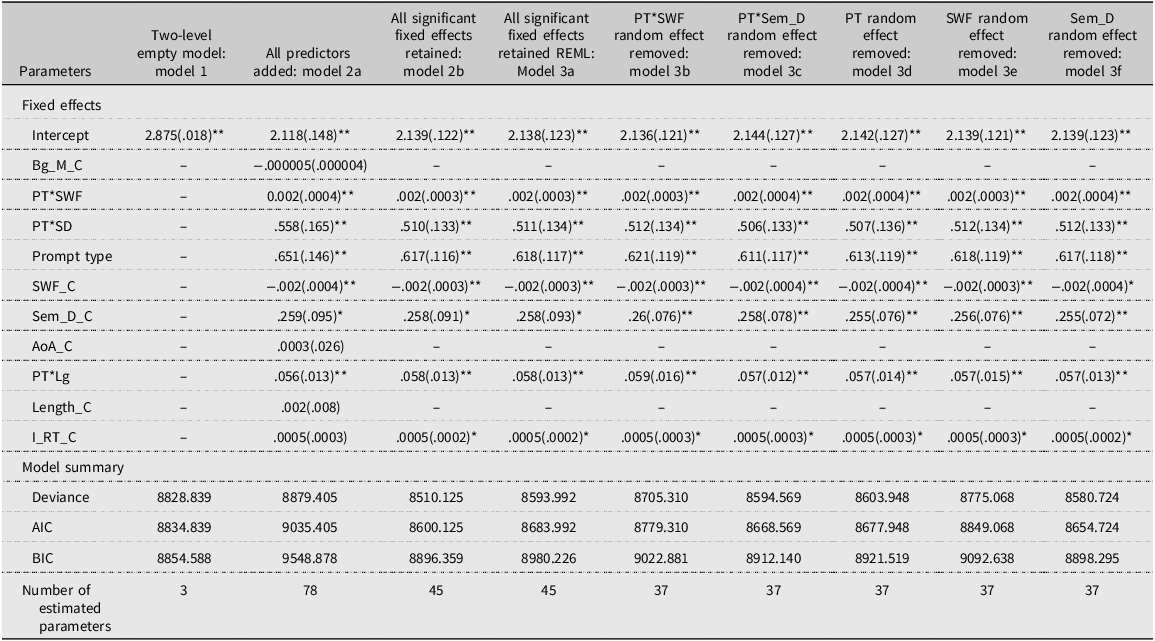

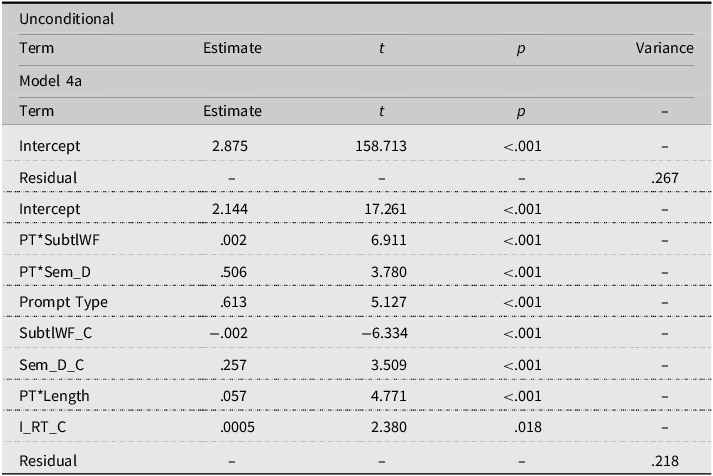

The first step of the model building was the unconditional model (Model 1; see Table 1). Because the resultant ICC (.288) was greater than .10, multilevel modeling was needed to account for data clustering (Lee, 2000). The unconditional model (Model 1) results suggest that the average student originality score is 2.875, with a starting residual variance of .267. Because several predictors were found to have medium to high correlations (i.e., > |.4|; see Table 2), there was a concern for path dependency affecting the testing of subsequent models. In other words, the order in which predictors are added to subsequent models can drastically affect the significance of individual predictors and their contribution to the omnibus model. Therefore, Model 2 comprised all test predictors (i.e., DT prompt type, age of acquisition, SubtlWF, bigram mean, item reaction time, word length, and semantic diversity) and three interaction terms (Prompt Type*Semantic Diversity, Prompt Type*Word Length, and Prompt Type*Word Frequency). As shown in Tables 3–5, age of acquisition, word length, and bigram mean were found to be nonsignificant (p > .05). Item reaction time was also nonsignificant, with p = .067. However, due to concerns of path dependency and inflated p-values from included random effects, item reaction time was retained while the other three predictors were removed. This resulted in Model 2b, which consisted of the remaining predictors, including item reaction time. As shown in Tables 3–5, all Model 2b predictors were found to be statistically significant (p < .05) and were retained for further analysis.
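
The table notes refer to mean-centered predictors and to interaction terms with the dummy-coded prompt type. The following minimal sketch shows how such terms could be constructed, assuming grand-mean centering; the variable names and values are hypothetical and do not come from the study data.

```python
from statistics import mean


def mean_center(values):
    """Subtract the grand mean so that the centered variable has mean zero."""
    m = mean(values)
    return [v - m for v in values]


def interaction(dummy, centered):
    """Element-wise product of a 0/1 dummy code and a centered predictor."""
    return [d * c for d, c in zip(dummy, centered)]


# Hypothetical values for four responses (NOT study data).
prompt_type = [0, 0, 1, 1]       # 0 = Uses, 1 = Instances
sem_d = [1.2, 1.6, 1.8, 2.2]     # illustrative semantic diversity scores

sem_d_c = mean_center(sem_d)                     # centered semantic diversity
pt_x_sem_d = interaction(prompt_type, sem_d_c)   # Prompt Type*Semantic Diversity
```

Because “Uses” prompts are coded 0, the interaction term is zero for those responses and equals the centered predictor for “Instances” responses.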

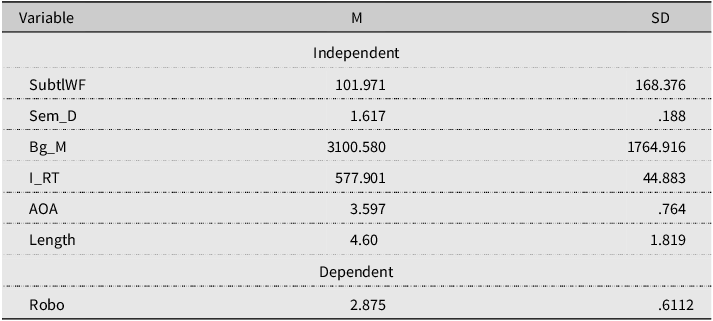

Psycholinguistic predictors and AI originality scores descriptive statistics

Note: SubtlWF = standardized form of word frequency; Bg_M = bigram frequency mean; I_RT = item reaction time; AOA = age of acquisition; Robo = AI generated originality score.

Predictor bivariate correlations

Note: *Indicates significance at p < .05; all other correlations are significant at p < .001. PT = Prompt Type.

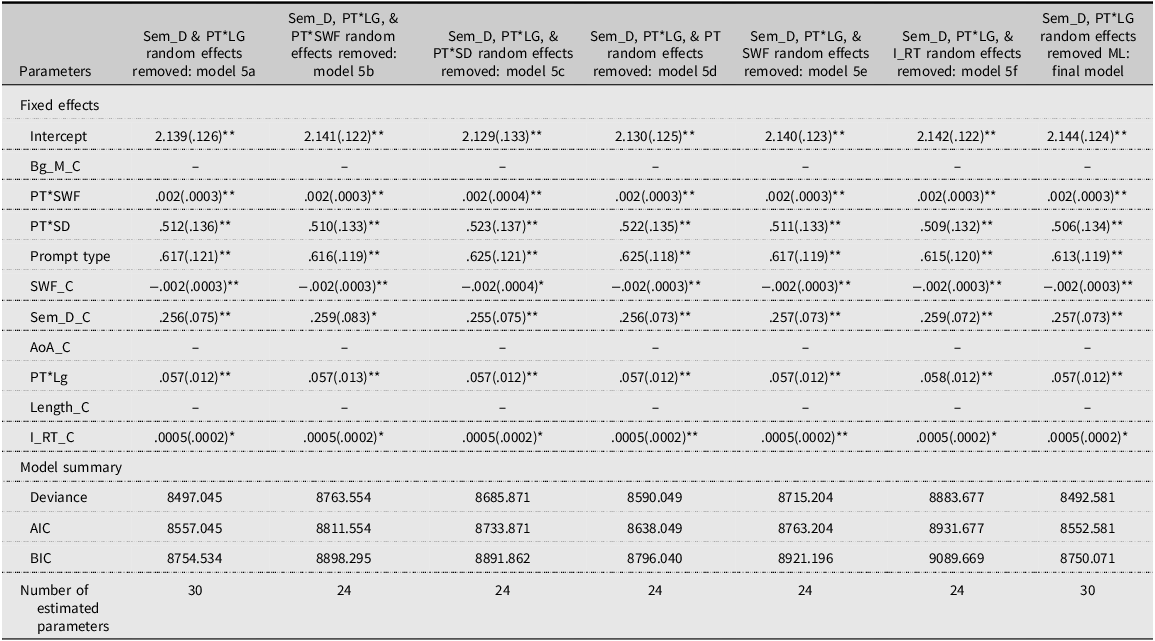

Model summaries

Note: *p < .05. **p < .001. Bg_M_C = mean centered bigram frequency mean; PT*SWF = interaction term between prompt type and word frequency; PT*SD = interaction term between prompt type and semantic diversity; SWF_C = mean centered standardized form of word frequency; Sem_D_C = mean centered semantic diversity; AOA_C = mean centered age of acquisition; PT*LG = interaction term between prompt type and word length; I_RT_C = mean centered item reaction time.

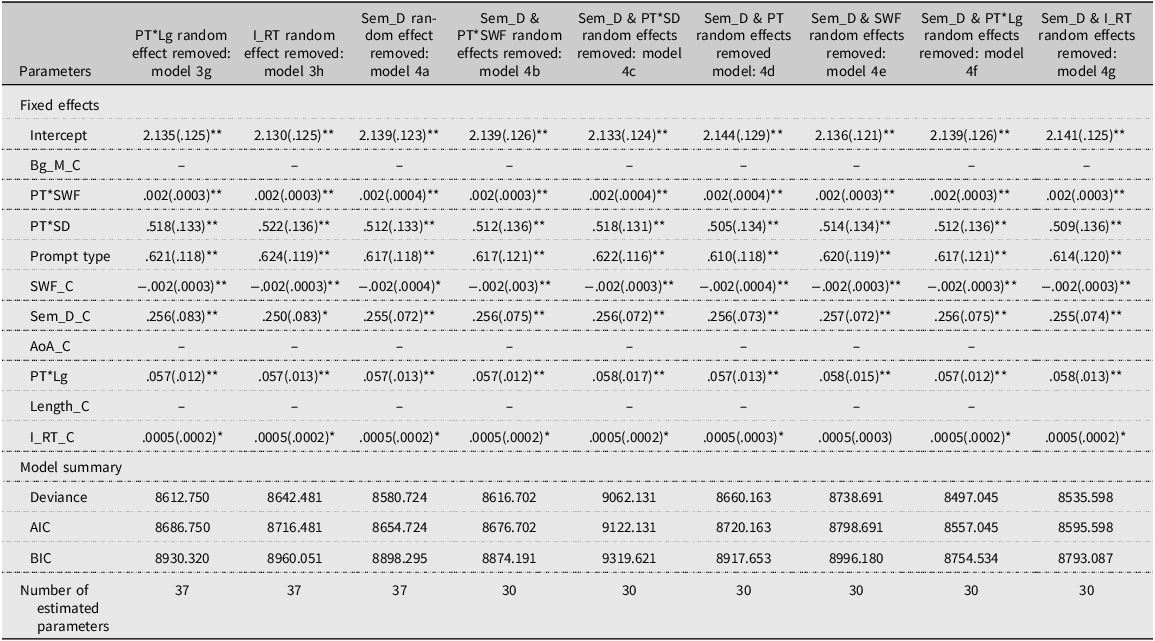

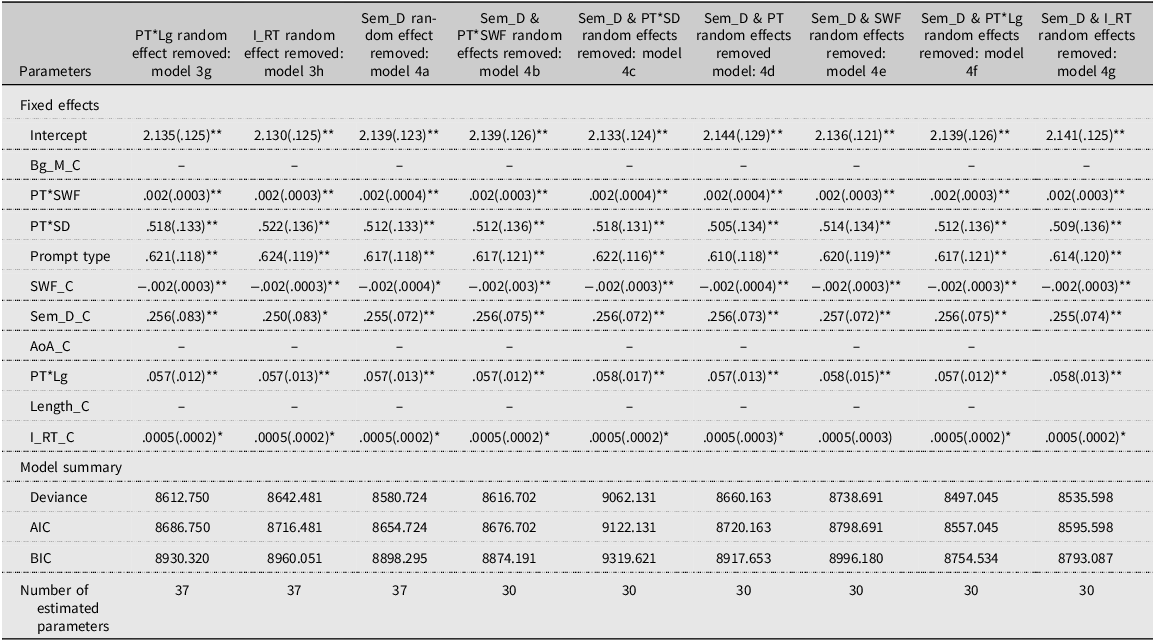

Model summaries

Note: *p < .05. **p < .001. Bg_M_C = mean centered bigram frequency mean; PT*SWF = interaction term between prompt type and word frequency; PT*SD = interaction term between prompt type and semantic diversity; SWF_C = mean centered standardized form of word frequency; Sem_D_C = mean centered semantic diversity; AOA_C = mean centered age of acquisition; PT*LG = interaction term between prompt type and word length; I_RT_C = mean centered item reaction time.

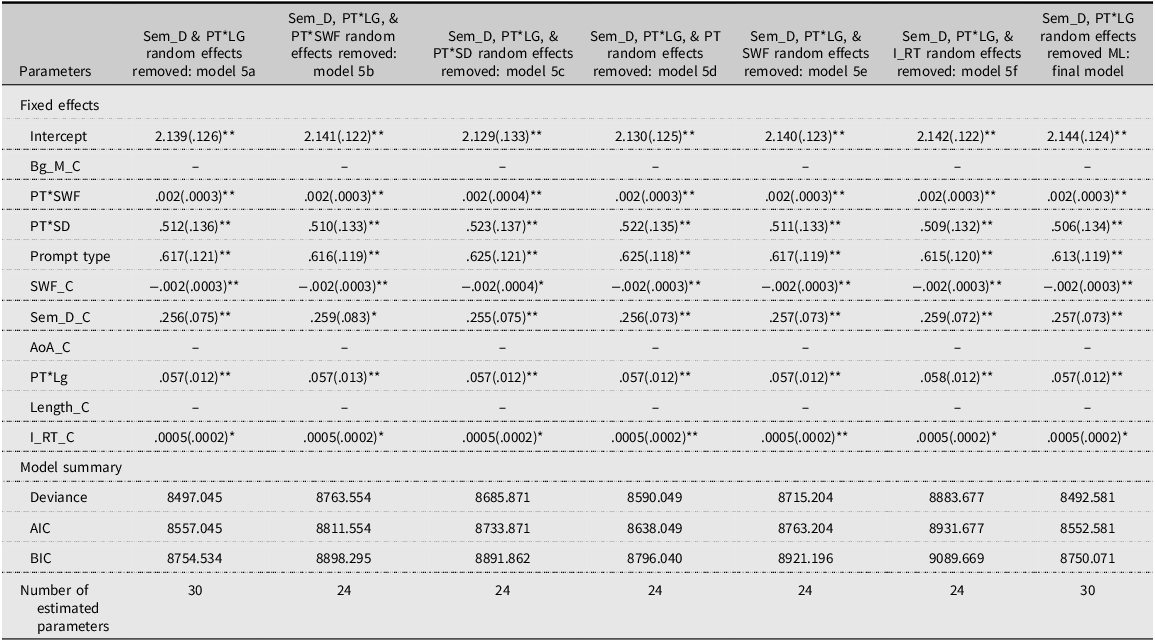

Model summaries

Note: *p < .05. **p < .001. Bg_M_C = mean centered bigram frequency mean; PT*SWF = interaction term between prompt type and word frequency; PT*SD = interaction term between prompt type and semantic diversity; SWF_C = mean centered standardized form of word frequency; Sem_D_C = mean centered semantic diversity; AOA_C = mean centered age of acquisition; PT*LG = interaction term between prompt type and word length; I_RT_C = mean centered item reaction time.

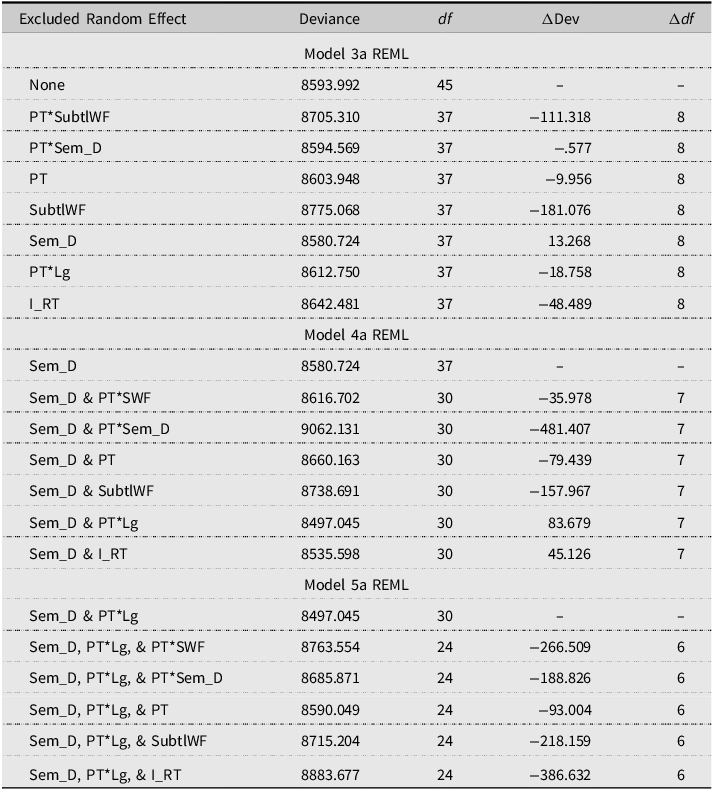

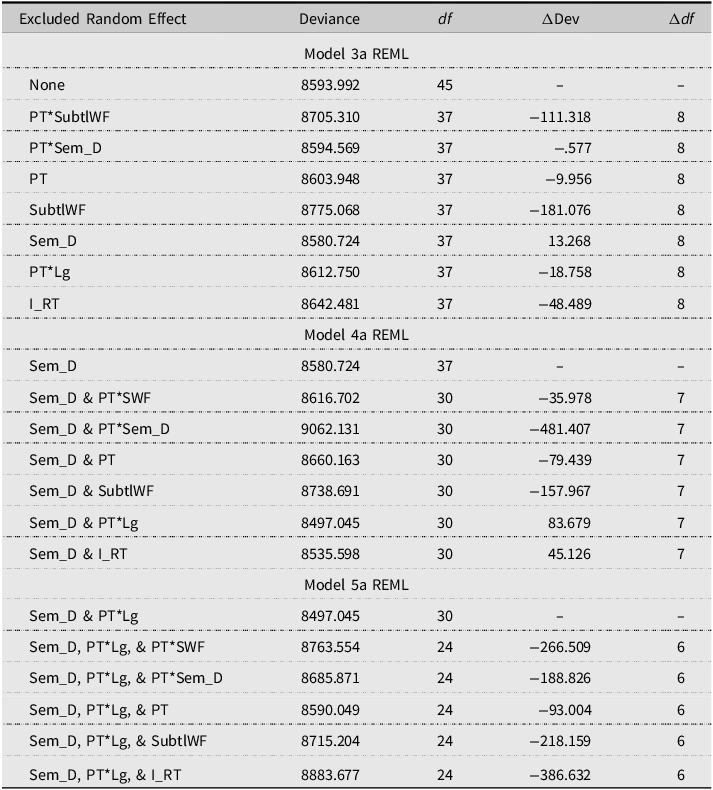

Model 3a was generated using the same retained predictors from Model 2b and restricted maximum likelihood (REML) estimation. As presented in Tables 3–5, Model 3a has an overall model deviance of 8593.992 with 45 corresponding degrees of freedom. Successive models (3b–3h) were generated by removing individual retained predictors’ random effects with replacement. Table 6 presents a complete report of all tested sub-models’ excluded random effects, resultant Δdeviances (ΔDev), and Δ degrees of freedom (Δdf). Several Model 3 sub-models did not show a significant increase in model deviance (χ² < 15.51, df = 8): 3c (prompt type*semantic diversity random effect excluded, ΔDev = −.577), 3d (prompt type random effect excluded, ΔDev = −9.956), and 3f (semantic diversity random effect excluded, ΔDev = 13.268).

Model deviance for excluded random effects

To mitigate the possibility of Type II errors due to nonsignificant random effects inflating model deviance, only the random effect with the largest associated decrease in model deviance was deemed nonsignificant. All other random effects were retained for further analysis. Therefore, Model 3f (semantic diversity random effect excluded) was used as the new comparison model (i.e., Model 4a). Sub-models (4b–4g) were then generated following the same procedure, with model deviance, ΔDev, and Δdf presented in Table 6. Sub-models 4f (semantic diversity and prompt type*word length random effects excluded, ΔDev = 83.679) and 4g (semantic diversity and item reaction time random effects excluded, ΔDev = 45.126) both displayed significant decreases in model deviance compared to Model 4a. However, following the aforementioned precautions against inflated model deviance, Model 4f was selected as the new comparison model (i.e., Model 5a). Sub-models (i.e., 5b–5f) were generated by removing random effects with replacement, with the associated ΔDev and Δdf reported in Table 6. Because all sub-models showed significant increases in model deviance (χ² > 12.59, df = 6), Model 5a was retained as the final model.
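The deviance comparisons described above rest on chi-square critical values (15.51 for df = 8 and 12.59 for df = 6 at α = .05). The following is a minimal sketch, not the authors' syntax, of how such a check could be reproduced without a printed table. The function names are hypothetical, and the closed-form tail probability used here applies only to even degrees of freedom, which happens to cover both cases in the text.

```python
import math


def chi2_sf_even_df(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable with even df.

    For df = 2k the survival function has the closed form
    exp(-x/2) * sum_{i=0}^{k-1} (x/2)^i / i!, so no external library is needed.
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form only covers positive even df")
    if x <= 0:
        # A zero or negative deviance change gives no evidence of worse fit.
        return 1.0
    half = x / 2.0
    terms = sum(half ** i / math.factorial(i) for i in range(df // 2))
    return math.exp(-half) * terms


def deviance_increase_is_significant(
    delta_dev: float, delta_df: int, alpha: float = 0.05
) -> bool:
    """Return True when dropping a random effect raises deviance significantly."""
    return chi2_sf_even_df(delta_dev, delta_df) < alpha


# Values quoted in the text: the two critical values and the deviance
# change reported for sub-model 3f (13.268 with df = 8).
p_crit_8 = chi2_sf_even_df(15.51, 8)
p_crit_6 = chi2_sf_even_df(12.59, 6)
significant_3f = deviance_increase_is_significant(13.268, 8)
```

Both critical values return tail probabilities very close to .05, and the deviance change for sub-model 3f falls below the df = 8 threshold, consistent with its random effect being treated as nonsignificant.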

Therefore, Model 5a (χ² = 8497.045, df = 30, p < .05) was retained as the final model, with random effects included for all predictors except semantic diversity and the prompt type by word length interaction. To calculate the residual variance of the final model, we reran Model 5a using maximum likelihood estimation. The residual variance decreased significantly from the unconditional model (.267) to Model 5a (.218) (see Table 7). In other words, Model 5a explains approximately 18.4% of the variance in originality scores; that is, 18.4% of the variability in the originality of the responses was explained by specific psycholinguistic characteristics of the selected prompts. According to this final model, response originality is positively related to semantic diversity (b = .257, SE = .071) and item reaction time (b = .0005, SE = .0002), whereas it is negatively related to word frequency (b = −.002, SE = .0004). Furthermore, response originality was more strongly associated with the Instances prompt than the Uses prompt (b = .613, SE = .119). Interaction effects of Prompt Type with Word Frequency (b = .002, SE = .0004), Semantic Diversity (b = .506, SE = .134), and Word Length (b = .057, SE = .012) showed that response originality was higher when Instances prompts had greater word frequency, semantic diversity, or word length, compared with the Uses prompts. In other words, psycholinguistic characteristics seem to have a greater impact on response originality for the Instances prompts than for the Uses prompts.
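The 18.4% figure can be recovered from the two reported residual variances as a proportional reduction in variance. The subscripted σ² notation below is introduced here for illustration and is not taken from the original tables.

$$
\frac{\sigma^{2}_{\text{Model 1}} - \sigma^{2}_{\text{Model 5a}}}{\sigma^{2}_{\text{Model 1}}} = \frac{.267 - .218}{.267} = \frac{.049}{.267} \approx .184
$$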

Model comparison of fixed effects and residual variances

Note: C indicates that the listed variable was mean centered. To aid in interpretation of predictor fixed effects, SubtlWF, Sem_D, and I_RT were centered by their means.

Discussion

In the present study, we tested the relationship between response originality and psycholinguistic characteristics of the DT prompts. Our multilevel model showed that psycholinguistic characteristics could explain about 18% of the variability in original thinking. Specifically, word frequency, semantic diversity, and reaction time were significant predictors, and word length was significant in interaction with prompt type. Furthermore, DT prompt type (Instances versus Uses) moderated their influence.

Word frequency

We found that word frequency is negatively related to response originality. In fact, word frequency was the only negative predictor in the model. That means responses were less original when DT prompts had a larger word frequency value. Larger word frequency implies greater accessibility and lower path resistance toward the prompt. It may be that experienced ideas, or what some call “old ideas” (Benedek et al., Reference Benedek, Jauk, Fink, Koschutnig, Reishofer, Ebner and Neubauer2014a), predominate in the idea generation process, leaving less room for “new ideas,” which are employed when generating responses that are not directly tied to past experiences (Gilhooly et al., Reference Gilhooly, Fioratou, Anthony and Wynn2007). These findings partly conflict with Mednick’s (Reference Mednick1962) associative theory of creativity, which asserts that a greater number of associations increases the probability of finding a creative solution. Here, we observed the opposite effect: responding to items with greater word frequency, which are expected to elicit more associations, is associated with lower originality. Importantly, Mednick (Reference Mednick1962) did not manipulate the prompts but investigated individual differences in associative ability. He compared the association patterns of high- and low-creative individuals and proposed that highly creative individuals have a flat associative hierarchy, characterized by a smaller slope of production over time, whereas low-creative individuals quickly run out of associations after generating many early on.

On the other hand, it is also possible to interpret our findings in line with Mednick’s (Reference Mednick1962) perspective: high word frequency allows for quick idea generation followed by a dramatic decline (i.e., a steeper negative slope), thereby diminishing the chance of arriving at an original response. Notably, past research showed that word frequency is related to greater idea quantity in the Alternate Uses test when people are given a short time (Forthmann et al., Reference Forthmann, Gerwig, Holling, Çelik, Storme and Lubart2016; Ogurlu et al., Reference Ogurlu, Acar and Ozbey2023). So, the link between word frequency and response quantity is well established. However, generating more responses is not necessarily conducive to having original ideas. In fact, the opposite appears to be true. Gonthier and Besançon (Reference Gonthier and Besançon2024) found that fluency negatively affects originality and elaboration: individuals producing fewer ideas had higher mean originality and elaboration scores. In recent work, time use appeared to explain this: greater time use (latency) was positively related to originality and negatively related to fluency (Acar et al., Reference Acar, Abdulla and Runco2019, Reference Acar, Berthiaume, Kagan, Dumas and Organisciakn.d.).

Reaction time, word length, and age of acquisition

Reaction time was positively related to originality. This finding reinforces our interpretation of the negative relationship between originality and word frequency. Words that trigger slower reactions (longer latency) tend to have a lower word frequency (Johnston & Barry, Reference Johnston and Barry2006). Consequently, picking words with a longer reaction time may result in less automated, more deliberate, purposeful, and effortful cognitive processes. This is consistent with recent research that underlines the critical role of executive functions in creative processes (Benedek et al., 2014; Zabelina et al., Reference Zabelina, Friedman and Andrews-Hanna2019).

On the other hand, age of acquisition and word length did not significantly affect originality. Research shows that age of acquisition and word length are negatively related to word frequency (Johnston & Barry, Reference Johnston and Barry2006). It may be that their presence in the same model, along with word frequency, has led to a masking effect, where the potential influence of age of acquisition and word length has been masked or accounted for by word frequency. However, that does not mean word length is irrelevant. We found a significant interaction between word length and prompt type, showing that the impact of word length is specific to DT prompt type. This is discussed later in the Discussion section.

Semantic diversity

We found a positive relationship between semantic diversity and originality. Semantic diversity measures how much a word’s meaning changes or differs across various situations, contexts, or uses (Hoffman et al., Reference Hoffman, Lambon Ralph and Rogers2013). A word with high semantic diversity is used in a wide range of contexts with varying interpretations. In contrast, a word with low semantic diversity is used in more consistent or similar contexts. Semantic diversity indicates the degree to which a word can be used flexibly due to its ambiguity. Thus, ambiguous words tend to elicit more diverse meanings and associations, increasing various interpretations and diminishing the possibility of one following a standard ideational pattern. In turn, words with a high semantic diversity are expected to contribute to original thinking. This very definition of semantic diversity aligns well with the definition of DT: the ability to think in various directions (Runco, Reference Runco, Runco and Pritzker1999). As a result, it appears that picking words with a high level of semantic diversity could serve well as a DT prompt by eliciting more original responses on average.

Importantly, semantic diversity did not contribute to the quantity of responses (Ogurlu et al., Reference Ogurlu, Acar and Ozbey2023). The fact that it contributed to originality but not quantity can be interpreted in terms of the dual pathway theory of creativity (Nijstad et al., Reference Nijstad, De Dreu, Rietzschel and Baas2010). According to this theory, creativity is driven by two processes: persistence, the ability to continue working on a problem despite challenges, and flexibility, the ability to switch between different ideas or strategies. These processes work together to generate novel solutions, with persistence ensuring sustained effort and flexibility allowing for the exploration of diverse possibilities. It may be that greater semantic diversity is conducive to utilizing the flexibility pathway, which is more supportive of original ideation. Conversely, word frequency is more strongly linked to the persistence pathway, which is more suitable for greater productivity. Because the MOTES is a single-response test, the relationship among semantic diversity, word frequency, and ideational fluency could not be tested directly in the present work.

Moderating influence of prompt type

Interestingly, the relationship between word frequency and originality was moderated by prompt type. That is, the negative relationship between word frequency and originality was weaker for the Instances prompts than for the Uses prompts. We also found a moderating effect of prompt type for semantic diversity and word length. The relationship between semantic diversity and response originality was greater (i.e., more strongly positive) for Instances than Uses. Likewise, the relationship between word length and response originality was greater for Instances than Uses. Note that word length did not have a significant main effect. Thus, its influence on the originality of responses is limited to the Instances task. Research has shown that word length is related to recall success: shorter words are more easily recalled than longer words (Baddeley, Reference Baddeley1999). Longer words are more likely to be avoided in everyday language because they are considered uneconomic (Zipf, Reference Zipf1965[Reference Zipf1935]). Neuroscientific research also showed that longer words trigger higher neurophysiological activity in the early phases, which may have been caused by lower familiarity with them (Hauk & Pulvermüller, Reference Hauk and Pulvermüller2004).
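To make the moderation pattern concrete, the sketch below combines the fixed effects reported in the Results into a slope for each prompt type. It assumes that prompt type was dummy coded with Uses = 0 and Instances = 1, which the text implies but does not state. It also treats the word length main effect as zero because that predictor was dropped from the model. The dictionary keys and function name are hypothetical, and the coefficients are rounded as reported, so the resulting slopes are approximate.

```python
# Fixed-effect estimates for the final model, as reported in the Results.
# "main" is the slope for the reference prompt type; "interaction" is the
# Prompt Type x predictor term. Word length has no retained main effect,
# so its main slope is set to 0.0 here (an assumption for illustration).
COEFFICIENTS = {
    "word_frequency": {"main": -0.002, "interaction": 0.002},
    "semantic_diversity": {"main": 0.257, "interaction": 0.506},
    "word_length": {"main": 0.0, "interaction": 0.057},
}


def simple_slopes(main: float, interaction: float) -> dict:
    """Slope of a predictor within each prompt type.

    Assumes dummy coding with Uses = 0 and Instances = 1, so the Instances
    slope is the main effect plus the interaction coefficient.
    """
    return {
        "Uses": round(main, 4),
        "Instances": round(main + interaction, 4),
    }


slopes = {name: simple_slopes(**coefs) for name, coefs in COEFFICIENTS.items()}

for name, by_prompt in slopes.items():
    print(f"{name}: Uses = {by_prompt['Uses']}, Instances = {by_prompt['Instances']}")
```

Under these assumptions, the word frequency slope is negative for Uses and close to zero for Instances, while the semantic diversity slope is roughly three times larger for Instances than for Uses.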

The consistency of the moderating influence of prompt type across semantic diversity, word frequency, and word length requires a close look at the differences between the Uses and Instances tasks. The Instances task asks respondents to identify things that belong to a specific category (e.g., things that are “red” or “big”), whereas the Uses task requires finding uses for a common object. Silvia (Reference Silvia2011) compared these two tasks and argued that the Uses task is more likely to engage executive functions, whereas the Instances task is more associational. This also explains the inapplicability of Mednick’s (Reference Mednick1962) hypothesis to the Uses task. Mednick’s work was based on word association tasks, which resemble the Instances prompts more closely than the Uses test.

Implications

The findings of this study underscore that originality in verbal DT tests depends to some degree on the psycholinguistic characteristics of the word prompts used. These findings have important implications for both theory and measurement. From a theoretical perspective, the results suggest that linguistic features shape the cognitive accessibility of the associative networks that underlie idea generation, leading to higher or lower originality. Consequently, creative performance on verbal DT tasks is not solely a function of an individual’s creative potential but is also partially determined by the linguistic affordances of the stimulus itself (Acar & Runco, Reference Acar and Runco2024; Runco, Reference Runco1992).

From a measurement standpoint, these findings emphasize that the arbitrary selection of prompts can introduce systematic variance unrelated to the construct being measured, potentially biasing interpretations of originality scores. This is particularly relevant in pre-post designs, where differences between test administrations could reflect item-level differences rather than true change in creative ability. To improve fairness and validity, test developers should therefore standardize prompt selection based on psycholinguistic equivalence rather than surface content similarity. On the other hand, it is important to keep in mind the target construct being measured through DT tasks. Our findings, along with past evidence (Ogurlu et al., Reference Ogurlu, Acar and Ozbey2023), indicate that what benefits originality appears to work against fluency, as in the case of word frequency. Therefore, the scorable outcomes of DT tasks must be determined in advance to inform not only the explicit instructions (Reiter-Palmon et al., Reference Reiter-Palmon, Forthmann and Barbot2019) but also the type and use of psycholinguistic criteria for prompt selection. Our findings are also directly applicable to the development of parallel test forms. When constructing alternate forms of verbal DT assessments, selecting prompts that match on key psycholinguistic parameters such as word frequency and semantic diversity can minimize unintended difficulty differences and ensure comparable measurement across administrations. Such alignment would enhance measurement invariance (Embretson & Reise, Reference Embretson and Reise2000) and facilitate more accurate interpretations of pre-post or longitudinal changes in creative performance.

Furthermore, our findings extend to AI-based creativity assessment. Recent advances, such as the Ocsai platform (Organisciak et al., Reference Organisciak, Acar, Dumas and Berthiaume2023), employ large language models fine-tuned on human-rated DT responses to automate originality scoring. MOTES is scored using the methods provided in Ocsai. Beyond scoring, large language models have also been used for automated item generation (Belzak et al., Reference Belzak, Naismith and Burstein2023). The patterns observed here can inform such algorithms by identifying which linguistic characteristics yield balanced yet discriminating DT prompts. Currently, MOTES has a validated set of items but could use a dynamic item pool to draw from. The present results provide empirical guidance for refining and improving these systems: incorporating psycholinguistic indicators as item selection or weighting criteria could improve the validity and interpretability of automated scoring outputs.

Collectively, these implications converge on a central insight: psycholinguistic calibration is integral to the valid assessment of creative thinking. By accounting for linguistic properties in both human- and AI-mediated testing contexts, researchers and practitioners can reduce construct-irrelevant variance, improve fairness, and enhance the replicability and interpretability of findings across studies and populations (Messick, Reference Messick1995).

Limitations and future directions

Several limitations of the present study should be acknowledged, some of which suggest promising directions for future research. First, participants were asked to produce only a single response per prompt. It remains unclear whether similar psycholinguistic effects on originality would emerge under alternative task structures that allow multiple responses (e.g., three or unlimited). Allowing participants to generate additional ideas may engage broader associative networks and alter the influence of linguistic variables such as word frequency. Prior research has shown that later responses in DT tasks tend to be more original, a pattern known as the serial order effect (Beaty & Silvia, Reference Beaty and Silvia2012; Christensen et al., Reference Christensen, Guilford and Wilson1957). Because of the design of MOTES, this effect could not be examined in the current study. Future research should replicate the present findings using a DT task structure that permits multiple responses to determine whether psycholinguistic influences remain consistent across response positions. Moreover, future studies may explore whether the psycholinguistic characteristics of DT prompts themselves modulate the magnitude or direction of the serial order effect.

Second, the study sample consisted exclusively of elementary school children, which may constrain the generalizability of the findings. Psycholinguistic properties may exert stronger effects in this population because children’s vocabularies and semantic networks are still developing (Wulff et al., Reference Wulff, Hills and Mata2022). It is therefore essential to examine whether these patterns hold in older youth and adults, whose more extensive lexical knowledge and metacognitive strategies may reduce sensitivity to linguistic affordances (Cosgrove et al., Reference Cosgrove, Beaty, Diaz and Kenett2023).

Third, potential time-related effects represent an important consideration for future research. In the MOTES administration, participants were provided a sufficient but finite period of time to generate their responses. However, differences in allotted time could substantially influence how psycholinguistic variables affect performance. Ogurlu et al. (Reference Ogurlu, Acar and Ozbey2023) found that word frequency, which tends to enhance fluency, has a stronger effect when task duration is short. Extending or shortening the response window could therefore shift the balance between fluency and originality. Future studies should systematically manipulate time constraints to clarify how temporal conditions moderate psycholinguistic effects.

Building on these methodological considerations, future research should also extend this line of inquiry to other DT tasks (e.g., Similarities) and other measures of verbal creativity to determine whether the observed psycholinguistic effects generalize across task types. While the current study focuses on originality, subsequent work should examine flexibility and appropriateness as complementary indicators of creative performance. Further, examining interactions between psycholinguistic characteristics and demographic variables (i.e., race, ethnicity, and English language learner status) would help identify potential sources of differential item functioning and enhance the fairness of creativity assessments.

Replication package

All associated data and relevant syntax have been posted to Open Science Framework (OSF) and can be reached using the following link https://osf.io/e3gr8.