37.1 Introduction

To learn about their world, infants have to make sense of the ‘great blooming, buzzing confusion’ of their environment (James, 1890). They have to learn that the streams of sound that their caregivers emit are communicative and meaningful. Acquiring language is a key developmental milestone that children reach in their early years. Already before their first birthday, infants learn a great deal about speech and language. For example, in their first year of life, infants discover which sounds are meaningful in their native language (Kuhl, 2004; Werker and Tees, 1984), they learn to segment words from the continuous speech stream (Jusczyk, 1999), and they start to link these word forms to meaning (Johnson, 2016). Infants’ brains are ‘language-ready’ (Hagoort, 2017), but they are also still developing rapidly in interaction with their environment (Westermann, 2016). The environment in which language learning takes place is usually noisy, with many possible referents, cluttered visual information (Yu et al., 2021), and auditory background noise. We now know that children are active learners (Bazhydai et al., 2020; Begus et al., 2016; Kidd et al., 2012; Stahl and Feigenson, 2015): they selectively attend to important information. Being able to select the information relevant for language learning enables language growth (D’souza et al., 2017). It is therefore essential to understand which neural processes support this attentional selection for language learning and how environmental cues and neural maturation influence these processes.
Such insight will help us understand individual differences in language development and suggest how to create optimal learning conditions in both typical and atypical development.

The current chapter will showcase the potential importance of neural tracking, that is, the alignment between neural activity and rhythmic speech patterns, for attentional selection in the developing speech processing system. We will review recent research on neural speech tracking in infants and its relation to later language development. Finally, we will discuss how electrophysiological maturation across infancy may change neural tracking and thereby influence the trajectory of both typical and atypical language development.

37.1.1 Using Rhythm for First-Language Acquisition

One important cue that infants use for language learning is rhythm (Gleitman and Wanner, 1982). Newborns can already distinguish languages based on their rhythmic characteristics (Nazzi et al., 1998; Ramus and Mehler, 1999; Ramus et al., 1999). Seven- to eight-month-olds use rhythmic properties to segment words from a continuous speech stream (Johnson and Jusczyk, 2001; Jusczyk et al., 1999). This use of rhythm has been proposed to be an important bootstrapping mechanism for language learning (Gervain et al., 2020; Höhle, 2009).

Our hypothesis is that the oscillatory properties of the human brain are particularly suited to pick up rhythmic properties of language. In the current chapter, we specify how the neural tracking of rhythmic speech properties might help children to selectively attend to important information in their input, thus paving the way for language learning.

37.1.2 Proposal: Importance of Neural Tracking for Temporal Attention and Impact of Maturation

We here propose that rhythmic neural tracking of speech (Giraud and Poeppel, 2012), that is, the synchronisation between neural oscillations and speech rhythm, is central to active language learning. Neural oscillations provide temporal windows of alternating reduced and enhanced excitability (Buzsáki and Watson, 2022; Fries, 2015; VanRullen, 2016), enabling more effective processing during high-excitability states (Lakatos et al., 2013; VanRullen, 2016). Neural synchronisation to external stimuli has been proposed to allow for sensory selection (Schroeder and Lakatos, 2009). During speech processing, neural tracking of speech acoustics might help to group information into analysable units such as words and phrases (Ding and Simon, 2014; Goswami, 2018; Keitel and Gross, 2016; see also Chapters 3, 5, and 35). We propose that neural tracking assists language development by guiding infants’ attention towards informative units in speech, helping them learn to segment the continuous speech signal into such units and, from there, bootstrap language learning (Gervain et al., 2020; Höhle, 2009). Importantly, the infant brain is still developing rapidly, and its electrophysiological activity speeds up as infants grow (Anderson and Perone, 2018; Cellier et al., 2021; Menn et al., 2023a). We hypothesise that this electrophysiological maturation gives rise to different processing constraints and opportunities at different points in development, with the optimal time windows for analysis shifting as development proceeds.

37.2 Neural Tracking of Speech

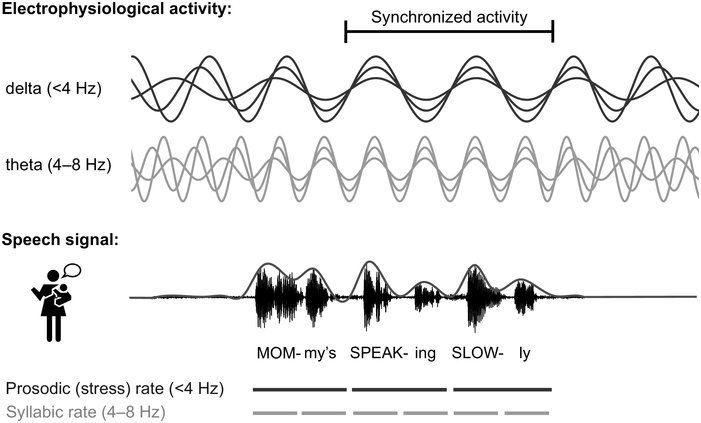

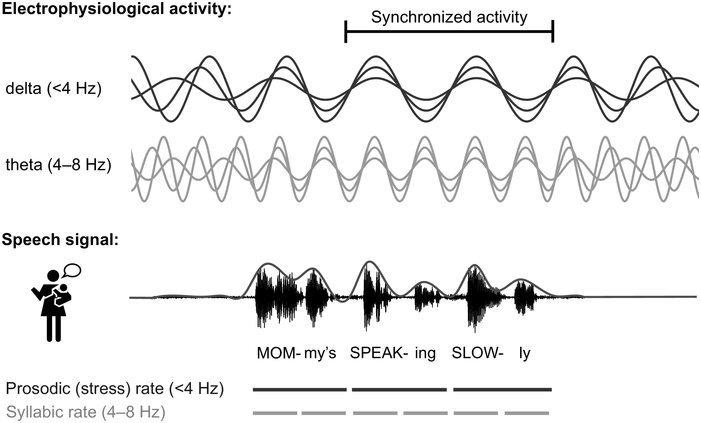

In adults, it is now well established that rhythmic properties of speech set up a predictive context (Rothermich and Kotz, 2013) that is crucial for speech decoding (Gagnepain et al., 2012; Rimmele et al., 2018; Zion Golumbic et al., 2012). Rhythm in speech is most evident in the amplitude envelope of the speech waveform (see Figure 37.1; Giraud and Poeppel, 2012; Goswami, 2012), whose modulations show a clear peak between 2 and 10 Hz across languages, corresponding to the syllable rate (see Poeppel and Assaneo, 2020, for a recent review). Modulations at higher frequencies (~30–50 Hz) are associated with phonemic features, and modulations at lower rates (<4 Hz) with prosodic stress and with lexical and phrasal structure, for example through the intonation contour (Giraud and Poeppel, 2012; Rosen et al., 1992).
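To make the notion of an amplitude envelope and its modulation rate concrete, the following Python sketch (using NumPy and SciPy) extracts the envelope of a toy signal as the magnitude of the analytic (Hilbert) signal and locates its dominant modulation frequency. The stimulus is an assumption for illustration only: white noise whose loudness rises and falls four times per second, standing in for a 4 Hz syllable rhythm rather than real speech. The 30 Hz low-pass cut-off and the function names are likewise hypothetical choices.

```python
import numpy as np
from scipy.signal import butter, hilbert, sosfiltfilt, welch

def amplitude_envelope(signal, fs, cutoff_hz=30.0):
    """Magnitude of the analytic (Hilbert) signal, low-pass filtered so that
    only the slow loudness fluctuations remain."""
    env = np.abs(hilbert(signal))
    sos = butter(4, cutoff_hz, btype="low", fs=fs, output="sos")
    return sosfiltfilt(sos, env)

def modulation_peak(envelope, fs, fmin=1.0, fmax=10.0):
    """Frequency (Hz) of the strongest envelope modulation between fmin and fmax."""
    freqs, power = welch(envelope - envelope.mean(), fs=fs, nperseg=int(4 * fs))
    band = (freqs >= fmin) & (freqs <= fmax)
    return freqs[band][np.argmax(power[band])]

# Toy stimulus (hypothetical): white-noise carrier whose loudness rises and
# falls four times per second, standing in for a 4 Hz syllable rhythm.
fs = 16000
t = np.arange(0, 10, 1 / fs)
rng = np.random.default_rng(0)
toy_speech = 0.5 * (1 + np.sin(2 * np.pi * 4.0 * t)) * rng.standard_normal(t.size)

env = amplitude_envelope(toy_speech, fs)
print(f"dominant envelope modulation: {modulation_peak(env, fs):.2f} Hz")
```

With this toy input the peak should fall at or very near 4 Hz; applied to a recording of real speech, the same steps would yield a broader modulation spectrum spanning syllabic and prosodic rates.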

Illustration of neural tracking of speech.

Electrophysiological activity in the delta and theta range is assumed to synchronise to amplitude modulations in speech (see also Chapter 35). The line above the speech signal displays the amplitude envelope. Note that the delta and theta bands lie at lower frequencies in infants than the canonical adult frequency bands (Anderson and Perone, 2018; Cellier et al., 2021), and that infant-directed speech is typically slower than adult-directed speech, with ~3–6 Hz as the typical infant-directed syllable rate (Cox et al., 2023; Raneri et al., 2020).

Figure 37.1 Long description

Two types of brain waves are depicted: delta, below 4 hertz, and theta, from 4 to 8 hertz. A waveform of speech is shown. The text below the speech signal reads "Mom my's Speaking Slowly". Prosodic rate and syllabic rate are denoted with broken lines.

By now, it is well established that electrophysiological brain activity tracks the temporal modulations in speech (Figure 37.1; Gross et al., 2013; Luo and Poeppel, 2007; see Poeppel and Assaneo, 2020, for a review). In the brain, alternating periods of excitation and inhibition result in rhythmic fluctuations of neuronal activity. The speed of these fluctuations depends on intrinsic neuronal frequency properties and differs between neuronal populations (Buzsáki, 2006; Buzsáki and Watson, 2022; Hutcheon and Yarom, 2000). Rhythmic fluctuations across large groups of neurons can be measured on the scalp with electroencephalography (EEG) as neural oscillations at different frequencies. Neural oscillations provide windows of alternating reduced and enhanced excitability, giving temporal windows for analysing and grouping information (Buzsáki and Watson, 2022; Fries, 2015; VanRullen, 2016). At rest, oscillations in the auditory cortex are hierarchically organised into the delta (< 4 Hz), theta (4–8 Hz), and gamma (> 30 Hz) ranges (Giraud et al., 2007; Keitel and Gross, 2016), which closely match the rates of stress patterns, syllables, and phonemes. This correspondence led to the proposal that rhythmic properties of speech entrain neuronal firing (Giraud and Poeppel, 2012; Gross et al., 2013; Lalor and Foxe, 2010; Luo and Poeppel, 2007; Peelle and Davis, 2012), causing neurons to align both the frequency and the phase of their firing patterns to the input (Regan, 1977; Zaehle et al., 2010).
This rhythmic neural tracking enables the brain to form temporal predictions about salient events in the input, ensuring that it is most excitable when the speech signal carries the most information (Lakatos et al., 2013; Large and Jones, 1999; Rimmele et al., 2018; Schroeder and Lakatos, 2009). This helps in grouping information into analysable units such as words, syllables, and phrases (Ding and Simon, 2014; Goswami, 2018; Keitel et al., 2018) and facilitates speech processing by assisting the segmentation and identification of linguistic units from speech (Cason and Schön, 2012; Doelling et al., 2014; Henry and Obleser, 2012; Keitel et al., 2018; Peelle et al., 2013; see Meyer, 2018, for a review).
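Neural tracking is often quantified as the spectral coherence between the speech envelope and the EEG at the frequencies of interest; other studies use phase-locking measures or temporal response functions instead. The Python sketch below computes magnitude-squared coherence with `scipy.signal.coherence` on simulated data. Everything here is an illustrative assumption: a 4 Hz rhythmic envelope, an "EEG" signal containing a delayed copy of that rhythm plus noise, and a 250 Hz sampling rate.

```python
import numpy as np
from scipy.signal import coherence

fs = 250                      # assumed EEG sampling rate (Hz)
t = np.arange(0, 60, 1 / fs)  # 60 s of simulated data
rng = np.random.default_rng(1)

# Hypothetical speech envelope: a 4 Hz syllable-like rhythm plus a little noise.
envelope = 1 + np.sin(2 * np.pi * 4.0 * t) + 0.2 * rng.standard_normal(t.size)

# Simulated EEG: the same 4 Hz rhythm delayed by 100 ms, buried in noise.
eeg = 0.5 * np.sin(2 * np.pi * 4.0 * (t - 0.1)) + rng.standard_normal(t.size)

# Magnitude-squared coherence, estimated over 2 s segments (0.5 Hz resolution).
freqs, coh = coherence(envelope, eeg, fs=fs, nperseg=2 * fs)

def coherence_at(f_hz):
    """Coherence at the frequency bin closest to f_hz."""
    return coh[np.argmin(np.abs(freqs - f_hz))]

print(f"4 Hz: {coherence_at(4.0):.2f}, 20 Hz: {coherence_at(20.0):.2f}")
```

Because coherence depends on a consistent phase relation rather than on zero lag, the 100 ms delay does not reduce it: coherence should be high at 4 Hz and close to zero at 20 Hz, where the simulated EEG contains only noise.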

Natural speech is not perfectly rhythmic, and bottom-up cues alone might be insufficient to explain the synchronisation between neural activity and the speech envelope (Meyer et al., 2020). Indeed, speech tracking has been found to be influenced by cross-modal information and to be modulated top-down by linguistic knowledge and attention. The influence of linguistic knowledge on neural tracking has been shown, for example, by Ding et al. (2016), while other studies have confirmed that neural tracking is modulated by semantic content (Broderick et al., 2019; Kaufeld et al., 2020).

In addition to linguistic knowledge, visual information also affects neural tracking of speech (Crosse et al., 2015; Power et al., 2012a; Zion Golumbic et al., 2013). Rhythmic movements of the mouth, lips, and jaw often occur in synchrony with the auditory signal or even slightly precede it (Chandrasekaran et al., 2009). This makes facial cues important for following or even predicting the rhythm of speech, and thus they likely aid speech tracking (Bourguignon et al., 2020; Park et al., 2016, 2018; Zoefel, 2021). Indeed, visual information from mouth movements aids the synchronisation of neural oscillations in both adults (Bauer et al., 2020; Biau et al., 2021; Bourguignon et al., 2020; Peelle and Sommers, 2015; Thézé et al., 2020; Zoefel, 2021) and children (Power et al., 2012b; but see Çetinçelik et al., 2023, 2024).

Finally, neural tracking is also modulated by attention. Speech is rarely heard under ideal acoustic conditions, so listeners must selectively attend to the relevant speech stream and filter out irrelevant noise. Neural entrainment has been proposed as a core mechanism for attentional selection, maximising temporal attention on the behaviourally important parts of the signal (Lakatos et al., 2008; Obleser and Kayser, 2019; Zion Golumbic et al., 2012). Indeed, when multiple talkers are presented simultaneously, rhythmic neural tracking helps listeners attend to one of the speech streams, and synchronisation reflects the attended speaker (O’Sullivan et al., 2015; Power et al., 2012b; Zion Golumbic et al., 2012).
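The logic of identifying the attended talker from neural tracking can be illustrated with a deliberately simplified sketch: correlate the EEG with each talker's envelope and pick the talker with the higher correlation. Real decoders, such as the stimulus-reconstruction approach of O'Sullivan et al. (2015), instead fit multichannel, time-lagged linear models. The simulated envelopes, the mixing weights (1.0 for the attended and 0.3 for the ignored talker), and the function names below are hypothetical.

```python
import numpy as np

def slow_envelope(rng, n, fs, cutoff_hz=8.0):
    """Random, slowly fluctuating signal standing in for a speech envelope:
    white noise with all spectral content above cutoff_hz removed."""
    spectrum = np.fft.rfft(rng.standard_normal(n))
    spectrum[np.fft.rfftfreq(n, 1 / fs) > cutoff_hz] = 0
    return np.fft.irfft(spectrum, n)

def decode_attention(eeg, envelopes):
    """Index of the envelope with the highest Pearson correlation with the EEG."""
    r = [np.corrcoef(eeg, env)[0, 1] for env in envelopes]
    return int(np.argmax(r)), r

fs, n = 100, 60 * 100  # 60 s at an assumed 100 Hz sampling rate
rng = np.random.default_rng(2)
env_a = slow_envelope(rng, n, fs)  # talker A (attended, by construction)
env_b = slow_envelope(rng, n, fs)  # talker B (ignored)

# Toy single-channel EEG: stronger response to the attended talker, plus noise.
eeg = 1.0 * env_a + 0.3 * env_b + 0.5 * env_a.std() * rng.standard_normal(n)

attended, r = decode_attention(eeg, [env_a, env_b])
print(attended, [round(x, 2) for x in r])
```

Here talker A is attended by construction, so the sketch only shows that a stronger neural response to one stream is enough to single it out; with real EEG the difference between correlations is far smaller and must be assessed across many trials.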

It is worth noting that the speech–brain synchronisation measured in most studies need not arise from an alignment of ongoing endogenous neural oscillations, that is, from underlying oscillatory activity whose phase is shifted by the rhythmic input (Figure 37.1). Instead, the synchronisation may reflect a series of auditory responses evoked by acoustic extrema in the speech signal, which are superimposed on ongoing neural activity and thus appear at the same frequency as the speech rhythm (see, for example, Keitel et al., 2021). Recent evidence on the involvement of genuine oscillations in speech tracking shows that, at least in some cases, rhythmic responses persist even after stimulation has ended (van Bree et al., 2021; Zoefel et al., 2018). This points to a contribution of oscillatory entrainment to speech tracking, likely in combination with evoked responses (Doelling et al., 2019).
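The difference between the two accounts can be caricatured in a few lines of Python. Both "models" below are hypothetical linear responses to the same 4 Hz train of acoustic events; they differ only in their impulse response: a brief transient for the evoked account, and a slowly decaying 4 Hz ringing as a stand-in for a self-sustaining oscillator. The diagnostic, in the spirit of the persistence studies cited above, is how much rhythmic activity remains after the stimulus stops. All rates and time constants are assumptions chosen for illustration.

```python
import numpy as np

fs = 1000          # samples per second
rate_hz = 4.0      # stimulation rate (hypothetical)
stim_end = 3.0     # stimulus stops after 3 s
t = np.arange(0, 4.0, 1 / fs)

# Impulse train: one acoustic "edge" every 250 ms during the stimulus, then silence.
events = np.zeros(t.size)
step = int(fs / rate_hz)
events[np.arange(0, int(stim_end * fs), step)] = 1.0

k_t = np.arange(0, 2.0, 1 / fs)
# Evoked account: each event triggers a brief transient (decay constant 50 ms).
evoked_kernel = np.exp(-k_t / 0.05) * np.sin(2 * np.pi * 10 * k_t)
# Oscillatory account (toy): each event "kicks" a 4 Hz resonator that keeps
# ringing with a 500 ms decay constant.
resonant_kernel = np.exp(-k_t / 0.5) * np.sin(2 * np.pi * rate_hz * k_t)

evoked = np.convolve(events, evoked_kernel)[: t.size]
resonant = np.convolve(events, resonant_kernel)[: t.size]

def rms(x, start_s, stop_s):
    """Root-mean-square amplitude of x between two time points (in seconds)."""
    return np.sqrt(np.mean(x[int(start_s * fs): int(stop_s * fs)] ** 2))

# Activity 250 to 750 ms after stimulus offset, relative to activity during stimulation.
ratios = {}
for name, x in [("evoked", evoked), ("oscillatory", resonant)]:
    ratios[name] = rms(x, stim_end + 0.25, stim_end + 0.75) / rms(x, 1.0, stim_end)
    print(f"{name}: post-offset / during-stimulus RMS = {ratios[name]:.3f}")
```

Under these assumptions the evoked model falls silent within a few tens of milliseconds of the last event, whereas the resonant model keeps oscillating at the stimulation rate for several cycles; real recordings need not separate the two patterns this cleanly.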

It is important to keep the distinction between evoked and oscillatory accounts in mind when interpreting findings on neural tracking. That said, oscillations have been argued to reflect basic operating mechanisms of the brain that are employed by specialised cognitive processes (Friederici and Singer, 2015; Fries, 2015). During speech processing, the brain needs to flexibly adapt its operating frequencies to the characteristics of the speech signal. Even evoked responses will therefore necessarily, at least to a certain degree, occur within the frequency ranges in which the brain is able to process and communicate information. As we will argue below, maturation of the underlying oscillatory circuits during infancy constrains the information that infants can process and thus affects neural tracking, even if the underlying mechanism were evoked rather than entrained.

37.3 Neural Speech Tracking in Infants

In recent years, there has been increasing evidence that electrophysiological activity in the infant brain already tracks the rhythm of speech (Attaheri et al., 2022; Menn et al., 2022a; Ortiz Barajas et al., 2021). In particular, newborns have been shown to track the syllable rate (3–6 Hz) of simple repeated sentences in both their native and a non-native language (Ortiz Barajas et al., 2021). This study did not test the tracking of other rhythms, leaving it unclear whether newborns already track the slow prosodic (stress) rate and the fast phoneme rate of speech. The youngest infants in whom tracking of prosodic stress has been shown are four-month-olds, who tracked sung nursery rhymes in the delta and theta ranges (Attaheri et al., 2022). However, infants’ early focus on prosody (Nazzi et al., 2000) makes it likely that they track prosodic stress even earlier. The youngest age tested for phoneme-rate tracking is 10 months: Menn et al. (2022b) found significant tracking of the phoneme rate of spoken nursery rhymes in 10-month-olds. More research is needed to establish the developmental onset of neural speech tracking at the prosodic stress rate and at the phoneme rate.

At least by seven months of age, infants do not require perfectly rhythmic speech for neural tracking but can also track natural speech, such as the speech in cartoons (Jessen et al., 2019), maternal speech in natural interactions (Menn et al., 2022a), and live maternal singing (Nguyen et al., 2023). Given that natural speech is at most quasi-rhythmic (Jadoul et al., 2016; Turk and Shattuck-Hufnagel, 2013), robust synchronisation of neural activity to speech likely requires continuous updating through top-down modulation. As in adults, there is some evidence that infants’ neural tracking of speech is modulated by visual information, linguistic knowledge, and attention.

Tan et al. (2022) compared neural tracking of visual-only, auditory-only, and audiovisual speech and found an audiovisual speech benefit for five-month-old infants and adults, but not for four-year-olds. Another study, using slow infant-directed speech (IDS) under ideal listening conditions without background noise, did not find a benefit of visual cues in 10-month-olds: neural tracking of audiovisual speech was equally robust when visual cues were present and when they were blocked (Çetinçelik et al., 2024). Possibly, infant brains rely particularly on audiovisual information before the onset of linguistic knowledge. At later ages, the audiovisual speech benefit is largest in relatively noisy and challenging conditions (Ross et al., 2006; Sumby and Pollack, 1954).

Evidence for an influence of linguistic knowledge on infants’ neural tracking of speech acoustics currently comes only from artificial language studies showing that statistical learning modulates tracking of artificial speech. Choi et al. (2020) presented six-month-old infants with trisyllabic pseudowords concatenated into syllable streams, which were presented at a fixed syllable rate while the infants’ EEG was recorded. Whereas the infants initially showed synchronisation to the syllable rate only, by the end of the experiment they tracked both the syllable rate and the rate of the trisyllabic pseudowords. This progression to tracking of the pseudoword rate indicates a top-down influence of newly acquired knowledge on neural tracking of the artificial speech stream, though studies on naturalistic speech are still lacking.
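The frequency-tagging logic behind such designs can be sketched as follows. In this hypothetical simulation, every syllable evokes a brief response. Before learning, all syllables are treated alike, so the response is periodic at the syllable rate only; after learning, the first syllable of each trisyllabic word is assumed to evoke a larger response, which introduces a periodicity at one third of the syllable rate. The 3 Hz syllable rate, the response shape, and the gain of 2.0 are illustrative assumptions, not parameters from Choi et al. (2020).

```python
import numpy as np

fs = 300                        # sampling rate chosen so one syllable = 100 samples
syll_rate = 3.0                 # hypothetical syllable rate (Hz)
word_rate = syll_rate / 3       # trisyllabic pseudowords -> 1 Hz word rate
n = 120 * fs                    # 120 s of simulated EEG
step = int(round(fs / syll_rate))
onsets = np.arange(0, n, step)  # one onset per syllable
rng = np.random.default_rng(3)

k_t = np.arange(0, 0.3, 1 / fs)
kernel = (k_t / 0.02) * np.exp(-k_t / 0.02)  # brief evoked response to each syllable

def simulate(word_initial_gain):
    """Toy EEG: one evoked response per syllable; responses to word-initial
    syllables are scaled by word_initial_gain (1.0 = no grouping)."""
    events = np.zeros(n)
    events[onsets] = np.where(np.arange(onsets.size) % 3 == 0, word_initial_gain, 1.0)
    return np.convolve(events, kernel)[:n] + 0.2 * rng.standard_normal(n)

def amplitude_at(x, f_hz):
    """Spectral amplitude of x at the FFT bin closest to f_hz."""
    spectrum = np.abs(np.fft.rfft(x)) / x.size
    freqs = np.fft.rfftfreq(x.size, 1 / fs)
    return spectrum[np.argmin(np.abs(freqs - f_hz))]

early = simulate(word_initial_gain=1.0)  # start of exposure: syllables only
late = simulate(word_initial_gain=2.0)   # end of exposure (assumed word grouping)
for label, x in [("early", early), ("late", late)]:
    print(label, f"syllable rate: {amplitude_at(x, syll_rate):.4f}",
          f"word rate: {amplitude_at(x, word_rate):.4f}")
```

In the "early" simulation the word-rate bin contains only noise, whereas in the "late" simulation a clear 1 Hz component appears alongside the syllable-rate response; a spectral peak at the word rate is therefore taken as a signature of grouping, without itself revealing which neural change produced it.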

In addition to visual information and linguistic knowledge, infants’ neural tracking likely also benefits from attentional selection. Kalashnikova et al. (2018) observed stronger tracking of IDS than of adult-directed speech (ADS) in seven-month-old infants. The authors attribute this IDS tracking benefit to infants’ increased attention to IDS (Cooper and Aslin, 1990; Frank et al., 2020). It should be noted, though, that Tan et al. (2022) and Çetinçelik et al. (2024) observed no relationship between attention (to visual cues) and neural tracking. It is also possible that the IDS tracking benefit arises from the stronger amplitude modulations at the prosodic stress rate in IDS compared with ADS (Leong et al., 2017; Menn et al., 2022a; Räsänen et al., 2018).

37.4 Infants’ Neural Tracking and Their Later Language Development

Multiple studies suggest that infants’ rhythmic neural tracking of speech relates to their language abilities. Snijders (2020) demonstrated that 7.5-month-olds’ neural tracking of spoken nursery rhymes at the rate of stressed syllables (1.5–2 Hz) relates to their word segmentation abilities at nine months. Expanding on this finding, neural tracking at the stressed-syllable rate at 10 months has been found to predict vocabulary development at two years (Menn et al., 2022b) and at 18 months (Çetinçelik et al., 2024). The predictive value of tracking slow rhythms (0.5–4 Hz) in speech for vocabulary development was replicated by Attaheri et al. (2024) using spoken nursery rhymes.

Interestingly, some studies provide evidence for a relationship between vocabulary acquisition and neural tracking at the syllable rate, rather than at the stressed-syllable rate. Both Hahn and Snijders (2023) and Çetinçelik et al. (2023) found a positive relationship between 10-month-olds’ neural tracking of speech at the syllable rate and vocabulary growth up to 18 months. Note that the syllable rates in these studies were relatively low, as they were based on the syllable rate of the actual IDS stimuli used in the experiments, resulting in syllable rates within the canonical delta frequency range (2.5–3.5 Hz, both in Hahn and Snijders, 2023, and in the studies of Çetinçelik et al.).

Taken together, recent studies provide evidence that rhythmic neural tracking of speech predicts word segmentation and later vocabulary, but further studies are needed to establish whether tracking of specific frequency ranges related to stimulus characteristics is especially relevant for language acquisition or whether there is a more general role for neural tracking in the delta frequency range.

37.5 Possible Mechanisms

As presented above, speech tracking at specific frequency ranges might be related to later language development. One interpretation is that infants who preferentially track speech in a specific frequency range are at an advantage for language development. This may be a result of their individual ‘electrophysiological profile’, that is, the location and power distribution of prominent peaks (and potentially also of non-rhythmic, aperiodic activity) in the infant’s electrophysiological spectrum (Ostlund et al., 2022). In particular, individual differences in electrophysiological maturation will lead to differences in the spectral characteristics of infants’ brain rhythms, which in turn allow them to process information at different frequencies. Infants whose electrophysiological profile leads them to preferentially track at the stressed-syllable rate may benefit in their use of rhythmic cues for word segmentation. Another interpretation is that tracking is flexible, with infants adapting the frequency they track depending on which parts of the input signal they currently attend to. On this interpretation, tracking at specific frequency ranges for specific stimuli might be beneficial for language development. Neural speech tracking would then reflect infants’ attention to specific parts of the speech signal (e.g., stressed syllables), while simultaneously acting as a core mechanism for maximising temporal attention on these parts (Lakatos et al., 2008; Obleser and Kayser, 2019; Zion Golumbic et al., 2012).
We would like to argue for a combination of the two interpretations: neural speech tracking maximises the uptake of relevant information from the noisy multimodal environment, while being constrained by the maturation of the underlying oscillatory circuits (see Haegens and Zion Golumbic, 2017; Meyer et al., 2020; Rimmele et al., 2018, for related accounts of adult speech processing). Successful speech processing and language learning require neural activity to adapt flexibly to the quasi-rhythmic input, which can only occur within the limits of the developing neural system (Menn et al., 2023a).

37.5.1 Maturational Constraints

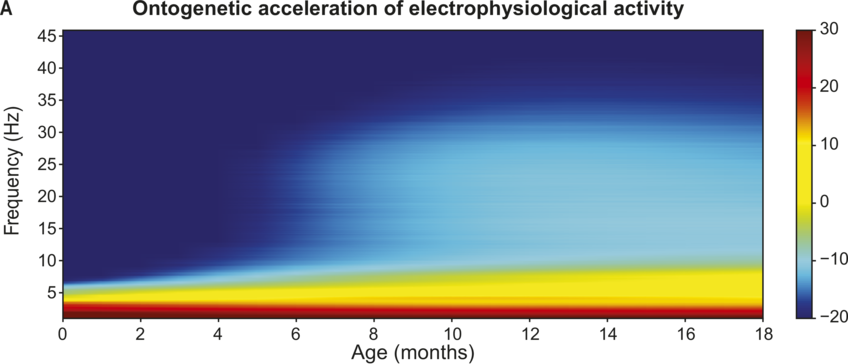

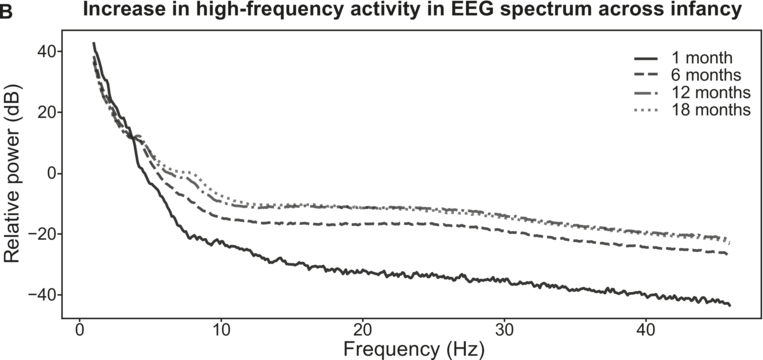

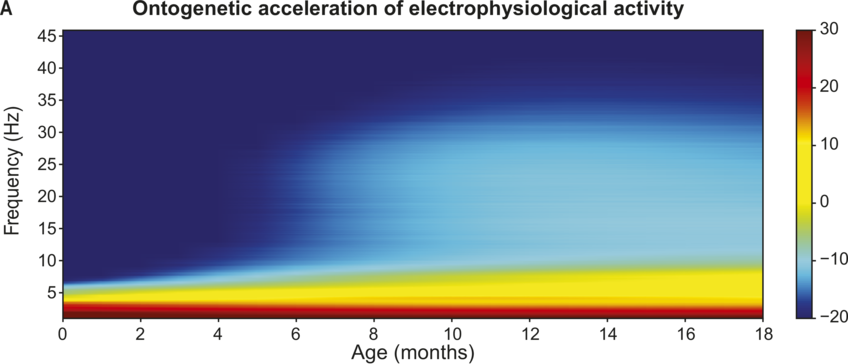

To understand the mechanistic role of neural tracking in language development and how it changes with age, we need to take brain maturation into account. The constraints of the system change with maturation, which in turn shapes what language processing is possible. The infant brain is not fully developed at birth, and maturational aspects of the brain are reflected in its electrophysiology (Hill et al., 2022; Schaworonkow and Voytek, 2021; Vanhatalo and Kaila, 2006). In infancy, slow neural oscillations predominate, and there is a general speed-up of electrophysiological rhythms across early childhood (Anderson and Perone, 2018; see Figure 37.2 and Footnote 1). The individual alpha peak frequency (iAPF) is one of the most robust markers of cerebral maturation (Rodríguez-Martínez et al., 2017; Valdés-Sosa et al., 1990). In the developing brain, the dominant alpha rhythm at posterior sites gradually shifts from 3–6 Hz in infants to 8–12 Hz in adulthood (Cellier et al., 2021; Gable et al., 2022; Marshall et al., 2002; Schaworonkow and Voytek, 2021; Stroganova et al., 1999). The gradual increase of the iAPF is possibly a product of increasing myelination (i.e., the formation of myelinated white matter tracts in the brain, especially between thalamus and cortex; Freschl et al., 2022; Segalowitz et al., 2010).
A faster iAPF has been related to increased speed of information processing (Klimesch et al., 1996; Surwillo, 1961) and to better attentional performance (Tröndle et al., 2022). In particular, the iAPF has been hypothesised to reflect the size of the temporal integration window (Bastiaansen et al., 2020; Cecere et al., 2015; VanRullen, 2016; White, 1963; but see Buergers and Noppeney, 2022; London et al., 2022; Ruzzoli et al., 2019), that is, the time needed to separate two events, either across or within modalities. A faster iAPF should therefore make it easier to segregate information occurring in quick temporal succession, suggesting that the acceleration of the iAPF across infancy and childhood allows children to dissociate information at shorter temporal intervals as they mature. In line with this, infants initially have very long temporal integration windows (Hochmann and Kouider, 2022; Tsurumi et al., 2021): whereas adults can differentiate tones that are separated by at least 20 ms (Giraud, 2020; Joliot et al., 1994), 7.5-month-old infants need a difference of ~150 ms between tone onsets to process two tones as separate (Benasich and Tallal, 2002). The iAPF might thus reflect processing constraints that change with development, determining the limits of neural temporal processing.
Notably, alpha activity is not classically associated with neural tracking during speech processing (see Meyer, 2018, for a review). However, the acceleration of alpha and the corresponding shortening of temporal integration windows have been associated with better audiovisual integration abilities (Ronconi et al., 2023; Zhou et al., 2022). Given the importance of visual cues for infants’ language acquisition (Çetinçelik et al., 2021; Hollich et al., 2005) and potentially also for the neural tracking of audiovisual speech (Power et al., 2012b), maturation of the peak alpha frequency may be especially related to the development of audiovisual speech processing.
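As an illustration of how an individual peak frequency can be read off a spectrum, the Python sketch below estimates a power spectrum with Welch's method, removes a straight-line (1/f-like) aperiodic trend in log–log space, and takes the largest remaining peak within a search band. Dedicated tools such as the specparam (formerly FOOOF) package model the aperiodic component more carefully. The simulated "infant" (5 Hz) and "adult" (10 Hz) rhythms are hypothetical, and the final conversion to cycle length (1000/iAPF ms) assumes a one-cycle-per-integration-window reading of the hypothesis described above; it is not how the behavioural thresholds cited above were obtained.

```python
import numpy as np
from scipy.signal import welch

def aperiodic_noise(rng, n, fs, exponent=1.0):
    """Noise whose power falls off as 1/f**exponent (spectral shaping of white noise)."""
    spectrum = np.fft.rfft(rng.standard_normal(n))
    freqs = np.fft.rfftfreq(n, 1 / fs)
    freqs[0] = freqs[1]  # avoid dividing by zero at DC
    spectrum /= freqs ** (exponent / 2)  # amplitude ~ f^(-exponent/2) -> power ~ 1/f^exponent
    noise = np.fft.irfft(spectrum, n)
    return noise / noise.std()

def peak_frequency(x, fs, band=(3.0, 13.0), fit_range=(1.0, 30.0)):
    """Frequency of the largest spectral peak in `band` after subtracting a
    log-log linear fit of the spectrum over `fit_range`."""
    freqs, power = welch(x, fs=fs, nperseg=int(4 * fs))
    fit = (freqs >= fit_range[0]) & (freqs <= fit_range[1])
    slope, intercept = np.polyfit(np.log10(freqs[fit]), np.log10(power[fit]), 1)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    residual = np.log10(power[in_band]) - (slope * np.log10(freqs[in_band]) + intercept)
    return freqs[in_band][np.argmax(residual)]

fs = 250
t = np.arange(0, 120, 1 / fs)
rng = np.random.default_rng(4)
results = {}
for label, f_alpha in [("infant (simulated)", 5.0), ("adult (simulated)", 10.0)]:
    x = aperiodic_noise(rng, t.size, fs) + np.sin(2 * np.pi * f_alpha * t)
    iapf = peak_frequency(x, fs)
    results[label] = iapf
    print(f"{label}: iAPF ~ {iapf:.2f} Hz, one cycle ~ {1000 / iapf:.0f} ms")
```

Removing the aperiodic trend first matters because infant spectra are dominated by slow activity: without it, the largest value in a low search band can simply be its lowest frequency rather than a genuine rhythmic peak.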

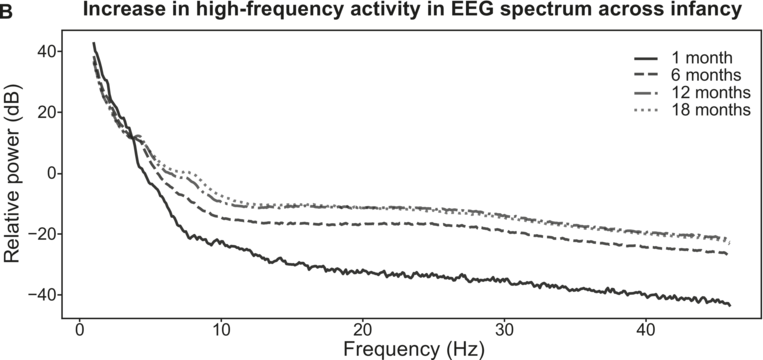

Overview of EEG maturation in infancy.

Electrophysiological activity during speech processing across infancy (modelled on Menn et al., 2023b). Slow electrophysiological activity (< 5–10 Hz) is initially prevalent. There is a general acceleration in the frequencies of electrophysiological activity across early childhood (A). High-frequency activity starts to emerge around six months of age (B).

Figure 37.2(A) Long description

The darker shades of gray represent higher levels of activity. The horizontal axis is age in months, and the vertical axis is frequency. A color gradient scale ranges from minus 20 to 30. The values are estimated.

Figure 37.2(B) Long description

It plots four declining lines that originate at (2, 40) and terminate at (48, minus 10), (48, minus 8) and (48, minus 40). The legends for 1 month, 6 months, 12 months and 18 months are given at the top right of the graph. The values are estimated.

In addition to the increase in iAPF, fast oscillatory activity (i.e., gamma-band rhythms) emerges only gradually in the infant brain, reflecting continuous changes in the excitation–inhibition balance across development. In the adult brain, excitatory and inhibitory activity are balanced: neural excitation is followed by roughly proportional inhibition (Shu et al., 2003). The excitation–inhibition balance matures with development, giving rise to windows of plasticity in which the balance is optimal for neural plasticity and learning, thus enabling a sensitive period during childhood (Werker and Hensch, 2015). The excitation–inhibition balance is also crucial for the emergence of neural oscillations (Buzsáki, 2006; Buzsáki and Watson, 2022; Poil et al., 2012), which arise from alternating periods of excitation and inhibition. Slow electrophysiological activity in the delta and theta range is already present in the auditory language areas prenatally (Arichi et al., 2017; Chipaux et al., 2013; Moghimi et al., 2020; Routier et al., 2017; Vecchierini et al., 2007). In contrast, faster oscillatory rhythms (i.e., gamma-range activity), which require rapid interaction between excitatory neurons and inhibitory interneurons, emerge only gradually towards the second half of the first year (Le Van Quyen et al., 2006; Pivik et al., 2019).
This is potentially caused by the delayed migration of inhibitory interneurons, which continues until after birth (Xu et al., 2011). It has recently been proposed that this slow-to-fast trajectory of electrophysiological development affects infants’ processing of temporal information in speech (Menn et al., 2023a; see Figure 37.2). The developing brain might initially be especially well suited to picking up low-frequency rhythmic regularities in the environment (such as prosodic stress and syllable rhythms) but struggle with information at shorter timescales, such as individual phonemes. Indeed, it has been shown that young infants still struggle to segment individual speech sounds from fluent speech. Bijeljac-Babic et al. (1993) tested newborns’ ability to discriminate short speech sequences. While newborns showed significant discrimination of bisyllabic versus trisyllabic sequences, they showed no evidence of discriminating bisyllabic utterances that differed only in the number of phonemes within a syllable. This indicates that newborns’ speech processing initially focuses on larger units of speech and that newborns are not yet able to process the fast pace of the phoneme rhythm. Young infants’ inability to process phoneme-rate information in fluent speech may seem at odds with countless studies demonstrating their remarkable ability to discriminate between unfamiliar phonemes (Kuhl, 2007; see Werker et al., 2012, for a comprehensive review). However, these studies typically present the to-be-distinguished phonemes individually with long inter-stimulus intervals, which may suit infants’ long temporal integration windows. Infants learn phonemes from fluent speech, and they show the first signs of native phoneme acquisition around 6–12 months of age, coinciding with the emergence of high-frequency electrophysiological activity.
This activity could allow them to segment phonemes from fluent speech. It is therefore likely that the emergence of high-frequency electrophysiological activity constrains phonological acquisition towards the second half of the first year. Studies investigating young infants’ phoneme recognition in fluent speech are currently scarce (but see Menn et al., 2023b).
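The link between rapid excitatory–inhibitory interaction and fast rhythms can be illustrated with a standard linearised two-population rate model, a simplified Wilson–Cowan-style loop of an excitatory (E) and an inhibitory (I) population. This is our own toy illustration, not a model used in the studies cited above, and it says nothing about the specific circuitry of the infant brain. The coupling weights and time constants are hypothetical. The loop oscillates when the Jacobian of the system has complex eigenvalues, and the resulting frequency scales inversely with the time constants of the interaction.

```python
import math


def ei_loop_frequency_hz(tau_e: float, tau_i: float,
                         w_ee: float = 1.0, w_ei: float = 2.0,
                         w_ie: float = 2.0) -> float:
    """Oscillation frequency (Hz) of a linearised E-I rate model.

    Model (hypothetical parameters, time constants in seconds):
        dE/dt = (-E + w_ee*E - w_ei*I) / tau_e
        dI/dt = (-I + w_ie*E) / tau_i
    Returns 0.0 if the Jacobian's eigenvalues are real (no oscillation).
    """
    # Entries of the 2x2 Jacobian [[a, b], [c, d]] of the linear system.
    a = (w_ee - 1.0) / tau_e
    b = -w_ei / tau_e
    c = w_ie / tau_i
    d = -1.0 / tau_i
    trace = a + d
    det = a * d - b * c
    # Eigenvalues are trace/2 +/- sqrt(trace**2/4 - det); they are complex
    # (oscillatory) when det exceeds trace**2/4.
    discriminant = det - trace ** 2 / 4.0
    if discriminant <= 0:
        return 0.0  # real eigenvalues: activity decays or grows without oscillating
    omega = math.sqrt(discriminant)  # imaginary part of the eigenvalues (rad/s)
    return omega / (2.0 * math.pi)


if __name__ == "__main__":
    # Hypothetical time constants: fast (10 ms) versus slow (50 ms) E-I interaction.
    for tau in (0.010, 0.050):
        print(f"tau = {tau * 1000:.0f} ms -> {ei_loop_frequency_hz(tau, tau):.1f} Hz")
```

With these arbitrary weights, a 10 ms loop oscillates at roughly 31 Hz (gamma range) and a 50 ms loop at roughly 6 Hz (theta range). The toy model only captures the qualitative point that faster excitatory–inhibitory interaction supports faster rhythms. Because the trace of the Jacobian is negative here, the oscillations are damped.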

Taken together, there is strong evidence for a maturation of electrophysiological processing speed across infancy and early childhood, as indexed by both the acceleration of iAPF and the emergence of high-frequency activity. This maturation of electrophysiological processing abilities may open new possibilities for speech processing as infants age (see Elman, 1996, for a similar idea on chronotopic constraints). We hypothesise that developmental constraints on speech processing may guide infants’ attention to specific parts of the speech signal, namely those at timescales the infant is equipped to process given their electrophysiological capabilities. This will be reflected in their neural tracking. As a result, different input rhythms are important at different points in development: initially the slow prosodic rhythms, and later also the faster phonological rhythms (see also Menn et al., 2023a).
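The hypothesis that infants attend to rhythms at timescales they can resolve can be made concrete with a toy comparison. The sketch below reuses the integration windows mentioned earlier in this section (about 150 ms at 7.5 months and 20 ms in adults) and compares them with the period of each speech rhythm. The rates assigned to the stress, syllable and phoneme rhythms are rough illustrative assumptions. The rule that a rhythm is trackable only if its period exceeds the window is a simplification of the hypothesis, not an established criterion, and the function name is hypothetical.

```python
# Approximate rates (Hz) of speech rhythms: illustrative assumptions only.
SPEECH_RHYTHMS_HZ = {
    "stressed syllables": 2.0,
    "syllables": 5.0,
    "phonemes": 25.0,
}


def trackable_rhythms(window_ms: float) -> list[str]:
    """Return the rhythms whose period (ms) is longer than the integration window.

    Simplifying assumption: a rhythm can be resolved only if successive units
    are further apart than the temporal integration window.
    """
    return [name for name, rate_hz in SPEECH_RHYTHMS_HZ.items()
            if 1000.0 / rate_hz > window_ms]


if __name__ == "__main__":
    for label, window in [("7.5-month-old infant", 150.0), ("adult", 20.0)]:
        print(f"{label} ({window:.0f} ms window): {trackable_rhythms(window)}")
```

Under these assumptions, a 150 ms window admits the stress and syllable rhythms but not the ~40 ms phoneme period, whereas a 20 ms window admits all three. This mirrors the proposed shift from prosodic to phonological rhythms.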

37.6 Implications for Language Acquisition Research

Children are active learners who selectively attend to important information. We argue that this selectivity is visible in their neural tracking of speech, which reflects their temporal attention. Neural tracking maximises the uptake of relevant information from the noisy multimodal environment, while being constrained by the maturation of the underlying oscillatory circuits. Although it has been shown that infants track speech from an early age, it is still unclear which factors affect infants’ neural tracking. More research is needed to establish how infants’ neural tracking is modulated by neural maturation, as well as by cross-modal influences, linguistic knowledge and attention. We expect neural tracking to change with development (due to both maturational constraints and developing linguistic knowledge), but also with, for instance, task demands and motivational or attentional state.

Our proposal has several consequences for language acquisition research. It is important to take differences in electrophysiological profile into account: maturational differences, individual differences (neurodiversity; see Section 37.6.1) and changes in attentional or motivational state. First of all, we hypothesise changes in tracking based on neural development. Electrophysiological maturation constrains infants’ possibilities for speech processing, initially allowing them to focus only on the slow prosodic and syllable rhythms of speech. In line with electrophysiological acceleration, we hypothesise that neural tracking will transition to faster speech rhythms across the first year of life, as electrophysiological maturation also allows infants to process speech information at this timescale. We hope that greater awareness and knowledge of maturational constraints in language acquisition will further our understanding of speech processing, including the interpretation of phonemic processing in continuous speech and of how larger units and chunks might be most effectively processed there (Menn et al., 2023a, 2023b).

Secondly, neural tracking will be influenced by linguistic and cognitive development. As linguistic knowledge builds up, different rhythms will become more important for infants’ speech processing, affecting neural tracking. Furthermore, linguistic knowledge will exert a top-down influence on tracking (Choi et al., 2020). In addition, cognitive processes such as working memory and executive functioning are still developing and may affect neural tracking. When assessing developmental differences, it is important to distinguish general brain maturation effects from effects due to the development of cognitive processing and representations (bearing in mind that brain maturation and cognitive development also influence each other).

Thirdly, besides the developmental effects of electrophysiological maturation and cognitive development, we expect infants’ focus on different timescales in speech to be affected by task demands and infant state. Above, we discussed possible influences of multimodal input, but other characteristics of the input might also affect neural tracking. For instance, while we assume that infants initially use information in the slow prosodic stress rhythm for higher-level linguistic abstraction, there may be situations in which this rhythm is not informative. This could be the case, for instance, in studies with artificially rhythmic stimulus materials. We would then expect infants to shift their attention to other rates that provide more informative cues, which would be reflected in increased neural tracking at the attended rate and decreased tracking at the normally expected prosodic rate. In natural speech, too, language and stimulus characteristics can determine whether it is important for the child to track specific rhythms, for example depending on whether the stressed-syllable rhythm provides cues for segmenting words from continuous speech in the particular language or stimulus set. Cross-linguistic differences in rhythmic cues and their informativeness for linguistic inference may also lead to different results in studies of different languages. Additionally, neural tracking may shift depending on the infant’s current state. Bottom-up cues provided by acoustic amplitude modulations may be easier to process than top-down influences on tracking, which may require more effort (Song and Iverson, 2018). Infants may therefore resort to bottom-up tracking at the rate of strong acoustic modulations when ‘motivation’ is low (e.g., if the infant is tired).

Thus, it is important to take stimulus characteristics into account. Researchers often use generic frequency bands to establish neural tracking, whereas it is crucial to report and use the stimulus-specific frequency characteristics of the speech input (see Keitel et al., 2018). Only then can we discover how stimulus characteristics interact with underlying oscillatory possibilities in establishing neural tracking, and the mechanisms through which neural tracking might be related to successful language acquisition. Besides stimulus-specific speech regularities, stimulus variability also needs to be taken into account. We expect neural tracking to have different properties when stimuli are repeated over and over again (as in Ortiz Barajas et al., 2021) than in natural speech, which varies more in both content and prosody.
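One way to derive tracking frequencies from the stimulus itself, rather than from generic bands, is to locate peaks in the modulation spectrum of the speech amplitude envelope. The sketch below does this with NumPy only. It computes the envelope as the magnitude of the analytic signal, using the same FFT-based construction as `scipy.signal.hilbert`, and returns the strongest modulation frequency within a search band. This is a generic illustration, not the procedure of Keitel et al. (2018). The function names, the synthetic test signal and the band limits are hypothetical, and real recordings would typically call for further steps such as filtering or averaging over many utterances.

```python
import numpy as np


def amplitude_envelope(signal: np.ndarray) -> np.ndarray:
    """Magnitude of the analytic signal, built via the FFT (Hilbert transform)."""
    n = signal.size
    spectrum = np.fft.fft(signal)
    # Keep DC (and Nyquist for even n), double positive frequencies, zero negatives.
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.abs(np.fft.ifft(spectrum * weights))


def peak_modulation_hz(signal: np.ndarray, fs: float,
                       band: tuple[float, float]) -> float:
    """Frequency (Hz) of the strongest envelope modulation inside `band`."""
    env = amplitude_envelope(signal)
    env = env - env.mean()  # remove DC so it does not dominate the spectrum
    power = np.abs(np.fft.rfft(env)) ** 2
    freqs = np.fft.rfftfreq(env.size, d=1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return float(freqs[in_band][np.argmax(power[in_band])])


if __name__ == "__main__":
    # Synthetic stand-in for speech (hypothetical): a 200 Hz carrier modulated
    # at 2 Hz (a 'stress-like' rate) and, more weakly, at 5 Hz ('syllable-like').
    fs = 1000.0
    t = np.arange(10_000) / fs
    envelope = 1.0 + 0.5 * np.sin(2 * np.pi * 2 * t) + 0.3 * np.sin(2 * np.pi * 5 * t)
    stimulus = envelope * np.sin(2 * np.pi * 200 * t)
    print(peak_modulation_hz(stimulus, fs, (1.0, 10.0)))
    print(peak_modulation_hz(stimulus, fs, (3.0, 10.0)))
```

For this synthetic input, the strongest modulation between 1 and 10 Hz lies at 2 Hz, and restricting the band to 3–10 Hz recovers the weaker 5 Hz rate. Reporting such stimulus-derived rates makes explicit which rhythms a tracking analysis actually targets.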

37.6.1 Implications for Atypical Language Acquisition: Autism

Besides maturational differences, individual differences in neural make-up (‘neurodiversity’) are also expected to be important for neural tracking and its relation to language acquisition. Neurodevelopmental conditions often give rise to variation in attentional selection capabilities, which can enable language growth or result in language delay (D’souza et al., 2017; Grice et al., 2023). We argue that differences in neural constraints will result in different possibilities for neural tracking, which might reflect how attentional processes influence language acquisition, possibly through differences in the underlying mechanistic constraints. Here, we outline how neural constraints and their maturation might relate to variability in language development in autism (see Chapter 47 for an overview of behavioural entrainment in autism).

One current hypothesis about biological mechanisms in autism states that the balance of neural excitation and inhibition (E/I balance) is altered in autistic individuals (Bruining et al., 2020; Dickinson et al., 2016; Rubenstein and Merzenich, 2003; Snijders et al., 2013) and that this E/I imbalance may lead to differences in neural oscillations. Indeed, autistic children show differential development of EEG oscillations and non-oscillatory electrophysiological activity (Tierney et al., 2012), and these electrophysiological differences relate to language development (Romeo et al., 2021; Shuffrey et al., 2022; Wilkinson et al., 2020). A recent study showed that the development of E/I imbalances across childhood and adolescence is associated with individual differences in listening comprehension in both autistic and non-autistic children (Plueckebaum et al., 2023). Additionally, there are some indications that the maturation of iAPF is atypical in autistic children (Edgar et al., 2019; Green et al., 2022; but see Carter Leno et al., 2021; Lefebvre et al., 2018). Individual differences in iAPF development in autistic individuals have been related to atypicalities in temporal audiovisual integration.
In particular, there are indications that autistic individuals may show a widened temporal binding window for audiovisual integration and, consequently, decreased sensitivity to asynchrony in audiovisual speech (Zhou et al., 2022). This suggests that autistic individuals may use visual information to a much lesser degree when generating auditory predictions. Indeed, Ronconi et al. (2023) showed that audiovisual integration in autistic children is primarily driven by auditory processing, which phase-resets visual activity. Whether reduced reliance on visual information in autism affects neural tracking of speech is an open question.

In a recent study of infants with a family history of autism, we did not identify differences in neural tracking of sung audiovisual nursery rhymes compared with infants with no family history of autism (Menn et al., 2022b), although the identified relation between an increase in stressed-syllable tracking at 10 months and a larger later vocabulary was stronger for infants with a family history of autism. In contrast, reduced neural tracking of auditory-only speech has been identified in a small sample of autistic versus non-autistic adults (Jochaut et al., 2015). The differences between these studies might reflect developmental differences, or might be due to the different stimuli (song versus speech, audio versus audiovisual). In future work, it will be important to establish how neural tracking of speech is related to neural development, and how this might result in variability in language acquisition in other populations with atypical E/I balance as well. Research investigating the development of E/I balance in infancy is only just emerging, but recent studies have also reported early imbalances in infants with genetic risks for ADHD (Begum-Ali et al., 2022; Carter Leno et al., 2021), and their relationship to language development is an exciting avenue for future research.

37.7 Conclusion

In this chapter, we reviewed current evidence on infants’ neural tracking of speech. Neural oscillations in the infant brain synchronise with the rhythm of speech, tracking it at different frequencies, and this tracking predicts word segmentation and later language abilities. We presented the hypothesis that rhythmic neural speech tracking reflects infants’ attention to specific parts of the speech signal (e.g., stressed syllables) and simultaneously acts as a core mechanism for maximising temporal attention to those parts. Neural constraints on speech tracking might be influenced by neural maturation, and we set out how this might be reflected in both typical and atypical language development.

Summary

This chapter reviews research on infants’ neural tracking of speech, and how this process predicts later language abilities. We hypothesise that neural speech tracking reflects infants’ temporal attention to specific parts of the speech signal. Neural maturation in typical and atypical development might influence constraints on neural tracking.

Implications

Future research on neural tracking of speech should take maturational constraints into account, as well as individual differences therein. Temporal stimulus characteristics should always be described specifically, so that the interaction between environmental input, brain and language development, and infant state can be understood.

Gains

Understanding underlying neural mechanisms in the developing brain and their interaction with the environment is crucial for understanding individual differences in speech perception and language development.