Introduction

Measuring students’ knowledge of a particular subject is not easy. Often, months or even years of study must be summarized in a single assessment. For this reason, it is important to write sound exams in academia, which demands time and effort from lecturers. In this regard, multiple-choice (MC) items are popular for measuring knowledge. They allow a considerable number of students to be evaluated accurately, objectively, and quickly, and they let lecturers sample a large syllabus efficiently (Haladyna et al., 2019).

For an exam to be effective, item difficulties should vary, with items of intermediate difficulty predominating. More importantly, items must possess strong psychometric properties to differentiate effectively between examinees’ knowledge levels. That is why the stems and response options must be clear and free of errors induced by poor writing, so that only the examinees’ knowledge is assessed. Additionally, examinees with diverse personality traits show different academic performance (Beaujean et al., 2011; Chamorro-Premuzic & Furnham, 2003, 2008; Coenen et al., 2021; Conard, 2006; De Feyter et al., 2012; Furnham et al., 2003; Furnham & Chamorro-Premuzic, 2004; Nye et al., 2015). Sometimes, these personality traits interact with item-writing flaws and make the assessment unfair and dependent on the examinees’ personality (Frary, 1991).

Standardized recommendations for writing items were first proposed by Haladyna and Downing (1989a, 1989b). They reviewed numerous manuals written by lecturers about their own experiences creating items. By compiling the information, they identified common practices and eventually collected 31 recommendations (Haladyna et al., 2002). Fourteen of these 31 recommendations focused specifically on writing the alternatives to the correct answer, which are called distractors (Haladyna & Rodriguez, 2013). Writing distractors matters because making them plausible is difficult, and this task often consumes the most time when designing an exam. If examinees easily discard the alternatives to the correct answer, the items will lack discrimination, making it impossible to distinguish between individuals with high and low content knowledge. This meticulous writing demands considerable effort, which sometimes leads teachers to prefer alternatives that are easier to generate, such as “None-of-the-above” (NOTA; Frey et al., 2005). However, the use of NOTA is often discouraged, despite limited research on the relevance of writing recommendations and inconclusive empirical evidence (Tarrant et al., 2009). Therefore, finding a strategy that reduces the cost of writing items without compromising their quality is highly relevant.

Use of NOTA

In their initial review, Haladyna and Downing (1989b) noted that items with NOTA are more difficult and less discriminating. Test scores are also less reliable, and criterion validity is negatively affected. They concluded that this alternative offered no advantage that would justify recommending it, and they reinforced this position years later in their manual (Haladyna & Rodriguez, 2013). Even so, they commented that the ideal approach is to write distractors that provide information to the item and focus on the question.

Nevertheless, contradictory results are still found, though three general issues can be highlighted. First, some authors point out that item difficulty increases when NOTA is included. Some of them consider this increase positive when the test would otherwise be overly easy (Frary, 1991; Rich & Johanson, 1990), whereas others argue that it is artificial and unrelated to the examinees’ skills (DiBattista et al., 2014; Downing, 2005). Mathematically, including NOTA increases difficulty and reduces discrimination, unless very few items with NOTA are included (Persson, 2023). However, there is general agreement that the increase in difficulty is more pronounced when NOTA is the correct answer than when it is a distractor (DiBattista et al., 2014; Martínez et al., 2008; Pachai et al., 2015). Some authors also point to the uncertainty NOTA creates during the assessment as one of the biggest problems for examinees (Boland et al., 2010; Dochy et al., 2001; Frary, 1991). Those who defend its use recommend including NOTA only when better distractors cannot be created, considering it superior to a non-functional distractor because it enhances discrimination (Pachai et al., 2015; Rich & Johanson, 1990).
Yet, Little (2023) reports that, when NOTA is included as one of the distractors, neither performance nor confidence is harmed when students evaluate item by item, whereas students later indicate that they were less confident when they encountered NOTA items, which reveals a contradiction between the two moments. She suggests that students hold beliefs about NOTA that could lead them to think that their performance would worsen, even when they have no real evidence of that. However, she does not have information about the perceived harm when NOTA is the correct answer, so students could have inferred that NOTA was always an incorrect answer, leading to better performance and more confidence in their answers.

Lastly, some authors discuss the use of NOTA in relation to testing effects, that is, the formative power of assessment. When NOTA is the correct answer, the exam does not aid examinees in knowledge acquisition, as they lack access to the correct answer during the test (Blendermann et al., 2020; Jang et al., 2014; Odegard & Koen, 2007). In this regard, NOTA can devalue the assessment’s purpose as an educational tool, as items can be answered correctly without understanding the syllabus (Boland et al., 2010). Additionally, its inclusion may increase guessing, which reduces the reliability of the obtained scores (Persson, 2023).

Personality Traits

Similar to NOTA, research on personality shows contradictory results. Chamorro-Premuzic and Furnham (2003) found a positive relationship between Conscientiousness and examination grades and a negative relationship between these grades and both Extraversion and Neuroticism. These authors also reported the same positive relationship between academic performance and Conscientiousness and a negative one with Extraversion (Furnham et al., 2003). Both studies considered the overall marks in the first two academic years. They also found a positive correlation between statistics examination grades and Conscientiousness and a negative correlation with Extraversion (Furnham & Chamorro-Premuzic, 2004). Conard (2006) identified a positive relationship between Conscientiousness and three different measures of academic performance (college GPA, course performance, and class attendance), but found no relationship with other traits. Again, Chamorro-Premuzic and Furnham (2008) found that academic performance, based on overall marks in the first university year, correlated positively with Openness to Experience and Conscientiousness. Beaujean et al. (2011) examined the relationship between personality and reading and math achievement. They found that Conscientiousness and Openness to Experience influenced both reading and math performance, Agreeableness predicted reading achievement, and Extraversion affected math performance. Moreover, De Feyter et al. (2012) found an effect of four of the Big Five traits.
Agreeableness, Conscientiousness, and Neuroticism positively predicted academic success (measured as the proportion of obtained to attempted credits), while Extraversion showed a negative relationship. Nye et al. (2015) found an effect on academic success (measured as GPA and a Russian unified exam comprising Math, Language, and Social Sciences) for all the traits except Conscientiousness. In their study, they reported a negative relationship with Extraversion and a positive effect of Agreeableness, Neuroticism, and Openness to Experience. Finally, Coenen et al. (2021) found that Conscientiousness had a positive relationship with academic performance and Risk Preference had a negative one, while they found no significant results for Emotional Stability. Academic performance was measured by grades in a quantitative methods course.

Aim and Objective

Considering this information, the main interest of this article is to explain the use of NOTA and analyze whether there are differences when it is used as the correct answer versus a distractor, to determine if it negatively affects the quality of multiple-choice items. To accomplish this, three different forms of the same statistics items were designed based on the presence of NOTA, and its function within the item, resulting in three different exams. Thus, we could systematize the use of NOTA in the same items but with different roles and compare it with its absence.

Further, while personality traits and academic performance have been linked in numerous studies, most analyses include only correlational data. More complex models could more accurately capture the effect of personality traits on the probability of answering exam questions correctly, which in turn influences academic performance. Although the evidence from the cited studies shows that the personality traits most closely linked to academic performance are Conscientiousness, Extraversion, and Neuroticism, including all Big-Five personality traits and Impulsivity in more refined analyses could reveal the effects of other traits.

Additionally, the relationship between personality and how examinees handle NOTA options could be relevant. As Frary (1991) pointed out, personality might be related to differences in reactions to this type of item, as only some examinees reported increased uncertainty, whereas Little (2023) argued that this is a belief held by students that has no real implication for their performance.

Unraveling the true functioning of NOTA and determining whether its use could be acceptable under certain circumstances will help lecturers save time and effort in creating items, which they can then invest elsewhere. This will lead to exams that better reflect examinee performance, ultimately enhancing validity and fairness in assessment. To this end, an experimental study is proposed, as the different conditions can be manipulated and controlled to draw stronger conclusions. Moreover, using more complex models could help capture variance related to items and examinees combined, as well as their potential interaction. Crossed random-effects models can represent these complex relationships, allowing for the inclusion of covariates related to examinees (such as personality traits) or items (psychometric properties or writing flaws, like NOTA in this case). Further, we also fit a Linear Logistic Test Model (LLTM; Fisher, 1973) to estimate the general effect of including NOTA as the correct answer or as a distractor.

The specific hypotheses evaluated are as follows:

1. The presence of NOTA may decrease the probability of answering correctly. This effect will be more noticeable when NOTA is the correct answer than when it is a distractor.

2. Personality traits will affect the probability of answering correctly (Conscientiousness, Emotional Stability, and Openness to Experience in a positive direction; Extraversion and Impulsivity in a negative direction).

3. Examinees will exhibit different behavior when answering NOTA items, and this behavior could be influenced by their personalities.

Method

Participants

The examinees were 449 volunteer psychology students at Universidad Autónoma de Madrid (UAM) who signed a consent form describing the tasks of the study and could leave the experiment at any time. They were rewarded with extra credits that they could redeem in any course associated with the rewards program. However, to take part in the experiment, they were required to have passed the Statistics I course, which is a mandatory course for first-year undergraduates. This project was approved by the research ethics committee of the university.

The main task had three forms, randomly distributed across participants to guarantee the experimental requirements: Form A was answered by 146 examinees, Form B by 156 examinees, and Form C by 147 examinees. A control task was conducted, and some of the data were eliminated due to the detection of disengagement. The control task was a series of questions where participants had to choose a specific answer; otherwise, they were excluded. After this check, 435 examinees (140 for Form A, 152 for Form B, and 143 for Form C) were retained. For Form A, the group included 22 males and 103 females, with a mean age of 20.66 years (SD = 3.79). For Form B, the group included 26 males and 119 females, with a mean age of 20.49 years (SD = 2.17). For Form C, the group included 14 males and 125 females, with a mean age of 19.95 years (SD = 1.76).

The data were collected in two different proctored sessions with limited time, in order to unify the administration conditions as much as possible. The answers from both sessions were linked through an anonymous ID, and the examinees who did not provide data for one of the sessions were removed from the final database. Finally, 409 examinees (125 for Form A, 145 for Form B, and 139 for Form C) were retained. The mean age of the examinees was 20.36 years (SD = 2.68, min = 17, max = 59). Sixty-two examinees were male, and 347 were female.

Design of the Study

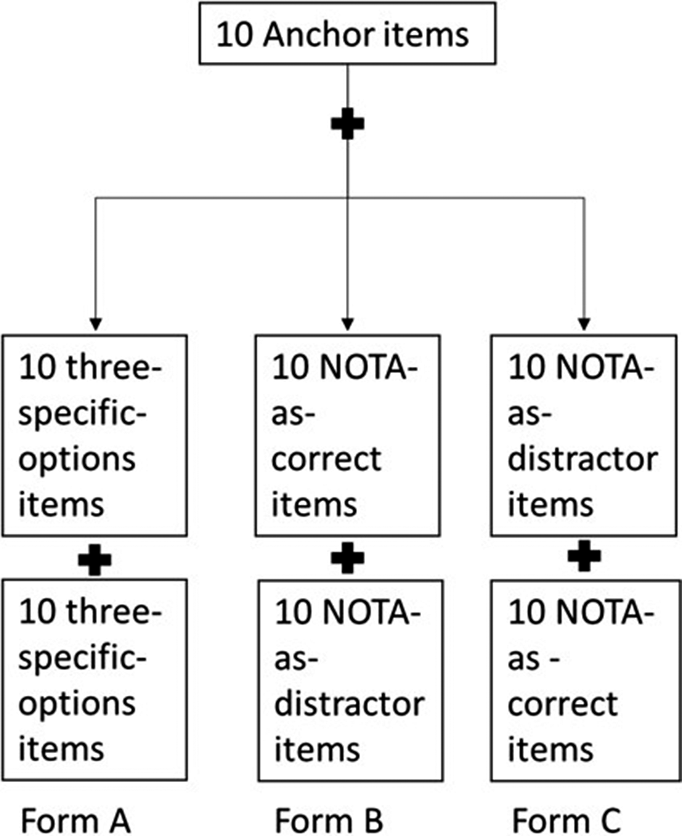

A concept inventory was developed to capture the differences associated with the use of NOTA. These instruments have traditionally been built to address misconceptions in some fields, especially physics and chemistry. Halloun and Hestenes (1985) developed the first concept inventory to help examinees understand Newtonian physics concepts. They demonstrated that it was especially useful for changing examinees’ previous beliefs about difficult concepts. For this reason, and knowing that statistical misconceptions are common and hard to change, these instruments are suitable for the present study. The starting point for creating the task was the Statistics Concept Inventory (SCI), which was developed to assess engineering examinees’ understanding of statistical concepts (Stone et al., 2003). The inventory includes items on probability, descriptive statistics, inferential statistics, and graphical displays. This inventory was adapted for psychology students, resulting in 30 three-alternative items measuring descriptive and inferential statistics. Ten items had three specific options, which were the same for all examinees, and served as an anchor test. The other 20 items were balanced to obtain three versions of each one: one with three specific options, one with NOTA as the correct answer and two distractors, and one with NOTA as one of the distractors, alongside the correct option and another distractor. With these items, three forms of the test were created. One had three specific options for each of the 30 items, and the other two included the 10 anchor items and 20 items with the NOTA option, balancing its use as the correct option or as one of the distractors between these two forms. The three versions of the test were randomly distributed to the participants. Figure 1 shows a diagram of the designed task.

Figure 1. Structure of the three forms of SCI tasks.

An example item in its three versions is shown in Table 1, and the complete SCI (in Spanish) can be consulted in the Supplemental Appendix.

Table 1. Example of an item in its three versions

Note: The correct answer is in bold. Forms B and C are counterbalanced: in Form B, NOTA is the correct answer in 10 items and a distractor in another 10, and the same happens in Form C.
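The counterbalancing described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the item numbers, the choice of items 1 to 10 as anchors, and the split of the remaining items into two halves are hypothetical, since the text does not say which items occupy which role in each form.

```python
# Hypothetical numbering: the article does not state which items are anchors
# or which items carry NOTA as the correct answer in each form.
N_ITEMS = 30
anchor = set(range(1, 11))  # assumed: items 1-10 form the anchor test
variable = [i for i in range(1, N_ITEMS + 1) if i not in anchor]
first_half, second_half = variable[:10], variable[10:]


def build_form(nota_correct, nota_distractor):
    """Map each item number to the version of the item used in one form."""
    form = {}
    for item in range(1, N_ITEMS + 1):
        if item in nota_correct:
            form[item] = "NOTA_correct"
        elif item in nota_distractor:
            form[item] = "NOTA_distractor"
        else:
            form[item] = "specific"  # three specific options, no NOTA
    return form


# Form A: three specific options for all 30 items.
form_a = build_form(set(), set())
# Forms B and C: same 10 anchors, with the NOTA roles swapped between forms.
form_b = build_form(set(first_half), set(second_half))
form_c = build_form(set(second_half), set(first_half))
```

With this layout every non-anchor item appears once in each of its three versions across the forms, which is what allows the role of NOTA to be compared on the same item content.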

In terms of personality instruments, two different tests were used: a Spanish version of the Big Five personality test, OPERAS (Costa & McCrae, 1992; Vigil-Colet et al., 2013), and a Spanish version of the Barratt Impulsiveness Scale (BIS-11; Oquendo et al., 2001; Patton et al., 1995). OPERAS contains 40 items, 7 for each Big Five personality scale and the rest measuring Social Desirability, and respondents answer on a Likert scale from 1 to 5. The BIS-11 contains 30 items, which respondents answer on a 4-point frequency scale. Some personal and academic information was also collected. In particular, the participants were asked about their age, gender, and their marks in the Spanish university-access exam and in each statistics subject in the degree (Research Methods, Statistics I, and Statistics II).

Procedure and Data Analyses

The data were collected in two proctored sessions: one for the SCI and, a week later, one for the personality and personal data.

To start the analyses, only the 10 anchor items were considered, to determine whether the three groups that took the different test versions were comparable in terms of test reliability and item difficulty. To assess measurement invariance across the three groups, a Multiple Indicators, Multiple Causes (MIMIC) model was employed (Jöreskog & Goldberger, 1975). Although a multi-group confirmatory factor analysis could provide a more complete invariance study, it can be more sensitive to small group sizes, potentially leading to estimation difficulties or reduced statistical power to detect non-invariance. MIMIC models instead incorporate group variables directly as predictors of the latent factor and/or the observed item indicators, which makes them more appropriate given the sample size. Then, the reliability of the complete test in every form and of the personality scales was analyzed.

A long-format database was used for the rest of the analyses, and all the variables were standardized to make the coefficients easier to compare. To model the probability of answering each item correctly, a crossed random-effects analysis was performed. We took a Bayesian approach because it can be more suitable for complex structures, such as those of crossed random-effects models, and can make the estimates more stable. Moreover, we set weak priors, specifically normal distributions with a mean of zero and a standard deviation of three for the mean and standard deviation on items and examinees. Setting weak prior distributions allows us to regularize the estimates without supplying information that could bias them, and normal distributions are common when dealing with personality traits and item properties.

In these models, items and examinees are the random effects and the different covariates are fixed effects (Janssen et al., 2004). These models offer significant advantages over simpler models for analyzing complex data structures. For instance, ANOVAs average over one of the factors, and simple regressions consider only one of them, leading to a partial understanding of the explained variability and sometimes failing to capture all of it (Baayen et al., 2008; Hoffman, 2015; Judd et al., 2012). Crossed random-effects models, in contrast, incorporate different factors as sources of variability (in this case, items and examinees), overcoming these problems. This makes it possible to handle complex interactions between variables from distinct levels and to estimate variances across these levels. They also handle incomplete data efficiently, allowing the use of partial information without discarding whole cases. Additionally, they provide flexibility in including predictors at various levels, enhancing the ability to capture the intricate relationships between variables, which leads to a more detailed understanding of the sources of variability and the dynamics within the data (Judd et al., 2017).

The different items were treated as repeated measures of the examinees’ knowledge level, and personality traits as variables that do not change over time. The dependent variable is whether the answer is correct (a dichotomous variable, where 0 is a failure and 1 is a success). The differences in difficulty between items and the possible differences between examinees when they deal with NOTA as an option were also considered in the crossed random-effects model.

In particular, the examinee-level effects considered were impulsivity (IM), conscientiousness (CO), agreeableness (AG), emotional stability (ES), openness to experience (OE), and extraversion (EX). As the grades of the three courses (“Research Methods,” “Statistics I,” and “Statistics II”) were related to one another, we decided to include only the grade of the course passed by the largest percentage of students, which was “Research Methods.”

Further, the item-level effects were the discrimination of the different options (correct answer, most chosen distractor, and least chosen distractor), NOTA as the correct answer, and NOTA as a distractor.
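As a rough sketch of the structure just described, and not necessarily the exact specification that was fitted, the crossed random-effects logistic model could be written as follows. All symbols are introduced here for illustration: $Y_{pi}$ is the response of examinee $p$ to item $i$, $\mathbf{x}_p$ collects the examinee-level covariates, $\mathbf{z}_{pi}$ collects the option-discrimination covariates of the item version seen by examinee $p$, and $C_{pi}$ and $D_{pi}$ indicate whether that version has NOTA as the correct answer or as a distractor. The examinee-specific slopes $u_{Cp}$ and $u_{Dp}$ are an assumption meant to represent the differences between examinees in how they deal with NOTA.

```latex
\[
\begin{aligned}
\operatorname{logit}\Pr(Y_{pi}=1) ={}& \beta_0
  + \mathbf{x}_p^{\top}\boldsymbol{\beta}
  + \mathbf{z}_{pi}^{\top}\boldsymbol{\gamma}
  + \delta_C\,C_{pi} + \delta_D\,D_{pi} \\
 & + \theta_p + b_i + u_{Cp}\,C_{pi} + u_{Dp}\,D_{pi}, \\
\theta_p \sim \mathcal{N}(0,\sigma_\theta^2), \quad
b_i \sim{}& \mathcal{N}(0,\sigma_b^2), \quad
u_{Cp} \sim \mathcal{N}(0,\sigma_C^2), \quad
u_{Dp} \sim \mathcal{N}(0,\sigma_D^2).
\end{aligned}
\]
```

Here $\theta_p$ and $b_i$ are the crossed random intercepts for examinees and items, so variability from both sources is modeled at once rather than averaged away.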

To further test the effect of NOTA and complement the crossed random-effects model, a Bayesian LLTM analysis was also performed; this model has been used in some studies evaluating the effect of the response options (Hagenmüller, 2020; Hohensinn & Baghaei, 2017). The LLTM is an extension of the Rasch model (Rasch, 1980) that decomposes the item parameter (in this case, difficulty) into different components that can increase or decrease its value. Applying this model could help to determine the impact of NOTA as the correct answer and as a distractor in general terms, over and above the specific question.
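The decomposition can be illustrated with a minimal formulation, written under assumptions about notation that the text does not fix. Here $\theta_p$ is the ability of examinee $p$, $\beta_{pi}$ is the difficulty of the version of item $i$ seen by that examinee, $\eta_{q(i)}$ is the contribution of the question itself, and $C_{pi}$ and $D_{pi}$ are indicators for NOTA as the correct answer and NOTA as a distractor.

```latex
\[
\Pr(Y_{pi}=1) = \frac{\exp(\theta_p - \beta_{pi})}{1 + \exp(\theta_p - \beta_{pi})},
\qquad
\beta_{pi} = \eta_{q(i)} + \eta_C\,C_{pi} + \eta_D\,D_{pi}.
\]
```

Under this difficulty parameterization, positive values of $\eta_C$ or $\eta_D$ would mean that adding NOTA in that role makes an item harder regardless of the question it is attached to.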

The analyses were conducted with Mplus (version 8; Muthén & Muthén, 2017) and R (version 4.1.2; R Development Core Team, 2021), in particular with the “brms” (Bürkner, 2021) and “rstan” (Stan Development Team, 2021) packages.

Results

Groups Equivalence

Classical Test Theory

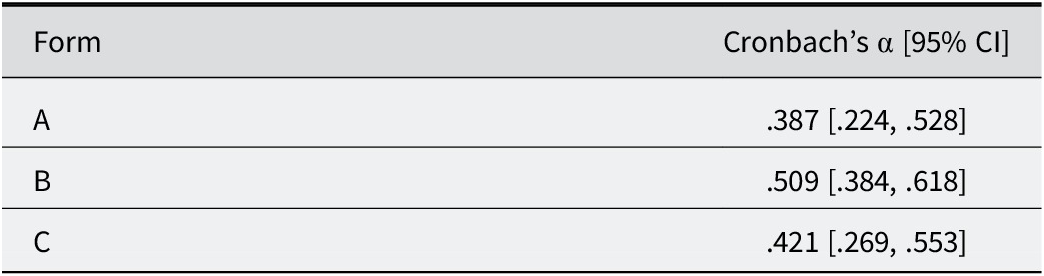

The first step was to check that the three groups were equivalent, so only the 10 anchor items were considered, using the data from the 435 examinees who completed the SCI. The score reliability of the three anchor tests was compared (Feldt et al., 1987), and Table 2 shows Cronbach’s α for the three forms with their confidence intervals.

Table 2. Cronbach’s α for the 10-item forms and confidence intervals (95%)

The alpha comparison did not show significant differences between the three groups’ reliability (χ²(2) = 1.602, p = .449; α = .05).
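The reported p value can be checked by hand, because a chi-square variable with 2 degrees of freedom has the closed-form upper-tail probability exp(−x/2). The short Python check below assumes that the garbled subscript in the reported statistic denotes 2 degrees of freedom; the function name is introduced here for illustration.

```python
import math


def chi2_upper_tail_df2(x):
    """Upper-tail probability of a chi-square variable with 2 degrees of freedom."""
    return math.exp(-x / 2.0)


# Statistic reported for the comparison of the three alpha coefficients.
p_value = chi2_upper_tail_df2(1.602)
print(round(p_value, 3))  # 0.449
```

The result agrees with the reported p = .449, which supports reading the statistic as a chi-square with 2 degrees of freedom (three groups minus one).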

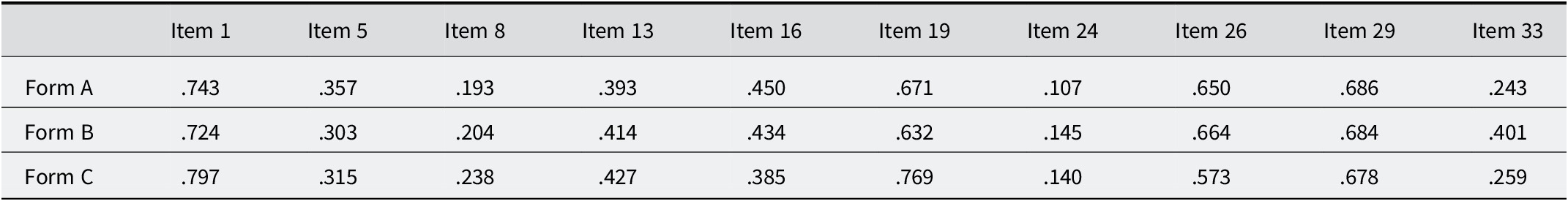

Then, the differences in difficulty in the anchor test were checked. Table 3 shows the difficulty values for the three forms.

Table 3. Difficulty in anchor items in the three forms

A between-subjects ANOVA with Bonferroni multiple-comparison correction was performed for every item, and no significant differences were found, as the corresponding α for a 99% confidence level is 0.003 (Item 1: F(2, 432) = 1.141, p = .32; Item 5: F(2, 432) = 0.535, p = .586; Item 8: F(2, 432) = 0.465, p = .628; Item 13: F(2, 432) = 0.169, p = .844; Item 16: F(2, 432) = 0.677, p = .509; Item 19: F(2, 432) = 3.452, p = .033; Item 24: F(2, 432) = 0.523, p = .593; Item 26: F(2, 432) = 1.489, p = .227; Item 29: F(2, 432) = 0.01, p = .99; Item 33: F(2, 432) = 5.432, p = .005).
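The decision rule applied above can be reproduced with a few lines of Python. The sketch takes the p values and the corrected threshold exactly as reported in the text; how the threshold of 0.003 was derived is not stated, so it is used here as given rather than recomputed.

```python
# p values reported for the ten anchor items, keyed by item number.
p_values = {
    1: 0.32, 5: 0.586, 8: 0.628, 13: 0.844, 16: 0.509,
    19: 0.033, 24: 0.593, 26: 0.227, 29: 0.99, 33: 0.005,
}

# Bonferroni-corrected significance threshold as reported in the text.
threshold = 0.003

# Items whose between-form difference would be declared significant.
significant = [item for item, p in p_values.items() if p < threshold]
print(significant)  # []
```

The smallest p value (.005, for item 33) is still above the corrected threshold, so no anchor item is flagged as differing in difficulty across forms.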

MIMIC Model

Finally, a MIMIC model was fitted to confirm the groups’ equivalence (Jöreskog & Goldberger, 1975; Muthén, 1989). Because the sample size could compromise the stability of the analysis, a multiple-groups confirmatory analysis was not recommended, whereas MIMIC models entail the analysis of a single covariance matrix. This implies a more parsimonious model, favoring the estimation of an invariance model that can otherwise be difficult to estimate (i.e., multiple-groups CFA entails three variance–covariance matrices estimated with categorical data; Brown, 2015). In MIMIC models, the latent factor and the items were regressed onto the form (i.e., the group was taken as a covariate). To ensure that fitting a MIMIC model was appropriate, we first fit a one-factor CFA model (χ²(35) = 35.769, p = .432; α = .05; RMSEA = .007; CFI = .995; TLI = .993). Given these results, which indicate a good fit to the data, we proceeded with the MIMIC model, which also fit the data well (χ²(44) = 45.279, p = .418; α = .05; RMSEA = .008; CFI = .991; TLI = .989). The form covariate did not change the factor structure or produce areas of strain in the solution (all modification indices were < 10.0). This result is consistent with the previous ones and shows that the indicators are invariant across the three forms.

Complete Forms Analyses

Classical Test Theory

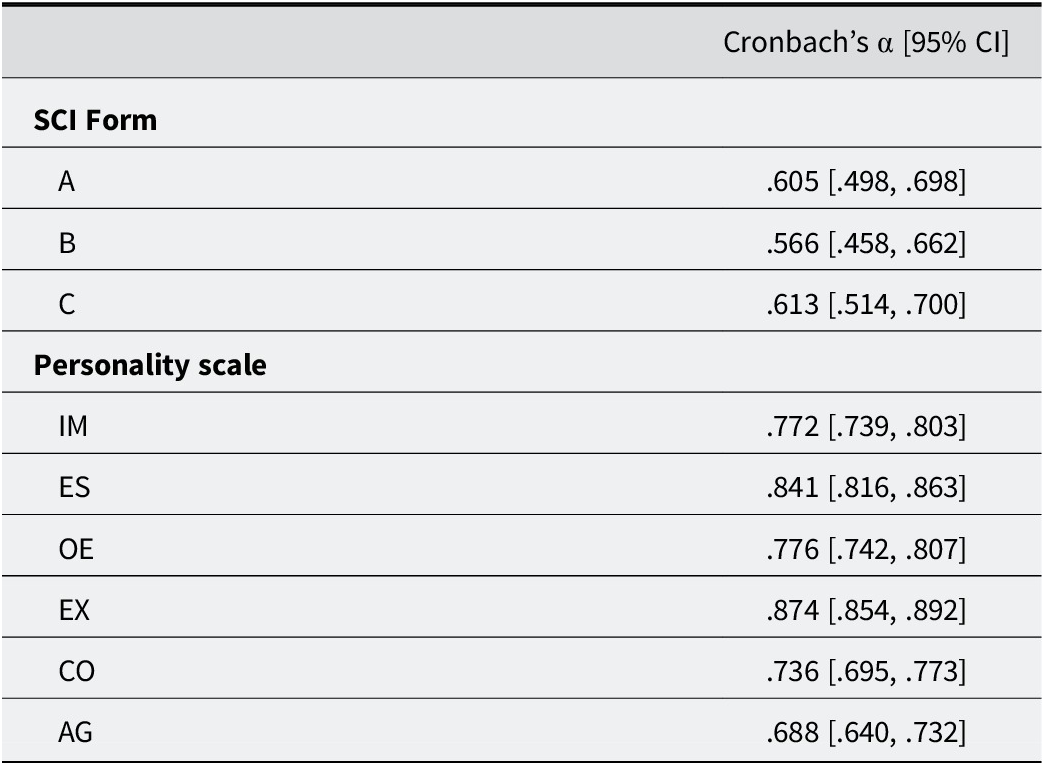

As with the anchor test, the reliability of the complete SCI forms and of the personality scales was checked. Table 4 shows Cronbach’s α and the confidence interval for each measure.

Table 4. Cronbach’s α for the completed forms and personality scales (with confidence intervals)

As can be seen, Cronbach’s α values did not show significant differences across the three complete forms but were lower than expected. In terms of personality, all the scales have acceptable reliability, although it is not equal across scales.
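For readers who want to reproduce this kind of reliability estimate, Cronbach’s α can be computed directly from a matrix of item scores. The following is a minimal sketch using only the Python standard library; the function name and the toy data are illustrative and are not taken from the study.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a list of rows (one per examinee) of item scores."""
    k = len(scores[0])  # number of items
    # Sample variance of each item across examinees.
    item_vars = [variance([row[j] for row in scores]) for j in range(k)]
    # Sample variance of the total (sum) scores.
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Toy data: four examinees answering three dichotomous items (1 = correct).
toy_scores = [
    [1, 1, 1],
    [0, 0, 0],
    [1, 1, 0],
    [0, 1, 0],
]
alpha = cronbach_alpha(toy_scores)
```

The coefficient grows as the items covary more strongly relative to their individual variances, so heavy guessing, which adds noise to each item, tends to pull it down.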

The difficulty index was also calculated for all the items. Table 5 shows the results for the three forms.

Table 5. Difficulty for all the items in the three forms

Note: White background: 3-specific-options items; light gray background: NOTA-as-distractor items; dark gray background: NOTA-as-correct items.

Crossed Random-Effects Model

A first Bayesian crossed random-effects model with all the variables mentioned in the Data Analyses section was fitted. Then, the non-significant variables were removed to optimize the model and explain as much variance as possible with the significant variables.

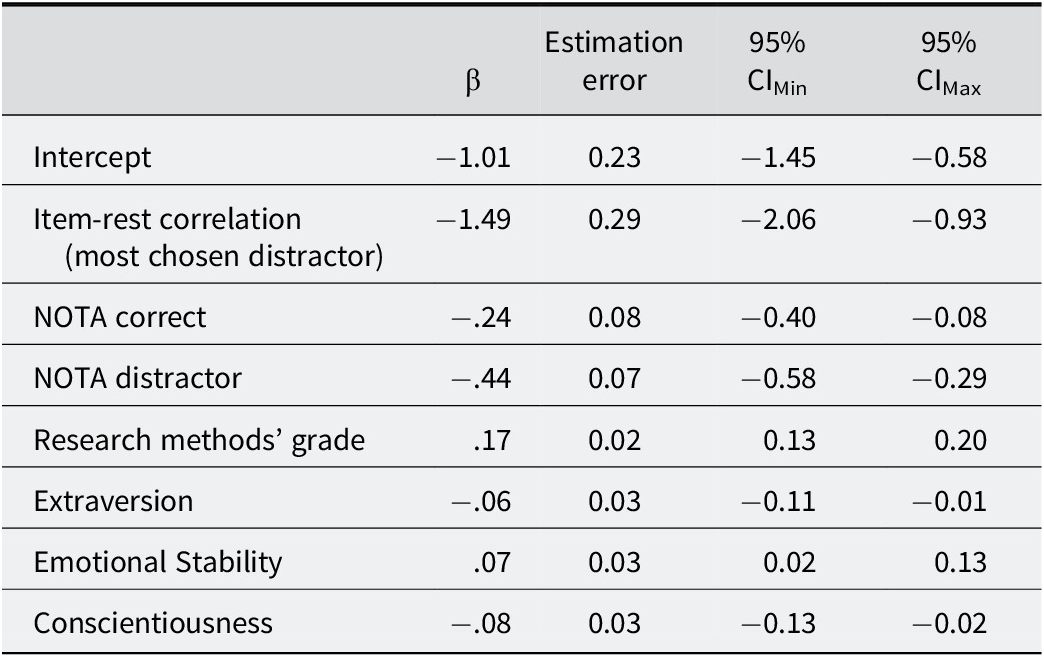

Table 6 shows the results of the optimized model, including all the statistically significant variables.

Table 6. Fixed effects model estimates for correct answer probability (final model, standardized)

Note: CI = confidence interval.

Regarding the items, the significant variables were the item-rest correlation for the most chosen distractor (β = −1.49; 95% CI [−2.06, −0.93]) and the inclusion of NOTA as a correct answer (β = −.24; 95% CI [−0.40, −0.08]) and as a distractor (β = −.44; 95% CI [−0.58, −0.29]). All three variables are associated with a higher probability of failing the item. Statistically significant effects were found for three of the personality traits: extraversion, emotional stability, and conscientiousness. According to the results, extraverted (β = −.06; 95% CI [−0.11, −0.01]) and conscientious (β = −.08; 95% CI [−0.13, −0.02]) people tend to fail more, whereas emotionally stable (β = .07; 95% CI [0.02, 0.13]) people tend to answer correctly more often. However, these effects are small. Lastly, the Research Methods grades (β = .17; 95% CI [0.13, 0.20]) are useful for predicting the probability of answering correctly, even though the syllabus of this subject is not directly related to the content of the SCI.
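To make the size of these coefficients easier to read, they can be converted to odds ratios by exponentiation, assuming they are on the logit scale as is usual for a logistic crossed random-effects model. Because all variables were standardized, each ratio refers to a change of one standard deviation in the predictor, not to the plain presence or absence of NOTA. The dictionary keys below are labels chosen for illustration.

```python
import math

# Standardized coefficients reported for the final model (assumed logit scale).
coefficients = {
    "NOTA as correct answer": -0.24,
    "NOTA as distractor": -0.44,
    "Extraversion": -0.06,
    "Conscientiousness": -0.08,
    "Emotional stability": 0.07,
    "Research Methods grade": 0.17,
}

# Multiplicative change in the odds of a correct answer per one-SD increase.
odds_ratios = {name: math.exp(beta) for name, beta in coefficients.items()}

for name, ratio in odds_ratios.items():
    print(f"{name}: {ratio:.2f}")
```

Ratios below 1 indicate lower odds of a correct answer and ratios above 1 indicate higher odds, which shows at a glance that the personality effects sit much closer to 1 than the NOTA effects.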

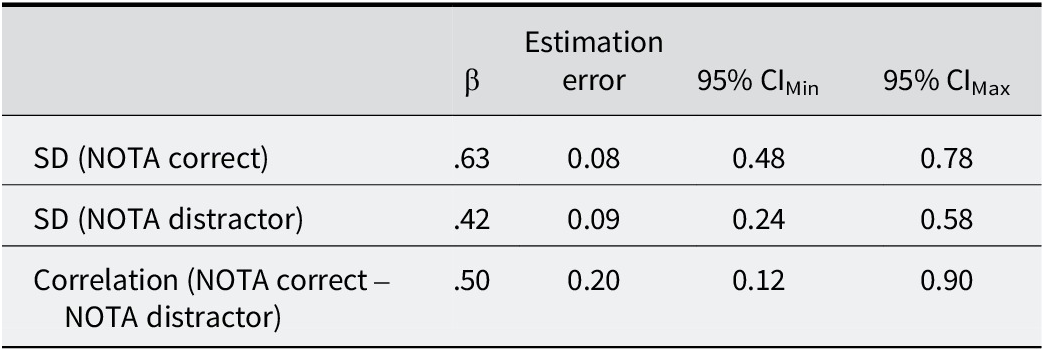

According to the model, examinees were expected to react to NOTA items in different ways depending on personal variables. Table 7 shows the random-effects estimates, which here are the estimated standard deviations associated with NOTA as the correct answer or as a distractor. The significant results support the hypothesis that examinees differ in how they deal with the presence of NOTA as an alternative, as its effect varies among examinees.

Table 7. Random effects estimates for the final model

Note: CI = confidence interval; SD = standard deviation.

Significant results were found for all the values, which means that there are differences between examinees both when NOTA is the correct answer and when it is a distractor.

LLTM

A Bayesian LLTM (Fisher, 1973) was fitted to check whether NOTA as an option had a significant effect, which would reinforce the previous conclusions. Here, the term “question” is used for the item without its alternatives, to distinguish it from the complete item.

First, to check the fit of the data, the Rasch model was estimated, and a bootstrap goodness-of-fit analysis using the Pearson chi-squared statistic was performed, showing a good fit (p = .9, at a 95% confidence level). This result suggests that the data do not deviate significantly from the model’s expectations. Furthermore, the correlation between the difficulty parameters of the Rasch model and the easiness parameters of the LLTM is −0.99. This high correlation indicates that the assumptions of the model have been met.

Table 8 shows the LLTM parameters for every stem, NOTA as the correct answer and NOTA as a distractor. In this case, lower values represent easiness, and higher values represent difficulty.

Table 8. LLTM parameters

Note: CI = confidence interval; SE = standard error.

As can be seen, the results obtained with the LLTM are similar to the crossed random-effects results, showing that NOTA both as the correct answer (η = 0.20; 95% CI [0.04, 0.35]) and as a distractor (η = 0.31; 95% CI [0.16, 0.46]) increases item difficulty.

Discussion

The main goal of this study was to assess whether crossed random-effects models can capture how examinee and item characteristics influence the probability of correct answers, and ultimately examinees’ scores. In particular, we were interested in the effect of flaws in the stems or the alternative answers, such as NOTA. These flaws could lead to the evaluation of other variables, such as examinees’ ability to detect the flaws and guess the correct answer. This would affect the reliability and validity of the results, because the explained variance would be confounded by variables other than the knowledge level in the course.

As the results on score reliability and item difficulty indicate that the three groups were equivalent, and this conclusion is supported by the MIMIC model results, we considered that the results related to NOTA would be reliable as well. However, higher values of Cronbach’s α would be desirable to strengthen confidence in the conclusions. The exam difficulty, in combination with the fact that it was a low-stakes assessment, could have affected the value of this indicator by introducing a greater percentage of guessing (Ulitzsch et al., 2020). A pilot study could have helped to detect this issue, and its absence is one limitation of the study. Moreover, the purpose of a concept inventory may differ from that of a conventional exam, which could lead to differences in the type of knowledge being assessed (more specific in an exam and wider in a concept inventory). The structure of a conventional exam might therefore have been more suitable, and this will be considered in future studies.

Regarding the item-level results, we found that the item-rest correlation of the most chosen distractor was the most impactful variable, which seems logical considering that values of this indicator are expected to be large and negative. The higher (more positive) the value of this indicator, the greater the probability of failing the item. NOTA as an option also seems to harm the examinees’ performance, with a larger effect when NOTA is one of the distractors, and its effect seems to differ between examinees. These results were consistent with those of the Bayesian LLTM, but they contradict the first hypothesis: the probability of getting the correct answer was expected to be lower when NOTA is the correct answer than when it is a distractor, as seen in other studies (DiBattista et al., 2014; Martínez et al., 2008; Pachai et al., 2015). This could be explained by the fact that the overall difficulty in those studies is lower than in this one. More research is needed, but the conservative recommendation would be to avoid including NOTA options, as they reduce the probability of getting the correct answer. Even if the increment in difficulty is considered desirable, it can be regarded as artificial, as we are introducing more uncertainty in the items, and the positive effects could be related to the deletion of a non-plausible distractor rather than to the inclusion of NOTA (Butler, 2018; Pachai et al., 2015).
Further, the inclusion of NOTA does not provide any positive effects for evaluation: when NOTA is the correct answer, the examinees do not have access to the correct answer while taking the exam, which prevents knowledge acquisition (Blendermann et al., 2020; Jang et al., 2014; Odegard & Koen, 2007). Moreover, the difficulty increment is not equal across examinees, so the assessment turns out to be less fair when NOTA is used. We hypothesized that the inclusion of NOTA could increase difficulty for the most competent individuals, as they could detect unintended details in the response alternatives and doubt the correct answer, while less competent individuals could disregard them. However, the examinee variables that explain the different behavior in the presence of NOTA remain to be explored in depth.

Regarding the examinee-level effects, there are small but significant effects of Extraversion, Emotional Stability, and Conscientiousness. A specific effect size cannot be given, as researchers have not yet provided an accurate way to report it in crossed random-effects models (Correll et al., 2022). Greater values in Extraversion and Conscientiousness lead to a greater probability of failing the item, whereas greater Emotional Stability leads to a greater probability of getting the correct answer. The Extraversion and Emotional Stability results coincide with those found in other studies (Chamorro-Premuzic & Furnham, 2003; De Feyter et al., 2012; Furnham et al., 2003; Furnham & Chamorro-Premuzic, 2004; Nye et al., 2015). The Conscientiousness results were surprising, as the opposite effect was expected given the results of other papers (Beaujean et al., 2011; Chamorro-Premuzic & Furnham, 2003, 2008; Coenen et al., 2021; Conard, 2006; De Feyter et al., 2012; Furnham et al., 2003; Furnham & Chamorro-Premuzic, 2004). However, in those studies, academic performance is measured through the marks in many subjects, which could give a more general perspective, as these marks have greater consequences for examinees’ academic trajectory. The different perspectives could have also affected the generalization of the results.
However, our experimental focus and the aim of linking the information about personality traits to the use of NOTA did not allow us to adopt the other authors’ focus. Furthermore, Nye et al. (2015) considered that the education system and the cultural context can explain some differences between studies. Furnham and Monsen (2009) found no relationship between math ability and conscientiousness. The SCI includes statistical-concept items for which the examinees had not specifically prepared, so answering them required math ability, which could explain the differences in the results. Finally, examinees who obtained a high grade in the Research methods subject also seem to have a greater probability of getting the correct answer, even though the SCI items were unrelated to this subject’s syllabus.

This work is not exempt from limitations. As mentioned, Cronbach’s α values are lower than desirable, so better reliability could lead to more stable conclusions. These results are also influenced by the low-stakes assessment conditions: the examinees earned extra credit for participation, but there was no impact on their real evaluations. A high-stakes setting would be more realistic but unethical, as NOTA was expected to harm academic performance. Furthermore, the great difficulty of the exam could also have led to a greater failure percentage than expected in this kind of assessment. Easier items should be incorporated into the exam to reduce the general difficulty and increase the variability, which could help avoid range restriction in the examinees’ scores and, as a result, increase the reliability. On the other hand, the replacement of distractors with NOTA depended on the original SCI data (Stone, 2006), and the logic was to keep the most useful distractors and replace the ones that had worse psychometric properties. We thought that more “natural alternatives” are, indeed, easier to generate and are the first that lecturers think of when writing an exam, so eliminating these alternatives seemed less plausible. However, replacing different distractors could have led to different results in terms of difficulty and discrimination, even though the same items are used in the three versions of the test.

Future work could also explore the Conscientiousness results to find out whether there are cultural differences in higher education trajectories in Spain compared to other countries, or whether the type of content in the SCI could lead to differences in performance (Furnham & Monsen, 2009; Nye et al., 2015). In this sense, exploring the different Conscientiousness facets could provide some answers, as the OPERAS does not include them, and other works have provided evidence that these facets correlate differently with academic performance (Chamorro-Premuzic & Furnham, 2003).

Furthermore, exploring what happens when omissions are considered would be important, as they could also help explain the performance of the examinees and provide more information about their behavior when dealing with items (Ulitzsch et al., 2020). Future studies should work on IRT models that include the effects of omissions, personality traits, and item characteristics on the probability of getting the correct answer. These models would also include differences in discrimination between items and a guessing parameter, which could provide more information about item functioning.
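As an illustration only, one common IRT model with both item discrimination and a guessing parameter is the three-parameter logistic (3PL) model. The text does not name a specific model, so this choice and the notation below are assumptions.

```latex
% 3PL model: probability that examinee v answers item i correctly.
% \theta_v : examinee ability
% \alpha_i : item discrimination
% \beta_i  : item difficulty
% \gamma_i : lower asymptote (pseudo-guessing parameter)
P(X_{vi} = 1 \mid \theta_v)
  = \gamma_i + (1 - \gamma_i)\,
    \frac{\exp\!\big[\alpha_i(\theta_v - \beta_i)\big]}
         {1 + \exp\!\big[\alpha_i(\theta_v - \beta_i)\big]}
```

Setting \alpha_i = 1 and \gamma_i = 0 for every item recovers the Rasch model, so a model of this kind would extend, rather than replace, the analyses reported here.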

Finally, although differences between examinees have been found when NOTA is used as a response option, it is still necessary to determine why these differences are not related to the specific personality traits included in this work. In this sense, as the anchor items did not vary between forms, the statistical power to detect interactions between NOTA and personality traits could have been reduced. Moreover, the small sample size could also have led to a failure to detect the specific personality traits related to the effect of NOTA.

Some other traits, such as intolerance of uncertainty, could be relevant in this context and help explain the differences (Bryan et al., 2021).

In conclusion, the results indicate that including NOTA reduces the fairness of the assessment, introducing variability that does not depend on the examinees’ knowledge. Personality traits are also important, and trying to reduce their impact on the evaluation could reduce the harmful effect on the final assessment, for instance by providing a quiet space and enough time for the evaluation, or by designing more stimulating activities to help extroverted examinees retain the important concepts. Furthermore, crossed random-effects models provide higher-quality results, as they can account for a greater amount of variability related to items and examinees.

Supplementary Material

The supplementary material for this article can be found at http://doi.org/10.1017/SJP.2026.10022.

Data Availability Statement

Data are available upon request due to privacy and ethical considerations.

Author Contribution

Conceptualization: all authors; Data curation: S.S.; Formal analysis: all authors; Investigation: S.S., C.G.; Methodology: all authors; Project administration: C.G.; Resources: C.G., R.O.; Software: S.S., R.O.; Supervision: C.G., R.O.; Validation: C.G.; Writing—original draft: S.S.; Writing—review and editing: all authors.

Competing Interests

None declared.