Recent years have seen an increase in the adoption of artificial intelligence (AI) across industries (Soulami, Benchekroun & Galiulina, 2024). AI is no longer limited to back-end processes or the automation of administrative tasks. It now influences how work is performed, who performs it, and how decisions are made (Kelley, 2022). Most organizations report that they are investing in AI (Mayer et al., 2025). In the United States, estimates of workplace AI adoption vary but consistently show rapid growth. According to Gallup (Pendell, 2025), workplace AI use has nearly doubled over the past 2 years, with 19% of employees now using AI every week and an additional 40% engaging with it a few times per year. The Federal Reserve reports that between 20% and 40% of employees currently use AI at work, with adoption accelerating over time (Crane, Green & Soto, 2025). More conservative estimates from the Pew Research Center suggest that many employees have limited direct experience with AI: only 16% report currently using it for some part of their work, although another 25% believe their tasks could be performed with AI even if they are not yet doing so (Lin & Parker, 2025). Overall, these findings suggest that AI use will become an increasingly necessary part of employees’ work as the technology continues to develop (Singh, 2025).

Advances in AI promise to transform the workplace, as they have been linked to positive outcomes for employees and employers alike, such as innovation, learning, performance, job satisfaction, and well-being (Ayoko, 2021; Malik, Budhwar & Kazmi, 2023; Pereira, Hadjielias, Christofi & Vrontis, 2023). However, organizations may struggle to realize these benefits if employees are reluctant to adopt AI. Mounting evidence indicates that a sizable portion of employees are concerned about, hesitant toward, or opposed to the use of AI at work (Basile, Bradshaw & Charbonnier, 2025; Gillespie, Lockey & Curtis, 2021; Lin & Parker, 2025). Indeed, many organizations acknowledge that they have yet to reach AI maturity (i.e., the extent to which they are equipped to leverage AI in their workplace) and are seeking ways to improve implementation (Basile et al., 2025; Mayer et al., 2025; Weill, Woerner & Sebastian, 2024). This gap between organizational investment and successful implementation raises questions about the factors that contribute to employees’ willingness to integrate AI into their work, as employee perceptions may play a valuable role in whether AI is used, resisted, or abandoned (Kelley, 2022).

Building on an extended version of the Technology Acceptance Model (TAM; Davis, 1989b; Shuhaiber & Mashal, 2019), this study investigates the perceptions that inform employees’ affective attitudes toward using AI in the workplace and how these affective attitudes translate into intentions to use AI on the job. Specifically, we examine how perceived usefulness, perceived ease-of-use, and trust in AI influence employees’ evaluations of using AI, as these perceptions are relevant within organizational contexts that increasingly rely on AI to facilitate work (Daugherty & Wilson, 2024). In addition, this study considers whether these relationships differ based on employees’ gender, education level, or leadership status, providing insight into potential heterogeneity in workplace AI acceptance. Using two samples of employed adults, this study offers several contributions to the literature.

First, this study contributes to the organizational science literature on AI at work by shifting attention from the outcomes of AI implementation to whether and why employees are willing to use AI in the first place. Prior organizational science research has primarily focused on the outcomes of and boundary conditions for AI once it is already in use (Bankins, Ocampo, Marrone, Restubog & Woo, 2024; Malik et al., 2023; Pereira et al., 2023), while largely overlooking the factors that facilitate its successful adoption (Bankins et al., 2024). To address this gap, the present study draws on the technology adoption literature, which centers on users’ perceptions of technology, by applying TAM (Davis, 1989b) to the context of AI in the workplace. Although TAM has been used to explain the adoption of earlier workplace technologies such as the internet (e.g., Lederer, Maupin, Sena & Zhuang, 2000) and smartphones (e.g., Moon & Chang, 2014), its use to study AI at work is limited. Examining AI through a TAM lens, as distinct from traditional workplace technologies (e.g., phones, email messaging systems, basic task automation tools), is necessary because AI can function independently and adaptively, taking on a more autonomous and influential role in an organization (Glikson & Woolley, 2020). Importantly, this model positions employees’ affective attitudes toward using AI as the underlying mechanism linking AI perceptions to intentions to use, extending past workplace models that focus on direct pathways (e.g., Kohnke, Nieland, Straatmann & Mueller, 2024).

Second, this study advances organizational science and technology adoption research by including employees’ trust in AI as part of the technology acceptance process (i.e., an extension of TAM; Shuhaiber & Mashal, 2019), responding to calls to better understand how trust shapes employees’ experiences with AI at work (Kelly, Kaye & Oviedo-Trespalacios, 2023). Although trust is recognized as an influential element of the social and psychological work environment (Dirks & Ferrin, 2001), organizational research has traditionally focused on trust in coworkers, leaders, and organizations (Fulmer & Gelfand, 2012). As AI systems increasingly participate in work processes, however, trust is no longer confined to human relationships (Glikson & Woolley, 2020). Recent scholarship highlights the importance of employees’ trust in the AI they may rely on in their jobs, particularly given the ethical considerations these systems raise (Dirks & de Jong, 2022; Glikson & Woolley, 2020). This trust is critical in workplace contexts where AI systems may automate decisions with employment-related consequences (Raghavan, Barocas, Kleinberg & Levy, 2020), serve as teammates (Georganta & Ulfert, 2024; Ulfert et al., 2024), or support strict managerial control with limited transparency for employees (Kellogg, Valentine & Christin, 2020). By using the extended version of TAM that incorporates trust (Shuhaiber & Mashal, 2019), this study goes beyond models that treat trust simply as a direct antecedent of AI use intentions (e.g., Emon, Hassan, Nahid & Rattanawiboonsom, 2023; Lu, Yeh & Lai, 2025; Su et al., 2025). Rather, this study examines whether trust relates to employees’ intentions to use AI at work through their affective attitudes toward using AI.

Third, this study contributes to both literatures by questioning whether TAM’s AI acceptance processes operate uniformly across employees. With AI being adopted across various industries and job roles (Kochhar, 2023), understanding heterogeneity in the predictors of employees’ intentions to use AI across subgroups is critical. This study explores whether individual differences in demographic characteristics (i.e., gender, education level) and organizational roles (i.e., leadership status) shape for whom and under what conditions these relationships hold in the workplace context.

Finally, this study contributes to the technology adoption literature by extending empirical tests of TAM to employee samples and to AI in the workplace. The current study probes whether perceived ease-of-use, perceived usefulness, and trust function as theorized in TAM by influencing employees’ affective attitudes toward using AI, which in turn shape their intentions to use AI in workplace settings. Testing these relationships in employee samples is necessary because relationships observed in non-work samples may not generalize to the workplace. For example, a meta-analysis by Wu, Zhao, Zhu, Tan and Zheng (2011) concluded that TAM relationships are inflated in student samples and in studies focused on technologies in commercial settings, reinforcing the importance of context- and sample-specific research. Narrowing in on the workplace context and on AI as a specific type of technology allows for more targeted theoretical and practical insights.

Literature review, study hypotheses, and exploratory questions

Overview of the TAM

The TAM (Davis, 1989b) offers a foundational framework, grounded in broader theories of human behavior (e.g., Fishbein & Ajzen, 1975), for explaining why users adopt new technologies. Central to TAM is the role of affective attitudes toward using technology as a proximal driver of behavioral intentions (Davis, 1989b; Glikson & Woolley, 2020; Zhang, Zhang, Mu, Zhang & Fu, 2009). Within this framework, affective attitudes reflect one’s evaluative judgments about using a given technology (Longoni, Bonezzi & Morewedge, 2019; Venkatesh, Morris, Davis & Davis, 2003), that is, whether engaging with the technology is expected to be experienced as positive and enjoyable. According to TAM, affective attitudes toward using technology such as AI function as the mediating mechanism between individuals’ perceptions of the technology and their behavioral intentions to use it (Dwivedi et al., 2021; Taylor & Todd, 1995; Zhang et al., 2009). The initial model focused on two specific perceptions as predictors of affective attitudes toward using technology (Davis, 1989a, 1989b): perceived usefulness (i.e., the degree to which a person believes that using the technology would improve their job performance) and perceived ease-of-use (i.e., the degree to which a person perceives the technology as user-friendly and free of effort).

However, because TAM is rooted in more expansive theories of attitude formation and behavioral intentions, its conceptual foundation allows for the inclusion of additional predictors of affective attitudes toward using AI beyond these two core perceptions. For example, trust has emerged as a critical factor in the broader technology adoption literature (Dang & Li, 2025; FakhrHosseini et al., 2024; Schuetz, Kuai, Lacity & Steelman, 2025) as well as a top concern for employees about adopting AI in the workplace (Gillespie et al., 2021; Lynch, 2025). Trust refers to the willingness to use a system based on the perception that it is dependable and will perform reliably in ways that align with the user’s expectations (McKnight, 2005). Recent work has extended TAM to include trust as an antecedent, highlighting its relationship with affective attitudes toward using technology (Choung, David & Ross, 2023; Kelly et al., 2023; Schuetz et al., 2025; Shuhaiber & Mashal, 2019; Wu et al., 2011).

While TAM has been heavily applied to AI use in consumer samples (e.g., Jiang, Niu, Wang, Yuan & Chen, 2024; Liu et al., 2024), fewer studies have examined its utility for understanding employee use of AI in workplace contexts. Consumers commonly use AI technologies for convenience, entertainment, or learning enhancement, and they often have considerable discretion over whether, when, and how they use them (Hollebeek, Menidjel, Sarstedt, Jansson & Urbonavicius, 2024; Kennedy, Tyson & Saks, 2023; Malodia, Islam, Kaur & Dhir, 2021). In contrast, the implementation of workplace AI is often shaped by organizational power dynamics and may be introduced through top-down decisions by company leadership with minimal employee input. This approach to implementation can generate concerns regarding managerial control and ethical risks as AI is integrated into organizational processes that influence hiring, performance evaluation, and job security (Almeida, Junça Silva, Lopes & Braz, 2025; Kelley, 2022; Oosthuizen, 2019). AI use at work may also carry unique risks when adopted through a bottom-up approach in which employees use AI tools in their jobs without the awareness of organizational leaders or in violation of organizational policies (Christian, 2023; Ivanti, 2025). Employees may experience some of the same benefits as other AI users, but the stakes of AI use in the workplace may be higher and less within their control. Given this unique context, there is a need to apply TAM to better understand how employees form affective attitudes toward using AI in the workplace and what drives their willingness to engage with it.

Perceived usefulness of AI at work

Employees will likely view using technology favorably when it makes their jobs and lives better (Vraňaková & Gyurák Babeľová, 2025). According to TAM, perceived usefulness refers to the degree to which an individual believes that using a specific technology will improve their effectiveness (Davis, 1989a). That is, the perceived usefulness of AI captures the extent to which these tools are expected to facilitate efficient task completion, which is closely tied to important work outcomes such as individual productivity and job performance (DeLone & McLean, 2003). On the one hand, workplace AI is often intended to serve as an instrumental resource that helps employees manage their workload and boost productivity, for example, by automating repetitive tasks, assisting with decision-making, or streamlining workflows (Jin et al., 2025). On the other hand, employees may perceive AI as irrelevant to their work if it offers little added value, or even as an obstacle that hinders task execution and impedes their performance (Goodhue & Thompson, 1995). How employees evaluate the usefulness of AI reflects the utility they believe it provides for their work, and these perceptions are expected to influence their affective attitudes toward using AI in the workplace (Daly, Wiewiora & Hearn, 2025).

Research has demonstrated that perceived usefulness predicts affective attitudes toward using technology (King & He, 2006; Moon & Kim, 2001). Although direct examinations of perceived usefulness in AI-specific workplace settings are still emerging, existing studies support its relevance in shaping users’ affective attitudes (Smith, 2024). Recent studies have demonstrated a positive association between perceived usefulness and affective attitudes toward using AI among students (Aldraiweesh & Alturki, 2025; Chen, Jiang, Zhou & Li, 2025; Nuryakin, Rakotoarizaka & Musa, 2023), programmers (Kim, Cha, Yoon & Lee, 2024), hotel staff (Wang & Hou, 2025), and construction employees (Na et al., 2022). Additional findings indicate that individuals are more inclined to hold favorable affective attitudes toward using AI and to embrace technological innovations when they clearly see how these tools can enhance their efficiency at work (Ahn & Chen, 2022; Jo & Park, 2023; Kim et al., 2024; Kim & Kim, 2024). These patterns suggest that employees are likely to be more receptive to workplace AI when they see it as genuinely relevant and useful. Grounded in the TAM framework and supported by empirical evidence linking perceived usefulness to affective attitudes toward using AI, we propose:

Hypothesis 1: Perceived usefulness of AI at work will be positively related to affective attitudes toward using AI at work.

Perceived ease of AI use at work

Employees’ affective attitudes toward using AI are guided not only by how useful they expect it to be but also by how confident they would feel interacting with it. In the TAM framework, perceived ease-of-use is the extent to which an individual perceives using the technology as both easy and requiring little effort (Davis, 1989a, 1989b). In the workplace context, this means that AI may be evaluated based on how manageable it is to use as part of one’s job. AI tools that are difficult to learn or operate (i.e., lower ease-of-use) may generate frustration or hesitation (Chen et al., 2025; Chhabra, Kaushal & Girija, 2025), whereas AI tools that seem clear, understandable, and easy to learn (i.e., higher ease-of-use) may be more likely to be viewed as supportive work resources rather than as threatening or burdensome technologies (Brougham & Haar, 2018). Because it captures employees’ expectations about the effort required to use AI, perceived ease-of-use is expected to influence how positively they feel about using AI in their job.

Aligned with TAM, perceived ease-of-use has been found to be one of the primary determinants of users’ affective attitudes toward using AI, as it influences their confidence in their ability to navigate AI systems (Istiqomah & Alfansi, 2024). Although empirical evidence on this relationship in workplace samples is limited, research in other domains, such as education, has shown that individuals report more favorable affective attitudes toward using AI when they perceive it as accessible and low-effort to use (Chen et al., 2025; Liu, Liu & Xie, 2023; Nuryakin et al., 2023; Sudaryanto, Hendrawan & Andrian, 2023); this sense of ease may reduce anxiety around unfamiliar technologies and foster a more open-minded attitude toward their potential benefits (Kim et al., 2024). The available results from employee samples are also promising. Evidence from organizational contexts suggests that perceived ease-of-use of AI is associated with more favorable affective attitudes among hotel employees (Wang & Hou, 2025). When AI tools feel approachable, employees may be more likely to view them positively as viable resources (Sadeghi, 2024). For example, employees may feel more comfortable with AI tools if they perceive them as intuitive and user-friendly (Na et al., 2022). Taken together, these findings suggest that easy-to-use AI tools are more likely to be viewed favorably by employees. Thus, we propose:

Hypothesis 2: Perceived ease of AI use at work will be positively related to affective attitudes toward using AI at work.

In alignment with TAM, a substantial body of research has demonstrated the relationship between individuals’ perceptions of a technology’s ease-of-use and their perceptions of its usefulness (Hansen, Saridakis & Benson, 2018). For example, Nuryakin et al. (2023) and Shao, Nah, Makady and McNealy (2024) extended this relationship to digital and AI systems, respectively, showing that users were more likely to perceive the technology as useful when they believed it to be user-friendly and intuitive. Perceived ease-of-use has been associated with perceived usefulness of AI technologies across various subpopulations, including remote workers (Taş & Kiraz, 2023), construction employees (Na et al., 2022), and university students (Aldraiweesh & Alturki, 2025; Chen et al., 2025). These findings point to perceived ease-of-use as a key driver of perceived usefulness in AI-enhanced applications. This study builds on this evidence by further examining the relationship between perceived ease of AI use and perceived usefulness among employees.

Hypothesis 3: Perceived ease of AI use at work will be positively related to perceived usefulness of AI at work.

Trust in AI at work

Trust has long been of interest in the organizational sciences (Colquitt, Scott & LePine, 2007; Dirks & de Jong, 2022; Mayer, Davis & Schoorman, 1995). Much of the workplace trust literature has traditionally focused on trust in human or organizational referents, such as coworkers (e.g., Simons & Peterson, 2000), leaders (e.g., Gillespie & Mann, 2004), and organizations (e.g., Gustafsson, Gillespie, Searle, Hope Hailey & Dietz, 2020), with comparatively less research on trust in intelligent systems such as AI (Dirks & de Jong, 2022). However, Glikson and Woolley’s (2020) review of employees’ trust in AI highlights the importance of AI’s reliability and transparency, which they position as integral to the ethical adoption of AI in the workplace.

Extended versions of TAM (e.g., Shuhaiber & Mashal, 2019) echo this proposition, specifically capturing the relationship between trust and affective attitudes that has been documented in the literature (e.g., Dang & Li, 2025; Schuetz et al., 2025). From the employee perspective, AI can have consequential impacts on employees’ lives when organizations use it to allocate tasks that determine employees’ workload, assist in selection processes that determine who is hired, or inform performance evaluations that are tied to rewards (e.g., raises) and punishments (e.g., termination) (Cao, Duan, Edwards & Dwivedi, 2021; Park, Ahn, Hosanagar & Lee, 2021). Further, AI can render such decisions in real time, even as events are unfolding (Kar & Kushwaha, 2023). When AI operates inconsistently or in ways that are opaque to employees, they may become skeptical of it; however, employees may feel more positively about using AI, and be more willing to adopt it, when they perceive AI systems as trustworthy (Cao et al., 2021; Kelley, 2022).

Most prior studies supporting a positive relationship between trust and affective attitudes have not been specific to AI technologies (Kim, 2012; Wu et al., 2011); however, emerging evidence suggests that trust in AI is associated with more favorable affective attitudes toward using AI (Choung et al., 2023; Dang & Li, 2025). In Dang and Li’s (2025) systematic review of 562 empirical studies, affective attitudes toward using AI were the second most frequent outcome of trust in AI. Choung et al. (2023) found that trust was related to affective attitudes toward using AI voice assistants in a study of college students and replicated this finding in a diverse national sample. Building on this foundation, we propose that trust in AI systems will be positively associated with employees’ affective attitudes toward using AI in the workplace.

Hypothesis 4: Trust in AI at work will be positively related to affective attitudes toward using AI at work.

Affective attitudes toward using AI at work

Affective attitudes toward using technology, as outlined by TAM, are integral to the way individuals form their intentions to engage with technological systems (Davis, 1989b; Fishbein & Ajzen, 1975; Marikyan, Papagiannidis & Stewart, 2023). Extending this framework to AI, recent studies suggest that affective attitudes shape intentions to use AI-specific technologies among both university students (Aldraiweesh & Alturki, 2025) and employees (Cao et al., 2021; Emon et al., 2023; Na et al., 2022). For example, Emon et al. (2023) surveyed 350 professionals across IT, finance, and healthcare in Bangladesh and found that affective attitudes toward using ChatGPT significantly predicted their intentions to use it at work. Likewise, Na et al. (2022) concluded that affective attitudes were a significant driver of AI technology adoption among employees of construction firms. We expect that employees’ affective attitudes toward using AI will play a meaningful role in shaping their intentions to engage with workplace AI.

Hypothesis 5: Affective attitudes toward using AI at work will be positively related to intentions to use AI at work.

Intentions to use AI at work

As AI becomes increasingly embedded in the workplace, employees’ intentions to use AI are a critical component of whether organizations can successfully implement these technologies and avoid falling behind (Crane et al., 2025). According to TAM, positive perceptions of AI (i.e., perceived ease-of-use, perceived usefulness, and trust) support favorable affective attitudes and, subsequently, a greater likelihood of adopting AI at work. When individuals believe a technology is easy to use, they tend to hold more positive attitudes toward it, which increases the likelihood that they will engage with it (Na et al., 2022; Nuryakin et al., 2023; Shao et al., 2024). For instance, students are more likely to have favorable affective attitudes toward using AI and to express intentions to use it when they perceive it as simple and accessible (Liu et al., 2023; Sudaryanto et al., 2023), underscoring the role of perceptions in AI adoption decisions. Dwivedi, Rana, Jeyaraj, Clement and Williams (2019) found that both perceived ease-of-use and perceived usefulness significantly predict affective attitudes, which in turn influence behavioral intentions to adopt new technologies. Similarly, Almeida et al. (2025) found that among full-time recruiters in Portugal, both perceived ease-of-use and perceived usefulness were indirectly associated with AI adoption through affective attitudes toward using AI. Na et al. (2022) likewise found that perceived ease-of-use and perceived usefulness indirectly predicted AI technology use through users’ affective attitudes, reinforcing the role of these perceptions in shaping AI adoption. However, the strength of these relationships may vary. In a sample of professionals in Bangladesh, Emon and Khan (2025) found support for the indirect effect of perceived usefulness on intentions to use AI through affective attitudes but not for the indirect effect of perceived ease-of-use. As one of the few studies to include trust as a predictor, Choung et al. (2023) demonstrated that perceived ease-of-use, perceived usefulness, and trust were all related to affective attitudes toward using AI, which in turn were related to behavioral intentions to use AI. Thus, the extant literature suggests that perceived ease-of-use, perceived usefulness, and trust likely shape employees’ intentions to use AI at work through their influence on affective attitudes toward using it.

Hypothesis 6: Perceived usefulness of AI (6a), perceived ease of AI use (6b), and trust in AI (6c) at work will indirectly influence intentions to use AI at work through employees’ affective attitudes toward using AI.
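As an illustrative sketch only, the hypothesized paths can be written as linear structural equations, assuming standardized variables and additive effects. The abbreviations (PEOU for perceived ease of AI use, PU for perceived usefulness, TR for trust in AI, ATT for affective attitudes toward using AI, INT for intentions to use AI) and the coefficient symbols are introduced here for exposition; they are not the authors’ notation or estimation model, and this sketch assumes no direct paths from the three perceptions to intentions.

```latex
\begin{aligned}
\mathrm{PU}  &= \gamma_{3}\,\mathrm{PEOU} + \varepsilon_{\mathrm{PU}}
  && \text{(H3: } \gamma_{3} > 0\text{)} \\
\mathrm{ATT} &= \beta_{1}\,\mathrm{PU} + \beta_{2}\,\mathrm{PEOU} + \beta_{4}\,\mathrm{TR} + \varepsilon_{\mathrm{ATT}}
  && \text{(H1, H2, H4: } \beta_{1}, \beta_{2}, \beta_{4} > 0\text{)} \\
\mathrm{INT} &= \beta_{5}\,\mathrm{ATT} + \varepsilon_{\mathrm{INT}}
  && \text{(H5: } \beta_{5} > 0\text{)} \\[4pt]
\mathrm{IE}_{\mathrm{PU}} &= \beta_{1}\beta_{5}, \qquad
\mathrm{IE}_{\mathrm{PEOU}} = \beta_{2}\beta_{5}, \qquad
\mathrm{IE}_{\mathrm{TR}} = \beta_{4}\beta_{5}
  && \text{(H6a, H6b, H6c)} \\
\mathrm{TE}_{\mathrm{PEOU}} &= \beta_{2}\beta_{5} + \gamma_{3}\beta_{1}\beta_{5}
  && \text{(adds the serial path through PU)}
\end{aligned}
```

Under these assumptions, each indirect effect in Hypothesis 6 is the product of the path into affective attitudes and the path from attitudes to intentions. Because Hypothesis 3 also links ease-of-use to usefulness, ease-of-use would additionally carry a serial indirect effect, so its total effect on intentions is the sum of two product terms. Read in the same terms, the Exploratory Question asks whether these coefficients differ across groups such as leaders and non-leaders.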

Exploring individual differences

As AI becomes increasingly integrated into the workplace (Pereira et al., 2023), it is essential to consider how individual differences may act as boundary conditions for employees’ perceptions and adoption of AI. Although research on AI perceptions in organizational settings is relatively nascent, the technology acceptance literature reports mixed findings on whether demographic factors such as gender and education moderate the links between potential users’ expectations about AI and their affective attitudes and intentions to adopt AI (Bankins et al., 2024; Yu, Xu & Ashton, 2023). For example, Horodyski (2023) found no evidence that gender moderated the relationships between human resources recruiters’ perceptions of AI and their intentions to use AI in their job. In contrast, Zhang, Schießl, Plößl, Hofmann and Gläser-Zikuda (2023) reported that gender moderated the relationship between perceived ease-of-use and perceived usefulness, such that the relationship was stronger for female teachers than for male teachers, but gender did not moderate any other paths. In non-work samples, Ma and Lei (2024) found that, compared with individuals with lower levels of education, those with higher levels of education showed stronger relationships only for the paths from perceived ease-of-use to perceived usefulness and to intentions to use AI. Ultimately, more empirical research is needed to clarify the influence of gender and education on the proposed model in employee samples.

In addition to demographic factors, leadership status may be a meaningful moderator to assess in the workplace context. AI integration may not have the same effects on all employees (Einola & Khoreva, Reference Einola and Khoreva2023). That is, employees across the organizational hierarchy may hold different expectations about AI based on their leadership experiences or lack thereof. Examining AI perceptions across leadership levels is particularly important, as leaders play a pivotal role in facilitating or hindering AI adoption in organizations (Bevilacqua, Masárová, Perotti & Ferraris, Reference Bevilacqua, Masárová, Perotti and Ferraris2025; Fountaine, McCarthy & Saleh, Reference Fountaine, McCarthy and Saleh2019; Kar, Kar & Gupta, Reference Kar, Kar and Gupta2021; Singh & Pandey, Reference Singh and Pandey2024). However, to our knowledge, prior work has not quantitatively examined whether holding a leadership position in one’s organization moderates the relationships among AI perceptions, affective attitudes toward using AI, and intentions to use it. Thus, to explore the roles of gender, education, and leadership status, we pose the following exploratory question:

Exploratory Question: Does the proposed model differ based on employees’ (1a) gender identity, (1b) education level, or (1c) leadership status?

Method

Participants and procedures

We administered online surveys to collect two independent cross-sectional samples of employed adults in the United States to test our hypotheses. Testing the model in two samples allowed us to examine whether the relationships replicated, strengthening confidence in our findings. One sample was recruited from Prolific (Sample 1), a crowdsourcing platform that has been found to connect researchers with quality samples (Douglas, Ewell & Brauer, Reference Douglas, Ewell and Brauer2023; Eyal, David, Andrew, Zak & Ekaterina, Reference Eyal, David, Andrew, Zak and Ekaterina2021). The second sample (Sample 2) was recruited via advertisements on the Meta social media platform. The data collection for this manuscript was approved by the Clemson University Institutional Review Board (Protocol Number: IRB2024-0248). Informed consent was obtained from each participant in both samples. To abide by ethical guidelines and to discourage fraudulent responses that would compromise data integrity (Agrawal et al., Reference Agrawal, Watson, Schuster, Cotten, Tidwell, Baker and Wang2025), we included the following sentence in the informed consent: “Any suspicious or low-quality survey responses will not be compensated.” Email addresses were collected only for Sample 2 to facilitate the distribution of participant incentives. To maintain the confidentiality of the data, the data for both samples were stored on password-protected computers and in files that could be accessed only by the research team.

Once data collection was complete, both samples were thoroughly screened for data quality, using fraud detection features on the survey platform Qualtrics (e.g., RelevantID, reCaptcha), attention checks (e.g., “Please select ‘somewhat disagree’ to continue”), and patterns in response metadata (e.g., IP location). Low-quality and suspicious responses were removed prior to data analysis. Acknowledging the risk of potentially misclassifying legitimate responses as suspicious, we worked closely with the IRB to ensure full compliance with participant rights and research ethics, while balancing these concerns against the scientific responsibility to ensure data quality and use research funds appropriately.

After data cleaning, Sample 1 consisted of 1,047 participants and Sample 2 consisted of 177 participants. In Sample 1, the mean age was 40.02 years (standard deviation = 13.30). Most participants identified as White (69.26%) and female (54.29%), worked full-time (63.52%), and had at least a Bachelor’s degree (55.97%). In Sample 2, the mean age was 32.63 years (standard deviation = 13.04). The majority of participants identified as White (70.66%), worked full-time (56.74%), and had at least a Bachelor’s degree (61.58%). This sample was almost evenly split between participants who identified as female (48.31%) and male (48.88%). Additional demographic information for both samples can be found in Table 1.

Demographic characteristics of participants across both samples

Percentages reflect the proportion of participants within each sample.

Measures

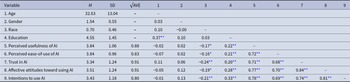

Both samples completed the same measures. Perceived ease of AI use at work, perceived usefulness of AI at work, affective attitudes toward using AI at work, and intentions to use AI at work were measured with scales adapted from Venkatesh et al. (Reference Venkatesh, Morris, Davis and Davis2003). Trust in AI at work was assessed with a measure adapted from Slade, Dwivedi, Piercy and Williams (Reference Slade, Dwivedi, Piercy and Williams2015). All measures were adapted to include “AI in the workplace” in each item to capture the work context. Participants responded on a five-point Likert scale indicating the extent to which they agreed or disagreed with each item (1 = “Strongly Disagree,” 5 = “Strongly Agree”). The full list of items is provided in Table 2. Additional analyses are presented in Appendix A to address potential concerns that the third item of the intentions to use AI at work scale may have been framed as consumer-centric rather than employee-centric.

Results of the measurement model (Sample 1/Sample 2)

AI = artificial intelligence. All items were adapted to focus on artificial intelligence (AI) in the workplace context. All measures were adapted from Venkatesh et al. (Reference Venkatesh, Morris, Davis and Davis2003), except for the trust measure, which was adapted from Slade et al. (Reference Slade, Dwivedi, Piercy and Williams2015). Sample 1 values are presented first; Sample 2 values are presented second. Factor loadings are standardized. Cronbach’s α represents the internal consistency reliability of the full measure. Composite reliability (CR) and average variance extracted (AVE) values for the full measures support convergent validity. Sample sizes: Sample 1 (N = 1,047); Sample 2 (N = 177).
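The AVE and CR values summarized in this note are conventionally computed from standardized factor loadings. The following minimal Python sketch shows the usual formulas, assuming standardized loadings and uncorrelated item errors (error variance 1 − λ²); the loadings are hypothetical rather than taken from Table 2, and statistical packages may differ in the details of how they compute reliability. The helper `fornell_larcker_ok` (a hypothetical name) illustrates the comparison of √AVE with inter-construct correlations used in Tables 4 and 5.

```python
from math import sqrt


def ave(loadings):
    """Average variance extracted: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability, assuming uncorrelated errors with variance 1 - l**2."""
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error)


def fornell_larcker_ok(ave_values, corr):
    """True if sqrt(AVE) of each construct exceeds its absolute correlation
    with every other construct (corr is a square list of lists)."""
    k = len(ave_values)
    return all(
        sqrt(ave_values[i]) > abs(corr[i][j])
        for i in range(k)
        for j in range(k)
        if i != j
    )


# Hypothetical loadings for a three-item scale (not values from Table 2).
loadings = [0.82, 0.88, 0.79]
print(f"AVE = {ave(loadings):.3f}, CR = {composite_reliability(loadings):.3f}")
```

With these illustrative loadings, the AVE exceeds the 0.50 threshold and the CR exceeds the 0.70 level typically treated as satisfactory.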

Data analysis

To test the hypothesized relationships, we used structural equation modeling with the lavaan package (version 0.6-17; Rosseel, Reference Rosseel2012) in R 4.3.1, which offers flexible, open-source latent variable modeling. We estimated a partial mediation model that included both direct and indirect effects to assess how employee perceptions relate to AI adoption intentions through affective attitudes toward using AI. Bias-corrected and accelerated bootstrapped 95% confidence intervals (CIs) were computed for the indirect effects using 5,000 resamples, because the sampling distribution of an indirect effect is typically non-normal. For hypothesis testing, we controlled for age, gender, race, and education as covariates.
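To make the resampling logic concrete, the following is a minimal Python sketch of a bias-corrected and accelerated (BCa) bootstrap interval for a product-of-coefficients indirect effect. It is a simplification under stated assumptions: observed (not latent) variables, a single mediator, ordinary least squares paths, no covariates, and simulated data. It is not the lavaan procedure used in the study, and lavaan's built-in interval options may differ in detail. The function names `indirect_effect` and `bca_interval` are hypothetical.

```python
import random
from statistics import NormalDist, fmean


def indirect_effect(x, m, y):
    """Product-of-coefficients indirect effect a*b for observed variables:
    a = slope of m on x; b = slope of m when y is regressed on x and m."""
    mx, mm, my = fmean(x), fmean(m), fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    smm = sum((mi - mm) ** 2 for mi in m)
    sxm = sum((xi - mx) * (mi - mm) for xi, mi in zip(x, m))
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    smy = sum((mi - mm) * (yi - my) for mi, yi in zip(m, y))
    a = sxm / sxx
    b = (sxx * smy - sxm * sxy) / (sxx * smm - sxm ** 2)  # solves the 2x2 normal equations
    return a * b


def bca_interval(x, m, y, n_boot=5000, level=0.95, seed=1):
    """Bias-corrected and accelerated (BCa) bootstrap CI for a*b."""
    rng = random.Random(seed)
    n = len(x)
    est = indirect_effect(x, m, y)

    # 1. Case-resampling bootstrap distribution of the indirect effect.
    boots = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        boots.append(indirect_effect([x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx]))
    boots.sort()

    # 2. Bias correction z0 from the share of bootstrap draws below the estimate
    #    (clamped so inv_cdf never receives exactly 0 or 1).
    nd = NormalDist()
    share = sum(t < est for t in boots) / n_boot
    share = min(max(share, 1 / (n_boot + 1)), n_boot / (n_boot + 1))
    z0 = nd.inv_cdf(share)

    # 3. Acceleration from leave-one-out (jackknife) estimates.
    jack = []
    for i in range(n):
        keep = [j for j in range(n) if j != i]
        jack.append(indirect_effect([x[j] for j in keep], [m[j] for j in keep], [y[j] for j in keep]))
    jbar = fmean(jack)
    num = sum((jbar - t) ** 3 for t in jack)
    den = 6 * sum((jbar - t) ** 2 for t in jack) ** 1.5
    acc = num / den if den else 0.0

    # 4. Adjusted percentiles, read off the sorted bootstrap draws.
    def adjusted(q):
        zq = nd.inv_cdf(q)
        return nd.cdf(z0 + (z0 + zq) / (1 - acc * (z0 + zq)))

    alpha = (1 - level) / 2
    lo = boots[min(n_boot - 1, int(adjusted(alpha) * n_boot))]
    hi = boots[min(n_boot - 1, int(adjusted(1 - alpha) * n_boot))]
    return est, (lo, hi)


# Hypothetical simulated data: x -> m -> y with true a = 0.5 and b = 0.4.
gen = random.Random(42)
x = [gen.gauss(0, 1) for _ in range(200)]
m = [0.5 * xi + gen.gauss(0, 1) for xi in x]
y = [0.4 * mi + 0.1 * xi + gen.gauss(0, 1) for xi, mi in zip(x, m)]
est, (lo, hi) = bca_interval(x, m, y, n_boot=1000)
print(f"indirect effect = {est:.3f}, 95% BCa CI [{lo:.3f}, {hi:.3f}]")
```

The usage example uses 1,000 resamples to keep runtime short; the default of 5,000 mirrors the number reported above. The same decision rule applies as in the study: the indirect effect is treated as significant when the interval excludes zero.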

For the exploratory questions, we estimated three separate multi-group structural equation models to determine whether the proposed relationships differed based on (1a) participants’ gender (male versus female), (1b) education (less than a Bachelor’s degree versus a Bachelor’s degree or higher), or (1c) leadership status (leader versus non-leader). For each grouping variable, a Wald chi-square test evaluated whether the structural path coefficients could be constrained to be equal across groups relative to the unconstrained model. All control variables were included in the analyses, except that gender was excluded from the gender-specific model and education from the education-specific model.
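The Wald tests reported for the exploratory questions jointly test equality constraints on several paths (one degree of freedom per constrained coefficient), which requires the full covariance matrix of the estimates. As a simpler illustration of the same idea, the sketch below tests a single path across two independent groups, assuming the two estimates are uncorrelated so that the variance of their difference is the sum of the squared standard errors. The function name and the coefficients are hypothetical and are not results from this study.

```python
from math import erfc, sqrt


def wald_equal_paths(b1, se1, b2, se2):
    """Wald chi-square (df = 1) for H0: b1 == b2 when the two estimates come
    from independent groups, so Var(b1 - b2) = se1**2 + se2**2."""
    w = (b1 - b2) ** 2 / (se1 ** 2 + se2 ** 2)
    p = erfc(sqrt(w / 2))  # upper-tail probability of a chi-square with 1 df
    return w, p


# Hypothetical estimates for one path in leaders vs. non-leaders.
w, p = wald_equal_paths(0.45, 0.06, 0.30, 0.09)
print(f"Wald chi2(1) = {w:.2f}, p = {p:.3f}")
```

A nonsignificant statistic, as in this hypothetical case, indicates that constraining the path to be equal across groups is not rejected.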

Results

Measurement model

The assessment of the measurement model for both samples included evaluations of the confirmatory factor analysis, convergent validity, internal consistency reliability, and discriminant validity. Using confirmatory factor analysis, we tested the five-factor measurement model (i.e., perceived ease-of-use, perceived usefulness, trust, affective attitudes toward using AI, and intentions to use AI) in each sample, with items loading onto their respective scales. The measurement models for both samples demonstrated an overall acceptable fit (Sample 1: χ2 (125) = 1037.01, p < .001, CFI = 0.96, RMSEA = 0.08, SRMR = 0.07; Sample 2: χ2 (125) = 336.66, p < .001, CFI = 0.94, RMSEA = 0.10, SRMR = 0.06). In each sample, the five-factor model also fit significantly better than a one-factor model (Sample 1: χ2 (135) = 6510.97, p < .001, CFI = 0.70, RMSEA = 0.21, SRMR = 0.09, Δχ2 (10) = 5473.96, p < .001; Sample 2: χ2 (135) = 849.95, p < .001, CFI = 0.79, RMSEA = 0.17, SRMR = 0.07, Δχ2 (10) = 513.29, p < .001), reducing concerns about common method bias.
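The RMSEA values and chi-square difference statistics above can be checked directly from the reported χ2, df, and N. The sketch below assumes the common RMSEA formula using N − 1 (some programs use N, which does not change these values at two decimals) and a simple difference of maximum likelihood chi-square statistics for nested models. Robust estimators would instead require a scaled difference test. The function names are hypothetical.

```python
from math import sqrt


def rmsea(chi2, df, n):
    """RMSEA point estimate using the common (N - 1) formulation."""
    return sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def chi2_difference(chi2_restricted, df_restricted, chi2_full, df_full):
    """Delta chi-square and delta df for nested ML models (restricted vs. full)."""
    return chi2_restricted - chi2_full, df_restricted - df_full


# Statistics reported above for Sample 1 (N = 1,047).
five_factor = (1037.01, 125)
one_factor = (6510.97, 135)
print(round(rmsea(*five_factor, 1047), 2), round(rmsea(*one_factor, 1047), 2))
d_chi2, d_df = chi2_difference(*one_factor, *five_factor)
print(round(d_chi2, 2), d_df)
```

With the Sample 1 values, this yields RMSEA values of 0.08 (five-factor) and 0.21 (one-factor) and Δχ2 (10) = 5473.96, matching the reported figures; the Sample 2 values can be substituted in the same way with N = 177.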

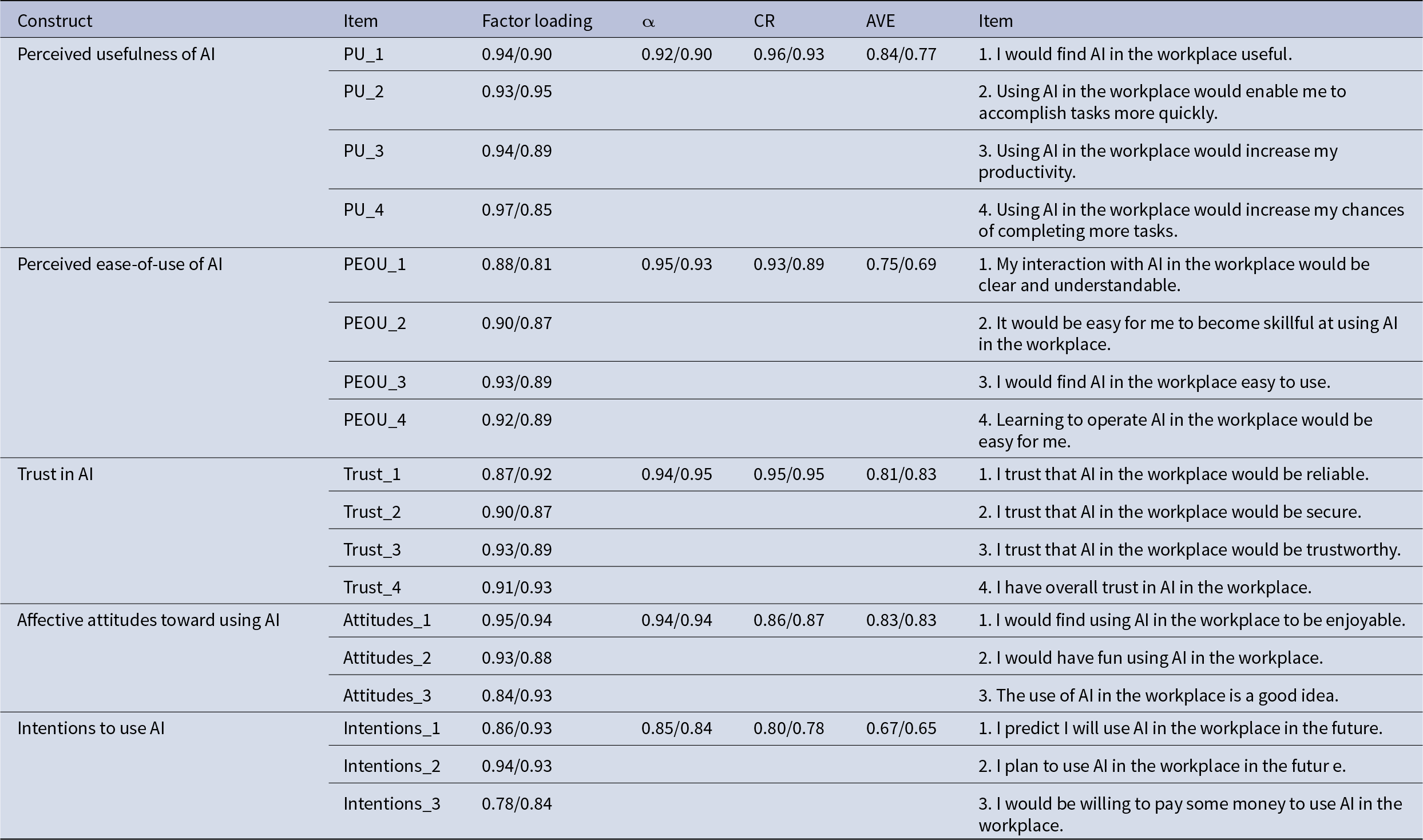

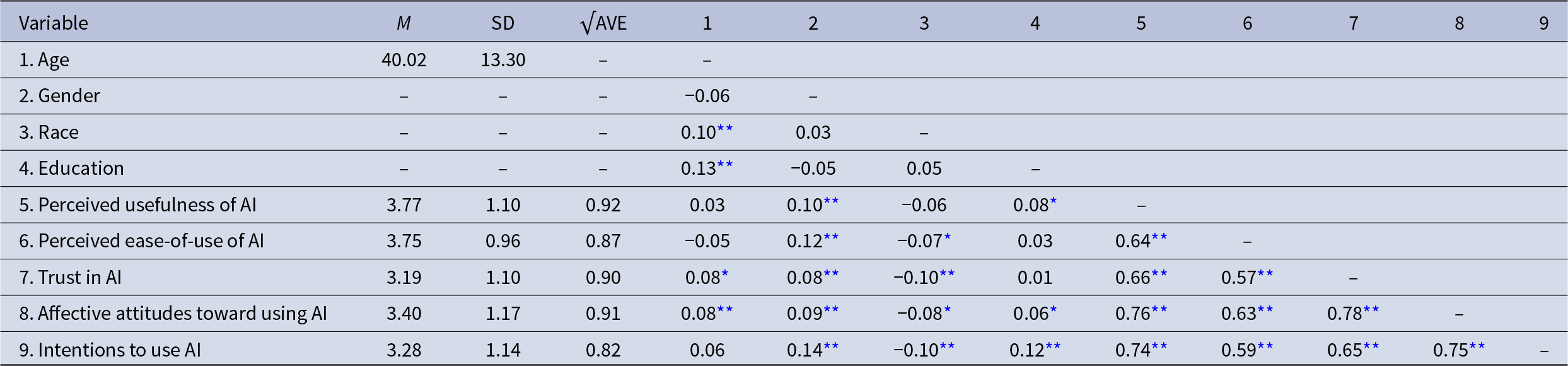

As shown in Table 2, all items in the five-factor measurement models had factor loadings above the recommended cutoff of 0.70 (Hair, Black, Babin, Anderson & Tatham, Reference Hair, Black, Babin, Anderson and Tatham2006). Further supporting convergent validity, the average variance extracted (AVE) values were well above the threshold of 0.50 (Hair et al., Reference Hair, Black, Babin, Anderson and Tatham2006). Cronbach’s alpha (Sample 1: 0.85–0.95, Sample 2: 0.84–0.85) and composite reliability (Sample 1: 0.80–0.96, Sample 2: 0.78–0.95) values demonstrated satisfactory internal consistency reliability. Discriminant validity was assessed with the heterotrait–monotrait (HTMT) ratio. As shown in Table 3, the HTMT ratios were below the threshold of 0.90 (Henseler, Ringle & Sarstedt, Reference Henseler, Ringle and Sarstedt2015), except for the ratio between affective attitudes toward using AI at work and intentions to use AI at work in Sample 2 (HTMT ratio = 0.91). Additionally, the square roots of the AVE values were greater than the correlations between the constructs, as shown in Tables 4 and 5. Overall, these results largely support the reliability, convergent validity, and discriminant validity of the measures in both samples.
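The HTMT ratio compares the average correlation between items of different constructs (heterotrait) with the geometric mean of the average correlations among items of the same construct (monotrait). The following minimal sketch follows that basic definition from Henseler et al. rather than any specific software implementation; it assumes all items are keyed in the same direction, and the correlation matrix is hypothetical.

```python
from itertools import combinations
from math import sqrt
from statistics import fmean


def htmt(corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs.

    corr: item correlation matrix as a list of lists.
    items_a, items_b: indices of each construct's items (at least two each).
    Assumes all items are keyed in the same direction (positive correlations)."""
    hetero = fmean(corr[i][j] for i in items_a for j in items_b)
    mono_a = fmean(corr[i][j] for i, j in combinations(items_a, 2))
    mono_b = fmean(corr[i][j] for i, j in combinations(items_b, 2))
    return hetero / sqrt(mono_a * mono_b)


# Hypothetical 4-item correlation matrix: items 0-1 measure A, items 2-3 measure B.
corr = [
    [1.0, 0.8, 0.5, 0.5],
    [0.8, 1.0, 0.5, 0.5],
    [0.5, 0.5, 1.0, 0.6],
    [0.5, 0.5, 0.6, 1.0],
]
ratio = htmt(corr, [0, 1], [2, 3])
print(f"HTMT = {ratio:.3f}; below 0.90: {ratio < 0.90}")
```

Here the ratio is 0.5 / √(0.8 × 0.6), about 0.72, so the two hypothetical constructs would be judged distinct under the 0.90 threshold.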

HTMT ratios (Sample 1/Sample 2)

Heterotrait–Monotrait (HTMT) ratio values represent discriminant validity. Sample 1 values are presented first, then the Sample 2 values. All items were adapted to focus on artificial intelligence (AI) in the workplace.

Sample 1: Means, SDs, √AVE, and correlations

N = 1,047. Presented are means (M), standard deviations (SD), square roots of the average variance extracted (√AVE), and bivariate correlations. Gender (1 = Female, 2 = Male). Race (0 = Non-White, 1 = White). Education (1 = Less than high school degree, 2 = High school graduate or GED, 3 = Some college but no degree, 4 = Associate degree, 5 = Bachelor’s degree, 6 = Master’s degree, 7 = Professional school degree, 8 = Doctorate degree). All items were adapted to focus on artificial intelligence (AI) in the workplace.

* p < .05, **p < .01.

Sample 2: Means, SDs, √AVE, and correlations

N = 177. Presented are means (M), standard deviations (SD), square roots of the average variance extracted (√AVE), and bivariate correlations. Gender (1 = Female, 2 = Male). Race (0 = Non-White, 1 = White). Education (1 = Less than high school degree, 2 = High school graduate or GED, 3 = Some college but no degree, 4 = Associate degree, 5 = Bachelor’s degree, 6 = Master’s degree, 7 = Professional school degree, 8 = Doctorate degree). All items were adapted to focus on artificial intelligence (AI) in the workplace.

* p < .05, **p < .01.

Hypothesis results

The results for hypothesis testing, including the direct effects of the predictors on intentions to use AI at work, are shown in Figure 1. Hypothesis 1 was supported: the path from perceived usefulness of AI at work to affective attitudes toward using AI at work was significant (Sample 1: b = 0.42, p < .001; Sample 2: b = 0.36, p < .001). For Hypothesis 2, the path from perceived ease-of-use of AI at work to affective attitudes toward using AI at work produced mixed results across the two samples: Hypothesis 2 was supported in Sample 1 (b = 0.19, p < .001) but not in Sample 2 (b = 0.09, p = .606). Hypothesis 3 was supported in both samples; perceived ease-of-use of AI at work was significantly related to perceived usefulness of AI at work (Sample 1: b = 0.81, p < .001; Sample 2: b = 0.87, p < .001). The direct effect of trust in AI at work on affective attitudes toward using AI at work was significant (Sample 1: b = 0.56, p < .001; Sample 2: b = 0.62, p < .001), supporting Hypothesis 4. Hypothesis 5 was also supported, such that affective attitudes toward using AI at work were significantly associated with intentions to use AI at work (Sample 1: b = 0.30, p < .001; Sample 2: b = 0.35, p = .048).

Conceptual model with path significance for direct effects. Sample 1 (N = 1,047); Sample 2 (N = 177). This figure displays direct effects only. Path coefficients are displayed in order: Sample 1, followed by Sample 2. Unstandardized estimates are presented. Standard errors are shown in parentheses. All items were adapted to focus on artificial intelligence (AI) in the workplace. *p < .05, ***p < .001.

For the mediation hypotheses, we examined the bootstrapped 95% CIs of the indirect effects; an indirect effect was considered significant if its 95% CI did not include zero. Consistent with Hypothesis 6a, the indirect effect of perceived usefulness of AI at work on intentions to use AI at work through affective attitudes toward using AI at work was significant (Sample 1: estimate = 0.13, 95% CI [0.08, 0.18]; Sample 2: estimate = 0.13, 95% CI [0.03, 0.32]). Regarding Hypothesis 6b, the results differed between Sample 1 and Sample 2. The indirect effect of perceived ease-of-use of AI at work on intentions to use AI at work through affective attitudes toward using AI at work was significant for Sample 1 (estimate = 0.06, 95% CI [0.03, 0.09]) but not for Sample 2 (estimate = 0.03, 95% CI [−0.04, 0.29]). Hypothesis 6c was also supported: the indirect effect of trust in AI at work on intentions to use AI at work through affective attitudes toward using AI at work was significant (Sample 1: estimate = 0.17, 95% CI [0.11, 0.23]; Sample 2: estimate = 0.22, 95% CI [0.01, 0.51]). Because Sample 2 was smaller (N = 177), these findings should be interpreted with some caution, as smaller samples can yield less stable parameter estimates. The results summarizing the direct and indirect effects are presented in Table 6 (Sample 1) and Table 7 (Sample 2).
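For a single-mediator path, the point estimate of the indirect effect is the product of the predictor → affective attitudes path (a) and the affective attitudes → intentions path (b). As an arithmetic check, multiplying the rounded unstandardized coefficients reported under Hypotheses 1, 2, 4, and 5 reproduces the indirect-effect estimates above to two decimals. The confidence intervals cannot be recovered this way, because they depend on the bootstrap distribution. The dictionary keys and function name are illustrative.

```python
# Unstandardized paths reported above: each predictor's a-path to affective
# attitudes, plus the shared b-path from affective attitudes to intentions.
paths = {
    "Sample 1": {"usefulness": 0.42, "ease_of_use": 0.19, "trust": 0.56, "b": 0.30},
    "Sample 2": {"usefulness": 0.36, "ease_of_use": 0.09, "trust": 0.62, "b": 0.35},
}


def indirect_effects(p):
    """Point estimate of each indirect effect as a * b, rounded to two decimals."""
    return {name: round(a * p["b"], 2) for name, a in p.items() if name != "b"}


for sample, p in paths.items():
    print(sample, indirect_effects(p))
```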

Structural equation modeling results – Sample 1

Sample 1 (N = 1,047). Unstandardized coefficients are presented. The standard errors (SE) are provided in parentheses. The 95% confidence intervals (CI) are presented in brackets. All items were adapted to focus on artificial intelligence (AI) in the workplace. This analysis controlled for age, gender, race, and education.

* p < .05, **p < .01.

Structural equation modeling results – Sample 2

Sample 2 (N = 177). Unstandardized coefficients are presented. The standard errors (SE) are provided in parentheses. The 95% confidence intervals (CI) are presented in brackets. All items were adapted to focus on artificial intelligence (AI) in the workplace. This analysis controlled for age, gender, race, and education.

* p < .05, **p < .01.

Exploratory question results

Finally, the analyses for the exploratory questions indicated that the hypothesized paths did not significantly differ based on (1a) gender (Sample 1: Wald χ2 (8) = 7.60, p = .473; Sample 2: Wald χ2 (8) = 11.15, p = .193), (1b) education (Sample 1: Wald χ2 (8) = 9.49, p = .303; Sample 2: Wald χ2 (8) = 6.17, p = .629), or (1c) leadership status (Sample 1: Wald χ2 (8) = 5.98, p = .649; Sample 2: Wald χ2 (8) = 5.54, p = .699).
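Because these Wald statistics have an even number of degrees of freedom (8), their p-values can be checked with the closed-form chi-square survival function for even df, without a statistics library. The sketch below is illustrative only; for odd df or general use, a library routine such as SciPy's chi-square survival function would be the usual choice. The function name is hypothetical.

```python
from math import exp


def chi2_sf_even_df(x, df):
    """Upper-tail p-value of a chi-square statistic when df is even:
    P(X > x) = exp(-x/2) * sum_{i < df/2} (x/2)**i / i!."""
    if df <= 0 or df % 2:
        raise ValueError("this closed form needs a positive, even df")
    half = x / 2
    term, total = 1.0, 1.0
    for i in range(1, df // 2):
        term *= half / i  # builds (x/2)**i / i! incrementally
        total += term
    return exp(-half) * total


# Wald statistics reported above for Sample 1 (df = 8).
reported = {"gender": 7.60, "education": 9.49, "leadership": 5.98}
for label, w in reported.items():
    print(label, round(chi2_sf_even_df(w, 8), 3))
```

For the Sample 1 statistics, the results round to the reported p-values. Small differences in the third decimal can appear for other statistics because the reported test values are themselves rounded.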

Discussion

Given the rapid growth and organizational integration of AI in the workplace (Rane, Choudhary & Rane, Reference Rane, Choudhary and Rane2024), understanding how employees form intentions to adopt these tools is critical for optimizing implementation. To date, organizational science has devoted more attention to the consequences of AI use than to the psychological processes that influence employees’ willingness to engage with AI (Bankins et al., Reference Bankins, Ocampo, Marrone, Restubog and Woo2024), underscoring the opportunity to draw on insights from the technology adoption literature. Integrating these literatures is essential for understanding how organizations can foster employee acceptance of AI and thereby realize its intended benefits.

Drawing on the TAM (Davis, Reference Davis1989b), this study examined affective attitudes toward using AI as a mechanism connecting perceptions of AI to willingness to use it. These findings help clarify how employees evaluate the use of AI systems in organizational settings. Across two samples of employed adults, perceived usefulness of AI at work and trust in AI at work indirectly predicted intentions to use AI at work through affective attitudes toward using AI at work. Employees appear more inclined to evaluate AI use favorably and to adopt it when they view it as enhancing their performance and when they trust its fairness and reliability. In contrast, the pathway for perceived ease-of-use of AI at work was less consistent, calling into question this TAM pathway in workplace settings. The observed relationships did not differ based on participants’ gender, education, or leadership status, suggesting that these patterns may hold across diverse workplace groups. The findings underscore the value of an extended TAM framework in workplace contexts by highlighting trust as a meaningful addition to the model and reaffirming perceived usefulness and affective attitudes toward using AI as core components. At the same time, the findings for perceived ease-of-use illustrate why technology adoption models developed in non-work contexts need to be validated in employee samples.

Theoretical implications

This study offers several theoretical implications for organizational science and technology adoption. First, the perceived usefulness and trust pathways suggest that these perceptions are informative indicators of how employees evaluate AI use in the workplace. In both samples, perceived usefulness and trust in AI were indirectly related to intentions to use AI at work through more favorable affective attitudes, supporting the application of TAM to modern work contexts. Of note, trust in AI did not have a significant direct effect on intentions to use AI in the hypothesized model in either sample, a pattern consistent with full rather than partial mediation. This finding refines how trust operates within technology adoption frameworks, particularly in the workplace context. In contrast to prior AI adoption research that has modeled trust as a direct predictor of intention to use AI systems (Dang & Li, Reference Dang and Li2025), this study suggests that employees’ trust in AI relates to their adoption intentions through affective attitudes.

Beyond the implications for TAM, these findings create opportunities to connect technology adoption models with broader organizational science theories to explain why perceptions of AI matter for employees’ reactions. For example, psychological contract theory (Coyle-Shapiro, Costa, Doden & Chang, Reference Coyle-Shapiro, Costa, Doden and Chang2019; Rousseau, Reference Rousseau1995) holds that employees have subjective beliefs about the obligations between themselves and their organizations, and that the perceived fulfillment or breach of these obligations influences their affective attitudes and behaviors. From a psychological contract theory perspective, employees’ perceptions of AI trustworthiness and usefulness may reflect implicit expectations about whether the organization is fulfilling ethical obligations (e.g., fair, transparent, reliable AI) and pragmatic obligations (e.g., AI that enables effective job performance). When AI is considered trustworthy and useful, employees may perceive psychological contract fulfillment, eliciting positive affective responses and greater willingness to use the AI. Conversely, perceptions that it is untrustworthy or unhelpful may signal psychological contract breach, dampening affective responses and intensifying resistance toward the AI. As another example, social exchange theory (Emerson, Reference Emerson1976; Cropanzano & Mitchell, Reference Cropanzano and Mitchell2005) suggests that the relationships between employees and their organizations are guided by norms of reciprocity; when employees receive favorable treatment from their organization, they are likely to respond with more favorable affective attitudes and behaviors (Colquitt et al., Reference Colquitt, Scott, Rodell, Long, Zapata, Conlon and Wesson2013). In line with social exchange theory, employees may interpret how organizations implement AI as a signal of how the organization values them and their work.
Employees may evaluate whether the AI represents an investment in their success through helpful, dependable tools and reciprocate these efforts with more favorable affective attitudes and greater intentions to use the AI. If employees do not believe their organizations are providing them with adequate AI (e.g., if it undermines performance or is unreliable), they may struggle to view its use positively and may be less inclined to use it in return.

Considering the study’s findings in light of psychological contract (Coyle-Shapiro et al., Reference Coyle-Shapiro, Costa, Doden and Chang2019; Rousseau, Reference Rousseau1995) and social exchange (Cropanzano & Mitchell, Reference Cropanzano and Mitchell2005) theories illustrates how integrating organizational science and technology adoption research can enhance understanding of AI acceptance, and why research focused on the workplace is needed. In organizational settings, employees’ engagement with AI often occurs within systems that are introduced and managed by the organization and that affect core work processes (Almeida et al., Reference Almeida, Junça Silva, Lopes and Braz2025; Kelley, Reference Kelley2022; Oosthuizen, Reference Oosthuizen2019), rather than being discretionary or for entertainment. Consequently, employees’ perceptions of AI trustworthiness and usefulness likely reflect not only evaluations of the technology itself but also broader, context-embedded interpretations of the organization’s intent, obligations, and support.

Second, the findings provide theoretical insights into a well-established technology adoption predictor that may be less relevant in organizational settings. The perceived ease-of-use pathway did not replicate across samples: its indirect effect on intentions to use AI via affective attitudes was significant in Sample 1 but not in Sample 2. Although Sample 2 had lower statistical power, attributing the inconsistency to sample size alone may overlook theoretically driven explanations. Even in Sample 1, in which the indirect effect was significant, the perceived ease-of-use pathway was smaller than those of trust and perceived usefulness. This is consistent with emerging research on AI in the workplace that has found perceived ease-of-use to be a nonsignificant or weaker predictor of employees’ affective attitudes and intentions to use AI (Talha, Nasreen, Farooq & Fatima, Reference Talha, Nasreen, Farooq and Fatima2025). These patterns may be surprising given that they conflict with the foundational technology adoption literature that supports perceived ease-of-use as a central component of TAM (Davis, Reference Davis1989a, Reference Davis1989b), and they may even appear to counter our previous arguments about integrating TAM insights with psychological contract theory (Coyle-Shapiro et al., Reference Coyle-Shapiro, Costa, Doden and Chang2019; Rousseau, Reference Rousseau1995) and social exchange theory (Cropanzano & Mitchell, Reference Cropanzano and Mitchell2005). For example, it could be argued that employees would view an organization that provides easy-to-use AI as fulfilling its responsibilities and treating them well. Thus, it is reasonable to ask why perceived ease-of-use might not elicit similar attitudinal and behavioral responses.

Several alternative explanations may help clarify why perceived ease-of-use showed weaker or nonsignificant effects compared with trust and perceived usefulness: employees’ priorities when evaluating AI, the role of distal versus proximal predictors, the type of expectations employees hold for their organization, and the extent to which perceived ease-of-use reflects the AI itself versus employees’ own capabilities. One possibility is that, even if perceived ease-of-use reflects an expectation employees hold of their employers, it may have little incremental effect once higher-priority, work-related concerns are taken into account. In professional settings, employees may be more concerned about whether AI enhances their efficiency because its use is often tied to performance expectations (Chui, Hall, Singla, Sukharevsky & Yee, Reference Chui, Hall, Singla, Sukharevsky and Yee2023; Rainie, Anderson, Mcclain, Vogels & Gelles-Watnick, Reference Rainie, Anderson, Mcclain, Vogels and Gelles-Watnick2023), making perceived usefulness a more proximal predictor of affective attitudes and intentions. As such, perceived ease-of-use may play a weaker role, and its effects may be overshadowed.

It is also possible that perceived ease-of-use is more accurately modeled as a distal predictor. That is, employees may expect AI to be easy to use as a prerequisite for its usefulness and subsequent desired outcomes; finding AI easy to use may be of limited value to employees if they do not believe using it would actually benefit their performance. Consistent with this interpretation, perceived ease-of-use was a significant predictor of perceived usefulness in both samples, and supplemental analyses indicated a significant serial mediation pathway (perceived ease-of-use → perceived usefulness → affective attitudes → intentions to use; Sample 1: estimate = 0.10, 95% CI [0.07, 0.15]; Sample 2: estimate = 0.11, 95% CI [0.03, 0.30]). From a social exchange perspective, employees may view ease-of-use as such a basic obligation of responsible AI implementation that they do not interpret it as a signal of meaningful investment from the organization, especially alongside more salient perceptions such as usefulness; as a result, employees may not reciprocate with more favorable affective attitudes and stronger intentions to use the AI unless ease-of-use also results in greater perceived usefulness.
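Because a serial indirect effect is the product of every path in the chain, its point estimate can be checked by multiplying the unstandardized coefficients reported in the hypothesis results: perceived ease-of-use → perceived usefulness ($\hat{d}$), perceived usefulness → affective attitudes ($\hat{a}$), and affective attitudes → intentions ($\hat{b}$). This checks the point estimates only; the confidence intervals come from the bootstrap.

$$
\begin{aligned}
\hat{\theta}_{\text{serial}} &= \hat{d}\,\hat{a}\,\hat{b} \\
\text{Sample 1: } \hat{\theta}_{\text{serial}} &= 0.81 \times 0.42 \times 0.30 \approx 0.102 \approx 0.10 \\
\text{Sample 2: } \hat{\theta}_{\text{serial}} &= 0.87 \times 0.36 \times 0.35 \approx 0.110 \approx 0.11
\end{aligned}
$$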

Lastly, it is possible that perceived ease-of-use partially captures employees’ evaluations of their own abilities rather than assessments of the AI alone. Perceived usefulness and trustworthiness of AI are more clearly tied to evaluations of the quality, reliability, and value of the AI itself as provided by the organization. In contrast, perceived ease-of-use reflects beliefs about how easy it would be for an employee to become skillful at using AI (Venkatesh et al., Reference Venkatesh, Morris, Davis and Davis2003), which may encompass not only features of the technology (e.g., intuitive design, clear instructions) but also self-perceptions such as learning ability, prior experience with similar technology, or general self-efficacy (Venkatesh & Bala, Reference Venkatesh and Bala2008). Supplemental analyses of the samples’ characteristics are consistent with this possibility. For example, although the analyses controlled for age in both samples, a t-test revealed that Sample 2 (M = 32.69, standard deviation = 13.04) was significantly younger than Sample 1 (M = 40.02, standard deviation = 13.30), t(243.74) = −6.98, p < .001. Because younger adults tend to be more knowledgeable about AI and to have more experience using AI outside of work (Culp-Roche et al., Reference Culp-Roche, Hampton, Hensley, Wilson, Thaxton-Wiggins, Otts, Fruh and Moser2020; Pew Research Center, 2025), age differences may shape perceived ease-of-use, affective attitudes, and intentions to use AI at work, which may partially explain the different relationship patterns across the samples. Furthermore, because psychological contract and social exchange processes focus on the organization’s obligations or treatment, perceptions that stem from employees’ own abilities may not map as clearly onto these mechanisms.
If ease-of-use is less reflective of the organization’s investments, it may be a weaker driver of affective attitudes and intentions than other perceptions in the workplace setting. However, consistent with the serial mediation pathway, perceived ease-of-use, regardless of whether it reflects characteristics of the AI or of the employee, can function as an enabling factor that fosters perceptions of usefulness, which more directly influence affective attitudes and intentions to use.
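The fractional degrees of freedom in the age comparison are characteristic of Welch's unequal-variance t-test. Assuming that is the test used, the sketch below computes Welch's t and the Welch–Satterthwaite degrees of freedom from summary statistics. Using the rounded means and standard deviations reported here, it gives values close to, though not identical with, the reported t and df. The function name is hypothetical.

```python
from math import sqrt


def welch_t(m1, sd1, n1, m2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom
    computed from group means, standard deviations, and sizes."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2  # squared standard errors of each mean
    t = (m1 - m2) / sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


# Rounded summary statistics from the text: Sample 2 first, then Sample 1.
t, df = welch_t(32.69, 13.04, 177, 40.02, 13.30, 1047)
print(f"t({df:.2f}) = {t:.2f}")
```

Unlike Student's t-test, this version does not pool the two variances, which matters when group sizes differ as much as they do here (177 vs. 1,047).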

Finally, the absence of differences based on employees’ gender identity, education level, or leadership status suggests that the mechanisms underlying intentions to use AI in the workplace may operate similarly across key demographic and professional characteristics. Rather than indicating a lack of nuance, this pattern suggests that core evaluative processes related to AI adoption may be broadly shared across employee groups in organizational settings. This finding does not support assumptions that certain individual characteristics shape technology acceptance (Porter & Donthu, Reference Porter and Donthu2006; Venkatesh et al., Reference Venkatesh, Morris, Davis and Davis2003) and reinforces the robustness of the proposed predictors in the workplace, as their effects did not differ significantly across personal or professional backgrounds. From an organizational perspective, this consistency may reflect features of the work context itself. In many workplaces, employees are exposed to similar expectations, training opportunities, and norms surrounding technology use, which may reduce demographic variability in how AI is evaluated. As a result, demographic differences that often emerge in consumer or general population samples may be attenuated in organizational contexts where technology use is structured and role-based. These findings highlight the importance of considering context when interpreting individual differences in technology acceptance and suggest that workplace AI adoption may be driven more by shared evaluative criteria than by demographic distinctions.

Practical implications

The findings provide practical insights for organizations and potentially for society more broadly. For organizations, the findings highlight the need to prioritize employee perceptions when building and deploying AI systems. In particular, it is important that employees trust the AI in their workplace. Technical reliability is essential, but employees’ affective attitudes toward AI and their intentions to adopt it also depend on how they interpret the system’s transparency and fairness. Clear communication about how AI functions and what safeguards are in place can increase trust and reduce skepticism (Choung et al., Reference Choung, David and Ross2023). Embedding explainability features and offering feedback loops can also reduce concerns associated with opaque, black-box systems (Choung et al., Reference Choung, David and Ross2023).

To foster perceptions of AI usefulness, the introduction of AI tools at work should be explicitly framed around how they will enhance productivity and reduce workload burdens for employees. Involving employees in the design or selection of AI systems can also help tailor features to job-specific needs, increasing the likelihood that they will view AI as valuable to their performance. Leaders play a key role in this process by creating open environments where employees are encouraged to ask questions and explore how AI can support their work (Brougham & Haar, Reference Brougham and Haar2018). Although the results for perceived ease-of-use were inconsistent across samples, usability remains relevant, not least because perceived ease-of-use was related to perceived usefulness in both samples. Organizations should invest in intuitive interface design and ensure that AI tools are clearly aligned with employees’ job responsibilities, as compatibility with existing workflows can influence adoption (Kim et al., Reference Kim, Cha, Yoon and Lee2024). To promote perceptions of usefulness and ease-of-use, training should cover not only how to use AI but also how it aligns with employees’ job functions and workflows. When systems are seen as collaborative tools rather than replacements, they are more likely to be embraced (Almeida et al., Reference Almeida, Junça Silva, Lopes and Braz2025).

Beyond organizational practices, these findings also carry implications as AI becomes more embedded in daily life within and outside of the workplace. Because workplace AI systems often represent a sustained and consequential point of contact that individuals have with AI, employees’ experiences with AI at work may shape their expectations for how AI should function in other domains, including public services, consumer technologies, and digital platforms (de Fine Licht & de Fine Licht, 2020; Raisch & Krakowski, 2021). As a result, the workplace may serve as an important context in which individuals form broader judgments about AI’s usability, reliability, and appropriate human oversight, contributing to wider societal conversations about how to integrate AI effectively.

Limitations and future research directions

While the study offers several contributions, it is not without limitations. First, our survey used a cross-sectional design. Because cross-sectional data lack temporal precedence, we cannot draw causal inferences. Future research could incorporate longitudinal data to better capture how employee perceptions of AI evolve over time (Jeong, Kim & Lee, 2024). However, cross-sectional designs can still provide meaningful insights (Spector, 2019), particularly for examining AI-related perceptions and intentions to use AI, which concern a future-oriented outcome. Our approach follows best practices for including control variables in cross-sectional research (Spector, 2019) and is consistent with prior published studies that used cross-sectional designs to examine mediation processes (Cole, Cox & Stavros, 2019; Gürlek & Uygur, 2021). Future research should also collect objective or other-report data on employees’ AI usage at work to reduce common method bias, and it should expand the tested model to include actual AI use behaviors.

Second, the measures in our studies are well established and continue to be widely used and adapted to a variety of technologies (e.g., ChatGPT, Camilleri, 2024; mobile financial applications, Chang & Benson, 2023; telemedical services, Cobelli, Cassia & Donvito, 2023; big data tools and systems, Iyer & Bright, 2024). Even so, future research could use more recently published AI-specific measures (e.g., Brougham & Haar, 2018; Park, Woo & Kim, 2024) and examine other AI perceptions relevant to the workplace (e.g., AI-related job insecurity, perceived adaptability of AI). Future research may also integrate additional workplace-specific factors that may influence AI perceptions and their consequences. For example, capturing the role of organizational power dynamics may provide valuable insights into boundary conditions for this model. Relevant dynamics include the transparency of AI implementation, employee involvement in decisions about AI implementation and use, and the rewards or punishments associated with AI adoption.

Third, the smaller size of Sample 2 (N = 177) relative to Sample 1 (N = 1,047) may have contributed to some of the differences in hypothesis results, because smaller samples have lower statistical power to detect effects of a given size. Nonetheless, Sample 2 provides valuable complementary evidence because it was collected through a non-crowdsourced platform. Future research may benefit from obtaining larger samples from multiple sampling sources to further replicate the findings.
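The sketch below illustrates why Sample 2 carries less power. It approximates the power of a two-sided test of a single correlation using the Fisher z transformation. The population correlation (r = .15) and α = .05 are assumptions chosen only for illustration; they are not estimates from either sample. In addition, the study's hypotheses were tested with structural models and indirect effects rather than simple correlations, so the resulting numbers are indicative only.

```python
from math import atanh, sqrt
from statistics import NormalDist


def correlation_power(r: float, n: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided test of H0: rho = 0 via Fisher's z.

    The opposite (wrong-sign) rejection tail is ignored because it is negligible.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)   # about 1.96 for alpha = .05
    shift = abs(atanh(r)) * sqrt(n - 3)          # Fisher z of r divided by its standard error
    return std_normal.cdf(shift - z_crit)


ASSUMED_R = 0.15  # hypothetical population correlation, for illustration only

for label, n in [("Sample 1", 1047), ("Sample 2", 177)]:
    print(f"{label} (N = {n}): approximate power = {correlation_power(ASSUMED_R, n):.2f}")
```

Under these assumptions, the approximation gives power of about 0.51 for N = 177 and close to 1.00 for N = 1,047. An effect of this size would therefore go undetected nearly half the time in a sample the size of Sample 2.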

Fourth, our study examined general AI use in the workplace rather than specific AI technologies. Future studies should explore how this model may differ across AI functions (e.g., generative AI, predictive analytics, process automation) and applications (e.g., human resource management, task automation, chatbots) to better inform context-specific implementation strategies. Fifth, although an advantage of our study is that we sampled employees in a wide variety of jobs, we did not test environmental factors such as industry, job type, or organizational policies related to AI. These elements may significantly shape how AI is perceived and used. In addition, variables such as leadership communication or managerial support could play a key role in fostering trust and openness to change (Yue, Men & Ferguson, 2019). Finally, although the moderators we tested were nonsignificant, other individual differences may still play a role. Psychological variables such as technological self-efficacy or openness to change may offer stronger explanatory power. Future studies should test these variables as moderators to better understand variability in employee responses.

Conclusion

This study examined how employee perceptions of AI, specifically perceived usefulness, perceived ease-of-use, and trust, shape affective attitudes toward using AI and intentions to use it at work. Across two samples of working adults, affective attitudes played a key mediating role, with perceived usefulness and trust emerging as consistent predictors of adoption intentions. The findings highlight the importance of user-centered design and trust-building efforts when integrating AI into workplace settings. Moving forward, organizations should consider both technical and psychological factors when implementing AI. This means ensuring that employees are not only equipped with the necessary skills but also trust the technology they use at work.

Data Availability Statement

The data for this study are available from the corresponding author upon request.

Funding Statement

This research did not receive any specific grant from funding agencies in the public, commercial, or not-for-profit sectors.

Conflict(s) of Interest

We declare that we have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this article.

Ethical Standards

The data collection for this manuscript was approved by the Clemson University Institutional Review Board (Protocol Number: IRB2024-0248).

Appendix A

Additional attention was devoted to a potential concern about the third item in the intentions to use AI at work scale (“I would be willing to pay some money to use AI in the workplace”): that it is consumer-centric rather than employee-centric. First, recent evidence indicates that some employees pay for AI tools themselves rather than relying solely on employer-provided options. For example, a report from Deloitte (2024) found that nearly one-third of employees personally paid for the AI tools they used at work. Second, to ensure that retaining this item did not alter our conclusions, we re-ran all of the analyses outlined in the Data Analysis section without the third item. Without the item, the measurement models for Sample 1 (χ²(109) = 925.23, p < .001, CFI = 0.96, RMSEA = 0.08, SRMR = 0.06) and Sample 2 (χ²(109) = 285.91, p < .001, CFI = 0.95, RMSEA = 0.10, SRMR = 0.05) were comparable to those in the main analyses with the full three-item scale (presented in the Results section of the main manuscript). Importantly, the pattern of conclusions about hypothesis support remained the same with and without the third item. The full results are available from the corresponding author. The only notable difference was in Sample 2: without the third item, the HTMT ratio between affective attitudes toward AI at work and intentions to use AI at work fell below the 0.90 threshold (HTMT ratio = 0.81). Because the conclusions did not depend on this item, we proceeded with the analyses using the full three-item intentions to use AI at work scale.
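The two statistics referenced above can be recomputed as a check, sketched here under stated assumptions. First, the RMSEA point estimate is derived from the reported χ² values. This assumes the common formula with N − 1 in the denominator and assumes that the measurement models used the full sample sizes (N = 1,047 and N = 177). Second, an HTMT ratio is computed from an item correlation matrix using its usual definition: the mean between-construct item correlation divided by the geometric mean of each construct's mean within-construct item correlation. The correlation matrix is hypothetical and does not reproduce the study's data.

```python
from itertools import combinations
from math import sqrt
from statistics import fmean


def rmsea(chi2: float, df: int, n: int) -> float:
    """RMSEA point estimate using the common (N - 1) convention."""
    return sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def htmt(R, items_a, items_b) -> float:
    """Heterotrait-monotrait ratio from an item correlation matrix R (list of lists)."""
    if len(items_a) < 2 or len(items_b) < 2:
        raise ValueError("each construct needs at least two items")
    hetero = fmean(R[i][j] for i in items_a for j in items_b)        # between-construct
    mono_a = fmean(R[i][k] for i, k in combinations(items_a, 2))     # within construct A
    mono_b = fmean(R[j][l] for j, l in combinations(items_b, 2))     # within construct B
    return hetero / sqrt(mono_a * mono_b)


# Recompute RMSEA from the reported chi-square values (assumes full sample sizes).
print(f"Sample 1 RMSEA = {rmsea(925.23, 109, 1047):.2f}")  # 0.08
print(f"Sample 2 RMSEA = {rmsea(285.91, 109, 177):.2f}")   # 0.10

# Hypothetical matrix: items 0-2 measure one construct, items 3-4 a second construct.
R = [
    [1.00, 0.70, 0.70, 0.60, 0.60],
    [0.70, 1.00, 0.70, 0.60, 0.60],
    [0.70, 0.70, 1.00, 0.60, 0.60],
    [0.60, 0.60, 0.60, 1.00, 0.80],
    [0.60, 0.60, 0.60, 0.80, 1.00],
]
print(f"Toy HTMT = {htmt(R, [0, 1, 2], [3, 4]):.2f}")  # 0.80
```

Under these assumptions, the recomputed RMSEA values round to the reported 0.08 and 0.10. The toy matrix yields an HTMT of about 0.80, which is below the 0.90 threshold. With a two-item scale, as in the reanalysis without the third intentions item, each construct's within-construct mean rests on a single correlation.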