1 Introduction

Relationships among teachers are known to influence educational outcomes. Teachers’ relationships with each other are positively correlated with teaching performances (Spillane et al., Reference Spillane, Shirrell and Adhikari2018), perceptions of trust for peers (Kolleck et al., Reference Kolleck, Schuster, Hartmann and Gräsel2021) and students’ achievements, among others. In particular, teaching networks, composed of relationships in which teachers discuss their instructional practice with peers are vital to school climates. Teachers are more willing to engage with school policies if they feel more connected with other teachers and principals (Moolenaar et al., Reference Moolenaar, Daly and Sleegers2010). They are more willing to change and adopt new teaching practices if they are part of the teaching network and if the new practice has been adopted by teachers in their network (Penuel et al., Reference Penuel, Frank, Sun, Kim and Singleton2013). On the other hand, school climates are known to influence teaching networks. Teachers tend to have larger networks following professional development programs and tend to be more connected in schools that previously engaged in school-wide initiatives (Weinbaum et al., Reference Weinbaum, Cole, Weiss and Supovitz2008).

In this article, we study the relationship between teachers’ networks and school climates in schools. We study whether and how teachers’ advice interactions are related to their perceptions of schools. The network includes information about from whom the teachers seek advice during the school year. The school climates perceived by the teachers are measured as their responses to a set of survey questions called item responses.Footnote 1 Among the survey questions, three categories of questions were given to all teachers: perceived satisfaction with the school, perceived quality of the students, and perceived impact on school policies. To test and understand the relationship between the network and the perceived school climate, we propose a novel methodology that jointly analyzes a network and item responses from a questionnaire. Possible questions that can be answered with our methodology include whether the advice-seeking network or the latent dimensions of the advice-seeking network are related to the item responses or the latent dimensions of the item responses. Our methodology is applicable for the joint analysis of any data consisting of a network and item responses.

While several studies have looked into the relationship between the network of teachers and the school climate, rarely do they assess the relationship using fully specified statistical models (see, e.g., Broda et al., Reference Broda, Granger, Chow and Ross2023). More often, summary statistics are used as inputs for further assessment (Bonsignore et al., Reference Bonsignore, Hansen, Galyardt, Aleahmad and Hargadon2011), but focusing on summary statistics ignores important network information; in fact, two drastically different networks can be reduced to similar summary statistics, and thus the structures of the networks beyond the summary statistics are subsequently lost. For example, networks with similar node degree distributions can have different community structures (Paul & Chen, Reference Paul and Chen2022).

In this article, we develop a joint network and item response model (JNIRM), where we quantify and test the relationship between network and item responses via theorizing a shared data generation process for both data modalities. We devise the latent variables/spaces to describe the network formation (also called latent network dimensions) and the latent variables to describe the item responses, and quantify their co-varying dynamics with shared distributions. This approach allows the information in the item responses to inform the generation of the networks and has the potential to improve the cost efficiency of network studies without collecting information from new participants. Our proposed method offers new possibilities for investigations into the social factors of educational and health outcomes by increasing the amount of information utilized in estimating network parameters, leading to more powerful and cost-effective studies.

Compared with uni-modal models for only networks or item responses, our new method allows for more efficiently designed studies when the number of samples (cost of the conducting the proposed study) is constrained. Without collecting additional data with more study samples, our model is able to increase the precision of model parameter estimates and model predictive performances. This is because during our custom built Markov chain Monte Carlo (MCMC) estimation algorithm, the estimation of the latent network variables is not only informed by the network data, but also by the school climate item responses. In this way, we increase the amount of information in estimating network/item response parameters, leading to more powerful and cost-effective studies. In addition, modeling dependence as co-varying dynamics is a flexible and intuitive option. No prior knowledge about the direction of the dependence is needed.

The proposed method is different from that in Fosdick and Hoff (Reference Fosdick and Hoff2015), which models the correlation between latent variables estimated from a network and simple attribute data. Meanwhile, item responses or responses to questionnaires or test items are complicated types of attribute data with complex dimensionalities. A questionnaire consisting a multitude of survey items is often thoughtfully designed by domain expects (although AI-generated items recently became available) and is used to measure a specific facet of an individual, such as intelligence, personality trait, risk of developing psychiatric outcomes, or disordered behaviors and symptoms. Through this process, data from the item responses are used to indicate and differentiate individuals on abstract latent constructs. Not providing a latent variable theory about the generation of the item responses oversimplifies the problem and is a lost opportunity to model the measurement process. Thus, in such scenarios with multidimensional and dependent item responses, the method proposed in Fosdick and Hoff (Reference Fosdick and Hoff2015) may not be applicable.

In the following sections, we first describe the motivating application, followed by the JNIRM. We propose an MCMC algorithm to estimate the proposed model, and then we report results for the applications. Lastly, we discuss and conclude.

2 Motivating application

In this section, we present a real-life example, where the research question is whether and how a network and an item response matrix are related. School staff members in elementary schools were asked to list to whom they sought information and advice around mathematics instruction and curriculum. Data were collected in 2015 across

![]() $14$

elementary schools as part of a larger study in a mid-western school district in the United States (see more information about the data in the Supplementary Material).

$14$

elementary schools as part of a larger study in a mid-western school district in the United States (see more information about the data in the Supplementary Material).

We focus on one school from that district with 24 school staff members. Each staff member was asked to indicate to whom they turned for advice during the school year, and at most

![]() $12$

advisors were reported per teacher though all 24 listed less than

$12$

advisors were reported per teacher though all 24 listed less than

![]() $12$

. The presence of an at least monthly advice-seeking relationship is counted as an observed edge. In the adjacency matrix,

$12$

. The presence of an at least monthly advice-seeking relationship is counted as an observed edge. In the adjacency matrix,

![]() $x_{a,b}=1$

if teacher a seeks advice from teacher b. Because advice-seeking relationships are directional, and

$x_{a,b}=1$

if teacher a seeks advice from teacher b. Because advice-seeking relationships are directional, and

![]() $\boldsymbol {X}$

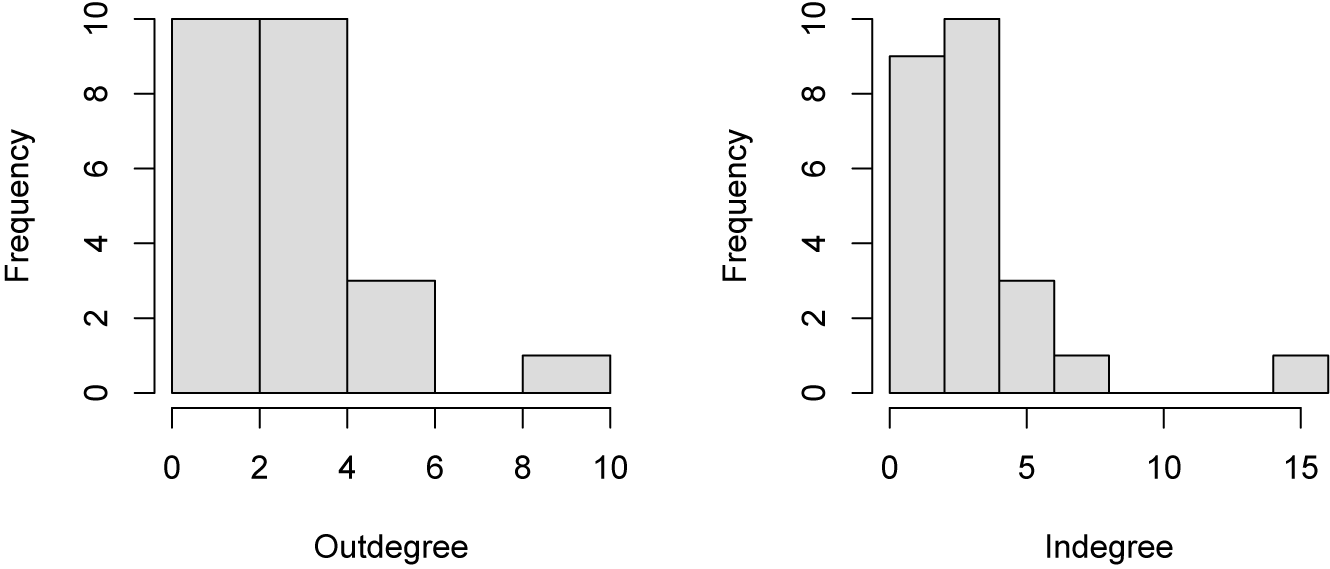

is asymmetric. Teacher a seeking advice from teacher b does not imply that teacher b seeks advice from teacher a. The degree distributions are shown in Figure 1. The indegree distribution is more skewed than the outdegree distribution suggesting that some of the advice was sought from a few experienced advisors, and not all individuals provided advice.

$\boldsymbol {X}$

is asymmetric. Teacher a seeking advice from teacher b does not imply that teacher b seeks advice from teacher a. The degree distributions are shown in Figure 1. The indegree distribution is more skewed than the outdegree distribution suggesting that some of the advice was sought from a few experienced advisors, and not all individuals provided advice.

Figure 1 Degree distribution for the advice-seeking network. Frequencies of number of persons one seeks advice from are outdegree, and frequencies of number of persons seeking advice from the same person are indegree.

Figure 2 shows the advice-seeking network where the staff members are shown as vertices and the arrows point from the advice-seeking to the advice provider. The number of arrows pointing to Node 1 indicates that Node 1 provided advice to many other staff members in that school. The density of this network is 0.14 meaning that 14% of the possible

![]() $24 \times 23$

advice-seeking relationships are observed. Reciprocity, the extent to which Node i asked Node j for advice also coincides with Node j asking Node i for advice, is 0.20 confirming that this network is largely asymmetric. Transitivity, the extent to which connections among pairs of nodes

$24 \times 23$

advice-seeking relationships are observed. Reciprocity, the extent to which Node i asked Node j for advice also coincides with Node j asking Node i for advice, is 0.20 confirming that this network is largely asymmetric. Transitivity, the extent to which connections among pairs of nodes

![]() $(i, j)$

and

$(i, j)$

and

![]() $(j, k)$

co-occurs with a connection between i and k, is 0.31. Transitivity is based on non-directional ties, so it is a better measure of how much school staff self-cluster in their networks. A transitivity of 0.31 indicates moderately low clustering.

$(j, k)$

co-occurs with a connection between i and k, is 0.31. Transitivity is based on non-directional ties, so it is a better measure of how much school staff self-cluster in their networks. A transitivity of 0.31 indicates moderately low clustering.

Figure 2 An advice-seeking social network among school staff in one school. Nodes are teachers, and the arrow points from the advice-seeker to the advice-provider.

The teachers were presented with the School Staff Social Network Questionnaire (SSSNQ; Pustejovsky & Spillane, Reference Pustejovsky and Spillane2009), which includes a variety of items about the school climate. We chose

![]() $16$

items on the questionnaire that were distributed to all teachers; of the

$16$

items on the questionnaire that were distributed to all teachers; of the

![]() $16$

items, four items are about the teacher’s overall satisfaction with the school (we will call these items the satisfaction items), seven items concern the teacher’s perceived influence over school policies (we will call these items the policy items), and five items concern the teacher’s perceptions of the students (we will call these items the student items). Each of the three subscales of the climate questionnaire includes items designed to measure a different construct: the satisfaction, the perceived influence over school policies, and the perception of students. We describe the complete list of questions under the three different constructs in the Supplementary Material.

$16$

items, four items are about the teacher’s overall satisfaction with the school (we will call these items the satisfaction items), seven items concern the teacher’s perceived influence over school policies (we will call these items the policy items), and five items concern the teacher’s perceptions of the students (we will call these items the student items). Each of the three subscales of the climate questionnaire includes items designed to measure a different construct: the satisfaction, the perceived influence over school policies, and the perception of students. We describe the complete list of questions under the three different constructs in the Supplementary Material.

The satisfaction items and the policy items were measured on a five-point Likert scale, and the student items were measured on a four-point scale. For the five-point scale, the response options were “Strongly Disagree,” “Disagree,” “Neutral,” “Agree,” and “Strongly Agree”; for the four-point scale, the response options were “None,” “A little,” “Some,” and “A great deal.” The scale data were treated as continuous using a linear model. We chose not to dichotomize the data because we found that after dichotomization, the data were not sufficiently informative for the latent structure of the items.

2.1 Dimensionality of the school climate questionnaire

We explored the dimensions of the item responses with a principle component analysis (PCA) of the correlation matrix. We applied the PCA to the data from all schools (see details of school-level dimensionality analysis in the Supplementary Material) to increase our sample size. Although a multigroup factor model or a multilevel factor model may be more appropriate, we considered a PCA as sufficient for an exploratory analysis, given that the inter-school differences were rather small (see the Supplementary Material for a comparison of four schools). The eigenvalues showed that there might be 3 or 4 components. The eigen values were

![]() $4.811 (28\%), 2.052 (14\%), 1.521 (9\%)$

, and

$4.811 (28\%), 2.052 (14\%), 1.521 (9\%)$

, and

![]() $1.060 (7\%)$

for the first four components, respectively.

$1.060 (7\%)$

for the first four components, respectively.

Given that there are three subscales in the questionnaire, we rotated the first three components to a theoretical target structure that corresponds to the three subscales (with a zero loading on the components that do not correspond to the subscale an item belongs to). All items of the same subscale were given the same high loadings in the target theoretical structure (i.e., a value of two different high values yield the same rotation result). The rotation was performed using Michael Browne’s target rotation, which is also implemented in CEFA (Browne et al., Reference Browne, Cudeck, Tateneni and Mels2008), using the target.rot function in the psych R package (Revelle & Revelle, Reference Revelle and Revelle2015).

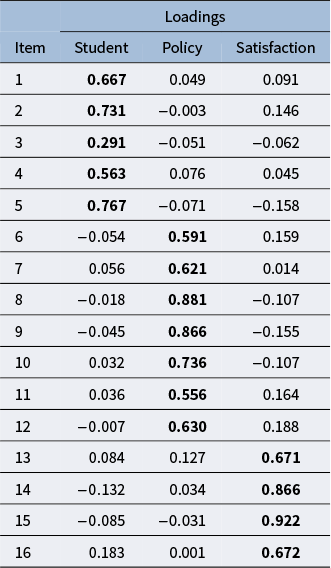

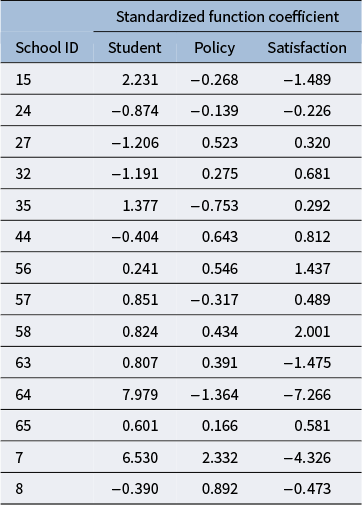

The loadings of the rotated components are shown in Table 1. The loadings that are expected to be high based on the theoretical structure are in bold. The rotated components showed a clear correspondence with the theoretical structure, with the items from the same subscale loading on the same component and having low loadings on the other two components. To assess the similarity between the rotated components and the theoretical structure, we also calculated the congruence coefficients (see the results in Table 2). The congruence coefficients are the cosines between the rotated components and the components defined by the theoretical structure. Table 2 shows that the congruence coefficients are high between the corresponding components and the theoretical structure. Therefore, we believe that the three rotated components correspond to the three subscales. We will apply the same target rotation to the results from the joint analysis of the network data and questionnaire data in the application section. The joint model will be described next.

Table 1 Rotated loadings of the PCA

Note: The loadings that are expected to be high based on the target matrix are in bold.

Table 2 Congruence coefficients

3 The joint network and item response model

In this section, we describe the JNIRM. Conceptually, the model is a joint model for two kinds of data, and it consists of three components: (1) a model for the network data, (2) a model for the item response data, and (3) a joint component that connects the previous two components. We will explain each of the three components.

3.1 Modeling the network component

In a latent space model, a node’s information is summarized by low-dimensional continuous latent variables and can sometimes be visually represented in a low-dimensional space. The latent variable value for a node can be written in vector form with the number of elements in the vector equaling the number of dimensions. We restrict the scope of our discussion to vector latent space models that use the vector product to model the connections between nodes (see Wang, Reference Wang2021 for details about vector latent space models). One reason for using vector latent space models is to maintain consistency with the item response component of the joint framework. Models for item responses often use the vector product between a latent person variable and an item slope in the item response function, for example, the two-parameter IRT model and the factor models (De Boeck & Wilson, Reference De Boeck and Wilson2004).

We can summarize the relational information of person a as a sender in the network using a K-dimensional vector

![]() $\boldsymbol {u}_a$

, called the latent position of sender a. Similarly, we can summarize the relational information of person b as a receiver in the network using a K-dimensional vector

$\boldsymbol {u}_a$

, called the latent position of sender a. Similarly, we can summarize the relational information of person b as a receiver in the network using a K-dimensional vector

![]() $\boldsymbol {v}_b$

, called the latent position of receiver b. The latent position for a node is the coordinate of the node on the corresponding latent network dimension. In this article, we model the probability of a connection from a sender a to a receiver b using the vector product

$\boldsymbol {v}_b$

, called the latent position of receiver b. The latent position for a node is the coordinate of the node on the corresponding latent network dimension. In this article, we model the probability of a connection from a sender a to a receiver b using the vector product

![]() $\boldsymbol {u}_a^T\boldsymbol {v}_b$

, called the multiplicative UV effect. In network analysis, other forms of the vector products also exist, for example, the bilinear effect (Hoff, Reference Hoff2005). The multiplicative UV effects model allows for separate sender and receiver role specific latent variables for a node and is therefore more suitable for a directed network. For a directed network with a low level of reciprocity, the multiplicative UV effect is a better choice than the bilinear effect. We use the multiplicative UV effect to model the directed relationships in the advice-seeking network.

$\boldsymbol {u}_a^T\boldsymbol {v}_b$

, called the multiplicative UV effect. In network analysis, other forms of the vector products also exist, for example, the bilinear effect (Hoff, Reference Hoff2005). The multiplicative UV effects model allows for separate sender and receiver role specific latent variables for a node and is therefore more suitable for a directed network. For a directed network with a low level of reciprocity, the multiplicative UV effect is a better choice than the bilinear effect. We use the multiplicative UV effect to model the directed relationships in the advice-seeking network.

3.1.1 Continuous edge weights

We introduce the vector latent space model for a network with continuous quantitative edge weights. Let

![]() $\boldsymbol {X}$

be an

$\boldsymbol {X}$

be an

![]() $N \times N$

adjacency matrix representing the network, where N is the number of persons in the network. The edge value

$N \times N$

adjacency matrix representing the network, where N is the number of persons in the network. The edge value

![]() $X_{a,b}$

represents the relationship from sender a to receiver b,

$X_{a,b}$

represents the relationship from sender a to receiver b,

![]() $a,b =1,\ldots ,N$

and

$a,b =1,\ldots ,N$

and

![]() $b \neq a$

. For a directed network with continuous edge weights, the latent space model can be written as

$b \neq a$

. For a directed network with continuous edge weights, the latent space model can be written as

where

![]() $\delta $

is a fixed intercept parameter accounting for the density of the network. As mentioned before,

$\delta $

is a fixed intercept parameter accounting for the density of the network. As mentioned before,

![]() $\boldsymbol {u}_a$

and

$\boldsymbol {u}_a$

and

![]() $\boldsymbol {v}_b$

are latent positions for sender a and receiver b. The error for the edge from sender a to receiver b is

$\boldsymbol {v}_b$

are latent positions for sender a and receiver b. The error for the edge from sender a to receiver b is

![]() $e_{a,b}$

, and its covariance structure with

$e_{a,b}$

, and its covariance structure with

![]() $e_{b,a}$

follows Equation (3.1). We call

$e_{b,a}$

follows Equation (3.1). We call

![]() $\sigma ^2_e$

the error variance and

$\sigma ^2_e$

the error variance and

![]() $\rho $

the within-dyadFootnote

2

correlation. The within-dyad correlation accounts for reciprocity, that is, the degree to which relationships are reciprocated. When there is reciprocity,

$\rho $

the within-dyadFootnote

2

correlation. The within-dyad correlation accounts for reciprocity, that is, the degree to which relationships are reciprocated. When there is reciprocity,

![]() $X_{a,b}$

and

$X_{a,b}$

and

![]() $X_{b,a}$

are more likely to be the same. This model is identical to keeping only the multiplicative component of the additive and multiplicative effects (AME) model of Hoff (Reference Hoff2021). We use

$X_{b,a}$

are more likely to be the same. This model is identical to keeping only the multiplicative component of the additive and multiplicative effects (AME) model of Hoff (Reference Hoff2021). We use

![]() $\boldsymbol {U} $

and

$\boldsymbol {U} $

and

![]() $\boldsymbol {V} $

to denote the

$\boldsymbol {V} $

to denote the

![]() $N \times K$

matrices of the coordinates on the latent network dimensions for sender and receiver, and

$N \times K$

matrices of the coordinates on the latent network dimensions for sender and receiver, and

![]() $\boldsymbol {E}$

to denote the

$\boldsymbol {E}$

to denote the

![]() $N \times N$

matrix of network errors. The approximation of the posterior distributions of the unknown quantities is facilitated by setting prior distributions an

$N \times N$

matrix of network errors. The approximation of the posterior distributions of the unknown quantities is facilitated by setting prior distributions an

![]() $N(0, \sigma ^{-2}_0)$

with

$N(0, \sigma ^{-2}_0)$

with

![]() $\sigma ^{-2}_0>0$

for

$\sigma ^{-2}_0>0$

for

![]() $\delta $

, a

$\delta $

, a

![]() $\text {Unif}(-1, 1)$

for

$\text {Unif}(-1, 1)$

for

![]() $\rho $

, and a

$\rho $

, and a

![]() $\text {gamma} (1/2, 1/2)$

for

$\text {gamma} (1/2, 1/2)$

for

![]() $\sigma _e^{-2}$

. The prior for the covariance of the latent network dimensions is described in the joint component.

$\sigma _e^{-2}$

. The prior for the covariance of the latent network dimensions is described in the joint component.

Random node-specific intercepts or node specific additive effects can be added to the above network model (Hoff, Reference Hoff2021; Krivitsky et al., Reference Krivitsky, Handcock, Raftery and Hoff2009). In this case, Equation (3.1) will be modified to read

![]() $X_{a,b} = \delta + \eta _a + \xi _b + \boldsymbol {u}_a^T \boldsymbol {v}_b + e_{a,b}$

, with a suitable correlation structure on

$X_{a,b} = \delta + \eta _a + \xi _b + \boldsymbol {u}_a^T \boldsymbol {v}_b + e_{a,b}$

, with a suitable correlation structure on

![]() $\eta _a, \xi _b,\boldsymbol {u}_a$

, and

$\eta _a, \xi _b,\boldsymbol {u}_a$

, and

![]() $\boldsymbol {v}_b$

. Karrer and Newman (Reference Karrer and Newman2011) make a case for modeling the heterogeneity of the node degrees with additive node-level (fixed) effects in stochastic blockmodels, resulting in degree-corrected stochastic blockmodels (see discussions about the additive node-level effects in stochastic blockmodels in Jin, Reference Jin2015 and Zhao et al., Reference Zhao, Levina and Zhu2012). Without the additive node-level effects, blockmodels tend to identify clusters based on node degrees (e.g., a cluster of active nodes versus a cluster of less active nodes), especially when there is large heterogeneity in the node degrees. However, it is less clear if the additive node-level effects, for example, the random row and column intercepts

$\boldsymbol {v}_b$

. Karrer and Newman (Reference Karrer and Newman2011) make a case for modeling the heterogeneity of the node degrees with additive node-level (fixed) effects in stochastic blockmodels, resulting in degree-corrected stochastic blockmodels (see discussions about the additive node-level effects in stochastic blockmodels in Jin, Reference Jin2015 and Zhao et al., Reference Zhao, Levina and Zhu2012). Without the additive node-level effects, blockmodels tend to identify clusters based on node degrees (e.g., a cluster of active nodes versus a cluster of less active nodes), especially when there is large heterogeneity in the node degrees. However, it is less clear if the additive node-level effects, for example, the random row and column intercepts

![]() $\eta _a, \xi _b$

, are needed to model all types of networks in latent variable models. Indeed, the additive effects are useful to capture higher (or lower) nodal activity directed to all nodes in the network irrespective of their positions in the latent space. On the other hand, the length of the latent variable of the node contributes to the activity level of the node in relation to other nodes in the space—this is captured by the multiplicative nature of the vector products to model connections. In small data sets with moderate levels of heterogeneity of the node degrees, the latent positions alone might be sufficient for modeling the activity levels of the nodes. Although, in principle, we can expect improvement in the recovery of the node degrees with added additive effects, a situation might arise where the improvement is negligible.

$\eta _a, \xi _b$

, are needed to model all types of networks in latent variable models. Indeed, the additive effects are useful to capture higher (or lower) nodal activity directed to all nodes in the network irrespective of their positions in the latent space. On the other hand, the length of the latent variable of the node contributes to the activity level of the node in relation to other nodes in the space—this is captured by the multiplicative nature of the vector products to model connections. In small data sets with moderate levels of heterogeneity of the node degrees, the latent positions alone might be sufficient for modeling the activity levels of the nodes. Although, in principle, we can expect improvement in the recovery of the node degrees with added additive effects, a situation might arise where the improvement is negligible.

In this article, we choose not to include additive effects in the network component of the framework. In our application of the advice-seeking network and the school climate questionnaire, we observe comparable recovery of node degrees as well as comparable model fit with and without the nodal effects in the model. Therefore, we think the nodal effects are not needed in the modeling of the advice-seeking network though this might not be the case for other networks.

Although the proposed approach for the network component of the joint framework can be seen as an AME model, the difference of our approach is that we connect the network component with the item response component using a covariance-based joint component. See details about the joint component in Section 3.3.

3.1.2 Identifiability

The coordinates of the latent network dimensions,

![]() $\boldsymbol {U}$

and

$\boldsymbol {U}$

and

![]() $\boldsymbol {V,}$

are identified up to rotations, while

$\boldsymbol {V,}$

are identified up to rotations, while

![]() $\boldsymbol {U}\boldsymbol {V}^T$

is directly identified following the condition that either

$\boldsymbol {U}\boldsymbol {V}^T$

is directly identified following the condition that either

![]() $\boldsymbol {U}$

or

$\boldsymbol {U}$

or

![]() $\boldsymbol {V}$

is centered. Suppose

$\boldsymbol {V}$

is centered. Suppose

![]() $\boldsymbol {1}_N$

is the N-dimensional column vector of

$\boldsymbol {1}_N$

is the N-dimensional column vector of

![]() $1$

s;

$1$

s;

![]() $\boldsymbol {J}_N = \boldsymbol {1}_N \boldsymbol {1}_N^T$

is the

$\boldsymbol {J}_N = \boldsymbol {1}_N \boldsymbol {1}_N^T$

is the

![]() $N \times N$

matrix of

$N \times N$

matrix of

![]() $1$

s; and

$1$

s; and

![]() $\boldsymbol {H}$

is the centering matrix,

$\boldsymbol {H}$

is the centering matrix,

![]() $\boldsymbol {H} = \boldsymbol {I}_N - \frac {1}{N} \boldsymbol {1}_N \boldsymbol {1}_N^T$

.

$\boldsymbol {H} = \boldsymbol {I}_N - \frac {1}{N} \boldsymbol {1}_N \boldsymbol {1}_N^T$

.

Lemma 1. Let either

![]() $\boldsymbol {U}$

or

$\boldsymbol {U}$

or

![]() $\boldsymbol {V}$

be centered, that is,

$\boldsymbol {V}$

be centered, that is,

![]() $ \boldsymbol {H}\boldsymbol {U} = \boldsymbol {U}\text {or } \boldsymbol {H}\boldsymbol {V} = \boldsymbol {V}$

. If two sets of parameters

$ \boldsymbol {H}\boldsymbol {U} = \boldsymbol {U}\text {or } \boldsymbol {H}\boldsymbol {V} = \boldsymbol {V}$

. If two sets of parameters

![]() $\delta $

,

$\delta $

,

![]() $\boldsymbol {U} $

,

$\boldsymbol {U} $

,

![]() $\boldsymbol {V} $

, and

$\boldsymbol {V} $

, and

![]() $\delta '$

,

$\delta '$

,

![]() $\boldsymbol {U'} $

,

$\boldsymbol {U'} $

,

![]() $\boldsymbol {V'} $

lead to the same

$\boldsymbol {V'} $

lead to the same

![]() $E(X_{a,b})$

, then

$E(X_{a,b})$

, then

![]() $\delta = \delta '$

,

$\delta = \delta '$

,

![]() $\boldsymbol {U} = \boldsymbol {U'} \boldsymbol {O} $

, and

$\boldsymbol {U} = \boldsymbol {U'} \boldsymbol {O} $

, and

![]() $\boldsymbol {V} = \boldsymbol {V'} \boldsymbol {O}^{-1} $

, where

$\boldsymbol {V} = \boldsymbol {V'} \boldsymbol {O}^{-1} $

, where

![]() $\boldsymbol {O}$

is a

$\boldsymbol {O}$

is a

![]() $K \times K$

nonsingular matrix.

$K \times K$

nonsingular matrix.

The proof of this lemma is in the Supplementary Material. The proof follows similar arguments to those laid out in Huang et al. (Reference Huang, Soliman, Paul and Xu2022) and Zhang et al. (Reference Zhang, Xue and Zhu2020). To resolve the rotational indeterminacy and reflection of the axes in the latent space, we follow the approach in Fosdick and Hoff (Reference Fosdick and Hoff2015), Hoff (Reference Hoff2015a, Reference Hoff2021), and Minhas et al. (Reference Minhas, Hoff and Ward2019) and use the first K left and right singular vectors of the posterior means of the vector products as the estimates of

![]() $\boldsymbol {U}$

and

$\boldsymbol {U}$

and

![]() $\boldsymbol {V}$

. In this way, we can obtain estimates of the latent positions following the convergence of the vector products, and not the convergence of the latent positions themselves. The convergence of the vector products will be assessed and reported. The consequence of this approach is that we do not have a posterior distribution of the latent positions, only of the vector products.

$\boldsymbol {V}$

. In this way, we can obtain estimates of the latent positions following the convergence of the vector products, and not the convergence of the latent positions themselves. The convergence of the vector products will be assessed and reported. The consequence of this approach is that we do not have a posterior distribution of the latent positions, only of the vector products.

3.1.3 Binary network

The observed advice-seeking network in our application is binary and not continuous. We model the observed binary edge

![]() $X_{a,b}$

as the binary indicator that the latent continuous measure,

$X_{a,b}$

as the binary indicator that the latent continuous measure,

![]() $\phi _{a,b}$

is bigger than zero, that is,

$\phi _{a,b}$

is bigger than zero, that is,

![]() $X_{a,b} = 1$

, when

$X_{a,b} = 1$

, when

![]() $\phi _{a,b}>0$

. For a binary network,

$\phi _{a,b}>0$

. For a binary network,

![]() $\phi _{a,b}$

replaces

$\phi _{a,b}$

replaces

![]() $X_{a,b}$

in the proposed methodology in Equation (3.1). The error variance of the latent continuous measure is assumed to be

$X_{a,b}$

in the proposed methodology in Equation (3.1). The error variance of the latent continuous measure is assumed to be

![]() $1$

(as in probit models) to identify the scale of the latent continuous measure.

$1$

(as in probit models) to identify the scale of the latent continuous measure.

3.2 Modeling the item response component

To model the school climate questionnaire, we propose a latent variable model with vector products for continuous observations. Through this methodology, we aim to get three sets of information: (1) item intercept, (2) item slope, and (3) the latent variable values. The item intercept reflects the average of the responses across all persons for each item. A higher intercept indicates a higher average response. The item slope reflects how well the item differentiates in terms of the latent variable. One unit difference in the latent variable results in a large difference in the probabilities of response when the item slope is large.

Consider the continuous item responses,

![]() $\boldsymbol {Y}$

, an

$\boldsymbol {Y}$

, an

![]() $N \times M$

matrix, where N is the number of persons and M is the number of items. The response,

$N \times M$

matrix, where N is the number of persons and M is the number of items. The response,

![]() $Y_{p,i}$

, represents person p’s response to item i,

$Y_{p,i}$

, represents person p’s response to item i,

![]() $p = 1,2,\ldots , N$

and

$p = 1,2,\ldots , N$

and

![]() $i = 1,2,\ldots , M$

. Let D be the number of dimensions or latent variables in the item responses. The model can be written as

$i = 1,2,\ldots , M$

. Let D be the number of dimensions or latent variables in the item responses. The model can be written as

where

![]() $\boldsymbol {\alpha }_i$

and

$\boldsymbol {\alpha }_i$

and

![]() $\boldsymbol {\theta }_p$

are the D-dimensional vectors containing the item slopes and the person latent variables of the item responses;

$\boldsymbol {\theta }_p$

are the D-dimensional vectors containing the item slopes and the person latent variables of the item responses;

![]() $\beta _i$

is the intercept of item i; and

$\beta _i$

is the intercept of item i; and

![]() $\sigma ^2_{\epsilon }$

is the error variance for the item responses. As is common in models for item responses, the parameters for the items (including the item slope,

$\sigma ^2_{\epsilon }$

is the error variance for the item responses. As is common in models for item responses, the parameters for the items (including the item slope,

![]() $\boldsymbol {\alpha }_i$

and the item intercept,

$\boldsymbol {\alpha }_i$

and the item intercept,

![]() $\beta _i$

) are fixed, and the person variable,

$\beta _i$

) are fixed, and the person variable,

![]() $\boldsymbol {\theta }_p$

are random. We use

$\boldsymbol {\theta }_p$

are random. We use

![]() $\boldsymbol {\beta } $

to denote the

$\boldsymbol {\beta } $

to denote the

![]() $M \times 1$

vector of item intercepts,

$M \times 1$

vector of item intercepts,

![]() $\boldsymbol {A}$

to denote the

$\boldsymbol {A}$

to denote the

![]() $M \times D$

matrix of item slopes,

$M \times D$

matrix of item slopes,

![]() $\boldsymbol {\Theta }$

to denote the

$\boldsymbol {\Theta }$

to denote the

![]() $N \times D$

matrix of latent variables of item responses, and

$N \times D$

matrix of latent variables of item responses, and

![]() $\boldsymbol {\Psi }$

to denote the

$\boldsymbol {\Psi }$

to denote the

![]() $N \times M$

matrix of item response errors. Let us define

$N \times M$

matrix of item response errors. Let us define

![]() $\boldsymbol {\xi }_i$

as a vector of item parameters,

$\boldsymbol {\xi }_i$

as a vector of item parameters,

![]() $ \boldsymbol {\xi }_i = (\boldsymbol {\alpha }_i^T, \beta _i)^T$

. Approximation of the posterior distribution of the item parameters is facilitated by setting a

$ \boldsymbol {\xi }_i = (\boldsymbol {\alpha }_i^T, \beta _i)^T$

. Approximation of the posterior distribution of the item parameters is facilitated by setting a

![]() $\text {MVN} (\boldsymbol {\mu }_{\xi }, \boldsymbol {\Sigma }_{\xi }), \boldsymbol {\mu }_{\xi } = (1,1,\ldots ,1,0)^T, \boldsymbol {\Sigma }_{\xi } = \boldsymbol {I}_{D+1} $

prior distribution for

$\text {MVN} (\boldsymbol {\mu }_{\xi }, \boldsymbol {\Sigma }_{\xi }), \boldsymbol {\mu }_{\xi } = (1,1,\ldots ,1,0)^T, \boldsymbol {\Sigma }_{\xi } = \boldsymbol {I}_{D+1} $

prior distribution for

![]() $\boldsymbol {\xi }_i$

. We set a prior distribution of

$\boldsymbol {\xi }_i$

. We set a prior distribution of

![]() $\text {gamma } (1/2, 1/2)$

for

$\text {gamma } (1/2, 1/2)$

for

![]() $\sigma _{\epsilon }^{-2}$

. The prior for the covariance of the latent variables of the item responses is described in the joint component. In the case of binary item response, similar to binary network edge value, we can model the binary network response as the binary indicator that the latent continuous measure,

$\sigma _{\epsilon }^{-2}$

. The prior for the covariance of the latent variables of the item responses is described in the joint component. In the case of binary item response, similar to binary network edge value, we can model the binary network response as the binary indicator that the latent continuous measure,

![]() $\eta _{p,i}$

is larger than zero, that is,

$\eta _{p,i}$

is larger than zero, that is,

![]() $Y_{p,i} = 1$

, when

$Y_{p,i} = 1$

, when

![]() $\eta _{p,i}>0$

.

$\eta _{p,i}>0$

.

Identifiability: The latent variables of the item responses and the item slopes,

![]() $\boldsymbol {\Theta }$

and

$\boldsymbol {\Theta }$

and

![]() $\boldsymbol {A,}$

are identified up to rotations while

$\boldsymbol {A,}$

are identified up to rotations while

![]() $\boldsymbol {\Theta } \boldsymbol {A}^T$

is directly identified following the condition that either

$\boldsymbol {\Theta } \boldsymbol {A}^T$

is directly identified following the condition that either

![]() $\boldsymbol {\Theta }$

or

$\boldsymbol {\Theta }$

or

![]() $\boldsymbol {A}$

is centered. Following convention, we focus on the condition that

$\boldsymbol {A}$

is centered. Following convention, we focus on the condition that

![]() $\boldsymbol {\Theta }$

is centered.

$\boldsymbol {\Theta }$

is centered.

Lemma 2. Assume

![]() $\boldsymbol {\Theta }$

is centered, that is,

$\boldsymbol {\Theta }$

is centered, that is,

![]() $ \boldsymbol {H}\boldsymbol {\Theta } = \boldsymbol {\Theta }$

. If two sets of parameters

$ \boldsymbol {H}\boldsymbol {\Theta } = \boldsymbol {\Theta }$

. If two sets of parameters

![]() $\boldsymbol {\beta }$

,

$\boldsymbol {\beta }$

,

![]() $\boldsymbol {\Theta } $

,

$\boldsymbol {\Theta } $

,

![]() $\boldsymbol {A} $

, and

$\boldsymbol {A} $

, and

![]() $\boldsymbol {\beta '}$

,

$\boldsymbol {\beta '}$

,

![]() $\boldsymbol {\Theta }' $

,

$\boldsymbol {\Theta }' $

,

![]() $\boldsymbol {A}' $

lead to the same

$\boldsymbol {A}' $

lead to the same

![]() $E(Y_{p,i})$

, then

$E(Y_{p,i})$

, then

![]() $\boldsymbol {\beta } = \boldsymbol {\beta '}$

,

$\boldsymbol {\beta } = \boldsymbol {\beta '}$

,

![]() $\boldsymbol {\Theta } = \boldsymbol {\Theta '} \boldsymbol {O} $

, and

$\boldsymbol {\Theta } = \boldsymbol {\Theta '} \boldsymbol {O} $

, and

![]() $\boldsymbol {A} = \boldsymbol {A'} \boldsymbol {O}^{-1} $

, where

$\boldsymbol {A} = \boldsymbol {A'} \boldsymbol {O}^{-1} $

, where

![]() $\boldsymbol {O}$

is a

$\boldsymbol {O}$

is a

![]() $D \times D$

nonsingular matrix.

$D \times D$

nonsingular matrix.

The proof of Lemma 2 is in the Supplementary Material. In addition to the condition identified above, we also fix the latent variables to have unit variances and orthogonality between dimensions following the current factor model (and item response model) literature. Similar to the network component of the JNIRM, we also use the first D left and right singular vectors of the posterior means of the vector products as the estimates of

![]() $\boldsymbol {\theta }$

and

$\boldsymbol {\theta }$

and

![]() $\boldsymbol {\alpha }$

. In this approach, only the convergence of the vector products is needed for the estimation of the item slopes and the person variables, not the convergence of the item slopes and the latent variables themselves.

$\boldsymbol {\alpha }$

. In this approach, only the convergence of the vector products is needed for the estimation of the item slopes and the person variables, not the convergence of the item slopes and the latent variables themselves.

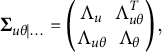

3.3 Modeling the joint component

Recall that the network component of the joint framework is modeled following Equation (3.1), and that the item response component of the joint framework is modeled following Equation (3.2). In these two components, we use normal distributions for the network dimensions and the latent variables of the item responses separately. To connect these two sets of variables, we use a multivariate normal distribution with a joint covariance matrix:

In Equation (3.3), we use

![]() $\boldsymbol {\Sigma }_{u\theta }$

to denote the omnibus covariance matrix capturing the co-varying relationships between network and item responses, which can be partitioned into four blocks,

$\boldsymbol {\Sigma }_{u\theta }$

to denote the omnibus covariance matrix capturing the co-varying relationships between network and item responses, which can be partitioned into four blocks,

![]() $\Lambda _u$

,

$\Lambda _u$

,

![]() $\Lambda _{u\theta }^T$

,

$\Lambda _{u\theta }^T$

,

![]() $\Lambda _{u\theta }$

, and

$\Lambda _{u\theta }$

, and

![]() $\Lambda _{\theta }$

with

$\Lambda _{\theta }$

with

![]() $\Lambda _{u\theta }^T$

being the transpose of

$\Lambda _{u\theta }^T$

being the transpose of

![]() $\Lambda _{u\theta }$

containing the same parameters. In this notation, for simplicity and to reduce the complexity of notations, we use subscript u to denote the network component, omitting subscript v, and subscript

$\Lambda _{u\theta }$

containing the same parameters. In this notation, for simplicity and to reduce the complexity of notations, we use subscript u to denote the network component, omitting subscript v, and subscript

![]() $\theta $

to denote the item response component. Approximation of the posterior distribution of

$\theta $

to denote the item response component. Approximation of the posterior distribution of

![]() $ \boldsymbol {\Sigma }_{u\theta } $

is facilitated by setting a prior distribution of

$ \boldsymbol {\Sigma }_{u\theta } $

is facilitated by setting a prior distribution of

![]() $ \text {Wishart} (\boldsymbol {I}_{2K+D}, 2K+D+2)$

. The covariance of the latent network dimensions

$ \text {Wishart} (\boldsymbol {I}_{2K+D}, 2K+D+2)$

. The covariance of the latent network dimensions

![]() $(\boldsymbol {u}_p, \boldsymbol {v}_p)^T$

is the

$(\boldsymbol {u}_p, \boldsymbol {v}_p)^T$

is the

![]() $2K \times 2K$

matrix

$2K \times 2K$

matrix

![]() $\boldsymbol {\Lambda }_{u}$

, and the covariance of the latent variables of the item responses is the

$\boldsymbol {\Lambda }_{u}$

, and the covariance of the latent variables of the item responses is the

![]() $D \times D$

matrix

$D \times D$

matrix

![]() $\boldsymbol {\Lambda }_{\theta }$

. If the network dimensions are independent, then

$\boldsymbol {\Lambda }_{\theta }$

. If the network dimensions are independent, then

![]() $\boldsymbol {\Lambda }_{u}$

is a diagonal matrix. If the dimensions of the item responses are independent, then

$\boldsymbol {\Lambda }_{u}$

is a diagonal matrix. If the dimensions of the item responses are independent, then

![]() $\boldsymbol {\Lambda }_{\theta }$

is a diagonal matrix. In JNIRM, we can test whether the network and item responses are dependent by testing whether the

$\boldsymbol {\Lambda }_{\theta }$

is a diagonal matrix. In JNIRM, we can test whether the network and item responses are dependent by testing whether the

![]() $D \times 2K$

matrix

$D \times 2K$

matrix

![]() $\boldsymbol {\Lambda }_{u\theta } = 0.$

See details in the next section. The model is estimated using a Gibbs sampler outlined in Section 4.

$\boldsymbol {\Lambda }_{u\theta } = 0.$

See details in the next section. The model is estimated using a Gibbs sampler outlined in Section 4.

3.4 Advantages

The superiority of JNIRM includes (1) more realistic model representation of the mutual impact of social relationship and school climate, (2) flexible accommodations of missing observations, and (3) cost effective study designs that improve precision of inference without collecting additional data. First, unlike regression models that assume one-directional relationships between advice-seeking networks and three school climate dimensions,—assuming that school climate dimensions impact teachers’ advice-seeking behaviors or vice versa—JNIRM acknowledges the mutual relationship between them. Changes in the school climate often correlate with changes in advice-seeking networks, but teachers’ relationships with each other can also influence school climate. In this way, JNIRM can offer a more realistic representation of the relationship between the advice-seeking networks and school climate, allowing for a more flexible interpretation of the results. Second, network regression models with school climate as covariates assume that each teacher’s school climate score is available (covariates are assumed to be fixed observations in a regression model) and that the school climate data are fully observed (no missing observation can be accommodated in covariates of a regression model). This assumption becomes problematic when data include missing observations for teacher’s school climate scores; in such situations, these teachers’ information may be discarded from the model, or additional data processing procedures for missing data are warranted, which often comes with their own modeling assumptions and inconsistencies. Regression methods struggle to handle situations where data are incomplete when incorporating school climate information as covariates. Lastly, by simultaneously modeling the reciprocal influence between networks and behaviors in a shared data generation process, we are able to improve model performance and prediction accuracy in certain situations where networks are sparse. During the MCMC sampling, the estimation of the latent network variables is not only informed by the network data, but also by the school climate item responses. In this way, without collecting additional data with more sample size, we are able to increase the amount of information in estimating network parameters, leading to more powerful and cost-effective studies.

3.5 Exploring the dependence between the network and item responses

In this section, we discuss how to make inferences regarding the dependence between the network and item responses. Due to the previously described solution for the identification issues, we cannot directly determine uncertainty about the covariance matrix based on the MCMC chain. Instead, following Fosdick and Hoff (Reference Fosdick and Hoff2015), we use the multivariate test of independence and the CCA to study the dependence between the two components of the joint framework. We first discuss the test and then CCA.

3.5.1 The multivariate test of independence

The classical multivariate test of independence (see Anderson, Reference Anderson1962) is applied using the estimated network dimensions and the latent variables of the item response. More specifically, the null and the alternative hypotheses of the test are

The test statisticFootnote

3

is

![]() $t = \frac {\max _{ \Lambda _u, \Lambda _{\theta }} L_0(\Lambda _u, \Lambda _{\theta } | \boldsymbol {u}_p, \boldsymbol {v}_p, \boldsymbol {\theta }_p) }{ \max _{\boldsymbol {\Sigma }_{u\theta }} L(\boldsymbol {\Sigma }_{u\theta }|\boldsymbol {u}_p, \boldsymbol {v}_p, \boldsymbol {\theta }_p)} = \left ( \frac { |\hat {\boldsymbol {\Sigma }}_{u\theta }|}{|\hat {\Lambda }_u| \cdot | \hat {\Lambda }_{\theta }|} \right )$

, where

$t = \frac {\max _{ \Lambda _u, \Lambda _{\theta }} L_0(\Lambda _u, \Lambda _{\theta } | \boldsymbol {u}_p, \boldsymbol {v}_p, \boldsymbol {\theta }_p) }{ \max _{\boldsymbol {\Sigma }_{u\theta }} L(\boldsymbol {\Sigma }_{u\theta }|\boldsymbol {u}_p, \boldsymbol {v}_p, \boldsymbol {\theta }_p)} = \left ( \frac { |\hat {\boldsymbol {\Sigma }}_{u\theta }|}{|\hat {\Lambda }_u| \cdot | \hat {\Lambda }_{\theta }|} \right )$

, where

![]() $L_0$

and L are the likelihood with and without restricting

$L_0$

and L are the likelihood with and without restricting

![]() $\Lambda _{u\theta }=0$

, and estimates of the covariance matrix are obtained using the estimated latent network dimensions and the latent variables of the item responses. The critical region of the test is

$\Lambda _{u\theta }=0$

, and estimates of the covariance matrix are obtained using the estimated latent network dimensions and the latent variables of the item responses. The critical region of the test is

![]() $t < t(\alpha )$

, where

$t < t(\alpha )$

, where

![]() $t(\alpha )$

is the threshold value such that the probability of an observed t is smaller than

$t(\alpha )$

is the threshold value such that the probability of an observed t is smaller than

![]() $t(\alpha )$

with probability

$t(\alpha )$

with probability

![]() $\alpha $

.

$\alpha $

.

3.5.2 Canonical correlation analysis

Following the rejection of the null hypothesis in the classical multivariate test of independence between the network and item responses, we may wish to know where the lack of independence lies. We can use the CCA to achieve this goal. In this section, we provide a brief overview of CCA, and we focus on how it can be applied to understand the relationship between the network and item responses. For more detailed discussions about this topic, we refer readers to Anderson (Reference Anderson1962), Marden (Reference Marden2015), Sherry and Henson (Reference Sherry and Henson2005), and Thorndike (Reference Thorndike2000).

CCA is a useful technique to study the relationship between two sets of variables. In this case, the first set of variables includes the network dimensions from the network component of the JNIRM, and the second set of variables includes the latent variables from the item response component of the JNIRM. Using CCA, we estimate the largest correlation obtainable between a linear combination of the network dimensions and a linear combination of the latent variables of the item responses. Then, a second largest correlation is obtained with linear combinations that are uncorrelated with the first linear combinations. The process is repeated, with each set of linear combinations maximizing the correlation subject to being uncorrelated with the previous combinations.

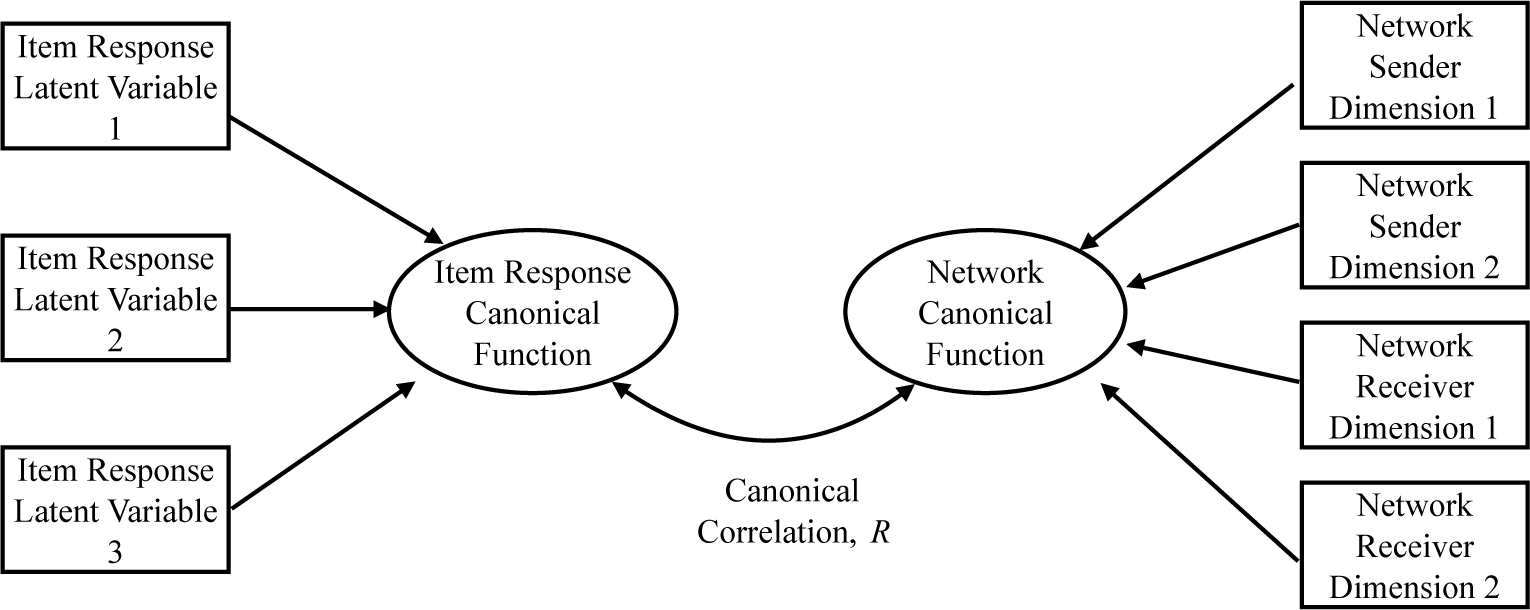

In Figure 3, we demonstrate CCA with a hypothetical JNIRM when the number of dimensions for the network is two, and the number of dimensions for the item responses is three. In the left side of the figure, the latent variables of the item responses are linearly combined to create the item response canonical function, and a similar process occurs in the right side of the figure for the network. The weights of the linear combination are called the canonical weights or the function coefficients, and the resulting weighted sum is called the canonical function. For example, an item response canonical function is the weighted sum of the latent variables of the item responses. There can be as many canonical functions as the smallest set of variables at one of the two sides. The canonical functions are orthogonal to each other following the independence of the linear combinations. We will base our interpretations with CCA on standardized function coefficients.

Figure 3 Illustration of the network and item response canonical functions in a canonical correlation analysis with three latent variables from the item responses and two network dimensions. The canonical correlation is the correlation between the network and item response canonical functions, which are linear combinations of the item response latent variables and the network dimensions.

In the center of Figure 3, the canonical correlation,

![]() $R,$

is shown as the correlation between the network and the item response canonical functions. The square of the canonical correlation,

$R,$

is shown as the correlation between the network and the item response canonical functions. The square of the canonical correlation,

![]() $R^2$

, represents the proportion of variance shared by the network and the item response canonical functions. The square of the second canonical correlation indicates the proportion of the shared variance between the second pair of canonical functions based on the remaining relationship (independent of the first pair of canonical functions).

$R^2$

, represents the proportion of variance shared by the network and the item response canonical functions. The square of the second canonical correlation indicates the proportion of the shared variance between the second pair of canonical functions based on the remaining relationship (independent of the first pair of canonical functions).

Significance tests can be conducted in association with the canonical correlations. Testing each correlation separately for statistical significance is not readily available, instead, the correlations are tested in a hierarchal fashion. First, we test whether all correlations are zero; if that null hypothesis is rejected, we can test whether the

![]() $I-1$

(I is the total number of canonical correlations) smallest correlations are—or whether the remaining shared variance is—zero, etc.

$I-1$

(I is the total number of canonical correlations) smallest correlations are—or whether the remaining shared variance is—zero, etc.

4 Bayesian inference

In this section, we propose an algorithm for estimating the proposed JNIRM using MCMC methods. We devise a Gibbs sampler for the proposed model and discuss how to model binary data. In the following, we provide details of the full conditionals for each unknown quantity, and the algorithm is implemented through iterative sampling of the unknown quantities from their full conditional distributions. With sufficient iterations, we obtain stable Markov chains to approximate various quantities of the targeted posterior distributions. With random initial values on the unknown parameters, we conduct posterior computation by iterating the following steps:

-

• simulate

$\boldsymbol {U}, \boldsymbol {V}$

from their full conditional distributions;

$\boldsymbol {U}, \boldsymbol {V}$

from their full conditional distributions; -

• simulate

$\boldsymbol {\Theta }$

from their full conditional distributions;

$\boldsymbol {\Theta }$

from their full conditional distributions; -

• simulate

$\boldsymbol {\Sigma }_{u \theta }$

from its full conditional distribution;

$\boldsymbol {\Sigma }_{u \theta }$

from its full conditional distribution; -

• simulate

$\sigma ^{-2}_e$

,

$\sigma ^{-2}_e$

,

$\rho ,$

and

$\rho ,$

and

$\delta $

from their full conditional distributions;

$\delta $

from their full conditional distributions; -

• simulate

$\sigma ^{-2}_{\epsilon }$

from its full conditional distributions;

$\sigma ^{-2}_{\epsilon }$

from its full conditional distributions; -

• simulate

$\boldsymbol {\xi }_i$

from its full conditional distributions.

$\boldsymbol {\xi }_i$

from its full conditional distributions.

4.1 Full conditionals of the latent variables and the covariance

4.1.1 Multiplicative UV effect

The item responses are related to the network through the dependence between

![]() $\boldsymbol {\Theta }$

and

$\boldsymbol {\Theta }$

and

![]() $\boldsymbol {U}, \boldsymbol {V}$

. We are interested in the joint full conditional distribution of

$\boldsymbol {U}, \boldsymbol {V}$

. We are interested in the joint full conditional distribution of

![]() $\boldsymbol {\Theta }$

and

$\boldsymbol {\Theta }$

and

![]() $\boldsymbol {U}, \boldsymbol {V}$

. For person p, we focus on the first dimension for sender,

$\boldsymbol {U}, \boldsymbol {V}$

. For person p, we focus on the first dimension for sender,

![]() $u_{p,1}$

conditional on the other dimensions,

$u_{p,1}$

conditional on the other dimensions,

![]() $u_{p,2},\ldots ,u_{p,K}$

and all dimensions of

$u_{p,2},\ldots ,u_{p,K}$

and all dimensions of

![]() $\boldsymbol {v}_p $

,

$\boldsymbol {v}_p $

,

![]() $\boldsymbol {v}_p = (v_{p,1},\ldots ,v_{p,K})^T$

. Given that

$\boldsymbol {v}_p = (v_{p,1},\ldots ,v_{p,K})^T$

. Given that

![]() $\boldsymbol {u}_p, \boldsymbol {v}_p$

, and

$\boldsymbol {u}_p, \boldsymbol {v}_p$

, and

![]() $\boldsymbol {\theta }_p$

follow a multivariate normal distribution, the joint probability model for

$\boldsymbol {\theta }_p$

follow a multivariate normal distribution, the joint probability model for

![]() $u_{p,1}$

and

$u_{p,1}$

and

![]() $\theta _{p,1}$

,…,

$\theta _{p,1}$

,…,

![]() $\theta _{p,D}$

conditional on

$\theta _{p,D}$

conditional on

![]() $u_{p,2},\ldots ,u_{p,K}$

and

$u_{p,2},\ldots ,u_{p,K}$

and

![]() $\boldsymbol {v}_p$

is also a multivariate normal distribution with conditional mean

$\boldsymbol {v}_p$

is also a multivariate normal distribution with conditional mean

![]() $\boldsymbol {\mu }_{u\theta |\dots }$

and conditional covariance matrix

$\boldsymbol {\mu }_{u\theta |\dots }$

and conditional covariance matrix

![]() $\boldsymbol {\Sigma }_{u\theta |\dots }$

. We can define the conditional covariance matrix as a block matrix:

$\boldsymbol {\Sigma }_{u\theta |\dots }$

. We can define the conditional covariance matrix as a block matrix:

$ \boldsymbol {\Sigma }_{u\theta |\dots } = \begin {pmatrix} \Lambda _u &\Lambda _{u\theta }^T\\ \Lambda _{u\theta } &\Lambda _{\theta } \end {pmatrix}, \nonumber $

and the inverse of this block matrix is

$ \boldsymbol {\Sigma }_{u\theta |\dots } = \begin {pmatrix} \Lambda _u &\Lambda _{u\theta }^T\\ \Lambda _{u\theta } &\Lambda _{\theta } \end {pmatrix}, \nonumber $

and the inverse of this block matrix is

$$ \begin{align} \boldsymbol{\Sigma}_{u\theta|\dots}^{-1} = \begin{pmatrix} \Lambda_u &\Lambda_{u\theta}^T\\ \Lambda_{u\theta} &\Lambda_{\theta} \end{pmatrix}^{-1} = \begin{pmatrix} (\Lambda_u - \Lambda_{u\theta}^T \Lambda_{\theta}^{-1} \Lambda_{u\theta})^{-1} & - (\Lambda_u - \Lambda_{u\theta}^T \Lambda_{\theta}^{-1} \Lambda_{u\theta})^{-1} \Lambda_{u\theta}^T \Lambda_{\theta}^{-1}\\ - (\Lambda_{\theta} - \Lambda_{u\theta} \Lambda_u^{-1} \Lambda_{u\theta}^T)^{-1} \Lambda_{u\theta} \Lambda_u^{-1} &(\Lambda_{\theta} - \Lambda_{u\theta} \Lambda_u^{-1} \Lambda_{u\theta}^T)^{-1} \end{pmatrix}. \end{align} $$

$$ \begin{align} \boldsymbol{\Sigma}_{u\theta|\dots}^{-1} = \begin{pmatrix} \Lambda_u &\Lambda_{u\theta}^T\\ \Lambda_{u\theta} &\Lambda_{\theta} \end{pmatrix}^{-1} = \begin{pmatrix} (\Lambda_u - \Lambda_{u\theta}^T \Lambda_{\theta}^{-1} \Lambda_{u\theta})^{-1} & - (\Lambda_u - \Lambda_{u\theta}^T \Lambda_{\theta}^{-1} \Lambda_{u\theta})^{-1} \Lambda_{u\theta}^T \Lambda_{\theta}^{-1}\\ - (\Lambda_{\theta} - \Lambda_{u\theta} \Lambda_u^{-1} \Lambda_{u\theta}^T)^{-1} \Lambda_{u\theta} \Lambda_u^{-1} &(\Lambda_{\theta} - \Lambda_{u\theta} \Lambda_u^{-1} \Lambda_{u\theta}^T)^{-1} \end{pmatrix}. \end{align} $$

We can further define the inverse of the conditional covariance matrix as another block matrix:

$ \boldsymbol {\Sigma }_{u\theta |\dots }^{-1} = \begin {pmatrix} Q_u &Q_{\theta u}\\ Q_{u\theta } & Q_{\theta }\end {pmatrix}, $

with each component as a function of

$ \boldsymbol {\Sigma }_{u\theta |\dots }^{-1} = \begin {pmatrix} Q_u &Q_{\theta u}\\ Q_{u\theta } & Q_{\theta }\end {pmatrix}, $

with each component as a function of

![]() $\Lambda $

s. Therefore, the probability model for

$\Lambda $

s. Therefore, the probability model for

![]() $u_{p,i}, \theta _{p,1},\ldots ,\theta _{p,D}$

conditional on

$u_{p,i}, \theta _{p,1},\ldots ,\theta _{p,D}$

conditional on

![]() $u_{p,2},\ldots , u_{p,K}, v_{p,1},\ldots ,v_{p,K}$

can be written as

$u_{p,2},\ldots , u_{p,K}, v_{p,1},\ldots ,v_{p,K}$

can be written as

where

![]() $S_u$

is the first block of the block matrix

$S_u$

is the first block of the block matrix ![]()

Let us now look at the first dimension for all persons and rewrite the network component as:

![]() $\boldsymbol {R} = \boldsymbol {X}- \delta \boldsymbol {1} \boldsymbol {1}^T - \sum _{k=2}^K \boldsymbol {u}_k \boldsymbol {v}_k^T $

, where

$\boldsymbol {R} = \boldsymbol {X}- \delta \boldsymbol {1} \boldsymbol {1}^T - \sum _{k=2}^K \boldsymbol {u}_k \boldsymbol {v}_k^T $

, where

![]() $\boldsymbol {u}_k$

is the

$\boldsymbol {u}_k$

is the

![]() $N \times 1$

vector representing the kth network dimension for sender, and

$N \times 1$

vector representing the kth network dimension for sender, and

![]() $\boldsymbol {v}_k$

is the

$\boldsymbol {v}_k$

is the

![]() $N \times 1$

vector representing the kth network dimension for receiver. Then, we have

$N \times 1$

vector representing the kth network dimension for receiver. Then, we have

![]() $\boldsymbol {R} = \boldsymbol {u}_1^T \boldsymbol {v}_1 + \boldsymbol {E}$

. Decorrelating the error, we have

$\boldsymbol {R} = \boldsymbol {u}_1^T \boldsymbol {v}_1 + \boldsymbol {E}$

. Decorrelating the error, we have

![]() $\boldsymbol {\tilde {R}} = c \boldsymbol {R} + d \boldsymbol {R}^T$

, where

$\boldsymbol {\tilde {R}} = c \boldsymbol {R} + d \boldsymbol {R}^T$

, where

![]() $ c= \sigma _e^{-1} ((1+\rho )^{-1/2} + (1- \rho )^{-1/2})/2$

and

$ c= \sigma _e^{-1} ((1+\rho )^{-1/2} + (1- \rho )^{-1/2})/2$

and

![]() $ d= \sigma _e^{-1} ((1+\rho )^{-1/2} - (1- \rho )^{-1/2})/2$

. We can write the model for

$ d= \sigma _e^{-1} ((1+\rho )^{-1/2} - (1- \rho )^{-1/2})/2$

. We can write the model for

![]() $\boldsymbol {\tilde {R}}$

in a vectorized form and obtain the likelihood for the decorrelated network as

$\boldsymbol {\tilde {R}}$

in a vectorized form and obtain the likelihood for the decorrelated network as

![]() $ f(\boldsymbol {\tilde {r}} | \boldsymbol {v}_1, \boldsymbol {u}_1) \propto \exp \left ( - \frac {1}{2} \left ( \boldsymbol {\tilde {r}} - \boldsymbol {M} \boldsymbol {u}_i \right )^T\left ( \boldsymbol {\tilde {r}} - \boldsymbol {M} \boldsymbol {u}_i \right ) \right )$

and

$ f(\boldsymbol {\tilde {r}} | \boldsymbol {v}_1, \boldsymbol {u}_1) \propto \exp \left ( - \frac {1}{2} \left ( \boldsymbol {\tilde {r}} - \boldsymbol {M} \boldsymbol {u}_i \right )^T\left ( \boldsymbol {\tilde {r}} - \boldsymbol {M} \boldsymbol {u}_i \right ) \right )$

and

![]() $\boldsymbol {M} = c(\boldsymbol {v}_1 \otimes \boldsymbol {I}) + d(\boldsymbol {I} \otimes \boldsymbol {v}_1)$

.

$\boldsymbol {M} = c(\boldsymbol {v}_1 \otimes \boldsymbol {I}) + d(\boldsymbol {I} \otimes \boldsymbol {v}_1)$

.

We can write the full conditional distribution of

![]() $\boldsymbol {u}_{1}$

:

$\boldsymbol {u}_{1}$

:

The full conditional distribution of the other network dimensions and the latent variables of item responses can be derived in a similar fashion.

Recall that prior for

![]() $\boldsymbol {\Sigma }_{u \theta }$

is

$\boldsymbol {\Sigma }_{u \theta }$

is

![]() $\boldsymbol {\Sigma }_{u \theta }^{-1} \sim \text {Wishart} (\boldsymbol {I}_{2K+D}, 2K+D+2)$

. Let

$\boldsymbol {\Sigma }_{u \theta }^{-1} \sim \text {Wishart} (\boldsymbol {I}_{2K+D}, 2K+D+2)$

. Let

![]() $\boldsymbol {F'}$

be an

$\boldsymbol {F'}$

be an

![]() $N \times (2K+D)$

matrix with the pth row as

$N \times (2K+D)$

matrix with the pth row as

![]() $(\boldsymbol {u}_{p}^T, \boldsymbol {v}_{p}^T, \boldsymbol {\theta }_{p}^T)$

. The full conditional for

$(\boldsymbol {u}_{p}^T, \boldsymbol {v}_{p}^T, \boldsymbol {\theta }_{p}^T)$

. The full conditional for

![]() $\boldsymbol {\Sigma }_{u \theta }$

follows a inverse-Wishart

$\boldsymbol {\Sigma }_{u \theta }$

follows a inverse-Wishart

![]() $(\boldsymbol {I}_{2K+D} + \boldsymbol {F'}^T \boldsymbol {F'}, N+2K+D+2)$

.

$(\boldsymbol {I}_{2K+D} + \boldsymbol {F'}^T \boldsymbol {F'}, N+2K+D+2)$

.

4.2 Full conditionals of

$\sigma ^{-2}_e$

,

$\sigma ^{-2}_e$

,

$\rho $

, and

$\rho $

, and

$\delta $

$\delta $

To derive the full conditional distribution of the network error term, we look at the network model with only the error term:

![]() $\boldsymbol {E} = \boldsymbol {X} - \delta \boldsymbol {1} \boldsymbol {1}^T - \boldsymbol {U}^T \boldsymbol {U}$

for the bilinear effect, and

$\boldsymbol {E} = \boldsymbol {X} - \delta \boldsymbol {1} \boldsymbol {1}^T - \boldsymbol {U}^T \boldsymbol {U}$

for the bilinear effect, and

![]() $\boldsymbol {E} = \boldsymbol {X} - \delta \boldsymbol {1} \boldsymbol {1}^T - \boldsymbol {U}^T \boldsymbol {V}$

for the multiplicative UV effect. Recall that the prior for

$\boldsymbol {E} = \boldsymbol {X} - \delta \boldsymbol {1} \boldsymbol {1}^T - \boldsymbol {U}^T \boldsymbol {V}$

for the multiplicative UV effect. Recall that the prior for

![]() $\sigma _e^{-2}$

is gamma

$\sigma _e^{-2}$

is gamma

![]() $(1/2, 1/2)$

. Therefore, the full conditional of

$(1/2, 1/2)$

. Therefore, the full conditional of

![]() $\sigma _e^{-2}$

is also gamma

$\sigma _e^{-2}$

is also gamma

$\left (\frac {N^2 + 1}{2}, 1/2 \left ( 1+ \sum _{a < b} \begin {pmatrix} e_{a,b}\\ e_{b,a} \end {pmatrix}^T \begin {pmatrix} 1 & \rho \\ \rho & 1 \end {pmatrix}^{-1} \begin {pmatrix} e_{a,b}\\ e_{b,a} \end {pmatrix} + \sum _{a =1}^N (1 + \rho )^{-1} e_{a,a} ^2 \right )\right )$

.

$\left (\frac {N^2 + 1}{2}, 1/2 \left ( 1+ \sum _{a < b} \begin {pmatrix} e_{a,b}\\ e_{b,a} \end {pmatrix}^T \begin {pmatrix} 1 & \rho \\ \rho & 1 \end {pmatrix}^{-1} \begin {pmatrix} e_{a,b}\\ e_{b,a} \end {pmatrix} + \sum _{a =1}^N (1 + \rho )^{-1} e_{a,a} ^2 \right )\right )$

.

For

![]() $\rho $

, the full conditional distribution of the within-dyad correlation is not a well-known distribution. To update parameter

$\rho $

, the full conditional distribution of the within-dyad correlation is not a well-known distribution. To update parameter

![]() $\rho $

, we use Metropolis–Hastings with a uniform prior between

$\rho $

, we use Metropolis–Hastings with a uniform prior between

![]() $-1$

and

$-1$

and

![]() $1$

. We approximate the full conditional distribution by sampling values from a proposed probability model and accept with some probability. The estimation of the intercept parameter,

$1$

. We approximate the full conditional distribution by sampling values from a proposed probability model and accept with some probability. The estimation of the intercept parameter,

![]() $\delta $

, can be seen as a Bayesian linear regression problem with a design matrix

$\delta $

, can be seen as a Bayesian linear regression problem with a design matrix

![]() $\boldsymbol {1} \boldsymbol {1}^T $

and a normal prior.

$\boldsymbol {1} \boldsymbol {1}^T $

and a normal prior.

4.3 Full conditionals of item parameters and

$\sigma ^{-2}_{\epsilon }$

$\sigma ^{-2}_{\epsilon }$

Recall that

![]() $\boldsymbol {\xi }_i$

is the

$\boldsymbol {\xi }_i$

is the

![]() $(D +1) \times 1$

vector of parameters for item i,

$(D +1) \times 1$

vector of parameters for item i,

![]() $\boldsymbol {\xi }_i = (\boldsymbol {\alpha }_i^T, \beta _i)^T$

. To derive the full conditional distributions of the item parameters, we rewrite the model for the ith item as

$\boldsymbol {\xi }_i = (\boldsymbol {\alpha }_i^T, \beta _i)^T$

. To derive the full conditional distributions of the item parameters, we rewrite the model for the ith item as

where

![]() $\boldsymbol {y}_i$

is the ith column vector of the item response matrix;

$\boldsymbol {y}_i$

is the ith column vector of the item response matrix;

![]() $\boldsymbol {G}$

is an

$\boldsymbol {G}$

is an

![]() $N \times (D + 1)$

design matrix,

$N \times (D + 1)$

design matrix,

![]() $\boldsymbol {G} = (\boldsymbol {\Theta }, \boldsymbol {1}_{N \times 1})$

; and

$\boldsymbol {G} = (\boldsymbol {\Theta }, \boldsymbol {1}_{N \times 1})$

; and

![]() $\boldsymbol {\Theta }$

is the

$\boldsymbol {\Theta }$

is the

![]() $N \times D$

matrix of the latent variables of the item responses. The error of the item response model follows a normal distribution,

$N \times D$

matrix of the latent variables of the item responses. The error of the item response model follows a normal distribution,

![]() $\epsilon _{p,i} \overset {iid}{\sim } N(0, \sigma ^2_{\epsilon })$

.

$\epsilon _{p,i} \overset {iid}{\sim } N(0, \sigma ^2_{\epsilon })$

.

Recall that the prior for

![]() $\boldsymbol {\xi }_i$

is a multivariate normal distribution,

$\boldsymbol {\xi }_i$

is a multivariate normal distribution,

![]() $\boldsymbol {\xi }_i \sim N (\boldsymbol {\mu }_{\xi }, \boldsymbol {\Sigma }_{\xi })$

, then the full conditional of

$\boldsymbol {\xi }_i \sim N (\boldsymbol {\mu }_{\xi }, \boldsymbol {\Sigma }_{\xi })$

, then the full conditional of

![]() $\boldsymbol {\xi }_i$

also follows a multivariate normal distribution with mean

$\boldsymbol {\xi }_i$

also follows a multivariate normal distribution with mean

![]() $\boldsymbol {\mu }_{\xi }^{\ast}$

and variance

$\boldsymbol {\mu }_{\xi }^{\ast}$

and variance

![]() $\boldsymbol {\Sigma }_{\xi }^{\ast}$

:

$\boldsymbol {\Sigma }_{\xi }^{\ast}$

:

![]() $\boldsymbol {\mu }_{\xi }^{\ast} = \Sigma _{\xi }^{\ast} \left ( \sigma ^{-2}_{\epsilon } \boldsymbol {G}^T \boldsymbol {\eta }_i + \boldsymbol {\Sigma }_{\xi }^{-1} \boldsymbol {\mu }_{\xi } \right )$

,

$\boldsymbol {\mu }_{\xi }^{\ast} = \Sigma _{\xi }^{\ast} \left ( \sigma ^{-2}_{\epsilon } \boldsymbol {G}^T \boldsymbol {\eta }_i + \boldsymbol {\Sigma }_{\xi }^{-1} \boldsymbol {\mu }_{\xi } \right )$

,

![]() $ \Sigma _{\xi }^{\ast} =\left ( \sigma ^{-2}_{\epsilon } ( \boldsymbol {G}^T \boldsymbol {G}) + \boldsymbol {\Sigma }_{\xi }^{-1} \right )^{-1}$

.

$ \Sigma _{\xi }^{\ast} =\left ( \sigma ^{-2}_{\epsilon } ( \boldsymbol {G}^T \boldsymbol {G}) + \boldsymbol {\Sigma }_{\xi }^{-1} \right )^{-1}$

.

The prior for

![]() $\sigma ^{-2}_{\epsilon }$

is gamma (1/2, 1/2). Therefore, the posterior for

$\sigma ^{-2}_{\epsilon }$

is gamma (1/2, 1/2). Therefore, the posterior for

![]() $\sigma ^{-2}_{\epsilon }$

is also gamma

$\sigma ^{-2}_{\epsilon }$

is also gamma

![]() $( \frac {N \ast M +1}{2}, 1/2 \ast (1 + \sum _{p=1}^N \sum _{i=1}^M \epsilon _{p,i}^2 ) )$

.

$( \frac {N \ast M +1}{2}, 1/2 \ast (1 + \sum _{p=1}^N \sum _{i=1}^M \epsilon _{p,i}^2 ) )$

.

For binary data,

![]() $X_{a,b}$

and

$X_{a,b}$

and

![]() $y_{p,i}$

are functions of the latent responses,

$y_{p,i}$

are functions of the latent responses,

![]() $\phi _{a,b}$

and

$\phi _{a,b}$

and

![]() $\eta _{p,i}$

. The data can be seen as binary indicators of the latent responses such that

$\eta _{p,i}$

. The data can be seen as binary indicators of the latent responses such that

![]() $X_{a,b} = 1$

if

$X_{a,b} = 1$

if

![]() $\phi _{a,b}> 0$

and

$\phi _{a,b}> 0$

and

![]() $Y_{p,i} = 1$

if

$Y_{p,i} = 1$

if

![]() $\eta _{p,i}> 0$

. The error variances of the latent responses,

$\eta _{p,i}> 0$

. The error variances of the latent responses,

![]() $\sigma ^2_e$

and

$\sigma ^2_e$

and

![]() $\sigma ^2_{\epsilon }$

, are assumed to be

$\sigma ^2_{\epsilon }$

, are assumed to be

![]() $1$

.

$1$

.

5 School climate and teachers’ advice-seeking network

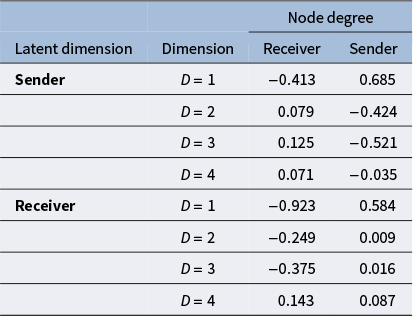

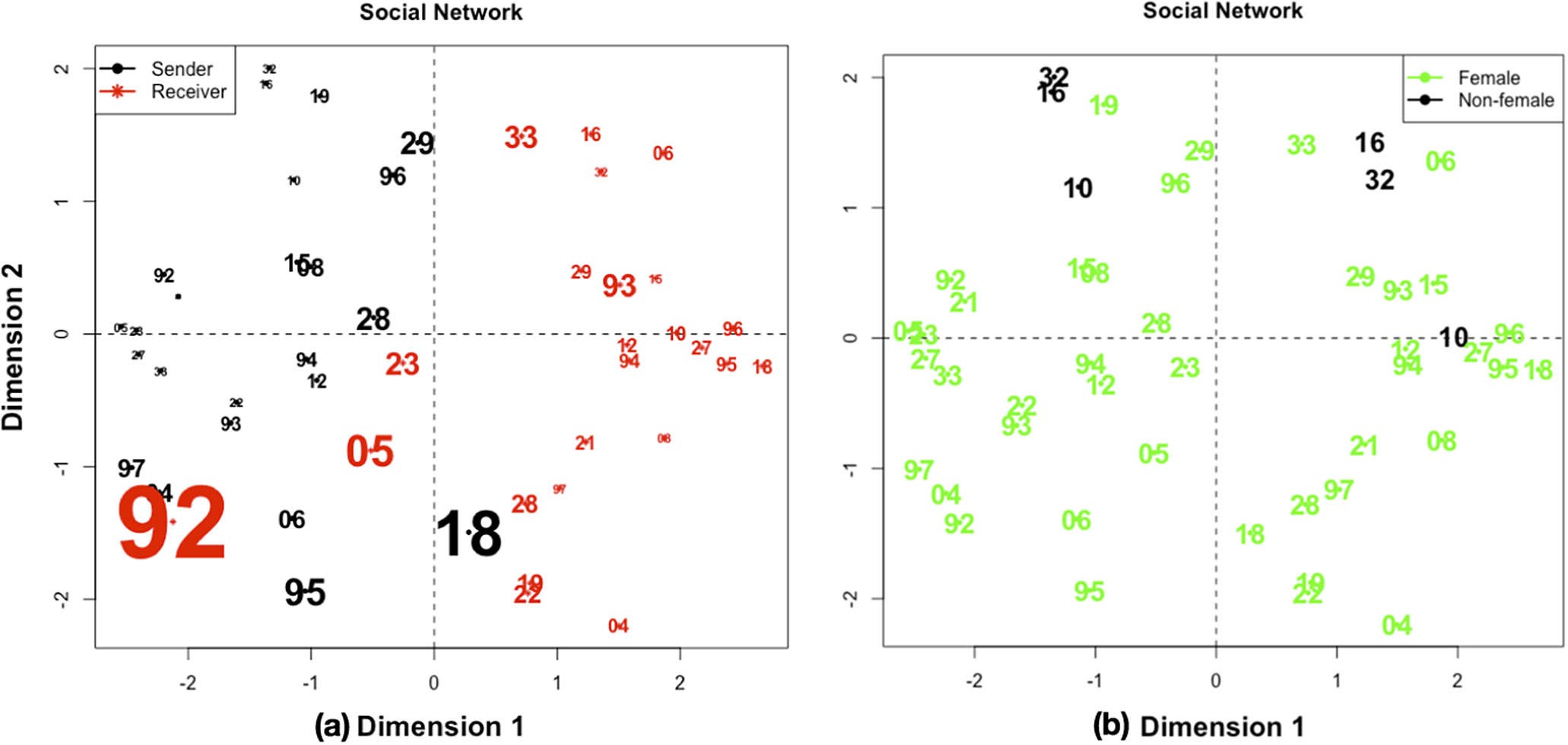

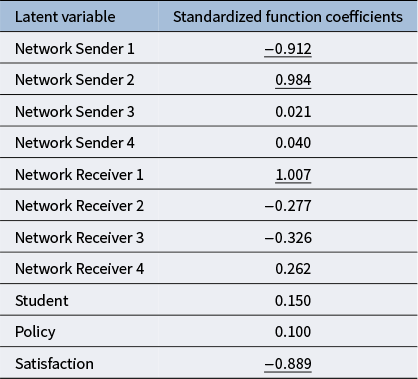

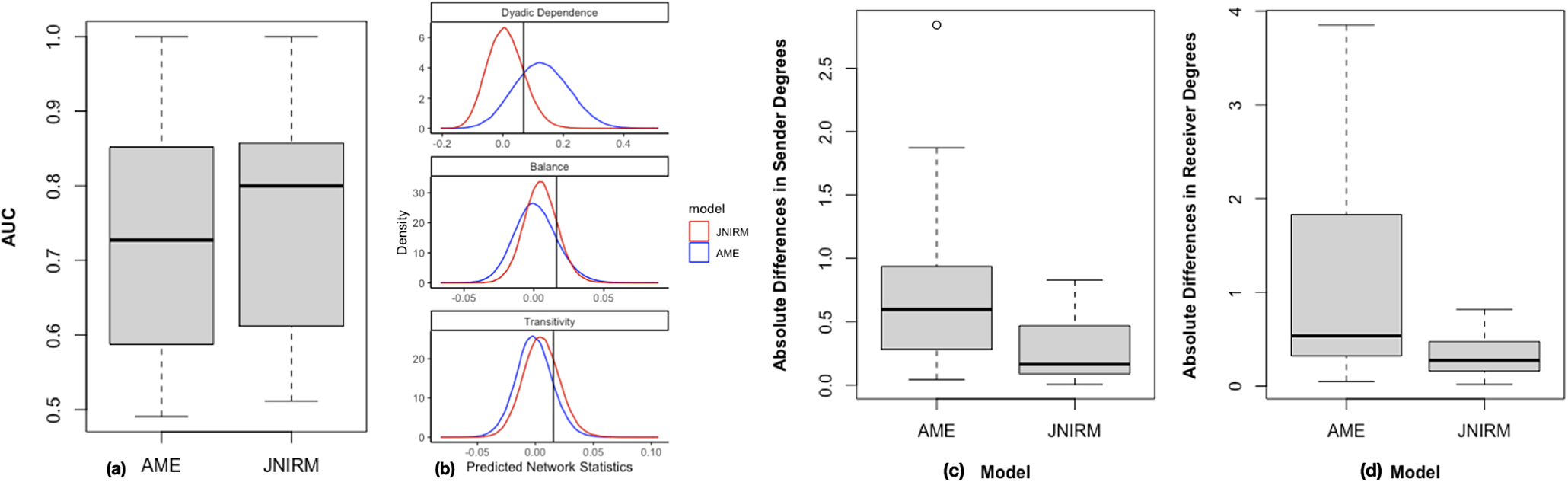

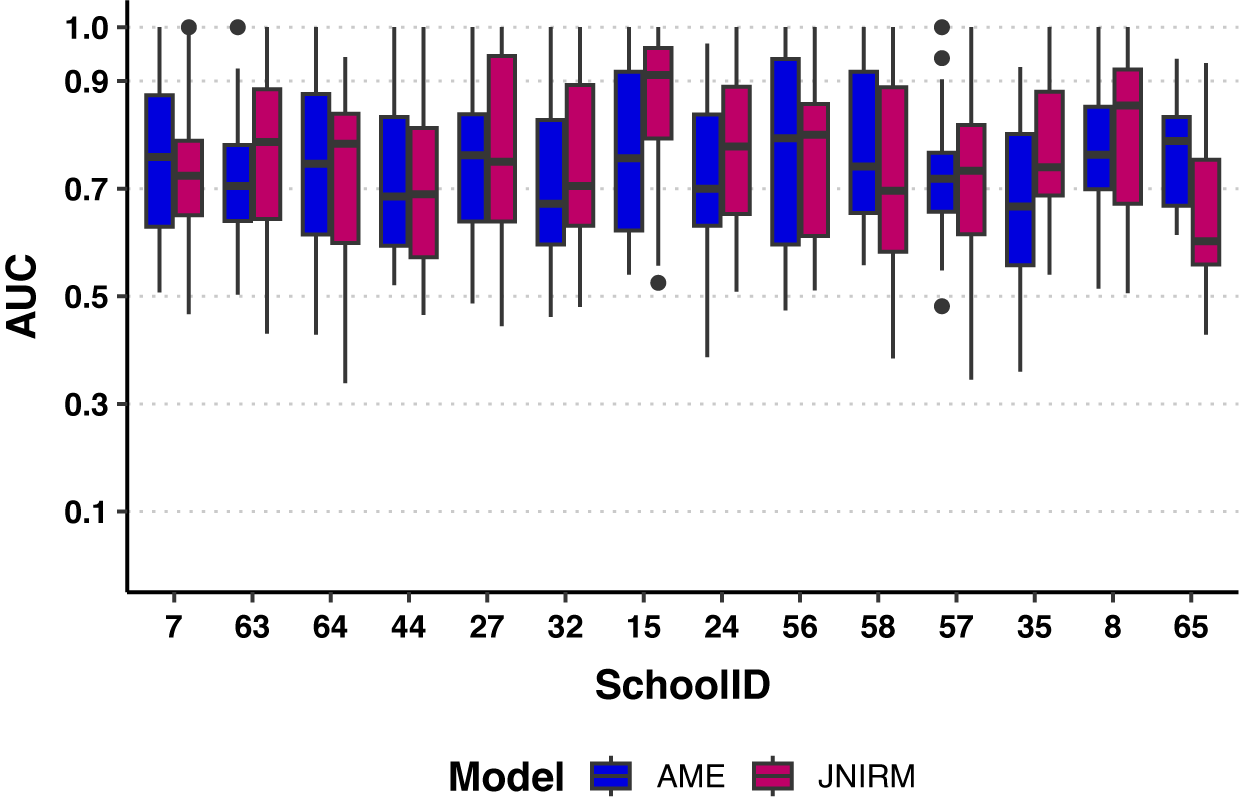

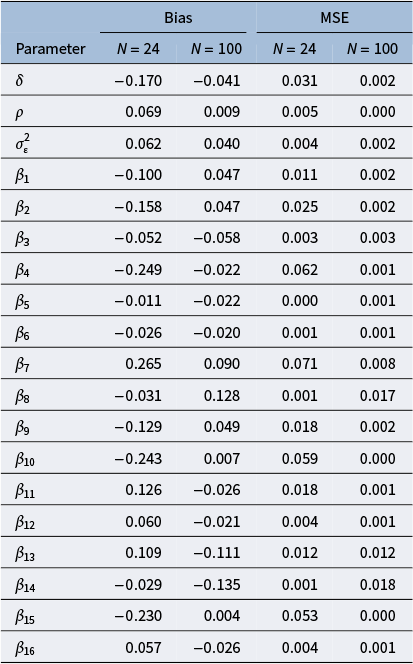

We fit the JNIRT to our advice-seeking network and survey response data. We applied the model to each of the 14 schools separately. There were statistically significant relationships between the network and item responses in 3 out of the 14 schools. The power of the test was small because of the small sample sizes per school. We extensively investigated the results for one of the three schools, and we discuss the results of the other two schools to evaluate the generalizability of the relationship between the network dimensions and the questionnaire dimensions. We also provide a summary of the relationships across all schools.

5.1 Estimation

Because the structures of the advice-seeking networks are distinct across different schools, and there is not much inter-school activity, we fit the JNIRM to each school separately. To be succinct in our report, we chose one school, school 56, as a more detailed demonstration, and summarized the results of the other schools. See discussions about the different network structures recovered for different schools and detailed reports for applications with multiple schools and other individual schools in the Supplementary Material. There were a total of

![]() $26$

teachers in school 56, and the density of the directed network is

$26$

teachers in school 56, and the density of the directed network is

![]() $0.143$

. The receiver and the sender degrees had a negative correlation of

$0.143$

. The receiver and the sender degrees had a negative correlation of

![]() $-0.514$