1 Introduction

Longitudinal mental health assessments in mobile health (mHealth) applications have been found to be a scalable and effective way to capture information on a user’s current mental health status (Pardes et al., Reference Pardes, Lynch, Miclette, McGeoch and Daly2022). Because these assessments may be performed many times over the course of months or years, researchers have the opportunity to model the trajectory of users’ mental health over time. For example, researchers may gain an understanding of changes in an mHealth user’s depressive symptoms over time by the regular completion of the Patient Health Questionnaire-4 (PHQ-4) (Löwe et al., Reference Löwe, Wahl, Rose, Spitzer, Glaesmer, Wingenfeld, Schneider and Brähler2010). While each assessment yields only a handful of item responses, the high-frequency sampling makes each individual’s longitudinal record intrinsically high-dimensional, and analysis thus focuses on recovering the underlying latent trajectory that generates the data.

Yet recovering these trajectories accurately demands proper handling of the measurement scale: most questionnaire items use Likert responses, which violate assumptions like normality and can bias standard models (Wu & Leung, Reference Wu and Leung2017). Ordinal regression addresses this by assuming a latent continuous variable that is mapped to observed categories via a link function and threshold values (McCullagh, Reference McCullagh1980).

Like ordinal regression, polytomous IRT models (Reeve & Fayers, Reference Reeve and Fayers2005) use continuous latent variables to quantify constructs from multi-category items. In particular, the graded response model (GRM) (Samejima, Reference Samejima1968) is tailored to ordered outcomes, such as Likert-scale responses. Specifically, in the typical GRM model,

$$ \begin{align} P_{jk}(\theta) & = \begin{cases} 1 & \text{ for } k=1, \\ \text{Pr}(Y_j \geq k |\theta) & \text{ for } k=2, \dots, K_j,\\ 0 & \text{ for } k>K_j, \end{cases} \end{align} $$

$$ \begin{align} P_{jk}(\theta) & = \begin{cases} 1 & \text{ for } k=1, \\ \text{Pr}(Y_j \geq k |\theta) & \text{ for } k=2, \dots, K_j,\\ 0 & \text{ for } k>K_j, \end{cases} \end{align} $$

where j represents a questionnaire item, k represents an ordered response,

![]() $K_j$

represents the maximum ordered response for item j,

$K_j$

represents the maximum ordered response for item j,

![]() $\theta $

represents the latent construct of interest, and

$\theta $

represents the latent construct of interest, and

![]() $P_{jk}(\cdot )$

represents an inverse link function (Samejima, Reference Samejima1968). Additional parameters may also be included in the model to adjust for item difficulty and discrimination (Hays et al., Reference Hays, Morales and Reise2000). A special case of the GRM (Cole et al., Reference Cole, Turner and Gitchel2019) includes item difficulty parameters,

$P_{jk}(\cdot )$

represents an inverse link function (Samejima, Reference Samejima1968). Additional parameters may also be included in the model to adjust for item difficulty and discrimination (Hays et al., Reference Hays, Morales and Reise2000). A special case of the GRM (Cole et al., Reference Cole, Turner and Gitchel2019) includes item difficulty parameters,

![]() $\delta _j$

, item discrimination parameters,

$\delta _j$

, item discrimination parameters,

![]() $a_j$

, and shared threshold parameters,

$a_j$

, and shared threshold parameters,

![]() $\tau _{k}$

, with a logistic link such that

$\tau _{k}$

, with a logistic link such that

$$ \begin{align} P_{jk}(\theta) & = \begin{cases} 1 & \text{ for } k=1, \\ \frac{1}{1+\text{exp}(-[a_j(\theta-\delta_{j})-\tau_{k-1}])} & \text { for } k=2, \dots, K_j,\\ 0 & \text{ for } k>K_j. \end{cases} \end{align} $$

$$ \begin{align} P_{jk}(\theta) & = \begin{cases} 1 & \text{ for } k=1, \\ \frac{1}{1+\text{exp}(-[a_j(\theta-\delta_{j})-\tau_{k-1}])} & \text { for } k=2, \dots, K_j,\\ 0 & \text{ for } k>K_j. \end{cases} \end{align} $$

This model assumes that the thresholds

![]() $\tau _k$

are constant across items, that is, that the scale is the same for different items, an assumption that has precedent in psychometric research where scales are the same across items (Lubbe & Schuster, Reference Lubbe and Schuster2019).

$\tau _k$

are constant across items, that is, that the scale is the same for different items, an assumption that has precedent in psychometric research where scales are the same across items (Lubbe & Schuster, Reference Lubbe and Schuster2019).

IRT models may be extended for use in longitudinal data by allowing

![]() $\theta $

to vary within subjects over time (Choi, Reference Choi2024; Proust-Lima et al., Reference Proust-Lima, Philipps, Perrot, Blanchin and Sébille2022; Wang & Nydick, Reference Wang and Nydick2020). For example, Liu and Hedeker (Reference Liu and Hedeker2006) used IRT and mixed-effects modeling techniques to model latent subject ability over time based on multi-item Likert-scale questionnaires. Random intercepts and slopes were included to account for variation between subjects and over time. While simple parametric approaches for analyzing longitudinal data, such as random time slopes, are useful in assessing the change in latent continuous variables overtime, they often lack flexibility or are misspecified to capture nonlinear trends in the data (Wang et al., Reference Wang, Chiou and Müller2016). Thus, it is often preferred to use nonparametric methods to model trajectories as a function of time, that is, perform functional data analysis (FDA) (Liu & Wang, Reference Liu and Wang2020; Wang et al., Reference Wang, Chiou and Müller2016).

$\theta $

to vary within subjects over time (Choi, Reference Choi2024; Proust-Lima et al., Reference Proust-Lima, Philipps, Perrot, Blanchin and Sébille2022; Wang & Nydick, Reference Wang and Nydick2020). For example, Liu and Hedeker (Reference Liu and Hedeker2006) used IRT and mixed-effects modeling techniques to model latent subject ability over time based on multi-item Likert-scale questionnaires. Random intercepts and slopes were included to account for variation between subjects and over time. While simple parametric approaches for analyzing longitudinal data, such as random time slopes, are useful in assessing the change in latent continuous variables overtime, they often lack flexibility or are misspecified to capture nonlinear trends in the data (Wang et al., Reference Wang, Chiou and Müller2016). Thus, it is often preferred to use nonparametric methods to model trajectories as a function of time, that is, perform functional data analysis (FDA) (Liu & Wang, Reference Liu and Wang2020; Wang et al., Reference Wang, Chiou and Müller2016).

Gaussian processes (GPs) are stochastic processes such that every finite collection of random variables has a multivariate Gaussian distribution (Liu et al., Reference Liu, Wang and Kong2019). GPs are specified by a covariance matrix that accounts for correlation between points and controls for smoothness, making them an attractive option for FDA (Shi & Choi, Reference Shi and Choi2011). Because of their correlation structure, they are useful for modeling trajectories in a variety of settings (Chen & Zhang, Reference Chen and Zhang2020; Kim et al., Reference Kim, Lee and Essa2011).

There is precedent for using GP regression in both ordinal data and IRT settings. For example, Komori et al. (Reference Komori, Teraji, Shiroshita and Nittono2022) used Bayesian ordinal GPR to determine which facial features in children are perceived as cute, where cuteness was measured on a scale of integer values from 1 to 5. Others have explored the use of power link functions in ordinal GPR (Li et al., Reference Li, Wang and Dey2019). Chen and Zhang (Reference Chen and Zhang2020) used a latent GP model to model longitudinal survey data in psychology applications, remarking how their approach is similar to IRT models. While their paper provides a foundation in using GPs for longitudinal surveys, they only demonstrated the use of the ordinal component of their model in a simple simulation setting, without the complexity of real-world data that may complicate computation and parameter estimation. Kang and Kottas (Reference Kang and Kottas2024) showed how GPs can be used to understand mental health trajectories derived from ecological momentary assessments (EMAs). However, their method requires analyzing different items separately. It also uses the continuation logits representation of the multinomial distribution, resulting in different latent curves for each consecutive category combination. The interpretation of these curves is less clear than a single curve representing the latent construct of interest.

While GPs are frequently used in a Bayesian setting, where model fit is usually performed using Markov chain Monte Carlo (MCMC) techniques to sample from the posterior distribution, sampling from the posterior distribution of GPs can be challenging, especially in the often high-dimensional setting of a longitudinal analysis (Riutort-Mayol et al., Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023). Many researchers, including Chen and Zhang (Reference Chen and Zhang2020), choose to use empirical Bayes to estimate these parameters. However, a fully Bayesian approach to fitting a GP model may be desired.

In addition to modeling individual-level trends using a GP, researchers may also be interested in population-level trajectories within an IRT model. This may be done by modeling the mean function of a GP in a hierarchical setting using parametric or nonparametric approaches (Chen & Zhang, Reference Chen and Zhang2020). While Chen and Zhang (Reference Chen and Zhang2020) mentioned the possibility of using nonparametric approaches for estimating the mean function of the GP prior placed on individual trajectories, in practice, they modeled the mean function of the GP using only simple linear regression, reducing the model’s flexibility in recovering overall trends.

Smoothing splines, which balance model fit and smoothness, are a popular FDA technique that can be useful for modeling the mean of a GP (Chen & Zhang, Reference Chen and Zhang2020; Rice & Rosenblatt, Reference Rice and Rosenblatt1983; Shi et al., Reference Shi, Wang, Murray-Smith and Titterington2007). Smoothing splines can generally be described as a set of piecewise polynomial equations joined together at points called knots (Wang, Reference Wang2011). B-splines are a type of spline that have been shown to have excellent numerical properties, are sparse (desirable in high-dimensional settings), and are flexible in the presence of equally spaced knots (De Boor, Reference De Boor1978; Eilers & Marx, Reference Eilers and Marx2010). Specifically, define q knots

![]() $\boldsymbol {\xi }=\{\xi _1\leq \xi _2 \leq , \cdots \leq \xi _q\}$

. Then the

$\boldsymbol {\xi }=\{\xi _1\leq \xi _2 \leq , \cdots \leq \xi _q\}$

. Then the

![]() $j^{th}$

B-spline of degree p evaluated at point x can be defined recursively as (Lyche et al., Reference Lyche, Manni and Speleers2018)

$j^{th}$

B-spline of degree p evaluated at point x can be defined recursively as (Lyche et al., Reference Lyche, Manni and Speleers2018)

$$ \begin{align} B_{j,p, \boldsymbol{\xi}}(x) := \frac{x-\xi_j}{\xi_{j+p}-\xi_j}B_{j,p-1, \boldsymbol{\xi}}(x)+\frac{\xi_{j+p+1}-x}{\xi_{j+p+1}-\xi_{j+1}}B_{j+1, p-1, \boldsymbol{\xi}}(x), \end{align} $$

$$ \begin{align} B_{j,p, \boldsymbol{\xi}}(x) := \frac{x-\xi_j}{\xi_{j+p}-\xi_j}B_{j,p-1, \boldsymbol{\xi}}(x)+\frac{\xi_{j+p+1}-x}{\xi_{j+p+1}-\xi_{j+1}}B_{j+1, p-1, \boldsymbol{\xi}}(x), \end{align} $$

with

$$ \begin{align} B_{j,0,\boldsymbol{\xi}}(x):=\begin{cases} 1 & \text{if } x\in [\xi_i, \xi_{i+1}),\\ 0 & \text{otherwise}. \end{cases} \end{align} $$

$$ \begin{align} B_{j,0,\boldsymbol{\xi}}(x):=\begin{cases} 1 & \text{if } x\in [\xi_i, \xi_{i+1}),\\ 0 & \text{otherwise}. \end{cases} \end{align} $$

Smooth curves may be estimated as a weighted sum of realizations of basis functions that are evaluated at points along the domain. In terms of regression, these weights may be thought of as coefficients that need to be estimated. Shi et al. (Reference Shi, Wang, Murray-Smith and Titterington2007) showed that B-spline basis functions are an excellent choice for GP functional regression, where it is computationally efficient to estimate the coefficients associated with the basis functions. B-splines also have local support, which allows one part of a curve fit to be adjusted without causing undue adjustments to other parts of the curve (De Boor, Reference De Boor1978). In practice, the degree of

![]() $p=3$

is commonly chosen and is the default in many standard statistical packages, such as in the splines package in R (R Core Team, 2022). A drawback to splines is that they may be highly sensitive to the location of the spline knots, which can be difficult to choose or estimate (Yang et al., Reference Yang, Zhang and Zhang2023). Cross-validation and other model fit evaluation techniques may be used to make these decisions.

$p=3$

is commonly chosen and is the default in many standard statistical packages, such as in the splines package in R (R Core Team, 2022). A drawback to splines is that they may be highly sensitive to the location of the spline knots, which can be difficult to choose or estimate (Yang et al., Reference Yang, Zhang and Zhang2023). Cross-validation and other model fit evaluation techniques may be used to make these decisions.

There are many challenges associated with GP ordinal regression. For example, without additional constraints, the ordinal regression problem is scale invariant. A common solution is to fix the variance associated with the GP and to model the correlation matrix. However, modeling correlation matrices is much more challenging than covariance matrices (DeYoreo & Kottas, Reference DeYoreo and Kottas2018). The ordering constraint in the threshold values,

![]() $\boldsymbol {\tau }$

, also results in highly correlated posterior draws. DeYoreo and Kottas (Reference DeYoreo and Kottas2018) used mixture modeling, which allows the threshold values to be fixed, reducing computational burdens. However, the interpretation of this mixture modeling in an IRT setting is not clear.

$\boldsymbol {\tau }$

, also results in highly correlated posterior draws. DeYoreo and Kottas (Reference DeYoreo and Kottas2018) used mixture modeling, which allows the threshold values to be fixed, reducing computational burdens. However, the interpretation of this mixture modeling in an IRT setting is not clear.

One option to improve computational efficiency is the use of low-rank approximations of GPs (Reeve & Fayers, Reference Reeve and Fayers2005). Solin and Särkkä (Reference Solin and Särkkä2020) proposed the use of Hilbert space methods for reduced-rank GP regression. Riutort-Mayol et al. (Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023) explored the practical use of this rank reduction technique and demonstrated that Hilbert basis functions can be used to adequately approximate GPs in practical settings when the number of basis functions is sufficiently large and an appropriate boundary factor is assumed. More specific details on the computational challenges associated with a GP IRT model will be discussed in Section 4.2 after the full model is specified for clarity. We will also provide more detail on the Hilbert approximation and how it improves computational speed and posterior draw mixing in Section 4.3.

This article contributes to the current literature by demonstrating how mental health trajectories can be modeled as a GP within a hierarchical IRT model using Hilbert approximations for computational efficiency. Specifically, we use a GRM with equal thresholds for each item and place a GP prior on the latent construct of interest for each individual over time. We also capture population-level trends over time via smoothing splines. We explore this modeling approach in the context of mHealth survey data on a Likert scale collected in an educational setting. Because mHealth data are high-dimensional, we approximate the posterior distribution of the latent mental health trajectory GP using Hilbert basis functions to facilitate computational efficiency while still obtaining reasonable parameter uncertainty estimates. The efficacy of this approach is demonstrated via simulation. We also discuss model inference in the context of a motivating example. While in this article we present the methodology under a GRM assumption, we emphasize that the methods can easily be extended to other IRT settings where ordinal data meant to capture the trajectory of a unidimensional latent construct are collected frequently over time.

The rest of the article is outlined as follows. In Section 2, we introduce our motivating example. In Section 3, we propose the ordinal GP model for health trajectories (GPMHT). In Section 4, we outline our methods for Bayesian inference, including the prior specification, challenges associated with sampling from the joint posterior, and the Hilbert space approximation of the joint posterior. In Section 5, we discuss our simulation study to show that our Bayesian sampling techniques converge adequately and recover the unknown parameters and trajectories. In Section 6, we present the results of our motivating example analysis and compare our model to a model fit without a GP prior. Finally, we offer a few conclusions in Section 7.

2 Motivating example: College Experience study

Researchers at Dartmouth University have been using mHealth applications to study student health and behavior for many years, and have made their data publicly available (Wang et al., Reference Wang, Chen, Chen, Li, Harari, Tignor, Zhou, Ben-Zeev and Campbell2017). The most recent study, known as the College Experience Study, follows over 200 students who enrolled as freshmen in 2017 or 2018 over the course of their four years at Dartmouth via the StudentLife app (Nepal et al., Reference Nepal, Liu, Pillai, Wang, Vojdanovski, Huckins, Rogers, Meyer and Campbell2024). We focus on the students’ freshman year for reasons discussed in Appendix A.

The College Experience data are comprised of sensing data and EMAs intended to capture students’ behavior and mental health. We wish to develop a model that can be used to understand student mental health trajectories based on EMA responses. Included in the EMA data are responses to the photographic affect meter (PAM) (Pollak, Reference Pollak2012), PHQ-4, and four questions from the State Self-Esteem Scale (SSES) (Heatherton & Polivy, Reference Heatherton and Polivy1991) to measure mood, depression, and self-esteem, respectively. Each of these measures was requested approximately weekly from students via the app, although response rates vary. Other information on the students was collected, such as location, audio, and movement. However, due to a large amount of missing data, we focus on developing a flexible modeling framework for the EMAs without covariates.

We chose to model the SSES data because they represent multi-item surveys where responses varied over time. To avoid overfitting, we only included the 191 students who completed the SSES at least 30 times over the course of the three terms. The SSES is generally issued as a 20-item questionnaire to assess performance, social, and appearance self-esteem. Responses are on a Likert scale with the following options: 1) not at all, 2) a little bit, 3) somewhat, 4) very much, and 5) extremely. In the College Experience study, only four items were administered: 1) Right now, I worry about what other people think of me, 2) Right now, I am pleased with my appearance, 3) Right now, I feel as smart as others, and 4) Right now, overall, I feel good about myself. Note that item one is reverse coded. However, it is assumed for the rest of the article that it has been recoded so that a 5 represents high self-esteem and a 1 represents low self-esteem.

Importantly, the GRM assumes that there is a unidimensional latent construct that is measured by each item on a questionnaire (Andrich, Reference Andrich1978), while the SSES was designed to measure multiple aspects of a person’s self-esteem. However, studies have found that while the SSES captures multiple aspects of self-esteem, the composite score can be used as an effective measure of general self-esteem consistent with other rating scales (Linton & Richard, Reference Linton and Richard1996; Webster et al., Reference Webster, Howell and Shepperd2022).

The concept of an overall self-esteem measure is supported by our exploratory data analysis. Figure 1 contains the average student response to the SSES items by day. We see that although the questions vary in “difficulty” (responses tend to be highest for item 1 and lowest for item 4), the trend in responses appears to be consistent across all items. Thus, we felt comfortable using the four SSES items to measure an overall self-esteem construct.

Figure 1 Average SSES responses by day and question over the course of the students’ freshman year.

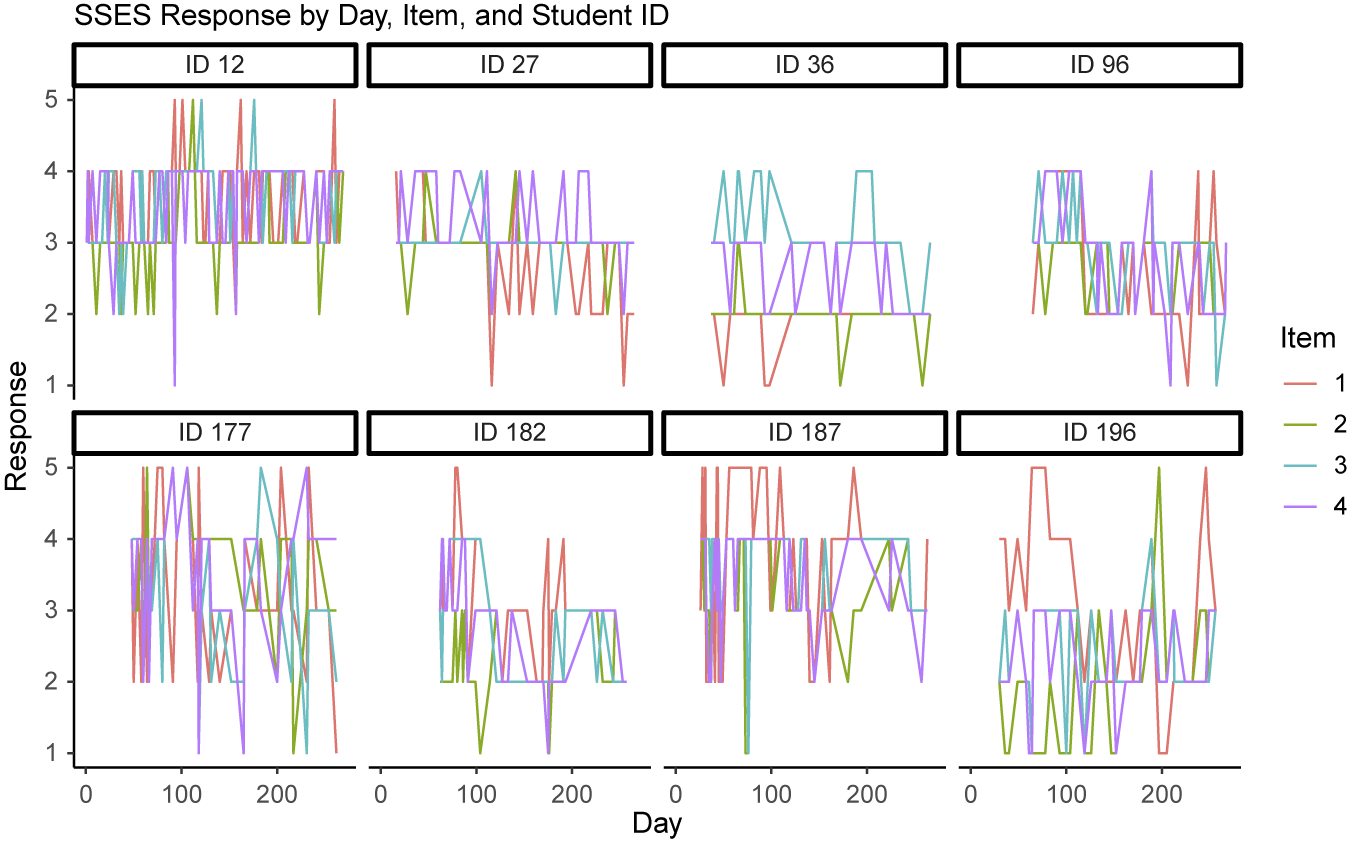

While the trend in self-esteem appears clear in Figure 1, the trend is less obvious in the patterns of individual students. Figure 2 shows the responses of a random subset of eight students over the three terms. Due to the discrete nature of the scale, it is difficult to ascertain common patterns in self-esteem from these raw values. We show in this article how we can obtain smooth individual-level self-esteem trajectories for students that help us better picture trends in their mental health.

Figure 2 SSES responses by day, item, and student over the course of the students’ freshman year.

In general, we found that item 1 responses tend to be higher than the responses for items 2–4. We also found evidence that item discrimination may be lower for item 1 than the other items, as indicated by the decreased variation in responses. Please refer to a histogram of the data in Appendix B for additional support of these assertions (Figure B1).

The goals of the analysis of the College Experience study are as follows: 1) to understand how the self-esteem of all freshmen at Dartmouth changes over the course of their first year on campus and 2) quantify and visualize how individual students’ self-esteem trajectories differ from the average Dartmouth freshman population. Understanding general trends in self-esteem can guide the university in offering interventions to the general student body. By studying trends in individual students, officials may be able to identify students whose trajectories reveal concerning patterns that deviate from the average who might need extra support.

3 The proposed Gaussian process model for health trajectories

In this section, we introduce the GPMHT, describe it in the context of our motivating example, and discuss adjustments for other settings.

3.1 GPMHT

Let

![]() $Y_{ijt}$

represent subject i’s response to item j in a Likert scale questionnaire on day t. We assume that all responses can take on integer values

$Y_{ijt}$

represent subject i’s response to item j in a Likert scale questionnaire on day t. We assume that all responses can take on integer values

![]() $1, \dots , K,$

where

$1, \dots , K,$

where

![]() $K>2$

. Let

$K>2$

. Let

![]() $\delta _j$

represent the “difficulty” of item j for

$\delta _j$

represent the “difficulty” of item j for

![]() $j=1, \dots , J$

and let

$j=1, \dots , J$

and let

![]() $\tau _0<\tau _1 < \tau _2 < \dots < \tau _{K-1}<\tau _K$

, where

$\tau _0<\tau _1 < \tau _2 < \dots < \tau _{K-1}<\tau _K$

, where

![]() $\tau _0=-\infty $

and

$\tau _0=-\infty $

and

![]() $\tau _K=\infty $

, represent threshold values that map values from the real numbers to the ordinal Likert scale. Let

$\tau _K=\infty $

, represent threshold values that map values from the real numbers to the ordinal Likert scale. Let

![]() $\theta _{i,t}$

represent the latent construct of interest, self-esteem in our motivating example, for subject i on day t. Then, we define

$\theta _{i,t}$

represent the latent construct of interest, self-esteem in our motivating example, for subject i on day t. Then, we define

$$ \begin{align} Pr(Y_{i,j, t} =k) &= \begin{cases} 1- \text{logit}^{-1}(\phi_{i,j,t, 1}) & \text{if } k=1,\\ \text{logit}^{-1}(\phi_{i,j,t,k-1})-\text{logit}^{-1}(\phi_{i,j,t,k}) & \text{if } 1<k<K,\\ \text{logit}^{-1}(\phi_{i,j,t,k-1}) & \text{if } k=K,\\ \end{cases} \end{align} $$

$$ \begin{align} Pr(Y_{i,j, t} =k) &= \begin{cases} 1- \text{logit}^{-1}(\phi_{i,j,t, 1}) & \text{if } k=1,\\ \text{logit}^{-1}(\phi_{i,j,t,k-1})-\text{logit}^{-1}(\phi_{i,j,t,k}) & \text{if } 1<k<K,\\ \text{logit}^{-1}(\phi_{i,j,t,k-1}) & \text{if } k=K,\\ \end{cases} \end{align} $$

where

![]() $\text {logit}^{-1}(\phi )=1/(1+e^{-\phi })$

. This is a formulation of the GRM described in Equation (1.2). Thus, we assume that the items of the SSES measure a unidimensional construct, that the difference between two consecutive points on the scale is equal for all items, higher ratings represent higher values of the latent construct, and responses to items administered at the same time are conditionally independent of each other given the latent trait. We chose the logit link to relax the assumption of an underlying latent normal distribution implied by a probit link.

$\text {logit}^{-1}(\phi )=1/(1+e^{-\phi })$

. This is a formulation of the GRM described in Equation (1.2). Thus, we assume that the items of the SSES measure a unidimensional construct, that the difference between two consecutive points on the scale is equal for all items, higher ratings represent higher values of the latent construct, and responses to items administered at the same time are conditionally independent of each other given the latent trait. We chose the logit link to relax the assumption of an underlying latent normal distribution implied by a probit link.

We now introduce a hierarchical component to the model to understand the trajectory of the latent construct

![]() $\theta _{i,t}$

. Assume that we have observations (item responses) recorded for subject i at

$\theta _{i,t}$

. Assume that we have observations (item responses) recorded for subject i at

![]() $T_i$

unique time points. Let

$T_i$

unique time points. Let

![]() $\mathbf {t}_i=(t_{i,1}, \dots , t_{i, T_i})'$

represent a

$\mathbf {t}_i=(t_{i,1}, \dots , t_{i, T_i})'$

represent a

![]() $T_i \times 1$

vector of observed days/time points for subject i. We consider

$T_i \times 1$

vector of observed days/time points for subject i. We consider

![]() $\boldsymbol {\theta }_i=(\theta _{i,t_{i,1}}, \dots , \theta _{i,t_{i,T_i}})'$

to be realizations from a function of

$\boldsymbol {\theta }_i=(\theta _{i,t_{i,1}}, \dots , \theta _{i,t_{i,T_i}})'$

to be realizations from a function of

![]() $\mathbf {t}_i$

such that

$\mathbf {t}_i$

such that

![]() $\boldsymbol {\theta }_i=f(\mathbf {t}_i)$

. Let

$\boldsymbol {\theta }_i=f(\mathbf {t}_i)$

. Let

![]() $t_{i,r}$

and

$t_{i,r}$

and

![]() $t_{i,s}$

represent the

$t_{i,s}$

represent the

![]() $r^{th}$

and

$r^{th}$

and

![]() $s^{th}$

elements of

$s^{th}$

elements of

![]() $\mathbf {t}_i$

, respectively. Then we assume that this function follows a GP prior (Shi & Choi, Reference Shi and Choi2011) with a squared exponential kernel such that

$\mathbf {t}_i$

, respectively. Then we assume that this function follows a GP prior (Shi & Choi, Reference Shi and Choi2011) with a squared exponential kernel such that

$$ \begin{align} \psi(t_{i,r}, t_{i,s})& = \exp\left\{-\frac{(t_{i,r} - t_{i,s})^2}{2\ell^2}\right\}, \end{align} $$

$$ \begin{align} \psi(t_{i,r}, t_{i,s})& = \exp\left\{-\frac{(t_{i,r} - t_{i,s})^2}{2\ell^2}\right\}, \end{align} $$

where

![]() $\boldsymbol {\mu }(\mathbf {t}_i)$

is a

$\boldsymbol {\mu }(\mathbf {t}_i)$

is a

![]() $T_i \times 1$

vector representing the mean function and

$T_i \times 1$

vector representing the mean function and

![]() $\boldsymbol {\psi }$

is a

$\boldsymbol {\psi }$

is a

![]() $T_i \times T_i$

matrix where the element in the

$T_i \times T_i$

matrix where the element in the

![]() $r^{th}$

row and

$r^{th}$

row and

![]() $s^{th}$

column equals

$s^{th}$

column equals

![]() $\psi (t_{i,r}, t_{i,s})$

. Further,

$\psi (t_{i,r}, t_{i,s})$

. Further,

![]() $\ell $

represents the lengthscale of the kernel, determining the smoothness of the GP. We chose to use the squared exponential kernel because it is a simple kernel function that depends only on the distance between points (regardless of direction) while ensuring smoothness. Other kernels could be explored if desired. Specifically, a Matérn kernel (

$\ell $

represents the lengthscale of the kernel, determining the smoothness of the GP. We chose to use the squared exponential kernel because it is a simple kernel function that depends only on the distance between points (regardless of direction) while ensuring smoothness. Other kernels could be explored if desired. Specifically, a Matérn kernel (

![]() $\nu =3/2$

or

$\nu =3/2$

or

![]() $5/2$

) could be used for rougher sample paths, rational–quadratic for multi-scale variation, or periodic components if seasonality is desired. Given the ordinal likelihood and our sampling resolution, differences among these kernels primarily affect high-frequency roughness of

$5/2$

) could be used for rougher sample paths, rational–quadratic for multi-scale variation, or periodic components if seasonality is desired. Given the ordinal likelihood and our sampling resolution, differences among these kernels primarily affect high-frequency roughness of

![]() $g_i(\mathbf {t}_i)=\boldsymbol {\theta }_i-\mu (\mathbf {t}_i)$

, which is weakly identified relative to the low-frequency mean

$g_i(\mathbf {t}_i)=\boldsymbol {\theta }_i-\mu (\mathbf {t}_i)$

, which is weakly identified relative to the low-frequency mean

![]() $\mu (\mathbf {t}_i)$

. For this reason—and to keep the manuscript focused—we retain the SE kernel in the main analysis and note kernel exploration as a natural extension for future work.

$\mu (\mathbf {t}_i)$

. For this reason—and to keep the manuscript focused—we retain the SE kernel in the main analysis and note kernel exploration as a natural extension for future work.

While

![]() $\boldsymbol {\theta }_i$

represents smooth individual trajectories at the individual level, we also want to collect information on the average trajectory at the population level. We do this using cubic B-splines with D degrees of freedom (this corresponds to D-3 interior knots). Specifically, we set

$\boldsymbol {\theta }_i$

represents smooth individual trajectories at the individual level, we also want to collect information on the average trajectory at the population level. We do this using cubic B-splines with D degrees of freedom (this corresponds to D-3 interior knots). Specifically, we set

where

![]() $\boldsymbol {B}_{\mu }(t)$

corresponds to a

$\boldsymbol {B}_{\mu }(t)$

corresponds to a

![]() $D \times 1$

vector of B-spline basis functions evaluated at t, and

$D \times 1$

vector of B-spline basis functions evaluated at t, and

![]() $\boldsymbol {\beta }$

is a

$\boldsymbol {\beta }$

is a

![]() $D\times 1$

vector of coefficients. We place the knots equally across the days of the study. A higher value of D corresponds to a more flexible curve, but can also be prone to overfit if the curve becomes too wiggly. In practice, we chose the value of D by comparing cross-validation results under different values, as will be discussed in Section 6.2.

$D\times 1$

vector of coefficients. We place the knots equally across the days of the study. A higher value of D corresponds to a more flexible curve, but can also be prone to overfit if the curve becomes too wiggly. In practice, we chose the value of D by comparing cross-validation results under different values, as will be discussed in Section 6.2.

There are several constraints required to ensure identifiability of the GRM in Equation (3.2). Because

![]() $Y_{ij}$

depends on

$Y_{ij}$

depends on

![]() $\theta _{i,t}$

and the cutpoints only through

$\theta _{i,t}$

and the cutpoints only through

![]() $a_j\{\theta _{i,t}-(\delta _j+\tau _k)\}$

, the likelihood is invariant to location and scale transformations. We therefore adopt the following conventions. First, to fix the location, we omit an intercept from

$a_j\{\theta _{i,t}-(\delta _j+\tau _k)\}$

, the likelihood is invariant to location and scale transformations. We therefore adopt the following conventions. First, to fix the location, we omit an intercept from

![]() $\boldsymbol {\mu }(x)$

. With

$\boldsymbol {\mu }(x)$

. With

![]() $\{\tau _k\}$

ordered, this determines the decomposition between latent traits, item locations, and step offsets. Second, we take the GP prior in Equation (3.3) to have unit marginal variance.

$\{\tau _k\}$

ordered, this determines the decomposition between latent traits, item locations, and step offsets. Second, we take the GP prior in Equation (3.3) to have unit marginal variance.

While we have formulated this model as a GRM with equal thresholds, Equations (3.1) and (3.2) can be adjusted for other settings. Specifically, a different link function may be used in (3.1) while still maintaining the ordinal nature of the data. In Equation (3.2), one may wish to assume different scales for each item by incorporating different thresholds

![]() $\tau _{j,k}$

. These adjustments may be easily made, although, care should be taken to ensure parameter identifiability.

$\tau _{j,k}$

. These adjustments may be easily made, although, care should be taken to ensure parameter identifiability.

4 Bayesian inference on the posterior

Because of the complexity of the model outlined in Section 3.1, we turned to Bayesian methods for inference. In this section, we first introduce our prior specification. We then explain the challenges associated with sampling from the joint posterior and describe the Hilbert space approximation as a potential solution.

4.1 Prior specification

We selected fairly noninformative priors for our unknown parameters, reflecting our lack of prior information. Specifically, we assumed that

![]() $\ell \sim \mathcal {LN}(0,0.5)$

, where

$\ell \sim \mathcal {LN}(0,0.5)$

, where

![]() $\mathcal {LN}$

represents the log-normal distribution. We assumed that

$\mathcal {LN}$

represents the log-normal distribution. We assumed that

![]() $\tau _1, \dots , \tau _4$

each were normally distributed with mean zero and variance four under the constraint that

$\tau _1, \dots , \tau _4$

each were normally distributed with mean zero and variance four under the constraint that

![]() $\tau _1<\tau _2< \dots <\tau _{K-1}$

. We then assumed

$\tau _1<\tau _2< \dots <\tau _{K-1}$

. We then assumed

![]() $a_j^* \sim \mathcal {LN}(0, 0.5)$

such that

$a_j^* \sim \mathcal {LN}(0, 0.5)$

such that

![]() $a_j=a^*_j/(1/J\sum _{j=1}^Ja^*_j)$

. Next, we let

$a_j=a^*_j/(1/J\sum _{j=1}^Ja^*_j)$

. Next, we let

![]() $\delta ^*_j \sim \mathcal {N}(0, 1)$

and

$\delta ^*_j \sim \mathcal {N}(0, 1)$

and

![]() $\delta _j=\delta ^*_j-1/J\sum _{i=1}^J\delta _j^*$

, where

$\delta _j=\delta ^*_j-1/J\sum _{i=1}^J\delta _j^*$

, where

![]() $\mathcal {N}$

represents the normal distribution. Note that the sum-to-zero constraint on

$\mathcal {N}$

represents the normal distribution. Note that the sum-to-zero constraint on

![]() $\delta _j$

and the multiply-to-one constraint on

$\delta _j$

and the multiply-to-one constraint on

![]() $a_j$

are not strictly necessary for identifiability. However, in our setting, we found them helpful to speed convergence, with minimal impact on the interpretation of the latent long-term trends. Since we only have four items for analysis, identifying a complex latent structure over time may be difficult (Akour & AL-Omari, Reference Akour and AL-Omari2024). Other authors have discussed this issue, including Fox (Reference Fox2010) who notes that it can be challenging to identify a complex IRT model by fixing parameters of a prior, and these additional constraints may be helpful. In practice, the combination of weakly informative priors and constraints mainly aids convergence when relatively few items or observations are available. As more intensive mHealth data accumulate, the likelihood will dominate the weakly informative priors, and fewer constraints may be applied. Finally, we assumed

$a_j$

are not strictly necessary for identifiability. However, in our setting, we found them helpful to speed convergence, with minimal impact on the interpretation of the latent long-term trends. Since we only have four items for analysis, identifying a complex latent structure over time may be difficult (Akour & AL-Omari, Reference Akour and AL-Omari2024). Other authors have discussed this issue, including Fox (Reference Fox2010) who notes that it can be challenging to identify a complex IRT model by fixing parameters of a prior, and these additional constraints may be helpful. In practice, the combination of weakly informative priors and constraints mainly aids convergence when relatively few items or observations are available. As more intensive mHealth data accumulate, the likelihood will dominate the weakly informative priors, and fewer constraints may be applied. Finally, we assumed

![]() $\beta _d \sim \mathcal {N}(0, \sigma ^2)$

for

$\beta _d \sim \mathcal {N}(0, \sigma ^2)$

for

![]() $d=1, \dots , D$

. We then set

$d=1, \dots , D$

. We then set

![]() $\sigma \sim \mathcal {C}(0,1),$

where

$\sigma \sim \mathcal {C}(0,1),$

where

![]() $\mathcal {C}$

represents the Cauchy distribution. In this way, we established a random effects interpretation for fitting the splines, and the spline coefficients are centered at zero. One can interpret this prior as a Bayesian equivalent to ridge regression, so that the spline coefficients are drawn toward zero (James et al., Reference James, Witten, Hastie and Tibshirani2013). A discussion of the full likelihood and joint posterior distribution can be found in Appendices C and D, respectively.

$\mathcal {C}$

represents the Cauchy distribution. In this way, we established a random effects interpretation for fitting the splines, and the spline coefficients are centered at zero. One can interpret this prior as a Bayesian equivalent to ridge regression, so that the spline coefficients are drawn toward zero (James et al., Reference James, Witten, Hastie and Tibshirani2013). A discussion of the full likelihood and joint posterior distribution can be found in Appendices C and D, respectively.

4.2 Challenges with sampling from full joint posterior distribution

Because of the complexity of the joint posterior distribution induced by the GPMHT likelihood and its prior specification, its closed form is not readily available. Thus, we have to rely on MCMC techniques to sample from the posterior, which is a computationally expensive task. Note that MCMC methods sampling from the exact joint posterior require inverting the

![]() $n\times n$

covariance matrix of the latent GP at each update, an

$n\times n$

covariance matrix of the latent GP at each update, an

![]() $\mathcal {O}(n^3)$

operation (Riutort-Mayol et al., Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023). In intensive longitudinal designs, where n grows with both the number of participants and repeated measurements, performing these inversions at every iteration becomes computationally prohibitive (Gu et al., Reference Gu, Wang and Berger2018).

$\mathcal {O}(n^3)$

operation (Riutort-Mayol et al., Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023). In intensive longitudinal designs, where n grows with both the number of participants and repeated measurements, performing these inversions at every iteration becomes computationally prohibitive (Gu et al., Reference Gu, Wang and Berger2018).

Even aside from the

![]() $\mathcal {O}(n^3)$

cost of repeatedly inverting GP covariance matrices, Gibbs sampling (Gelfand, Reference Gelfand2000)—though appealing when full conditionals are available in closed form—introduces additional difficulties. Recall that

$\mathcal {O}(n^3)$

cost of repeatedly inverting GP covariance matrices, Gibbs sampling (Gelfand, Reference Gelfand2000)—though appealing when full conditionals are available in closed form—introduces additional difficulties. Recall that

where

![]() $\phi ^*_{i,j,t}=a_j(\theta _{i,t}-\delta _j)+\epsilon _{i,j,t}$

,

$\phi ^*_{i,j,t}=a_j(\theta _{i,t}-\delta _j)+\epsilon _{i,j,t}$

,

![]() $\epsilon _{i,j,t}\sim \mathrm {Logistic}(0,1)$

and refer to Appendix E for details. Under this threshold formulation, the full conditional of

$\epsilon _{i,j,t}\sim \mathrm {Logistic}(0,1)$

and refer to Appendix E for details. Under this threshold formulation, the full conditional of

![]() $\phi ^*_{i,j,t}$

is truncated into the region

$\phi ^*_{i,j,t}$

is truncated into the region

![]() $[\tau _{k-1},\tau _k]$

when

$[\tau _{k-1},\tau _k]$

when

![]() $Y_{i,j,t}=k$

. When

$Y_{i,j,t}=k$

. When

![]() $a_j(\theta _{i,t}-\delta _j)$

lies far from this interval, draws would concentrate in extreme tails, causing numerical instability and poor mixing. In addition, the ordered cut-off points

$a_j(\theta _{i,t}-\delta _j)$

lies far from this interval, draws would concentrate in extreme tails, causing numerical instability and poor mixing. In addition, the ordered cut-off points

![]() $\tau _1<\cdots <\tau _{K-1}$

mix slowly, since their moves must respect both the order constraint and the current values of

$\tau _1<\cdots <\tau _{K-1}$

mix slowly, since their moves must respect both the order constraint and the current values of

![]() $\boldsymbol {\phi }^{*}=\{\phi _{i,j,t}^*\}$

and

$\boldsymbol {\phi }^{*}=\{\phi _{i,j,t}^*\}$

and

![]() $\mathbf {Y}=\{Y_{i,j,t}\}$

. These couplings among

$\mathbf {Y}=\{Y_{i,j,t}\}$

. These couplings among

![]() $\theta _{i,t}$

,

$\theta _{i,t}$

,

![]() $\delta _j, a_j$

, and

$\delta _j, a_j$

, and

![]() $\boldsymbol {\tau }$

are amplified further in high-dimensional longitudinal data, yielding highly autocorrelated chains even with long runs.

$\boldsymbol {\tau }$

are amplified further in high-dimensional longitudinal data, yielding highly autocorrelated chains even with long runs.

We have attempted to fit the GP ordinal regression model using an MCMC sampler with a probit link for computational simplicity. In applied analyses, the strong posterior dependence between the cut-off points and the latent trajectories led to poor mixing and inadequate exploration of the parameter space; even for long runs, standard convergence diagnostics did not indicate convergence. Increasing the number of iterations was impractical due to the cubic cost of repeated GP covariance matrix inversions at each iteration.

We further explored methods outside of Gibbs sampling, including the use of Rstan or Stan (Stan Development Team, 2024). Stan performs MCMC using the No U-Turn Sampler (NUTS) version of Hamiltonian Monte Carlo to facilitate efficient sampling from the posterior (Hoffman & Gelman, Reference Hoffman and Gelman2014). While NUTS is efficient in many regards, its computation times can still be high, especially when estimating continuous functions like a GP and in the case of high-dimensional data, such as the College Experience study (Stan Development Team, 2024). In practice, applying NUTS to the exact joint posterior did not achieve convergence after seven days of computation, primarily because each iteration requires repeated Cholesky factorizations of large GP covariance matrices. We therefore adopted a Hilbert-space GP approximation to reduce computational burden and enable tractable sampling.

4.3 Hilbert approximation for GPs

We give a brief description of the Hilbert approximation for GPs. For more detailed explanations and derivations, one should see Riutort-Mayol et al. (Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023). It can be shown that stationary covariance functions may be represented by their spectral densities (Riutort-Mayol et al., Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023). The squared-exponential kernel spectral density is defined as

Next, we can define a domain,

![]() $\Omega $

, such that for some positive value, L,

$\Omega $

, such that for some positive value, L,

![]() $\Omega \in [-L, L]$

. Let

$\Omega \in [-L, L]$

. Let

![]() $\lambda _q$

and

$\lambda _q$

and

![]() $\{\phi _q(x)\}_{q=1}^{\infty }$

represent the eigenvalues and eigenvectors, respectively, of the Laplacian operator with respect to the domain

$\{\phi _q(x)\}_{q=1}^{\infty }$

represent the eigenvalues and eigenvectors, respectively, of the Laplacian operator with respect to the domain

![]() $\Omega $

. Then, for sufficiently large m,

$\Omega $

. Then, for sufficiently large m,

$$ \begin{align} \psi(t_r, t_s) \approx \sum_{q=1}^m S(\sqrt{\lambda_q}) \phi_q(t_r) \phi_q(t_s') = \boldsymbol{\phi}(t_r)'\boldsymbol{\Delta}\boldsymbol{\phi}(t_s), \end{align} $$

$$ \begin{align} \psi(t_r, t_s) \approx \sum_{q=1}^m S(\sqrt{\lambda_q}) \phi_q(t_r) \phi_q(t_s') = \boldsymbol{\phi}(t_r)'\boldsymbol{\Delta}\boldsymbol{\phi}(t_s), \end{align} $$

where

![]() $\boldsymbol {\phi }(t)=\{\phi _q(t)\}_{q=1}^m \in \mathbb {R}^m$

is the column vector of basis functions and

$\boldsymbol {\phi }(t)=\{\phi _q(t)\}_{q=1}^m \in \mathbb {R}^m$

is the column vector of basis functions and

![]() $\boldsymbol {\Delta }\in \mathbb {R}^{m\times m}$

is a diagonal matrix of

$\boldsymbol {\Delta }\in \mathbb {R}^{m\times m}$

is a diagonal matrix of

![]() $S(\sqrt {\lambda _q})$

for

$S(\sqrt {\lambda _q})$

for

![]() $q=1, \dots , m$

. Let

$q=1, \dots , m$

. Let

![]() $\boldsymbol {\Phi }_i$

be the

$\boldsymbol {\Phi }_i$

be the

![]() $T_i\times m$

matrix of eigenfunctions. Then we can write

$T_i\times m$

matrix of eigenfunctions. Then we can write

where

![]() $\boldsymbol {\mu }_i$

is the

$\boldsymbol {\mu }_i$

is the

![]() $T_i \times 1$

mean vector evaluated at times

$T_i \times 1$

mean vector evaluated at times

![]() $t_{i,1}, \dots , t_{i, T_i}$

. If the mean is assumed to be zero, by properties of the multivariate normal distribution,

$t_{i,1}, \dots , t_{i, T_i}$

. If the mean is assumed to be zero, by properties of the multivariate normal distribution,

$$ \begin{align} f_i(t) \approx \sum_{q=1}^m\left(S(\sqrt{\lambda_q}\right)^{1/2}\phi_q(t)\eta_q, \end{align} $$

$$ \begin{align} f_i(t) \approx \sum_{q=1}^m\left(S(\sqrt{\lambda_q}\right)^{1/2}\phi_q(t)\eta_q, \end{align} $$

where

![]() $\eta _q \sim \mathcal {N}(0,1)$

. Assuming that the inputs

$\eta _q \sim \mathcal {N}(0,1)$

. Assuming that the inputs

![]() $|t|$

are sufficiently small enough to be in

$|t|$

are sufficiently small enough to be in

![]() $\Omega $

and m is sufficiently large, then this is an appropriate approximation for the density of a GP. This can easily be adjusted for the case of a nonzero mean, since the mean can just be added to the GP realization.

$\Omega $

and m is sufficiently large, then this is an appropriate approximation for the density of a GP. This can easily be adjusted for the case of a nonzero mean, since the mean can just be added to the GP realization.

In general, this approximation is more efficient than sampling from an exact GP because it reframes the problem into a linear model with coefficients

![]() $\eta _1, \dots , \eta _m$

. It also avoids the inversion of the covariance function (Riutort-Mayol et al., Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023). While the initial computational cost of calculating the basis functions is

$\eta _1, \dots , \eta _m$

. It also avoids the inversion of the covariance function (Riutort-Mayol et al., Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023). While the initial computational cost of calculating the basis functions is

![]() $\mathcal {O}(m^2n)$

, the computational cost at each iteration of the sampler is only

$\mathcal {O}(m^2n)$

, the computational cost at each iteration of the sampler is only

![]() $\mathcal {O}(mn+m)$

. With

$\mathcal {O}(mn+m)$

. With

![]() $m<<n$

, this results in a significant computational advantage in high-dimensional settings when compared to samplers that require the inversion of the covariance function, which has a computational cost of

$m<<n$

, this results in a significant computational advantage in high-dimensional settings when compared to samplers that require the inversion of the covariance function, which has a computational cost of

![]() $\mathcal {O}(n^3)$

.

$\mathcal {O}(n^3)$

.

Of course, the approximation in Equation (4.5) relies on the values of L and m. We followed the suggested methodology in Riutort-Mayol et al. (Reference Riutort-Mayol, Bürkner, Andersen, Solin and Vehtari2023) to tune these hyperparameters, as described in more detail in Appendix F. As part of the tuning, we also used context-specific metrics to assess whether L and m were sufficiently large such that larger values would not lead to significantly different results. Specifically, we calculated the within sample posterior predictive mean absolute deviation for items 1–4 (

![]() $MAD_1$

–

$MAD_1$

–

![]() $MAD_4$

), which takes on values between 0 and 4, to assess convergence of the algorithm. To calculate this, assume we have R samples from the joint posterior distribution for each parameter. Let

$MAD_4$

), which takes on values between 0 and 4, to assess convergence of the algorithm. To calculate this, assume we have R samples from the joint posterior distribution for each parameter. Let

![]() $\hat {y}_{i,j,t}^{(r)}$

represent the

$\hat {y}_{i,j,t}^{(r)}$

represent the

![]() $r^{th}$

predicted value for

$r^{th}$

predicted value for

![]() $y_{i,j,t}$

. This is found by sampling from the model in Equations (3.1) and (3.2), where

$y_{i,j,t}$

. This is found by sampling from the model in Equations (3.1) and (3.2), where

![]() $a_j$

,

$a_j$

,

![]() $\theta _{i,t}$

,

$\theta _{i,t}$

,

![]() $\delta _j$

, and

$\delta _j$

, and

![]() $\tau _1, \dots , \tau _{K-1}$

are replaced by their

$\tau _1, \dots , \tau _{K-1}$

are replaced by their

![]() $r^{th}$

draw from the joint posterior distribution. We can then calculate

$r^{th}$

draw from the joint posterior distribution. We can then calculate

$$ \begin{align} MAD_j= \frac{1}{\sum_{i=1}^n|\mathbf{t}_i|}\sum_{i=1}^n\sum_{t \in \mathbf{t}_i} \frac{1}{R}\sum_{r=1}^R|y_{i,j, t}-\hat{y}_{i,j,t}^{(r)}|, \end{align} $$

$$ \begin{align} MAD_j= \frac{1}{\sum_{i=1}^n|\mathbf{t}_i|}\sum_{i=1}^n\sum_{t \in \mathbf{t}_i} \frac{1}{R}\sum_{r=1}^R|y_{i,j, t}-\hat{y}_{i,j,t}^{(r)}|, \end{align} $$

where

![]() $|\mathbf {t}_i|$

represents the cardinality of

$|\mathbf {t}_i|$

represents the cardinality of

![]() $\mathbf {t}_i$

. For each observation, we used

$\mathbf {t}_i$

. For each observation, we used

![]() $R=6,000$

samples from the posterior to calculate this metric. We also used the predicted values to calculate the accuracy of the model for each item. We refer to these values as

$R=6,000$

samples from the posterior to calculate this metric. We also used the predicted values to calculate the accuracy of the model for each item. We refer to these values as

![]() $Acc_1$

–

$Acc_1$

–

![]() $Acc_4$

. The accuracy metric penalizes all incorrect predictions equally, discarding the importance of ordinality in the model.

$Acc_4$

. The accuracy metric penalizes all incorrect predictions equally, discarding the importance of ordinality in the model.

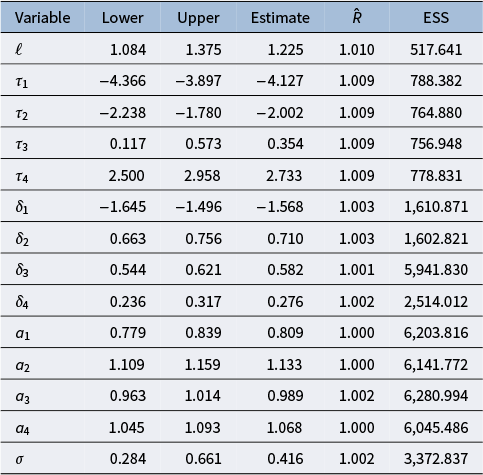

Each time the model was fit, we sampled from the posterior using four chains with 3,000 samples each. A burn-in of 1,500 was used for each chain. We used four chains to ensure that chains converged to similar values regardless of starting point. Because we used multiple chains in our sampling procedure, we assessed the between and within chain variability of our parameter estimates using

![]() $\hat {R}$

, where values close to one indicate that the chains have converged adequately (Vehtari et al., Reference Vehtari, Gelman, Simpson, Carpenter and Bürkner2021). We also found the bulk effective sample size (ESS) (Vehtari et al., Reference Vehtari, Gelman, Simpson, Carpenter and Bürkner2021) for each parameter to assess the movement within chains. It is recommended that each parameter have an

$\hat {R}$

, where values close to one indicate that the chains have converged adequately (Vehtari et al., Reference Vehtari, Gelman, Simpson, Carpenter and Bürkner2021). We also found the bulk effective sample size (ESS) (Vehtari et al., Reference Vehtari, Gelman, Simpson, Carpenter and Bürkner2021) for each parameter to assess the movement within chains. It is recommended that each parameter have an

![]() $\hat {R}$

value less than 1.01, and a sufficiently large ESS (at least 100 per chain) (Stan Development Team, 2024). We found 3,000 draws from the posterior gave us ample room to meet these convergence standards. Sampling was done on 4 cores using around 30 GB of RAM for 191 subjects and 272 possible observed time points. Each fitting took around 6 hours. Note that, in the following sections, we will provide diagnostics and simulations that support the efficacy of our sampling algorithm. The Stan code is available in Appendix L.

$\hat {R}$

value less than 1.01, and a sufficiently large ESS (at least 100 per chain) (Stan Development Team, 2024). We found 3,000 draws from the posterior gave us ample room to meet these convergence standards. Sampling was done on 4 cores using around 30 GB of RAM for 191 subjects and 272 possible observed time points. Each fitting took around 6 hours. Note that, in the following sections, we will provide diagnostics and simulations that support the efficacy of our sampling algorithm. The Stan code is available in Appendix L.

In addition to tuning the Hilbert approximation, the number of splines used for the mean function must be tuned. We did this by comparing the within-sample predictive accuracy and mean absolute deviation measures discussed before for several different numbers of knots. We also compared cross-validation predictive accuracy measures to decide. We do not go into great detail here since spline tuning is well-studied, but the process for the College Experience study will be described in Section 6.2.

5 Simulation study

In order to assess whether samples from our MCMC algorithm adequately approximate the joint posterior distribution and recover unknown parameters and latent trajectories, we performed multiple simulation studies in this section.

5.1 Simulation for parameter recovery

To perform the simulation study for parameter recovery, we simulated

![]() $M=100$

data sets. These data were designed to emulate the data observed in the College Experience study by using the same structure of missingness and parameter values derived from the College Experience study. The procedure for generating each data set is described in Appendix G. After creating a simulated data set, we then used

$M=100$

data sets. These data were designed to emulate the data observed in the College Experience study by using the same structure of missingness and parameter values derived from the College Experience study. The procedure for generating each data set is described in Appendix G. After creating a simulated data set, we then used

![]() $\texttt {Rstan}$

to sample from the joint posterior. For the simulation, we used four chains with 2,000 samples each. For each chain, we used a burn-in of 1,000. We decreased the number of draws from the main analysis to reduce computation time for the simulations. For the Hilbert approximation used

$\texttt {Rstan}$

to sample from the joint posterior. For the simulation, we used four chains with 2,000 samples each. For each chain, we used a burn-in of 1,000. We decreased the number of draws from the main analysis to reduce computation time for the simulations. For the Hilbert approximation used

![]() $c=2.271$

and

$c=2.271$

and

![]() $m=21$

to match the values, we found to be adequate for the College Experience data.

$m=21$

to match the values, we found to be adequate for the College Experience data.

5.1.1 Parameter recovery results

To assess parameter recovery, uncertainty, and convergence, we calculated several diagnostics for each of the parameters and 100 simulated data sets. Specifically, we found the bias, mean squared error (MSE), standard error (SE), standard deviation (SD), and coverage probability (CP) of the 95% credible intervals (CIs) for each parameter. Formulas for these metrics can be found in Appendix H. We also reported the average

![]() $\hat {R}$

and ESS value for each parameter across all 100 data sets. All of the diagnostics mentioned in this section for the simulation study are shown in Table 1.

$\hat {R}$

and ESS value for each parameter across all 100 data sets. All of the diagnostics mentioned in this section for the simulation study are shown in Table 1.

Table 1 Simulation diagnostics based on 100 simulated data sets for each parameter

We found that each of the parameters exhibits a bias close to zero. Further, the SE and MSE values were close to zero for all parameters. The reported SD values for the cutoffs,

![]() $\tau _1, \dots , \tau _4$

, were somewhat high, possibly due to the high correlation in the parameters. However, the CPs for the 95% CIs were all at least 95% for these parameters. The CP for

$\tau _1, \dots , \tau _4$

, were somewhat high, possibly due to the high correlation in the parameters. However, the CPs for the 95% CIs were all at least 95% for these parameters. The CP for

![]() $\ell $

was 96%, suggesting that our Hilbert approximation is working appropriately. All parameters had a 95% CI CP of at least 93%, well within the range of reasonable values when only 100 data sets are simulated. We also found average

$\ell $

was 96%, suggesting that our Hilbert approximation is working appropriately. All parameters had a 95% CI CP of at least 93%, well within the range of reasonable values when only 100 data sets are simulated. We also found average

![]() $\hat {R}$

values less than 1.01, suggesting the convergence of the chains. The average ESS was also reasonably large, averaging at least 100 samples per chain, for all parameters.

$\hat {R}$

values less than 1.01, suggesting the convergence of the chains. The average ESS was also reasonably large, averaging at least 100 samples per chain, for all parameters.

5.2 Simulation for trajectory recovery

Next, we simulated data to assess the model’s ability to recover individual mental health trajectories, with

![]() $\boldsymbol {\theta }_i$

defined as the sum of a population-level mean function (Equation (3.5)) and a subject-specific, zero-mean GP with covariance

$\boldsymbol {\theta }_i$

defined as the sum of a population-level mean function (Equation (3.5)) and a subject-specific, zero-mean GP with covariance

![]() $\boldsymbol {\psi }(\mathbf {t}, \mathbf {t}')$

(Equation (3.4)). Focusing on the Hilbert approximation to the GP, we evaluated recovery of individual deviations,

$\boldsymbol {\psi }(\mathbf {t}, \mathbf {t}')$

(Equation (3.4)). Focusing on the Hilbert approximation to the GP, we evaluated recovery of individual deviations,

![]() $g_i(\mathbf {t}_i)=\boldsymbol {\theta }_i - \boldsymbol {\mu }(\mathbf {t}_i)$

, across scenarios using a single simulated dataset per setting to reflect practical conditions.

$g_i(\mathbf {t}_i)=\boldsymbol {\theta }_i - \boldsymbol {\mu }(\mathbf {t}_i)$

, across scenarios using a single simulated dataset per setting to reflect practical conditions.

First, let us consider a scenario where the data are generated as in Section 5.1. However, we drew the mean function from a multivariate normal distribution with mean vector

![]() $\mathbf {0}$

, a correlation matrix given by a squared exponential kernel with lengthscale 0.5, and SD 0.5. By generating the data from a multivariate normal distribution with an infinitely differentiable covariance function, we are able to see whether splines, which have a finite number of knots, can adequately approximate a smooth mean function in this setting. We chose a lengthscale of 0.5 because we wanted to evaluate trajectory recovery under the scenario where the true underlying function is moderately wiggly. After fitting the model to the generated data, we then compared plots of the individual deviations from the mean function to the true deviations in the simulated data. The results for eight randomly selected trajectories are shown in Figure 3. The black lines indicate the true trajectory while the blue lines represent the median of the posterior distribution. Note that some of the black lines (true trajectory) are shorter than the blue lines (estimated trajectory) because in the College Experience study different students entered and left the study at different points in the year. As described in Section 5.1 and Appendix G, the data were generated to mimic this entry and exit pattern. Since our goal was to model the trajectory over the course of the entire freshman year, we felt it was useful to see the trajectory estimates (and uncertainty) associated with time points outside of a subject’s study period. This led to the blue and black curves displaying different lengths. We see that in most scenarios, the algorithm does an excellent job at capturing individual deviations from the mean for periods where data are available. For most parts of the curve, the true trajectory lies within the 95% CI bounds. We also see how the GP is useful in flexibly capturing a variety of different shapes in individual deviations from the mean function. Importantly, the uncertainty associated with our trajectory estimates is much greater during periods where data are unavailable (i.e., late entry or early exit) when compared to periods where data are available. To see how well the splines capture the mean trend, please see Appendix I.1. Note that the smooth mean function in the generated data was approximated well by the spline mean function with 16 degrees of freedom.

$\mathbf {0}$

, a correlation matrix given by a squared exponential kernel with lengthscale 0.5, and SD 0.5. By generating the data from a multivariate normal distribution with an infinitely differentiable covariance function, we are able to see whether splines, which have a finite number of knots, can adequately approximate a smooth mean function in this setting. We chose a lengthscale of 0.5 because we wanted to evaluate trajectory recovery under the scenario where the true underlying function is moderately wiggly. After fitting the model to the generated data, we then compared plots of the individual deviations from the mean function to the true deviations in the simulated data. The results for eight randomly selected trajectories are shown in Figure 3. The black lines indicate the true trajectory while the blue lines represent the median of the posterior distribution. Note that some of the black lines (true trajectory) are shorter than the blue lines (estimated trajectory) because in the College Experience study different students entered and left the study at different points in the year. As described in Section 5.1 and Appendix G, the data were generated to mimic this entry and exit pattern. Since our goal was to model the trajectory over the course of the entire freshman year, we felt it was useful to see the trajectory estimates (and uncertainty) associated with time points outside of a subject’s study period. This led to the blue and black curves displaying different lengths. We see that in most scenarios, the algorithm does an excellent job at capturing individual deviations from the mean for periods where data are available. For most parts of the curve, the true trajectory lies within the 95% CI bounds. We also see how the GP is useful in flexibly capturing a variety of different shapes in individual deviations from the mean function. Importantly, the uncertainty associated with our trajectory estimates is much greater during periods where data are unavailable (i.e., late entry or early exit) when compared to periods where data are available. To see how well the splines capture the mean trend, please see Appendix I.1. Note that the smooth mean function in the generated data was approximated well by the spline mean function with 16 degrees of freedom.

Figure 3 A comparison of simulated trajectories (black curves) to the estimated trajectories (as determined by the median of the posterior distribution, in blue). The shaded region represents 95% CI bounds. The data were designed to match the College Experience study. Thus, the black curves may be shorter than the blue curves to mimic late entry or early exit in the study. The full estimated curve is shown in blue since we want to make inference on the entire freshman year.

Many other simulations were performed to assess individual trajectory recovery under different values of

![]() $\ell $

, different numbers of items, and different numbers of possible ordinal responses. For brevity, the results of a select number of these simulations are in Appendix I. In general, we found that the model appropriately captures individual deviations from trajectories, even in challenging situations, including small values of

$\ell $

, different numbers of items, and different numbers of possible ordinal responses. For brevity, the results of a select number of these simulations are in Appendix I. In general, we found that the model appropriately captures individual deviations from trajectories, even in challenging situations, including small values of

![]() $\ell $

and low numbers of possible ordinal responses.

$\ell $

and low numbers of possible ordinal responses.

5.3 Simulation for comparison to baseline model

We also performed a simulation to compare model fit and trajectory recovery between our model and a baseline model without GP trajectories. Specifically, we defined the baseline model using Equations (3.1) and (3.2). However, we assumed

![]() $\theta _{i,t}=\mu (t)+\alpha _i$

, where

$\theta _{i,t}=\mu (t)+\alpha _i$

, where

![]() $\alpha $

is a random intercept such that

$\alpha $

is a random intercept such that

![]() $\alpha _i \sim \mathcal {N}(0, \sigma ^2_{int})$

and

$\alpha _i \sim \mathcal {N}(0, \sigma ^2_{int})$

and

![]() $\mu (t)$

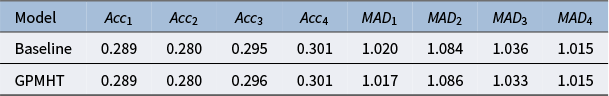

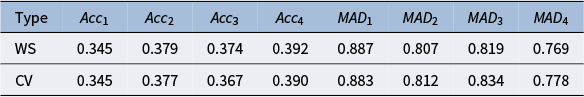

is defined as in Equation (3.5). To be consistent with the GPMHT, we used 16 degrees of freedom for the B-splines in the mean function. We used the same data generation procedure as described in Section 5.2 and fit the generated data to both the GPMHT and baseline models. The within-sample accuracy and mean absolute deviation for each item and model are shown in Table 2. We do not see much of a difference in within-sample predictive accuracy. Note that in the context of ordinal regression, we might not expect large changes in predictive accuracy or model fit measures based on changes to the latent trajectory. This is because the fitted values and predictions are dependent on the threshold values. Thus, small changes in the latent trajectory will not impact the observed ordinal category if they do not cross thresholds, especially when the two models have the same mean functions that center the latent values in the same threshold region.

$\mu (t)$

is defined as in Equation (3.5). To be consistent with the GPMHT, we used 16 degrees of freedom for the B-splines in the mean function. We used the same data generation procedure as described in Section 5.2 and fit the generated data to both the GPMHT and baseline models. The within-sample accuracy and mean absolute deviation for each item and model are shown in Table 2. We do not see much of a difference in within-sample predictive accuracy. Note that in the context of ordinal regression, we might not expect large changes in predictive accuracy or model fit measures based on changes to the latent trajectory. This is because the fitted values and predictions are dependent on the threshold values. Thus, small changes in the latent trajectory will not impact the observed ordinal category if they do not cross thresholds, especially when the two models have the same mean functions that center the latent values in the same threshold region.

Table 2 Accuracy and mean absolute deviation metrics for items 1–4 in simulated data comparing a model with no GP trajectories (Baseline) to the GPMHT

We can also compare the latent trajectories in the two models. Since both models have the same mean function, we compare the individual deviations from the average under the two models. These are shown in Figure 4. We see that the 95% CI bounds for the individual deviation from the average under the baseline model are more narrow than the GPMHT. However, the baseline model does not capture any changes in the deviation over time. This difference is especially stark for IDs 14, 85, and 148, where there are very clear nonlinear trends in the individual deviations from the average. Conversely, the GPMHT mostly captures these trends, and the uncertainty associated with them, although it does struggle with some who entered the study late (see ID 76). In general, even without changes to model fit or predictive accuracy, we see value in the additional complexity provided by a GP trajectory.

Figure 4 A comparison of simulated trajectories (black curves) to the estimated trajectories for both the baseline model (red) and GPMHT (blue) determined by the median of the posterior distribution). The shaded regions represent 95% CI bounds. This simulation was designed to emulate the College Experience study.

6 Application to college experience study

In this section, we discuss our GPMHT in the context of our motivating example: the College Experience study. We explain how the data were prepared for use in the model, discuss computational details, and present parameter estimates with their associated 95% CIs. Finally, we show how the fitted model can be used to make inference on individual mental health trajectories, as well as the average mental health trajectory of all the participants in the study.

6.1 Data preparation and missingness

The cleaned data represent 191 students over the course of 272 days during their freshman year. The weekend before the beginning of the fall term of their freshman year represents day 0 for all students, regardless of when they entered the study. For a complete discussion on data preparation, see Appendices A and J. Note that the data do not indicate when each student is sent a notification, only when they respond to a notification. Thus, it is not clear whether the cause of a missing EMA response is due to a non-response to an EMA survey or if no EMA was sent that day. Regardless of the cause, missing EMA responses are not imputed or considered in the analysis, implying that non-responses are missing at random (MAR). We acknowledge that this is a simplifying assumption. For example, on days where students are experiencing lower self-esteem, they may be less likely to respond to the EMA. However, without knowing which days an EMA was sent, it is not possible to distinguish mechanisms of missingness. Formally, we treat missingness as ignorable under an MAR assumption conditional on observed history and calendar/time-of-term effects: letting

![]() $R_{it}$

indicate response for person i on day t, we assume

$R_{it}$

indicate response for person i on day t, we assume

where

![]() $\mathcal {H}_{it}$

includes past observed EMA responses and calendar/time-of-term indicators. Because prompt delivery times were not logged, we cannot distinguish non-prompt days from nonresponse, so this assumption is required for analysis.

$\mathcal {H}_{it}$

includes past observed EMA responses and calendar/time-of-term indicators. Because prompt delivery times were not logged, we cannot distinguish non-prompt days from nonresponse, so this assumption is required for analysis.

6.2 College experience data computational details

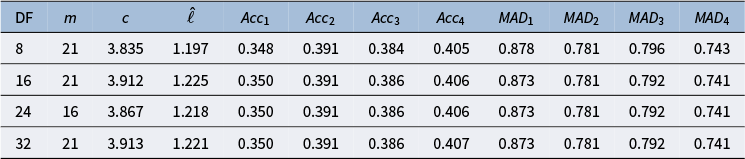

We fit the GPMHT with the Hilbert approximation on the College Experience data using four different degrees of freedom: 8, 16, 24, and 32. Recall that the prior on

![]() $\boldsymbol {\beta }$

represents Bayesian regularization akin to ridge regression in a frequentist setting (James et al., Reference James, Witten, Hastie and Tibshirani2013). Thus, we needed the number of knots to be sufficiently large to ensure adequate flexibility. However, the model is controlled for overfit through regularization. The tuning results for the optimal value of L and m for each number of knots are shown in Table 3. The intermediate tuning steps and additional information are available in Appendix K.

$\boldsymbol {\beta }$

represents Bayesian regularization akin to ridge regression in a frequentist setting (James et al., Reference James, Witten, Hastie and Tibshirani2013). Thus, we needed the number of knots to be sufficiently large to ensure adequate flexibility. However, the model is controlled for overfit through regularization. The tuning results for the optimal value of L and m for each number of knots are shown in Table 3. The intermediate tuning steps and additional information are available in Appendix K.

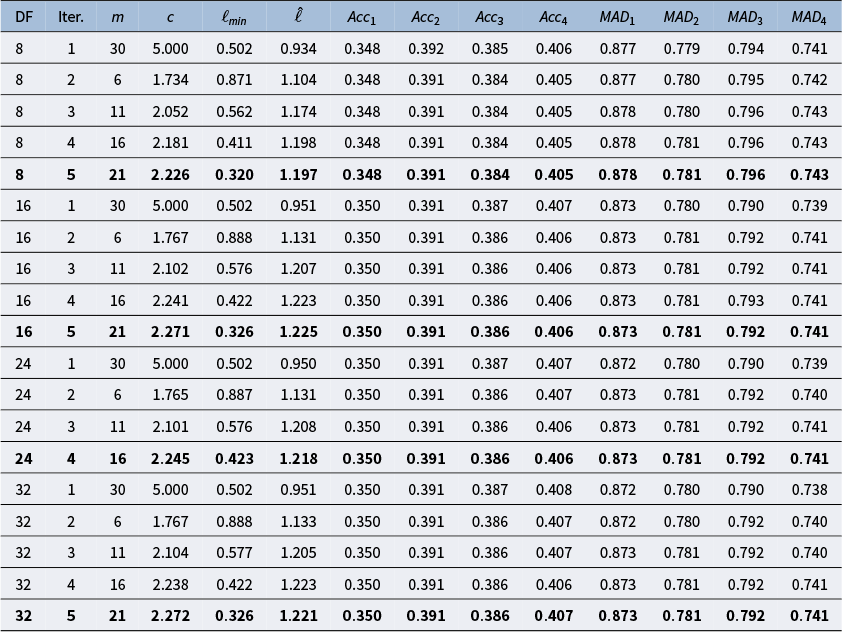

Table 3 The optimal values of m and L, where

![]() $L=cS$

for each B-spline scenario

$L=cS$

for each B-spline scenario

Note: DF refers to the degrees of freedom associated with the B-splines. For each DF value, we also present other metrics, including the estimated value

![]() $\hat {\ell }$

, accuracy, and mean absolute deviation for each item.

$\hat {\ell }$

, accuracy, and mean absolute deviation for each item.

Regardless of the number of knots used for the B-splines in the mean function, the value of

![]() $\hat {\ell }$

was estimated to be between 1.197 and 1.225. This small range of values suggests that the number of knots does not significantly impact the estimation of

$\hat {\ell }$

was estimated to be between 1.197 and 1.225. This small range of values suggests that the number of knots does not significantly impact the estimation of

![]() $\ell $

. We also found the model fit, as determined by within-sample predictive accuracy and mean absolute deviation, to be comparable across all models.

$\ell $

. We also found the model fit, as determined by within-sample predictive accuracy and mean absolute deviation, to be comparable across all models.

To make a final decision on the number of knots, we also looked at the out-of-sample predictive accuracy of the models with the optimal values of L and m for a given number of B-spline knots as determined by our Hilbert approximation tuning. This was done by randomly selecting around 20% of observations (not entire subjects) to be withheld from the data during training. We then predicted the withheld values and calculated the accuracy and mean absolute deviation for each item. Similar to the within-sample values, 6,000 draws from the predictive posterior distribution were used to compute these values. The results are shown in Table 4.

Table 4 Cross-validation accuracy and mean absolute deviation for each B-spline degree of freedom considered

We did not find a difference in cross-validation predictive accuracy or mean absolute deviation depending on the degrees of freedom used for the B-splines. The differences are small, but according to Table 3, there does appear to be a slight improvement in within-sample predictive metrics when more than 8 degrees of freedom are used. Recall that because our prior on

![]() $\boldsymbol {\beta }$