3.1 Origin and Measurement of Endogenous Brain Rhythms

Rhythmic activity in measured brain signals is observed virtually everywhere in the brain. In humans, these brain rhythms have been associated with perceptual and cognitive processes and are usually measured with noninvasive tools such as electroencephalography (EEG) or magnetoencephalography (MEG), but also with intracranial recordings such as electrocorticography (ECoG). Importantly, rhythmic neural activity is also present spontaneously, in the absence of sensory input or cognitive tasks.

To understand the origins of the various brain rhythms, which may reflect multiple mechanisms, it is useful to consider different levels of observation (for review: Cannon et al., 2014; Pittman-Polletta et al., 2015; Doelling et al., 2023). As shown in animal research, at the microscopic level, individual neurons respond optimally at preferred “resonance” frequencies, which depend on neuronal membrane properties that act as high- and low-pass filters (Llinás, 1988; Hutcheon and Yarom, 2000; Buzsáki and Draguhn, 2004). Importantly, spontaneous self-sustained oscillations in neurons, at their preferred frequency, arise from the interplay of currents that destabilize the resting membrane potential (Hutcheon and Yarom, 2000). At the macroscopic level, where recordings capture activity arising from several thousand neurons, brain rhythms reflect synchronized rhythmic activity of neuronal populations, either locally or across areas. Locally, such synchronized activity has been described as arising from excitatory-inhibitory circuitry (Skinner et al., 1994; Whittington et al., 2000; Rotstein et al., 2005; Klausberger and Somogyi, 2008; Buzsáki and Watson, 2012; Hyafil et al., 2015a).
Importantly, it is thought that the macroscopic rhythmic activity that can be measured during rest is related – likely in a complex manner reflecting interactions of multiple mechanisms (Rotstein, 2017; Stark et al., 2022) – to the microscopic frequency preferences of the underlying neurons (Hutcheon and Yarom, 2000).

3.1.1 Macroscopic Measures of Preferred Neural Frequencies

Preferred frequencies of neuronal populations can be identified in various ways. Using noninvasive transcranial magnetic stimulation (TMS) and EEG, it has been shown that stimulating a brain region with a single impulse leads to resonance activity at the population’s preferred (“natural”) frequency (Marshall and Fox, 2004; Rosanova et al., 2009). This line of research has demonstrated that the occipital cortex shows dominant activity in the alpha frequency range (8–14 Hz), the parietal cortex in the beta frequency range (15–30 Hz), and the frontal cortex in the gamma frequency range (above 30 Hz) (Rosanova et al., 2009). Regarding the temporal cortex, it is currently unfeasible to use TMS to identify its preferred frequencies due to the proximity to facial muscles and resulting severe muscle artifacts. Instead, sensory stimulation methods have been used. For example, when participants listen to rhythmic sounds at different rates, the auditory cortex shows an auditory steady-state response (ASSR) (Picton et al., 2003) that is largest at the system’s preferred frequency. ASSR research indicated that the auditory cortex shows a preferred frequency in the beta/gamma range, often averaging at around 40 Hz, although there are large inter-individual differences (i.e., between 30 and 80 Hz across participants) (Zaehle et al., 2010; Baltus and Herrmann, 2016; Teng et al., 2017). Additionally, this method revealed a topographic organization within the auditory cortex, with different neural populations along the auditory pathway showing different frequency preferences (Weisz and Lithari, 2017).
In sum, magnetic or sensory rhythmic stimulation is a feasible way to identify the preferred or natural frequencies of large neuronal populations, and the results are relatively well grounded in underlying neuronal resonance phenomena.

3.1.2 Networks of Endogenous Brain Rhythms

Another line of research into intrinsic or “endogenous” brain activity uses MEG or EEG resting state data to identify long-range connectivity patterns (Brookes et al., 2011; Vidaurre et al., 2018). These networks were originally studied in functional magnetic resonance imaging (fMRI) data (e.g., Fox and Raichle, 2007), but recent findings highlight that these networks can be further specified by underlying frequency-specific functional connections. Using MEG, prominent networks in the alpha and beta frequency ranges have been identified by analyzing correlations between band-pass filtered amplitude envelopes across brain areas (Brookes et al., 2011). For example, the default mode network (DMN) has been associated with alpha-band connectivity within and across medial-frontal and parietal cortices, whereas the sensorimotor network has been associated with beta-band connections in corresponding areas (Brookes et al., 2011). Using phase-coupling, it has been shown that the brain engages in different states characterized by frequency-specific connectivity patterns, for example posterior alpha and anterior delta/theta (below 4 Hz and 4–8 Hz, respectively) network activity (Vidaurre et al., 2018).
Overall, functional connectivity networks highlight long-range frequency-specific activity during rest, but the varied and diverse rhythmic activity of different brain areas can be more granularly captured by taking a closer look at region-specific patterns of brain activity, as captured, for example, by spectral profiles (Keitel and Gross, 2016; Mellem et al., 2017; Capilla et al., 2022; Komorowski et al., 2023; Lubinus et al., 2023).
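The amplitude-envelope correlation approach mentioned above can be illustrated with a minimal sketch. It is not the pipeline of any cited study: the signals are simulated, the brick-wall FFT filter stands in for a properly designed band-pass filter, and steps that real MEG analyses typically require (source reconstruction, correction for signal leakage) are omitted. All function names and parameter values are assumptions made for illustration.

```python
import numpy as np

def bandpass_fft(x, fs, lo, hi):
    """Zero-phase brick-wall band-pass via FFT masking (illustrative only)."""
    spec = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    spec[(freqs < lo) | (freqs > hi)] = 0.0
    return np.fft.irfft(spec, n=len(x))

def amplitude_envelope(x):
    """Magnitude of the analytic signal (FFT-based Hilbert transform)."""
    n = len(x)
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.abs(np.fft.ifft(np.fft.fft(x) * h))

def envelope_correlation(x, y, fs, band):
    """Pearson correlation between band-limited amplitude envelopes."""
    ex = amplitude_envelope(bandpass_fft(x, fs, band[0], band[1]))
    ey = amplitude_envelope(bandpass_fft(y, fs, band[0], band[1]))
    return float(np.corrcoef(ex, ey)[0, 1])

# Simulated data (hypothetical): two "areas" share a slow modulation of
# their 10 Hz amplitude; a third has constant 10 Hz amplitude.
rng = np.random.default_rng(0)
fs = 250.0
t = np.arange(0, 60, 1 / fs)
shared = 1.0 + 0.5 * np.sin(2 * np.pi * 0.1 * t)
area_a = shared * np.sin(2 * np.pi * 10 * t) + 0.5 * rng.standard_normal(t.size)
area_b = shared * np.sin(2 * np.pi * 10 * t + 1.0) + 0.5 * rng.standard_normal(t.size)
area_c = np.sin(2 * np.pi * 10 * t + 2.0) + 0.5 * rng.standard_normal(t.size)

alpha_band = (8.0, 14.0)
r_ab = envelope_correlation(area_a, area_b, fs, alpha_band)
r_ac = envelope_correlation(area_a, area_c, fs, alpha_band)
print(f"alpha envelope correlation a-b: {r_ab:.2f}, a-c: {r_ac:.2f}")
```

In this toy case the two areas with a shared amplitude modulation yield a high alpha-band envelope correlation even though their 10 Hz phases differ, which is the property that distinguishes envelope-based from phase-based connectivity measures.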

3.1.3 Spectral Profiles

The above-described research shows the large-scale organization of endogenous brain rhythms related to preferred neuronal frequency and network-associated brain activity. Another line of research has focused on analyzing local spectral power to characterize the prevalence of brain rhythms. This method is often used to find the dominant frequency of brain regions (or prevalent mixtures of dominant frequencies), but the underlying “mechanisms” (e.g., resonance phenomena or frequency-specific network activity) are less clear. For example, using ECoG data, it has been shown that the theta rhythm is the most prominent across the cortex, while alpha is prominently present in posterior brain regions and beta is mostly present in central areas (Groppe et al., 2013). In MEG data, it has been shown that the dominant peak frequency of regional brain rhythms is not randomly distributed but follows a cortical gradient from faster (“sensory”) to slower (“higher-level”) peak frequencies along the posterior–anterior axis (Mahjoory et al., 2020; but see Mellem et al., 2017). These studies successfully highlight the across-participant similarities in regional spectral activity. Spectral profiles, however, are not only characteristic of specific brain areas but also show unique patterns for individuals that allow for the classification of people based on their spectral power distributions (da Silva Castanheira et al., 2021).
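As a rough illustration of how a dominant peak frequency can be read off local spectral power, the following sketch averages windowed periodograms (a Welch-style estimate) and picks the frequency with maximal power in a search range. It is a simplification under stated assumptions: the data are simulated, power is in arbitrary units, and the aperiodic (1/f-like) component that real analyses usually model or remove before peak detection is ignored. Function names and parameters are hypothetical.

```python
import numpy as np

def welch_psd(x, fs, seg_len):
    """Average Hann-windowed periodograms over half-overlapping segments.

    Returns frequencies in Hz and power in arbitrary units.
    """
    step = seg_len // 2
    window = np.hanning(seg_len)
    starts = range(0, len(x) - seg_len + 1, step)
    psds = [np.abs(np.fft.rfft(window * x[s:s + seg_len])) ** 2 for s in starts]
    freqs = np.fft.rfftfreq(seg_len, d=1.0 / fs)
    return freqs, np.mean(psds, axis=0)

def peak_frequency(freqs, psd, fmin, fmax):
    """Frequency with maximal power inside [fmin, fmax]."""
    mask = (freqs >= fmin) & (freqs <= fmax)
    return float(freqs[mask][np.argmax(psd[mask])])

# Simulated "regional" signal (hypothetical): a 10 Hz rhythm in white noise.
rng = np.random.default_rng(1)
fs = 200.0
t = np.arange(0, 60, 1 / fs)
signal = np.sin(2 * np.pi * 10 * t) + rng.standard_normal(t.size)

freqs, psd = welch_psd(signal, fs, seg_len=400)  # 2 s segments, 0.5 Hz resolution
peak = peak_frequency(freqs, psd, fmin=2.0, fmax=40.0)
print(f"dominant peak frequency: {peak:.1f} Hz")
```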

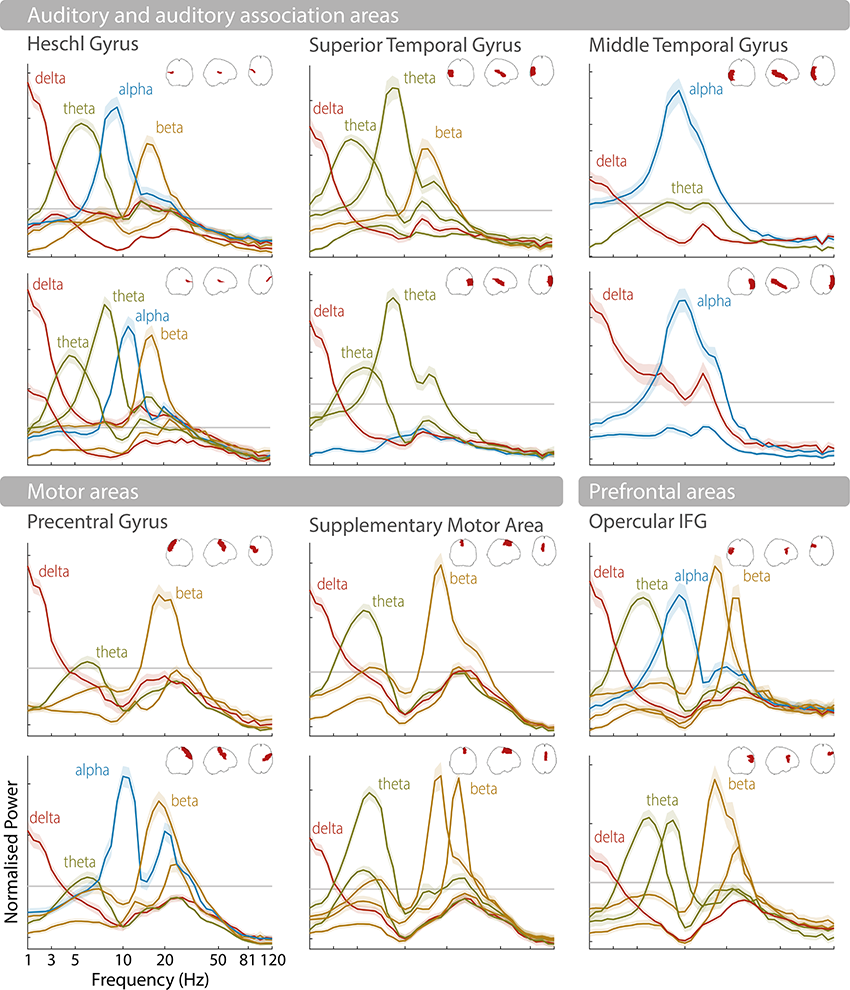

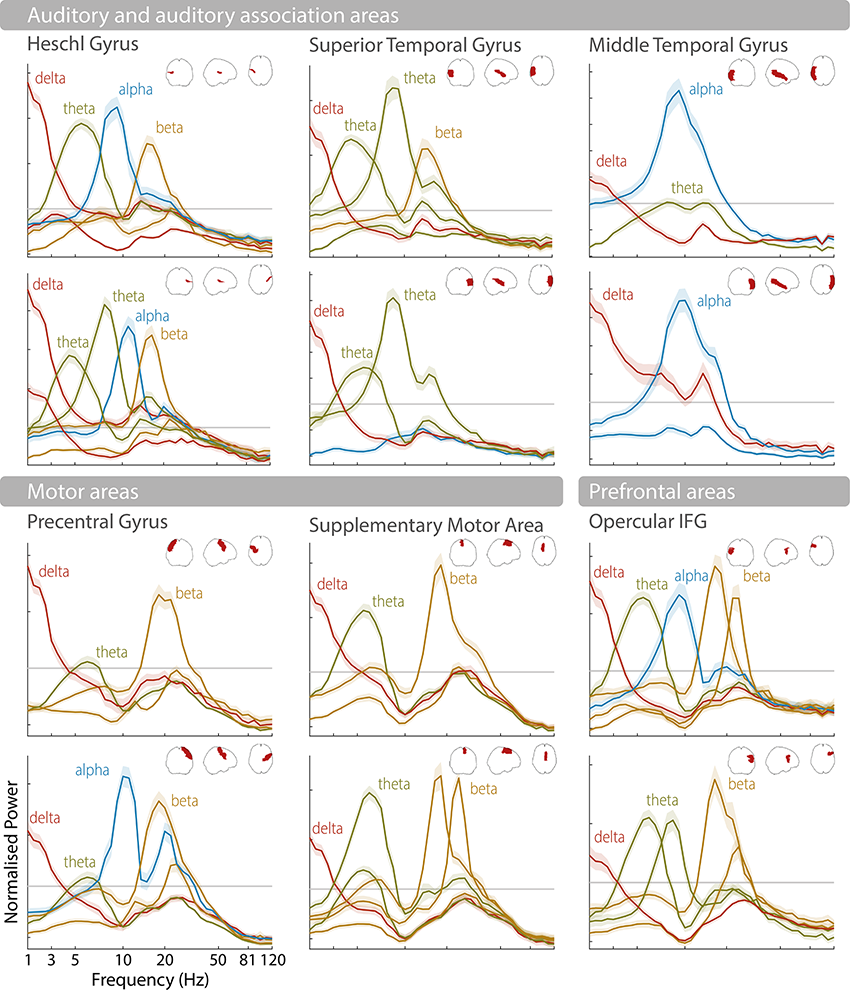

Instead of looking at the single most prominent spectral peak to characterize rhythmic activity, it is also possible to analyze consistent patterns or combinations of brain rhythms in a given brain region (Keitel and Gross, 2016; Komorowski et al., 2023). This has shown that each brain area engages in different spectral “modes” over time (between two and nine across different cortical areas), and that these rhythmic patterns are characteristic enough to be used for classification of brain areas (Keitel and Gross, 2016; Lubinus et al., 2021). Language-relevant areas, such as auditory, motor, and frontal areas, are often characterized by prominent spectral peaks in the delta, theta, and beta frequency bands (see Figure 3.1). As highlighted in the following, these endogenous brain rhythms make them ideally suited to process speech (Giraud and Poeppel, 2012).

Figure 3.1 Spectral profiles of language-relevant brain areas.

Normalized power spectra as found in Keitel and Gross (2016) for 12 brain areas according to the automated anatomical labelling (AAL) atlas (Tzourio-Mazoyer et al., 2002; Bohland et al., 2009). Upper rows show the left hemisphere, lower rows the right hemisphere (see schematic area projections for area locations). Shaded error bars illustrate the standard error of the mean across participants. Power peaks are labelled according to their peak frequency (e.g., delta, theta, alpha, beta).

Figure 3.1 Long description

The horizontal axis represents the frequency in hertz and the vertical axis represents the normalized power. The first set is labeled auditory and auditory association areas, which include Heschl gyrus, superior temporal gyrus, and middle temporal gyrus. It shows 6 graphs arranged in three columns. These graphs plot fluctuating curves for delta, theta, alpha and beta in Heschl gyrus, delta, theta and beta in superior temporal gyrus, and delta and alpha in the middle temporal gyrus. The second set is labeled motor areas, which include precentral gyrus, supplementary motor area and opercular IFG.

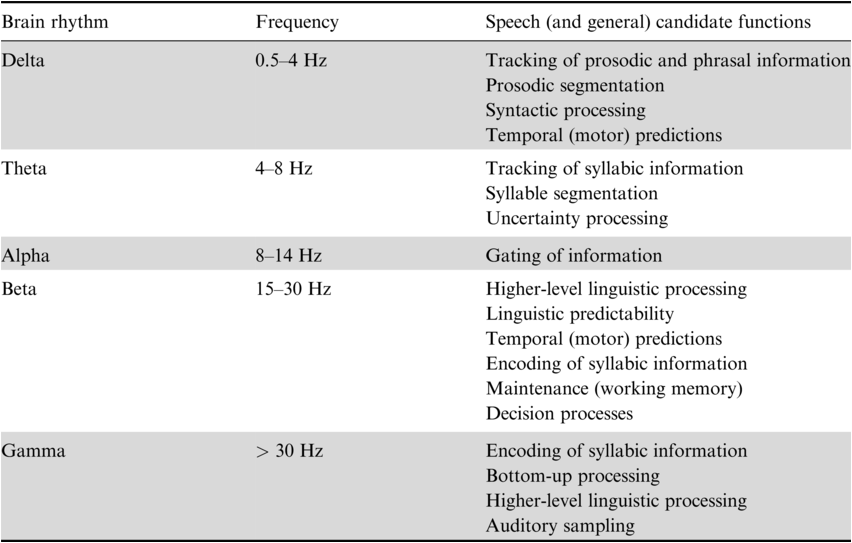

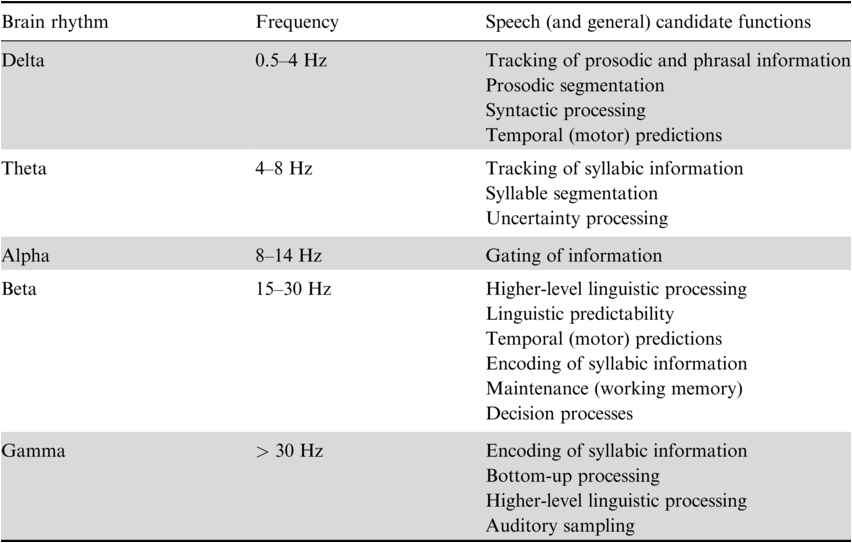

3.2 Brain Rhythms Involved in Speech and Language Processing

As discussed above, spectral profiles indicate complex patterns of endogenous brain rhythms that characterize different brain areas. Importantly, it has been suggested that brain rhythms observed during rest indicate the functional architecture of the brain and can be related to specific cognitive processes (Deco et al., 2011; Siegel et al., 2012; Keitel and Gross, 2016). In the following, we discuss how different brain rhythms have been related to speech and language processing (Table 3.1).

3.2.1 Theta Brain Rhythms and Their Role in Syllable Segmentation

Identifying linguistic units from spoken language is nontrivial because of the lack of invariance in the continuous speech signal – that is, the speech acoustics vary depending on the speaker and the context. Segmenting the speech acoustics allows the generation of neural representations of the appropriate temporal granularity that match a given linguistic unit and can be forwarded to subsequent processes such as phoneme encoding (Ghitza, 2011, 2012; Giraud and Poeppel, 2012). Across languages, speech acoustics show dominant slow-amplitude modulations in the theta frequency range, a timescale that was originally suggested to correspond to the syllabic rate in speech (Ding et al., 2017; Varnet et al., 2017). Such slow-amplitude modulations (reflecting the “speech envelope”) pass the cochlear filter and are represented in the auditory cortex (Rosen, 1992; Shamma, 2001). Despite the lack of explicit cues, acoustic landmarks in the speech envelope have been suggested to aid speech segmentation by aligning the high excitability phase of endogenous auditory cortex theta brain rhythms to the syllabic rate, termed “entrainment” or “speech tracking” (Giraud et al., 2007; Luo and Poeppel, 2007; Ghitza and Greenberg, 2009; Giraud and Poeppel, 2012; Peelle and Davis, 2012; Gross et al., 2013; Haegens and Zion Golumbic, 2018; Kösem et al., 2018; Rimmele et al., 2018; Poeppel and Assaneo, 2020).
Accordingly, relevant syllabic information has been suggested to fluctuate at this time scale (Sun and Poeppel, 2023). It has been debated whether acoustic landmarks are defined by the rapid increases in acoustic energy in the envelope, the energy peaks related to mid-vowels, or linguistic syllable onsets (Ghitza, 2011; Gross et al., 2013; Doelling et al., 2014; Aubanel et al., 2016; Oganian and Chang, 2019). Thus, syllable segmentation may be achieved by speech envelope landmarks and, at the neural level, endogenous theta brain rhythms constraining the “search space for segmentation” (for review and a critical discussion: Adolfi et al., 2023). According to a large corpus analysis, only a small percentage of the slow-amplitude modulations in the speech envelope correlates with syllable onsets, suggesting that the brain may apply additional computations to extract syllable boundaries from the envelope (cf. Schmidt et al., 2023; Zhang et al., 2023). Although stronger speech tracking has been observed for intelligible compared to unintelligible speech (Luo and Poeppel, 2007; Peelle and Davis, 2012; Gross et al., 2013), speech tracking and comprehension do not seem to be directly related.
For example, tracking occurs for unattended (Zion Golumbic et al., 2013; Rimmele et al., 2015), unintelligible, or backwards speech (Howard and Poeppel, 2010), whereas higher-level processing areas more selectively track relevant speech (Zion Golumbic et al., 2013; Keitel et al., 2018). However, speech tracking might be related to comprehension indirectly through the interaction with other processes (Pefkou et al., 2017). In addition, in the context of predictive coding approaches, theta tracking has been assigned a specific role in uncertain speech contexts (Donhauser and Baillet, 2020). While it is a matter of ongoing debate whether speech tracking reflects an oscillatory mechanism or merely evoked potentials (Doelling and Assaneo, 2021; Oganian et al., 2023), some have suggested that both contribute (Doelling et al., 2019).
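The notion of slow amplitude modulations in the speech envelope, discussed in this section, can be made concrete with a toy computation. The sketch below is an assumption-laden stand-in for the corpus analyses cited above: a noise carrier modulated at 5 Hz plays the role of speech, full-wave rectification serves as a crude broadband envelope (published analyses typically use cochlear-like filterbanks), and the peak of the envelope spectrum is taken as the dominant modulation rate. All names and values are hypothetical.

```python
import numpy as np

def modulation_peak(audio, fs, fmin=1.0, fmax=20.0):
    """Dominant modulation frequency of a crude broadband envelope.

    The envelope is obtained by full-wave rectification; its mean is
    removed before taking the amplitude spectrum.
    """
    envelope = np.abs(audio)
    spectrum = np.abs(np.fft.rfft(envelope - envelope.mean()))
    freqs = np.fft.rfftfreq(len(envelope), d=1.0 / fs)
    mask = (freqs >= fmin) & (freqs <= fmax)
    return float(freqs[mask][np.argmax(spectrum[mask])])

# Toy "speech" (hypothetical): white-noise carrier whose amplitude
# fluctuates at a syllable-like rate of 5 Hz.
rng = np.random.default_rng(2)
fs = 8000.0
t = np.arange(0, 10, 1 / fs)
syllable_rate_hz = 5.0
modulator = 1.0 + 0.8 * np.sin(2 * np.pi * syllable_rate_hz * t)
audio = modulator * rng.standard_normal(t.size)

peak_hz = modulation_peak(audio, fs)
print(f"dominant envelope modulation: {peak_hz:.1f} Hz")
```

Natural speech is far less regular than this toy signal, which is one reason why, as noted above, envelope modulations and syllable onsets need not coincide.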

Typically, syllable tracking has been observed in the auditory cortex (e.g., Luo and Poeppel, 2007; Zion Golumbic et al., 2013; Gross et al., 2013; Park et al., 2015), but also in frontal (including inferior frontal gyrus [IFG]) motor cortices (Park et al., 2015; Assaneo et al., 2019; Rimmele et al., 2021), or middle temporal brain areas (Rimmele et al., 2021). Spectral profiles suggest endogenous theta brain rhythms in the bilateral auditory cortex and auditory association areas (Heschl’s gyrus, superior temporal gyrus [STG], left middle temporal gyrus [MTG]), the bilateral motor cortex, and the (pre-)frontal cortex (see Figure 3.1 – note that theta is also typically observed in the hippocampus and other brain areas that are not reported here) (Giraud et al., 2007; Keitel and Gross, 2016; Lubinus et al., 2021). In addition to measures during rest, endogenous auditory cortex theta rhythms have been indicated using other approaches (Teng et al., 2017, 2018). Importantly, auditory cortex syllable tracking and endogenous theta rhythms have been putatively linked, as theta power increases during comprehension compared to rest (Keitel and Gross, 2016) (for further evidence: Wilsch et al., 2018; Zoefel et al., 2019; Becker and Hervais-Adelman, 2023).

3.2.2 Endogenous Gamma and Theta-Gamma Coupling during Speech Comprehension

Another brain rhythm that has been related to speech processing is the gamma brain rhythm observed in the auditory cortex (Lakatos et al., 2005; Fontolan et al., 2014). Within a spectral profiling approach, gamma peaks are difficult to observe when averaging spectral activity across participants, due to the large inter-individual variance in gamma peak frequencies (Baltus and Herrmann, 2016).

An approach that assigns a generic role to gamma is the communication-through-coherence (CTC) framework. In CTC, neural communication channels are established through brain rhythm synchronization with suggested roles of different brain rhythms across the brain (Fries, 2005, 2015; for a criticism of CTC: Schneider et al., 2021). Gamma has been suggested to reflect bottom-up processing across sensory domains and processes. Although this has mostly been tackled in the visual domain, evidence for gamma being related to bottom-up processing of speech comes from human depth-electrode recordings showing that gamma activity in the primary auditory cortex is related to bottom-up processing that is modulated by the phase of delta-beta brain rhythms in the association auditory cortex (AAC) (Fontolan et al., 2014). Such bottom-up processing has been interpreted in the predictive coding framework (Rao and Ballard, 1999; Friston, 2005; Fontolan et al., 2014).

Another line of research suggests a more specific role of gamma brain rhythms reflecting computations for syllable encoding (Shamir et al., 2009; Ghitza, 2011; Giraud and Poeppel, 2012), in contrast to the more generic communication channel interpretation of CTC. During speech processing, theta–gamma phase–amplitude coupling (PAC) in the auditory cortex has been proposed to reflect the encoding of the fine-grained phonemic information of a syllabic unit (Shamir et al., 2009; Ghitza, 2011; Giraud and Poeppel, 2012; Gross et al., 2013; Hyafil et al., 2015a; Lizarazu et al., 2019). Although some studies suggested higher-level linguistic processing beyond encoding (Palva et al., 2002; Di Liberto et al., 2015), others showed that theta-gamma coupling follows the input and likely reflects encoding processes (Gross et al., 2013; Lizarazu et al., 2019). Theta-gamma coupling strength has been observed to vary with speech intelligibility (Gross et al., 2013; Pefkou et al., 2017; Lizarazu et al., 2019). In line with an encoding interpretation, Lizarazu et al. (2019) showed that the peak of the gamma response was coupled to the theta phase and followed the speech rate when speech was intelligible.
Interestingly, developmental work suggests enhancement of gamma brain rhythms during a period of increased (sub)phonemic learning (Barajas et al., 2021). Such a bottom-up encoding view is also compatible with neurophysiologically inspired computational models of speech recognition that show theta-gamma coupling can improve syllable recognition (Hyafil et al., 2015b; Hovsepyan et al., 2020) (see also brain–computer interface decoding studies: Proix et al., 2022).
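To make the notion of theta–gamma phase–amplitude coupling more tangible, the sketch below computes a normalized mean vector length, one commonly used PAC estimator, on simulated data. It is not the analysis of any study cited here: the band limits, the brick-wall FFT filter, and all names are assumptions, and real analyses would add surrogate statistics to assess significance.

```python
import numpy as np

def bandpass_fft(x, fs, lo, hi):
    """Zero-phase brick-wall band-pass via FFT masking (illustrative only)."""
    spec = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    spec[(freqs < lo) | (freqs > hi)] = 0.0
    return np.fft.irfft(spec, n=len(x))

def analytic_signal(x):
    """FFT-based analytic signal (real part x, imaginary part Hilbert transform)."""
    n = len(x)
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * h)

def pac_mean_vector_length(x, fs, phase_band, amp_band):
    """Normalized mean vector length: |mean(A * exp(i*phi))| / mean(A)."""
    phase = np.angle(analytic_signal(bandpass_fft(x, fs, *phase_band)))
    amp = np.abs(analytic_signal(bandpass_fft(x, fs, *amp_band)))
    return float(np.abs(np.mean(amp * np.exp(1j * phase))) / np.mean(amp))

# Simulated signals (hypothetical): 40 Hz gamma whose amplitude either
# follows the phase of a 5 Hz theta rhythm or stays constant.
rng = np.random.default_rng(3)
fs = 500.0
t = np.arange(0, 20, 1 / fs)
theta = np.cos(2 * np.pi * 5 * t)
gamma_carrier = np.cos(2 * np.pi * 40 * t)
noise = 0.5 * rng.standard_normal(t.size)
coupled = theta + (1.0 + 0.8 * theta) * gamma_carrier + noise
uncoupled = theta + gamma_carrier + noise

theta_band, gamma_band = (4.0, 8.0), (30.0, 50.0)
pac_coupled = pac_mean_vector_length(coupled, fs, theta_band, gamma_band)
pac_uncoupled = pac_mean_vector_length(uncoupled, fs, theta_band, gamma_band)
print(f"PAC coupled: {pac_coupled:.2f}, uncoupled: {pac_uncoupled:.2f}")
```

The gamma band is deliberately wide enough to contain the sidebands at 35 and 45 Hz that the 5 Hz amplitude modulation creates; a narrower amplitude band would filter the coupling out.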

Apart from a role of theta-gamma coupling for syllable encoding, endogenous gamma activity in the auditory cortex has also been connected to auditory and language processing. In particular, the individual peak of gamma oscillations measured through ASSRs, which reflects an individual’s endogenous auditory resonance frequency, is thought to determine the rate at which auditory information is sampled (Baltus and Herrmann, 2016). This individual auditory temporal resolution has been connected with general auditory processing (Baltus and Herrmann, 2015) as well as speech and language-specific features (Lehongre et al., 2011). It is particularly informative that an “oversampling,” that is, a higher individual gamma frequency, putatively resulting in sub-optimal phonemic processing, has been shown to predict several linguistic deficits in individuals with dyslexia (Lehongre et al., 2011). In addition, individuals with dyslexia show weaker entrainment to the phonemic rate (around 30 Hz) than individuals without dyslexia (Lehongre et al., 2011), and enhancing low-gamma activity using transcranial alternating current stimulation (tACS) has been shown to improve phonemic processing (Marchesotti et al., 2020). The power of gamma activity during rest has also been shown to predict speech-in-noise perception (Houweling et al., 2020), further supporting a role of endogenous gamma activity in speech processing.

3.2.3 Delta Brain Rhythms Related to Prosodic Processing

Slower brain rhythms below 2 Hz observed in auditory (and motor) cortices match the timescale of speech prosody and have been suggested to be involved in its neural tracking (Bourguignon et al., 2013; Meyer et al., 2016; Molinaro et al., 2016; Kotz et al., 2018; Rimmele et al., 2021). Endogenous delta brain rhythms are also evident in the spectral profiles of these brain areas (Keitel and Gross, 2016; Lubinus et al., 2021; see Figure 3.1), although this endogenous activity has not been explicitly related to prosody tracking during speech processing. Prosody is reflected in the melodic pitch contour of speech with several supra-segmental acoustic features contributing to prosody perception, including the rise and fall time of pitch (tone), stress (based on changes in pitch, segment length, and loudness), and rhythmic aspects (Bourguignon et al., 2013; Paulmann, 2016; also see Section 3 in this volume). Prosody can mark linguistic boundaries but also reflect emotional states (Paulmann, 2016; Inbar et al., 2020; Pell and Kotz, 2021; van Rijn and Larrouy-Maestri, 2023). Neural tracking of prosody has been typically observed in the right lateralized auditory cortex (Sammler et al., 2015).
A particularly relevant landmark for the alignment of brain rhythms to prosodic information seems to be the pauses in the speech signal, to which delta tracking has been specifically connected (Bourguignon et al., 2013; Chalas et al., 2023). Delta tracking, however, might reflect speech onset processing rather than sustained rhythmic activity (Chalas et al., 2023).

3.2.4 Hemispheric Lateralization of Brain Rhythms

It has been argued that, after an initial bilaterally symmetric neural representation of speech in the primary auditory cortex, an auditory hemispheric asymmetry allows for an optimization of auditory processing in general, and speech processing specifically, with complementary and parallel processing in the two hemispheres (Zatorre and Belin, 2001; Zatorre et al., 2002; Poeppel, 2003; Washington and Tillinghast, 2015; Zatorre, 2022; Robert et al., 2024). In the right hemisphere, slower delta-theta brain rhythms (~4–8 Hz) are observed, while the left hemisphere shows a regime of faster gamma brain rhythms (~40 Hz) (Poeppel, 2003). For auditory processing in general, it has been argued that the right hemisphere is involved in the processing of spectral modulations relevant for object discrimination and the left hemisphere in temporal modulations relevant for action processing (Zatorre et al., 2002; Albouy et al., 2020; Robert et al., 2024). Along those lines, melody processing is thought to depend more on spectral cues and speech perception more on temporal cues (Albouy et al., 2020). For speech processing, it has been argued that the slower delta-theta brain rhythms in the right hemisphere reflect long integration windows (~150–250 ms) relevant for prosodic and syllable segmentation, while the faster brain rhythms reflect short integration windows (~20–40 ms) involved in processing fast formant transitions in stop consonants (here, formant transitions indicate rapid changes in vocal tract resonances at the release of the stop constriction) relevant for phoneme encoding (Poeppel, 2003).
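The correspondence between the integration windows and the rhythm frequencies quoted above follows from simple arithmetic, sketched here under the simplifying assumption (ours, for illustration) that one integration window equals one full cycle of the rhythm, so that frequency in Hz is 1000 divided by the window length in ms.

```python
def window_to_frequency_hz(window_ms):
    """Frequency whose single cycle lasts window_ms milliseconds."""
    return 1000.0 / window_ms

# Window lengths as quoted in the text (in milliseconds).
long_windows_ms = (150.0, 250.0)
short_windows_ms = (20.0, 40.0)

slow_band_hz = tuple(sorted(window_to_frequency_hz(w) for w in long_windows_ms))
fast_band_hz = tuple(sorted(window_to_frequency_hz(w) for w in short_windows_ms))

print(f"long windows  -> {slow_band_hz[0]:.1f}-{slow_band_hz[1]:.1f} Hz")
print(f"short windows -> {fast_band_hz[0]:.1f}-{fast_band_hz[1]:.1f} Hz")
```

Under this assumption the long windows map onto roughly 4 to 6.7 Hz, within the theta range, and the short windows onto 25 to 50 Hz, bracketing the ~40 Hz gamma regime mentioned above.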

These theories suggest a differential specialization for acoustic modulations based on the spectral profiles and prevailing brain rhythms of the two hemispheres. Recently, top-down influences have been suggested to affect such lateralization, particularly for the processing of slower spectro-temporal modulations, and explain the more heterogeneous findings reported in the literature (Assaneo et al., 2019; Flinker et al., 2019; Albouy et al., 2020). Interestingly, for endogenous brain rhythms recorded during a resting state, typically lateralization is not explicitly analyzed and rhythmic patterns appear to be mostly bilateral (Keitel and Gross, 2016; Mellem et al., 2017; Vidaurre et al., 2018; Capilla et al., 2022). Some, however, report a lateralization of resting state brain rhythms in line with the hemispheric lateralization accounts (Giraud et al., 2007), and others (Giroud et al., 2020) used transient pure tone stimuli in intracerebral recordings in epilepsy patients to reveal hemispheric lateralization of intrinsic brain rhythms.

3.2.5 Brain Rhythms Related to Higher-Level Linguistic Processing

Beta and delta are the brain rhythms that have been most frequently related to higher-level linguistic processing (Weiss and Mueller, 2012; Bonhage et al., 2017; Schaller et al., 2017; Martorell et al., 2023; Tavano et al., 2023). Spectral profiles suggest endogenous delta brain rhythms in auditory and language-processing-relevant auditory association cortices (Figure 3.1; Keitel and Gross, 2016; Heschl’s gyrus, STG, MTG), in prefrontal areas (opercular IFG), and in motor cortices (precentral gyrus, supplementary motor areas [SMAs]) (see also Lubinus et al., 2021). Endogenous beta rhythms are typically reported in the motor cortices and prefrontal areas, but also in Heschl’s gyrus and left STG, and typically not in MTG (Keitel and Gross, 2016; Lubinus et al., 2021).

Beta brain rhythms have been related to several higher-level linguistic processes. In the predictive coding context particularly, they have been associated with the predictability of various types of linguistic information (Meyer et al., Reference Meyer, Obleser and Friederici2012; Weiss and Mueller, Reference Weiss and Mueller2012; Lewis et al., Reference Lewis, Wang and Bastiaansen2015; Bonhage et al., Reference Bonhage, Meyer, Gruber, Friederici and Mueller2017; Schaller et al., Reference Schaller, Weiss and Müller2017; Martorell et al., Reference Martorell, Morucci, Mancini, Molinaro, Grimaldi, Brattico and Shtyrov2023) and temporal predictions from the motor cortex during speech comprehension (Keitel et al., Reference Keitel, Gross and Kayser2018; Morillon et al., Reference Morillon, Arnal, Schroeder and Keitel2019; see also Morillon and Baillet, Reference Morillon and Baillet2017). Computational modelling work suggests the beta rhythm might indicate the precision modulation of the prediction error during speech comprehension (see also Fontolan et al., Reference Fontolan, Morillon, Liegeois-Chauvel and Giraud2014; Cao et al., Reference Cao, Thut and Gross2016; Sedley et al., Reference Sedley, Gander and Kumar2016; Chao et al., Reference Chao, Takaura, Wang, Fujii and Dehaene2018; Weissbart et al., Reference Weissbart, Kandylaki and Reichenbach2020). For example, beta tracking of surprisal (using temporal response functions [TRFs]) has been related to word predictability in continuous speech (Weissbart et al., Reference Weissbart, Kandylaki and Reichenbach2020; Zioga et al., Reference Zioga, Weissbart, Lewis, Haegens and Martin2023). 
Others suggest that beta suppression contributes to the combination of words into meaning by reflecting a general-purpose inhibitory system involved in word negation (Zuanazzi et al., 2022; for review: Beltrán et al., 2021), or that beta plays a role in word category discrimination, action semantics, semantic memory, and working memory during language comprehension (Haarmann et al., 2002; Piai et al., 2015; for review: Weiss and Mueller, 2012). Furthermore, beta might be involved in sentence structure-based processing, with beta power increasing with syntactic processing demands, or for sentences compared to word lists (Bastiaansen and Hagoort, 2006; Bastiaansen et al., 2009; Meyer et al., 2012). Beta is typically observed in several areas of the motor cortex (McCarthy et al., 2011; Pavlides et al., 2015), suggesting a close relationship between motor processing and language comprehension (Weiss and Mueller, 2012). Computational modelling work supports the idea that the beta brain rhythm is particularly suitable for maintaining and preserving neural activity in time to link past and present input (Roopun et al., 2008; Engel and Fries, 2010; Kopell et al., 2011; Gelastopoulos et al., 2019; for a decision multiplexing framework, see Rassi et al., 2023).
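The temporal response function (TRF) approach mentioned above can be illustrated with a minimal sketch. It is not the pipeline of the cited studies: it assumes that a TRF is estimated by ridge regression of a neural signal on time-lagged copies of a single stimulus feature (for example, a surprisal time series), and it uses simulated data throughout. The function names `lagged_design` and `fit_trf`, the number of lags, and the ridge parameter are hypothetical choices made for illustration.

```python
import numpy as np


def lagged_design(stimulus, n_lags):
    """Design matrix whose column k holds the stimulus delayed by k samples."""
    n = len(stimulus)
    X = np.zeros((n, n_lags))
    for lag in range(n_lags):
        X[lag:, lag] = stimulus[:n - lag]
    return X


def fit_trf(stimulus, response, n_lags, ridge=1.0):
    """Ridge-regression estimate of the TRF (one weight per time lag)."""
    X = lagged_design(stimulus, n_lags)
    regularized_cov = X.T @ X + ridge * np.eye(n_lags)
    return np.linalg.solve(regularized_cov, X.T @ response)


# Simulated data (assumption): a random predictor standing in for surprisal,
# and a "neural" response generated by convolving it with a known kernel.
rng = np.random.default_rng(1)
surprisal = rng.standard_normal(5000)
true_kernel = np.array([0.0, 0.5, 1.0, 0.5, 0.0, -0.3, 0.0, 0.0])
neural = np.convolve(surprisal, true_kernel)[:surprisal.size]
neural = neural + 0.5 * rng.standard_normal(surprisal.size)

estimated_kernel = fit_trf(surprisal, neural, n_lags=true_kernel.size)
print(np.round(estimated_kernel, 2))
```

In this simulation the estimated weights should closely follow the kernel used to generate the data. With real recordings, the response would typically be a band-limited (for example, beta-band) signal, and the regularization strength would be chosen by cross-validation.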

As discussed above, delta brain rhythms are observed in auditory, auditory association, and motor cortices, and have been suggested to be involved in prosody tracking. More recently, it has been argued that these slow brain rhythms might also reflect the tracking of the syntactic phrasal structure in the absence of acoustic (prosodic) cues (Meyer et al., 2012; Ding et al., 2016; Meyer et al., 2016; Bonhage et al., 2017; Meyer, 2018; Meyer et al., 2020; Henke and Meyer, 2021; Lu et al., 2022). Ding et al. (2016) interpreted such tracking to indicate hierarchical syntactic processing (for a criticism of this interpretation: Frank and Christiansen, 2018; Kazanina and Tavano, 2023; Lo et al., 2023). Computational modelling research suggests neural oscillatory mechanisms as possible candidates for delta brain rhythms underlying hierarchical linguistic processing (Martin and Doumas, 2017; ten Oever and Martin, 2021). Currently, research is lacking that systematically relates endogenous brain rhythms in the delta band to higher-level linguistic processing during speech comprehension.

3.2.6 Motor Cortex Brain Rhythms Recruited during Speech and Language Processing

When using fine-grained spectral profiling, intrinsic rhythmic activity in cortical motor areas (including precentral gyri, supplementary motor areas, and also inferior frontal gyrus as speech-motor-related brain areas) is consistently characterized by peaks in the delta, theta, and beta frequency bands (see Figure 3.1). Traditionally, the motor cortex has mostly been associated with prominent beta activity (Pfurtscheller et al., 1996). This characteristic beta power, as now established, is not due to sustained rhythmic activity but reflects transient “beta bursts” (Jones, 2016; Sherman et al., 2016). As mentioned above, beta activity in general is associated with higher-level linguistic and predictive processing. In the motor cortex specifically, beta activity has been connected to motor control (Pfurtscheller et al., 1996) and top-down interactions with the auditory cortex (Abbasi and Gross, 2020).
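The distinction between sustained beta activity and transient beta bursts can be made concrete with a simple thresholding sketch. This is an illustration under stated assumptions, not the method of the cited studies: the beta band is taken as 15 to 25 Hz, the amplitude envelope comes from an FFT-based analytic signal, and a burst is defined as any run of samples whose envelope exceeds a multiple of the median envelope. The function names and the threshold factor of 6 are hypothetical.

```python
import numpy as np


def band_envelope(x, fs, f_lo, f_hi):
    """Amplitude envelope of x within [f_lo, f_hi] Hz (requires f_lo > 0).

    Only positive in-band frequencies are kept and doubled, which gives the
    analytic signal of the band-passed data; its magnitude is the envelope.
    """
    freqs = np.fft.fftfreq(len(x), d=1.0 / fs)
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    return np.abs(np.fft.ifft(2.0 * np.fft.fft(x) * mask))


def detect_bursts(envelope, fs, threshold_factor=6.0):
    """Return (onset_s, offset_s) pairs for supra-threshold envelope runs."""
    above = envelope > threshold_factor * np.median(envelope)
    # Pad with False so that every run has both a rising and a falling edge.
    edges = np.diff(np.concatenate(([False], above, [False])).astype(int))
    onsets = np.flatnonzero(edges == 1)
    offsets = np.flatnonzero(edges == -1)
    return [(on / fs, off / fs) for on, off in zip(onsets, offsets)]


# Simulated data (assumption): two brief 20 Hz bursts centered at 3 s and 7 s,
# embedded in weak white noise.
fs = 200.0
t = np.arange(2000) / fs
burst_shape = (np.exp(-0.5 * ((t - 3.0) / 0.1) ** 2)
               + np.exp(-0.5 * ((t - 7.0) / 0.1) ** 2))
rng = np.random.default_rng(0)
signal = burst_shape * np.sin(2 * np.pi * 20.0 * t) + 0.01 * rng.standard_normal(t.size)

for onset, offset in detect_bursts(band_envelope(signal, fs, 15.0, 25.0), fs):
    print(f"burst from {onset:.2f} s to {offset:.2f} s")
```

A signal of this kind produces a clear beta peak in a time-averaged power spectrum even though beta activity is present only during short intervals, which is why burst-level analyses are needed to separate the two accounts.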

Apart from beta activity, rhythmic delta activity in the motor cortex has gained attention in auditory and speech perception research. The “active sensing” framework postulates that cortical motor activity shapes sensory processing (Morillon et al., 2015). In particular, rhythmic delta activity in the motor cortex is thought to impose temporal constraints on auditory sampling and to be involved in generating temporal predictions for acoustic stimuli, including speech (Keitel et al., 2018). In line with this, it has been shown that the left motor cortex tracks slow delta band fluctuations in continuous speech and that the strength of this tracking predicts comprehension in noise (Keitel et al., 2018). Furthermore, delta-beta phase-amplitude coupling in left motor areas also predicts speech-in-noise comprehension (Keitel et al., 2018), highlighting a potential role of oscillatory cross-frequency mechanisms for temporal predictions in auditory perception (Arnal et al., 2014; Fontolan et al., 2014; Morillon and Baillet, 2017).
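To make the notion of delta-beta phase-amplitude coupling concrete, the following minimal sketch computes a normalized mean vector length between delta phase and beta amplitude for a single simulated channel. It is not the analysis used in the cited studies. The band limits (1 to 3 Hz for delta phase, 15 to 25 Hz for beta amplitude), the FFT-based filtering, the normalization, and all function names are assumptions made for illustration.

```python
import numpy as np


def band_analytic(x, fs, f_lo, f_hi):
    """Band-limited analytic signal of x via FFT masking (requires f_lo > 0).

    Keeping only positive frequencies inside [f_lo, f_hi] and doubling them
    yields the complex analytic signal of the band-passed data.
    """
    freqs = np.fft.fftfreq(len(x), d=1.0 / fs)
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    return np.fft.ifft(2.0 * np.fft.fft(x) * mask)


def phase_amplitude_coupling(x, fs, phase_band=(1.0, 3.0), amp_band=(15.0, 25.0)):
    """Normalized mean vector length, between 0 (no coupling) and 1."""
    phase = np.angle(band_analytic(x, fs, *phase_band))
    amplitude = np.abs(band_analytic(x, fs, *amp_band))
    return np.abs(np.mean(amplitude * np.exp(1j * phase))) / np.mean(amplitude)


# Simulated data (assumption): a 2 Hz delta rhythm plus a 20 Hz beta rhythm
# whose amplitude either follows the delta cycle or stays constant.
fs = 200.0
t = np.arange(4000) / fs
delta = np.cos(2 * np.pi * 2.0 * t)
beta_carrier = np.cos(2 * np.pi * 20.0 * t)
rng = np.random.default_rng(0)
noise = 0.1 * rng.standard_normal(t.size)

coupled = delta + (1.0 + 0.8 * delta) * beta_carrier + noise
uncoupled = delta + beta_carrier + noise

print("coupled:  ", phase_amplitude_coupling(coupled, fs))
print("uncoupled:", phase_amplitude_coupling(uncoupled, fs))
```

In this simulation the coupled signal yields a markedly higher value than the uncoupled one. In empirical work such a measure is usually compared against surrogate data (for example, time-shifted amplitude series) before it is related to behavior.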

Motor cortices also show prominent endogenous activity in the theta band, similar to auditory and other frontal areas (see Figure 3.1). Interestingly, a theta-frequency-specific coupling between auditory and motor cortices has been found that matches the average syllabic rate, which has been termed “intrinsic speech-motor rhythm” (see also He et al., 2023; Barchet et al., 2024). Importantly, behavioral studies have related individual intrinsic motor rhythms in the theta band (quantified by the speaking rate) and individual synchronization tendencies (mapping auditory-motor coupling strength) to speech perception skills (Assaneo et al., 2019, 2021; Lubinus et al., 2023; see also Pfordresher et al., 2021). Notably, the relationship between endogenous motor rhythms and perception has also been behaviorally estimated and computationally modelled in the field of music research (Zamm et al., 2018; Roman et al., 2023). Besides these attempts at the behavioral and theoretical levels, we note again that research that systematically relates endogenous brain rhythms in the motor cortex to the brain rhythms observed during task-related processing is scarce.

The motor cortex also shows other important frequency-specific interactions with the auditory cortex. For example, during listening to speech, delta and theta band activity in the left precentral gyrus influences the phase of same-band activity in the left auditory cortex, and this is associated with the strength of auditory speech tracking (Park et al., 2015). Similarly, alpha-band power in several central brain regions (including bilateral precentral gyri and supplementary motor areas) influences speech tracking in the delta band in the left auditory cortex (Keitel et al., 2017). The above findings are in line with a role of the motor cortex in predicting the timing of events and controlling neural excitability, also in the auditory cortex (Arnal and Giraud, 2012). Taken together, these findings show that motor cortex activity can influence auditory speech tracking in a top-down manner using different frequency channels.

3.3 Conclusion

Endogenous brain rhythms measured during rest reflect the functional organization of the brain and are thought to be recruited during speech and language processing. Specific roles for endogenous brain rhythms have been proposed for speech and syllable segmentation, phonetic encoding, and prosodic segmentation, but also for higher-level linguistic predictions and temporal predictions. Recently, there have been methodological advances in the analysis of endogenous rhythmic activity, revealing complex patterns of brain rhythms as well as frequency-specific networks. Crucially, more work is required that directly relates endogenous brain rhythms observed during rest to brain rhythms observed during task-related processing. Future research could use invasive single-cell recordings to show unambiguously that the same neurons that display rhythmic activity during rest are also involved in task-related operations. Additionally, altering endogenous brain rhythms through noninvasive brain stimulation and testing behavioral consequences is a promising route to test causal relationships. Furthermore, the various brain rhythms observed in different brain areas need to be differentiated more clearly with regard to their characteristic features. For example, do brain rhythms of a brain area reflect transient bursts, continuous rhythmic activity, or aperiodic activity, and to what extent do they reflect local or global neural circuitry? Ultimately, testing the functional relevance of endogenous brain rhythms is a crucial bottleneck for validating influential neural oscillatory theories of speech and language processing.

3.4 Acknowledgements

Anne Keitel was supported by the Medical Research Council (grant number MR/W02912X/1). Both Johanna M. Rimmele and Anne Keitel are members of the Scottish-EU Critical Oscillations Network (SCONe), funded by the Royal Society of Edinburgh (RSE Saltire Facilitation Network Award to Anne Keitel, Reference Number 1963). Johanna M. Rimmele was supported by the Max Planck Institute for Empirical Aesthetics and the Max Planck NYU Center for Language, Music, and Emotion (CLaME).

Summary

An important cornerstone for understanding ubiquitous neural oscillations is the link between endogenous brain rhythms measured during rest and brain rhythms measured during task processing. We reviewed the literature on brain rhythms involved in speech and language processing, and their putative link to endogenous brain rhythms, especially in the theta, gamma, delta, and beta frequency ranges.

Implications

Neural oscillatory models to date include different levels of language processing. Attempts to directly relate the brain rhythms observed during task-related processing to endogenous brain rhythms are sparse. We provide a road map, for novices and experts alike, for how future research can tackle the currently missing explicit links between endogenous brain rhythms and their role in speech and language processing.

Gains

Understanding the neural underpinnings of speech and language will allow us to explain a wide variety of phenomena in the future, ranging from lower-level processes such as speech segmentation and syllable encoding up to higher-level language processing, including phrasal and prosodic processing. It is one crucial piece of the puzzle of why speech and language are temporally organized as they are.