1 Introduction

Enumeration reducibility ($\le _e$) is a reducibility that captures the notion of how difficult it is to enumerate a given set of numbers. There are several definitions, but the one we find most useful in this article is the one given by Friedberg and Rogers [Reference Friedberg and Rogers2] when they introduced the reducibility.

Definition 1.1. For sets $A,B\subseteq \omega $ we say that $A\le _e B$ if there is a c.e. set of axioms W such that

$$A=\{n:\exists u\,[\langle n,u\rangle \in W\land D_u\subseteq B]\}.$$

Here $(D_u)_{u\in \omega }$ is the collection of all finite sets given by strong indexes.

One useful property of this definition is that it gives us a collection of enumeration operators $(\Psi _e)_{e\in \omega }$. We define $A=\Psi _e(B)$ if $A\le _eB$ via the eth c.e. set $W_e$. Enumeration reducibility is a reducibility on the positive information about a set. This can be seen from the fact that if $A\subseteq B$ then $\Psi _e(A)\subseteq \Psi _e(B)$.
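The monotonicity property can be illustrated on a finite fragment. The following is a minimal sketch, assuming the usual reading of an enumeration operator in which n enters the output as soon as some axiom pairs n with a finite set contained in the oracle. The name `apply_enumeration_operator` and the axiom list `AXIOMS` are hypothetical, and finite sets are written out directly rather than coded by strong indexes.

```python
def apply_enumeration_operator(axioms, oracle):
    """Return {n : some axiom (n, D) has D contained in the oracle}.

    `axioms` is a finite list of pairs (n, D) standing in for a finite
    fragment of a c.e. axiom set; D is given explicitly as a tuple.
    """
    oracle = frozenset(oracle)
    return {n for (n, finite_set) in axioms if frozenset(finite_set) <= oracle}


# Hypothetical finite fragment of an axiom set.
AXIOMS = [
    (0, ()),       # 0 is enumerated unconditionally
    (1, (2,)),     # 1 is enumerated once 2 is in the oracle
    (2, (3, 5)),   # 2 needs both 3 and 5 in the oracle
]

small = {2}
large = {2, 3, 5}

out_small = apply_enumeration_operator(AXIOMS, small)
out_large = apply_enumeration_operator(AXIOMS, large)

# Only positive information is used, so a larger oracle yields a larger output.
print(sorted(out_small), sorted(out_large), out_small <= out_large)
```

Because an axiom only ever asks whether a finite set is contained in the oracle, adding elements to the oracle can never remove an element from the output.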

Enumeration reducibility is a pre-order and the equivalence classes form an upper semi-lattice $\mathcal {D}_e$ with least element $\mathbf {0}_e$, consisting of all c.e. sets, and joins given by the usual operation. There is also an enumeration jump given by $A\mapsto \bigoplus _{e\in \omega } \Psi _e(A) \oplus \bigoplus _{e\in \omega } \overline {\Psi _e(A)}$. As with the Turing jump, we have that $A<_e A'$.

One aspect of enumeration reducibility that has been well studied is its relationship with Turing reducibility. The Turing degrees embed into the enumeration degrees via the map induced by $A\mapsto A\oplus \overline {A}$. This follows from the fact that $A\oplus \overline {A}\le _e B\oplus \overline {B}\iff A\le _T B$. This embedding is known to be a proper embedding [Reference Medvedev9], and the Turing and enumeration jump coincide on these degrees. The image of the Turing degrees is known as the total degrees.

Definition 1.2. We say that a set A is total if $\overline {A}\le _eA$. We say that A is cototal if $A\le _e \overline {A}$. A degree is total (cototal) if it contains a total (cototal) set.

It is known that the total degrees are a proper subclass of the cototal degrees and that the cototal degrees are a proper subclass of all enumeration degrees [Reference Andrews, Ganchev, Kuyper, Lempp, Miller, Soskova and Soskova1]. It is known that both the jump [Reference Kalimullin7] and the total degrees [Reference Ganchev and Soskova3] are definable within the structure $(\mathcal {D}_e, \le _e)$.

While there are many similarities between these classes of degrees they are structurally different. One notable difference is the fact that, while there are minimal Turing degrees, Gutteridge [Reference Gutteridge5] proved that the enumeration degrees are downwards dense.

We have seen that Turing reducibility can be defined in terms of enumeration reducibility. An important early result of Selman [Reference Selman12] shows how to define enumeration reducibility in terms of Turing reducibility.

Theorem 1.3 (Selman’s theorem)

$A\le _e B$ if and only if, for all X, if $B\le _e X\oplus \overline {X}$ then $A\le _e X\oplus \overline {X}$.

This theorem states that an enumeration degree is uniquely determined by the total degrees above it. This means that the total degrees form an automorphism base for the enumeration degrees.

Sanchis [Reference Sanchis11] introduced the notion of hyperenumeration reducibility $\le _{he}$, an analogue of enumeration reducibility relating to hyperarithmetic reducibility rather than Turing reducibility.

Definition 1.4 (Sanchis [Reference Sanchis11])

We say that $A\le _{he} B$ if there is a c.e. set W such that

$$A=\{n:\forall f\in \omega ^\omega \,\exists \sigma \preceq f, u\in \omega \,[\langle n,\sigma ,u\rangle \in W\land D_u\subseteq B]\}.$$

Sanchis proved that $\le _{he}$ is a pre-order giving rise to the hyperenumeration degrees $\mathcal {D}_{he}$ and proved that the map $A\mapsto A\oplus \overline {A}$ induces an embedding of the hyperarithmetic degrees into the hyperenumeration degrees. The main result of Sanchis’ paper is that there is a hyperenumeration degree that is not in the image of this embedding. In keeping with the notion of total degrees in the enumeration degrees, we call the degrees in the image of this embedding the hypertotal degrees.

Definition 1.5. We say that a set A is hypertotal if $\overline {A}\le _{he}A$. We say that A is hypercototal if $A\le _{he} \overline {A}$. A degree is hypertotal (hypercototal) if it contains a hypertotal (hypercototal) set.

In this article we look at a couple of the aspects of the relationships between Turing reducibility and enumeration reducibility and see if they also hold for the relationship between hyperarithmetic reducibility and hyperenumeration reducibility. In Section 3 we show that Selman’s theorem fails for hyperenumeration reducibility. The proof of this works by constructing a uniformly e-pointed tree without dead ends that is not of hypertotal degree.

E-pointed trees were introduced by McCarthy [Reference McCarthy8] and were used to characterize the cototal enumeration degrees.

Definition 1.6. A tree T is e-pointed if, for every path $P\in [T]$, we have that T is c.e. in P. We say that T is uniformly e-pointed if there is a single operator $\Psi _e$ such that for all paths $P\in [T]$ we have $T=\Psi _e(P)$.

McCarthy proved that the degree of a uniformly e-pointed tree on $2^{<\omega }$ is cototal, and characterized the cototal degrees as the degrees of uniformly e-pointed trees on $2^{<\omega }$ without dead ends and as the degrees of general e-pointed trees on $2^{<\omega }$.

Goh et al. [Reference Le Goh, Jacobsen-Grocott, Miller and Soskova4] and Jacobsen-Grocott [Reference Jacobsen-Grocott6] have studied e-pointed trees on $\omega ^{<\omega }$. They found some interesting connections to hyperenumeration reducibility and the notion of hypercototality. They proved that every uniformly e-pointed tree is hypercototal and that the enumeration degrees of these trees are the same as the degrees of hypercototal sets and the same as the degrees of general e-pointed trees.

They found that when considering e-pointed trees on $\omega ^{<\omega }$ without dead ends things become different. They proved that there is an arithmetic set that is not enumeration equivalent to any e-pointed tree without a dead end. They also proved that there is a uniformly e-pointed tree without dead ends that is not of cototal enumeration degree.

In Section 3 we prove a stronger separation.

Theorem 1.7. There is a uniformly e-pointed tree $T^{\mathcal {G}}\subseteq \omega ^{<\omega }$ with no dead ends such that $T^{\mathcal {G}}$ is not hypertotal.

This has some interesting corollaries, one of which is the failure of Selman’s theorem for hyperenumeration reducibility.

Corollary 1.8. There are sets $A,B$ such that $B\nleq _{he} A$ and for any X, if $A\le _{he}X\oplus \overline {X}$ then $B\le _{he} X\oplus \overline {X}$.

This is one way in which hyperenumeration reducibility is different from enumeration reducibility. In Section 4 we prove that, like the enumeration degrees, the hyperenumeration degrees are downwards dense, giving another example of how these degree structures are similar. We prove this by adapting Gutteridge’s original proof. However, we discover that, in general, priority arguments that work for the enumeration degrees will not work for the hyperenumeration degrees. We give an explanation of this issue in Section 4 and describe a tool that can work in some cases.

In Section 5 we look at some other natural reducibilities that could be considered hyperarithmetic analogues of enumeration reducibility, and we consider their relationship to hyperarithmetic reducibility and enumeration reducibility.

2 Preliminaries

Some basic points of notation. We use $n,m,i,j,k$ for natural numbers. We use $\alpha ,\beta ,\gamma $ for ordinals. We use $\sigma ,\tau ,\rho ,\upsilon ,x,y,z$ to represent strings of natural numbers. $\langle \sigma \rangle $ corresponds to the Gödel number of the string $\sigma $. We use T and S to refer to trees in $\omega ^{<\omega }$.

We will give a brief overview of some of the tools of higher computability theory that we will use in this article. A more in-depth introduction to higher computability can be found in Sacks’ book [Reference Sacks10].

2.1 Hyperenumeration reducibility

It is useful to define enumeration reducibility in terms of operators $(\Psi _e)_{e\in \omega }$. Using Definition 1.4 we can define hyperenumeration operators $(\Gamma _e)_{e\in \omega }$ in a similar way.

Definition 2.1. For the eth c.e. set $W_e$ we define the hyperenumeration operator $\Gamma _e$ by $n\in \Gamma _e(A)\iff \forall f\in \omega ^\omega \exists \sigma \preceq f, u\in \omega [ \langle n, \sigma , u\rangle \in W_e\land D_u\subseteq A]$.

Now we examine the relationship between $\Gamma _e$ and $\Psi _e$. Both use the same set $W_e$ in their definition. Consider the tree $S_e^A$ defined by ![]() . From the definition of $\Gamma _e$, we have that $n\in \Gamma _e(A)$ if and only if $S_e^A$ does not have an infinite path starting with n. We have that $S_e^A\le _e \overline {A}$ and $\overline {S_e^A}\le _e A$.

The form of $S_e^A$ inspires us to come up with the notion of a hyperenumeration of a set.

Definition 2.2. We say that a tree S is a hyperenumeration of a set B if ${B= \{n: \forall f\in [S](f(0)\ne n)\}}$.

From this we have that $B\le _{he}\overline {S}$ via the same operator for every hyperenumeration S of B. By coding a set X into a layer of $S_e^X$, we have that for every X such that B is $\Pi ^1_1$ in X, there is a hyperenumeration S of B such that $S\equiv _T X$. So the hyperenumerations of B characterize the hypertotal degrees above $\deg _{he}(B)$ much like how the enumerations of B characterize the total e-degrees above $\deg _e(B)$.

While the notation is a little different, Sanchis [Reference Sanchis11] used a similar idea when proving the existence of a non-hypertotal degree.

Sanchis proved some other results about hyperenumeration reducibility that we will use in this article.

Lemma 2.3 (Sanchis [Reference Sanchis11])

For sets $A,B\subseteq \omega $ we have the following:

1. If there is a $\Pi ^1_1$ set V such that ![]() then $A\le _{he} B$.

2. If $A\le _e B$ then $A\le _{he} B$ and $\overline {A}\le _{he} \overline {B}$.

2.2 Admissible sets and higher computability theory

The usual definition of a $\Pi _1^1$ set of natural numbers is a set of the form $m\in X\iff \forall f\in \omega ^\omega \exists n [R(f,n,m)]$ where R is a computable relation. However, admissibility gives us another useful definition in terms of $L_{\omega _1^{{CK}}}$.

Definition 2.4. A set M is admissible if it is transitive, closed under union, pairing, and Cartesian product, and satisfies the following two properties:

- $\Delta _1$-comprehension: For every $\Delta _1$ definable class $A\subseteq M$ and set $a\in M$ the set $A\cap a\in M$.

- $\Sigma _1$-collection: For every $\Sigma _1$ definable class relation $R\subseteq M^2$ and set $a\in M$ such that $a\subseteq \mathrm {dom}(R)$ there is $b\in M$ such that $a= R^{-1}[b]$.

The smallest admissible set is $\mathrm {HF}$, the collection of hereditarily finite sets. Looking at the $\Delta _1$ and $\Sigma _1$ subsets of $\mathrm {HF}$ is one notion of computability. We have that the $\Delta _1$ subsets of $\mathrm {HF}$ are the computable sets and the $\Sigma _1$ subsets of $\mathrm {HF}$ are the c.e. sets. We generalize this to an arbitrary admissible set M by calling a set $A\subseteq M$ M-computable if it is a $\Delta _1$ subset of M and M-c.e. if it is a $\Sigma _1$ subset of M.

The smallest admissible set containing $\omega $ is $L_{\omega _1^{{CK}}}$. We have that the $L_{\omega _1^{{CK}}}$-c.e. subsets of $\omega $ are precisely the $\Pi ^1_1$ sets. This means that the $L_{\omega _1^{{CK}}}$-computable subsets of $\omega $ are the hyperarithmetic sets. Note that $\Delta _1$-comprehension means that the hyperarithmetic sets are precisely the sets in $\mathcal {P}(\omega )\cap L_{\omega _1^{{CK}}}$.

These results about $\Pi ^1_1$ and hyperarithmetic sets can be relativized to any set X. We define $L_X$ to be the smallest admissible set containing X. We have that $A\subseteq \omega $ is $\Pi ^1_1$ in X if and only if it is $L_X$-c.e., and hyperarithmetic in X if and only if $A\in L_X$. Note that while we have $\mathrm {ORD}^{L_X}=\omega _1^X$ and $L_{\omega _1^X}\subseteq L_X$, it is only sometimes the case that $L_X=L_{\omega _1^X}$.

2.3 Some facts about trees

We will deal a lot with trees in this article, so it is useful to have operations on trees. For a tree $S\subseteq \omega ^{<\omega }$ and string x we define $\mathrm {Ext}(S, x)$ to be the tree of extensions of x, that is, $\mathrm {Ext}(S, x)=\{y: x^\frown y\in S\}$. A relation on trees that we will use is $\preceq $. We say $T\preceq S$ if S is an end extension of T. That is, $T\subseteq S$ and for all $\sigma \in S\setminus T$ the longest initial segment of $\sigma $ that is in T is a leaf in T.
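The two operations can be tried out on finite trees. The sketch below is illustrative only: it represents a tree as a prefix-closed Python set of tuples containing the empty tuple, it assumes that $\mathrm {Ext}(S, x)$ collects the strings y such that x followed by y lies in S, and it checks the leaf condition for the nodes of S that lie outside T. The names `ext`, `is_leaf` and `is_end_extension` are hypothetical.

```python
def ext(tree, x):
    """Assumed reading of Ext(S, x): all y such that x followed by y is in S."""
    x = tuple(x)
    return {node[len(x):] for node in tree if node[:len(x)] == x}


def is_leaf(tree, node):
    """A node is a leaf if it is in the tree and has no one-step extension there."""
    has_child = any(
        len(m) == len(node) + 1 and m[:len(node)] == node for m in tree
    )
    return node in tree and not has_child


def is_end_extension(t, s):
    """Check T <= S in the end-extension order, for trees containing the root."""
    if () not in t:
        raise ValueError("this sketch assumes the tree contains the empty string")
    if not t <= s:
        return False
    for node in s - t:
        # Longest initial segment of the new node that already lies in T.
        k = max(j for j in range(len(node) + 1) if node[:j] in t)
        if not is_leaf(t, node[:k]):
            return False
    return True


T = {(), (0,), (1,), (0, 0)}            # leaves are (1,) and (0, 0)
GOOD = T | {(1, 2), (0, 0, 3)}          # grows only above leaves of T
BAD = T | {(0, 1)}                      # grows above the non-leaf (0,)

print(is_end_extension(T, GOOD), is_end_extension(T, BAD))
print(sorted(ext(GOOD, (0,))))
```

In `BAD` the new node (0, 1) sits above (0,), which already has the child (0, 0) in T, so the extension is not an end extension.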

Now we define $\mathrm {rank}(S)$ for a well founded tree S using transfinite recursion. We define $\mathrm {rank}(\emptyset )=0$. Given a nonempty tree S we define $\mathrm {rank}(S)=\sup _{i\in S} \mathrm {rank}(\mathrm {Ext}(S, i))+1$.
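For finite trees the recursion can be run directly. The following is a minimal sketch under stated assumptions: trees are prefix-closed sets of tuples, $\mathrm {Ext}(S, i)$ is taken to be the set of strings y with i followed by y in S, the formula is read as the supremum of the ranks of the subtrees plus one, and the supremum over no subtrees is 0. The function names are hypothetical.

```python
def ext(tree, x):
    """Assumed reading of Ext(S, x): all y such that x followed by y is in S."""
    x = tuple(x)
    return {node[len(x):] for node in tree if node[:len(x)] == x}


def rank(tree):
    """Rank of a finite tree, following the recursion on immediate subtrees."""
    if not tree:
        return 0
    # The strings of length one in the tree index the immediate subtrees.
    first_symbols = {node[0] for node in tree if node}
    # max(..., default=0) plays the role of sup, with sup of nothing equal to 0.
    return max((rank(ext(tree, (i,))) for i in first_symbols), default=0) + 1


root_only = {()}
chain = {(), (0,), (0, 1)}
branching = {(), (0,), (1,), (1, 0)}

print(rank(set()), rank(root_only), rank(chain), rank(branching))
```

On finite trees this rank is one more than the length of the longest string in the tree; the transfinite case differs only in that the supremum may be a limit ordinal.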

As it turns out, this function $\mathrm {rank}$ is in fact $L_{\omega _1^{{CK}}}$-partial computable, i.e., its graph is $L_{\omega _1^{{CK}}}$-c.e. To help the reader feel more familiar with computability on $L_{\omega _1^{{CK}}}$ we include a sketch of the proof of this fact.

For a tree $T\in L_{\omega _1^{{CK}}}$ and function $f\in L_{\omega _1^{{CK}}}$ we say that f is a rank function on T if $\mathrm {dom}(f)=T$, $\mathrm {range}(f)\subseteq {\omega _1^{{CK}}}$, for each leaf $x\in T$ we have $f(x)=1$ and for each non-leaf $y\in T$ we have that $f(y)=\sup _{y^\frown i\in T} f(y^\frown i)+1$. Since the quantifiers are all bounded it is $L_{\omega _1^{{CK}}}$-computable to check if f is a rank function on T. If f is a rank function on T then f is unique and $f(\emptyset )=\mathrm {rank}(T)$. So we can define $\mathrm {rank}$ by $\mathrm {rank}(T) =\alpha $ if there is a rank function f on T such that $f(\emptyset )=\alpha $, or $\alpha =0$ and $T=\emptyset $. So we now have a $\Sigma _1$ definition of $\mathrm {rank}$. The only problem is that its domain may not consist of all well founded trees $T\in L_{\omega _1^{{CK}}}$.
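The point that checking a candidate rank function only needs bounded quantifiers can be seen on finite trees. The sketch below is an illustration, not the construction in $L_{\omega _1^{{CK}}}$: trees are prefix-closed sets of tuples, ordinals are replaced by natural numbers, and the non-leaf clause is assumed to be that f(y) is the supremum of f over the children of y, plus one. All names are hypothetical.

```python
def children(tree, node):
    """One-step extensions of `node` that lie in the tree."""
    return [m for m in tree if len(m) == len(node) + 1 and m[:len(node)] == node]


def is_rank_function(f, tree):
    """Check the local conditions: domain, value 1 at leaves, sup plus 1 elsewhere."""
    if set(f) != set(tree):
        return False
    for node in tree:
        kids = children(tree, node)
        expected = 1 if not kids else max(f[k] for k in kids) + 1
        if f[node] != expected:
            return False
    return True


def build_rank_function(tree):
    """Fill in values from the longest strings downward to the root."""
    f = {}
    for node in sorted(tree, key=len, reverse=True):
        kids = children(tree, node)
        f[node] = 1 if not kids else max(f[k] for k in kids) + 1
    return f


TREE = {(), (0,), (1,), (1, 0)}
F = build_rank_function(TREE)

TAMPERED = dict(F)
TAMPERED[(0,)] = 2   # a leaf must receive the value 1

print(F[()], is_rank_function(F, TREE), is_rank_function(TAMPERED, TREE))
```

Each check inspects only a node and its children, which is the finite counterpart of the bounded-quantifier verification; the value at the root then gives the rank of the tree.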

To prove that the domain of $\mathrm {rank}$ is all well founded trees in $L_{\omega _1^{{CK}}}$ we use induction on the true rank of T (i.e., T’s rank according to V, as we do not yet know that T has a rank in $L_{\omega _1^{{CK}}}$). Suppose all trees of rank less than that of T are in the domain of $\mathrm {rank}$. Then for each $i\in T$ there is a rank function $f_i$ for $\mathrm {Ext}(T, i)$. Since the map $S\mapsto f$, where f is the rank function on S, is $L_{\omega _1^{{CK}}}$-c.e., $\Sigma _1$-collection tells us that the map $i\mapsto f_i$ is in $L_{\omega _1^{{CK}}}$. So we can build a rank function $f\in L_{\omega _1^{{CK}}}$ on T by $f(i^\frown \sigma )=f_i(\sigma )$ and $f(\emptyset )=\sup _{i\in T} f_i(\emptyset )+1$.

One nice result of this is that if a tree $T\in L_{\omega _1^{{CK}}}$ is well founded, then it has rank $<{\omega _1^{{CK}}}$ and the set of all well founded trees in $L_{\omega _1^{{CK}}}$ is $L_{\omega _1^{{CK}}}$-c.e. This could also be seen by observing that trees in $L_{\omega _1^{{CK}}}$ are $\Delta _0$ definable and so hyperarithmetic.

3 A uniformly e-pointed tree in $\omega ^\omega $ without dead ends that is not of hypertotal degree

In this section we prove the following theorem.

Theorem 3.1. There is a uniformly e-pointed tree $T^{\mathcal {G}}\subseteq \omega ^{<\omega }$ with no dead ends such that $T^{\mathcal {G}}$ is not hypertotal.

3.1 The forcing partial order

We will be using a similar forcing to the one used in [Reference Le Goh, Jacobsen-Grocott, Miller and Soskova4] to construct a uniformly e-pointed tree that is not of introenumerable degree.

Let $\{T_\sigma :\sigma \in \omega ^{<\omega }\}$ be an effective listing of all finite trees in $\omega ^{<\omega }$ where for each $\sigma \in \omega ^{<\omega }$ the sequence $(T_{\sigma ^\frown n})_{n\in \omega }$ lists each finite tree that contains $T_\sigma $ infinitely often. We will define the reduction $\Psi $ by which our tree will be e-pointed as $\Psi (p)= \bigcup _{n\in \omega } T_{p{\restriction } n}$.

We define a condition to be some $p=(T^p,L^p:T^p\times T^p\rightarrow {\omega _1^{{CK}}})\in L_{\omega _1^{{CK}}}$ where the following hold:

1. $T^p\subseteq \omega ^{<\omega }$ is a well founded tree.

2. For each $\sigma \in T^p$ we have that $ T_\sigma \subseteq T^p$.

3. For each $\sigma ,\tau \in T^p$ we have that $L^p(\sigma ,\tau )=0$ if and only if $\sigma \in T_\tau $.

4. If $\sigma \in T^p$ and $\rho \prec \tau \in T^p$ then $L^p(\sigma ,\tau )=0$ or $L^p(\sigma ,\tau )<L^p(\sigma ,\rho )$.

5. For each $\tau \in T^p$ and $n<\omega $ the set $\{\sigma : L^p(\sigma ,\tau )\le n\}$ is finite.

Rules 1 and 2 are there to ensure that our conditions have not defined the full tree. The purpose of L is to ensure that each node is eventually enumerated along each path. We can think of $L^p(\sigma ,\tau )=\alpha $ as promising that within $\alpha $ many steps along an extension of $\tau $ we will enumerate $\sigma $.
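To make rules 2 to 4 concrete, here is a small finite sketch. It is a toy under loud assumptions: the tree is finite (so rules 1 and 5 hold automatically), the labels are natural numbers rather than ordinals below ${\omega _1^{{CK}}}$, and the listing of finite trees is replaced by a hand-written dictionary `LISTING` that is not the effective listing used in the article. All names are hypothetical.

```python
def proper_prefixes(node):
    """All proper initial segments of a string, including the empty string."""
    return [node[:k] for k in range(len(node))]


def check_condition(tree, label, listing):
    """Check rules 2, 3 and 4 for a finite tree with natural-number labels."""
    for sigma in tree:
        if not listing[sigma] <= tree:                       # rule 2
            return False
    for sigma in tree:
        for tau in tree:
            value = label[(sigma, tau)]
            if (value == 0) != (sigma in listing[tau]):      # rule 3
                return False
            for rho in proper_prefixes(tau):                 # rule 4
                if value != 0 and not value < label[(sigma, rho)]:
                    return False
    return True


# Toy data: a chain of three nodes, where the tree listed at a node is the
# chain up to that node.
TREE = {(), (0,), (0, 0)}
LISTING = {
    (): {()},
    (0,): {(), (0,)},
    (0, 0): {(), (0,), (0, 0)},
}
# The label counts how many more steps are needed before sigma is enumerated.
LABEL = {(s, t): max(0, len(s) - len(t)) for s in TREE for t in TREE}

BROKEN = dict(LABEL)
BROKEN[((0, 0), (0,))] = 2   # no longer decreases when moving from () to (0,)

print(check_condition(TREE, LABEL, LISTING), check_condition(TREE, BROKEN, LISTING))
```

In the toy data the label strictly decreases along the chain until it reaches 0, which matches the reading of the label as a countdown to enumeration. The broken variant keeps the same value at two comparable nodes and so fails rule 4.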

For two conditions p and q we say $p\le q$ if $T^q\preceq T^p$ and $L^q\subseteq L^p$. For a filter $\mathcal {G}$ we define $T^{\mathcal {G}}=\bigcup _{p\in \mathcal {G}}T^p$. The fact that we must have $T^q\preceq T^p$ means that if $p\in \mathcal {G}$, $\sigma $ is not a leaf in $T^p$ and ![]() then ![]() . So we have a way of forcing strings into the complement of $T^{\mathcal {G}}$.

Proposition 3.2. The set of conditions is $L_{\omega _1^{{CK}}}$-c.e. and the relation $\le $ on conditions is $L_{\omega _1^{{CK}}}$-computable.

Proof. Properties 2–5 are all straightforwardly $\Delta _1$ conditions. To check if a tree T is well founded we ask if there is a rank function $f\in L$ such that $f(\sigma )=\mathrm {rank}(\mathrm {Ext}(T, \sigma ))$, so this is a $\Sigma _1$ question. So property 1 is a $\Sigma _1$ condition. Hence the set of valid conditions is $L_{\omega _1^{{CK}}}$-c.e. The relation $q\le p$ is clearly $\Delta _0$ so $\le $ is an $L_{\omega _1^{{CK}}}$-computable relation with $L_{\omega _1^{{CK}}}$-c.e. domain.
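The role of the rank function as a witness to well-foundedness can be seen on finite trees. The sketch below is illustrative only and rests on stated assumptions: $\mathrm {Ext}(T,\sigma )$ is taken to be $\{\tau : \sigma ^\frown \tau \in T\}$, the empty tree has rank 0, and a nonempty tree has rank $\sup _i (\mathrm {rank}(\mathrm {Ext}(T,\langle i\rangle ))+1)$. The names `ext`, `rank` and `rank_function` are hypothetical, and finite trees only produce finite ranks, whereas the proof concerns ordinal ranks below $\omega _1^{{CK}}$.

```python
from typing import Dict, Set, Tuple

Node = Tuple[int, ...]  # a finite string of natural numbers


def ext(tree: Set[Node], sigma: Node) -> Set[Node]:
    """Assumed Ext(T, sigma): the strings tau with sigma^tau in T."""
    n = len(sigma)
    return {t[n:] for t in tree if t[:n] == sigma}


def rank(tree: Set[Node]) -> int:
    """Rank of a finite tree under the assumed convention:
    rank(empty) = 0, otherwise 1 + the largest rank of a subtree
    above a one-element string (1 if there is no such subtree)."""
    if not tree:
        return 0
    children = {t[0] for t in tree if t}
    return 1 + max((rank(ext(tree, (i,))) for i in children), default=0)


def rank_function(tree: Set[Node]) -> Dict[Node, int]:
    """The map sigma -> rank(Ext(T, sigma)) on the nodes of T."""
    return {sigma: rank(ext(tree, sigma)) for sigma in tree}


tree = {(), (0,), (1,), (0, 0)}
f = rank_function(tree)
print(f[()], f[(0,)], f[(1,)], f[(0, 0)])  # 3 2 1 1
print(rank(ext(tree, (2,))))  # 0, since Ext(T, (2,)) is empty
```

For a finite tree the dictionary `f` is a finite object that certifies well-foundedness, which mirrors why asking for the existence of such an f is an existential ($\Sigma _1$) question.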

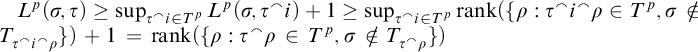

Proposition 3.3. For a condition p we have ![]() for all $\sigma ,\tau \in T^p$.

Proof. We will use induction on $L^p(\sigma ,\tau )$. Base case: $L^p(\sigma ,\tau )=0$. Then $\sigma \in T_\tau $ so ![]() and $\mathrm {rank}(\emptyset )=0$. Now suppose the proposition holds for all $\beta <\alpha $ and $L^p(\sigma ,\tau )=\alpha $. Then for each ![]() we have ![]() by the induction hypothesis. By property 4 and the definition of $\mathrm {rank}$ we have ![]() .

In order for this forcing notion to have nontrivial generics we need a way to extend conditions. Fix a condition p. Let $A\subseteq \omega ^{<\omega }$ be a set such that for all ![]() we have $\sigma \in T^p$ and ![]() . For such an A we can define $q=p[A]$ by $T^{q}=T^p\cup A$ and $L^{q}$ given by

Lemma 3.4. If A meets the requirement of the definition then $p[A]$ is a valid condition. If we also have that $T^p\preceq T^p\cup A$ then $p[A]\le p$.

Proof. We show that $q=p[A]$ is well defined. Our requirement for A ensures that 1 and 2 hold. For 3–5, since $L^p=L^q\restriction T^p\times T^p$ the only way we can run into a problem is when considering ![]() . If $\rho \prec \tau \in T^q$ then by definition ![]() . If ![]() then $L^p(\tau ,\rho )\ge L^p(\tau , \sigma )$. If $0<L^p(\tau , {\sigma })<\omega $ then ![]() . If $L^p(\tau , {\sigma })\ge \omega $ then ![]() . So 4 holds.

Fix n and $\tau $ and consider the set $\{\rho : L^q(\rho ,\tau )\le n\}$. If $\tau \in T^p$ then we have added at most n many elements to the set, so it is still finite. If ![]() and $\rho $ is in this set then either $\rho $ belongs to the finite set $\{\rho : L^p(\rho ,{\sigma })\le n+1\}$ or $\langle \rho \rangle \le n$. So there are only finitely many $\rho $ that can be in $\{\rho : L^q(\rho ,\tau )\le n\}$. So 5 holds.

Now consider the set $\{\rho : L^q(\rho ,\tau )=0\}$. If $\tau \in T^p$ then $L^q({\sigma },\tau )\ge 1$ for each $\sigma \in A$, so we have $\{\rho : L^q(\rho ,\tau )=0\}=\{\rho : L^p(\rho ,\tau )=0\}=T_\tau $. If $\tau \in A$ then by definition of $L^q$ we have $\rho \in T_{\tau }$ if and only if $L^q(\rho ,{\tau })=0$. So 3 holds.

Since $L^p\subseteq L^q$, if $T^p\preceq T^p\cup A=T^q$ then $p[A]\le p$.

Corollary 3.5. If $\mathcal {G}$ is a sufficiently generic filter then $T^{\mathcal {G}}$ is a uniformly e-pointed tree with no dead ends.

Proof. First we show that for each condition p and $\sigma \in T^p$ the set $\{q\le p: \sigma \text { is not a dead end}\}$ is dense below p. If $\sigma $ is a dead end in $T^p$ then the enumeration of $(T_\sigma )_{\sigma \in \omega ^{<\omega }}$ gives us an i such that ![]() . Thus we can take ![]() where $\sigma $ is no longer a dead end. So $T^{\mathcal {G}}$ does not have any dead ends.

To show that $T^{\mathcal {G}}$ is uniformly e-pointed consider some path $P\in [T^{\mathcal {G}}]$. We will show that $T^{\mathcal {G}}=\bigcup _{\sigma \prec P}T_\sigma $. If $\sigma \in T^{\mathcal {G}}$ then $\sigma \in T^p$ for some $p\in \mathcal {G}$. So by property 2 we have that $T_\sigma \subseteq T^p\subseteq T^{\mathcal {G}}$. On the other hand if $\sigma \in T^{\mathcal {G}}$ then consider a sequence $p_0>p_1>\dots $ of conditions in $\mathcal {G}$ with $P\restriction n\in T^{p_n}$. Now consider the sequence $(L^{p_n}(\sigma ,P\restriction n))_{n\in \omega }$. Since $L^{p_n}\subseteq L^{p_{n+1}}$, property 4 means that this is a decreasing sequence. Since ${\omega _1^{{CK}}}$ is a well order there is n such that $L^{p_n}(\sigma ,P\restriction n)=0$. So we have that $\sigma \in T_{P\restriction n}$. Hence $T^{\mathcal {G}}=\bigcup _{\sigma \prec P}T_\sigma $.

3.2 The forcing relation

Now that we have a forcing partial order and some useful operations on conditions, we will talk about forcing with conditions. We define $S_e^p\subseteq \omega ^{<\omega }$ to be the tree where $x\notin S_e^p\iff \exists y\prec x [y\in \Psi _e(T^p)]$. For a filter $\mathcal {G}$ we define $S_e^{\mathcal {G}}=\bigcap _{p\in \mathcal {G}} S_e^p$. So $x\notin S_e^{\mathcal {G}}\iff \exists y\prec x [y\in \Psi _e(T^{\mathcal {G}})]$. By definition of $\Gamma _e$ we have that $\Gamma _e(T^{\mathcal {G}})=\{n: \mathrm {Ext}(S_{e}^{\mathcal {G}}, n) \text { is well founded}\}$.
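The dependence of $S_e^p$ on $T^p$ can be sketched on finite data. The Python fragment below is a hedged illustration under stated assumptions: an enumeration operator is modelled by a finite set of axioms $(x, D)$ with D a finite set, strings are tuples rather than numeric codes, $y\prec x$ is read as "y is a proper initial segment of x", and only a finite set of candidate strings is inspected. The names `apply_operator` and `s_tree` are hypothetical.

```python
from itertools import product
from typing import FrozenSet, Set, Tuple

Node = Tuple[int, ...]  # a finite string of natural numbers
Axiom = Tuple[Node, FrozenSet[Node]]  # (output x, finite set D)


def apply_operator(axioms: Set[Axiom], oracle: Set[Node]) -> Set[Node]:
    """Finite stand-in for Psi_e(oracle): all x with an axiom (x, D), D ⊆ oracle."""
    return {x for (x, finite_set) in axioms if finite_set <= oracle}


def s_tree(axioms: Set[Axiom], oracle: Set[Node], candidates: Set[Node]) -> Set[Node]:
    """Candidates x with no proper initial segment in Psi_e(oracle)."""
    killed = apply_operator(axioms, oracle)
    return {
        x
        for x in candidates
        if not any(x[:k] in killed for k in range(len(x)))
    }


# All binary strings of length at most 2.
candidates = {s for n in range(3) for s in product((0, 1), repeat=n)}
# One axiom: output the string (0,) once (1,) is in the oracle.
axioms = {((0,), frozenset({(1,)}))}

small = s_tree(axioms, {()}, candidates)
large = s_tree(axioms, {(), (1,)}, candidates)
print(len(small), len(large), large <= small)  # 7 5 True
```

Because the operator only uses positive information, enlarging the oracle can only remove strings from the resulting tree. This is the monotonicity that makes $S_e^{\mathcal {G}}$ an intersection of the trees $S_e^p$.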

We define $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha $ if $\mathrm {rank}(\mathrm {Ext}(S_e^p,x))\le \alpha $. From this definition it is clear that if $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha $ then for any $\mathcal {G}\ni p$ we have that $\mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha $. We now work towards proving the opposite direction.

Lemma 3.6. Fix a condition p. Suppose that for each $i\in \omega ,r\le p$ there is $q\le r$ such that ![]() for some $\beta < {\omega _1^{{CK}}}$. Then there are $\hat {p}\le p$ and $\alpha <{\omega _1^{{CK}}}$ such that $\hat {p}\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha $.

Proof. The function $(q,e)\mapsto S_e^q$ is $L_{\omega _1^{{CK}}}$-partial computable so by composition, the map $(q,e,x)\mapsto \mathrm {rank}(\mathrm {Ext}(S_e^q, x))$ is also $L_{\omega _1^{{CK}}}$-partial computable. So the set

is $L_{\omega _1^{{CK}}}$-c.e.

For each i we will define a condition $r_i$ as follows. For each leaf $\sigma \in T^p$ let $k_\sigma $ be the ith number such that ![]() . Now we define ![]() and define $r_i=p[A_i]$. The definition of $r_i$ only involves computable operations so the map $i\mapsto r_i$ is $L_{\omega _1^{{CK}}}$-computable and since $ \omega \in L_{\omega _1^{{CK}}}$ the set $\{(i,r_i)\}\in L_{\omega _1^{{CK}}}$ by $\Sigma _1$-collection. Using $\Sigma _1$-collection again, this time with the set C, we get that there is a function $f\in L_{\omega _1^{{CK}}}$ such that $f(i) =(q_i,\beta _i)$ for some $q_i\le r_i$ and $\beta _i$ such that ![]() . Let $\alpha =\sup _i\{\beta _i:i\in \omega \}$. Since $f\in L_{\omega _1^{{CK}}}$, $\alpha <{\omega _1^{{CK}}}$.

To build $\hat {p}$ let $T^{\hat {p}}=\bigcup _{i\in \omega } T^{q_i}$. Since $f\in L_{\omega _1^{{CK}}}$ we have that $T^{\hat {p}}\in L_{\omega _1^{{CK}}}$. The tree $T^{\hat {p}}$ will satisfy property 1 because the sets $T^{q_i}\setminus T^p$ are disjoint and so $T^{\hat {p}}$ is well founded.

We define $L^{\hat {p}}$ using the following tools. For $\tau \in T^{\hat {p}}$ let $\tau _p$ be the longest initial segment of $\tau $ that is in $T^p$. For $\sigma ,\tau \in T^{\hat {p}}$ let ![]() . Note that both of these operations are $L_{\omega _1^{{CK}}}$-computable. Define

$$\begin{align*}L^{\hat{p}}(\sigma,\tau)= \begin{cases} L^{p}(\sigma,\tau) & \sigma,\tau\in T^p\\ 0 & \sigma \in T_\tau\\ L^p(\sigma,\tau_p)- |\tau|+|\tau_p| & \sigma\in T^p\setminus T_\tau,\tau\notin T^p, L^p(\sigma,\tau_p)<\omega\\ \langle \sigma\rangle + \mathrm{rank}(\sigma,\tau) &\text{otherwise.} \end{cases} \end{align*}$$

Now we prove that $\hat {p}$ is a valid condition. Since it is built in an effective way out of $L_{\omega _1^{{CK}}}$-computable functions, $L^{\hat {p}}$ is $L_{\omega _1^{{CK}}}$-computable. Since $\mathrm {dom}(L^{\hat {p}})\in L_{\omega _1^{{CK}}}$ we have that $L^{\hat {p}}\in L_{\omega _1^{{CK}}}$. So we have that $\hat {p}\in L_{\omega _1^{{CK}}}$.

Now we show that $\hat {p}$ has the properties of a condition. Property 2 is straightforward. Property 3 follows from the definition of $L^{\hat {p}}$ and the fact that it held for each ${q_i}$.

For property 4 consider $\sigma ,\rho \prec \tau $, and suppose that $L^{\hat {p}}(\sigma ,\rho )>0$. We look at several cases:

- $\sigma ,\tau \in T^p$. Then $\rho \in T^p$ so by 4 for p we have $L^{\hat {p}}(\sigma ,\tau )=L^p(\sigma ,\tau )<L^p(\sigma ,\rho )=L^{\hat {p}}(\sigma ,\rho )$.
- $\sigma \in T_\tau $. Then $L^{\hat {p}}(\sigma ,\tau )=0<L^{\hat {p}}(\sigma ,\rho )$.
- $\sigma \in T^p\setminus T_\tau ,\tau \notin T^p, L^p(\sigma ,\tau _p)<\omega $. We have two subcases: if $\rho \notin T^p$ then $\tau _p=\rho _p$ so $L^{\hat {p}}(\sigma ,\tau )= L^p(\sigma ,\tau _p)- |\tau |+|\tau _p|< L^p(\sigma ,\tau _p)- |\rho |+|\rho _p| =L^{\hat {p}}(\sigma ,\rho )$. If $\rho \in T^p$ then $\rho \preceq \tau _p$ so $L^{\hat {p}}(\sigma ,\tau )= L^p(\sigma ,\tau _p)- |\tau |+|\tau _p|< L^p(\sigma ,\tau _p)\le L^p(\sigma ,\rho ) =L^{\hat {p}}(\sigma ,\rho )$.
- Otherwise $L^{\hat {p}}(\sigma ,\tau )= \langle \sigma \rangle + \mathrm {rank}(\sigma ,\tau )$. If $\rho \notin T^p$ or $\sigma \notin T^p$ then $L^{\hat {p}}(\sigma ,\rho )=\langle \sigma \rangle + \mathrm {rank}(\sigma ,\rho )>\langle \sigma \rangle + \mathrm {rank}(\sigma ,\tau )$ as $\rho \prec \tau $. If $\rho ,\sigma \in T^p$ then consider i such that $\tau \in T^{q_i}$. By Proposition 3.3 we have that $\mathrm {rank}(\sigma ,\tau )\le L^{q_i}(\sigma ,\tau )<L^{q_i}(\sigma , \rho )=L^p(\sigma ,\rho )=L^{\hat {p}}(\sigma ,\rho )$.

For property 5 fix $\tau $ and n. Suppose that $L^{\hat {p}}(\sigma ,\tau )\le n$. Then one of the following is true: $L^p(\sigma ,\tau )\le n$ or $\sigma \in T_\tau $ or $L^p(\sigma ,\tau _p)-|\tau |+|\tau _p|\le n$ or $\langle \sigma \rangle +\mathrm {rank}(\sigma ,\tau ) \le n$. So $\sigma $ is a member of the finite set $\{\sigma : L^p(\sigma ,\tau )\le n\}\cup T_\tau \cup \{\sigma : L^p(\sigma ,\tau _p)\le n +|\tau |\}\cup \{\sigma : \langle \sigma \rangle \le n\}$. Hence the set $\{\sigma : L^{\hat {p}}(\sigma ,\tau )\le n\}$ is finite.

So we have shown that $\hat {p}$ is a valid condition. Since $T^p\preceq T^{\hat {p}}$ and $L^p\subseteq L^{\hat {p}}$ we have $\hat {p}\le p$. Consider $\mathrm {Ext}(S_e^{\hat {p}}, x)$. By definition of $T^{\hat {p}}$ we have that $S_e^{\hat {p}}\subseteq S_e^{q_i}$ for each $i\in \omega $, so ![]() . Thus we have $\mathrm {rank}(\mathrm {Ext}(S_e^{\hat {p}}, x))\le \alpha $ as desired.

Now we use this lemma to show that if a condition p cannot be extended to some q that forces $S_e^{\mathcal {G}}$ to have computable rank then p in fact forces $S_e^{\mathcal {G}}$ to be ill founded. We say that $p\Vdash \mathrm {Ext}(S^{\mathcal {G}}_e, x)$ is ill founded if for all sufficiently generic filters $\mathcal {G}\ni p$ we have that $\mathrm {Ext}(S_e^{\mathcal {G}}, x)$ contains an infinite path.

Lemma 3.7. If for all $ q\le p$ and $\alpha <{\omega _1^{{CK}}}$ we have $q\nVdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha $ then $p\Vdash \mathrm {Ext}(S^{\mathcal {G}}_e, x)$ is ill founded.

Proof. We define $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))=\infty $ if $\forall q\le p, \alpha <{\omega _1^{{CK}}} [q\nVdash \mathrm {rank} (\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha ]$. To prove this lemma, we first prove the simpler statement: if $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))=\infty $ then there is $q\le p,i\in \omega $ such that ![]() .

Suppose this statement fails for some p and x. Then $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))=\infty $, so we have that $\forall q\le p, \alpha <{\omega _1^{{CK}}} [q\nVdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha ]$, and there is no $q\le p, i\in \omega $ such that ![]() so we have ![]() . So by Lemma 3.6 there is $\hat {p}\le p$ such that $\hat {p}\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))\le \alpha $. This contradicts the fact that $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}},x))=\infty $, so the statement holds.

Now we use this to prove the lemma. Since $\{q:q\le p\}\supseteq \{q:q\le r\}$ for $r\le p$, we have that if $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))=\infty $ then $r\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))=\infty $ for all $r\le p$. So if $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))=\infty $ then the set ![]() is dense below p. So if $p\in \mathcal {G}$ for some sufficiently generic $\mathcal {G}$ then there is $q\in \mathcal {G}$ and $i\in \omega $ such that ![]() . By repeating this argument we can build a sequence $X\in \omega ^\omega $ such that for all $y\prec X$ there is $q\in \mathcal {G}$ such that $q\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, y))=\infty $. We have that $X\in S_e^{\mathcal {G}}$ as otherwise there would be some $r\in \mathcal {G}$ and $y\prec X$ such that $r\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, y))=0$, a contradiction of $\mathcal {G}$ being a filter and $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, x))=\infty $.

Now we have all the tools needed to prove the main result of this section.

Theorem 3.1. There is a uniformly e-pointed tree $T^{\mathcal {G}}\subseteq \omega ^{<\omega }$ with no dead ends such that $T^{\mathcal {G}}$ is not hypertotal.

Proof. We show that for a sufficiently generic $\mathcal {G}$ we have that $T^{\mathcal {G}}$ is not hypertotal. We say $p\Vdash \overline {T^{\mathcal {G}}}\ne \Gamma _e(T^{\mathcal {G}})$ if there is $\sigma \in T^p$ and $\alpha <{\omega _1^{{CK}}}$ such that $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, \langle \sigma \rangle ))\le \alpha $, or if there is $\sigma \notin T^p$ such that the longest initial segment of $\sigma $ in $T^p$ is not a leaf and $p\Vdash \mathrm {Ext}(S_e^{\mathcal {G}}, \langle \sigma \rangle )$ is ill founded. To show that $T^{\mathcal {G}}$ is not hypertotal it is enough for us to show that the sets $\{p: p\Vdash \overline {T^{\mathcal {G}}}\ne \Gamma _e(T^{\mathcal {G}})\}$ are dense for each e. To see this consider the two cases. If $p\in \mathcal {G}$ and there is $\sigma \in T^p$ and $\alpha <{\omega _1^{{CK}}}$ such that $p\Vdash \mathrm {rank}(\mathrm {Ext}(S_e^{\mathcal {G}}, \langle \sigma \rangle ))\le \alpha $ then we have that $\mathrm {Ext}(S_e^p, \langle \sigma \rangle )$ is well founded and so $\mathrm {Ext}(S_e^{\mathcal {G}}, \langle \sigma \rangle )\subseteq \mathrm {Ext}(S_e^p, \langle \sigma \rangle )$ is also well founded, so $\sigma \in T^{\mathcal {G}}\cap \Gamma _e(T^{\mathcal {G}})$. On the other hand if there is $\sigma \notin T^p$ such that the longest initial segment of $\sigma $ in $T^p$ is not a leaf and $p\Vdash \mathrm {Ext}(S_e^{\mathcal {G}}, \langle \sigma \rangle )$ is ill founded, then by definition $p\in \mathcal {G}$ means that $\mathrm {Ext}(S_e^{\mathcal {G}}, \langle \sigma \rangle )$ is ill founded, so $\sigma \notin \Gamma _e(T^{\mathcal {G}})$. Since the longest initial segment of $\sigma $ in $T^p$ is not a leaf, no $q\le p$ has $\sigma \in T^q$ so $\sigma \notin T^{\mathcal {G}}$.

Suppose towards a contradiction that $\{p: p\Vdash \overline {T^{\mathcal {G}}}\ne \Gamma _e(T^{\mathcal {G}})\}$ is not dense. Let p be such that for all $q\le p$ we have $q\nVdash \overline {T^{\mathcal {G}}}\ne \Gamma _e(T^{\mathcal {G}})$. Consider some leaf $\sigma \in T^p$ and let $i,j$ be such that ![]() . Now consider ![]() ; this is well defined by Lemma 3.4. By assumption on p we have that ![]() is ill founded, so by Lemma 3.7 there is $r\le q,\alpha <{\omega _1^{{CK}}}$ such that ![]() . Now consider ![]() . Since ![]() we have ![]() and thus the condition $r'$ is a valid condition. Since $r\le p$ and $\sigma $ is a leaf in $T^p$ we have that $r'\le p$. But we have $S_e^r\supseteq S_e^{r'}$ so ![]() a contradiction. So we have that the set $\{p: p\Vdash \overline {T^{\mathcal {G}}}\ne \Gamma _e(T^{\mathcal {G}})\}$ is dense.

So for sufficiently generic $\mathcal {G}$ we have that $T^{\mathcal {G}}$ is uniformly e-pointed without dead ends and for all e we have $\overline {T^{\mathcal {G}}}\ne \Gamma _e(T^{\mathcal {G}})$, and thus $\overline {T^{\mathcal {G}}}\nleq _{he} T^{\mathcal {G}}$.

This now allows us to conclude the following.

Corollary 3.8. There are sets $A,B$ such that $B\nleq _{he} A$ and for any X, if $A\le _{he}X\oplus \overline {X}$ then $B\le _{he} X\oplus \overline {X}$.

Proof. We will have $A=T$ and $B=\overline {T}$ where T is a uniformly e-pointed tree with no dead ends that is not hypertotal. Suppose that T is $\Pi ^1_1$ in X. Since T has no dead ends, there must be a path $P\in [T]$ such that $P\le _h X$. So $T\le _e P$ and by Lemma 2.3 we have $\overline {T}\le _{he} \overline {P}\le _h X$. So we get that $\overline {T}\le _{he}X\oplus \overline {X}$.
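The chain of reductions used in this proof can be laid out line by line. The display below is only a sketch: it assumes, as the proof implicitly does, that $\le _{he}$ is transitive and that $\overline {P}\le _h X$ yields $\overline {P}\le _{he} X\oplus \overline {X}$. The remaining requirement $B\nleq _{he} A$ is the assumption that T is not hypertotal, read here as $\overline {T}\nleq _{he} T$.

```latex
\begin{align*}
  & T \le_e P
    && \text{($T$ is uniformly e-pointed and $P\in[T]$)}\\
  \Longrightarrow\ & \overline{T} \le_{he} \overline{P}
    && \text{(Lemma 2.3)}\\
  & \overline{P} \le_{he} X\oplus\overline{X}
    && \text{(since $\overline{P}\le_h X$)}\\
  \Longrightarrow\ & \overline{T} \le_{he} X\oplus\overline{X}
    && \text{(transitivity of $\le_{he}$)}
\end{align*}
```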

4 Downwards density

In this section we prove that the hyperenumeration degrees are downwards dense. The first part involves lifting the finite injury construction of the Gutteridge operator to a construction in $L_{\omega _1^{{CK}}}$.

4.1 The hyper Gutteridge operator

Gutteridge [5] proved the downwards density of the non-$\Delta ^0_2$ enumeration degrees using an operator $\Theta $ with the properties that if $\Psi _e(\Theta (A))=A$ then A is c.e. and if $\Theta (A)$ is c.e. then A is $\Delta ^0_2$. Here we will take Gutteridge’s construction and run it in $L_{\omega _1^{{CK}}}$ to produce a hyperenumeration operator $\Lambda $ with similar properties. Thus we get the following result.

Theorem 4.1. If $A\subseteq \omega $ and $A\nleq _{he} \overline {\mathcal {O}}$ then there is $C\subseteq \omega $ such that $\emptyset <_{he} C<_{he} A$.

Proof. Recall the definition of $\Theta $: there is a c.e. set $B=\bigoplus _{k\in \omega }n_k$ which is the join of

![]() $\omega $