Introduction

Embedded pragmatic clinical trials (ePCTs) are studies that evaluate interventions under real-world conditions, testing not only effectiveness but whether they can be implemented and sustained within existing systems [Reference Harrison1,Reference Tambor, Moloney and Greene2]. These trials depend on effective partnerships between researchers and healthcare providers to ensure that interventions are feasible, acceptable and aligned with health system priorities [Reference Harrison1,Reference McCreedy, Olson and Baier3] Yet engagement is often informal, without structured approaches to assess context or strengthen alignment before implementation.

The readiness assessment for pragmatic trials (RAPT) model was developed to help research teams evaluate the extent to which an intervention is prepared for pragmatic testing [Reference Baier, Jutkowitz, Mitchell, McCreedy and Mor4]. RAPT includes nine domains spanning evidence, implementation protocol, risk, feasibility, alignment, cost, measurement, acceptability, and potential impact. Although typically used as a research self-assessment, RAPT can also provide a shared framework for researchers and partners to examine feasibility, surface barriers, and identify priorities for refinement before launching a trial.

The US-based methods case study demonstrates how a structured framework can help research teams to identify contextual factors that would otherwise undermine feasibility, and how readiness assessment can evolve into and support active co-design and collaboration. We describe how RAPT was applied collaboratively to engage a physician group during ePCT planning. Using RAPT during early partner engagement may offer several advantages, for example enabling structured discussion of operational context, a factor linked to implementation success in ePCTs [5], and dialog that builds trust and accountability central to learning health system research [6,Reference Reddy, McCreedy, Mitchell and Baier7].

Materials and methods

Setting and partnership context

Bluestone Physician Services provides on-site primary, behavioral, and care-management services for adults in assisted living, memory care, and group home settings across several states in the United States. Leaders from Bluestone and Brown University’s Long-Term Care Quality & Innovation (Q&I) Lab share a commitment to generating evidence that improves care for older adults.

Collaboration between the two groups began in 2016, when we explored research opportunities and Bluestone revised its patient participation agreements to ask patients to consent to the use of their clinical data for research purposes. The partnership first resulted in a project in 2021, when we conducted a researcher-initiated ePCT on advance-care planning (ClinicalTrials.gov NCT04852055), a topic that took on urgency during the early days of the COVID-19 pandemic, when the burden of infection was high in congregate care settings [8] and it was important for assisted living residents being transferred to the hospital to have documented end of life wishes. That ePCT, which predated this case study, established foundational trust and formal processes for our partnership, including regular meetings, shared documentation, and transparent decision-making. These practices aligned expectations and deepened mutual understanding of Bluestone’s workflows, terminology, and priorities, laying the groundwork for subsequent projects [Reference Hikaka, McCreedy, Jutkowitz, McCarthy and Baier9].

By 2023, Bluestone leaders were initiating their own research ideas and suggested a project to address premature hospice referrals among assisted living residents with dementia, many of whom were later discharged from hospice alive. Bluestone leaders felt that the hospice referrals often reflected unmet behavioral health or palliative symptom needs that Bluestone clinicians could manage internally. They therefore proposed an intervention focused on refining their existing multidisciplinary case review process to better identify patients earlier and align referrals with patients’ needs and goals of care. Bluestone’s existing case review process was largely retrospective, identifying patients only after high-cost hospitalizations. Leaders therefore sought to assess the feasibility of a more proactive approach.

This intervention concept was preliminary, not yet operationalized or protocolized. To ensure that a future trial would be feasible in real-world care delivery, the Brown-Bluestone team used RAPT to structure planning and discussion. The goal was to clarify processes, data needs, and organizational readiness. Because Bluestone leaders were relatively new to formal research partnerships, RAPT also served to explicitly reinforce embedded research requirements and align study design with the day-to-day realities of care delivery.

The RAPT model

RAPT was developed to help research teams systematically assess whether an intervention and its delivery system are prepared for pragmatic testing [Reference Baier, Jutkowitz, Mitchell, McCreedy and Mor4]. The model includes nine domains: evidence, implementation protocol, risk, feasibility, alignment, cost, measurement, acceptability, and potential impact. Together, these domains reflect the factors most likely to influence successful implementation of an ePCT. Each domain is typically rated along a continuum from low to high readiness and can be summarized graphically to prompt reflection and discussion.

Although designed for self-assessment, RAPT can also structure collaborative reflection with partners, helping identify contextual barriers and priorities for refinement before a trial. In this case study, RAPT served as a facilitation tool to inform early planning for a potential ePCT.

Application of RAPT

We applied the RAPT model collaboratively to support early-stage planning and qualitative readiness assessment of the proposed intervention. RAPT was used to structure facilitated discussion and reflection about feasibility, context, and alignment across the model’s nine domains. The goal was to use RAPT to focus on which aspects of the proposed approach were sufficiently developed and which required additional refinement prior to pragmatic testing.

The RAPT session occurred in May 2023, three months into a year-long planning project, after meetings to clarify the clinical problem, proposed approach, and organizational context. Prior to the session, participants had access to a written description of the proposed case review process, summary information on Bluestone’s existing workflows, and preliminary discussion of potential outcomes and data sources; no finalized protocol or cost-benefit analysis had been developed. During a recorded Zoom session, participants anonymously rated each domain, reviewed aggregated results in real time, and discussed their rationale. A Brown project director facilitated discussion but did not participate in voting.

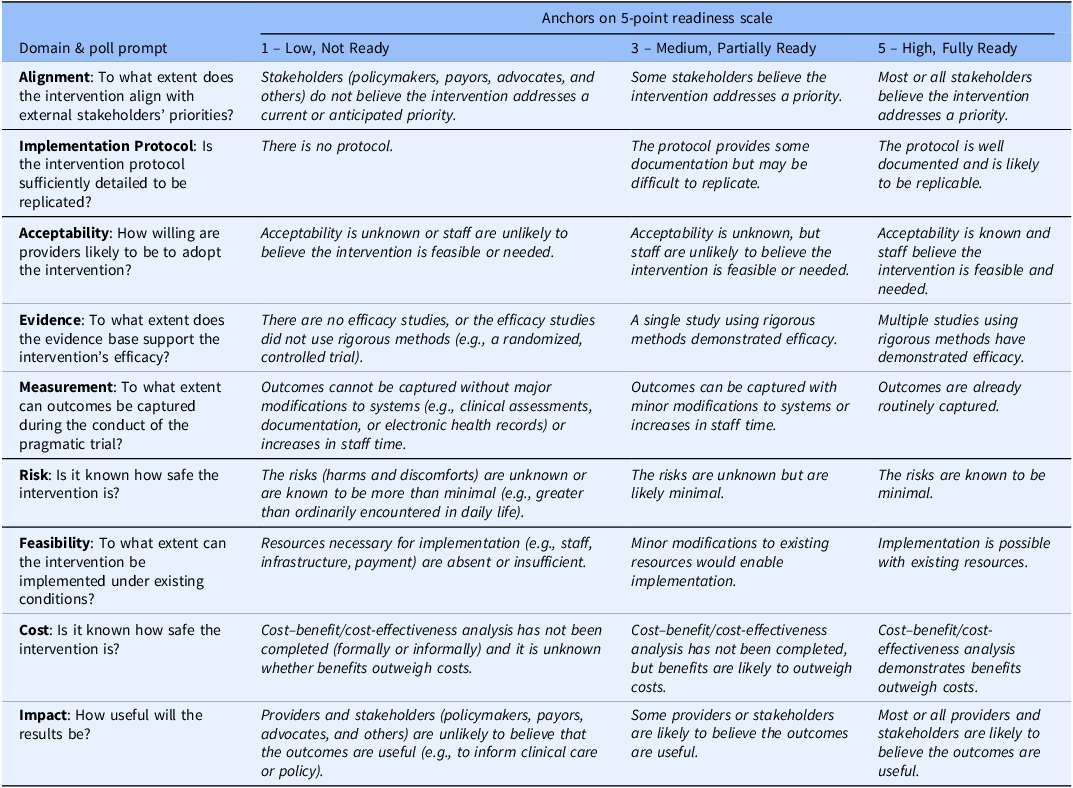

Participants rated each domain on a five-point scale anchored at low (1), moderate (3), and high readiness (5) readiness for pragmatic research, based on their individual perspective (Table 1). Ratings were entered anonymously using Zoom polling and displayed in real time, ensuring equal opportunity for input across roles and supporting open discussion. After the anonymous polling captured individual ratings, discussion was then facilitated to support shared understanding of those ratings, jointly consider factors related to feasibility, workflow fit, and data availability, and identify which domains would require additional preparatory work or clarification. Differences in perspective were addressed through discussion, rather than formal adjudication.

RAPT domain-level polling response choices

RAPT = readiness assessment for pragmatic trials.

Data sources and analysis

The RAPT session generated domain ratings, participant comments, and facilitator notes. Following the session, the research team summarized domain ratings, rationale, and illustrative quotes, then shared this summary with Bluestone partners for their review and agreement regarding interpretation and next steps. Analysis focused on domains of low or moderate readiness and related contextual factors. Findings are summarized in Table 1 to highlight participants’ reasoning and contextual insights. All materials are available on request.

Ethical considerations

This project focused on providers’ professional experiences and organizational processes to inform future research design and was not considered human subjects research; institutional review board review was not required.

Results

The May 2023 discussion included eight participants: five Bluestone leaders (members of the executive team, a data analyst, and a physician) and three Brown researchers (two faculty investigators, including a physician, and a data analyst). A Brown project director facilitated. The group assessed a proposed intervention: a risk-score algorithm designed to flag patients at high six-month mortality risk and trigger provider-led end-of-life care plan reviews, advance care planning conversations, and related care coordination actions.

The RAPT assessment and discussion clarified data and workflow needs and identified areas requiring further definition before an ePCT. Overall qualitative readiness levels reflect collective understanding following discussion, which focused on understanding the rationale for differing perspectives rather than forcing agreement or consensus.

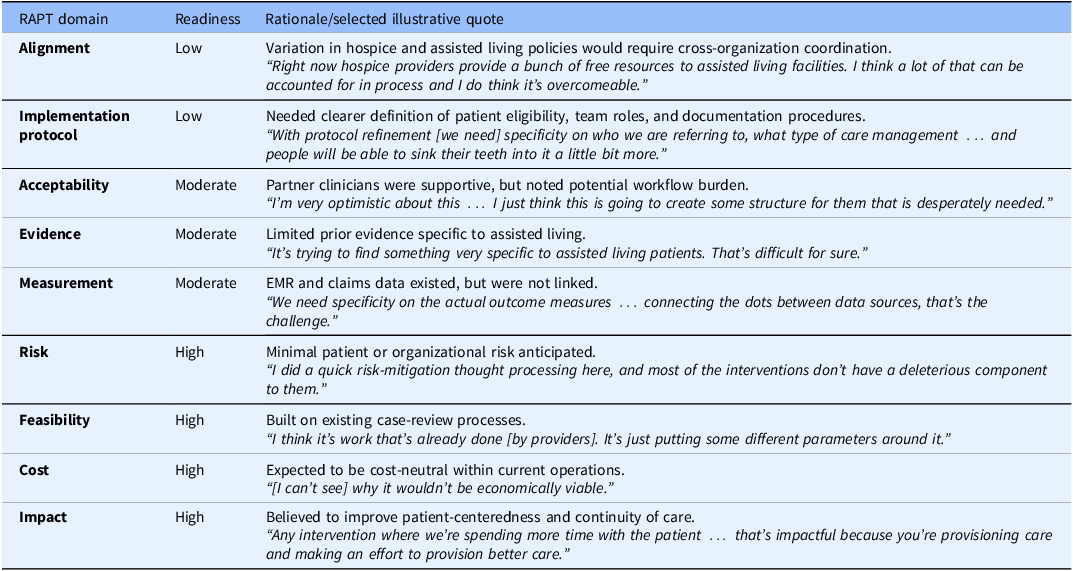

Table 2 summarizes domain-level assessments and rationale. Participants judged readiness low in two domains (Alignment and Implementation Protocol), moderate in three (Acceptability, Evidence, and Measurement), and high in four (Risk, Feasibility, Cost, and Impact). These assessments reflected Bluestone’s stage of operational planning: the proposed approach was conceptually aligned with organizational priorities, but workflow, documentation, and data linkage required further development.

RAPT domain-level readiness assessments with selected illustrative partner quotes

EMR = electronic medical record; RAPT = readiness assessment for pragmatic trials.

The Alignment domain, which focuses on fit with external stakeholders’ priorities, policies, and incentives, was assessed as low because the team recognized that improving the timing of hospice referrals would require coordination across multiple organizations, including hospice providers and assisted living communities whose policies and incentives varied widely. The Implementation Protocol domain was also assessed as low, reflecting the need to specify which Bluestone patients would be targeted, which team members would lead case reviews, and how recommendations would be documented and followed.

Domains assessed as moderate revealed additional considerations for planning. Acceptability, which focuses on appropriateness and buy-in within the partnering health system, reflected enthusiasm among Bluestone clinicians tempered by recognition that new workflows could create initial burden. Evidence was assessed as moderate because the team identified few evidence-based models directly applicable to assisted living residents. Measurement was also assessed as moderate, reflecting uncertainty about how best to capture outcomes pragmatically using existing electronic health records and claims data.

Domains assessed as high (Risk, Feasibility, Cost, and Impact) reflected the team’s belief that the proposed approach posed minimal patient or organizational risk, could be implemented within existing structures, and aligned with Bluestone’s organizational priorities around proactive, patient-centered care.

Overall, the RAPT session helped partners articulate key data, workflow, and organizational factors relevant to the proposed pragmatic study. Participants identified gaps in readiness related to patient eligibility definitions, delineation of team roles within the case review process, and the need for reliable linkages between electronic health record and claims data to support outcome measurement. The discussion surfaced workflow dependencies and operational constraints that had not been fully articulated during earlier planning conversations, including questions of workflow ownership, outcome definitions, and data availability. In addition, participants highlighted variability in external hospice and assisted living practices that would require coordination beyond Bluestone’s direct control as a physician practice.

Together, these findings informed identification of priority areas requiring further development before advancing to pragmatic testing. As a result of the RAPT discussion, the team identified the need to strengthen data infrastructure, clarify measurement strategies, refine workflows, and expand participation to include additional Bluestone leaders, such as the Senior Director of Value-Based Care Programs. Subsequent planning activities included examining claims data to identify patterns of hospice live discharges, reviewing electronic health record data sources to support outcome measurement, and assessing evidence-based practices relevant to assisted living populations. The discussion also prompted development of a mortality risk score to support proactive identification of patients most likely to benefit from goal-based, prognosis-driven interventions and appropriate timing of hospice enrollment.

Discussion

This methods case study examined how the RAPT model was applied to structure partner engagement during early trial planning. Using RAPT in partnership with Bluestone Physician Services leaders demonstrated how a readiness framework can also serve as a facilitation tool for partner engagement and co-design. Beyond generating qualitative assessments, the process structured reflection, promoted transparency, and supported shared ownership of research decisions. Grounded in anonymous polling, collective interpretation, and equal opportunity for input, the discussion format created a balanced environment for researchers and provider partners to explore feasibility, contextual fit, and workflow alignment before developing and implementing an ePCT protocol. This approach aligns with learning health systems and partnered research principles that emphasize mutual trust, accountability, and knowledge generation [6,Reference Reddy, McCreedy, Mitchell and Baier7].

These findings underscore the value of using readiness assessment as an early translational strategy rather than solely as a pre-trial checkpoint. In this case, applying RAPT during early planning revealed gaps related to intervention definition, workflow ownership, data integration, and external coordination that could have limited feasibility had a trial proceeded prematurely. Identifying these issues prior to protocol development allowed the partnership to sequence preparatory activities deliberately, strengthening operational alignment and shared understanding before advancing toward pragmatic testing. This use of RAPT illustrates how readiness frameworks can support early co-design and improve the translational trajectory of partner-embedded research.

This experience highlights two complementary functions of readiness assessment. Conceptually, RAPT evaluates whether an intervention and delivery system are ready for pragmatic testing [Reference Baier, Jutkowitz, Mitchell, McCreedy and Mor4] In practice, applying it collaboratively shifts the tool from a diagnostic checklist to a participatory planning framework. The exercise encouraged reflection on both operational and relational readiness, aligning expectations and surfacing contextual barriers that might otherwise have undermined implementation feasibility and fidelity in a trial.

The discussion highlighted the importance of the Alignment and Implementation Protocol domains, which focus on an intervention’s contextual fit with organizational priorities and its replicability within complex systems. These domains encourage researchers to engage around priorities, incentives, and workflow realities, developing a more nuanced understanding of context and recognizing providers as experts in their environments and co-leaders in the research process. By identifying issues early, the Brown-Bluestone team could focus subsequent planning on operational and data considerations rather than troubleshooting barriers once a study was underway. Other teams may find similar value in using RAPT to structure early dialog about feasibility and contextual fit during proposal development or pilot design.

This case study has several limitations. It reflects the experience of a single research-provider partnership and one facilitated RAPT session during early planning, which may limit generalizability to other contexts and stages of pragmatic trial development. However, several practical insights emerged. The use of anonymous polling, joint review of results, and documentation of participants’ reasoning enhanced transparency and facilitated shared learning. For research teams seeking to build sustainable partnerships, RAPT offers a low-burden, adaptable structure for group discussion and reflection that can strengthen both the quality and feasibility of embedded pragmatic research.

Since the completion of this project, RAPT-Indigenous (RAPT-I) has expanded the RAPT framework to explicity incorporate domains focused on context, equity, partnership, scalability, and sustainability [Reference Hikaka, McCreedy, Jutkowitz, McCarthy and Baier9] Although developed for research with Indigenous populations, these domains reflect considerations broadly relevant to pragmatic research. Their inclusion reinfoces the importance of shared leadership, contextual fit, and sustainability from the outset of study planning. Future research could explore how RAPT and RAPT-I can be used to engage partners and support more equitable and contextually grounded pragmatic research.

Conclusion

Applying RAPT in partnership created a structured space for dialog between researchers and provider partners, consistent with principles of partner-engaged research [Reference Reddy, McCreedy, Mitchell and Baier7]. The process helped establish shared understanding of feasibility and contextual fit, clarified workflow and data needs, and identified domains requiring additional attention before launching a trial. More broadly, this case demonstrates how a readiness framework can be repurposed as a practical method for partner engagement, offering a structured and replicable approach to co-designing feasible, contextually grounded pragmatic research.

Acknowledgments

We thank the Bluestone Physician Services leaders for their participation in the co-design process presented in this case study.

Author contributions

Rosa R. Baier: Conceptualization, Formal analysis, Investigation, Methodology, Supervision, Writing-original draft, Writing-review & editing; Peter T. Serina: Data curation, Formal analysis, Investigation, Project administration, Writing-original draft; Ann Reddy: Data curation, Formal analysis, Methodology, Project administration, Writing-review & editing; Anna Stanislawski: Formal analysis, Investigation, Writing-review & editing; Nate Hunkins: Formal analysis, Investigation; Ellen McCreedy: Conceptualization, Data curation, Formal analysis, Funding acquisition, Investigation, Methodology, Project administration, Writing-review & editing.

Funding statement

This work was supported by the National Institute on Aging (NIA) of the National Institutes of Health under Award Number U54AG063546, which funds the NIA Imbedded Pragmatic Alzheimer’s and AD-Related Dementias Clinical Trials Collaboratory (NIA IMPACT Collaboratory). The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health. The authors have no commercial associations or sources of support that might pose a conflict of interest.

Competing interests

The authors declare none.