Many studies in fields such as psychology and educational sciences obtain information about attributes of subjects through observational studies, in which raters score subjects using multiple-item rating scales. Usually, the items are assumed to measure a unidimensional latent variable (denoted by $\theta$), though multidimensional generalizations exist. Examples that we consider in this article are instruments measuring attributes of teachers such as efficient classroom management (e.g., Van der Scheer et al., 2019), instructional skills (e.g., Van der Scheer et al., 2017; Bijlsma et al., 2022), and differentiated instruction (e.g., Faber et al., 2018).

Observation instruments typically consist of polytomously scored items, such as Likert-type items; dichotomously scored items are a special case. Data collected using multiple-item rating scales are often analyzed using item response theory (IRT) models (van der Linden, 2016). Using an IRT model has several advantages over analyzing scores obtained by aggregating item responses. IRT separates the effects of items and persons in the response data. This so-called parameter separation supports the comparison of measures obtained with different but linked instruments, easy handling of missing data (including planned missingness by design), optimal item administration designs, and heteroscedastic definitions of measurement error. IRT thus makes it possible to explain differences in test scores across individuals who took (partly) different tests without these differences being affected by test- (or item-)specific effects.

In addition to item-related effects, observations may contain rater-related effects and effects due to other measurement facets, such as the specific tasks administered or the time-points of administration. Such measurement effects are often considered a nuisance because they add noise to the estimates of the attributes of the objects of measurement. Note that in this article the terms objects, objects of measurement, and subjects are used interchangeably; they refer to the target of the measurement, such as a student's essay or a teacher's proficiency. Estimating a so-called generalizability theory (GT) model makes it possible to decompose the total variance in the observations into variance due to differences between the objects of measurement (e.g., subjects) and variance due to measurement facets (e.g., raters, occasions; Cronbach et al., 1963; Brennan, 2001). Using the estimates of these variance components, the impact of measurement error on the scores of the objects of measurement can be assessed (Cronbach and Shavelson, 2014). Further, generalizability coefficients computed from these variance components can be used to index the (relative) magnitude of the effect of the sampled measurement occasions on the observed scores of the objects of measurement. These coefficients express the degree to which observed scores can be generalized over measurement occasions (e.g., Fan & Sun, 2014; Vispoel et al., 2018) and can be adapted to assess specific types of reliability, such as test–retest and inter-rater reliability (e.g., Brennan, 2001; Vispoel et al., 2019; Shrout & Fleiss, 1979; McGraw & Wong, 1996; Ten Hove et al., 2021, 2022).
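To make the variance decomposition concrete, the sketch below treats the simplest crossed design, objects × raters with one observed score per cell, and estimates the variance components with the classical ANOVA (expected mean squares) estimators of GT. It then forms a generalizability coefficient (relative decisions) and a dependability coefficient (absolute decisions). This is an illustration on observed scores, not the GT-IRT approach of this article, which decomposes the latent $\theta$'s instead; the function names and the toy data are hypothetical.

```python
from typing import Dict, List, Tuple


def pxr_variance_components(scores: List[List[float]]) -> Dict[str, float]:
    """ANOVA estimators for a fully crossed objects x raters design.

    scores[p][r] is the single score that rater r gave object p.
    Negative estimates are truncated at zero (a common convention).
    """
    n_p, n_r = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n_p * n_r)
    obj_means = [sum(row) / n_r for row in scores]
    rater_means = [sum(scores[p][r] for p in range(n_p)) / n_p for r in range(n_r)]

    # Sums of squares: objects, raters, residual (p x r interaction confounded with error)
    ss_p = n_r * sum((m - grand) ** 2 for m in obj_means)
    ss_r = n_p * sum((m - grand) ** 2 for m in rater_means)
    ss_tot = sum((x - grand) ** 2 for row in scores for x in row)
    ss_e = ss_tot - ss_p - ss_r

    ms_p = ss_p / (n_p - 1)
    ms_r = ss_r / (n_r - 1)
    ms_e = ss_e / ((n_p - 1) * (n_r - 1))

    # Solve the expected-mean-square equations for the random-effects variances
    return {
        "object": max((ms_p - ms_e) / n_r, 0.0),
        "rater": max((ms_r - ms_e) / n_p, 0.0),
        "residual": max(ms_e, 0.0),
    }


def g_and_phi(var: Dict[str, float], n_raters: int) -> Tuple[float, float]:
    """Generalizability (relative) and dependability (absolute) coefficients."""
    rel_err = var["residual"] / n_raters                   # affects the ordering only
    abs_err = (var["rater"] + var["residual"]) / n_raters  # also rater leniency
    obj = var["object"]
    return obj / (obj + rel_err), obj / (obj + abs_err)


scores = [[1, 3], [3, 7], [5, 5]]  # hypothetical: 3 objects, each rated by 2 raters
comps = pxr_variance_components(scores)
g, phi = g_and_phi(comps, n_raters=2)
print(comps, round(g, 3), round(phi, 3))
```

For these toy data the estimated components are 2 (objects), 4/3 (raters), and 2 (residual), giving $G = 2/3$ and $\Phi = 6/11$; the rater main effect lowers only the dependability coefficient.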

The combination of an IRT and a GT model (labeled the GT-IRT model) provides a powerful framework for the analysis of ratings obtained with itemized instruments (Patz et al., 2002; Glas, 2012; Choi and Wilson, 2018; Shin et al., 2019; Huang and Cai, 2023). In this article, we show how the GT-IRT model can be used to correct for measurement error in an integrated approach, for instance, to disattenuate regression coefficients in linear models. The problem to be tackled is that the advantages of IRT outlined above come at a price. The GT model, and any multilevel model in which it may be embedded, uses the latent variables $\theta$ of IRT as dependent or independent variables rather than directly observed variables or functions of directly observed variables (e.g., scale composites). However, these latent IRT variables are estimated rather than directly observed, and the sampling variance of the $\theta$ estimates must be taken into account. One way of dealing with this problem is plausible value imputation (see, for instance, Khorramdel et al., 2020). A complication is that the measurement model and the structural model (the linear model on the latent person parameters) must be estimated jointly: plausible values drawn from the measurement model alone do not have the correct relationship with the other variables in the structural model. In the present article, an extension of this approach is used, in which the plausible values are the full set of samples from a Markov chain Monte Carlo (MCMC) estimation procedure for a fully Bayesian model (see, for instance, Fox & Glas, 2001, 2003).
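Why the sampling variance of the $\theta$ estimates matters can be seen in a small simulation. The sketch below uses the classical errors-in-variables result, not the Bayesian approach of this article: when a predictor is measured with error, the ordinary least squares slope is shrunk toward zero by the reliability of the predictor, and dividing by a known reliability undoes this on average. The true slope, error variance, seed, and sample size are arbitrary illustrative choices, and `ols_slope` is a hypothetical helper.

```python
import random
from typing import List


def ols_slope(x: List[float], y: List[float]) -> float:
    """Ordinary least squares slope of y on x (model with intercept)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


rng = random.Random(2024)
n, beta, err_var = 20_000, 0.5, 1.0  # hypothetical simulation settings

theta = [rng.gauss(0.0, 1.0) for _ in range(n)]                   # true latent scores, variance 1
theta_hat = [t + rng.gauss(0.0, err_var ** 0.5) for t in theta]  # error-prone estimates
y = [beta * t + rng.gauss(0.0, 1.0) for t in theta]              # outcome depends on true theta

reliability = 1.0 / (1.0 + err_var)  # var(theta) / var(theta_hat)
naive = ols_slope(theta_hat, y)      # attenuated: roughly beta * reliability
corrected = naive / reliability      # classical disattenuation with known reliability
print(f"naive={naive:.3f} corrected={corrected:.3f} true={beta}")
```

In the GT-IRT approach the measurement and structural models are estimated jointly, so no separately estimated reliability is plugged in; the sketch only shows the size of the bias that motivates the joint approach.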

This article is structured as follows. First, we present the IRT model, which maps the discrete item responses onto a continuous latent scale, and the GT model, which separates the variance of this latent measurement into variance components of interest and nuisance variance components. We also discuss generalizability coefficients for assessing the reliability of the measurements and the agreement among raters. Second, we outline a Bayesian procedure that estimates the model parameters in an integrated approach. Third, using examples from the field of educational measurement, we show how the GT-IRT model can be embedded in linear regression and factor analysis models that incorporate the latent variables associated with the variance of interest, and how this procedure can be used to answer substantive research questions.

1. Theoretical Background

In this section, we consider a straightforward case with a fully crossed design. Generalizations to observational designs that are not fully crossed will be treated in the example sections. We outline the model for a two-faceted observational design where raters (i.e., the first measurement facet, indexed $r = 1,\ldots,R$) assess objects (e.g., work by students, lessons delivered by teachers; indexed $j = 1,\ldots,N$) on a second measurement facet (e.g., tasks, time-points; indexed $t = 1,\ldots,T$) using an instrument with $K$ items (indexed $k = 1,\ldots,K$) to provide information about an attribute of the objects (e.g., teacher proficiency or creativity). It is assumed that each item $k$ has $M_k + 1$ response categories labeled $m = 0,\ldots,M_k$ and that the instances of both measurement facets (here, the raters and the time-points) are randomly sampled from a larger pool of potential instances of these facets.

To put the presentation of the GT-IRT model in perspective, consider the setup of the first example that will be presented in the section Empirical Examples. The example pertains to the evaluation of changes in the instructional skills of 34 teachers after they participated in an intensive data-based decision-making intervention (Van der Scheer et al., 2017). The teachers were recorded three times prior to the intervention and three times after the intervention, and all recordings were assessed by four independent raters. Instructional skills were measured with an observation instrument of 35 Likert-scale items with 4 response categories. So in this example, $N = 34$, $R = 4$, $T = 6$, $K = 35$, and $M_k = 3$ for all $k$.
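As a quick check on the size of this design, the sketch below counts the latent observations $\theta_{jrt}$, the item responses, and the GPCM item parameters (one discrimination parameter and $M_k$ location parameters per item, since $\delta_{k0} = 0$ is fixed). It assumes a complete design with no missing responses and ignores identification constraints; the variable names are illustrative.

```python
# Design sizes of the first empirical example (values taken from the text)
N, R, T, K = 34, 4, 6, 35
M = [3] * K  # M_k = 3 for every item, i.e., 4 response categories

n_latent = N * R * T                        # one theta_jrt per teacher x rater x time-point
n_responses = n_latent * K                  # assumes every item is scored at every occasion
n_item_params = sum(1 + m_k for m_k in M)   # alpha_k plus delta_k1..delta_kMk

print(n_latent, n_responses, n_item_params)  # 816 28560 140
```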

Note that this setup could also be viewed as a 3-facet $j \times r \times t \times k$ design. However, in IRT models and their applications, such as large-scale educational surveys, the items making up a test are usually treated as a fixed facet, and no generalization to a larger population of possible items is involved. For simplicity, we therefore drop the item subscript $k$ from the GT part of the model in subsequent steps. Interactions of item effects with other measurement facets are still reflected in the GT-IRT model. For instance, if an incomplete item administration design is used, item parameters can still vary across the other measurement facets ($r$ and $t$). We return to the possibility of viewing items as randomly sampled from some domain in the Conclusions section.

1.1. IRT Model

The IRT model is used to map the discrete item responses onto a continuous latent scale and to model both item and subject parameters. The IRT model used here is the generalized partial credit model (GPCM; Muraki, 1992) in a logistic representation. This choice is not essential: Verhelst et al. (1997) have shown that the GPCM generally yields results similar to those obtained with the graded response model (Samejima, 1969) or the sequential model (Tutz, 1990), because the item response curves of these models, which relate the observed responses to the latent variable $\theta$, are very close. The same holds for the logistic and normal-ogive (or probit) representations of these models.
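The closeness of the logistic and normal-ogive representations can be checked numerically. A well-known result is that the logistic function with its argument scaled by the conventional constant 1.702 differs from the standard normal cumulative distribution function by less than 0.01 everywhere; the sketch below evaluates the maximum absolute difference on a fine grid. The scaling constant is the textbook value, not one taken from this article.

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal CDF (normal ogive) via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def logistic(x: float, scale: float = 1.702) -> float:
    """Logistic CDF with the conventional scaling constant D = 1.702."""
    return 1.0 / (1.0 + math.exp(-scale * x))


grid = [i / 1000 for i in range(-6000, 6001)]  # x from -6 to 6 in steps of 0.001
max_diff = max(abs(normal_cdf(x) - logistic(x)) for x in grid)
print(f"max |Phi(x) - logistic(1.702 x)| = {max_diff:.4f}")
```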

A measurement occasion pertains to the observation of the target of measurement $j$ under one or more facets that influence the observation, say, a rater $r$ and a time-point $t$. In the GPCM, the probability of a response $Y_{jrtk}$ in category $m = 0,\ldots,M_k$ is given by

where $\alpha_k$ is the discrimination parameter of item $k$, $\delta_{kh}$ are the item-location parameters, and $\theta_{jrt}$ is the latent variable associated with measurement occasion $jrt$. It is assumed that $\delta_{k0} = 0$ and that summations whose upper and lower bounds both equal zero yield zero. Note that the denominator is a normalization factor: it sums over all response categories so that the probabilities of all possible responses add up to one. As a consequence, the probability of a score in the zero category is given by

This general formulation can be further extended to more facets (other than items) by adding more subscripts to the latent variable $\theta_{jrt}$.
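The following minimal sketch computes GPCM category probabilities for one item at one measurement occasion. It assumes the common form of the GPCM in which the numerator for category $m$ is $\exp\left(\sum_{h=1}^{m} \alpha_k(\theta_{jrt} - \delta_{kh})\right)$, with the empty sum for $m = 0$ equal to zero; this is consistent with the convention $\delta_{k0} = 0$ stated above but may differ in detail from the parameterization of Formula (1). The function name and its arguments are hypothetical.

```python
import math
from typing import List, Sequence


def gpcm_probs(theta: float, alpha: float, deltas: Sequence[float]) -> List[float]:
    """Category probabilities P(Y = m | theta), m = 0..M, for one item.

    deltas holds the location parameters delta_1..delta_M; delta_0 = 0 is implicit.
    """
    # Numerator exponents: cumulative sums of alpha * (theta - delta_h);
    # category 0 has an empty sum and hence exponent 0.
    exponents = [0.0]
    for d in deltas:
        exponents.append(exponents[-1] + alpha * (theta - d))
    # The denominator sums over all categories (normalization); subtracting
    # the largest exponent first avoids overflow without changing the ratios.
    top = max(exponents)
    weights = [math.exp(z - top) for z in exponents]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical item with 4 categories (M_k = 3), as in the first example
probs = gpcm_probs(theta=0.5, alpha=1.2, deltas=[-1.0, 0.2, 1.1])
print([round(p, 3) for p in probs], sum(probs))
```

With a single location parameter the function reduces to the two-parameter logistic model, $P(Y = 1 \mid \theta) = 1/\left(1 + \exp(-\alpha(\theta - \delta))\right)$, and the zero-category probability is one divided by the normalizing sum.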

1.2. GT Model

The GT model is used to separate the measurement variance into variance components of interest and nuisance components, based on the different facets of the measurement design. Because the IRT model in Formula (1) is a probability model that already accounts for the item-specific effects on the uncertainty of the latent scores and eliminates item-specific random error, the GT variance decomposition concerns the latent observations $\theta_{jrt}$.

The measure of instructional skills of teacher $j$ by rater $r$ at time-point $t$, $\theta_{jrt}$, is decomposed into the main effects of the object of measurement (the teacher) and of the measurement facets, together with their interactions, that is,

where $\theta_{1j}$, $\tau_{2r}$, and $\tau_{3t}$ are the main effects of teacher $j$ ($j = 1,\ldots,N$), rater $r$ ($r = 1,\ldots,R$), and time-point $t$ ($t = 1,\ldots,T$), respectively. Further, $\tau_{4jr}$, $\tau_{5jt}$, and $\tau_{6rt}$ are the two-way interaction effects between subject $j$ and rater $r$, between subject $j$ and time-point $t$, and between rater $r$ and time-point $t$, respectively. Finally, $\epsilon_{jrt}$ is the three-way interaction effect between subject $j$, rater $r$, and time-point $t$, which is confounded with the random error.
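The decomposition can be illustrated by simulation. The sketch below draws every effect independently from a normal distribution with mean zero (treating all facets as random and assuming no grand-mean term), using hypothetical variance components, and builds $\theta_{jrt}$ as the sum of the seven terms. Because the effects are independent, the variance of $\theta_{jrt}$ over a large crossed design approximates the sum of the components, which is the orthogonal decomposition referred to in the next paragraph. `VAR` and `simulate_theta` are illustrative names.

```python
import random
from typing import Dict

# Hypothetical variance components for the seven terms of the decomposition
VAR: Dict[str, float] = {"j": 1.0, "r": 0.2, "t": 0.1,
                         "jr": 0.15, "jt": 0.1, "rt": 0.05, "e": 0.3}


def simulate_theta(n_obj: int, n_rat: int, n_time: int, var: Dict[str, float], seed: int = 7):
    """Return theta[j][r][t] and the independent random effects it was built from."""
    rng = random.Random(seed)

    def draw(key: str) -> float:
        return rng.gauss(0.0, var[key] ** 0.5)

    th1 = [draw("j") for _ in range(n_obj)]                             # theta_1j
    tau2 = [draw("r") for _ in range(n_rat)]                            # tau_2r
    tau3 = [draw("t") for _ in range(n_time)]                           # tau_3t
    tau4 = [[draw("jr") for _ in range(n_rat)] for _ in range(n_obj)]   # tau_4jr
    tau5 = [[draw("jt") for _ in range(n_time)] for _ in range(n_obj)]  # tau_5jt
    tau6 = [[draw("rt") for _ in range(n_time)] for _ in range(n_rat)]  # tau_6rt
    eps = [[[draw("e") for _ in range(n_time)] for _ in range(n_rat)]   # epsilon_jrt
           for _ in range(n_obj)]

    theta = [[[th1[j] + tau2[r] + tau3[t] + tau4[j][r] + tau5[j][t] + tau6[r][t] + eps[j][r][t]
               for t in range(n_time)] for r in range(n_rat)] for j in range(n_obj)]
    return theta, (th1, tau2, tau3, tau4, tau5, tau6, eps)


theta, effects = simulate_theta(200, 30, 30, VAR)
flat = [x for plane in theta for row in plane for x in row]
mean = sum(flat) / len(flat)
total_var = sum((x - mean) ** 2 for x in flat) / len(flat)
print(f"empirical variance {total_var:.2f} vs sum of components {sum(VAR.values()):.2f}")
```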

In the examples given below, the variable $\theta_{1j}$ will be used in a combination of the GT model with a linear regression model to reduce attenuation. In the next section, the model will also be used to construct generalizability coefficients, based on the following variance decomposition. The total variance of $\theta_{jrt}$ is decomposed into orthogonal variance components associated with each effect, that is,

This general variance decomposition model can be applied in many situations. Raters may be crossed with all measurement occasions or may be distributed over measurement occasions according to some linked design (see Empirical Example 2). Facets may be random (drawn from a pool of possible instances) or fixed (when the possible tasks making up a facet are limited). Further, the ratings may pertain to an absolute judgement (where agreement of raters is relevant) or a relative judgement (pertaining to the ordering of objects where consistency across raters is relevant).
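The following Python sketch gives a numerical illustration of the decomposition. It assumes a fully crossed subject × rater × time-point design with seven independent, zero-mean normal effects: the subject, rater and time-point main effects, the three two-way interactions, and the residual $\epsilon_{jrt}$. The component values are hypothetical. For a randomly drawn combination of subject, rater and time-point, the variance of $\theta_{jrt}$ should then be close to the sum of the seven components.

```python
import random
import statistics

# Hypothetical variance components of the seven effects in the GT model.
COMPONENTS = {
    "subject (tau_1j)": 1.00,
    "rater (tau_2r)": 0.10,
    "time-point (tau_3t)": 0.05,
    "subject x rater": 0.20,
    "subject x time-point": 0.15,
    "rater x time-point (tau_6rt)": 0.02,
    "residual (epsilon_jrt)": 0.30,
}

def simulate_theta(n_draws, components, seed=1):
    """Draw theta_jrt for randomly sampled (subject, rater, time-point)
    combinations as a sum of independent zero-mean normal effects."""
    rng = random.Random(seed)
    sds = [v ** 0.5 for v in components.values()]
    return [sum(rng.gauss(0.0, sd) for sd in sds) for _ in range(n_draws)]

draws = simulate_theta(100_000, COMPONENTS)
total = sum(COMPONENTS.values())
print(f"sum of components:  {total:.3f}")
print(f"empirical variance: {statistics.pvariance(draws):.3f}")
```

Because the effects are independent, their covariances vanish, and that is what makes the components additive. Correlated effects would add covariance terms to this sum.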

1.3. Reliability and Agreement in the GT-IRT Model

Within the GT-IRT model defined in the previous sections, generalizability coefficients are summary statistics that express how reliably subjects are ordered. They are of interest for correlation studies and linear regression analysis. These coefficients also offer the opportunity to assess the reliability of the absolute standings of subjects on an attribute, such as a latent score on a diagnostic test. This is accomplished using indices of dependability, which are closely related to intraclass correlation coefficients (ICCs) for agreement. The difference will be discussed below.

Bechger et al. (2003) point out that the concepts of reliability in classical test theory (CTT) and IRT are very much alike. Both are derived from the variance decomposition $var(\theta) = var(E(\theta | y)) + E(var(\theta | y))$, where $\theta$ is a true score or latent person variable, $var(\theta)$ is the population variance of $\theta$ and $E(\theta | y)$ is the expectation of $\theta$ given the observations y. Reliability is expressed as a variance ratio, that is,

The true score $\theta$ is unknown, so an estimator is plugged in. In IRT, this leads to the so-called expected a posteriori (EAP) score. Note that $var(E(\theta | y))$ is the variance of the EAP estimates over the complete sample of responses. The measurement error on the latent scale, that is, $E(var(\theta | y))$, consists of two elements: the uncertainty regarding the position of $\theta$ on the latent scale given the response pattern y, and the uncertainty modeled by a GT model.
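The variance ratio can be checked in a setting where the posterior has a closed form. The Python sketch below uses a toy normal model, not the GT-IRT model: $\theta \sim N(0, 1)$ and $y = \theta + e$ with $e \sim N(0, \sigma_e^2)$. Under this model, $E(\theta | y) = y / (1 + \sigma_e^2)$ and $var(\theta | y) = \sigma_e^2 / (1 + \sigma_e^2)$. The sketch estimates $var(E(\theta | y))$ from simulated EAP scores and forms reliability as $var(E(\theta | y)) / (var(E(\theta | y)) + E(var(\theta | y)))$. By the decomposition above, this equals $var(E(\theta | y)) / var(\theta)$. The value $\sigma_e^2 = 0.25$ is hypothetical.

```python
import random
import statistics

def eap_reliability(n, error_var, seed=2):
    """Toy normal model: theta ~ N(0, 1), y = theta + e, e ~ N(0, error_var).
    Returns var(E(theta|y)), E(var(theta|y)) and their reliability ratio."""
    rng = random.Random(seed)
    shrink = 1.0 / (1.0 + error_var)          # E(theta | y) = shrink * y
    post_var = error_var / (1.0 + error_var)  # var(theta | y), constant in y here
    eaps = []
    for _ in range(n):
        theta = rng.gauss(0.0, 1.0)
        y = theta + rng.gauss(0.0, error_var ** 0.5)
        eaps.append(shrink * y)
    var_eap = statistics.pvariance(eaps)
    return var_eap, post_var, var_eap / (var_eap + post_var)

var_eap, post_var, rel = eap_reliability(100_000, error_var=0.25)
print(f"var(EAP)={var_eap:.3f}  E(posterior var)={post_var:.3f}  reliability={rel:.3f}")
```

With $\sigma_e^2 = 0.25$, the population values are $var(E(\theta | y)) = 0.8$, $E(var(\theta | y)) = 0.2$, and a reliability of 0.8. The simulated values should lie close to these.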

Besides being an easy summary of the generalizability of the assessments, generalizability coefficients also support a so-called design study (D-study). In this approach, the variance components of the GT model are first estimated in a so-called generalizability study (G-study). The results are then used in a D-study to determine the number of raters, time-points and other possible facets needed to attain a target reliability level. To define generalizability coefficients that facilitate this, an EAP estimator is defined that is analogous to the test score used in CTT and its extension, generalizability theory. That score is $S_j = \sum_{r,t} Y_{jrt}/(RT)$, where $Y_{jrt}$ is a manifest continuous observation or total score. Analogously, we take the average of the latent variables over raters and time-points, that is, $S_j = \sum_{r,t} \theta_{jrt}/(RT)$. After the variance of $S_j$ has been decomposed in a GT-IRT model, the reliability of an assessment averaged over raters and time-points can be estimated in the D-study by varying the number of raters R and the number of time-points T in

with $\sigma_{1j}^2 = \text{Var}(E(S_j | \textbf{Y}_j))$, where the vector $\textbf{Y}_j$ is the concatenation of all responses given regarding teacher j. The other variance components are posterior variances with an analogous definition, for instance, $\sigma_{2r}^2 = \text{Var}(\tau_{2r} | \textbf{Y}_j)$. Note that Formula (6) is analogous to the expression for reliability in the CTT version of GT; only the variance components are defined differently. Note further that three of the variance components present in Formula (4), that is, $\sigma_{2r}^2$, $\sigma_{3t}^2$ and $\sigma_{6rt}^2$, do not appear in the denominator of Formula (6). The reason is that, when the assessment is averaged over time-points and raters, these effects work out the same for all objects of measurement, so they do not affect the ordering of the objects. If the interest is in an absolute rather than a relative assessment, the assessments of the various raters on various occasions must be as similar as possible. In that case, the three omitted variances become important and must be included in the denominator of the coefficient. This leads to a coefficient of Agreement given by

The variance components are posterior variances defined analogously to the variance components in Formula (6). Again, the expression for Agreement is analogous to the expression in the CTT-version of GT, but with a different definition of the variance components.
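The two coefficients can be sketched for a D-study in Python. The sketch assumes a fully crossed subject × rater × time-point design and the standard GT forms of the coefficients. The relative coefficient divides the subject variance by itself plus $\sigma^2_{jr}/R + \sigma^2_{jt}/T + \sigma^2_{\epsilon}/(RT)$. The agreement coefficient additionally counts $\sigma_{2r}^2/R$, $\sigma_{3t}^2/T$ and $\sigma_{6rt}^2/(RT)$ as error. The labels `jr` and `jt` for the subject × rater and subject × time-point components are hypothetical, and so are all numeric values. In the GT-IRT model, these values would be the posterior variances described above.

```python
# Hypothetical G-study estimates of the seven variance components.
VC = {"j": 1.00, "r": 0.10, "t": 0.05, "jr": 0.20, "jt": 0.15, "rt": 0.02, "e": 0.30}

def g_coefficient(v, n_raters, n_times):
    """Relative (generalizability) coefficient for the mean over R raters and T time-points."""
    rel_error = v["jr"] / n_raters + v["jt"] / n_times + v["e"] / (n_raters * n_times)
    return v["j"] / (v["j"] + rel_error)

def phi_coefficient(v, n_raters, n_times):
    """Absolute (agreement) coefficient: rater, time-point and rater x time-point
    variance now also count as error."""
    abs_error = ((v["r"] + v["jr"]) / n_raters
                 + (v["t"] + v["jt"]) / n_times
                 + (v["rt"] + v["e"]) / (n_raters * n_times))
    return v["j"] / (v["j"] + abs_error)

# D-study: vary the number of raters and time-points.
for n_raters in (1, 2, 4):
    for n_times in (1, 3, 6):
        print(f"R={n_raters} T={n_times} "
              f"G={g_coefficient(VC, n_raters, n_times):.3f} "
              f"Phi={phi_coefficient(VC, n_raters, n_times):.3f}")
```

Increasing R or T shrinks only the error terms divided by that facet's sample size. A D-study can therefore search such a grid for the smallest design that reaches a target coefficient.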

In IRT, two versions of reliability are distinguished: global reliability and local reliability. Global reliability refers to the concept as it is used in CTT. It is the extent to which two randomly chosen persons from some population can be distinguished, either in the available sample or in a replication with the same number of raters and time-points, but with other raters and time-points sampled from their respective populations. Local reliability is defined locally on the latent scale and refers to the precision with which $\theta_j$ is placed on the $\theta$-scale. Local reliability is, for instance, of interest for assessing the probability that a person is above or below some cut-off point on the latent scale, or for assessing to what extent two latent scores $\theta_j$ and $\theta_{j'}$ can be distinguished. The value of a person’s latent variable can be estimated by its posterior expectation, and the precision of the estimate can be represented by the associated posterior variance. If the measurement involves raters and time-points, the introduction of a GT model on $\theta$ follows the same lines as above. However, the variance component in the numerator now pertains only to one specific person j. Therefore, the numerator of Formula (5) becomes $var_j(E(S_j | \textbf{Y}_j))$, and the denominator is adapted accordingly.
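Given MCMC draws of a person's latent score, the local quantities mentioned above reduce to simple summaries of the draws. The Python sketch below computes three of them: the posterior mean and variance, the posterior probability of exceeding a cut-off, and the posterior probability that $\theta_j$ exceeds $\theta_{j'}$. The normal draws are hypothetical stand-ins for real sampler output, and the cut-off value of 0 is chosen only for illustration.

```python
import random
import statistics

def local_summary(draws, cutoff):
    """Posterior mean, posterior variance and P(theta > cutoff) from MCMC draws."""
    mean = statistics.fmean(draws)
    var = statistics.pvariance(draws, mean)
    p_above = sum(d > cutoff for d in draws) / len(draws)
    return mean, var, p_above

def prob_greater(draws_a, draws_b):
    """P(theta_a > theta_b), pairing draws from the same MCMC iterations."""
    return sum(a > b for a, b in zip(draws_a, draws_b)) / len(draws_a)

# Hypothetical stand-ins for posterior draws of two teachers' latent scores.
rng = random.Random(4)
theta_j = [rng.gauss(0.5, 0.3) for _ in range(50_000)]
theta_j_prime = [rng.gauss(0.0, 0.3) for _ in range(50_000)]

mean, var, p_above = local_summary(theta_j, cutoff=0.0)
print(f"EAP={mean:.3f}, posterior var={var:.3f}, P(theta_j > 0)={p_above:.3f}")
print(f"P(theta_j > theta_j')={prob_greater(theta_j, theta_j_prime):.3f}")
```

Pairing draws by iteration matters with real MCMC output. It preserves any posterior correlation between the two persons' scores.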

1.4. Estimation

In the examples, concurrent estimates are made for three integrated models: an IRT model, a GT model and a linear model.

First, we need to find a suitable IRT model. As mentioned above, IRT models have many advantages, but these advantages apply only when two conditions are met. First, the IRT model must fit the data. Second, when the model is linked via the latent variable $\theta$ to a GT model, or to any other regression model for that matter, the uncertainty in the estimates of the latent variables must be properly taken into account. Starting with the requirement of model fit, it must at least be shown that the item parameters apply to all sub-populations (say, all raters, all time-points, etc.). It must also be shown that the item response probabilities given by Formulas (1) and (2) as a function of $\theta_{jrt}$ lead to a reasonable representation of the data in all these sub-populations. The latter is known as the requirement of no differential item functioning. Two approaches are possible to evaluate these requirements. The first is to run a separate analysis in a frequentist framework, such as a marginal maximum likelihood (MML) framework, and to use the by now quite comprehensive collection of fit indices available in such a framework (see, for instance, Glas, 2005). The second approach is to evaluate the requirements in the Bayesian framework used for the concurrent estimation of the GT-IRT model (see, for instance, Fox, 2010; Levy & Mislevy, 2016). Though testing the IRT model is an essential first step, it is beyond the scope of the present article.

The next step is to obtain a concurrent estimate of the parameters of the GT-IRT model and the regression model. At least two options are open: an MML procedure or a Bayesian approach. A drawback of the MML approach is that the complex dependency structures in the data require the evaluation of various nested integrals (see, for instance, Fox & Glas, 2001, p. 287). A fully Bayesian approach does not have that drawback. An MCMC procedure can be used to generate the posterior distributions of all parameters. From these distributions, point estimates can be obtained as posterior means or medians. The repeated draws created in the procedure can be regarded as a very large set of plausible values. An advantage of Bayesian estimation using MCMC computational methods is that functions of parameters can be sampled along with their constituent parameters. Therefore, the computation of credibility regions for the generalizability coefficients, which are functions of variances, is relatively straightforward. Further, a Bayesian approach allows one to incorporate prior beliefs about the distribution of model parameters. This eases the estimation of, for example, variance components for small sample sizes, which are common in observational studies. More commonly, vague or uninformative priors are used, and this approach is followed in the examples. Further explanation follows below.
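Because functions of parameters can be computed draw by draw, a credibility region for a generalizability coefficient follows directly from the MCMC output. The Python sketch below evaluates the relative coefficient for each draw of the variance components and takes the 2.5%, 50% and 97.5% percentiles. It assumes a fully crossed subject × rater × time-point design and the standard GT form of the coefficient. The gamma-distributed draws are hypothetical stand-ins for output exported from a sampler, and the dictionary keys are illustrative names only.

```python
import random

def percentile(sorted_vals, q):
    """Simple percentile of a pre-sorted list by rounding to the nearest rank."""
    idx = round(q * (len(sorted_vals) - 1))
    return sorted_vals[idx]

def g_from_draw(d, n_raters, n_times):
    """Relative GT coefficient for one draw of the variance components."""
    err = d["jr"] / n_raters + d["jt"] / n_times + d["e"] / (n_raters * n_times)
    return d["j"] / (d["j"] + err)

def credible_interval(draws, n_raters, n_times, level=0.95):
    """Lower bound, median and upper bound of the coefficient over all draws."""
    gs = sorted(g_from_draw(d, n_raters, n_times) for d in draws)
    tail = (1.0 - level) / 2.0
    return percentile(gs, tail), percentile(gs, 0.5), percentile(gs, 1.0 - tail)

# Hypothetical posterior draws: gammavariate(shape, scale) has mean shape * scale.
rng = random.Random(3)
post_means = {"j": 1.0, "jr": 0.2, "jt": 0.15, "e": 0.3}
draws = [{k: rng.gammavariate(50.0, m / 50.0) for k, m in post_means.items()}
         for _ in range(5000)]
lo, med, hi = credible_interval(draws, n_raters=4, n_times=6)
print(f"G: median {med:.3f}, 95% interval [{lo:.3f}, {hi:.3f}]")
```

The same loop can be rerun for other values of R and T. This propagates posterior uncertainty into every D-study scenario.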

One of the important issues when using an MCMC procedure is whether the Markov chain has actually converged. Many tools are available for checking convergence, but that topic will not be discussed extensively in the present article. All examples were computed using 60,000 iterations with 5,000 burn-in iterations. This proved to be more than sufficient for convergence, judging from adequate mixing in the trace plots.
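The Gelman-Rubin potential scale reduction factor is a widely used convergence check that complements trace plots. It compares between-chain and within-chain variance across several chains started from different values. The Python sketch below implements the basic (non-split) version. The examples in this article were judged from trace plots, so this is only a complementary check. Values close to 1 suggest the chains have mixed. The simulated chains are hypothetical, and in practice the burn-in draws would be discarded first.

```python
import random
import statistics

def gelman_rubin(chains):
    """Basic potential scale reduction factor (R-hat) for m equal-length chains,
    each given as a list of post-burn-in draws of one scalar parameter."""
    m, n = len(chains), len(chains[0])
    chain_means = [statistics.fmean(c) for c in chains]
    grand_mean = statistics.fmean(chain_means)
    # Between-chain variance B and mean within-chain variance W.
    b = n / (m - 1) * sum((cm - grand_mean) ** 2 for cm in chain_means)
    w = statistics.fmean([statistics.variance(c) for c in chains])
    var_plus = (n - 1) / n * w + b / n  # pooled estimate of the posterior variance
    return (var_plus / w) ** 0.5

rng = random.Random(5)
mixed = [[rng.gauss(0.0, 1.0) for _ in range(2000)] for _ in range(3)]
stuck = [[rng.gauss(mu, 1.0) for _ in range(2000)] for mu in (0.0, 0.0, 3.0)]
print(f"well mixed: {gelman_rubin(mixed):.3f}, not mixed: {gelman_rubin(stuck):.3f}")
```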

1.5. Identification

An IRT model must be identified by fixing the origin and scale of the latent dimension. This is typically done by constraining either the item parameters or the mean and variance of the distribution of the latent parameters. Regarding constraints on the item parameters, the item parameters are often already known from estimation in other applications and are entered as fixed constants. If this is not the case, the simplest way is to fix one discrimination parameter to one and one location parameter to zero. This may, however, not be an optimal solution if the item parameters that are fixed to identify the model are poorly conditioned by the data. For instance, if a dichotomously scored item has a very small number of correct responses, the standard errors of its parameters are very large. Because the scale is anchored on this item, this uncertainty propagates to all other item parameters, whose standard errors all become inconveniently large. The same occurs when the discrimination parameter of the item used for identification is very low or very high. Therefore, a better approach is to set the sum of the item location parameters to zero and the product of the item discrimination parameters to one. However, depending on the priors and the MCMC computational algorithm, this approach is not straightforward to implement. The alternative is fixing population parameters. Without a linear model on the ability distribution, the simplest approach is to fix its mean and variance to zero and one, respectively. However, in the present framework, the scale of the latent dimension is made up of several variance components. Fixing the mean and variance of the best-conditioned distribution, usually the distribution of the main effect of the objects of measurement j, is then a practicable approach.
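The sum-and-product constraint can also be imposed after sampling, by transforming each draw. The Python sketch below assumes that item parameters enter the response model only through terms of the form $a_k(\theta - b)$, where $b$ is any location parameter of item k. This holds for the usual GPCM parameterization. The sketch takes $s$ as the geometric mean of the discriminations and $m = -s\bar{b}$. It then maps $a_k \to a_k/s$, $b \to sb + m$ and $\theta \to s\theta + m$, which gives $\prod a = 1$ and $\sum b = 0$ while leaving every term $a_k(\theta - b)$ unchanged. The function name and input layout are hypothetical. The transformation also rescales all variance components of $\theta$ by $s^2$.

```python
import math

def identify(a, b, theta):
    """Linearly transform one draw so that prod(a) == 1 and the sum of all
    location parameters == 0, keeping every a[k] * (theta - b[k][h]) unchanged.

    a: discriminations, one per item
    b: location parameters, b[k] is the list of locations of item k
    theta: latent values to be mapped onto the new scale
    """
    s = math.exp(sum(math.log(ak) for ak in a) / len(a))  # geometric mean of a
    flat = [bkh for bk in b for bkh in bk]
    m = -s * sum(flat) / len(flat)
    a_new = [ak / s for ak in a]
    b_new = [[s * bkh + m for bkh in bk] for bk in b]
    theta_new = [s * th + m for th in theta]
    return a_new, b_new, theta_new

a_new, b_new, theta_new = identify(
    a=[0.8, 1.5, 1.2],
    b=[[-1.0, 0.0, 1.0], [-0.5, 0.2, 0.9], [0.1, 0.8, 1.6]],
    theta=[-1.0, 0.0, 1.0],
)
```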

1.6. Priors

Bayesian estimation using MCMC computational methods entails drawing parameters from the posterior distribution to map out its shape. The posterior distribution is proportional to the product of the likelihood of the parameters given the data and the prior distributions of the parameters. For the IRT model, several suggestions are available. For instance, Albert (1992) suggests a flat prior on the positive reals for discrimination parameters and a flat prior on the real line for the difficulties. Alternatively, the discrimination parameters can be given a log-normal prior or a truncated normal prior (a normal distribution with mean and variance both equal to one, restricted to the positive reals). The latter approach was used in the examples below. In these examples, the location parameters are given normal priors with variance equal to 1.0 and means equal to the category index within the item, to reflect the likely order of the location parameters on the latent scale.

Finally, for variances an inverse gamma prior is usually chosen, while Fox and Glas (2001) suggest the Jeffreys prior and discuss uniform and inverse chi-squared priors as alternatives for small sample sizes. In the examples given below, the priors for precision parameters (the reciprocals of variances, such as the variance components in a GT model) were gamma distributions with both parameters equal to 0.01. Under this prior, the expectation and variance of the precision are 1.0 and 100, respectively, so the prior is quite vague. The covariance matrix introduced below in the last example was given an inverse Wishart prior with an identity matrix as a parameter.
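The prior moments quoted above can be checked directly. The Python sketch below assumes the shape-rate parameterization used by `dgamma` in BUGS and JAGS, under which a Gamma(α, β) distribution has mean α/β and variance α/β². It also computes the mean of the truncated normal prior for the discriminations. Truncating a normal distribution with mean one and variance one to the positive reals raises its mean above one.

```python
import math

def gamma_moments(shape, rate):
    """Mean and variance of a Gamma(shape, rate) distribution."""
    return shape / rate, shape / rate ** 2

def truncated_normal_mean(mu, sigma, lower=0.0):
    """Mean of N(mu, sigma^2) restricted to values above `lower`."""
    alpha = (lower - mu) / sigma
    pdf = math.exp(-0.5 * alpha ** 2) / math.sqrt(2.0 * math.pi)
    upper_tail = 0.5 * math.erfc(alpha / math.sqrt(2.0))  # P(Z > alpha)
    return mu + sigma * pdf / upper_tail

mean, var = gamma_moments(0.01, 0.01)
print(f"precision prior: mean={mean:.1f}, variance={var:.1f}")  # mean=1.0, variance=100.0
print(f"truncated N(1, 1) mean: {truncated_normal_mean(1.0, 1.0):.3f}")
```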

2. Empirical Examples

All three examples presented below are derived from a research project on teachers’ instructional skills. The aim of the present section is to give some examples of the modeling possibilities of the approach presented above. For more information about the context in which the data were collected and the items used, refer to the articles mentioned in the examples. The analyses presented below are not strict replications of the data analyses in those articles: the selection of items is different, and the results should not be used for substantive conclusions. For such purposes, refer to the original three articles. All examples in the sequel were analyzed using both OpenBUGS and JAGS (Plummer, 2017), the latter via the R package runjags. Scripts and data files associated with this article are available on the Open Science Framework (OSF, https://osf.io/knzw9/).

2.1. Example 1: Evaluation of Teachers’ Instructional Skills

In this example, the data from Van der Scheer et al. (2017) are used. In that research project, changes in the instructional skills of 34 teachers participating in an intensive data-based decision-making intervention were evaluated. In this example, we discuss three approaches to the estimation of the IRT item parameters, G- and D-coefficients for various research designs, and the results of a linear model with the latent attribute scores obtained from the GT-IRT model as outcome variables.

2.1.1. Data

The 34 teachers were recorded three times before and three times after the intervention, and all recordings were rated by four independent raters. Instructional skills were measured using the ICALT observation instrument (International Comparative Analysis of Learning and Teaching; Van de Grift, 2007, 2014), consisting of 35 Likert-scale items with four response categories.

2.1.2. Modeling Procedure

The item responses are modeled by the GPCM, so the probability of a response in category $m = 0, \ldots, 3$ on item $k$ by rater $r$ at time point $t$ when judging teacher $j$ is given by Formulas (1) and (2).
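To show how such category probabilities are computed, the sketch below evaluates them for one item, assuming the standard parameterization of the GPCM with a discrimination parameter $\alpha_k$ and category location parameters $\delta_{k1}, \delta_{k2}, \delta_{k3}$, in which the probability of category $m$ is proportional to $\exp\left(\sum_{h=1}^{m} \alpha_k(\theta - \delta_{kh})\right)$ and category 0 has an empty sum. It is meant to mirror Formulas (1) and (2) but is a sketch, not the authors' code; in the GT-IRT model, the argument $\theta$ would be the value $\theta_{jrt}$ for teacher $j$, rater $r$, and time point $t$.

```python
import math


def gpcm_probs(theta, alpha, deltas):
    """Category probabilities P(X = m | theta), m = 0, ..., len(deltas),
    under the generalized partial credit model.

    theta  : latent value (theta_jrt in the GT-IRT model)
    alpha  : item discrimination (positive)
    deltas : category location parameters [delta_1, ..., delta_M]
    """
    # Cumulative sums of alpha * (theta - delta_h); category 0 has an empty sum.
    logits = [0.0]
    for delta in deltas:
        logits.append(logits[-1] + alpha * (theta - delta))
    # Subtract the maximum before exponentiating to avoid overflow.
    top = max(logits)
    weights = [math.exp(z - top) for z in logits]
    total = sum(weights)
    return [w / total for w in weights]


if __name__ == "__main__":
    probs = gpcm_probs(theta=0.5, alpha=1.2, deltas=[-1.0, 0.0, 1.0])
    print([round(p, 3) for p in probs])  # four probabilities summing to 1
```

A quick check of the parameterization: when $\theta = \delta_{km}$, categories $m-1$ and $m$ are equally likely, which is why $\delta_{km}$ can be read as a location on the latent scale.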

The measurements $\theta_{jrt}$ are decomposed into the main effect of the object of measurement (the teachers), the main effects of the measurement facets (raters and time points), and all their interaction effects. The model is given in Formula (3), and its variance decomposition in Formula (4). In this example, $J = 34$, $R = 4$, and $T = 6$. Thus, there were 816 responses to each item ($J \times T \times R = 34 \times 6 \times 4 = 816$). To keep the tables of the present example concise, 10 of the 34 items were randomly chosen for the analyses, yielding $10 \times 816 = 8160$ observed item responses.
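To make the link between a variance decomposition of this kind and the G- and D-coefficients concrete, the Python sketch below computes the relative (G) and absolute (Φ) coefficients for a fully crossed teacher × rater × time-point design, using the textbook generalizability-theory expressions in which each error component is divided by the number of levels of the facets it involves. These expressions are an assumption about how Formula (4) is used and may differ in detail from the article's own definitions; the variance components in the usage lines are hypothetical values, not estimates from these data.

```python
def g_coefficients(var, n_r, n_t):
    """Relative (G) and absolute (Phi) coefficients for a fully crossed
    teacher (j) x rater (r) x time-point (t) design.

    var : dict of variance components with keys
          'j', 'r', 't', 'jr', 'jt', 'rt', 'jrt'
          ('jrt' is the three-way interaction confounded with residual error)
    n_r, n_t : numbers of raters and time points averaged over
    """
    # Relative error: components that interact with the teacher effect.
    rel_err = var["jr"] / n_r + var["jt"] / n_t + var["jrt"] / (n_r * n_t)
    # Absolute error also includes the facet main effects and their interaction.
    abs_err = rel_err + var["r"] / n_r + var["t"] / n_t + var["rt"] / (n_r * n_t)
    g = var["j"] / (var["j"] + rel_err)
    phi = var["j"] / (var["j"] + abs_err)
    return g, phi


if __name__ == "__main__":
    # Hypothetical variance components, for illustration only.
    components = {"j": 0.50, "r": 0.05, "t": 0.03, "jr": 0.10,
                  "jt": 0.15, "rt": 0.02, "jrt": 0.40}
    # D-study: vary the numbers of raters and time points.
    for n_r in (1, 2, 4):
        for n_t in (3, 6):
            g, phi = g_coefficients(components, n_r, n_t)
            print(f"raters={n_r}, time points={n_t}: G={g:.3f}, Phi={phi:.3f}")
```

In a Bayesian analysis, one natural option is to apply such a function to every MCMC draw of the variance components, which yields a posterior distribution, and hence a credible interval, for each coefficient rather than a single point value.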

The first step in the analysis is to establish that the IRT model fits the data. We will not go into detail here, but the items fit the model adequately, as can be inferred from the supplementary information provided with the article of Van der Scheer et al. (2017). Next, three approaches to the estimation of the GT-IRT model are considered.

2.1.3. Results

Concurrent Estimates. To take all uncertainty regarding the model into account, the ideal procedure is to draw all IRT and GT parameters concurrently from their joint posterior distribution. The results of this approach are presented first. However, as this approach proves impractical in many situations, alternatives are presented next.

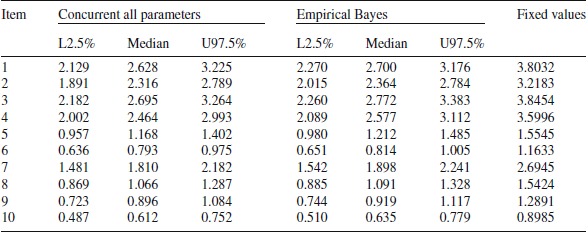

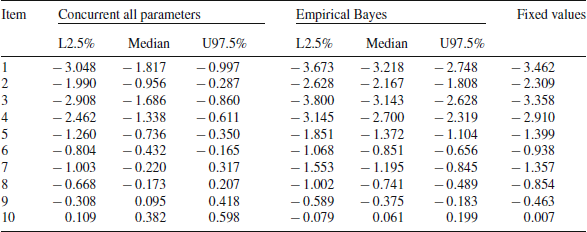

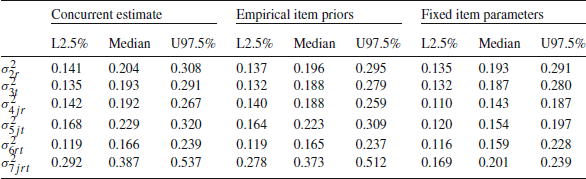

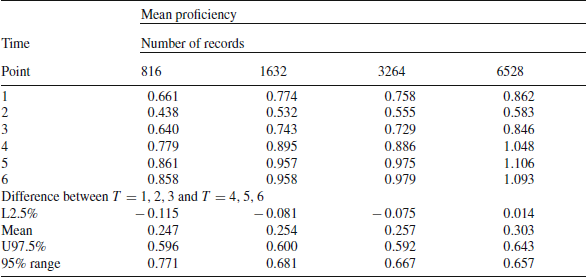

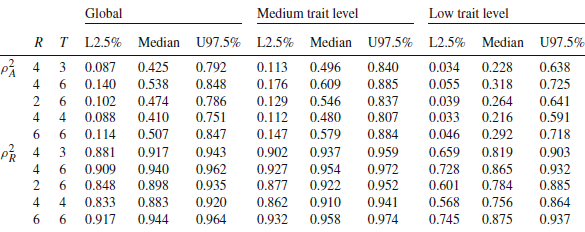

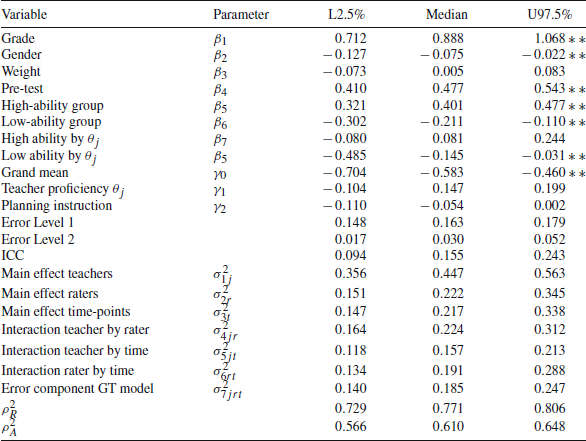

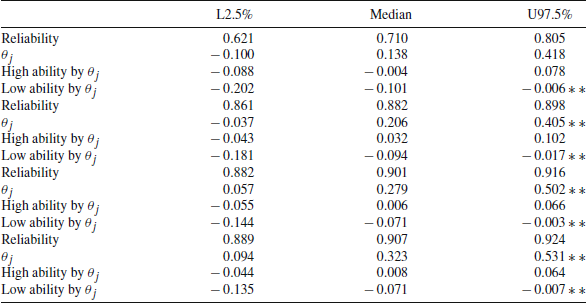

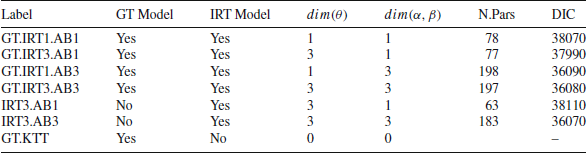

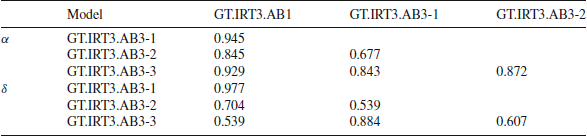

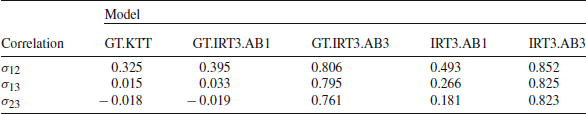

Under the heading “Concurrent all parameters”, Tables 1 and 2 give the estimates of the discrimination parameters (Table 1) and of the average of the three item location parameters $\delta_{k1}$, $\delta_{k2}$, and $\delta_{k3}$ (Table 2). For every item, this average indicates the overall location of the item on the latent scale. The column labeled “Median” gives the median of the MCMC-generated posterior distribution, and the columns labeled “L2.5%” and “U97.5%” give the boundaries of the central 95% credible interval.
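As a sketch of how such summaries can be obtained from MCMC output, the Python code below computes the median and the 2.5% and 97.5% quantiles of a list of draws, using linear interpolation between order statistics (the default rule of R's `quantile()`). For the average location, the sketch assumes the average is computed within each draw before summarizing, so that the interval describes the posterior of the average itself. The draws in the usage lines are simulated stand-ins, not output from the analyses reported here.

```python
import math
import random


def quantile(sorted_draws, p):
    """Quantile with linear interpolation between order statistics
    (type 7, the default in R's quantile())."""
    h = (len(sorted_draws) - 1) * p
    lo = math.floor(h)
    hi = min(lo + 1, len(sorted_draws) - 1)
    return sorted_draws[lo] + (h - lo) * (sorted_draws[hi] - sorted_draws[lo])


def summarize(draws):
    """Median and central 95% credible interval of posterior draws."""
    s = sorted(draws)
    return {"L2.5%": quantile(s, 0.025),
            "Median": quantile(s, 0.5),
            "U97.5%": quantile(s, 0.975)}


def average_location(delta_draws):
    """Per-draw average of an item's location parameters; each element of
    delta_draws is one MCMC draw (delta_k1, delta_k2, delta_k3)."""
    return [sum(d) / len(d) for d in delta_draws]


if __name__ == "__main__":
    random.seed(1)
    # Simulated stand-in for 4000 posterior draws of (delta_k1, delta_k2, delta_k3).
    fake = [(random.gauss(-0.5, 0.2), random.gauss(0.3, 0.2), random.gauss(1.1, 0.2))
            for _ in range(4000)]
    print(summarize(average_location(fake)))
```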