Introduction

Organoids are in vitro models of a particular organ, or developmental stage of an organ, created in the laboratory by the directed growth, differentiation, and self-organization of pluripotent stem cells. One particular type of organoid is the neural organoid, that is, an organoid model of a particular part of the nervous system. In the bioethical literature, specific attention has been paid to neural organoids that model parts of the brain and to the ethical questions raised by the possibility that such organoids may develop sentience or consciousness.Footnote 1 It has been argued by some that this possibility requires precautionary action and regulation of neural organoid research.Footnote 2

In these debates, some authors use the term “sentience” and others use the term “consciousness.” There are many possible differences between these two terms, but we will primarily use the term “sentience” in this paper because it is usually taken to be the more basic term with fewer commitments in relation to the complexity or quality of what is experienced by the entity that has the experience. For the purposes of this paper, we define sentience as having (the ability to have) valenced experiences, and we accept the premise that sentience matters morally. If a particular entity has valenced experiences, then there is a reason to take those experiences into account in ethical evaluations of how that entity should be treated; that is, it gives that entity a certain degree of moral status. If neural organoids were sentient, then they would therefore have that degree of moral status, and if there was genuine uncertainty about the likelihood of sentience in neural organoids, then precautionary action might be pro tanto justified.

Primarily focusing on neural organoids that are not implanted into the central nervous system of a living animal, we will analyze three different lines of argument to justify the possibility that neural organoids are sentient or may develop sentience; that is, arguments based on (1) a foundational philosophical theory, (2) a theory of the relation between brain function and sentience, and (3) analogy with known sentient entities. We will show that, apart from arguments based on constitutive panpsychism or adjacent theories, none of the lines of argument straightforwardly justify the claim that neural organoids have sentience. In addition, we will argue that scientific developments in our ability to create more complex neural organoids are unlikely to lead to the creation of organoids with sentience in the near to medium future, and that precautionary action is, therefore, not required or justified at the present moment. Furthermore, we will demonstrate that precautionary reasoning plays a significant role in all three of the lines of argument, and argue that there is a significant risk of equivocation on the term “it is possible that” between two meanings, that is, “it is not impossible that” and “it is likely that.” We will further argue that precaution in relation to organoid sentience should only start to bite when there is an actual possibility that a type of organoid exhibits sentience, not merely when sentience is a logical possibility.

Neural organoids: The current state of the art and a note on terminology

Because of the restrictions on cell growth and survival caused by the limits of oxygen diffusion through neural tissue, current neural organoids are small spherical structures with a diameter of up to 4 mm, and they will often have a necrotic core. This means that there are limits to how many cells they can contain and how complex their structure can be.

In the scientific and bioethical literature, organoids that model a particular part of the brain are sometimes called “brain organoids,” but this is potentially or perhaps even actually misleading.Footnote 3 No current neural organoid models “the brain,”Footnote 4 and there are no scientific developments on the horizon that would allow the creation of even a very simple brain from stem cells in the laboratory. The use of the term “brain organoids” is particularly problematic in relation to neural organoids made from human stem cells, since there are no conceivable scientific developments outside of the realm of science fiction that would allow scientists to create an organoid brain model of the size and complexity of an adult human brain. Any brain organoid created from human cells will, therefore, be a human “brain organoid” because of its cellular origin and composition, but it will not be a “human brain” organoid in size and complexity.

Evaluating the possibility of sentience

There are three main ways in which to evaluate the possibility of sentience for a particular entity. We can proceed on the basis of: (1) a foundational philosophical theory; (2) a theory of the relation between brain or neural function and sentience; or (3) analogy with known sentient entities. In many cases, we will not be able to establish definitively that an entity is sentient,Footnote 5 but only that it is possible or perhaps even likely that it is. We will, therefore, also need bridging premises in our ethical argument, stating what the implications of possible sentience are, for example, bridging premises about the proper level of precaution. In the following, we will consider the possible sentience of neural organoids using the three approaches outlined, but in reverse order, and, therefore, start with analogy.

Sentience by analogy

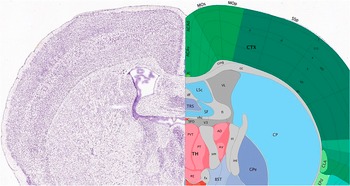

The simplest argument from analogy would be to draw a direct parallel between cortical neural organoids and mammalian brains and to claim that, because we know that mammals are sentient,Footnote 6 we must assume that cortical neural organoids are sentient, or that it is at the very least possible that cortical organoids are sentient. The problem with this argument is that there are many disanalogies between currently realizable cortical neural organoids and even the simplest mammalian brain. The most studied simple mammalian brain is probably the brain of the mouse (Mus musculus) (see Figure 1).Footnote 7 The mouse brain has about 68 million neurons, of which 14 million are in the cortex, and about 34 million other cells with various functions. It also has an extremely complex structure, with different neuron types clustering in particular anatomical regions and sub-regions.Footnote 8 One recent paper estimates the existence of 29 main classes of neurons in the mouse brain, further subdividable into 315 subclasses.Footnote 9 Even mature cortical neural organoids have far fewer neurons and a much simpler structure.

Figure 1. Representative coronal slice of mouse brain with brain regions identified.

Analogical arguments have also been used as the basis for arguments that cephalopod mollusks, decapod crustaceans, and, most recently, insects are probably sentient and can experience pain.Footnote 10 The starting point for these arguments is the assumption that mammals are sentient, and an analogy is then drawn and substantiated by evidence between the mammalian nervous system and mammalian behavior and the nervous system or behavior of the non-mammalian animal.

In terms of nervous system analogies, this may, for instance, be:

1. Nociception. The animal possesses receptors sensitive to noxious (i.e., harmful, damaging) stimuli (nociceptors).

2. Sensory integration. The animal possesses brain regions capable of integrating information from different sensory sources.

3. Integrated nociception. The animal possesses neural pathways connecting the nociceptors to the integrative brain regions.Footnote 11

These analogies, apart from “sensory integration,” fail for the kind of neural organoids that raise prima facie worries about sentience, that is, cortical neural organoids. A cortical neural organoid has no nociceptors (or any other peripheral receptors) and no neural pathways connecting the nociceptors to integrative brain regions. We might, in the future, construct an assembloid incorporating peripheral nociceptors with cortical structures, but given that pain transmission and perception in mammals is extremely complex, that might not be sufficient to fulfil the requirement for integrated nociception.

Another way in which a neural organoid could acquire or be connected to nociceptors is by being implanted into the relevant brain or spinal cord region of a living animal. In this scenario, it might be possible that the organoid would connect in such a way to the nervous system of the animal that the organoid could feel pain and thereby qualify as having sentience. What would be the ethical implications of this? One possible answer is that it would have absolutely no ethical implications. Prior to the implantation, we had one entity that could feel pain (i.e., the living animal), and, after the implantation, we still have only one entity that can feel pain. If the organoid has integrated with the nervous system of the animal, then the organoid is no longer a separate entity that counts in enumerating the number of sentient entities present. A possible counterargument is that the quality of the pain perception could have changed such that the animal is now more sentient or sentient in a way that has more ethical weight. Humans experience pain differently from rats, and a rat with a human neural organoid implant might experience pain in a more “humanised” and thereby normatively important way. Lurking in the background here may be the assumption that if a human neural organoid has experiences, then these experiences will be human, or at least human-like experiences, but that assumption rests on an equivocation on the term “human.”Footnote 12 A human neural organoid is simply an organoid created from human stem cells. We have no good reason to believe that an entity’s being derived from human stem cells in itself contributes to a phenomenologically human way of experiencing the world. The organoid will have a completely different embodiment and will be connected to a completely different nervous system. Whatever phenomenology its experiences have is therefore actually very unlikely to be a human phenomenology.Footnote 13

Another issue is that many of the other indicators that have been advocated in the literature on pain and sentience in non-mammalian species are behavioral indicators, where an animal responds to injury in a way that is best explained by the animal having a negatively valenced experience when injured. From the same paper referenced above,Footnote 14 we, for instance, get the following behavioral indicators:

4. Analgesia. The animal’s behavioural response to a noxious stimulus is modulated by chemical compounds affecting the nervous system in either or both of the following ways: (a) The animal possesses an endogenous neurotransmitter system that modulates (in a way consistent with the experience of pain, distress or harm) its responses to threatened or actual noxious stimuli. (b) Putative local anaesthetics, analgesics (such as opioids), anxiolytics or antidepressants modify an animal’s responses to threatened or actual noxious stimuli in a way consistent with the hypothesis that these compounds attenuate the experience of pain, distress or harm.

5. Motivational trade-offs. The animal shows motivational trade-offs, in which the negative value of a noxious or threatening stimulus is weighed (traded-off) against the positive value of an opportunity for reward, leading to flexible decision-making. Enough flexibility must be shown to indicate centralized, integrative processing of information involving a common measure of value.

6. Flexible self-protection. The animal shows flexible self-protective behaviour (e.g., wound-tending, guarding, grooming, rubbing) of a type likely to involve representing the bodily location of a noxious stimulus.

7. Associative Learning. The animal shows associative learning in which noxious stimuli become associated with neutral stimuli, or in which novel ways of avoiding noxious stimuli are learned through reinforcement. Note: habituation and sensitisation are not sufficient to meet this criterion.

8. Analgesia preference. Animals can show that they value a putative analgesic or anaesthetic when injured in one or more of the following ways: (a) The animal learns to self-administer putative analgesics or anaesthetics when injured. (b) The animal learns to prefer, when injured, a location at which analgesics or anaesthetics can be accessed. (c) The animal prioritises obtaining these compounds over other needs (such as food) when injured.Footnote 15

For current neural organoids, these criteria cannot be applied. Although they have neural activity, they have no behavior. They cannot move and, for that simple reason, cannot demonstrate, for instance, “flexible self-protection.” Organoids are not full organisms.

A further problem occurs in relation to inferences about sentience in neural constructs based on functions or behaviors that have been learnt through externally generated stimuli. The mere fact that a neural construct produces a particular output based on a particular set of inputs, and that the output over time more closely approximates a desired output, does not in itself imply any kind of sentience. Let us, for instance, consider the research behind the paper published in 2022 with the tantalizing title “In vitro neurons learn and exhibit sentience when embodied in a simulated game-world.”Footnote 16 The research showed that two-dimensional networks of both human and mouse neurons could be taught to play the simple computer game Pong when provided with the right input stimulation, depending on whether or not the Pong task had been successfully performed. The authors claim that:

It is proposed that these neural cultures would meet the formal definition of sentience as being ‘responsive to sensory impressions’ through adaptive internal processes.Footnote 17

They reference this formal definition of sentience to a 2020 paper by Karl Friston and colleagues. In the latter paper, we find the following:

Our use of the word ‘sentience’ here is in the sense of ‘responsive to sensory impressions’. It is not used in the philosophy of mind sense; namely, the capacity to perceive or experience subjectively, i.e., phenomenal consciousness, or having ‘qualia’. Sentience here, simply implies the existence of a non-empty subset of systemic states; namely, sensory states.Footnote 18

This is, as clearly stated, not the definition of sentience that can form the basis for a particular ethical worry about how we treat sentient entities. If being sentient is merely being in possession of “sensory states,” then a radiator thermostat or an automatic windscreen wiper system would count as being sentient. They have sensory states and respond (mostly) appropriately to sensory impressions.

Similarly, a neural organoid that learnt to dose itself with analgesia in response to a certain set of inputs would, on this account, not be sentient in the sense of having a negatively valenced pain experience. It would merely respond to a non-experienced “sensory state.”

In itself, function is not an indicator of sentience. Pace any adherents of panpsychism and panprotopsychism (see more below), none of us believe that our current smartphone, which has an almost infinitely higher level of functionality than merely being able to play Pong, is actually sentient, despite having many varied sensory states.

The same point can be made for living systems in general, considering, for instance, the very well-studied phenomenon of bacterial chemotaxis, that is, “… a biased movement of bacteria toward the beneficial chemical gradient or away from a toxic chemical gradient.”Footnote 19 We cannot infer from the fact that phenol attracts Escherichia coli, but repels Salmonella typhimurium, that E. coli bacteria have a positively valenced experience (pleasure?) when they sense the presence of phenol and move along the phenol gradient toward the source, or that Salmonella bacteria experience something like pain in the same situation.

Sentience by neural function

The second line of argument used to support the possibility of sentience in neural organoids turns on a theory of consciousness,Footnote 20 that is, a theoretical framework that identifies explanatory links between neural activity and consciousness, and/or provides indicators for when measurable neural activity indicates the presence of consciousness/conscious experience.Footnote 21 A recent review of the literature identifies 22 distinct theories of consciousness that vary quite widely in relation to the specific explanatory link proposed, the level of philosophical justification (and plausibility?), and current empirical validation of the indicators derived from the theory.Footnote 22 This indicates the first possible issue for this line of argument: measurable neural activity that counts as an indicator of consciousness on one theory may not count as an indicator of consciousness on another, equally plausible theory. Furthermore, there is more than one plausible theory with some empirical validation. The review, for instance, provides a more in-depth description of four of the 22 theories that it lists.Footnote 23

One of the currently most favored theories of consciousness is the Integrated Information Theory (IIT), which provides a metric for the degree to which measurable neural activity indicates consciousness.Footnote 24 On the basis of this theory, Andrea Lavazza and Marcello Massimini,Footnote 25 in a much-cited paper, argue that:

Scientists have created so-called mini-brains as developed as a few-months-old fetus, albeit smaller and with many structural and functional differences. However, cerebral organoids exhibit neural connections and electrical activity, raising the question whether they are or (which is more likely) will one day be somewhat sentient. In principle, this can be measured with some techniques that are already available (the Perturbational Complexity Index, a metric that is directly inspired by the main postulate of the Integrated Information Theory of consciousness), which are used for brain-injured non-communicating patients. If brain organoids were to show a glimpse of sensibility, an ethical discussion on their use in clinical research and practice would be necessary.Footnote 26

They further argue that:

In addition, an entity that has at least a potential for cognitive functions, and thus is in potentia capable of thought, may aspire to be considered a person, since an individual capable of thought will be also capable of rationality—the hallmark of personhood for a number of theories, including the Kantian one. And persons have rights that objects or other forms of life do not have. Such rights, in particular those to life, respect, and autonomy, are generally considered inalienable. People also have dignity, ‘worth beyond value’ in Kant’s words, although this concept is controversial and its application is not often shared. If a brain organoid were endowed with some features, or properties in a philosophical sense, typical of a person or a person in potentia, this would not mean that it is a person in the usual sense of the term, but surely that it could not be treated as a commodity or a means in the Kantian sense.Footnote 27

Let us first note that the first claim about the current developmental stage of neural organoids is simply false. Whereas current organoids may contain cells that are equivalent to fetal or mature brain cells, and may contain structures that, for example, replicate cortical layering of neurons, they are in no way “as developed as [the brain of] a few-months-old fetus” because they lack the overall structure of the fetal brain (see, for instance, the fetal MRI brain atlas).Footnote 28

Second, there is a logically dubious and normatively unjustified inferential shift in Lavazza and Massimini’s argument above from “show[s] a glimpse of sensibility” to “is in potentia capable of thought” and from there to the ethical claim that “surely it could not be treated as a commodity or a means in the Kantian sense.” Anyone who thinks that this argument, as it currently stands, is valid and sound would immediately have to stop treating all mammals and potentially many other animals as commodities or means in the Kantian sense. Nevertheless, it may just be that Lavazza and Massimini’s argument is poorly articulated, failing to make explicit several key bridging premises. If so, there are more sophisticated ways of arguing that a theoretically based metric linking neural activity and consciousness could be used to detect consciousness (or perhaps more likely sentience) in neural organoids (see, for instance, the 2024 paper by Maxence Gaillard, which also explores the IIT and the Perturbational Complexity Index).Footnote 29 On the question of the legitimacy of the IIT, it should also be noted that, in June 2023, the COGITATE consortium reported on its theory-neutral study to evaluate the IIT against another prominent theory of consciousness, the Global Neuronal Workspace Theory (GNWT).Footnote 30 The study failed to confirm its two central hypotheses: (1) that certain forms of consciousness-related neural activity would be observed toward the back of the brain; and (2) that consciousness-related activity would be found in the front of the brain.

In terms of whether an argument for sentience from a theoretically grounded metric or set of metrics might work in principle, one general set of problems about developing and validating such non-introspective “markers” or “signatures” of consciousness has already been pointed out by Seth and Bayne:

To test a theory of consciousness (ToC), we need to be able to reliably detect both consciousness and its absence. At present, experimenters typically rely on a subject’s introspective capacities, either directly or indirectly, to identify their states of consciousness. However, this approach is problematic, for not only is the reliability of introspection questionable, but there are many organisms or systems (for example, infants, individuals with brain damage and non-human animals) who might be conscious but are unable to produce introspective reports. Thus, there is a pressing need to identify non-introspective ‘markers’ or ‘signatures’ of consciousness.

Numerous such indicators have been proposed in recent years. Some of these — such as the perturbational complexity index (PCI)— have been proposed as markers of consciousness as such, whereas others — such as the optokinetic nystagmus response or distinctive bifurcations in neural dynamics — have been proposed as markers of specific kinds of conscious contents. The former have been applied fruitfully to assessing global states of consciousness in individuals with brain injury whereas the latter have been deployed in ‘no-report’ studies of conscious content, in which overt behavioural reports are not made. Whatever its focus, however, any proposed indicator of consciousness must be validated: we need to know that it is both sensitive and specific. Although approaches to validation based on introspection have the problems mentioned above, theory-based approaches are also problematic. Because ToCs are themselves contentious, it seems unlikely that appealing to theory-based considerations could provide the kind of intersubjective validation required for an objective marker of consciousness. Solving the measurement problem thus seems to require a method of validation that is based neither solely on introspection nor on theoretical considerations. The literature contains a number of proposals for addressing this problem, but none is uncontroversial.Footnote 31

The general problem identified here is that in order to validate a metric or an indicator for consciousness, we need to be able to state confidently whether a specific organism or part of an organism is currently conscious. We cannot assess whether an indicator of consciousness is “both sensitive and specific” unless we know what the ground truth is, for example, whether a negative indication is a “true negative” or a “false negative.” We may be able to establish this ground truth for the cases at either end of the scale from “definitely conscious” to “definitely not conscious.” But what we need in the organoid case is an indicator that is both sensitive and specific for the middle range, where there is uncertainty. Otherwise, we will either under- or overestimate the possibility of the current presence of consciousness.
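The dependence on ground truth can be made concrete with a minimal sketch. It assumes a purely hypothetical set of cases and marker readings, invented for illustration and not taken from any study: sensitivity and specificity are simple ratios when the true state is known, and they cannot be computed at all for cases where it is not.

```python
from typing import Optional


def sensitivity_specificity(
    truth: list[Optional[bool]], indicator: list[bool]
) -> tuple[Optional[float], Optional[float]]:
    """Return (sensitivity, specificity) using only cases with known ground truth.

    truth[i] is True (conscious), False (not conscious), or None (unknown).
    indicator[i] is True when the hypothetical marker reports consciousness.
    """
    tp = fn = tn = fp = 0
    for known_state, reading in zip(truth, indicator):
        if known_state is None:
            continue  # no ground truth: this case cannot help validate the marker
        if known_state and reading:
            tp += 1  # true positive
        elif known_state and not reading:
            fn += 1  # false negative
        elif not known_state and not reading:
            tn += 1  # true negative
        else:
            fp += 1  # false positive
    sensitivity = tp / (tp + fn) if (tp + fn) else None
    specificity = tn / (tn + fp) if (tn + fp) else None
    return sensitivity, specificity


# Hypothetical cases at the clear ends of the scale (ground truth assumed known).
clear_truth = [True, True, True, True, False, False, False, False]
clear_marker = [True, True, True, False, False, False, False, True]

# Hypothetical "middle range" cases, such as organoids (ground truth unknown).
uncertain_truth = [None, None, None]
uncertain_marker = [True, False, True]

clear_result = sensitivity_specificity(clear_truth, clear_marker)
uncertain_result = sensitivity_specificity(uncertain_truth, uncertain_marker)
print(clear_result, uncertain_result)
```

In this toy setting, the marker can be scored on the clear cases, but for the uncertain cases both values are undefined, which is the situation described above for the middle range.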

A second set of problems is that many of the most prominent or popular theories of consciousness either explicitly or implicitly presuppose a brain with functional differentiation between different regions and structures, as, for instance, shown in the ongoing dispute over the role of the prefrontal cortex in conscious experience,Footnote 32 or the discussions about exactly why the cerebellum is not implicated in consciousness.Footnote 33 The problem that arises here is that neural organoids are not structured in this way. They, for instance, do not have a prefrontal cortex with subcortical connections to an occipital cortex and a hippocampus. Whatever role functional and structural differentiation plays in the generation of consciousness in the mammalian brain, organoids do not have it. This does not mean that it is impossible for a neural organoid to be conscious or sentient, but just that indicators for consciousness developed for and validated on highly structured brains may not be good or valid indicators for consciousness in an organoid.

Taken together, all the issues identified indicate that although we have a number of contenders for a theory of consciousness linking neural activity to consciousness, we have no valid ways of measuring or estimating whether the measurable neural activity in an organoid is an indication of consciousness or sentience.

Sentience by philosophical theory

The philosophical theories of consciousness or sentience that offer the most direct support to the possibility of sentience in neural organoids are panpsychism and its newer variant, panprotopsychism.Footnote 34 The most commonly held panpsychist view is what Goff calls “constitutive panpsychism”:

Constitutive panpsychism – At least some fundamental material entities are conscious; facts about human and animal consciousness are grounded in facts about the consciousness of their fundamental material parts.Footnote 35

Much here hinges on the precise exegesis of “fundamental material entities,” but let us, for the sake of argument, assume that some kind of neuronal panpsychism is true, and that the fundamental material entities that are conscious (or protoconscious) are individual neurons. The only conscious entities we have certain knowledge of seem to generate their consciousness through complex neural networks, and for the question of consciousness in neural organoids, neuronal panpsychism would practically and in its implications incorporate most variants of panpsychism that ascribe consciousness to simpler material entities than neurons. Accepting neuronal panpsychism leads to the result, by definition, that neural organoids are conscious, because they contain material entities that are conscious.

Does that matter ethically? This again seems to hinge on how precaution should be applied. The consciousness that a single neuron possibly has would seemingly be fairly simple, and even if that scales up significantly in an organoid, we still have to decide to what degree that consciousness is ethically cognizable. In order to do that, we have to be able to estimate the presence, absence, and “quality” of those features of consciousness in an organoid that are potentially ethically important. We cannot get that knowledge simply by looking at the number of neurons or the complexity of connections. It is generally agreed that the cerebellum is not conscious, but the human cerebellum has many more neurons than the brains of small mammals. The requirement of some degree of knowledge about the consciousness present in the organoid will then require arguments that are very similar to those discussed above, that is, either arguments by analogy to structures known to be conscious (i.e., animals and animal brains) or arguments very similar to those relating to a theory of consciousness.

The role of precaution

As has become evident above, the ethical arguments relating to consciousness in organoids rely on empirical premises about the absence or presence of sentience, and the “quality” of that sentience. Few dispute that sentience morally matters. Further, few would disagree that if we had incontrovertible evidence of valenced experiences in organoids, then this should entitle them to the same kind or degree of moral status as other entities that have similar valenced experiences. Nevertheless, on all of the three main lines of argument we have analyzed above, it is clear that we need a premise bridging from uncertain or inconsistent evidence of sentience to ethically justified action guidance.

The precautionary principle has been invoked as such a bridging premise.Footnote 36 It often takes the form of something like “if it is possible that neural organoids are sentient, then act as if they are.” However, the force of this conditional rests on three distinct propositions: that organoid sentience is a credible empirical possibility, that such a possibility grounds at least a prima facie duty to prevent harm, and that this duty already warrants limiting current research. Mere logical conceivability is insufficient; only a non-trivial evidential possibility, backed by reproducible data, should activate precaution. At present, cortical organoids lack nociceptors, peripheral inputs, and large-scale functional architecture, all standard “warning signs” in animal-sentience debates.Footnote 37 Further, from a neural function perspective, until neural organoids exhibit electrophysiological patterns that at least one well-supported theory of consciousness links to valenced experience, the epistemic case for strong precaution, in the sense exhibited by the above conditional, remains weak.

Even when that threshold is crossed, it is questionable whether the precautionary principle is binary. On the one hand, Sawai et al. urge that,Footnote 38 given persistent uncertainty, human cerebral organoids be treated as if conscious, a stance extended by Niikawa and colleagues in recommending default animal-welfare coverage.Footnote 39 On the other hand, Birch and Browning defend a proportionate model in which protective measures scale with both the gravity of potential welfare harm and evidential strength.Footnote 40 A defensible middle path would introduce graduated safeguards (routine welfare monitoring, independent ethics review, and preregistration) while reserving moratoria or animal- or person-like rights for the point at which organoids satisfy, à la Seth and Bayne,Footnote 41 a clearly specified and sensitive evidential threshold.

Furthermore, precaution also entails opportunity costs. Sunstein warns that over-precaution can paralyze innovation,Footnote 42 and Koplin and Savulescu note that unduly restrictive rules could delay therapies for neurological disorders.Footnote 43 A legitimate and normatively satisfactory precautionary approach must therefore weigh two uncertainties: the moral risk of harming a possibly sentient organoid and the social risk of forgoing scientific and therapeutic benefits. Decision-theory tools, such as expected moral value and option value, can help to make those trade-offs explicit.Footnote 44
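As a rough illustration of how such a trade-off could be made explicit, the following sketch compares two policies under a deliberately simplified expected-value model. The model itself, the function names, and all numbers are hypothetical assumptions introduced here for illustration; they are not estimates of actual harms, benefits, or probabilities, and option value is not modeled.

```python
def expected_cost(
    p_sentient: float,
    harm_if_sentient: float,
    benefit_forgone: float,
    restrict: bool,
) -> float:
    """Expected moral cost of a policy, in arbitrary hypothetical units."""
    if restrict:
        # Restricting research avoids the possible harm but forgoes the benefit.
        return benefit_forgone
    # Proceeding risks harm only if the organoid is in fact sentient.
    return p_sentient * harm_if_sentient


def break_even_probability(harm_if_sentient: float, benefit_forgone: float) -> float:
    """Probability of sentience above which restriction has the lower expected cost."""
    return benefit_forgone / harm_if_sentient


# Hypothetical inputs: harm of 100 units if sentient, 5 units of benefit forgone.
harm, benefit = 100.0, 5.0
threshold = break_even_probability(harm, benefit)

for p in (0.001, 0.2):
    proceed_cost = expected_cost(p, harm, benefit, restrict=False)
    restrict_cost = expected_cost(p, harm, benefit, restrict=True)
    better = "restrict" if restrict_cost < proceed_cost else "proceed"
    print(f"p={p}: proceed={proceed_cost:.2f}, restrict={restrict_cost:.2f} -> {better}")
```

On these assumptions, the policy with the lower expected cost depends on whether the probability of sentience lies above or below the break-even threshold, so the disagreement is relocated to the evidential question of what that probability is.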

To give the precautionary principle practical bite in neural organoid contexts without sliding into alarmism or complacency, precautionary duties should, arguably, intensify only when structural or functional “red flags”—for example, integrated thalamocortical circuitry or sustained, stimulus-dependent neural dynamics exceeding accepted perturbational-complexity thresholds—converge with predictions from more than one theory of consciousness.Footnote 45 At that juncture, the burden of justification shifts to researchers, who may be required to meet animal-level welfare standards, avoid painful manipulations, or even pause a program. Until such evidence accrues, current neural organoids remain too rudimentary to justify precautionary or even proportionate regulation.

Conclusion

The practical conclusion of the analyses above is that there is no current need for precautionary action or regulation of neural organoid research based on the possibility that such organoids may be sentient. Current neural organoids are extremely unlikely to be sentient, and this assessment also holds for new neural organoids that can be created through likely near- or medium-term scientific developments. This claim is obviously contingent on the current state of research and likely developments, but we believe it to be fully justified at the time of writing. Even the currently most advanced neural assembloids contain far fewer neurons than any mammalian brain, and they do not contain all of the elements necessary for perception or sentience.Footnote 46

Perhaps the more interesting and philosophically relevant conclusion is that analyzing the possibility of sentience in neural organoids actualizes many of the same questions that are relevant to the ongoing and increasingly sophisticated discussions of the possibility of sentience in non-mammalian animals.

Finally, our analysis has shown that employing precautionary reasoning in relation to sentience in neural organoids and other entities requires much more specificity in relation to when precaution should start to bite. That is, the proponent of a precautionary argument must specify what kind of sentience the argument refers to, and the quality and strength of evidence for that kind of sentience that is required for “it is possible that” to be made out.