1. Introduction and background

The visual representation of musical works follows multiple conventions across the world and across musical scenes. These conventions have been used and improved over many years, adapting to the musical needs of their times. They range from signs such as neumes, noteheads, tone clusters or Sprechstimme notation (Brown 1986: 188; Gaare 1997; Byron 2006: 6; Strayer 2013: 1) that represent an expected or approximated tone, through graphics and text that provoke musical responses (Cage 1961: 35–40; Schröder 2011; Bogue 2014: 10) and notation that indicates how to interact with new technology designed to make music in novel ways (Burtner 2003; Hewitt 2012; Mays and Faber 2014; Huberth and Nanou 2016), to notation that focuses on performative actions (Kojs 2011; Santini 2020), real-time interactivity (Yoder 2010: 9–16; Shafer 2016) or notation as a composition and improvisation tool (Jennings 2005; Spronck 2016: 52–55, 2016; Mauro 2024).

1.1. Notation adaptability

Music notation requires different symbols and conventions depending on the musical ideas it is intended to portray, as well as on its target readers and performers. While traditional music notation is perfectly adequate for a trained classical musician, its notational complexities may limit accessibility for amateur musicians (Stenberg and Cross 2019), who might prefer a notation that tells them which specific keys or strings to play, as in the case of tablatures (Gaare 1997: 18). Moreover, as new technology becomes integrated into music making, new notation systems must be developed to accommodate the novel capabilities these technologies provide. In many cases, previous music writing conventions are combined with the new notation symbols or systems (Hewitt 2012; Kretz 2012; Kim-Boyle 2014). This hybridity of notational approaches is often employed by composers working with acoustic instruments plus electronics, as both the traditional performative practices and interactions with the new technology are necessary, as exemplified by Matthew Burtner’s Noisegate 67 or compositions using the SAMPO (Burtner 2002; Portovedo et al. 2017; Sampo 2024).

The performer working with a technologically augmented acoustic instrument must control both acoustic and non-acoustic sound. The latter is usually synthesised, recorded or produced by actuators. Although actuators ultimately produce acoustic sounds, their impetus comes from some form of interaction with electronic components and, for example, the conversion of electrical to mechanical energy (Kapur et al. 2007; McPherson and Kim 2010). While the visual representation of the desired acoustic sound is usually solved by using conventional western music notation, the synthesis usually requires new symbols, descriptive text, graphics or other elements that portray the sound-image intended by the composer.

1.2. Different kinds of notation strategies

There are countless ways to approach representation; nevertheless, they can be grouped into the following categories, based on what the notation describes:

- The expected sound, specifying pitch, duration and, to some degree, timbre, for example, traditional western notation;
- How to interact with a musical instrument, such as in the case of action-based notation for some of Helmut Lachenmann and Mauricio Kagel’s compositions;
- Actions to be followed, sometimes regardless of the expected sound or instruments used, for example, indeterminacy in John Cage;
- A musical path to follow using certain conventions, as is usual in musical scenes such as Jazz and Gamelan music; or,
- A suggestion that stimulates musical outcomes (normally through graphics), often relying on cultural background, visual and spatial conventions, Gestalt principles found between the notated elements, etc.

Although we can separate notational strategies into these categories, this does not mean that they are opposed to each other; in fact, they are regularly used together. Scores written for augmented instruments benefit from using a combination of notational approaches. In Noisegate 67 for augmented saxophone, Matthew Burtner combines traditional notation with extra staves populated with graphic notation that represents the way in which the performer must interact with force-sensing resistors mounted on each key of the saxophone. This interaction is then used with a mapping system that allows the performer to control filters in a noise generator (Burtner 2002). In Seth Cluett’s A state of mutual tension, a wind ensemble is augmented by mounting loudspeakers on 3D printed extensions. The sound of each instrument can be rerouted into any of the loudspeakers, shifting the perception of each instrument’s sound. In this case, the routing indications are described in the score using text (Cluett 2018). In Tod Machover’s seminal work, Begin Again Again… for hypercello, a staff on the score indicates how to interact with the hypercello’s sensors. The symbols used represent musical gestures that are automatically generated by the electronics, using a notation that resembles traditional western music notation. The ‘electronics’ staff also features text indications for performative actions and improvisation guidelines (Machover 2011).

1.3. The problem with scores today

In the western musical tradition, notation conventions were slowly adopted over many years, along with the development of musical instruments, performing techniques and musical needs. Nevertheless, modern musical practices require new notational strategies, and conventions for them are yet to be established. For instance, in his doctoral thesis, Christian Dimpker quotes Ertuğrul Sevsay’s statement that there is no conventional method of notation for playing strings behind the bridge. Dimpker then presents examples of this technique represented by different composers using similar strategies, mostly including X-shaped noteheads but with the addition of an extra symbol or verbal (written) instruction (Dimpker 2012: 98–102).

Some musical traditions and new approaches to making music require a completely different notational approach. For instance, for Javanese Gamelan music, which is traditionally learned aurally, many notation techniques have been proposed, of which cipher notation is the most commonly used. This notation uses numbers to represent pitches, while rhythmic figures use lines on top of the numbers in a similar manner to beams in traditional western score notation. However, the parts are not always written, as each instrument covers a specific function within the music, realising rhythmic or melodic patterns that do not need to be written once they are learned. Cipher notation is, to some degree, just a teaching device, and the aural tradition still persists (Sumarsam 2023). On the other hand, works relying on technology often need technologically mediated scores, such as Cat Hope’s animated graphic notation and the potential of this approach (Hope 2017, 2020, 2025); code-based scores, such as those used with SuperCollider, Faust, ChucK and many others; networked music, as is often the case in compositions for laptop orchestras (Hewitt 2012); or shared sonic environments and collaborative composition systems (Barbosa 2003, 2005). One can argue that for these examples conventions are established, but they are unique to each platform or programming language.

Additionally, in the field of digital musical interfaces, many approaches to representing music made using augmented instruments have been explored but, as in the previously discussed examples, no conventions have been established. This is probably because there are no established conventions for the physical design, sonic capabilities and modes of interaction of augmented instruments. The use of multiple musical representation and communication methods today, lacking conventions for technologically mediated music, resembles the oral tradition that preceded western musical notation, in which ‘early-notated works were typically not effective representations of the orally produced music’ (Patterson 2015: 37).

Furthermore, western notational practices have historically been tied to musical instruments, and the way in which music is perceived has been determined by notational choices (Gaare 1997). These historical precedents should make us reflect on the future of representation for mixed electronic/acoustic music. Establishing conventions for portraying sound-images through representation might help unify and strengthen visions for technologically enhanced instruments and music in the coming years. In the first instance, it might be necessary to understand the score as an element of the musical work rather than a mere representation tool, as has become more evident with new interactive, reactive and generative scores.

Before new electronic and digital technologies entered the western musical tradition, the musical score had historically been the main way to represent musical works. While it is true that early forms of musical notation were insufficient without the accompaniment of oral demonstrations, the evolution from neumes (9th century) to modern notation helped confer on the notated score a great deal of independence from the oral transmission of music. ‘For the first time in the West, [after Guido d’Arezzo’s introduction of the staff] the pitches of a melody could be transmitted without the aid of oral tradition. Musicians could learn new songs without hearing them first’ (Strayer 2013).

However, the notated score alone was not considered to be the musical work (Goehr 2007: 176–204), since the idea of ‘a fixed creation independently of its many possible performances had no regulative force in a practice that demanded adaptable and functional music, and which allowed an open interchange of musical material’ (Goehr 2007: 185). Music compositions were considered finished works only after most notation conventions had been established in the 1800s, when notation became central in establishing the reputation of composers. Lydia Goehr argues that it was then that the idea of the musical work as a ‘composer’s unique, objectified expression, a public and permanently existing artifact made up of musical elements’ appeared (Goehr 1989). With the acknowledgment of the composer’s creative work – as understood under E.T.A. Hoffmann’s Werktreue concept – locked and fixed, representation through notation became central to the history of western music as well as to performative practices.

The musical work is intimately linked to its notated representation and to how these symbols relate to the performer. In light of current musical practices, such as the use of technology as a strong component of music making, we believe that a modern work-concept – the way in which we recognise the essence of the work – should consider these two elements, notation and performance, as fundamental to the musical work. Additionally, the medium – instruments, technology, interactive systems, etc. – should also be part of the ontology of that work-concept.

In the following sections, we discuss a theoretical framework through which the score can be viewed as an essential component of the musical work. This framework allows the many notational approaches that have been used, particularly but not exclusively in new trends of technologically mediated music, to become active elements of the musical work. We focus on a case study that presents the notation strategies for a string-instrument augmentation system, aiming to address the need for new notational conventions for augmented instruments.

2. Conceptual framework and case study

A new work-concept model, presented in Ramos Flores (2021), establishes the equal importance of three elements in the ontology of the musical work: Score, Performance and Medium. This tripartite model is built upon the ideas of Jean Molino and Jean-Jacques Nattiez.

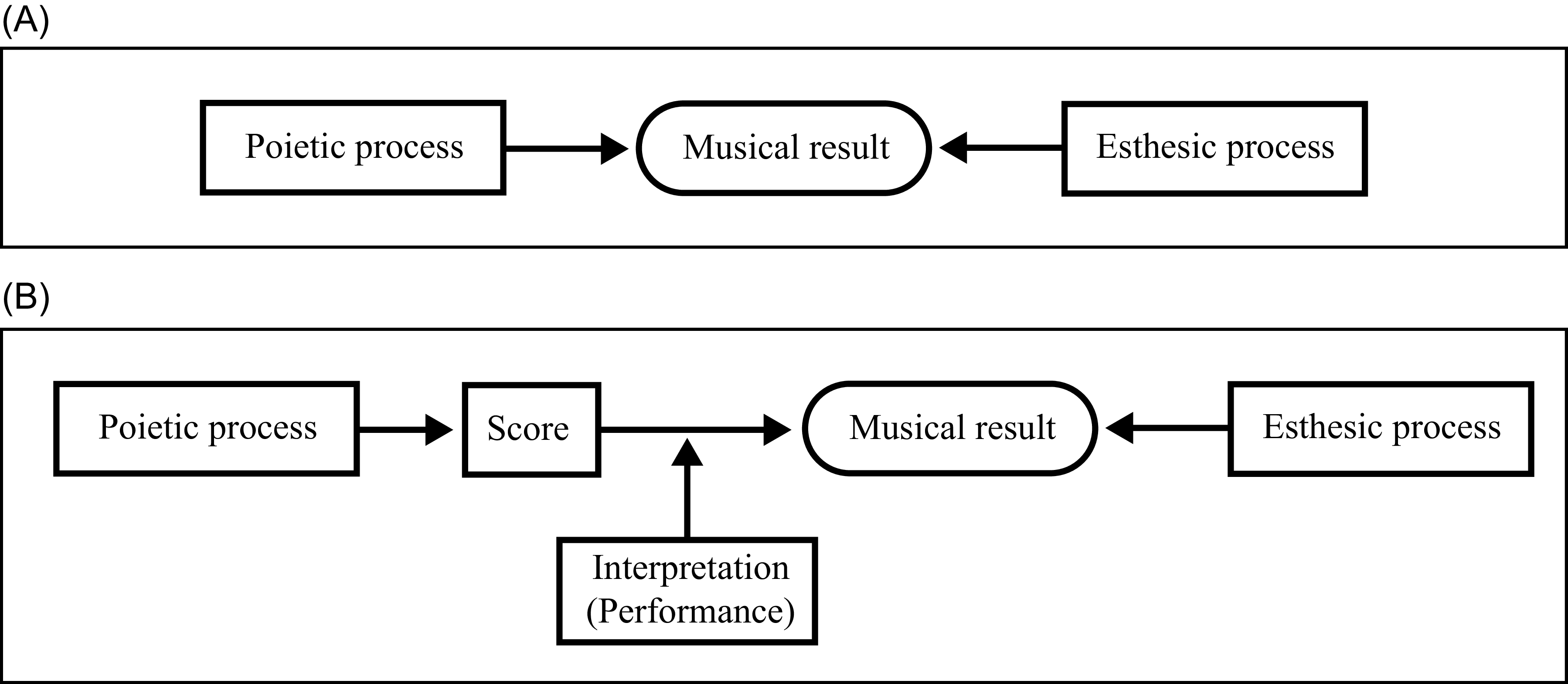

Jean Molino argues that the musical work is not an intermediary in a unidirectional process between composer and listener, and that it is not just a message but a symbolic form (a piano sonata, for instance) that results from the process of creation (poietic) as it encounters the process of reception (esthesic) (Nattiez 1990: 17). Nattiez takes this model and inserts the score and performance in the path of the poietic process, acknowledging the importance of both in the creation of the work (Nattiez 1990: 73) (see Figure 1).

Figure 1. (A) Molino’s model and (B) Nattiez’s model.

In our model, we integrate the poietic process along with the score and interpretation, and we add a new component, the medium. The esthesic process is not eliminated, but it is integrated in the score and performance. We redefine the terms score, performance and medium, as explained in the next section.

2.1. The tripartite model: a new work-concept for current musical practices

The tripartite model has been devised because the ways in which we make music are changing, and new approaches are shifting the focus away from previous paradigms: we can create music directly on a computer manipulating recorded sounds or through multiple electronic music production techniques, networking smart systems, feeding information into A.I. systems, manipulating electronics (circuit bending) or re-programming old videogame hardware (chiptune), amongst many other possibilities. As new ways of imagining, designing, building, making and listening to music arise – including making music for/with augmented instruments – we find previous models inadequate. Preceding models neglect acoustic phenomena and materiality of musical works, preventing us from recognising and embracing music-making’s physicality and the curation of actions and mediums that produce sound as part of the creative process and the work itself. We see the need to consider the imagination and effort it takes to create the systems that allow us to create music in novel ways as part of the musical work.

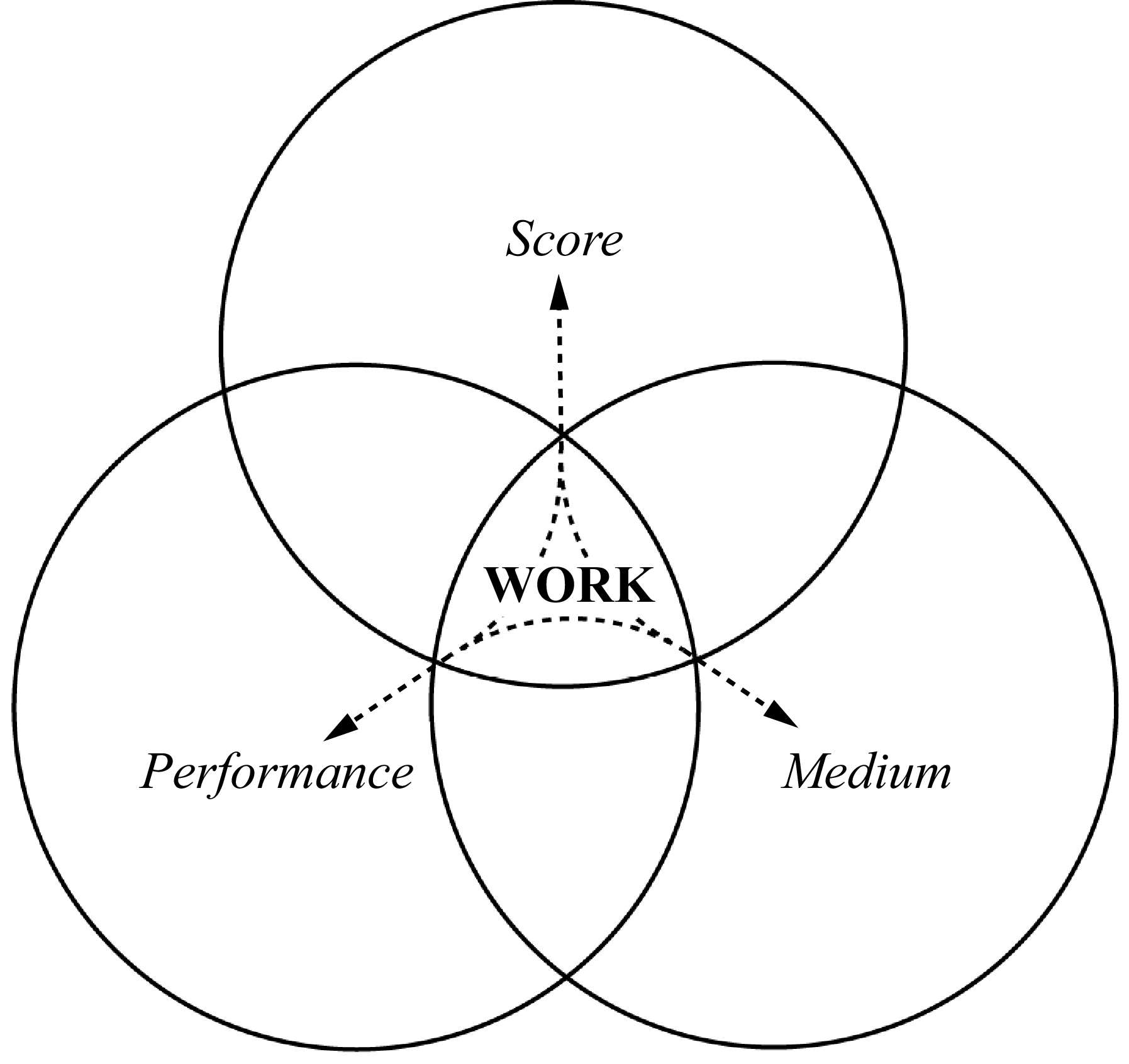

Our model integrates three components: the *score*, the *performance* and the *medium* (see Figure 2). It should be noted that these terms are set in italics to prevent confusion with the original terms, which do not imply what is described below. While other words could be used, we decided to use these ones to stay close to the original meanings of the words and expand them.

Figure 2. Tripartite model. The interactions flow in any direction.

The *score* is the sound-image the composer initially imagines. The *score* represents the composer’s design, both in its dynamic (the creative act) and static states (the product). While the score (non-italics) is the representation, the *score* (what is represented) is the essence of the work. In a piano sonata, the score is the notation of the composition, while the *score* is the structure, the harmonic progression, the melodies, articulations and every other musical aspect, as well as the interactions between all of these elements. The *score* is the mental image of the music, regardless of whether it is created by an individual or as a result of a collaborative process, and independently of the compositional techniques, from the traditional approach of imagining sounds or sitting at the piano, to designing processes that determine musical aspects – such as an algorithm (Xenakis 1956; Mozart 1793; Shapiro and Huber 2021), the computation of information fed into a machine learning system (Dubnov and others 2003) or the process of audio degradation by recording, reproducing and re-recording multiple times in Alvin Lucier’s I Am Sitting in a Room.

The *performance* comprises three different stages. The first is the virtual performance that the composer imagines while designing the work. This is rooted in the composer’s experience as a performer, or their knowledge of the instruments and how performers would interact with them. The second stage is a collaboration with performers, in which changes occur to the work. Note that the first and second stages are often blurred into one, as the composer can be a skilled performer, or might be working with an alternative music-making system such as programming a composition to be performed by a computer. The final stage is the actual performance of the work, whether live, recorded or synthetically produced. For a piano sonata, the three stages are (1) the composer’s previous knowledge and exploration of the instrument, as well as how the imagined composition would feel under the hands of the performer and sound with one or another piano or acoustic space, including the composer playing the in-progress composition; (2) workshopping the composition with a pianist; and (3) the performance in a real concert, where the acoustics of the room, the audience and the performer’s physical and mental state influence the music as it is transported from the abstract to the real world. Meanwhile, for a composition encoded in software, for instance in SuperCollider code, the stages are (1) designing the overall structure of the code, making correct use of the programming language; (2) running the code and listening to the result in order to make appropriate changes or debug; and (3) running the code and performing in front of a live audience.

The *medium* represents the materiality of sound – the physical and acoustic qualities of the sound – and the physical object that produces the sound. It is normally determined by an instrument. This could be a musical instrument (acoustic or electronic), the human voice, a synthesiser, software, a household object or any other body or system (such as production software or even a full set of studio equipment) used to produce or modify sound. Under specific circumstances, the *medium* can also be found in the qualities of the acoustic space in which the work is being performed, which can also be a core element of the composition; for instance, the use of large reverberant spaces in the works of Oliveros, Dempster and Panaiotis – the Deep Listening Band (Oliveros 1995).

The presented model implies that the notated score is now embedded in the *score* as an integral component of the work. This means that it now shares the same space as the composer in the creative process: it is a tool that represents the work and becomes the mould that the composer shapes as they listen back to it. This does not imply that the score shares authorship, but it is recognised as an influential actor in the poietic and esthesic acts.

2.2. The score in the *score*

In the Introduction section, we argued that notation can be catalogued under five different groups. In this section, we discuss how those groups fit in the tripartite model.

Scores that describe the expected sound – with a certain degree of specificity – are usually those that follow traditional western notation or similar strategies. Their influence on the musical work lies in the strong tradition that suggests specific traditional outcomes. Noise control, for instance, is hard to represent using this notation style. Consequently, adhering to this notational style limits musicians’ experimentation with noise and non-traditional sounds, as new symbols (usually graphics) would be necessary. In this way, notation works as a limiting space that shapes the final sound of the work, as suggested by the tripartite model.

Traditional notation can also affect the way we perceive and approach music. Gaare states that a seven-note diatonic scale notation system makes it difficult to read music that requires multiple accidentals, causing some performers to perceive compositions as easy or difficult depending on the accidentals used. This causes stress that hinders the performance, shaping the final work (Gaare 1997).

Traditional notation has not adapted quickly enough to provide the necessary indications of how to perform some compositions that involve new technologies. Cléo Palacio-Quintin’s hyper-flute, for instance, can detect light changes and use the acquired data to control synthesis. According to Palacio-Quintin (2003), the light sensor is ‘positioned at the headjoint, and is designed to be used in conjunction with stage lighting effects’. This suggests that she had a mental image of the sounds and music she could produce using the photosensor by means of well-planned performative choreographies. Nevertheless, if she – or anybody using a similar technique with any other augmented instrument or controller relying on photosensors – wanted to notate the expected sound resulting from these choreographies, they would find traditional notation inadequate. To be useful to performers wanting to reproduce the intended sounds, the notation would probably have to describe the choreographies and the lighting effects together with the expected sound. This would involve the performance and medium of the tripartite model.

Scores that describe how we interact with a musical instrument have proved to be very effective in exploring instruments to produce new sounds. In Yuunohui, for violin, Julio Estrada notates pitches on a regular staff, while also implementing extra staff-like systems that describe how the bow should be played throughout the piece (Estrada 1989).

Juraj Kojs states that, due to the physicality of music making, notational systems such as tablatures ‘reflect the intimate relationships between the instrument maker, composer, performer and notation, specific to a particular instrument and locality’ (Apel 1953; Kojs 2011). New interfaces often require these kinds of scores. Depending on their design, they might need entirely new symbols that nevertheless act in a similar way to tablatures, except that instead of describing which part of the instrument to interact with, they describe physical gestures to be performed over the body of the interface, for instance rubbing the hand over a multitouch sensor as if drawing a specific shape (Enström and others 2015).

In the tripartite model, the score brings the performance and medium together in a unique way. The performance becomes the exploration of the medium, as the score offers a guide to how we can interact with the medium.

Scores that describe actions to be followed usually put less importance on the medium. The score is usually concerned with ideas of order and ‘suggests the presence of a man rather than the presence of a sound’ (Cage 1961: 37). In other words, the score focuses on the actions that performers must follow, such as in Cage’s Song Books, where the score suggests to the performers what to do but not specific sounds. This does not mean that the composer does not have a mental image of the sounds, but the focus of the score is on how the actions configure a musical outcome.

Sounds resulting from these scores depend mostly on the choice of medium, as the actions proposed by the score may produce any sonic result. In fact, thanks to new technologies, the score and medium sometimes act as one, especially in the case of interactive compositions. In Look Out, a 2020 composition by the first author of this paper for augmented saxophone and undefined ensemble, the score offers an improvisatory framework. The software working with the augmented saxophone selects passages of indeterminate nature, previously defined by the composer. Pitches (harmonic sequences) are suggested based on audio analysis of the saxophone, but graphic notation, performative actions and melodic contours are selected by the system and displayed on screens for the performers. This interactive score offers a musical structure and suggests musical gestures that unify the ensemble in a specific direction; however, sound and performative actions are left to be chosen by the performers (Ramos Flores 2021: 159).

Some musical traditions require scores that present a musical path to follow using certain conventions. Jazz lead-sheets present a melody, harmonic framework, time and dynamic indications. Nevertheless, the ‘how’ is expected to be known and realised by the performers. Here, again, we have a case where the medium comes last, and the score is a guideline of the composition. However, since some conventions on how to follow the score are expected, the score tends to have a greater importance. Often, part of the work of the composer is to select performers with a specific sound palette and skills to perform the compositions in order to approximate the desired score.

Tony Roe has been working with his jazz band Tin Men and the Telephone using a smartphone app that allows the audience to participate actively in the concerts. The audience interacts with the app by swiping, texting and shaking their smartphones, and the app generates the score and displays it on a screen that shows ‘beats, melodies and harmonies that are being performed by the band on the spot’ (2016).

Finally, graphic notation and other open scores offer a suggestion that stimulates musical outcomes. Composers using this notation style often appeal to certain conventions such as timelines, high and low positions that represent pitch, and sizes and shapes that suggest loudness or spectromorphological developments. At other times, the images appeal to a shared cultural background and other life experiences such as the perception of nature, physics and human behaviour. When this kind of notation is used, the creative flow in the tripartite model allows for the score to blur the role of the score, letting the creative impetus move more freely between the medium and the performance.

Xenakis’ UPIC system is the seminal interface that translated graphic notation into sonic outcomes, either by using the drawn lines as a wavetable in the audio process or as a control signal. In other words, each line had the capacity to be remapped to transform audio in multiple ways (Marino and others 1993; Di Scipio 2023).
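The two readings of a drawn line mentioned above can be illustrated with a minimal Python sketch. It is not a description of how the UPIC itself was implemented; the function names, the representation of a line as a list of (x, y) points sorted by strictly increasing x, and the use of linear interpolation are all assumptions made for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position of a point on the drawn line


def sample_line(points: List[Point], x: float) -> float:
    """Linearly interpolate the drawn line at horizontal position x.

    Assumes `points` is sorted by strictly increasing x (hypothetical format).
    """
    if x <= points[0][0]:
        return points[0][1]
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= x <= x1:
            return y0 + (y1 - y0) * (x - x0) / (x1 - x0)
    return points[-1][1]


def to_wavetable(points: List[Point], size: int) -> List[float]:
    """Read the line as one waveform cycle made of `size` evenly spaced samples."""
    x_min, x_max = points[0][0], points[-1][0]
    step = (x_max - x_min) / size
    return [sample_line(points, x_min + i * step) for i in range(size)]


def to_control(points: List[Point], t: float, duration: float) -> float:
    """Read the same line as a control signal stretched over `duration` seconds."""
    x_min, x_max = points[0][0], points[-1][0]
    position = min(max(t / duration, 0.0), 1.0)  # clamp to the drawn range
    return sample_line(points, x_min + (x_max - x_min) * position)


# A triangle-shaped line read both ways.
triangle = [(0.0, 0.0), (1.0, 1.0), (2.0, 0.0)]
wavetable = to_wavetable(triangle, 4)
control_value = to_control(triangle, 1.0, 4.0)
```

The same geometric data serves both purposes; only the time scale at which it is read changes, which is one way to understand the remapping capacity described in the text.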

As discussed in this section, the proposed work-concept takes the concept of the score and expands it as the *score*, which is a wider aspect of the poietic process. In this larger space, the score (notation) influences the musical work and remains somewhat free to inhabit the *performance* and *medium* spaces, affording a new understanding of the use of new technology and of how we communicate the way in which our musical practices relate to it.

3. Towards a notation for augmented instruments; the Kuturani as a case study

Augmented instruments lack a standard notation due to their diverse development paths. Standardising notation could benefit their development and the music they produce by establishing common ground. This may not be the only way to achieve this, and we acknowledge the work of institutions, universities and groups facilitating ways to share and inform about new developments worldwide through repositories and events such as NIME, TENOR or ICMC. However, we believe that standardisation of notation for new instruments, regardless of their characteristics, would help create a common tradition in a similar way as when western musical notation was developed alongside acoustic instruments, regardless of their designs and performative dissimilarities.

Pedro Rebelo states that the roles and functions of notation are to document, communicate and reflect. He argues that ‘with the act of documenting comes solidification, validation, authority and instruction’. He also believes that, when the codes become language, by virtue of a culture of readership and interpretation, the score transforms into a ‘live’ text that facilitates the passage of the score into the reality of the performance. Furthermore, ‘with the very essence of a phenomenological approach to notation comes the understanding that rather than a tool to express ideas, notation is, like language itself, a generator of ideas, an embodied experience and an action in itself’ (Rebelo 2010).

Considering Rebelo’s roles and functions, as well as the notational strategy categories, we reflect on our needs when writing scores for our instrument augmentation system – the Kuturani – in order to propose guidelines for standardising notation for augmented instruments, described in section 3.3.

3.1. The Kuturani

The Kuturani, inspired by the hyperinstrument paradigm, has been devised to aid instrumental augmentation in communities with limited resources. We are not focused on creating a unique hardware design but on a conceptual device that sets the basis for creating personalised augmented string instruments. We focus on low-cost, easy-to-build designs for non-experts. We use off-the-shelf boards and sensors and develop software, guides, Gerber files (see footnote 1) and workshops to help users build personalised Kuturani.

We designed variations of the same concept including similar setups but with different capabilities, depending on the development boards used. While the full description of our development is not relevant to this paper, it is important to mention a few aspects of it that are the basis for our interest in a notation that could be useful in writing for varying augmented instruments:

- The Kuturani is not designed for a specific instrument; it is intended to be a device that can be mounted on any string instrument.
- It features programmable expansion ports for analogue sensors, digital sensors and I2C-based sensors.
- It includes a set of onboard sensors and controls: a gyroscope/accelerometer that monitors the overall motion of the instrument; potentiometers, buttons, a rotary encoder and an LED display to set up and control the device; and audio input and output ports (one version of the Kuturani uses a cell phone for this). Figure 3 shows a prototype that includes these components.

Figure 3. Prototype of one of the Kuturani versions. It features a (1) gyroscope/accelerometer, (2) microphone, (3) Teensy 4.0 and audio board, (4,6) input and output gain control, (5) rotary encoder, (7) MEMS microphone (optional), (8) transducer, (9) LED display, (10) push buttons and (11) audio amplifier.

Other augmented instruments are usually designed with a fixed setup in mind. In our development, the user chooses the sensors and the way in which these are used. This presents additional challenges in determining a notation strategy for the Kuturani.

3.2. Mapping strategies

The Kuturani captures data to control synthesis, control parameters or trigger events. As a controller, it can remap data to desired processes using onboard or external software. This feature, found in most augmented instruments, offers unlimited possibilities but also makes it challenging to establish a standard notation.

John Croft believes that the designed interactions of a controller should meet a few conditions for instrumentality; for instance, the sonic response generated by the computer should be proportionate to the performer’s action and share its energetic and morphological characteristics (Croft 2007: 64). Aspects of instrumentality are essential to creating a relationship between the performer and augmented instruments, allowing for the opportunity to ‘learn’ and improve how to make music with these devices and affording the possibility of expression. Notation should reflect the instrumentality of each augmented instrument, allowing the performer to have a meaningful proprioceptive response, regardless of the sonic outcome. This response should then be learned based on the composition’s mapping setup.

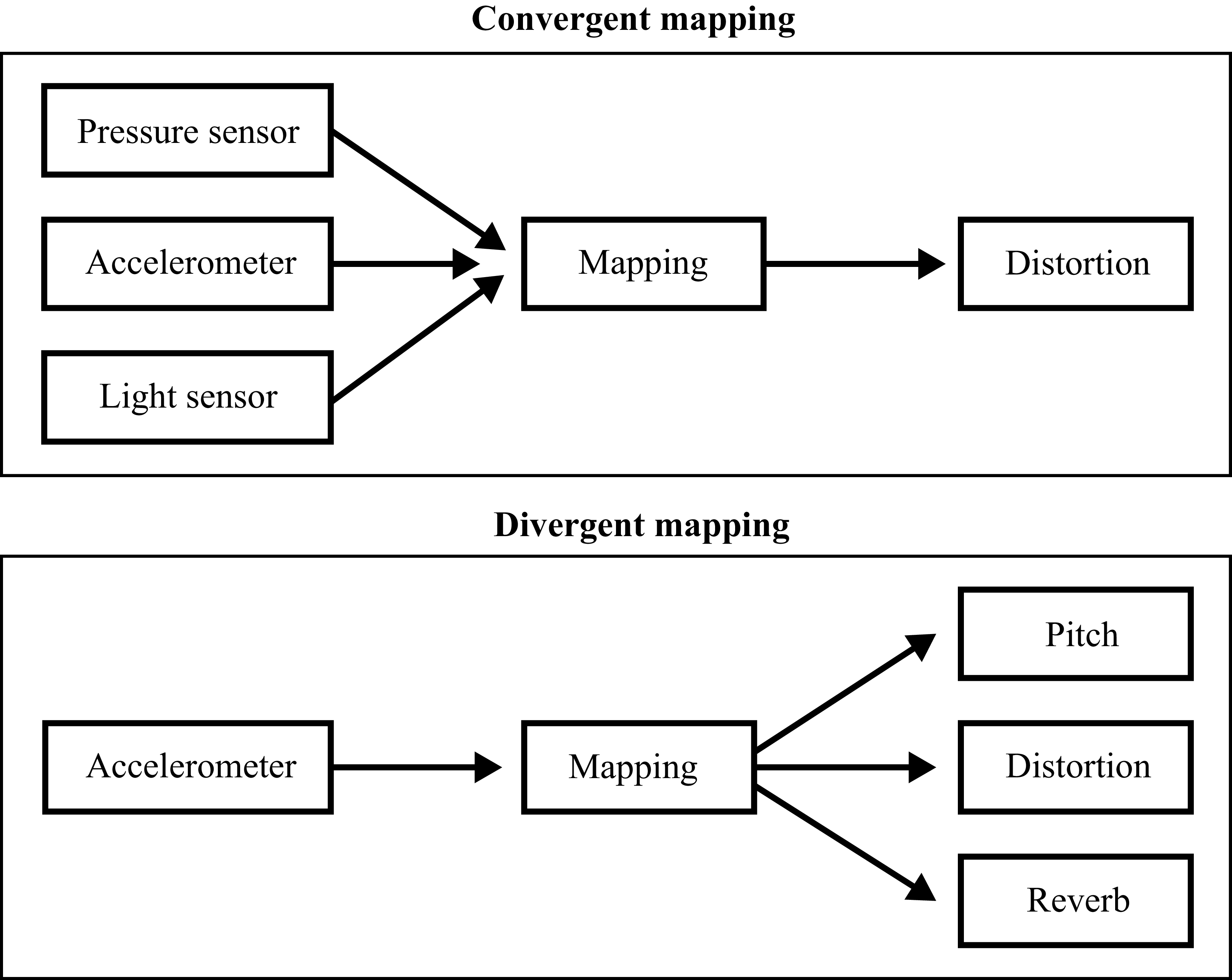

Andy Hunt and Ross Kirk identify two parameter mapping strategies. The conventional strategy allows for a direct mapping between one action and one sonic result; for instance, rotating a knob changes the pitch. However, this one-action-to-one-sonic-result relationship is usually not found in real mechanical acoustic systems (acoustic instruments). To change the pitch of a saxophone, for instance, the performer not only changes the fingering but also needs a specific air pressure to reach the new pitch with the correct intonation. In this case, multiple actions control one parameter. This is known as convergent mapping. On the other hand, sometimes one action controls various parameters. For example, changing the air pressure on the saxophone changes not only the volume but also the timbre and pitch. This is known as divergent mapping (Hunt and Kirk 2000; see Figure 4).

Figure 4. Two mapping strategies.

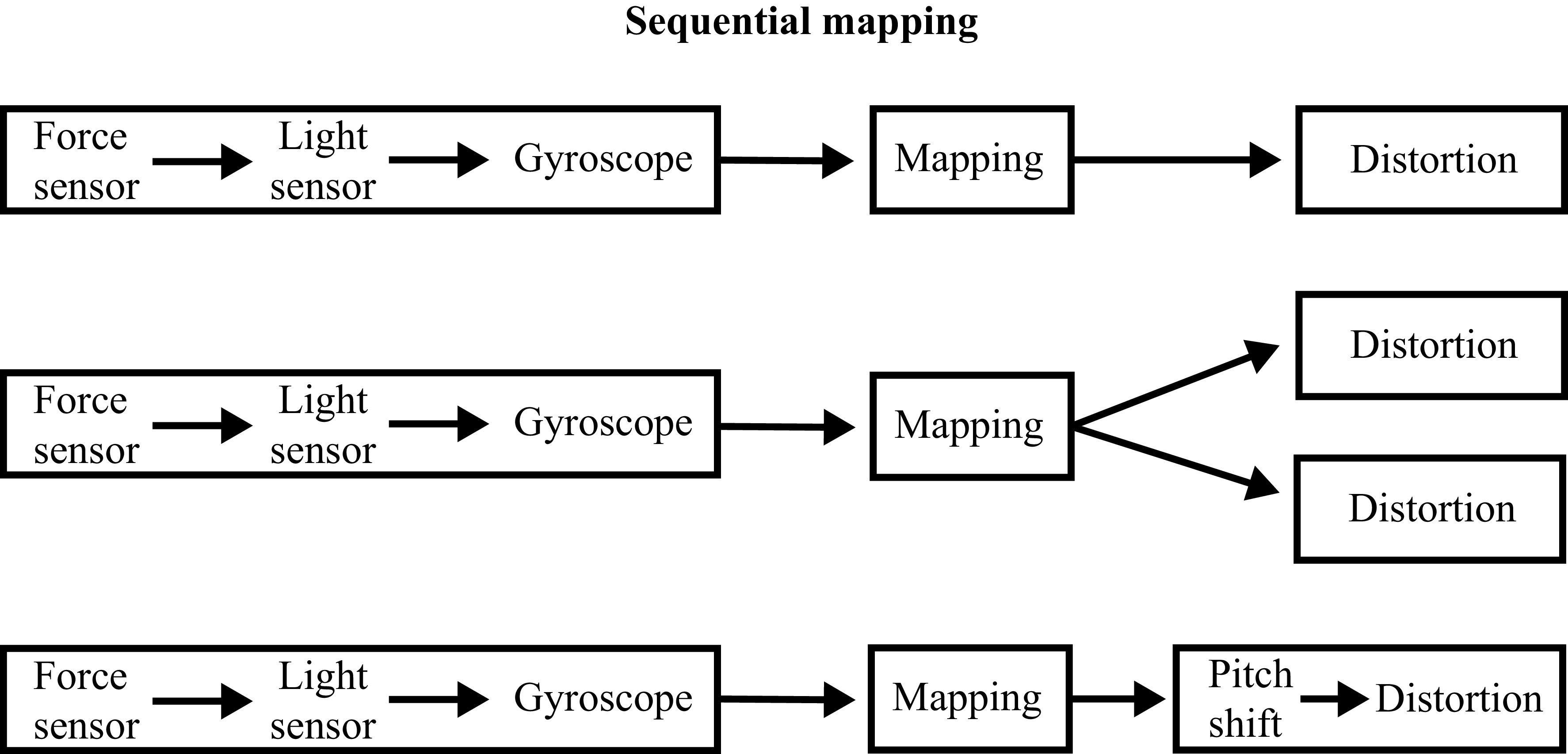
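The three relationships described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the Kuturani’s software: the function names, the 220–880 Hz pitch range, the ±2 % intonation correction and the parameter names are all assumptions chosen for the example, and every control value is assumed to be normalised to the range 0.0–1.0.

```python
from typing import Dict


def clamp01(x: float) -> float:
    """Keep a normalised control value inside the range 0.0-1.0."""
    return max(0.0, min(1.0, x))


def one_to_one(knob: float) -> Dict[str, float]:
    """Conventional mapping: one action drives one sound parameter."""
    # Hypothetical pitch range of 220-880 Hz, chosen only for illustration.
    return {"pitch_hz": 220.0 + clamp01(knob) * 660.0}


def convergent(fingering: float, air_pressure: float) -> Dict[str, float]:
    """Convergent mapping: several actions combine into one parameter."""
    base = 220.0 + clamp01(fingering) * 660.0
    # Air pressure nudges intonation by up to +/- 2 % (assumed figure).
    correction = 1.0 + (clamp01(air_pressure) - 0.5) * 0.04
    return {"pitch_hz": base * correction}


def divergent(air_pressure: float) -> Dict[str, float]:
    """Divergent mapping: one action spreads to several parameters."""
    p = clamp01(air_pressure)
    return {
        "volume": p,
        "brightness": p ** 2,  # stand-in for a timbre control
        "pitch_offset_cents": (p - 0.5) * 20.0,
    }
```

In this sketch, `convergent` needs both the fingering and the air pressure to settle on a pitch, while `divergent` returns three parameters from a single air-pressure reading, mirroring the saxophone examples in the text.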

Besides convergent and divergent mapping strategies, a sequential mapping strategy can be beneficial for developing musical gestures or shaping the sound (see Figure 5). The Kuturani, with the previously described basic sensor setup and the software’s current state of development, offers many possibilities for shaping sound; however, these have to be carefully programmed and learned. Currently, a small set of sound effects is available. Nonetheless, their parameters can be controlled continuously, which offers the possibility of chaining a sequence of effects with evolving characteristics. For example, to achieve a specific sound, it may be necessary to learn a sequence of actions, such as tilting the instrument 45 degrees to activate distortion, then knocking on the instrument to trigger granular synthesis and finally pressing button 2 to trigger noise.

Figure 5. A sequential mapping strategy can map multiple events to one sounding result. It can also map the events to multiple parallel or sequential results.
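The tilt, knock and button example can be pictured as a small state machine that advances only when the expected action arrives. The sketch below is a hypothetical illustration: the event names, the effect names and the choice to ignore out-of-order actions (rather than reset the sequence) are assumptions and do not describe the Kuturani’s actual software.

```python
from typing import List, Sequence, Tuple

# Hypothetical (action, effect) pairs, following the example in the text.
SEQUENCE: Tuple[Tuple[str, str], ...] = (
    ("tilt_45", "distortion"),
    ("knock", "granular_synthesis"),
    ("button_2", "noise"),
)


class SequentialMapper:
    """Activates effects only when actions arrive in the expected order."""

    def __init__(self, sequence: Sequence[Tuple[str, str]] = SEQUENCE) -> None:
        self.sequence = list(sequence)
        self.step = 0  # index of the next expected action
        self.active: List[str] = []  # effects switched on so far

    def on_event(self, event: str) -> List[str]:
        """Process one performer action and return the active effects."""
        if self.step < len(self.sequence):
            expected, effect = self.sequence[self.step]
            if event == expected:
                self.active.append(effect)
                self.step += 1
            # Any other action is ignored (an assumed design choice).
        return list(self.active)
```

A performer who knocks before tilting therefore hears no change; only the learned order of tilt, knock and button builds up the full chain of effects.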

The Kuturani’s expansion ports enable complex interactions with instruments using more suitable sensors for specific compositions or practices. Unlike a device-specific instrument, the Kuturani is a system for exploring and personalising instrument interactions. Regarding mapping strategies, we encourage users to consider performativity, instrumentality and embodiment.

3.3. Notation strategies

Considering the Kuturani’s characteristics, we developed a notation that is user-friendly regardless of the setup chosen. We believe our approach can establish a useful strategy for any augmented instrument. We also want to stress that we present the following strategies with the intention of proposing a common ground in augmented instrument notation. For this reason, we draw on some established conventions of Western music notation – adopted widely around the world – such as the use of staff lines, symbols that are read from left to right, and the representation of low and high pitches according to their position in low or high areas. While we have considered the importance and success of other notation strategies such as tablatures, cipher notation, video scores and others, the nature of augmented instruments still requires notating for the acoustic instrument that is being augmented. In other words, as we are proposing notation for hybrid acoustic/electronic instruments, we propose to extend traditional Western notation with elements that address the electronic components. We acknowledge that, in a sense, we are biased towards this kind of notation, but only for pragmatic reasons. We suggest different strategies according to the notation categories mentioned in section 1.2; however, we are in favour of combining different notational approaches whenever needed. Our strategies are described in the following subsections.

3.3.1. Notation for expected sound

This notation must reflect a general idea of the expected sound, describing approximate pitch, duration, dynamic, texture, spatialisation and their evolution over time. Our proposed notation, shown in Figure 6, uses symbols already in use together with text notes reminding the performer which sensor should be used. It is expected that instructions on how to use the sensors and their mappings are explained in the score’s notes, and that the performer is familiar with them.

Figure 6. Notation symbols used to represent pitch, duration, dynamics, spatialisation and timbre for the category of ‘Notation for expected sound’.

Some properties of sound can be too complex to notate precisely; for this reason, it is expected that the notation will only suggest a range among multiple possibilities described in advance. Timbre, for instance, is not easy to represent; however, it can be established that a grainy texture represents timbre 1, while a plain black fill represents timbre 2. Multiple timbres or complex sound properties can be assigned to graphic textures and explained in the setup notes so that they can be recalled using the proposed graphics (see Figure 7).

Figure 7. Example of ‘Notation for expected sound’ category in a musical context.

3.3.2. Notation for the interaction

Helmut Lachenmann’s action-based scores, such as Pression or Gran Torso, as well as the notation for works such as Ishini’ioni and Yuunohui’nahui, by Julio Estrada, are great examples of notation that communicates the way in which the performer must interact with the instrument. We took some of the strategies used in those examples (see Figure 8) and integrated them into our notation guidelines presented below:

– Each sensor is accounted for independently in a special staff-line. The staff-lines should be located above the regular staff. Each sensor staff-line should be located in a position according to the location of the sensor on the instrument in reference to its ‘pitch zone’ and along the length of the instrument. The staff-line for a sensor located near the low end of the violin strings should be located lower than the staff-line of another sensor located near the bridge. This arrangement will allow the user to identify the sensor by following the logic of the instrument itself and the proprioception in relationship to that logic (see Figure 9).

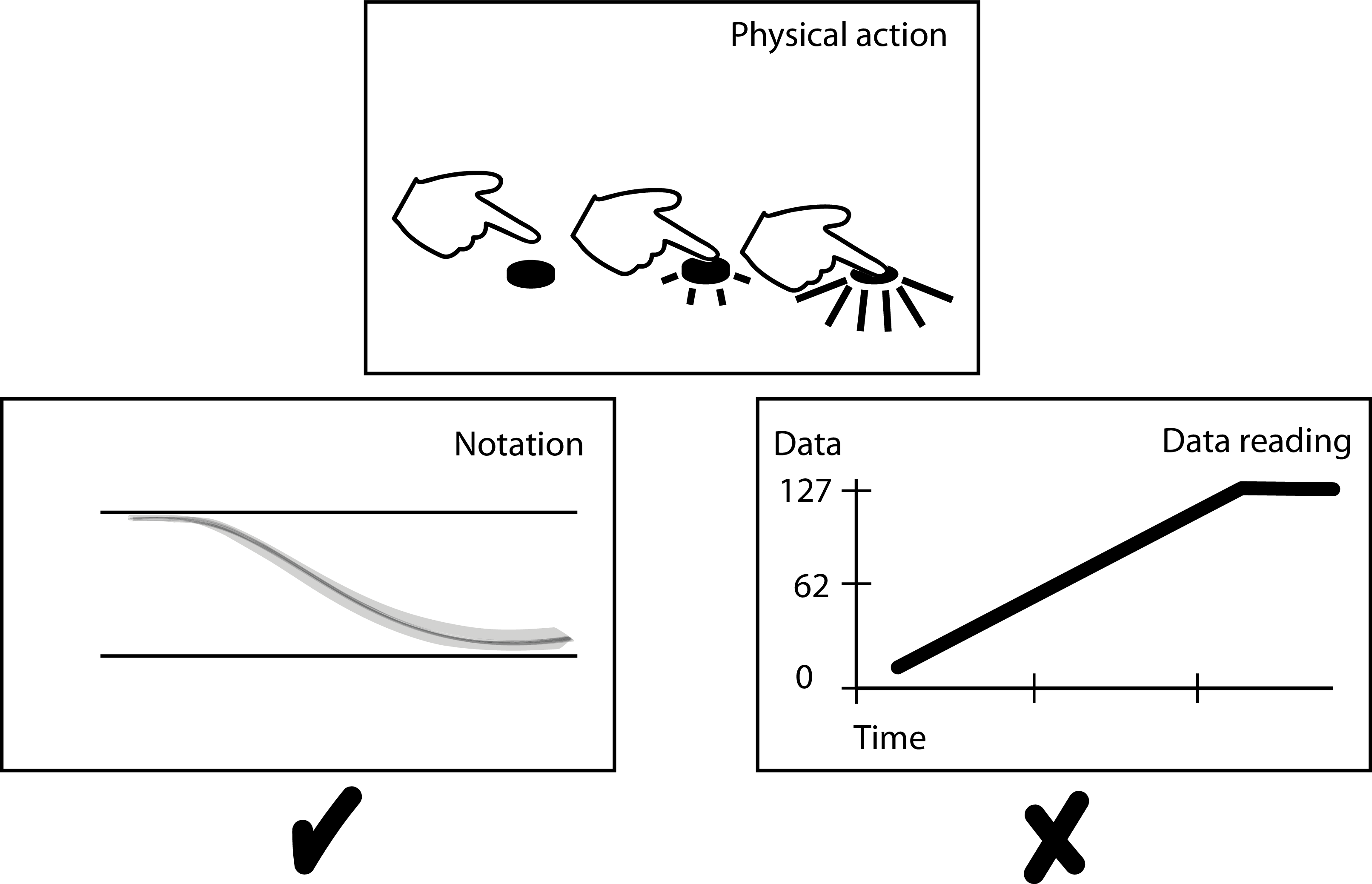

– Lines that move up and down represent the range of interaction possible with the continuous data sensors, which is mapped to control different parameters. The upper or higher position of the lines does not correspond to the data value obtained by the sensor but to the ‘logic of physical action’ – that is, moving the hand up or down – to create a straightforward relationship between the notation and the physical gesture. For instance, when using a force resistance sensor, pressing hard will result in a high value in the system; however, the physical motion of pressing requires a downward movement, which is the opposite of the upward motion (rise in numbers) of the data value reading. The ‘logic of physical action’ takes precedence, and the action should be represented with a descending line (see Figure 10).

– For discrete data sensors (on–off), a coloured block should be used.

– Dynamics should be indicated using traditional notation. Since gain, depending on the augmented instrument design, could be controlled using sensors, sliders, pedals or any other devices, it would be difficult to apply the ‘logic of physical action’ with a new notation. The position of the dynamic marks should preferably be above the staff.

– The duration of the actions can be specific or suggested according to the metre used for the acoustic instrument.

Figure 8. An example of the notation following the ‘Notation for interaction’ guidelines.

Figure 9. The positions of the sensor staff-lines correspond to their placement on the instrument, with the sensors near the high-pitch area of the acoustic instrument on top and sensors closer to the low-pitch area of the instrument on the bottom.

Figure 10. The notation follows the ‘logic of physical action’. The hand descends to press the sensor and so does the notation, despite the fact that the collected data value increases.
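Two of the guidelines above, the vertical ordering of sensor staff-lines and the ‘logic of physical action’, are simple enough to express as a short Python sketch. Everything in it is an assumption made for illustration: the `Sensor` class, the normalised `position` (0.0 for the low-pitch end of the instrument, 1.0 for the high-pitch end), the `pressing_increases_value` flag and the normalised readings are hypothetical and are not part of the Kuturani’s software.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sensor:
    name: str
    # Assumed placement along the instrument:
    # 0.0 = low-pitch end, 1.0 = high-pitch end.
    position: float
    # True when a larger reading comes from a downward gesture (pressing).
    pressing_increases_value: bool = False


def staff_line_order(sensors: List[Sensor]) -> List[str]:
    """Return sensor names from the top staff-line to the bottom one."""
    ordered = sorted(sensors, key=lambda s: s.position, reverse=True)
    return [s.name for s in ordered]


def notated_height(sensor: Sensor, reading: float) -> float:
    """Turn a normalised reading (0.0-1.0) into a line height.

    A height of 0.0 is the bottom of the sensor staff-line and 1.0 the top.
    For pressing gestures the line descends as the data value rises,
    following the 'logic of physical action'.
    """
    reading = max(0.0, min(1.0, reading))
    return 1.0 - reading if sensor.pressing_increases_value else reading
```

For a pressure sensor flagged with `pressing_increases_value=True`, a hard press (a reading near 1.0) is drawn near the bottom of its staff-line, whereas a sensor whose reading rises with an upward gesture keeps the direct relationship.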

3.3.3. Notation for actions to be followed

For this kind of notation group, it is expected that indeterminacy will be involved. Whenever possible, we suggest combining the notation strategies described in section 3.3.2 with any free indications decided by the composer. To exemplify this, Figure 11 shows the first section of performer one’s part in Michael Pisaro’s Ricefall and a similar score for an unspecified augmented instrument.

Figure 11. First five minutes of performer 1’s part of Michael Pisaro’s Ricefall (top) and Composition 3A-D for augmented instrument by Cristohper R.F. (bottom).

This notation group specifies actions, which may not propose specific sounds or ways of interacting with the electronic components. Nevertheless, if the actions involve ways of interacting with the instrument, we suggest using any of the strategies described before together with descriptive pictographic elements according to the instrument and setup.

3.3.4. Notation for paths to be followed

The reference notation for this group is a jazz lead-sheet and similar improvisational framework scores. The performer usually improvises over the musical pathways expressed on the score following certain conventions. Since augmented instrument performance does not have defined agreements, we suggest using some indications of reference points in the score. These could be written using notation from sections 3.3.1 or 3.3.2, possibly combined with some form of graphic notation that suggests either physical or musical gestures.

In music where paths are notated and multiple performers are involved, the performers usually rely on strong communication devices such as visual, sounding or physical cues. We suggest that cues are discussed in advance and notated by the performers. We also encourage the composer or designer of the instrument augmenting system to consider the possibility of using performative actions, which are being monitored by the system, to trigger cues. Depending on the system, these cues could be sonic, shown on video, activate lights or interact with any other digital media. If this were the case, then cues could be notated using the strategies suggested in the previous sections.

3.3.5. Notation that stimulates musical outcomes

This kind of notation, due to its abstract graphic quality, may remain as usual. Nevertheless, we believe it can be useful to use some symbology that suggests how present the electronics should be versus the acoustic sound. We do not suggest any other strategies here other than the possibility of including any of the strategies previously described, as this notation seeks to stimulate a musical response from the performer, but does not necessarily indicate musical parameters, performative actions or ways of interacting with the instrument.

4. Discussion and conclusions

The tripartite model, presented in section 2.1, is a dynamic system in which all three elements – score, performance and medium – are in constant connection, nurturing and supporting each other. The score becomes an indispensable element that facilitates the exchange of stimuli between these three entities. The representation of musical ideas enables documentation, which in turn facilitates communication and reflection, as discussed by Rebelo (2010). The score serves as a mirror for the composer, allowing them to document and reflect on the musical ideas as they take their initial ethereal form. The score facilitates communication, conveying the essence of the work to the performer and the audience, enabling its transformation through performance. The score connects the ethereal form with the medium, allowing it to enter our material reality. While the score is not the work itself, it serves as the means through which the work can be made tangible.

Many notation strategies for electronic music or augmented instruments have been proposed in the past, but none of them has been adopted as universal, possibly due to their strong relationship to the instruments they were developed for. We firmly believe that the notation should not be specific to a device but appropriate to represent sounds, or the way in which to achieve those sounds, and that it is the responsibility of the performer to learn how the notation translates to playing a specific instrument, in the same way in which traditional notation can be used with any instrument. For this reason, the notation we propose is based on strategies that resemble solutions for augmented instruments that have been previously proposed by multiple composers. Nevertheless, we have avoided presenting a list of symbols or actions that would be specific to an instrument or data monitoring technique, since the possibilities can be unlimited with the constant development of new sensors that aid in monitoring performance. On the other hand, any sensor can be used in multiple ways over the same or various instruments; this means that specific actions can output multiple results, or the same action can be tracked by different sensors with slightly different results. Considering this, as well as the fact that mapping can convert the readings from the multiple monitoring techniques into any possible sound, we do not aim to create a completely new notational system, but we suggest ways in which we can use the symbology we already have and how it should be presented in the score. We present notation guidelines that aim to be adequate for any augmented instrument; nonetheless, specific cases related to each augmented instrument individually have to be resolved, and we believe that any specific case, independently of the symbology used, can take advantage of what is being proposed in this paper. So, if any sensor is used to track a particular parameter in a specific way, following the proposed guidelines it would be easy to know how to notate whatever symbol is to be used, where it should be placed on the score, and how it would relate to other symbols.

As mapping offers many possible connections between the actions and the resulting sound, we have concluded that the best approach is to use a combination of ‘notation for expected sound’ and ‘notation for the interaction’. When improvisation plays an important role in the composition, ‘notation for actions to be followed’, ‘notation for paths to be followed’ and ‘notation that stimulates musical outcomes’ should be used together with any other strategies that help communicate actions (events) and structures.

While this notation is based on previously used paradigms, it is experimental and still needs to be tested by multiple users. It is our hope that our proposal might help begin a standardisation that could influence the direction in which notation develops and, with it, a shared understanding of how to relate to augmented instruments.

The topic of embodiment has not been discussed in this paper, and future research must be done to understand how it is implied in the notation and how this representation places embodiment in the closed loop found in the relationship between musical idea (score), instrument (medium) and performance.

On the other hand, the tripartite model is a loop that includes only the ‘music makers’ and does not consider the ‘esthesic process’ outside this loop. If this model is pondered with its lack of consideration for the ‘observer’, then we should question what the notation – score, not score – represents to the audience. Is it a way to communicate the score only to an audience capable of understanding the notation? If so, does it no longer fit in the proposed work-concept?

Many other questions remain unanswered. For instance, how could this notation work with generative or interactive scores? What are the limits of this notation in reference to the current and future technology used in hyperinstruments? What other notational systems already in use could be adopted to expand the proposed notation? We are aware that the success of the notation is still to be proven and that future work is necessary. However, we hope that what we present in this paper could be useful in setting some basic rules that could become common ground in the development of notation for augmented instrument music.

We strongly believe that the score is an important actor within the tripartite model. This new model for the ontology of the work-concept was devised as a consequence of observing how technology has taken an important role in music making. This new medium, which is often part of the creative process, needed to be integrated, and with it comes the need for a new way to describe – notate – its role in the work-concept. As the model proposes, the medium and the notation for it have a tremendous influence on the work and how it is received.

Acknowledgements

The research for this paper was supported by the Postdoctoral Scholarship Programme of DGAPA, UNAM, under the guidance of Dr. Jorge Rodrigo Sigal Sefchovich, co-author of this paper, at UNAM’s Escuela Nacional de Estudios Superiores in Morelia.

This text is part of the research project ‘Instrumentos aumentados: Tecnología y creatividad como elementos cardinales de la obra musical’ (Augmented instruments: technology and creativity as cardinal elements of the musical work), supported by the Postdoctoral Scholarship Programme of the Academic Strengthening Programme of DGAPA, UNAM, under the supervision of Dr. Jorge Rodrigo Sigal Sefchovich, co-author of the text, at ENES Unidad Morelia.