1 INTRODUCTION

Consider the high-dimensional (HD) linear model

where the rows of the response random vector

![]() $Y \in \mathbb {R}^n$

are independent and identically distributed (i.i.d.) and square-integrable. The rows

$Y \in \mathbb {R}^n$

are independent and identically distributed (i.i.d.) and square-integrable. The rows

![]() $X_j$

,

$X_j$

,

![]() $1 \leq j \leq n$

, of the random covariate matrix

$1 \leq j \leq n$

, of the random covariate matrix

![]() $X \in \mathbb {R}^{n \times p}$

are also i.i.d. and square-integrable, with the number of observations smaller than the number of covariates (

$X \in \mathbb {R}^{n \times p}$

are also i.i.d. and square-integrable, with the number of observations smaller than the number of covariates (

![]() $n < p$

). Assume that

$n < p$

). Assume that

![]() $\mathbb {E}(X_1 U_1) = 0$

, so that the model expresses

$\mathbb {E}(X_1 U_1) = 0$

, so that the model expresses

![]() $Y_1$

as the sum of its projection onto the

$Y_1$

as the sum of its projection onto the

![]() $L_2$

space of equivalence classes of linear functions of

$L_2$

space of equivalence classes of linear functions of

![]() $X_1$

and an orthogonal residual

$X_1$

and an orthogonal residual

![]() $U_1$

.

$U_1$

.

The contribution of this article is twofold. First, we establish a new identification result showing that

![]() $\beta $

can be expressed as a solution to a specific generalized eigenvalue problem (GEP). Second, we leverage this result to develop a novel estimator of

$\beta $

can be expressed as a solution to a specific generalized eigenvalue problem (GEP). Second, we leverage this result to develop a novel estimator of

![]() $\beta $

and analyze its statistical properties.

$\beta $

and analyze its statistical properties.

GEPs involve two matrices

![]() $A, B \in \mathbb {R}^{p \times p}$

. Their solutions consist of scalars

$A, B \in \mathbb {R}^{p \times p}$

. Their solutions consist of scalars

![]() $\lambda _i$

and vectors

$\lambda _i$

and vectors

![]() $v_i$

that satisfy

$v_i$

that satisfy

where the

![]() $\lambda _i$

are called generalized eigenvalues and the

$\lambda _i$

are called generalized eigenvalues and the

![]() $v_i$

are the corresponding generalized eigenvectors. For a given

$v_i$

are the corresponding generalized eigenvectors. For a given

![]() $\lambda _i$

, the subspace spanned by all associated generalized eigenvectors is called the generalized eigenspace corresponding to

$\lambda _i$

, the subspace spanned by all associated generalized eigenvectors is called the generalized eigenspace corresponding to

![]() $\lambda _i$

. When B is the identity matrix, the GEP reduces to the standard eigenvalue problem (Ghojogh, Karray, and Crowley, Reference Ghojogh, Karray and Crowley2019).

$\lambda _i$

. When B is the identity matrix, the GEP reduces to the standard eigenvalue problem (Ghojogh, Karray, and Crowley, Reference Ghojogh, Karray and Crowley2019).

In Theorem 1, we show that the direction of the parameter

![]() $\beta $

in model (1)—that is, the subspace spanned by all vectors collinear with

$\beta $

in model (1)—that is, the subspace spanned by all vectors collinear with

![]() $\beta $

—is identified as the generalized eigenspace corresponding to the unique nonzero generalized eigenvalue of a pair of measurable matrices.

$\beta $

—is identified as the generalized eigenspace corresponding to the unique nonzero generalized eigenvalue of a pair of measurable matrices.

A key consequence of this identification result is that it expands the class of estimators for linear models to include those available for the GEP. As the remainder of the article shows, this is particularly advantageous in HD settings.

In HD models, the dimension of the parameter

![]() $\beta $

, denoted by p, exceeds the number of observations n, often because p grows with n. Estimating

$\beta $

, denoted by p, exceeds the number of observations n, often because p grows with n. Estimating

![]() $\beta $

in HD contexts is challenging and of significant theoretical and practical interest. The high dimensionality implies that the empirical second-moment matrix of the covariates is singular, rendering consistent estimation of

$\beta $

in HD contexts is challenging and of significant theoretical and practical interest. The high dimensionality implies that the empirical second-moment matrix of the covariates is singular, rendering consistent estimation of

![]() $\beta $

by ordinary least squares impossible. Nevertheless, cases with

$\beta $

by ordinary least squares impossible. Nevertheless, cases with

![]() $p> n$

arise frequently in fields, such as economics, finance, health studies, and machine learning (Fan and Li, Reference Fan and Li2006), motivating the development of efficient estimation methods.

$p> n$

arise frequently in fields, such as economics, finance, health studies, and machine learning (Fan and Li, Reference Fan and Li2006), motivating the development of efficient estimation methods.

There is no unique way to address the problem of consistently estimating the parameters of an HD linear model. One major line of research builds on the idea of sparsity. Box and Daniel Meyer (Reference Box and Daniel Meyer1986) originally coined the term factor sparsity to describe models in which most covariates have no effect. Since then, substantial work has focused on estimating parameters in HD sparse linear models (HDSLMs), that is, models in which only a subset of elements in

![]() $\beta $

are nonzero (e.g., Belloni, Chernozhukov, and Hansen, Reference Belloni, Chernozhukov and Hansen2013; van de Geer, Reference van de Geer2016). In this context, saying that a model is (exactly) sparse means that

$\beta $

are nonzero (e.g., Belloni, Chernozhukov, and Hansen, Reference Belloni, Chernozhukov and Hansen2013; van de Geer, Reference van de Geer2016). In this context, saying that a model is (exactly) sparse means that

where s denotes the sparsity level and

![]() $\|v\|_0 = \sum _{i=1}^p \textbf {1}_{|v_i|> 0}$

is the

$\|v\|_0 = \sum _{i=1}^p \textbf {1}_{|v_i|> 0}$

is the

![]() $l_0$

-pseudonorm, which counts the number of nonzero entries in a vector.

$l_0$

-pseudonorm, which counts the number of nonzero entries in a vector.

To estimate sparse linear models, the best subset selection problem (3) arises by combining the sparsity assumption with the least squares principle:

where

![]() $k \in \mathbb {N}$

is the maximum number of nonzero elements in b, and

$k \in \mathbb {N}$

is the maximum number of nonzero elements in b, and

![]() $\|v\|_2 = \left (\sum _{i=1}^p |v_i|^2\right )^{1/2}$

denotes the

$\|v\|_2 = \left (\sum _{i=1}^p |v_i|^2\right )^{1/2}$

denotes the

![]() $l_2$

norm of a vector v. Because of the

$l_2$

norm of a vector v. Because of the

![]() $l_0$

constraint, this optimization is NP-hard (Natarajan, Reference Natarajan1995), so no polynomial-time algorithm is known to solve it. Despite these computational challenges, theoretical results suggest that best subset selection remains an attractive estimator for sparse regression (e.g., Greenshtein, Reference Greenshtein2006; Raskutti, Wainwright, and Yu, Reference Raskutti, Wainwright and Yu2011; Zhang and Zhang, Reference Zhang and Zhang2012; Shen et al., Reference Shen, Pan, Zhu and Zhou2013; Zhang, Wainwright, and Jordan, Reference Zhang, Wainwright and Jordan2014; Bertsimas, King, and Mazumder, Reference Bertsimas, King and Mazumder2016). Recently, optimal or near-optimal solutions have been proposed by Bertsimas et al. (Reference Bertsimas, King and Mazumder2016), who reformulate problem (3) as a mixed integer optimization problem. Implementing this method relies on the licensed GUROBI solver and becomes increasingly demanding in computing time as the dimension of the model grows. For a critical review, see, for example, Hastie, Tibshirani, and Tibshirani (Reference Hastie, Tibshirani and Tibshirani2020).

$l_0$

constraint, this optimization is NP-hard (Natarajan, Reference Natarajan1995), so no polynomial-time algorithm is known to solve it. Despite these computational challenges, theoretical results suggest that best subset selection remains an attractive estimator for sparse regression (e.g., Greenshtein, Reference Greenshtein2006; Raskutti, Wainwright, and Yu, Reference Raskutti, Wainwright and Yu2011; Zhang and Zhang, Reference Zhang and Zhang2012; Shen et al., Reference Shen, Pan, Zhu and Zhou2013; Zhang, Wainwright, and Jordan, Reference Zhang, Wainwright and Jordan2014; Bertsimas, King, and Mazumder, Reference Bertsimas, King and Mazumder2016). Recently, optimal or near-optimal solutions have been proposed by Bertsimas et al. (Reference Bertsimas, King and Mazumder2016), who reformulate problem (3) as a mixed integer optimization problem. Implementing this method relies on the licensed GUROBI solver and becomes increasingly demanding in computing time as the dimension of the model grows. For a critical review, see, for example, Hastie, Tibshirani, and Tibshirani (Reference Hastie, Tibshirani and Tibshirani2020).

Other approaches to the best subset selection problem exist, in particular by solving a Lagrangian version of (3). However, this is not an equivalent problem because of the

![]() $l_0$

constraint. Within this line of research, Huang et al. (Reference Huang, Jiao, Liu and Lu2018) propose a method that, although it approximates the penalized version of (3), still allows one to fix the number of nonzero coordinates in the estimates. Hazimeh and Mazumder (Reference Hazimeh and Mazumder2020) follow a similar approach but combine the

$l_0$

constraint. Within this line of research, Huang et al. (Reference Huang, Jiao, Liu and Lu2018) propose a method that, although it approximates the penalized version of (3), still allows one to fix the number of nonzero coordinates in the estimates. Hazimeh and Mazumder (Reference Hazimeh and Mazumder2020) follow a similar approach but combine the

![]() $l_0$

penalty with an additional

$l_0$

penalty with an additional

![]() $l_q$

penalty to achieve improved algorithmic performance.

$l_q$

penalty to achieve improved algorithmic performance.

Following an approach similar to that leading to the formulation of the best subset selection, we leverage our identification result to define an estimator for the direction of

![]() $\beta $

under a sparsity constraint. We begin by noting that the generalized eigenvector associated with the largest generalized eigenvalue of the GEP (2) is the solution to the maximization of the following Rayleigh quotient:

$\beta $

under a sparsity constraint. We begin by noting that the generalized eigenvector associated with the largest generalized eigenvalue of the GEP (2) is the solution to the maximization of the following Rayleigh quotient:

see, e.g., Golub and Van Loan (Reference Golub and Van Loan2013). Our identification result in Theorem 1 combines this fact with the sparsity assumption to define a sparse estimator:

$$ \begin{align} \operatorname{\mathrm{\arg\!\max}}_{\phi \in \mathbb{R}^p} \frac{\phi^\top X^\top Y Y^\top X \phi}{n \, \phi^\top X^\top X \phi} \quad \text{subject to} \quad \|\phi\|_0 \leq k, \end{align} $$

$$ \begin{align} \operatorname{\mathrm{\arg\!\max}}_{\phi \in \mathbb{R}^p} \frac{\phi^\top X^\top Y Y^\top X \phi}{n \, \phi^\top X^\top X \phi} \quad \text{subject to} \quad \|\phi\|_0 \leq k, \end{align} $$

where

![]() $k \in \mathbb {N}$

. We refer to (4) as the directional best subset selection problem. Our results show that for any solution of (4), say v, the product of v with the orthogonal projection of Y onto

$k \in \mathbb {N}$

. We refer to (4) as the directional best subset selection problem. Our results show that for any solution of (4), say v, the product of v with the orthogonal projection of Y onto

![]() $Xv$

also solves the best subset selection problem (3). Consequently, solving (4) allows us to solve (3). Furthermore, Proposition 2 below implies that both problems share the same minimax convergence rate in the

$Xv$

also solves the best subset selection problem (3). Consequently, solving (4) allows us to solve (3). Furthermore, Proposition 2 below implies that both problems share the same minimax convergence rate in the

![]() $l_2$

norm, specifically

$l_2$

norm, specifically

![]() $(s \log (p/s)/n)^{1/2}$

in the sub-Gaussian setting (Raskutti et al., Reference Raskutti, Wainwright and Yu2011).

$(s \log (p/s)/n)^{1/2}$

in the sub-Gaussian setting (Raskutti et al., Reference Raskutti, Wainwright and Yu2011).

Building on these ideas, we propose an efficient estimator for the direction of

![]() $\beta $

in model (1), specifically designed for HDSLMs. Our estimator is a modified version of the well-established RIFLE algorithm, originally introduced by Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to approximate the solution to the GEP under an

$\beta $

in model (1), specifically designed for HDSLMs. Our estimator is a modified version of the well-established RIFLE algorithm, originally introduced by Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to approximate the solution to the GEP under an

![]() $l_0$

constraint—an NP-hard problem (Moghaddam, Weiss, and Avidan, Reference Moghaddam, Weiss and Avidan2006)—in settings where

$l_0$

constraint—an NP-hard problem (Moghaddam, Weiss, and Avidan, Reference Moghaddam, Weiss and Avidan2006)—in settings where

![]() $p> n$

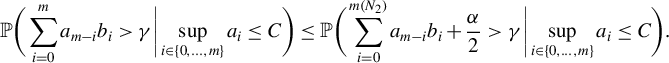

. We show that our estimator attains the minimax convergence rate with high probability in the sub-Gaussian setting. Finally, we establish a central limit theorem (CLT) for our estimator, addressing the nontrivial challenges arising from its nonlinearity. To do so, we prove a theorem providing sufficient conditions for the convergence in probability of a Cauchy product, which may be of independent interest.

$p> n$

. We show that our estimator attains the minimax convergence rate with high probability in the sub-Gaussian setting. Finally, we establish a central limit theorem (CLT) for our estimator, addressing the nontrivial challenges arising from its nonlinearity. To do so, we prove a theorem providing sufficient conditions for the convergence in probability of a Cauchy product, which may be of independent interest.

GEPs have previously been applied to models with many covariates. In particular, the sufficient dimension reduction framework, introduced by Dennis Cook (Reference Dennis Cook1994), uses moment-based methods that can be formulated as GEPs to replace the original covariates with a minimal set of linear combinations while preserving the relevant information (Li, Reference Li2007). Although these methods were not specifically designed for HDSLMs or for the best subset selection problem, Chen, Zou, and Cook (Reference Chen, Zou and Cook2010) proposed a method to induce sparsity in the estimated linear combinations of covariates, thereby achieving variable selection de facto.

Like the RIFLE algorithm, our estimator requires an initial solution, which it then iteratively refines until convergence. We conclude with a series of Monte Carlo experiments demonstrating the superior performance of our estimator when initialized with the Lasso method, which is the Lagrangian form of the

![]() $l_1$

-relaxation of problem (3) Tibshirani, Reference Tibshirani1996; Chen, Donoho, and Saunders, Reference Chen, Donoho and Saunders1998. Although the Lasso often selects an excessive number of variables when optimized for prediction accuracy Bertsimas et al., Reference Bertsimas, King and Mazumder2016; Hastie et al., Reference Hastie, Tibshirani and Tibshirani2020, our experiments show that for realistic signal-to-noise ratios (SNRs), our estimator achieves more reliable variable selection and estimation than existing

$l_1$

-relaxation of problem (3) Tibshirani, Reference Tibshirani1996; Chen, Donoho, and Saunders, Reference Chen, Donoho and Saunders1998. Although the Lasso often selects an excessive number of variables when optimized for prediction accuracy Bertsimas et al., Reference Bertsimas, King and Mazumder2016; Hastie et al., Reference Hastie, Tibshirani and Tibshirani2020, our experiments show that for realistic signal-to-noise ratios (SNRs), our estimator achieves more reliable variable selection and estimation than existing

![]() $l_0$

-constrained estimators.

$l_0$

-constrained estimators.

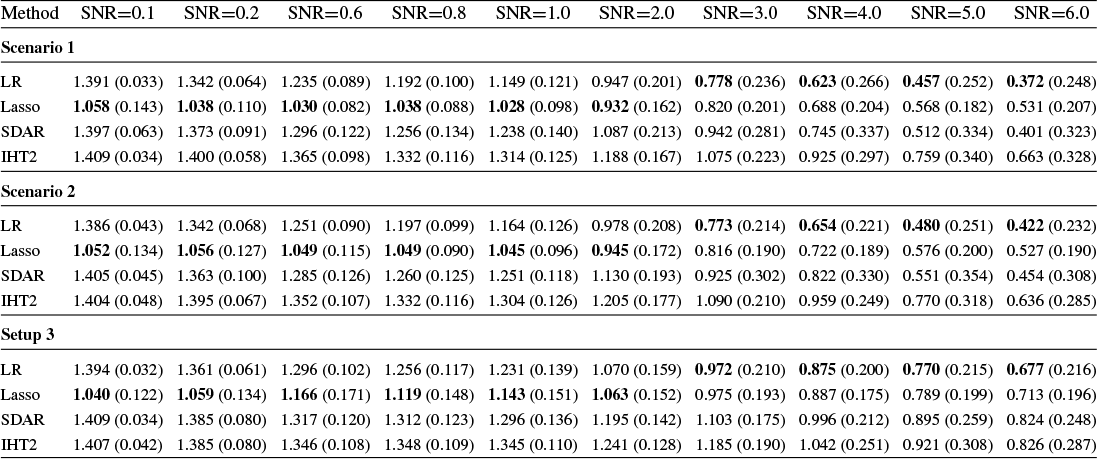

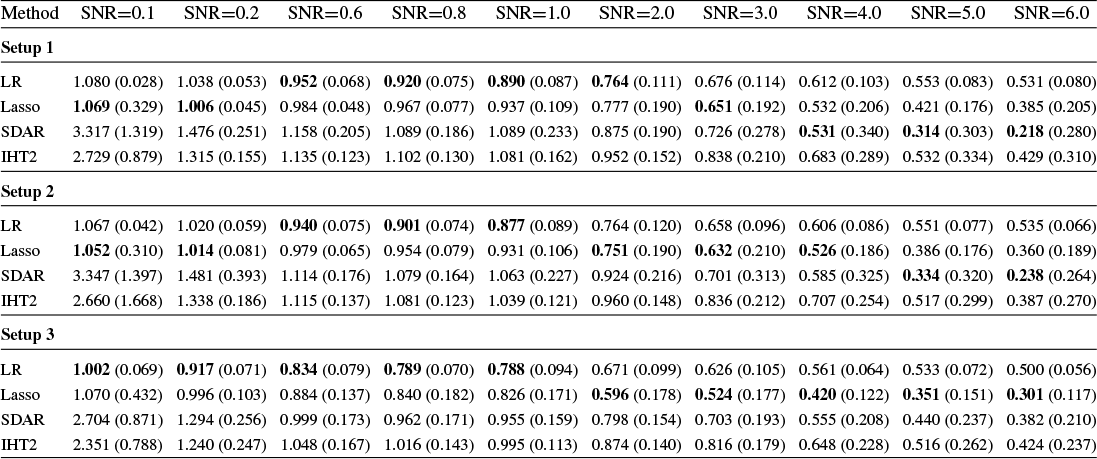

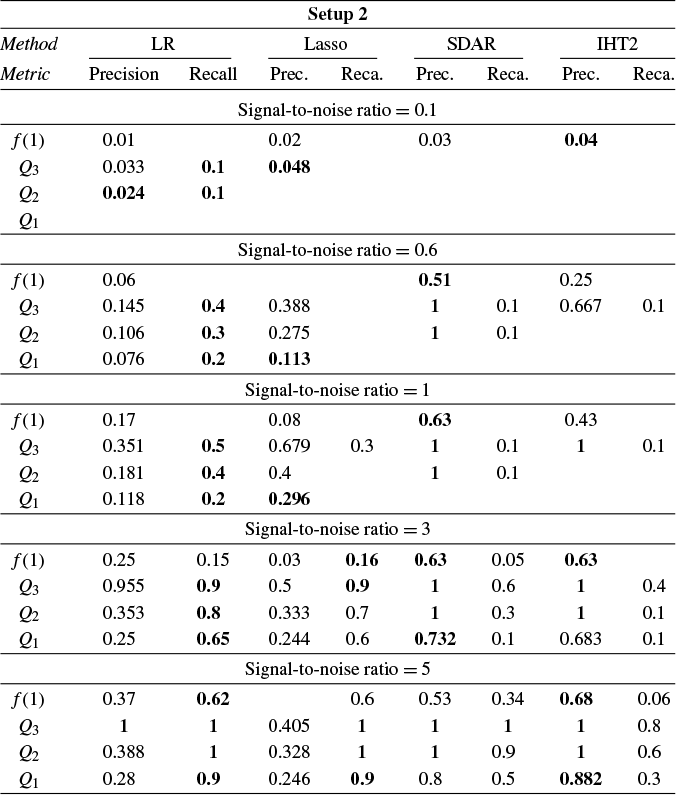

The performance of the proposed inference method, termed Loaded-RIFLE (LR), is benchmarked against three alternatives: the Lasso and two

![]() $l_0$

-constrained or penalized estimators. The first is the two-stage iterative hard thresholding (two-stage IHT; Jain, Tewari, and Kar, Reference Jain, Tewari, Kar, Ghahramani, Welling, Cortes, Lawrence and Weinberger2014), which extends the well-known iterative hard thresholding to approximate problem (3). The second is the support detection and root finding (SDAR) method of Huang et al. (Reference Huang, Jiao, Liu and Lu2018), which approximates the solution to the Lagrangian form of problem (3).

$l_0$

-constrained or penalized estimators. The first is the two-stage iterative hard thresholding (two-stage IHT; Jain, Tewari, and Kar, Reference Jain, Tewari, Kar, Ghahramani, Welling, Cortes, Lawrence and Weinberger2014), which extends the well-known iterative hard thresholding to approximate problem (3). The second is the support detection and root finding (SDAR) method of Huang et al. (Reference Huang, Jiao, Liu and Lu2018), which approximates the solution to the Lagrangian form of problem (3).

These existing methods have well-established minimax convergence rates and the sure screening property Jain et al., Reference Jain, Tewari, Kar, Ghahramani, Welling, Cortes, Lawrence and Weinberger2014; Huang et al., Reference Huang, Jiao, Liu and Lu2018; Guo, Zhu, and Fan, Reference Guo, Zhu and Fan2020. However, to the best of our knowledge, no CLT has yet been established for any of them.

Section 4 reports Monte Carlo experiments comparing these estimators in terms of estimation, variable selection, and prediction. Our results show that LR consistently outperforms its competitors across these metrics in many cases. In particular, LR achieves more accurate variable selection, recovering a substantial share of the true support even under low SNR conditions. Moreover, its prediction accuracy, measured by relative risk, remains robust in low-SNR regimes, highlighting its practical relevance for applications such as financial analysis, where low SNRs are common. Overall, these findings suggest that LR offers a promising alternative for reliable estimation and prediction in noisy HD settings.

The organization of the article is as follows. Section 2 establishes the identification of the direction of

![]() $\beta $

as the solution to a particular GEP. Section 3 builds on this result to estimate the direction of

$\beta $

as the solution to a particular GEP. Section 3 builds on this result to estimate the direction of

![]() $\beta $

under a sparsity assumption. We first introduce our algorithm, LR. We then provide a nonasymptotic bound on its

$\beta $

under a sparsity assumption. We first introduce our algorithm, LR. We then provide a nonasymptotic bound on its

![]() $l_2$

estimation error and derive its convergence rate in sub-Gaussian settings. Next, we show that this rate is minimax optimal in the sub-Gaussian case. Finally, we establish a CLT for the estimator. Section 4 presents Monte Carlo experiments that illustrate the competitiveness of LR in practice. Section 5 concludes. The Appendix collects the proofs of all results, except for the proof of the identification theorem, which is presented in Section 2. It also includes a description of the RIFLE algorithm. Technical lemmas and several standard results used throughout the proofs are provided in the Supplementary Material, where they are proved in full detail. For the convenience of the reader, a Notational Glossary is provided after the Appendix.

$l_2$

estimation error and derive its convergence rate in sub-Gaussian settings. Next, we show that this rate is minimax optimal in the sub-Gaussian case. Finally, we establish a CLT for the estimator. Section 4 presents Monte Carlo experiments that illustrate the competitiveness of LR in practice. Section 5 concludes. The Appendix collects the proofs of all results, except for the proof of the identification theorem, which is presented in Section 2. It also includes a description of the RIFLE algorithm. Technical lemmas and several standard results used throughout the proofs are provided in the Supplementary Material, where they are proved in full detail. For the convenience of the reader, a Notational Glossary is provided after the Appendix.

2 IDENTIFICATION OF THE DIRECTION OF

$\beta $

$\beta $

The purpose of this section is to identify the direction of

![]() $\beta $

in model (1) in terms of the moments of

$\beta $

in model (1) in terms of the moments of

![]() $Y_1$

and

$Y_1$

and

![]() $X_1$

.

$X_1$

.

First, observe that in the model, since the rows of Y and X are i.i.d. in

![]() $L_2$

, and because

$L_2$

, and because

![]() $\mathbb {E}(X_1U_1)=0$

, one easily obtains normal equations

$\mathbb {E}(X_1U_1)=0$

, one easily obtains normal equations

![]() $\mathbb {E}(X_1Y_1)=\mathbb {E}(X_1X_1^\top )\beta $

. For every

$\mathbb {E}(X_1Y_1)=\mathbb {E}(X_1X_1^\top )\beta $

. For every

![]() $\beta \not \in \mathrm {Ker}(\Sigma _X):=\{v\in \mathbb {R}^p; \|\Sigma _X v\|_{2}=0\}$

, that is for every

$\beta \not \in \mathrm {Ker}(\Sigma _X):=\{v\in \mathbb {R}^p; \|\Sigma _X v\|_{2}=0\}$

, that is for every

![]() $\beta $

not belonging to the

$\beta $

not belonging to the

![]() ${kernel}$

of the second moment of

${kernel}$

of the second moment of

![]() $X_1$

, the latter denoted hereafter

$X_1$

, the latter denoted hereafter

![]() $\Sigma _X:=\mathbb {E}(X_1X_1^\top )$

, one implication of these normal equations is

$\Sigma _X:=\mathbb {E}(X_1X_1^\top )$

, one implication of these normal equations is

$$ \begin{align*} \|\beta\|_2=\frac{\phi^\top \Sigma_{XY}}{\phi^\top \Sigma_X \phi} \end{align*} $$

$$ \begin{align*} \|\beta\|_2=\frac{\phi^\top \Sigma_{XY}}{\phi^\top \Sigma_X \phi} \end{align*} $$

with

![]() $\Sigma _{XY}:=\mathbb {E}(X_1Y_1)$

and

$\Sigma _{XY}:=\mathbb {E}(X_1Y_1)$

and

![]() $\phi :=\beta /\|\beta \|_2$

. Hence, rewriting

$\phi :=\beta /\|\beta \|_2$

. Hence, rewriting

![]() $\beta $

in terms of

$\beta $

in terms of

![]() $\phi $

and

$\phi $

and

![]() $\|\beta \|_2$

, one obtains after standard computations that

$\|\beta \|_2$

, one obtains after standard computations that

$$ \begin{align} \mathbb{E}[Y_1-X_1^\top\beta]^2 &=\mathbb{E}(Y_1^2)-\frac{\phi^\top \Sigma_{XY}\Sigma_{YX}\phi}{\phi^\top \Sigma_X \phi} \end{align} $$

$$ \begin{align} \mathbb{E}[Y_1-X_1^\top\beta]^2 &=\mathbb{E}(Y_1^2)-\frac{\phi^\top \Sigma_{XY}\Sigma_{YX}\phi}{\phi^\top \Sigma_X \phi} \end{align} $$

with

![]() $\Sigma _{YX}:=\Sigma _{XY}^\top $

. The condition

$\Sigma _{YX}:=\Sigma _{XY}^\top $

. The condition

![]() $\mathbb {E}(X_1U_1)=0$

means that

$\mathbb {E}(X_1U_1)=0$

means that

![]() $X_1\beta $

is the orthogonal projection of

$X_1\beta $

is the orthogonal projection of

![]() $Y_1$

onto the

$Y_1$

onto the

![]() $L^2$

subspace of linear functions of

$L^2$

subspace of linear functions of

![]() $X_1$

, therefore the Projection theorem (e.g., Parthasarathy, Reference Parthasarathy1977) implies that

$X_1$

, therefore the Projection theorem (e.g., Parthasarathy, Reference Parthasarathy1977) implies that

and thus

$$ \begin{align} \phi \in \operatorname{\mathrm{\arg\!\max}}_{b\in \mathbb{R}^p} \frac{b^\top \Sigma_{XY}\Sigma_{YX}b}{b^\top \Sigma_X b}. \end{align} $$

$$ \begin{align} \phi \in \operatorname{\mathrm{\arg\!\max}}_{b\in \mathbb{R}^p} \frac{b^\top \Sigma_{XY}\Sigma_{YX}b}{b^\top \Sigma_X b}. \end{align} $$

As appears readily, if

![]() $\phi $

satisfies Equation (7), so is every vector in

$\phi $

satisfies Equation (7), so is every vector in

![]() $\Phi =\{\alpha \beta | \alpha \in \mathbb {R}\}$

(the direction of

$\Phi =\{\alpha \beta | \alpha \in \mathbb {R}\}$

(the direction of

![]() $\beta $

) that is not the zero vector. Hence, the identification of

$\beta $

) that is not the zero vector. Hence, the identification of

![]() $\beta $

, for instance via the assumption

$\beta $

, for instance via the assumption

![]() $\mathrm {Ker}(\Sigma _X)=\{0\}$

, ensures the identification of

$\mathrm {Ker}(\Sigma _X)=\{0\}$

, ensures the identification of

![]() $\Phi $

. In contrast, the identification of

$\Phi $

. In contrast, the identification of

![]() $\beta $

is obtained from the identification of

$\beta $

is obtained from the identification of

![]() $\Phi $

by orthogonally projecting

$\Phi $

by orthogonally projecting

![]() $Y_1$

onto the

$Y_1$

onto the

![]() $L_2$

subspace of linear functions of

$L_2$

subspace of linear functions of

![]() $X_1^\top \phi $

for any

$X_1^\top \phi $

for any

![]() $\phi \in \Phi $

. This subspace is closed and convex, which ensures the uniqueness of the orthogonal projection. It is also contained in the subspace of the linear function of

$\phi \in \Phi $

. This subspace is closed and convex, which ensures the uniqueness of the orthogonal projection. It is also contained in the subspace of the linear function of

![]() $X_1$

. Hence, considering the orthogonal projection of

$X_1$

. Hence, considering the orthogonal projection of

![]() $Y_1$

as an element of this latter space uniquely identifies the solution of Equation (6), that is,

$Y_1$

as an element of this latter space uniquely identifies the solution of Equation (6), that is,

![]() $\beta $

in model (1).

$\beta $

in model (1).

The previous paragraph implies the following identification theorem.

Theorem 1. In model (1), assume that

![]() $\Sigma _X$

is positive definite, and let

$\Sigma _X$

is positive definite, and let

![]() $\Phi =\{\alpha \beta | \alpha \in \mathbb {R}\}$

be the direction of

$\Phi =\{\alpha \beta | \alpha \in \mathbb {R}\}$

be the direction of

![]() $\beta $

. Then,

$\beta $

. Then,

where

![]() $\lambda _{\max }$

denotes the largest generalized eigenvalue, defined by

$\lambda _{\max }$

denotes the largest generalized eigenvalue, defined by

$$\begin{align*}\lambda_{\max} = \max_{\phi \in \mathbb{R}^p} \frac{\phi^\top \Sigma_{XY} \Sigma_{YX} \phi}{\phi^\top \Sigma_X \phi}. \end{align*}$$

$$\begin{align*}\lambda_{\max} = \max_{\phi \in \mathbb{R}^p} \frac{\phi^\top \Sigma_{XY} \Sigma_{YX} \phi}{\phi^\top \Sigma_X \phi}. \end{align*}$$

Equation (8) can be obtained by explicitly solving the maximization (7). The calculations are given in the Appendix. Hence, in this section, we have identified

![]() $\Phi $

as the solution set of a generalized Rayleigh quotient given in the argument of Equation (7), or equivalently, as the generalized eigenspace associated with the largest generalized eigenvalue of the matrix pair

$\Phi $

as the solution set of a generalized Rayleigh quotient given in the argument of Equation (7), or equivalently, as the generalized eigenspace associated with the largest generalized eigenvalue of the matrix pair

![]() $(\Sigma _{XY}\Sigma _{YX}, \Sigma _{X})$

(Equation (8)).

$(\Sigma _{XY}\Sigma _{YX}, \Sigma _{X})$

(Equation (8)).

This result highlights a general geometric property of linear models, which, to the best of our knowledge, has not been explicitly noted in the literature. However, we mention that a similar equation appears in the context of partial least squares in Sun, Ji, and Ye (Reference Sun, Ji and Ye2009).

3 ESTIMATION UNDER SPARSITY

In this section, we use the identification result of Section 2 to produce a theory of estimation of the parameter

![]() $\beta $

in model (1) under the assumption of sparsity. Although the literature has proposed solving specific GEPs using various least-squares formulations (e.g., Moghaddam et al., Reference Moghaddam, Weiss and Avidan2006, Sun et al., Reference Sun, Ji and Ye2009, Golub and Van Loan, Reference Golub and Van Loan2013), an original aspect of our approach is to employ a GEP to estimate the parameters of a linear model, a task typically achieved by solving least-squares problems.

$\beta $

in model (1) under the assumption of sparsity. Although the literature has proposed solving specific GEPs using various least-squares formulations (e.g., Moghaddam et al., Reference Moghaddam, Weiss and Avidan2006, Sun et al., Reference Sun, Ji and Ye2009, Golub and Van Loan, Reference Golub and Van Loan2013), an original aspect of our approach is to employ a GEP to estimate the parameters of a linear model, a task typically achieved by solving least-squares problems.

Theorem 1 suggests a directional estimation of

![]() $\beta $

involving two steps. First, obtain an estimation of the direction

$\beta $

involving two steps. First, obtain an estimation of the direction

![]() $\beta $

, say

$\beta $

, say

![]() $\hat {\Phi }$

, via GEP. Second, projecting Y to the span of

$\hat {\Phi }$

, via GEP. Second, projecting Y to the span of

![]() $X\hat {\phi }$

, for

$X\hat {\phi }$

, for

![]() $\hat {\phi }\in \hat {\Phi }$

and

$\hat {\phi }\in \hat {\Phi }$

and

![]() $\|\hat {\phi }\|_2=1$

, to obtain (say)

$\|\hat {\phi }\|_2=1$

, to obtain (say)

![]() $\hat {\nu }$

, an estimate of

$\hat {\nu }$

, an estimate of

![]() $\|\beta \|_2$

, and thus obtaining an estimator of

$\|\beta \|_2$

, and thus obtaining an estimator of

![]() $\beta $

by applying the Slutsky lemma to the product

$\beta $

by applying the Slutsky lemma to the product

![]() $\hat {\phi }\hat {\nu }$

.

$\hat {\phi }\hat {\nu }$

.

If model (1) is sparse, that is,

![]() $\|\beta \|_0=s<n<p$

, one may, in principle, employ the directional best subset section defined in (4) to achieve the first step of the directional estimation. As detailed in Proposition A1 in the Appendix, the solution of the best subsets selection (3) would then be exactly recovered after the second step of the procedure. Nevertheless, as stated in the Introduction, both of these problems are NP-Hard, thus, up to the time when the conjecture

$\|\beta \|_0=s<n<p$

, one may, in principle, employ the directional best subset section defined in (4) to achieve the first step of the directional estimation. As detailed in Proposition A1 in the Appendix, the solution of the best subsets selection (3) would then be exactly recovered after the second step of the procedure. Nevertheless, as stated in the Introduction, both of these problems are NP-Hard, thus, up to the time when the conjecture

![]() $P=NP$

has been (dis)proved, the best one can generally hope for in polynomial time is to approximate their solution. This is exactly our suggestion.

$P=NP$

has been (dis)proved, the best one can generally hope for in polynomial time is to approximate their solution. This is exactly our suggestion.

The key point relative to estimation is that Theorem 1 allows to leverage the computational ideas developed in the GEP literature to estimate

![]() $\beta $

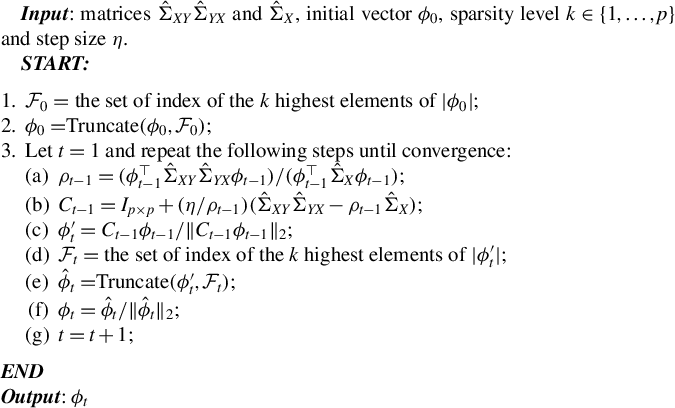

in model (1). We introduce a competitive estimator based on the truncated Rayleigh flow method (called RIFLE) introduced by Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to estimate the solution of GEP in HD under a sparsity constraint. This new estimator is described in Algorithm 1 below and is called Loaded-RIFLE (LR) because it is a modified version of RIFLE.

$\beta $

in model (1). We introduce a competitive estimator based on the truncated Rayleigh flow method (called RIFLE) introduced by Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to estimate the solution of GEP in HD under a sparsity constraint. This new estimator is described in Algorithm 1 below and is called Loaded-RIFLE (LR) because it is a modified version of RIFLE.

Starting from an initial solution, the RIFLE as introduced by Tan et al. (Reference Tan, Wang, Liu and Zhang2018) approximates the solution of a GEP under a sparsity constraint when

![]() $p> n$

. The approach involves iteratively updating the estimated vector in its ascending direction, normalizing it, truncating it so that only the k coefficients with the largest absolute values remain, and then normalizing again. The resulting algorithm is detailed in the Appendix and is referred to in the main text as Algorithm 2. Our experience with RIFLE suggests that under certain conditions (specifically when the step size parameter denoted by

$p> n$

. The approach involves iteratively updating the estimated vector in its ascending direction, normalizing it, truncating it so that only the k coefficients with the largest absolute values remain, and then normalizing again. The resulting algorithm is detailed in the Appendix and is referred to in the main text as Algorithm 2. Our experience with RIFLE suggests that under certain conditions (specifically when the step size parameter denoted by

![]() $\eta $

is small, as expected, at least according to the theory of Tan et al. (Reference Tan, Wang, Liu and Zhang2018)), the selection of variables performed by the algorithm heavily depends on the order of the absolute values in the initial vector

$\eta $

is small, as expected, at least according to the theory of Tan et al. (Reference Tan, Wang, Liu and Zhang2018)), the selection of variables performed by the algorithm heavily depends on the order of the absolute values in the initial vector

![]() $\phi _0$

. In most cases, we find that this dependency limits the accuracy of the estimate. To mitigate this effect, we introduce a variant of RIFLE, that is LR.

$\phi _0$

. In most cases, we find that this dependency limits the accuracy of the estimate. To mitigate this effect, we introduce a variant of RIFLE, that is LR.

Hereafter, let

![]() $\hat {\Sigma }_X := X^\top X / n$

,

$\hat {\Sigma }_X := X^\top X / n$

,

![]() $\hat {\Sigma }_{XY} := X^\top Y / n$

, and

$\hat {\Sigma }_{XY} := X^\top Y / n$

, and

![]() $\hat {\Sigma }_{YX} := (\hat {\Sigma }_{XY})^\top $

serve as estimators for

$\hat {\Sigma }_{YX} := (\hat {\Sigma }_{XY})^\top $

serve as estimators for

![]() $\Sigma _X$

,

$\Sigma _X$

,

![]() $\Sigma _{XY}$

, and

$\Sigma _{XY}$

, and

![]() $\Sigma _{YX}$

, respectively. Moreover, for any vector

$\Sigma _{YX}$

, respectively. Moreover, for any vector

![]() $v \in \mathbb {R}^p$

and subset

$v \in \mathbb {R}^p$

and subset

![]() $\mathcal {K} \subset \{1, \dots , p\}$

, we define

$\mathcal {K} \subset \{1, \dots , p\}$

, we define

![]() $\text {Truncate}(v, \mathcal {K})$

as the vector in

$\text {Truncate}(v, \mathcal {K})$

as the vector in

![]() $\mathbb {R}^p$

given by

$\mathbb {R}^p$

given by

$$ \begin{align*} \left(\text{Truncate}(v, \mathcal{K})\right)_i = \left\{ \begin{array}{ll} v_i, & \text{if } i \in \mathcal{K}, \\ 0, & \text{otherwise}. \end{array} \right. \end{align*} $$

$$ \begin{align*} \left(\text{Truncate}(v, \mathcal{K})\right)_i = \left\{ \begin{array}{ll} v_i, & \text{if } i \in \mathcal{K}, \\ 0, & \text{otherwise}. \end{array} \right. \end{align*} $$

Algorithm 1. Loaded and truncated Rayleigh flow method (Loaded-RIFLE).

LR can be seen as a kind of backward stepwise RIFLE. It basically runs RIFLE for

![]() $k=\overline {k}$

and then uses the output as input of a new run of RIFLE with

$k=\overline {k}$

and then uses the output as input of a new run of RIFLE with

![]() $k=\overline {k}-1$

. The algorithm proceeds as such until

$k=\overline {k}-1$

. The algorithm proceeds as such until

![]() $k=\underline {k}$

. The only difference lies in the IF condition in Step 4.(b)v., which ensures a somewhat smooth update of the set of selected variables. This step is important in extending the RIFLE theory developed by Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to LR.

$k=\underline {k}$

. The only difference lies in the IF condition in Step 4.(b)v., which ensures a somewhat smooth update of the set of selected variables. This step is important in extending the RIFLE theory developed by Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to LR.

The parameter

![]() $\underline {k}$

is a tuning parameter, typically expected to be larger than the true sparsity level s. In the absence of prior knowledge about s,

$\underline {k}$

is a tuning parameter, typically expected to be larger than the true sparsity level s. In the absence of prior knowledge about s,

![]() $\underline {k}$

can be selected using standard tuning procedures (see, e.g., Chetverikov, Reference Chetverikov2024). In the next section, we provide an example and a simulation study illustrating the effectiveness of cross-validation for this purpose.

$\underline {k}$

can be selected using standard tuning procedures (see, e.g., Chetverikov, Reference Chetverikov2024). In the next section, we provide an example and a simulation study illustrating the effectiveness of cross-validation for this purpose.

The theoretical results of the following section suggest that

![]() $\overline {k}$

should be chosen such that

$\overline {k}$

should be chosen such that

![]() $\overline {k} = Cs$

, for some sufficiently large constant C. However, since s is unknown in practice, we propose the following heuristic:

$\overline {k} = Cs$

, for some sufficiently large constant C. However, since s is unknown in practice, we propose the following heuristic:

![]() $\overline {k}$

should be selected to balance the computational cost and the model’s capacity to include all relevant variables. For example, when assuming the prior

$\overline {k}$

should be selected to balance the computational cost and the model’s capacity to include all relevant variables. For example, when assuming the prior

![]() $s \ll n < p$

, the choice

$s \ll n < p$

, the choice

![]() $\overline {k} \approx \frac {3}{4}n$

performs well in practice.

$\overline {k} \approx \frac {3}{4}n$

performs well in practice.

3.1 Minimax Rate of Convergence

Using techniques from Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to study the convergence of RIFLE, we derive a non-asymptotic bound on the

![]() $l_2$

distance between the output of LR and the population target vector, defined hereafter

$l_2$

distance between the output of LR and the population target vector, defined hereafter

![]() $\phi ^\star :=\beta /\|\beta \|_2$

for

$\phi ^\star :=\beta /\|\beta \|_2$

for

![]() $\beta $

in model (1). Based on this bound, we establish the convergence rate of the estimator in a sub-Gaussian setting and demonstrate that it is minimax. Before presenting the theorems, we introduce additional notations, some of which are specific to GEP and some non-standard but useful within the context of this article.

$\beta $

in model (1). Based on this bound, we establish the convergence rate of the estimator in a sub-Gaussian setting and demonstrate that it is minimax. Before presenting the theorems, we introduce additional notations, some of which are specific to GEP and some non-standard but useful within the context of this article.

For any

![]() $Z\in \mathbb {R}^{p\times p}$

and

$Z\in \mathbb {R}^{p\times p}$

and

![]() $k\in \mathbb {N}$

s.t.

$k\in \mathbb {N}$

s.t.

![]() $k\leq p$

, let

$k\leq p$

, let

For fixed k,

![]() $\rho (\cdot ,k):\mathbb {R}^{p\times p} \rightarrow \mathbb {R}$

is a norm on

$\rho (\cdot ,k):\mathbb {R}^{p\times p} \rightarrow \mathbb {R}$

is a norm on

![]() $\mathbb {R}^{p\times p}$

over

$\mathbb {R}^{p\times p}$

over

![]() $\mathbb {R}$

, if Z is symmetric, then

$\mathbb {R}$

, if Z is symmetric, then

![]() $\rho (Z,k)$

is the supremum on a set of maximum eigenvalues of submatrices of Z.

$\rho (Z,k)$

is the supremum on a set of maximum eigenvalues of submatrices of Z.

For any

![]() $k\in \mathbb {N}$

such that

$k\in \mathbb {N}$

such that

![]() $k\leq p$

let

$k\leq p$

let

![]() $\mathcal {F}(p,k):=\{ F\subset \{1,\ldots ,p\}; \mathrm {Card}(F)=k\}$

be the set of sets containing k different integers between

$\mathcal {F}(p,k):=\{ F\subset \{1,\ldots ,p\}; \mathrm {Card}(F)=k\}$

be the set of sets containing k different integers between

![]() $1$

and p. Moreover, let

$1$

and p. Moreover, let

![]() $\{e_i\}_{i=1}^p$

be the canonical basis in

$\{e_i\}_{i=1}^p$

be the canonical basis in

![]() $\mathbb {R}^p$

(i.e.,

$\mathbb {R}^p$

(i.e.,

![]() $e_i$

has zeros on all its elements except on the

$e_i$

has zeros on all its elements except on the

![]() $i^{th}$

position which contains a one) and let

$i^{th}$

position which contains a one) and let

![]() $\mathcal {P}\big (\mathcal {F}(p,k)\big )$

be

$\mathcal {P}\big (\mathcal {F}(p,k)\big )$

be

$$ \begin{align*} \bigg\{P_{(F)}:=(e_{\alpha_1},\ldots,e_{\alpha_k})\in \mathbb{R}^{p\times k} \bigg| F\in \mathcal{F}(p,k); \alpha_i\in F; \alpha_1 < \alpha_2<\cdots< \alpha_k\bigg\}, \end{align*} $$

$$ \begin{align*} \bigg\{P_{(F)}:=(e_{\alpha_1},\ldots,e_{\alpha_k})\in \mathbb{R}^{p\times k} \bigg| F\in \mathcal{F}(p,k); \alpha_i\in F; \alpha_1 < \alpha_2<\cdots< \alpha_k\bigg\}, \end{align*} $$

that is, the set of orthogonal projectors from

![]() $\mathbb {R}^p$

to its k-dimensional subspaces that are spanned by the subsets of

$\mathbb {R}^p$

to its k-dimensional subspaces that are spanned by the subsets of

![]() $\{e_1,\ldots ,e_p\}$

whose cardinalities equal k and that preserve the order of the indexes. This set is useful since for any

$\{e_1,\ldots ,e_p\}$

whose cardinalities equal k and that preserve the order of the indexes. This set is useful since for any

![]() $v\in \mathbb {R}^p$

such that

$v\in \mathbb {R}^p$

such that

![]() $\|v\|_0 = k$

then

$\|v\|_0 = k$

then

![]() $\mathcal {P}\big (\mathcal {F}(p,k)\big )$

contains a matrix P such that

$\mathcal {P}\big (\mathcal {F}(p,k)\big )$

contains a matrix P such that

![]() $w=P^\top v$

,

$w=P^\top v$

,

![]() $w\in \mathbb {R}^k$

and w is equal to v if the former is embodied in

$w\in \mathbb {R}^k$

and w is equal to v if the former is embodied in

![]() $\mathbb {R}^p$

. It is easy to check that any of these P satisfies

$\mathbb {R}^p$

. It is easy to check that any of these P satisfies

![]() $P^\top P= I_{k\times k}$

,

$P^\top P= I_{k\times k}$

,

![]() $\|PP^\top \|_{\mathrm {spec}}=1$

and

$\|PP^\top \|_{\mathrm {spec}}=1$

and

![]() $\|P\|_{\mathrm {spec}}=1$

, where for any

$\|P\|_{\mathrm {spec}}=1$

, where for any

![]() $Z\in \mathbb {R}^{p\times p}$

,

$Z\in \mathbb {R}^{p\times p}$

,

![]() $\|Z\|_{\mathrm {spec}}:= \max _{\|v\|_2=1} \|Zv\|_2$

is the spectral norm of Z.

$\|Z\|_{\mathrm {spec}}:= \max _{\|v\|_2=1} \|Zv\|_2$

is the spectral norm of Z.

For any

![]() $m \in \mathbb {N}$

and

$m \in \mathbb {N}$

and

![]() $Z\in \mathbb {R}^{m\times m}$

, let

$Z\in \mathbb {R}^{m\times m}$

, let

![]() $\lambda _{\mathrm {max}}(Z), \lambda _{\mathrm {min}}(Z), \text { and } \lambda _i(Z)$

be, respectively, the maximum, minimum, and

$\lambda _{\mathrm {max}}(Z), \lambda _{\mathrm {min}}(Z), \text { and } \lambda _i(Z)$

be, respectively, the maximum, minimum, and

![]() $i^{th}$

(in descending order) eigenvalues of Z. If Z is positive definite, let

$i^{th}$

(in descending order) eigenvalues of Z. If Z is positive definite, let

![]() $\kappa (Z) = \lambda _{\mathrm {max}}(Z) / \lambda _{\mathrm {min}}(Z)$

be the condition number of Z (e.g., Golub and Van Loan, Reference Golub and Van Loan2013).

$\kappa (Z) = \lambda _{\mathrm {max}}(Z) / \lambda _{\mathrm {min}}(Z)$

be the condition number of Z (e.g., Golub and Van Loan, Reference Golub and Van Loan2013).

For any

![]() $k\in \mathbb {N}$

s.t.

$k\in \mathbb {N}$

s.t.

![]() $k\leq p$

and any

$k\leq p$

and any

![]() $F\in \mathcal {F}(k,p)$

, let

$F\in \mathcal {F}(k,p)$

, let

![]() $\lambda _i$

,

$\lambda _i$

,

![]() $\lambda _i(F)$

,

$\lambda _i(F)$

,

![]() $\hat {\lambda }_i$

, and

$\hat {\lambda }_i$

, and

![]() $\hat {\lambda }_i(F)$

be the

$\hat {\lambda }_i(F)$

be the

![]() $i^{th}$

(in descending order) generalized eigenvalues of, respectively, the pairs of matrices

$i^{th}$

(in descending order) generalized eigenvalues of, respectively, the pairs of matrices

![]() $\big (\Sigma _{XY}\Sigma _{YX}$

,

$\big (\Sigma _{XY}\Sigma _{YX}$

,

![]() $\Sigma _X \big )$

,

$\Sigma _X \big )$

,

![]() $\big (P_{(F)}^\top \Sigma _{XY}\Sigma _{YX}P_{(F)}$

,

$\big (P_{(F)}^\top \Sigma _{XY}\Sigma _{YX}P_{(F)}$

,

![]() $P_{(F)}^\top \Sigma _X P_{(F)} \big )$

,

$P_{(F)}^\top \Sigma _X P_{(F)} \big )$

,

![]() $\big (\hat {\Sigma }_{XY}\hat {\Sigma }_{YX}$

,

$\big (\hat {\Sigma }_{XY}\hat {\Sigma }_{YX}$

,

![]() $\hat {\Sigma }_X\big ),$

and finally

$\hat {\Sigma }_X\big ),$

and finally

![]() $\big (P_{(F)}^\top \hat {\Sigma }_{XY}\hat {\Sigma }_{YX} P_{(F)}$

,

$\big (P_{(F)}^\top \hat {\Sigma }_{XY}\hat {\Sigma }_{YX} P_{(F)}$

,

![]() $P_{(F)}^\top \hat {\Sigma }_X P_{(F)} \big )$

.

$P_{(F)}^\top \hat {\Sigma }_X P_{(F)} \big )$

.

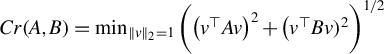

For any

![]() $p\in \mathbb {N}$

and any symmetric matrices

$p\in \mathbb {N}$

and any symmetric matrices

![]() $A,B \in \mathbb {R}^{p\times p}$

, let

$A,B \in \mathbb {R}^{p\times p}$

, let

$$ \begin{align*} Cr(A,B)=\min_{\|v\|_2=1}\bigg( \big(v^\top A v\big)^2 + \big(v^\top B v )^2\bigg)^{1/2} \end{align*} $$

$$ \begin{align*} Cr(A,B)=\min_{\|v\|_2=1}\bigg( \big(v^\top A v\big)^2 + \big(v^\top B v )^2\bigg)^{1/2} \end{align*} $$

be the Crawford number of

![]() $(A,B)$

. Stewart (Reference Stewart1979) shows that if

$(A,B)$

. Stewart (Reference Stewart1979) shows that if

![]() $Cr(A,B)>0,$

the GEP associated with

$Cr(A,B)>0,$

the GEP associated with

![]() $(A,B)$

is definite, namely, it has a complete system of generalized eigenvectors and all its generalized eigenvalues are real.

$(A,B)$

is definite, namely, it has a complete system of generalized eigenvectors and all its generalized eigenvalues are real.

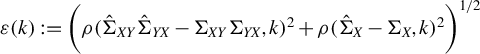

For any

![]() $k\in \mathbb {N}$

such that

$k\in \mathbb {N}$

such that

![]() $k\leq p$

define

$k\leq p$

define

and

$$ \begin{align*} \varepsilon(k):= \bigg( \rho (\hat{\Sigma}_{XY}\hat{\Sigma}_{YX}-\Sigma_{XY}\Sigma_{YX},k)^2 + \rho(\hat{\Sigma}_X-\Sigma_X,k)^2 \bigg)^{1/2}. \end{align*} $$

$$ \begin{align*} \varepsilon(k):= \bigg( \rho (\hat{\Sigma}_{XY}\hat{\Sigma}_{YX}-\Sigma_{XY}\Sigma_{YX},k)^2 + \rho(\hat{\Sigma}_X-\Sigma_X,k)^2 \bigg)^{1/2}. \end{align*} $$

In the next results, we have employed the techniques developed in Tan et al. (Reference Tan, Wang, Liu and Zhang2018) to provide a non-asymptotic bound on the

![]() $l_2$

error of LR.

$l_2$

error of LR.

Theorem 2. In model (1), assume

![]() $\Sigma _X$

is positive definite and that

$\Sigma _X$

is positive definite and that

![]() $\|\beta \|_0=s$

. Let

$\|\beta \|_0=s$

. Let

![]() $\hat {\phi }(t)$

denote the output of Algorithm 1 after t iterations of the last step,

$\hat {\phi }(t)$

denote the output of Algorithm 1 after t iterations of the last step,

![]() $\phi _{0}$

represent the initial truncated vector as specified in Step

$\phi _{0}$

represent the initial truncated vector as specified in Step

![]() $2$

of Algorithm 1 and

$2$

of Algorithm 1 and

![]() $\phi ^\star =\beta /\|\beta \|_2$

stand for the population target vector. Consider the following parameterization for Algorithm 1:

$\phi ^\star =\beta /\|\beta \|_2$

stand for the population target vector. Consider the following parameterization for Algorithm 1:

![]() $\underline {k} = C's<p$

with

$\underline {k} = C's<p$

with

![]() $C'> 1$

,

$C'> 1$

,

![]() $\overline {k} = C" \underline {k}<p-s$

with

$\overline {k} = C" \underline {k}<p-s$

with

![]() $C"> 1$

, and

$C"> 1$

, and

![]() $k' = \overline {k} + s$

. Under this parametrization, select a constant

$k' = \overline {k} + s$

. Under this parametrization, select a constant

![]() $c> 0$

and a step size

$c> 0$

and a step size

![]() $\eta $

such that

$\eta $

such that

$$ \begin{align} \nu &= \left( 1 + \sqrt{\frac{s}{\underline{k}}} \right) \left( 1 - \frac{1 + c}{8} \eta \lambda_{\min}(\Sigma_X) \frac{1}{C_\diamond \kappa(\Sigma_X)} \right)^{1/2} < 1, \end{align} $$

$$ \begin{align} \nu &= \left( 1 + \sqrt{\frac{s}{\underline{k}}} \right) \left( 1 - \frac{1 + c}{8} \eta \lambda_{\min}(\Sigma_X) \frac{1}{C_\diamond \kappa(\Sigma_X)} \right)^{1/2} < 1, \end{align} $$

where

![]() $C_\diamond = (1 + c)/(1 - c)$

. Define

$C_\diamond = (1 + c)/(1 - c)$

. Define

![]() $\Lambda := \lambda _{\mathrm {max}} / (1 + \lambda _{\mathrm {max}}^2)$

. Then, conditioned on the events where

$\Lambda := \lambda _{\mathrm {max}} / (1 + \lambda _{\mathrm {max}}^2)$

. Then, conditioned on the events where

$$ \begin{align} \frac{\varepsilon(k')}{\Delta(k')} &< \frac{\Lambda(1 - \nu) \sqrt{\xi}}{4 \sqrt{10} + \Lambda(1 - \nu) \sqrt{\xi}}, \end{align} $$

$$ \begin{align} \frac{\varepsilon(k')}{\Delta(k')} &< \frac{\Lambda(1 - \nu) \sqrt{\xi}}{4 \sqrt{10} + \Lambda(1 - \nu) \sqrt{\xi}}, \end{align} $$

with

![]() $\xi $

is defined as

$\xi $

is defined as

$$\begin{align*}\xi := \min \left\{ \frac{1}{8 C_\diamond \kappa(\Sigma_X)}, \frac{1}{30 (1 + c) C_\diamond^3 \eta \lambda_{\max} \kappa^3(\Sigma_X)} \right\}, \end{align*}$$

$$\begin{align*}\xi := \min \left\{ \frac{1}{8 C_\diamond \kappa(\Sigma_X)}, \frac{1}{30 (1 + c) C_\diamond^3 \eta \lambda_{\max} \kappa^3(\Sigma_X)} \right\}, \end{align*}$$

the upper bound

$$ \begin{align} \sqrt{1 - |(\phi^\star)^\top \hat{\phi}(t)|} \leq \nu^{t} \sqrt{\xi} + \frac{1}{(1 - \nu)^2} \frac{2\sqrt{5}}{\Lambda \big(\Delta(k') - \varepsilon(k')\big)} \varepsilon(k') \end{align} $$

$$ \begin{align} \sqrt{1 - |(\phi^\star)^\top \hat{\phi}(t)|} \leq \nu^{t} \sqrt{\xi} + \frac{1}{(1 - \nu)^2} \frac{2\sqrt{5}}{\Lambda \big(\Delta(k') - \varepsilon(k')\big)} \varepsilon(k') \end{align} $$

is satisfied almost surely.

Since the theorem extends a known result from Tan et al. (Reference Tan, Wang, Liu and Zhang2018) (originally developed for RIFLE) to LR, we provide a complete proof in the Supplementary Material. This proof also fixes the proof of the theorem for RIFLE given in Tan et al. (Reference Tan, Wang, Liu and Zhang2018). (Our proof is given in the context of our article, but is readily generalizable to the setup of Tan et al. (Reference Tan, Wang, Liu and Zhang2018).)

Theorem 2 enables us to bound the

![]() $l_2$

norm between

$l_2$

norm between

![]() $\phi ^\star $

and

$\phi ^\star $

and

![]() $\hat {\phi }(t)$

. Specifically, when the inner product

$\hat {\phi }(t)$

. Specifically, when the inner product

![]() $\langle \phi ^\star , \hat {\phi }(t) \rangle _2 \geq 0$

, we have

$\langle \phi ^\star , \hat {\phi }(t) \rangle _2 \geq 0$

, we have

![]() $\sqrt {1 - |(\phi ^\star )^\top \hat {\phi }(t)|} = 1/\sqrt {2} \|\phi ^\star - \hat {\phi }(t)\|_2$

.

$\sqrt {1 - |(\phi ^\star )^\top \hat {\phi }(t)|} = 1/\sqrt {2} \|\phi ^\star - \hat {\phi }(t)\|_2$

.

The first term on the right-hand side,

![]() $\nu ^{t} \sqrt {\xi }$

, represents the optimization error, while the second term represents the statistical error (Tan et al., Reference Tan, Wang, Liu and Zhang2018).

$\nu ^{t} \sqrt {\xi }$

, represents the optimization error, while the second term represents the statistical error (Tan et al., Reference Tan, Wang, Liu and Zhang2018).

Assumptions (11) and (12) control the estimation error for the (generalized) spectrum of the matrix pair. Specifically, these assumptions provide bounds on the condition number of

![]() $\Sigma _X$

, on the spectra of submatrices of

$\Sigma _X$

, on the spectra of submatrices of

![]() $\hat {\Sigma }_X$

, and on

$\hat {\Sigma }_X$

, and on

![]() $\hat {\lambda }_i(F)$

for any

$\hat {\lambda }_i(F)$

for any

![]() $F \in \mathcal {F}(p, k')$

and

$F \in \mathcal {F}(p, k')$

and

![]() $i = 1, \dots , k'$

; details can be found in Tan et al. (Reference Tan, Wang, Liu and Zhang2018). As shown later, if

$i = 1, \dots , k'$

; details can be found in Tan et al. (Reference Tan, Wang, Liu and Zhang2018). As shown later, if

![]() $Y_1$

and

$Y_1$

and

![]() $X_1$

are sub-Gaussian, there exist ratios among n,

$X_1$

are sub-Gaussian, there exist ratios among n,

![]() $p(n)$

, and

$p(n)$

, and

![]() $s(n)$

such that the probability of events where these assumptions fail converges to zero as

$s(n)$

such that the probability of events where these assumptions fail converges to zero as

![]() $n \rightarrow \infty $

.

$n \rightarrow \infty $

.

Theorem 2 also requires certain theoretical conditions on the input parameters of Algorithm 1.

Inequalities (9) and (10) impose technical restrictions on step size

![]() $\eta $

. Currently, there is no known method to select

$\eta $

. Currently, there is no known method to select

![]() $\eta $

that consistently meets these theoretical criteria. However, both experience and Assumption (9) from Tan et al. (Reference Tan, Wang, Liu and Zhang2018) suggest selecting

$\eta $

that consistently meets these theoretical criteria. However, both experience and Assumption (9) from Tan et al. (Reference Tan, Wang, Liu and Zhang2018) suggest selecting

![]() $\eta $

small enough to satisfy

$\eta $

small enough to satisfy

![]() $\eta \lambda (\hat {\Sigma }_X) < 1$

.

$\eta \lambda (\hat {\Sigma }_X) < 1$

.

Furthermore, Equation (13) requires that the cosine between the target vector (population)

![]() $\phi ^\star $

and the input vector

$\phi ^\star $

and the input vector

![]() $\phi _0$

be sufficiently large. We discuss the choice of the initial vector in the following result and example. This result gives an explicit convergence rate depending on the initialization of Algorithm 1. The next example shows how this result applies in practice.

$\phi _0$

be sufficiently large. We discuss the choice of the initial vector in the following result and example. This result gives an explicit convergence rate depending on the initialization of Algorithm 1. The next example shows how this result applies in practice.

Proposition 1. In model (1) assume that

![]() $Y_1$

,

$Y_1$

,

![]() $X_{1,1}, \dots , X_{1,p(n)}$

are sub-Gaussian, that

$X_{1,1}, \dots , X_{1,p(n)}$

are sub-Gaussian, that

![]() $\Sigma _X := \mathbb {E}(X_1 X_1^\top )$

is positive definite and that

$\Sigma _X := \mathbb {E}(X_1 X_1^\top )$

is positive definite and that

![]() $\|\beta \|_0 = s$

. Let

$\|\beta \|_0 = s$

. Let

![]() $\hat {\phi }(t)$

denote the output of Algorithm 1 after t iterations in the last step. Let

$\hat {\phi }(t)$

denote the output of Algorithm 1 after t iterations in the last step. Let

![]() $\phi _{0}$

be the initial truncated vector as specified in Step

$\phi _{0}$

be the initial truncated vector as specified in Step

![]() $2$

of Algorithm 1 and

$2$

of Algorithm 1 and

![]() $\phi ^\star =\beta /\|\beta \|_2$

represent the population target vector. Suppose that

$\phi ^\star =\beta /\|\beta \|_2$

represent the population target vector. Suppose that

![]() $\langle \phi ^\star , \hat {\phi }(t) \rangle _2 \geq 0$

a.s. and that

$\langle \phi ^\star , \hat {\phi }(t) \rangle _2 \geq 0$

a.s. and that

![]() $s\log (p/s)/n=o(1)$

and

$s\log (p/s)/n=o(1)$

and

![]() $s/p=o(1)$

. Then, under assumptions (9) and (10) of Theorem 2 if

$s/p=o(1)$

. Then, under assumptions (9) and (10) of Theorem 2 if

with

![]() $\alpha _n = o(1)$

, then there exists a choice of

$\alpha _n = o(1)$

, then there exists a choice of

![]() $t = t(n)$

such that

$t = t(n)$

such that

$$\begin{align*}\|\phi^\star - \hat{\phi}(t)\|_2 = O_p \left( \sqrt{\frac{s \log(p/s)}{n}} \right). \end{align*}$$

$$\begin{align*}\|\phi^\star - \hat{\phi}(t)\|_2 = O_p \left( \sqrt{\frac{s \log(p/s)}{n}} \right). \end{align*}$$

The proof is given in the Appendix. An estimate of the magnitude of

![]() $\beta $

can then be obtained by regressing Y onto the span of

$\beta $

can then be obtained by regressing Y onto the span of

![]() $X\hat {\phi }(t)$

. Let

$X\hat {\phi }(t)$

. Let

![]() $\hat {\upsilon }$

be this estimator, and let

$\hat {\upsilon }$

be this estimator, and let

![]() $\hat {\beta }$

denote the estimator of

$\hat {\beta }$

denote the estimator of

![]() $\beta $

obtained as the product of

$\beta $

obtained as the product of

![]() $\hat {\phi }(t)$

and

$\hat {\phi }(t)$

and

![]() $\hat {\upsilon }$

. Proposition 2 below tells us that for

$\hat {\upsilon }$

. Proposition 2 below tells us that for

![]() $t = t(n)$

growing sufficiently fast, we have

$t = t(n)$

growing sufficiently fast, we have

![]() $\|\hat {\beta } - \beta \|_2 = O_p\big ((s \log (p / s) / n)^{1/2}\big )$

, which is minimax (Raskutti et al., Reference Raskutti, Wainwright and Yu2011).

$\|\hat {\beta } - \beta \|_2 = O_p\big ((s \log (p / s) / n)^{1/2}\big )$

, which is minimax (Raskutti et al., Reference Raskutti, Wainwright and Yu2011).

Example 1 [Loaded-RIFLE-post-Lasso].

To obtain a reliable initial vector, one might follow the heuristic suggested in Tan et al. (Reference Tan, Wang, Liu and Zhang2018) by using a solution from an

![]() $l_1$

relaxation of the

$l_1$

relaxation of the

![]() $l_0$

constrained problem as the initial vector, specifically the Lasso solution for the problem (3) Bertsimas et al., Reference Bertsimas, King and Mazumder2016; Hastie et al., Reference Hastie, Tibshirani and Tibshirani2020.

$l_0$

constrained problem as the initial vector, specifically the Lasso solution for the problem (3) Bertsimas et al., Reference Bertsimas, King and Mazumder2016; Hastie et al., Reference Hastie, Tibshirani and Tibshirani2020.

This choice of initialization is particularly suitable since the Lasso is known to select a large number of covariates when optimized for predictive accuracy. Using its (normalized) solution as the initial vector for Algorithm 1 enables us to reduce the size of the selected support while improving the accuracy of the estimation. As demonstrated in the Monte Carlo experiments in Section 5, this procedure outperforms the other

![]() $l_0$

-constrained estimators considered in this article.

$l_0$

-constrained estimators considered in this article.

The theoretical validity of this initialization procedure depends on how the Lasso is tuned. For example, Chetverikov, Liao, and Chernozhukov (Reference Chetverikov, Liao and Chernozhukov2021) established that the

![]() $l_2$

error of cross-validated Lasso is

$l_2$

error of cross-validated Lasso is

![]() $O_p\big ((s \log (p/s) / n)^{1/2} \times \log (pn)\big )$

. This error rate ensures that the probability of events where the assumptions on

$O_p\big ((s \log (p/s) / n)^{1/2} \times \log (pn)\big )$

. This error rate ensures that the probability of events where the assumptions on

![]() $\phi _0$

in Theorem 2 are violated converges to zero. Other tuning methods for the Lasso that provide similar guarantees are available. For example, one alternative to cross-validation could be to set the penalty parameter based on the self-normalized moderate deviation theory, as proposed in Belloni et al. (Reference Belloni, Chen, Chernozhukov and Hansen2012).

$\phi _0$

in Theorem 2 are violated converges to zero. Other tuning methods for the Lasso that provide similar guarantees are available. For example, one alternative to cross-validation could be to set the penalty parameter based on the self-normalized moderate deviation theory, as proposed in Belloni et al. (Reference Belloni, Chen, Chernozhukov and Hansen2012).

To finalize the theoretical analysis of the convergence of LR, we demonstrate that the convergence rate obtained in Proposition 1 in the sub-Gaussian setting is actually optimal, in the sense that it is the minimax convergence rate of the selection of the directional best subset selection defined by Equation (4). To demonstrate this statement, the following proposition connects the estimation error of

![]() $\beta $

in the model (1) under the assumption of sparsity to the estimation error of

$\beta $

in the model (1) under the assumption of sparsity to the estimation error of

![]() $\phi ^\star $

, thus linking the minimax convergence rates of the two estimators.

$\phi ^\star $

, thus linking the minimax convergence rates of the two estimators.

Proposition 2. In model (1) suppose that

![]() $\Sigma _X := \mathbb {E}(X_1 X_1^\top )$

is positive definite and that

$\Sigma _X := \mathbb {E}(X_1 X_1^\top )$

is positive definite and that

![]() $\|\beta \|_0 = s$

. Let

$\|\beta \|_0 = s$

. Let

![]() $\hat {\phi } \in \mathbb {R}^p$

be an estimator of

$\hat {\phi } \in \mathbb {R}^p$

be an estimator of

![]() $\phi ^\star :=\beta /\|\beta \|_2$

that satisfies the following conditions a.s.:

$\phi ^\star :=\beta /\|\beta \|_2$

that satisfies the following conditions a.s.:

the convergence rate

![]() $\|\hat {\phi } - \phi ^\star \|_2 = O_p(\alpha _n)$

, where

$\|\hat {\phi } - \phi ^\star \|_2 = O_p(\alpha _n)$

, where

![]() $\alpha _n = o(1)$

and

$\alpha _n = o(1)$

and

![]() $(2s / n)^{1/2} / \alpha _n = o(1)$

, and let

$(2s / n)^{1/2} / \alpha _n = o(1)$

, and let

![]() $\hat {\upsilon } = \operatorname {\mathrm {\arg \!\min }}_{m \in \mathbb {R}} \|Y - X \hat {\phi } m\|_2^2$

be an estimator of

$\hat {\upsilon } = \operatorname {\mathrm {\arg \!\min }}_{m \in \mathbb {R}} \|Y - X \hat {\phi } m\|_2^2$

be an estimator of

![]() $\|\beta \|_2$

. Assume that there exist constants

$\|\beta \|_2$

. Assume that there exist constants

![]() $0 < \underline {\kappa } \leq \overline {\kappa } < \infty $

such that, for all

$0 < \underline {\kappa } \leq \overline {\kappa } < \infty $

such that, for all

![]() $u \in \mathbb {R}^p$

with

$u \in \mathbb {R}^p$

with

![]() $\|u\|_2 = 1$

and

$\|u\|_2 = 1$

and

![]() $\|u\|_0 = \min \{2s, p\}$

, the inequality

$\|u\|_0 = \min \{2s, p\}$

, the inequality

holds true almost surely. Then, if

![]() $\hat {\beta } \in \mathbb {R}^p$

is an estimator of

$\hat {\beta } \in \mathbb {R}^p$

is an estimator of

![]() $\beta $

satisfying

$\beta $

satisfying

![]() $\hat {\beta } = \hat {\phi } \hat {\upsilon }$

a.s., we have

$\hat {\beta } = \hat {\phi } \hat {\upsilon }$

a.s., we have

where

![]() $c = O_p(1)$

.

$c = O_p(1)$

.

The proof is given in the Appendix. The proposition provides sufficient conditions to ensure that the

![]() $l_2$

convergence rate of

$l_2$

convergence rate of

![]() $\hat {\beta }$

is dominated by the

$\hat {\beta }$

is dominated by the

![]() $l_2$

convergences of the direction estimator

$l_2$

convergences of the direction estimator

![]() $\hat {\phi }$

.

$\hat {\phi }$

.

This result is important because it implies that the minimax convergence rate in the loss

![]() $l_2$

of the directional best subset selection (4) is the same as that of the best subset selection (3). Raskutti et al. (Reference Raskutti, Wainwright and Yu2011) have proven that in a Gaussian fixed design (that is, restricting oneself to an analysis conditionally on X), if a version of assumption (15) holds, the minimax convergence rate of

$l_2$

of the directional best subset selection (4) is the same as that of the best subset selection (3). Raskutti et al. (Reference Raskutti, Wainwright and Yu2011) have proven that in a Gaussian fixed design (that is, restricting oneself to an analysis conditionally on X), if a version of assumption (15) holds, the minimax convergence rate of

![]() $\|\hat {\beta }-\beta \|_2$

is

$\|\hat {\beta }-\beta \|_2$

is

![]() $(s\log (p/s)/n)^{1/2}$

(since

$(s\log (p/s)/n)^{1/2}$

(since

![]() $\hat {\beta }$

is the best selection of subsets). Given that this rate is obtained in fixed design, it is actually a lower bound on the unconditioned convergence of the best subset selection defined in Equation (3), and thus by Proposition 2 of the directional best subset selection in (4). Since by Proposition 1, LR actually achieves this rate in the sub-Gaussian, unconditioned setting, it must be that the rate obtained in Raskutti et al. (Reference Raskutti, Wainwright and Yu2011) is actually the minimax rate of convergence of the directional best subset selection, thus concluding the demonstration of our claim of optimality of LR.

$\hat {\beta }$

is the best selection of subsets). Given that this rate is obtained in fixed design, it is actually a lower bound on the unconditioned convergence of the best subset selection defined in Equation (3), and thus by Proposition 2 of the directional best subset selection in (4). Since by Proposition 1, LR actually achieves this rate in the sub-Gaussian, unconditioned setting, it must be that the rate obtained in Raskutti et al. (Reference Raskutti, Wainwright and Yu2011) is actually the minimax rate of convergence of the directional best subset selection, thus concluding the demonstration of our claim of optimality of LR.

3.2 Central Limit Theorem

In this section, we establish a CLT for the output of LR. Before presenting the results, we introduce the following definition, which is essential for the statement and proof of the theorem.

Definition 1. In model (1), let

![]() $\phi ^\star :=\beta /\|\beta \|_2$

as above, and

$\phi ^\star :=\beta /\|\beta \|_2$

as above, and

![]() $\|\beta \|_0<k\in \mathbb {N}$

. Hereafter, we reserve the notation

$\|\beta \|_0<k\in \mathbb {N}$

. Hereafter, we reserve the notation

![]() $\mathcal {Q}^\star $

for the set of matrices defined by

$\mathcal {Q}^\star $

for the set of matrices defined by

Moreover, hereafter, we define for any output of Algorithm 2 noted

![]() $\hat {\phi }(t)$

$\hat {\phi }(t)$

where

![]() $P_t$

is the element of

$P_t$

is the element of

![]() $\mathcal {P}(\mathcal {F}(p,\|\hat {\phi }(t)\|_0))$

that is such that if

$\mathcal {P}(\mathcal {F}(p,\|\hat {\phi }(t)\|_0))$

that is such that if

![]() $w= P_t^\top \hat {\phi }(t),$

then w is equal to

$w= P_t^\top \hat {\phi }(t),$

then w is equal to

![]() $\hat {\phi }(t)$

if the former is embodied in

$\hat {\phi }(t)$

if the former is embodied in

![]() $\mathbb {R}^p$

.

$\mathbb {R}^p$

.

We note that any output of Algorithm 1, say

![]() $\hat {\phi }(t)$

, that is such that

$\hat {\phi }(t)$

, that is such that

![]() $Q\big (\hat {\phi }(t)\big )\in \mathcal {Q}^\star $

, has a support containing the support of

$Q\big (\hat {\phi }(t)\big )\in \mathcal {Q}^\star $

, has a support containing the support of

![]() $\phi ^{\star }$

. From Lemma A5 in the Appendix, one can show that in the sub-Gaussian setting, for

$\phi ^{\star }$

. From Lemma A5 in the Appendix, one can show that in the sub-Gaussian setting, for

![]() $t=t(n)$

fast enough,

$t=t(n)$

fast enough,

![]() $Q\big (\hat {\phi }(t)\big )\in \mathcal {Q}^\star $

with probability tending to one, i.e., LR possesses sure screening property Fan and Lv, Reference Fan and Lv2008; Guo et al., Reference Guo, Zhu and Fan2020.

$Q\big (\hat {\phi }(t)\big )\in \mathcal {Q}^\star $

with probability tending to one, i.e., LR possesses sure screening property Fan and Lv, Reference Fan and Lv2008; Guo et al., Reference Guo, Zhu and Fan2020.

In the following, we make use of the following convention.

Notation 1. Let

![]() $\{a_\alpha \}_{\alpha \in \mathbb {N}}$

be a family of operators in a vector space, if

$\{a_\alpha \}_{\alpha \in \mathbb {N}}$

be a family of operators in a vector space, if

![]() $j<i,$

then

$j<i,$

then

![]() $\prod _{\alpha =i}^j a_\alpha =1$

. where in the above expression,

$\prod _{\alpha =i}^j a_\alpha =1$

. where in the above expression,

![]() $1$

represents the unitary operator in the vector space. If

$1$

represents the unitary operator in the vector space. If

![]() $j \geq i,$

the symbol

$j \geq i,$

the symbol

![]() $\prod $

is used conventionally.

$\prod $

is used conventionally.

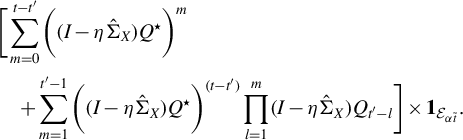

We now state the CLT for the output of Algorithm 1.

Theorem 3. In model (1), suppose that

![]() $ \Sigma _X := \mathbb {E}(X_1 X_1^\top ) $

is positive definite and

$ \Sigma _X := \mathbb {E}(X_1 X_1^\top ) $

is positive definite and

![]() $ \|\beta \|_0=s $

.

$ \|\beta \|_0=s $

.

Let

![]() $ \sigma ^2 = \operatorname {Var}(U_1) $

,

$ \sigma ^2 = \operatorname {Var}(U_1) $

,

![]() $ \phi ^\star := \beta / \|\beta \|_{2} $

and

$ \phi ^\star := \beta / \|\beta \|_{2} $

and

![]() $\hat {\phi }(t)$

be the output of Algorithm 1 after t iteration of the last step. Moreover, let

$\hat {\phi }(t)$

be the output of Algorithm 1 after t iteration of the last step. Moreover, let

![]() $\eta, \phi_{t-1} $

and

$\eta, \phi_{t-1} $

and

![]() $ C_{t-1} $

be as in Algorithm 2 and let

$ C_{t-1} $

be as in Algorithm 2 and let

![]() $ \tilde {\phi _t} := C_{t-1} \phi _{t-1} $

. Assume

$ \tilde {\phi _t} := C_{t-1} \phi _{t-1} $

. Assume

![]() $ t $

is an increasing function of

$ t $

is an increasing function of

![]() $ n $

and that

$ n $

and that

![]() $ \langle \phi ^\star , \hat {\phi }(t) \rangle _2 \geq 0 $

holds almost surely.

$ \langle \phi ^\star , \hat {\phi }(t) \rangle _2 \geq 0 $

holds almost surely.

Suppose there exist constants

![]() $ \xi> 0 $

and

$ \xi> 0 $

and

![]() $ \nu < 1 $

such that

$ \nu < 1 $

such that

$$ \begin{align} \|\phi^\star - \hat{\phi}(t)\|_2 &\leq \nu^t \sqrt{\xi} + O_p\bigg(\sqrt{\frac{s \log(p/s)}{n}}\bigg), \end{align} $$

$$ \begin{align} \|\phi^\star - \hat{\phi}(t)\|_2 &\leq \nu^t \sqrt{\xi} + O_p\bigg(\sqrt{\frac{s \log(p/s)}{n}}\bigg), \end{align} $$

and that

![]() $ \inf _{\|v\|_{2} = 1, \|v\|_0 = k} |v^\top \Sigma _{XY}|> 0 $

with

$ \inf _{\|v\|_{2} = 1, \|v\|_0 = k} |v^\top \Sigma _{XY}|> 0 $

with

![]() $ \log (n)/t(n) = o(1) $

.

$ \log (n)/t(n) = o(1) $

.

Then, conditionally on

![]() $ X $

, there exists a matrix

$ X $

, there exists a matrix

![]() $ Q^\star \in \mathcal {Q}^\star $

and a constant

$ Q^\star \in \mathcal {Q}^\star $

and a constant

![]() $ K> 0 $

such that if

$ K> 0 $

such that if

![]() $ \eta < K $

, then for any unit vector

$ \eta < K $

, then for any unit vector

![]() $ w \in \mathbb {R}^p, \|w\|_0 \leq \|\hat {\phi }(t)\|_0 $

, we have

$ w \in \mathbb {R}^p, \|w\|_0 \leq \|\hat {\phi }(t)\|_0 $

, we have

is asymptotically normal,

$$\begin{align*}\mathcal{AN}\left( 0, w^\top [(I - Q^\star) + \eta \hat{\Sigma}_X Q^\star]^{-1} \eta^2 \hat{\Sigma}_X[(I - Q^\star) + \eta \hat{\Sigma}_X Q^\star]^{-1} w \frac{\sigma^2}{\|\beta\|_{2}^2} \bigg| X \right), \end{align*}$$

$$\begin{align*}\mathcal{AN}\left( 0, w^\top [(I - Q^\star) + \eta \hat{\Sigma}_X Q^\star]^{-1} \eta^2 \hat{\Sigma}_X[(I - Q^\star) + \eta \hat{\Sigma}_X Q^\star]^{-1} w \frac{\sigma^2}{\|\beta\|_{2}^2} \bigg| X \right), \end{align*}$$

with

![]() $ \|\gamma _n\|_2 = o_p(1) $

, where

$ \|\gamma _n\|_2 = o_p(1) $

, where

![]() $ \gamma _n := \kappa _n + \tau _n $

.

$ \gamma _n := \kappa _n + \tau _n $

.

Here,

$$\begin{align*}\kappa_n = \sum_{m=0}^{t-1} \left( \prod_{l=1}^m (I_{p \times p} - \eta \hat{\Sigma}_X) Q_{t-l} \right)(\phi_{t-l} - \tilde{\phi}_{t-l}), \end{align*}$$

$$\begin{align*}\kappa_n = \sum_{m=0}^{t-1} \left( \prod_{l=1}^m (I_{p \times p} - \eta \hat{\Sigma}_X) Q_{t-l} \right)(\phi_{t-l} - \tilde{\phi}_{t-l}), \end{align*}$$

and

$$\begin{align*}\tau_n = \left[ \sum_{m=0}^{t-1} \left( \prod_{l=1}^m (I_{p \times p} - \eta \hat{\Sigma}_X) Q_{t-l} g_{t-1-m} \right) \big( (I - Q^{\star}) + \eta \hat{\Sigma}_X Q^{\star} \big) - I_{p \times p} \right] \phi^\star, \end{align*}$$

$$\begin{align*}\tau_n = \left[ \sum_{m=0}^{t-1} \left( \prod_{l=1}^m (I_{p \times p} - \eta \hat{\Sigma}_X) Q_{t-l} g_{t-1-m} \right) \big( (I - Q^{\star}) + \eta \hat{\Sigma}_X Q^{\star} \big) - I_{p \times p} \right] \phi^\star, \end{align*}$$

with

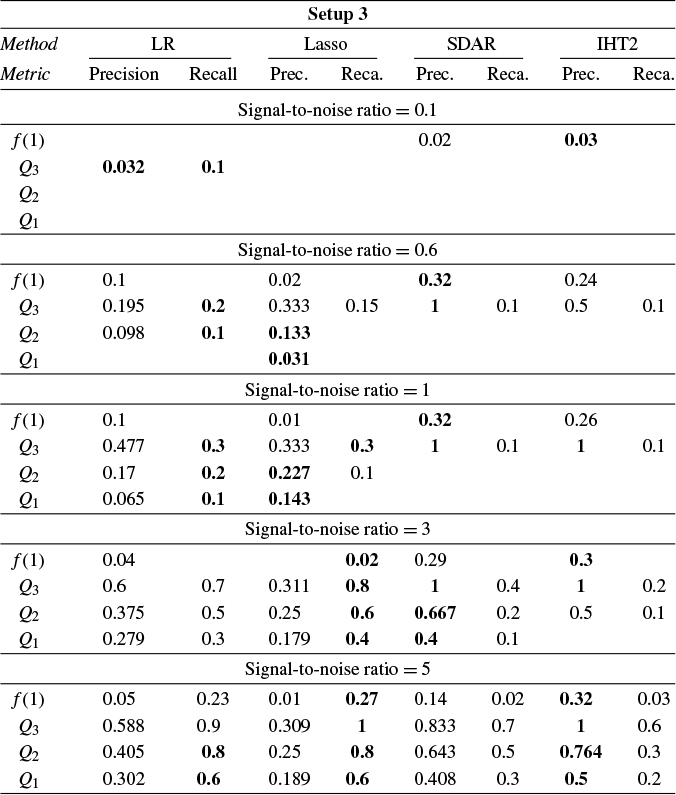

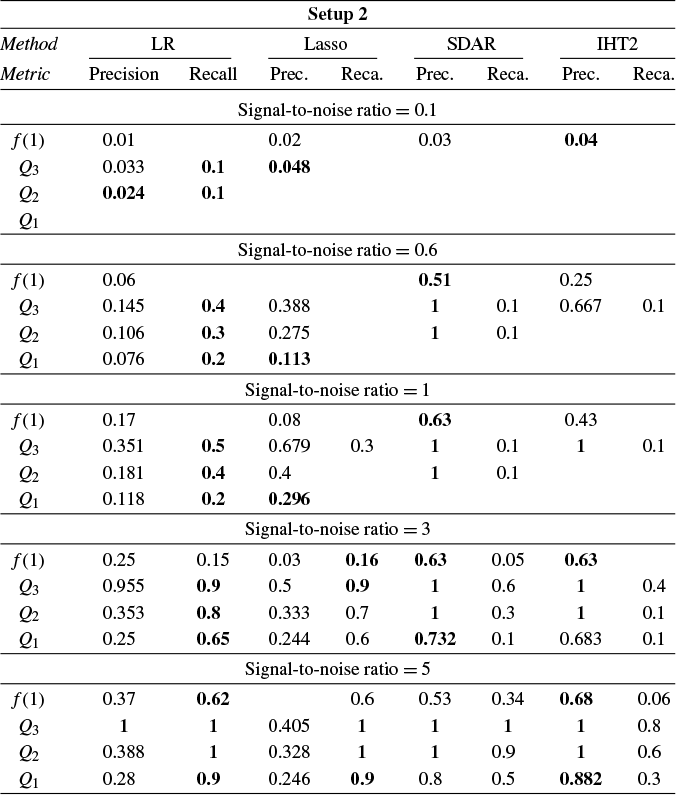

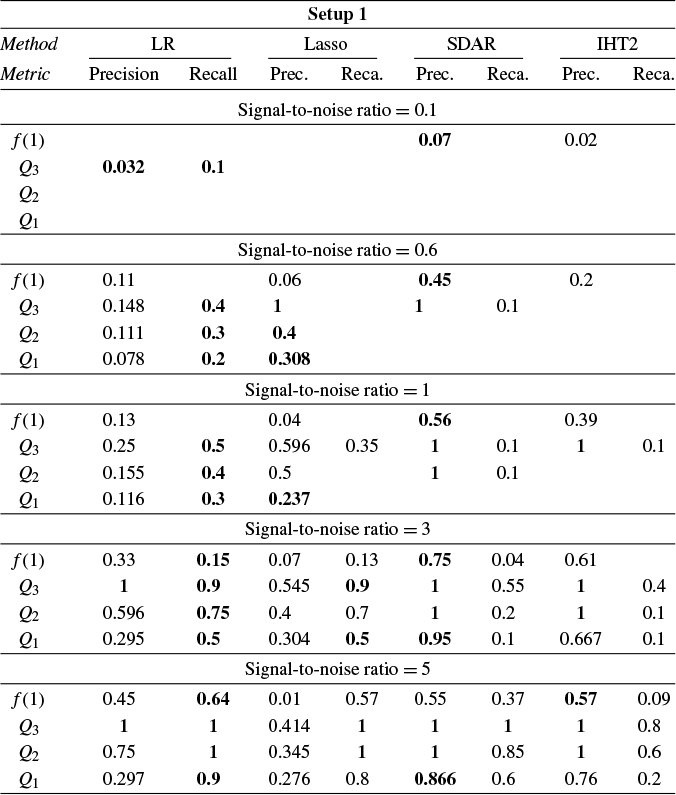

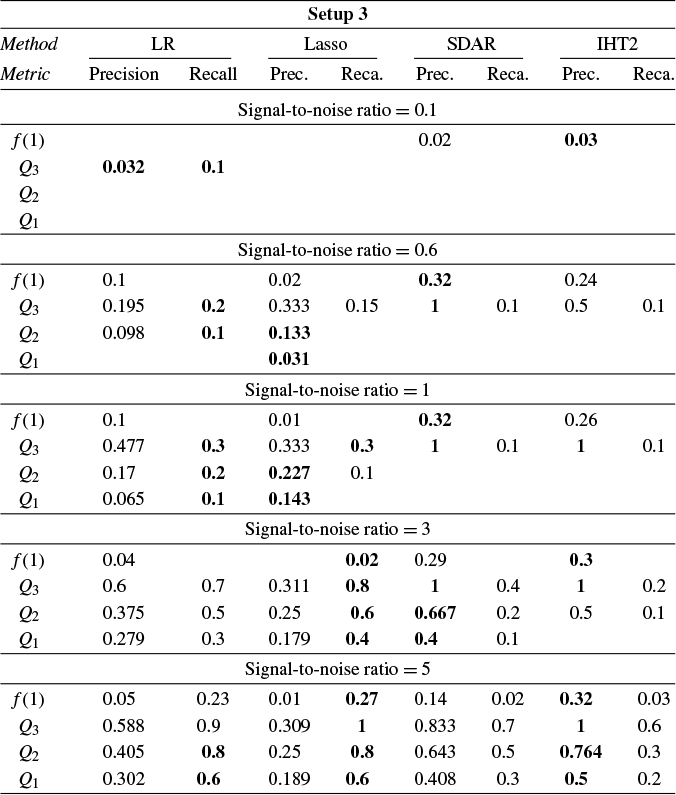

$$\begin{align*}g_{t-1-m} = \frac{\phi_{t-1-m}^\top \hat{\Sigma}_X \phi_{t-1-m}}{\phi_{t-1-m}^\top \hat{\Sigma}_X \phi^\star + \phi_{t-1-m}^\top \frac{X^\top U}{\|\beta\|_{2} n}}. \end{align*}$$