1 Introduction

Artificial intelligence (AI) has become deeply integrated into modern society, and many children today are regularly exposed to AI-powered devices, content, and experiences. According to a recent national survey, an overwhelming majority (87 percent) of American children aged three to twelve now have access to AI-powered devices, from voice assistants to smartphones, with half using them daily (Bickham et al., 2024). Children increasingly rely on digital assistants like Siri, Alexa, or ChatGPT to ask questions and seek information, engage in interactive storytelling with social robots, or play with smart toys that can recognize and respond to facial expressions. Additionally, AI algorithms are now integral to adaptive video games and intelligent tutoring systems, which personalize content to suit individual learning needs. More recently, AI has entered the children’s publishing industry, producing storybooks with polished narratives and AI-generated illustrations. The growing presence of AI has sparked significant attention – both in academia and among the general public – about the potential impact of AI on children’s development.

The term “AI” encompasses a wide range of technologies, including computer vision, classification, robotics, natural language processing, and speech recognition. This breadth is reflected in the following definition by the Organisation for Economic Co-operation and Development (OECD): “A machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations, or decisions influencing real or virtual environments. It uses machine and/or human-based inputs to perceive real and/or virtual environments, abstracts such perceptions into models, and employs model inference to formulate options for information or action” (OECD, 2019). While AI is a broad and complex concept, it is often treated in public discourse and everyday understanding as a singular unified technology. For the purpose of this Element, to avoid both oversimplification and getting too caught up in technical details, we adopt a more pragmatic approach to define what we mean by AI rather than relying solely on a technological perspective. We use the term “AI” to refer to the group of technologies or devices that children perceive and engage with as interactive partners – often known as “conversational agents” – which include voice assistants (e.g., Siri, Alexa), chatbots, AI-enabled toys, and social robots. While the underlying technologies that power these agents may vary – often combining multiple methods such as natural language processing, speech recognition, and machine learning – their defining characteristic is the capacity to support meaningful, human-like communication between users and machines. This kind of conversational AI is particularly relevant to child development because conversation plays a crucial role in shaping their growth (Xu, 2023).
Decades of research have demonstrated that engaging in back-and-forth dialogues with parents, siblings, teachers, and peers helps children develop language skills and deepen their understanding of others and the world around them. Both the quantity and quality of language exposure significantly influence various developmental outcomes (Golinkoff et al., 2019). Furthermore, conversations are vital for children to learn concepts and acquire knowledge they might not otherwise encounter firsthand (Gelman, 2003). This raises important questions about how interactions with AI fit within, and potentially reshape, the broader landscape of children’s conversational experiences.

It is fair to say that many of our discussions and concerns about AI in relation to children are not entirely new. Since the introduction of digital media technologies for children – beginning with earlier media like television, followed by mobile apps and, more recently, social media – there has been ongoing debate about how these tools influence the way children engage in learning experiences and interact with others, and how this may affect their development. Some are concerned about “displacement” – the idea that excessive use of these technologies by children could reduce the amount and quality of other important activities, thus potentially hindering their social and cognitive development. On the other hand, some believe these technologies offer new ways to enhance and enrich the way children learn. For example, some studies have shown that when parents and children interact with digital books together, children may focus more on the digital features, which can lead to less meaningful conversation (e.g., Munzer et al., 2019). At the same time, digital books with narration features help preliterate children enjoy stories on their own when caregivers are not available (Egert et al., 2022).

We face similar questions when it comes to conversational AI, which could have both positive and negative implications for children’s development. On the one hand, AI can greatly expand children’s access to information, making it easier for them to obtain knowledge by simply asking, much like they would from other people. It also makes it more feasible to cultivate personalized learning experiences for children. On the other hand, AI’s ability to provide quick answers might, to some extent, reduce the need for children to engage in problem-solving and critical reflection, potentially limiting their opportunities to develop both critical thinking and foundational academic skills. In addition, while AI portrayed as a companion might offer children a sense of companionship when desired, there are concerns about how this might affect their relationships with the people around them. These questions about whether conversational AI benefits or hinders children’s learning and development fuel the polarized views that drive much of the current discussion. These views often remain speculative and opinion based due to the lack of direct evidence or the inconclusive nature of the available evidence thus far.

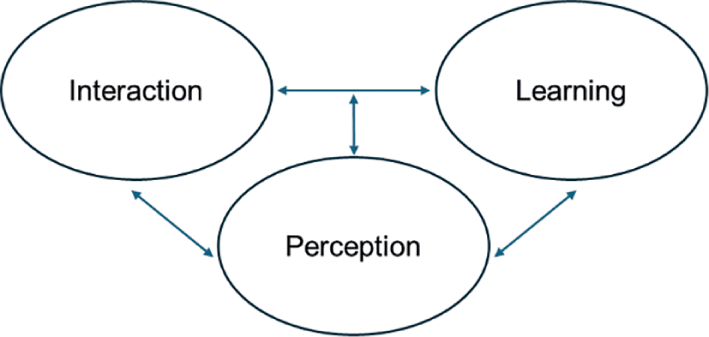

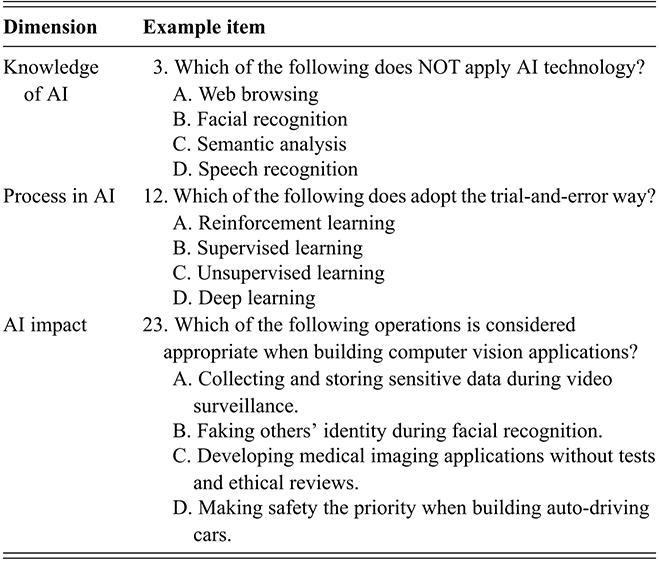

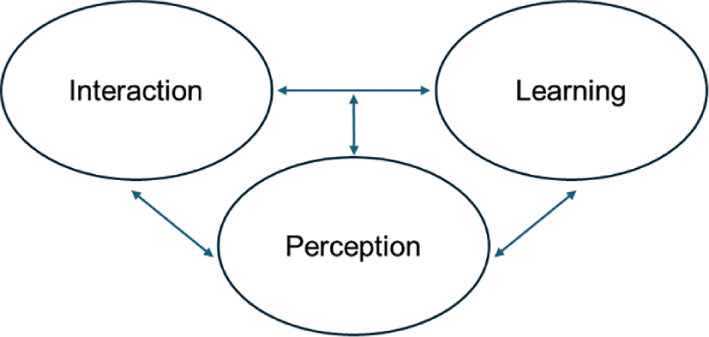

This Element aims to provide an overview of what is currently known about children’s development in the era of AI. At the same time, it acknowledges the many different questions that remain unanswered and seeks to foster readers’ curiosity about these unknowns. To bring structure to this rapidly emerging landscape, the Element focuses on three central areas: interactions, perceptions, and learning. The first area focuses on how interactions serve as a foundation for how children engage with AI. These interactions shape the relationships children form with AI and set the stage for their understanding and learning. The second area, perceptions and beliefs, examines how children make sense of AI based on their experiences and existing understanding of the world. Their perceptions are influenced both by their direct experiences and knowledge of AI and their developmental capacities. The final area, learning and development, explores how AI affects children’s growth in areas like language development, subject-domain learning, problem-solving, and creativity. It also considers the potential benefits and risks of AI in these areas, emphasizing the need for thoughtful design and use of these technologies. Figure 1 illustrates the overall organization of the Element and the relationships among the three areas. We will examine these relationships in greater detail in the Conclusion.

Figure 1 Three focal components concerning children’s interactions with AI.

Figure 1 long description: Three ovals (Interaction, Perception, and Learning) are connected by two-way arrows, showing reciprocal relationships. Interaction and Learning are linked to each other, and both are also connected to Perception, forming a triangular cycle.

When we use the term “children” in this Element, we are primarily referring to those in early childhood – specifically, children in preschool through the early elementary years. This focus is deliberate for two interrelated reasons. First, our inquiry into AI’s implications for child development mirrors longstanding questions about how children acquire knowledge, form beliefs, and interact with social agents – whether human or artificial. This inquiry is closely linked to the following question: To what extent do children distinguish between humans and machines? This question is deeply tied to their developing abilities in categorization and understanding social groupings (Rakison & Poulin-Dubois, 2001). Early theorists like Jean Piaget explained that young children often use “magical thinking” or anthropomorphism – that is, they may believe nonhuman things have human-like qualities. Yet contemporary psychologists argue that children’s classifications are not based solely on salient appearances but also on underlying essences – stable, internal properties that define category membership – showing that children have a more sophisticated understanding than previously thought (Gelman, 2003). Children from preschool through elementary school begin to form foundational beliefs about the nature of things, which influence how they categorize and make sense of the world around them. Studying children’s interactions with AI during this period provides an opportunity to explore how these early concepts of category and agency develop when children encounter machines that can behave in human-like ways. Second, and relatedly, for many young children, interactions with AI – such as smart toys, voice assistants, or learning platforms – represent their first encounters with intelligent systems.
These early experiences often occur before the influence of formal schooling or strong exposure to cultural narratives about what AI is, offering a unique window into their intuitive and spontaneous thinking.

2 A Note on Research Methods

Exploring the intersection of child development and AI is inherently interdisciplinary, drawing from psychology, computer science, communication, and education – each with its own rich history of research. The current state of research reflects this interdisciplinary nature, grounded in different theories, methodologies, and approaches. Inevitably, these methodologies come with their own strengths and weaknesses, as well as different ways in which the results should be interpreted. Thus, it is necessary to briefly clarify the common methodologies used by researchers to examine AI and child development before diving into the specific study results.

2.1 Types of AI Used in Research Studies

Researchers have used AI in very different ways across studies – some rely on commercial products like Alexa, others use custom-built research prototypes, and some use simulated systems that are actually controlled by humans. These choices affect how we interpret the findings. For example, they influence how generalizable the results are, whether the outcomes could be replicated in real-world settings, and whether the findings reflect typical experiences or ideal, best-case scenarios.

The first type involves using commercially available products. Some of these are specifically designed for children, such as the Echo Dot Kids Edition and Khanmigo, while others, such as ChatGPT, are more general purpose and not tailored to child users. Given that these products are readily available and commonly used by children, studies using them typically yield results with strong ecological validity, as they reflect real-world conditions and naturalistic usage patterns. For instance, according to Khanmigo’s report (Khan Academy, 2024), more than 200,000 individuals used their product during the 2023–2024 school year. Students often use Khanmigo as a tutor, seeking step-by-step support when they get stuck. However, a limitation of using off-the-shelf systems for research is that, since these products are already developed and encapsulated in commercial software, researchers have limited flexibility to modify them. This makes it challenging to isolate and examine the specific mechanisms that might influence children’s interactions with these systems or the learning outcomes they produce. In addition, some of the products studied might not be specifically designed for children (e.g., ChatGPT); as a result, the affordances or limitations observed in these studies might not represent the most ideal outcomes AI could bring to children.

The second type of AI systems used in studies consists of proof-of-concept prototypes or research-developed tools that are intentionally designed to promote specific learning or developmental outcomes. These systems are often grounded in particular theories of child development or pedagogy and are built to embody strategies believed to support those outcomes – such as fostering self-regulation, encouraging dialogic learning, or scaffolding problem-solving. This intentional alignment between theory and design allows researchers to isolate and test the impact of specific features. By comparing children’s outcomes with and without these targeted features or different design principles, studies can generate precise insights into which design elements are effective and under what conditions. As a result, this type of research is especially valuable for advancing both theory and practice – it helps identify not just whether an AI tool works but why it works.

Lastly, the third type of AI is “simulated AI.” This method, often called the “Wizard of Oz” approach, involves researchers covertly operating the devices by hand while telling participants that they are interacting with an AI tool. The key advantage of this approach is that it allows the research team to precisely control the AI tool’s behaviors, avoiding confounding factors caused by unpredictable AI errors or limitations. For example, researchers typically follow a strict, predefined script when operating the simulated AI tool, which may even include staged errors to observe specific reactions or scenarios. Moreover, although this method does not employ autonomous AI systems, the rapid advancement of AI technologies toward human-like behaviors makes this approach increasingly relevant. Indeed, using human operators to simulate AI can provide a valuable, forward-looking perspective on how AI may function in the near future.

2.2 Study Design

In addition to understanding the type of AI implementation, it is also important to consider how studies on AI are designed, as this shapes the kinds of questions researchers can ask and the conclusions they can draw. Broadly, studies examining AI and children usually use three main types of design.

The first type comprises descriptive studies, which aim to document and understand patterns of behavior. Researchers use tools like surveys, interviews, field notes, and fine-grained log data to capture children’s access to or engagement with AI, which helps to identify trends and describe phenomena. Several notable examples include recent pulse surveys on children’s use of AI tools (Bickham et al., 2024; Madden et al., 2024); they offer readers a bird’s-eye view of the rapid proliferation of AI adoption in early childhood. Other descriptive studies may be smaller in scale but use more in-depth data, such as studies that use log data to record the interactions of a few dozen children with AI over time and understand the types of questions they initiate (Oh et al., 2025).

The second type of design consists of correlational studies, which examine relationships between variables to explore how certain factors may predict or relate to children’s interactions with or learning from AI. For example, researchers might investigate whether a child’s age, language background, or prior technology exposure predicts how often they engage with an AI tool or how much they learn from it. While correlational studies do not prove causation, they are valuable for identifying meaningful patterns – such as whether children from higher-income households are more likely to access AI-driven educational apps, or whether children who have more frequent interactions with AI systems show stronger gains in certain skills (Klarin et al., 2024). These findings can point researchers toward important questions to test further through experiments.

The third type of design comprises experimental studies, which are designed to test causal relationships – in other words, to determine whether and how AI directly influences children’s learning or behavior. These studies allow researchers to isolate specific variables and assess their effects through controlled comparisons. For instance, one common approach is to test the “added value” of AI by comparing outcomes between children who engage with an AI-enhanced activity and those who complete the same activity without AI. This helps determine whether AI contributes something meaningful beyond the baseline experience. Other experiments may compare children’s interactions with AI to those with human experts, such as one-on-one tutors. In this case, the human condition serves as a gold standard, allowing researchers to evaluate how closely AI can replicate the effectiveness of expert instruction. Other studies focus on specific design features of AI – such as tone of voice, expressiveness, or responsiveness – by manipulating just one feature while holding everything else constant. This allows for a precise examination of which characteristics of AI influence child outcomes and in what ways. In all cases, choosing what to compare is not a trivial decision. It reflects different underlying research questions and requires careful methodological consideration to ensure the results are both valid and meaningful.

2.3 Study Population

Much of the current research on AI’s role in child development has been conducted in regions that are relatively at the forefront of AI innovation and adoption, such as the United States, Europe, and parts of Asia. This geographic concentration might be a matter of convenience – reflecting where researchers and technical infrastructure are located – and it is often treated as if the outcomes did not depend on the population studied. Yet the underlying assumption of this approach is that AI technologies are culturally neutral. In reality, AI systems are shaped by the data on which they are trained, which often encode the dominant norms, values, and biases of the societies that produce them. As a result, children do not encounter AI as a culturally blank slate but as systems embedded with implicit assumptions about language, behavior, and identity.

Two key issues highlight why AI cannot be assumed to be neutral and why study populations matter. First, AI systems may perform differently depending on a child’s background. For instance, automatic speech recognition (ASR) systems have been shown to work significantly better for monolingual English-speaking children than for their bilingual peers. A recent study by Thomas et al. (2023) found that performance was lowest for bilingual children who were more dominant in their home language. Such disparities introduce inequities in children’s ability to benefit from AI-enhanced educational tools. Second, beyond performance disparities, there are challenges of cultural alignment and representation. Children may treat AI agents not just as tools but as social partners – entities that speak to, respond to, and even form relationships with them. When these AI agents primarily reflect Western, mainstream cultural norms, children from marginalized or minoritized communities may find it harder to relate, resulting in reduced engagement and less effective learning. In one exemplar study, Finkelstein et al. (2013) found that children who interacted with an AI tutor that spoke African American Vernacular English (AAVE) built stronger rapport and engaged more deeply, leading to better educational outcomes.

These concerns do not arise in isolation or solely from AI technologies themselves. Rather, they reflect broader societal inequities that are encoded into training data and algorithmic design. Just as teachers must navigate the complexities of supporting students from diverse backgrounds, designers and researchers must attend to how children’s identities and lived experiences shape – and are shaped by – their interactions with AI. Importantly, AI may amplify these challenges in unique ways. For instance, if children view AI as an omniscient source of knowledge, they might be less likely to question the biased information generated by AI than they would with information provided by people. While many studies have acknowledged the ethical and equity implications of AI biases and misalignments, little research has formally explored how these factors might attenuate or modulate the effects AI can have on children from diverse backgrounds. This consideration is essential for framing the interpretation of the research findings discussed in the following sections, as these findings may not fully apply across all cultural, socioeconomic, or contextual settings.

3 Children’s Interactions with AI Agents

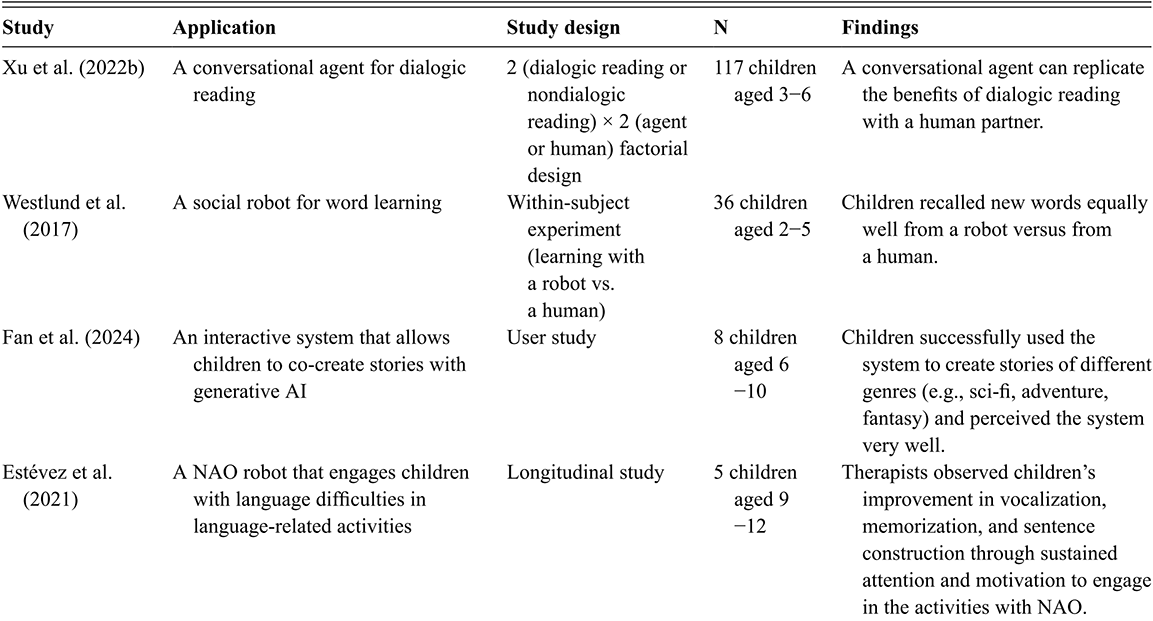

3.1 How Do Children Talk to AI?

Children today interact with AI agents that vary widely in design and capability. Many of these agents can understand spoken or text-based input and generate responses, making them appear capable of conversation on the surface. Researchers studying children’s development are deeply interested in how children actually engage with these agents in everyday contexts. Observing how children talk with AI offers insights not only into their usage patterns but also their interpretations and expectations of these systems.

For example, Lovato et al. (2019) recorded children’s interactions with Google Assistant and analyzed the types of questions asked. They found that children aged five and six often used these agents to seek factual information – questions about science, technology, math, or practical concerns like the weather. This pattern of fact-seeking may be expected and mirrors the way many adults use voice assistants. However, Lovato et al. also documented a considerable number of socially oriented questions, in which children treated the agent as if it had personal attributes, asking things like “How old are you?” or “What’s your favorite color?” A similar trend emerged in a later study on chatbots powered by generative AI with slightly older children aged eight to ten: Although most questions were factual, some focused on the AI tool’s personal characteristics (Oh et al., 2025).

Children’s socially framed questions – such as asking an AI tool about its favorite color or whether it has a family – have been interpreted in different ways. One common interpretation is that children are anthropomorphizing the AI tool, treating it as a social being with thoughts, preferences, or relationships. This view assumes that if children fully understood AI as a mechanical or nonhuman entity, they would not ask such questions. However, this explanation may overlook a second possibility: that children engage in such questioning not only out of belief in the AI’s human likeness but as a form of playful exploration. Even when aware that the AI tool is not a person, children may ask socially oriented questions to test the system’s boundaries, to elicit surprising responses, or simply to enjoy the novelty of the interaction. In this view, such behaviors are driven more by curiosity and experimentation than by mistaken beliefs about the nature of AI.

Tentative support for this perspective comes from a longitudinal study. In a two-month deployment of a social robot, Kanda et al. (2007) observed that elementary-aged children initially asked many personal questions (e.g., about the robot’s feelings or background), but the frequency of such questions declined over time. A more recent study involving generative AI showed a similar pattern: Children initially posed socially framed questions like “Do you go to school?” but gradually shifted toward more transactional queries, such as requests for help or information (Oh et al., 2025). If children truly believed the AI tool was a social being, one might expect such personal questions to increase over time as rapport builds and social connection deepens. Instead, the observed decline in social questions suggests that children’s early interactions may often reflect playfulness and novelty seeking.

3.2 Do Children Talk to AI Differently Than They Talk to Humans?

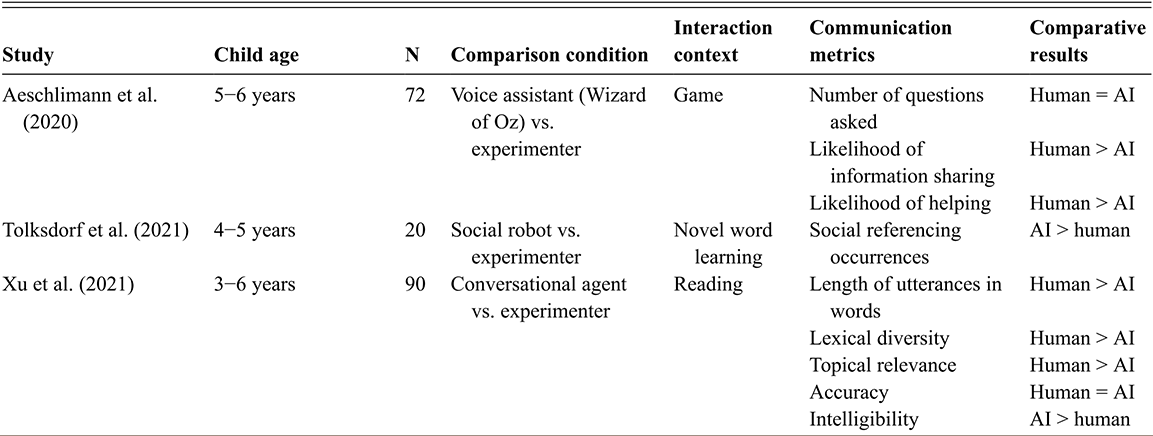

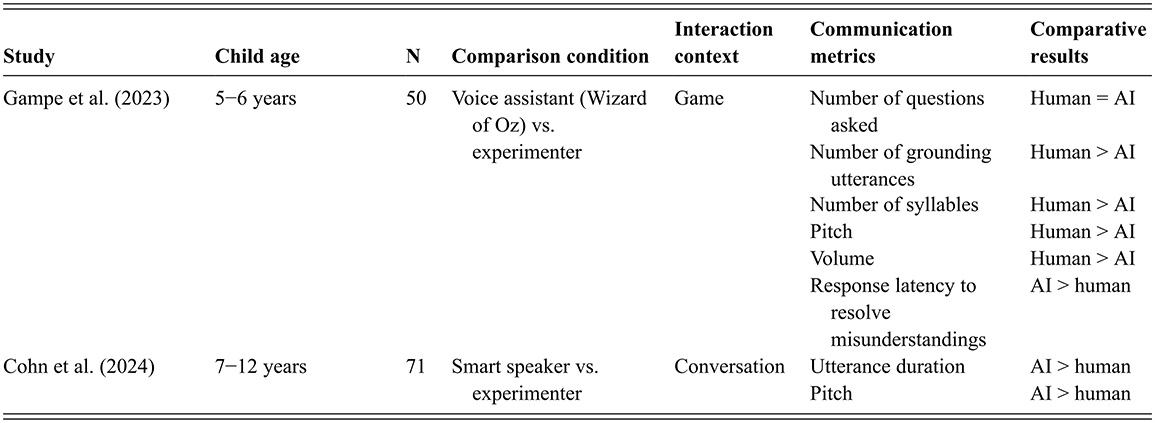

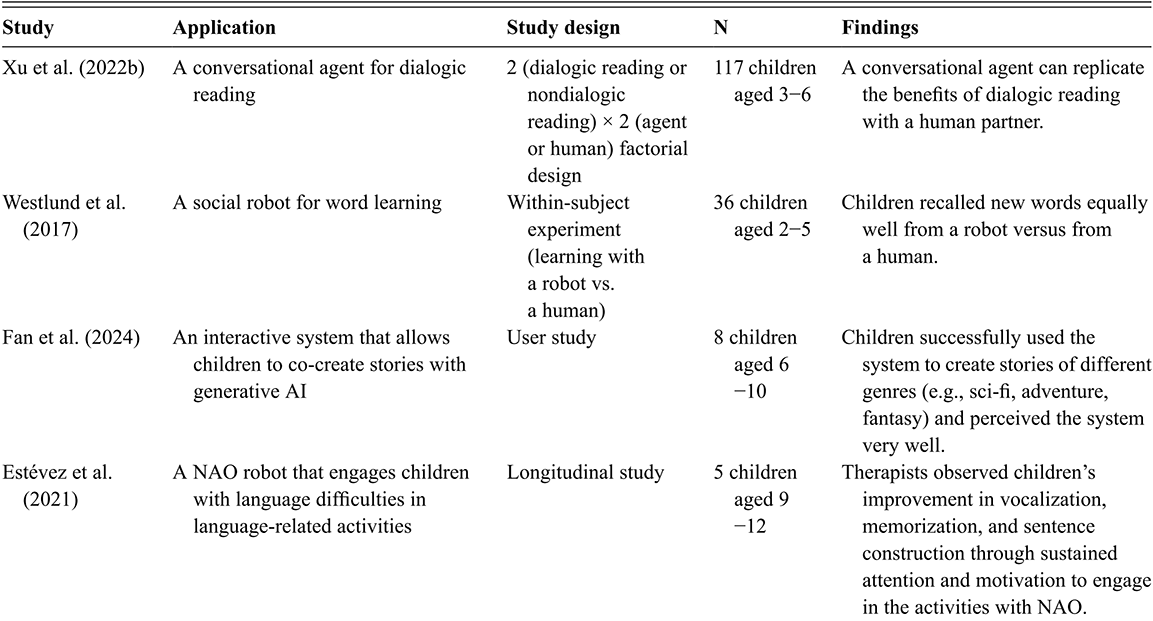

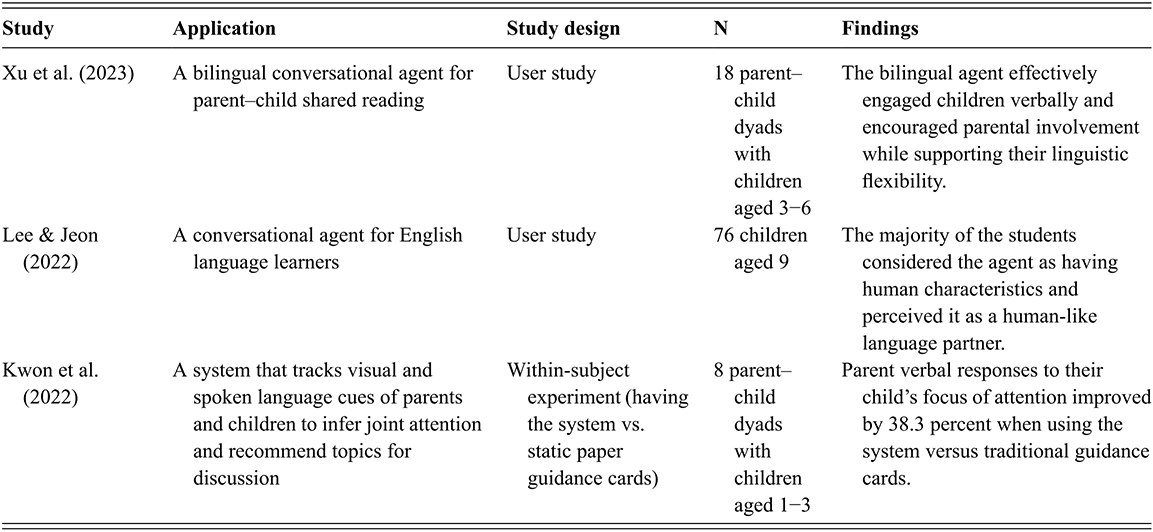

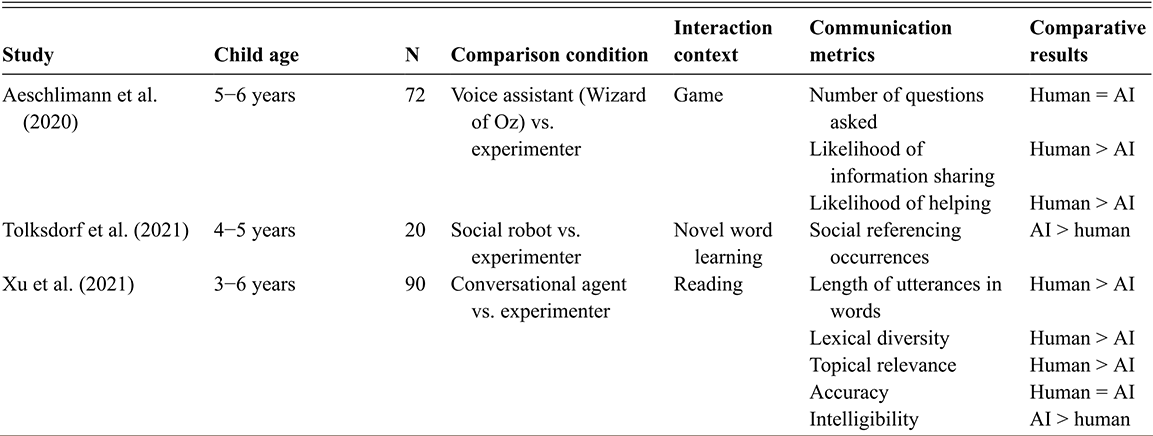

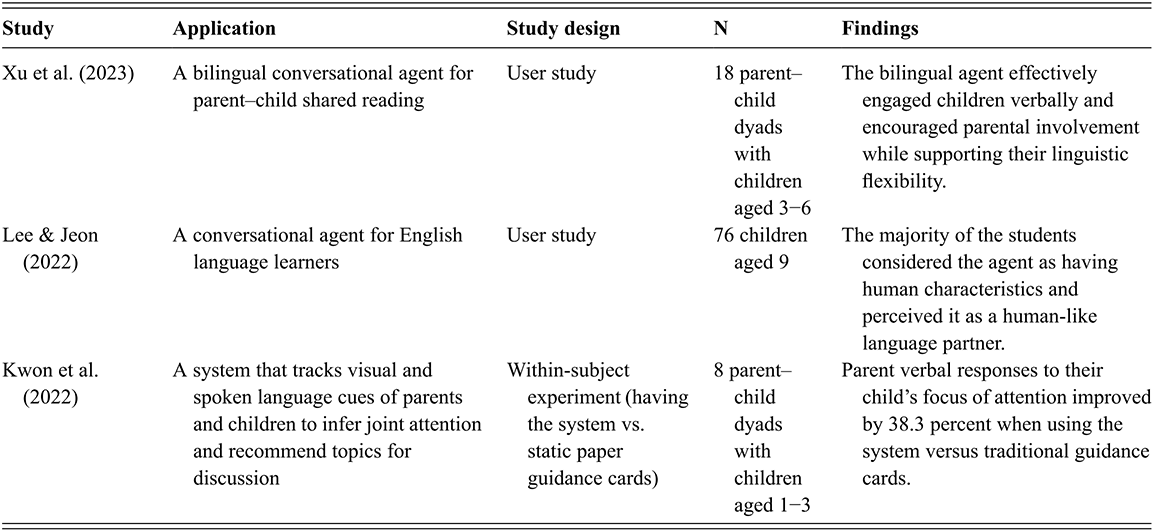

The studies in the previous section revealed interesting patterns in children’s interactions with AI agents, yet it would be challenging to draw conclusions about these behavioral patterns without directly comparing them to children’s interactions with humans. To address this, researchers have employed experimental procedures where children are instructed to interact either with a person, typically an experimenter, or an AI agent. This approach allows researchers to observe children’s communication from different perspectives. We will examine three aspects here: the quantity of communication, the quality of children’s responses, and behaviors that reflect children’s social intentions. Table 1 summarizes all the studies being discussed. It is important to note, however, that how children interact with “humans” may vary considerably depending on the context and the nature of the relationship – for example, interactions with parents may differ markedly from those with strangers. Consequently, conclusions drawn from observed AI–human differences should be approached with caution, as such differences may reflect not only the nature of AI agents versus human partners but also the relational and contextual factors shaping children’s interactions in each case.

3.2.1 Communication Quantity

The quantity of children’s communication with a conversational partner refers to how frequently children participate and how much they talk. Research consistently shows that young children participate more when they interact with a human partner than with AI. For example, Xu et al. (2021) found that children aged three to six responded more often and with longer utterances during storybook reading sessions with a human experimenter than with a smart speaker. Similarly, Gampe et al. (2023), whose study involved children aged five to six interacting with either a voice assistant or a human during a treasure hunt game, observed that children used more grounding utterances when engaging with humans. These behaviors suggest higher engagement in human interactions.

3.2.2 Communication Quality

Researchers conceptualize communication quality in children’s speech through multiple dimensions, focusing on both the form and function of language use, such as how well children express themselves, stay on topic, and use elaborate language. These dimensions can vary depending on who the conversational partner is. On the one hand, research shows that children generally produce more sophisticated and topically relevant language when interacting with humans than with AI. For instance, Xu et al. (2021) found that children’s responses to human partners were not only longer but also demonstrated greater lexical richness and stronger relevance to the topic being discussed. This suggests that children are more expressive and better able to maintain the topic of conversation when speaking with humans, perhaps because they perceive humans as partners who warrant greater communicative effort.
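To make measures such as utterance length and lexical richness more concrete, the following minimal sketch shows one simple way they could be computed from transcribed speech. It is an illustration under stated assumptions, not the coding scheme of the studies cited above: the type-token ratio is used here as one common proxy for lexical richness, the function names are hypothetical, and the two short transcripts are invented for the example.

```python
import re


def tokenize(utterance: str) -> list[str]:
    """Lowercase an utterance and split it into simple word tokens."""
    return re.findall(r"[a-z']+", utterance.lower())


def mean_length_in_words(utterances: list[str]) -> float:
    """Average number of word tokens per utterance (0.0 if there are none)."""
    if not utterances:
        return 0.0
    return sum(len(tokenize(u)) for u in utterances) / len(utterances)


def type_token_ratio(utterances: list[str]) -> float:
    """Unique word types divided by total word tokens across all utterances."""
    tokens = [t for u in utterances for t in tokenize(u)]
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)


# Hypothetical transcripts, invented for illustration only.
to_human = [
    "the bear is hiding behind the big tree",
    "he wants to find his friend",
]
to_agent = ["a bear", "the bear"]

for label, sample in [("human partner", to_human), ("AI partner", to_agent)]:
    print(label, mean_length_in_words(sample), type_token_ratio(sample))
```

Because the type-token ratio tends to fall as a language sample grows longer, comparisons across conditions are more informative when the samples are of similar size or when a length-adjusted measure is used.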

Other research highlights a different manifestation of communicative effort – adapting speech to facilitate the listener’s comprehension. For example, in a study with children aged nine to twelve, Cohn et al. (2024) found that participants produced longer utterances and used a higher pitch when conversing with a smart speaker (Alexa) compared to a human experimenter. Such patterns suggest that children may adjust their speech to be more pronounced when interacting with AI, possibly due to being aware of its limitations as a listener and making a deliberate attempt to enhance clarity. Similarly, another study reported that children’s speech is often more intelligible – clearer in articulation – when directed to a conversational agent (Xu et al., 2021). In both cases, this type of effort is expressed through adjustments to delivery to accommodate the perceived needs or limitations of AI agents.

3.2.3 Social Intention

Researchers have also examined communication behaviors that may reflect children’s social orientation, expectations, or intentions toward their communicative partner. Evidence suggests that while children can engage with AI, their interactions with human partners are marked by a deeper sense of social attunement and responsiveness. For instance, Aeschlimann et al. (2020) found that children were more likely to share information and offer help to human partners than to AI agents, suggesting that they recognize and respond to the social needs of people more than machines. Similarly, Tolksdorf et al. (2021) observed that children engaged in more frequent social referencing – looking to caregivers for guidance – when interacting with a social robot. This behavior may reflect a degree of uncertainty or hesitation in navigating interactions with AI. Adding to this, Gampe et al. (2023) showed that children were more proactive in establishing common ground with humans, using strategies such as grounding utterances and clarification efforts more often than they did with AI. Together, these findings suggest that although children interact readily with AI, their social behaviors remain more richly expressed and intentional in human communication contexts.

3.3 How Do Children React When AI Misunderstands Them?

An interesting scenario to explore in children’s communication with AI is when they encounter conversational breakdowns – situations where one party misunderstands the other, leading to a deviation from the intended interaction. During child–AI interactions, these breakdowns can stem from the AI’s failure to comprehend a child’s input or, even when it does comprehend the input accurately, from its failure to respond in a way that makes sense in the conversation. However, it is important to note that conversational breakdowns are not unique to interactions between children and AI; they also frequently occur in children’s communications with others. Nevertheless, it is reasonable to anticipate that such breakdowns may be exacerbated by the technical limitations of AI systems.

In response to these breakdowns, children often use various strategies to restore the interaction, which include repeating their comments, adjusting their volume, articulating their words more clearly, rephrasing their statements, and even changing the subject of their inquiry (Beneteau et al., 2019; Cheng et al., 2018; Mavrina et al., 2022). For instance, Mavrina et al. (2022) found that children aged six to twelve might repeat a command if Alexa fails to understand it initially, such as repeatedly asking “Alexa, what is the most agile animal in the world?” after Alexa mistakenly provided information about the most venomous animal instead. Children might also adjust their volume, speaking louder to ensure the command is heard, or articulate their words more clearly, emphasizing each syllable to aid recognition. Rephrasing the statement is another common strategy, as observed when children change their request from “Alexa, what is the most agile animal in the entire world?” to “Alexa, who is the most agile?” to enhance clarity. Furthermore, if none of these strategies seem to work, children may seek help from others. For example, when Alexa failed to respond helpfully to a child’s vague request – “Alexa, what can I do together with my mom and sister?” – an adult intervened by reformulating the query into a more specific question: “Alexa, events in [city name] today.”

However, these studies did not compare children’s repair strategies used with AI to those used with humans. Consequently, it remains unclear to what extent these strategies differ. Two experiments have provided evidence suggesting such differences are likely. The first study, conducted by Gampe et al. (Reference Gampe, Zahner-Ritter, Müller and Schmid2023), involved five- to six-year-olds interacting with either a person or a voice assistant, both of whom exhibited staged errors in understanding children’s speech. The researchers found that after a staged error occurred, children interacting with the voice assistant were less likely to continue engaging in the conversation, whereas those in the human group were more “forgiving” of their partner’s errors. Thus, communication breakdowns had a more negative impact on engagement with voice assistants than with human partners.

Another study by Li et al. (Reference Li, Thomas, Yu and Xu2024) investigated how children aged four to eight use different repair strategies after communication breakdowns with either a human or an AI partner. Unlike the Gampe et al. (Reference Gampe, Zahner-Ritter, Müller and Schmid2023) study, where errors were staged, this study employed a generative AI–powered storytelling agent that responded to children in real time. This setup allowed communication breakdowns to emerge naturally during the interaction. The study confirmed that children were more likely to encounter communication breakdowns when interacting with AI than with a human partner. Additionally, children were less likely to attempt repairs following breakdowns with AI, even after the researchers took into consideration the children’s age and language proficiency. The study also analyzed the strategies children used after breakdowns occurred. A notable finding was that a significant proportion of children did not resolve breakdowns caused by AI. Specifically, when the AI tool misunderstood them, they often followed the AI tool’s responses rather than attempting to correct the misunderstanding. This raises questions about the developmental value of child–AI interactions, particularly in fostering social communication competence: By forgoing repair strategies – such as repetition, rephrasing, or clarification – children might miss valuable opportunities to practice and improve important conversation skills.

Li et al. (Reference Li, Thomas, Yu and Xu2024) further explored why children showed different repair behaviors depending on their conversational partner. They speculated that children are more likely to try to repair communication breakdowns when they perceive the partner as an “in-group” member – someone they feel is similar to themselves. This idea is grounded in prior research on children’s motivation to engage in conversations with socially similar others (Julien et al., Reference Julien, Finestack and Reichle2019; Sierksma et al., Reference Sierksma, Spaltman and Lansu2019). Indeed, the researchers found that the more children perceived AI as similar to themselves – measured by a perceived homophily scale (Rubin et al., Reference Rubin, Palmgreen, Sypher, Rubin, Palmgreen and Sypher2020) – the more likely they were to attempt to repair communication breakdowns. These findings point to a broader issue of social alignment and motivation in children’s interactions with others, which will be discussed in the following section.

3.4 Why Do Children Talk “Differently” with AI?

So far, the evidence has suggested disparities between child–AI and child–human communication, but what factors contribute to these differences? One recurring theme emerging from previous studies is that children hold differing expectations of AI’s conversational capabilities, generally perceiving AI agents to be less competent in conversations than human interlocutors. Studies have found that children tend to be more articulate (Xu et al., Reference Xu, Wang, Collins, Lee and Warschauer2021) or speak in a higher pitch (Cohn et al., Reference Cohn, Barreda, Graf Estes, Yu and Zellou2024) when conversing with AI – likely influenced by their beliefs or prior experiences regarding AI’s less-than-ideal accuracy in interpreting speech. Interestingly, children were also found to be very adept in adjusting their communications in real time based on the agent’s reactions. At least one study has shown that when children interacted with either a responsive agent or one providing generic feedback regardless of what they said, their initial levels of engagement (e.g., response rate) were similar. However, as the interactions progressed, the children increasingly chose to engage with the agent that was responsive to them (Xu et al., Reference Xu, He and Levine2024). This phenomenon aligns with theories of children’s “adaptation” based on the status of their interaction partner. The ability to appropriately reciprocate or adjust to a partner’s communicative response is a crucial component of communicative competence. Previous research suggests that as children grow older, their speech increasingly reflects adaptation to their partner’s speech styles (Gampe et al., Reference Gampe, Wermelinger and Daum2019; Street & Cappella, Reference Street and Cappella1989). Even children as young as three years old have been consistently shown to possess the ability to adapt to their partner’s communicative cues. 
It appears that such adaptations can also be transferred into the context of interactions with AI agents.

Another factor contributing to children’s less motivated conversation with AI could be their perception of AI’s social presence, which will be discussed in greater detail in Section 4. Children may view AI as less socially present or emotionally engaging than a human, leading them to adopt more functional or goal-oriented communication (Garg & Sengupta, Reference Garg and Sengupta2020). For example, they might use direct commands instead of engaging in conversational small talk (Kim et al., Reference Kim, Druga and Esmaeili2022). Nonetheless, it is important to note that socially oriented conversations should not necessarily be viewed as the ideal outcome for child–AI interactions. One could reasonably argue that it is beneficial for children to differentiate their modes of interaction with AI, as this capacity may help preserve a clear social boundary between AI and human partners. However, in certain contexts – such as using AI to support children’s social-emotional learning or for therapeutic purposes – a lack of motivation to engage socially with AI may limit its effectiveness. In response to this challenge, developers and researchers have focused on enhancing the empathetic and affective components of AI agents. Features such as emotional validation and appraisal are being designed to encourage children to perceive AI agents not merely as tools for completing tasks but as more socially aware and emotionally responsive partners.

Children’s prior experiences with technology may also shape how they respond to AI. This influence, however, appears to be determined not solely by individual variations in access and experience but also by the presence of AI in society. In particular, the cultural and technological environment in which children are embedded may shape not only their knowledge of AI but also their expectations regarding its capabilities and their attitudes toward it. In a society saturated with AI, even children with limited personal experience may develop strong expectations based on how AI is portrayed in the media, discussed in schools, or used in their communities. A particularly interesting study compared children from Pakistan (a developing country) and the Netherlands (a developed country) in their perceptions of playing with a robotic friend versus a peer (Shahid et al., Reference Shahid, Krahmer and Swerts2014). The researchers found that Pakistani children tended to hold higher expectations of the robot’s social capabilities and expressed greater disappointment when those expectations were unmet, for example, when the robot failed to understand child-directed speech. At the same time, they also showed greater motivation and engagement, as reflected in more animated facial expressions during play. These findings suggest that in contexts where AI is less widespread but more novel, children may approach it with both idealized expectations and heightened enthusiasm. This supports the view that societal-level factors may play an important role in shaping children’s perceptions of, and interactions with, AI.

Overall, the studies reviewed employed quantitative metrics that consistently indicate lower levels of socially oriented behaviors during interactions with AI compared with human partners. Nevertheless, these differences should not be interpreted as indicating a complete absence of such behaviors in AI interactions. Rather, some children still exhibit socially oriented responses when engaging with AI, though to a lesser extent. This phenomenon was first uncovered through Nass et al.’s (Reference Nass, Steuer and Tauber1994) studies on “computers as social actors,” which aimed to explain why individuals often respond socially to AI. Although their study was conducted long before AI reached its current level of sophistication, the computers they used (i.e., NeXT workstations) exhibited basic features of modern AI, albeit in rudimentary form, such as speech, language use, and responsiveness. The researchers designed a series of five studies in which participants interacted with two computers: one served as a “tutor,” providing answers, while the other acted as an “evaluator,” assessing the accuracy of the tutor’s responses. Through these triadic interactions, the researchers found that participants applied “social rules” during the exchanges. For instance, participants tended to offer stronger praise when the computer directly solicited their feedback and exhibited gender stereotypes, perceiving praise from a male (voice) as more convincing than praise from a female (voice). These findings suggest that subtle human-like cues, particularly speech and reciprocity, prompt individuals to perceive a sense of humanity in technological systems. As a result, people unconsciously apply mental frameworks from human-to-human interactions to their engagements with technology. The findings from adult participants were later confirmed in studies with children. In one earlier study using a Wizard of Oz design (Ryokai et al., Reference Ryokai, Vaucelle and Cassell2003), five-year-olds were assigned to take turns co-creating stories with a virtual peer named Sam or a co-present playmate. The researchers analyzed the conversations children had with Sam and found that children not only viewed Sam as a “partner” (indicating “your turn” by saying that it was Sam’s turn) but also made eye contact with Sam when asking questions, much like they did with their co-present playmate. Moreover, children showed proactivity by offering help to Sam, a behavior consistent with how they interacted with human peers. These instances suggest that social elements can emerge in children’s engagement with AI, and that the concept of “computers as social actors” is not necessarily incompatible with the observed differences between child–human and child–AI interactions.

3.5 Can AI Influence Children’s Interactions with Others?

One reason we care about how children interact with AI is not only concern about their engagement with AI itself, but, perhaps more importantly, how these interactions may shape the ways they engage with other people. In particular, since children approach AI in different ways, it is important to consider whether these patterns will carry over to their interactions with humans, potentially leading to behaviors that are perceived as unnatural or socially inappropriate. An often-discussed concern arises from the idea that when children become accustomed to giving commands to AI agents, they may carry these patterns into their interactions with people, potentially neglecting norms of politeness (Arora & Arora, Reference Arora and Arora2022). There are numerous anecdotes highlighting this issue: In one blog post on Medium, a parent of a four-year-old, who was enthusiastic about using Alexa for things like knock-knock jokes, began to question the ramifications for the child’s social etiquette. The parent noted that AI, programmed to tolerate poor manners, inadvertently encouraged the child to “boss around” (Walk, 2016). This anecdote, among many others, reflects a broader public sentiment: These commercially available AI systems are programmed to serve, with no mechanisms to hold users accountable for their verbal behavior.

Evidence beyond speculations and anecdotes to support these concerns is scarce. It takes time for children to develop norms of social interaction and etiquette through repeated interactional experiences, which makes these behaviors difficult to observe. To overcome this, some researchers have focused on a more immediate aspect: whether children can learn new linguistic routines from AI, which allows researchers to observe learning over a shorter period. In a study, children aged five to ten were instructed to talk to an AI agent designed to slow down its speech (Hiniker et al., Reference Hiniker, Wang and Tran2021), but they could ask the AI to speed up by saying the word “Bungo.” After the session, the researchers secretly instructed the children’s parents to slow down their speech. Interestingly, the researchers observed that at least half of the children used the word “Bungo” to ask their parents to speed up while still in the lab. Furthermore, when the participants returned home, 55 percent of the parents reported that their child continued to use this routine, though this should be taken with a grain of salt due to the potential unreliability of self-reporting. This finding provides tentative evidence that children can pick up linguistic cues from AI. However, the results are not entirely conclusive. The word “Bungo” used in this study is relatively nonsensical and novel, which raises the question of whether children used it purely for playfulness or if it indicates a meaningful change in their linguistic patterns.

While the findings are still quite tentative, this study did point out the possibility that children could pick up linguistic routines from interacting with AI. In response to public concerns, some voice assistants, such as Amazon Alexa, have introduced features that praise children for using polite language or flag the use of less courteous expressions. Two studies offer tentative evidence regarding how these features might influence children’s communication behaviors. The first study involved college students who were divided into two groups: one interacted with a digital assistant that rebuked impolite requests (i.e., refusing to provide responses), while the other interacted with a control AI agent that tolerated impolite requests (Bonfert et al., Reference Bonfert, Spliethöver and Arzaroli2018).Footnote 1 The researchers found that the AI agent rejecting impolite requests significantly increased users’ tendency to use polite language during interactions. However, it remains unclear whether users’ behavior was driven by a genuine belief that they should demonstrate politeness with AI, or whether it was motivated more by the realization that they would not get the information they needed without using polite language. The second study employed a different strategy (Mandagere, Reference Mandagere2020). Instead of penalizing the non-use of polite language, it relied on positive reinforcement, praising children’s use of polite language. This study observed five families and found that parents reported an increased use of polite language by children between the ages of five and thirteen compared to the pre-test. However, there are alternative explanations that could undermine the findings due to the study’s design. One issue is that the outcomes relied on parent reports, which are subject to social desirability bias or imperfect recall. Additionally, there was no comparison group, making it difficult to determine whether the observed effects were due to the encouragement from the voice assistants or other factors (e.g., simply the parents’ awareness that they were part of a study focusing on politeness might have influenced their responses).

Beyond the inconclusive evidence, one potential downstream consequence of enforcing politeness in interactions with AI agents is that such features may blur the distinction between human-to-human and human-to-AI conversations, making it harder for children to differentiate between the two contexts. In addition, much public discussion has focused narrowly on politeness, such as saying “thank you” or “please”, even though these expressions represent only one dimension of the broader social norms children must develop when engaging with others. Other important aspects include turn-taking, recognizing and responding to emotions, adapting communication styles to different contexts, and understanding others’ perspectives. To date, we have limited evidence on how interacting with AI might alter, or perhaps not affect, such behaviors. These are behavioral routines that require time to establish, are less malleable, and often demand long-term studies to observe meaningful changes.

3.6 How Do Children Interact with AI When Others Are Present?

Most studies on children’s interactions with AI have focused on one-on-one settings, including those previously discussed (e.g., Gampe et al., Reference Gampe, Zahner-Ritter, Müller and Schmid2023; Xu et al., Reference Xu, Wang, Collins, Lee and Warschauer2021). In these studies, children were typically invited into a laboratory environment to engage in structured activities such as playing a game or listening to a story with an AI agent.

However, when others, such as family members or peers, are present, children’s interactions with AI can take on new dynamics. For example, consider a scenario where a smart speaker is placed in a household. The questions children ask might be overheard by parents or siblings, who could subsequently join the conversation. In addition, children may share smart toys or devices with peers at school, which makes the interactions more collaborative or negotiated. Indeed, a study focusing on families of children aged three to eight revealed that parent-only, child-only, and co-use are all common ways families engage with shared voice assistant devices at home (Wald et al., Reference Wald, Piotrowski, Araujo and van Oosten2023). These findings suggest that children’s interactions with AI are deeply embedded within the broader social and cultural contexts of their daily lives.

When parents and children are co-using AI agents such as voice assistants, these agents may play the role of mediators for managing everyday routines or regulating children’s behavior, though they may also occasionally introduce tensions. Researchers tackling this question often adopt a perspective of viewing AI as an active participant within the dynamics of interaction. This approach highlights how AI, alongside humans, shapes and is shaped by the social, cultural, and technological networks it inhabits. As an example, Beneteau et al. (Reference Beneteau, Boone and Wu2020) studied how the presence of the AI voice assistant Alexa might influence how parents interact with their children in a household context. This study included children across a wide age range, from one to thirteen years old. Overall, the authors found that despite occasional conflicts caused by the smart speaker, families were able to leverage Alexa to further their parenting goals. Regarding these conflicts, the researchers documented how family members might disrupt each other’s interactions with Alexa, likely for two reasons. First, Alexa was often used to facilitate tasks that did not align with another family member’s goals, such as playing music the other family member disliked. This led to tensions in the shared environment where family members co-reside. Second, the shared nature of the device, which can only perform tasks requested by one person at a time, contributed to these disruptions. However, neither of these issues seemed to escalate beyond minor glitches. Regarding how Alexa can further parenting goals, it was used as a neutral third-party mediator for managing behaviors, such as setting timers to end an activity that might otherwise have been difficult for the child to terminate. It was particularly interesting that children seemed to be more receptive to advice coming from Alexa than from their parents, likely due to the perceived objectivity resulting from its machine nature. Overall, it appears that while voice assistants might not have been designed to support family dynamics, they have, to some extent, fulfilled these unintended goals.

When peers are present, AI can also influence the nature of children’s interactions, either supporting social play or distracting from it. Pretend play is a fundamental part of how young children interact with their peers, whether they are playing house, acting out adventures, or building imaginary worlds together. When AI technologies like conversational agents enter these play spaces, we may wonder: Can they become engaging playmates or will they pull children away from playing together? Pantoja et al. (Reference Pantoja, Diederich, Crawford and Hourcade2019) looked at how voice agents embodied in animal characters could support this kind of social play among three- to four-year-olds. When researchers maintained control over what the agents said by typing in responses in real time, the agents could step in at just the right moments with suggestions and encouragement, which sustained children’s engagement and guided them back to playing together whenever they started to drift apart. This mimics how adults such as preschool teachers or parents would naturally facilitate children’s play, offering gentle prompts and support without taking over the play itself. Although this study used the Wizard of Oz technique where researchers controlled the agents behind the scenes, it still suggests that voice assistants with sophisticated contextual awareness could potentially foster meaningful social play experiences. However, when the researchers tried letting children control what the agents said themselves through a tablet app (where the children could pick topics, feelings, and events for the agent to talk about), the dynamic shifted dramatically. Children became so focused on making the agent talk – doing this almost twice every minute – that they spent less time actually playing with each other compared to when researchers controlled the agents’ speech. While children showed enthusiasm for incorporating the voice agents into their play by making them talk, the tablet interface meant to give children control ended up competing for their attention rather than supporting their social interactions.

Researchers are also curious if AI agents can be designed intentionally to promote interpersonal interactions. Shared reading, for example, is a powerful way for parents and children to bond through stories, conversations, and shared experiences. Yet many educational technologies today focus on one-on-one interactions between a child and device, potentially displacing valuable parent–child interactions rather than supporting them. Xu et al. (Reference Xu, He and Vigil2023) developed a bilingual conversational agent, Rosita, embedded in an e-book designed to promote parent–child co-engagement in reading for families with children aged from three to six (for a demo, see https://youtu.be/UQw9j14e3yA). In addition to questions directed at children on story comprehension, Rosita asked open-ended “family questions” that invited parents and children to connect the story to their own experiences. Their studies found that family-oriented prompts encouraged substantive conversations between parents and children, encompassing daily experiences, shared memories, and discussion of the content at hand (He et al., Reference He, Cervera and Levine2025; Xu et al., Reference Xu, He and Vigil2023). This research demonstrates that when AI agents are thoughtfully designed to consider family dynamics, they can enhance rather than replace interpersonal interactions in families, turning technology into a bridge rather than a barrier to family engagement.

4 Children’s Perceptions of AI

4.1 Do Children Believe AI Is a Living Being Like Humans?

When discussing AI and children, a common question people often ask is whether children believe AI is alive or real, like a person. In fact, children have long been curious about whether other things, such as plants, household machines, toys, or even fictional characters, are alive or real. This line of inquiry is grounded in their conceptual development of animate and inanimate distinction, which centers on their ontological understanding of purposes, functions, and the categorization of interactive technologies among the wide range of entities in the world (Rakison & Poulin-Dubois, Reference Rakison and Poulin-Dubois2001). A prominent theory that explains this development is Piaget’s stage theory, which suggests that children progress through distinct stages of conceptual development. Initially, they may confuse animate and inanimate entities, later developing flawed distinctions (e.g., associating movement with animacy), and ultimately achieving an adult-like understanding (Opfer & Gelman, Reference Opfer and Gelman2011). Although Piaget’s theory remains influential, it has faced criticism, particularly over whether such developments occur as qualitative shifts at fixed stages or along more continuous developmental trajectories (Gelman, Reference Gelman1990). Despite these critiques, both Piagetian theory and perspectives advocating for continuous development agree that childhood is a period of ongoing conceptual growth, where categorizing entities like AI presents challenges. Children often focus on observable traits such as movement, associating these with “animacy” or being “alive” while overlooking the unobservable yet fundamental characteristics that may indicate AI’s nonliving nature (for a review, see Opfer & Gelman, Reference Opfer and Gelman2011).

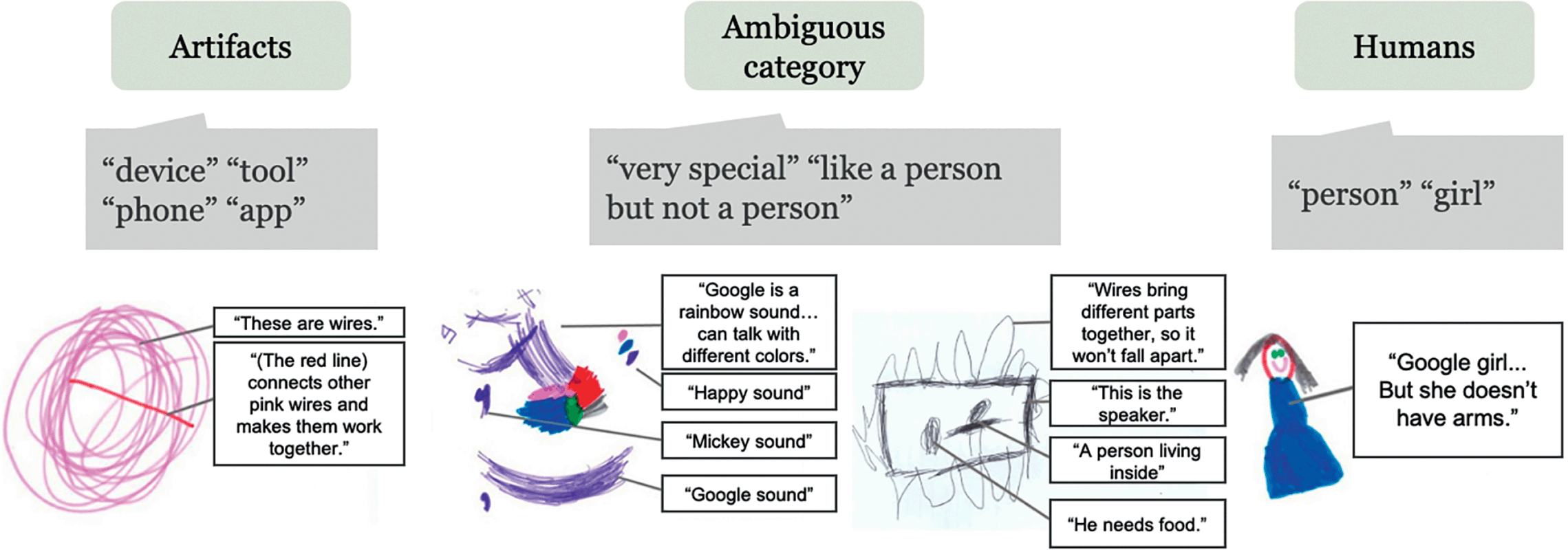

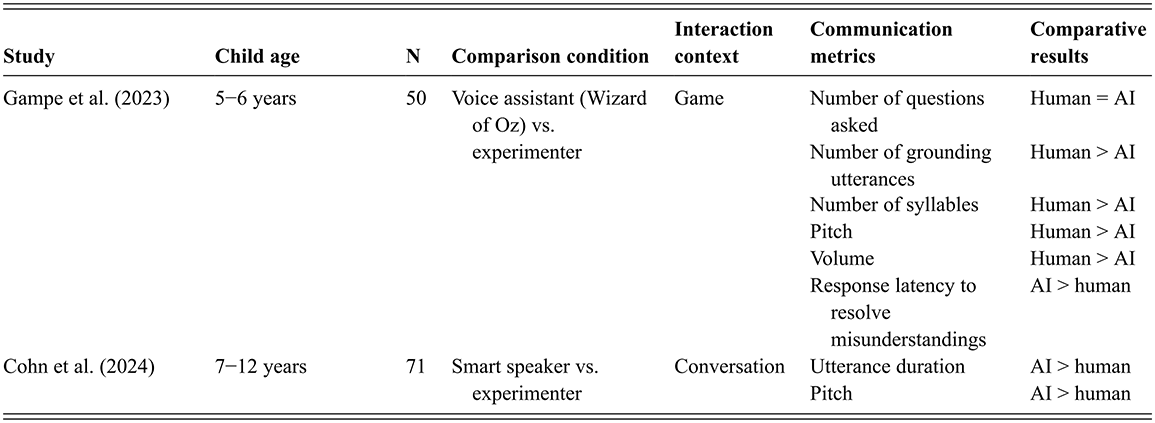

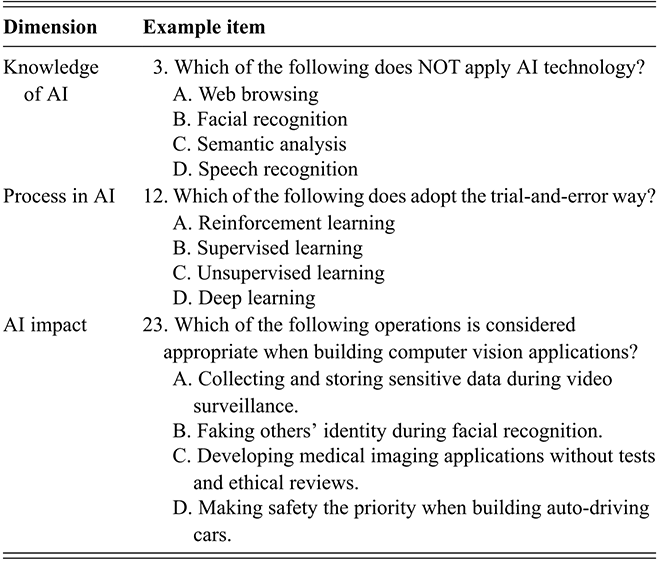

Researchers have approached this inquiry by posing a simple question to children: What is AI? The key takeaway from this line of exploration is that children have mixed beliefs about AI falling along the animate–inanimate spectrum. In one of those studies, Xu and Warschauer (Reference Xu and Warschauer2020) asked children aged three to six years to describe a Google Assistant they had interacted with. Seventeen percent of the participants referred to it as a “human” or “girl,” indicating a human-like perception, while the majority saw it as a technological object, describing it as a “device,” “machine,” or “phone.” However, the study also found that a considerable portion of children failed to identify the voice assistant as fitting neatly into either category, with some referring to it as “like a human but not a human” or “magic.” The study also uncovered a tentative developmental difference, with all six-year-olds viewing AI firmly as an artifact, whereas those who perceived it as a living being were exclusively three-year-olds. Figure 2 shows examples of children’s drawings as well as their interpretations during the drawing.

Figure 2 Children’s drawings in response to the prompt “What is inside the Google Home smart speaker?”

Figure 2 Long description

The figure shows how children classify and describe Google across three categories. Artifacts (left): Terms include device, tool, phone, and app. One drawing depicts tangled wires, explained as The red line connects other pink wires and makes them work together. Ambiguous category (center): Described as very special and like a person but not a person. Drawings feature colorful scribbles and box-like shapes. Quotes include Google is a rainbow sound… can talk with different colors, Happy sound, Mickey sound, Wires bring different parts together, so it won’t fall apart, A person living inside, and He needs food. Humans (right): Labeled person and girl. A child drew a girl in a dress without arms, explained as: Google is a girl… but she doesn’t have arms. Overall, the diagram shows children’s views of Google ranging from object to human-like, with blurred categories in between.

Another study focusing on slightly older children (aged six to ten years) found that, when asked what Alexa is (similar to Google Assistant), many used terms that reflected an artifact-oriented view, such as “computer chips” and “hard disks” (Festerling & Siraj, Reference Festerling and Siraj2020). Some responses also revealed how these children positioned AI relative to humans, reasoning that humans must “put” their own intelligence into the machines they build or program, and therefore the intelligence of the “made” can never exceed that of the “maker.”

Beyond asking children to categorize AI, another approach examined which perceived characteristics lead them to view it as human-like or distinctly nonhuman. These characteristics are often grouped into cognitive (thoughts), psychological (feelings or emotions), and behavioral (actions or speech) properties (Melson et al., Reference Melson and Kahn2009). When an entity displays either all or none of these properties, children may find it less challenging to categorize the object as either animate or inanimate. However, entities displaying only some of these properties are more likely to prompt uncertainty. Viewed as a continuum, the animate–inanimate distinction places some objects clearly at either end, while others occupy a more ambiguous middle ground.

Studies indicate that children vary in the extent to which they attribute cognitive, psychological, and behavioral capabilities to AI. For instance, Xu and Warschauer (Reference Xu and Warschauer2020) found that while most children aged three to six believed that AI has cognitive capabilities (being able to think and remember things), they were less likely to believe that AI has the capability to feel things like a friend or have emotions. When asked about their reasoning behind this, children frequently pointed to the AI agent’s capacity to engage in dialogue, especially in a contingent way. In short, it appears that AI’s adaptability and speech capability were perceived as strong indicators of intelligence, potentially contributing to the children’s categorization of AI as human-like. Another study focused on children aged six to ten years and similarly found that they assigned some degree of social and mental capacity to voice assistants, and this tendency was more prominent among younger children (Girouard-Hallam et al., Reference Girouard-Hallam, Streble and Danovitch2021). Interestingly, the tendency to attribute human-like characteristics to AI appears to extend beyond childhood. While few studies have explicitly compared children and adults, Cohn et al. (Reference Cohn, Barreda, Graf Estes, Yu and Zellou2024) conducted a study in which both children (aged seven to twelve) and adults interacted with Amazon Alexa and were asked how much they thought Alexa was like a real person. Across both adult and child groups, roughly 30–40 percent of participants responded affirmatively. Although the proportion was slightly higher among children, the difference did not appear to be statistically significant.

Such human-like perceptions of AI arise not only from conversational cues but also from other factors, such as physical appearance and movement. For instance, Melson et al. (Reference Melson and Kahn2009) found that the majority of children aged seven to fifteen years affirmed that AIBO, the robotic dog, had mental states, social awareness, and moral standing. Similarly, Beran et al. (Reference Beran, Ramirez-Serrano, Kuzyk, Fior and Nugent2011) suggested that a significant proportion of children between the ages of five and sixteen years in their study ascribed cognitive, behavioral, and psychological characteristics to robots. Beran et al.’s study noted that children’s attribution of animacy to robots is driven more by robots’ physical movements than by their intelligence (though the robot’s rather repetitive patterns at that time likely led children not to perceive it as highly intelligent, which may not be the case with current AI). When children were asked why they considered the robot to be a living being, most pointed to the robot’s humanoid appearance and its seeming ability to move spontaneously.

Together, these findings suggest that children’s perceptions of AI as human-like are shaped by a constellation of factors, including appearance (especially humanoid features), physical movement, and the capacity for contingent interaction. Rather than treating these dimensions as competing or mutually exclusive, it may be more accurate to view them as complementary cues that children weigh, often simultaneously, when making judgments about the “humanness” of AI.

4.2 Does AI Make Decisions on Its Own?

The previous section examined children’s beliefs about various characteristics of AI. These observable capabilities, however, raise a deeper question: Do children perceive such behaviors as driven by programming or by an AI’s own “mind”? Answering it requires asking whether children see AI as possessing a mind at all. People intuitively conceive of minds in terms of two broad capacities: agency and experience (Gray et al., 2007). Agency involves the ability to form intentions, reason, pursue goals, plan, communicate, and act. Experience encompasses the capacity to feel emotions, sense pleasure or pain, perceive through the senses, remember experiences, express a personality, and possess consciousness.

A handful of studies have used this mind perception framework to examine children’s perceptions of AI agents, including voice assistants and social robots. Brink (2018) recruited children aged three to seventeen years to watch short videos of a humanoid robot and then asked them questions about their perceptions of the robot’s agency and capacity for experience. Through cluster analysis, Brink suggested that over half of the child participants considered the robots to have low agency and limited capacity for feelings or emotions (experience), while another quarter viewed the robots as having high agency but still lacking capacity for experience. A more recent study used a similar method in which children aged four to eleven watched videos of a mix of robots and voice assistants (Flanagan et al., 2023). The children generally ascribed low levels of experience to these agents while still attributing a certain degree of agency to them (an average of one on a scale from zero to three).

A small body of studies has compared children’s mind perception of AI agents against humans as a benchmark. For instance, Flanagan et al. (2021) found that children aged five to seven generally attributed a similar level of ability to choose to both a robot and a human child. Another study investigated whether children understand that different people can hold individual beliefs, in contrast to conversational agents linked to the internet, which share a common set of beliefs due to synchronization (Dietz et al., 2023). Children watched videos in which a human shared different pieces of information with two separate agents – either two people or two smart speaker AI tools. The key difference was that in the human condition, each person heard different information, while in the AI condition, both devices – being connected to the internet – were assumed to share the same information. Children were then asked to judge what each agent (human or AI) would know. The findings revealed that while adults correctly inferred that AI devices would hold the same information, some children treated the AI agents more like humans, attributing individual knowledge to each. This suggests that children may have difficulty distinguishing between the belief systems of humans and AI agents.

Taken together, the studies suggest that children, to some extent, perceive AI agents as having agency, which might have implications for how they interpret their interactions with AI. For example, children might believe that AI agents willingly choose to engage with them. In our own studies, many children agreed with the statement “the agent interacts with me because it chooses to do so.” This belief may increase children’s engagement, as the interactions feel more genuine and meaningful. However, attributing agency to AI also raises critical developmental concerns. Children’s belief in AI’s autonomous decision-making may lead to misconceptions about the nature of these systems, which are fundamentally governed by algorithms rather than conscious intent. Such misunderstandings can affect children’s interpretation of responsibility, accountability, and the distinction between sentient beings and programmed machines. For example, when AI systems err, children might mistakenly hold the AI personally accountable rather than recognizing the limitations inherent in its design or data.

4.3 Does AI Deserve to Be Treated Fairly?

Although research in this area is still in its early stages, one emerging trend is that children generally believe it is wrong to treat AI unfairly or badly, yet they do not believe that AI deserves civil liberties or civil rights. One study explored this idea by creating a scenario in which a humanoid robot, Robovie, seemed to be treated unfairly. In the study, children aged nine, twelve, and fifteen took turns playing a game with the robot (Kahn Jr et al., 2012). Midway through the game, before Robovie could take its turn, an experimenter abruptly picked the robot up and shoved it into a closet, despite Robovie protesting, “It is unfair, Robovie needs to get my turn.” In a post-interview, children who witnessed the disruption and the robot’s protest expressed that the treatment of the robot was unjust and that the robot deserved fair treatment. However, a much smaller portion of the children considered Robovie to have civil liberties (e.g., that it should not be sold or owned) or civil rights (e.g., that it should be able to vote or be paid for work). Some of these findings were corroborated by a newer study that explicitly focused on children’s considerations of fair treatment of familiar AI agents, including Google Assistant and a NAO humanoid robot. On average, these children believed that it is not okay to yell at or hit an AI agent (Flanagan et al., 2023).

This pattern – children extending courtesy and care toward an embodied agent while still viewing it as an object – was further supported by Newhart et al. (2016). In their study, children with chronic illnesses used robots to attend school remotely. Classmates quickly anthropomorphized the robots, referring to them by name, greeting them in the hallway, and even hugging them. Yet this human-like treatment had clear bounds. When one student, Samuel, attended picture day in person, his classmates questioned why both he and the robot were in the class photo, insisting “that’s one person.” Once Samuel was physically present, the robot was no longer seen as a legitimate stand-in and was wheeled back to its charging station. This shift underscores how children can toggle between treating the robot as socially real and categorizing it as an object, depending on context and physical presence. Indeed, children often described the robot as a teammate or friend during games, yet still talked about “parking,” “recharging,” or “upgrading” it when play was over (Ahumada-Newhart & Eccles, 2020; Ahumada-Newhart et al., 2023). Together, these studies reveal that children demonstrate empathy and moral reasoning in their interactions with AI, yet they very often conceptualize these agents as tools – owned and controlled rather than autonomous beings with rights.

What drives children to decide whether AI deserves moral treatment? Some might argue that the findings from previous studies – where children indicated it is not okay to treat AI agents poorly – can be seen as evidence of children demonstrating empathy toward AI, reflecting social connection. However, a recent study offers alternative explanations by exploring children’s reasoning behind their moral treatment of AI in more detail (Oh et al., 2025). In this study, researchers used classic moral treatment questions, asking children whether it is okay to put Alexa in the cold or throw it in a box. After receiving their responses, they followed up with “Why?” By analyzing children’s answers to these follow-up questions, the researchers found that children’s concerns were less about whether AI might suffer psychological harm or be deprived of rights through unfair treatment. Instead, their reasoning often stemmed from the belief that AI’s usefulness might be compromised if it is not kept in good condition, which aligns with their view of AI as a tool. For example, one child explained why an Alexa device should be treated gently: “Alexa wouldn’t be as useful and then you are wasting plenty of money. It’s like buying an entire house and smashing it.” Another child similarly commented, “It might run out of battery and what are you going to do? Nothing. They are quite expensive.” Thus, at first glance, children’s reluctance to harm AI agents might seem like a sign of empathy toward the machines. However, a deeper analysis suggests that their behavior could instead reflect an effort to preserve the AI agent’s value as a useful but fragile tool.

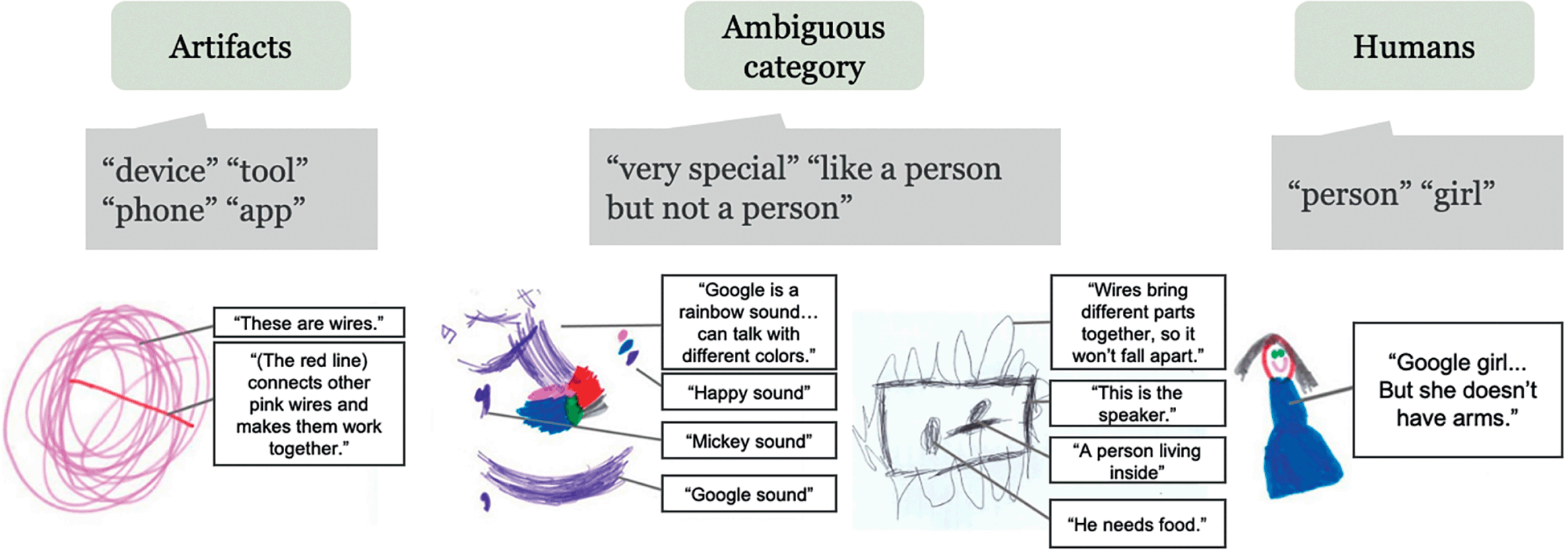

5 Children’s Trust in AI-Generated Information

5.1 AI versus Humans: Who to Trust?

While researchers are still working to understand how children decide whether to trust AI, existing research on human interactions points to a consensus that children do not blindly trust all the information they receive (Harris & Corriveau, 2011). As AI becomes an increasingly integral part of how children acquire knowledge, a critical question emerges: When comparing human informants to AI informants, how do children decide whom to trust? This question falls within the broader domain of selective trust research, which examines how children evaluate and interact with different sources of information. Within this framework, two measures are commonly used to capture children’s trust in human versus AI informants: endorsement and preference for seeking new information. Endorsement assesses which source – AI or human – a child is more likely to accept when the two provide conflicting information. Preference for seeking new information examines whether children choose to approach the AI or the human when they require additional information.

Stower et al. (2024) conducted a study where both a person and a robot were asked to label a novel object. Both informants were deemed reliable, as they had previously provided accurate labels for familiar objects. However, when the person and the robot gave conflicting labels for the novel object, children aged three to six years were more likely to trust the robot’s label. Another study expanded on these findings by adding two additional factors: first, how children’s trust in humans versus AI evolves with age, and second, whether this trust depends on the type of information being sought (Girouard-Hallam & Danovitch, 2022). Using a similar research paradigm, the study confirmed that both factors significantly influence children’s trust. Specifically, children aged four to five and seven to eight were more likely to trust AI when seeking factual information (such as an animal’s dietary habits). In contrast, children were more inclined to endorse and seek information from humans when the questions were personal – pertaining to the child’s own information or information about other people. This pattern contextualizes Stower et al.’s (2024) results: object labeling falls within the domain of factual information, which may explain the greater trust placed in the robot in that study.

Girouard-Hallam and Danovitch (2022) also observed that the tendency to differentiate trust by information type became more pronounced with age. To explain this developmental trend, they investigated why children change the kinds of information they seek from different informants. Their findings indicated that these information-seeking behaviors and preferences were less related to children’s beliefs about each informant’s general capabilities (e.g., whether the informant can learn new things) and more closely tied to their beliefs about the informant’s access to information in specific domains. Regarding AI agents, children were aware of the voice assistant’s ability to access information via the internet and its broad scope of knowledge, making it better suited for factual information. In contrast, the human informant was viewed as having a privileged status when it came to providing personal information about the experimenter.