1. Introduction

The Desert Fireball Network (DFN) is a multi-camera network designed to determine meteorite fall positions and pre-atmospheric orbits of meteoroids. The network consists of autonomous observatories spread across the Australian continent, capturing nightly observations of fireballs (Howie et al. Reference Howie, Paxman, Bland, Towner, Sansom and Devillepoix2017a). One of the aims of the network is to determine meteoroid flux density at 1 AU at centimetre to decimetre sizes.

The necessity of accurately characterising the meteoroid flux is underscored by incidents such as the unexpectedly large meteoroid impact on the James Webb Space Telescope (JWST) mirror (Moorhead et al. Reference Moorhead, Milbrandt and Kingery2023). Improved predictive models are required to assess potential risks, especially from meteor streams like the Taurids, known to contain significant numbers of larger objects within resonant debris streams (Devillepoix et al. Reference Devillepoix2021; Spurný et al. Reference Spurný, Boroviĉka and Mucke2017). Higher-order variations, such as latitudinal, daily, and seasonal differences, must also be considered to achieve reliable predictions (Robertson et al. Reference Robertson, Pokorný, Granvik, Wheeler and Rumpf2021; Ozerov et al. Reference Ozerov, Smith, Dotson, Longenbaugh and Morris2024).

At millimetre sizes, the large number of impacts recorded by a single ground-based meteor camera readily enables population studies (Blaauw, Campbell-Brown, & Kingery Reference Blaauw, Campbell-Brown and Kingery2016; Vida et al. Reference Vida, Blaauw Erskine, Brown, Kambulow, Campbell-Brown and Mazur2022). At metre sizes and beyond, Brown et al. (Reference Brown, Spalding, ReVelle, Tagliaferri and Worden2002, Reference Brown2013) have used orbital sensors with global coverage. These studies suffer from low number statistics, but are somewhat easier to de-bias: as long as the system sensitivity is well understood, transient effects like cloud cover do not get in the way of detecting bolides from orbit.

In the centimetre to decimetre range, the number of impacts detected by a ground-based network drops rapidly owing to the steep population index; de-biasing observations requires careful analysis (Halliday, Griffin, & Blackwell Reference Halliday, Griffin and Blackwell1996). In particular, it requires an accurate measure of the area of sky and the amount of time that the network was observing. The DFN consists of approximately 30 cameras, which may or may not be observing given the operational conditions at the time of observation, including weather, time of night, moon altitude, equipment failures, or misconfiguration. With a network of that size, controlling for all these different factors that may affect observation is challenging. Additionally, combining the effective observed volume is complex (Devillepoix et al. Reference Devillepoix2020).

The only significant attempt in tackling the centimetre to decimetre range is the work of Halliday et al. (Reference Halliday, Griffin and Blackwell1996) using the Canadian camera network of the Meteor Observation and Recovery Project (MORP). MORP consisted of 12 stations, with five rectilinear cameras at each, tiling the visible sky. The network observed mass ranges from 1 g to hundreds of kilograms (Halliday et al. Reference Halliday, Griffin and Blackwell1996). Observation frames were divided into quadrants between elevations of 8–20$^\circ$, and 20–58$^\circ$, with each quadrant rated as clear or cloudy. The sky at 70 km altitude was divided into cells, and visibility from multiple cameras was assessed to arrive at a total observation area and clear observation time. Meteors that did not pass through these defined cells were discarded.

In this study, we present the methodology we have developed for estimating the influx rate of meteors detected by the DFN. As a case study, we apply this method to observations collected during the period when the Southern Taurids meteor shower showed increased activity at fireball sizes, as documented by Devillepoix et al. (Reference Devillepoix2021). Given DFN’s capability to estimate mass kinematically (Gritsevich & Popelenskaya Reference Gritsevich and Popelenskaya2008; Sansom et al. Reference Sansom, Bland, Paxman and Towner2015), an accurate estimation of influx rate can significantly enhance our understanding of meteoroid dynamics and associated risks.

Ehlert & Erskine (Reference Ehlert and Erskine2020) discuss several methods for debiasing meteor flux measurements in optical surveys, particularly those relating to the NASA All-Sky Fireball Network. A notable claim from that paper is that, despite advances in analysis and automation, no fully automated, universally robust method has been found to reliably identify clear-sky periods from all-sky camera data alone.

Campbell-Brown, Blaauw, & Kingery (Reference Campbell-Brown, Blaauw and Kingery2016) address the challenges of combining observations from different meteor cameras and the steps necessary to debias the resulting flux measurements for scientific comparability. The methods used in that study include standardisation of flux measurements using a common reference limiting magnitude. Whereas they achieved inter-camera flux consistency through manual calibration and magnitude normalisation, our method generalises this approach to millions of all-sky images, automatically quantifying clear-sky conditions and network coverage. This enables unbiased flux estimates at centimetre to metre scales, where manual normalisation becomes infeasible.

2. Data

To estimate the meteoroid impact flux density on Earth, two things are needed: a surveying system that accurately detects fireballs, and a way to measure how much area and time the system has effectively surveyed for.

The surveying system consists of the DFN observatories’ main imaging system: Nikon D800/D800E/D810 associated with a Samyang 8 mm f/3.5 UMC Fish-eye CS II, with a liquid crystal shutter. Capture mode is 25-second-long exposures every 30 s, ISO 3200 or 6400, with the lens operated at f/4. The liquid crystal shutter is operated at 10 Hz (see Howie et al. Reference Howie, Paxman, Bland, Towner, Sansom and Devillepoix2017b for details). The system captures images continuously from nautical twilight (Sun altitude $<-6$ degrees), unless it detects that the conditions are too cloudy or if there is some technical issue preventing observations (Howie et al. Reference Howie, Paxman, Bland, Towner, Sansom and Devillepoix2017a). The data it produces are 14 bit raw images in the camera’s native lossless compression file format (Nikon NEF).

The 10 Hz liquid crystal shutter provides temporal modulation of fireball trails, enabling flight direction and timing information to be derived during subsequent trajectory reduction, rather than from image timestamps alone. Observation start and end times rounded to minutes or 15-min intervals, as reported in the survey coverage analysis, reflect operational selection boundaries for data processing, not the intrinsic timing precision of individual fireball detections. Individual fireball timestamps may be reported with sub-second resolution as they originate from the trajectory reduction pipeline. The flux density calculations presented here do not rely on millisecond-level absolute timing accuracy, but rather on the completeness and consistency of the survey coverage metrics.

2.1. Fireball detection and reduction

The software that detects fireballs is described by Towner et al. (Reference Towner2020); this task is run on board the observatories. The results are logged and transferred to the central server. The fireball candidates are then reviewed by a human and, if confirmed as fireballs, systematically ingested. Automated routines then retrieve the raw image data, as well as images suitable for astrometric calibration. Calibration images are automatically solved following Devillepoix et al. (Reference Devillepoix2018). The fireball track is decoded and picked by a human, and the picked pixel coordinates are converted to sky coordinates. If a fireball has been detected by more than one camera, it is triangulated using the least-squares method of Borovicka (Reference Borovicka1990). Speed is estimated using the Kalman filtering method of Sansom et al. (Reference Sansom, Bland, Paxman and Towner2015). The pre-atmospheric orbit of the meteoroid is calculated following the code of Jansen-Sturgeon et al. (Reference Jansen-Sturgeon, Sansom, Devillepoix, Bland, Towner, Howie and Hartig2020), with Monte Carlo runs establishing the uncertainty based on the uncertainty on speed and direction.

The mass at the beginning of luminous flight ($m_0$) is estimated using the dynamic trajectory analysis method of Gritsevich (Reference Gritsevich2008) for large, decelerating meteoroids, and the method of Stevenson et al (2025, in preparation) for small meteoroids showing minimal deceleration. Both methods proceed by first deriving a ballistic coefficient ($\alpha$) from a curve fit to raw altitude/velocity data. The ballistic coefficient reflects the ratio between the drag and weight forces acting upon a meteoroid during atmospheric flight. From this value, entry mass can be estimated using the following formula, which assumes uniform ablation, spherical morphology, and a consistent bulk density (Sansom et al. Reference Sansom2019).
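Written with the symbols defined in this section, and assuming the standard definition of the ballistic coefficient used in this family of models, the relation can be expressed as $m_0 = \left(\frac{1}{2}\,\frac{c_d A_0\,\rho_0 h_0}{\alpha\,\rho_m^{2/3}\sin\gamma}\right)^3$. The short sketch below evaluates this form. The default values for `rho_m`, `rho_0` and `h_0` are illustrative assumptions and are not taken from this work; `entry_mass` is a hypothetical helper name.

```python
import math


def entry_mass(alpha, gamma_deg, rho_m=3500.0, cd_a0=1.0, rho_0=1.29, h_0=7160.0):
    """Entry mass m_0 (kg) from the ballistic coefficient alpha.

    rho_m and rho_0 (kg/m^3) and h_0 (m) are illustrative assumptions.
    cd_a0 = 1 follows the simplification stated in the text.
    """
    sin_gamma = math.sin(math.radians(gamma_deg))
    cube_root_mass = 0.5 * cd_a0 * rho_0 * h_0 / (
        alpha * rho_m ** (2.0 / 3.0) * sin_gamma
    )
    return cube_root_mass ** 3


# Because m_0 scales as alpha**-3, halving alpha multiplies the mass by 8.
m_small_alpha = entry_mass(alpha=50.0, gamma_deg=45.0)
m_large_alpha = entry_mass(alpha=100.0, gamma_deg=45.0)
print(m_small_alpha / m_large_alpha)
```

The cubic dependence means that small errors in the fitted $\alpha$ translate into roughly three times larger relative errors in $m_0$.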

$c_d$ and $A_0$ refer to the drag and shape change coefficients of a meteoroid. Following a commonly adopted simplifying assumption in dynamic meteoroid modelling (Gritsevich Reference Gritsevich2008; Gritsevich & Popelenskaya Reference Gritsevich and Popelenskaya2008), the product $c_d A_0$ is taken to be 1 in the present analysis. $\rho_0$ and $h_0$ are respectively the critical density and altitude of the exponential atmosphere, as defined by Gritsevich & Popelenskaya (Reference Gritsevich and Popelenskaya2008). Finally, $\rho_m$ and $\gamma$ are the bulk density and entry angle of the meteoroid.

The results in the present work use the same set of fireballs from the Southern Taurids stream as Devillepoix et al. (Reference Devillepoix2021).

2.2. Data used to determine effective observing time

The area and time the system has effectively surveyed are not automatically generated as a data product. As images are received by the on-board computer, a rough assessment using star counts is performed for operational purposes, to decide whether the system should continue observing (and to save shutter actuations). However, this system has a very high threshold and only triggers when the sky is completely overcast, to avoid missing very bright fireballs that can penetrate through thin cloud layers.

A decision was made early on to keep all the data captured by the cameras, even images that do not contain fireballs. There were multiple motivating factors for storing all that data:

- being able to go back into the archive to find fireballs that had too low a signal-to-noise ratio to be detected, but could be useful to constrain the trajectory of a fireball detected by other observatories (Shober et al. Reference Shober2022).
- being able to later mine the data for other transients, notably astrophysical transients (LIGO Scientific Collaboration and Virgo Collaboration et al. 2019; Kann et al. Reference Kann2023).
- providing some contingency as well as validation data when the fireball detection software of Towner et al. (Reference Towner2020) was being commissioned.
- enabling the clear-sky survey, subject of the present work.

Keeping the data was enabled on the observatories side by the availability of appropriate temporary storage (terabyte-scale hard disk drives), and data centre space available to use for the program (petabyte on tape). Maintenance trips include among their tasks the replacement of full hard drives with empty ones. The drives are then returned to the Perth campus of Curtin University, and are uploaded via a high-speed link to the nearby Pawsey supercomputing research centre’s systems. The permanent storage system that was provided by Pawsey for that use is a tape-backed media database called Mediaflux that is unsuitable for mass parallel access to the images and logs. Originally two 4 TB hard drives were used on-board the cameras, meaning that the raw files would fill up the disk space after about 4 months of clear weather. Thanks to larger capacity drives becoming available and an added slot in the observatories, this capacity was extended to three 10 TB drives, which gave each observatory enough space to store $\sim 1.5$ yr of raw data. We later implemented another strategy to increase how long the systems could run autonomously: once the disks were near full (>90%), a data compression task was run on the oldest data that did not contain fireballs. The compression turns the 14 bit raw ~45 MB images into full-resolution 8-bit colour JPEG files, a ~10:1 compression factor. This is enough to ensure that hard disk space is not a limiting factor for continued observations (other parts such as the mechanical shutter would fail first).

We use this compressed data product to calculate the effective clear-sky time-area product. For the purpose of determining clear sky conditions, it was found that the lossy compressed JPEG images are nearly as good as the raw data product. This means that we only need to work with a more manageable ~300 TB dataset, rather than the full 3 PB archive. In some cases the compressed version was the only option: when an observatory ran out of disk space, for example because a maintenance cycle had to be extended and the disks were not replaced with empty ones, only the compressed version was kept.

The network has been in continuous operation since mid-2014. For this project we extracted a full year of data (2015) from the tapes. For validation purposes we focus on the period from 2015-10-01 to 2015-12-31, as images from that period had been fully examined manually by Devillepoix et al. (Reference Devillepoix2021), providing a good basis for validation of the methods used. This period was chosen due to its association with the Southern Taurid meteor shower (Devillepoix et al. Reference Devillepoix2021; Egal, Wiegert, & Brown Reference Egal, Wiegert and Brown2022). Additionally, observations in that period all have associated orbit and derivative information (e.g. meteor shower, radiant). The calculated radiants for the period of activity in 2015 (blue to red colour is the solar longitude) can be seen in Figure 5 in Supplementary Material. It is worth noting that these dates were used for final processing but the results for the size–frequency distribution (SFD) are related to only part of that period as that was the actual period of activity. Specifically, the Southern Taurid period of activity was between 2015-10-27 and 2015-11-17 (solar longitudes $213^\circ$ and $234^\circ$, respectively). Over that period, 27 unique cameras recorded images. The total hours of observation anywhere in the network was 245.75 over that period. If all cameras were observing over that whole period that would give a total image count of 796 230. Due to a combination of optimisations (for sun altitude calculated locally, being different across the network) and the occasional malfunction the actual number of images recorded over that period was 385 284, which is still a very large number to sort manually and that only corresponds to a single event.
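The expected image count quoted above follows directly from the 30 s capture cadence. The arithmetic can be checked with a few lines; the variable names are illustrative, and all numbers are those given in the text.

```python
HOURS_OBSERVED = 245.75      # total network observing hours over the activity period
N_CAMERAS = 27               # unique cameras that recorded images
CADENCE_S = 30               # one exposure every 30 s
IMAGES_RECORDED = 385_284    # images actually recorded

images_per_hour = 3600 // CADENCE_S
expected_images = round(HOURS_OBSERVED * images_per_hour * N_CAMERAS)
recorded_fraction = IMAGES_RECORDED / expected_images

print(expected_images)               # 796230
print(f"{recorded_fraction:.1%}")    # 48.4%
```

In other words, slightly less than half of the images that a permanently active network would have produced were actually recorded.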

As detailed later in Section 3.2, when evaluating how clear an image is, the astrometric mapping of the camera must be known. To achieve this at scale, for each image that needs to be evaluated for clear sky conditions, we use the astrometric solution nearest in time already calculated for this particular camera as part of the fireball data processing pipeline (Section 2.1).

3. Methods: Clear sky survey pipeline

The end product of the clear sky survey pipeline is a measure of how much Earth area is being monitored for meteoroid impacts over time.

3.1. Discretisation of the area-time monitored for fireballs

The millions of images to process, taken over a long period by a network of irregularly spaced sensors, call for discretising the time and area we are working with. The time discretisation choice is straightforward: the cameras take pictures synchronously over the entire network every 30 s.

However, the discretisation method for the area covered is not evident. The on-sky effective field of view of the all-sky sensors varies because some are affected by obstructions (trees, antennas, buildings, etc.). It is also not rare for a sensor to be partially cloudy. Ideally one would like to be able to retain significant granularity when assessing the effective clear observable sky of each sensor. Furthermore the monitored areas in the atmosphere need to be easily aggregated between neighbouring camera systems in the network, in a scalable way.

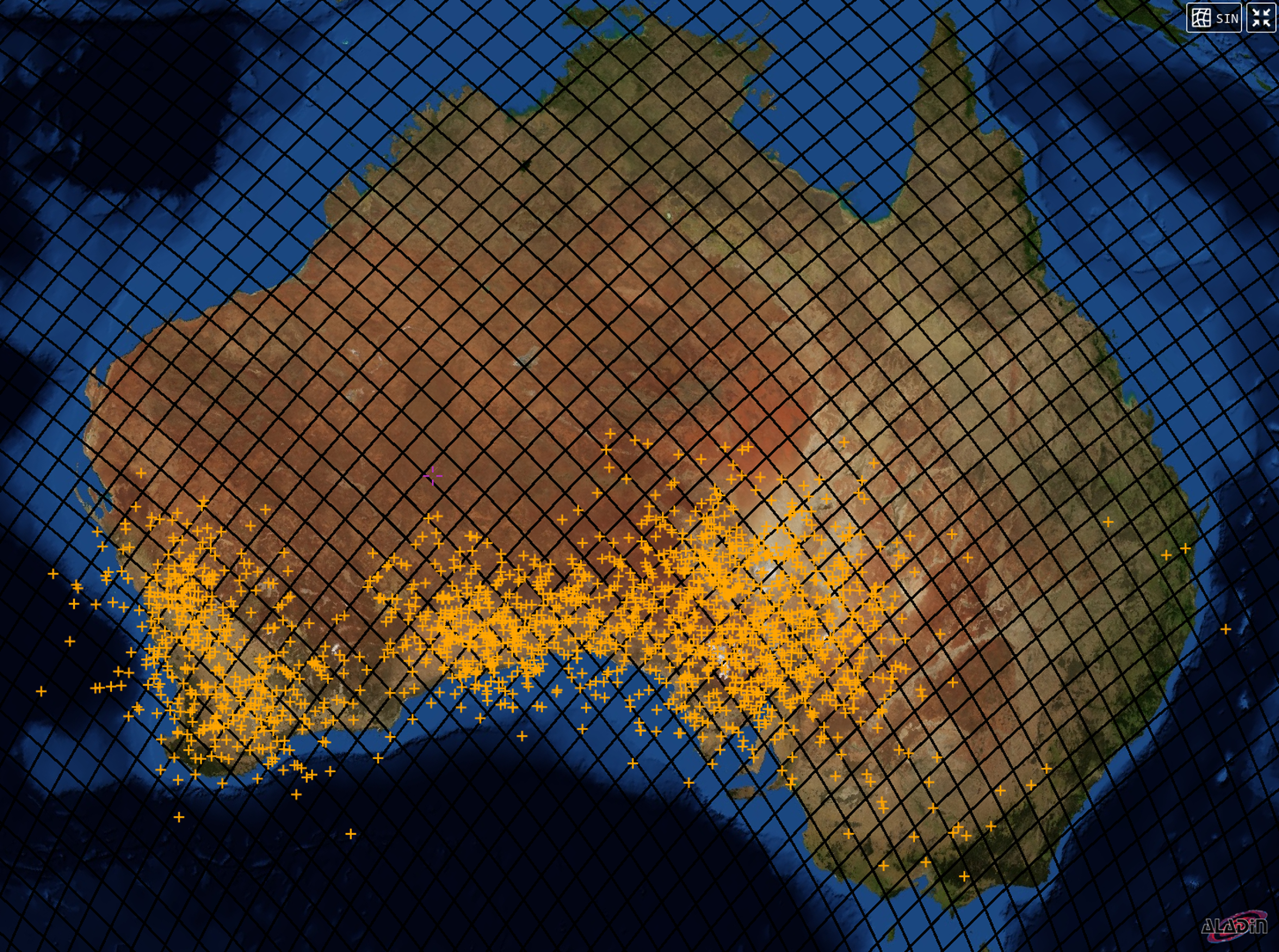

The Hierarchical Equal Area isoLatitude Pixelisation (HEALPix) framework developed by Górski et al. (Reference Górski, Hivon, Banday, Wandelt, Hansen, Reinecke and Bartelmann2005) provides a way to partition the sky into equal-area regions, which is particularly useful for a multi-camera all-sky survey like the one described here. In simple terms, HEALPix provides a mapping from spherical coordinates to discrete sky regions (called HEALPix elements). The spherical coordinates can be geographic (latitude, longitude) or celestial (right ascension, declination). Because HEALPix elements have equal area on the celestial sphere, they are suitable for aggregating observations from different camera locations. The mathematical method for mapping spherical coordinates to HEALPix elements is described in detail by Górski et al. (Reference Górski, Hivon, Banday, Wandelt, Hansen, Reinecke and Bartelmann2005), and the implementation is provided by AstroPy (Robitaille et al. Reference Robitaille2013). Figure 1 shows the HEALPix grid over Australia at order 6, with the DFN fireball impact locations overlaid.
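As a rough check of the grid resolution, the area of one HEALPix element follows from the number of elements at a given order, $12 \times 4^{\mathrm{order}}$, and the radius of the sphere being tiled. The sketch below assumes the grid is evaluated on the 70 km atmospheric shell used later in this work and adopts an equatorial Earth radius of 6378.137 km; both choices are assumptions made for illustration.

```python
import math

ORDER = 6
EARTH_RADIUS_KM = 6378.137   # assumed: equatorial radius
SHELL_ALTITUDE_KM = 70.0     # assumed: grid evaluated on the 70 km shell

nside = 2 ** ORDER
npix = 12 * nside ** 2       # number of equal-area elements on the sphere
shell_radius_km = EARTH_RADIUS_KM + SHELL_ALTITUDE_KM

cell_area_km2 = 4 * math.pi * shell_radius_km ** 2 / npix
cell_side_km = math.sqrt(cell_area_km2)

print(npix, round(cell_area_km2), round(cell_side_km, 1))
```

Under these assumptions the element area comes out close to 10 630 km², with an equivalent side length of about 103 km. Mapping a given longitude and latitude to an element index is left to a HEALPix library.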

3.2. Processing steps

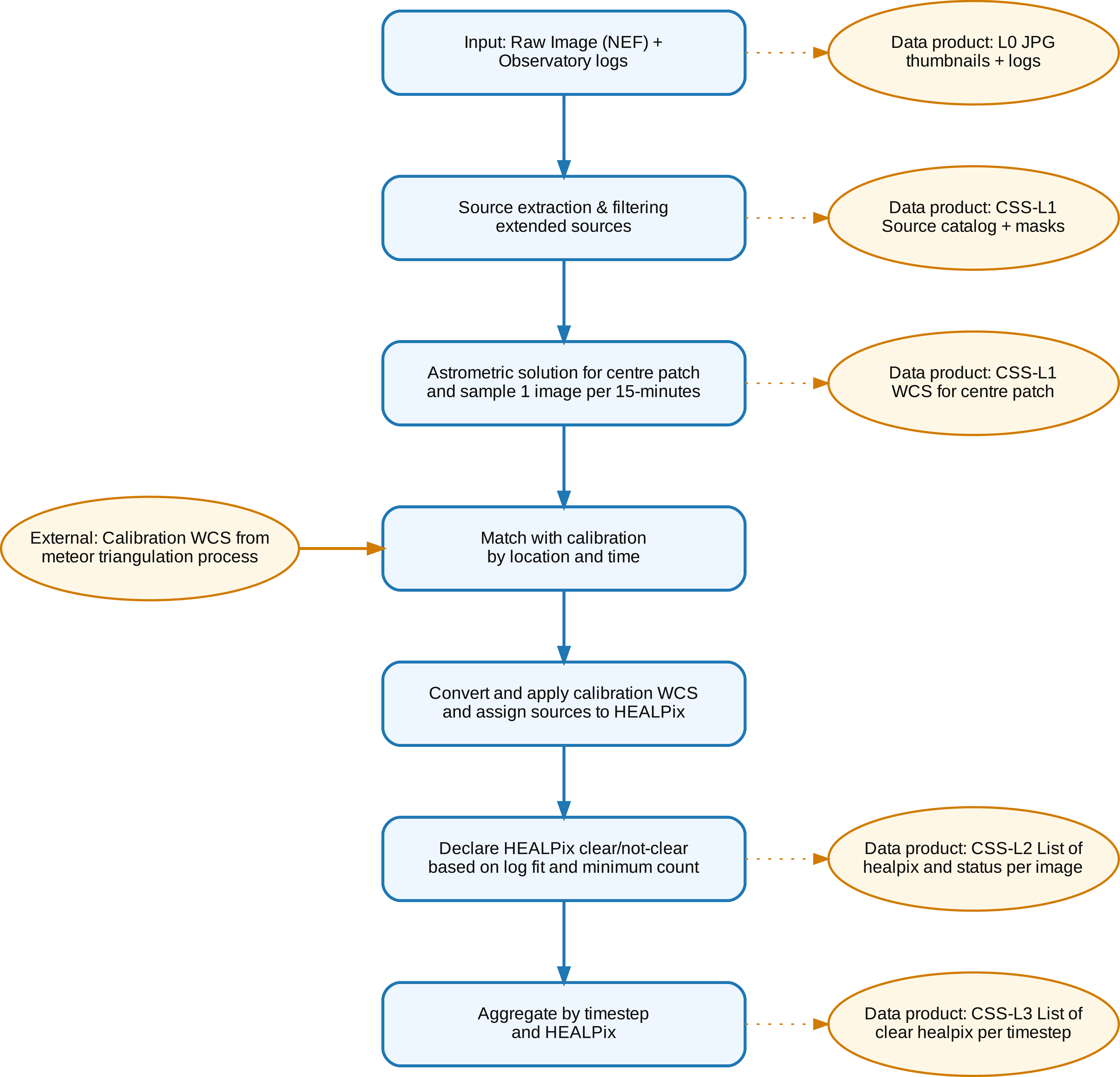

The data processing pipeline we have developed is organised in several steps that each generate intermediate data products (Figure 2). These products follow a hierarchical structure: Level 0 (L0) consists of raw compressed images and engineering logs; Level 1 (L1) provides per-image star catalogues derived from source detection; Level 2 (L2) assesses clear-sky status for individual equal-area sky regions from each camera; and Level 3 (L3) aggregates these measurements across the entire network to produce the final survey product.

Black grid: HEALPix grid at order 6 over Australia (individual HEALPix elements shown as rhomboid cells with $\sim$103 km side length, $10\,630\,\text{km}^2$ in area). Orange +: impact locations of all meteoroids detected by the DFN.

Processing steps to arrive at a clear sky data product. Blue rectangles: high-level processing tasks. Orange circles: Data products.

These intermediate data products are organised using two data storage solutions. Firstly, large files are stored in collections (called buckets) on the Acacia object store system provided by Pawsey (a Ceph-based, S3-compatible object store; Weil et al. 2006); we refer to this system below as the ‘object store’. Secondly, a NoSQL document database (using MongoDB) keeps track of the existence and locations of all the files in the object store and their metadata; we refer to this system as the ‘database’.

The database also keeps track of the overall progress on the data processing steps.

Level 0: JPG images and engineering logs. The raw images and engineering logs on tape for all the years of operation of DFN amount to ~2.5 PB of data.

In batches, these files are retrieved from the tape store, and staged to a temporary fast storage area at the Pawsey Supercomputing Centre. The raw (Nikon NEF) file format is converted to a full resolution JPEG. These compressed images (10:1 compared to raw) and engineering logs form the L0 data product. They are organised in buckets on the object store.

The Mongo database keeps track of the existence and location (URL) in the object store of all the ingested files and their metadata. This covers both the images and the engineering logs, which are produced by the observatory for each observing session and stored next to the images for that session in the object store. The metadata stored in the Mongo database for this level includes the time and location of capture of the data.

Level 1: Per image light source catalogue. The next level of data product (Level 1) is produced by performing the following steps:

1. Selecting one image in each 15 min interval (we assume observing conditions do not change significantly in 15 min).
2. Preprocessing the image to mask out contiguous regions that are not showing stars. These correspond to the Moon and the surrounding sky where stars cannot be seen, and to ground objects such as trees and masts.
3. Extracting the pixel coordinates and brightness of the point sources (stars) using the Python Source Extractor library (SEP; Barbary Reference Barbary2016).
4. Astrometrically solving the centre patch of the image using astrometry.net (Lang et al. Reference Lang, Hogg, Mierle, Blanton and Roweis2010). Only the centre patch is used as there are significant lens distortions away from the centre. This is used for verification and for cataloguing.
5. Converting the stars’ pixel coordinates to equatorial sky coordinates. For this step we re-use astrometric solutions that are generated automatically for doing fine astrometry on detected bolides and convert the solution to the current time. That solution is computationally expensive and takes into account the significant lens distortions. To facilitate this process we ingested the transformation parameters for all astrometrically solved images into the Mongo database (typically one solution per camera system every couple of days). For each clear-sky survey image analysed we queried the astrometric solution closest in time for the same observing system and translated the solution to the current sidereal time.
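The first step of the list above, selecting one image per 15 min interval, can be sketched as follows. The text does not state which image within each interval is chosen, so taking the earliest one is an assumption, and `select_one_per_interval` is a hypothetical helper name.

```python
from datetime import datetime, timedelta, timezone

INTERVAL_S = 15 * 60


def select_one_per_interval(timestamps):
    """Return the earliest timestamp in each UTC-aligned 15 min interval."""
    chosen = {}
    for ts in sorted(timestamps):
        bin_index = int(ts.timestamp()) // INTERVAL_S
        chosen.setdefault(bin_index, ts)   # keep only the first image per bin
    return list(chosen.values())


# Hypothetical capture times: one exposure every 30 s for 40 minutes.
start = datetime(2015, 11, 1, 12, 0, 0, tzinfo=timezone.utc)
captures = [start + timedelta(seconds=30 * i) for i in range(80)]
selected = select_one_per_interval(captures)
print([ts.strftime("%H:%M:%S") for ts in selected])
```

Binning on the integer timestamp keeps the intervals aligned to :00, :15, :30 and :45 across all cameras, so the selected images from different observatories fall in the same windows.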

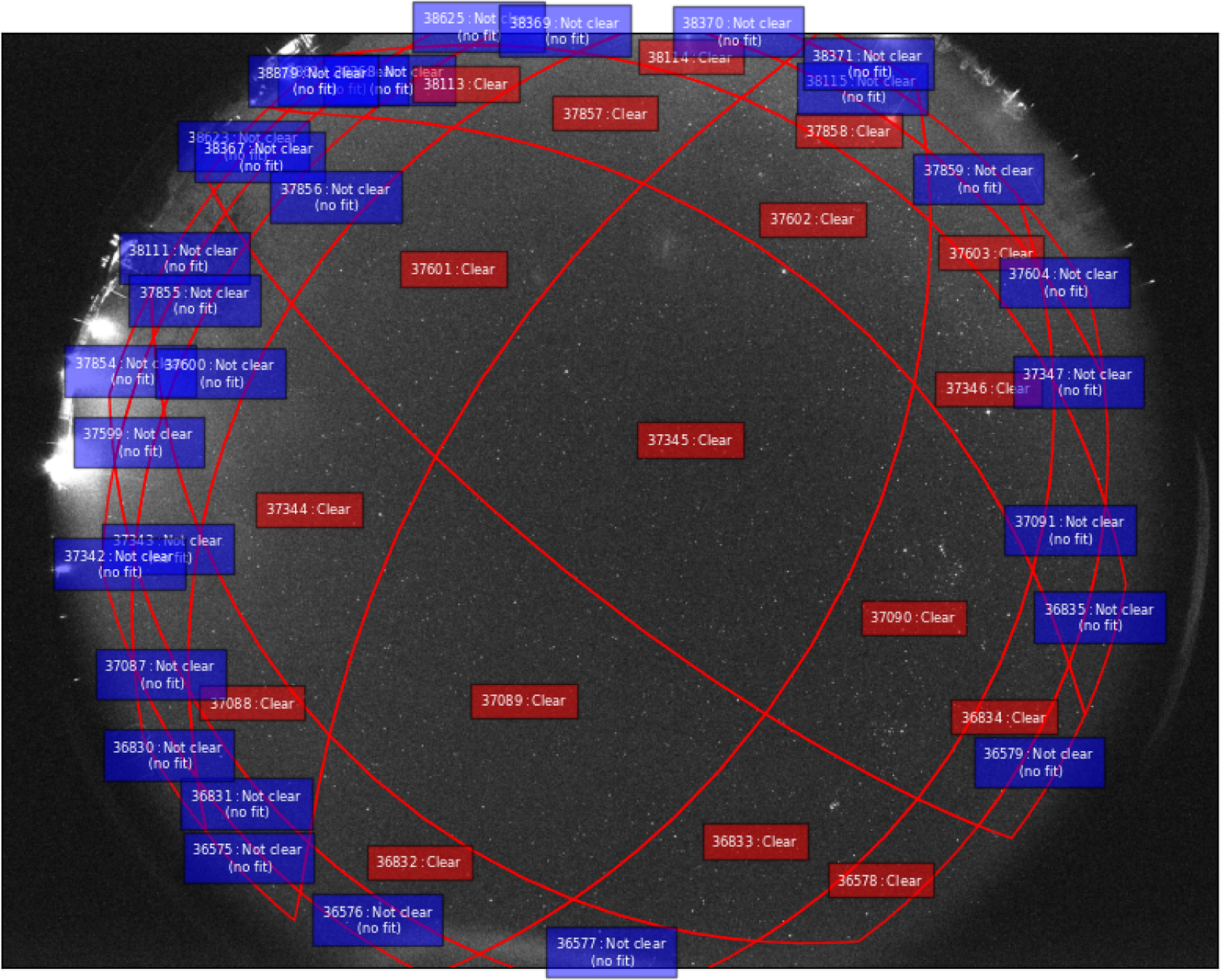

Level 2: Per-camera clear-sky status. The L2 data product consists of calculating the pixel boundaries on the image for the HEALPix elements of the 70 km atmospheric shell (see Section A for details) as they would appear from the location of the camera. The sources are then matched to the corresponding HEALPix element number; if the sources fit a logarithmic function down to a threshold and enough sources are visible, the HEALPix element is considered clear for that location in that 15 min window. Specifically, for this step, the sources for that window are sorted according to the measured flux. If a logarithmic fit (log10) to the flux distribution yields a coefficient of determination ($R^2$), calculated using sklearn.metrics.r2_score, greater than 0.75, and there are at least 10 sources detected within that HEALPix element, the region is classified as ‘clear.’ That is then recorded in a ‘clear HEALPix element’ entry for each image and recorded in a file next to the image on the Mongo database (see Figures 3 and 5 for illustrative examples).
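The classification rule can be illustrated with a small sketch. The exact form of the logarithmic fit is not specified here, so the code assumes one possible reading: fluxes are sorted in descending order and fitted by least squares as flux = a + b log10(rank). The coefficient of determination is computed as $1 - SS_{\mathrm{res}}/SS_{\mathrm{tot}}$, the same definition used by `sklearn.metrics.r2_score`. The function name `is_clear` is hypothetical.

```python
import math

R2_THRESHOLD = 0.75
MIN_SOURCES = 10


def is_clear(fluxes):
    """Classify one HEALPix element from the fluxes of its detected sources.

    Assumed model: flux = a + b * log10(rank), with rank 1 the brightest source.
    """
    n = len(fluxes)
    if n < MIN_SOURCES:
        return False
    y = sorted(fluxes, reverse=True)
    x = [math.log10(rank) for rank in range(1, n + 1)]
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    if ss_tot == 0:
        return False                     # identical fluxes: no distribution to fit
    return 1 - ss_res / ss_tot > R2_THRESHOLD


# Synthetic fluxes following the assumed model, and a single bright outlier case.
well_behaved = [100 - 40 * math.log10(r) for r in range(1, 21)]
one_bright_source = [1000.0] + [1.0] * 11
print(is_clear(well_behaved), is_clear(one_bright_source), is_clear([5.0] * 3))
```

Checking the source count before fitting keeps sparsely populated elements from passing on the strength of a fit through only a handful of points.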

Clear HEALPix example. This figure illustrates the distribution of clear-sky conditions across HEALPix elements for a single observation window. Each coloured cell represents a HEALPix element at 70 km altitude that was classified as clear based on the flux distribution of detected sources.

This clear-sky classification is based on the shape and goodness-of-fit of the flux distribution, not on a single limiting magnitude threshold. A fixed limiting magnitude is not assumed, as camera sensitivity and atmospheric conditions vary between observing sessions. Thin or patchy clouds may still permit detection of bright stars, but typically distort the expected logarithmic flux distribution, causing the HEALPix element to be classified as not clear. The minimum source count requirement (10 sources per HEALPix element) helps avoid misclassification under marginal conditions where few detections could spuriously fit the distribution criterion. By design, this conservative approach prioritizes accurate effective observing time estimation over maximising apparent coverage, ensuring that the survey area reflects conditions suitable for reliable fireball detection and flux analysis.

Level 3: Network-wide clear-sky coverage. The L3 data product consists of aggregating the clear HEALPix element classifications across all cameras and time steps to create a final data product of multi-camera HEALPix element conditions over the entire survey area. This data product provides the effective collection area for the phenomena observed and is used for debiasing the observations.

All the clear HEALPix windows are collected by timestamp, including the identifiers of the cameras that have been observing that particular window at that time (see Figure 6).
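A minimal sketch of this aggregation is given below. It assumes the L2 output can be flattened into (timestep, camera, HEALPix element) records, which is a hypothetical layout, and it keeps only elements seen as clear by at least two cameras, the criterion used for the network-level figures and totals in this work.

```python
from collections import defaultdict


def aggregate_clear_elements(records, min_cameras=2):
    """Map each timestep to the elements clear from at least min_cameras cameras.

    records: iterable of (timestep, camera_id, healpix_id) tuples, one per
    element that a camera classified as clear (hypothetical L2 layout).
    """
    cameras_seen = defaultdict(set)
    for timestep, camera_id, healpix_id in records:
        cameras_seen[(timestep, healpix_id)].add(camera_id)

    clear = defaultdict(dict)
    for (timestep, healpix_id), cameras in cameras_seen.items():
        if len(cameras) >= min_cameras:
            clear[timestep][healpix_id] = sorted(cameras)
    return dict(clear)


# Hypothetical records for two cameras and two timesteps.
records = [
    ("2015-11-01T12:00", "cam_A", 37089),
    ("2015-11-01T12:00", "cam_B", 37089),
    ("2015-11-01T12:00", "cam_A", 37090),
    ("2015-11-01T12:15", "cam_B", 37089),
]
l3 = aggregate_clear_elements(records)
print(l3)
```

Storing the camera identifiers alongside each element keeps the information needed later to check which cameras could have contributed to a given fireball observation.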

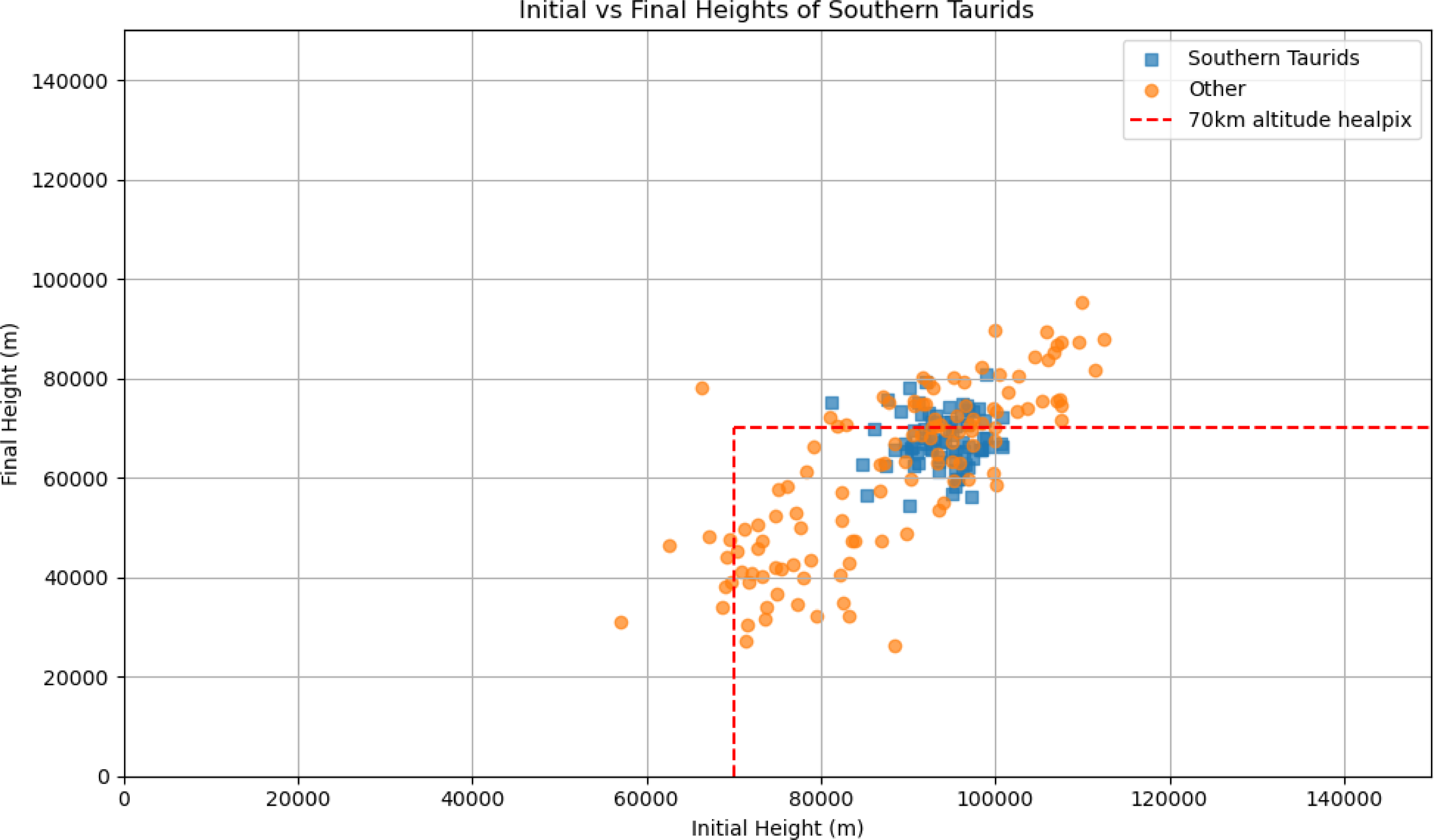

Start vs. end height distribution of detected meteors. This figure shows the distribution of start vs. end height of the light path, highlighting the 70 km altitude shell used in the DFN clear sky survey.

Single camera clear HEALPix example. This panel shows the clear-sky HEALPix elements as observed by a single DFN camera during a 15-min window. The highlighted HEALPix elements indicate areas where the flux distribution of detected sources met the criteria for clear-sky classification (logarithmic fit $R^2 \gt 0.75$, at least 10 sources).

Single time step with clear HEALPix elements and detected fireball. This figure presents a snapshot of the network’s coverage at a specific time step, showing all HEALPix elements classified as clear by at least two cameras. The positions of the cameras and the HEALPix element containing a detected fireball are overlaid.

4. Results

We process the data for the last three months of 2015 (2015-10-01 14:00 to 2015-12-31 20:15 UTC). This period fully encompasses the Southern Taurid activity period (the first recorded fireball occurred on 2015-10-30T12:04:38 and the last on 2015-12-08T13:13:48). Section 4.1 summarises the three months, including quality controls for the derived clear-sky data. Section 4.2 then calculates the impact flux density for the 2015 apparition of the Southern Taurids meteoroid stream.

4.1. Overall time-area coverage

The clear-sky coverage for the last three months of 2015 is discretised using a 15 min timestep. During each timestep a camera is considered to be effectively observing if it has captured 30 images. This condition ensures that the data are complete, in the sense that the capture control system worked as configured.
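The completeness condition amounts to counting images per camera per timestep. A minimal sketch, assuming capture times are available as seconds on a clock aligned with the timestep grid (a hypothetical input layout), could look like this:

```python
from collections import Counter

IMAGES_PER_TIMESTEP = 30     # 15 min at one exposure every 30 s
TIMESTEP_S = 15 * 60


def observing_timesteps(capture_times_s):
    """Return the timestep indices in which exactly 30 images were captured."""
    counts = Counter(int(t) // TIMESTEP_S for t in capture_times_s)
    return sorted(step for step, n in counts.items() if n == IMAGES_PER_TIMESTEP)


# Hypothetical camera: a complete first timestep, then one missing two shots.
times = [30 * i for i in range(30)] + [900 + 30 * i for i in range(28)]
complete = observing_timesteps(times)
print(complete)   # [0]
```

Requiring an exact count rather than a minimum means that a single missed exposure removes the whole timestep for that camera, which is the conservative choice.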

Timesteps were usually excluded because they overlapped with the start or end of operation for the day. Other causes for excluding camera timesteps include occasional malfunctions that led to missed shots, and some systems being in test mode (e.g. a camera’s location identified as ‘Test_lab’). The operation times of the included cameras are listed in Table 2.

During the period of study, we assessed a total of 4 213 timesteps of 15 min between dusk and dawn. The total number of images for the period was 1 713 609 from a total of 33 unique cameras. Across all timesteps, an average of 12.54 cameras were observing and an average of 1.89 were not, the latter being excluded from the survey for any one of the aforementioned reasons.

The location of the cameras as defined by their GPS coordinates is not stable throughout the observation and drifts by a few metres over the span of the survey, so each camera was assigned to the centre of the box containing its coordinate points, with a maximum distance of 100 m from that centroid. The reasons for such drift can be rounding errors and GPS inaccuracies but also movement/substitution of the camera by the maintenance team during deployment or maintenance. Approximate locations of the cameras and the width and length of the corresponding bounding box that contains all the recorded GPS points are given in Table 2.

Each HEALPix has a specific number (e.g. 37 089, shown in Figure 5). That number refers to a fixed area of the sky and is the same for all cameras, although each camera views it (if visible from its location) at a different angle. The number of individual HEALPix observed to be clear by any camera at any time in the period was 217, while the number observed to be clear by more than one camera was 157. The difference arises because HEALPix at the edges of the network were never observed by more than one camera.

Each HEALPix was observed on average for 1 426 timesteps by a single camera and for 521 timesteps by more than one camera. Observations for the network start on average at 10:30 UTC and end on average at 20:30 UTC, with an average duration of just under 10 h each day.

Summing all the HEALPix observed by at least two cameras over the observation period, we arrive at an effective total observation of $1.5817464 \times 10^{12} \; \mathrm{km^{2}\,h}$. The full table detailing the amount of time each HEALPix is clear on at least two cameras is given as supplementary material in Table 3, and visualised in Figure 2 in Supplementary Material.
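The summation can be sketched in a few lines. The sketch assumes the clear-sky results are stored as a nested mapping from timestep to HEALPix number to the set of cameras that saw that pixel as clear. This layout, the camera names, and the function name are hypothetical. The pixel area is the NSIDE 64 value quoted in Appendix A, and the toy input is not meant to reproduce the survey total.

```python
PIXEL_AREA_KM2 = 10606.58  # NSIDE 64 pixel area on the 70 km shell (Appendix A)
TIMESTEP_H = 0.25          # 15 min timestep expressed in hours


def time_area_km2_h(clear_by_step, min_cameras=2):
    """Sum the time-area product over all timesteps and pixels.

    clear_by_step: {timestep: {healpix_number: set_of_camera_names}}
    Only pixels seen as clear by at least `min_cameras` cameras count.
    """
    n_pixel_steps = 0
    for pixels in clear_by_step.values():
        for cameras in pixels.values():
            if len(cameras) >= min_cameras:
                n_pixel_steps += 1
    return n_pixel_steps * PIXEL_AREA_KM2 * TIMESTEP_H


# Toy input: two timesteps, one pixel seen by two cameras in both.
toy = {
    "2015-10-01T14:00": {37089: {"cam_a", "cam_b"}, 37090: {"cam_a"}},
    "2015-10-01T14:15": {37089: {"cam_a", "cam_b"}},
}
total = time_area_km2_h(toy)
```

Counting pixel-timesteps first and multiplying once is valid because every HEALPix has the same area and every timestep has the same duration.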

4.2. Meteoroid flux density

To arrive at a debiased flux density, we select which events to include in the count using two filtering steps. First, we filter events per camera based on whether that camera was observing; we consider a camera to be observing when there are exactly 30 images for that 15 min timestep. Second, at network level, we only include fireballs that were observed through a window considered clear for the timestep during which the fireball happened. Finally, from the events that pass both filters, we select those attributable to the Southern Taurids.

For the period corresponding to the survey, the first fireball event is on 2015-10-01 14:00:00 and the last on 2015-12-31 18:30:00. The total number of events was 647; however, only 585 are ‘valid’ in the sense that they come from cameras that were observing according to the definition in Section 4.1, namely that they were observed during a 15 min timestep for which exactly 30 images were recorded. From the summary in Figure 1 in Supplementary Material, we observe no notable patterns in the rejection of events over the period.

For the second filtering step, as the trajectory of the fireball is known, we use the latitude and longitude corresponding to the beginning and the end of the bright flight and assign the fireball to the lowest-altitude HEALPix that was clear for that timestep and observable by more than one camera. Using that filter, we keep 141 fireballs and reject 56. In 115 observations the lowest HEALPix coincides with the 70 km altitude HEALPix, and in 26 it does not. This is expected, as can be seen from Figure 4, where the height of the shell is shown in comparison to the dataset.

From the radiants for the selected period (as already calculated by Devillepoix et al. Reference Devillepoix2021), we found 54 fireballs that are part of the Southern Taurids and 87 other fireballs; the Southern Taurid observations span 2015-10-30T12:04:38.626 to 2015-12-08T13:13:48.446. As described in that study, the meteoroid’s heliocentric orbit is determined by backtracking the meteoroid from the start of its luminous flight out of Earth’s gravitational influence to the Hill sphere, using Monte Carlo methods to estimate uncertainties.

The dynamic mass, assuming a spherical cross-section for the meteoroids using the calculation described by Gritsevich & Popelenskaya (Reference Gritsevich and Popelenskaya2008), is shown in Figure 4 in Supplementary Material. For consistency with prior DFN analyses (Sansom et al. Reference Sansom, Bland, Paxman and Towner2015), an ablation coefficient of $4\times10^{-8}$ kg/J is adopted. A complete description of the mass calculation can be found in Sansom et al. (Reference Sansom, Bland, Paxman and Towner2015).

By using the calculated observation area rate for the activity period of the Southern Taurids ($2.2658823 \times 10^{16} \; \mathrm{\frac{m^{2}}{yr}}$), we can arrive at the normalised influx rate given in Table 4.

We can then calculate the cumulative size-frequency distribution, which is presented in Figure 7 alongside the linear fit for spherical models under different density assumptions. The presence of a discontinuity around the 1 gram point has been documented previously by Halliday et al. (Reference Halliday, Griffin and Blackwell1996). This discontinuity represents a change in the distribution pattern, indicating that particles or fragments below and above this mass threshold may have different formation or fragmentation histories. The break suggests a physical or mechanical process that affects the population of particles differently on either side of 1 g, which could be due to changes in fragmentation dynamics, aerodynamic sorting, or sampling biases. In the case of the DFN, part of this discontinuity will be due to selection bias, because the network is less sensitive to smaller meteors.

Fit to cumulative mass-frequency distribution. This plot shows the cumulative size-frequency distribution (SFD) of meteoroids detected by the DFN, with a fitted power-law model. The presence of a break around 1 g is highlighted.

The above fit corresponds to $\log N = a M_i + b$ with:

1. $a = -0.13$ and $b = 3.87$ (mass index $s = 1.13$) for the first segment up to mass 1.42, and then

2. $a = -0.73$ and $b = 4.82$ (mass index $s = 1.73$) for the second segment.
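The quoted mass indices are consistent with the usual relation $s = 1 - a$ between the slope of a cumulative distribution and the differential mass index. The sketch below illustrates this with an ordinary least-squares line fit in pure Python. It assumes that the fit is linear in logarithmic mass, which the text does not state explicitly, and the function names are hypothetical. It is not the fitting code used for Figure 7.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = a * x + b; returns (a, b)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


def mass_index(a):
    """Differential mass index from the cumulative slope (s = 1 - a)."""
    return 1.0 - a


# Synthetic points lying exactly on the second segment quoted in the text.
log_mass = [0.5 + 0.1 * i for i in range(10)]
log_n = [-0.73 * m + 4.82 for m in log_mass]
a_fit, b_fit = fit_line(log_mass, log_n)
s_fit = mass_index(a_fit)
```

With real data, each segment would be fitted separately on either side of the break, and the scatter of the points would determine the uncertainty on $s$.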

5. Discussion

Compared with the CAMS results presented by Devillepoix et al. (Reference Devillepoix2021), our results appear to complement the picture for that stream well, extending to lower masses. In Figure 8, the result is plotted for three different assumed densities, namely 300, 500, and 600 $\mathrm{kg/m}^3$. The resulting plot seems to form a better continuity when using a $300\,\mathrm{kg/m}^3$ assumption. This is a reasonable assumption given the cometary origin of this stream (Devillepoix et al. Reference Devillepoix2021), as cometary meteoroids have been estimated to have densities around that range (Ceplecha Reference Ceplecha1988). This does not mean that this is the actual density of the meteoroids, as the calculation of the mass depends on multiple assumptions about shape and physical properties. Mass estimation in the CAMS data is done using the photometric method, whereas here we use the dynamic mass (Sansom et al. Reference Sansom, Bland, Paxman and Towner2015).

Comparison with CAMS results. This figure compares the DFN cumulative mass-frequency distribution with results from the CAMS survey, plotted for three different assumed meteoroid densities (300, 500, and $600\,\mathrm{kg/m^3}$). The comparison demonstrates the effect of density assumptions on mass estimates and highlights the continuity and differences between the two surveys’ results.

Given the differences in the calculation and debiasing methods of the two surveys, the data considered in this study cannot be used to estimate density conclusively; however, such an estimate is possible if additional data, such as the photometric mass or a simultaneous radar observation, were added.

The use of HEALPix for debiasing meteor network observations has proven highly effective at automating time-area coverage calculations across large datasets. By dividing the sky into equal area pixels and tracking clear sky conditions for each pixel over time, we can accurately measure the effective survey volume of the Desert Fireball Network.

The resulting time-area measurements provide a robust basis for calculating true meteoroid flux rates. By knowing exactly when and where the network had clear views of the sky from multiple cameras, we can properly normalise meteor counts and derive unbiased population statistics.

While developed for the DFN, this approach could be valuable for other meteor camera networks seeking to debias their observations and calculate accurate flux rates. The use of standardised HEALPix coordinates makes the results comparable between different surveys.

The dates that are used here are comparable with the period of high activity for the newly discovered branch of the Taurid meteor complex described by Spurný et al. (Reference Spurný, Boroviĉka and Mucke2017).

The fitted slope is somewhat unexpected on both segments. The first segment is unusually flat in comparison to Halliday et al. (Reference Halliday, Griffin and Blackwell1996), but that can be explained by observation bias. The masses recorded there are below one gram and the resulting light path is correspondingly short. As a consequence, both the optical limits and the automated detection limits described in Towner et al. (Reference Towner2020) lead to systematic bias.

Using HEALPix to debias meteor observations can be useful for other surveys, and we intend to release this functionality as a Python library for other users.

The method presented was also useful for adjusting the flux for biased streams in an automated way, by projecting the collecting area onto the plane perpendicular to the radiant. A future improvement would be to use a HEALPix grid centred on the apex of Earth’s orbital motion and to assign that HEALPix number to each observation as well. That way, any correction in terms of the radiant of a focused stream would be much simplified. It is likely a useful tool for visualising the shape of a cometary debris field as the Earth travels through it. Additionally, anisotropies in the influx can more readily be evaluated given the equal-area nature of HEALPix.
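The projection of the collecting area onto the plane perpendicular to the radiant can be illustrated with a short sketch. It assumes a locally flat collecting area and a radiant elevation measured from the local horizon, so that the projected area scales with the sine of the elevation. The text does not give the formula used, and the function name is hypothetical.

```python
import math


def projected_area(area, radiant_elevation_deg):
    """Collecting area projected perpendicular to the radiant direction.

    area: collecting area on the shell, in any consistent unit
    radiant_elevation_deg: radiant elevation above the local horizon

    A radiant at or below the horizon contributes no collecting area.
    """
    if radiant_elevation_deg <= 0.0:
        return 0.0
    return area * math.sin(math.radians(radiant_elevation_deg))
```

Applied per HEALPix and per timestep, this weighting would reduce the contribution of pixels for which the stream radiant is low on the horizon.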

Interestingly, even though there is insufficient data to estimate meteoroid density in this study, such an estimate is a clear possibility if we add data that can be made available. Specifically adding constraints on the mass by using photometric methods could provide sufficient explanatory power. Adding such information requires additional analysis and can form the basis of future work.

In summary, using equal-area pixels (HEALPix) with the origin at the geographic centre, coupled with logarithmic fitting of source flux distributions, provides a robust and automated method for calculating collection area across large datasets from multiple observatories. Additionally, using a shell centred on the apex of Earth’s motion enables more accurate correction for directional biases in meteoroid influx, facilitating improved flux normalization and inter-network comparisons.

6. Conclusions

This study leverages the HEALPix framework, an equal-area pixelization method, to accurately estimate the portion of the sky that is clear and effectively map the spatial distribution of meteors. HEALPix’s equal-area pixels facilitate uniform analysis and easy aggregation of observations from multiple cameras, significantly simplifying the process of calculating observed areas and times (Górski et al. Reference Górski, Hivon, Banday, Wandelt, Hansen, Reinecke and Bartelmann2005). This approach enables precise, unbiased assessments of meteor distributions and enhances our ability to characterise spatial-temporal variations in meteoroid activity, crucial for improving flux estimation and predictive models.

The Desert Fireball Network and Global Fireball Observatory programs have been funded by the Australian Research Council as part of the Australian Discovery Project scheme (DP170102529, DP200102073, DP230100301) and the Linkage Infrastructure, Equipment and Facilities scheme (LE170100106), and receive institutional support from Curtin University.

The overall aim to provide an automated way to estimate clear-sky conditions in a way that can be used in other multi-camera networks is successfully demonstrated by comparing the mass size-frequency distribution derived using the debiased influx, to other studies.

Supplementary material

The supplementary material for this article can be found at https://doi.org/10.1017/pasa.2026.10168

Acknowledgements

Parts of this work were developed during the employment of K. Servis by the Commonwealth Scientific and Industrial Research Organisation at Pawsey Supercomputing and Research Centre in Perth, Western Australia and Centre National de la Recherche Scientifique at Laboratoire d’Astrophysique de Marseille in Marseille, France.

This research made use of Astropy (Robitaille et al. Reference Robitaille2013), a community-developed core Python package for Astronomy.

Appendix A. Clear HEALPix estimation method

The aim of the DFN clear-sky survey is to provide a robust, automated estimate of the time and area surveyed during DFN observations (Bland & Artemieva Reference Bland and Artemieva2006), across millions of images.

We estimate the observed volume by measuring observations along a shell 70 km above mean sea level (MSL), following the clear-sky survey of Halliday et al. (Reference Halliday, Griffin and Blackwell1996), and by measuring how many cameras can view each part of the shell at any given time. The 70 km altitude was chosen so that the shell is representative of a boundary that we expect the light path to cross for all the objects of interest that will be included in the final count. An overview of where that shell lies is given in Figure 4, together with the initial and final heights of the observed fireballs and of those specifically belonging to the Southern Taurids.

So that the estimates of observations can be used between cameras and can be extrapolated at a larger scale, we used the Hierarchical Equal Area isoLatitude Pixelisation (HEALPix) framework developed by Górski et al. (Reference Górski, Hivon, Banday, Wandelt, Hansen, Reinecke and Bartelmann2005). We translated the WGS84 geographical coordinates of Earth at an altitude of 70 km to HEALPix elements with NSIDE 64, which divides the observed shell into equal-area regions of 10 606.58 km$^2$. Each HEALPix element was transformed into an image polygon at the time and location of each camera, and each point light source observed was assigned to the corresponding HEALPix element. By ordering the sources in terms of flux using a minimum of 10 sources, if the fluxes fit a logarithmic function, we consider that the origin is astronomical and the HEALPix element for that camera and time was declared ‘clear’ (see Figures 3 and 5).
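The quoted pixel area can be checked with a short calculation. HEALPix divides a sphere into $12\,N_{\mathrm{side}}^2$ equal-area pixels, so the area of one pixel on the shell is the area of the shell divided by that number. The sketch below assumes a spherical shell with a mean Earth radius of 6 371 km, a value not stated in the text. Under that assumption it reproduces the quoted figure to within rounding.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumed mean Earth radius (not given in the text)
SHELL_ALTITUDE_KM = 70.0  # shell altitude used in the survey
NSIDE = 64                # HEALPix resolution parameter used in the survey


def healpix_npix(nside):
    """Number of pixels in a HEALPix map: 12 * nside**2."""
    return 12 * nside * nside


def pixel_area_km2(nside, radius_km):
    """Area of one equal-area pixel on a sphere of the given radius."""
    return 4.0 * math.pi * radius_km ** 2 / healpix_npix(nside)


shell_radius_km = EARTH_RADIUS_KM + SHELL_ALTITUDE_KM
area_km2 = pixel_area_km2(NSIDE, shell_radius_km)
```

Because every pixel has the same area, time-area products reduce to counting clear pixel-timesteps and multiplying by this constant.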

Subsequently, the HEALPix elements for a given 15 min timestep were collected together to arrive at the total observation area for that time step (see Figure 6).