1 Introduction

Empirical research in the psychological, social, and health sciences often features data that contain both continuous and ordered categorical (ordinal) random variables. Examples of continuous variables are household income, blood pressure, or time spent doing an activity of interest, while examples of ordinal variables are responses to rating scales (measuring, e.g., personality traits, wellbeing, or overall health), relationship status (with categories, such as single, in a relationship, and married), or grouped measurements of continuous variables like one’s income group. The association between a continuous and an ordinal variable is typically modeled by means of polyserial correlation (Pearson, Reference Pearson1909). Polyserial correlation is a key building block in the analysis of mixed data, particularly structural equation models (SEMs). For instance, the popular R package lavaan (Rosseel, Reference Rosseel2012) for SEM analyses by default uses polyserial correlation for SEMs involving both continuous and ordinal variables.

The polyserial correlation model postulates the existence of a latent continuous variable that underlies and governs the observed ordinal variable through an unobserved discretization process. The correlation between the observed continuous variable and the latent variable is called polyserial correlation, whereas the correlation between the observed continuous and the observed ordinal variable is called point polyserial correlation, where the latter can be computed from the former. For identification, the polyserial correlation model assumes that the observed continuous variable and the latent continuous variable are jointly normally distributed, thereby making it a partially-latent normality model. Estimation is typically conducted by means of maximum likelihood (ML; Cox, Reference Cox1974; Olsson et al., Reference Olsson, Drasgow and Dorans1982). However, the validity of the partially-latent normality assumption is often questionable in practice (e.g., Barbiero, Reference Barbiero2025; Bedrick, Reference Bedrick1995; Demirtas & Hedeker, Reference Demirtas and Hedeker2016). Disquietingly, though, central statistical properties of polyserial correlation crucially depend on this very assumption to hold true: Violations of partially-latent normality have been shown to have potentially devastating effects on identification (e.g., Moss & Grønneberg, Reference Moss and Grønneberg2023) and ML estimation (e.g., Bedrick, Reference Bedrick1995) of polyserial correlation. Nonnormality of (partially) latent variables can also introduce serious biases in SEM analyses conducted with software that assumes such normality (e.g., Foldnes & Grønneberg, Reference Foldnes and Grønneberg2020, Reference Foldnes and Grønneberg2022), such as lavaan (Rosseel, Reference Rosseel2012), LISREL (Jöreskog & Sörbom, Reference Jöreskog and Sörbom1996), and Mplus (Muthén & Muthén, Reference Muthén and Muthén2026).

Motivated by the susceptibility of polyserial correlation to partially-latent nonnormality, the contributions of this article are twofold. First, we study estimation of the polyserial correlation model when a (possibly empty) subset of the observed data are of low-quality and therefore may have not been generated by a normal distribution, such as (but not limited to) outliers in the continuous variable and/or careless responses in the ordinal variable. Consequently, such observations are uninformative for estimating the polyserial correlation model. This situation is called partial model misspecification because the assumption of partially-normality might be violated for parts of the data, but is satisfied for the remaining data. We demonstrate that already one single uninformative observation can suffice for ML estimation of polyserial correlation to yield arbitrary results and, furthermore, that the accuracy of ML estimation is highly susceptible to even minor misspecification of partially-latent normality. Partial misspecification stems from classic literature on robust statistics (e.g., Huber & Ronchetti, Reference Huber and Ronchetti2009) where it is known as Huber contamination model, owing to Huber (Reference Huber1964).

Second, in wake of the non-robustness of ML estimation to uninformative observations generated by partially-latent nonnormality, we propose an alternative estimator that is designed to be robust against such partial misspecification. The proposed methodology applies density power divergence (DPD) estimation (Basu et al., Reference Basu, Harris, Hjort and Jones1998) to the polyserial correlation model and achieves robustness by implicitly downweighting observations that cannot be sufficiently well fitted by that model. The ensuing weights are a useful tool for pinpointing potential sources of (partial) model misspecification. As an additional methodological contribution, we devise a simple rescaling of the weights to ensure that they are contained in the unit interval. Overall, to the best of our knowledge, the proposed methodology is the first contamination-robust approach to polyserial correlation.

In line with the partial misspecification framework, the robust estimator allows the model to be misspecified for an unknown fraction of uninformative observations, but makes no assumption on how and where partial misspecification occurs (which may be absent altogether). Consequently, partial misspecification can manifest through an unlimited and unrestricted variety of ways, such as (but not limited to) outliers or careless responses. Conversely, if the polyserial correlation model is correctly specified for all observations in a sample, then the robust estimator is, just like ML, consistent for the true parameter vector, and, therefore, generalizes ML estimation.

Studying their respective behavior under model misspecification, we show that both ML and the robust estimator still converge in probability, but to different limits. Crucially, the robust estimator converges to a parameter vector that is closer to the true parameter vector than ML, thereby gaining its robustness. Moreover, the robust estimator and ML remain asymptotically normally distributed, allowing for statistical inference both under correct and incorrect specification of the polyserial correlation model.

In robust statistics, there is a well-established fundamental tension between robustness and efficiency for estimation procedures for data involving continuous variables (e.g., Hampel et al., Reference Hampel, Ronchetti, Rousseeuw and Stahel1986; Huber & Ronchetti, Reference Huber and Ronchetti2009). Our estimator is no exception: The price to pay for its enhanced robustness manifests in the form of diminished efficiency compared to ML. However, we show that sacrificing as little as 2% of efficiency suffices to obtain a substantial gain in robustness, so the efficiency loss is only comparatively minor. An additional price of our robust estimator is increased computational intensity. Nevertheless, using our implementation, the robust estimator usually executes in less than 2 seconds on a regular laptop, so the overall computational burden should remain small in practical applications. This implementation of our proposed methodology is publicly and freely available as part of the R package robcat (for “ROBust CATegorical data analysis”; Welz et al., Reference Welz, Alfons and Mair2026a) on CRAN (the Comprehensive R Archive Network) at https://CRAN.R-project.org/package=robcat.

This article is organized as follows. Section 2 reviews related literature, while Section 3 summarizes the polyserial correlation model and its ML estimation. Section 4 describes the partial misspecification framework adopted in this article. Section 5 introduces our robust estimator, and Section 6 derives its theoretical and computational properties. Section 7 carries out simulation studies to compare the performance of the robust estimator to ML in a variety of settings. Section 8 provides an empirical application on data from personality psychology. Section 9 discusses and concludes.

2 Literature

Potential violations of the normality assumption that underlies the polyserial correlation model have been studied in previous literature, though the misspecification framework used therein is fundamentally different from the partial misspecification framework adopted in this article. Specifically, previous literature focuses on distributional misspecification, where the polyserial correlation model is misspecified (usually through nonnormality) for the entire observed sample. In contrast, in partial misspecification, the model is only misspecified for a (possibly empty) subset of the sample. We explain the differences between partial and distributional misspecification in more detail in Section 4.2.

Focusing on distributional misspecification. Bedrick (Reference Bedrick1995) shows that the accuracy of normality-based ML estimation of polyserial correlation crucially depends on whether or not the marginal distribution of the latent variable that underlies the observed ordinal variable is normal. If that distribution is not normal, but, for instance, an exponential or t-distribution, ML estimates of polyserial correlation may be attenuated (Brogden, Reference Brogden1949; Kraemer, Reference Kraemer1981; Lord, Reference Lord1963). For a dichotomous ordinal variable, Demirtas and Vardar-Acar (Reference Demirtas, Vardar-Acar, Chen and Chen2017) and Demirtas and Hedeker (Reference Demirtas and Hedeker2016) devise an algorithm that relates polyserial correlation to point polyserial correlation when the underlying joint distribution (which they assume to be known) is not bivariate normal. For a given potentially nonnormal joint distribution and a dichotomous ordinal variable, Cheng and Liu (Reference Cheng and Liu2016) derive a general expression for the maximum point polyserial coefficient (in population). Barbiero (Reference Barbiero2025) generalizes the results of Cheng and Liu (Reference Cheng and Liu2016) to a polytomous ordinal variable.

Moss and Grønneberg (Reference Moss and Grønneberg2023, Section 2) and Grønneberg et al. (Reference Grønneberg, Moss and Foldnes2020, Section 2.5) use partial identification analyses to study distributional misspecification of the polyserial model from a theoretical perspective. Specifically, given known marginal distributions of the observed continuous and latent continuous variables but keeping their joint distribution unspecified, they derive partial identification sets for the partially-latent correlation. They show that the partial identification sets tend to be uninformatively wide. Consequently, polyserial correlation is very sensitive to partially-latent joint nonnormality because different nonnormal distributions can yield widely different correlations. To reduce the width of the partial identification sets, one must make restrictive assumptions on the partially-latent joint distribution, such as Gaussian-like characteristics or equipping it with a parametric structure.

In a broader context, violations of normality assumptions have been studied extensively in the psychometric literature, especially with respect to the distribution of latent variables (e.g., Asparouhov & Muthén, Reference Asparouhov and Muthén2016; Lyhagen & Ornstein, Reference Lyhagen and Ornstein2023; Monroe, Reference Monroe2018; Moss & Grønneberg, Reference Moss and Grønneberg2023; Roscino & Pollice, Reference Roscino, Pollice, Zani, Cerioli, Riani and Vichi2006; Yuan et al., Reference Yuan, Bentler and Chan2004, and the references therein). A particular focus of recent literature has been the polychoric correlation model of Pearson and Pearson (Reference Pearson and Pearson1922), which models through a latent bivariate normality distribution the association of two latent variables that govern two observed ordinal variables (see Olsson, Reference Olsson1979 for a modern exposition). Foldnes and Grønneberg (Reference Grønneberg, Moss and Foldnes2020) and Jin and Yang-Wallentin (Reference Jin and Yang-Wallentin2017) show that ML estimation of polychoric correlation is highly susceptible to distributional misspecification, leading to possibly large biases in SEM analyses based on polychoric correlation (Foldnes & Grønneberg, Reference Foldnes and Grønneberg2022; Grønneberg & Foldnes, Reference Grønneberg and Foldnes2022).Footnote 1 Welz et al. (Reference Welz, Mair and Alfons2026b) are concerned with partial misspecification of the polychoric correlation model, where latent normality is violated due to a (possibly empty) subset of data points that were generated by an unspecified and unknown nonnormal process. We use the same (partial) misspecification framework in this article. Welz et al. (Reference Welz, Mair and Alfons2026b) show that having in a sample already about 5% of such observations, commonly referred to as contamination, can suffice for a substantial estimation bias. Contamination might arise due to, for instance but not limited to, careless responding to polytomous items, which has been identified as a major threat to the validity of psychometric analyses (e.g., Alfons & Welz, Reference Alfons and Welz2024; Arias et al., Reference Arias, Garrido, Jenaro, Martinez-Molina and Arias2020; Bowling et al., Reference Bowling, Huang, Bragg, Khazon, Liu and Blackmore2016; Huang et al., Reference Huang, Liu and Bowling2015; Meade & Craig, Reference Meade and Craig2012; Ward & Meade, Reference Ward and Meade2023, and references therein). As a remedy, Welz et al. (Reference Welz, Mair and Alfons2026b) propose a fully efficient contamination-robust estimator of the polychoric correlation model that makes no assumptions on the prevalence and type of contamination (which is possibly absent altogether). Their estimator exploits the theory of C-estimation (Welz, Reference Welz2024), being a general framework of robust estimation with categorical data.

In the context of item response theory (IRT), Itaya and Hayashi (Reference Itaya and Hayashi2025) focus on the seminal model by Rasch (Reference Rasch1960), whose estimation may be compromised by contamination due to, for instance, careless responding or random guessing. They therefore robustify marginal ML estimation thereof by using a minimum DPD estimator (Basu et al., Reference Basu, Harris, Hjort and Jones1998) and design a majorization–minimization algorithm for this purpose. In this article, we also utilize DPD estimation theory to robustify the (joint) ML estimation of the polyserial correlation model.

3 Polyserial correlation

This section defines (point) polyserial correlation and then reviews ML estimation thereof.

3.1 The polyserial correlation model

Suppose we observe a continuous real-valued random variable X with unknown population mean

![]() $\mu = \mu _X \in \mathbb {R}$

and unknown population variance

$\mu = \mu _X \in \mathbb {R}$

and unknown population variance

![]() $\sigma ^2 = \sigma ^2_X> 0$

. In addition, suppose that we also observe a polytomous ordinal random variable Y that takes values in some finite set

$\sigma ^2 = \sigma ^2_X> 0$

. In addition, suppose that we also observe a polytomous ordinal random variable Y that takes values in some finite set

![]() $\mathcal {Y}$

of known cardinality r. Without loss of generality, we assume throughout this article that

$\mathcal {Y}$

of known cardinality r. Without loss of generality, we assume throughout this article that

![]() $\mathcal {Y} = \{1,2,\dots , r\}$

. Further assume that there exists a latent continuous random variable

$\mathcal {Y} = \{1,2,\dots , r\}$

. Further assume that there exists a latent continuous random variable

![]() $\eta $

governing the ordinal variable Y through the unobserved discretization process

$\eta $

governing the ordinal variable Y through the unobserved discretization process

$$ \begin{align} Y = \begin{cases} 1 & \mathrm{ if }\ \eta < \tau_1, \\2 & \mathrm{ if }\ \tau_1 \leq \eta < \tau_2,\\3 & \mathrm{ if }\ \tau_2 \leq \eta < \tau_3,\\\vdots & \\r & \mathrm{ if }\ \tau_{r-1} \leq \eta, \end{cases} \end{align} $$

$$ \begin{align} Y = \begin{cases} 1 & \mathrm{ if }\ \eta < \tau_1, \\2 & \mathrm{ if }\ \tau_1 \leq \eta < \tau_2,\\3 & \mathrm{ if }\ \tau_2 \leq \eta < \tau_3,\\\vdots & \\r & \mathrm{ if }\ \tau_{r-1} \leq \eta, \end{cases} \end{align} $$

where

![]() $-\infty < \tau _1 < \tau _2 < \cdots < \tau _{r-1} < +\infty $

are fixed but unknown threshold parameters. In practice, Y often denotes the responses to a Likert-type rating item with r response categories.

$-\infty < \tau _1 < \tau _2 < \cdots < \tau _{r-1} < +\infty $

are fixed but unknown threshold parameters. In practice, Y often denotes the responses to a Likert-type rating item with r response categories.

The primary object of interest is the fixed but unknown population correlation between the observed X and the latent

![]() $\eta $

, denoted by

$\eta $

, denoted by

To identify the correlation coefficient

![]() $\rho $

, one usually assumes that X and

$\rho $

, one usually assumes that X and

![]() $\eta $

are jointly normally distributed according to

$\eta $

are jointly normally distributed according to

$$ \begin{align} \begin{pmatrix} X \\ \eta \end{pmatrix} \sim \mathrm{N}_2 \left( \begin{pmatrix} \mu \\ 0 \end{pmatrix} , \begin{pmatrix} \sigma^2 & \rho\sigma \\ \rho\sigma & 1 \end{pmatrix} \right). \end{align} $$

$$ \begin{align} \begin{pmatrix} X \\ \eta \end{pmatrix} \sim \mathrm{N}_2 \left( \begin{pmatrix} \mu \\ 0 \end{pmatrix} , \begin{pmatrix} \sigma^2 & \rho\sigma \\ \rho\sigma & 1 \end{pmatrix} \right). \end{align} $$

The bivariate normality model (3.2) implies the marginal normality properties

![]() $X\sim \mathrm {N}(\mu , \sigma ^2)$

and

$X\sim \mathrm {N}(\mu , \sigma ^2)$

and

![]() $\eta \sim \mathrm {N}(0,1)$

. It also identifies the correlation coefficient

$\eta \sim \mathrm {N}(0,1)$

. It also identifies the correlation coefficient

![]() $\rho \in (-1,1)$

through the familiar identity

$\rho \in (-1,1)$

through the familiar identity

![]() $\mathbb {C}\mathrm {or} \left [X,\ \eta \right ] = \mathbb {C}\mathrm {ov} \left [X,\ \eta \right ]\big /\sqrt {\mathbb {V}\mathrm {ar} \left [ X \right ]\mathbb {V}\mathrm {ar} \left [ \eta \right ]} = \rho $

. Note that since the variable

$\mathbb {C}\mathrm {or} \left [X,\ \eta \right ] = \mathbb {C}\mathrm {ov} \left [X,\ \eta \right ]\big /\sqrt {\mathbb {V}\mathrm {ar} \left [ X \right ]\mathbb {V}\mathrm {ar} \left [ \eta \right ]} = \rho $

. Note that since the variable

![]() $\eta $

is unobserved, its population mean and variance are not jointly identifiable, which is why they are fixed to 0 and 1, respectively.

$\eta $

is unobserved, its population mean and variance are not jointly identifiable, which is why they are fixed to 0 and 1, respectively.

Combining the discretization model (3.1) with the bivariate normality model (3.2) yields the polyserial correlation model (Pearson, Reference Pearson1913), or, in short, polyserial model. In this model, the correlation parameter

![]() $\rho = \mathbb {C}\mathrm {or} \left [X,\ \eta \right ]$

is referred to as the polyserial correlation coefficient. If Y is dichotomous, the polyserial model reduces to the biserial correlation model of Pearson (Reference Pearson1909). The theoretical properties of biserial correlation are studied by Tate (Reference Tate1955a, Reference Tate1955b) as well as Jaspen (Reference Jaspen1946), and those of polyserial correlation by Olsson et al. (Reference Olsson, Drasgow and Dorans1982).

$\rho = \mathbb {C}\mathrm {or} \left [X,\ \eta \right ]$

is referred to as the polyserial correlation coefficient. If Y is dichotomous, the polyserial model reduces to the biserial correlation model of Pearson (Reference Pearson1909). The theoretical properties of biserial correlation are studied by Tate (Reference Tate1955a, Reference Tate1955b) as well as Jaspen (Reference Jaspen1946), and those of polyserial correlation by Olsson et al. (Reference Olsson, Drasgow and Dorans1982).

The polyserial model is subject to

![]() $d = r + 2$

parameters, namely, the mean and variance parameters

$d = r + 2$

parameters, namely, the mean and variance parameters

![]() $(\mu , \sigma ^2)$

of the observed X, the polyserial correlation coefficient

$(\mu , \sigma ^2)$

of the observed X, the polyserial correlation coefficient

![]() $\rho $

from the normality model (3.2), as well as the

$\rho $

from the normality model (3.2), as well as the

![]() $r-1$

thresholds from the discretization process (3.1) of the latent

$r-1$

thresholds from the discretization process (3.1) of the latent

![]() $\eta $

. These parameters are jointly collected in a d-dimensional parameter vector

$\eta $

. These parameters are jointly collected in a d-dimensional parameter vector

where the vector

![]() $\boldsymbol {\tau } = (\tau _1,\dots , \tau _{r-1})^\top $

contains the

$\boldsymbol {\tau } = (\tau _1,\dots , \tau _{r-1})^\top $

contains the

![]() $r-1$

thresholds.

$r-1$

thresholds.

Under the polyserial model evaluated at a parameter vector

![]() $\boldsymbol {\theta }\in \boldsymbol {\Theta }$

, the density of the observed-latent pair

$\boldsymbol {\theta }\in \boldsymbol {\Theta }$

, the density of the observed-latent pair

![]() $(X,\eta )$

of continuous variables is given by

$(X,\eta )$

of continuous variables is given by

$$\begin{align*}{p}_{X\eta}^{}\left(x,v; \boldsymbol{\theta}\right) = \phi_2 \left( \begin{pmatrix} x \\ v \end{pmatrix}; \begin{pmatrix} \mu \\ 0 \end{pmatrix} , \begin{pmatrix} \sigma^2 & \rho\sigma \\ \rho\sigma & 1 \end{pmatrix} \right), \qquad x,v\in\mathbb{R}, \end{align*}$$

$$\begin{align*}{p}_{X\eta}^{}\left(x,v; \boldsymbol{\theta}\right) = \phi_2 \left( \begin{pmatrix} x \\ v \end{pmatrix}; \begin{pmatrix} \mu \\ 0 \end{pmatrix} , \begin{pmatrix} \sigma^2 & \rho\sigma \\ \rho\sigma & 1 \end{pmatrix} \right), \qquad x,v\in\mathbb{R}, \end{align*}$$

where

![]() $\phi _2(\cdot; \boldsymbol {m}, \boldsymbol {S})$

is the density of the bivariate normal distribution with population mean

$\phi _2(\cdot; \boldsymbol {m}, \boldsymbol {S})$

is the density of the bivariate normal distribution with population mean

![]() $\boldsymbol {m}$

and covariance matrix

$\boldsymbol {m}$

and covariance matrix

![]() $\boldsymbol {S}$

. Further, denote by

$\boldsymbol {S}$

. Further, denote by

![]() ${P}_{X\eta }^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

the distribution function corresponding to the normal density

${P}_{X\eta }^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

the distribution function corresponding to the normal density

![]() ${p}_{X\eta }^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

.

${p}_{X\eta }^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

.

For the observed variables

![]() $(X,Y)$

, the joint density at a realization

$(X,Y)$

, the joint density at a realization

![]() $x\in \mathbb {R}$

and a response

$x\in \mathbb {R}$

and a response

![]() $y\in \mathcal {Y} = \{1,\dots , r\}$

of the ordinal Y under the polyserial model at parameter

$y\in \mathcal {Y} = \{1,\dots , r\}$

of the ordinal Y under the polyserial model at parameter

![]() $\boldsymbol {\theta }$

reads

$\boldsymbol {\theta }$

reads

where we adopt the conventions

![]() $\tau _0 = -\infty $

and

$\tau _0 = -\infty $

and

![]() $\tau _r = +\infty $

.Footnote

2

The joint distribution function of the observed

$\tau _r = +\infty $

.Footnote

2

The joint distribution function of the observed

![]() $(X,Y)$

under the polyserial model can now be expressed as

$(X,Y)$

under the polyserial model can now be expressed as

where the third equality follows from (3.3) in conjunction with the linearity of the integral operator. As such,

![]() ${P}_{XY}^{}\left (x,y; \boldsymbol {\theta }\right )$

is the distribution function associated with density

${P}_{XY}^{}\left (x,y; \boldsymbol {\theta }\right )$

is the distribution function associated with density

![]() ${p}_{XY}^{}\left (x,y; \boldsymbol {\theta }\right )$

, so we refer to it as the polyserial model distribution.

${p}_{XY}^{}\left (x,y; \boldsymbol {\theta }\right )$

, so we refer to it as the polyserial model distribution.

Our expressions for the polyserial model distribution and density are different but equivalent to the more commonly used expressions in Olsson et al. (Reference Olsson, Drasgow and Dorans1982), which are provided in Section A of the Supplementary Material.

3.2 Point polyserial correlation

In addition to the correlation between the observed X and the latent

![]() $\eta $

, one might also be interested in the correlation between X and the observed ordinal Y. The correlation between the observed X and Y is known as point polyserial correlation. In order to identify the desired point polyserial correlation

$\eta $

, one might also be interested in the correlation between X and the observed ordinal Y. The correlation between the observed X and Y is known as point polyserial correlation. In order to identify the desired point polyserial correlation

![]() $\mathbb {C}\mathrm {or} \left [X,\ Y\right ]$

, one needs to assign a numerical interpretation to the r answer categories of Y, that is, introduce a scoring system. Given a scoring system, the point polyserial correlation coefficient

$\mathbb {C}\mathrm {or} \left [X,\ Y\right ]$

, one needs to assign a numerical interpretation to the r answer categories of Y, that is, introduce a scoring system. Given a scoring system, the point polyserial correlation coefficient

![]() $\widetilde {\rho } = \mathbb {C}\mathrm {or} \left [X,\ Y\right ]$

is identified by the polyserial model and can be estimated by using estimates of the polyserial model parameters

$\widetilde {\rho } = \mathbb {C}\mathrm {or} \left [X,\ Y\right ]$

is identified by the polyserial model and can be estimated by using estimates of the polyserial model parameters

![]() $\boldsymbol {\theta }$

. We provide details in Section A of the Supplementary Material.

$\boldsymbol {\theta }$

. We provide details in Section A of the Supplementary Material.

3.3 Maximum likelihood estimation

Suppose we observe a sample

![]() $\{(X_i, Y_i)\}_{i=1}^N$

of N independent copies of

$\{(X_i, Y_i)\}_{i=1}^N$

of N independent copies of

![]() $(X,Y)$

generated by the polyserial model at some true parameter vector

$(X,Y)$

generated by the polyserial model at some true parameter vector

![]() $\boldsymbol {\theta }_{\ast} = \left (\rho _{\ast}, \mu _{\ast}, \sigma ^2_{\ast}, \boldsymbol {\tau }_{\ast}^\top \right )^\top $

. The statistical problem is to estimate the true

$\boldsymbol {\theta }_{\ast} = \left (\rho _{\ast}, \mu _{\ast}, \sigma ^2_{\ast}, \boldsymbol {\tau }_{\ast}^\top \right )^\top $

. The statistical problem is to estimate the true

![]() $\boldsymbol {\theta }_{\ast}$

from the observed sample, which is traditionally achieved by the ML estimator proposed by Cox (Reference Cox1974) and Olsson et al. (Reference Olsson, Drasgow and Dorans1982).

$\boldsymbol {\theta }_{\ast}$

from the observed sample, which is traditionally achieved by the ML estimator proposed by Cox (Reference Cox1974) and Olsson et al. (Reference Olsson, Drasgow and Dorans1982).

The ML estimator (MLE) of

![]() $\boldsymbol {\theta }_{\ast}$

is defined as the log-likelihood maximizer

$\boldsymbol {\theta }_{\ast}$

is defined as the log-likelihood maximizer

$$ \begin{align} \widehat{\boldsymbol{\theta}}_N^{\mathrm{\ MLE}} = \arg\max_{\boldsymbol{\theta}\in\boldsymbol{\Theta}} \left\{\sum_{i=1}^N \log\big( {p}_{XY}^{}\left(X_i, Y_i; \boldsymbol{\theta}\right) \big)\right\}, \end{align} $$

$$ \begin{align} \widehat{\boldsymbol{\theta}}_N^{\mathrm{\ MLE}} = \arg\max_{\boldsymbol{\theta}\in\boldsymbol{\Theta}} \left\{\sum_{i=1}^N \log\big( {p}_{XY}^{}\left(X_i, Y_i; \boldsymbol{\theta}\right) \big)\right\}, \end{align} $$

where the parameter space

is the set of legal parameters

![]() $\boldsymbol {\theta }$

the MLE maximizes over. As such,

$\boldsymbol {\theta }$

the MLE maximizes over. As such,

![]() $\boldsymbol {\Theta }$

rules out degenerate cases, such as

$\boldsymbol {\Theta }$

rules out degenerate cases, such as

![]() $\rho = \pm 1$

, non-positive standard deviations (SDs), or non-monotonic thresholds. Assuming that the polyserial model is correctly specified for the data at hand, Cox (Reference Cox1974) and Olsson et al. (Reference Olsson, Drasgow and Dorans1982) show that the MLE is consistent for the true

$\rho = \pm 1$

, non-positive standard deviations (SDs), or non-monotonic thresholds. Assuming that the polyserial model is correctly specified for the data at hand, Cox (Reference Cox1974) and Olsson et al. (Reference Olsson, Drasgow and Dorans1982) show that the MLE is consistent for the true

![]() $\boldsymbol {\theta }_{\ast}$

, asymptotically normally distributed, and fully efficient.

$\boldsymbol {\theta }_{\ast}$

, asymptotically normally distributed, and fully efficient.

As a computationally attractive alternative to ML estimation, Olsson et al. (Reference Olsson, Drasgow and Dorans1982) propose a two-step (TS) estimation procedure. In the first step, one computes as estimators of

![]() $\mu $

,

$\mu $

,

![]() $\sigma ^2$

, and

$\sigma ^2$

, and

![]() $\tau _k,k=1,\dots ,r-1,$

the sample statistics

$\tau _k,k=1,\dots ,r-1,$

the sample statistics

$$ \begin{align} \widehat{\mu}_{\textrm{TS}} = \frac{1}{N}\sum_{i=1}^N X_i, \quad \widehat{\sigma}^2_{\textrm{TS}} = \frac{1}{N-1} \sum_{i=1}^N \left(X_i - \widehat{\mu}_{\textrm{TS}}\right)^2, \quad \widehat{\tau}_{k,\textrm{TS}} = \Phi^{-1}\left(\frac{1}{N}\sum_{j=1}^k N_{Y,j} \right), \end{align} $$

$$ \begin{align} \widehat{\mu}_{\textrm{TS}} = \frac{1}{N}\sum_{i=1}^N X_i, \quad \widehat{\sigma}^2_{\textrm{TS}} = \frac{1}{N-1} \sum_{i=1}^N \left(X_i - \widehat{\mu}_{\textrm{TS}}\right)^2, \quad \widehat{\tau}_{k,\textrm{TS}} = \Phi^{-1}\left(\frac{1}{N}\sum_{j=1}^k N_{Y,j} \right), \end{align} $$

respectively, where ![]() denotes the empirical marginal frequency of the j-th response option of Y. In the second step, one substitutes for these sample statistics in the log-likelihood in (3.5) and maximizes the ensuing log-likelihood with respect to the remaining parameter,

denotes the empirical marginal frequency of the j-th response option of Y. In the second step, one substitutes for these sample statistics in the log-likelihood in (3.5) and maximizes the ensuing log-likelihood with respect to the remaining parameter,

![]() $\rho $

, via conditional ML (conditional on the sample statistics). The main advantage of the TS approach is reduced computing time because one needs to numerically solve the maximization problem (3.5) only with respect to one parameter,

$\rho $

, via conditional ML (conditional on the sample statistics). The main advantage of the TS approach is reduced computing time because one needs to numerically solve the maximization problem (3.5) only with respect to one parameter,

![]() $\rho $

, rather than all d parameters in

$\rho $

, rather than all d parameters in

![]() $\boldsymbol {\theta }$

. A drawback is diminished efficiency as compared to (joint) ML, where all d parameters are estimated simultaneously. By means of simulation experiments, Olsson et al. (Reference Olsson, Drasgow and Dorans1982) find that if the polyserial model is correctly specified, ML and the TS estimator produce similar results, with differences decreasing with an increasing number of response options for Y. The TS approach is the default estimator for polyserial correlation in the SEM software package lavaan (Rosseel, Reference Rosseel2012).

$\boldsymbol {\theta }$

. A drawback is diminished efficiency as compared to (joint) ML, where all d parameters are estimated simultaneously. By means of simulation experiments, Olsson et al. (Reference Olsson, Drasgow and Dorans1982) find that if the polyserial model is correctly specified, ML and the TS estimator produce similar results, with differences decreasing with an increasing number of response options for Y. The TS approach is the default estimator for polyserial correlation in the SEM software package lavaan (Rosseel, Reference Rosseel2012).

While it is in principle possible to robustify the TS approach against contamination by using the same estimation theory as in this article, doing so would result in substantial theoretical and computational drawbacks. We discuss this in detail in Section C.4 of the Supplementary Material.

Alternative estimators of polyserial correlation have been proposed by Bedrick and Breslin (Reference Bedrick and Breslin1996), Lord (Reference Lord1963), and Brogden (Reference Brogden1949), with Bedrick (Reference Bedrick1990, Reference Bedrick1992), Koopman (Reference Koopman1983) and Kraemer (Reference Kraemer1981) studying the theoretical properties of the latter two. We discuss these approaches in more detail in Section 4.2, after conceptualizing misspecification of the polyserial model.

4 Model misspecification

In order to study the effects of partial model misspecification, we first define this concept and then explain how it differs from distributional misspecification.

4.1 Partial misspecification of the polyserial model

The polyserial model is misspecified if at least one observation for the continuous-ordinal variable pair

![]() $(X,Y)$

has not been generated by the bivariate partially-latent normality model in (3.2). Akin to Welz et al. (Reference Welz, Mair and Alfons2026b), we consider a partial misspecification framework where only a fraction

$(X,Y)$

has not been generated by the bivariate partially-latent normality model in (3.2). Akin to Welz et al. (Reference Welz, Mair and Alfons2026b), we consider a partial misspecification framework where only a fraction

![]() $(1-\varepsilon )$

of observations in a given sample are generated by an underlying normal distribution

$(1-\varepsilon )$

of observations in a given sample are generated by an underlying normal distribution

![]() $P_{X\eta }$

with true parameter

$P_{X\eta }$

with true parameter

![]() $\boldsymbol {\theta }_{\ast}$

, whereas a fixed but unknown fraction

$\boldsymbol {\theta }_{\ast}$

, whereas a fixed but unknown fraction

![]() $\varepsilon $

of observations are generated from some different but unspecified underlying distribution

$\varepsilon $

of observations are generated from some different but unspecified underlying distribution

![]() $H_{X\eta }$

.Footnote

3

Since

$H_{X\eta }$

.Footnote

3

Since

![]() $H_{X\eta }$

is unspecified, its correlation structure may differ from

$H_{X\eta }$

is unspecified, its correlation structure may differ from

![]() $P_{X\eta }$

so that observations

$P_{X\eta }$

so that observations

![]() $(X,Y)$

generated by the underlying

$(X,Y)$

generated by the underlying

![]() $H_{X\eta }$

(after discretization) may be uninformative for the true polyserial correlation coefficient.

$H_{X\eta }$

(after discretization) may be uninformative for the true polyserial correlation coefficient.

Formally, the polyserial model is said to be partially misspecified if the unknown sampling distribution of the observed-latent variable pair

![]() $(X,\eta )$

is given by

$(X,\eta )$

is given by

for

![]() $x,v\in \mathbb {R}$

. Correspondingly, the implied unknown sampling distribution of the observed variables

$x,v\in \mathbb {R}$

. Correspondingly, the implied unknown sampling distribution of the observed variables

![]() $(X,Y)$

reads

$(X,Y)$

reads

for

![]() $x\in \mathbb {R}, y\in \mathcal {Y}$

, where

$x\in \mathbb {R}, y\in \mathcal {Y}$

, where

is the distribution of the observations for which the polyserial model is (partially) misspecified. The unknown and unspecified discretization thresholds

![]() $-\infty = \tau _{\varepsilon ,0} < \tau _{\varepsilon ,1}<\dots < \tau _{\varepsilon ,r-1}< \tau _{\varepsilon ,r} = +\infty $

need not equal the true discretization thresholds

$-\infty = \tau _{\varepsilon ,0} < \tau _{\varepsilon ,1}<\dots < \tau _{\varepsilon ,r-1}< \tau _{\varepsilon ,r} = +\infty $

need not equal the true discretization thresholds

![]() $\tau _{{\ast},1} < \dots < \tau _{{\ast},r-1}$

of the polyserial model. Furthermore, denote by

$\tau _{{\ast},1} < \dots < \tau _{{\ast},r-1}$

of the polyserial model. Furthermore, denote by

![]() $f_{\varepsilon ,XY}$

the unknown density corresponding to the sampling distribution

$f_{\varepsilon ,XY}$

the unknown density corresponding to the sampling distribution

![]() $F_{\varepsilon ,XY}$

.

$F_{\varepsilon ,XY}$

.

Misspecification models of the type in (4.1) are standard in the robust statistics literature, where they are known as Huber contamination models, owing to pioneering work of Huber (Reference Huber1964). Following Welz et al. (Reference Welz, Mair and Alfons2026b), we therefore adopt terminology from robust statistics and call

![]() $\varepsilon $

the contamination fraction, the uninformative

$\varepsilon $

the contamination fraction, the uninformative

![]() $H_{X\eta }$

the contamination distribution (or simply contamination), and

$H_{X\eta }$

the contamination distribution (or simply contamination), and

![]() $F_{\varepsilon ,X\eta }$

the contaminated distribution. In the case of a zero-valued contamination fraction (

$F_{\varepsilon ,X\eta }$

the contaminated distribution. In the case of a zero-valued contamination fraction (

![]() $\varepsilon = 0$

), there is no misspecification so that the polyserial model is correctly specified. Indeed, if

$\varepsilon = 0$

), there is no misspecification so that the polyserial model is correctly specified. Indeed, if

![]() $\varepsilon = 0$

, then

$\varepsilon = 0$

, then

![]() $F_{0,X\eta }(\cdot ,\cdot ) = {P}_{X\eta }^{}\left (\cdot ,\cdot; \boldsymbol {\theta }_{\ast}\right )$

and

$F_{0,X\eta }(\cdot ,\cdot ) = {P}_{X\eta }^{}\left (\cdot ,\cdot; \boldsymbol {\theta }_{\ast}\right )$

and

![]() $F_{0,XY}(\cdot ,\cdot ) = {P}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }_{\ast}\right )$

, so the partial misspecification framework in (4.1) nests the correctly specified polyserial model.

$F_{0,XY}(\cdot ,\cdot ) = {P}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }_{\ast}\right )$

, so the partial misspecification framework in (4.1) nests the correctly specified polyserial model.

Neither the contamination fraction

![]() $\varepsilon $

nor the contamination distribution

$\varepsilon $

nor the contamination distribution

![]() $H_{X\eta }$

in (4.1) is assumed to be known. Thus, both quantities are left completely unspecified in practice, which “means that we are not making any assumptions on the degree, magnitude, or type of contamination (which is possibly absent altogether)” (Welz et al., Reference Welz, Mair and Alfons2026b). Hence, the polyserial model may be misspecified due to an unlimited variety of reasons, for instance, but not limited to outliers or nonnormality in the continuous X, and/or careless responding or item misunderstanding in the ordinal Y. Because

$H_{X\eta }$

in (4.1) is assumed to be known. Thus, both quantities are left completely unspecified in practice, which “means that we are not making any assumptions on the degree, magnitude, or type of contamination (which is possibly absent altogether)” (Welz et al., Reference Welz, Mair and Alfons2026b). Hence, the polyserial model may be misspecified due to an unlimited variety of reasons, for instance, but not limited to outliers or nonnormality in the continuous X, and/or careless responding or item misunderstanding in the ordinal Y. Because

![]() $\varepsilon $

and

$\varepsilon $

and

![]() $H_{X\eta }$

are unspecified in practice, the polyserial model distribution

$H_{X\eta }$

are unspecified in practice, the polyserial model distribution

![]() ${P}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }_{\ast}\right )$

remains the distribution of interest: Our only aim is to estimate the parameter

${P}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }_{\ast}\right )$

remains the distribution of interest: Our only aim is to estimate the parameter

![]() $\boldsymbol {\theta }_{\ast}$

of the polyserial model while reducing the impact of potential contamination in the observed data. Consequently, the contaminated distributions

$\boldsymbol {\theta }_{\ast}$

of the polyserial model while reducing the impact of potential contamination in the observed data. Consequently, the contaminated distributions

![]() $F_{\varepsilon ,XY}$

and

$F_{\varepsilon ,XY}$

and

![]() $F_{\varepsilon ,X\eta }$

are never estimated. They are purely theoretical objects to study the properties of estimators of the polyserial model when that model is partially misspecified due to data contamination.

$F_{\varepsilon ,X\eta }$

are never estimated. They are purely theoretical objects to study the properties of estimators of the polyserial model when that model is partially misspecified due to data contamination.

While no assumption is made on the specific value of the contamination fraction

![]() $\varepsilon $

, we impose the restriction

$\varepsilon $

, we impose the restriction

![]() $\varepsilon \in [0,0.5)$

, which is common in robust statistics (e.g., Hampel et al., Reference Hampel, Ronchetti, Rousseeuw and Stahel1986, p. 67). This restriction ensures proper identification: If half or more of the data points were contaminated, it would no longer be possible to distinguish between contamination and observations generated by the polyserial model, at least not without further assumptions. Under such additional assumptions, also values of

$\varepsilon \in [0,0.5)$

, which is common in robust statistics (e.g., Hampel et al., Reference Hampel, Ronchetti, Rousseeuw and Stahel1986, p. 67). This restriction ensures proper identification: If half or more of the data points were contaminated, it would no longer be possible to distinguish between contamination and observations generated by the polyserial model, at least not without further assumptions. Under such additional assumptions, also values of

![]() $\varepsilon \geq 0.5$

could be considered. We refer to Welz et al. (Reference Welz, Mair and Alfons2026b) for a more detailed discussion.

$\varepsilon \geq 0.5$

could be considered. We refer to Welz et al. (Reference Welz, Mair and Alfons2026b) for a more detailed discussion.

Figure 1 visualizes a simulated example data set generated by the contaminated distribution

![]() $F_{\varepsilon ,X\eta }$

in (4.1) with contamination fraction

$F_{\varepsilon ,X\eta }$

in (4.1) with contamination fraction

![]() $\varepsilon = 0.15$

. In this example, the contamination distribution

$\varepsilon = 0.15$

. In this example, the contamination distribution

![]() $H_{X\eta }$

, whose random draws are orange dots, is a shifted bivariate t-distribution with noncentrality parameter set to

$H_{X\eta }$

, whose random draws are orange dots, is a shifted bivariate t-distribution with noncentrality parameter set to

![]() $(10,-2)^\top $

, scale matrix

$(10,-2)^\top $

, scale matrix

![]() $\mathrm {diag}(0.25, 0.25)$

, and 10 degrees of freedom. The remaining realizations (gray dots) are generated by the bivariate normal distribution

$\mathrm {diag}(0.25, 0.25)$

, and 10 degrees of freedom. The remaining realizations (gray dots) are generated by the bivariate normal distribution

![]() $P_{X\eta }$

with true parameters

$P_{X\eta }$

with true parameters

![]() $\mu _{\ast} = 0$

,

$\mu _{\ast} = 0$

,

![]() $\sigma _{\ast}^2 = 1$

, and

$\sigma _{\ast}^2 = 1$

, and

![]() $\rho _{\ast} = 0.5$

. Here, contamination manifests through somewhat larger values in the X-dimension and inflation of the first two response options in the Y-dimension (after discretization), resulting in a (partially) misspecified polyserial model. Applying the MLE in (3.5) to the observed data for

$\rho _{\ast} = 0.5$

. Here, contamination manifests through somewhat larger values in the X-dimension and inflation of the first two response options in the Y-dimension (after discretization), resulting in a (partially) misspecified polyserial model. Applying the MLE in (3.5) to the observed data for

![]() $(X,Y)$

in Figure 1 yields a sign-flipped polyserial correlation estimate of

$(X,Y)$

in Figure 1 yields a sign-flipped polyserial correlation estimate of

![]() $-0.522$

, representing a substantial bias with respect to the true value of

$-0.522$

, representing a substantial bias with respect to the true value of

![]() $\rho _{\ast} = 0.5$

. In contrast, the robust estimator—which will be introduced in Section 5 and uses the exact same information as ML—estimates a correlation of

$\rho _{\ast} = 0.5$

. In contrast, the robust estimator—which will be introduced in Section 5 and uses the exact same information as ML—estimates a correlation of

![]() $0.498$

, which is accurate for the true value of

$0.498$

, which is accurate for the true value of

![]() $\rho _{\ast} = 0.5$

.

$\rho _{\ast} = 0.5$

.

Figure 1 Simulated data where the polyserial model is misspecified for a fraction

![]() $\varepsilon = 0.15$

of the

$\varepsilon = 0.15$

of the

![]() $N=10,000$

points. The gray dots are draws of

$N=10,000$

points. The gray dots are draws of

![]() $(X,\eta )$

from the polyserial model with true parameters

$(X,\eta )$

from the polyserial model with true parameters

![]() $\rho = 0.5, \mu = 0, \sigma ^2 = 1$

, while the orange dots are draws from a contamination distribution

$\rho = 0.5, \mu = 0, \sigma ^2 = 1$

, while the orange dots are draws from a contamination distribution

![]() $H_{X\eta }$

, being a bivariate t-distribution here with noncentrality parameter

$H_{X\eta }$

, being a bivariate t-distribution here with noncentrality parameter

![]() $(10,-2)^\top $

, scale matrix

$(10,-2)^\top $

, scale matrix

![]() $\mathrm {diag}(0.25, 0.25)$

, and 10 degrees of freedom. The horizontal lines mark the thresholds that discretize the latent

$\mathrm {diag}(0.25, 0.25)$

, and 10 degrees of freedom. The horizontal lines mark the thresholds that discretize the latent

![]() $\eta $

to the observed ordinal Y with five response options. The numbers in parentheses indicate the population marginal probability of the respective response option under the true polyserial model.

$\eta $

to the observed ordinal Y with five response options. The numbers in parentheses indicate the population marginal probability of the respective response option under the true polyserial model.

We stress that there exist nonnormal joint distributions of

![]() $(X,\eta )$

that, after discretizing the latent

$(X,\eta )$

that, after discretizing the latent

![]() $\eta $

variable with fixed thresholds, result in the same density for the observed

$\eta $

variable with fixed thresholds, result in the same density for the observed

![]() $(X,Y)$

as if the partially-latent

$(X,Y)$

as if the partially-latent

![]() $(X,\eta )$

were jointly normal. This phenomenon is known as discretize equivalence of latent variables (Foldnes & Grønneberg, Reference Foldnes and Grønneberg2019). It follows that there exist contamination distributions

$(X,\eta )$

were jointly normal. This phenomenon is known as discretize equivalence of latent variables (Foldnes & Grønneberg, Reference Foldnes and Grønneberg2019). It follows that there exist contamination distributions

![]() $H_{X\eta }$

and contamination fractions

$H_{X\eta }$

and contamination fractions

![]() $\varepsilon> 0$

in (4.1) under which the contaminated density

$\varepsilon> 0$

in (4.1) under which the contaminated density

![]() $f_{\varepsilon ,XY}$

of the observed

$f_{\varepsilon ,XY}$

of the observed

![]() $(X,Y)$

is exactly equal to the polyserial model density in (3.3) at the true parameter, that is,

$(X,Y)$

is exactly equal to the polyserial model density in (3.3) at the true parameter, that is,

![]() $f_{\varepsilon ,XY} (x,y) = {p}_{XY}^{}\left (x,y; \boldsymbol {\theta }_{\ast}\right )$

for all

$f_{\varepsilon ,XY} (x,y) = {p}_{XY}^{}\left (x,y; \boldsymbol {\theta }_{\ast}\right )$

for all

![]() $(x,y)\in \mathbb {R}\times \mathcal {Y}$

. Hence, in this scenario, the polyserial model is misspecified, but the misspecification does not have adverse effects because the true polyserial model density of

$(x,y)\in \mathbb {R}\times \mathcal {Y}$

. Hence, in this scenario, the polyserial model is misspecified, but the misspecification does not have adverse effects because the true polyserial model density of

![]() $(X,Y)$

remains unaffected. To avoid cumbersome notation in our analysis, we follow Welz et al. (Reference Welz, Mair and Alfons2026b) and assume consequential misspecification throughout this article, that is,

$(X,Y)$

remains unaffected. To avoid cumbersome notation in our analysis, we follow Welz et al. (Reference Welz, Mair and Alfons2026b) and assume consequential misspecification throughout this article, that is,

![]() $f_{\varepsilon ,XY} (x,y) \neq {p}_{XY}^{}\left (x,y; \boldsymbol {\theta }_{\ast}\right )$

for at least one response

$f_{\varepsilon ,XY} (x,y) \neq {p}_{XY}^{}\left (x,y; \boldsymbol {\theta }_{\ast}\right )$

for at least one response

![]() $y\in \mathcal {Y}$

whenever

$y\in \mathcal {Y}$

whenever

![]() $\varepsilon> 0$

, given

$\varepsilon> 0$

, given

![]() $x\in \mathbb {R}$

. Nevertheless, “it is silently understood that misspecification need not be consequential” (Welz et al., Reference Welz, Mair and Alfons2026b), in which case there is no practical problem and both ML and robust estimator are consistent for the true

$x\in \mathbb {R}$

. Nevertheless, “it is silently understood that misspecification need not be consequential” (Welz et al., Reference Welz, Mair and Alfons2026b), in which case there is no practical problem and both ML and robust estimator are consistent for the true

![]() $\boldsymbol {\theta }_{\ast}$

.

$\boldsymbol {\theta }_{\ast}$

.

Before we define our robust estimator, we briefly juxtapose the partial misspecification framework to distributional misspecification.

4.2 Distributional misspecification

The polyserial model is said to be distributionally misspecified if it is misspecified for all observations in a sample. This contrasts the partial misspecification framework in (4.1) where the model is only misspecified for a (possibly zero-valued) fraction of the observations. Let

![]() $G = G_{X,\eta }$

denote the unknown joint distribution of X and the latent

$G = G_{X,\eta }$

denote the unknown joint distribution of X and the latent

![]() $\eta $

, which, under distributional misspecification, is nonnormal for all data points. In distributional misspecification, the object of interest is the correlation coefficient between X and

$\eta $

, which, under distributional misspecification, is nonnormal for all data points. In distributional misspecification, the object of interest is the correlation coefficient between X and

![]() $\eta $

under the distribution G, denoted

$\eta $

under the distribution G, denoted

![]() $\rho _G = \mathbb {C}\mathrm {or}_{G} \left [X,\ \eta \right ]$

, instead of the correlation coefficient under bivariate normality (which would be the polyserial correlation coefficient). Although our robust estimator is designed for partial misspecification rather than distributional misspecification, it can in certain situations also offer a robustness gain under distributional misspecification. We discuss this in more detail in Section 7.3.

$\rho _G = \mathbb {C}\mathrm {or}_{G} \left [X,\ \eta \right ]$

, instead of the correlation coefficient under bivariate normality (which would be the polyserial correlation coefficient). Although our robust estimator is designed for partial misspecification rather than distributional misspecification, it can in certain situations also offer a robustness gain under distributional misspecification. We discuss this in more detail in Section 7.3.

5 Robust estimation of polyserial correlation

It is well-known that ML estimation is highly susceptible to partial model misspecification due to data contamination (e.g., Hampel et al., Reference Hampel, Ronchetti, Rousseeuw and Stahel1986; Huber, Reference Huber1964; Huber & Ronchetti, Reference Huber and Ronchetti2009), so it might be desirable to construct alternative estimators that are more robust against contamination. However, attempting to robustify estimation of the polyserial model against contamination bears the inherent challenge of how to handle the mixed-type structure of the data with one continuous and one ordinal variable.

While there exist general frameworks for robust estimation with exclusively continuous variables and exclusively categorical variables, none of them are applicable to mixed variables. On the one hand, robust M-estimation as proposed by Huber (Reference Huber1964) and related methods (e.g., Huber & Ronchetti, Reference Huber and Ronchetti2009) are intended for continuous random variables, and, broadly speaking, achieve robustness by downweighting observations with extreme values. For instance, Alfons et al. (Reference Alfons, Ateş and Groenen2022) use a variation of M-estimation,

![]() $MM$

-estimation, to robustify mediation analyses against outliers, heavy-tailedness, or skewness of the observed distribution. Such estimators are not applicable to mixed data because the ordinal variable cannot take extreme values due to being categorical, nor does it admit a direct numerical interpretation to begin with. On the other hand, the theory of robust C-estimation (Welz, Reference Welz2024) is designed for exclusively categorical variables. C-estimation downweighs categories whose empirical frequency disagrees with their corresponding theoretical frequency under a postulated model. Since one variable in the polyserial model is continuous, it does not have discrete categories, so C-estimators cannot be applied. However, it turns out that the fundamental idea of downweighting data points that cannot be modeled sufficiently well by a postulated model can be exploited to achieve robustness through minimum DPD estimation (Basu et al., Reference Basu, Harris, Hjort and Jones1998). We explain in this section how minimum DPD estimation can be applied to the polyserial model.

$MM$

-estimation, to robustify mediation analyses against outliers, heavy-tailedness, or skewness of the observed distribution. Such estimators are not applicable to mixed data because the ordinal variable cannot take extreme values due to being categorical, nor does it admit a direct numerical interpretation to begin with. On the other hand, the theory of robust C-estimation (Welz, Reference Welz2024) is designed for exclusively categorical variables. C-estimation downweighs categories whose empirical frequency disagrees with their corresponding theoretical frequency under a postulated model. Since one variable in the polyserial model is continuous, it does not have discrete categories, so C-estimators cannot be applied. However, it turns out that the fundamental idea of downweighting data points that cannot be modeled sufficiently well by a postulated model can be exploited to achieve robustness through minimum DPD estimation (Basu et al., Reference Basu, Harris, Hjort and Jones1998). We explain in this section how minimum DPD estimation can be applied to the polyserial model.

For further reference, define the Kullback–Leibler (KL) divergence (Kullback & Leibler, Reference Kullback and Leibler1951) between two bivariate densities

![]() $g_1$

and

$g_1$

and

![]() $g_2$

, each defined on

$g_2$

, each defined on

![]() $\mathbb {R}^2$

(or a common subset thereof), by

$\mathbb {R}^2$

(or a common subset thereof), by

$$ \begin{align} {\mathrm{KL}}\left(g_1 \ \big|\big|\ g_2\right) = \int\int g_1(s,t) \log\left( \frac{g_1(s,t)}{g_2(s,t)} \right) \mathrm{d} t \mathrm{d} s, \end{align} $$

$$ \begin{align} {\mathrm{KL}}\left(g_1 \ \big|\big|\ g_2\right) = \int\int g_1(s,t) \log\left( \frac{g_1(s,t)}{g_2(s,t)} \right) \mathrm{d} t \mathrm{d} s, \end{align} $$

where the integrals are taken over the densities’ domain. If the second dimension of the densities corresponds to a discrete random variable, that is, the first variable is continuous, but the second one is discrete, replace the inner integral in (5.1) by a summation over the second variable’s domain.Footnote 4

Throughout this section, suppose that one has access to a random sample of N independent continuous-ordinal variable pairs,

![]() $(X_i,Y_i), i = 1,\dots , N$

, following the unknown sampling distribution

$(X_i,Y_i), i = 1,\dots , N$

, following the unknown sampling distribution

![]() $F_{\varepsilon ,XY}$

in (4.2). Hence, the polyserial model is possibly misspecified for an unknown fraction

$F_{\varepsilon ,XY}$

in (4.2). Hence, the polyserial model is possibly misspecified for an unknown fraction

![]() $\varepsilon $

of the observed sample.

$\varepsilon $

of the observed sample.

5.1 Maximum likelihood revisited

The observed sample can be uniquely characterized by a particular empirical density function

for

![]() $x\in \mathbb {R}, y\in \mathcal {Y}$

, where the indicator function

$x\in \mathbb {R}, y\in \mathcal {Y}$

, where the indicator function ![]() takes value 1 if an event E is true, and 0 otherwise. As such, the empirical density

takes value 1 if an event E is true, and 0 otherwise. As such, the empirical density

![]() $\widehat {f}_{N}$

only takes nonzero values when evaluated at points in the observed sample

$\widehat {f}_{N}$

only takes nonzero values when evaluated at points in the observed sample

![]() $\{(X_i,Y_i)\}_{i=1}^N$

. The MLE

$\{(X_i,Y_i)\}_{i=1}^N$

. The MLE

![]() $\widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

in (3.5) can be expressed as the minimizer of the KL divergence between the empirical density

$\widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

in (3.5) can be expressed as the minimizer of the KL divergence between the empirical density

![]() $\widehat {f}_{N}$

and model density

$\widehat {f}_{N}$

and model density

![]() ${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

, which is given by

${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

, which is given by

$$ \begin{align} {\mathrm{KL}}\left(\widehat{f}_{N} \ \big|\big|\ {p}_{XY}^{}\left(\cdot,\cdot; \boldsymbol{\theta}\right)\right) = \frac{1}{N}\sum_{i=1}^N\Bigg( \log\left(\widehat{f}_{N}^{}\left(X_i,Y_i\right) \right) - \log\left( {p}_{XY}^{}\left(X_i,Y_i; \boldsymbol{\theta}\right)\right) \Bigg) \end{align} $$

$$ \begin{align} {\mathrm{KL}}\left(\widehat{f}_{N} \ \big|\big|\ {p}_{XY}^{}\left(\cdot,\cdot; \boldsymbol{\theta}\right)\right) = \frac{1}{N}\sum_{i=1}^N\Bigg( \log\left(\widehat{f}_{N}^{}\left(X_i,Y_i\right) \right) - \log\left( {p}_{XY}^{}\left(X_i,Y_i; \boldsymbol{\theta}\right)\right) \Bigg) \end{align} $$

and follows by definition of

![]() $\widehat {f}_{N}$

and the KL divergence in (5.1) as well as the convention

$\widehat {f}_{N}$

and the KL divergence in (5.1) as well as the convention

![]() $0\log (0)=0$

.Footnote

5

We refer to White (Reference White1982) for a detailed exposition of the connection between KL divergence and ML.

$0\log (0)=0$

.Footnote

5

We refer to White (Reference White1982) for a detailed exposition of the connection between KL divergence and ML.

As alluded to earlier, ML estimation tends to be highly susceptible to contamination in the observed sample. It may therefore be desirable to consider alternatives that are designed to more robust against contamination. It turns out that the idea of minimizing a given divergence between the empirical density

![]() $\widehat {f}_{N}$

and the model density

$\widehat {f}_{N}$

and the model density

![]() ${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

can be exploited to construct contamination-robust estimators by choosing alternative divergences to the KL divergence. This is exactly what minimum power divergence estimation (Basu et al., Reference Basu, Harris, Hjort and Jones1998) does.

${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

can be exploited to construct contamination-robust estimators by choosing alternative divergences to the KL divergence. This is exactly what minimum power divergence estimation (Basu et al., Reference Basu, Harris, Hjort and Jones1998) does.

5.2 Minimum power divergence

Basu et al. (Reference Basu, Harris, Hjort and Jones1998) propose a class of divergences between two densities that generalizes the KL divergence, but is less affected by data contamination. Suppose

![]() $g_1$

and

$g_1$

and

![]() $g_2$

are bivariate densities supported on

$g_2$

are bivariate densities supported on

![]() $\mathbb {R}^2$

or a common subset thereof. For a fixed tuning constant

$\mathbb {R}^2$

or a common subset thereof. For a fixed tuning constant

![]() $\alpha> 0$

, the divergence of Basu et al. (Reference Basu, Harris, Hjort and Jones1998) between

$\alpha> 0$

, the divergence of Basu et al. (Reference Basu, Harris, Hjort and Jones1998) between

![]() $g_1$

and

$g_1$

and

![]() $g_2$

is defined by

$g_2$

is defined by

$$ \begin{align*} {\mathrm{D}_\alpha}\left(g_1 \ \big|\big|\ g_2\right) = \int\int \Bigg( g_2^{1+\alpha}(s,t) - \left(1+\frac{1}{\alpha}\right)g_1(s,t)g_2^\alpha(s,t) + \frac{1}{\alpha}g_1^{1+\alpha}(s,t) \Bigg)\mathrm{d} t\mathrm{d} s, \end{align*} $$

$$ \begin{align*} {\mathrm{D}_\alpha}\left(g_1 \ \big|\big|\ g_2\right) = \int\int \Bigg( g_2^{1+\alpha}(s,t) - \left(1+\frac{1}{\alpha}\right)g_1(s,t)g_2^\alpha(s,t) + \frac{1}{\alpha}g_1^{1+\alpha}(s,t) \Bigg)\mathrm{d} t\mathrm{d} s, \end{align*} $$

where each integral is taken over the densities’ domain. Note that the divergence

![]() $\mathrm {D}_\alpha $

is not defined at

$\mathrm {D}_\alpha $

is not defined at

![]() $\alpha = 0$

. To overcome this issue, Basu et al. (Reference Basu, Harris, Hjort and Jones1998) define

$\alpha = 0$

. To overcome this issue, Basu et al. (Reference Basu, Harris, Hjort and Jones1998) define

![]() $\mathrm {D}_0$

as the limit of

$\mathrm {D}_0$

as the limit of

![]() $\mathrm {D}_\alpha $

as

$\mathrm {D}_\alpha $

as

![]() $\alpha \downarrow 0$

, that is,

$\alpha \downarrow 0$

, that is,

$$ \begin{align} {\mathrm{D}_0}\left(g_1 \ \big|\big|\ g_2\right) = \lim_{\alpha\downarrow 0}{\mathrm{D}_\alpha}\left(g_1 \ \big|\big|\ g_2\right) = \int\int g_1(s,t) \log\left( \frac{g_1(s,t)}{g_2(s,t)} \right) \mathrm{d} t \mathrm{d} s = {\mathrm{KL}}\left(g_1 \ \big|\big|\ g_2\right), \end{align} $$

$$ \begin{align} {\mathrm{D}_0}\left(g_1 \ \big|\big|\ g_2\right) = \lim_{\alpha\downarrow 0}{\mathrm{D}_\alpha}\left(g_1 \ \big|\big|\ g_2\right) = \int\int g_1(s,t) \log\left( \frac{g_1(s,t)}{g_2(s,t)} \right) \mathrm{d} t \mathrm{d} s = {\mathrm{KL}}\left(g_1 \ \big|\big|\ g_2\right), \end{align} $$

where the second equality follows from the identity

![]() $z\mapsto \log z = \lim _{\alpha \downarrow 0} \alpha ^{-1}(z^\alpha - 1)$

and the third equality from the definition of the KL divergence in (5.1). Basu et al. (Reference Basu, Harris, Hjort and Jones1998) call the divergence

$z\mapsto \log z = \lim _{\alpha \downarrow 0} \alpha ^{-1}(z^\alpha - 1)$

and the third equality from the definition of the KL divergence in (5.1). Basu et al. (Reference Basu, Harris, Hjort and Jones1998) call the divergence

![]() $\mathrm {D}_\alpha , \alpha \geq 0$

, the DPD. It follows that the DPD generalizes the KL divergence. We explain in a moment the intuition behind the DPD.

$\mathrm {D}_\alpha , \alpha \geq 0$

, the DPD. It follows that the DPD generalizes the KL divergence. We explain in a moment the intuition behind the DPD.

As before, if the second dimension of the two densities

![]() $g_1, g_2$

corresponds to a discrete random variable, that is, the first variable in

$g_1, g_2$

corresponds to a discrete random variable, that is, the first variable in

![]() $g_j$

is continuous, but the second one is discrete, replace the inner integral in the DPD

$g_j$

is continuous, but the second one is discrete, replace the inner integral in the DPD

![]() $\mathrm {D}_\alpha , \alpha \geq 0$

, by a summation over the second variable’s domain.

$\mathrm {D}_\alpha , \alpha \geq 0$

, by a summation over the second variable’s domain.

5.3 Proposed robust estimator

For

![]() $\alpha> 0$

, the DPD between the empirical density

$\alpha> 0$

, the DPD between the empirical density

![]() $\widehat {f}_{N}$

in (5.2) and the polyserial model’s density

$\widehat {f}_{N}$

in (5.2) and the polyserial model’s density

![]() ${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

reads

${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

reads

$$ \begin{align} {\mathrm{D}_\alpha}\left(\widehat{f}_{N} \ \big|\big|\ {p}_{XY}^{}\left(\cdot,\cdot; \boldsymbol{\theta}\right)\right) = \int_{\mathbb{R}} \sum_{y\in\mathcal{Y}}{p}_{XY}^{1+\alpha}\left(x,y; \boldsymbol{\theta}\right)\mathrm{d} x - (1+\alpha^{-1}) \frac{1}{N}\sum_{i=1}^N {p}_{XY}^{\alpha}\left(X_i,Y_i; \boldsymbol{\theta}\right) + \alpha^{-1}, \end{align} $$

$$ \begin{align} {\mathrm{D}_\alpha}\left(\widehat{f}_{N} \ \big|\big|\ {p}_{XY}^{}\left(\cdot,\cdot; \boldsymbol{\theta}\right)\right) = \int_{\mathbb{R}} \sum_{y\in\mathcal{Y}}{p}_{XY}^{1+\alpha}\left(x,y; \boldsymbol{\theta}\right)\mathrm{d} x - (1+\alpha^{-1}) \frac{1}{N}\sum_{i=1}^N {p}_{XY}^{\alpha}\left(X_i,Y_i; \boldsymbol{\theta}\right) + \alpha^{-1}, \end{align} $$

which follows from writing out

![]() $\widehat {f}_{N}$

. For the choice

$\widehat {f}_{N}$

. For the choice

![]() $\alpha = 0$

, the DPD

$\alpha = 0$

, the DPD

![]() ${\mathrm {D}_0}\left (\widehat {f}_{N} \ \big |\big |\ {p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )\right )$

reduces to the KL divergence in (5.3) by (5.4).

${\mathrm {D}_0}\left (\widehat {f}_{N} \ \big |\big |\ {p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )\right )$

reduces to the KL divergence in (5.3) by (5.4).

For a pre-specified tuning constant

![]() $\alpha \geq 0$

, our proposed estimator minimizes the divergence

$\alpha \geq 0$

, our proposed estimator minimizes the divergence

![]() $\mathrm {D}_\alpha $

between

$\mathrm {D}_\alpha $

between

![]() $\widehat {f}_{N}$

and

$\widehat {f}_{N}$

and

![]() ${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

with respect to the model parameter

${p}_{XY}^{}\left (\cdot ,\cdot; \boldsymbol {\theta }\right )$

with respect to the model parameter

![]() $\boldsymbol {\theta }\in \boldsymbol {\Theta }$

. Specifically, our proposed estimator is given by

$\boldsymbol {\theta }\in \boldsymbol {\Theta }$

. Specifically, our proposed estimator is given by

In particular, if

![]() $\alpha = 0$

, the estimator

$\alpha = 0$

, the estimator

![]() $\widehat {\boldsymbol {\theta }}_N$

coincides with the MLE

$\widehat {\boldsymbol {\theta }}_N$

coincides with the MLE

![]() $\widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

(see Section 5.1). Since it minimizes a DPD, estimator

$\widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

(see Section 5.1). Since it minimizes a DPD, estimator

![]() $\widehat {\boldsymbol {\theta }}_N$

constitutes a minimum DPD estimator (Basu et al., Reference Basu, Harris, Hjort and Jones1998). As such, a limit theory for

$\widehat {\boldsymbol {\theta }}_N$

constitutes a minimum DPD estimator (Basu et al., Reference Basu, Harris, Hjort and Jones1998). As such, a limit theory for

![]() $\widehat {\boldsymbol {\theta }}_N$

is readily available from Basu et al. (Reference Basu, Harris, Hjort and Jones1998), who derive the theoretical properties of such estimators for general models. Before we turn to the estimator’s limit theory, we first provide some intuition as to why minimum DPD estimators are more robust than ML whenever

$\widehat {\boldsymbol {\theta }}_N$

is readily available from Basu et al. (Reference Basu, Harris, Hjort and Jones1998), who derive the theoretical properties of such estimators for general models. Before we turn to the estimator’s limit theory, we first provide some intuition as to why minimum DPD estimators are more robust than ML whenever

![]() $\alpha> 0$

.

$\alpha> 0$

.

An estimator defined as the minimum of a (differentiable) loss function can be equivalently characterized as a root of the loss’ gradient; a characterization known as estimating equation. The estimating equation of the MLE

![]() $(\alpha = 0)$

can be written as

$(\alpha = 0)$

can be written as

$$\begin{align*}\frac{1}{N} \sum_{i=1}^N \boldsymbol{u}_{\boldsymbol{\theta}}\left(X_i,Y_i\right) = \boldsymbol{0}, \end{align*}$$

$$\begin{align*}\frac{1}{N} \sum_{i=1}^N \boldsymbol{u}_{\boldsymbol{\theta}}\left(X_i,Y_i\right) = \boldsymbol{0}, \end{align*}$$

which is satisfied for

![]() $\boldsymbol {\theta } = \widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

, and where the d-vector

$\boldsymbol {\theta } = \widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

, and where the d-vector

denotes the log-likelihood score function. A closed-form expression of

![]() $\boldsymbol {u}_{\boldsymbol {\theta }}\left (x,y\right )$

is provided in Section A of the Supplementary Material. Analogously, the estimating equation of the proposed estimator

$\boldsymbol {u}_{\boldsymbol {\theta }}\left (x,y\right )$

is provided in Section A of the Supplementary Material. Analogously, the estimating equation of the proposed estimator

![]() $\widehat {\boldsymbol {\theta }}_N$

in (5.6) can be shown to read

$\widehat {\boldsymbol {\theta }}_N$

in (5.6) can be shown to read

$$ \begin{align} \frac{1}{N}\sum_{i=1}^N {p}_{XY}^{\alpha}\left(X_i, Y_i; \boldsymbol{\theta}\right) \boldsymbol{u}_{\boldsymbol{\theta}}\left(X_i, Y_i\right) - \boldsymbol{c}_\alpha(\boldsymbol{\theta}) = \boldsymbol{0}, \end{align} $$

$$ \begin{align} \frac{1}{N}\sum_{i=1}^N {p}_{XY}^{\alpha}\left(X_i, Y_i; \boldsymbol{\theta}\right) \boldsymbol{u}_{\boldsymbol{\theta}}\left(X_i, Y_i\right) - \boldsymbol{c}_\alpha(\boldsymbol{\theta}) = \boldsymbol{0}, \end{align} $$

which is satisfied for

![]() $\boldsymbol {\theta } = \widehat {\boldsymbol {\theta }}_N$

and where

$\boldsymbol {\theta } = \widehat {\boldsymbol {\theta }}_N$

and where

![]() $ \boldsymbol {c}_\alpha (\boldsymbol {\theta }) = \int _{\mathbb {R}}\sum _{y\in \mathcal {Y}}{p}_{XY}^{1+\alpha }\left (x,y; \boldsymbol {\theta }\right )\boldsymbol {u}_{\boldsymbol {\theta }}\left (x,y\right )\mathrm {d} x $

is a correction factor independent of observed data. Observe that for

$ \boldsymbol {c}_\alpha (\boldsymbol {\theta }) = \int _{\mathbb {R}}\sum _{y\in \mathcal {Y}}{p}_{XY}^{1+\alpha }\left (x,y; \boldsymbol {\theta }\right )\boldsymbol {u}_{\boldsymbol {\theta }}\left (x,y\right )\mathrm {d} x $

is a correction factor independent of observed data. Observe that for

![]() $\alpha = 0$

, the estimating equation (5.7) equals that of the MLE,Footnote

6

in which all observations in the sample

$\alpha = 0$

, the estimating equation (5.7) equals that of the MLE,Footnote

6

in which all observations in the sample

![]() $\{(X_i, Y_i)\}_{i=1}^N$

are weighted equally. Conversely, when

$\{(X_i, Y_i)\}_{i=1}^N$

are weighted equally. Conversely, when

![]() $\alpha> 0$

, the term

$\alpha> 0$

, the term

$$\begin{align*}\frac{1}{N}\sum_{i=1}^N {p}_{XY}^{\alpha}\left(X_i, Y_i; \boldsymbol{\theta}\right) \boldsymbol{u}_{\boldsymbol{\theta}}\left(X_i, Y_i\right) \end{align*}$$

$$\begin{align*}\frac{1}{N}\sum_{i=1}^N {p}_{XY}^{\alpha}\left(X_i, Y_i; \boldsymbol{\theta}\right) \boldsymbol{u}_{\boldsymbol{\theta}}\left(X_i, Y_i\right) \end{align*}$$

in estimating equation (5.7) “provides a relative-to-the-model downweighting for [contaminated] Footnote 7 observations” (Basu et al., Reference Basu, Harris, Hjort and Jones1998, p. 551) with individual-specific weights

A weight

![]() $\widetilde {w}_{i,\alpha }(\boldsymbol {\theta })$

is bounded from below by 0 and takes values close to 0 for observations that the polyserial model cannot fit well, such as contaminated data points. As such, “observations that are widely discrepant to the [polyserial] model will get nearly zero weights” (Basu et al., Reference Basu, Harris, Hjort and Jones1998, pp. 551–552). Larger choices of

$\widetilde {w}_{i,\alpha }(\boldsymbol {\theta })$

is bounded from below by 0 and takes values close to 0 for observations that the polyserial model cannot fit well, such as contaminated data points. As such, “observations that are widely discrepant to the [polyserial] model will get nearly zero weights” (Basu et al., Reference Basu, Harris, Hjort and Jones1998, pp. 551–552). Larger choices of

![]() $\alpha> 0$

lead to more stringent downweighting. For instance, a contaminated observation with an ordinal response

$\alpha> 0$

lead to more stringent downweighting. For instance, a contaminated observation with an ordinal response

![]() $Y_i$

that strongly disagrees with the latent threshold structure implied by the model density

$Y_i$

that strongly disagrees with the latent threshold structure implied by the model density

![]() $p_{XY}$

would receive a weight close to 0.

$p_{XY}$

would receive a weight close to 0.

If contamination is absent

![]() $(\varepsilon = 0)$

, it can be shown that the robust estimator

$(\varepsilon = 0)$

, it can be shown that the robust estimator

![]() $\widehat {\boldsymbol {\theta }}_N$

and the MLE

$\widehat {\boldsymbol {\theta }}_N$

and the MLE

![]() $\widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

in (3.5) are asymptotically equal to one another for all choices

$\widehat {\boldsymbol {\theta }}_N^{\mathrm {\ MLE}}$

in (3.5) are asymptotically equal to one another for all choices

![]() $\alpha \geq 0$

. This property is implied by the property of Fisher consistency that minimum DPD estimators possess (implied by Theorem 1 in Basu et al., Reference Basu, Harris, Hjort and Jones1998, see page 551 therein).

$\alpha \geq 0$

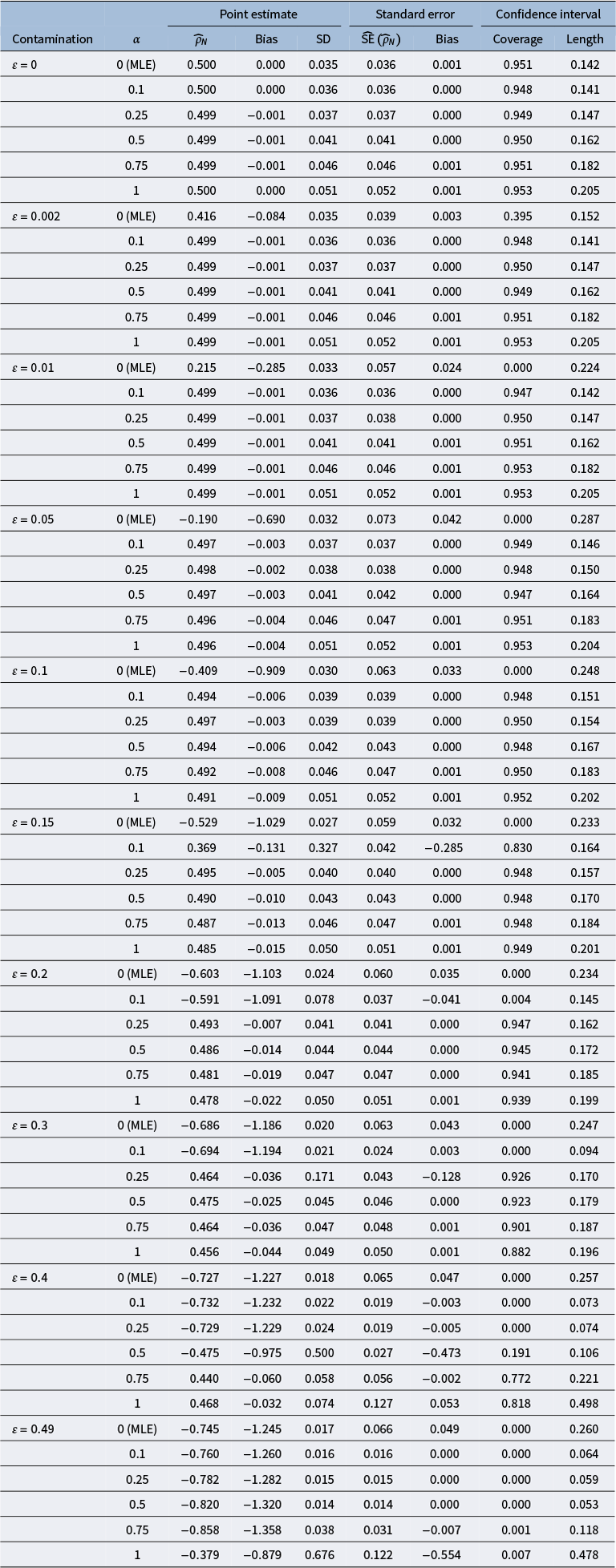

. This property is implied by the property of Fisher consistency that minimum DPD estimators possess (implied by Theorem 1 in Basu et al., Reference Basu, Harris, Hjort and Jones1998, see page 551 therein).