1. Introduction

Human language is constantly evolving. The world we live in is governed by information and communication technologies. Our time, sometimes dubbed the digital era, must thus be prepared to face changes in the way we communicate, and implement mechanisms to adapt to it.

Changes in communication derive from multiple aspects. The use of non-standard written language might stem from cultural or societal factors, among others, or it may simply happen by mistake. In this survey, we refer to both phenomena as misspellings. Misspellings have become pervasive in the digital written production since the revolutionary Web 2.0 led people interact freely through social media, blogs, forums, etc. Even though misspellings are generally unintentional, in some contexts, these may also be intentional. Of course, the presence of misspellings complicates the reading of a text. Notwithstanding this, and somehow, surprisingly, humans have the ability to read and comprehend misspelt text without much effort and, sometimes, even without realising their presence (Andrews Reference Andrews1996; Healy Reference Healy1976; McCusker et al. Reference McCusker, Gough and Bias1981; Shook et al. Reference Shook, Chabal, Bartolotti and Marian2012). Computers do not have similar capabilities, though. Although the NLP community has long downplayed the problem of misspellings (if not for grammatical error correction (Shishibori et al. Reference Shishibori, Lee, Oono and Aoe2002) or text normalisation (Damerau Reference Damerau1964)), it is by now abundant evidence that misspellings represent a serious risk to the performance of NLP systems (Baldwin et al. Reference Baldwin, Cook, Lui, MacKinlay and Wang2013; Heigold et al. Reference Heigold, Varanasi, Neumann and van Genabith2018; Moradi and Samwald Reference Moradi and Samwald2021; Náplava et al. Reference Náplava, Popel, Straka and Straková2021; Nguyen and Grieve Reference Nguyen and Grieve2020; Plank Reference Plank2016; Vinciarelli Reference Vinciarelli2005; Yang and Gao Reference Yang and Gao2019), even for the latest generation of large language models (Moffett and Dhingra Reference Moffett and Dhingra2025).

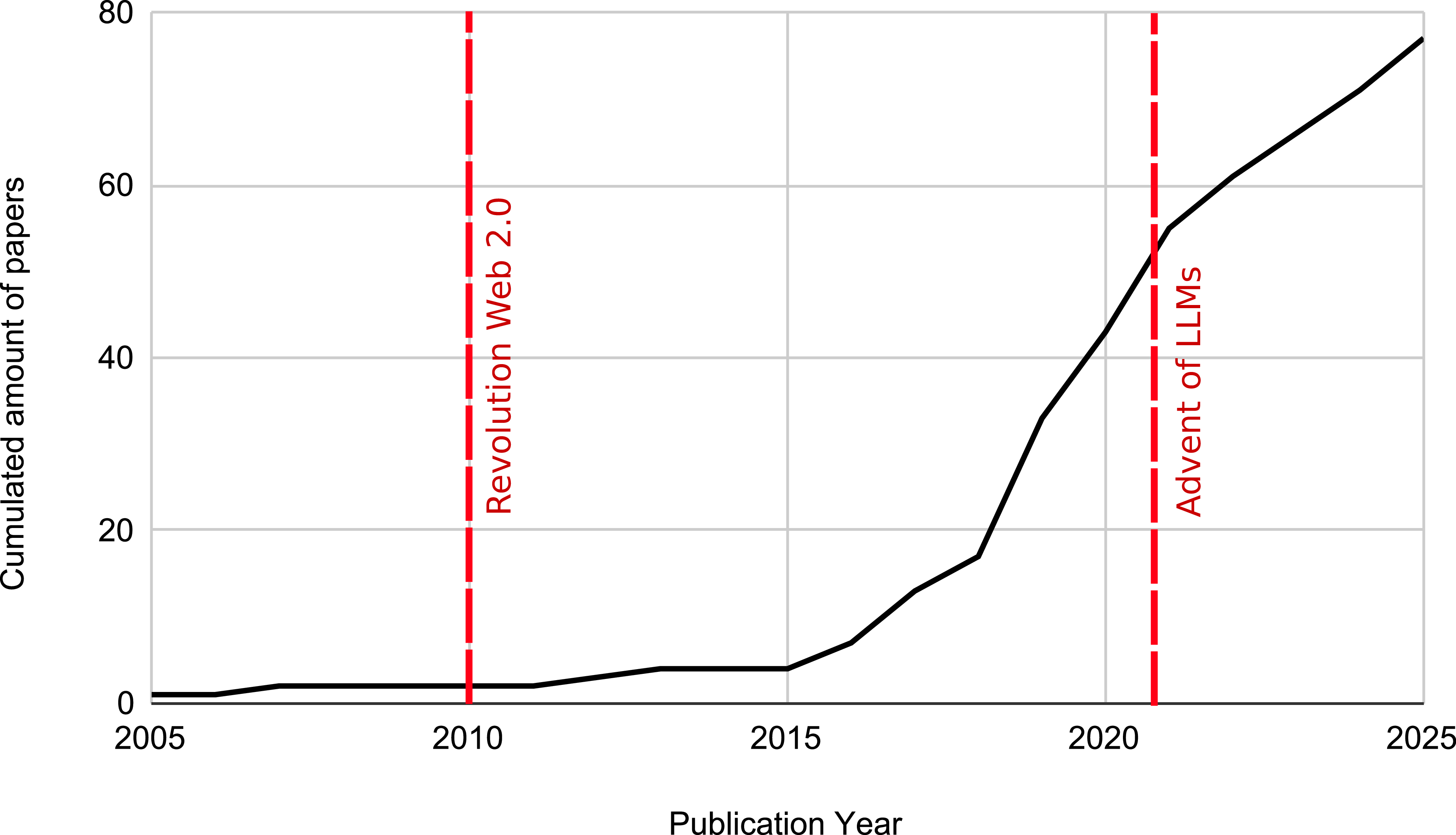

This survey addresses misspellings as a pervasive phenomenon that negatively impacts downstream NLP tasks. We do not focus on general-purpose automatic spelling correction methods, for which recent, comprehensive reference material is already available (see, e.g., Bryant et al. Reference Bryant, Yuan, Qorib, Cao, Ng and Briscoe2023; Hládek et al. Reference Hládek, Staš and Pleva2020; Wang et al. Reference Wang, Wang, Dang, Liu and Liu2021b). Instead, our focus is on the implications and challenges that misspellings pose for NLP methods that are explicitly designed to be robust to them in downstream applications. We cover research published since 2009, as earlier work is already comprehensively reviewed by Subramaniam et al. (Reference Subramaniam, Roy, Faruquie and Negi2009). Since then, the topic has gained increasing attention. A growing number of methods have been proposed to specifically address the problem of misspellings (Belinkov and Bisk Reference Belinkov and Bisk2018; Heigold et al. Reference Heigold, Varanasi, Neumann and van Genabith2018), alongside dedicated benchmarks (Michel and Neubig Reference Michel and Neubig2018) and even shared tasks and data challenges (Basili et al. Reference Basili, Lopresti, Ringlstetter, Roy, Schulz and Subramaniam2010; Dey et al. Reference Dey, Govindaraju, Lopresti, Natarajan, Ringlstetter and Roy2011; Lopresti et al. Reference Lopresti, Roy, Schulz and Subramaniam2009). This survey aims to provide a comprehensive overview of these recent advancements in the field.

The study of misspellings in NLP is paramount not only as a means for improving the performance of current systems, but also for reasons that are ultimately bound to safety and ethics. Misspellings are, as hinted above, not always an involuntary phenomenon. Misspellings may sometimes be carefully and maliciously designed (Li et al. Reference Li, Ji, Du, Li and Wang2019a) with the purpose of disguising certain words to elude the control of automatic content moderation tools or spam detection filters. The study of misspelling can help in mitigating the proliferation of hate speech or in preventing unwanted content from reaching the final audience. Additionally, the fact that certain misspellings act as obfuscations for computers but not for humans suggests that studying this phenomenon from a psycholinguistic perspective might inspire alternative, more efficient methods for text representation and processing.

The rest of this survey is organised as follows. In Section 2, we offer an overview of the history of misspellings in the digital era, analysing the main trends before and after the proliferation of user-generated content, the upsurge of neural networks and the advent of large language models (LLMs). In Section 3, we describe how the phenomenon is regarded through the lens of linguistics and NLP. In Section 4, we survey previous work on the potential harm of misspellings. Section 5 is devoted to describing methods specifically devised to counter misspellings. Section 6 deals with the challenges misspellings pose in multilingual contexts. The main tasks, evaluation measures, venues and datasets dedicated to misspellings are discussed in Section 7. Section 8 is devoted to analysing the phenomenon of misspellings from the point of view of modern LLMs. Section 9 discusses the main applications in which the presence of misspellings gains special relevance. Section 10 concludes by also pointing to promising directions of research.

2. A brief history of misspellings

The history of misspellings in NLP spans several decades, dating back at least to Blair (Reference Blair1960); Damerau (Reference Damerau1964) seminal works on spelling error detection and correction published in the 1960s. Here, we provide a concise overview of this long-standing topic, focusing on three main phases that hinge upon the proliferation of the so-called Web 2.0 and the subsequent spread of (often carelessly generated) user-generated content, and the advent of LLMs. The term Web 2.0 was first coined by Darcy DiNucci in 1999, but it was not until 2004 that it gained popularity through the Web 2.0 Conference.Footnote 1 It took some time for user-generated content to take hold on the Internet, something we identify as happening around 2010. This section, therefore, briefly surveys the history of misspellings before (Section 2.1) and after (Section 2.2) this turning point.

2.1 Before 2010: fewer data, less misspellings

Before the explosion of user-generated data on the Internet, the vast majority of content available on the web (static web pages, journal articles, etc.) was characterised by the fact that the content was moderately well curated. As a result, the amount of data was relatively limited, and the available data contained few misspellings. For this reason, automated text analysis technologies were rarely concerned with the presence of misspellings, if at all. The study of misspellings was confined to the development of automatic correction tools that aid users in producing misspelling-free texts by, for example, correcting typos or applying OCR-produced errors.

Arguably, the first works on misspellings were those by Blair (Reference Blair1960) and Damerau (Reference Damerau1964), which proposed the earliest dictionary-based methods for spelling correction. In this survey, we do not focus on spelling correction per se (we refer the interested reader to Bryant et al. Reference Bryant, Yuan, Qorib, Cao, Ng and Briscoe2023; Hládek et al. Reference Hládek, Staš and Pleva2020; Wang et al. Reference Wang, Wang, Dang, Liu and Liu2021b), but rather on NLP systems designed to be resilient to misspellings. In this context, Vinciarelli (Reference Vinciarelli2005) stands out as one of the pioneering studies, specifically addressing errors introduced by OCR technologies.

Some studies seemed to indicate that the problem of misspellings was not paramount for text classification technologies, at least when these concern the classification by topic of (curated) text documents (Agarwal et al. Reference Agarwal, Godbole, Punjani and Roy2007). The situation differed somewhat when shifting to other, less curated sources, such as emails, blogs, forums and SMS data, or when analysing the output generated by automatic speech recognition engines from call centres. The problem attracted little attention from the research community at the time, and it was not until 2007 that a dedicated workshop, called Analysis for Noisy Unstructured Text Data (AND), emerged and renewed interest in the field (see also Section 7.4).

To the best of our knowledge, the only survey on NLP systems robust to noise was published in 2009 (Subramaniam et al. Reference Subramaniam, Roy, Faruquie and Negi2009). This survey primarily focused on handling noise in OCR scans, blogs, call centre transcriptions and similar sources.

2.2 After 2010: the rise of social networks and deep learning

Since 2010, user-generated content has become increasingly pervasive, mainly due to the revolution of social networks. At the same time, deep learning technologies have taken the world by storm (Krizhevsky et al. Reference Krizhevsky, Sutskever and Hinton2012), not only due to the increase in performance they show off in most NLP tasks (Collobert et al. Reference Collobert, Weston, Bottou, Karlen, Kavukcuoglu and Kuksa2011), but also because of their potential to eliminate the need for manual feature engineering; instead, the neural network itself learns to represent the input. This raises questions about the necessity of a pre-processing step for correcting misspellings beforehand.

The increasing prevalence of misspelt data and the proliferation of NLP technologies have inspired numerous studies analysing the impact of misspellings on state-of-the-art models (Section 4), as well as papers proposing systems that are resilient to misspellings (Section 5).

The study of misspellings in NLP presents significant benefits. The most apparent advantage is the enhancement of performance in any NLP tool, but not only. Systems that are resilient to misspellings are also safer. For reasons discussed later, some misspellings are intentional, designed to evade the scrutiny of content moderation tools or spam filters. Ultimately, the study of misspelling resilience aims to deepen our understanding of language (further discussions are offered in Section 10).

This increased interest in the subject was partially fostered by the work of Belinkov and Bisk (Reference Belinkov and Bisk2018); Heigold et al. (Reference Heigold, Varanasi, Neumann and van Genabith2018); Edizel et al. (Reference Edizel, Piktus, Bojanowski, Ferreira, Grave and Silvestri2019), who showed the performance of different models decrease noticeably in the presence of misspellings. This renewed momentum has led to the appearance of dedicated workshops devoted to studying the phenomenon from the point of view of user-generated content (such as the WNUT workshop seriesFootnote 2 ) as well as from the point of view of machine translation (such as the WMT workshop/conference seriesFootnote 3 ); more information about dedicated tasks and datasets can be found in Section 7.4.

Between 2017 and 2022, neural machine translation emerged as the most prolific field in the study of misspellings, closely followed by sentiment analysis.

2.3 After 2020: the advent of LLMs

In recent years, the trend has shifted markedly: whereas earlier research focused heavily on developing methods to combat misspellings in downstream tasks (with a disproportionate emphasis on machine translation), the field has now moved toward a growing number of evaluation papers that analyse the ability of LLMs to handle misspellings.

The advent of LLMs around 2020–2021 has dramatically reshaped the NLP and AI landscape, including research on misspelling resilience. Due to their high computational costs, LLMs are not easily amenable to experimentation with new training methods aimed at improving robustness to misspellings. Moreover, some language models (especially commercial ones) already exhibit strong resistance to misspellings (Moffett and Dhingra Reference Moffett and Dhingra2025). Nevertheless, there is substantial interest in understanding how LLMs handle misspellings in both downstream tasks and more general settings, as evidenced by the relatively high number of papers published on the topic between 2024 and 2025 (see, e.g., Pan et al. Reference Pan, Leng and Xiong2024; Wang et al. Reference Wang, Hu, Hou, Chen, Zheng, Wang, Yang, Ye, Huang, Geng, Jiao, Zhang and Xie2024, Reference Wang, Gu, Wei, Gao, Song and Chen2025; Zhang et al. Reference Zhang, Hao, Li, Zhang and Zhao2024).

Figure 1 shows the publication trends with respect to all NLP papers related to misspellings.

Publication trends in NLP papers on misspellings (2004–2025).

3. What is a misspelling?

The term misspelling is too broad a concept, which has come to encompass many different types of unconventional typographical alterations. In this section, we turn to review the main considerations behind this term as viewed through the lens of linguistics and NLP, and we try to break down the many subtle nuances it encompasses. While in NLP, the terms misspelling and noise are by and large interchangeable, in linguistics, the term error is more commonly employed. Other terms like typo, mistake or slip are often used in more general, non-specialised contexts. Throughout this survey, we prefer the term misspelling since it clearly evokes a link with text, and since noise and error are too wide hypernyms that the target audience of this survey might find rather ambiguous.

3.1 Under the lens of linguistics

In linguistics, there are primarily three fundamental viewpoints for models dealing with misspellings, which we cover in this section. The first is that of general linguistics (Section 3.1.1), where some authors have attempted to define and categorise various types of misspellings. The second one is that of sociolinguistics (Section 3.1.2), in which authors analyse misspellings from a social perspective. The last one originates from psycholinguistics (Section 3.1.3), which rather focuses on cognitively relevant aspects of the problem.

3.1.1 General linguistic perspective

As already mentioned, in general linguistics, there is no broadly agreed-upon definition of what a misspelling is, and the term error is often preferred. Error analysis is one important field of linguistics that studies the phenomenon of errors in second language learners. James (Reference James2013) defines errors as an unsuccessful bit of language. Richards and Schmidt (Reference Richards and Schmidt2013) instead define errors as the use of a linguistic item (e.g., a word, a grammatical unit, a speech act, etc.) in a way that a fluent or native speaker of the language regards as showing faulty or incomplete learning.

In general linguistics, it is customary to draw a distinction between error and mistake. Richards and Schmidt (Reference Richards and Schmidt2013) point out that errors are due to a lack of knowledge of the speaker, while mistakes are made because of other compounding reasons, such as fatigue or carelessness. In the same work, errors are classified as belonging to lexical error (i.e., surface forms which are not included in a vocabulary), phonological error (i.e., in the pronunciation) and grammatical error (i.e., not compliant to syntactic rules). Interestingly enough, none of these concepts seems to embrace the possibility that misspellings may be created as a voluntary act (more on this later).

3.1.2 Sociolinguistic perspective

Sociolinguistics focuses on the concept of spelling variation (Nguyen and Grieve Reference Nguyen and Grieve2020). The word misspelling itself carries an implicit judgement against the author: the author of the noise is responsible for missing the correct normative spelling of the word. Contrarily, the sociolinguistic perspective considers that there are no such errors, but rather variations in spelling. These variations can originate from social needs, such as avoiding censorship, expressing group identity or representing regional or national dialects.

Over time, linguistic koinés and speech communities may develop alternative spellings for certain words, whether intentionally or through gradual convention. In the NLP literature, this phenomenon is also referred to as spelling variation, that is, a deviation from the standard orthography that may or may not be perceived as an error. Since there is no universally agreed definition of misspelling, these socially driven orthographic variations deserve special attention. From a morphological standpoint, they can be seen as systematic deviations from the normative form, and as such they evolve diachronically, alongside the language itself.

3.1.3 Psycholinguistic perspective

After outlining key conventions in general linguistics and sociolinguistics, it is essential to emphasise the significant relationship between psycholinguistics and the phenomenon of misspellings. As stated by Fernández and Cairns (Reference Fernández and Cairns2010), psycholinguistics investigates the cognitive processes involved in the use of language, rather than the structure of language itself. In the case of reading, psycholinguistics is concerned with understanding the cognitive processes that underlie this activity, from the acquisition of the sensory stimulus derived from the visual perception of letters, to the subsequent comprehension and cognitive reorganisation of the information within the brain.

The branch of psycholinguistics that is most relevant to the topic of this survey is the one devoted to studying the cognitive processes behind the acts of writing and reading. It has been noted on several occasions that humans are able to read long and complex sentences that include misspelt words with little reduction in performance. The most notable example of this is that of garbled words, in which the internal letters are randomly transposed. Despite this, humans are able to read them with high accuracy. Some related work in the psycholinguistics literature includes the work by Andrews (Reference Andrews1996); Healy (Reference Healy1976); McCusker et al. (Reference McCusker, Gough and Bias1981); Shook et al. (Reference Shook, Chabal, Bartolotti and Marian2012). This cognitive ability of human beings has inspired some of the methods we describe in Section 5.2.

Some researchers in the field of NLP have gained inspiration from lessons learnt in psycholinguistics and have taken advantage of these to devise models robust to misspellings. For example, characters that are graphically similar can be interchanged without significantly affecting human reading comprehension (e.g., cl0sed for closed). This other intuition has inspired some of the methods that we discuss in Section 5.5.

From a computational point of view, the study of misspellings would certainly benefit from the synergies with linguistics and psycholinguistics. The cognitive abilities humans display represent a source of inspiration for methods dealing with misspellings or the creation of adversarial attacks. As an example, consider spam emails in which the content is made of garbled words or in which graphically similar characters have been replaced.

3.2 Under the lens of NLP

In the field of NLP, there is no single, clear-cut definition of misspelling. Indeed, the same type of problem (morphological error) is often expressed with different words, such as noise, typo, and spelling mistake.

The most common of these, along with misspelling, is noise, which is defined as any non-standard spelling variation (Nguyen and Grieve Reference Nguyen and Grieve2020). While this definition of noise may seem appropriate, any attempt to provide a universal definition of misspellings would appear contrived and, above all, imprecise.

To tackle this issue and establish clear boundaries around the concept of misspelling, NLP researchers have proposed various categorisations, which draw from different points of view: For example, from the perspective of the word surface, from the point of view of the user who generated them, or pointing up to the methods used to generate misspelt datasets in an experimental setting. The types of misspellings can be fine-grained, where multiple categories of misspellings are defined in detail, or less fine-grained, where fewer, more representative categories are selected. This lack of uniformity, among other things, complicates the search for relevant papers on the subject (which has indeed represented one of the significant challenges we faced when developing this survey).

In this section, we cover some of the main categorisations of misspellings proposed in the literature. In doing so, we note that the problem of misspelling can be approached from diverse viewpoints and thus there are multiple perspectives on this matter. For example, Heigold et al. Reference Heigold, Varanasi, Neumann and van Genabith(2018) have established three categories based on the word surface:

-

• character swaps: nice

$\rightarrow$

ncie, the position of two subsequent characters is exchanged;

$\rightarrow$

ncie, the position of two subsequent characters is exchanged; -

• word scrambling: absolute

$\rightarrow$

alusobte, the order of the characters is permuted with the exception of the first and last one;

$\rightarrow$

alusobte, the order of the characters is permuted with the exception of the first and last one; -

• character flipping: nice

$\rightarrow$

nite, one character is replaced by another.

$\rightarrow$

nite, one character is replaced by another.

Belinkov and Bisk (Reference Belinkov and Bisk2018) proposed an alternative classification of misspellings into two main categories based on the dataset generation method:

-

• Natural misspellings: real misspellings that occur in real-world data spontaneously;

-

• Synthetic misspellings: misspellings artificially generated by means of procedural rules.

Note that the differentiation between natural and synthetic misspellings does not establish a clear-cut boundary, as any misspelling generated synthetically could plausibly occur naturally. That is to say, the difference between natural and synthetic misspellings is extrinsic to the word surface form, and regards the mode of generation (spontaneous vs. procedural) while both represent (or mimic) the same underlying phenomenon. Indeed, this distinction is functional to experimental setups and was originally conceived with dataset generation in mind. Synthetic misspellings are more widely used due to the low frequency of natural misspellings, which hinders the collection of large, diverse corpora.Footnote 4

What Belinkov and Bisk (Reference Belinkov and Bisk2018) referred to as natural misspellings actually involves lexical lists that provide context for the misspellings, which can be exploited to artificially introduce natural-sounding misspellings into otherwise correct sentences. In such cases, we will refer to a third category of hybrid misspelling, and reserve the term natural misspelling for real-world misspellings found in actual data. Further aspects related to dataset generation will be discussed in Section 7.4.

Several other researchers, including van der Goot et al. (Reference van der Goot, van Noord and van Noord2018) and Nguyen and Grieve (Reference Nguyen and Grieve2020), have emphasised the user’s intention rather than focusing solely on the surface-level characteristics of misspelt words. They propose to distinguish between intentional and unintentional misspellings. For instance, Nguyen and Grieve (Reference Nguyen and Grieve2020) observed that intentional misspellings, such as lengthening a word, are often used to add emphasis to an opinionated statement. An example of this could be the use of the interjection wow in a context where the user wants to emphasise their surprise, as in wooooooooooow. Furthermore, the categorisation of misspellings can be more or less fine-grained; in this respect, van der Goot et al. (Reference van der Goot, van Noord and van Noord2018) have proposed as many as 14 different categories of misspellings, while Heigold et al. (Reference Heigold, Varanasi, Neumann and van Genabith2018) have proposed only 3.

It is thus important to bear in mind that the variability in the terminologies and approaches to this topic—along with the lack of a universal definition for this type of problem—represents one of the greatest challenges faced by NLP researchers. As for our survey, we found it particularly useful to list the types of misspellings for dataset generation (see Section 7.4).

4. How serious is the problem?

SC/TN tools are commonly employed as a pre-processing step in many industrial applications as a way to cope with misspellings, while the core of the system is designed to work with clean text. (Such an approach represents the simplest scenario within the so-called double-step methods that will be surveyed in Section 5.3.) A legitimate question that arises is the following: Can we simply rely on SC/TN tools and consider the problem solved?

As the reader might have wondered, the answer is no. According to Plank (Reference Plank2016), non-standard (or non-canonical) language is a complex matter: there is no commonly agreed definition of what constitutes a misspelling, nor of what makes a text be considered normalised. For instance, Plank (Reference Plank2016) notes that there is no single standard form for spelling variations such as labor in American English and labour in British English. This highlights the fact that the notion of a misspelling is, in some cases, inherently context-dependent, that is, what counts as an error in one variety (e.g., labour in American English) may be fully standard in another (e.g., British English), and that NLP systems need to account for such contextual dependencies on a task-by-task basis (more about context-dependent misspellings can be found in Mays et al. Reference Mays, Damerau and Mercer1991).

From an application-oriented perspective, the use of spelling correction and text normalisation tools is often beneficial, as it can improve downstream performance by removing orthographic noise safely in many contexts. However, from a linguistic research perspective, and for certain specific applications (Section 9), such tools may mask valuable information about variation, evolution or intentional deviations from standard orthography. For example, text normalisation may remove dialectal traits that could be essential for certain analyses. In literary contexts, for instance, preserving a character’s role might require translating a dialectal expression in the source language into a comparable dialectal expression in the target language. Finally, psycholinguistics studies suggest humans are capable of processing misspellings without significant effort (Andrews Reference Andrews1996; McCusker et al. Reference McCusker, Gough and Bias1981; Rayner et al. Reference Rayner, White, Johnson and Liversedge2006). By removing misspellings as a pre-processing step, we lose the opportunity to better comprehend how natural language is processed and how to improve automated NLP tools accordingly.

A second, legitimate question is Can we simply ignore the phenomenon? This section is devoted to answering this question. Throughout it, we offer a comprehensive review of past efforts devoted to quantifying the extent to which vanilla systems’ performance degrades when in the presence of misspellings. This performance decay is typically large, and is typically assessed with respect to artificial and natural misspellings (Baldwin et al. Reference Baldwin, Cook, Lui, MacKinlay and Wang2013; Belinkov and Bisk Reference Belinkov and Bisk2018).

Note that the work presented in this section focuses on measuring the impact of misspellings in methods that do not make any attempt to counter them. Systems specifically designed to be robust against misspellings will be described in Section 5.

4.1 The harm of synthetic misspellings

Synthetic misspellings are the most commonly employed type of misspelling in the related literature, likely due to the ease with which they can be artificially generated, making them convenient for testing specific approaches without the need for large datasets of naturally occurring errors.

In this section, we review related studies, dividing them into two groups: those conducted before the transformer era (Section 4.1.1) and those focusing on quantifying the impact of misspellings on BERT and other transformer-based models (Section 4.1.2).

4.1.1 Impact on pre-BERT models

The problem was partially dismissed by Agarwal et al. (Reference Agarwal, Godbole, Punjani and Roy2007), who tested traditional classifiers (SVM and Naive-Bayes) using bag-of-words representations, against misspellings generated using an automatic tool (dubbed SpellMess) which considers insertions, deletions, substitutions and QWERTY errors,Footnote 5 in two well-known datasets for text classification (Reuters-21578 and 20 Newsgroups). Their results show that even moderately high levels of noise (affecting up to 40 per cent of the words) did not affect classification accuracy as much as expected. The authors conjectured that this can be explained by the fact that many of the features affected by noise are rather uninformative, and that when classifying by topic, abundant patterns still remain in the rest of the training data, even at high levels of noise.

Quite some time later, Belinkov and Bisk (Reference Belinkov and Bisk2018) confronted various Character-based and BPE-basedFootnote 6 encoded neural translators with synthetic misspellings. Their results demonstrated that all machine translation models were significantly affected by the presence of synthetic misspellings.Footnote 7 This paper became influential and has served to raise awareness on the problem of misspellings.

Inspired by the latter, Naik et al. (Reference Naik, Ravichander, Sadeh, Rosé and Neubig2018) conducted robustness experiments on Natural Language Inference (NLI) models using different types of synthetic misspellings, such as swapping adjacent characters or inserting QWERTY errors. The authors designed a stress test to assess whether the qualitative results of NLI models are driven not only by strong pattern matching but also by genuine natural language understanding procedures. The paper goes on by demonstrating that the performance of NLI models, built on top of BiLSTMs and Word2Vec, declines when misspellings are inserted in the test set.

Heigold et al. (Reference Heigold, Varanasi, Neumann and van Genabith2018) carried out experiments considering different types of synthetic noise on the tasks of morphological tagging and machine translation and using different types of encodings, including word-based, Character-based, and BPE-based ones. In their experiments, different models were trained independently on different variants of the training set, including clean, the original set without misspellings; scramble, obtained by permuting the order of the characters with the exception of the first and last one in each word; swap@10, that randomly swaps 10 per cent of subsequent characters; and flip@10, that randomly replaces 10 per cent of the characters with another one. Every pair of (system, training set variant) was tested against similar variants generated out of the test set. The results show the performance of all tested models degrades noticeably when exposed to synthetic misspellings different from those on which the system was trained.

4.1.2 Impact on BERT and transformer-based models

BERT, the popular transformer model proposed by Devlin et al. (Reference Devlin, Chang, Lee and Toutanova2019), has garnered a great deal of attention due to its ability to deliver state-of-the-art performance across a wide range of NLP tasks. Given its success, several studies have focused on analysing the sensitivity of BERT-based models to misspellings. Yang and Gao (Reference Yang and Gao2019) tested Vanilla BERT (i.e., a raw instance of BERT that does not implement any specific method to counter misspellings) in both extrinsic (customer review and question answering) and intrinsic (semantic similarity) tasks. To this aim, the authors employed both word-level noise (i.e., noise involving the addition or removal of entire words from a sentence) as well as various types of misspellings, showing that misspellings are significantly more detrimental to BERT than word-level noise.

Kumar et al. (Reference Kumar, Makhija and Gupta2020) confronted BERT against QWERTY misspellings at various probabilities. The scope of this work was to quantify the extent to which the presence of misspellings harms the performance of a fine-tuned BERT in the tasks of sentiment analysis (on IMDb and SST-2 datasets) and textual similarity (STS-B dataset). Their results demonstrated that BERT is highly sensitive to this type of misspelling, even at low rates.

Moradi and Samwald (Reference Moradi and Samwald2021) carried out a systematic evaluation of BERT and other language models (RoBERTa, XLNet, ELMo) in different tasks (text classification, sentiment analysis, named-entities recognition, semantic similarity, and question answering), considering different types of synthetic misspellings. Their results confirm that the performance of all tested models degrades noticeably for all tasks and types of misspelling. For instance, RoBERTa experiences a significant decrease in performance, with a loss of 33 per cent accuracy in text classification and a 30 per cent decrease in accuracy in sentiment analysis.

Ravichander et al. (Reference Ravichander, Dalmia, Ryskina, Metze, Hovy and Black2021) conducted an evaluation assessment of the effect of misspellings on question-answering performance, considering various state-of-the-art language models (BiDAF, BiDAF-ELMo, BERT, and RoBERTa). The authors of this study focused on errors induced by specific input interfaces (such as translation, audio transcription, or keyboard) and devised ways for generating synthetic misspellings that represent these errors. The experiments conducted revealed a significant decrease in performance across all models for all types of noise, with

![]() $F_1$

drops ranging from 6.1 to 11.7, depending on the nature of the affected words. Additionally, their results indicated that the harm of synthetic misspellings is generally more pronounced than that caused by natural misspellings.

$F_1$

drops ranging from 6.1 to 11.7, depending on the nature of the affected words. Additionally, their results indicated that the harm of synthetic misspellings is generally more pronounced than that caused by natural misspellings.

Satheesh et al. (Reference Satheesh, Beckh, Klug, Allende-Cid, Houben and Hassan2025) created a robustness benchmark for Question Answering based on misspellings of different types. The authors used BERT and other open-source models (Electra, Gelectra, Roberta-XLM), reporting a significant degradation in performance in all cases.

Both Liu et al. (Reference Liu, Schwartz and Smith2019) and Röttger et al. (Reference Röttger, Vidgen, Nguyen, Waseem, Margetts and Pierrehumbert2021) evaluate model performance on difficult data distributions, including misspellings. Liu et al. (Reference Liu, Schwartz and Smith2019) introduce a method called inoculation by fine-tuning, which involves creating normal and challenging versions of both training and test sets. The model is initially trained on the normal set and tested on both versions. If the performance is high on the normal set but low on the challenging set, the model is then fine-tuned using the challenging training set. This approach helps to determine whether the issue lies with the model itself or the original training data. If the model’s performance improves with this fine-tuning, it suggests that data augmentation could be sufficient for the model to generalise better. The method was applied to NLI datasets (some proposed in Naik et al. (Reference Naik, Ravichander, Sadeh, Rosé and Neubig2018) and involved two models: the ESIM model of Chen et al. (2017b) and the decomposable attention model of Parikh et al. (Reference Parikh, Täckström, Das and Uszkoreit2016). The results revealed that all the tested models struggle with synthetic misspellings, even if fine-tuned.

Röttger et al. (Reference Röttger, Vidgen, Nguyen, Waseem, Margetts and Pierrehumbert2021) tested the effectiveness of previously trained hate speech detection models when in the presence of misspellings. Specifically, the models are evaluated on 29 functional classes, including categories such as hate expressed using denied positive statements and denouncements of hate that quote it. This approach allows for very detailed results on the model’s ability to detect different types of hate speech. Among the 29 classes, 5 are related to the presence of misspellings (called spelling variations in the paper). The models tested include BERT, Google’s Perspective, and TwoHat’s SiftNinja. The results revealed that all models struggle to handle misspellings, but the model Perspective fared significantly better than the others.

4.2 The harm of natural misspellings

Naturally occurring misspellings are invaluable resources for testing NLP applications in real-world settings (Baldwin et al. Reference Baldwin, Cook, Lui, MacKinlay and Wang2013; Belinkov and Bisk Reference Belinkov and Bisk2018). However, they are rarely employed in practice since collecting natural misspellings is anything but a simple task. With a lack of consensus on what precisely a misspelling is, some authors have considered as “natural misspellings” phenomena like the errors generated by second language learners (Náplava et al. Reference Náplava, Popel, Straka and Straková2021) or by OCR scans (Vinciarelli Reference Vinciarelli2005). Having said this, natural misspellings may include all the types of misspellings listed in Section 7.4.2, as long as they are user-generated.

In this section, we review works that try to characterise the presence of natural misspellings (Section 4.2.1) and other studies that test the resiliency of different models to natural misspellings (Section 4.2.2).

4.2.1 Where do natural misspellings tend to occur?

Previous studies related to the analysis of natural misspellings are often devoted to understanding which types of misspellings are more likely to occur in which domains (Baldwin et al. Reference Baldwin, Cook, Lui, MacKinlay and Wang2013; Plank Reference Plank2016).

Identifying where natural misspellings are most common is far from trivial, since the very notion of a domain is itself ambiguous. According to Plank (Reference Plank2016), real-world data emerge as complex interactions of many more dimensions (language, genre, register, age group, etc.) than what we can realistically anticipate in an experimental setting. While certain domains of information, such as user-generated content, are known to be particularly prone to generating misspellings, the interplay of these dimensions means that where a misspelling occurs is often a matter of overlapping factors rather than a single one.

Baldwin et al. (Reference Baldwin, Cook, Lui, MacKinlay and Wang2013) compared the rate of out-of-vocabulary terms (as a proxy of the number of misspellings) expected to be found in texts as a function of how curated these texts are. The results were arranged in an ordinal scale of increasing levels of curation: tweets, comments, forums, blogs, Wikipedia articles, and documents from the British National Corpus. In their study, the authors took into account some lexical units like the word length, the sentence length, and the rates of out-of-vocabulary terms, finding interesting direct correlations between the level of formality of the text and the average word and sentence length, with an anti-correlated rate of out-of-vocabulary terms. The same study analysed the perplexity of language models when processing different types of text. The results show that models trained and tested in similar domains (hence close to each other in terms of the degree of formality) tend to display lower perplexity. For example, a model trained on tweets (highly informal) shows a much lower perplexity when used to process blog forums (somewhat informal) than when used to process Wikipedia articles (highly formal).

4.2.2 Testing resilience against natural misspellings

Nguyen and Grieve (Reference Nguyen and Grieve2020) studied how robust different word embedding techniques (such as word2vec variants and FastText) are to deviations from conventional spelling forms (including misspellings, among others) typical of social-media content. Using two datasets from Reddit and Twitter, the authors found that even techniques that are not specifically designed to take into account spelling variations (like the word2vec’s skip-gram model) manage to capture them to some extent. Interestingly enough, the authors draw a connection between intentional spelling variations (like an elongated word “goooood”) and performance, suggesting that these variations typically arise in well-controlled situations, acting as a form of sentiment markers, and, for this reason, models are somehow able to make sense out of them. This is in contrast to unintentional misspellings, which are haphazardly distributed and tend thus to be harder to handle.

In addition to synthetic misspellings, Agarwal et al. (Reference Agarwal, Godbole, Punjani and Roy2007) carried out experiments on natural misspellings. To this aim, the authors used datasets from user-generated content, including logs from call centres, emails, and SMS. Their results suggest that real noise in user-generated content exhibits some patterns, attributable to the consistent usage of abbreviations and the repetition certain users make of specific errors. The results showed the models tested (SVM and Naive-Bayes) performed better than when confronted with synthetic misspellings (see Section 4.1).

In a similar vein, Ravichander et al. (Reference Ravichander, Dalmia, Ryskina, Metze, Hovy and Black2021) conducted experiments not only using synthetic misspellings (see Section 4.1) but also natural ones, in the context of question answering using the XQuAD dataset as a reference. The authors considered two types of natural misspellings: keyboard misspellings and automatic speech recognition (ASR) noise. For keyboard misspellings, natural misspellings were created by asking people to retype XQuAD questions without being able to correct their input when they made a mistake. For ASR, natural noise was created by reading and transcribing every question three times, by three different persons. The experiments showed a noticeable decrease in performance across all tested models (BiDAF, BiDAF-ELMo, BERT, and RoBERTa) for all types of noise. However, synthetic misspellings appeared, on average, to be slightly more problematic than natural ones. Among the types of natural noise, the one generated via ASR was found to be the most harmful. RoBERTa, the top-performing model of the lot, experienced a decay of 8 per cent terms in

![]() $F_1$

when confronted with such misspellings.

$F_1$

when confronted with such misspellings.

The study by Benamar et al. (Reference Benamar, Grouin, Bothua and Vilnat2022) provides a detailed evaluation of how state-of-the-art subword tokenisers handle misspelt words. Specifically, they investigated two French versions of BERT (FlauBERT and CamemBERT) against natural misspellings originating in three different domains (medical, legal, and emails). To test the tokenisation of misspellings, the authors randomly extracted 100 misspelt words from each corpus and paired them with their correct forms. The test was conducted by measuring the cosine similarity between tokens generated for the misspelt and clean terms. In all three domains, the average similarity was very low (19 per cent in the legal domain, 39 per cent in the medical domain, and 27 per cent in the email domain). The authors also observed that incorporating POS tagging information drastically helped to improve performance. For example, CamemBERT scored 92 per cent of the average cosine similarity in the email domain with the aid of POS tags.

4.3 The harm of hybrid misspellings

As recalled from Section 3.2, aside from the synthetic and natural misspellings, there is a third type of misspelling called hybrid that refers to real misspellings that have been artificially injected in different contexts for evaluation purposes (more on this in Section 7.4.3). To our knowledge, the only published record that employs hybrid misspellings to quantify the performance impact on systems that do not handle them is that of Belinkov and Bisk (Reference Belinkov and Bisk2018). In their study, words from error correction databases (such as Wikipedia edits and second language learner corrections) were injected into the IWSLT 2016 machine translation dataset. While hybrid misspellings had less of an impact on machine translation tasks compared to synthetic misspellings, Belinkov and Bisk (Reference Belinkov and Bisk2018) noted that hybrid and natural misspellings are more challenging to evaluate in a rigorous manner.

5. Methods

In this section, we offer a comprehensive overview of previous efforts devoted to counter misspellings. We organise existing methods according to the following categorisation:

-

• Data augmentation (Section 5.1): methods that enhance the training set with perturbed signals to develop resiliency to them. This group can be further divided into two sub-categories:

-

• Character-order-invariant representations (Section 5.2): methods devoted to counter one specific type of misspelling caused by variations in the natural order of the characters.

-

• Double step (Section 5.3): techniques that carry out a step of spelling correction (step 1) before solving the final task (step 2).

-

• Tuple methods (Section 5.4): methods that, in order to train a model, use a list of misspellings each annotated with the corrected surface form.

-

• Other methods (Section 5.5): relevant techniques that do not squarely belong to any of the above categories.

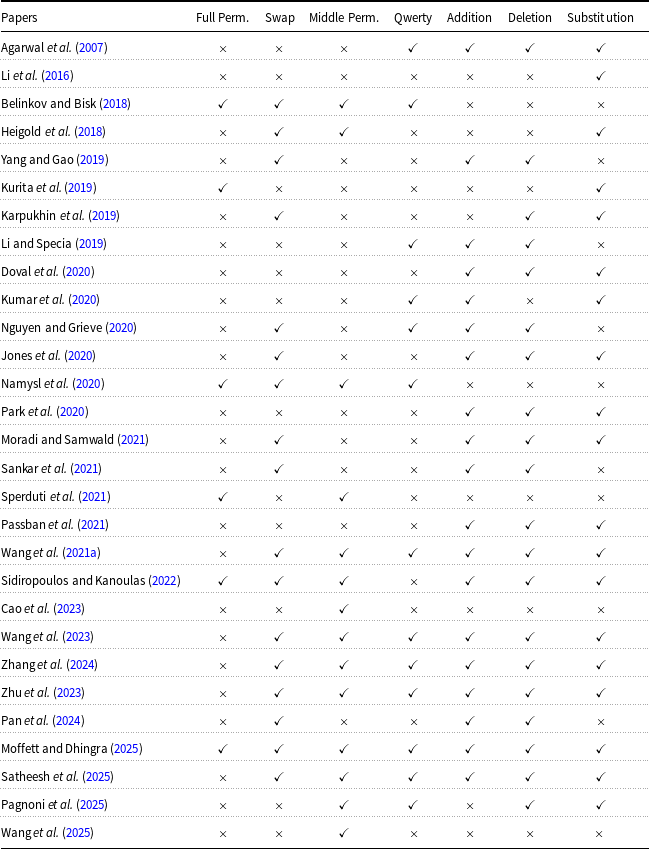

While, in principle, the methods are largely orthogonal to the tasks they have been applied to (more on this in Section 7.1), a few incidental patterns can be observed: for example, data augmentation methods have been more frequently tested in machine translation, whereas double-step methods have been applied across a wider variety of tasks. Methods specifically devoted to POS tagging are not homogeneous and are thus included in the “other methods” category. Table 1 provides a comprehensive overview of the associations between methodological principles, tasks, types of misspellings, models, datasets, and evaluation metrics, aggregating information from the papers discussed in this section, and is intended as a practical guide for the reader.

Reference guide for the methods discussed in Section 5, along with tasks and misspellings addressed, type of models, datasets, and metrics used in the evaluation

5.1 Data augmentation

One of the earliest attempts to address the problem of misspellings in NLP comes down to expanding the training set with misspelt instances, so that the model learns to deal with them during training.

Although data augmentation techniques typically lead to direct improvements, there are important limitations worth mentioning. Augmenting the training set entails an additional cost, sometimes derived from complex techniques that seek to uncover the weaknesses of the model. Yet another important limitation regards its circumscription to a limited frame time. The misspelling phenomenon is not stationary, since language is in constant evolution. While core spelling conventions in languages like English remain relatively stable over long periods, vocabulary changes, slang, dialectal variations, and even official spelling reforms in some languages introduce orthographic shifts that impact misspellings and their treatment in NLP (for a more detailed discussion of these diachronic aspects, see Sections 3.1.2 and 6.5). Additionally, data augmentation typically over-represents certain types of misspellings, thus injecting sampling selection bias into the model (i.e., the prevalence of the phenomena represented in the training set widely differs with respect to the prevalence expected for the test data as a result of a selection policy). Finally, misspellings consist of different character combinations, making it nearly impossible to achieve comprehensive coverage.

We first review a direct application of data augmentation strategies to the problem of misspellings (Section 5.1.1) and then move to describing methods that use a specific kind of generation procedure based on adversarial training (Section 5.1.2)

5.1.1 Generalised data augmentation

To the best of our knowledge, the first attempt to cope with misspellings by means of data augmentation is by Heigold et al. (Reference Heigold, Varanasi, Neumann and van Genabith2018). The methodology consists of analysing the type of misspellings that most harmed the performance of a machine translator, and inserting similar occurrences in the training set. In a similar vein, Belinkov and Bisk (Reference Belinkov and Bisk2018) injected misspellings of various types in a parallel corpus, including the full permutation, character swapping, middle permutation, and insertion of QWERTY errors. Information about how precisely these misspellings are individuated, and about other types of misspellings, is available in Section 7.4 devoted to datasets.

Data augmentation has been extensively applied to the problem of machine translation (Karpukhin et al. Reference Karpukhin, Levy, Eisenstein and Ghazvininejad2019; Li and Specia Reference Li and Specia2019; Passban et al. Reference Passban, Saladi and Liu2021; Vaibhav et al. Reference Vaibhav Singh and Neubig2019; Zheng et al. Reference Zheng, Liu, Ma, Zheng and Huang2019) as a means to confer resiliency to misspellings to the models (for the most part, Character-based neural approaches). For example, Vaibhav et al. (Reference Vaibhav Singh and Neubig2019) augment the training instances of French and English languages in the EP dataset (see Section 7.4) by using the MTNT dataset of Michel and Neubig (Reference Michel and Neubig2018) (see Section 7.4). Karpukhin et al. (Reference Karpukhin, Levy, Eisenstein and Ghazvininejad2019) experimented with four different types of misspellings, correspondingly generated by deleting, inserting, substituting, and swapping characters, that were applied to 40 per cent of the training instances for Czech, German, and French source languages. Some authors have investigated the idea of backtranslation (i.e., reversing the natural direction of the translation, thus translating from the target language to the source language) as a mechanism to generate additional data. The idea is to generate the source translation-equivalent in domains in which resources for the target language are more abundant. The final goal is thus to enhance the source data and to inject misspellings so that a machine translation model resilient to misspellings can be later trained (Li and Specia Reference Li and Specia2019; Zheng et al. Reference Zheng, Liu, Ma, Zheng and Huang2019). In particular, Zheng et al. (Reference Zheng, Liu, Ma, Zheng and Huang2019) applied this technique to social media content for English-to-French, based on the observation that training data for this social media rarely contain misspellings in the target side, or do so in very limited quantities. They used additional techniques to expand the training set, including the use of out-of-domain documents (they considered the domain of news) along with their automatic translations.

Similarly, Li and Specia (Reference Li and Specia2019) combined the idea of backtranslation with a method called Fuzzy Matches (Bulté and Tezcan Reference Bulté and Tezcan2019). Fuzzy Matches takes as input a parallel corpus and a monolingual dataset and, for each sentence in the monolingual dataset, searches for the most similar ones in the parallel corpus, and returns the translation equivalent (i.e., its parallel view) as a potential translation for the original sentence. This method was applied to a monolingual corpus containing misspellings either backwards (this happens when the monolingual corpus is from the target language) and forward (this happens when the monolingual corpus is from the source language), thus generating (clean) translation approximations of noisy data. They combined this heuristic with a method to generate a monolingual corpus based on generating automatic transcriptions from audio files (using the so-called Automatic Speech Recognition software), in the hope that these transcriptions would eventually contain misspellings.

A different approach for developing resiliency to misspellings is the so-called fine-tuning approach that, in the context of machine translation, comes down to using a pre-trained translator model (typically trained on clean data) and performing additional epochs of training using source instances with injected misspellings (Namysl et al. Reference Namysl, Behnke and Köhler2020). Passban et al. (Reference Passban, Saladi and Liu2021) experimented with a variant of this approach, called Target Augmented Fine-Tuning (TAFT), that consists of concatenating, at the end of the target sentence, the correct spelling of the misspelt term of the source sentence. The idea is to condition the model not only to produce the target sentence but also to discover the correct spelling of the affected source word.

Data augmentation has been applied to problems other than machine translation as well. For example, Namysl et al. (Reference Namysl, Behnke and Köhler2020) propose a mechanism for generating misspelt entries for the tasks of named entity recognition (NER) and neural sequence labelling (NSL) characters of the words in a sentence as follows. Given a word

![]() $w=(c_1, \ldots , c_n)$

consisting of

$w=(c_1, \ldots , c_n)$

consisting of

![]() $n$

characters, a pseudo-character

$n$

characters, a pseudo-character

![]() $\epsilon$

is inserted before every character and after the last one, thus obtaining a new token

$\epsilon$

is inserted before every character and after the last one, thus obtaining a new token

![]() $w = (\epsilon , c_1, \epsilon , c_2, \epsilon ,\ldots ,c_n, \epsilon )$

. For example, given the word spell, a token

$w = (\epsilon , c_1, \epsilon , c_2, \epsilon ,\ldots ,c_n, \epsilon )$

. For example, given the word spell, a token

![]() $\epsilon$

s

$\epsilon$

s

![]() $\epsilon$

p

$\epsilon$

p

![]() $\epsilon$

e

$\epsilon$

e

![]() $\epsilon$

l

$\epsilon$

l

![]() $\epsilon$

l

$\epsilon$

l

![]() $\epsilon$

is created. Subsequently, a few of these characters are randomly chosen and replaced with another character randomly drawn from a certain probability distribution (called the character confusion matrix) that also includes

$\epsilon$

is created. Subsequently, a few of these characters are randomly chosen and replaced with another character randomly drawn from a certain probability distribution (called the character confusion matrix) that also includes

![]() $\epsilon$

in its domain. For example, two possible derivations would be (note the underlined characters):

$\epsilon$

in its domain. For example, two possible derivations would be (note the underlined characters):

Finally, all the remaining pseudo-characters are removed. In our example, this would give rise to the words (i) smpell and (ii) spel, respectively.

5.1.2 Adversarial training

A different, related strategy for augmenting the data is by means of adversarial training. Adversarial training is a robustness-oriented learning paradigm in which a model is trained not only on the original (clean) data, but also on adversarially perturbed variants of it. These perturbations are deliberately crafted to exploit the model’s weaknesses and induce errors, with the goal of improving its resilience. In the context of misspellings, adversarial training typically involves injecting orthographic perturbations into the training data so that the model learns to maintain performance despite such input variations. There are two main types of adversarial training that have been applied to the problem of misspellings: the black-box setting and the white-box setting, which we discuss in what follows.

In the black-box setting, perturbations are created without direct access to the model, often by applying predefined transformation rules or by using surrogate models. Here, a general-purpose model is trained to develop robustness to adversarial samples.

Li et al. (Reference Li, Ji, Du, Li and Wang2019a) propose TextBugger, a method to generate misspellings by means of adversarial attacks. The method first searches for the most influential sentences (those for which the classifier returns the highest confidence scores) and then identifies the most important words in each such sentence (those that, if removed, would lead to a change in the classifier output). These words are altered by injecting misspellings either in training or in test documents.

In the White-box setting, perturbations are generated using knowledge of the model’s parameters or gradients, and the objective function is a perturbation-aware loss, that is, a loss that jointly optimises performance on clean inputs and on their adversarially perturbed counterparts. This contrasts with the traditional loss, which only accounts for clean inputs.

Zhou et al. (Reference Zhou, Zhang, Jin, Peng, Xiao and Cao2020) generate adversarial examples via a perturbation-aware loss following Goodfellow et al. (Reference Goodfellow, Shlens and Szegedy2015), that is, a perturbation to the input optimised for damaging the loss of the model. Their neural model was dubbed Robust Sequence Labelling (RoSeq) and was applied to the problem of named-entity recognition (NER). The idea is to optimise both for the original model’s loss and for the perturbation loss, simultaneously. Note that in this case, there is no explicit augmentation of training data, but rather an implicit regularisation in the loss function that carries out the adversarial training approach.

Cheng et al. (Reference Cheng, Jiang and Macherey2019) applied a similar idea but in the context of machine translation. The method is called Doubly Adversarial Input since, in this case, the perturbation is applied both to the source and to the target sentences. The most influential words in a sentence (hence, the candidates to perturb) are identified by searching for possible replacements that, if used in place of the original word, would yield the maximum (cosine) distance in the embedding space with respect to the original vector. The set of candidate words that are electable for this replacement is made of words that are likely to occur in place of the original one according to a language model trained for the source or target language, correspondingly. For the target sentences, this set is further expanded with words that the translator model itself considers likely. Later on, Park et al. (Reference Park, Sung, Lee and Kang2020) extended this idea to the concept of subwords and their segmentation (see also Kudo and Richardson Reference Kudo and Richardson2018).

5.2 Character-order-agnostic methods

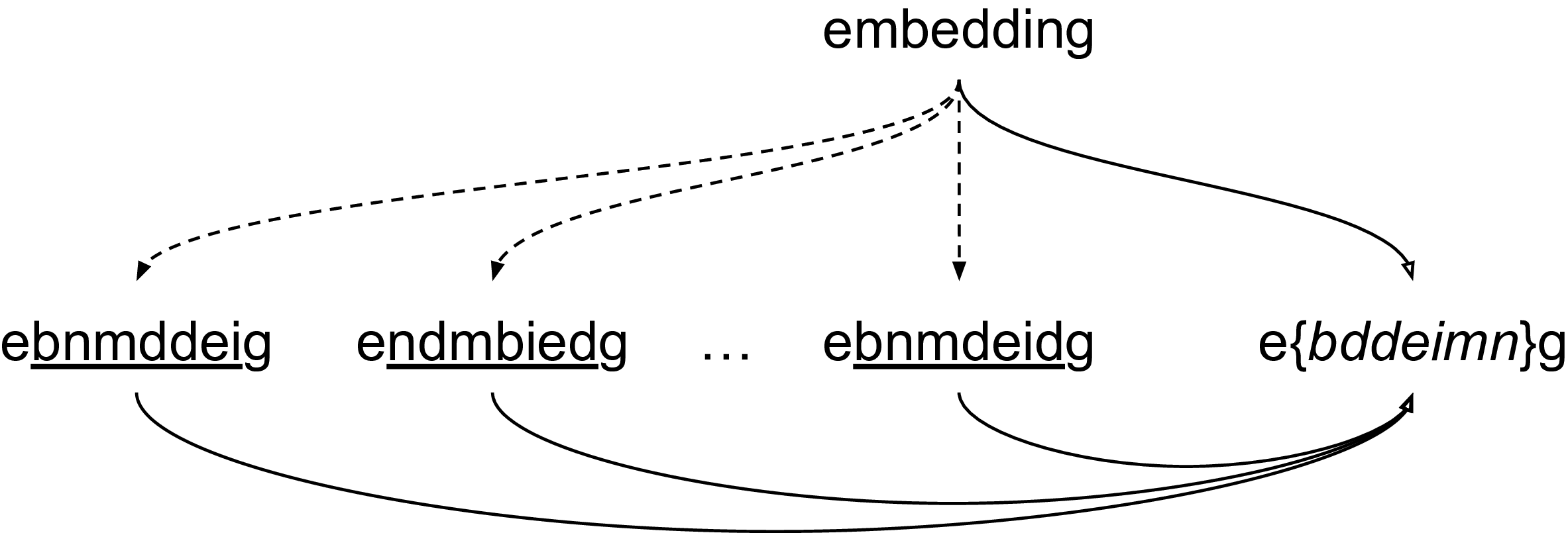

There is abundant evidence from the psycho-linguistics literature indicating that humans are able to read garbled text (i.e., text in which the character-order within words is rearranged, often called scrambled) without major difficulties, as long as the first and last letters remain in place, as in, The chatrecras in tihs sencetne hvae been regarraned. (see, e.g., Andrews Reference Andrews1996; McCusker et al. Reference McCusker, Gough and Bias1981; Rayner et al. Reference Rayner, White, Johnson and Liversedge2006). This is not true for computational language models relying on current representation mechanisms, though (Heigold et al. Reference Heigold, Varanasi, Neumann and van Genabith2018; Yang and Gao Reference Yang and Gao2019). Character-order-agnostic methods (Belinkov and Bisk Reference Belinkov and Bisk2018; Malykh et al. Reference Malykh, Logacheva and Khakhulin2018; Sakaguchi et al. Reference Sakaguchi, Duh, Post and Durme2017; Sperduti et al. Reference Sperduti, Moreo and Sebastiani2021) gain inspiration from these observations and propose different mechanisms that defy the need for representing the internal order of the characters; Figure 2 depicts this intuition.

Conceptualisation of an order-agnostic representation for garbled words. Dotted lines denote garbled variants of the original word, on the top. Solid lines denote an order-agnostic representation of a surface form word. If all characters (but the first and last) are represented as a set, then the representation of the original word and the garbled variants coincide.

The earliest published work we are aware of is by Sakaguchi et al. (Reference Sakaguchi, Duh, Post and Durme2017). Their model, called the Semi-Character Recurrent Neural Network (ScRNN), represents the first and last characters of a word as separate one-hot vectors, while the internal characters are encoded as a bag-of-characters, that is, a juxtaposition of one-hot vectors where character order is disregarded. The model was applied specifically to spelling correction, rather than to any particular downstream application. ScRNN was adopted as the first stage of a double-step method by Pruthi et al. (Reference Pruthi, Dhingra and Lipton2019) (covered in Section 5.3).

Later on, Belinkov and Bisk (Reference Belinkov and Bisk2018) proposed a representation mechanism, called meanChar, that was tested in machine translation contexts. In particular, the representation comes down to averaging the character embeddings of a word, and then using a word-level encoder, along the lines of the CharCNN proposed by Kim (Reference Kim2014).

Malykh et al. (Reference Malykh, Logacheva and Khakhulin2018) proposed Robust Word Vectors (RoVe), a method that generates three vector representations out of each word: the Begin (B), Middle (M), and End (E) vectors. These vectors correspond to the juxtaposition of the one-hot vectors of certain characters in a word. For example, given the word previous, B is the sum of the one-hot vectors of the first three characters (pre), E is the sum of the one-hot vectors of the last three characters (ous), while M sums the one-hot vectors of all characters in the word (and not only of the remaining central characters, as the name may suggest). The method showed promising results in three different languages, including Russian, English, and Turkish, and in three different tasks, including paraphrase detection, sentiment analysis, and identification of textual entailment.

Sperduti et al. (Reference Sperduti, Moreo and Sebastiani2021) proposed a pre-processing trick, called BE-sort, to tackle the problem. The method comes down to alphabetically sorting all middle characters of a word, excluding the first and the last character, so that the original word itself (e.g., embedding) as well as any potentially garbled variant of it (e.g., edbemindg, ebmeinddg, etc.) would end up being represented by the exact same surface token (e.g., ebddeimng). This pre-processing is not only applied to the words in the training corpus, but also to every future test word. Word embeddings learned by using Skip-gram with negative sampling on a BE-sorted variant of the British National Corpus were found to perform almost on par, across 17 standard intrinsic tasks, with respect to word embeddings learned on the original corpus, and much better than word embeddings learned on variants of this corpus in which words were garbled at different probabilities.

5.3 Double-step with text normalisation

As the name suggests, double-step methods tackle any task by performing two subsequent steps: first, a task-agnostic text normalisation step addresses and corrects any misspellings in the input text; second, the actual task of interest is performed, with the assumption that the input is now error-free. Since the first step removes misspellings from the source text, some authors have suggested that double-step methods represent the opposite of data-augmentation-based approaches. For example, Plank Reference Plank2016 analyses the problem by which models are trained on clean (canonical) data, but tested on potentially noisy data, and suggests that a key component for enabling resiliency to out-of-vocabulary terms and adaptation to language variation would come down to modelling variety (i.e., enlarging the training data) rather than simply cleaning the test.

In this survey, we cover spelling correction methods only when they are specifically aimed at improving the performance of a downstream task (i.e., when they serve as the “first step” in a double-step approach), and we refer readers interested in general-purpose correction methods to Bryant et al. (Reference Bryant, Yuan, Qorib, Cao, Ng and Briscoe2023); Hládek et al. (Reference Hládek, Staš and Pleva2020); Wang et al. (Reference Wang, Wang, Dang, Liu and Liu2021b). This in no way diminishes the importance of spelling correctors; indeed, in most application contexts, spelling correction directly improves final task performance and is often sufficient for many industrial solutions (Bhargava et al. Reference Bhargava, Spasojevic and Hu2017). However, our survey focuses on the implications of misspellings for the entire processing pipeline, that is, cases where removing misspellings might lead to the loss of potentially useful signals for the target task. Some examples of relevant applications are provided in Section 9.

There are two main strategies for implementing double-step methods. The first one, which we could call the independent approach, in which the error correction step is carried out independently from the second, task-specific step, which receives the cleaned input. The second one, which we call the end-to-end approach, instead considers the correction step and the task-specific step as dependent, and optimises both jointly. In most cases, the methods have used the first strategy; for example, Schulz et al. (Reference Schulz, Pauw, Clercq, Desmet, Hoste, Daelemans and Macken2016) proposed a new modular text correction method to serve as the first step in a double-step process. The correction method is structured into three internal layers: (i) a preprocessing layer, which performs text tokenisation; (ii) a suggestion layer, which generates several possible corrections; and (iii) a final decision layer, which selects the best correction. After this correction stage, the second step of the double-step process consists of training and testing POS tagging and NER models on the normalised text. The authors showed that their modular correction method improves robustness to misspellings in the downstream task.

Ljubesic et al. (Reference Ljubesic, Erjavec and Fiser2017) evaluated a standard tagger on non-standard Slovene text and observed a clear drop in accuracy. To address this, the authors incorporated lexical normalisation data, aligning non-standard word forms with their standardised counterparts, either through lexicon-based mappings or automatically generated normalisations. Adding this information to the tagger’s feature set improved its ability to handle spelling variants and colloquial forms, leading to notable gains in POS tagging accuracy.

Later on, Riordan et al. (Reference Riordan, Flor and Pugh2019) set up an experiment inserting Character-based representations into neural word-based content scoring models,Footnote 8 evaluating whether text correction alone or in combination with character-level modelling provides greater improvements on responses that include misspellings. While Character-based information appears to have minimal impact, spelling correction improves the models’ resilience to misspellings in the downstream task.

Pruthi et al. (Reference Pruthi, Dhingra and Lipton2019) adopted a variant of ScRNN (covered in Section 5.2) as the first step of a double-step strategy applied to the problem of sentiment analysis and part-of-speech tagging. The variant implements heuristics for handling the unknown tokens (typically denoted by UNK) that ScRNN produces whenever it encounters an out-of-vocabulary (OOV) word (i.e., words that were not considered during the training phase). In particular, three mechanisms are explored: (i) pass-through, in which the UNK token is replaced by the original OOV term; (ii) back-off to neutral, in which the UNK token is replaced by a word that has a neutral value for the classification task; and (iii) back-off to a background model, in which another, more generic (hence less suitable for the task), spelling corrector is invoked in place of ScRNN.

Kurita et al. (Reference Kurita, Belova and Anastasopoulos2019) propose the Contextual Denoising Autoencoder. The Autoencoder receives as input the incorrect version of a textual token (e.g., wrod, incorrect spelling of a word) and predicts its denoised version in the output (e.g., word) by leveraging contextual information. The base architecture that Kurita et al. (Reference Kurita, Belova and Anastasopoulos2019) employed is a transformer model. To embed words, Kurita et al. (Reference Kurita, Belova and Anastasopoulos2019) exploited the CNN encoder of ELMO. van der Goot et al. (Reference van der Goot, Ramponi, Caselli, Cafagna and Mattei2020) created a new lexical normalisation benchmark for the Italian language and showed how a normalisation step can slightly improve resiliency to misspellings in dependency parsing. Both Li et al. (Reference Li, Rei and Specia2021) and Passban et al. (Reference Passban, Saladi and Liu2021) propose methods for machine translation that take into account error correction in an end-to-end manner. Both approaches resort to an auxiliary task based on a double decoder for correcting the input. Given the noisy instance

![]() $x'$

, the decoder is trained to produce its translated version

$x'$

, the decoder is trained to produce its translated version

![]() $y$

, while the correction decoder is trained to regenerate

$y$

, while the correction decoder is trained to regenerate

![]() $x$

, the clean version of

$x$

, the clean version of

![]() $x'$

. The two decoders are jointly optimised by means of a weighted loss that takes into account the translation error and the reconstruction loss simultaneously.

$x'$

. The two decoders are jointly optimised by means of a weighted loss that takes into account the translation error and the reconstruction loss simultaneously.

5.4 The tuple-based methods

By tuple-based methods, we refer to a broad family of approaches in which the input data is represented as tuples that explicitly list relevant spelling variations. This term does not characterise a specific learning paradigm but rather describes a representational format for the data; as such, it places no constraint on the type of method used to learn from these data. Consequently, the methods grouped under this section are diverse in nature. The two most common formats for representing training data are: (i) pairs of the form

![]() $(x, x')$

, where

$(x, x')$

, where

![]() $x$

is a clean instance and

$x$

is a clean instance and

![]() $x'$

is a misspelt variant, and (ii) triplets of the form

$x'$

is a misspelt variant, and (ii) triplets of the form

![]() $(x, x', y)$

where

$(x, x', y)$

where

![]() $y$

is a task-dependent target (e.g., a translation of

$y$

is a task-dependent target (e.g., a translation of

![]() $x$

). Here,

$x$

). Here,

![]() $x$

and

$x$

and

![]() $x'$

can be words, sentences, or other lexical units.

$x'$

can be words, sentences, or other lexical units.

Alam and Anastasopoulos (Reference Alam and Anastasopoulos2020) used a tuple-based method to endow a transformer-based machine translator with resiliency to misspellings. To do so, they resorted to a dataset originally designed for grammatical error correction and consisting of tuples

![]() $(x, x')$

, with

$(x, x')$

, with

![]() $x'$

a misspelt version of the sentence

$x'$

a misspelt version of the sentence

![]() $x$

. The idea is to generate translations of

$x$

. The idea is to generate translations of

![]() $x$

to create new tuples

$x$

to create new tuples

![]() $(x', y)$

in which the misspelling-free translation

$(x', y)$

in which the misspelling-free translation

![]() $y$

is presented as the desired output for the misspelt input

$y$

is presented as the desired output for the misspelt input

![]() $x'$

; tuples thus created are then used to fine-tune a transformer model.

$x'$

; tuples thus created are then used to fine-tune a transformer model.

Zhou et al. (Reference Zhou, Zeng, Zhou, Anastasopoulos and Neubig2019) proposed a cascade model based on triples for machine translation. Given a triplet

![]() $(x, x', y)$

(in which

$(x, x', y)$

(in which

![]() $x$

,

$x$

,

![]() $x'$

, and

$x'$

, and

![]() $y$

are defined as before), the model combines two auto-encoders sequentially: the first one is a denoising auto-encoder that receives

$y$

are defined as before), the model combines two auto-encoders sequentially: the first one is a denoising auto-encoder that receives

![]() $x$

as the expected output for input

$x$

as the expected output for input

![]() $x'$

, while the second one is a translation decoder that receives

$x'$

, while the second one is a translation decoder that receives

![]() $y$

as the expected output for the encoded representations of

$y$

as the expected output for the encoded representations of

![]() $x$

and

$x$

and

![]() $x'$

.

$x'$

.

Edizel et al. (Reference Edizel, Piktus, Bojanowski, Ferreira, Grave and Silvestri2019) propose Misspelling Oblivious word Embeddings (MOE), a variant of FastText (Bojanowski et al. Reference Bojanowski, Grave, Joulin and Mikolov2017; Joulin et al. Reference Joulin, Grave, Bojanowski and Mikolov2017), which, in turn, is a variant of the CBOW architecture of word2vec (Mikolov et al. Reference Mikolov, Chen, Corrado and Dean2013) that endows the architecture with the ability to model subword information. The idea is to enhance the loss function of FastText with a component that favours the embeddings of subwords from misspelt terms to be close to the embedding of the correct term. To this aim, the authors created a dataset of word tuples

![]() $(x, x')$

by relying on a probabilistic error model that captures the probability of mistakenly typing a character

$(x, x')$

by relying on a probabilistic error model that captures the probability of mistakenly typing a character

![]() $c'$

when the intended character was

$c'$

when the intended character was

![]() $c$

by taking into account the entire word and its context. The probabilistic model was developed using an internal query log of Facebook.

$c$

by taking into account the entire word and its context. The probabilistic model was developed using an internal query log of Facebook.

Closely related, Doval et al. (Reference Doval, Vilares and Gómez-Rodríguez2020) propose a modification of the Skip-Gram with Negative Sampling (SGNS) architecture of word2vec (Mikolov et al. Reference Mikolov, Chen, Corrado and Dean2013) based on triplets of the form

![]() $(w, w', b_j)$

, where

$(w, w', b_j)$

, where

![]() $b_j$

is the

$b_j$

is the

![]() $j$

-th word in a set of bridge words, and

$j$

-th word in a set of bridge words, and

![]() $w'$

is a misspelt version of word

$w'$

is a misspelt version of word

![]() $w$

. The intuition behind bridge words is as follows. Consider the occurrence of the word friend in a document that also contains the misspelt form frèinnd in similar contexts; consider, for example, the sentence my friend is tall and my frèinnd is tall. The method first pre-processes the text by eliminating double letters and accents. In our example, frèinnd would thus become freind (note that two letters remain swapped). Then, two sets of bridge words are generated, each containing all the words that would result from eliminating one single character from friend and freind, respectively. In our example, this would lead to one set of bridge words for the clean word friend, that is,

$w$

. The intuition behind bridge words is as follows. Consider the occurrence of the word friend in a document that also contains the misspelt form frèinnd in similar contexts; consider, for example, the sentence my friend is tall and my frèinnd is tall. The method first pre-processes the text by eliminating double letters and accents. In our example, frèinnd would thus become freind (note that two letters remain swapped). Then, two sets of bridge words are generated, each containing all the words that would result from eliminating one single character from friend and freind, respectively. In our example, this would lead to one set of bridge words for the clean word friend, that is,

![]() $\{$

riend, fiend, frend, frind, fried, frien

$\{$

riend, fiend, frend, frind, fried, frien

![]() $\}$

, and another one for the misspelt word freind, that is,

$\}$

, and another one for the misspelt word freind, that is,

![]() $\{$

reind, feind, frind, freid, frein

$\{$

reind, feind, frind, freid, frein

![]() $\}$