6.1 Speech and the Temporal Coordination of Behavior

The main purpose of language is to exchange information in communication with others. In the case of spoken language, all acoustic information is conveyed through the spectrally and temporally fluctuating patterns of the sound signal (Martin, 1972; Rosen, 1992). These patterns evolve over time and, in dialogue, into an alternation of speaking and listening. In this rhythmic aspect, speech is ultimately a form of adaptive sensorimotor behavior that is shaped by our perpetual interaction with the environment. This implies that we need to be able to flexibly adjust behavior in response to rapidly changing circumstances, goals, and demands. The successful use of speech therefore not only requires situational balancing of goals and demands but also adequate use of limited neural and cognitive resources. This necessitates precise temporal coordination of dynamics as diverse as turn-taking, signal encoding and decoding, interfacing with short-term and long-term memory, allocating attention, and combining temporally distinct speech elements to optimize communication. Yet, how temporal coordination is achieved within and between speakers and listeners remains a challenging question, as perhaps best expressed in Lashley’s original reflection on the problem of serial order in behavior (Lashley, 1951).

Some acoustic signal dynamics have been shown to directly map onto neurophysiological signatures in speech processing. Compelling evidence has shown that neural oscillatory activity mirrors rhythmic features of the speech signal (Giraud et al., 2007; Ding et al., 2016; see Chapters 3 and 5). The links between the acoustic signal and brain rhythms imply that speech is, at least in part, both a reflection and a driver of neural signaling and of cognitive mechanisms such as attention allocation that themselves are not restricted to speech. However, it is still mostly unclear how acoustic rhythms interact with neural rhythms and with the dynamic allocation of memory and attention, or how they interact across domains, as indicated by visual enhancement effects (Schroeder and Lakatos, 2008; Schroeder et al., 2008). A better understanding of how these interactions shape and are themselves shaped by speech rhythms would therefore not only improve any explanation of human speech capacities but could also inform new perspectives on biological and cognitive aspects of ontogenetic and phylogenetic speech development. This extended approach might provide explanations of how successful temporal coordination emerges from the “composite of many interactive systems” that make up cerebral functions, thus delivering a crucial building block to answering the question of how the human brain factors time into the “problem of serial order in behavior” (Lashley, 1951, p. 135).

6.2 Linking Production and Perception Cycles to Temporal Processing

Considering the complex rhythmic structure of speech, one basic domain-general mechanism that might interleave with speech processing is temporal processing, that is, a mechanism underlying the encoding, decoding, and use of temporal information in motor and nonmotor behavior (Buhusi and Meck, 2005; Ivry and Schlerf, 2008). Other possible interactions between “systems” emerge from the need to rapidly activate and flexibly allocate attention and memory resources to particular speech events as they unfold in time (Large and Jones, 1999; Schroeder and Lakatos, 2008). Here, speaking and listening to speech share a common denominator in the encoding and decoding of temporal structure, the dynamic allocation of attention and memory, and the temporal coordination of these processes with the overarching goal to ensure stable speech processing and consequently successful communication.

Temporal processing has been attributed to a distributed brain network (Ivry and Schlerf, 2008; Merchant et al., 2013; Wiener, 2024). The main nodes of this network are the basal ganglia, the supplementary motor area (SMA) and prefrontal cortical areas, and the cerebellum, each of which seems to support specific aspects of temporal processing. Current views on these classically denoted motor areas extend to perception as well (e.g., Petacchi et al., 2005; Chen et al., 2008; Baumann et al., 2015), and thus questions emerged as to if, how, and why this potentially domain-general temporal processing network might interface with speech production and perception (Schirmer, 2004; Buhusi and Meck, 2005; Kotz and Schwartze, 2010).

Answering these questions relates back to the notion of speech communication as a form of adaptive behavior. The intricate interplay of production and perception in conversation can be subsumed but also further differentiated considering the organizational structure of the perception–action (PA) cycle framework (Fuster, 1995, 2004; Fuster and Bressler, 2015). The PA cycle is a neurofunctional implementation of the fundamental principle of biological adaptation, expressed in the notion that environmental events change the activity of neural receptors to generate adaptive motor behavior, which, in turn, generates environmental events that induce further changes and thus give rise to a self-contained periodic cycle or function circle (Uexküll, 1926, p. 126; Fuster and Bressler, 2015). The PA cycle framework suggests that the development of the human prefrontal cortex (PFC) during the course of evolution added unparalleled capacities for temporal coordination and the timely (predictive) activation of memory to the PA cycle (Fuster and Bressler, 2015). As the PFC coordinates motor and perceptual brain networks, it essentially exerts a “temporal syntactic function,” which Fuster operationally defined as the “bridging of temporally separate elements of a behavioral gestalt,” such as words forming an utterance (Fuster, 1995, p. 175). In interaction with other brain areas, this bridging function denotes the PFC as the highest node within executive and perceptual hierarchies that govern the temporal coordination of all goal-directed behavior (Fuster and Bressler, 2015).

Brain areas that connect to the PFC in support of this temporal syntactic function comprise neocortical areas such as primary sensory and association cortices but also the same subcortical areas that are implicated in temporal processing, namely the basal ganglia and the cerebellum, which might exert a modulatory influence on the PA cycle (Fuster, 1995; Fuster and Bressler, 2015). Nevertheless, the parallel enlargement of the cerebellum and the PFC throughout evolution (MacLeod et al., 2003; Habas, 2021) and the well-documented cerebellar connectivity with the thalamus and basal ganglia as well as with central executive, default mode, salience, attentional, and language networks exemplify a wider network that might allow for more specialized interactions such as that of temporal processing and the PA cycle suggested here (Ramnani, 2006; Krienen and Buckner, 2009; Strick et al., 2009). It is of note, though, that within the PA cycle, temporal processing is not conceived as processing of time but processing in time (Fuster, 1995). Currently, it is also computationally largely unspecified how the PFC performs its temporal syntactic function. Even so, the central notion of repeated and predictive activation that defines the PA cycle, potentially in a periodic fashion, points to the fundamental role of the corresponding neural rhythms in the temporal coordination of goal-directed behavior. In a similar vein, Lashley noted that temporal coordination of behavior may be achieved through “temporally spaced waves” that govern states of “facilitative excitation” (Lashley, 1951, p. 127).
One may speculate that at least in those instances in which behavioral rhythms directly map onto neural rhythms, as shown for speech, neural oscillatory activity and temporal processing mechanisms instantiate a “master clock signal” that facilitates temporal coordination. This would essentially mean combining processing in time with processing of time, thus adding temporal specificity to the PA cycle with the aim to tune the PFC temporal syntactic function for optimal predictive adaptation.

6.3 The Complexity of Predicting “When”

Being able to predict what elements occur in an utterance is a powerful way to guide speech communication. However, to optimally adapt to an inherently dynamic environment, the human brain might likewise aim to predict specifically when something occurs (Schwartze and Kotz, 2013). Neural oscillations across different frequency bands offer a solution for the coding of temporal information and the generation of temporal predictions, directing neural and cognitive resources to specific points in time. Whereas associations or feedforward and feedback networks are good at predicting what occurs, temporal coordination and temporal prediction are achieved most directly and in an energy-efficient manner by oscillations and by oscillation-based synchrony (Buzsaki and Draguhn, 2004).

Due to the rhythmic complexity of speech, it is often difficult to maintain any strict differentiation of processing in time and of time or of what as opposed to when predictions. According to Rosen (1992), the acoustic signal carries rhythmic features on at least three distinct levels: envelope (fluctuations in overall amplitude at rates between 2 and 50 Hz), periodicity (50–500 Hz), and fine structure (0.5–10 kHz), each of which is defined by characteristic acoustic, auditory, and perceptual manifestations and their specific roles in linguistic contrasts. Correspondingly, Lashley discussed a series of hierarchies of organization for speech, ranging from the order of vocal movements to the discourse level (Lashley, 1951). This complexity leaves it open as to whether the interaction of temporal processing with the PA cycle would manifest in one or multiple rhythms and which feature or organizational level(s) would carry it. Human multitasking abilities seem to imply parallel processing in several PA cycles (Fuster and Bressler, 2015). However, if the notion of a “master clock signal” that governs temporal coordination holds true, it suggests a special status of one rhythm and that neural oscillatory activity in the respective frequency band is used for specific temporal prediction. This raises the issue of potential interference effects and confusion between production and perception, as speakers are almost simultaneously listeners to their own speech and perceivers of their surroundings, including the environment and the interlocutor. Although different rhythms can in principle coexist in the same and in different brain areas, and can also interact with each other (Buzsaki and Draguhn, 2004), parallel processing of the same rhythm likely requires a more fine-grained differentiation of brain areas and connections implied in temporal processing and the PA cycle.
For example, it has been shown that the dentate nucleus, the main output relay of the cerebellum, divides into motor and cognitive subcompartments with distinct connectivity patterns to the SMA (Dum and Strick, 2003; Akkal et al., 2007). Engagement of the SMA in temporal processing can be differentiated along an anterior–posterior axis (Schwartze et al., 2012). This organization is maintained for connections from the anterior SMA to the PFC and from the posterior SMA to premotor areas, and connections from both to the basal ganglia (Kotz et al., 2013). Although the picture is far from complete in this regard, such a configuration might allow parallel processing of the same rhythm in neighboring areas in production and perception.
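As an illustrative aside, the three rhythmic levels that the text cites from Rosen (1992) can be written down as a small lookup, which makes explicit that the cited ranges meet at 50 Hz and at 500 Hz (0.5 kHz). This is only a sketch: the dictionary and function names are hypothetical, and treating the range boundaries as inclusive is an assumption made here, not a claim from the source.

```python
# Frequency ranges (Hz) as cited in the text from Rosen (1992).
# The names below are hypothetical, introduced only for this sketch.
ROSEN_LEVELS_HZ = {
    "envelope": (2.0, 50.0),
    "periodicity": (50.0, 500.0),
    "fine structure": (500.0, 10_000.0),
}


def rosen_levels(frequency_hz: float) -> list[str]:
    """Return every level whose cited range contains the given frequency.

    Assumption: boundaries are inclusive, so a boundary value such as
    50 Hz is reported as belonging to both adjacent levels.
    """
    return [
        name
        for name, (low, high) in ROSEN_LEVELS_HZ.items()
        if low <= frequency_hz <= high
    ]


if __name__ == "__main__":
    for f in (5.0, 50.0, 200.0, 2000.0):
        print(f, rosen_levels(f))
```

A syllabic-rate fluctuation of 5 Hz falls only in the envelope range, whereas a boundary value is returned for both neighboring levels, which mirrors the difficulty of keeping the levels strictly apart.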

6.4 Tracing a Fundamental Driving Rhythm

Lashley was not only positive that rudiments of every human behavioral mechanism can be traced down the evolutionary scale but also that they are represented in “primitive activities” (i.e., simple and evolutionarily ancient) of the nervous system (Lashley, 1951, p. 134). Several potential candidates have been put forward. However, following Lashley’s rationale, a way to identify a likely driving rhythm is to reduce the rhythmic and organizational complexity of the speech signal to a simpler and evolutionarily ancient level. The frame/content (f/c) theory of the origin of speech differentiates such a level, considering that speech is first and foremost a motor behavior that is expressed through serially organized movements while speaking (MacNeilage, 1998, 2008). Its central tenet is the mandibular cycle, that is, alternating opening and closing mouth movements, which constitute a general-purpose carrier or frame into which vowels and consonants are combined to form specific content (MacNeilage, 1998, 2008; see Chapter 2). Viewed at this level, continuous speech is carried by the biphasic cycles of a mandibular oscillation that can take simple contrastive forms such as baba but also all of the more complex forms found in speech (MacNeilage, 2008). Accordingly, opening the mouth to produce vowels and closing it to produce consonants is regarded as the most fundamental organizational level of serial order in speech (MacNeilage, 2008).

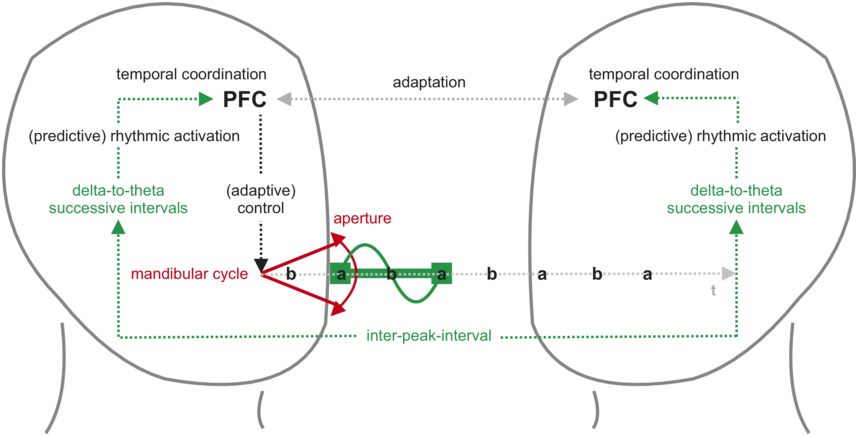

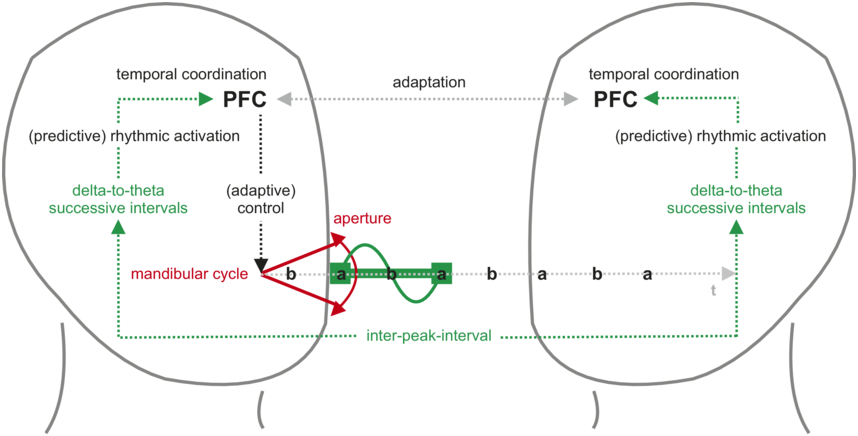

The corresponding articulatory gestures and the resultant syllables are associated with a fluctuation in sound energy that reaches peaks with maxima of oral aperture, giving rise to a syllabic rhythm (Greenberg, 2006). During conversation, this syllabic rhythm is conveyed to the listener but also as feedback to the speaker, designating it as an anchor for temporal coordination and the driver of predictive adaptation (Figure 6.1). Syllabic rhythm is typically realized at rates that correspond to delta and theta frequencies (ca. 2–8 Hz), that is, a relatively slow neural rhythm with a period in the hundreds-of-milliseconds range. The correspondence between syllabic rhythm and neural activity is most directly expressed in the concept of the theta syllable, conceived as a unit of speech information that is defined by cortical function (Ghitza, 2013).

Figure 6.1 Syllabic rhythm in the speech perception–action cycle.

Speakers (left) produce an utterance with a syllabic rhythm through opening and closing movements of the mouth (mandibular cycle) and associated fluctuations in sound energy. Maximized oral aperture during vowel production generates energy peaks that define the rhythm’s rate over successive inter-peak intervals. In speakers (through feedback, left) and listeners (right), this rhythm maps onto neural oscillations in the delta-to-theta frequency range (about 2–8 Hz). This direct mapping and/or the repeated use of interval-based temporal processing mechanisms allows for factoring “time” into behavior and into intrapersonal and interpersonal adaptation. Adaptation entails timely and predictive activation to optimally allocate neural and cognitive resources in production and perception. At the highest level of the underlying processing hierarchy, the PFC provides the temporal integration and coordination capacities that bridge the temporally separate elements of the utterance into one behavioral gestalt for monitoring, planning, and comprehension.

Figure 6.1 Long description

The delta-to-theta successive intervals represent the rhythmic brainwave activity. The arrow indicates that the PFC is receiving information about these intervals. This is the cyclical movement of the jaw. The diagram shows the cycle as a series of points: b, a, b, a, b, a, b, a, b, a, and so on. The flow of the mandibular cycle in each hemisphere is as follows: PFC leads to adaptive control, followed by delta-to-theta successive intervals, rhythmic activation, and temporal activation. A double-headed arrow between the PFCs of the left and right hemisphere is labeled adaptation, and another arrow is labeled inter-peak interval.

As an acoustically and neurally defined concept, the theta syllable rhythm does not draw on orthography, instead suggesting that information transmission in speech communication operates on syllabic “packets” (Ghitza and Greenberg, 2009). This has important implications for signal processing as it shifts the focus from abstract points in time such as word or sentence boundaries to the concrete physical markers of time that are instantiated by successive energy peaks, potentially defining one driving rhythm across speech production and perception on the one hand and speech-related temporal processing and temporal coordination mechanisms on the other (Schwartze and Kotz, 2013; Kotz and Schwartze, 2016).
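The relation between successive energy peaks, syllabic rate, and period described in this section can be made concrete with a few lines of arithmetic: a rate of 2–8 Hz corresponds to periods of 500–125 ms. The sketch below is illustrative only. The peak times are hypothetical values rather than measured data, all function names are invented for this example, and the next-peak extrapolation from the mean inter-peak interval is a deliberately simple assumption, not a mechanism proposed in the chapter.

```python
from statistics import mean

# Delta-to-theta range for syllabic rhythm as given in the text (ca. 2-8 Hz).
SYLLABIC_RANGE_HZ = (2.0, 8.0)


def inter_peak_intervals(peak_times_s):
    """Durations in seconds between successive energy peaks."""
    return [later - earlier for earlier, later in zip(peak_times_s, peak_times_s[1:])]


def syllabic_rate_hz(peak_times_s):
    """Mean rate in Hz implied by the inter-peak intervals."""
    intervals = inter_peak_intervals(peak_times_s)
    if not intervals:
        raise ValueError("at least two peak times are required")
    return 1.0 / mean(intervals)


def period_ms(rate_hz):
    """Period in milliseconds of a rhythm with the given rate in Hz."""
    return 1000.0 / rate_hz


def in_syllabic_range(rate_hz):
    """True if the rate lies within the 2-8 Hz range cited in the text."""
    low, high = SYLLABIC_RANGE_HZ
    return low <= rate_hz <= high


def predict_next_peak_s(peak_times_s):
    """Assumption: extrapolate the next peak by adding the mean interval."""
    return peak_times_s[-1] + mean(inter_peak_intervals(peak_times_s))


if __name__ == "__main__":
    peaks = [0.0, 0.25, 0.5, 0.75]  # hypothetical peak times in seconds
    rate = syllabic_rate_hz(peaks)
    print(rate, period_ms(rate), in_syllabic_range(rate))
    print(predict_next_peak_s(peaks))
```

With peaks spaced 250 ms apart, the implied rate is 4 Hz, which sits inside the cited delta-to-theta range, and the extrapolated next peak falls at 1.0 s.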

6.5 Towards Cycles of Interaction

If the same low-frequency driving rhythm underlies the interaction of production and perception, temporal processing, and temporal coordination, it would have a broad range of implications for adaptive behavior. However, identification of this rhythm and the corresponding frequency range also implies specific limits and a potential differentiation of the role of oscillation- and interval-based temporal processing mechanisms.

Speaking and listening ultimately involve parallel acts of synchronizing information flow between the encoding and decoding capacities of speakers and listeners (Greenberg, 2012). In a similar vein, the f/c theory emphasizes the key role of the sociocultural dyad from an evolutionary perspective: “Language must have been a sociocultural invention. The first word only became a word when a receiver and a sender came to treat a particular sound complex as standing for a particular concept” (MacNeilage, 2008, p. 44). Temporal processing mechanisms may also facilitate temporal coordination and synchronization at this interpersonal level, which adds the rhythm of interaction as another temporal level to the threefold rhythmic differentiation (Rosen, 1992) of the acoustic signal. However, whereas oscillations and oscillation-based synchrony provide the most efficient means to synchronize encoding and decoding capacities at faster rates, interval-based temporal processing might be most efficient at rates in the hundreds-of-milliseconds to seconds range and in guiding temporally predictive adaptation in the socially interactive PA cycles that are essential for the development of speech communication capacities.

Throughout the human lifespan, social interaction shapes and improves linguistic competence (Tomasello, 2000; Kuhl, 2007; Mundy and Jarrold, 2010; Dikker et al., 2017; Mundy, 2018) such as learning and proficiency in a nonnative language (Perani et al., 2003; Jeong et al., 2010, 2011; Consonni et al., 2013; Verga and Kotz, 2013, 2019; Kuhlen et al., 2017), while its absence can lead to linguistic deficits (Krashen, 1973; Fromkin et al., 1974; Curtiss, 1977). The reason is debated. However, several theories converge on defining a social partner as an attentional enhancer/modulator based on two interrelated characteristics of social contexts, namely that i) they are intrinsically multimodal (Gogate and Bahrick, 2001; Gogate et al., 2001), and ii) two partners mutually and dynamically join attention in a common ground (Hollich et al., 2000; Tomasello, 2000; Kuhl, 2007; Sage and Baldwin, 2010).
In this shared conversational ground, a speaker and a listener can become temporally aligned at multiple levels, as indicated by converging speech rates (Schultz et al., 2016), phonetic realizations (Mukherjee et al., 2019), body postures (Shockley et al., 2003, 2007), gaze (Richardson and Dale, 2005; Richardson et al., 2007), but also neural activity (Stephens et al., 2010). By contrast, reduced listener/speaker coupling is associated with difficulties in speech understanding (e.g., Liu et al., 2021).

In conversations, this mutual exchange may assume a rhythmic quality through the smooth transition of turns between a speaker and a listener, which has been suggested to rely on avoiding both long silences and long overlaps (Stivers et al., 2009; Garrod and Pickering, 2015). Rudimentary forms of turn-taking have been observed in interactive communication in many other species (Ghazanfar and Takahashi, 2014; Takahashi et al., 2016; Ravignani et al., 2019). In humans, it is assumed that interactive alignment provides the backbone for turn-taking: By allowing conversing partners to match their mental representations (Garrod and Pickering, 2004; Pickering and Garrod, 2004; Menenti et al., 2012), it facilitates the prediction not only of what is coming next (i.e., a response from the listener) but also, most importantly, of when the next conversational turn should start. This efficient cognitive strategy (Koban et al., 2019; Mukherjee et al., 2019) benefits from nonlinguistic convergence (e.g., shared gaze and attention; Richardson and Dale, 2005; Menenti et al., 2012). Yet, linguistic information deriving from the jaw movements subtending syllable production has been proposed to drive the mutual entrainment of endogenous oscillators in the speaker’s and listener’s brains (Wilson and Wilson, 2005; Kotz et al., 2018; see Figure 6.1).
Thus, even in dyadic conversations, syllables emerge as fundamental units of speech in relation to mouth and jaw movements in an action–perception cycle (MacNeilage, 2008). Accordingly, dyadic mutual alignment is expected to elicit fronto-central brain activity, reflecting an action–perception feedback loop (Pulvermüller, 2018). Indeed, neural activity peaks in the mouth motor region (Glanz et al., 2018) and ventral premotor cortex (Wilson et al., 2004; Gordon et al., 2014; Glanz et al., 2018). Interestingly, similar orofacial movements such as lip-smacking in nonhuman animals have gained attention as possible precursors of cyclic speech components in the theta range (MacNeilage, 1998; Ghazanfar et al., 2013; Kotz et al., 2018). Lip-smacking is an affiliative signal observed in many primate species and is characterized by the rhythmic (vertical) opening and closing of the jaws. During the evolution of the human lineage, lip-smacking may have coupled with vocal signals to produce babbling (i.e., consonant-vocal speech-like sounds; MacNeilage, 1998, 2008; Ghazanfar and Takahashi, 2014).

6.6 Conclusion

Speech communication generates acoustic and interpersonal rhythms that span multiple timescales. However, the mere fact that all speech communication is rhythmic by no means implies that the role of the respective temporal information for speech processing is trivial. Speech serves to communicate meaning, and the acoustic signal encodes the respective information over time. Time and temporal coordination are thus not only crucial factors for the “logical and orderly arrangement of thought and action” that is at the heart of the problem of serial order in behavior (Lashley, 1951) but also for the reconstruction of orderly thought and action from the acoustic signal. The perception–action cycle framework (Fuster, 1995) defines the basis for the arrangement and the perceptual bridging of speech events over time. However, to continuously optimize predictive adaptation to the environment, this cycle may interface with other mechanisms and with other sensory domains. To this end, oscillation- and interval-based temporal processing mechanisms may guide a temporally specific variant of the perception–action cycle to integrate what and when aspects of speech for successful communication.

Summary

Speech communication evokes rhythms that span multiple timescales. The acoustic signal evolves serially, necessitating adequate temporal coordination in production and perception. To optimize temporal coordination of neural and cognitive mechanisms, temporal processing mechanisms may exploit rhythmic speech features to guide a temporally specific variant of the perception–action cycle.

Implications

Rhythmic speech features indicate various neural and movement mechanisms. Rhythmic features in the delta-to-theta range might establish the common ground for interactions of evolutionarily ancient serial ordering, temporal processing, and higher-order temporal coordination mechanisms. This integrative perspective offers starting points for investigating the relation of speech and temporal processing capacities.

Gains

As a concrete form of linguistic performance, speech communication directly reflects fundamental neural and cognitive mechanisms, including rhythmic motor control, serial ordering, temporal coordination, and attention and memory allocation. A better understanding of how speech rhythm interfaces with temporal processing in perception and action can thus inform our understanding of key aspects of cognition.