It is illuminating to look back on the articles on conceptual choices (Collier and Adcock 1999) and measurement validity (Adcock and Collier 2001) that I coauthored with David Collier.¹ I reflect on these articles together since our work on the first fed into the second. My main theme will be how dualisms can hinder conversation across methodological communities. Specifically, I critically examine both the qualitative/quantitative and positivist/interpretive dualisms.

Let me first restate the shared core of our articles. We started from a commonplace observation: Political scientists routinely formulate their concepts in varying and even conflicting ways. Neither bemoaning nor celebrating this variety, we took it as a given and argued that it makes unpersuasive any generic assertions by scholars that their own conceptions are somehow inherently right or better. In framing conceptual choices as “real choices … from a range of alternatives,” we encouraged political scientists to set aside judging conceptual choices as right/wrong, true/false. Instead, we prescribed justifying their choices pragmatically in light of their specific “goals and context of research” and recognizing that other scholars with other goals and in other contexts sensibly make different choices (1999: 562). We wanted political scientists to distinguish, as we put it in our second article, between the often complex and contested “background concept” and the “systematized concept” they deploy in their own research and should self-consciously craft through pragmatic choices (2001: 532–33).

I find the observation and prescriptions here as compelling today as I did when we wrote. But what I do see as unhelpful was our use of the qualitative/quantitative dualism. This dualism was most prominent in our measurement validity article – subtitled “A Shared Standard for Qualitative and Quantitative Research” – and was also used in our prior talk of “qualitative and quantitative analysts” (1999: 537). Using this dualism was itself a conceptual choice, and I now see how, as I will explain, it obscured a key difference in the relation of each article to interpretive research. Yet I also find the positivist/interpretive dualism that many interpretivists deploy as an alternative to the quantitative/qualitative dualism to be equally obscuring. Hence, while my own substantive research on the history of political science (Adcock 2014) is interpretivist in methodology, my argument here is not to replace one dualism with another. I argue that recognition and conversation across methodological communities in political science is best served by leaving behind both dualisms and rethinking accordingly the pursuit of shared standards that Collier and I (2001) avowed.

Methodological Debate, New Institutions, and Dueling Dualisms

To revisit the qualitative/quantitative dualism, I will situate our articles relative to intellectual and institutional shifts in political science methodology. During the late 1990s, Gary King, Robert O. Keohane, and Sidney Verba’s Designing Social Inquiry: Scientific Inference in Qualitative Research (1994) was the focal point of debate. They contended that there is an “essential logic underlying all social scientific research,” best articulated in “discussions of quantitative research” but equally applicable and especially needed in “qualitative research” design (3). When Collier and I used qualitative/quantitative, we participated in an intellectual response to King, Keohane, and Verba that was subsequently summed up in the title of Henry Brady and Collier’s edited volume Rethinking Social Inquiry: Diverse Tools, Shared Standards (2004). This response replaced talk of one “essential logic” with talk of “shared standards” and favored methodological insights flowing from “qualitative” to “quantitative” as much as in the other direction.

But methodological practice is arguably shaped more by institutions than by the merit of specific positions. Between the 1960s and 1990s, generations of political science PhD students had attended the Inter-university Consortium for Political and Social Research summer training program in quantitative methods. A second key institution, since 1986, was the American Political Science Association’s (APSA) Political Methodology section, also dominated by statistical methods. To rebalance this landscape, scholars in multiple departments were planning two new institutions as we wrote: (1) the Consortium on Qualitative Research Methods (CQRM), which offered its first training institute in 2002; and (2) the Qualitative Methods section of the APSA, of which David Collier was the founding president in 2002–03.

Creating institutions to advance “qualitative methods” tied choices about the methodological positions and methods falling within the remit of “qualitative” to resource allocation decisions: What would be taught at CQRM, and represented in the section’s panels and so on? These decisions, alongside emerging doubts about the qualitative/quantitative dualism, would lead to the 2008 renaming of these institutions to reflect their welcome of multimethod work. CQRM became the Institute for Qualitative and Multi-Method Research (IQMR) and the section Qualitative and Multi-Methods Research (QMMR), as they are to this day.

But for me the most transformative conversation accompanying the new institutions concerned the relation of “qualitative” to “interpretive” research. In the section’s inaugural newsletter, its first two presidents situated “interpretive” research within “qualitative” methods (Bennett 2003: 1; Collier, Seawright, and Brady 2003: 6). From an institutional perspective, these statements supported interpretivist claims to share in the newly created resources. But from an intellectual perspective, they struck interpretive scholars as failing to acknowledge pivotal differences. In the next newsletter issue, Dvora Yanow (2003: 9–10) replied that interpretive methods have “philosophical presuppositions” fundamentally different from the “positivist philosophical presuppositions” that she saw “qualitative methods” in political science as increasingly sharing with “quantitative” research.²

The new section’s newsletter hence showcased dueling dualisms: the quantitative/qualitative dualism, which subsumed interpretivism within “qualitative,” versus an interpretive/positivist dualism, which grouped qualitative and quantitative methods together as “positivist.” At the time I questioned whether this second dualism served conversation across methodological communities (Adcock 2003: 16). Over time my initial skepticism has only been reinforced. But I have also come to consider the quantitative/qualitative dualism just as dubious if assessed by the goal of recognition and conversation across methodological communities.

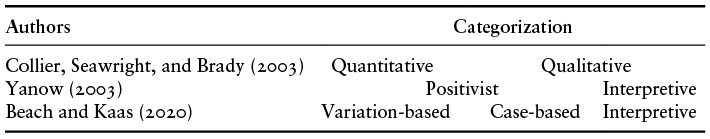

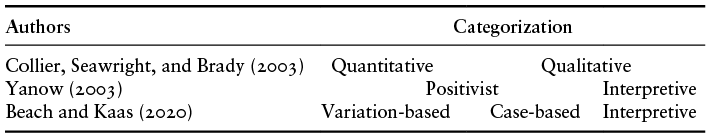

To discuss these dualisms without reproducing them requires a terminology beyond them. To this end, I will adopt Derek Beach and Jonas Gejl Kaas’ (2020) trichotomy of variation-based, case-based, and interpretive research. Their label “variation-based” highlights the variables at the heart of what has been called “quantitative” work while avoiding implications about the level of measurement of those variables. “Case-based” highlights the centrality of the number and selection of cases – whether in a process tracing single-case study, a most-similar paired comparison, or a fifteen-case qualitative comparative analysis study – while avoiding implying (as “qualitative” may) that case-based researchers avoid quantitative data. The third label recognizes the interpretivist claim to distinctiveness, even as the trichotomy rejects moves to lump other researchers together under the elusive label “positivist.” My adoption of this trichotomy is, of course, itself a conceptual choice, which Table 19.1 situates alongside the alternatives provided by the dualisms whose 2003 duel I have spotlighted.

Interpretivist Responses and the Perils of Dubious Dualisms

The trajectories of the dueling dualisms have diverged since 2003. Qualitative/quantitative has lost much of its formerly taken-for-granted dominance. While I highlighted the challenge from interpretivism, the divide between variation- and case-based research has been complicated by multimethod research, and variation-based research itself subdivided as the rise of experiments and their institutionalization in APSA’s Experimental Methods section elevated another dualism: experimental/observational.

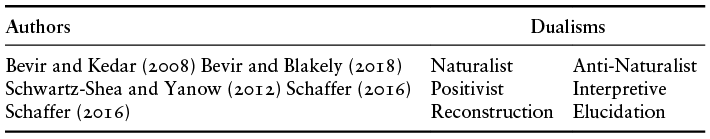

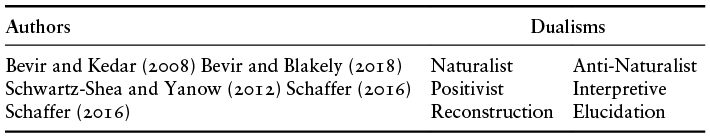

The positivist/interpretive dualism has, by contrast, secured its standing as foundational to the methodological identity of all too many interpretivists. In this section I reflect on responses from the expanding interpretivist community to my articles with Collier, engaging first the response of Mark Bevir and coauthors (Bevir and Kedar 2008; Bevir and Blakely 2018), who reformulate the positivist/interpretive dualism as a philosophical dualism of naturalism/anti-naturalism. Second, I engage Frederic Charles Schaffer’s (2016) response as he applies the positivist/interpretive dualism to conceptual work to distinguish “positivist reconstruction” from “interpretive elucidation.” Table 19.2 sums up the three dualisms of the interpretivist community that I critically discuss in this section.

Mark Bevir and Asaf Kedar’s (2008) “Concept Formation in Political Science: An Anti-Naturalist Critique of Qualitative Methodology” specifically critiques our conceptual choices article. They grant a potential opening to “interpretive political science” in our treatment of reification, attention to conceptual change, and recognition of the situatedness of scholars and how normative concerns inform conceptual choices (511–12). But they charge that our article “neglects the fact that objects of social inquiry have accounts of themselves” (512) and thereby forecloses this opening. It is a frustrating charge since we did explicitly, if briefly, allow that “the analyst’s treatment of democracy vis-à-vis non-democracy may need to reflect the viewpoint of the individuals who are being studied” (1999: 556).

Bevir and Kedar’s critique pushed me, however, to reflect upon our openness to interpretivism and thereby uncover a key difference between our two articles. If our conceptual choices article is more open to interpretivism than Bevir and Kedar credit, our later measurement validity article is not. I missed this initially because the qualitative/quantitative dualism masked the differing reach of our use of “qualitative” in each article: In the first it encompassed both case-based and interpretive research, but in the second it focused on case-based research alone. I am comfortable with the difference in the types of research engaged in each article, since interpretivist scholars emphasize the role of concepts in their research but do not tend to see themselves as engaged in measurement. Our articulation of measurement validity as a shared standard did not, in practice, extend beyond variation- and case-based research to interpretive research, and attempting such an extension would misrecognize interpretivist commitments. I do wish we had seen and explicitly stated this at the time. Realizing it retrospectively has shown me how the qualitative/quantitative dualism can undermine recognition and conversation across methodological communities.

Other moves in Bevir and Kedar (2008), reiterated in Bevir and Jason Blakely (2018: chap. 4), pushed me to clarify my thinking about “essentialism.” Both works distinguish a “strong essentialism,” holding that all social science concepts do/should have essential element/s (which Giovanni Sartori [1984] illustrates for them), from “weak essentialism,” holding that this fits only some and not all such concepts (which Collier illustrates for them). Whatever our article’s position is called, Bevir and his coauthors correctly see it as incompatible with their assertion that “the particularity and contingency” of social life (Bevir and Kedar 2008: 508) is so deeply pervasive that any “logic of commonality” must be avoided since the “features of social reality are never natural or essential types, with recurring nuclei of features” (Bevir and Blakely 2018: 75). Their assertion is a one-size-fits-all mandate dictating how social scientists should conceive of what they study. I would rather leave scholars to judge for themselves which of their concepts they might productively conceive of in terms of a “recurring nuclei of features” and which not.

I would suggest, moreover, that interpretivist scholars do, in practice, sometimes find it useful to conceive certain concepts in terms of a “nuclei of features.” Take, for example, Peregrine Schwartz-Shea’s crisp, clear statement that “interpretive social science consists of researchers’ interpretations of actors’ interpretations” (2020: 462). If it is essentialist to see interpreting actors’ interpretations as an “essential” baseline commonality of interpretivist work, then by Bevir and his coauthors’ definition, some interpretivists might in practice be “weak essentialists” too (I certainly am one).

Lurking behind this issue is a disagreement over philosophy’s relationship to methodology. Bevir and Kedar contrast judging methods “in pragmatic terms (i.e., in terms of their substantive utility for certain lines of inquiry)” with judging them “from a philosophical standpoint” (2008: 503). Where Collier and I saw examining examples of conceptual usage in political science as a sufficient basis to advocate our pragmatic approach, they consider our article “beset by problems that arise from a lack of systematic philosophical reflection” (514).

There are at least two reasons why I do not share the faith of Bevir and his coauthors “that our discipline can only benefit” (Bevir and Kedar 2008: 514) from philosophizing. First, their view of philosophy moves beyond self-reflection about one’s own assumptions to wielding assertions about the assumptions of others as a tool of critique. As much as I welcome philosophical self-clarification, I suspect that political scientists targeted by such philosophical critique are more likely to consider their methodological assumptions to have been mischaracterized than they are to be persuaded to change them. Second, I do not believe that philosophers agree to the extent Bevir and Kedar assert (2008: 504–06, 513–14), and even if they did, I do not see why we should defer to another discipline in judging how to undertake our own. Claiming that “social scientists … must catch up to philosophy” (Bevir and Blakely 2018: 15) strikes me as more paternalistic (cf. Schwartz-Shea 2020: 2) than persuasive.

My skepticism of overplaying the philosophy card was reinforced by Bevir and his coauthors’ naturalism/anti-naturalism dualism. Although their criticisms of some of our specific moves are clarifying, I failed to engage these criticisms initially owing to being alienated by their packaging of them under this sweeping philosophical dualism. Having been a chemistry undergraduate, and subsequently watched one college friend go on to a dissertation in theoretical nanophysics and another in geophysics field research, I do not think research aims and activities fit a single model even in one natural science discipline, let alone across them all. If there is no one natural science model, then dividing social scientists dualistically into those who accept and those who reject such a model strikes me as a grave philosophical misdirection. When Bevir and Kedar asserted that my article with Collier had “naturalist assumptions,” I felt misrecognized in a manner akin, perhaps, to how Yanow felt in reading interpretive work presented as a subpart of “qualitative” methods.

While the anti-naturalism/naturalism dualism is distinctive to Bevir and coauthors’ philosophical agenda, the interpretive/positivist dualism it reworks has become standard in interpretive methodologies and methods. It anchors Schwartz-Shea and Yanow’s (2012) Interpretive Research Design: Concepts and Processes, which inaugurated Routledge’s Series on Interpretive Methods, and is applied to conceptual work in Schaffer’s (2016) series contribution, Elucidating Social Science Concepts: An Interpretivist Guide. His book begins from a dualistic contrast of “positivist reconstruction” with “interpretive elucidation.” Since both my articles with Collier are treated as examples of “positivist reconstruction,” reflecting on Schaffer’s treatment illustrates how the positivist/interpretive dualism may also produce misrecognition.

After introducing his dualism, Schaffer (2016) argues that three problems “dog the positivist project of conceptual reconstruction” (12). I focus on “one-sidedness,” which involves “privileging those meanings of a concept that are important to the researcher while ignoring other meanings that are salient to situated actors themselves” (12). How one-sidedness “blinds the scholar to actors’ self-understandings” is ironically itself exemplified in Schaffer’s own presentation of “positivist reconstruction” (12). He begins with quotes from our measurement validity article and Gary Goertz and James Mahoney’s (2012) A Tale of Two Cultures. The quotes suggest these works share the view that concepts should represent “actually existing reality as accurately as possible” (Goertz 2006: 4). But Goertz (2006: 3–5) developed his “causal, ontological, and realist view of concepts” as an explicit “contrast” to the “semantic approach” of prior work on concepts. More generally, where Bevir and Kedar (2008) engaged, albeit contentiously, with differences between Collier’s work on concepts and Sartori’s, Schaffer elides these differences in presenting Sartori, Collier, and Goertz together one-sidedly, without acknowledging their self-understandings.

I do not object to Schaffer crafting a category that brings these scholars together. There is a clear line of conversation between them, and the category is potentially useful. My concern is that his choices in labeling and structuring his category undermine cross-methodological recognition and communication. His use of “reconstruction” is grounded in the conversation of scholars being categorized, and as such Schaffer’s preference for “reconstruction” over “concept formation” (2016: 13) persuades me. But adding “positivist” saddles his category with counterproductive baggage. It is ungrounded since none of the scholars self-identify in this way, and it implies a philosophical agreement that elides how much Goertz’s “causal, ontological, and realist view of concepts” differs from the pragmatist position at the core of my articles with Collier.

In terms of conceptual structure, Schaffer allows in a footnote that “individual scholars … may hold views that correspond only partially with, or perhaps differ from, the ones I have laid out” (2016: 24n2). But this leaves unaffected the lumping he carries out in the main text. Schaffer could have avoided this by structuring “reconstruction” as a prototypical concept with Sartori as the prototype. This would fit with the central role he gives Sartori as the figure relative to whom interpretivist criticisms are introduced. Or he could have introduced “reconstruction” through a narrative: starting from Sartori, following continuities and changes in Collier’s seminal 1990s works, and ending with the shift subsequently proposed by Goertz (2006). My takeaway is that a potentially productive conversation between reconstruction and elucidation as approaches to concepts is undermined by overlaying this distinction with the dubious positivist/interpretive dualism.

Conclusion: Rethinking Shared Standards

Earlier, I situated my 1999 and 2001 articles with Collier as participating in a response to King, Keohane, and Verba (1994) that replaced their talk of one “essential logic underlying all social scientific research” with talk of “shared standards.” To conclude, I rethink shared standards in the light of my theme of dubious dualisms.

I have argued for moving beyond the qualitative/quantitative dualism to recognize interpretive research as its own methodological community. Moreover, to sidestep the freighted terms of this dualism, I adopted a trichotomy of variation-based, case-based, and interpretive research. Given our pragmatic approach to concepts, it would be self-contradictory to justify this trichotomy as somehow representing reality as accurately as possible – it obviously simplifies the complexity of political science’s methodological archipelago, as any typology must. But I do think it better serves the goal of recognition and conversation across methodological communities than either of the dueling dualisms I have judged as dubious. One payoff of switching to a trichotomy is that, without adding much complexity, it enables us to rethink shared standards since a standard could be shared by two categories without the third. Hence, tweaking our measurement validity article’s subtitle to “a shared standard for variation- and case-based research” would clarify that “measurement validity” does not extend to interpretive research and better convey the kinds of examples and techniques we integrate.

Objections here might come not from interpretivists all too willing to lump variation-based and case-based research together as “positivist” but from scholars who stress the distinctiveness of case-based from variation-based research. In their influential 2006 article and subsequent 2012 book, Goertz and Mahoney argued that these are “two cultures” with “different norms, practices, and tool kits” (2012: vii).³ Derek Beach and Rasmus Brun Pedersen in turn refined this line of argument to distinguish among subtypes of Causal Case-Study Methods (2016).

Without taking a position for or against the two cultures argument (see Kuehn and Rohlfing 2022), I want to suggest that it can in practice reinforce rather than contradict measurement validity as a shared standard. Beach and Pedersen (2016: chaps. 1 and 2) argue that variation-based and case-based research each analyze different types of causal claims using different types of evidence, and this entails different ways to define concepts. But they also rely extensively on our measurement validity framework as they treat conceptualization and measurement (chaps. 4 and 5). I find this use of our work heartening, since most manuscripts I review drawing on our article are variation-based. That some of the most eloquent proponents of the distinctiveness of case-based research find our article’s formulation of measurement validity useful suggests that our aspiration to articulate a shared standard was not a fool’s errand.

My conclusion is that shared standards can complement rather than compete with recognition of methodological differences. As another example, I would highlight that Beach and his coauthors share with interpretivists a focus on clarifying epistemological and ontological assumptions and aligning choices of methodology and method with these assumptions. If this is another standard that in practice resonates in more than one methodological community, why not call it a “shared standard”? My rethinking suggests that doing so need not imply a further claim that this standard should be accepted also by variation-based researchers, just as we can call measurement validity a shared standard without making a claim on interpretivists.

In sum, the pursuit of shared standards need not be a search for a single set of standards for our very heterogeneous discipline. Rather, it can be a search for a plurality of standards each shared in the sense that researchers in more than one methodological community recognize it as applicable to themselves. Such standards can provide (or develop from) focal points of mutually illuminating conversation between methodological communities, and no community is, I believe, so distinct as to have no standard it shares with another. To seek out such shared standards is not to deny methodological differences but to pursue a network of bridges across those differences.