17.1 Introduction

Spoken language is a complex and intricately structured physical signal. Its rich variation stems from a myriad of sources, which are not easily decomposable into discrete variables. What is more, many of these sources of information are related, yielding correlations in the speech signal that are difficult to disentangle experimentally. For spoken language processing, however, this redundancy might actually prove to be valuable, because it enables the brain to leverage one feature to draw inferences about another feature, thereby facilitating comprehension. What seems like an annoying property from an experimental perspective might be an integral condition for perceptual inference.

To give a key example, the structures of syntax and prosody are related, so syntactic and prosodic variation are correlated in the speech signal (Cooper and Paccia-Cooper, 1980; Ferreira, 1993; Gee and Grosjean, 1983; Nespor and Vogel, 1986; Selkirk, 1978, 1984; Shattuck-Hufnagel and Turk, 1996).¹ This means that syntax and prosody probabilistically cue one another in comprehension (Cole, 2015; Cutler et al., 1997; Martin, 2016, 2020; Wagner and Watson, 2010). From the perceiver’s perspective, redundancy is quite useful, because it supports syntactic inference when the intended syntactic analysis of a spoken utterance is underdetermined. This scenario is essentially the norm for pre-linguistic infants, who strongly rely on prosodic information because their syntactic abilities are not yet sufficient to fully parse the utterances they hear. But the situation is also common in adults, who use prosody to disambiguate sentences that are structurally ambiguous. From the experimental perspective of a cognitive neuroscientist, however, this mixing of information sources is very challenging, because it makes it difficult to determine their unique contributions to the neural signal.
For instance, as modulations of syntax and prosody are temporally correlated, syntactic and prosodic accounts of empirical findings in the electrophysiological literature are largely compatible with the same set of results: Like syntactic effects, neural signatures of prosodic processing frequently show up in the delta band (0.5–4 Hz) (Boucher et al., 2019; Bourguignon et al., 2013; Ghitza, 2017; Glushko et al., 2022; Henke and Meyer, 2021; Inbar et al., 2023; Meyer et al., 2017; Rimmele et al., 2021). It is therefore often unclear to what extent seemingly syntactic effects are actually due to prosodic properties of the stimulus that are (often unavoidably) correlated with the syntactic manipulations, or vice versa.

As a case in point, a seminal study by Ding et al. (2016) showed that neural activity “tracks” the hierarchical structure of phrases and sentences in connected speech. This effect has been replicated with different manipulations of syntactic structure, in different languages, and in different experimental situations (e.g., Blanco-Elorrieta et al., 2020; Burroughs et al., 2021; Makov et al., 2017; Martin and Doumas, 2017), suggesting that the signal in question is indeed a neurophysiological marker of syntactic processing. On the other hand, several authors have instead argued that these neural tracking effects do not reflect syntactic structure. One type of account attributes the effect to the overt or imposed prosodic properties of the speech signal (e.g., Boucher et al., 2019; Glushko et al., 2022; Inbar et al., 2023). Such prosodic accounts of syntactic tracking effects are seemingly successful for two related reasons. First, they are descriptively accurate because syntax and prosody are temporally correlated, both exhibiting periodicities in the delta range. The electrophysiological effects they elicit during spoken language processing therefore have similar spectro-temporal properties, so syntactic effects are often amenable to a prosodic explanation.
A deeper, more explanatory reason for the success of prosodic accounts is architectural: Syntax and prosody are ontologically related (Cooper and Paccia-Cooper, 1980; Féry, 2016; Ferreira, 1993; Gee and Grosjean, 1983; Nespor and Vogel, 1986; Selkirk, 1978, 1984; Shattuck-Hufnagel and Turk, 1996). It is due to their interdependence that syntax and prosody are temporally correlated and largely compatible with the same electrophysiological results; temporal correlations are based on ontological relations. Insofar as prosodic accounts aim to explain syntactic tracking effects as being purely prosodic, they will therefore be architecturally incomplete, as prosodic information does not arise in speech autonomously.

Accordingly, we will show that prosodic accounts of neural tracking effects often explicitly or implicitly incorporate a role for syntax. But this does not completely invalidate those accounts. Quite the opposite, it is actually a good state of affairs, as the quest for sources of information that uniquely or exclusively explain neural effects might miss what we perceive to be the goal of the neurobiology of language, that is, to explain how language comprehension in the brain actually works. In naturally produced spoken language, neither prosody nor syntax is ever presented in isolation, so in order to understand how the brain makes sense of language, it should be explained how the interaction between different sources of (linguistic) information, and their functional interdependence, drives the neural signal. In this chapter, we present four types of arguments against prosodic accounts of syntactic tracking effects, and in favor of studying syntax and prosody together when investigating the neural foundations of speech tracking. The chapter is structured as follows: In Section 17.2, we introduce the central concept of neural tracking, and we explain which inferences are (not) licensed from such neural data (logical argument). Section 17.2.1 then discusses empirical studies that show that the brain tracks syntactic structure in connected speech. In Section 17.2.2, we review studies that have used similar experimental paradigms to investigate tracking of prosodic structure. We critically evaluate whether a prosodic account of these findings is empirically accurate (empirical argument). In Section 17.3, we discuss the tight relationship between syntax and prosody (ontological argument) from the perspectives of cognitive architecture (Section 17.3.1) and processing (Section 17.3.2). The final Section 17.4 provides a summary of our main arguments and recommendations for future work (strategic argument).

17.2 Neural Tracking

Neural tracking refers to (pseudo-)rhythmic modulations in the neural signal as a consequence of (pseudo-)rhythmic repetition of energy or information in the stimulus (Chapters 3 and 5). This process has been argued to result from endogenous oscillations synchronizing with or entraining to repeated properties of the input (“intrinsic synchronicity” in Meyer et al., 2020) and/or from the repetition of responses evoked by the input (Frank and Yang, 2018; Oganian et al., 2023). We use the term “tracking” descriptively, which means that we do not take a stance here on the neurobiological origin of this effect. Rather, we are concerned with the cognitive implications of observing tracking effects: If the brain represents variable (or type) X, the repeated presentation of token x at frequency f will lead to an increase in power in the frequency spectrum of the neural response at f, showing that the brain recognized x. In such a case, we say that the brain tracks X.

As such, empirical observations of neural tracking immediately present us with an inferential problem: If two relevant variables are temporally correlated in the input, their repeated presentation will elicit a neural response whose spectral properties align with the timescales of both variables. Logic dictates that without additional information, we cannot determine the representational source of this tracking effect. A crucial corollary is that neural tracking effects cannot be explained exclusively in terms of either one of these variables. More generally, as the mapping between neural signals and cognitive events is rarely (if ever) one to one (Mehler et al., 1984; Westlin et al., 2023), the interpretation of neural data is inherently uncertain. Yet, due to the use of ingenious experimental designs, it has been possible to show that electrophysiological brain activity tracks both syntactic and prosodic structure.
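The inferential problem can be made concrete with a toy simulation. The sketch below is purely illustrative and not an analysis from any of the cited studies: it assumes a hypothetical word-by-word "neural" signal that is generated from syntactic phrase boundaries alone, plus a prosodic boundary series that coincides with the syntactic one except for an occasional shifted boundary. All names and parameter values (`boundary_series`, `shift_every`, the noise level) are assumptions made for illustration.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation between two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def boundary_series(n_words=400, phrase_len=4, shift_every=None):
    """Return 1 at phrase-final words and 0 elsewhere.

    If shift_every is set, every shift_every-th boundary is moved one word
    earlier, mimicking an occasional syntax-prosody mismatch (hypothetical).
    """
    series = [0] * n_words
    for k in range(n_words // phrase_len):
        idx = k * phrase_len + phrase_len - 1
        if shift_every and (k + 1) % shift_every == 0:
            idx -= 1
        series[idx] = 1
    return series


syntax = boundary_series()                  # syntactic phrase boundaries
prosody = boundary_series(shift_every=10)   # mostly aligned prosodic boundaries

# Toy response driven by syntax only, with Gaussian noise (assumed level).
rng = random.Random(1)
neural = [s + rng.gauss(0, 0.5) for s in syntax]

r_syntax_prosody = pearson(syntax, prosody)
r_neural_syntax = pearson(neural, syntax)
r_neural_prosody = pearson(neural, prosody)
print(r_syntax_prosody, r_neural_syntax, r_neural_prosody)
```

Even though the simulated response depends on syntax only, it also correlates substantially with the prosodic series, simply because the two predictors are themselves correlated. Without additional information, such as a design that decorrelates the two variables, the response cannot be attributed to one source.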

17.2.1 Tracking Syntactic Structure

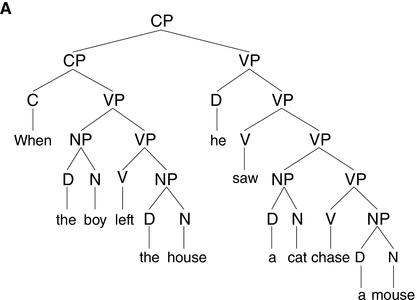

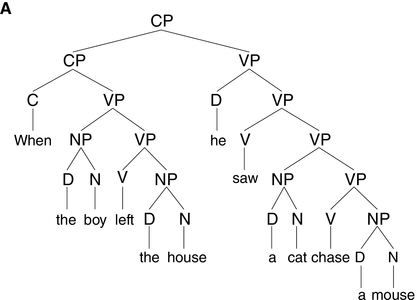

The syntactic structure of phrases and sentences is hierarchically organized (see Figure 17.1A). Speech, on the other hand, is essentially linear in its physicality, which means that syntax is not visible in the acoustic signal in the way lower-level linguistic information is. To understand spoken language, the brain must infer, or internally construct, syntactic structure using knowledge of grammar.

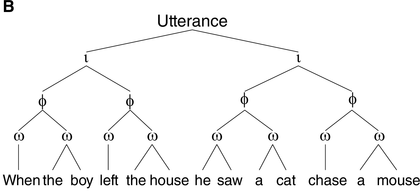

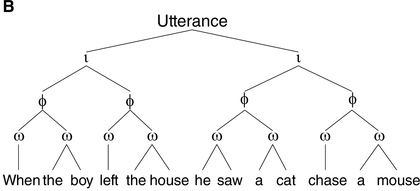

Figure 17.1 A syntactic hierarchy (A) and a prosodic hierarchy (B) for the sentence “when the boy left the house, he saw a cat chase a mouse.”

The prosodic structure is based on a subjectively natural pronunciation of the sentence. It uses the labels from Selkirk (2011), where ι = intonational phrase, φ = phonological phrase, and ω = prosodic word. As our aim is to illustrate differences in the general organization of prosodic and syntactic hierarchy (i.e., (a)symmetry of and (non)correspondence between prosodic and syntactic constituents), the syntactic structure in (A) is simplified. CP = complementizer phrase, VP = verb phrase, NP = noun phrase, and D = determiner.

Two main paradigms have been used to study neural tracking of syntactic structure. One paradigm relies on strict control of the presentation rate of linguistic structure, whereby this structure is frequency-tagged. The idea behind this approach to tracking is that when a unit of information is repeatedly presented at a specific frequency, the neural response to that type of information becomes synchronized with its presentation rate. In a magnetoencephalography (MEG) study by Ding et al. (2016), participants listened to connected speech sequences of monosyllabic words, which were isochronously presented at 4 Hz (i.e., each word lasted 250 ms). Within these sequences, two adjacent words could repeatedly be grouped into 500 ms phrases, and two adjacent phrases could repeatedly be grouped into 1,000 ms sentences, such as in [S [NP new plans] [VP gave hope]]. It was found that electrophysiological brain activity concurrently tracked the time courses of words, phrases, and sentences, showing peaks in the neural power spectrum at 4 Hz (words), 2 Hz (phrases), and 1 Hz (sentences). Because all words were synthesized independently and presented isochronously, the speech sequences contained no overt prosody. As a result, only the words were clearly defined by acoustic boundaries and therefore physically discernible in the speech signal. Phrases and sentences, instead, were dissociated from prosodic cues and thus had to be internally constructed using knowledge of grammar (Burroughs et al., 2021; Ding et al., 2016; Martin and Doumas, 2017).
Indeed, neural activity emerged only at those timescales that correspond to linguistic structures: When the sequences contained words that could be grouped into two-word phrases at most, such as [NP new plans] [NP dry fur], brain recordings revealed a tracking effect at the 2 Hz phrase rate only. No effect emerged at the 1 Hz sentence rate, presumably because there are no naturally sensible groupings present at 1 Hz. Moreover, neural tracking of syntactic structure does not appear during sleep (Makov et al., 2017), nor when the listener does not understand the language (Ding et al., 2016) or when the speech is embedded in noise (Blanco-Elorrieta et al., 2020), suggesting that syntactic tracking effects are linked to actual understanding (i.e., comprehension of the structures).
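The logic of frequency tagging can be sketched with a small simulation. The code below is a toy illustration under strong simplifying assumptions, not a reconstruction of the analysis pipeline of Ding et al. (2016): the "neural" response is modeled as a sum of unit-amplitude sinusoids at the rates of the structures the listener is assumed to build, plus Gaussian noise, and power is read off at a few frequencies of interest. The function names, sampling rate, duration, and noise level are all hypothetical choices.

```python
import math
import random


def power_at(signal, fs, freq):
    """Power of `signal` at `freq` (Hz), from a single-bin discrete Fourier sum."""
    n = len(signal)
    re = sum(x * math.cos(2 * math.pi * freq * i / fs) for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq * i / fs) for i, x in enumerate(signal))
    return (re * re + im * im) / (n * n)


def simulate(rates, fs=100, seconds=20, noise_sd=0.5, seed=0):
    """Toy response: one sinusoid per tracked rate (Hz) plus Gaussian noise."""
    rng = random.Random(seed)
    n = fs * seconds
    return [
        sum(math.sin(2 * math.pi * r * i / fs) for r in rates) + rng.gauss(0, noise_sd)
        for i in range(n)
    ]


# Four-word sentences: words (4 Hz), phrases (2 Hz), and sentences (1 Hz).
sentences = simulate([4, 2, 1])
# Two-word phrase lists: words (4 Hz) and phrases (2 Hz), no sentence-level grouping.
phrases = simulate([4, 2])

for label, sig in (("sentences", sentences), ("phrase lists", phrases)):
    spectrum = {f: round(power_at(sig, 100, f), 3) for f in (1, 2, 3, 4)}
    print(label, spectrum)
```

In this sketch, the sentence condition shows power at 1, 2, and 4 Hz, whereas the phrase-list condition shows power at 2 and 4 Hz only; the 3 Hz bin serves as a control frequency at which no structure is presented.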

Because overt prosodic cues are commonly removed from the speech input in frequency-tagging studies, these effects do not easily lend themselves to a prosodic explanation. In another relevant paradigm, the same question is addressed using naturalistic stimuli in which prosody is overtly present but matched across conditions. Kaufeld et al. (2020) investigated whether neural speech tracking is modulated by the syntactic and semantic content of the linguistic input. In an electroencephalography (EEG) study, they presented participants with naturally spoken stimuli in three conditions: regular sentences, pseudoword sentences, and unstructured word lists. Tracking was quantified through phase coupling between the speech envelope and neural activity. The presentation rate of phrases, which was derived from manual annotations of the stimuli, served as the frequency band of interest (within the delta band). At this phrasal timescale, speech-brain coupling was stronger for regular sentences than for both pseudoword sentences and word lists (see also Coopmans et al., 2022a). Importantly, this effect was replicated in more direct measures of syntactic tracking, that is, when coupling was computed between the EEG signal and abstract annotations that reflect syntactic structure but contain no acoustic information. These findings show that the brain tracks the timescale of syntactic phrases more strongly when this timescale contains meaningful information and is therefore relevant for language comprehension (Coopmans et al., 2022a; Kaufeld et al., 2020; Keitel et al., 2018; ten Oever et al., 2022).
Thus, like the tracking effects of frequency-tagging studies, these coupling-based tracking effects are linked to actual understanding of the structures.
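To illustrate what a phase-coupling measure captures, the sketch below computes one simple index, a phase-locking value across epochs, between a simulated speech envelope and a simulated neural signal. This is an assumption-laden toy example and not necessarily the coupling measure used in the studies cited above: the epoch count, sampling rate, 2 Hz frequency of interest, fixed lag, and noise level are all hypothetical.

```python
import cmath
import math
import random


def fourier_coef(x, fs, freq):
    """Complex Fourier coefficient of sequence x at freq (Hz)."""
    return sum(v * cmath.exp(-2j * math.pi * freq * i / fs) for i, v in enumerate(x))


def phase_locking(env_epochs, neural_epochs, fs, freq):
    """Length of the mean unit vector of envelope-brain phase differences (0 to 1)."""
    vectors = []
    for env, neu in zip(env_epochs, neural_epochs):
        dphi = cmath.phase(fourier_coef(neu, fs, freq)) - cmath.phase(fourier_coef(env, fs, freq))
        vectors.append(cmath.exp(1j * dphi))
    return abs(sum(vectors) / len(vectors))


def make_epochs(n_epochs=40, fs=50, seconds=2, freq=2.0, coupled=True, seed=0):
    """Simulate envelope and neural epochs with or without a consistent phase lag."""
    rng = random.Random(seed)
    n = fs * seconds
    envs, neurals = [], []
    for _ in range(n_epochs):
        phi = rng.uniform(0, 2 * math.pi)  # envelope phase varies across epochs
        # A coupled response follows the envelope at a fixed lag;
        # an uncoupled response has its own random phase.
        psi = phi - 0.8 if coupled else rng.uniform(0, 2 * math.pi)
        envs.append([1 + math.cos(2 * math.pi * freq * i / fs + phi) for i in range(n)])
        neurals.append(
            [math.cos(2 * math.pi * freq * i / fs + psi) + rng.gauss(0, 1) for i in range(n)]
        )
    return envs, neurals


plv_coupled = phase_locking(*make_epochs(coupled=True), fs=50, freq=2.0)
plv_uncoupled = phase_locking(*make_epochs(coupled=False), fs=50, freq=2.0)
print(plv_coupled, plv_uncoupled)
```

The index approaches 1 when the neural phase follows the envelope phase at a consistent lag and stays low when the two are unrelated. Condition differences of the kind described above would correspond to differences in such an index at the phrasal rate, with the acoustic envelope held comparable across conditions.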

Because these effects have been attributed to the tracking of syntactic structure (Blanco-Elorrieta et al., 2020; Burroughs et al., 2021; Coopmans et al., 2022a; Ding et al., 2016; Kaufeld et al., 2020; Martin and Doumas, 2017, 2019; ten Oever et al., 2022), we will conveniently refer to them as “syntactic tracking effects.” Note that this is not meant to imply that the effects are necessarily or exclusively reflective of syntactic structure. One should be skeptical of such claims of exclusivity. The brain tracks anything it recognizes, including transitional probabilities (Henin et al., 2021), rule-based chunks (Jin et al., 2020), and semantic properties of words (Frank and Yang, 2018). If these variables occur at the frequency of phrases (as they often do, even in natural language), their tracking effects will show up at the phrase rate. As already mentioned in Section 17.2, this logically means that no single one of these factors exclusively explains tracking effects. Nevertheless, the idea that syntactic tracking effects can be explained in terms of prosody is quite prominent, because syntax and prosody are temporally correlated. Due to their similar periodicity, they are largely compatible with the same electrophysiological results and are therefore experimentally and analytically difficult to tease apart. In this chapter, we evaluate, both empirically and conceptually, how well prosodic explanations of syntactic tracking effects fare.
In the next section (Section 17.2.2), we will discuss empirical studies linking electrophysiological signals to prosodic processing. To foreshadow our conclusion, none of these studies demonstrate purely prosodic effects. Rather, in each case we find modulatory effects of syntactic structure. This is expected given the strong relationship between syntax and prosody in natural language, which will be discussed in Section 17.3.

17.2.2 Tracking Prosodic Structure

The prosodic structure of spoken utterances is hierarchically organized. As illustrated in Figure 17.1B, an utterance contains one or more intonational phrases (denoted ι), which are composed of phonological phrases (φ, also called intermediate phrases), which are in turn composed of prosodic words (ω) (Beckman and Pierrehumbert, 1986; Nespor and Vogel, 1986; Selkirk, 1984, 2011; Shattuck-Hufnagel and Turk, 1996).² Prosodic phrasing is phonetically marked in various ways. The boundaries between ι-phrases, for instance, are cued by a silent pause, a change in pitch or fundamental frequency, and lengthening of segments preceding the boundary (Beckman and Pierrehumbert, 1986; Cooper and Paccia-Cooper, 1980; Ferreira, 1993; Nespor and Vogel, 1986; Price et al., 1991; Selkirk, 1984; Wightman et al., 1992). In addition to these phonetic markers of prosodic structure, people process prosody even if it is not overtly realized in the stimulus (e.g., in reading). This is known as implicit prosody, a subvocally activated prosodic representation that is projected onto the stimulus and can affect syntactic processing in much the same way as overt prosody does (Breen, 2014; Fodor, 1998, 2002).

Prosodic structure has rhythmic properties, with phrasal boundaries periodically occurring roughly once per second in naturally produced speech (Inbar et al., 2020; Stehwien and Meyer, 2022). These low-frequency prosodic regularities provide a possible source of information for the brain to exploit. Indeed, electrophysiological studies have shown that sentential prosody is tracked by slow neural activity in the delta range. While this overlaps quite strongly with the reported timescale of syntactic processing, we will show in our discussion of these results in the current section that none of them unequivocally shows that syntactic tracking effects can be explained in terms of prosodic processing.

In spoken language comprehension, the boundaries of multi-word chunks are accompanied by a slow event-related potential (ERP) component in the EEG signal. This so-called Closure Positive Shift (CPS) is elicited by ι-boundaries that are phonetically marked through a pitch change and pre-boundary lengthening (Steinhauer et al., 1999). Given the low-frequency rhythmicity of ι-phrases, it is expected that the neural response to repeated phrase closures has a low frequency as well. Indeed, a closure-related CPS is periodically elicited at regular two- to three-second intervals (Roll et al., 2012; Schremm et al., 2015), and CPS effects elicited by ι-boundaries contribute to delta-band speech tracking (Inbar et al., 2023). As these CPS effects are observed even when overt prosodic cues are absent, it has been suggested that there are endogenous, time-driven constraints on the grouping of words into multi-word chunks, and that these constraints operate at a frequency corresponding to the (lower) delta band (Chapter 18; Henke and Meyer, 2021; Meyer et al., 2017; Roll et al., 2012; Vetchinnikova et al., 2023).

In this context, it is relevant to mention a set of seemingly discrepant findings from the ERP literature. On the one hand, it has been shown in adult listeners that a CPS can be elicited by prosodically modulated stimulus sequences from which all syntactic cues are removed (e.g., hummed sentences, sentence melodies) (Gilbert et al., 2015; Pannekamp et al., 2005; Steinhauer and Friederici, 2001). This shows that prosodic structure alone is sufficient to elicit a CPS. On the other hand, the CPS in spoken sentence processing is modulated by whether contextually induced syntactic expectations do or do not support a prosodic boundary (Kerkhofs et al., 2007), even to the extent that a CPS can be elicited by syntactic phrase boundaries that are not marked by prosodic boundary cues (Itzhak et al., 2010). Moreover, infant studies show that prosodically induced CPS effects are dependent on syntactic development, as they are only observed in children who have acquired knowledge of phrase structure (Männel and Friederici, 2011). In contradiction to the earlier conclusion, these findings indicate that prosodic boundary cues are neither necessary nor sufficient to elicit a CPS. The apparent discrepancy can be reconciled by the idea that, due to the tight interplay between syntax and prosody, the presence of one type of information in the input automatically activates the other. If prosodic modulations trigger syntactic representations, and syntactic cues activate prosodic structure, prosodic phrasing can be initiated even when acoustic or syntactic markers of phrase closure are not explicitly present.
Either type of information is sufficient to elicit a closure-related CPS, provided that the listener is linguistically proficient: The interactive mapping between syntax and prosody requires understanding of speech structure based on syntactic knowledge (Männel and Friederici, 2011). To sum up this brief discussion of the ERP literature, even for a brain response that might initially seem to be a pure reflection of (implicit) prosodic processing, the role of syntax cannot be ignored.

A similar argument can be made about the results of a recent frequency-tagging study. Specifically, Glushko et al. (2022) claim that the syntactic tracking effects observed by Ding et al. (2016) predominantly reflect prosodic rather than syntactic processing. In Ding et al. (2016), the 2 Hz peak corresponded to the phrasal timescale and was therefore interpreted as reflecting syntactic tracking. The prosodic account put forward by Glushko et al. instead holds that the 2 Hz peak for sentences with a 2+2 syntactic structure, such as [NP new plans] [VP gave hope], reflects an implicit grouping of the sentence into “new plans / gave hope” (the / indicates a prosodic break), which contains two equally sized φ-phrases. This prosodic analysis is possible despite the absence of prosodic cues in isochronous speech because people are known to project implicit prosodic structure onto the input (e.g., following a same-size-sister constraint; see Section 17.3.1). In an EEG experiment, Glushko et al. (2022) used sentences with a 1+3 syntactic structure, such as [NP John] [VP likes big trees], which can be analyzed via a 2+2 prosodic grouping as well. In line with their hypothesis, these isochronously presented sentences elicited a 2 Hz peak in the neural power spectrum despite the absence of a major syntactic boundary in the middle of the sentence (i.e., between “likes” and “big”). This delta effect initially suggests that people prosodically analyzed these sentences in a way that is not suggested by their syntactic structure. However, it is not obvious whether the listeners’ implicit prosodic analysis is purely prosodic or whether it is also sensitive to the syntactic structure of the sentence.
Notice that the 2+2 prosodic grouping of a sentence with such a 1+3 structure retains some of its syntactic constituency. In “John likes big trees,” for instance, the 2+2 grouping yields “John likes / big trees.” While the elements to the left of the prosodic break (“John likes”) do not form a syntactic constituent, the elements to the right of the break (“big trees”) do, making this prosodic grouping syntactically not entirely unacceptable. In other words, the prosodic units are not identical to the syntactic constituents, but the prosodic boundary does correspond to a relevant syntactic boundary. It is easy to come up with sequentially similar sentences for which this is not the case, such as [NP the cute kid] [VP laughs]. Applying a 2+2 prosodic grouping to this 3+1 structure yields “the cute / kid laughs.” This grouping intuitively sounds much less natural, plausibly because neither of the two resulting prosodic phrases corresponds to a syntactic phrase. Thus, while it initially seems that implicit groupings are induced by mechanisms other than syntactic processing, they might still be sensitive to syntax, in the sense that where people place implicit prosodic boundaries is affected by the syntactic structure underlying the sentence.

Studies with naturally produced speech also show that prosodic modulations affect low-frequency neural activity. For instance, Bourguignon et al. (2013) found similar levels of speech-brain coupling in the delta band for listeners’ native speech, nonnative speech, and hummed speech. As these effects are independent of people’s ability to process the meaning and syntactic structure of the input, they were explained in terms of the similar prosodic rhythmicity of the different stimulus types. Relatedly, Boucher et al. (2019) reject the idea that delta activity tracks abstract, non-sensory information. Instead, they argue that delta oscillations underlie sensory chunking via entrainment to articulated sounds. In their EEG study, participants listened to sentences, meaningless syllable strings, and tone sequences, all of which were prosodically matched by having similar patterns of timing, pitch, and energy. Delta entrainment was considerably reduced for tone sequences compared to both types of speech stimuli, arguably because tone sequences do not contain articulated sounds. No difference was found between sentences and syllable strings, suggesting that the syntactic content of sentences did not affect delta entrainment. To explain these results, Boucher et al. (2019) argue that delta oscillations entrain to temporal groups marked by articulated sounds, which are equally present in spoken sentences and syllable strings. This account, however, cannot explain existing work that does show a relationship between delta-band activity and the high-level linguistic content of speech. First, both Kaufeld et al. (2020) and Coopmans et al. (2022a) find stronger speech-brain coupling at the delta rate for sentences than for prosodically matched stimuli that lack syntactic structure or meaning. Second, Keitel et al. (2018) reported stronger delta-band tracking for comprehended than for uncomprehended spoken sentences. And third, recent EEG studies by Meyer et al. show that delta phase and power reflect people’s syntactic parsing choices independent of delta entrainment to speech prosody (Henke and Meyer, 2021; Meyer et al., 2017). If delta tracking only reflects the sensory chunking of speech into temporal groups, it is unclear why it is stronger for regular sentences than for prosodically matched pseudoword sentences, why it correlates with behavioral measures of comprehension, and why it is predictive of people’s syntactic analysis of a sentence.

In sum, it has been shown that delta-band neural activity tracks overt and implicit prosody during speech comprehension. Our empirical evaluation reveals both that these prosodic tracking effects are modulated by syntax and that prosodic explanations either do not cover the full range of empirical results or implicitly incorporate a notion of syntactic structure. In the rest of this chapter, we will argue that this is expected, because syntax and prosody in natural language are strongly tied together (Section 17.3). For this reason, we should not aim to explain syntactic tracking effects as being prosodic in nature, but rather, face the challenging task of determining how prosody and syntax complement and constrain one another, both in the stimulus and in the neural signal. We end by discussing such an interactive approach to syntax and prosody in the brain (Section 17.4).

17.3 The Relationship between Syntactic and Prosodic Constituency

17.3.1 Structural Alignments and Discrepancies

Prosody is often characterized in terms of the boundaries marking the edges of prosodic constituents and the relative prominence (or accent) assigned to a designated element within these constituents (Cole, 2015; Shattuck-Hufnagel and Turk, 1996; Wagner and Watson, 2010). Both of these play an important role in language processing, but they signal different aspects of the meanings of sentences: While prosodic prominence tends to mark focus and discourse status, prosodic boundaries are commonly related to the boundaries of syntactic structure. Concerning the latter, Selkirk (1978) observed that a particular intonational contour is required for certain syntactic constituents, such as preposed adverbials (e.g., “In Pakistan // Tuesday is a holiday”), nonrestrictive relative clauses (e.g., “Tuesday // which is a weekday // is a holiday”), and parenthetical expressions (e.g., “Tuesday is // Jane said // a holiday”), each of which forms a separate ι-phrase (delineated by //). Here, the boundaries of prosodic constituents directly coincide with the boundaries marking major syntactic phrases. In most cases, however, intonational phrasing is not directly informed by syntax but rather based on prosodic structure, which is derived from syntactic constituency but not identical to it (Ferreira, 1993; Féry, 2016; Gee and Grosjean, 1983; Nespor and Vogel, 1986; Selkirk, 1984).

Recall that segments at the end of prosodic phrases are often lengthened and followed by a pause (Cooper and Paccia-Cooper, Reference Cooper and Paccia-Cooper1980; Ferreira, Reference Ferreira1993; Nespor and Vogel, Reference Nespor and Vogel1986; Price et al., Reference Price, Ostendorf, Shattuck‐Hufnagel and Fong1991; Wightman et al., Reference Wightman, Shattuck‐Hufnagel, Ostendorf and Price1992). Structurally ambiguous phrases, such as “old men and women,” can be disambiguated by these prosodic cues. That is, the word “men” and the subsequent pause are longer in “old men / and women,” where they occur at the end of a noun phrase, than in “old / men and women,” where they occur phrase-medially. As prosodic phrase boundaries tend to align with syntactic phrase boundaries, acoustic cues such as pre-boundary lengthening provide information about syntactic structure. However, these cues are not diagnostic, because pre-boundary lengthening can vary in the absence of syntactic differences. For instance, compared to longer subjects, shorter subjects are less likely to be accompanied by boundary-related phonetic cues in production (pre-boundary lengthening and subsequent pause; Gee and Grosjean, Reference Gee and Grosjean1983; Watson and Gibson, Reference Watson and Gibson2004) and they do not elicit a CPS in comprehension (Hwang and Steinhauer, Reference Hwang and Steinhauer2011). Rather than directly reflecting syntax, it appears that the magnitude of pre-boundary lengthening effects is related to the perceived strength of prosodic boundaries (Ferreira, Reference Ferreira1993; Price et al., Reference Price, Ostendorf, Shattuck‐Hufnagel and Fong1991; Wightman et al., Reference Wightman, Shattuck‐Hufnagel, Ostendorf and Price1992). Thus, syntactic structure affects phonetic variation indirectly, through intermediate prosodic structure.

It has repeatedly been observed that prosodic structure reflects syntactic structure in important ways, but is not isomorphic to it (Ferreira, Reference Ferreira1993; Féry, Reference Féry2016; Gee and Grosjean, Reference Gee and Grosjean1983; Nespor and Vogel, Reference Nespor and Vogel1986; Selkirk, Reference Selkirk1984). Several arguments have been presented for a prosodic structure representation that is distinct from syntactic structure (Selkirk, Reference Selkirk, Goldschmit, Riggle and Yu2011; Shattuck-Hufnagel and Turk, Reference Shattuck-Hufnagel and Turk1996). First, the two have different formal properties. Compared to syntactic hierarchies, which are deeply embedded and fundamentally asymmetrical, prosodic structure is symmetrical and rather flat (Gee and Grosjean, Reference Gee and Grosjean1983; Nespor and Vogel, Reference Nespor and Vogel1986; Selkirk, Reference Selkirk1978, Reference Selkirk1984). Second, prosodic constituents may systematically deviate from syntactic constituents. For instance, the sentences “John is eager to please” and “John is easy to please” are superficially similar and, when produced naturally, receive the same analysis in terms of prosodic constituency. However, their underlying syntactic structures are very different: “John” is the subject of “please” in the first sentence, but its object in the second sentence. Moreover, prosodically well-formed sequences may be syntactically unacceptable, as in the ungrammatical “John is easy to please Mary.” Note again that the prosodically similar “John is eager to please Mary” is fully acceptable, showing that syntactic deviance need not be reflected in prosodic deviance. Third, and conversely, there is considerable variability in the prosodic realization of one and the same syntactic structure. 
Non-syntactic factors such as speech rate, semantic coherence, discourse status, and independent phonological well-formedness constraints all affect prosodic structuring (Ferreira, Reference Ferreira1993; Frazier et al., Reference Frazier, Clifton and Carlson2004; Gee and Grosjean, Reference Gee and Grosjean1983; Nespor and Vogel, Reference Nespor and Vogel1986; Selkirk, Reference Selkirk1984, Reference Selkirk, Goldschmit, Riggle and Yu2011; Watson and Gibson, Reference Watson and Gibson2004).

One such non-syntactic factor that has received considerable attention is the same-size-sister constraint, which reflects speakers’ tendency to place boundaries at locations such that the prosodic phrases on both sides of the boundary are roughly the same weight and length (Cooper and Paccia-Cooper, Reference Cooper and Paccia-Cooper1980; Fodor, Reference Fodor1998; Gee and Grosjean, Reference Gee and Grosjean1983). This preference for balance results in symmetry in the prosodic hierarchy (see Figure 17.1B), contrasting with the asymmetrical structure of syntactic hierarchy (Figure 17.1A). A well-known case is presented by recursively embedded clauses, such as “this is the cat that chased the rat that ate the cheese.” The syntactic structure of this sentence is asymmetrical and deeply nested, as shown by the number of closing brackets at the end of [this [is [the cat [that chased [the rat [that ate [the cheese]]]]]]]. When produced naturally, however, the intonational bracketing is rather flat, roughly corresponding to “this is the cat // that chased the rat // that ate the cheese,” with all ι-phrases being approximately the same size, and with none of the ι-breaks corresponding to clause boundaries (Chomsky and Halle, Reference Chomsky and Halle1968). That prosodic boundaries might occur at places that are not major syntactic boundaries is also seen in Figure 17.1B, where the subject and the finite verb in the main clause form a prosodic constituent (the ω-word “he saw”) to the exclusion of the object (“a cat chase a mouse”), which is not consistent with the syntactic constituency analysis (Figure 17.1A).
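The contrast between deep syntactic nesting and flat, balanced prosodic phrasing in this example can be made concrete with a small Python sketch. It is only an illustration: the function names are hypothetical, and using word count as a proxy for the "size" of an ι-phrase is a simplifying assumption rather than a claim about how prosodic weight is actually measured.

```python
def max_bracket_depth(bracketed: str) -> int:
    """Return the deepest level of nesting in a square-bracketed string."""
    depth = 0
    deepest = 0
    for ch in bracketed:
        if ch == "[":
            depth += 1
            deepest = max(deepest, depth)
        elif ch == "]":
            depth -= 1
            if depth < 0:
                raise ValueError("closing bracket without a matching opener")
    if depth != 0:
        raise ValueError("unbalanced brackets")
    return deepest


def phrase_lengths(phrased: str, boundary: str = "//") -> list[int]:
    """Return the number of words in each phrase delimited by `boundary`."""
    return [len(phrase.split()) for phrase in phrased.split(boundary)]


# The bracketing and phrasing are copied from the example in the text.
syntactic_bracketing = (
    "[this [is [the cat [that chased [the rat [that ate [the cheese]]]]]]]"
)
prosodic_phrasing = "this is the cat // that chased the rat // that ate the cheese"

print(max_bracket_depth(syntactic_bracketing))  # 7 levels of embedding
print(phrase_lengths(prosodic_phrasing))        # [4, 4, 4]: three equal-sized phrases
```

The syntactic bracketing is seven levels deep, whereas the prosodic phrasing consists of three sister phrases of four words each, which is the balance that the same-size-sister constraint describes.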

To sum up, syntax and prosody are systematically related, but their structures are not isomorphic. According to Féry (Reference Féry2016), the relation of syntax to prosody is one of homomorphism. Homomorphic maps are structure-preserving, but in contrast to isomorphisms they need not be one to one, which means that the inverse relation is not necessarily structure-preserving. In other words, the structure of syntax is preserved in prosodic structure, but because of the smaller number of constituents in the prosodic structure, not all syntactic details are retained in the map (see Footnote 3). Syntactic structure therefore cannot uniquely be derived from prosodic patterns. This would explain why prosodic boundaries nearly always index syntactic boundaries, while many syntactic boundaries are not prosodically marked.

17.3.2 Syntax–Prosody Interactions in Language Processing

Given that the boundaries of prosodic structure tend to align with syntactic phrase boundaries, prosodic information can be used as an informative cue during syntactic processing, and conversely, knowledge of syntax can be used to make inferences about prosodic structure (for reviews, see Cutler et al., Reference Cutler, Dahan and Van Donselaar1997; Wagner and Watson, Reference Wagner and Watson2010). Concerning the former, behavioral studies with syntactically ambiguous sentences show that people use prosodic boundary cues to resolve temporary syntactic ambiguities in comprehension and production (Kjelgaard and Speer, Reference Kjelgaard and Speer1999; Marslen-Wilson et al., Reference Marslen-Wilson, Tyler, Warren, Grenier and Lee1992; Millotte et al., Reference Millotte, Wales and Christophe2007; Schafer et al., Reference Schafer, Speer, Warren and White2000; Speer et al., Reference Speer, Kjelgaard and Dobroth1996). For instance, in a constrained production experiment by Schafer et al. (Reference Schafer, Speer, Warren and White2000), participants had to produce sentences with early- versus late-closure ambiguities, as in the examples below. In the late-closure analysis of the subordinate clause, “the square” is the direct object of “moves” (as in 2), whereas it is the subject of the main clause when the subordinate clause is closed early (as in 1). Speakers consistently placed an ι-boundary at the subordinate clause boundary, whose location varied depending on the meaning the speakers wanted to convey.

1. When that triangle moves // the square will …

2. When that triangle moves the square // it …

In a subsequent comprehension experiment, it was found that listeners use the speakers’ prosodic phrasing to constrain their syntactic analysis (Schafer et al., Reference Schafer, Speer, Warren and White2000). Similar results are reported for globally ambiguous sentences: speakers and listeners typically avoid attaching two syntactic constituents that are separated by a prosodic break. In a sentence such as “when you learn gradually you worry more,” people use prosodic boundaries to disambiguate the intended reading (here, left versus right attachment of “gradually”), both in speaking and in listening (Kraljic and Brennan, Reference Kraljic and Brennan2005; Price et al., Reference Price, Ostendorf, Shattuck‐Hufnagel and Fong1991; Snedeker and Trueswell, Reference Snedeker and Trueswell2003).

Similar effects of prosodic phrasing on the interpretation of locally ambiguous sentences are reported in the ERP literature. These effects reflect not only the processing of the prosodic boundary itself (see Section 17.2.2) but also its downstream consequences for sentence interpretation. Consider the sentences in 3 and 4, which are identical up to the embedded verb (Bögels et al., Reference Bögels, Schriefers, Vonk, Chwilla and Kerkhofs2010). Depending on the argument structure of this verb, de soldaat “the soldier” is either the object in the embedded sentence (in 4, when the embedded verb is the transitive vermoorden “to kill”) or the direct object of the main verb beval “ordered” (in 3, when the embedded verb is the intransitive vuren “to fire”). If the sentence is presented without overt prosodic boundaries and is truncated before the embedded verb, people have a preference for the intransitive reading.

3. De commandant beval // de soldaat te vuren en …

The commander ordered // the soldier to fire and …

4. De commandant beval // de soldaat te vermoorden en …

The commander ordered // to kill the soldier and …

When these sentences are presented with the intonational phrasing indicated, the ι-boundary after the verb beval “ordered” elicits a CPS in the ERP signal, indexing the closure of a prosodic phrase. Moreover, because people are biased against integrating information across a prosodic boundary, the presence of this ι-boundary creates the expectation that the upcoming words will form a constituent. This means that de soldaat “the soldier” is initially not interpreted as belonging to beval “ordered,” but rather as the object of the upcoming verb, which is therefore expected to be transitive (as in 4). Indeed, the disambiguating intransitive verb in 3 elicited an increased N400, reflecting processing difficulty associated with a verb whose argument structure violates a prosody-induced syntactic expectation (Bögels et al., Reference Bögels, Schriefers, Vonk, Chwilla and Kerkhofs2010; Friederici et al., Reference Friederici, von Cramon and Kotz2007; Steinhauer et al., Reference Steinhauer, Alter and Friederici1999). Corroborating the behavioral data, these ERP results show that prosodic information can determine people’s syntactic analyses of locally ambiguous sentences by completely reversing their default parsing preferences (see also Henke and Meyer, Reference Henke and Meyer2021).

It is important to note that prosody can only disambiguate two interpretations of the same sentence if their constituent boundaries are located at different places (Nespor and Vogel, Reference Nespor and Vogel1986). An ambiguous sentence such as “flying planes can be dangerous” is difficult to disambiguate prosodically because the words that cause the ambiguity – “flying planes” – form a syntactic constituent in both meanings. Even for structurally ambiguous sentences, however, a local prosodic boundary is not an unambiguous cue to syntactic closure or attachment. Rather, what matters is the global prosodic representation, in which the informativeness of a prosodic boundary is evaluated relative to other prosodic and phonological cues, including the strength of other boundaries and the lengths of the constituents it flanks. That is, a φ-boundary is more decisive syntactically when it is the only prosodic cue than when it is preceded by a phonologically stronger ι-boundary (Carlson et al., Reference Carlson, Clifton and Frazier2001; Clifton et al., Reference Clifton, Carlson and Frazier2002). And prosodic boundaries are perceived to be more informative about the intended structure of a sentence when they flank short constituents than when they flank long constituents (Clifton et al., Reference Clifton, Carlson and Frazier2006). Other things being equal, the probability of a prosodic boundary increases when the constituents surrounding it are longer (Ferreira, Reference Ferreira1993; Frazier et al., Reference Frazier, Clifton and Carlson2004; Gee and Grosjean, Reference Gee and Grosjean1983; Hwang and Steinhauer, Reference Hwang and Steinhauer2011; Watson and Gibson, Reference Watson and Gibson2004). Thus, when the surrounding constituents are short, the prosodic boundary is not justified by constituent length, so listeners assume it to be driven by syntactic structure (Clifton et al., Reference Clifton, Carlson and Frazier2006). 
In accordance with the non-isomorphism between prosodic and syntactic structure, prosodic phrasing cues syntactic decomposition probabilistically, not diagnostically.

Concerning the effect of syntax on prosodic parsing, it has been shown that syntactic constituency guides the perception of prosody. Cole et al. (Reference Cole, Mo and Baek2010) asked untrained listeners to prosodically transcribe naturalistic speech and found that syntactic context made an independent contribution to the perception of prosodic boundaries. Similarly, in a prosodic boundary detection task with spoken sentences, Buxó-Lugo and Watson (Reference Buxó-Lugo and Watson2016) found that the probability of boundary marking was higher for syntactically licensed locations than for non-licensed locations, even when the acoustic properties of the boundaries were experimentally controlled (see also Fodor and Bever, Reference Fodor and Bever1965). These two studies show that prosodic boundaries are perceptually more salient when they coincide with the boundaries of syntactic constituents. This is in line with acquisition studies showing that children are sensitive to the acoustic correlates of syntactic structure. Very young infants prefer speech in which artificially inserted pauses coincide with syntactic phrase boundaries compared to speech in which the pauses are inserted in the middle of phrases (Hirsh-Pasek et al., Reference Hirsh-Pasek, Kemler Nelson and Jusczyk1987; Jusczyk et al., Reference Jusczyk, Hirsh-Pasek and Kemler Nelson1992; Kemler Nelson et al., Reference Kemler Nelson, Hirsh-Pasek, Jusczyk and Cassidy1989). What is more, infants use their sensitivity to the prosodic marking of syntactic units to recognize these units in natural speech. 
After being familiarized with phonological word sequences that were spoken both as a prosodically well-formed syntactic constituent and as a syntactic non-constituent (i.e., the prosodic structuring indexed syntactic constituency), infants prefer listening to passages in which the sequences constitute a constituent over passages in which the sequences cross a phrase boundary and form a non-constituent (Soderstrom et al., Reference Soderstrom, Seidl, Kemler Nelson and Jusczyk2003). Prosodic well-formedness thus facilitates the identification and recognition of syntactic units in continuous speech.

There might be a role for delta tracking in modulating this relationship between syntactic and prosodic grouping. Infants’ preference for coinciding syntactic and prosodic boundaries holds more strongly for child-directed speech than for adult-directed speech (Kemler Nelson et al., Reference Kemler Nelson, Hirsh-Pasek, Jusczyk and Cassidy1989). A possible reason is that child-directed speech is characterized by strong delta-band regularities (i.e., enhanced amplitude modulation and rhythmic regularity; Leong et al., Reference Leong, Kalashnikova, Burnham and Goswami2017), which support the perceptual inference of higher-level linguistic structure. Indeed, it has been observed in young infants that speech-brain coupling at the prosodic stress rate is higher for child-directed speech than for adult-directed speech (Menn et al., Reference Menn, Michel, Meyer, Hoehl and Männel2022). Given that prosodic boundaries often coincide with syntactic boundaries, the infant brain might be able to bootstrap its syntactic acquisition by neurally tracking the prosodic delta-band modulations that are prominent in child-directed speech (Jusczyk et al., Reference Jusczyk, Hirsh-Pasek and Kemler Nelson1992; Männel and Friederici, Reference Männel and Friederici2011; Soderstrom et al., Reference Soderstrom, Seidl, Kemler Nelson and Jusczyk2003).

17.4 Integrating Prosodic and Syntactic Accounts of Neural Tracking Effects

In the preceding sections, we have given several reasons why we should not attempt to explain syntactic tracking effects in prosodic terms (or vice versa). Our arguments are logical (i.e., due to the absence of one-to-one mappings, neural signals do not uniquely index cognitive events; see Section 17.2), empirical (i.e., the neural correlates of prosodic processing are modulated by syntax; see Section 17.2.2), and ontological (i.e., prosodic and syntactic constituency are ontologically related; see Section 17.3). In this last section, we will offer a fourth argument, which is more strategic. That is, because neither prosody nor syntax ever appears in isolation in natural language, it makes sense for neurobiological investigations of language to determine how the interaction between prosody and syntax, both in the stimulus and in the brain, facilitates processing. This aligns naturally with what we perceive to be the goal of the neurobiology of language, that is, to explain how the interplay between different sources of linguistic information ultimately yields comprehension via neurobiological mechanisms.

By presenting this strategic argument in favor of an integrative approach, we do not mean to say that a disjunctive approach, in which it is investigated whether and to what extent single cognitive factors modulate neural processing, should not be pursued. On the contrary, this approach is required to determine whether the brain cares about a given cognitive feature in the first place, so it naturally precedes the integrative approach. However, when its results are interpreted, it is important to keep in mind that in natural language processing, the brain never encounters that feature in isolation. It should therefore be acknowledged that single-component explanations are only part of the story, and that a conjunctive understanding is ultimately desired. Moreover, when researchers try to isolate the contribution of one factor (e.g., prosody, syntax) as part of the disjunctive approach, it is important that they take adequate caution in their experimental designs by establishing proper baselines for neural responses that are amenable to a high-level linguistic explanation. This is particularly important when one aims to dissociate the neural contributions of two factors that are highly correlated. It can be done by including control conditions that only differ from the experimental condition in the variable of interest (e.g., natural sentences versus prosodically matched pseudoword sentences; Coopmans et al., Reference Coopmans, de Hoop, Hagoort and Martin2022a; Kaufeld et al., Reference Kaufeld, Bosker and ten Oever2020), or by including prosodic control predictors in encoding models of language-related brain activity (Slaats et al., Reference Slaats, Weissbart, Schoffelen, Meyer and Martin2023). Properly accounting for prosodic variance will be helpful in detecting and understanding the remaining effects whose variance in neural dynamics is more directly attributable to syntactic processing (i.e., “true” syntactic tracking effects).
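The logic of including prosodic control predictors can be illustrated with a deliberately simplified Python sketch. Everything in it is an assumption made for illustration: the data are simulated, the predictors are single time series, the correlation of 0.7 and the effect sizes are arbitrary, and ordinary least squares stands in for the more elaborate encoding models used in the cited studies. The sketch compares a model containing only the prosodic control predictor with a model that also contains the syntactic predictor, and treats the gain in held-out explained variance as the part of the signal that is not already accounted for by prosody.

```python
import numpy as np


def add_intercept(X):
    """Prepend a column of ones so the model can fit an offset."""
    return np.column_stack([np.ones(len(X)), X])


def fit_ols(X, y):
    """Least-squares weights for y ~ intercept + X."""
    weights, *_ = np.linalg.lstsq(add_intercept(X), y, rcond=None)
    return weights


def r_squared(X, y, weights):
    """Proportion of variance in y explained by the fitted model."""
    residuals = y - add_intercept(X) @ weights
    return 1.0 - np.sum(residuals**2) / np.sum((y - y.mean()) ** 2)


def unique_gain(control, target, response, n_train):
    """Held-out R^2 of a control-only model, a control+target model, and their difference."""
    full = np.column_stack([control, target])
    train, test = slice(0, n_train), slice(n_train, None)
    r2_control = r_squared(
        control[test], response[test], fit_ols(control[train], response[train])
    )
    r2_full = r_squared(
        full[test], response[test], fit_ols(full[train], response[train])
    )
    return r2_control, r2_full, r2_full - r2_control


# Simulated (hypothetical) data: the syntactic predictor is correlated with the
# prosodic predictor, and the simulated neural signal depends on both.
rng = np.random.default_rng(0)
n_samples = 4000
prosody = rng.standard_normal(n_samples)
syntax = 0.7 * prosody + np.sqrt(1 - 0.7**2) * rng.standard_normal(n_samples)
neural = 1.0 * prosody + 0.5 * syntax + rng.standard_normal(n_samples)

r2_control, r2_full, gain = unique_gain(
    prosody[:, None], syntax[:, None], neural, n_train=3000
)
print(f"prosody only:     R^2 = {r2_control:.3f}")
print(f"prosody + syntax: R^2 = {r2_full:.3f}")
print(f"gain attributable to syntax beyond prosody: {gain:.3f}")
```

Because the two predictors are correlated, the prosody-only model already captures part of the variance that the syntactic predictor would explain on its own. Only the additional held-out variance explained by the full model can be attributed to syntax over and above prosody in this toy setting.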

In line with this disjunctive approach, many recent studies have tried to dissociate different linguistic factors in order to account for the linguistic properties underlying tracking effects. This approach has been very effective and successful, but such functional decompositions are not the end result when the ultimate aim is to explain how language is represented in the brain. While syntax and prosody comprise formally distinct systems (Beckman and Pierrehumbert, Reference Beckman and Pierrehumbert1986; Féry, Reference Féry2016; Ferreira, Reference Ferreira1993; Nespor and Vogel, Reference Nespor and Vogel1986; Selkirk, Reference Selkirk1978, Reference Selkirk1984, Reference Selkirk, Goldschmit, Riggle and Yu2011; Shattuck-Hufnagel and Turk, Reference Shattuck-Hufnagel and Turk1996), it is unlikely that the brain strictly distinguishes them during natural language comprehension, precisely because they are so strongly tied together. Thus, beyond trying to attribute tracking effects exclusively to one linguistic component, or looking at the remaining variance to be explained by that one component, we consider it critical to also think about ways in which the interaction between the components (linguistic or otherwise) drives the neural signal. 
When it comes to syntax and prosody, this type of interactive approach is common both in theoretical linguistics (e.g., Féry, Reference Féry2016; Nespor and Vogel, Reference Nespor and Vogel1986; Selkirk, Reference Selkirk1984, Reference Selkirk, Goldschmit, Riggle and Yu2011) and psycholinguistics (e.g., Bögels et al., Reference Bögels, Schriefers, Vonk, Chwilla and Kerkhofs2010; Buxó-Lugo and Watson, Reference Buxó-Lugo and Watson2016; Coopmans et al., Reference Coopmans, Struiksma, Coopmans and Chen2022b; Friederici et al., Reference Friederici, von Cramon and Kotz2007; Hwang and Steinhauer, Reference Hwang and Steinhauer2011; Itzhak et al., Reference Itzhak, Pauker, Drury, Baum and Steinhauer2010; Kerkhofs et al., Reference Kerkhofs, Vonk, Schriefers and Chwilla2007; Kjelgaard and Speer, Reference Kjelgaard and Speer1999; Steinhauer et al., Reference Steinhauer, Alter and Friederici1999), but the literature on neural speech tracking remains predominantly focused on single-component explanations.

With the advanced analysis techniques that have become available in recent years, it is now possible to study the neural basis of language comprehension in naturalistic contexts (e.g., audiobook listening), where the interaction between, and co-occurrence of, syntactic and prosodic information is natural and commonplace. In a recent MEG decoding study, Degano et al. (Reference Degano, Donhauser, Gwilliams, Merlo and Golestani2023) utilized this opportunity and found that the neural encoding of syntactic information in natural speech is boosted by prosody. This important result indicates that the alignment between syntax and prosody yields a syntactic representational gain in the neural signal (Degano et al., Reference Degano, Donhauser, Gwilliams, Merlo and Golestani2023), and more generally, it shows the promise of the interactive approach. The time is ripe for cognitive neuroscientists to investigate how the brain interprets and constructs spoken language by (de)composing the mutually constraining rhythms of syntactic and prosodic structures. But while doing so, it is important to keep in mind that neural tracking of a given variable, be it physical or linguistic, may only be the tip of the iceberg in terms of the infrastructure for language comprehension in the brain.

17.5 Funding Information

Andrea E. Martin was supported by an Independent Max Planck Research Group and a Lise Meitner Research Group “Language and Computation in Neural Systems,” by NWO Vidi grant 016.Vidi.188.029 to AEM, and by Big Question 5 (to Prof. dr. Roshan Cools and Dr. Andrea E. Martin) of the Language in Interaction Consortium funded by NWO Gravitation Grant 024.001.006 to Prof. dr. Peter Hagoort. Cas W. Coopmans was supported by NWO Vidi grant 016.Vidi.188.029 to AEM.

17.6 Acknowledgements

We are grateful to Hatice Zora and two anonymous reviewers for valuable comments on an earlier draft of this chapter.

Summary

Empirical results in the literature on neural speech tracking are commonly attributed to either syntax or prosody. Here, we present four types of arguments against attempts to explain putatively syntactic tracking effects in prosodic terms (or vice versa). The arguments are based on logic, empirical observations, ontology, and strategy.

Implications

Because syntactic and prosodic structure are strongly related in speech and language, future research should be less focused on disjunctive explanations of neural tracking effects. Instead, researchers should attempt to explain how the natural interaction between syntactic and prosodic information, and their functional interdependence, drives the neural signal.

Gains

The interrelatedness between syntax and prosody is well known in linguistics, and their interactions have been widely studied in psycholinguistics. We argue that embracing this interactive approach in cognitive neuroscience as well will greatly increase our understanding of how the brain makes sense of natural language.