1 Introduction

Inductive logic programming plays an important role in knowledge discovery and has been applied in various practical applications such as natural language processing, multi-agent systems, and bioinformatics (Muggleton et al. 2012; Gulwani et al. 2015; Cropper et al. 2022). Logic programming under stable models (also known as answer set programming, or ASP for short) is one of the major formalisms for representing incomplete knowledge and nonmonotonic reasoning (Gelfond and Lifschitz 1988; Brewka et al. 2011; Erdem et al. 2016). As the semantics of standard stable models is unable to handle priority and uncertain information, various extensions of ASP have been proposed in the literature, including prioritized logic programming (Schaub and Wang 2001; Baral 2002), probabilistic logic programs (Raedt and Kimmig 2015), and ${LP}^{MLN}$ (Lee and Wang 2016), among others.

Another major extension of ASP is possibilistic logic programs (Nicolas et al. 2005, 2006; Dubois and Prade 2020), in which each rule is assigned a weight (also called necessity). Possibility theory has been applied in several areas (Dubois and Prade 2020) such as belief revision, information fusion, and preference modeling. For instance, the statement ‘if a person is a resident in New York, then they are a USA citizen with a possibility of $0.7$’ can be conveniently expressed as a possibilistic rule, which is a pair of a rule and a number.

The semantics of possibilistic logic programs is defined by an extension of stable models called possibilistic stable models or poss-stable models (Nicolas et al. 2005, 2006) (see Section 2 for details). The possibilistic extension of ASP is different from probabilistic ones. As Zadeh (1999) has commented, if our focus is on the meaning of information rather than on its measure, the proper framework for information analysis is possibilistic rather than probabilistic in nature. We are not going to discuss this statement in detail, but we point out that possibilistic information is more suited to representing the priority of formulas and rules. Moreover, as probability axioms are not enforced in possibilistic reasoning, it is easier for the user to manage “possibilistic” information than “probabilistic” information (Dubois et al. 2000).

Because poss-stable models can deal with non-monotonicity and uncertainty simultaneously, they can be applied to reasoning about uncertain epistemic beliefs and possibilistic dynamic systems. In addition to analyzing the epistemic belief of a single agent, poss-stable models can be applied to reasoning about trust and belief among autonomous agents via argumentation (Maia and Alcântara 2016), or to reasoning about possibilities across multiple information sources via a possibilistic multi-context system (Jin et al. 2012; Yang et al. 2023). Besides, a poss-NLP can also represent a dynamic system (Hu et al. 2025) whose dynamic characterization is depicted via possibilistic interpretation transitions. Since an early study (Inoue 2011) theoretically revealed a strong mathematical relationship between the attractors/steady states of Boolean networks (BNs for short) and stable models, some ASP-based methods (Mushthofa et al. 2014; Khaled et al. 2023) have been developed to find attractors in BNs such as Genetic Regulatory Networks (GRNs for short). It follows that poss-stable models can correspond to the steady states of the dynamic system.

To the best of our knowledge, the problem of inductive reasoning with possibilistic logic programs has not been investigated in the literature. Informally, given a set of positive examples and a set of negative examples, the task is to induce a possibilistic logic program that satisfies these examples.

Let us consider the following example, adapted from Examples 1 and 8 in Nicolas et al. (2006), which shows how possibilistic logic programs under poss-stable model semantics are used for representing quantitative priority information.

Example 1.1. Assume that a clinical expert system contains the following knowledge:

- If medicine A is taken, the possibility of relieving the vomiting is $0.7$; if medicine B is taken, the possibility of relieving the vomiting is $0.6$.

- A physician prescribes medicine B if a vomiting patient has not taken medicine A.

- If medicine A is taken, pregnancy causes malnutrition with a possibility of $0.7$; if medicine B is taken, pregnancy causes malnutrition with a possibility of $0.1$.

This set of background (medical) knowledge can be expressed as the possibilistic logic program $\overline {P_{med}}$ below (under possibilistic stable models, see Section 2).

\begin{equation*} \overline {P_{med}} = \left \{ \begin{array}{c} (\mathit{relief} \leftarrow vomiting, medA, 0.7), \\ (\mathit{relief} \leftarrow vomiting, medB, 0.6), \\ (medB \leftarrow vomiting, \textit {not } \, medA, 1), \\ (malnutrition \leftarrow medA, pregnancy, 0.7), \\ (malnutrition \leftarrow medB, pregnancy, 0.1) \end{array} \right \} \end{equation*}

Intuitively, the first rule says that if someone is vomiting and takes medicine A, then her vomiting symptom will be relieved with possibility degree 0.7. Similarly, the second rule says that medicine B can relieve the vomiting symptom with possibility degree 0.6. These possibility degrees are given by experts in this field.

Suppose that “A woman is definitely in pregnancy and she suffers from vomiting”, which can be represented as the two rules in $\overline {P_{fact}}$.

The knowledge base expressed as the program $\overline {B_{med}} = \overline {P_{fact}} \cup \overline {P_{med}}$ can be expanded further by learning new rules from new observations (examples).

Let us assume that we have the following three examples:

- $\overline {A_1} = \{ (pregnancy,1), (vomiting,1), (medA,1), (\mathit{relief},0.7), (malnutrition,0.7) \}$,

- $\overline {A_2} = \{ (pregnancy,1), (vomiting,1), (medB,1), (\mathit{relief},0.6), (malnutrition,0.1) \}$,

- $\overline {A_3} = \{ (pregnancy,1), (vomiting,1), (medA,0.7), (\mathit{relief},0.7) \}$

where $\overline {A_1}$ and $\overline {A_2}$ are positive examples (possibilistic stable models), while $\overline {A_3}$ is a negative example.

$\overline {A_1}$ is not a possibilistic stable model of the current $\overline {B_{med}}$, nor is $\overline {A_2}$. This means that some knowledge of the expert system is missing from the current $\overline {B_{med}}$. What are the missing rules?

For instance, if we add another rule $\overline {r}$ into $\overline {B_{med}}$, then both $\overline {A_1}$ and $\overline {A_2}$ are possibilistic stable models of $\overline {B_{med}} \cup \{ \overline {r} \}$, while $\overline {A_3}$ is not. How can we discover such a rule?

Thus, in this paper we aim to establish a framework for learning possibilistic rules from a given background possibilistic program, a set of positive examples and a set of negative examples. For instance, our method will be able to generate the rule $(medA \leftarrow vomiting, \textit {not }\, medB, 1)$ from the background knowledge $\overline {B_{med}}$, positive examples $E^+$ and negative examples $E^-$. In order to set up our framework for induction in possibilistic programs, we first introduce the notion of induction tasks and then investigate its properties, including useful characterizations of solutions for induction tasks. Based on these results, we present algorithms for computing solutions for induction tasks. We have also implemented and evaluated our algorithm using three randomly generated datasets.

In this paper, we will focus on the class of possibilistic normal logic programs (poss-NLPs) and our main contributions are summarized as follows.

• We propose a definition of induction in poss-NLPs for the first time and investigate its properties.

• We present two algorithms, ILPSM and ILPSMmin, for computing induction solutions for poss-NLPs. We show that our algorithms are sound and complete. The first algorithm computes a poss-NLP that is a solution for the given induction task, while the second one finds a minimal solution.

• We study two special cases of inductive reasoning for poss-NLPs. The first one is that observations are complete, that is, the given positive examples are exactly the possibilistic stable models, while all other possibilistic interpretations are negative ones. In such a special case, we obtain an elegant characterization for induction solutions in terms of poss-stable models. The other special case is when an input poss-NLP is an ordinary NLP. In this case, we show that the induction problem coincides with the standard induction for NLPs.

• We also generalize our definition of induction for poss-NLPs to a more general case where an interpretation is a partial interpretation. We show that this generalized induction problem can be reduced to the induction problem of poss-NLPs as defined in Definition 3.1. Thus, the algorithms ILPSM and ILPSMmin are applicable to the more general case of induction for poss-NLPs.

• We have implemented the algorithm ILSMmin to learn ordinary logic programs from stable models, which calls the answer set solver Clingo (Gebser et al. 2019). Comparative experimental results show that ILSMmin outperforms ILASP (Law et al. 2014) on randomly generated tasks of inducing NLPs from stable models; ILASP can learn non-ground answer set programs from partial interpretations.

The rest of this paper is organized as follows. In Section 2, we briefly recall some basics of possibilistic logic programs and poss-stable models. In Section 3, we propose the induction task for poss-NLPs and analyze its properties. Two algorithms for this task are proposed and analyzed in Section 4. Section 5 discusses some variants of the induction for poss-NLPs. Section 6 presents an efficient implementation and reports the results of the experimental evaluation. Section 7 discusses related work. Finally, Section 8 concludes the paper. All proofs have been relegated to the appendix.

2 Preliminaries

In this section, we briefly introduce some basics of possibilistic (normal) logic programs (poss-NLPs) and fix the notations that will be used in the paper (Nicolas et al. 2006). For a set $O$, $\vert O \vert$ denotes the cardinality of $O$.

2.1 Possibilistic logic programs

We first introduce the syntax of poss-NLPs in this subsection. We assume a propositional logic $\mathcal{L}$ over a finite set $\mathcal{A}$ of atoms. Literals (positive and negative), terms, clauses, models, and satisfiability are defined as usual. A possibilistic formula $\overline {\phi }$ is a pair $(\phi ,\alpha )$ with $\phi$ being a (propositional) formula of $\mathcal L$ and $\alpha$ being the weight of $\phi$. In general, this $\alpha$ is an element of a given lattice $({\mathcal{Q}},\le )$ (Dubois and Prade 1998). In this paper, $({\mathcal{Q}},\le )$ is assumed to be a totally ordered set where $\mathcal{Q}$ is finite. Intuitively, every pair of weights in the finite set $\mathcal{Q}$ of a given induction task is comparable.

For example, ${\mathcal{Q}}= \{ 0.1, 0.6, 0.7, 1 \}$ and $\leq$ is the ‘less than or equal’ relation for real numbers. The supremum of $\mathcal{Q}$ in this $({\mathcal{Q}},\le )$ is $1$. Besides a finite set of decimals in $[0, 1]$, $\mathcal{Q}$ can also be a set of adverbs representing necessities. For instance, ${\mathcal{Q}}= \{ slightly, highly, extremely, absolutely \}$ and $slightly \leq highly \leq extremely \leq absolutely$. The supremum of $\mathcal{Q}$ in this $({\mathcal{Q}},\le )$ is $absolutely$. Hereafter, we use $\mu$ to denote the supremum of $\mathcal{Q}$ in $({\mathcal{Q}},\le )$.

The possibilistic formula $(\phi ,\alpha )$ intuitively states that the ordinary formula $\phi$ is certain at least to the level $\alpha$. If $\phi$ is an atom (resp. a literal, a clause and a term) then $(\phi ,\alpha )$ is a possibilistic atom (resp. literal, clause and term). Hereafter, we use the symbol $X$ to denote the classic projection ignoring all uncertainties from its possibilistic counterpart $\overline {X}$. Given a set $\mathcal{A}$ of atoms and a complete lattice $(\mathcal{Q}, \le )$, a set $\overline {I}\subseteq {\mathcal{A}}\times {\mathcal{Q}}$ of possibilistic atoms is called a possibilistic interpretation over $\mathcal{A}$ and $(\mathcal{Q},\le )$ if $\vert \{ (x,\alpha ) \in \overline {I} \mid \alpha \in {\mathcal{Q}} \} \vert \leq 1$ for each $x \in {\mathcal{A}}$. Thus, for a possibilistic interpretation $\overline {I}$, $(x,\alpha _1)\in \overline {I}$ and $(x,\alpha _2)\in \overline {I}$ imply $\alpha _1=\alpha _2$. Given a possibilistic interpretation $\overline {I}$, we use $I$ to denote the classic interpretation $\{p\mid (p,\alpha )\in \overline {I} \}$.
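As an illustrative sketch (not part of the original formalization), the uniqueness condition and the classic projection can be checked in Python. Representing a possibilistic atom set as a set of `(atom, weight)` tuples, and the helper names `is_poss_interpretation` and `projection`, are assumptions made for this example only.

```python
def is_poss_interpretation(pairs):
    """Return True if every atom occurs with at most one weight."""
    seen = {}
    for atom, weight in pairs:
        if atom in seen and seen[atom] != weight:
            return False  # same atom paired with two different weights
        seen[atom] = weight
    return True


def projection(pairs):
    """Classic projection: drop the weights and keep the atoms."""
    return {atom for atom, _ in pairs}


# The possibilistic atom set A_1 from Example 1.1.
A1 = {("pregnancy", 1), ("vomiting", 1), ("medA", 1),
      ("relief", 0.7), ("malnutrition", 0.7)}

# Hypothetical ill-formed set: medA appears with two weights.
not_an_interpretation = A1 | {("medA", 0.7)}
```

Here `A1` passes the check, while the second set does not, because `medA` would carry both weight `1` and weight `0.7`.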

Before introducing possibilistic logic programs, we recall the set operations for finite sets of possibilistic formulas.

Let $\overline {\Gamma _1}$ and $\overline {\Gamma _2}$ be two finite sets of possibilistic formulas.

• $\overline {\Gamma _1} \sqsubseteq \overline {\Gamma _2}$ if for each $(\phi ,\alpha )\in \overline {\Gamma _1}$ there exists $(\phi ,\beta )\in \overline {\Gamma _2}$ such that $\alpha \le \beta$. Consequently, $\overline {\Gamma _1} \sqsubset \overline {\Gamma _2}$ if $\overline {\Gamma _1} \sqsubseteq \overline {\Gamma _2}$ and $\overline {\Gamma _1} \neq \overline {\Gamma _2}$.

• $\overline {\Gamma _1}\sqcup \overline {\Gamma _2}=\{(x, \alpha )\mid (x,\alpha )\in \overline {\Gamma _1}, x \notin \Gamma _2\}\cup \{(x,\beta )\mid (x,\beta )\in \overline {\Gamma _2},x \notin \Gamma _1\}\cup \{(x,\max \{\alpha ,\beta \})\mid (x,\alpha )\in \overline {\Gamma _1}, (x,\beta )\in \overline {\Gamma _2}\}$.

• $\overline {\Gamma _1}\sqcap \overline {\Gamma _2}=\{(x, \min \{\alpha ,\beta \})\mid (x,\alpha )\in \overline {\Gamma _1}, (x,\beta )\in \overline {\Gamma _2}\}$.
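The three operations above can be sketched in Python as follows. This is only an illustration under the assumption that each formula carries at most one weight, so that a set of possibilistic formulas can be stored as a dictionary from formula to weight; the function names `sqsubseteq`, `sqcup` and `sqcap` are hypothetical and simply mirror the symbols.

```python
def sqsubseteq(g1, g2):
    """g1 is below g2: every formula of g1 occurs in g2 with a weight at least as large."""
    return all(phi in g2 and alpha <= g2[phi] for phi, alpha in g1.items())


def sqcup(g1, g2):
    """Union that keeps the maximum weight of formulas occurring in both sets."""
    result = dict(g1)
    for x, beta in g2.items():
        result[x] = max(result[x], beta) if x in result else beta
    return result


def sqcap(g1, g2):
    """Intersection that keeps the minimum weight of the shared formulas."""
    return {x: min(alpha, g2[x]) for x, alpha in g1.items() if x in g2}


# Hypothetical sets of possibilistic atoms over the weights used in Example 1.1.
gamma1 = {"p": 0.6, "q": 0.7}
gamma2 = {"p": 0.7, "r": 0.1}
joined = sqcup(gamma1, gamma2)
met = sqcap(gamma1, gamma2)
```

With these inputs, `p` is the only shared formula, so it receives weight `0.7` in `joined` and weight `0.6` in `met`.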

A possibilistic normal logic program (poss-NLP for short) on a complete lattice $({\mathcal{Q}},\le )$ is a finite set of possibilistic normal rules (or rules) of the form $\overline {r} = (r,\alpha )$, where

• $\alpha \in {\mathcal{Q}}$ is the weight of $\overline {r}$, also written as $N(\overline {r})$, and

• $r$ is a classic (normal) rule of the form

\begin{align} p_0\leftarrow p_1,\ldots , p_m,\textit {not}\, p_{m+1}, \ldots , \textit {not} \, p_n \end{align}

with $n \geq 0$ and $p_i\in {\mathcal{A}}\,(0\le i\le n)$.

A possibilistic logic program is also referred to as a possibilistic logic knowledge base or a possibility theory in the literature.

Given a poss-NLP $\overline {P}$, its classic counterpart, consisting of all classic rules in the poss-NLP, is denoted as $P$. Let $r$ be a classic rule of the form (1). We denote $\textit {hd}(r)=p_0$, ${\textit {bd}{^+}}(r)=\{p_1,\ldots , p_m\}$, ${\textit {bd}{^-}}(r)=\{p_{m+1},\ldots , p_n\}$ and $\textit {bd}(r)={\textit {bd}{^+}}(r)\cup \textit {not} \,{\textit {bd}{^-}}(r)$. Thus, the rule $r$ can be written as

\begin{equation*} \textit {hd}(r) \leftarrow {\textit {bd}{^+}}(r), \textit {not} \, {\textit {bd}{^-}}(r) \end{equation*}

where $\textit {not} \, S=\{\textit {not} \, p\mid p\in S\}$. The rule $r$ is definite if ${\textit {bd}{^-}}(r)=\emptyset$. For a possibilistic normal logic rule $\overline {r}$, we also denote $\textit {hd}(\overline {r})=\textit {hd}(r)$, ${\textit {bd}{^+}}(\overline {r})={\textit {bd}{^+}}(r)$ and ${\textit {bd}{^-}}(\overline {r})={\textit {bd}{^-}}(r)$. A possibilistic definite (logic) program is a finite set of possibilistic definite rules.

Two classic rules $r_1$ and $r_2$ of the form (1) are identical if $\textit {hd}(r_1) = \textit {hd}(r_2)$ and $\textit {bd}(r_1) = \textit {bd}(r_2)$. For instance, rule $p_0\leftarrow p_1, p_2,\textit {not} \, p_3, \textit {not} \, p_4$ is the same as rule $p_0\leftarrow p_2, p_1,\textit {not} \, p_4, \textit {not} \, p_3$. Similar to the assumption in Possibilistic Logic, we assume that every classic rule occurs at most once in a poss-NLP.
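One possible way to encode these conventions is sketched below. The `Rule` type, the `rule` constructor and `is_definite` are hypothetical names introduced for illustration. Storing the positive and negative bodies as frozensets makes rule identity independent of the order of body literals, and storing a poss-NLP as a dictionary from classic rule to weight enforces that each classic rule occurs at most once.

```python
from typing import FrozenSet, NamedTuple


class Rule(NamedTuple):
    """A classic normal rule: head <- pos, not neg."""
    head: str
    pos: FrozenSet[str] = frozenset()  # bd+(r)
    neg: FrozenSet[str] = frozenset()  # bd-(r)


def rule(head, pos=(), neg=()):
    """Build a Rule from any iterables of atoms."""
    return Rule(head, frozenset(pos), frozenset(neg))


def is_definite(r):
    """A rule is definite if its negative body is empty."""
    return not r.neg


# The two rules from the text differ only in the order of body literals.
r1 = rule("p0", ["p1", "p2"], ["p3", "p4"])
r2 = rule("p0", ["p2", "p1"], ["p4", "p3"])

# A poss-NLP as a mapping from classic rule to weight; writing the same
# classic rule again replaces its entry instead of duplicating it.
program = {rule("relief", ["vomiting", "medA"]): 0.7}
program[rule("relief", ["medA", "vomiting"])] = 0.7
```

Under this encoding `r1 == r2` holds, and `program` still contains a single rule after the second assignment.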

Given two poss-NLPs $\overline {P_1}$ and $\overline {P_2}$, the operations $\cup$ and $-$ over poss-NLPs can be defined as follows (Garcia et al. 2018).

• $\overline {P_1}\sqcup \overline {P_2}=\{(r, \alpha )\mid (r,\alpha )\in \overline {P_1}, r \notin P_2\}\cup \{(r,\beta )\mid (r,\beta )\in \overline {P_2},r \notin P_1\}\cup \{(r,\max \{\alpha ,\beta \})\mid (r,\alpha )\in \overline {P_1}, (r,\beta )\in \overline {P_2}\}$.

• $\overline {P_1} - \overline {P_2} = \{ (r, \alpha ) \mid (r,\alpha )\in \overline {P_1}, r \notin P_2 \} \cup \{ (r, \alpha ) \mid (r,\alpha )\in \overline {P_1}, (r,\beta )\in \overline {P_2}, \alpha \gt \beta \}$.
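A minimal sketch of the two operations, assuming a poss-NLP is stored as a dictionary from classic rule to weight. The rules are written as plain strings purely for readability; the function names `poss_union` and `poss_difference` and the sample programs are hypothetical.

```python
def poss_union(p1, p2):
    """Union of two poss-NLPs: a rule in both keeps the larger weight."""
    result = dict(p1)
    for r, beta in p2.items():
        result[r] = max(result[r], beta) if r in result else beta
    return result


def poss_difference(p1, p2):
    """Keep rules of p1 that are absent from p2 or strictly heavier than in p2."""
    return {r: alpha for r, alpha in p1.items() if r not in p2 or alpha > p2[r]}


# Hypothetical programs sharing two classic rules.
P1 = {"a <- b": 0.7, "c <- d": 0.6}
P2 = {"a <- b": 0.6, "c <- d": 0.6, "e <-": 1}

union = poss_union(P1, P2)
left_minus_right = poss_difference(P1, P2)
right_minus_left = poss_difference(P2, P1)
```

Note that the difference is not symmetric: `a <- b` survives in `left_minus_right` because its weight in `P1` is strictly larger, whereas `c <- d` is removed in both directions because the two weights are equal.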

2.2 Possibilistic stable models

In this subsection, we introduce the semantics of poss-NLPs, that is, possibilistic stable models or poss-stable models (Nicolas et al. 2006). To this end, we first recall the basics of normal logic programs under stable models (Gelfond and Lifschitz 1988).

An atom set $S$ satisfies a definite logic program $P$, written as $S \models P$, if ${\textit {bd}{^+}}(r) \subseteq S$ implies $\textit {hd}(r) \in S$ for each $r \in P$. $S$ is a stable model of $P$, written as $S \in \mathit{SM}(P)$, if $S \models P$ and there exists no $S' \subset S$ such that $S' \models P$. In fact, a definite logic program $P$ has a unique stable model, which is its least Herbrand model $\mathit{Cn}(P)$ (Lloyd 2012) (the set of consequences of $P$). $\mathit{Cn}(P)$ is also the least fixpoint $\mathit{lfp}(T_P)$ of the immediate consequence operator $T_P:2^{\mathcal{A}} \rightarrow 2^{\mathcal{A}}$ defined by $T_P(A)=\textit {hd}(\mathit{App}(P, A))$, where $\textit {hd}(P) = \{ \textit {hd}(r) \mid r \in P \}$ and $\mathit{App}(P, A) = \{ r \in P \mid {\textit {bd}{^+}}(r) \subseteq A \}$. That is, for a definite logic program $P$, we have $\mathit{Cn}(P) = \mathit{lfp}(T_P)$.
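The fixpoint construction can be illustrated with a short Python sketch. It assumes a definite rule is encoded as a pair `(head, body)` with `body` a frozenset of atoms; the names `app`, `t_p` and `lfp` mirror the notation above, and the sample program is hypothetical. Because $T_P$ is monotone and $\mathcal{A}$ is finite, iterating from the empty set terminates.

```python
def app(program, atoms):
    """App(P, A): the rules whose positive body is contained in A."""
    return [(head, body) for head, body in program if body <= atoms]


def t_p(program, atoms):
    """Immediate consequence operator: heads of the applicable rules."""
    return {head for head, _ in app(program, atoms)}


def lfp(program):
    """Least fixpoint of T_P, computed by iterating from the empty set."""
    current = set()
    while True:
        following = t_p(program, current)
        if following == current:
            return current
        current = following


# Hypothetical definite program: a <- ; b <- a ; c <- b, d
P = [
    ("a", frozenset()),
    ("b", frozenset({"a"})),
    ("c", frozenset({"b", "d"})),
]
consequences = lfp(P)
```

For this program the iteration passes through $\emptyset$, $\{a\}$ and $\{a, b\}$; the atom `c` is never derived because `d` has no supporting rule.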

The following proposition is useful for checking whether $S = \mathit{lfp}(T_P)$ for a set $S$ of atoms. A definite logic program $P$ is grounded if it can be ordered as a sequence $(r_1, \ldots , r_n)$ such that $ r_i \in \mathit{App}(P,\textit {hd}(\{ r_1, \ldots , r_{i-1} \}))$ for each $1 \leq i \leq n$.

Proposition 2.1 (Proposition 1 of Nicolas et al. (2006)). Let $P$ be a definite logic program and $S$ be an atom set. $S$ is the least Herbrand model of $P$ if and only if

• $S = \textit {hd}(\mathit{App}(P,S))$, and

• $\mathit{App}(P,S)$ is grounded.
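The two conditions of Proposition 2.1 can be tested directly, as in the sketch below. It assumes definite rules are encoded as `(head, body)` pairs with frozenset bodies; `is_grounded` and `is_least_model` are hypothetical helper names. Groundedness is decided greedily: rules whose bodies are already covered by previously derived heads are scheduled first, and the program is grounded exactly when every rule can eventually be scheduled.

```python
def is_grounded(program):
    """Check whether the rules can be ordered so that each body is
    covered by the heads of the rules placed before it."""
    remaining = list(program)
    derived = set()
    progress = True
    while remaining and progress:
        ready = [r for r in remaining if r[1] <= derived]
        progress = bool(ready)
        derived |= {head for head, _ in ready}
        remaining = [r for r in remaining if r not in ready]
    return not remaining


def is_least_model(program, s):
    """Proposition 2.1: S = hd(App(P, S)) and App(P, S) is grounded."""
    applicable = [r for r in program if r[1] <= s]
    heads = {head for head, _ in applicable}
    return heads == s and is_grounded(applicable)


# Hypothetical program: a <- ; b <- a ; c <- d ; d <- c
P = [
    ("a", frozenset()),
    ("b", frozenset({"a"})),
    ("c", frozenset({"d"})),
    ("d", frozenset({"c"})),
]
```

In this example $\{a, b\}$ satisfies both conditions. The set $\{a, b, c, d\}$ satisfies the first condition only, since the rules for `c` and `d` support each other in a cycle and cannot be ordered, so it is not the least Herbrand model.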

An atom set $S$ is a stable model of a normal logic program (NLP) $P$ if $S$ is the stable model of $P^S$, the reduct of $P$ w.r.t. $S$, where $P^S$ denotes the definite logic program $\{\textit {hd}(r) \leftarrow {\textit {bd}{^+}}(r) \mid r \in P, {\textit {bd}{^-}}(r) \cap S = \emptyset \}$ (Gelfond and Lifschitz 1988). A stable model of $P$ is also referred to as an answer set of $P$. The set of all stable models for $P$ is denoted as $\mathit{SM}(P)$.
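To make the reduct-based definition concrete, the following brute-force sketch enumerates the stable models of a small NLP. It is an illustration only and does not scale beyond a handful of atoms. The program is the classic projection of $\overline {P_{med}}$ from Example 1.1 together with the rule $medA \leftarrow vomiting, \textit {not }\, medB$ mentioned in the introduction; the two facts for `pregnancy` and `vomiting` are an assumption about the content of $\overline {P_{fact}}$, and all function names are hypothetical.

```python
from itertools import chain, combinations


def r(head, pos="", neg=""):
    """Build a rule (head, bd+, bd-) from space-separated atom names."""
    return (head, frozenset(pos.split()), frozenset(neg.split()))


def reduct(program, s):
    """Gelfond-Lifschitz reduct: drop rules blocked by s, then drop negative bodies."""
    return [(head, pos) for head, pos, neg in program if not (neg & s)]


def least_model(definite):
    """Least Herbrand model of a definite program by naive iteration."""
    model = set()
    changed = True
    while changed:
        changed = False
        for head, pos in definite:
            if pos <= model and head not in model:
                model.add(head)
                changed = True
    return model


def stable_models(program, atoms):
    """Enumerate every subset of atoms and keep those equal to the
    least model of their own reduct."""
    atoms = sorted(atoms)
    subsets = chain.from_iterable(
        combinations(atoms, k) for k in range(len(atoms) + 1))
    return [set(c) for c in subsets
            if least_model(reduct(program, set(c))) == set(c)]


program = [
    r("pregnancy"),                      # assumed fact
    r("vomiting"),                       # assumed fact
    r("relief", "vomiting medA"),
    r("relief", "vomiting medB"),
    r("medB", "vomiting", "medA"),
    r("medA", "vomiting", "medB"),       # rule mentioned in the introduction
    r("malnutrition", "medA pregnancy"),
    r("malnutrition", "medB pregnancy"),
]
atoms = {"pregnancy", "vomiting", "medA", "medB", "relief", "malnutrition"}
models = stable_models(program, atoms)
```

Under these assumptions the sketch finds two stable models, one containing `medA` and one containing `medB`, which coincide with the classic projections $A_1$ and $A_2$ of the positive examples in Example 1.1.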

Now we are ready to introduce the notion of poss-stable models (Dubois and Prade 2024), which is a generalization of the stable models for ordinary NLPs. First, we extend the definitions of reduct, rule applicability, and consequence operator to the case of poss-NLPs.

Given a possibilistic definite rule $\overline {r} = (r, \alpha )$ where $r$ is of the form $p \leftarrow p_1,\ldots ,p_m$, if there exist weights $\alpha _1, \ldots , \alpha _m$ such that $(p_i,\alpha _i)\in \overline {A}$ for all $i\,(1\le i\le m)$, then we say that $\overline {r}$ is $\beta$-applicable in the possibilistic atom set $\overline {A}$, where $\beta =\min \{\alpha ,\alpha _1,\ldots , \alpha _m\}$.

A definite rule $r$ is applicable in an atom set $A$ if ${\textit {bd}{^+}}(r) \subseteq A$. Correspondingly, a possibilistic definite rule $(r,\alpha )$ with ${\textit {bd}{^+}}(r)=\{p_1,\ldots ,p_m\}$ is $\beta$-applicable in a possibilistic atom set $\overline {A}$ with $\beta =\min \{\alpha ,\alpha _1,\ldots , \alpha _m\}$ if there exists $(p_i,\alpha _i)\in \overline {A}$ for every $i\,(1\le i\le m)$. Otherwise, it is not applicable in $\overline {A}$. For an atom $q \in {\mathcal{A}}$ and a possibilistic definite program $\overline {P}$, define

Additionally, $\mathit{App}(\overline {P}, \overline {A}) = \bigcup _{q \in {\mathcal{A}}} \mathit{App}(\overline {P}, \overline {A}, q)$.

Given a possibilistic definite logic program $\overline {P}$ and a possibilistic atom set $\overline {A}$, the immediate possibilistic consequence operator ${\mathcal{T}}_{\overline {P}}$, as in Definition 9 of Nicolas et al. (2006), maps $\overline {A}$ to another possibilistic atom set as follows.

Then the iterated operator ${\mathcal{T}}_{\overline {P}}^n$ is defined by ${\mathcal{T}}_{\overline {P}}^0 = \emptyset$ and ${\mathcal{T}}_{\overline {P}}^{n+1} = {\mathcal{T}}_{\overline {P}}({\mathcal{T}}_{\overline {P}}^{n})$ for each $n \geq 0$. For a possibilistic definite logic program $\overline {P}$, we can compute the set $\mathit{Cn}(\overline {P})$ of possibilistic consequences via $\mathit{Cn}(\overline {P}) = \mathit{lfp}({\mathcal{T}}_{\overline {P}})$, where $\mathit{lfp}({\mathcal{T}}_{\overline {P}}) = \bigsqcup _{n\geq 0}{\mathcal{T}}_{\overline {P}}^n$ is the least fixpoint of the immediate possibilistic consequence operator ${\mathcal{T}}_{\overline {P}}$.
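The fixpoint computation of $\mathit{Cn}(\overline {P})$ can be sketched in Python. The sketch assumes the reading of ${\mathcal{T}}_{\overline {P}}$ that the worked examples in this section use: each derivable atom receives the largest $\beta$ among the rules with that head that are $\beta$-applicable. It also assumes numeric weights on a totally ordered scale. The tuple encoding, the function names, and the sample program are illustrative, not taken from the paper.

```python
# Illustrative encoding (an assumption): a possibilistic definite rule is
# (head, body, weight); a possibilistic atom set is a dict {atom: weight}.

def t_op(program, interp):
    """One step of the operator under the assumed max-of-min reading:
    a rule is beta-applicable with beta = min(rule weight, body atom weights),
    and each head keeps the largest such beta."""
    out = {}
    for head, body, alpha in program:
        if all(atom in interp for atom in body):
            beta = min([alpha] + [interp[atom] for atom in body])
            out[head] = max(out.get(head, beta), beta)
    return out

def cn(program):
    """Iterate T^0 = {} and T^(n+1) = T(T^n) until nothing changes."""
    interp = {}
    while True:
        nxt = t_op(program, interp)
        if nxt == interp:
            return interp
        interp = nxt

# Hypothetical program: (a <- , 0.9), (b <- a, 0.5), (c <- a,b, 0.8), (c <- , 0.3)
P = [("a", (), 0.9), ("b", ("a",), 0.5), ("c", ("a", "b"), 0.8), ("c", (), 0.3)]
print(cn(P))  # {'a': 0.9, 'b': 0.5, 'c': 0.5}
```

Here `c` ends at 0.5 rather than 0.3 because the rule with body `a, b` becomes 0.5-applicable on the third iteration and the maximum is kept.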

The possibilistic reduct of a poss-NLP $\overline {P}$ w.r.t. an atom set $S$ is the possibilistic definite logic program

\begin{align*} \overline {P}^S = \{ (\textit {hd}(r) \leftarrow {\textit {bd}{^+}}(r), \alpha ) \mid (r,\alpha ) \in \overline {P}, {\textit {bd}{^-}}(r) \cap S = \emptyset \}. \end{align*}

A set $\overline {S}$ of possibilistic atoms is a possibilistic stable model (or poss-stable model) of a poss-NLP $\overline {P}$ if $\overline {S} = \mathit{Cn}(\overline {P}^S)$. The set of all poss-stable models of $\overline {P}$ is denoted by $\mathit{PSM}(\overline {P})$.

The following example illustrates some of the above notions for poss-NLPs.

Example 2.1.

Let ${\mathcal{A}} = \{ a,b,c \}$, $\overline {P} = \{(a \leftarrow \textit {not} \, b,0.6),(a \leftarrow ,0.9),(b \leftarrow , 0.6),(c \leftarrow a,b,0.8)\}$ and $\overline {S} = \{ (a,0.9), (b,0.6), (c,0.6) \}$. Then $\mathit{Cn}(\overline {P}^S) = \{ (a,0.9),(b,0.6),(c,0.6) \}$ since $\overline {P}^S = \{(a \leftarrow , 0.9),(b \leftarrow , 0.6),(c \leftarrow a,b,0.8)\}$ and

\begin{align*} {\mathcal{T}}_{\overline {P}^S}^1 = &{\mathcal{T}}_{\overline {P}^S}(\emptyset ) = \{ (a,0.9),(b,0.6) \}, \\ {\mathcal{T}}_{\overline {P}^S}^2 = &{\mathcal{T}}_{\overline {P}^S}(\{ (a,0.9),(b,0.6) \}) = \{ (a,0.9),(b,0.6),(c,0.6) \}, \\ {\mathcal{T}}_{\overline {P}^S}^3 = &{\mathcal{T}}_{\overline {P}^S}(\{ (a,0.9),(b,0.6),(c,0.6) \}) = \{ (a,0.9),(b,0.6),(c,0.6) \} = \overline {S}. \end{align*}

As a result, $\overline {S} \in \mathit{PSM}(\overline {P})$.
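The computation in this example can be replayed mechanically. The following Python sketch builds the possibilistic reduct, iterates the consequence operator to its fixpoint, and compares the result with $\overline {S}$. It assumes numeric weights and the max-of-min reading of the operator used in the example; the encoding `(head, positive_body, negative_body, weight)` and the function names are illustrative.

```python
# Illustrative encoding (an assumption): a possibilistic normal rule is
# (head, positive_body, negative_body, weight); interpretations are dicts.

def reduct(program, atoms):
    """Possibilistic reduct w.r.t. a set of atoms: drop blocked rules,
    strip negative bodies, keep the weights."""
    return [(h, pos, (), w) for (h, pos, neg, w) in program
            if not set(neg) & set(atoms)]

def cn(definite_program):
    """Least fixpoint of the consequence operator, starting from the empty set."""
    interp = {}
    while True:
        nxt = {}
        for head, pos, _neg, alpha in definite_program:
            if all(a in interp for a in pos):
                beta = min([alpha] + [interp[a] for a in pos])
                nxt[head] = max(nxt.get(head, beta), beta)
        if nxt == interp:
            return interp
        interp = nxt

def is_poss_stable(program, interp):
    """interp is a poss-stable model iff it equals Cn of the reduct w.r.t. its atoms."""
    return cn(reduct(program, interp.keys())) == interp

P = [("a", (), ("b",), 0.6), ("a", (), (), 0.9),
     ("b", (), (), 0.6), ("c", ("a", "b"), (), 0.8)]
S = {"a": 0.9, "b": 0.6, "c": 0.6}

print(reduct(P, S))          # the rule (a <- not b, 0.6) is dropped
print(cn(reduct(P, S)))      # {'a': 0.9, 'b': 0.6, 'c': 0.6}
print(is_poss_stable(P, S))  # True
```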

The next proposition shows that there is a one-to-one correspondence between the set $\mathit{PSM}(\overline {P})$ of poss-stable models for $\overline {P}$ and the set $\mathit{SM}(P)$ of stable models for $P$.

Proposition 2.2 (Proposition 10 of Nicolas et al. (2006)). Let $\overline {P}$ be a poss-NLP.

- If $A$ is a stable model of $P$, then $\mathit{Cn}(\overline {P}^A)$ is a poss-stable model of $\overline {P}$.
- If $\overline {A}$ is a poss-stable model of $\overline {P}$, then $A$ is a stable model of $P$.

The immediate possibilistic consequence operator introduced in Nicolas et al. (2006) is for possibilistic definite logic programs only, but it can be generalized to poss-NLPs as follows. First, let us define the applicability of poss-rules in a poss-NLP.

Definition 2.1 (Poss-rule applicability). Given a possibilistic interpretation $\overline {I}$ and a weight $\beta$, a possibilistic normal rule $(r,\alpha )$ is $\beta$-applicable in $\overline {I}$ if the possibilistic definite rule $(\textit {hd}(r)\leftarrow {\textit {bd}{^+}}(r),\alpha )$ is $\beta$-applicable in $\overline {I}$ and ${\textit {bd}{^-}}(r)\cap I=\emptyset$. Otherwise, it is not applicable in $\overline {I}$.

With this new definition of applicability in hand, Equation (2), which defines the set $\mathit{App}(\overline {P}, \overline {A}, q)$ of applicable rules, still works when $\overline {P}$ is a poss-NLP. Consequently, the immediate possibilistic consequence operator for possibilistic definite logic programs, Equation (3), can be easily extended to poss-NLPs as follows.

Definition 2.2 (Immediate possibilistic consequence operator ${\mathcal{T}}_{\overline {P}}$). Let $\overline {P}$ be a poss-NLP and $\overline {I} \in 2^{{\mathcal{A}}\times {\mathcal{Q}}}$ be a possibilistic interpretation. We define ${\mathcal{T}}_{\overline {P}}: 2^{{\mathcal{A}}\times {\mathcal{Q}}}\rightarrow 2^{{\mathcal{A}}\times {\mathcal{Q}}}$ as follows.

The following example illustrates how the reduct of a poss-NLP w.r.t. a poss-interpretation and its least fixpoint can be computed.

Example 2.2.

Let $\overline {I}=\{(q, 0.9), (s, 0.7)\}$ be a possibilistic interpretation and $\overline {P} = \{ r_1, r_2, r_3, r_4 \}$ be a poss-NLP where

\begin{align*} & r_1 = ( p \leftarrow q,s, 0.9),\\ &r_2 = ( p \leftarrow \textit {not} \, r, 0.9),\\ &r_3 = ( p \leftarrow \textit {not} \, s, 0.7),\\ &r_4 = ( p \leftarrow r, 0.7). \end{align*}

By Definition 2.1, we have

- $r_1$ is 0.7-applicable in $\overline {I}$ since ${\textit {bd}{^+}}(r_1) \subseteq I$ and ${\textit {bd}{^-}}(r_1) \cap I = \emptyset$ and $\min \{0.9, 0.7, 0.9\}=0.7$;
- $r_2$ is 0.9-applicable in $\overline {I}$ since ${\textit {bd}{^+}}(r_2) \subseteq I$ and ${\textit {bd}{^-}}(r_2) \cap I = \emptyset$ and $\min \{0.9\}=0.9$;
- $r_3$ is not applicable in $\overline {I}$ since ${\textit {bd}{^-}}(r_3) \cap I \neq \emptyset$;
- $r_4$ is not applicable in $\overline {I}$ since ${\textit {bd}{^+}}(r_4) \not \subseteq I$.

By Definition 2.2, it follows that ${\mathcal{T}}_{\overline {P}}(\overline {I})=\{(p,0.9)\}$ as $\max \{0.9,0.7\}=0.9$.
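The applicability checks and the resulting value of ${\mathcal{T}}_{\overline {P}}(\overline {I})$ in this example can be reproduced with a short Python sketch. It assumes numeric weights and takes, for each head, the maximum $\beta$ over its applicable rules, as the example does. The rule encoding and the names `applicability` and `t_op` are illustrative, and `None` is used here to stand for "not applicable".

```python
# Illustrative encoding (an assumption): a possibilistic normal rule is
# (head, positive_body, negative_body, weight); interpretations are dicts.

def applicability(rule, interp):
    """Return beta if the rule is beta-applicable in interp, else None.
    The positive body must be covered and the negative body must be disjoint."""
    _head, pos, neg, alpha = rule
    if any(a not in interp for a in pos) or any(a in interp for a in neg):
        return None
    return min([alpha] + [interp[a] for a in pos])

def t_op(program, interp):
    """Each head receives the largest beta among its applicable rules."""
    out = {}
    for rule in program:
        beta = applicability(rule, interp)
        if beta is not None:
            head = rule[0]
            out[head] = max(out.get(head, beta), beta)
    return out

I = {"q": 0.9, "s": 0.7}
r1 = ("p", ("q", "s"), (), 0.9)
r2 = ("p", (), ("r",), 0.9)
r3 = ("p", (), ("s",), 0.7)
r4 = ("p", ("r",), (), 0.7)

print([applicability(r, I) for r in (r1, r2, r3, r4)])  # [0.7, 0.9, None, None]
print(t_op([r1, r2, r3, r4], I))                        # {'p': 0.9}
```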

As the next proposition shows, the immediate consequence operator for poss-NLPs in Definition 2.2 preserves the key properties of the original immediate consequence operator for ordinary NLPs.

Proposition 2.3 (Equivalent consequence). For a given poss-NLP $\overline {P}$ and a possibilistic interpretation $\overline {I}$, ${\mathcal{T}}_{\overline {P}^I}(\overline {I}) = {\mathcal{T}}_{\overline {P}}(\overline {I})$.

Such an integration simplifies the reasoning and representation concerning poss-stable models. For instance, Corollary 2.1 gives a property of poss-stable models without directly touching upon the GL-reduct. This corollary states that a possibilistic interpretation $\overline {I}$ is still a poss-stable model of the extension of a poss-NLP $\overline {P}$ when $\overline {P}$ absorbs a new poss-NLP $\overline {B}$ such that ${\mathcal{T}}_{\overline {B}}(\overline {I}) \sqsubseteq \overline {I}$.

Corollary 2.1 (Absorption for a poss-stable model). Given a possibilistic interpretation $\overline {I}$ and two poss-NLPs $\overline {P}$ and $\overline {B}$, $\overline {I} \in \mathit{PSM}(\overline {P} \sqcup \overline {B})$ if $\overline {I} \in \mathit{PSM}(\overline {P})$ and ${\mathcal{T}}_{\overline {B}}(\overline {I}) \sqsubseteq \overline {I}$.

3 Induction tasks for possibilistic logic programs

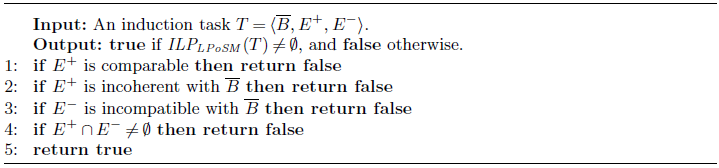

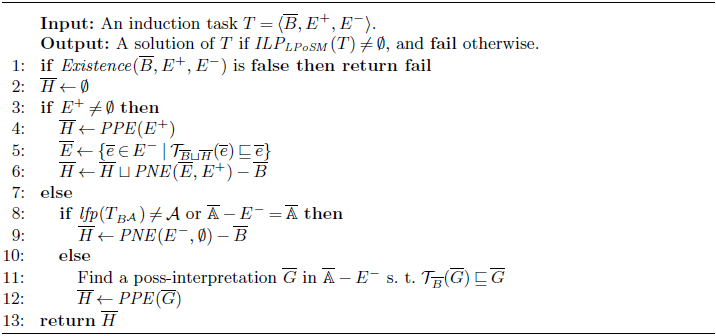

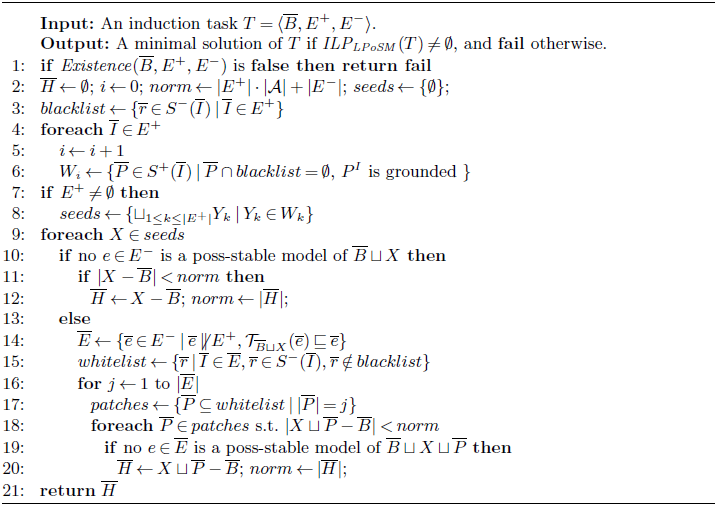

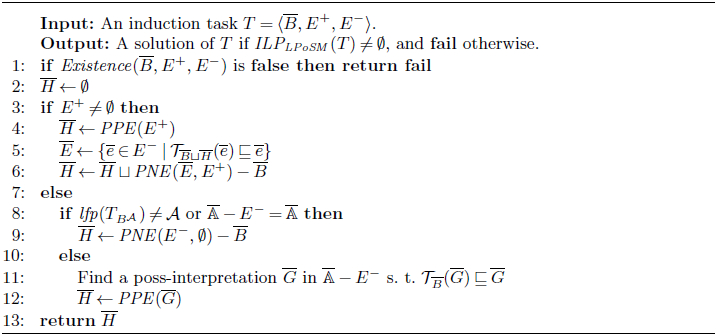

In this section, we first present the definition of induction tasks for poss-NLPs and then investigate some of their properties. These results will further be applied in computing (minimal) induction solutions. The necessary and sufficient condition for the existence of a solution for an induction task relies on relationships such as (in)comparability and coherency among the components of the task. To solve such an induction task, we propose an algorithm ilpsm for the construction of a particular solution and further propose another algorithm ilpsmmin to identify a minimal solution. To narrow the search scope of the algorithm ilpsmmin, the concept of solution space is introduced and its properties are investigated.

Informally, given background knowledge formalized as a set of possibilistic rules and a set of (positive and negative) examples, the induction task is to extract a new poss-NLP that covers the positive examples but none of the negative examples. We first formally define the notion of induction tasks for poss-NLPs in Definition 3.1.

Definition 3.1 (Induction task for poss-NLPs). Let $\mathcal{A}$ be a set of atoms and $\mathcal{Q}$ be a complete lattice. An induction task for poss-NLPs is a tuple $T = {\langle { \overline {B}, E^+, E^-}\rangle }$, where the background knowledge $\overline {B}$ is a poss-NLP over $\mathcal{A}$ and $\mathcal{Q}$, and $E^+$ and $E^-$ are two sets of possibilistic interpretations called the set of positive examples and the set of negative examples, respectively. A hypothesis $\overline {H}$ is a poss-NLP over $\mathcal{A}$ and $\mathcal{Q}$. We say $\overline {H}$ is a solution of the induction task $T$ if the following two conditions are satisfied:

\begin{align*} &\text{(G1)}\quad E^+ \subseteq \mathit{PSM}(\overline {B} \sqcup \overline {H}),\\ &\text{(G2)}\quad E^- \cap \mathit{PSM}(\overline {B} \sqcup \overline {H}) = \emptyset . \end{align*}

The set of all solutions of the induction task is denoted by $\mathit{ILP_{LPoSM}}(T)$.

For convenience, we assume that $\mathcal{A}$ and $\mathcal{Q}$ consist of the atoms and weights occurring in the induction task $T$, respectively, unless explicitly stated otherwise.

Note that Condition G1 requires only that $E^+$ be a subset of the poss-stable models of $\overline {B} \sqcup \overline {H}$. This “partiality of examples” should not be confused with “model partiality”. The following example shows some instances of induction tasks.

Example 3.1.

For the scenario in Example 1.1, the induction task can be represented as $T_1 = {\langle {\overline {B_{med}}, \{ \overline {A_1},\overline {A_2} \}, \{ \overline {A_3} \} }\rangle }$ where

\begin{align*} & \overline {A_1} = \{ (pregnancy,1), (vomiting,1), (medA,1), (\mathit{relief},0.7), (malnutrition,0.7) \},\\ & \overline {A_2} = \{ (pregnancy,1), (vomiting,1), (medB,1), (\mathit{relief},0.6), (malnutrition,0.1) \},\\ & \overline {A_3} = \{ (pregnancy,1), (vomiting,1), (medA,0.7), (\mathit{relief},0.7) \}. \end{align*}

Here ${\mathcal{A}} = \{ pregnancy, vomiting, medA, medB, \mathit{relief}, malnutrition \}$ and ${\mathcal{Q}} = \{ 0.1,0.6,0.7,1 \}$ with $0.1 \leq 0.6 \leq 0.7 \leq 1$. The induction task $T_1$ has a solution $\overline {H_1} = \{ (medA \leftarrow vomiting, \textit {not} \, medB, 1) \}$. Also, $\overline {H_2} = \overline {H_1} \cup \{ (\mathit{relief} \leftarrow vomiting, 0.6) \}$ is a solution of $T_1$. Let $\overline {P_{med}} = \overline {B_{med}} \cup \overline {H_1}$. Then $\overline {A_1}$ and $\overline {A_2}$ are poss-stable models of $\overline {P_{med}}$, while $\overline {A_3}$ is not.

However, the induction task $T_2 = {\langle {\overline {B_{med}}, \{ \overline {A_1},\overline {A_2}, \{ (pregnancy, 0.6) \} \}, \{ \overline {A_3} \} }\rangle }$ has no solution, that is, $\mathit{ILP_{LPoSM}}(T_2) = \emptyset$.

For induction task $T_3 = {\langle {\emptyset , \{ \{ (p, 0.3), (q,0.3) \} \}, \emptyset }\rangle }$, we have ${\mathcal{A}} = \{ p, q \}$ and ${\mathcal{Q}} = \{ 0.3 \}$. This induction task has more than one solution. For instance, $\overline {H_3}$, $\overline {H_4}$, $\overline {H_5}$, $\overline {H_6}$ are all solutions of $T_3$, that is, $\{ \overline {H_3}, \overline {H_4}, \overline {H_5}, \overline {H_6} \} \subseteq \mathit{ILP_{LPoSM}}(T_3)$, where $\overline {H_3} = \{ (p \leftarrow , 0.3), (q \leftarrow p, 0.3) \}$, $\overline {H_4} = \{ (p \leftarrow q, 0.3), (q \leftarrow , 0.3) \}$, $\overline {H_5} = \{ (p \leftarrow , 0.3), (q \leftarrow , 0.3) \}$, and $\overline {H_6} = \{ (p \leftarrow , 0.3), (q \leftarrow , 0.3), (q \leftarrow p, 0.3), (p \leftarrow q, 0.3) \}$.

For induction task $T_4 = {\langle { \emptyset , \{ \{ (p, 0.3), (q,0.3) \} \}, \{ \{ (p, 0.3), (q,0.3) \} \}}\rangle }$, it is obvious that $\mathit{ILP_{LPoSM}}(T_4) = \emptyset$.

For induction task $T_5 = {\langle { \{ (p \leftarrow , 1) \}, \{\{(q,1),(p,1)\}\}, \{\{(q,1)\}\} }\rangle }$, it can be checked that $\{ (q \leftarrow , 1) \} \in \mathit{ILP_{LPoSM}}(T_5)$.
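The claims about $T_3$, $T_4$, and $T_5$ can be checked by brute force on such small programs. The Python sketch below enumerates candidate atom sets, keeps those whose reduct reproduces them, and then tests whether every positive example and no negative example is among the resulting poss-stable models. This reading of the two solution conditions is taken from the informal description of the task. Numeric weights, the rule encoding `(head, positive_body, negative_body, weight)`, and all function names are assumptions made for illustration; this is not the ilpsm algorithm.

```python
from itertools import combinations

def reduct(program, atoms):
    """Possibilistic reduct: drop blocked rules and strip negative bodies."""
    return [(h, pos, (), w) for (h, pos, neg, w) in program
            if not set(neg) & set(atoms)]

def cn(definite_program):
    """Least fixpoint of the consequence operator (max over min-weights)."""
    interp = {}
    while True:
        nxt = {}
        for head, pos, _neg, alpha in definite_program:
            if all(a in interp for a in pos):
                beta = min([alpha] + [interp[a] for a in pos])
                nxt[head] = max(nxt.get(head, beta), beta)
        if nxt == interp:
            return interp
        interp = nxt

def psm(program):
    """All poss-stable models, by exhaustive search over atom sets."""
    atoms = sorted({h for h, _, _, _ in program}
                   | {a for _, pos, neg, _ in program for a in pos + neg})
    models = []
    for k in range(len(atoms) + 1):
        for s in combinations(atoms, k):
            m = cn(reduct(program, s))
            if set(m) == set(s):
                models.append(m)
    return models

def is_solution(background, hypothesis, e_pos, e_neg):
    # List concatenation stands in for the union of the two programs; the
    # operator keeps the maximum weight, so duplicate rules are harmless.
    models = psm(background + hypothesis)
    return all(e in models for e in e_pos) and not any(e in models for e in e_neg)

E = {"p": 0.3, "q": 0.3}
H3 = [("p", (), (), 0.3), ("q", ("p",), (), 0.3)]
H4 = [("p", ("q",), (), 0.3), ("q", (), (), 0.3)]
H5 = [("p", (), (), 0.3), ("q", (), (), 0.3)]
H6 = H5 + [("q", ("p",), (), 0.3), ("p", ("q",), (), 0.3)]

print([is_solution([], h, [E], []) for h in (H3, H4, H5, H6)])  # [True, True, True, True]
print(is_solution([], H5, [E], [E]))                            # False, as for T_4
print(is_solution([("p", (), (), 1)], [("q", (), (), 1)],
                  [{"q": 1, "p": 1}], [{"q": 1}]))              # True, as for T_5
```

The search is exponential in the number of atoms, so it is only meant for sanity checks on toy tasks.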

We recall that any two different stable models of an NLP are $\subseteq$-incomparable. Namely, $\mathit{SM}(P)$ of any NLP $P$ is $\subseteq$-incomparable. For a poss-NLP, there is a similar property. Before formally stating the property, we note that the (in)comparability of interpretations can be similarly defined for poss-interpretations.

Definition 3.2 (Incomparability for possibilistic interpretations). Let $\overline {I}$ and $\overline {J}$ be two possibilistic interpretations, and $\overline {S}$ be a set of possibilistic interpretations.

1. $\overline {I}$ and $\overline {J}$ are comparable, written as $\overline {I} \parallel \overline {J}$, if $I\subseteq J$ or $J\subseteq I$. Otherwise, they are incomparable, written as $\overline {I} \not \parallel \overline {J}$.
2. $\overline {I}$ is incomparable w.r.t. $\overline {S}$, written as $\overline {I} \not \parallel \overline {S}$, if $\overline {I} \not \parallel \overline {J}$ for every $\overline {J}\in \overline {S}$. Otherwise, $\overline {I}$ is comparable w.r.t. $\overline {S}$, written as $\overline {I} \parallel \overline {S}$.
3. $\overline {S}$ is incomparable if $\overline {I} \not \parallel \overline {J}$ for every pair of different interpretations $\overline {I}\in \overline {S}$ and $\overline {J} \in \overline {S}$. Otherwise, $\overline {S}$ is comparable.
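The three notions in Definition 3.2 only look at the atoms of the interpretations, not at their weights. A small Python sketch makes this explicit. Interpretations are encoded as dicts from atoms to weights; this encoding, the function names, and the sample interpretations are illustrative assumptions.

```python
from itertools import combinations

def comparable(i, j):
    """I || J: the atom sets are related by inclusion (weights are ignored)."""
    a, b = set(i), set(j)
    return a <= b or b <= a

def incomparable_wrt(i, s):
    """I is incomparable w.r.t. S: I is incomparable with every member of S."""
    return all(not comparable(i, j) for j in s)

def is_incomparable_set(s):
    """S is incomparable: every pair of different members is incomparable."""
    return all(not comparable(i, j) for i, j in combinations(s, 2) if i != j)

# Hypothetical interpretations
I = {"a": 0.2, "b": 0.7}
J = {"a": 0.9}
K = {"c": 0.5}

print(comparable(I, J))                # True: {a} is a subset of {a, b}
print(comparable(I, K))                # False
print(incomparable_wrt(K, [I, J]))     # True
print(is_incomparable_set([I, J, K]))  # False, because of I and J
```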

By the minimality of stable models, two different $\subseteq$-comparable interpretations cannot simultaneously be poss-stable models of the same poss-NLP.

Proposition 3.1 (Incomparability between poss-stable models). Given two different possibilistic interpretations $\overline {I}$ and $\overline {J}$ such that $\overline {I} \parallel \overline {J}$, $\{ \overline {I}, \overline {J} \} \not \subseteq \mathit{PSM}(\overline {P})$ for any poss-NLP $\overline {P}$.

Intuitively, two different comparable possibilistic interpretations cannot simultaneously appear in the set of poss-stable models of the same poss-NLP. This result is useful for optimizing the algorithms for solving an induction task $T = {\langle { \overline {B}, E^+, E^-}\rangle }$ for poss-NLPs, as it provides two heuristics for early termination of some search paths. On the one hand, the induction task has no solution if two positive examples in the given $E^+$ are comparable, as in Example 3.2. On the other hand, a negative example $\overline {e} \in E^-$ can be ignored before the solving process if $\overline {e}$ is comparable with, but different from, a positive example in $E^+$.

Example 3.2.

Let $\overline {S} = \{ \overline {I}, \overline {J} \}$ be a set of possibilistic interpretations, where $\overline {I} = \{ (p,0.3), (q,0.5) \}$ and $\overline {J} = \{ (p,0.4), (q,0.4) \}$. It is evident that $\overline {I} \parallel \overline {J}$, so by Proposition 3.1, $\{ \overline {I}, \overline {J} \} \not \subseteq \mathit{PSM}(\overline {P})$ for any poss-NLP $\overline {P}$. Thus, $T_{21} = {\langle {\emptyset , E^+, \emptyset }\rangle }$ has no solution when $E^+ = \overline {S}$ or $E^+ = \{ \{ (p,0.3), (q,0.5) \}, \{ (p, 0.4) \} \}$.
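The two consequences of Proposition 3.1 can be turned into a cheap pre-check that runs before any search for a hypothesis. The Python sketch below is one possible way to do this and is not part of the algorithms of the paper. The function name `precheck` and the convention of returning `None` for "no solution" are assumptions; interpretations are dicts from atoms to weights.

```python
from itertools import combinations

def comparable(i, j):
    """Atom sets related by inclusion; weights play no role."""
    a, b = set(i), set(j)
    return a <= b or b <= a

def precheck(e_pos, e_neg):
    """Return None if two different positive examples are comparable
    (then no solution exists). Otherwise return the negative examples
    that still need attention: those equal to a positive example, or
    incomparable with every positive example."""
    for i, j in combinations(e_pos, 2):
        if i != j and comparable(i, j):
            return None
    return [e for e in e_neg
            if e in e_pos or not any(comparable(e, p) for p in e_pos)]

I = {"p": 0.3, "q": 0.5}
J = {"p": 0.4, "q": 0.4}

print(precheck([I, J], []))                         # None
print(precheck([I, {"p": 0.4}], []))                # None
print(precheck([I], [{"p": 0.4}, {"r": 0.2}]))      # [{'r': 0.2}]
```

A negative example that coincides with a positive one is deliberately kept, since it makes the task unsolvable rather than ignorable.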

In the process of extracting a poss-NLP from both positive and negative examples, we are going to obtain some other necessary conditions for a solution of the induction task, which will be useful for implementation of algorithms for induction for poss-NLPs.

By Proposition 2.2, $\mathit{PSM}(\overline {P}) = \overline {S}$ implies $\mathit{SM}(P) = S$, but not vice versa.

Example 3.3.

Let $\overline {S} = \{ \overline {I}, \overline {J} \}$ and $\overline {P} = \{ \overline {r_1}, \overline {r_2}, \overline {r_3} \}$ where $\overline {I} = \{ (p,0.3), (q,0.5) \}$, $\overline {J} = \{ (p,0.4), (r,0.4) \}$, $\overline {r_1} = (p \leftarrow ,0.3)$, $\overline {r_2} = (q \leftarrow \textit {not} \, r, 0.6)$, and $\overline {r_3} = (r \leftarrow \textit {not} \, q,0.4)$. It is evident that $\mathit{SM}(P) = S$ but $\mathit{PSM}(\overline {P}) \neq \overline {S}$.

We note that no change to the weights of the rules in $\overline {P}$ yields a poss-NLP $\overline {H}$ (with $H = P$) that satisfies the condition $\mathit{PSM}(\overline {H}) = \overline {S}$. To see this, assume to the contrary that $\mathit{PSM}(\overline {H}) = \overline {S}$ for some $\overline {H}$ obtained from $\overline {P}$ by updating the weights of its three rules. Since $\overline {I} \in \mathit{PSM}(\overline {H})$ and $r_1$ is the only rule in $P$ that supports the atom $p$, the weight $\alpha _1$ of $r_1$ has to be $0.3$. On the other hand, $\overline {J} \in \mathit{PSM}(\overline {H})$ would imply $\alpha _1 = 0.4$ by the same argument, a contradiction.
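The argument can be spelled out by computing the possibilistic stable models for arbitrary weights. The derivation below is a sketch, assuming strictly positive weights $\alpha _1, \alpha _2, \alpha _3$ and writing $\overline {H}^{X}$ for the reduct of $\overline {H}$ with respect to $X$ (a notation assumed here for illustration).

```latex
\begin{align*}
\overline{H} &= \{ (p \leftarrow, \alpha_1),\ (q \leftarrow \textit{not}\, r, \alpha_2),\ (r \leftarrow \textit{not}\, q, \alpha_3) \}, \\
\overline{H}^{\{p,q\}} &= \{ (p \leftarrow, \alpha_1),\ (q \leftarrow, \alpha_2) \}, \\
\overline{H}^{\{p,r\}} &= \{ (p \leftarrow, \alpha_1),\ (r \leftarrow, \alpha_3) \}, \\
\mathit{PSM}(\overline{H}) &= \{ \{ (p,\alpha_1), (q,\alpha_2) \},\ \{ (p,\alpha_1), (r,\alpha_3) \} \}.
\end{align*}
```

The atom $p$ therefore carries the same necessity $\alpha _1$ in both possibilistic stable models, whereas $\overline {S}$ requires $0.3$ in $\overline {I}$ and $0.4$ in $\overline {J}$.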

From the above example, given a collection $\overline {S}$ of poss-interpretations, an ordinary NLP $H$ can be constructed from $\overline {S}$ such that $\mathit{SM}(H) = S$, but there may be no $\overline {H}$ such that $\mathit{PSM}(\overline {H}) = \overline {S}$. This shows that, due to the introduction of weights in rules, the problem of induction for poss-NLPs is not as easy as in the case of ordinary NLPs.

In the rest of this section, we investigate some properties of induction tasks for poss-programs, in particular the existence of induction solutions. To this end, we first introduce two notions that are used for characterizing rules that can cover a candidate model (supporting positive examples and blocking negative examples, respectively).

Let $\overline {S}$ be a set of possibilistic interpretations. We define $\mathit{PPE}(\overline {S}) = \bigcup _{\overline {I} \in \overline {S}} \mathit{PPE}(\overline {I})$, where $\mathit{PPE}(\overline {I}) = \{ (x \leftarrow \textit {not} \, ({\mathcal{A}} - I), \alpha ) \mid \text{ for all } (x,\alpha ) \in \overline {I} \}$.

In an induction task, if $\overline {I}$ is in $E^+$, then $\mathit{PPE}(\overline {I})$ contains the possibilistic rules that potentially cover the positive example $\overline {I}$. Thus, $\mathit{PPE}(E^+)$ contains the rules that potentially cover $E^+$ (i.e., all positive examples) if $E^+$ is incomparable.

Proposition 3.2 (Satisfiability for program $\mathit{PPE}(\overline {S})$). For a given set $\overline {S}$ of possibilistic interpretations, $\mathit{PSM}(\mathit{PPE}(\overline {S})) = \overline {S}$ if $\overline {S}$ is incomparable.

Example 3.4.

Let $T_{22} = {\langle {\emptyset , E^+, \emptyset }\rangle }$ be an induction task, where $E^+ = \{ \{ (p, 0.5), (r, 0.5) \}, \{ (q, 0.3), (r, 0.8) \} \}$. Then ${\mathcal{A}} = \{ p,q,r \}$, ${\mathcal{Q}} = \{ 0.3, 0.5, 0.8 \}$ and $0.3 \leq 0.5 \leq 0.8$. Obviously, $E^+$ is incomparable. It is easy to see that $\mathit{PSM}(\mathit{PPE}(E^+)) = E^+$. So, $\mathit{PPE}(E^+) \in \mathit{ILP_{LPoSM}}(T_{22})$, where $\mathit{PPE}(E^+) = \{ (p \leftarrow \textit {not} \, q, 0.5), (r \leftarrow \textit {not} \, q, 0.5), (q \leftarrow \textit {not} \, p, 0.3), (r \leftarrow \textit {not} \, p, 0.8) \}$.
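The equality $\mathit{PSM}(\mathit{PPE}(E^+)) = E^+$ can be verified by hand. The following sketch writes $\overline {P} = \mathit{PPE}(E^+)$ and $\overline {P}^{X}$ for the reduct with respect to $X$ (a notation assumed here for illustration), and considers the two classical candidates $\{ p,r \}$ and $\{ q,r \}$.

```latex
\begin{align*}
\overline{P}^{\{p,r\}} &= \{ (p \leftarrow, 0.5),\ (r \leftarrow, 0.5) \}
  &&\text{with possibilistic least model } \{ (p,0.5), (r,0.5) \}, \\
\overline{P}^{\{q,r\}} &= \{ (q \leftarrow, 0.3),\ (r \leftarrow, 0.8) \}
  &&\text{with possibilistic least model } \{ (q,0.3), (r,0.8) \}.
\end{align*}
```

For every other subset $X$ of ${\mathcal{A}}$, the least model of the reduct differs from $X$, so these are the only two possibilistic stable models.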