1. Introduction

The activities of social media companies in the realm of online communication have emerged as one of the most pressing challenges for liberal democracies in our time. With the rapid and widespread growth in the use of these companies’ platforms, they have become spaces where various forms of illegal content circulate – such as fraud or scams, child sexual abuse material, insults, defamation, infringements of intellectual property rights, and, depending on the legal system, incitement to violence or hate speech.Footnote 1 In response to this public problem, national and international public actors committed to human rights, democracy and the rule of law have engaged in several paths to find the most appropriate response.Footnote 2 Paradoxically, however, recent efforts to regulate social media have led to accusations that such measures undermine freedom of expression and democratic values, highlighting the deeply contested nature of this issue.Footnote 3

In recent years, the European Union (EU) has progressively put in place the most advanced and complex set of initiatives addressing various issues regarding online communications – ranging from copyright violationsFootnote 4 and content that qualifies as a ‘terrorist offence’,Footnote 5 to other types of illegal content such as incitement to violence or hate speechFootnote 6 – alongside policy instruments such as codes of conduct. It has presented its legal framework as a move towards better protection of the fundamental rights of individuals.Footnote 7 In practice, the EU has chosen to build its legal framework upon platform-led content moderation, a set of practices developed by social media companies according to their own principles, grounded in market logics.Footnote 8 In this context, the EU has chosen to entrust social media companies with the responsibility of identifying and removing illegal content on the basis of EU and Member State law, thereby transforming these private actors into central nodes in the implementation of EU and national legal rules that have fundamental rights implications.

This paper aims to investigate in greater detail this legal framework and the role that social media companies play within it. To do this, the paper draws on regulation studies and on one of the analytical frameworks they propose, the Regulator–Intermediary–Target (RIT) model. Although other studies have examined social media platforms’ governance from a regulatory intermediation perspective, they mainly focus on how platforms enlist third parties to support their own policy developmentFootnote 9 or how these third parties advise them in the context of their own ‘appeal’ procedures.Footnote 10 This paper instead considers the scenario in which social media companies themselves act as regulatory intermediaries, in the context of the implementation of the law on illegal content online in the EU.Footnote 11 The paper first presents four main developments that have marked the legal framework applicable to social media and illegal content in the EU. Then, it applies the RIT model to shed light on the new collaborative relationship between public authorities and social media companies, arguing that platform-led content moderation is being institutionalised. Finally, the paper critically discusses the implications of such a legal framework, both from the point of view of the tension between the self-interest of companies and their mandate to implement the law and from that of the relationship between the EU and the adoption of market-based forms of governance. In this regard, this paper contributes to two fields. First, from a critical legal perspective, it contributes to studies on EU digital law by analysing the market-driven approach that characterises the current European legal framework for illegal content online. Second, from the perspective of regulation studies, it introduces a new case of regulatory intermediation in the digital context, that of social media platforms.

The following section outlines the key elements of regulation studies that will shed light on the legal framework (Section 2). Then, the paper highlights four main developments that help understand the evolution of the European legal framework regarding illegal content online (Section 3). Section 4 then applies the RIT model to the current approach of the EU, showing how the European legal framework is in effect institutionalising platform-led content moderation. Finally, Section 5 critically discusses the implications of this framework.

2. Regulation studies as an analytical lens

This section aims to briefly present some key elements from the field of regulation studies and provide insights into why regulatory concepts are fruitful tools for analysing the evolution of the European legal framework for illegal content online.

In a nutshell, regulation studies have emerged in response to significant transformations in the organisation and functioning of the state during the late 1970s and early 1980s. From this perspective, regulationFootnote 12 refers to ‘intentional, organised attempts to manage or control risk or the behaviours of a different party through the exercise of authority, usually through the use of mechanisms of standard-setting, monitoring and information-gathering and behaviour modification to address a collective tension or problem’.Footnote 13 This definition is intended to be broad in order to be inclusive; indeed, in practice, the term ‘regulation’ is polysemic, as it is used in a variety of ways within perspectives that claim to be part of the field of regulation studies. Some analyses use the term in its ‘state-centred’ dimension, referring to it as a synonym for ‘state-made law’.Footnote 14 Other perspectives see regulation as a social phenomenon, where regulation is understood as involving various social domains and a multitude of actors beyond the state.Footnote 15 Early contributions to this field introduced the notion of the ‘regulatory state’, describing ‘a series of changes in the nature and functions of the state that have resulted from a shift in the prevailing style of governance following sweeping reforms in the public sector within many industrialised states throughout the 1980s and 1990s’.Footnote 16 The regulatory state thus describes a form of organisation where the state governs societal developments from a distance, rather than through the direct provision of goods and services as was characteristic of the welfare state in the post-war period.Footnote 17 In this perspective, the state plays more of a steering role,Footnote 18 acting as a ‘manager of political authority’ rather than a monopolist of this authority that aims to change the societal world through ‘command-and-control’ instruments.Footnote 19 In other words, it seeks to ‘orchestrate’ other social actorsFootnote 20 in a ‘decentred’ way.Footnote 21 One such other social actor that regulation studies have focused on is the figure of the independent regulatory agency,Footnote 22 but the field has also expanded its analyses to other institutions, actors and activities.Footnote 23

Regulation studies offer complementary tools to legal studies. In the latter, law is a synonym of regulation. (Statute) Law is primarily understood as a binding set of norms, adopted by a country’s legislative body according to very specific rules and procedures, and enforced by judicial authorities, in accordance with the principle of the separation of powers.Footnote 24 In democratic regimes, law is seen as the primary instrument of state action, owing notably to its strong legitimacyFootnote 25 – which stems from its adoption through a process aimed at substantively emphasising democratic representationFootnote 26 – and to its mandatory character – supported by the coercive power of the state, which is supposed to ensure its effective implementationFootnote 27 (regulation studies qualify this as a ‘command-and-control’ form of actionFootnote 28). These elements combined underline that the law is a central element of state action, which, at least in liberal democracies, is adopted with a view to serving the public interest; that is, it protects the rights of individuals while balancing them against the rights of others, just as it serves to hold power accountable.Footnote 29 It is the analysis of laws adopted according to these processes that has implications for legal science, particularly from the point of view of the interpretation of norms as carried out by legal doctrine and the courts.Footnote 30

Over the past decades, the increasing pace of globalisation and the growing interconnection between countries have diminished the law’s status as the primary tool of government action, notably in public international law.Footnote 31 The transnational dimension of contemporary political issues and actors challenges the territorial boundaries of the states – extraterritoriality of the law being an exception with its own challenges and geopolitical implications.Footnote 32 Issues such as climate change, the rise of the Internet, and the growth of a globally interconnected financial sector with systemically important actors have underscored the limitations and inadequacies of state-level legal responses.Footnote 33

From an academic point of view, this scope broadens the analysis of normative phenomena beyond law and legal doctrine analysis. As such, grounding an analysis in a regulation studies perspective means adopting a lens that goes beyond the mere analysis of legal texts and considers all regulatory developments and actors within a broader perspective in order to understand the complexity of the normative phenomenon at play. This is particularly true of the issue of the legal framework for illegal content online in the EU, the evolution of which is sketched below.

3. Four key developments of the European legal framework for illegal content online

This section outlines four key developments to provide a general overview of the evolution of the context surrounding the European legal framework for illegal content online. The first concerns the broader human rights framework, particularly the right to freedom of expression, which shapes the landscape for particular issues relating to illegal speech (A). The second is the rise of social media platforms as transformative actors in the communication space. Operating in a largely unregulated environment at their beginnings, these platforms have developed their own systems of self-regulation (B). A third significant development is the response at the Member State level, where dissatisfaction with the failures of platform-led self-regulation prompted the adoption of national legislation to address illegal online content (C). Finally, the EU has moved to consolidate these fragmented national responses by establishing a more comprehensive legal framework, which now serves as the basis for regulating illegal online speech across the Union (D).

A. The human right to freedom of expression

In liberal democracies, communication activities are understood primarily in terms of the principle of freedom of expression, which rests on the fundamental idea that individuals are granted the liberty to express themselves in the face of the state. From a legal perspective, Article 19 of the International Covenant on Civil and Political Rights (ICCPR) affirms the right to freedom of expression. In European law, Article 10 of the European Convention on Human Rights and Article 11 of the Charter of Fundamental Rights of the European Union, as well as provisions in the vast majority of Member States’ constitutions, also enshrine this principle.Footnote 34 The right to freedom of expression generally states that this freedom includes the right to hold opinions and to receive and impart information and ideas without interference by public authorities, regardless of frontiers. The right thus contains a dual dimension: an outward-facing aspect – the ability to express opinions – and an inward-facing one – the right to receive information.Footnote 35 Therefore, freedom of expression is viewed as a key component of a broader information ecosystem, whose health is necessary for democratic life and institutions.Footnote 36 This right is thus considered a cornerstone of any democratic society.Footnote 37

Nevertheless, liberal democratic regimes do not guarantee an absolute right to freedom of expression. Through their legal frameworks or their constitutional jurisprudence, they contain a system of restrictions that can be applied to this right. For example, Article 10(2) ECHR states that ‘the exercise of these freedoms, since it carries with it duties and responsibilities, may be subject to such formalities, conditions, restrictions or penalties as are prescribed by law and are necessary in a democratic society (…)’. Restrictions on the right to freedom of expression are therefore possible, provided that they are anchored in law, that they pursue a public interest, and that they are proportionate to the aim pursued.Footnote 38 In such cases, judicial authorities (up to the European Court of Human Rights) may review the legality of such restrictions.Footnote 39

Furthermore, some legal texts have anchored the idea that the right to freedom of expression can also be limited for specific types of speech, thus codifying the abovementioned regime of restrictions. As a consequence, certain types of speech are considered ‘illegal’, due to the potential harm they pose to the rights and interests of others.Footnote 40 Such an approach – based on the possibility of restricting freedom of expression in specific cases – is widely shared across the EU.Footnote 41 At the international level, the United Nations (UN) has also made this possibility clear. For example, Article 20 ICCPR imposes a duty on States to prohibit all forms of ‘national, racial or religious hatred’: on this basis, States are allowed to adopt provisions that restrict freedom of expression in cases where this right is used by individuals to spread speech that constitutes forms of forbidden hatred.Footnote 42 Despite its recognition by the UN, not all Western liberal democratic regimes share this approach. The United States (US) notably has a more absolutist interpretation of freedom of speech, guaranteed by the First Amendment of the US Constitution. Therefore, its legal framework broadly protects controversial forms of expression that would be considered illegal in the EU.Footnote 43

Accordingly, as a principle, individuals enjoy the guarantee of freedom of expression; the State may restrict that freedom under strictly defined conditions; and judicial authorities may review the legality of such restrictions. However, divergences can also occur between liberal legal regimes on how lawmakers and judicial authorities can balance freedom of expression with other social interests, such as human dignity and equality.Footnote 44

B. Social media platforms and the emergence of a self-regulated online communication environment

The massive expansion of information technologies since the beginning of the 21st century has led to a change in communication activities between individuals and other societal actors such as businesses or governments, which now increasingly occur through platforms operated by social media companies.

From a legal perspective, during the initial debates on Internet regulation in the 1990s, both the US and the EU adopted legal regimes granting immunity to companies that provide communication services between third parties. In the US, this immunity was codified in 1996 through Section 230 of the Communications Decency Act (CDA), enacted by Congress with the aim of fostering the growth of emerging Internet companies by shielding them from legal obligations that might hinder their economic development.Footnote 45 Under this provision, companies offering online communication services are not held liable for unlawful content posted by third parties on their platforms. This exemption stands in contrast to the editorial responsibility traditionally imposed on press and audiovisual media.Footnote 46 In 2000, the EU followed a similar approach with the adoption of the E-commerce Directive (ECD), which enshrined the same principle of immunity, with a slight modification: companies operating in the EU that facilitate online communication are not held legally responsible for the presence of illegal content on their services, provided they act promptly to remove such content upon notification (a model known as ‘conditional liability exemption’).Footnote 47 As a result, from the outset, the legal framework governing companies engaged in enabling and expanding online communication has been characterised by a primary emphasis on promoting economic development and limiting state intervention.Footnote 48

With the deployment and increasingly widespread use of social media platforms, a significant portion of communications now occurs online.Footnote 49 Social media companies provide services that enable users to communicate and share content.Footnote 50 From this perspective, over the past two decades, their platforms have grown into primary arenas for public discourse, increasingly shaping democratic debates and social interactions.Footnote 51 However, social media companies are primarily businesses that aim to make a profit. To do so, their business model consists mainly of collecting personal data from users of their services in order to create consumer profiles that are sold to third-party companies for targeted and highly personalised advertising.Footnote 52 As a result, their business model incentivises maximising user engagement because it enables the companies to collect more user data.Footnote 53 To achieve this, platforms amplify the virality of content – that is, how quickly and widely a piece of content can spread across the internet – notably by implementing algorithmic filtering mechanisms that promote highly engaging content and that aim to keep users on the platforms as long as possible.Footnote 54 As people increasingly engage in publishing and sharing content online, there is growing concern that algorithmic amplification may be favouring sensationalist, misleading or harmful material.Footnote 55

In this context, companies have had to develop instruments and tools that limit content that consumers find unpleasant, to ensure that individuals continue using social media platforms and thereby enable the companies to continue to collect their data. It is on this basis that companies have developed their own self-regulatoryFootnote 56 practices, now commonly referred to as ‘content moderation’.Footnote 57 Indeed, the challenge for social media companies is to ensure that users spend as much time as possible using their services. If companies algorithmically amplify the virality of content on their platforms to this end, they must also ensure that this amplification does not exceed certain limits, causing users to leave their platforms altogether because they have been confronted with irrelevant or excessively shocking content.Footnote 58 The practices of content moderation are therefore of a self-regulatory nature because they aim to eliminate the negative externalities resulting from content amplification on social media companies’ platforms. In this sense, content moderation serves as a form of ‘risk mitigation for the platforms’ business modelFootnote 59 and echoes the logic of Internet self-regulation.Footnote 60

Content moderation practices rely on a complex architecture, which extensively incorporates automated systems. Over time, social media platforms have developed increasingly sophisticated content moderation practices, which rely on a blend of automated and human review processes, to remove or de-rank unwanted types of content – that is, not only content that contravenes their terms of use but all types of content that could constitute negative externalities and lead users to turn away from the companies’ services.Footnote 61 Content moderation practices were initially informal and handled on a case-by-case basis by company executives.Footnote 62 As platforms expanded, moderation practices became more standardised and automated, relying on algorithm-driven detection and filtering technologies.Footnote 63 These technologies include hash-matching methods, which compare uploaded content against a database of material previously identified as illegal or harmful, and classification techniques, which use probabilistic models to assess the likelihood that a given piece of content belongs to a specific category, such as ‘hate speech’.Footnote 64 This ‘industrialisation’ of content moderationFootnote 65 involves multiple stakeholders: executives define policies and change community guidelines and standards,Footnote 66 engineers refine automated moderation tools accordingly, and outsourced human moderators handle complex cases.Footnote 67
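
To make the two families of techniques mentioned above more concrete, the following minimal Python sketch illustrates the general logic of hash-matching and probabilistic classification. It is an illustration only and does not describe any company's actual system: the hash database, the term weights, the bias and the two thresholds are all hypothetical, and an exact cryptographic hash is used here as a simple stand-in for the perceptual hashing that deployed systems may rely on.

```python
import hashlib
import math


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the content as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical database of hashes of material previously identified
# as illegal or harmful (assumption: exact-match hashing only).
KNOWN_HASHES = {sha256_hex(b"previously flagged file")}

# Hypothetical weights of a toy logistic model for one category.
# Real classifiers are trained on labelled data; these are made up.
TERM_WEIGHTS = {"slur_a": 2.5, "slur_b": 3.0, "threat": 1.5}
BIAS = -2.0


def hash_match(upload: bytes) -> bool:
    """Hash-matching: is the upload identical to known material?"""
    return sha256_hex(upload) in KNOWN_HASHES


def category_probability(text: str) -> float:
    """Classification: estimated likelihood that the text belongs
    to the category, computed with a logistic function."""
    score = BIAS + sum(TERM_WEIGHTS.get(tok, 0.0) for tok in text.lower().split())
    return 1.0 / (1.0 + math.exp(-score))


def moderate(upload: bytes, remove_at: float = 0.8, review_at: float = 0.5) -> str:
    """Combine both techniques; uncertain cases go to human review."""
    if hash_match(upload):
        return "remove"
    p = category_probability(upload.decode("utf-8", errors="ignore"))
    if p >= remove_at:
        return "remove"
    if p >= review_at:
        return "human_review"
    return "keep"
```

The intermediate `human_review` outcome reflects the blend of automated and human review described above: where the thresholds are set determines how many decisions are automated and how many reach human moderators, which is a design choice of the platform rather than a legal determination.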

The emergence of content moderation as a form of self-regulation is thus a direct consequence of a specific legal environment, one in which the state remained largely absent for an extended period. From the perspective of companies, content moderation primarily serves an economic and commercially driven purpose, aimed at retaining users on their platforms. Simultaneously, it can also function as a strategy to try to keep state intervention at bay, by aiming to demonstrate that stricter governmental oversight is unnecessary, on the grounds that companies are capable of preventing harm on their services on the basis of their own terms of use.Footnote 68 In this sense, content moderation also fulfils a regulatory-oriented function.Footnote 69

C. The short-lived legislative responses of EU Member States to illegal online content

Following several media scandals in the 2010s, several EU Member States began to view the self-regulation of social media platforms as insufficient. One of the major issues for these Member States was the perceived inadequate respect for their legal regimes: content that was illegal under their national laws continued to proliferate online without being removed.Footnote 70 These Member States interpreted this as a sign of the inadequacy of self-regulatory approaches and considered adopting binding legislation for social media platforms, thereby modifying the liability exemption regime.Footnote 71

The most significant manifestation of this shift was seen in Germany, which adopted the ‘Netzwerkdurchsetzungsgesetz’ (NetzDG) in 2017.Footnote 72 The adoption of similar legislation also occurred in France (with the ‘Loi Avia’ in 2020) and in Austria (with the ‘Kommunikationsplattformengesetz’ in 2021). In terms of their approach, these three laws pursued a similar and rather simple goal – namely, to ensure that ‘what is illegal offline must also be illegal online’.Footnote 73 On this basis, they sought to introduce an obligation for social media companies to remove illegal content in accordance with certain provisions of their national criminal law. In the case of GermanyFootnote 74 and Austria,Footnote 75 companies were required to remove such content within a predefined time frame – ranging from 24 hours for manifestly illegal content to seven days for content whose illegality was harder to determine. In the case of France, companies were required to remove such content within an even shorter time frame, which could go down to one hour.Footnote 76

These three laws faced criticism and were ultimately abandoned. In France, the provisions of the law imposing removal obligations on social media companies never came into force. Indeed, the French Constitutional Council ruled these provisions unconstitutional, arguing that the short time frames imposed for content removal posed a serious threat to the freedom of expression of French citizens using social media platforms.Footnote 77 In Germany, although the law was enacted and enforced, it faced similar criticism. Critics warned that the law created incentives for the over-removal of content (because the companies were not encouraged to conduct a detailed analysis of the legality of pieces of content, but rather to remove this content to avoid liability), thereby undermining users’ freedom of expression on social media platforms.Footnote 78 In Austria, the law also came into force but was immediately challenged in domestic courts by the affected companies, who argued that it was incompatible with EU law provisions that ensured the proper functioning of the single market. Following legal proceedings, the Court of Justice of the European Union (CJEU) confirmed this incompatibility, paving the way for a revision of the law at the domestic level.Footnote 79 As a consequence, when the EU announced its intention to adopt a comprehensive legal framework for social media platforms, Austria – as well as Germany – declared that their national laws would be withdrawn once the EU framework entered into force.Footnote 80 In 2025, none of these laws are in force.

The initiatives at the Member State level – thought of as responses to the limitations of platforms’ self-regulation – and their subsequent rapid abandonment show the difficulty of ‘simply’ applying the legal regime applicable offline to the online environment without adaptation to its technical and market-driven specificities. This can be explained by the fact that the rise of internet-mediated communication is particularly complex from a socio-technical point of view. Platforms enable users to express themselves on a large scale, both through direct interactions with other users and by commenting and discussing in forums or algorithmically curated feeds.Footnote 81 Furthermore, online, the issue of communication is viewed through a different lens than in the analogue world: while it still involves citizens expressing their opinions, this expression occurs through the economic and technological affordances of digital platforms. Consequently, discussion around regulation is framed in different terms – focusing on ‘content’ that is ‘published’ by ‘users’.Footnote 82 It is primarily through this lens that issues of expression and communication are addressed. This economic environment and new mode of communication complicate the enforcement mechanisms for illegal speech from a fundamental rights-oriented perspective, as applicable offline.Footnote 83 Two characteristics of the online environment in particular challenge such an approach, both linked to the fact that the online environment is controlled by private platforms. First, the technological affordances of these platforms enable the instantaneous and global dissemination of content, resulting in a volume and velocity of speech that exceed the capacities of conventional enforcement institutions, mainly judicial authorities.Footnote 84 Second, the transnational nature of platforms creates jurisdictional ambiguities: it is often unclear which legal framework applies or which judicial authority has jurisdiction in cases involving cross-border speech.Footnote 85 As a result, a significant portion of illegal online speech remains unprosecuted, even as its visibility and prevalence increase due to algorithmic amplification.Footnote 86

D. The EU’s attempt at a comprehensive approach for illegal online content

Since the Cambridge Analytica scandal in 2016,Footnote 87 and unlike the legislative responses of some Member States, the EU has embarked on a path that first encourages companies to standardise their content moderation practices and then formalises the agreement between companies while supplementing it with constitutional requirements.Footnote 88 This last step aims to provide what is known as a ‘comprehensive’ approach.Footnote 89

Three main features characterise the EU’s response. First, a gradual shift from non-binding to binding measures – spanning the spectrum from ‘soft law’Footnote 90 to ‘hard law’ instruments.Footnote 91 Second, a collaborative approach favouring co-regulation in partnership with the platforms themselves.Footnote 92 And third, a combination of elements that emphasise the desired balance between better protection of fundamental rights and better economic integration across the Union.Footnote 93

As an initial step, the EU introduced several codes of conduct that were jointly drafted with social media companies, which voluntarily committed to their principles. These include the EU Code of Conduct on Countering Illegal Hate Speech Online (2016),Footnote 94 the EU Code of Practice on Disinformation (initially adopted in 2018 and revised in 2022),Footnote 95 the EU Code of Practice against Terrorist Content Online (developed in 2015 within the EU Internet Forum), and, more recently, the EU Code of Conduct on Age-Appropriate Design, under development since 2023.

Partly in parallel, the EU has adopted several binding legislative acts – some of which either incorporate these earlier codes or replace them with formal legal obligations. For instance, the Code of Practice against Terrorist Content Online was superseded by the Regulation (EU) 2021/784 on addressing the dissemination of terrorist content online (TERREG).Footnote 96 Although not directly related to a code of conduct, the EU also adopted the Directive (EU) 2019/790 on Copyright in the Digital Single Market (CDSMD), which imposes binding copyright responsibilities on social media platforms.Footnote 97

Finally, the last legislative development to date is the Digital Services Act (DSA), adopted in 2022. This law constitutes a significant milestone in EU digital governance, as it pursues several objectives simultaneously. On the one hand, the DSA intends, according to the European Commission, to better protect the fundamental rights of social media platform users. To this end, this law introduces obligations for companies that include due diligence requirements,Footnote 98 enhanced transparency regarding platform decision-making processesFootnote 99 and the use of algorithmic systems,Footnote 100 better data access for researchersFootnote 101 and the involvement of third parties to support content moderation procedures.Footnote 102 On the other hand, this law also aims to promote economic integration, as it reaffirms the principle of the single market for digital services. Indeed, seeing Member States adopt their own legislation targeting social media platforms, the European Commission began to fear fragmentation of the EU’s internal market and felt the need to reaffirm the principle of a common market for digital services in the EU as a whole.Footnote 103 The DSA must therefore be seen as the successor to the ECD, whose immunity regime it incorporates and maintains – but with the addition of new procedural obligations, with the intention of adopting a comprehensive approach.Footnote 104 An important aspect of the DSA is its risk-based approach.Footnote 105 According to Articles 34, 35 and 37, very large online platforms (VLOPs) must conduct regular risk assessments, implement risk mitigation measures, and undergo regular independent audits.Footnote 106 Importantly, Article 45 elevates certain codes of conduct to binding status, notably the Code of Conduct on Countering Illegal Hate Speech Online and the Code of Practice on Disinformation.Footnote 107 These elements signal a shift towards a preventive regulatory paradigm.Footnote 108 Such a paradigm differs markedly from legal provisions that lay down precise obligations that the addressees of the provisions are expected to comply with, and where compliance is ensured by the threat of sanctions and monitored through ex post judicial proceedings.Footnote 109 Risk-based rules emphasise anticipatory regulatory governance mechanisms.Footnote 110 Furthermore, they require a greater involvement of private actors, who must put in place a risk management approach and are entrusted to act accordingly and in good faith. In this respect, social media companies are considered partners in a new form of governanceFootnote 111 – partners that can contribute to the development of rules, and which may be responsible for ensuring that these rules are implemented and properly enforced.

Thus, the EU’s legal framework for illegal content is built upon three main legal instruments (TERREG, CDSMD and the DSA), with the DSA acting as the cornerstone. Together, these three instruments represent the most recent and far-reaching effort to address the public issue of illegal online content on a large scale. To better understand the broader implications of this complex framework and how it operates in practice, the insights and tools offered by regulation studies can be particularly valuable.

4. Disentangling the EU legal framework with the RIT model

Regulation studies provide a series of tools and concepts that can be useful for analysing and understanding structures and dynamics in European digital law. The RIT modelFootnote 112 is one of these conceptual frameworks that can contribute to analysing and explaining laws that do not fit well into the ‘command-and-control’ logic.Footnote 113 Unlike legally binding instruments that rely on a vertical, top-down form of regulation between two actors (ie, the state imposing obligations on an entity that is hierarchically subordinate to it), the RIT model conceptualises regulation as a tripartite system, where ‘intermediaries (I) play diverse roles between the regulator (R) and the targets of regulation (T)’.Footnote 114 The involvement of regulatory intermediaries can arise in situations where these intermediaries possess capabilities that regulators lack or because they are perceived as more efficient or more legitimate in implementing or enforcing regulation. Several such capabilities exist, such as operational capacity (when regulators lack necessary resources or find it too costly to carry out a regulatory function themselves), expertise (when intermediaries possess specialised knowledge that the regulator lacks), independence (from the regulator and/or the target), or legitimacy (when the targets of regulation perceive intermediaries as more credible or legitimate enforcers of regulatory mandates than the regulator).Footnote 115

This section aims to use the RIT model to disentangle and better understand the nuances and implications of the European legal framework for illegal content online. First, Subsection A highlights how the provisions of the CDSMD, TERREG, and DSA impose an obligation on platforms to remove illegal content on the basis of both European law and the national laws of Member States. Subsection B then argues that these obligations effectively institutionalise the content moderation practices already put in place by social media companies, formalising them into legal enforcement processes and changing the nature of content moderation itself from the perspective of social media companies.

A. The obligation to remove illegal content

The approach of the EU is primarily grounded in three key legislative instruments, complemented by a range of codes of conduct. A closer look at the obligations contained in binding provisions reveals at least two different types: those that involve only the EU and the company in the regulatory relationship (eg, the obligations in the DSA that relate to transparency or due process in content moderation, where the company is expected to change its own practices); and those that include a third actor, the user and their online content (eg, obligations that relate to taking down illegal content on the platforms). This paper focuses exclusively on the latter.

The RIT model is particularly useful to make sense of tripartite regulatory relationships and, as such, can help to better understand this specific constellation within the EU’s legal framework. Across the different laws, there are several key legal provisions that establish an obligation for social media companies to remove illegal content on behalf of the EU, effectively mandating them by law to act as regulatory intermediaries. The first provision is Article 17(4) of the CDSMD, which requires ‘online content-sharing service providers’ to ensure that user-uploaded content does not infringe intellectual property rights.Footnote 116 If infringing content is found on their platforms, these companies may be held liable unless they act promptly to remove it. To assess whether content violates copyright, companies must interpret and apply relevant national and EU legal standards, removing content they determine to be illegal.Footnote 117 Notably, this obligation encompasses both ex post and ex ante monitoring, requiring platforms to respond to infringing content after publication and to prevent such content from being uploaded in the first place (if they have not secured a licence for the content and if the content has been notified to them in advance by the rightsholders).Footnote 118 A similar logic underpins the ‘removal orders’ provided for in Article 3 of TERREG. Under this provision, upon notification by a Member State that specific content qualifies as a ‘terrorist offence’, platforms are required to remove it within one hour.Footnote 119 The DSA, for its part, contains several provisions that fall within the scope of regulatory intermediation. Chief among these are the ‘orders to act against illegal content’ set out in Article 9 DSA, which are very similar to TERREG’s ‘removal orders’. This article requires platforms to take down content deemed illegal under EU or national law upon receiving an order from a judicial or administrative authority. 
To avoid liability, social media platforms must comply by removing the specified content.Footnote 120 In addition, the DSA provides other mechanisms through which judicial or administrative authorities can compel platforms to enforce the law on their behalf. This is the case with Article 16, which allows public authorities to report content using the same interfaces available to ordinary users. Under this provision, read in conjunction with Article 6 DSA, platforms may also be held liable if they fail to remove illegal content brought to their attention.Footnote 121 Furthermore, under Article 22 of the DSA, public authorities may be certified as trusted flaggers. In such situations, their content reports are given priority by platforms. This Article in fact formalises a practice that has already existed for some time (notably in the field of ‘counterterrorism’), where public authorities have put together ‘Internet Referral Units’ designed to report specific types of content to platforms on the Internet.Footnote 122

Together, the provisions entailed in these three legal instruments constitute a shift whereby the interpretation and implementation of the law concerning illegal content, traditionally the primary responsibility of judicial bodies, are now directly delegated to online platforms.Footnote 123 In its legal approach to illegal content online, the EU (R, the Regulator) has therefore opted to engage social media companies directly as regulatory intermediaries (I, the intermediary) with a view to removing illegal content posted by users (T, the target) on the basis of EU and Member State law.

B. Institutionalising pre-existing content moderation

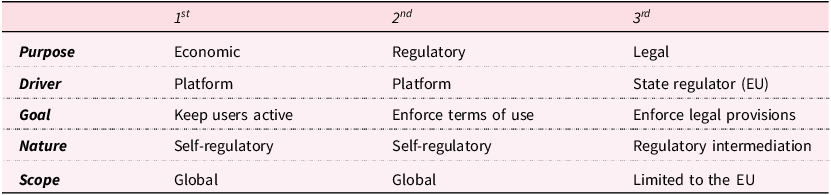

As explained above, social media companies already moderate content on their platforms, without it being required by law. They do it both for economic reasons – to keep users on their services for longer periods by presenting them with content they enjoy – and to try to keep the state regulator away – by showing that they can effectively self-regulate, notably by enforcing their own terms and conditions.Footnote 124 With the EU now mandating social media companies to remove illegal content online on its behalf, content moderation will be used (in the EU at least) to fulfil a regulatory intermediation mandate. Therefore, a new function is ‘added’ to content moderation from the perspective of social media platforms: content moderation now simultaneously serves as a process for platforms to filter content for users, enforce companies’ terms and conditions, and fulfil the European intermediation mandate. In other words, the obligation imposed on social media companies to remove illegal content online on the basis of EU law and Member State law is institutionalising platform-led content moderation. As a consequence, content moderation is no longer just a form of platform self-regulation but has become a genuine co-regulatory tool used by the EU to enforce the law via the obligation imposed on companies to act as regulatory intermediaries. From the perspective of platforms, content moderation now consists of three layers: the two original self-regulatory layers and a third layer imposed by the EU, which is distinct from, yet entangled with, the first two. This is summarised in Table 1.

Table 1. The three layers of content moderation (from the perspective of platforms)

Source: author’s elaboration.

It is important to acknowledge a key limitation of Table 1: it should not be inferred that, prior to the entry into force of the EU legal framework, platforms did not already engage in the removal of illegal content at the request of state authorities. On the contrary, such practices were already in place and have been the subject of considerable academic discussion, investigating the complex interactions and implications of state–platform relationships.Footnote 125 Rather than implying the absence of prior state involvement, Table 1 is meant to illustrate the emergence of an ‘institutionalised’ layer of content moderation. This evolution entails an increasing complexity in the roles that platforms are expected to fulfil, with significant implications that are examined in the following section.

5. Discussion

A. The issues of private firms’ self-interest and public disempowerment in regulatory intermediation frameworks

Regulatory intermediaries are typically independent regulatory agencies established to carry out a specific mandate. These agencies often resemble public authorities in terms of institutional codes but are organised to maintain a degree of independence from the state regulator, often operating ‘outside the structural hierarchy of public administrations’.Footnote 126 Such agencies are common across industrialised nations, for example in sectors like financial governance or telecommunications. By contrast, it is far less common for private firms to assume the role of regulatory intermediaries, although examples do exist. When they do, these firms are usually specialised entities tasked with performing a clearly defined regulatory function on behalf of the state. In most cases, their core activity involves compliance activities, helping other firms adhere to governance standards.Footnote 127 The academic literature on regulatory intermediation includes several empirical studies that explore the specific challenges arising when private firms, rather than independent agencies, act as regulatory intermediaries. One such study examines regulatory governance in the building sector. It underlines how, in this sector, rule development, rule implementation, monitoring and enforcement increasingly fall under the purview of private regulatory intermediaries. This development raises issues. First, the studyFootnote 128 shows that regulatory intermediaries themselves have accelerated the trend toward privatisation of regulation because it serves their self-interest: ‘from a private interest point of view, privatised enforcement creates a market for this service’.Footnote 129 A second issue often follows: by delegating implementation and enforcement to regulatory intermediaries, state regulators risk losing expertise and becoming overly dependent on them over time.Footnote 130 This risk is not limited to public authorities. 
Regulatory intermediaries may indeed ‘seek ever more specialisation and professionalisation as a way to raise barriers against competitors entering or operating in their market for regulatory services’.Footnote 131 Consequently, delegating regulatory intermediation to private firms creates a fundamental tension: these actors may prioritise their own interests over the public interest that primary regulation is meant to serve. Similar dynamics appear in other domains. For example, a study on big audit firms in transnational global governance shows how these firms, acting as regulatory intermediaries in global supply chains, influenced policymaking to create more rules, which allowed them to increase their market share, as they were already best placed to help implement these new rules.Footnote 132 Another case involves US credit rating agencies,Footnote 133 which act as regulatory intermediaries when they determine borrowing costs and access to capital for public and private debtors. Their credit ratings are considered legitimate and serve as a source of authority because of the public mandate granted to them.Footnote 134 The study of these rating agencies demonstrates that despite major failures – such as flawed risk assessments that favoured the agencies’ direct clients and largely contributed to the 2008 financial crisis – credit rating agencies remain to this day central to global finance. The analysis of their case reveals how, in certain contexts, regulatory intermediaries can increase the regulator’s resource dependence on them, enabling them to maintain power over time. 
This strategy is referred to as ‘Public (Dis)-empowerment of Private Intermediaries’.Footnote 135 Consequently, it is ‘the capacities and power of (potential) intermediaries that significantly shape the attractiveness of delegating to them in the first place; at the same time, these capacities and power also render private intermediaries autonomous and resilient toward states’ (later) attempts to control them and circumscribe their behavior’.Footnote 136 The core problem of such a situation is that ‘long-term and extensive reliance on particular resource-strong intermediaries predisposes regulators to neglect the maintenance or buildup of capacities that could substitute for intermediary resources’, effectively dis-empowering public authorities in the long term.Footnote 137

The situation regarding social media companies acting as regulatory intermediaries within the legal framework of the EU for illegal online content shares similarities with these cases. Even though social media companies are not specialised entities in the regulation of online content, they have developed their own content moderation practices that aim at regulating content online. Moreover, they assume a gatekeeper position in online communication.Footnote 138 The massive infrastructure they have developed for content moderation purposes means they have an operational capacity that is convenient for the EU to mobilise. Indeed, social media companies have developed an extensive, globally scaled infrastructure for content moderation purposes,Footnote 139 deeply integrated with automated systems that are costly to train and implement and require significant technical expertise.Footnote 140 Crucially, this infrastructure should not be viewed as a static or pre-existing resource; like any complex system, it requires ongoing maintenance and monitoring. These maintenance and monitoring costs – both financial and human – are substantial. For instance, Meta (which operates Facebook and Instagram) stated in its October 2024 transparency report that it employed more than 40,000 people in content moderation across the globe,Footnote 141 with roles distributed between headquarters (where policy and engineering decisions are madeFootnote 142 ) and outsourced locations tasked with routine and often-burdensome moderation work.Footnote 143 From this perspective, social media companies possess a level of operational capacity and resources that the EU itself does not. 
As a consequence, the delegation of regulatory enforcement to these companies appears to be a very cost-efficient option for the EU, as the financial burden of maintaining the necessary moderation infrastructure falls on the platforms themselves (costs that they would incur regardless, as a consequence of operating their own moderation processes).Footnote 144

However, this situation raises a significant issue. The state regulator (the EU) finds itself dependent on the resources and operational capacity of social media companies. Based on this observation, and in line with what the literature on regulatory intermediation has highlighted in the cases mentioned above where an intermediation mandate was delegated to private firms, it is entirely plausible that social media companies will fulfil their obligation to remove illegal online content on behalf of the EU and its Member States in a manner that primarily serves their own interests and actively seeks to disempower the public regulator. This could manifest similarly to what has been observed in the regulatory governance of buildings, with social media companies implementing their intermediation mandate in such a way that it leads to the standardisation of their own processes, which then becomes the norm, effectively excluding smaller actors from meeting these standards and thereby concentrating regulatory power in the hands of the few existing players in the market. Furthermore, as previously noted, the business model of social media companies relies primarily on the collection of users’ personal data, which originates directly from their interaction with shared online content. These companies, therefore, have a direct financial incentive to maximise such interactions, which could influence how they implement their obligation to remove illegal content in situations where their financial incentives collide with their intermediation mandate. 
Overall, this problematic situation is exacerbated by the current lack of transparency regarding how social media companies automate their content moderation processes (notably for reasons of intellectual property and industrial secrecyFootnote 145 ), leaving the state regulator partly in the dark when it comes to assessing the actual implementation of the obligation imposed on these companies to remove illegal content on its behalf. Although the DSA does give the European Commission fairly extensive powers to request internal information from social media companies, this has had only a limited impact so far owing to a lack of cooperation from these companies.

This situation raises the question of why the EU chose a legal framework based on such an arrangement. One possible explanation lies in the relationship between the EU and its (continued) commitment to forms of governance based on market principles.

B. ‘Institutionalisation’ and the logic of market-based governance in the EU

The EU’s decision to delegate responsibility for removing illegal online content to social media companies, acting as regulatory intermediaries, may initially appear risky, given the possibility that these companies could prioritise their own commercial interests over the interest in protecting individual rights in the context of illegal content online. However, this choice is not an anomaly; rather, it reflects the EU’s commitment to governance models grounded primarily in market principles.

This commitment has already been highlighted in relation to the EU’s broader approach to cyber governance. Indeed, as Farrand compellingly illustrates, the EU’s approach to digital governance closely aligns with the principles of ordoliberalism – a school of thought that emphasises the importance of market principles and the self-regulation of the private sector. As a consequence, issues in the digital realm tend to be framed primarily as economic rather than social problems.Footnote 146 Ordoliberal ideas – particularly the belief that economic order should be at the centre of public decision-making – are embedded ‘within the institutional practices of the EU itself’.Footnote 147 This has fostered a form of path dependency when it came to choosing a legal framework in the realm of the ‘cyber’, leading the EU to favour regulatory approaches based on ‘regulated self-regulation’ – in other words, approaches grounded in the notion that the regulator’s role is confined to establishing a general framework of guiding principles, intended to shape and steer the self-regulation of another entity.Footnote 148 Within this framework, the challenges posed by certain facets of the Internet (such as online communication and illegal online content in particular) are treated less as social or democratic issues and more as matters of market competition and market power distribution. Consequently, the EU’s legal responses tend to mirror this economic framing: they emphasise ‘market restructuring’ through mechanisms such as risk assessments, mitigation measures and cooperation with private actors directly. 
Hiltunen reaches a similar conclusion regarding EU platform law.Footnote 149 He argues that although both the DSA and the Digital Markets Act (DMA) were presented by the European Commission as progressive measures intended to radically transform the digital space within the EU – marking a turning point toward stronger protection of individual rights – these two legislative instruments are, above all, characterised by a form of ‘internal market rationality’. This rationality prioritises ‘the facilitation of transnational freedom for private actors and a program for competition that largely brackets off concerns for distributional justice’.Footnote 150 In advancing this argument, Hiltunen suggests that the European legal framework primarily serves to uphold the pre-existing principles of the single market and to prevent legal fragmentation within the EU. Consequently, the EU’s legal architecture is aimed less at ensuring forms of redistribution of power and more at restructuring the market as it stands, with a view to ‘unleash platforms’ potential in Europe’.Footnote 151 Drawing on both accounts, it becomes clear that the EU’s choice is rooted in a persistent belief in the capacity of markets to self-regulate. This conviction underpins the idea that regulatory intervention should remain limited, intervening only to correct specific market failures while preserving the foundational principles of market-based governance.

In this context, it is worth briefly addressing how the question and the scope of the institutionalisation process of private governance orders arise. This article advances the idea that the EU is institutionalising platform-led content moderation, drawing on the regulatory intermediation literature to show how such an arrangement entails risks from the perspective of the private interests of the companies tasked with implementing the law. This emphasis on the potentially problematic scope of institutionalisation contrasts with other academic approaches, such as digital constitutionalism, which explicitly defends the view that institutionalisation processes like the one described in this paper are, in fact, indicative of a form of constitutionalisation of the private order – purportedly enabling a better protection of fundamental rights in the digital space.Footnote 152 However, the present article is not alone in questioning whether such institutionalisation is of concern. Other studies have examined how the legitimisation of social media platforms’ power by the EU may raise issues, and how those who interpret this as a constitutionalisation of a private order overlook the fact that it was law that in the first place constituted existing asymmetrical power relations. In this regard, Maroni, for example, explains in detail how the transparency requirements contained in the DSA do not necessarily represent a step toward better user protection online but may instead serve to legitimise the existing strength and operational practices of social media companies.Footnote 153 Similarly, other authors directly challenge the premises on which digital constitutionalism rests. 
Terzis, for instance, regards the project as laudable in terms of its objectives – aiming to rebalance power relations in the digital space and to strengthen the defence of rights online – but highlights a fundamental contradiction: the failure to recognise that the emergence of powerful social media companies was not due to an absence of law in the digital realm, but rather to the role of law and its application in enabling the accumulation and consolidation of such concentrations of power. In other words, Terzis underscores the ‘constitutive role of law within the political economy of technology’.Footnote 154 Likewise, Costello argues that applying constitutionalist terminology to the digital context is, in fact, ‘a misleading descriptor of the activity of private actors in digital spaces’. In this sense, invoking constitutionalism amounts to relying on a ‘false friend’.Footnote 155 In a similar vein, though focusing not on digital constitutionalism but on the relevance of the broader human rights framework in platform governance, two authors reach conclusions aligned with the view that discourses portraying the institutionalisation of private governance as an adequate solution are problematic. Griffin, for example, demonstrates how the legal framework of human rights – due to its emphasis on individual rights protection – fails to address societal challenges that are inherently collective, as they stem directly from structural power asymmetries favouring large social media companies.Footnote 156 Griffin stresses the ‘depoliticised and individualistic’ nature of human rights language, seeing it not as an effective counterbalance to corporate power but rather as a potential source of its legitimisation. Similarly, Sander warns against what he terms ‘marketized conceptions of human rights’, which risk legitimising existing power relations in the digital domain. 
To counter this risk, he advocates for a more structural conception of human rights, better suited to confronting such forms of power.Footnote 157

Based on this work, the process of institutionalising private governance orders can be understood from two contrasting perspectives. The first views it as a form of constitutionalisation that enhances the protection of fundamental rights. The second – adopted in this article – interprets it primarily as a continuation of a commitment to market principles, along with the inherent risks (highlighted above) such an approach entails.

6. Conclusion

Over the past several years, the EU has intensified its efforts to regulate the digital sphere. A central focus of these efforts has been the role of social media platforms and the complex challenges posed by online communication, particularly with respect to the enforcement of legal norms on illegal content. This paper has provided a detailed analysis of the legal framework adopted by the EU in this area. Building on this analysis, it introduced the field of regulation studies as a valuable lens through which to analyse the EU’s framework. In particular, it applied the Regulator-Intermediary-Target (RIT) model to show how the EU is relying on social media companies as regulatory intermediaries, responsible for implementing both EU and Member State law in the area of illegal content online. On this basis, the paper has argued that the EU is effectively institutionalising platform-led content moderation. For the platforms, this institutionalisation constitutes an additional ‘layer’ of moderation – added to their existing moderation activities, which were developed to serve their own economic interests and to support a form of self-regulation.

The paper then set out to discuss the implications of the EU’s legal framework. It highlighted the findings from empirical studies on regulatory intermediation to show that when the role of regulatory intermediary is assigned to private firms, significant tensions can arise. These tensions stem from the potential conflict between the intermediary’s self-interest and the implementation of regulation in line with the original intention of the legislator. Moreover, such arrangements carry the risk of enabling strategies of public disempowerment on the part of intermediaries. The paper also briefly explored the rationale behind the EU’s decision to adopt this framework, emphasising that it reflects a commitment to governance models grounded in market principles.

From a broader perspective, the case study of the EU’s approach to illegal content is situated within a shifting geopolitical context – one that has evolved significantly since the EU adopted the different parts of its current legal framework. Since the election of Donald Trump as US President, the regulation of social media companies has become a contentious issue in transatlantic relations, with the US exerting strong pressure on the EU regarding laws governing what it perceives as ‘its’ platforms, viewing EU laws on illegal online content as attempts to undermine the competitiveness of American firms.Footnote 158 Recently, the political climate within the EU has also changed, marked by what could be described as a ‘deregulatory turn’, in which the European Commission actively seeks to simplify existing EU legal frameworks under the stated objective of enhancing competitiveness across the Union.Footnote 159 Against this backdrop, the future of the EU’s approach to illegal content online raises important questions. As this paper has argued, the current framework carries an inherent risk: social media companies may implement their intermediation mandate in ways that primarily serve their own interests. This risk could have been mitigated by a clear commitment from EU governing bodies to maintain strong oversight and exert continuous pressure to ensure that platforms act faithfully and in line with the public interest underlying the fight against illegal online content. Yet, the current geopolitical context appears to point in the opposite direction. Rather than reinforcing a robust implementation of the current legal framework, EU institutions seem inclined to avoid antagonising the US in applying these rules to US companies, while simultaneously pursuing a deregulatory agenda within the Union. 
The danger of this trajectory lies in the possibility that a framework already fraught with risks, without rigorous enforcement or oversight, may ultimately become little more than an instrument that entrenches existing power relations over the long term.

Acknowledgements

The author wishes to express sincere gratitude to Prof Sophie Weerts for her invaluable contribution to this paper, particularly for providing the initial idea and for her continuous support, multiple proofreads, and insightful comments. The author is also deeply thankful to Prof Martino Maggetti and Prof Markus Hinterleitner for their constructive feedback and suggestions on an early version of this work presented at the University of Lausanne in May 2024. Finally, the author extends heartfelt thanks to the two anonymous reviewers for the time and effort they devoted to reviewing this paper and for their highly relevant comments, which significantly strengthened both the argument and the overall quality of the article.

Competing interests

None.