1 Introduction

The relationship between nonnegative polynomials and sums of squares is a fundamental problem in real algebraic geometry. Much is now known about the constructions and the existence of nonnegative polynomials that are not sums of squares (of polynomials) [Reference Choi and Lam14, Reference Reznick35, Reference Reznick39, Reference Blekherman3, Reference Blekherman, Iliman and Kubitzke6, Reference Brugallé, Degtyarev, Itenberg and Mangolte7]. Decomposing $P^k$ as a sum of squares, where k is an odd integer, also provides a certificate of nonnegativity of P, and it is reasonable to ask whether this works for all nonnegative polynomials P. We initiate a systematic study of nonnegative polynomials P such that $P^k$ is not a sum of squares for any odd positive integer k. We call such polynomials stubborn. Currently not much is known about stubborn polynomials, except for some isolated examples. One of our main results is that all extreme rays of the convex cone of nonnegative ternary sextics (homogeneous polynomials in $3$ variables of degree $6$) that are not sums of squares are stubborn. More generally, for a nonnegative ternary form P we develop a new invariant $\delta ^{\,\textrm {sos}}$ of real singularities of P, which we call a sum of squares invariant or SOS-invariant, such that if the sum of $\delta ^{\,\textrm {sos}}$ over all real singularities of P is sufficiently large, then P is stubborn. This implies the result for ternary sextics, and allows us to construct stubborn forms in higher degrees. We compare the SOS-invariant to the classical delta invariant of a plane curve singularity, and show that they agree for singularities of multiplicity $2$, but are not equal in general. We show that stubborn forms exist, for a fixed degree and number of variables, whenever nonnegative forms are not equal to sums of squares. We also prove that the set of nonnegative forms that are not stubborn is a convex cone, which includes the interior of the cone of nonnegative forms. We now go into more details and review the history of the problem.

For positive integers

![]() $n, d$

, let

$n, d$

, let

![]() $F_{n,d}$

denote the space of real forms (homogeneous polynomials) of degree d in n variables. We note that for analyzing questions about nonnegativity and sums of squares it suffices to consider homogeneous polynomials, as homogenization and dehomogenization preserve the properties of being nonnegative and being a sum of squares. From now on we will work with forms. A form

$F_{n,d}$

denote the space of real forms (homogeneous polynomials) of degree d in n variables. We note that for analyzing questions about nonnegativity and sums of squares it suffices to consider homogeneous polynomials, as homogenization and dehomogenization preserve the properties of being nonnegative and being a sum of squares. From now on we will work with forms. A form

![]() $P\in F_{n,d}$

is said to be nonnegative if

$P\in F_{n,d}$

is said to be nonnegative if

![]() $P(\mathbf {X})\geq 0$

for any

$P(\mathbf {X})\geq 0$

for any

![]() $\mathbf {X}\in \mathbb {R}^n$

. If

$\mathbf {X}\in \mathbb {R}^n$

. If

![]() $P(\mathbf {X})>0$

for all

$P(\mathbf {X})>0$

for all

![]() $\mathbf {X}\neq \mathbf {0}$

, then P is called strictly positive. If

$\mathbf {X}\neq \mathbf {0}$

, then P is called strictly positive. If

![]() $P=H_1^2+\dots +H_r^2$

for some

$P=H_1^2+\dots +H_r^2$

for some

![]() $H_1,\dots , H_r\in F_{n,d/2}$

of degree

$H_1,\dots , H_r\in F_{n,d/2}$

of degree

![]() $d/2$

, then P is said to be a sum of squares. Trivially, every sum of squares is nonnegative and the degree d of a nonnegative form must be even. Following Choi and Lam [Reference Choi and Lam13], let

$d/2$

, then P is said to be a sum of squares. Trivially, every sum of squares is nonnegative and the degree d of a nonnegative form must be even. Following Choi and Lam [Reference Choi and Lam13], let

![]() $P_{n,d}$

and

$P_{n,d}$

and

![]() $\Sigma _{n,d}$

denote the closed convex cones of nonnegative forms and, respectively, sum of squares forms in

$\Sigma _{n,d}$

denote the closed convex cones of nonnegative forms and, respectively, sum of squares forms in

![]() $F_{n,d}$

. The interior

$F_{n,d}$

. The interior

![]() $\textrm {int}(P_{n,d})$

of

$\textrm {int}(P_{n,d})$

of

![]() $P_{n,d}$

consists exactly of strictly positive forms of degree d. Let

$P_{n,d}$

consists exactly of strictly positive forms of degree d. Let

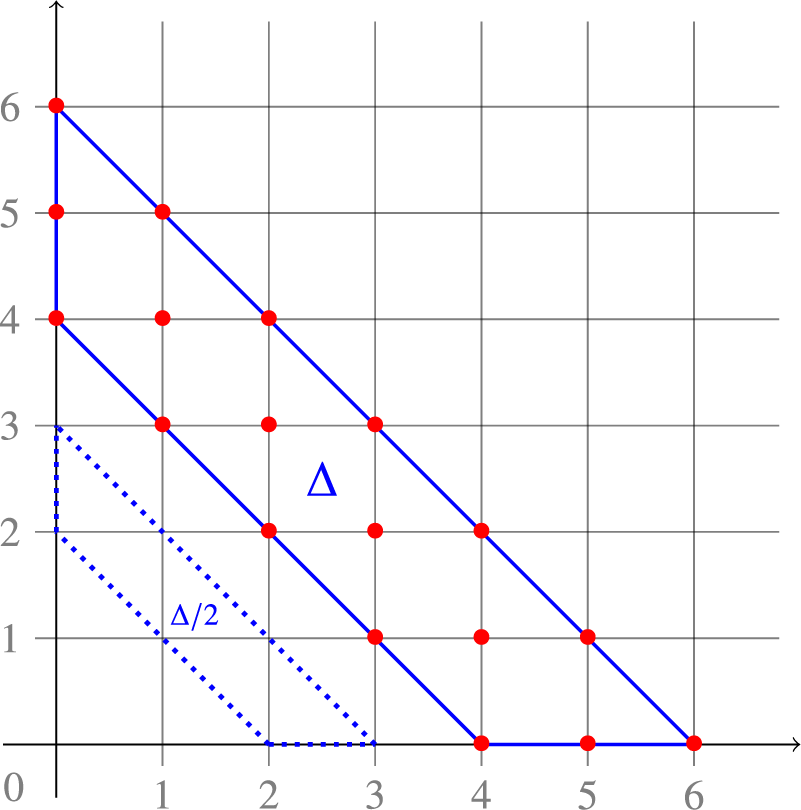

![]() $\Delta _{n,d}:=P_{n,d}\setminus \Sigma _{n,d}$

be the difference of the two cones. Hilbert [Reference Hilbert23] proved that

$\Delta _{n,d}:=P_{n,d}\setminus \Sigma _{n,d}$

be the difference of the two cones. Hilbert [Reference Hilbert23] proved that

![]() $\Delta _{n,d} \neq \emptyset $

if and only if

$\Delta _{n,d} \neq \emptyset $

if and only if

![]() $n \ge 3$

and

$n \ge 3$

and

![]() $d \ge 6$

or

$d \ge 6$

or

![]() $n \ge 4$

and

$n \ge 4$

and

![]() $d \ge 4$

, see Figure 3. The ternary sextic Motzkin form

$d \ge 4$

, see Figure 3. The ternary sextic Motzkin form

![]() $M\in F_{3,6}$

,

$M\in F_{3,6}$

,

was the first explicit example of a nonnegative form that is not a sum of squares [Reference Motzkin27]. Another early example of a form in

![]() $\Delta _{3,6}$

was

$\Delta _{3,6}$

was

$$ \begin{align}\begin{aligned} R\ =&\ X_1^6 +X_2^6 + X_3^6 + 3X_1^2X_2^2X_3^2\\ &- (X_1^4X_2^2 + X_1^2X_2^4+X_1^4X_3^2+X_1^2X_3^4+X_2^4X_3^2+ X_2^2X_3^4), \end{aligned}\end{align} $$

$$ \begin{align}\begin{aligned} R\ =&\ X_1^6 +X_2^6 + X_3^6 + 3X_1^2X_2^2X_3^2\\ &- (X_1^4X_2^2 + X_1^2X_2^4+X_1^4X_3^2+X_1^2X_3^4+X_2^4X_3^2+ X_2^2X_3^4), \end{aligned}\end{align} $$

found by Robinson in [Reference Robinson40].
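The nonnegativity and the real zeros of these two forms can be explored numerically. The following is a minimal sketch, not part of the original text: it assumes the usual normalization $M = X_1^4X_2^2 + X_1^2X_2^4 + X_3^6 - 3X_1^2X_2^2X_3^2$ of the Motzkin form, takes R as displayed in (1.2), and uses the hypothetical helper names `motzkin`, `robinson` and `grid_report`. A finite integer grid can only illustrate nonnegativity, it does not prove it.

```python
from itertools import product


def motzkin(x1, x2, x3):
    """Motzkin form M in its usual normalization (assumed here)."""
    return x1**4 * x2**2 + x1**2 * x2**4 + x3**6 - 3 * x1**2 * x2**2 * x3**2


def robinson(x1, x2, x3):
    """Robinson form R as displayed in (1.2)."""
    return (x1**6 + x2**6 + x3**6 + 3 * x1**2 * x2**2 * x3**2
            - (x1**4 * x2**2 + x1**2 * x2**4 + x1**4 * x3**2
               + x1**2 * x3**4 + x2**4 * x3**2 + x2**2 * x3**4))


def grid_report(form, radius=3):
    """Evaluate `form` at all nonzero integer points of [-radius, radius]^3.

    Returns the minimum value found and the sorted list of grid zeros.
    Integer arithmetic is exact, so a zero reported here is a true zero.
    """
    points = [p for p in product(range(-radius, radius + 1), repeat=3) if any(p)]
    values = {p: form(*p) for p in points}
    zeros = sorted(p for p, v in values.items() if v == 0)
    return min(values.values()), zeros


if __name__ == "__main__":
    for name, form in (("M", motzkin), ("R", robinson)):
        smallest, zeros = grid_report(form)
        print(name, "min on grid:", smallest, "| number of grid zeros:", len(zeros))
```

On such a grid both forms attain the value 0 but no negative value, which is consistent with M and R lying on the boundary of $P_{3,6}$.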

Hilbert’s 17th Problem asks whether, for $P \in P_{n,d}$, there exists Q in some $F_{n,d'}$ so that $Q^2P \in \Sigma _{n,d+2d'}$. In 1927 Artin [Reference Artin1] solved this problem in the affirmative, even in a more general setting of real closed fields. In equivalent terms, any nonnegative form can be written as a sum of squares of rational functions. Later, multiple authors studied variations of Hilbert’s 17th problem [Reference Pólya31, Reference Habicht20, Reference Delzell17, Reference Reznick37]. In particular, for a nonnegative form Q it was desirable to know whether for all $P\in P_{n,d}$ the form $PQ^k$ is a sum of squares for some large $k\geq 1$. This question is two-fold. For a fixed P one can consider it as a strengthening of Hilbert’s 17th problem, as the denominator is constrained to be a power of a fixed polynomial Q. On the other hand, taking $P=1$ and allowing only odd k, one tries to represent some odd power of Q as a sum of squares. Reznick showed [Reference Reznick36] that any strictly positive form P multiplied by a large enough power $Q^k$ for $Q=X_1^2+\dots +X_n^2$ is a sum of squares (this does not hold for all nonnegative forms P [Reference Reznick38]). More generally, Scheiderer proved [Reference Scheiderer42] that if $Q \in \textrm {int}(P_{n,d'})$ and $P\in \textrm {int}(P_{n,d})$ are two strictly positive forms, then $PQ^k\in \Sigma _{n,d+d'k}$ is a sum of squares for all sufficiently large k. Thus, if $P\in \textrm {int}(P_{n,d})$ is strictly positive, then $P^k\in \Sigma _{n,kd}$ is a sum of squares for all sufficiently large k. In the present work we study this property for non-sum of squares forms $P\in \partial P_{n,d}$ in the boundary of the cone of nonnegative forms. Being a square, an even power $P^{2k}=(P^k)^2\in \Sigma _{n,2kd}$ of P is a sum of squares. We say that P admits an odd sum of squares power, if $P^{2k+1}\in \Sigma _{n,(2k+1)d}$ is a sum of squares for some $k\geq 0$. Otherwise, the form $P\in \partial P_{n,d}$ will be called stubborn.

A nonnegative form $P\in P_{n,d}$ is said to be extremal, if it spans an extreme ray of the cone $P_{n,d}$. The set of extremal forms in $P_{n,d}$ is denoted by $\mathcal {E}(P_{n,d})$. When $P\in \mathcal {E}(P_{n,d})$ spans an exposed extreme ray, we say that P is an exposed extremal form. The Motzkin form (1.1) is an example of a non-exposed extremal form in $P_{3,6}$ (see [Reference Choi and Lam14, p. 8], [Reference Reznick34, Thm. 5] and the proof of [Reference Blekherman, Hauenstein, Ottem, Ranestad and Sturmfels4, Thm. 2]), while the Robinson form (1.2) is an exposed extremal ternary sextic (see [Reference Choi and Lam13, Thm. 3.8]). In [Reference Choi and Lam13, Reference Choi and Lam14] Choi and Lam also studied the following ternary sextic and quaternary quartic:

$$ \begin{align} \begin{aligned} S\ &=\ X_1^4X_2^2+X_2^4X_3^2+X_3^4X_1^2-3X_1^2X_2^2X_3^2,\\ Q\ &=\ X_4^4+X_1^2X_2^2+X_1^2X_3^2+X_2^2X_3^2-4X_1X_2X_3X_4. \end{aligned} \end{align} $$

They showed that $S\in \Delta _{3,6}$, $Q\in \Delta _{4,4}$ are non-sum of squares extremal nonnegative forms. In 1979 Stengle [Reference Stengle44] proved that the ternary sextic

is stubborn. The paper [Reference Stengle44] reports that Reznick had proved that S is stubborn by a different argument. In 1982, Choi, Dai, Lam and Reznick [Reference Choi, Dai, Lam and Reznick12] cited Stengle’s example (1.4) and claimed the same property for M instead of S. No proofs for M or S were given at the time. In Subsection 3.4 we include this earlier proof of the fact that M is stubborn.

This work was in particular motivated by a query from Jim McEnerney about references for these claimed results. In his talk at the Conference on Applied Algebraic Geometry (AG23) held in Eindhoven in July 2023, Reznick posed a conjecture that all extremal forms in $\Delta _{3,6}$ are stubborn. In the present work we settle this conjecture.

Theorem 1.1. Let $P\in \mathcal {E}(P_{3,6})\cap \Delta _{3,6}$ be an extremal nonnegative ternary sextic which is not a sum of squares. Then P is stubborn, that is, $P^{2k+1}\in \Delta _{3,6(2k+1)}$ is not a sum of squares for any $k\geq 0$.

As a direct consequence of this result we have the following.

Corollary 1.2. The forms M, R and S are all stubborn, that is, $M^{2k+1}, R^{2k+1}, S^{2k+1} \in \Delta _{3,6(2k+1)}$ are not sums of squares for all $k\geq 0$.

Remark 1.3. Theorem 1.1 does not imply Stengle’s result above, as the ternary sextic $T\in \Delta _{3,6}$ is not extremal (see Subsection 3.5). Furthermore, by a result of Scheiderer [Reference Scheiderer42] that we stated above, all sufficiently large powers $P^{2k+1}$ of a strictly positive form $P\in \textrm {int}(P_{n,d})$ are sums of squares.

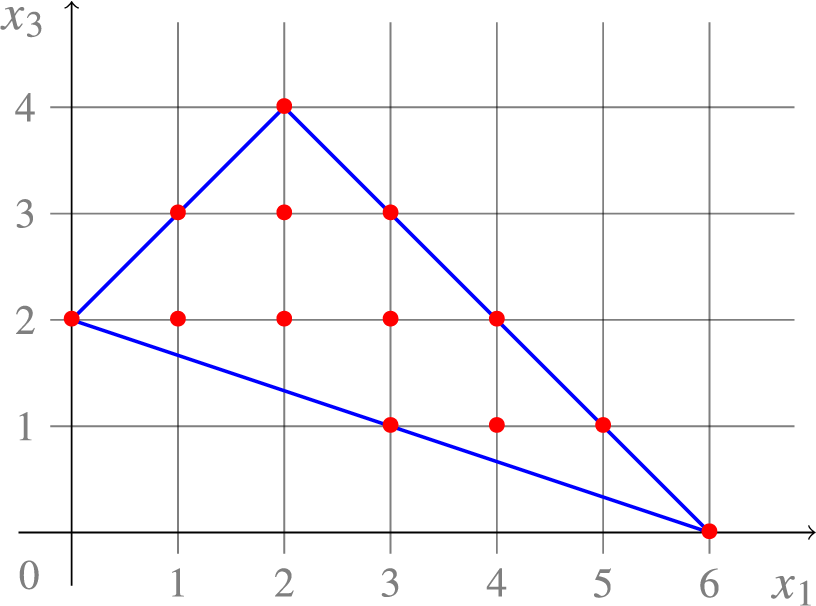

More generally, we develop a new invariant of a real zero of a nonnegative ternary form, that we call the SOS-invariant. It can be compared to the classical delta invariant of a plane curve singularity (see Subsection 2.3). The main idea is that if the sum of SOS-invariants of $P\in P_{3,d}$ over all its real zeros is too large (specifically, greater than $d^2/4$), then P must be stubborn, see Theorem 3.10. This happens, for example, for extremal ternary sextics in $\Delta _{3,6}$. For higher degrees such forms exist due to results of Brugallé et al. from [Reference Brugallé, Degtyarev, Itenberg and Mangolte7]. However, Theorem 1.1 does not admit a direct generalization, as no characterization of extremal forms in $P_{n,d}$ in terms of the number of real zeros is known except for $(n,d) = (3,6)$.

By regarding a form $P\in P_{3,d}\subset P_{n,d}$ with more than $d^2/4$ real zeros as a form in $n\geq 4$ variables we show that stubborn forms exist in an arbitrary number of variables, see Theorem 4.2. In Section 4 we also show that the quaternary quartic $Q\in \Delta _{4,4}$ defined in (1.3) and the Horn form $F\in \Delta _{5,4}$:

$$ \begin{align} F\ =\ \left(\sum_{j=1}^5 X_j^2\right)^2 - 4\ \sum_{j=1}^5 X_j^2X_{j+1}^2, \end{align} $$

are both stubborn.
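The forms S and Q from (1.3) and the Horn form F can be evaluated exactly at integer points, which gives a quick sanity check of their nonnegativity and exhibits some of their real zeros. The sketch below is only illustrative: the function names are hypothetical, and the index $j+1$ in the Horn form is assumed to be read cyclically, that is, $X_6 = X_1$.

```python
from itertools import product


def choi_lam_s(x1, x2, x3):
    """Ternary sextic S from (1.3)."""
    return (x1**4 * x2**2 + x2**4 * x3**2 + x3**4 * x1**2
            - 3 * x1**2 * x2**2 * x3**2)


def choi_lam_q(x1, x2, x3, x4):
    """Quaternary quartic Q from (1.3)."""
    return (x4**4 + x1**2 * x2**2 + x1**2 * x3**2 + x2**2 * x3**2
            - 4 * x1 * x2 * x3 * x4)


def horn(x1, x2, x3, x4, x5):
    """Horn form F, with the index j + 1 taken cyclically (assumption)."""
    squares = [t * t for t in (x1, x2, x3, x4, x5)]
    cyclic = sum(squares[j] * squares[(j + 1) % 5] for j in range(5))
    return sum(squares) ** 2 - 4 * cyclic


def min_on_grid(form, nvars, radius=2):
    """Minimum of `form` over the nonzero integer points of [-radius, radius]^nvars."""
    return min(form(*p)
               for p in product(range(-radius, radius + 1), repeat=nvars)
               if any(p))


if __name__ == "__main__":
    print("S:", min_on_grid(choi_lam_s, 3), "Q:", min_on_grid(choi_lam_q, 4),
          "F:", min_on_grid(horn, 5))
```

Each minimum is 0 because the grid contains real zeros such as $(1,1,1)$ for S, $(1,1,1,1)$ for Q and $(1,1,0,0,0)$ for F; a grid search of this kind is evidence of nonnegativity, not a proof.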

Remark 1.4. The Horn form was originally defined as a quadric in $X_1^2,\dots , X_5^2$. It was communicated to Hall by Horn in the early 1960s, as a counterexample to a conjecture of Diananda asserting that a quadratic form that is nonnegative on the nonnegative orthant is a sum of a nonnegative form and a quadratic form with nonnegative coefficients only (see [Reference Diananda18, p.25] and [Reference Hall and Newman21, p.334-5]).

In Section 5 we initiate a systematic study of the set of non-stubborn forms, that is, of forms that admit odd sum of squares powers. For $k\geq 0$ let us define

$$ \begin{align*} \Sigma _{n,d}(2k+1)\ :=\ \left\{P\in P_{n,d}\,:\, P^{2k+1}\in \Sigma _{n,(2k+1)d}\right\}. \end{align*} $$

Note that $\Sigma _{n,d}(1) = \Sigma _{n,d}$ and, since $P^{2k+3} = P^{2k+1}\cdot P^2$, we have the inclusion $\Sigma _{n,d}(2k+1) \subseteq \Sigma _{n,d}(2k+3)$ and so it makes sense to define

$$ \begin{align*} \Sigma _{n,d}(\infty )\ :=\ \bigcup _{k\geq 0} \Sigma _{n,d}(2k+1). \end{align*} $$

Let $\Delta _{n,d}(\infty )=P_{n,d}\setminus \Sigma _{n,d}(\infty )$ denote the set of stubborn forms in $P_{n,d}$. With these notations, we have that $M, R, S, T \in \Delta _{3,6}(\infty )$, $Q\in \Delta _{4,4}(\infty )$ and $F\in \Delta _{5,4}(\infty )$. Since $\Sigma _{n,d}$ and $P_{n,d}$ are closed convex cones, it is natural to ask whether this is also true for $\Sigma _{n,d}(2k+1)$ and $\Sigma _{n,d}(\infty )$.

First observe that if $(P_i) \subset \Sigma _{n,d}(2k+1)$ is a sequence of forms converging to $P=\lim _{i\rightarrow \infty } P_i$, then the sequence of powers $(P_i^{2k+1})\subset \Sigma _{n,(2k+1)d}$ converges to $P^{2k+1}=\lim _{i\rightarrow \infty } P^{2k+1}_i$, which by closedness of the sums of squares cone means that $P\in \Sigma _{n,d}(2k+1)$ and so $\Sigma _{n,d}(2k+1)$ is closed. It is unclear whether $\Sigma _{n,d}(2k+1)$ is convex when $k>0$. That is, if $P_1^{2k+1}$ and $P_2^{2k+1}$ are sums of squares, must $(P_1+P_2)^{2k+1}$ be a sum of squares as well? We prove in Theorem 5.1 that if $P_1^{2k+1}$ is a sum of squares and $P_2$ is a sum of squares, then $(P_1+P_2)^{2k+1}$ is a sum of squares. This is a special case of the more general Theorem 5.3, which in particular yields convexity of $\Sigma _{n,d}(\infty )$. Note however that $\Sigma _{n,d}(\infty )$ is not closed when $\Delta _{n,d}(\infty ) \neq \emptyset $, that is, if $\Delta _{n,d}\neq \emptyset $ (cf. Theorem 4.2). Indeed, a form $P\in \Delta _{n,d}(\infty )=P_{n,d}\setminus \Sigma _{n,d}(\infty )$ lies in the closure of the open cone $\textrm {int}(P_{n,d}) \subset \Sigma _{n,d}(\infty )$ of strictly positive forms, each of which admits an odd power which is a sum of squares by [Reference Scheiderer42]. For example, the Motzkin form (1.1) can be obtained as the limit $M=\lim _{\varepsilon \rightarrow 0+} M_\varepsilon $, where $ M_\varepsilon = M + \varepsilon (X_1^2 + X_2^2 + X_3^2)^3$ is strictly positive for $\varepsilon>0$. By Theorem 5.1, we see that $\{ \varepsilon \geq 0: M_\varepsilon \in \Sigma _{3,6}(2k+1)\}$ is an interval of the form $[\beta _{2k+1},\infty )$ for some $\beta _{2k+1}>0$. The coefficient of $X_1^2X_2^2X_3^2$ in $M_\varepsilon $ is $-3+6\varepsilon $, which is the only coefficient of $M_\varepsilon $ that can be negative; for $\varepsilon \geq \frac 12$ the form $M_\varepsilon $ is therefore a nonnegative combination of squares of monomials, so $\beta _1 \le \frac 12$. Furthermore, one has $\beta _1\geq \beta _3\geq \beta _5\geq \dots $ and $\lim _{k\rightarrow \infty }\beta _{2k+1} = 0$. Thus, there are infinitely many k so that $\Sigma _{3,6}(2k+1) \subsetneq \Sigma _{3,6}(2k+3) $. We strongly believe that this is true for all $k\geq 0$.
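The bound $\beta _1 \le \frac 12$ rests on a coefficient computation that is easy to reproduce. The following sketch expands $M_\varepsilon = M + \varepsilon (X_1^2+X_2^2+X_3^2)^3$ with exact rational arithmetic, assuming the usual normalization of the Motzkin form. The dictionary representation and the helper names (`poly_mul`, `poly_add`, `m_eps`, `is_nonneg_even_monomial_sum`) are illustrative choices, and the final test is only a sufficient condition for being a sum of squares.

```python
from collections import defaultdict
from fractions import Fraction


def poly_mul(p, q):
    """Multiply polynomials stored as {exponent tuple: coefficient}."""
    out = defaultdict(Fraction)
    for ea, ca in p.items():
        for eb, cb in q.items():
            out[tuple(a + b for a, b in zip(ea, eb))] += ca * cb
    return {e: c for e, c in out.items() if c != 0}


def poly_add(p, q, scale=1):
    """Return p + scale * q, dropping monomials whose coefficient cancels."""
    out = defaultdict(Fraction, p)
    for e, c in q.items():
        out[e] += scale * c
    return {e: c for e, c in out.items() if c != 0}


# Motzkin form in its usual normalization (assumed) and (X1^2 + X2^2 + X3^2)^3.
MOTZKIN = {(4, 2, 0): 1, (2, 4, 0): 1, (0, 0, 6): 1, (2, 2, 2): -3}
NORM_SQ = {(2, 0, 0): 1, (0, 2, 0): 1, (0, 0, 2): 1}
NORM_CUBE = poly_mul(poly_mul(NORM_SQ, NORM_SQ), NORM_SQ)


def m_eps(eps):
    """Coefficients of M_eps = M + eps * (X1^2 + X2^2 + X3^2)^3."""
    return poly_add(MOTZKIN, NORM_CUBE, Fraction(eps))


def is_nonneg_even_monomial_sum(p):
    """True if every monomial is a square with a nonnegative coefficient.

    This is sufficient (not necessary) for p to be a sum of squares.
    """
    return all(c >= 0 and all(e % 2 == 0 for e in exps) for exps, c in p.items())


if __name__ == "__main__":
    for eps in (Fraction(1, 4), Fraction(1, 2), Fraction(1)):
        coeff = m_eps(eps).get((2, 2, 2), Fraction(0))
        print(eps, coeff, is_nonneg_even_monomial_sum(m_eps(eps)))
```

The sufficient test succeeds exactly when $\varepsilon \geq \frac 12$; for smaller $\varepsilon $ it fails, which by itself says nothing about whether $M_\varepsilon $ is a sum of squares.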

Remark 1.5. As the property of being nonnegative or a sum of squares is invariant under the (de)homogenization of a polynomial, $P^{2k+1}$ is a sum of squares for $P\in P_{n,d}$ if and only if so is $p^{2k+1}$ for the dehomogenized polynomial $p(x_1,\dots , x_{n-1}):=P(x_1,\dots , x_{n-1},1)$. In particular, stubborn forms exist in $P_{n,d}$ if and only if there are stubborn nonnegative $(n-1)$-variate polynomials of degree d.

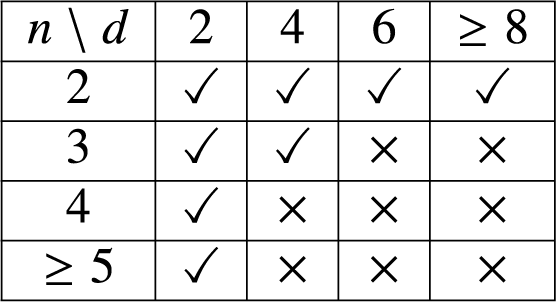

Based on Theorem 1.1 and Theorem 4.1 from Section 4 we make the following conjecture.

Conjecture 1.6. Let $\Delta _{n,d}\neq \varnothing $, that is, $n\geq 3$, $d\geq 6$ or $n\geq 4$, $d\geq 4$. Then every extremal nonnegative $P \in \mathcal {E}(P_{n,d})\cap \Delta _{n,d}$ which is not a sum of squares is stubborn, that is, $P\in \Delta _{n,d}(\infty )$.

2 Preliminaries

Here we collect definitions and prove auxiliary results that are used throughout the text.

2.1 Order of vanishing

A form $F\in F_{n,d}$ has order of vanishing or, simply, multiplicity at least $m\in \mathbb {N}$ at $\mathbf {X}^*\in \mathbb {P}_{\mathbb {C}}^{n-1}$, if $\partial _{\mathbf {X}}^{\,\alpha } F(\mathbf {X}^*)=0$ for all $\alpha \in \mathbb {N}^n$ with $\vert \alpha \vert =\alpha _1+\dots +\alpha _n\leq m-1$. This is equivalent to the vanishing of directional derivatives of order up to $m-1$,

$$ \begin{align} \frac{\mathrm{{d}}^i}{\mathrm{{d}}\varepsilon^i}\bigg|_{\varepsilon=0} F(\mathbf{X}^*+\varepsilon \mathbf{V})\ =\ 0\quad\textrm{for all} \quad \mathbf{V}\in \mathbb{C}^n\quad \textrm{and}\quad i=0,1,\dots, m-1. \end{align} $$

In particular, F has multiplicity at least $1$ at its zeros $\mathbf {X}^*\in \mathcal {V}(F)\subset \mathbb {P}_{\mathbb {C}}^{n-1}$ and multiplicity at least $2$ at singular points of the hypersurface $\mathcal {V}(F)\subset \mathbb {P}_{\mathbb {C}}^{n-1}$. If m is the largest integer satisfying (2.1), we say that the multiplicity of F at $\mathbf {X}^*$ is (exactly) m and write $m_{\mathbf {X}^*}(F)=m$.
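Condition (2.1) can be tested one direction at a time: the coefficients of $\varepsilon ^i$ in $F(\mathbf {X}^*+\varepsilon \mathbf {V})$ are, up to the factor $i!$, the directional derivatives appearing there. The sketch below expands this restriction for the Motzkin form (usual normalization assumed) at integer points and directions. All names are hypothetical, and since only finitely many directions are sampled, the value returned by `sampled_multiplicity` is in general only an upper bound for the multiplicity, with equality for generic directions.

```python
def upoly_mul(a, b):
    """Multiply univariate polynomials given as coefficient lists (low degree first)."""
    out = [0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[i + j] += ai * bj
    return out


def restrict_to_line(form, point, direction):
    """Coefficients in eps of F(point + eps * direction).

    `form` is a dict {exponent tuple: coefficient} of a homogeneous polynomial.
    """
    degree = max(sum(e) for e in form)
    total = [0] * (degree + 1)
    for exponents, coeff in form.items():
        term = [coeff]
        for x, v, e in zip(point, direction, exponents):
            for _ in range(e):
                term = upoly_mul(term, [x, v])  # multiply by (x + eps * v)
        for i, c in enumerate(term):
            total[i] += c
    return total


def order_along(form, point, direction):
    """Order of vanishing at eps = 0 of the restriction; None if it is identically zero."""
    coeffs = restrict_to_line(form, point, direction)
    return next((i for i, c in enumerate(coeffs) if c != 0), None)


def sampled_multiplicity(form, point, directions):
    """Smallest order of vanishing over the sampled directions (an upper bound)."""
    orders = [order_along(form, point, v) for v in directions]
    orders = [o for o in orders if o is not None]
    return min(orders) if orders else None


# Motzkin form in its usual normalization (assumed).
MOTZKIN = {(4, 2, 0): 1, (2, 4, 0): 1, (0, 0, 6): 1, (2, 2, 2): -3}
UNIT_DIRECTIONS = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]

if __name__ == "__main__":
    for point in [(1, 1, 1), (1, 0, 0), (1, 0, 1)]:
        print(point, sampled_multiplicity(MOTZKIN, point, UNIT_DIRECTIONS))
```

Note that the direction $\mathbf {V}=\mathbf {X}^*$ always gives an identically vanishing restriction at a zero of a form, by homogeneity, which is why such directions are skipped.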

If $F\in F_{n,d}$ has multiplicity $2$ at $\mathbf {X}^*\in \mathbb {P}_{\mathbb {C}}^{n-1}$ and the Hessian matrix

$$ \begin{align*} \textrm {Hess}_{\mathbf {X}^*} F\ =\ \left(\frac{\partial ^2 F}{\partial X_i\,\partial X_j}(\mathbf {X}^*)\right)_{i,j=1}^{n} \end{align*} $$

of F at $\mathbf {X}^*$ is of maximal rank [Footnote 1] $\textrm {rk}\,( \textrm {Hess}_{\mathbf {X}^*} F) = n-1$, one says that $\mathbf {X}^*$ is an ordinary singularity of $\mathcal {V}(F)$. A real zero $\mathbf {X}^*\in \mathbb {P}_{\mathbb {R}}^{n-1}$ of a nonnegative form $P\in P_{n,d}$ is a singular point of $\mathcal {V}(P)\subset \mathbb {P}_{\mathbb {C}}^{n-1}$. If $\mathbf {X}^*$ is an ordinary singularity, it is sometimes called a round zero of P [Reference Blekherman, Hauenstein, Ottem, Ranestad and Sturmfels4, Reference Iliman24]. Then, the Hessian matrix $\textrm {Hess}_{\mathbf {X}^*} P$ of $P\in P_{n,d}$ at such $\mathbf {X}^*$ must be positive semidefinite of corank one.

Remark 2.1. The multiplicity $m=m_{\mathbf {X}^*}(P)$ of a nonnegative $P\in P_{n,d}$ at $\mathbf {X}^*\in \mathbb {P}_{\mathbb {R}}^{n-1}$ is even. If this were not the case, the Taylor expansion of P at $\mathbf {X}^*$ would imply that the restriction

$$\begin{align*}P(\mathbf{X}^*+t \mathbf{V})\ =\ \frac{1}{m!} \frac{\mathrm{{d}}^m}{\mathrm{{d}}\varepsilon^m}\bigg|_{\varepsilon =0} P(\mathbf{X}^*+\varepsilon \mathbf{V})\, t^m+O(t^{m+1}) \end{align*}$$

of P to some line through $\mathbf {X}^*$ changes sign at $t=0$, since its leading term has odd degree in t, and hence is not nonnegative.

Given a real zero $\mathbf {X}^*\in \mathbb {P}_{\mathbb {R}}^{n-1}$ of $P\in P_{n,d}$, Reznick [Reference Reznick39] considers a subspace

$$ \begin{align} E(P,\mathbf{X}^*)\ =\ \left\{Q\in F_{n,d/2}\,:\, \varepsilon Q^2\leq P\ \textrm{in some neighborhood of}\ \mathbf{X}^*\ \textrm{for some}\ \varepsilon>0\right\} \end{align} $$

of forms of half degree whose square (up to a constant) is bounded from above by P locally around $\mathbf {X}^*$, where the topology is (induced by) the Euclidean one. In the following we refer to $E(P,\mathbf {X}^*)$ as the local SOS-support of P at $\mathbf {X}^*$. We call the codimension of this linear subspace of $F_{n,d/2}$ the half-degree invariant of P at $\mathbf {X}^*$ and denote it by

$$ \begin{align} \delta^{\,\textrm{hd}}(P,\mathbf{X}^*)\ =\ {n-1+d/2 \choose d/2} - \dim E(P,\mathbf{X}^*). \end{align} $$

In other words, the quantity $\delta ^{\,\textrm {hd}}(P,\mathbf {X}^*)$ defined in [Reference Reznick39] counts the number of linear conditions that one has to impose on a form Q of degree $d/2$ so that its square $Q^2$ (up to a multiplicative constant) is bounded from above by P in some neighborhood of $\mathbf {X}^*\in \mathbb {R}^n$.

Example 2.2. If $\mathbf {X}^*$ is a real zero of P, then for $\varepsilon Q^2$ (with some $\varepsilon>0$) to be bounded from above by P locally, we must have $Q(\mathbf {X}^*)=0$. Thus, the Taylor expansion of $Q^2$ near $\mathbf {X}^*$ starts with terms of order two or higher. If $\mathbf {X}^*$ is a round zero, by choosing a sufficiently small $\varepsilon>0$, the value of $\varepsilon Q(\mathbf {X}^*+\mathbf {X})^2$ is majorized by $P(\mathbf {X}^*+\mathbf {X})=\frac {1}{2}\mathbf {X}^{\mathsf T}\textrm{Hess}_{\mathbf {X}^*}(P) \mathbf {X} + O(\Vert \mathbf {X}\Vert ^3)$ for all small enough $\mathbf {X}\in \mathbb {R}^n$. As a consequence, there are no further conditions on Q in the case of a round zero and so $\delta ^{\,\textrm{hd}}(P,\mathbf {X}^*)=1$. If, on the contrary, $\mathbf {X}^{\prime \mathsf T}\textrm{Hess}_{\mathbf {X}^*}(P) \mathbf {X}'=0$ for some $\mathbf {X}'\in \mathbb {R}^n$ not proportional to $\mathbf {X}^*$, then (by the nonnegativity of P) the univariate polynomial $t\mapsto P(\mathbf {X}^*+t \mathbf {X}')$ has a zero of multiplicity at least $4$ at $t=0$. For $\varepsilon Q(\mathbf {X}^*+t\mathbf {X}')^2$ to be bounded from above by $P(\mathbf {X}^*+t\mathbf {X}')$ for small $t\in \mathbb {R}$, the directional derivative must vanish, $\frac {\mathrm{d}}{\mathrm{d} t}\big |_{t=0} Q(\mathbf {X}^*+t\mathbf {X}')=0$. This is a linear condition imposed on $Q\in E(P,\mathbf {X}^*)$ (along with $Q(\mathbf {X}^*)=0$) and hence $\delta ^{\,\textrm{hd}}(P,\mathbf {X}^*)\geq 2$.
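The count in (2.2) and the two cases of Example 2.2 can be checked numerically. The following is a minimal sketch, not part of the source: the function names `dim_forms` and `half_degree_invariant` are our own, and the values passed for $\dim E(P,\mathbf{X}^*)$ are hypothetical inputs chosen to match the round-zero case and the degenerate-Hessian case for ternary sextics.

```python
from math import comb


def dim_forms(n: int, degree: int) -> int:
    """Dimension of the space of forms of the given degree in n variables."""
    return comb(n - 1 + degree, degree)


def half_degree_invariant(n: int, d: int, dim_E: int) -> int:
    """Codimension of the local SOS-support E(P, X*) inside F_{n, d/2}, as in (2.2)."""
    if d % 2 != 0:
        raise ValueError("a nonnegative form has even degree d")
    return dim_forms(n, d // 2) - dim_E


# Ternary sextics: n = 3, d = 6, so F_{3,3} is the space of ternary cubics.
cubics = dim_forms(3, 3)

# Hypothetical input: at a round zero the only condition is Q(X*) = 0.
round_zero = half_degree_invariant(3, 6, cubics - 1)

# Hypothetical input: one extra vanishing directional derivative is imposed.
degenerate = half_degree_invariant(3, 6, cubics - 2)

print(cubics, round_zero, degenerate)
```

The sketch only restates the binomial count; determining $\dim E(P,\mathbf{X}^*)$ for a concrete form still requires the local analysis described in the example.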

In a more general case one has to impose conditions on (higher order) directional derivatives of Q. A special case of interest is covered by the following lemma.

Lemma 2.3. Let $\mathbf {X}^*\in \mathbb {P}_{\mathbb {R}}^{n-1}$ be a real zero of a nonnegative form $P\in P_{n,d}$, let $k\in \mathbb {N}$ and let $H\in E(P^k,\mathbf {X}^*)$. Then $m_{\mathbf {X}^*}(P^k)= k\,m_{\mathbf {X}^*}(P)$ and $m_{\mathbf {X}^*}(H)\geq k\,m_{\mathbf {X}^*}(P)/2$.

Proof. The first claim follows from the definition of multiplicity. In particular, for any $\mathbf {V}\in \mathbb {R}^n$, the univariate polynomial $t\mapsto P^k(\mathbf {X}^*+t \mathbf {V})$ is divisible by $t^{k\,m_{\mathbf {X}^*}(P)}$, meaning that all directional derivatives of $P^k$ at $\mathbf {X}^*$ of order less than $k\,m_{\mathbf {X}^*}(P)$ are equal to zero. For $H\in E(P^k,\mathbf {X}^*)$, $\mathbf {V} \in \mathbb {R}^n$ and a sufficiently small $t\in \mathbb {R}$, $H^2(\mathbf {X}^*+t \mathbf {V})$ is bounded (up to a multiplicative constant) by $P^k(\mathbf {X}^*+t\mathbf {V} )= O(t^{k\,m_{\mathbf {X}^*}(P)})$. Therefore, the multiplicity of H at $\mathbf {X}^*$ is at least $k\,m_{\mathbf {X}^*}(P)/2$ (here we know by Remark 2.1 that $m_{\mathbf {X}^*}(P)$ is even).

One can regard (2.2) as a measure of singularity of the curve $\mathcal {V}(P)$ at a singular point. As Example 3.12 shows, it is, in general, different from the invariants we introduce in Subsection 2.3 (among which is the classical delta invariant). For a nonnegative form $P\in P_{n,d}$ with finitely many real zeros, the sum of $\delta ^{\,\textrm {hd}}(P,\mathbf {X}^*)$ over all real zeros $\mathbf {X}^*$ of P is called the half-degree invariant of P and denoted by $\delta ^{\,\textrm {hd}}(P)$.

2.2 Intersection multiplicity

We first discuss intersection multiplicities and state related results; see [Reference Shafarevich43, Chapter IV] for more details. For two bivariate polynomials $f, g\in \mathbb {C}[x_1,x_2]$ and a point $\mathbf {x}^*=(x^*_1,x^*_2)\in \mathbb {A}^2_{\mathbb {C}}$ the intersection multiplicity of f and g at $\mathbf {x}^*$ is defined as the dimension of the quotient of the local ring $\mathcal {O}_{\mathbf {x}^*}=\left \{\frac {p}{q}\,:\, p,q\in \mathbb {C}[x_1,x_2],\, q(\mathbf {x}^*)\neq 0\right \}$ of $\mathbb {A}^2_{\mathbb {C}}$ at $\mathbf {x}^*$ by the ideal generated by f and g,

$$ \begin{align} I_{\mathbf{x}^*}(f,g)\ =\ \dim_{\mathbb{C}} \mathcal{O}_{\mathbf{x}^*}/(f,g). \end{align} $$

In particular, we have that $I_{\mathbf {x}^*}(f,g)$ is strictly positive if and only if $f(\mathbf {x}^*)=g(\mathbf {x}^*)=0$. In this case, $I_{\mathbf {x}^*}(f,g)=1$ if and only if the curves $f=0$ and $g=0$ intersect transversally at $\mathbf {x}^*$, that is, the gradient vectors $(\partial _{x_1} f(\mathbf {x}^*), \partial _{x_2} f(\mathbf {x}^*))$ and $(\partial _{x_1} g(\mathbf {x}^*), \partial _{x_2} g(\mathbf {x}^*))$ are linearly independent. If f and g share a common factor in $\mathbb {C}[x_1,x_2]$ that vanishes at $\mathbf {x}^*$, the intersection multiplicity $I_{\mathbf {x}^*}(f,g)=\infty $ is infinite. For ternary forms (homogeneous polynomials in three variables) $F, G\in \mathbb {C}[X_1,X_2,X_3]$ and a point $\mathbf {X}^*\in \mathbb {P}_{\mathbb {C}}^2$ one defines the intersection multiplicity of F and G at $\mathbf {X}^*$ as $I_{\mathbf {X}^*}(F,G):=I_{\mathbf {x}^*}(f,g)$, where f and g are dehomogenizations of F and G, and $\mathbf {x}^*\in \mathbb {A}_{\mathbb {C}}^2$ is the representative of $\mathbf {X}^*\in \mathbb {P}_{\mathbb {C}}^2$ in the corresponding affine chart. The celebrated Bézout theorem asserts that the number of intersection points of two projective plane curves counted with multiplicities is equal to the product of their degrees.

Theorem 2.4 (Bézout’s theorem).

Let $F, G\in \mathbb {C}[X_1,X_2,X_3]$ be ternary forms that have no common factors of positive degree. Then

$$ \begin{align} \sum_{\mathbf{X}^*\in \mathcal{V}(F)\cap \mathcal{V}(G)} I_{\mathbf{X}^*}(F,G)\ =\ \deg(F)\cdot \deg(G). \end{align} $$

In particular, $\mathcal {V}(F)\cap \mathcal {V}(G)\subset \mathbb {P}_{\mathbb {C}}^2$ consists of at most $\deg (F)\cdot \deg (G)$ points.

A (generalized) tangent to $\mathcal {V}(F)\subset \mathbb {P}^2_{\mathbb {C}}$ at $\mathbf {X}^*\in \mathcal {V}(F)$ is a projective zero $\mathbf {X}'\in \mathbb {P}\left (\mathbf {X}^*\right )^{\perp }\simeq \mathbb {P}^1_{\mathbb {C}}$ of the homogeneous part of $F(\mathbf {X}^*+ \mathbf {X}')$, $\mathbf {X}'\in \left (\mathbf {X}^*\right )^\perp $, of lowest degree $m_{\mathbf {X}^*}(F)$. In particular, tangents to $\mathcal {V}(F)$ at $\mathbf {X}^*=[0:0:1]\in \mathcal {V}(F)$ are projective zeros $\mathbf {X}'=[X^{\prime }_1:X^{\prime }_2]\in \mathbb {P}^1_{\mathbb {C}}$ of the degree $m_{\mathbf {x}^*}(f):=m_{\mathbf {X}^*}(F)$ part of the dehomogenized polynomial $f(X^{\prime }_1,X^{\prime }_2)=F(X^{\prime }_1,X^{\prime }_2,1)$. A curve $\mathcal {V}(F)\subset \mathbb {P}_{\mathbb {C}}^2$ can have at most $m_{\mathbf {X}^*}(F)$ distinct tangents at $\mathbf {X}^*$. The following known result inspired our proof of Theorem 1.1; this is essentially [Reference Liang26, Thm. $3.4$].

Lemma 2.5. For ternary forms $F, G\in \mathbb {C}[X_1,X_2,X_3]$ and $\mathbf {X}^*\in \mathbb {P}_{\mathbb {C}}^2$,

$$ \begin{align} I_{\mathbf{X}^*}(F,G)\ \geq\ m_{\mathbf{X}^*}(F)\, m_{\mathbf{X}^*}(G), \end{align} $$

with equality if and only if $\mathcal {V}(F)$ and $\mathcal {V}(G)$ do not share a tangent at $\mathbf {X}^*$.
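As a small illustration of Lemma 2.5 (our own example, not taken from the source), consider in the affine chart $X_3=1$ a parabola together with its tangent line and with a transversal line at the origin. We use the standard bound $I\geq m\cdot m$ to which the lemma refers; the polynomials $f$, $g$, $h$ below are chosen only for illustration.

```latex
\begin{align*}
f &= x_2 - x_1^2, \qquad g = x_2, \qquad h = x_1, \qquad \mathbf{x}^* = (0,0),\\
I_{\mathbf{x}^*}(f,g) &= \dim_{\mathbb{C}} \mathcal{O}_{\mathbf{x}^*}/(x_2 - x_1^2,\, x_2)
  = \dim_{\mathbb{C}} \mathcal{O}_{\mathbf{x}^*}/(x_1^2,\, x_2) = 2
  \ >\ 1 = m_{\mathbf{x}^*}(f)\, m_{\mathbf{x}^*}(g),\\
I_{\mathbf{x}^*}(f,h) &= \dim_{\mathbb{C}} \mathcal{O}_{\mathbf{x}^*}/(x_2 - x_1^2,\, x_1)
  = \dim_{\mathbb{C}} \mathcal{O}_{\mathbf{x}^*}/(x_1,\, x_2) = 1
  \ =\ m_{\mathbf{x}^*}(f)\, m_{\mathbf{x}^*}(h).
\end{align*}
```

The inequality is strict for the pair $(f,g)$ because both curves have the tangent $x_2=0$ at the origin, whereas $f=0$ and $h=0$ have distinct tangents there.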

The blow-up of $\mathbb {A}^2_{\mathbb {C}}$ in a point $\mathbf {x}^*=(x_1^*,x_2^*)$ is a surface $S\subset \mathbb {A}^2_{\mathbb {C}}\times \mathbb {P}^1_{\mathbb {C}}$ defined by the polynomial $(x_1-x_1^*)X^{\prime }_2-(x_2-x_2^*)X^{\prime }_1$ (where $[X_1':X_2']$ are the homogeneous coordinates on $\mathbb {P}^1_{\mathbb {C}}$) together with the birational morphism

$$ \begin{align} \pi\,:\, S\rightarrow \mathbb{A}^2_{\mathbb{C}},\qquad (\mathbf{x},[X_1':X_2'])\mapsto \mathbf{x}. \end{align} $$

Points in S with $[X^{\prime }_1:X^{\prime }_2]=[1:x^{\prime }_2]$ (respectively, $[X^{\prime }_1:X^{\prime }_2]=[x^{\prime }_1:1]$) form a Zariski open set $S_1$ (respectively, $S_2$) isomorphic to $\mathbb {A}^2_{\mathbb {C}}$ with coordinates $(x_1,x^{\prime }_2)$ (respectively, $(x_2,x^{\prime }_1)$). The total transform of an affine curve $f=0$ in a point $\mathbf {x}^*\in \mathbb {A}^2_{\mathbb {C}}$ is the inverse image of $f=0$ under (2.6), that is, the total transform is a curve on S defined by $\pi ^*f = f\circ \pi $. The strict transform of $f=0$ in $\mathbf {x}^*$ is the closure of the inverse image of $\{f=0\}\setminus \{\mathbf {x}^*\}$; it coincides with the total transform unless $f(\mathbf {x}^*)=0$. If $f(\mathbf {x}^*)=0$, the total transform of $f=0$ is the union of its strict transform and the exceptional line $\pi ^{-1}(\mathbf {x}^*)=\{\mathbf {x}^*\}\times \mathbb {P}^1_{\mathbb {C}}$, which is just a copy of the projective line.

Remark 2.6. More generally, one can regard the total transform of $f=0$ as the (pull-back) divisor on S given by the equation $\pi ^*f = f\circ \pi $. It splits into the strict transform of $f=0$ in $\mathbf {x}^*$ and the exceptional divisor $m\,\pi ^{-1}(\mathbf {x}^*)$, where $m=m_{\mathbf {x}^*}(f)$ is the multiplicity of f at $\mathbf {x}^*$.

In a local chart ($S_1$ or $S_2$) of S, the strict transform of $f=0$ is a curve in $\mathbb {A}^2_{\mathbb {C}}$ whose defining polynomial we denote by $f'$. Points $[X^{\prime }_1:X^{\prime }_2]$ on $\mathbb {P}^1_{\mathbb {C}}\simeq \pi ^{-1}(\mathbf {x}^*)$ at which the strict transform intersects the exceptional line are called the first order infinitely near points of $f=0$ at $\mathbf {x}^*$. They are identified with tangents of $\mathcal {V}(F)$ at $\mathbf {X}^*=[x_1^*:x_2^*:1]$ via $[X^{\prime }_1:X^{\prime }_2]\mapsto [X^{\prime }_1:X^{\prime }_2:X_3']$, where $X^{\prime }_3=-x_1^*X^{\prime }_1-x_2^*X^{\prime }_2$. Given a first order infinitely near point $\mathbf {x}'\in \pi ^{-1}(\mathbf {x}^*)$ of $f=0$ at $\mathbf {x}^*$, we can blow up the affine chart $S_i$ containing $\mathbf {x}'$ again and consider the associated strict transforms of $f'=0$ in $\mathbf {x}'$. After doing finitely many successive blow-ups at singular points of $f=0$ and of its higher order strict transforms, we eventually end up with a smooth curve. This resolution of singularities process is guaranteed to terminate by [Reference Kollár25, Thm. 1.43].

Example 2.7. The polynomial $f=x_1^2+x_2^4-2x_1x_2^2+x_1^3+2x_1^4-2x_1^3x_2^2+x_1^6$ is singular at $(0,0)$ with $m_{(0,0)}(f)=2$. Its strict transform (in the coordinates $(x_1', x_2)$, $x_1=x_1'x_2$) is given by

$$\begin{align*} f'\ =\ (x_1')^2+x_2^2-2x_1'x_2+(x_1')^3x_2+2(x_1')^4x_2^2-2(x_1')^3x_2^3+(x_1')^6x_2^4 \end{align*}$$

and the unique first order infinitely near point $(x_1',x_2)=(0,0)$ of $f=0$ at $(0,0)$ has multiplicity $m_{(0,0)}(f')=2$. The strict transform of $f'=0$ in $(0,0)$ (in the coordinates $(x_1'',x_2)$ with $x_1'=x_1''x_2$) is given by

$$\begin{align*} f''\ =\ (x_1''-1)^2+(x_1'')^3x_2^2+2(x_1'')^4x_2^4-2(x_1'')^3x_2^4+(x_1'')^6x_2^8 \end{align*}$$

and the unique first order infinitely near point $(x_1'',x_2)=(1,0)$ of $f'=0$ at $(0,0)$ has $m_{(1,0)}(f'')=2$. The third blow-up $x_1''=1+x_1'''x_2$ reveals

$$\begin{align*} f'''\ =\ (x_1''')^2+(1+x_1'''x_2)^3+2(1+x_1'''x_2)^4x_2^2-2(1+x_1'''x_2)^3x_2^2+(1+x_1'''x_2)^6x_2^6. \end{align*}$$

Since $f'''=0$ does not have singular points on the exceptional fiber $x_2=0$, the resolution of singularities process terminates after the third blow-up.
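The three blow-ups of Example 2.7 can be replayed mechanically. The following is a minimal sketch using only the Python standard library; the dictionary representation of polynomials and the helper names (`multiplicity`, `blow_up`, `translate`, `on_exceptional_line`) are our own choices, not notation from the source. The variable `a` stands for $x_1$ (or its primed versions) and `b` for $x_2$.

```python
from collections import defaultdict
from math import comb

# A polynomial in two variables (a, b) is stored as {(i, j): coefficient of a^i b^j}.


def multiplicity(p):
    """Multiplicity at the origin: the lowest total degree of a monomial."""
    return min(i + j for (i, j) in p)


def blow_up(p):
    """Strict transform at the origin in the chart a = a' * b.

    Substituting a = a' * b sends a^i b^j to a'^i b^(i+j); dividing by the
    largest power of b that divides the result removes the exceptional line.
    """
    pulled_back = {(i, i + j): c for (i, j), c in p.items()}
    m = min(j for (_, j) in pulled_back)
    return {(i, j - m): c for (i, j), c in pulled_back.items()}


def translate(p, c):
    """Substitute a -> a + c, which moves the point (c, 0) to the origin."""
    q = defaultdict(int)
    for (i, j), coeff in p.items():
        for k in range(i + 1):
            q[(k, j)] += coeff * comb(i, k) * c ** (i - k)
    return {mono: v for mono, v in q.items() if v != 0}


def on_exceptional_line(p):
    """Restriction to b = 0, returned as {power of a: coefficient}."""
    return {i: c for (i, j), c in p.items() if j == 0}


# f from Example 2.7, with a = x_1 and b = x_2.
f = {(2, 0): 1, (0, 4): 1, (1, 2): -2, (3, 0): 1, (4, 0): 2, (3, 2): -2, (6, 0): 1}
f1 = blow_up(f)                   # f'  in the coordinates (x_1', x_2)
f2 = blow_up(f1)                  # f'' in the coordinates (x_1'', x_2)
f2_centered = translate(f2, 1)    # move the infinitely near point (1, 0) to the origin
f3 = blow_up(f2_centered)         # f''' in the coordinates (x_1''', x_2)

print(multiplicity(f), multiplicity(f1), multiplicity(f2_centered))
print(on_exceptional_line(f1), on_exceptional_line(f2), on_exceptional_line(f3))
```

In this sketch the restriction of $f'''$ to the exceptional line $x_2=0$ has no real roots, so the third blow-up produces no real first order infinitely near points.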

The following formula of Noether gives us a way to compute the local intersection multiplicity (2.3) by doing successive blow-ups; it is a refinement of Lemma 2.5.

Theorem 2.8 [Reference Casas-Alvero8, Lemma $\mathrm{3.3.4}$], [Reference Chalmovianská and Chalmovianský11, Theorem $3.10$].

Let $f, g\in \mathbb {C}[x_1,x_2]$ be polynomials that have no common factors of positive degree and let $\mathbf {x}^*\in \mathbb {A}^2_{\mathbb {C}}$ be their common zero. Then

$$ \begin{align} I_{\mathbf{x}^*}(f,g)\ =\ m_{\mathbf{x}^*}(f)\, m_{\mathbf{x}^*}(g) + \sum_{\mathbf{x}'} I_{\mathbf{x}'}(f',g'), \end{align} $$

where the sum is over common first order infinitely near points $\mathbf {x}'$ of $f=0$ and $g=0$ at $\mathbf {x}^*$.
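To see Noether's formula at work, here is a small worked computation of our own (not from the source) for a parabola $f=0$ and its tangent line $g=0$ at the origin, using the chart $S_1$ with $x_2=x_1x_2'$. The polynomials are chosen for illustration only.

```latex
\begin{align*}
f &= x_2 - x_1^2, \qquad g = x_2, \qquad x_2 = x_1 x_2' \quad (\text{chart } S_1),\\
\pi^* f &= x_1\,(x_2' - x_1), \quad f' = x_2' - x_1, \qquad
\pi^* g = x_1\, x_2', \quad g' = x_2',\\
I_{(0,0)}(f,g) &= m_{(0,0)}(f)\, m_{(0,0)}(g) + I_{(0,0)}(f',g') = 1\cdot 1 + 1 = 2.
\end{align*}
```

Both strict transforms meet the exceptional line $x_1=0$ only at $x_2'=0$, which is therefore the single common first order infinitely near point, and they are transversal there, so the recursion stops after one step. The result agrees with the direct count $\dim_{\mathbb{C}}\mathcal{O}_{(0,0)}/(x_1^2,x_2)=2$.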

We end this subsection by proving that blow-ups preserve nonnegativity of polynomials.

Lemma 2.9. Let $f\in \mathbb {R}[x_1,x_2]$ be a polynomial that is nonnegative locally around $\mathbf {x}^*\in \mathbb {A}^2_{\,\mathbb {R}}$. If $f(\mathbf {x}^*)=0$ and $[X_1':X_2']\in \mathbb {P}^1_{\mathbb {R}}$ is a real first order infinitely near point of $f=0$ at $\mathbf {x}^*$, then the strict transform of $f=0$ at $\mathbf {x}^*$ is given by a polynomial that is nonnegative locally around $(\mathbf {x}^*, [X_1':X_2'])\in S$.

Proof. The blow-up (2.6) maps the real points in $S\setminus \pi ^{-1}(\mathbf {x}^*)$ bijectively to the real points of $\mathbb {A}_{\mathbb {R}}^2\setminus \{\mathbf {x}^*\}$. Then the sign of f at $\mathbf {x}\in \mathbb {A}_{\mathbb {R}}^2\setminus \{\mathbf {x}^*\}$ agrees with the sign of $f'$ at $\pi ^{-1}(\mathbf {x})$, where $f'$ is the polynomial defining (in a chart) the strict transform of $f=0$ in $\mathbf {x}^*$. Indeed, in a chart $\pi ^*f$ equals $f'$ times the $m_{\mathbf {x}^*}(f)$-th power of a local equation of the exceptional line, and $m_{\mathbf {x}^*}(f)$ is even by the argument of Remark 2.1. The claim follows by continuity of $f'$, as the real points of $S\setminus \pi ^{-1}(\mathbf {x}^*)$ are dense in the real points of S.

2.3 The delta invariant and the SOS-invariant

The (local) delta invariant $\delta _{\mathbf {x}^*}(f)$ is a classical invariant of an isolated singular point $\mathbf {x}^*\in \mathbb {A}^2_{\mathbb {C}}$ of an algebraic curve $f=0$ that can be defined as the dimension

$$ \begin{align} \delta_{\mathbf{x}^*}(f)\ =\ \dim_{\mathbb{C}} \overline{\mathcal{O}_{f,\mathbf{x}^*}}/\mathcal{O}_{f,\mathbf{x}^*} \end{align} $$

of the quotient of the integral closure $\overline {\mathcal {O}_{f,\mathbf {x}^*}}$ of the local ring $\mathcal {O}_{f,\mathbf {x}^*}:=\mathcal {O}_{\mathbf {x}^*}/(f)$ of the curve $f=0$ at $\mathbf {x}^*$ by this local ring. We set $\delta _{\mathbf {X}^*}(F):=\delta _{\mathbf {x}^*}(f)$ for a form $F\in \mathbb {C}[X_1,X_2,X_3]$ and $\mathbf {X}^*\in \mathbb {P}^2_{\mathbb {C}}$, where f is a dehomogenization of F and $\mathbf {x}^*$ is the affine representative of $\mathbf {X}^*$.

Remark 2.10. The sum $\delta (F)$ of $\delta _{\mathbf {X}^*}(F)$ over all singular points $\mathbf {X}^*\in \mathbb {P}^2_{\mathbb {C}}$ of a reduced plane curve $\mathcal {V}(F)$ is known as the (total) delta invariant. By the genus-degree formula [Reference Casas-Alvero9, Section 3.11], $\delta (F)$ equals the defect between the geometric genus of $\mathcal {V}(F)$ and its arithmetic genus.

Similarly to Noether’s formula, one can define the local delta invariant as $\delta _{\mathbf {x}^*}(f)=0$ in case of a nonsingular point (that is, $m_{\mathbf {x}^*}(f)=1$) and otherwise, recursively, as

$$ \begin{align} \delta_{\mathbf{x}^*}(f)\ =\ \frac{m_{\mathbf{x}^*}(f)(m_{\mathbf{x}^*}(f)-1)}{2} + \sum_{\mathbf{x}'} \delta_{\mathbf{x}'}(f'), \end{align} $$

where the sum is over all first order infinitely near points $\mathbf {x}'$ of $f=0$ at its isolated singularity $\mathbf {x}^*\in \mathbb {A}^2_{\mathbb {C}}$. Note that in case of an ordinary singularity, formula (2.8) reveals $\delta _{\mathbf {x}^*}(f)=1$. See [Reference Cassou-Noguès and Płoski10, Cor. 5.12] and [Reference Pham29, p. 389] for the equivalence between (2.7) and (2.8).

Example 2.11. For the polynomial $f=x_1^3+(x_2^2-x_1^3-x_1)^2=x_1^2+x_2^4-2x_1x_2^2+x_1^3+2x_1^4-2x_1^3x_2^2+x_1^6$ from Example 2.7 the formula (2.8) gives us

$$\begin{align*} \delta_{(0,0)}(f)\ =\ 1+\delta_{(0,0)}(f')\ =\ 1+1+\delta_{(1,0)}(f'')\ =\ 1+1+1\ =\ 3, \end{align*}$$

where $f'$ and $f''$ define strict transforms of $f=0$ and $f'=0$ at $(0,0)$.

For a reduced real $f\in \mathbb {R}[x_1,x_2]$ and $\mathbf {x}^*\in \mathbb {A}^2_{\mathbb {R}}$ we also define the real delta invariant as $\delta ^{\,\mathbb {R}}_{\mathbf {x}^*}(f)=0$ in case of a nonsingular point and otherwise as

$$ \begin{align} \delta^{\,\mathbb{R}}_{\mathbf{x}^*}(f)\ =\ \frac{m_{\mathbf{x}^*}(f)(m_{\mathbf{x}^*}(f)-1)}{2} + \sum_{\mathbf{x}'\,-\, \textrm{real} } \delta_{\mathbf{x}'}^{\,\mathbb{R}}(f'), \end{align} $$

where the sum is over only real first order infinitely near points of $f=0$ at $\mathbf {x}^*$. The real delta invariant captures the complexity of resolving a real singularity, and thus it is the most relevant invariant for understanding local nonnegativity of a polynomial.

Finally, the quantity that plays a prominent role in our work is the SOS-invariant $\delta ^{\,\textrm {sos}}_{\mathbf {x}^*}(f)$ of a nonnegative polynomial f at an isolated real zero $\mathbf {x}^*$.

Definition 2.12. The SOS-invariant $\delta ^{\,\textrm{sos}}_{\mathbf {x}^*}(f)$ of a nonnegative polynomial f at an isolated real zero $\mathbf {x}^*$ is defined by $\delta ^{\,\textrm{sos}}_{\mathbf {x}^*}(f)=1$ in the case of an ordinary singularity and

$$ \begin{align} \delta^{\,\textrm{sos}}_{\mathbf{x}^*}(f)\ =\ \frac{m_{\mathbf{x}^*}(f)^2}{4} + \sum_{\mathbf{x}'\,-\,\textrm{real} } \delta^{\,\textrm{sos}}_{\mathbf{x}'}(f') \end{align} $$

in general, where again the sum is over the real first order infinitely near points of $f=0$ at $\mathbf {x}^*$.
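A small worked instance may help to see the recursion at work. The polynomial $g=x_1^2+x_2^4$ is a hypothetical example chosen here for illustration and does not appear in the text; it is nonnegative with an isolated real zero at the origin, and the chart $x_1=ux_2$ is an assumption of the sketch.

```latex
% g has multiplicity 2 at the origin with tangent cone x_1^2 (a double line),
% so the origin is not an ordinary singularity and the general formula applies.
\begin{align*}
g(x_1,x_2) &= x_1^2+x_2^4, & m_{(0,0)}(g)  &= 2,\\
g'(u,x_2)  &= u^2+x_2^2,   & m_{(0,0)}(g') &= 2 \quad\text{(ordinary double point)},
\end{align*}
% The only first order infinitely near point is the real point (u,x_2)=(0,0).
\begin{align*}
\delta^{\,\textrm{sos}}_{(0,0)}(g)
  \;=\; \frac{2^2}{4}+\delta^{\,\textrm{sos}}_{(0,0)}(g')
  \;=\; 1+1 \;=\; 2 .
\end{align*}
```

The same blow-up gives $\delta^{\,\mathbb{R}}_{(0,0)}(g)=1+1=2$ from the recursion for the real delta invariant, since the tangents $u=\pm i x_2$ of $g'$ are not real and contribute no further real infinitely near points.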

It is easy to see that, for nonnegative f with an isolated real singularity $\mathbf {x}^*$, one has that

By Lemma 3.5 we have equalities in (2.12) if $\mathbf {x}^*$ is a real zero of f of multiplicity two. However, this is not true in general, as we discuss in Example 3.12. Furthermore, if $f\in \mathbb {R}[x_1,x_2]$ is the dehomogenization of a nonnegative ternary form $F\in \mathbb {R}[X_1,X_2,X_3]$ and $\mathbf {x}^*\in \mathbb {A}^2_{\mathbb {R}}$ is the affine representative of $\mathbf {X}^*\in \mathbb {P}^2_{\mathbb {R}}$, then we set $\delta ^{\,\textrm {sos}}_{\mathbf {X}^*}(F):=\delta ^{\,\textrm {sos}}_{\mathbf {x}^*}(f)$ and $\delta ^{\,\mathbb {R}}_{\mathbf {X}^*}(F):=\delta ^{\,\mathbb {R}}_{\mathbf {x}^*}(f)$.
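The comparison between the invariants can be made plausible by looking at the leading terms of the recursions alone. The following is a hedged sketch, not a restatement of (2.12): it only compares $\frac{1}{4}m^2$ with $\frac{1}{2}m(m-1)$ for a multiplicity $m=m_{\mathbf{x}^*}(f)\geq 2$, and the abbreviation $m$ is introduced here for illustration.

```latex
% Difference of the leading terms of the real delta invariant and the SOS-invariant.
\begin{align*}
\frac{m(m-1)}{2}-\frac{m^2}{4}
  \;=\; \frac{2m^2-2m-m^2}{4}
  \;=\; \frac{m(m-2)}{4}
  \;\geq\; 0
  \qquad (m\geq 2),
\end{align*}
% Equality holds exactly when m = 2.
```

In particular the two leading terms agree when $m=2$, which is consistent with the multiplicity two case mentioned above, and they differ for every larger multiplicity, for instance $4$ versus $6$ when $m=4$.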

Our definition of the SOS-invariant is motivated by two things. First, by using $\frac {1}{4}m_{\mathbf {x}^*}(f)^2$ (and not $\frac {1}{2}m_{\mathbf {x}^*}(f)(m_{\mathbf {x}^*}(f)-1)$ as in (2.8) and (2.10)), we ensure that $\delta ^{\,\textrm{sos}}_{\mathbf {x}^*}(f)$ scales well under taking powers of f, see Proposition 3.2. Second, $\delta ^{\,\textrm{sos}}_{\mathbf {X}^*}(F)$ (for $F\in \Sigma _{3,d}$) is a lower bound for the intersection multiplicity at $\mathbf {X}^*$ of two forms in $E(F,\mathbf {X}^*)$, see Proposition 3.1. These properties are crucial for the proof of Theorem 1.1.
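The first point can be illustrated on the leading terms alone. The sketch below is not the statement of Proposition 3.2; it only uses the standard fact that the multiplicity of $f^k$ at $\mathbf{x}^*$ is $k$ times that of $f$, with $m=m_{\mathbf{x}^*}(f)$ and the exponent $k\geq 1$ as illustrative symbols.

```latex
% Leading term of the SOS-invariant: exactly homogeneous of degree 2 in k.
\begin{align*}
\frac{(km)^2}{4} \;=\; k^2\cdot\frac{m^2}{4},
\end{align*}
% Leading term of the (real) delta invariant: an extra term appears for k > 1.
\begin{align*}
\frac{km(km-1)}{2} \;=\; k^2\cdot\frac{m(m-1)}{2}+\frac{k(k-1)\,m}{2}.
\end{align*}
```

For example, with $m=2$ and $k=3$ the first expression gives $9=3^2\cdot 1$, while the second gives $15=3^2\cdot 1+6$, so only the quadratic term $\frac{1}{4}m^2$ is compatible with a clean $k^2$ scaling.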

Remark 2.13. The notion of the SOS-invariant $\delta ^{\,\textrm{sos}}_{(0,0)}(f)$ makes sense if f is nonnegative only in a neighborhood of its real zero. Moreover, one can, more generally, consider a locally nonnegative convergent power series $f\in \mathbb {R}\{x_1,x_2\}$ at $(0,0)$.

As we already mentioned, in Section 3 we show that for any $H_1,H_2\in E(P,\mathbf {X}^*)$ in the local SOS-support of $P\in P_{n,d}$ we have that $\delta ^{\,\textrm {sos}}_{\mathbf {X}^*}(P) \,\leq \, I_{\mathbf {X}^*}(H_1,H_2)$. We conjecture that equality holds if $H_1,H_2\in E(P,\mathbf {X}^*)$ are chosen generically.

Finally, we define the (total) SOS-invariant of $P\in P_{3,d}$ with finitely many zeros in