1. Introduction

The establishment of electronic music ensembles and laptop ensembles in higher education around the world raises interesting questions of how composition, orchestration and notation relate to the practice of electronic and/or digital musical instruments, DMIs for short.

There are currently many well-established DMI ensembles, often linked to music colleges, such as PLOrk, SLOrk and CLOrk from Princeton, Stanford and Concordia universities, and our own Live-electronics ensemble at the Royal College of Music in Stockholm. In these kinds of ensembles, students typically perform, improvise and compose music for ensemble performance using electronic or digital musical instruments. Many DMIs presented in research contexts were developed and explored within the framework of such ensembles; see, e.g., Salazar et al. (2017) and Berdahl et al. (2018).

As a composer, I have become increasingly interested in how musical work with DMIs relates to composition practice in general. In this text, I investigate the conditions for composing and notating music for DMIs, relating the instruments’ characteristics to a music theoretical context, particularly orchestration and sound-based music analysis. Some instruments, like the Karlax Controller (Mays and Faber 2014) or the Magnetic Resonator Piano (McPherson 2010), are relatively easy to incorporate into a traditional (Western) music practice thanks to their design, whereas semi-modular sequencer synths like the Moog Mother-32 are trickier to deal with in this regard.

Ideally, DMI creators would increasingly focus their explorations not only on interface and sound-generating designs but also on their creations’ relation to a contemporary musicking (Small 1998) practice. The lack of a (shared) approach to DMIs as parts of a defined contemporary music practice is part of the reason why some of these instruments are limited to their creators’ artistic output.

In this paper, I first discuss DMIs in terms of traditional orchestration, starting from how percussion instruments are described in orchestration literature. After all, the purpose of orchestration teaching is to help composers use and combine sound sources in ways relevant to a given musical practice. Organological approaches to the description and classification of DMIs have been discussed before; see, e.g., Paine (2010) and Magnusson (2017). I then look at how DMIs can be further explored in terms of symbolic notation for electroacoustic music analysis. The ideas are exemplified with a DMI from my own practice and through a case study of KMH’s Live-electronics ensemble, in which the students develop their own instrument practices on electronic instruments of their choice for the purpose of ensemble performance.

2. Background and current research

Throughout the history of electroacoustic music, scholars have discussed how this music relates to established notions of music and its theory. Pierre Schaeffer made a point of relating the possibilities of musique concrète and electronic music to traditional Western music, noting that traditional music theory had reached three dead ends: the note was the archetype of musical objects, sound sources were assumed to be acoustic, and aesthetic and analytical tools could not handle the new music: ‘…it would be better to admit that, after all, we do not know very much about music. And worse still, what we do know is more likely to lead us astray than to guide us’ (Schaeffer 2017: 5). Schaeffer’s solution was to develop a form of music theory informed by music psychology, focusing on expanding the concept of the note through a phenomenological approach to sound-based music (Schaeffer 2017).

Building on Schaeffer’s research, Denis Smalley’s spectromorphology also describes sound as heard, but through sets of metaphors describing different aspects of sound events and sound textures in a musical context. Particularly worth noting here is Smalley’s notion of gesture surrogacy and his view that a sound’s perceived gesturality is an important aspect of how it is experienced, regardless of whether its origin is electronic or acoustic (Smalley 1997). Both Smalley and Schaeffer explicitly focused on the outcomes of sound activities rather than on their physical conditions.

A more recent contribution is Thor Magnusson’s Sonic Writing, which relates today’s post-digital DMI systems of interactivity, interconnectivity and machine learning to a thorough historical discussion of musical instruments, music notation and music theory as they evolved alongside new techniques and technologies (Magnusson 2019). Magnusson relates the (coded) rule-based DMI systems of today to a time in music history when the focus was on music theory as the condition for music production, rather than on unique scored works to be reproduced according to the performance practice of their era (Magnusson 2019).

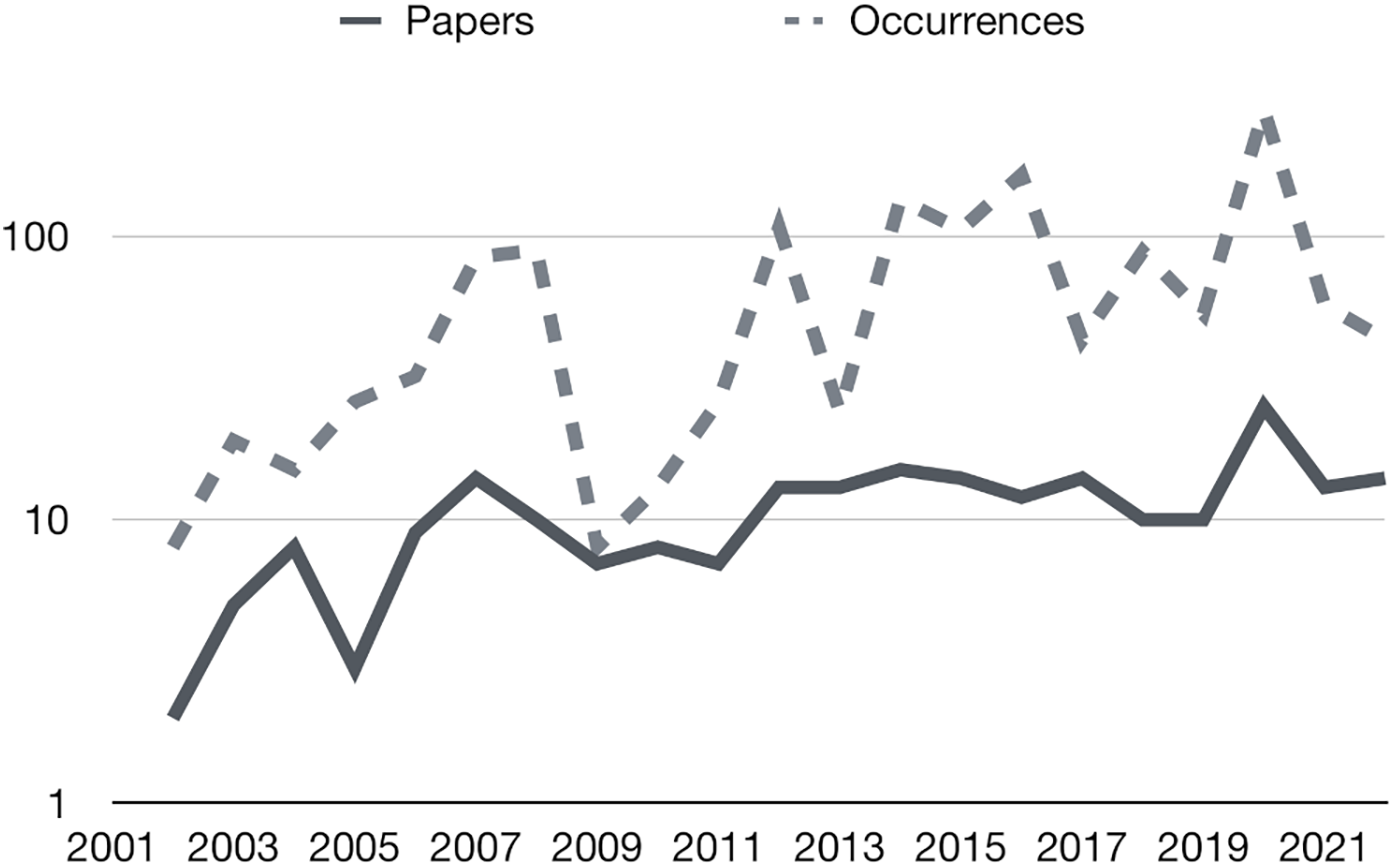

It is easy to see why composition and notation would become important for the development of DMIs, given that many DMIs are developed in the context of higher music education in composition, and the instruments themselves can be hybrids of instruments and musical works. A growing research interest in notation related to new music technologies can be seen both in the establishment of the international conference TENOR in 2015 and in the number of articles and occurrences of the word ‘notation’ in the proceedings of the International Conference on New Interfaces for Musical Expression (NIME) over time, shown in Figure 1. The invention of new instruments is accompanied by the development of new notation technologies, such as the Decibel Score Player (Hope and Vickery 2015), which suits the semi-improvisational character of many DMIs well, e.g. through animated scores of graphic notation. In the absence of established notation solutions for DMI ensemble performance, graphic notation, with its relative openness to interpretation, has proven useful for ensemble performance.

Table of the number of papers and number of occurrences of the word ‘notation’ each year in the NIME proceedings from 2001 to 2021.

3. Describing DMIs

3.1. The orchestration manual

My main reason for relating DMIs to traditional orchestration literature is that these sources typically focus on what one needs to know for effective and predictable use: how can one or several sounding objects be placed in an artistic context, taking both practical and aesthetic aspects into consideration?

Having worked extensively with both percussion ensembles and DMI performances in various settings, I see several important similarities between the two instrument groups. Percussionists are ready to use any instrument or non-instrument as a sound source (Cope 1997). Like the percussion storage of a well-equipped music institution, the tools available to a DMI performer typically lend themselves to a variety of setups, timbres and playing techniques, while it would be impractical, from both a composition and a performance perspective, to treat the total capacity of one’s equipment as a single instrument. Some DMI devices are more the premise for an instrument than instruments in the traditional sense; both percussionists and DMI performers commonly perform on specific selections and connections of instrument components in order to work in a focused way in a given context. While there are important differences between performing with physical objects and with electronic devices, we can learn much from how artistic problems are solved in both fields.

3.2. Disposition of traditional instrument descriptions

In The Study of Orchestration (Adler 1989), the chapter on percussion instruments begins with a review of categories such as idiophones and membranophones. Magnusson takes a similar approach in his organology of NIMEs (Magnusson 2017). Adler goes on to discuss notation in general terms and the number of players: ‘How many percussion instruments can one person play simultaneously? Could one of these players cover two or more instruments at a time?’ (Adler 1989: 372). This question is relevant for DMI setups, too. A Minimoog would not rank among the more complex electronic instruments today, and yet, with its 28 knobs, 17 buttons, 2 wheels and 44 keys, there is an obvious limit to how much of it a performer can use at once with two hands. Following his general description of percussion, Adler describes individual percussion instruments in terms of construction, sound character, particularities, successful use, notation principles and playing techniques (Adler 1989).

3.3. General description of DMI instruments

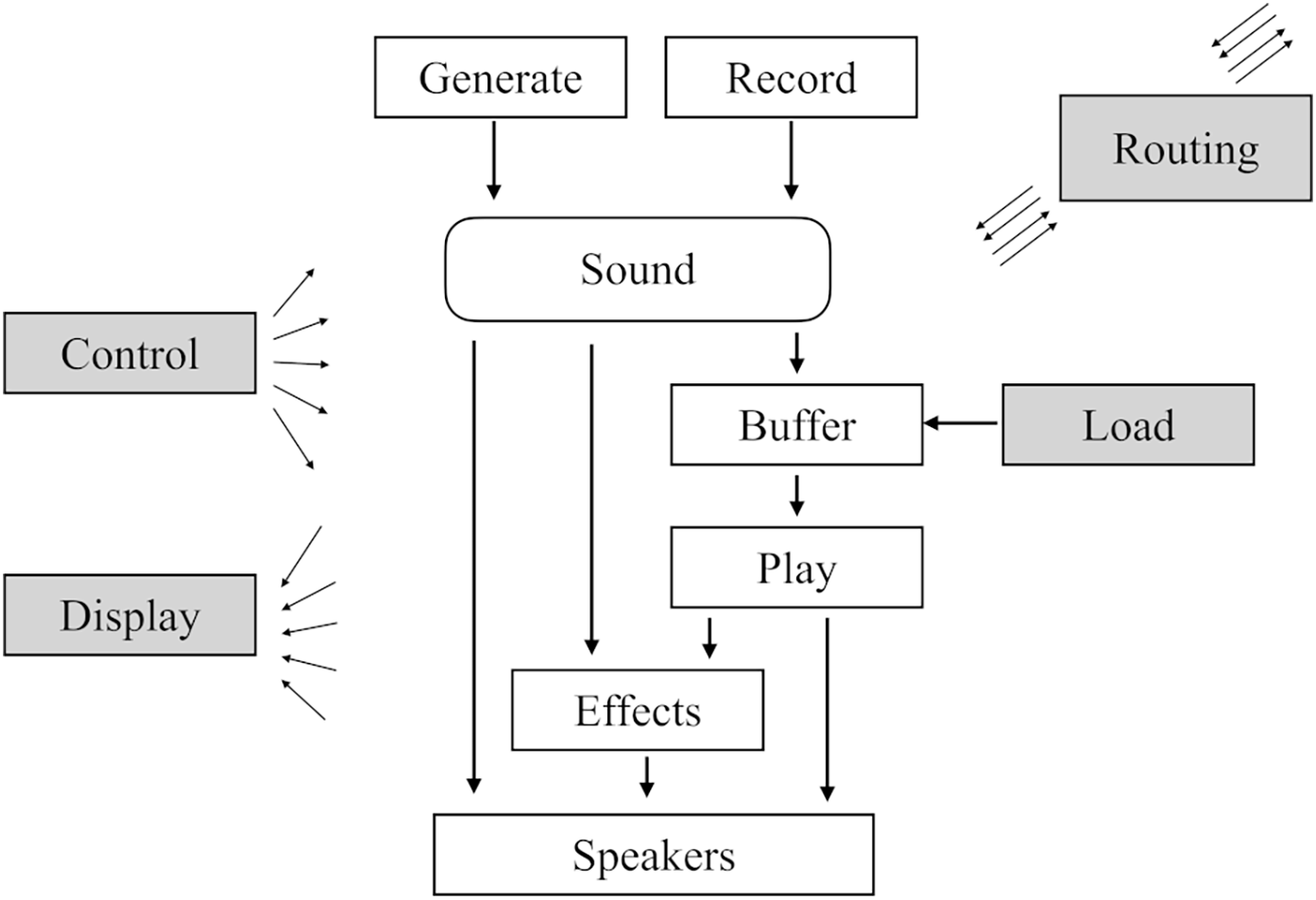

Just as strings, pipes and reeds form fundamental sound-producing categories among acoustic musical instruments, the main division in electronic music remains that between recorded and generated (synthesised) sounds, with their legacies from the Paris and Cologne studios. Although the use of buffers in computer music blurs the line between the two, they remain meaningful categories for making sense of DMI designs. Figure 2 shows a possible generalised architecture of a DMI: sounds are generated and/or recorded, possibly stored in a buffer for playback, and sent through effects or directly to the speakers. Any stage in the process can be subject to control and information display, and internal and external routing possibilities can further increase the complexity of the instrument (see Figure 2).

The general architecture of a DMI. Internal and/or external routing adds significantly to the complexity of the structure.
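To make the architecture in Figure 2 more concrete, the following Python sketch models a DMI as a main chain of stages (source, buffer, effect, output) whose parameters can all be reached by the control layer, plus extra routes outside the main chain. The class names, stage kinds and the crude connection count used as a complexity measure are assumptions for illustration, not taken from any specific DMI.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Stage:
    name: str
    kind: str  # "generator", "recorder", "buffer", "effect" or "output"
    params: dict = field(default_factory=dict)


@dataclass
class DMI:
    stages: List[Stage]  # main chain, in signal order
    extra_routes: List[Tuple[str, str]] = field(default_factory=list)

    def _find(self, name: str) -> Stage:
        for stage in self.stages:
            if stage.name == name:
                return stage
        raise KeyError(name)

    def control(self, stage_name: str, param: str, value: float) -> None:
        # Control can reach any stage, not only the sound source.
        self._find(stage_name).params[param] = value

    def route(self, src: str, dst: str) -> None:
        # Internal routing outside the main chain, e.g. a feedback path.
        self._find(src)
        self._find(dst)
        self.extra_routes.append((src, dst))

    def connections(self) -> int:
        # Crude complexity measure: main-chain links plus extra routes.
        return (len(self.stages) - 1) + len(self.extra_routes)


dmi = DMI([Stage("osc", "generator"), Stage("loop", "buffer"),
           Stage("delay", "effect"), Stage("speaker", "output")])
dmi.control("delay", "feedback", 0.4)
dmi.route("delay", "loop")  # delayed sound is re-recorded into the buffer
print(dmi.connections())  # 4
```

Even this toy model shows how a single extra route changes the instrument: the buffer now receives material from further down the chain, which is the kind of added complexity the caption refers to.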

3.3.1. Delimiting DMIs

Just as a percussionist assembles a setup of instruments for the performance of a particular work, the DMI performer may need to decide how to divide the totality of a system into one or more playable instrument instances. Solo performances may call for a more complex system of several instrument instances, whereas it can be impractical to work with multiple instruments simultaneously as part of a larger ensemble, not least for the sake of performer equality. Equality in this case would also typically mean that any sounding DMI instance should be able to adapt reasonably quickly to the musical choices of other performers, or at least as quickly as the other performers can adapt.

3.3.2. Continuous sound output

With a few exceptions among acoustic instruments, such as spring-wound music boxes or weights placed on organ keys, a defining feature of DMIs is their capacity for unattended, continuous sound production. This can be problematic in ensemble performance, where attention to the individual expression of each player is important. Palle Dahlstedt has described moving away from unattended sound flows in his work with DMIs, adopting an approach inspired by acoustic instruments, where there can be no sound unless one’s hands are on the instrument: ‘no hands—no sound’ (Dahlstedt 2009: 2).

Related to this are DMI functions designed specifically to generate additional layers of sound, such as loop playback, automated play functions and spectral freeze states. Such functions can be challenging both for the individual performer and for the ensemble when actively performed music suddenly coexists with added layers of sound occupying exactly the same spectral space, as is the case when a DMI performance is subjected to loop playback or delay lines. A further challenge of introducing loop playback in ensemble improvisation is that the loop time setting may inadvertently come to define everyone’s musical time.
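How a loop can impose a time grid can be shown with simple arithmetic. Assuming the ensemble hears a loop of L seconds as containing n beats, the implied tempo is 60 × n / L beats per minute. The function name and the beat counts below are hypothetical; the point is that a single loop length supports several plausible tempi, any of which may quietly become the shared pulse.

```python
def implied_tempo(loop_seconds: float, beats_per_loop: int) -> float:
    """Tempo in BPM implied by a loop heard as `beats_per_loop` beats."""
    if loop_seconds <= 0 or beats_per_loop <= 0:
        raise ValueError("loop length and beat count must be positive")
    return 60.0 * beats_per_loop / loop_seconds


# One 3.2-second loop, three possible hearings of its pulse.
for beats in (4, 6, 8):
    print(beats, round(implied_tempo(3.2, beats), 1))
# prints: 4 75.0 / 6 112.5 / 8 150.0
```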

3.3.3. Co-existence with acoustic instruments

The general rule is that the more a DMI behaves like an acoustic instrument, in terms of how its interface relates to its sound output, the easier it is to integrate into a traditional acoustic setting. The commercial success of early Moog synthesisers with keyboards is a testament to that.

An important aspect of acoustic ensemble performance is that the mixing of sound sources is a constant, ear-based negotiation of dynamics. Regardless of whether the DMI is oriented towards sound textures or sound gestures, a DMI performer in an ensemble involving acoustic instruments therefore needs more or less continuous dynamics control, possibly using a volume pedal, borrowing the established method of dynamics control on pipe organs (an instrument that must handle some of the same challenges as DMIs). More importantly, in chamber music settings, the loudspeaker for the DMI must be placed immediately next to the performer, or the whole ear-based negotiation of the mix will fail. No acoustic chamber music performer would accept having their dynamics subjected to additional changes by a separate person across the room.
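As a sketch of continuous dynamics control, the function below maps a volume pedal position to a linear gain factor. The taper (linear in decibels from an assumed floor of -60 dB up to 0 dB, with the fully heel-down position treated as silence) is a hypothetical choice, made because equal pedal movements then give roughly equal steps in perceived loudness; real pedals and their tapers vary.

```python
def pedal_gain(position: float, floor_db: float = -60.0) -> float:
    """Linear gain factor for a pedal position in [0, 1].

    Assumed taper: linear in decibels from floor_db (just above heel down)
    to 0 dB (toe down); the fully heel-down position is silent.
    """
    p = min(max(position, 0.0), 1.0)
    if p == 0.0:
        return 0.0
    gain_db = floor_db * (1.0 - p)
    return 10 ** (gain_db / 20.0)


for pos in (0.0, 0.5, 1.0):
    print(pos, round(pedal_gain(pos), 4))
# prints: 0.0 0.0 / 0.5 0.0316 / 1.0 1.0
```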

3.3.4. Playing techniques

Much has been written about the user interfaces, control signals and mappings involved in the design of DMIs, and some novel interfaces, such as the Reactable and the Mi.Mu Gloves, gained wide attention through their use by artists like Björk and Imogen Heap (who is part of the team developing the gloves), respectively. An important question for composers writing for DMIs is what level of predictability can be achieved, given the DMI’s designed mapping of control interface to sound engine(s). Also, can absolute values and/or value changes be accessed from the instrument? Can presets and saved states be used during performance?

Skilled playing on DMIs may be less about dexterity than about achieving greater versatility and immediacy in reaching the desired musical results, given the capacity of one’s instrument.
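The questions about predictability, absolute values and presets can be illustrated with a small Python sketch: a deterministic knob-to-parameter mapping, so that a notated knob value always yields the same sounding result, and a preset bank that stores complete parameter snapshots for recall during performance. The exponential cutoff curve, its 50 Hz to 12.8 kHz range and the names `knob_to_cutoff` and `PresetBank` are assumptions, not taken from any particular instrument.

```python
import copy


def knob_to_cutoff(value: float, lo_hz: float = 50.0, hi_hz: float = 12800.0) -> float:
    # Exponential curve: equal knob movements give equal pitch intervals.
    # 12800 / 50 = 256 = 2**8, so each eighth of the travel is one octave.
    v = min(max(value, 0.0), 1.0)
    return lo_hz * (hi_hz / lo_hz) ** v


class PresetBank:
    """Save and recall complete parameter states during performance."""

    def __init__(self) -> None:
        self._slots: dict = {}

    def save(self, slot: int, state: dict) -> None:
        self._slots[slot] = copy.deepcopy(state)  # a snapshot, not a live reference

    def recall(self, slot: int) -> dict:
        return copy.deepcopy(self._slots[slot])


state = {"cutoff_knob": 0.5}
bank = PresetBank()
bank.save(1, state)
state["cutoff_knob"] = 0.9      # the performer moves on
print(knob_to_cutoff(0.5))      # 800.0
print(bank.recall(1))           # {'cutoff_knob': 0.5}
```

Copying on both save and recall keeps a stored state independent of later knob movements, which is what makes a numbered preset change in a score reliable.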

4. DMIs in relation to symbolic notation

One reason behind my research on sound notation (Sköld 2020, 2023) was to rationalise concepts of electroacoustic music analysis and, also, to move away from individual tailor-made solutions for describing and analysing this music. I see a need for the same treatment of DMI-performed music, where the rich diversity of imaginative interfaces and architectures of NIMEs can easily be confused with an equal diversity of sound capabilities. In other words, different interfaces or even different synthesis engines in DMIs do not necessarily provide equally different-sounding outputs.

And even though different instruments may have their own characteristic sound identities, the practice of electroacoustic music analysis has taught us that there are ways of rationalising even the rich sound possibilities of recorded and synthesised sounds.

4.1. Relating DMIs to sound categories

One starting point for approaching DMIs from the perspective of analysis, which I have used with my students, is to consider the sounding output of DMIs in relation to the expanded diagram of spectromorphological analysis notation symbols by Thoresen and Hedman (2007), seen in Figure 3. This is a chart of sound spectrum and energy articulation categories for analysis of sound-based music using a phenomenological approach. As a way of making sense of a DMI’s musical potential, one may consider what areas of the analysis category chart can be covered by the instrument. Where lies the instrument’s sounding focus, and to what degree can the instrument’s sound move between the categories of the analysis chart? Some instruments excel in music with slowly evolving timbre structures because of their capacity for sustained sounds of varying complexity. Those sounds belong to Thoresen’s stratified and vacillating energy articulation categories on the left side of the chart (Figure 3). Other instruments, typically setups involving sequencers, can generate sounds in Thoresen’s composite energy articulation category, i.e. rhythmic sounds.

The expanded diagram of the typology in spectromorphological analysis notation (Thoresen and Hedman 2007: 134). The columns are energy articulation categories with increasing unpredictability towards the leftmost and rightmost columns. The rows are sound spectrum categories, with stable and variable categories.
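One way of recording which areas of the chart an instrument covers is as a set of (spectrum, energy articulation) cells. The sketch below uses only the category names mentioned in this text as a partial stand-in for Thoresen and Hedman’s full chart, and the `drone_synth` profile is a hypothetical example rather than an analysis of a real instrument.

```python
# Partial grid: only category names mentioned in this text, not the full chart.
SPECTRUM = ("pitched", "dystonic", "complex")
ARTICULATION = ("sustained", "stratified", "vacillating", "composite")
GRID = {(s, a) for s in SPECTRUM for a in ARTICULATION}


def coverage(cells: set) -> float:
    """Share of the (partial) grid that an instrument can reach."""
    unknown = cells - GRID
    if unknown:
        raise ValueError(f"cells outside the grid: {sorted(unknown)}")
    return len(cells) / len(GRID)


def focus(cells: set) -> dict:
    """Reachable cells per articulation column: where the sounding focus lies."""
    return {a: sum(1 for _, col in cells if col == a) for a in ARTICULATION}


drone_synth = {("pitched", "sustained"), ("dystonic", "sustained"),
               ("pitched", "stratified"), ("dystonic", "stratified")}
print(round(coverage(drone_synth), 3))  # 0.333
print(focus(drone_synth))  # {'sustained': 2, 'stratified': 2, 'vacillating': 0, 'composite': 0}
```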

By refining the analysis to assess which subcategories are most suitable for describing a DMI’s sound, a better understanding of the instrument’s musical capacity can be obtained. For example, sequencer-based DMIs can typically produce rhythmic material in Thoresen’s regular pulse category (not shown in Figure 3). Achieving rhythmic textures described by Thoresen’s oblique or irregular pulse categories may call for instrument design choices that allow deviation from a regular pulse.
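The design choice of allowing deviation from a regular pulse can be sketched as a sequencer whose onsets are displaced by a controllable, seeded random amount. This models random irregularity only; the `deviation` parameter is hypothetical, and the sketch makes no claim about how Thoresen’s oblique category would be realised.

```python
import random


def onset_times(steps: int, period: float, deviation: float = 0.0, seed: int = 0) -> list:
    """Onset times in seconds for a step sequencer.

    deviation = 0 gives a strictly regular pulse. Otherwise each onset is
    displaced by a random amount of up to +/- deviation * period. Keeping
    deviation below 0.5 guarantees that onsets never swap order.
    """
    if not 0.0 <= deviation < 0.5:
        raise ValueError("deviation must be in [0, 0.5)")
    rng = random.Random(seed)  # seeded, so a notated pattern can be reproduced
    return [max(0.0, i * period + rng.uniform(-deviation, deviation) * period)
            for i in range(steps)]


def intervals(times: list) -> list:
    return [b - a for a, b in zip(times, times[1:])]


regular = onset_times(8, 0.25)
loose = onset_times(8, 0.25, deviation=0.3)
print(intervals(regular))  # seven intervals of 0.25
print(intervals(loose))
```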

4.2. Relating DMIs to sound notation

Relating a DMI’s sound output to Thoresen’s analysis categories means connecting the instrument to a possible music analysis of its output based on descriptive categories related to the music as heard. In my sound notation system, which builds on Thoresen and Hedman’s symbolic notation (Thoresen and Hedman 2007), all sound categories and their symbols are related to a measurable acoustic reality, represented symbolically over a spectrum staff, i.e. a multiple-staff system covering the full audible range (Sköld 2020). Sound notation can therefore be used both for describing and for instructing musical performance based on timbre-related qualities of sound objects. The main sound notation indicators are spectrum category, spectral width, spectral density, granularity, significant partials, amplitude envelope and modulation/variation (Sköld 2020). A challenge in relating one’s instrument to sound notation, rather than to Thoresen’s phenomenological analysis notation, is that the interface must allow access to specific pitch values, frequency registers, etc. This may be impractical given how DMIs are most commonly used. Case studies have, however, shown that it is possible to reinterpret electroacoustic music from its sound notation transcription (Sköld 2022a), suggesting that the same should be possible with live-electronic music.
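The sound notation indicators listed above could be held in a simple record, for example when annotating a DMI’s presets. In the sketch below, the field names, the use of Hz for spectral width and partials, and the placeholder descriptive scales for density and granularity are assumptions; the sound notation system itself (Sköld 2020) uses symbols on a spectrum staff rather than data records.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class SoundObject:
    spectrum_category: str                   # e.g. "pitched", "dystonic", "complex"
    spectral_width_hz: Tuple[float, float]   # lowest and highest significant frequency
    spectral_density: str                    # placeholder scale, e.g. "sparse"/"dense"
    granularity: str                         # placeholder scale, e.g. "smooth"/"grainy"
    significant_partials_hz: List[float] = field(default_factory=list)
    amplitude_envelope: List[Tuple[float, float]] = field(default_factory=list)  # (s, 0..1)
    modulation: Optional[str] = None         # modulation/variation, free text here

    def within_audible_range(self) -> bool:
        """True if the spectral width fits a staff covering roughly 20 Hz to 20 kHz."""
        lo, hi = self.spectral_width_hz
        return 20.0 <= lo <= hi <= 20000.0


tone = SoundObject("pitched", (220.0, 1760.0), "sparse", "smooth",
                   significant_partials_hz=[220.0, 440.0, 660.0],
                   amplitude_envelope=[(0.0, 0.0), (0.1, 1.0), (2.0, 0.0)],
                   modulation="slow vibrato")
print(tone.within_audible_range())  # True
```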

4.3. Parameter notation

In my many years of working with electroacoustic music and live-electronic elements in contemporary music settings, I have rarely come across more generalised action notation for DMIs. There are published examples, such as Jennifer Butler’s pedagogical work (Butler 2008), and one can take inspiration from solutions developed for specific instruments and situations, such as the Karlax axis notation (Mays and Faber 2014). An interesting field of notation in this regard is scratching; see, e.g., the Turntablist Transcription Method (TTM), which takes gesturality, time and loop lengths into account (Carluccio et al. 2000). The notation style in TTM is reminiscent of automation lines in digital audio workstations.

When performing other composers’ works, I have preferred a generalised parameter notation that is not necessarily tied to the particularities of a user interface but shows clearly which DMI parameter settings and changes to achieve in relation to musical time or clock time. I have found graphical representations of rotary control knobs, the kind found on most hardware synthesisers, to be convenient symbols for displaying values and value changes to be performed on synthesisers or control surfaces. Automation-style lines may be convenient for prescribing vertical fader positions, or gestures as in TTM, but for performances that rely on quickly reaching specific values of one-dimensional parameters, I find rotary knob images more reliable, as exemplified in Section 5.1.
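Rotary knob symbols lend themselves to a simple conversion between a normalised parameter value and the pointer position a performer reads, often spoken of as a clock position. The sketch assumes a knob with 300 degrees of travel centred on 12 o’clock (7 o’clock to 5 o’clock), which is common on hardware synthesisers but varies between instruments; the function names are hypothetical.

```python
def knob_angle(value: float, travel_deg: float = 300.0) -> float:
    """Pointer angle in degrees, clockwise from 12 o'clock, for a value in [0, 1]."""
    v = min(max(value, 0.0), 1.0)
    return (v - 0.5) * travel_deg


def clock_position(value: float, travel_deg: float = 300.0) -> str:
    """Pointer position rounded to the nearest half hour on a clock face."""
    hours = (knob_angle(value, travel_deg) / 30.0) % 12  # 30 degrees per hour
    half_hours = (round(hours * 2) / 2) % 12
    whole = int(half_hours)
    label = 12 if whole == 0 else whole
    return f"{label}:30" if half_hours > whole else f"{label} o'clock"


# A notated change for one knob from fully counter-clockwise to three quarters.
for v in (0.0, 0.5, 0.75):
    print(v, clock_position(v))
# prints: 0.0 7 o'clock / 0.5 12 o'clock / 0.75 2:30
```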

4.4. Instrument setup reproducibility

An interesting challenge related to the notation of DMIs, and to the growing repertoire of notated works using electronic instruments, is the question of instrument setup reproducibility. Historical Western musical scores rely on the interpreter’s knowledge of the instrument setup the notated symbols were meant for and of the extent to which it can be replaced by more modern instruments, as in the case of Mozart’s horn concertos, written for natural horns but commonly performed on valved horns with a notably different sounding result. A more recent ‘historical’ example is Peter Eötvös’s Atlantis for voices, orchestra and electronic instruments (Eötvös 1995), which requires ‘three DX7-II synthesizers OR Native Instruments FM8 1.3.0 or later software synth’. Given the fame and popularity of the Yamaha DX7, it is safe to assume that music written for it will remain reproducible for the foreseeable future. The question is how we should approach notation for lesser-known instruments to ensure results similar or equivalent to the original. In my Requiem oratorio (Sköld 2010), the cover sheet of the (relatively simple) synthesiser part describes the synthesis design used for each of the 16 presets needed throughout the work, to ensure compatibility with most polyphonic analogue-style synthesisers.
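A synth-agnostic preset description of this kind could also be kept in machine-readable form alongside the score. The fields below (oscillators, filter type, cutoff, resonance, amplitude envelope) are assumed examples of what such a description might contain, not the contents of the actual Requiem cover sheet.

```python
import json
from dataclasses import dataclass, asdict
from typing import List, Tuple


@dataclass
class PresetSheet:
    number: int
    oscillators: List[str]                        # waveform and tuning per oscillator
    filter_type: str                              # e.g. "24 dB/oct low-pass"
    cutoff_hz: float
    resonance: float                              # 0..1, approximate
    envelope: Tuple[float, float, float, float]   # attack s, decay s, sustain 0..1, release s
    notes: str = ""


def cover_sheet(presets: List[PresetSheet]) -> str:
    """Serialise preset descriptions as JSON for archiving with the part."""
    return json.dumps([asdict(p) for p in presets], indent=2)


pad = PresetSheet(1, ["saw", "saw, slightly detuned"], "24 dB/oct low-pass",
                  1200.0, 0.2, (0.5, 1.0, 0.8, 2.0), "slow, soft pad")
print(cover_sheet([pad]))
```

Describing the synthesis design rather than storing a device-specific patch file is what keeps such a sheet usable on other analogue-style synthesisers.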

4.5. Summary – The DMI instrumentation questionnaire

With all this in mind, what would one need to know about DMIs to compose and/or notate for them? These topics could be a starting point:

- The sound-generating principles and architecture
- The instrument’s capacity to produce sounds according to a relevant table of sound categories and/or notation
  - The instrument’s capacity to move between these categories, continuously or in steps
- The interface for performance
  - The most convenient and communicative way of notating the actions performed using this interface
- Preferably also (notated) examples of the instrument used effectively
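The questionnaire can also serve as a template. The sketch below gives each item a field and reports which items are still unanswered; the field names are hypothetical, and the example values are condensed from the description of the Micro Modular preset in Section 5.1.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DMIProfile:
    architecture: str              # sound-generating principles and architecture
    sound_categories: List[str]    # reachable categories in a chosen table
    transitions: str               # "continuous", "stepped" or "both"
    interface: str                 # the interface for performance
    notation: str                  # how to notate actions on that interface
    examples: List[str] = field(default_factory=list)  # effective uses

    def unanswered(self) -> List[str]:
        """Questionnaire items left empty."""
        return [name for name, value in vars(self).items() if not value]


micro_modular = DMIProfile(
    architecture="4 oscillators, mono filter, overdrive, mixer, crossfades",
    sound_categories=["pitched", "dystonic", "sustained", "stratified"],
    transitions="continuous",
    interface="master volume plus three assignable knobs",
    notation="knob positions and numbered preset changes",
)
print(micro_modular.unanswered())  # ['examples']
```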

5. Examples from DMI practice

5.1. A Micro Modular preset

To exemplify the ideas described above, I start by presenting one instrument setup from my own practice, which I have used extensively in the past for various performances. It is a preset for Clavia’s Nord Micro Modular synthesiser (henceforth, Micro Modular).

5.1.1. Sound-generating principles and architecture

It is a virtual modular setup, stored as a preset, using 4 oscillators, one mono filter, overdrive, one mixer, crossfades and some control functions (see Figure 4). In short, two main oscillators (OscA modules on the left side) provide pitched sounds according to preset diatonic scales (using the KeyQuant modules). Pitch is controlled by one control dial using different pitch ranges for the two oscillators (the blue knob ranges on the Constant modules). Portamento modules make pitch changes continuous, making theremin-style glissandi possible while always landing on the preset scale steps. The other two oscillators (FormantOsc and SpectralOsc) are used for frequency modulation and timbre-related variations of the overall sound.

Screenshot of the author’s instrument preset displayed in the Clavia Nord Micro Modular Editor.
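The pitch path described above (knob, scaled range, scale quantiser, portamento) can be sketched in a few functions. These are hypothetical stand-ins for the Constant, KeyQuant and Portamento modules, not Clavia’s implementations; the major scale, the 12- and 24-semitone ranges and the one-pole glide coefficient are assumptions. The example shows why glissandi remain continuous yet always settle on a scale step: quantisation happens before the glide.

```python
SCALE = (0, 2, 4, 5, 7, 9, 11)  # assumed major scale, semitones above the root


def knob_to_semitones(value: float, range_semitones: float) -> float:
    """Scale a knob value in [0, 1] to a pitch offset (cf. the Constant modules)."""
    return min(max(value, 0.0), 1.0) * range_semitones


def quantize(semitones: float, scale=SCALE) -> int:
    """Snap a pitch offset to the nearest scale step (cf. KeyQuant)."""
    octave, within = divmod(semitones, 12)
    # Include the next octave's steps so that, e.g., 11.7 can snap up to 12.
    candidates = [step + 12 * k for step in scale for k in (0, 1)]
    nearest = min(candidates, key=lambda step: abs(step - within))
    return int(octave) * 12 + nearest


def glide(current: float, target: float, coeff: float = 0.1) -> float:
    """One control-rate step of a one-pole glide (cf. Portamento)."""
    return current + coeff * (target - current)


def to_hz(semitones: float, root_hz: float = 220.0) -> float:
    return root_hz * 2 ** (semitones / 12)


# One knob drives both main oscillators with different ranges.
knob = 0.6
target_a = quantize(knob_to_semitones(knob, 12))  # 7.2 -> 7
target_b = quantize(knob_to_semitones(knob, 24))  # 14.4 -> 14

# Oscillator B glides continuously from its previous step towards the new one.
pitch_b = 7.0
for _ in range(50):
    pitch_b = glide(pitch_b, target_b)
print(target_a, target_b)  # 7 14
```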

5.1.2. Capacity to produce sounds according to a table of sounds

This virtual modular setup produces sound only in Thoresen’s sustained or stratified energy articulation categories (see Figure 3), i.e. relatively simple and coherent timbre structures without rhythmic elements. The pitched and dystonic (inharmonic) spectrum categories are possible and can be subject to variation (this relates to the lower half of the chart in Figure 3, which contains the categories for sounds with varying timbre). With extreme settings involving intense frequency modulation, the sound from this preset can approach Thoresen’s complex (non-pitched) spectrum category. Although the technology could allow stepwise changes, the user interface allows only continuous changes, making it difficult to reach specific parameter values instantly.

5.1.3. The interface for performance

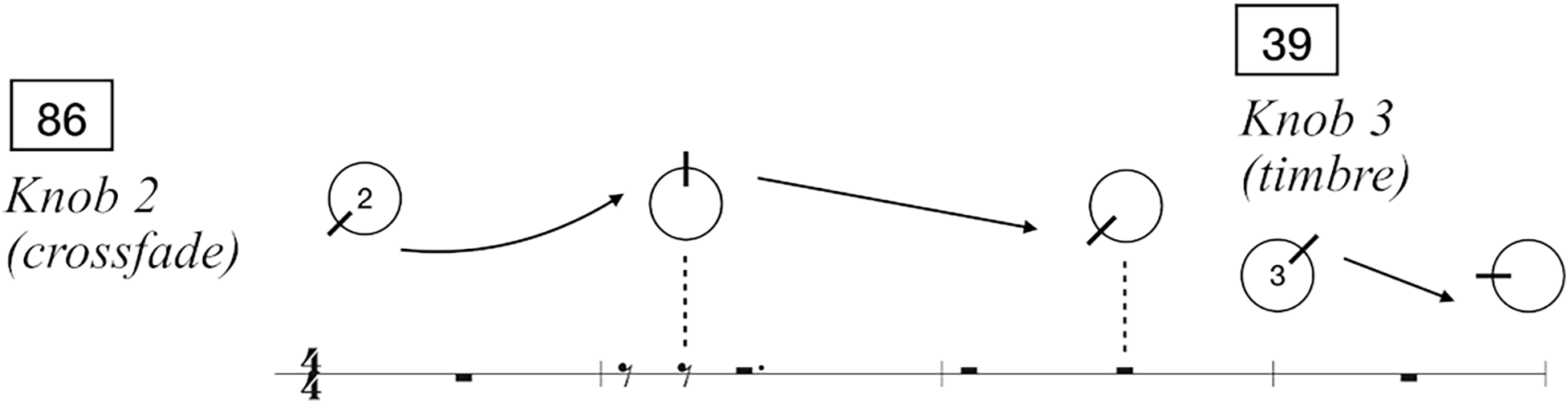

The user interface of the Micro Modular consists of four knobs, the first of which always acts as the master volume control. The other three can be patched to one or more parameters in the virtual analogue setup. In this preset (see Figure 5), Knob 1 controls the pitch of both main oscillators, while Knob 2 only crossfades between the two main oscillators, the second of which is subjected to more elaborate timbre changes. Knob 3 controls timbre by introducing frequency modulation and also sets the frequency of one of the two modulating oscillators. In musical terms, Knob 1 controls pitch and Knob 2 the number of voices, particularly with regard to the two scale-bound oscillators, while Knob 3 ‘cracks’ the sound, moving from pure sound waves to more complex sound textures.

Assigned playing controls for the Micro Modular synthesiser preset described in the text.
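The two timbre knobs could be mapped roughly as follows. Both the equal-power crossfade for Knob 2 and the FM ranges for Knob 3 are assumptions for illustration; the actual curves and ranges of the preset are not specified here.

```python
import math


def crossfade(knob2: float) -> tuple:
    """Equal-power gains for main oscillators A and B (assumed curve)."""
    x = min(max(knob2, 0.0), 1.0)
    return math.cos(x * math.pi / 2), math.sin(x * math.pi / 2)


def fm_settings(knob3: float, max_index: float = 8.0,
                mod_lo_hz: float = 50.0, mod_hi_hz: float = 800.0) -> dict:
    """Knob 3 deepens FM and sweeps one modulator's frequency (assumed ranges)."""
    x = min(max(knob3, 0.0), 1.0)
    return {"fm_index": x * max_index,
            "modulator_hz": mod_lo_hz * (mod_hi_hz / mod_lo_hz) ** x}


gain_a, gain_b = crossfade(0.5)  # both voices audible at equal level
print(round(gain_a, 3), round(gain_b, 3))  # 0.707 0.707
print(fm_settings(0.0))  # {'fm_index': 0.0, 'modulator_hz': 50.0}
```

An equal-power curve keeps the summed loudness roughly constant while the knob moves from one voice to two, which suits a control that performers think of as "number of voices" rather than as a balance.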

5.1.4. Suitable notation

When performing on the Micro Modular in traditionally notated ensemble works, I have used a simple, straightforward notation of knob positions and changes alongside the traditional score notation, combined with numbered preset changes (see Figure 6). This was also the case in an orchestral work, 2 to 2 (Magnusson 2004), by the Swedish composer Per Magnusson, where a combination of predefined presets and assigned knob positions and value changes gave Magnusson total control over the two Micro Modular parts in relation to the orchestra.

Example of simple notation of parameter and preset changes.

In my own live-electronic performance practice, the notation has usually been more of a structural overview of a semi-improvised performance, relying mainly on a progression of synth preset changes rather than on detailed descriptions of knob movements (see Figure 7). The paper describes the instruments used, their interconnections and when they are to be used, as well as which presets to change to in order to move forward in the music. There are also some rudimentary indicators of musical progression in the form of lines and arrows, mainly indicating the extension or introduction of musical elements. In line with Thor Magnusson’s reasoning (Magnusson 2019), one could argue that in this case the signal connections and the presets and samples used are the actual score: instrument (or system) design and composition are one and the same.

Live-electronic performance score from a live performance by the author at Fylkingen, Stockholm in 2007.

5.1.5. Examples of the instrument used effectively

I would argue that, with regard to its possibilities for music and musicking, this instrument is used most effectively as an expressive and versatile foreground element in music compatible with its preset scales. I have used versions of it in various performances because it makes it simple to form varied gestures in a harmonic context. Two variations of this instrument can be heard on the final track of the album Taroom (Sköld 2008), a collection of pieces developed as live performances. Another version of the instrument can be heard in an acoustic ensemble setting on The Lighthouse by Anagram (2007), written by Anna Einarsson.

5.2. Student instrument descriptions

5.2.1. Introduction

As previously mentioned, an incentive for this research is the extensive practice of live-electronic music that has developed over the past 20 years at my institution. As part of this research, I formulated a short workshop study that my colleague Mattias Petersson and I conducted with students at the Royal College of Music in Stockholm (KMH) within the framework of his course Live-Electronics Ensemble (KMH 2019) in October 2024.

The workshop and the assignment the students made in preparation for it were meant as an indirect exploration of the notation possibilities for live-electronic instruments. The students were asked to describe their instruments and the necessary steps for making (meaningful) sounds with them. Potentially, their assignments could contain all the necessary information to compose and notate music for their instruments (based on the instrument setups they chose to describe). They were instructed not to design notation since I wanted to start from the instruments, their interfaces and performance practices as described by their practitioners.

5.2.2. Participants

Eight students gave their consent to participate in the study. Their average age was 39.13 years (SD = 7.79 years). The students were enrolled in the bachelor’s programmes in electroacoustic music composition and music production at KMH, and all were experienced music creators.

5.2.3. Assignment

Prior to the workshop session, the participants were sent this assignment to prepare for the workshop (author’s translation from Swedish):

Make, each individually, a pedagogical beginner’s instruction on an A4 paper/pdf with text and image describing how to play on one or more instances of your instrument with a given configuration. Patch/status changes are ok, but the musician should not need to patch or re-programme, but ‘just play’.

The instruction should clearly state which controls/approaches to use and their sonic impact/result so that someone else can compose for the instrument with a predictable musical result. NOTE! No instructions for the instrument’s notation should be included.

Note:

- (a) things you need to keep in mind when playing
- (b) how predictably the instrument behaves in relation to a desired result
- (c) whether there are playing modes and playing styles where the instrument comes into its own
- (d) whether there are playing modes and playing styles that do not work as well on the instrument

Remember that the task is not necessarily about all the playing and sound possibilities of the instrument but about one instance (or possibly a selection of specific instances) of the instrument.

5.2.4. Method

The students were introduced to the assignment two weeks in advance, and the completed assignments were handed in on paper and/or as PDF files in time for the workshop. The basic data for this study are the eight assignment documents. To analyse them, I used a basic form of qualitative text analysis with an open coding approach, using text colour and descriptive code tags to highlight categories of information in the texts and later placing texts from the same categories side by side for comparison. Even though the texts were responses to explicit instructions, they were structured differently and emphasised different aspects of the instruments.

5.2.5. Results

The instruments used by the participants were three different semi-modular desktop synthesisers, one modular synthesiser, one SuperCollider synthesis design with a control interface, and three setups combining acoustic or pre-recorded sound sources with electronic devices (EBows) or effect pedals.

In terms of sound-generating principles and architecture, the eight participants provided straightforward descriptions of the objects, devices and components in their setups, such as the sound generators of the modular, semi-modular and software synths, and the effect pedals used with acoustic and pre-recorded sound sources.

Seven of the eight participants described some form of initial instrument setup or ‘make-a-sound’ instruction as a starting point. These varied with the type of instrument. For the synthesisers, they concerned connectivity, synthesiser settings and the choice of modules. For the acoustic sound sources, they combined proposed initial sounds to produce with suggested techniques for capturing and/or interacting with the sound. Three participants provided step-by-step guides to getting started with their instruments.

Because of the nature of the assignment, the largest category of text was about achieving specific-sounding results from the instruments. These insights were related to the participants’ own playing practice and were to a great degree concerned with adjusting the volume, timbre and complexity of a continuous sound. The instructions described which knobs to turn and which parameters to change, though a composer would still need to try the instrument to understand the nature of these timbre changes well enough to compose with them.

Five of the participants encouraged testing or experimenting in order to gain an understanding of their instruments, one suggesting that experimentation is, to some extent, the purpose of the instrument, and another suggesting that one should let go of control in favour of exploration. Half of the participants mentioned unpredictable aspects of their instruments’ response in certain situations, e.g. when working with feedback.

Three of the participants also urged caution when interacting with their instruments, e.g. because certain parameter changes may have a greater impact on the sound than expected, or because feedback can get out of hand. One participant recommended relying on small parameter changes to avoid overly drastic responses from the instrument.

One participant included further playing instructions as a sequence of performance actions, making for a kind of text score for the instrument.

5.2.6. The workshop

Not all of the eight participants could join the subsequent workshop, which was conducted together with Mattias Petersson, who was responsible for the ensemble course. It was soon clear that our plan for the ensemble session was far too ambitious for the two hours we had with the ensemble. My original intention was for the students to use the above-described assignments as the basis for making live-electronic ensemble scores for their fellow students to perform on instruments other than their own.

First, we had everyone present their instrument setups to the group, and after each presentation, another student was given the opportunity to try the instrument.

We then split the students into three ensemble groups and asked each group to write for one of the other two ensembles. With the limited time available, however, it was not realistic to expect scores with elaborate notation prescribing playing techniques and parameter changes to achieve more intricate, predictable results. The resulting scores instead relied on broad, sweeping changes of intensity, which the students managed to perform on instruments other than their own with some success.

5.2.7. Assignment and workshop reflection regarding the notation of DMIs

It is easy to see that the case study did not get as far in exploring notation as planned. At the same time, the study reveals aspects of the participants’ DMIs that explain why notation can be complicated for the type of ensemble our students form. In the assignments, I see descriptions of several instruments that function as tools for compositional practice and explorative improvisation to a larger extent than as tools for interpreting pre-composed musical works; several participants emphasised experimentation and exploration, in some cases connected to a certain level of unpredictability. With this in mind, a straightforward notation for their instrument practice could be something similar to my own example in Figure 7, i.e. scores detailing the instruments used, their interconnectivity, initial setup requirements, and possibly some preset/state changes at strategic points in time during a performance. One could also specify which parameters to change or what musical direction to aim for (e.g. greater complexity, decreasing intensity). The actual turning of knobs and faders would be acts of explorative improvisation within the confines of the pre-patched/stored setups.
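The kind of score outlined above, with setup information first and only sparse preset/state changes and directions along the timeline, can be sketched as a simple data structure. The Python below is a hypothetical illustration only: the class names, fields, performers, connections and preset names are invented for this sketch and do not describe the score in Figure 7 or any existing notation software.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Cue:
    """A timed instruction for one performer: a preset/state change and/or a direction."""
    time_s: float                    # position on the timeline, in seconds
    performer: str
    preset: Optional[str] = None     # hypothetical stored state to recall at this point
    direction: Optional[str] = None  # e.g. "greater complexity", "decreasing intensity"


@dataclass
class StateScore:
    """Hypothetical score format: setup first, then sparse timed cues."""
    instruments: dict[str, str]         # performer -> instrument description
    connections: list[tuple[str, str]]  # (source, destination) patch connections
    initial_presets: dict[str, str]     # performer -> state at time 0
    cues: list[Cue] = field(default_factory=list)

    def state_at(self, performer: str, t: float) -> tuple[str, Optional[str]]:
        """Return (active preset, latest direction) for a performer at time t."""
        preset = self.initial_presets[performer]
        direction: Optional[str] = None
        # Walk the cues in time order; later cues override earlier ones.
        for cue in sorted(self.cues, key=lambda c: c.time_s):
            if cue.performer != performer or cue.time_s > t:
                continue
            if cue.preset is not None:
                preset = cue.preset
            if cue.direction is not None:
                direction = cue.direction
        # Everything between cues is left to explorative improvisation.
        return preset, direction


# Illustrative score with two hypothetical performers and made-up preset names.
score = StateScore(
    instruments={"A": "semi-modular desktop synth", "B": "guitar with EBow and pedals"},
    connections=[("A.out", "mixer.1"), ("B.pedals.out", "mixer.2")],
    initial_presets={"A": "drone-1", "B": "ebow-low"},
    cues=[
        Cue(60.0, "A", preset="pulse-2"),
        Cue(90.0, "A", direction="greater complexity"),
        Cue(120.0, "B", direction="decreasing intensity"),
    ],
)

print(score.state_at("A", 100.0))  # ('pulse-2', 'greater complexity')
```

In this sketch, the timeline only records what changes; what happens between cues is deliberately left unspecified, mirroring the idea of improvisation within pre-patched/stored setups.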

6. Discussion

While my research on sound notation shows that it is indeed possible to notate timbre structures (Sköld Reference Sköld2022b), I realise that creators of live-electronic music are not necessarily interested in structure first and interpretation later; that is not necessarily how our instruments work. Thus, a monophonic theremin-style instrument, like the Micro Modular preset described above, may not be representative of the composer/performer’s DMI setups, since it lacks the unpredictability and spirit of exploration that come with more versatile instrument setups. I have worked with more complex instruments too; in the performance detailed in Figure 7, I used a Dave Smith Instruments EvolverFootnote 10, an analogue and digital semi-modular desktop synthesiser that shares more traits with some of the instruments described in the student assignments. However, I never found it suitable for gestural performance; it is first and foremost a rhythmic and textural instrument. As one of the case study participants recommended, I would commonly prepare which presets to use beforehand and make relatively small parameter changes during performance, as exemplified in Section 5.1.4.

Two aspects make the Micro Modular preset more compatible with acoustic instruments: (1) its control is built on morph groups, which allow simultaneous control of several parameters (similar to how gestural changes in acoustic instrument performance affect several parameters at once), and (2) it relies on gestural rather than textural (or rhythmic) performance. This distinction affects notation. For textural or rhythmic sounds, one typically relies on relatively small changes to a continuous sound flow. To re-create such musical works, one would need detailed notation of connectivity and parameter values, with only the changes specified along the timeline, since the initial settings of low-frequency oscillators, sequencers, etc. may determine the development of the music as much as, if not more than, the continuous control actions. For gestural control, the focus would instead be on the continuous actions performed along the timeline and their results, as in scratch notation. This can be related to Magnusson’s observation that today’s musicologists may need to study the architectures of digital systems for performance rather than the sound output (Magnusson Reference Magnusson2019). One could argue that many of the important musical ideas in a live performance by Caterina Barbieri (Reference Barbieri2015) were defined in the setup of her instruments, including the sequencer programming.
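To make point (1) more concrete, the following minimal Python sketch shows how a single control value can drive several parameters at once. It is a simplified linear model assumed purely for illustration, not a description of how the Micro Modular actually implements morph groups; the parameter names and value ranges are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MorphTarget:
    """One parameter assigned to a morph group (simplified, hypothetical model)."""
    name: str
    start: float  # parameter value with the morph control at 0.0
    end: float    # parameter value with the morph control at 1.0


def apply_morph(targets: list[MorphTarget], control: float) -> dict[str, float]:
    """Map one normalised control value to several parameter values at once."""
    c = min(max(control, 0.0), 1.0)  # clamp the gesture to the 0.0-1.0 range
    # Linear interpolation per parameter, so a single movement changes all of them together.
    return {t.name: t.start + (t.end - t.start) * c for t in targets}


# Hypothetical group: one gesture brightens, swells and adds vibrato simultaneously.
group = [
    MorphTarget("filter_cutoff_hz", 200.0, 4000.0),
    MorphTarget("amp_level", 0.2, 1.0),
    MorphTarget("vibrato_depth", 0.0, 0.5),
]
print(apply_morph(group, 0.5))
```

Because one movement affects several parameters together, notating the gesture over time (rather than each parameter separately) captures most of what matters, which is the kind of gestural control the paragraph contrasts with textural setups.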

When discussing composition and notation for DMIs, there seem to be two main categories of practice to consider. The first is the growing body of work for acoustic ensembles and orchestras involving different forms of live-electronic elements, where the performance situation demands compatibility with traditional music performance. Most instruments presented here, however, belong to the second category, in which the instruments and their practices were not originally developed for recreating someone’s written musical structures. For an ensemble like KMH’s Live-Electronics Ensemble, the full potential of the ensemble lies in the individual performers’ knowledge of their instruments and their capacity to explore and find the musical elements in their instruments as required by performance instructions, which typically leave the performers some freedom. The case study showed that the performers could easily define starting points for their instrument setups and where to go musically from there; in other words, states/presets and their performance options. However, the nature of these states varied with each participant and instrument, making it difficult to define a shared notation system directly related to their playing practices.

So, how can such an ensemble come together to perform musical works? One solution is to set aside the performers’ own instruments, include the selection/development of instruments in the composition process, and have everyone play instruments specific to the composition, as in some of John Richards’s work with DIY instruments (Richards Reference Richards2008), or Paula Matthusen’s Lathyrus for laptop ensemble with dedicated software on each performer’s laptop (Matthusen Reference Matthusen2009). There is, however, a third option: to work with musical structures that leave enough space for exploration while maintaining some organising principles beyond basic intensity curves and instructions on when to play. In Jeff Snyder’s Opposite Earth (Snyder Reference Snyder2016), a conductor controls ‘planets’ in orbit that act as rhythmic instructions for individual performers, according to a pre-defined table of pitch possibilities and timbre options. Because this work relies on shared musical values, the ensemble can be a mixture of acoustic and electronic sound sources.

Moving away from instrument-specific notation, graphic notation has proven effective for more open approaches to ensemble composition. Here, Haubenstock-Ramati’s use of anchoring symbols in Alone 1, as described by Fogel (Reference Fogel2023, p.58), can be a useful concept in relation to exploratory DMI musicking that relies on presets/states and their performance options. The anchoring symbols in Alone 1 (large alphabetic characters) could indicate the preset/state changes, while the multitude of smaller symbols could inform the design of the DMI behaviour related to continuous performance.

7. Conclusion

While DMIs that function and sound similarly to acoustic instruments are relatively easy to integrate with traditional ideas of notation and performance, integration can be more challenging for complex, explorative DMI setups. For these, it seems that connectivity, patch charts and initial parameter settings are just as important for the identity of musical structures as the sequential value changes along a timeline. Perhaps one problem with having musical notation capture the most significant output of a complex DMI setup is that traditional notation practice is concerned with structural changes of basic musical parameters, leaving the multi-dimensional parameter of timbre somewhat aside.

However, it would be a mistake to conclude that these DMIs belong to a realm beyond the reach of music theory and notational parameters. Different instruments, acoustic and electronic, may have their own unique sounds, but this does not stop the many different violins from sharing the same notational language. The aim of musical notation is never to describe sounds in such detail that we can tell one synth manufacturer’s sawtooth from another.

Challenges with defining and prescribing notation for many electronic instruments remain. But by continuing to ask questions and reflect on the practice that has emerged within the framework of live-electronic ensemble music, we can find interesting new possibilities for composing for DMIs in ways that stay true to the nature of the instruments.

Acknowledgements

I thank Mattias Petersson, Senior Lecturer at the Royal College of Music in Stockholm, for his cooperation in leading the workshop with the live-electronics ensemble.