1. Introduction

Subjective probability estimates provided by human experts are important in many different domains, among them business, finance, marketing, politics, engineering, meteorology, environmental science, and public health (McAndrew et al., Reference McAndrew, Wattanachit, Gibson and Reich2021). In contrast to machine learning algorithms, they provide knowledge based on human intuition and experience without access to large data sets (McAndrew et al., Reference McAndrew, Wattanachit, Gibson and Reich2021). In this way, they can steer decisions and facilitate planning and dealing with risks. The subjective probability estimates can be either predictions for future events (Graefe, Reference Graefe2018; Turner et al., Reference Turner, Steyvers, Merkle, Budescu and Wallsten2014), for example, election outcomes or weather phenomena, a quantification of an expert’s belief in the truth of a statement (Karvetski et al., Reference Karvetski, Olson, Mandel and Twardy2013; Prelec et al., Reference Prelec, Seung and McCoy2017), or any other binary classifications. In the following, we will refer to these probability estimates as forecasts. Accordingly, the human subjects providing them will be termed forecasters. The subject of the forecast, for example, an event to be predicted or a statement to be judged, will be referred to as a query.

If multiple forecasts from different forecasters are available for the query at hand, combining these individual forecasts usually increases performance (Bennett et al., Reference Bennett, Benjamin, Mistry and Steyvers2018; Budescu and Chen, Reference Budescu and Chen2015; McAndrew et al., Reference McAndrew, Wattanachit, Gibson and Reich2021; Satopää, Reference Satopää2022; Satopää et al., Reference Satopää, Salikhov, Tetlock and Mellers2023; Turner et al., Reference Turner, Steyvers, Merkle, Budescu and Wallsten2014), known as the wisdom-of-the-crowds effect (Surowiecki, Reference Surowiecki2005). The combination of forecasts can be realized with behavioral or mathematical combination methods. For behavioral forecast aggregation, the forecasters negotiate a consensus in a social process (Minson et al., Reference Minson, Mueller and Larrick2018; Silver et al., Reference Silver, Mellers and Tetlock2021). In contrast, mathematical aggregation methods use mathematical fusion rules or models to combine the provided individual forecasts to an aggregated forecast (Clemen and Winkler, Reference Clemen and Winkler1999; Hanea et al., Reference Hanea, Wilkinson, McBride, Lyon, van Ravenzwaaij, Singleton Thorn, Gray, Mandel, Willcox and Gould2021; Wilson, Reference Wilson2017).

There is a wide range of different possible mathematical fusion rules for combining forecasts, which can result in different performances of the fused forecast (Satopää et al., Reference Satopää, Pemantle and Ungar2016). Many of them assume the individual forecasters to be independent (Wilson, Reference Wilson2017) or at least do not explicitly consider a possible correlation between the provided probability estimates. Popular examples of such methods are linear opinion pools, i.e., unweighted or weighted averages. While unweighted linear opinion pools, i.e., standard averages, can already achieve solid performance (Clemen, Reference Clemen1989; Turner et al., Reference Turner, Steyvers, Merkle, Budescu and Wallsten2014), weighted linear opinion pools try to increase fusion performance by giving each forecaster a different weight. The weights can be determined by the forecasters’ individual performance (Cooke, Reference Cooke1991; Hanea et al., Reference Hanea, Wilkinson, McBride, Lyon, van Ravenzwaaij, Singleton Thorn, Gray, Mandel, Willcox and Gould2021), their forecasts’ coherence (Karvetski et al., Reference Karvetski, Olson, Mandel and Twardy2013), or the number of cues available to them (Budescu and Rantilla, Reference Budescu and Rantilla2000), or can be optimized for maximum performance (Ranjan and Gneiting, Reference Ranjan and Gneiting2010). Because linear opinion pools can be over- or underconfident, trimmed (Grushka-Cockayne et al., Reference Grushka-Cockayne, Jose and Lichtendahl2017) and extremized (Baron et al., Reference Baron, Mellers, Tetlock, Stone and Ungar2014) linear opinion pools have also been proposed. Besides linear opinion pools, there are also multiplicative opinion pools, which combine forecasts using a product instead of a sum. Examples are the Independent Opinion Pool (Berger, Reference Berger1985), which explicitly assumes independent forecasts and multiplies the individual forecasts, or geometric (logarithmic) pooling (Berger, Reference Berger1985; Dietrich and List, Reference Dietrich, List, Hájek and Hitchcock2016), which is a weighted product of the forecasts. Linear as well as multiplicative opinion pools can also be used with transformations of the probability forecasts. Examples are the probit average (Satopää et al., Reference Satopää, Salikhov, Tetlock and Mellers2023), which avoids a bias of the simple average toward 0.5, or a geometric mean of odds (Satopää et al., Reference Satopää, Baron, Foster, Mellers, Tetlock and Ungar2014). In addition to the linear and multiplicative pooling methods listed above, Bayesian models for forecast aggregation have been proposed that do not consider correlations between forecasters or explicitly assume independent forecasters (Hanea et al., Reference Hanea, Wilkinson, McBride, Lyon, van Ravenzwaaij, Singleton Thorn, Gray, Mandel, Willcox and Gould2021; Trick et al., Reference Trick, Rothkopf and Jäkel2023; Turner et al., Reference Turner, Steyvers, Merkle, Budescu and Wallsten2014). Hanea et al. (Reference Hanea, Wilkinson, McBride, Lyon, van Ravenzwaaij, Singleton Thorn, Gray, Mandel, Willcox and Gould2021) propose an unsupervised Bayesian model that does not rely on historical seed queries to learn the forecasters’ behavior. In contrast, Turner et al. (Reference Turner, Steyvers, Merkle, Budescu and Wallsten2014) introduce a family of different supervised Bayesian models, which either first calibrate the forecasts and then fuse them using an unweighted linear opinion pool or vice versa. Finally, Trick et al. (Reference Trick, Rothkopf and Jäkel2023) explicitly assume independent forecasts and model them with beta distributions conditioned on the truth value.

However, human forecasters are usually correlated (Berger, Reference Berger1985; Hogarth, Reference Hogarth1978; Lichtendahl et al., Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022; Wilson and Farrow, Reference Wilson, Farrow, Dias, Morton and Quigley2018; Winkler et al., Reference Winkler, Grushka-Cockayne, Lichtendahl and Jose2019; Wiper and French, Reference Wiper and French1995). The correlation between forecasters can be attributed to similar data seen (Morris, Reference Morris1986; Winkler, Reference Winkler1981; Winkler et al., Reference Winkler, Grushka-Cockayne, Lichtendahl and Jose2019), similar training (Lichtendahl et al., Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022; Winkler, Reference Winkler1981; Winkler et al., Reference Winkler, Grushka-Cockayne, Lichtendahl and Jose2019), and/or similar methodology, such as statistical procedures as an aid for forecasting (Lichtendahl et al., Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022; Morris, Reference Morris1986; Winkler, Reference Winkler1981; Winkler et al., Reference Winkler, Grushka-Cockayne, Lichtendahl and Jose2019).

If the provided forecasts are correlated but the used fusion method assumes independence, the fused forecast might be overconfident, because the amount of unique information is overestimated and thus uncertainty is reduced too much (Trick et al., Reference Trick, Rothkopf and Jäkel2023; Trick and Rothkopf, Reference Trick, Rothkopf, Camps-Valls, Ruiz and Valera2022; Wilson, Reference Wilson2017). Therefore, forecast aggregation methods should explicitly consider the forecasters’ correlation to avoid overconfidence and improve the fused forecast’s performance (Wilson, Reference Wilson2017). In fact, considering the correlation of forecasters has been declared as one of the major challenges in forecast aggregation according to the review by McAndrew et al. (Reference McAndrew, Wattanachit, Gibson and Reich2021).

There are already several approaches that explicitly propose fusion methods for correlated forecasts. Budescu and Chen (Reference Budescu and Chen2015) introduced a weighted linear opinion pool with higher weights for forecasters that are less correlated to the other forecasters. Other approaches explicitly infer the amount of shared information underlying correlated forecasts by asking the forecasters not only for their own forecast but also for an additional prediction of the other forecasters’ average forecast (Palley and Satopää, Reference Palley and Satopää2023; Palley and Soll, Reference Palley and Soll2019; Peker, Reference Peker2023; Rilling, Reference Rilling2025). Satopää (Reference Satopää2022) proposes a Bayesian model for combining correlated forecasts without additional predictions of other forecasters’ forecasts. However, their model is unsupervised, so they cannot learn the individual behavior of the forecasters, including their correlation, from historical seed queries. In contrast, the model by Babic et al. (Reference Babic, Gaba, Tsetlin and Winkler2022) relies on historical data but assumes the complete evidence that caused the forecasts to be known, which includes the amount of shared information that caused the correlation between the forecasters.

Since the concrete evidence underlying the provided forecasts is difficult to come by in practice, some Bayesian approaches model the unknown information that caused the forecasts as latent variables (Di Bacco et al., Reference Di Bacco, Frederic and Lad2003; Lichtendahl et al., Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022; Satopää et al., Reference Satopää, Pemantle and Ungar2016). Di Bacco et al. (Reference Di Bacco, Frederic and Lad2003) consider two forecasters that provide their probabilistic forecasts for an event H. Their model assumes some knowledge that both forecasters share and some knowledge that only specific forecasters have, represented as events F,

![]() $G_1$

, and

$G_1$

, and

![]() $G_2$

. The provided forecasts are transformed to odds and modeled with a log-normal distribution, jointly with the ratios of the posterior distributions of H given F, H given

$G_2$

. The provided forecasts are transformed to odds and modeled with a log-normal distribution, jointly with the ratios of the posterior distributions of H given F, H given

![]() $G_1$

, and H given

$G_1$

, and H given

![]() $G_2$

. However, their approach is rather theoretical. It remains unclear how to get the model parameters from prior experience. Satopää et al. (Reference Satopää, Pemantle and Ungar2016) propose a partial information framework for the aggregation of K forecasters. They model the information underlying the forecasts as particles of information, either positive or negative. If the sum of all particles

$G_2$

. However, their approach is rather theoretical. It remains unclear how to get the model parameters from prior experience. Satopää et al. (Reference Satopää, Pemantle and Ungar2016) propose a partial information framework for the aggregation of K forecasters. They model the information underlying the forecasts as particles of information, either positive or negative. If the sum of all particles

![]() $X_S$

is positive, the event happens. Each forecaster observes some particle subset

$X_S$

is positive, the event happens. Each forecaster observes some particle subset

![]() $B_i$

, and the subsets of different forecasters can overlap, which generates a correlation between their forecasts. The sums of particles

$B_i$

, and the subsets of different forecasters can overlap, which generates a correlation between their forecasts. The sums of particles

![]() $X_S, X_{B_1}, \dots , X_{B_K}$

are modeled with a multivariate normal distribution with mean 0 and covariances equal to the number of shared particles in different subsets. The sum of particles

$X_S, X_{B_1}, \dots , X_{B_K}$

are modeled with a multivariate normal distribution with mean 0 and covariances equal to the number of shared particles in different subsets. The sum of particles

![]() $X_{B_i}$

is transformed to a probability forecast with probit transformation. With their model, Satopää et al. (Reference Satopää, Pemantle and Ungar2016) show when averages of forecasts should be extremized, i.e., shifted toward 0 or 1, and how this is dependent on how much information the forecasters share. With a similar goal, Lichtendahl et al. (Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022) also model the information causing the forecasts as information particles

$X_{B_i}$

is transformed to a probability forecast with probit transformation. With their model, Satopää et al. (Reference Satopää, Pemantle and Ungar2016) show when averages of forecasts should be extremized, i.e., shifted toward 0 or 1, and how this is dependent on how much information the forecasters share. With a similar goal, Lichtendahl et al. (Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022) also model the information causing the forecasts as information particles

![]() $x_i$

. Some information particles are private for individual forecasters, some are shared between all forecasters. All information particles

$x_i$

. Some information particles are private for individual forecasters, some are shared between all forecasters. All information particles

![]() $x_i$

as well as the target variable

$x_i$

as well as the target variable

![]() $x_t$

, whose value determines the truth value t, are distributed under the same distribution with a conjugate prior on its parameters. By applying Bayes’ rule, the posterior probability of the target variable

$x_t$

, whose value determines the truth value t, are distributed under the same distribution with a conjugate prior on its parameters. By applying Bayes’ rule, the posterior probability of the target variable

![]() $x_t$

is computed as the fusion result given some sufficient statistics of observed information particles

$x_t$

is computed as the fusion result given some sufficient statistics of observed information particles

![]() $x_i$

, which are in turn computed from the observed probabilistic forecasts provided by the forecasters. The unknown sufficient statistics from the shared information particles are integrated out. Two specific conjugate models are discussed, Beta-Bernoulli and Normal-Normal. However, to be applicable to real data, the model is simplified: It is assumed that the probability of the truth value t given all provided forecasts is a generalized linear model.

$x_i$

, which are in turn computed from the observed probabilistic forecasts provided by the forecasters. The unknown sufficient statistics from the shared information particles are integrated out. Two specific conjugate models are discussed, Beta-Bernoulli and Normal-Normal. However, to be applicable to real data, the model is simplified: It is assumed that the probability of the truth value t given all provided forecasts is a generalized linear model.

In contrast to the models presented above, which explicitly model the latent information causing the provided forecasts, other Bayesian models only model the provided probability forecasts as data (Bordley, Reference Bordley1982; Clemen and Winkler, Reference Clemen, Winkler and Viertl1987; French, Reference French1980). French (Reference French1980) as well as Clemen and Winkler (Reference Clemen, Winkler and Viertl1987) transfer the probability estimates to log-odds and model them with a multivariate Gaussian distribution conditioned on the truth value t. With their model and some historical training data, they can learn how individual forecasters behave for true and false queries, i.e., their bias, variance, and uncertainty. Also, using the Gaussian distribution, they can model pairwise correlation between individual forecasters. Bordley (Reference Bordley1982) uses the same multivariate Gaussian model approach in order to derive the weights for a multiplicative fusion rule considering the correlation of forecasters.

While transforming the probability forecasts to log-odds and modeling them with a multivariate Gaussian distribution is mathematically convenient and enables straightforward representation of correlations between forecasts, another possibility is to model them directly with a beta distribution without any transformation. The beta distribution is commonly used to model probabilities since it is the standard distribution over probabilities in Bayesian statistics and the conjugate prior of the Bernoulli distribution. Accordingly, in prior work, we modeled probabilistic forecasts with a beta distribution conditioned on the truth value t (Trick et al., Reference Trick, Rothkopf and Jäkel2023) and used this model for normative aggregation of probability forecasts. However, in that model, we assumed the forecasts provided by different forecasters to be conditionally independent given the truth value t. This assumption, which usually does not hold in reality, caused our beta fusion model to be overconfident on two real-world data sets. Thus, for correlated forecasts, this model is not normative. It does not formalize how to obtain the correct fused uncertainty.

Therefore, in the present work, we introduce a Bayesian model for combining probabilistic forecasts that considers the correlation between forecasts. The new model also represents the forecasts with a beta distribution conditioned on the truth value t. However, in our model, we additionally assume that the forecast provided by a forecaster for a query depends on an interplay of the forecaster’s skill and the query’s difficulty. Moreover, we assume that the correlation between forecasts provided by different forecasters can be attributed to the fact that they answer to the same queries since for all forecasters some queries are easy and others are hard. The resulting Skill–Difficulty Correlated Fusion Model explicitly models the forecasters’ skills and the queries’ difficulties and thereby implicitly models the correlation between forecasts, which can then be considered for fusion. Fusing forecasts according to the Skill–Difficulty Correlated Fusion Model is normative, given that the model assumptions are correct. In particular, correlated forecasts can be fused normatively.

The remainder of the article is structured as follows. Section 2 introduces our new Skill–Difficulty Correlated Fusion Model, including its generative model and a discussion of its parameters, a reparameterization in favor of interpretability and inference, the correlations that can be represented, as well as parameter inference and fusion. In Section 3, we present our evaluations of the model, including a detailed description of the used data set and cross-validation, an evaluation of how the estimated model parameters can be interpreted and how our model fits the data, and a comparison of the Skill–Difficulty Correlated Fusion Model’s fusion performance to related Bayesian models. Finally, we discuss our results and outline conclusions, limitations, and ideas for future work in Section 4.

2. The Skill–Difficulty Correlated Fusion Model

We propose the Skill–Difficulty Correlated Fusion Model, a Bayesian generative model for combining probabilistic forecasts provided by human forecasters, which explicitly models the forecasters’ skills and the queries’ difficulties in order to implicitly model the correlation between forecasts. After introducing the generative model in Section 2.1, we discuss the model parameters’ interpretation in Section 2.2. In Section 2.3, we introduce a reparameterization of the model in favor of parameter interpretability and robustness of inference and discuss the correlations that can be represented using the model in Section 2.4. In Section 2.5, we outline parameter inference and fusion with the model.

2.1. Generative model

We assume K human forecasters to provide subjective probability estimates, i.e., forecasts, on N binary queries. The queries can be factual statements that are either true or false, questions that can be answered with yes or no, or any other binary classification task with a truth value of either 1 or 0. For each query, the probability estimates provided by the forecasters quantify their belief in their answer’s correctness. A probability of 0 indicates that the forecaster is completely certain that the query’s truth value is 0 (e.g., false/no), whereas a probability of 1 means that the forecaster assumes a truth value of 1 (e.g., true/yes) with full certainty.

We formalize the forecast provided by forecaster k for query n as

![]() $x_n^k \in [0,1]$

with

$x_n^k \in [0,1]$

with

![]() $n = 1, \dots , N$

,

$n = 1, \dots , N$

,

![]() $k = 1, \dots , K$

. The truth value of query n is formalized as

$k = 1, \dots , K$

. The truth value of query n is formalized as

![]() $t_n \in \{0,1\}$

for

$t_n \in \{0,1\}$

for

![]() $n = 1, \dots , N$

. Since the beta distribution is the natural choice for modeling probabilities, a previous model (Trick et al., Reference Trick, Rothkopf and Jäkel2023) assumes the forecasts

$n = 1, \dots , N$

. Since the beta distribution is the natural choice for modeling probabilities, a previous model (Trick et al., Reference Trick, Rothkopf and Jäkel2023) assumes the forecasts

![]() $x_n^k$

to be beta-distributed conditioned on the truth value

$x_n^k$

to be beta-distributed conditioned on the truth value

![]() $t_n \in \{0,1\}$

, as

$t_n \in \{0,1\}$

, as

After learning the parameters

![]() $\alpha _j^k$

and

$\alpha _j^k$

and

![]() $\beta _j^k$

from labeled training data, this model represents the forecasting behavior of each forecaster conditioned on the truth value

$\beta _j^k$

from labeled training data, this model represents the forecasting behavior of each forecaster conditioned on the truth value

![]() $t_n$

, including their bias, uncertainty, and variance. In particular, forecaster k’s bias can be expressed with the beta distribution’s mean

$t_n$

, including their bias, uncertainty, and variance. In particular, forecaster k’s bias can be expressed with the beta distribution’s mean

![]() $\mu _j^k=\alpha _j^k/(\alpha _j^k+\beta _j^k)$

. If

$\mu _j^k=\alpha _j^k/(\alpha _j^k+\beta _j^k)$

. If

![]() $\mu _0^k<0.5$

and

$\mu _0^k<0.5$

and

![]() $\mu _1^k>0.5$

, we define forecaster k to be unbiased since they on average provide correct forecasts. The mean forecast

$\mu _1^k>0.5$

, we define forecaster k to be unbiased since they on average provide correct forecasts. The mean forecast

![]() $\mu _j^k$

can also quantify the uncertainty of forecaster k: The closer it is to 0 or 1, the less uncertain the forecaster is on average. However, the uncertainty of the actual forecasts provided by forecaster k is also dependent on their variance, which determines their forecasts’ concentration around the mean

$\mu _j^k$

can also quantify the uncertainty of forecaster k: The closer it is to 0 or 1, the less uncertain the forecaster is on average. However, the uncertainty of the actual forecasts provided by forecaster k is also dependent on their variance, which determines their forecasts’ concentration around the mean

![]() $\mu _j^k$

. This variance can be expressed with the modeling beta distribution’s precision

$\mu _j^k$

. This variance can be expressed with the modeling beta distribution’s precision

![]() $p_j^k=\alpha _j^k + \beta _j^k$

, also known as its concentration parameter (Huang, Reference Huang2005). The higher this precision is, the lower is the beta distribution’s variance. Still, note that it is not the inverse of the distribution’s variance. While explicitly considering the learned behavior of the forecasts, i.e., their bias, uncertainty, and variance, new forecasts

$p_j^k=\alpha _j^k + \beta _j^k$

, also known as its concentration parameter (Huang, Reference Huang2005). The higher this precision is, the lower is the beta distribution’s variance. Still, note that it is not the inverse of the distribution’s variance. While explicitly considering the learned behavior of the forecasts, i.e., their bias, uncertainty, and variance, new forecasts

![]() $x_u^k$

for a previously unseen query u can be fused by inferring the truth value

$x_u^k$

for a previously unseen query u can be fused by inferring the truth value

![]() $t_u$

given the forecasts

$t_u$

given the forecasts

![]() $x_u^k$

and the learned parameters

$x_u^k$

and the learned parameters

![]() $\alpha _j^k$

and

$\alpha _j^k$

and

![]() $\beta _j^k$

using Bayes’ rule. Note that by modeling bias, variance, and uncertainty with a beta distribution conditioned on the truth value

$\beta _j^k$

using Bayes’ rule. Note that by modeling bias, variance, and uncertainty with a beta distribution conditioned on the truth value

![]() $t_n$

, the forecasters are also implicitly calibrated when they are fused. This means that they are corrected for over- or underconfident forecasts, which do not match the relative frequency of occurrence, for example, of a predicted event or a judged true statement (Trick et al., Reference Trick, Rothkopf and Jäkel2023).

$t_n$

, the forecasters are also implicitly calibrated when they are fused. This means that they are corrected for over- or underconfident forecasts, which do not match the relative frequency of occurrence, for example, of a predicted event or a judged true statement (Trick et al., Reference Trick, Rothkopf and Jäkel2023).

In previous work, four variants of this beta fusion model were compared (Trick et al., Reference Trick, Rothkopf and Jäkel2023). While in (2.1), the forecasts are modeled with two completely different beta distributions for

![]() $t_n=0$

and

$t_n=0$

and

![]() $t_n=1$

, the symmetric variant of the model represents the forecasts with symmetric beta distributions for

$t_n=1$

, the symmetric variant of the model represents the forecasts with symmetric beta distributions for

![]() $t_n=0$

and

$t_n=0$

and

![]() $t_n=1$

, i.e., as

$t_n=1$

, i.e., as

$$ \begin{align} \begin{aligned} x_n^k | t_n = 0 &\sim \text{Beta}(\alpha^k, \beta^k)\\ x_n^k | t_n = 1 &\sim \text{Beta}(\beta^k, \alpha^k). \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} x_n^k | t_n = 0 &\sim \text{Beta}(\alpha^k, \beta^k)\\ x_n^k | t_n = 1 &\sim \text{Beta}(\beta^k, \alpha^k). \end{aligned} \end{align} $$

Thus, it assumes the forecasts provided for queries with truth values

![]() $t_n=0$

and the ones for queries with truth values

$t_n=0$

and the ones for queries with truth values

![]() $t_n=1$

to be symmetric around 0.5. This symmetric beta fusion model is of special interest because it implicitly calibrates the forecasts according to a well-known calibration function, the Linear-in-Log-Odds calibration function (Trick et al., Reference Trick, Rothkopf and Jäkel2023). For both the asymmetric (2.1) and the symmetric (2.2) variant of the model, which model each forecaster k separately with hierarchical models, we also investigated non-hierarchical model variants, which consider the forecasters as exchangeable and only learn one parameter set for all of them.

$t_n=1$

to be symmetric around 0.5. This symmetric beta fusion model is of special interest because it implicitly calibrates the forecasts according to a well-known calibration function, the Linear-in-Log-Odds calibration function (Trick et al., Reference Trick, Rothkopf and Jäkel2023). For both the asymmetric (2.1) and the symmetric (2.2) variant of the model, which model each forecaster k separately with hierarchical models, we also investigated non-hierarchical model variants, which consider the forecasters as exchangeable and only learn one parameter set for all of them.

All variants of the beta fusion model shown above assume the forecasts

![]() $x_n^k$

provided by different forecasters k to be conditionally independent given the truth value

$x_n^k$

provided by different forecasters k to be conditionally independent given the truth value

![]() $t_n$

. However, for real-world forecasting data, this assumption is usually not met (Berger, Reference Berger1985; Hogarth, Reference Hogarth1978; Lichtendahl et al., Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022; Wilson and Farrow, Reference Wilson, Farrow, Dias, Morton and Quigley2018; Winkler et al., Reference Winkler, Grushka-Cockayne, Lichtendahl and Jose2019; Wiper and French, Reference Wiper and French1995). Accordingly, our evaluations on two data sets also showed that the beta fusion models are overconfident, which deteriorates their performance. Therefore, in this work, we extend the beta fusion model to consider correlations between forecasters.

$t_n$

. However, for real-world forecasting data, this assumption is usually not met (Berger, Reference Berger1985; Hogarth, Reference Hogarth1978; Lichtendahl et al., Reference Lichtendahl, Grushka-Cockayne, Jose and Winkler2022; Wilson and Farrow, Reference Wilson, Farrow, Dias, Morton and Quigley2018; Winkler et al., Reference Winkler, Grushka-Cockayne, Lichtendahl and Jose2019; Wiper and French, Reference Wiper and French1995). Accordingly, our evaluations on two data sets also showed that the beta fusion models are overconfident, which deteriorates their performance. Therefore, in this work, we extend the beta fusion model to consider correlations between forecasters.

In line with the previous beta fusion models, in our new Skill–Difficulty Correlated Fusion Model, we also model the forecasts

![]() $x_n^k$

with a beta distribution conditioned on the truth value

$x_n^k$

with a beta distribution conditioned on the truth value

![]() $t_n$

. Likewise, we also assume symmetric beta distributions for modeling forecasts with truth value

$t_n$

. Likewise, we also assume symmetric beta distributions for modeling forecasts with truth value

![]() $t_n=0$

and

$t_n=0$

and

![]() $t_n=1$

as in (2.2), because previous evaluations showed that symmetric beta modeling leads to more robust calibration and higher fusion performance (Trick et al., Reference Trick, Rothkopf and Jäkel2023). However, we do not assume that forecast

$t_n=1$

as in (2.2), because previous evaluations showed that symmetric beta modeling leads to more robust calibration and higher fusion performance (Trick et al., Reference Trick, Rothkopf and Jäkel2023). However, we do not assume that forecast

![]() $x_n^k$

by forecaster k for query n is generated merely by the forecaster’s behavior and can thus be modeled with a beta distribution with parameters

$x_n^k$

by forecaster k for query n is generated merely by the forecaster’s behavior and can thus be modeled with a beta distribution with parameters

![]() $\alpha ^k,\beta ^k$

specific for this forecaster k. Instead, here, we assume that forecast

$\alpha ^k,\beta ^k$

specific for this forecaster k. Instead, here, we assume that forecast

![]() $x_n^k$

by forecaster k for query n is generated by both forecaster k’s properties, which we call their skill, and query n’s properties, which we call its difficulty. Accordingly, we model

$x_n^k$

by forecaster k for query n is generated by both forecaster k’s properties, which we call their skill, and query n’s properties, which we call its difficulty. Accordingly, we model

![]() $x_n^k$

with a beta distribution with parameters

$x_n^k$

with a beta distribution with parameters

![]() $\alpha _k$

and

$\alpha _k$

and

![]() $\beta _k$

, specific for forecaster k, and parameters

$\beta _k$

, specific for forecaster k, and parameters

![]() $\gamma _n$

and

$\gamma _n$

and

![]() $\delta _n$

, specific for query n. In particular, if

$\delta _n$

, specific for query n. In particular, if

![]() $t_n=0$

,

$t_n=0$

,

![]() $x_n^k$

is modeled with a beta distribution with parameters

$x_n^k$

is modeled with a beta distribution with parameters

![]() $\alpha _k + \gamma _n$

and

$\alpha _k + \gamma _n$

and

![]() $\beta _k + \delta _n$

. If

$\beta _k + \delta _n$

. If

![]() $t_n=1,$

the parameters are interchanged to

$t_n=1,$

the parameters are interchanged to

![]() $\beta _k+\delta _n$

and

$\beta _k+\delta _n$

and

![]() $\alpha _k+\gamma _n$

,

$\alpha _k+\gamma _n$

,

$$ \begin{align} \begin{aligned} x_n^k | t_n=0 &\sim \text{Beta}(\alpha_k+\gamma_n, \beta_k+\delta_n)\\ x_n^k | t_n=1 &\sim \text{Beta}(\beta_k+\delta_n, \alpha_k+\gamma_n). \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} x_n^k | t_n=0 &\sim \text{Beta}(\alpha_k+\gamma_n, \beta_k+\delta_n)\\ x_n^k | t_n=1 &\sim \text{Beta}(\beta_k+\delta_n, \alpha_k+\gamma_n). \end{aligned} \end{align} $$

The prior distribution on the truth value

![]() $t_n$

is a Bernoulli distribution with parameter

$t_n$

is a Bernoulli distribution with parameter

![]() $\pi $

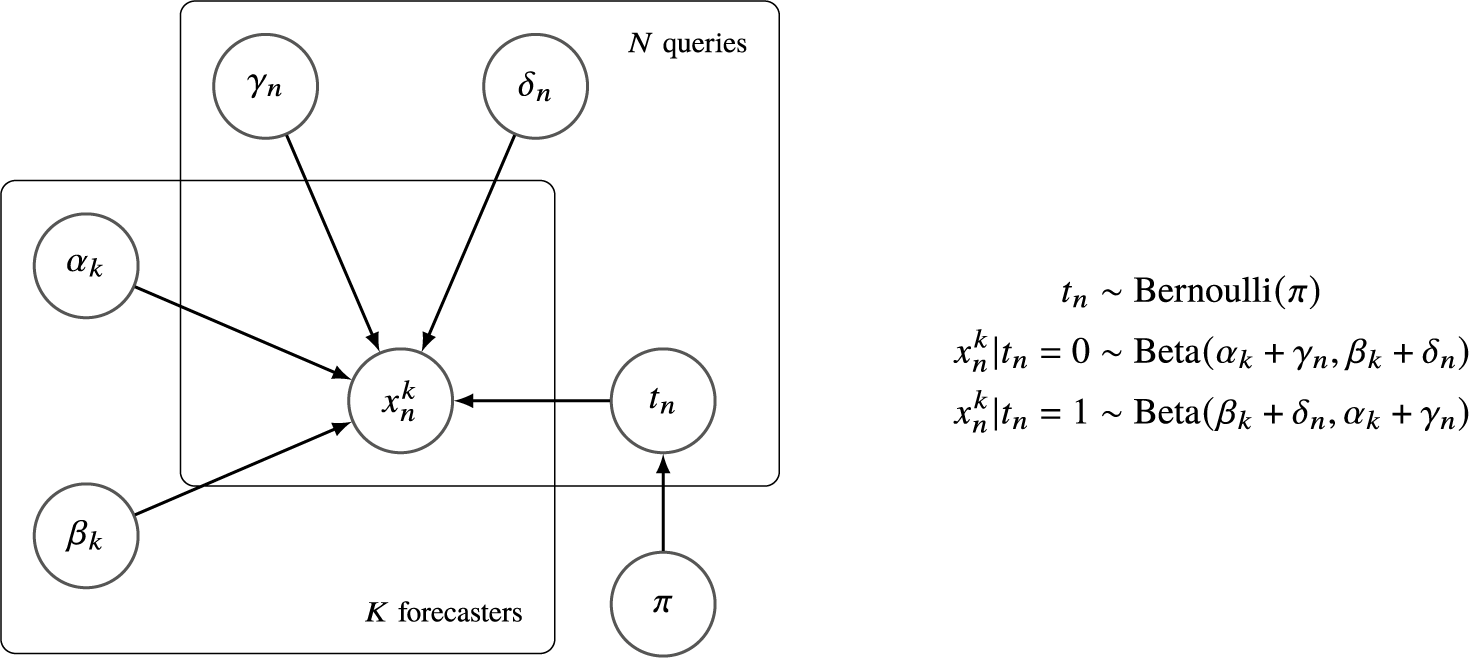

, which is the proportion of true queries with truth value 1. The graphical model of the Skill–Difficulty Correlated Fusion Model is shown in Figure 1.

$\pi $

, which is the proportion of true queries with truth value 1. The graphical model of the Skill–Difficulty Correlated Fusion Model is shown in Figure 1.

The graphical model of the Skill–Difficulty Correlated Fusion Model.

2.2. Interpretation of the model’s parameters as skill and difficulty

The parameters

![]() $\alpha _k$

and

$\alpha _k$

and

![]() $\beta _k$

represent something akin to the skill of forecaster k in the sense that they determine the bias, variance, and uncertainty of forecasts provided by forecaster k independently of the query at hand n. Therefore, in the following,

$\beta _k$

represent something akin to the skill of forecaster k in the sense that they determine the bias, variance, and uncertainty of forecasts provided by forecaster k independently of the query at hand n. Therefore, in the following,

![]() $\alpha _k$

and

$\alpha _k$

and

![]() $\beta _k$

will be termed skill parameters. Equivalently, the parameters

$\beta _k$

will be termed skill parameters. Equivalently, the parameters

![]() $\gamma _n$

and

$\gamma _n$

and

![]() $\delta _n$

represent the difficulty of query n since they determine the bias, variance, and uncertainty of forecasts provided for query n independently of the specific forecasters providing them. Accordingly, we call

$\delta _n$

represent the difficulty of query n since they determine the bias, variance, and uncertainty of forecasts provided for query n independently of the specific forecasters providing them. Accordingly, we call

![]() $\gamma _n$

and

$\gamma _n$

and

![]() $\delta _n$

difficulty parameters.

$\delta _n$

difficulty parameters.

For interpreting the skill parameters

![]() $\alpha _k$

and

$\alpha _k$

and

![]() $\beta _k$

isolated from the difficulty parameters

$\beta _k$

isolated from the difficulty parameters

![]() $\gamma _n$

and

$\gamma _n$

and

![]() $\delta _n$

, we can set the difficulty parameters

$\delta _n$

, we can set the difficulty parameters

![]() $\gamma _n$

and

$\gamma _n$

and

![]() $\delta _n$

close to 0, meaning that only the forecasters’ properties are determining the forecasts. In this case, forecasts

$\delta _n$

close to 0, meaning that only the forecasters’ properties are determining the forecasts. In this case, forecasts

![]() $x_n^k$

are distributed according to Beta(

$x_n^k$

are distributed according to Beta(

![]() $\alpha _k, \beta _k$

) for

$\alpha _k, \beta _k$

) for

![]() $t_n=0$

and Beta(

$t_n=0$

and Beta(

![]() $\beta _k, \alpha _k$

) for

$\beta _k, \alpha _k$

) for

![]() $t_n=1$

. Note that with this parametrization, our Skill–Difficulty Correlated Fusion Model equals the symmetric variant of the previously proposed independent beta fusion model in (2.2). If for real data the difficulty parameters

$t_n=1$

. Note that with this parametrization, our Skill–Difficulty Correlated Fusion Model equals the symmetric variant of the previously proposed independent beta fusion model in (2.2). If for real data the difficulty parameters

![]() $\gamma _n$

and

$\gamma _n$

and

![]() $\delta _n$

are not equal to 0, the distributions Beta(

$\delta _n$

are not equal to 0, the distributions Beta(

![]() $\alpha _k, \beta _k$

) for

$\alpha _k, \beta _k$

) for

![]() $t_n=0$

and Beta(

$t_n=0$

and Beta(

![]() $\beta _k, \alpha _k$

) for

$\beta _k, \alpha _k$

) for

![]() $t_n=1$

do not describe the forecasts

$t_n=1$

do not describe the forecasts

![]() $x_n^k$

. However, they describe the forecasts we would assume if the queries’ difficulties had no influence on forecaster k’s forecasts. Forecaster k’s bias, which in part defines their skill, is determined by the mean of this beta distribution for

$x_n^k$

. However, they describe the forecasts we would assume if the queries’ difficulties had no influence on forecaster k’s forecasts. Forecaster k’s bias, which in part defines their skill, is determined by the mean of this beta distribution for

![]() $t_n=0$

, which is

$t_n=0$

, which is

![]() $\mu _k=\alpha _k/(\alpha _k+\beta _k)$

. This skill mean describes the average forecast we would expect if the queries’ difficulties had no influence on forecaster k’s forecasts. If

$\mu _k=\alpha _k/(\alpha _k+\beta _k)$

. This skill mean describes the average forecast we would expect if the queries’ difficulties had no influence on forecaster k’s forecasts. If

![]() $\mu _k<0.5$

, we define forecaster k to be unbiased because their mean forecast is closer to the truth value

$\mu _k<0.5$

, we define forecaster k to be unbiased because their mean forecast is closer to the truth value

![]() $t_n$

, so a forced binary decision for the discrete truth value (i.e., 0 or 1) would correctly correspond to the truth value. Thus, if

$t_n$

, so a forced binary decision for the discrete truth value (i.e., 0 or 1) would correctly correspond to the truth value. Thus, if

![]() $\beta _k>\alpha _k$

, forecaster k is unbiased. Forecaster k’s skill is also determined by their uncertainty, which is also quantified by the skill mean

$\beta _k>\alpha _k$

, forecaster k is unbiased. Forecaster k’s skill is also determined by their uncertainty, which is also quantified by the skill mean

![]() $\mu _k$

. The more uncertain, i.e., the closer to 0.5 it is, the more uncertain is forecaster k on average. However, the skill mean

$\mu _k$

. The more uncertain, i.e., the closer to 0.5 it is, the more uncertain is forecaster k on average. However, the skill mean

![]() $\mu _k$

can only provide information on the average uncertainty, not on the actual uncertainties of different forecasts provided, since there might be variance in the provided forecasts. The variance or variability of forecaster k around their skill mean, which is also part of their skill, can be straightforwardly quantified with the beta distribution’s skill precision

$\mu _k$

can only provide information on the average uncertainty, not on the actual uncertainties of different forecasts provided, since there might be variance in the provided forecasts. The variance or variability of forecaster k around their skill mean, which is also part of their skill, can be straightforwardly quantified with the beta distribution’s skill precision

![]() $\rho _k=\alpha _k+\beta _k$

. Thereby, lower precisions indicate higher variance around the mean.

$\rho _k=\alpha _k+\beta _k$

. Thereby, lower precisions indicate higher variance around the mean.

The skill parameters

![]() $\alpha _k$

and

$\alpha _k$

and

![]() $\beta _k$

and the derived skill mean

$\beta _k$

and the derived skill mean

![]() $\mu _k$

and skill precision

$\mu _k$

and skill precision

![]() $\rho _k$

can identify prototypical forecasters. Given that we assume the queries’ difficulties to have no impact on their forecasts, a highly skilled forecaster provides unbiased and certain forecasts with low variance and will thus show a skill mean

$\rho _k$

can identify prototypical forecasters. Given that we assume the queries’ difficulties to have no impact on their forecasts, a highly skilled forecaster provides unbiased and certain forecasts with low variance and will thus show a skill mean

![]() $\mu _k$

close to 0 with high skill precision. An uncertain forecaster provides forecasts close to 0.5 with low variance with

$\mu _k$

close to 0 with high skill precision. An uncertain forecaster provides forecasts close to 0.5 with low variance with

![]() $\mu _k$

close to 0.5 and a high skill precision. If

$\mu _k$

close to 0.5 and a high skill precision. If

![]() $\mu _k$

is close to 1 with high skill precision, forecaster k is a wrong forecaster with a strong bias and thus always provides incorrect forecasts with low uncertainty and low variance and might not have understood the task correctly or is malingering.

$\mu _k$

is close to 1 with high skill precision, forecaster k is a wrong forecaster with a strong bias and thus always provides incorrect forecasts with low uncertainty and low variance and might not have understood the task correctly or is malingering.

The difficulty parameters

![]() $\gamma _n$

and

$\gamma _n$

and

![]() $\delta _n$

can be interpreted when setting the skill parameters

$\delta _n$

can be interpreted when setting the skill parameters

![]() $\alpha _k$

and

$\alpha _k$

and

![]() $\beta _k$

close to 0. As a consequence,

$\beta _k$

close to 0. As a consequence,

![]() $x_n^k$

are distributed according to Beta(

$x_n^k$

are distributed according to Beta(

![]() $\gamma _n, \delta _n$

) for

$\gamma _n, \delta _n$

) for

![]() $t_n=0$

and Beta(

$t_n=0$

and Beta(

![]() $\delta _n, \gamma _n$

) for

$\delta _n, \gamma _n$

) for

![]() $t_n=1$

. Although these distributions might not model real forecasts, which are also determined by the skills of the forecasters providing them, they describe the forecasts we would assume to be provided for query n if the forecasters’ skills had no impact on the forecasts. The mean of this distribution for

$t_n=1$

. Although these distributions might not model real forecasts, which are also determined by the skills of the forecasters providing them, they describe the forecasts we would assume to be provided for query n if the forecasters’ skills had no impact on the forecasts. The mean of this distribution for

![]() $t_n=0$

, the difficulty mean

$t_n=0$

, the difficulty mean

![]() $\eta _n = \gamma _n/(\gamma _n+\delta _n)$

, quantifies the average forecast for query n if the forecasters’ skills had no impact on the forecasts provided for query n. It determines the bias of the forecasts provided for query n, which partly defines its difficulty. If the difficulty mean

$\eta _n = \gamma _n/(\gamma _n+\delta _n)$

, quantifies the average forecast for query n if the forecasters’ skills had no impact on the forecasts provided for query n. It determines the bias of the forecasts provided for query n, which partly defines its difficulty. If the difficulty mean

![]() $\eta _n<0.5$

, hence if

$\eta _n<0.5$

, hence if

![]() $\delta _n>\gamma _n$

, the forecasts for query n are unbiased. Thus, the forecasters on average provide correct answers for the query, so it is rather easy. Its difficulty is, however, also determined by the uncertainty of the forecasts provided. The average uncertainty of the forecasts provided for query n is quantified by the uncertainty of the difficulty mean

$\delta _n>\gamma _n$

, the forecasts for query n are unbiased. Thus, the forecasters on average provide correct answers for the query, so it is rather easy. Its difficulty is, however, also determined by the uncertainty of the forecasts provided. The average uncertainty of the forecasts provided for query n is quantified by the uncertainty of the difficulty mean

![]() $\eta _n$

. More extreme difficulty means closer to 0 or 1 show lower average uncertainty of the forecasts provided for a query. The difficulty precision

$\eta _n$

. More extreme difficulty means closer to 0 or 1 show lower average uncertainty of the forecasts provided for a query. The difficulty precision

![]() $\phi _n=\gamma _n+\delta _n$

quantifies how concentrated the forecasts provided for query n are around the difficulty mean

$\phi _n=\gamma _n+\delta _n$

quantifies how concentrated the forecasts provided for query n are around the difficulty mean

![]() $\eta _n$

, i.e., their variance.

$\eta _n$

, i.e., their variance.

Given difficulty mean

![]() $\eta _n$

and difficulty precision

$\eta _n$

and difficulty precision

![]() $\phi _n$

, we can identify special queries. If

$\phi _n$

, we can identify special queries. If

![]() $\eta _n$

is close to 0 with high difficulty precision, query n is a very easy query, for which forecasters provide unbiased and certain forecasts with low variance, given that we assume the forecasters’ skills to have no impact on the forecasts provided for it. For difficult queries, we distinguish between two types of queries, unknown and trick queries. For unknown queries,

$\eta _n$

is close to 0 with high difficulty precision, query n is a very easy query, for which forecasters provide unbiased and certain forecasts with low variance, given that we assume the forecasters’ skills to have no impact on the forecasts provided for it. For difficult queries, we distinguish between two types of queries, unknown and trick queries. For unknown queries,

![]() $\eta _n$

is close to 0.5 with high difficulty precision, so the forecasters do not know the answer to the query and therefore provide uncertain forecasts close to 0.5 with low variance. In contrast, for trick queries, they provide certain but biased forecasts with low variance because they think they know the correct answer but do not. In this case,

$\eta _n$

is close to 0.5 with high difficulty precision, so the forecasters do not know the answer to the query and therefore provide uncertain forecasts close to 0.5 with low variance. In contrast, for trick queries, they provide certain but biased forecasts with low variance because they think they know the correct answer but do not. In this case,

![]() $\eta _n$

is close to 1 with high difficulty precision.

$\eta _n$

is close to 1 with high difficulty precision.

Note that although we define skill as a property of the forecasters and difficulty as a property of the queries, both the skills of forecasters and the difficulties of queries can be explained by the information that the considered forecasters have. A query can only be unknown if most forecasters lack information about it. At the same time, an easy query requires a majority of forecasters to share correct information about the query, while a trick query requires most forecasters to share incorrect information about it. In contrast, the skill of a forecaster can be attributed to the private information of that individual forecaster.

2.3. Model reparameterization

Since skill and difficulty can be straightforwardly quantified with the skill mean and precision and difficulty mean and precision (Section 2.2), we reparameterized the Skill–Difficulty Correlated Fusion Model: Instead of parameterizing the beta distribution with

![]() $\alpha $

and

$\alpha $

and

![]() $\beta ,$

we now use the beta distribution’s mean

$\beta ,$

we now use the beta distribution’s mean

![]() $\mu = \alpha / (\alpha + \beta )$

and precision

$\mu = \alpha / (\alpha + \beta )$

and precision

![]() $\rho = \alpha + \beta $

as parameters and call the resulting distribution beta

$\rho = \alpha + \beta $

as parameters and call the resulting distribution beta

![]() $^{\prime }$

. This reparameterization improves the stability of Gibbs sampling used for inference. Further, it enhances the interpretability of the model’s parameters and priors. In particular, the skill of a forecaster k is now directly modeled by their skill mean

$^{\prime }$

. This reparameterization improves the stability of Gibbs sampling used for inference. Further, it enhances the interpretability of the model’s parameters and priors. In particular, the skill of a forecaster k is now directly modeled by their skill mean

![]() $\mu _k$

and their skill precision

$\mu _k$

and their skill precision

![]() $\rho _k$

, while the difficulty of a query n is represented by its difficulty mean

$\rho _k$

, while the difficulty of a query n is represented by its difficulty mean

![]() $\eta _n$

and its difficulty precision

$\eta _n$

and its difficulty precision

![]() $\phi _n$

, as defined in Section 2.2. Accordingly, forecasts

$\phi _n$

, as defined in Section 2.2. Accordingly, forecasts

![]() $x_n^k$

are now distributed according to

$x_n^k$

are now distributed according to

$$ \begin{align} \begin{aligned} x_n^k|t_n = 0 &\sim \text{Beta}^{\prime}\left(\frac{\mu_k\rho_k+\eta_n\phi_n}{\rho_k+\phi_n}, \rho_k + \phi_n\right) \\ x_n^k|t_n = 1 &\sim \text{Beta}^{\prime}\left(\frac{(1-\mu_k)\rho_k+(1-\eta_n)\phi_n}{\rho_k+\phi_n}, \rho_k + \phi_n\right). \end{aligned} \end{align} $$

$$ \begin{align} \begin{aligned} x_n^k|t_n = 0 &\sim \text{Beta}^{\prime}\left(\frac{\mu_k\rho_k+\eta_n\phi_n}{\rho_k+\phi_n}, \rho_k + \phi_n\right) \\ x_n^k|t_n = 1 &\sim \text{Beta}^{\prime}\left(\frac{(1-\mu_k)\rho_k+(1-\eta_n)\phi_n}{\rho_k+\phi_n}, \rho_k + \phi_n\right). \end{aligned} \end{align} $$

The full model including all priors is shown in Figure 2. As we discussed in Section 2.2, the skill mean

![]() $\mu _k$

should be below 0.5 if forecaster k is unbiased. To explicitly represent this in our model, we deterministically set

$\mu _k$

should be below 0.5 if forecaster k is unbiased. To explicitly represent this in our model, we deterministically set

![]() $\mu _k$

depending on the binary variable

$\mu _k$

depending on the binary variable

![]() $y_k$

, which indicates if forecaster k is unbiased (

$y_k$

, which indicates if forecaster k is unbiased (

![]() $y_k = 0$

). If this is the case,

$y_k = 0$

). If this is the case,

![]() $\mu _k$

is set to

$\mu _k$

is set to

![]() $\lambda _k$

, which follows a scaled beta

$\lambda _k$

, which follows a scaled beta

![]() $^{\prime }$

distribution with mean

$^{\prime }$

distribution with mean

![]() $u_{\lambda }$

and precision

$u_{\lambda }$

and precision

![]() $p_{\lambda }$

, scaled with 0.5 to only generate values between 0 and 0.5. For a biased forecaster with

$p_{\lambda }$

, scaled with 0.5 to only generate values between 0 and 0.5. For a biased forecaster with

![]() $y_k=1, \mu _k$

is set to

$y_k=1, \mu _k$

is set to

![]() $1-\lambda _k$

, so it is between 0.5 and 1. The prior on the bias indicator variable

$1-\lambda _k$

, so it is between 0.5 and 1. The prior on the bias indicator variable

![]() $y_k$

is a Bernoulli distribution with parameter v, which represents the proportion of biased forecasters. The prior on the skill precision

$y_k$

is a Bernoulli distribution with parameter v, which represents the proportion of biased forecasters. The prior on the skill precision

![]() $\rho _k$

is a gamma distribution with shape and rate set to

$\rho _k$

is a gamma distribution with shape and rate set to

![]() $r_1$

and

$r_1$

and

![]() $r_2$

. The priors over the difficulty parameters are modeled equivalently.

$r_2$

. The priors over the difficulty parameters are modeled equivalently.

![]() $\eta _n$

is deterministically set depending on the indicator variable

$\eta _n$

is deterministically set depending on the indicator variable

![]() $z_n$

, to

$z_n$

, to

![]() $\theta _n$

if

$\theta _n$

if

![]() $z_n=0$

or

$z_n=0$

or

![]() $1-\theta _n$

if

$1-\theta _n$

if

![]() $z_n=1$

.

$z_n=1$

.

![]() $z_n$

indicates if a query is an easy query with

$z_n$

indicates if a query is an easy query with

![]() $\eta _n < 0.5$

for

$\eta _n < 0.5$

for

![]() $z_n=0$

or a trick query with

$z_n=0$

or a trick query with

![]() $\eta _n> 0.5$

for

$\eta _n> 0.5$

for

![]() $z_n=1$

. Accordingly,

$z_n=1$

. Accordingly,

![]() $\theta _n$

is distributed with a scaled beta

$\theta _n$

is distributed with a scaled beta

![]() $^{\prime }$

distribution with mean

$^{\prime }$

distribution with mean

![]() $u_{\theta }$

and precision

$u_{\theta }$

and precision

![]() $p_{\theta }$

, scaled between 0 and 0.5.

$p_{\theta }$

, scaled between 0 and 0.5.

![]() $z_n$

is a Bernoulli variable with parameter w, which is the proportion of trick queries. The difficulty precision has a gamma prior with shape and rate

$z_n$

is a Bernoulli variable with parameter w, which is the proportion of trick queries. The difficulty precision has a gamma prior with shape and rate

![]() $f_1$

and

$f_1$

and

![]() $f_2$

. The prior distribution over the hyperparameters

$f_2$

. The prior distribution over the hyperparameters

![]() $u_\lambda , u_\theta , v, w$

are uniform distributions Beta(1,1), while the priors of

$u_\lambda , u_\theta , v, w$

are uniform distributions Beta(1,1), while the priors of

![]() $p_\lambda , p_\theta , r_1, r_2, f_1, f_2$

are uninformative gamma distributions with shape and rate set to 0.001. As in the original model in Figure 1, the truth value

$p_\lambda , p_\theta , r_1, r_2, f_1, f_2$

are uninformative gamma distributions with shape and rate set to 0.001. As in the original model in Figure 1, the truth value

![]() $t_n$

is Bernoulli-distributed with parameter

$t_n$

is Bernoulli-distributed with parameter

![]() $\pi $

, the proportion of true queries, with a uniform Beta(1,1) prior.

$\pi $

, the proportion of true queries, with a uniform Beta(1,1) prior.

Graphical model of the Skill–Difficulty Correlated Fusion Model with all priors, reparameterized using a beta

![]() $^{\prime }$

distribution with parameters mean and precision and including N training queries with known truth values and M fusion queries with unknown truth values.

$^{\prime }$

distribution with parameters mean and precision and including N training queries with known truth values and M fusion queries with unknown truth values.

2.4. Correlations in the model

The Skill–Difficulty Correlated Fusion Model can model positive correlations between forecasts. On the one hand, it can model the correlation between forecasts provided by two forecasters l and m for different queries,

![]() $x^l$

and

$x^l$

and

![]() $x^m$

, with fixed skill parameters

$x^m$

, with fixed skill parameters

![]() $\mu _l$

and

$\mu _l$

and

![]() $\rho _l$

for forecaster l and

$\rho _l$

for forecaster l and

![]() $\mu _m$

and

$\mu _m$

and

![]() $\rho _m$

for forecaster m but variable difficulty parameters

$\rho _m$

for forecaster m but variable difficulty parameters

![]() $\eta _n$

and

$\eta _n$

and

![]() $\phi _n$

. This correlation is caused by the forecasters seeing the same queries, for example, easy queries, unknown queries, or trick queries. Because the forecasters share information and have similar training and/or cognitive estimation biases, their forecasts for these easy, unknown, or trick queries are similar and thus correlated. On the other hand, the Skill–Difficulty Correlated Fusion Model can represent the correlation between the forecasts provided by multiple forecasters for two queries p and q,

$\phi _n$

. This correlation is caused by the forecasters seeing the same queries, for example, easy queries, unknown queries, or trick queries. Because the forecasters share information and have similar training and/or cognitive estimation biases, their forecasts for these easy, unknown, or trick queries are similar and thus correlated. On the other hand, the Skill–Difficulty Correlated Fusion Model can represent the correlation between the forecasts provided by multiple forecasters for two queries p and q,

![]() $x_p$

and

$x_p$

and

![]() $x_q$

, with fixed difficulty parameters

$x_q$

, with fixed difficulty parameters

![]() $\eta _p$

and

$\eta _p$

and

![]() $\phi _p$

for query p and

$\phi _p$

for query p and

![]() $\eta _q$

and

$\eta _q$

and

![]() $\phi _q$

for query q but variable skill parameters

$\phi _q$

for query q but variable skill parameters

![]() $\mu _k$

and

$\mu _k$

and

![]() $\rho _k$

. These forecasts for queries p and q might be correlated because they come from the same forecasters. In this work, we will use the Skill–Difficulty Correlated Fusion Model to combine forecasts provided by different forecasters for the same query while considering the potential correlation between these forecasters over different queries. Therefore, here, we focus on the first kind of correlation mentioned above: the correlation between the forecasts provided by two forecasters l and m for different queries,

$\rho _k$

. These forecasts for queries p and q might be correlated because they come from the same forecasters. In this work, we will use the Skill–Difficulty Correlated Fusion Model to combine forecasts provided by different forecasters for the same query while considering the potential correlation between these forecasters over different queries. Therefore, here, we focus on the first kind of correlation mentioned above: the correlation between the forecasts provided by two forecasters l and m for different queries,

![]() $x^l$

and

$x^l$

and

![]() $x^m$

.

$x^m$

.

As shown in (2.4), the forecasts of two forecasters l and m for a query n,

![]() $x_n^l$

and

$x_n^l$

and

![]() $x_n^m$

, are conditionally independent given

$x_n^m$

, are conditionally independent given

![]() $\eta _n$

and

$\eta _n$

and

![]() $\phi _n$

by definition, because they are independent draws from a beta

$\phi _n$

by definition, because they are independent draws from a beta

![]() $^{\prime }$

distribution. Accordingly, if all modeled queries have the same difficulty, so

$^{\prime }$

distribution. Accordingly, if all modeled queries have the same difficulty, so

![]() $\eta _n$

and

$\eta _n$

and

![]() $\phi _n$

are the same for all n, there is no correlation between the forecasts provided by different forecasters. If additionally

$\phi _n$

are the same for all n, there is no correlation between the forecasts provided by different forecasters. If additionally

![]() $\phi _n=0$

for all n, so also

$\phi _n=0$

for all n, so also

![]() $\gamma _n=\delta _n=0$

in the original parameterization in (2.3), it can easily be seen that our model equals the independent beta fusion model with symmetric beta distributions shown in (2.2). While

$\gamma _n=\delta _n=0$

in the original parameterization in (2.3), it can easily be seen that our model equals the independent beta fusion model with symmetric beta distributions shown in (2.2). While

![]() $x_n^l$

and

$x_n^l$

and

![]() $x_n^m$

are conditionally independent given

$x_n^m$

are conditionally independent given

![]() $\eta _n$

and

$\eta _n$

and

![]() $\phi _n$

, multiple forecasts provided by forecasters l and m for different queries,

$\phi _n$

, multiple forecasts provided by forecasters l and m for different queries,

![]() $x^l$

and

$x^l$

and

![]() $x^m$

, are, however, not unconditionally independent. If the queries have different difficulties, as we expect in reality,

$x^m$

, are, however, not unconditionally independent. If the queries have different difficulties, as we expect in reality,

![]() $x^l$

and

$x^l$

and

![]() $x^m$

are positively correlated.

$x^m$

are positively correlated.

The correlation between two forecasters is modulated by how much the forecasts provided by these forecasters are determined by their individual skills in comparison to the shared queries’ difficulties. If the queries’ difficulties mainly determine the given forecasts, it is the shared information between forecasters that generates similar forecasts by different forecasters on the same (e.g., easy, unknown, or trick) queries. In this case, the forecasts are highly correlated. If, on the other hand, the individual forecasters’ skills mainly determine the forecasts, the forecasters’ individual private information determines the forecasts, leading to low correlations.

The trade-off between the influence of individual forecasters’ skills and the queries’ difficulties is quantified by the relation between skill parameters

![]() $\mu _k, \rho _k$

and difficulty parameters

$\mu _k, \rho _k$

and difficulty parameters

![]() $\eta _n, \phi _n$

. In particular, it is the relation of skill and difficulty precisions

$\eta _n, \phi _n$

. In particular, it is the relation of skill and difficulty precisions

![]() $\rho _k$

and

$\rho _k$

and

![]() $\phi _n$

that mostly determines the correlation. Higher difficulty precision parameters

$\phi _n$

that mostly determines the correlation. Higher difficulty precision parameters

![]() $\phi _n$

compared to the skill precision parameters

$\phi _n$

compared to the skill precision parameters

![]() $\rho _k$

lead to higher influence of the shared queries’ difficulties on the provided forecasts compared to the forecasters’ individual skills. Thus, the correlation between different forecasters’ provided forecasts increases. In particular, if the difficulty precisions

$\rho _k$

lead to higher influence of the shared queries’ difficulties on the provided forecasts compared to the forecasters’ individual skills. Thus, the correlation between different forecasters’ provided forecasts increases. In particular, if the difficulty precisions

![]() $\phi _n$

tend to infinity for fixed skill parameters, the correlation tends to 1. In this case, the forecasts are fully determined by the queries’ difficulties, so all forecasters provide the same forecasts. If in contrast the difficulty precisions

$\phi _n$

tend to infinity for fixed skill parameters, the correlation tends to 1. In this case, the forecasts are fully determined by the queries’ difficulties, so all forecasters provide the same forecasts. If in contrast the difficulty precisions

![]() $\phi _n$

tend to 0 for fixed skill parameters, the correlation tends to 0, because in this case, the forecasts are only determined by the forecasters’ skills.

$\phi _n$

tend to 0 for fixed skill parameters, the correlation tends to 0, because in this case, the forecasts are only determined by the forecasters’ skills.

Of course, leaving the

![]() $\phi _n$

fixed, the difficulty means

$\phi _n$

fixed, the difficulty means

![]() $\eta _n$

also have an impact on the correlation, for example, by determining how many trick queries there are. However, regardless of the values that the

$\eta _n$

also have an impact on the correlation, for example, by determining how many trick queries there are. However, regardless of the values that the

![]() $\eta _n$

take, the

$\eta _n$

take, the

![]() $\phi _n$

can generate the full range of correlations between 0 and 1.

$\phi _n$

can generate the full range of correlations between 0 and 1.

Figure 3 shows three examples of model specifications leading to different correlations between the forecasts of two forecasters. For the very low correlation close to 0 in Figure 3(a), the difficulty precision parameters

![]() $\phi _n$

were drawn from a gamma distribution with shape 0.1 and rate 1,000, leading to very small

$\phi _n$

were drawn from a gamma distribution with shape 0.1 and rate 1,000, leading to very small

![]() $\phi _n$

close to 0. For the medium correlation in Figure 3(b), difficulty precisions

$\phi _n$

close to 0. For the medium correlation in Figure 3(b), difficulty precisions

![]() $\phi _n$

around an average value of 8 are generated from a gamma distribution with shape 4 and rate 0.5. For the very high correlation close to 1 shown in Figure 3(c), very large difficulty precisions

$\phi _n$

around an average value of 8 are generated from a gamma distribution with shape 4 and rate 0.5. For the very high correlation close to 1 shown in Figure 3(c), very large difficulty precisions

![]() $\phi _n$

are generated from a gamma distribution with shape 1,000 and rate 0.1.

$\phi _n$

are generated from a gamma distribution with shape 1,000 and rate 0.1.

Three examples of simulated forecasts of two forecasters,

![]() $x^1$

and

$x^1$

and

![]() $x^2$

, for 20,000 queries, 10,000 of which with truth value

$x^2$

, for 20,000 queries, 10,000 of which with truth value

![]() $t=0$

(left column) and 10,000 with truth value

$t=0$

(left column) and 10,000 with truth value

![]() $t=1$

(right column), with different correlations, low (a), medium (b), and high (c). The two forecasters are equally skilled with skill parameters

$t=1$

(right column), with different correlations, low (a), medium (b), and high (c). The two forecasters are equally skilled with skill parameters

![]() $\mu _1=\mu _2=0.25$

and

$\mu _1=\mu _2=0.25$

and

![]() $\rho _1=\rho _2=12$

. The difficulty parameters

$\rho _1=\rho _2=12$

. The difficulty parameters

![]() $\eta _n$

and

$\eta _n$

and

![]() $\phi _n$

for different n are drawn from their prior distributions. For generating the low correlation close to 0 for

$\phi _n$

for different n are drawn from their prior distributions. For generating the low correlation close to 0 for

![]() $t=0$

and

$t=0$

and

![]() $t=1$

in (a), their parameters are chosen as

$t=1$

in (a), their parameters are chosen as

![]() $u_{\theta }=0.5, p_{\theta }=4, f_1=0.1, f_2=1,000$

, and

$u_{\theta }=0.5, p_{\theta }=4, f_1=0.1, f_2=1,000$

, and

![]() $w=0.2$

. For generating a medium correlation of 0.46 in (b), they are

$w=0.2$

. For generating a medium correlation of 0.46 in (b), they are

![]() $u_{\theta }=0.5, p_{\theta }=4, f_1=4, f_2=0.5$

, and

$u_{\theta }=0.5, p_{\theta }=4, f_1=4, f_2=0.5$

, and

![]() $w=0.2$

. The forecasts in (c) with a correlation close to 1 are generated with

$w=0.2$

. The forecasts in (c) with a correlation close to 1 are generated with

![]() $u_{\theta }=0.5, p_{\theta }=4, f_1=1,000, f_2=0.1$

, and

$u_{\theta }=0.5, p_{\theta }=4, f_1=1,000, f_2=0.1$

, and

![]() $w=0.2$

.

$w=0.2$

.

2.5. Parameter inference and fusion

In order to use the Skill–Difficulty Correlated Fusion Model to combine multiple subjective probability estimates, the model’s parameters need to be inferred. As can be seen in the graphical model in Figure 2, for N training queries, indexed with

![]() $n=1,\dots ,N$

, the forecasts

$n=1,\dots ,N$

, the forecasts

![]() $x_n^k$

as well as the corresponding truth values

$x_n^k$

as well as the corresponding truth values

![]() $t_n$

are observed. For M fusion queries, indexed with

$t_n$

are observed. For M fusion queries, indexed with

![]() $n=N+1,\dots ,N+M$

, only the forecasts

$n=N+1,\dots ,N+M$

, only the forecasts

![]() $x_n^k$

provided by the K forecasters are observed and the posterior distribution over the truth values

$x_n^k$

provided by the K forecasters are observed and the posterior distribution over the truth values

![]() $t_n$

has to be inferred for fusing these forecasts. Besides, all other parameters in the model, including the forecasters’ skill parameters with their hyperparameters, the training and fusion queries’ difficulty parameters with their hyperparameters and the proportion of true queries

$t_n$

has to be inferred for fusing these forecasts. Besides, all other parameters in the model, including the forecasters’ skill parameters with their hyperparameters, the training and fusion queries’ difficulty parameters with their hyperparameters and the proportion of true queries

![]() $\pi $

need to be inferred from the provided training and fusion queries.

$\pi $

need to be inferred from the provided training and fusion queries.

Inference in the model is done with Gibbs–MH sampling, with probability 0.9 for a Gibbs sampling step and probability 0.1 for an MH steps. In the MH step, we propose to invert both

![]() $t_n$

and

$t_n$

and

![]() $z_n$

of the fusion queries. This is necessary, because the posterior over

$z_n$

of the fusion queries. This is necessary, because the posterior over

![]() $t_n$

might be bimodal, so standard Gibbs sampling might get stuck in one of the modes. The reason for the potential bimodality of

$t_n$

might be bimodal, so standard Gibbs sampling might get stuck in one of the modes. The reason for the potential bimodality of

![]() $t_n$

’s posterior is that we do not know the difficulty parameters of the fusion queries. In particular, the indicator variable

$t_n$

’s posterior is that we do not know the difficulty parameters of the fusion queries. In particular, the indicator variable

![]() $z_n$

for trick queries might be 0 or 1. Thus, for example, a query with all forecasts

$z_n$

for trick queries might be 0 or 1. Thus, for example, a query with all forecasts

![]() $x_n^k$

close to 1 could be a true easy query with

$x_n^k$

close to 1 could be a true easy query with

![]() $t_n=1$

and

$t_n=1$

and

![]() $z_n = 0$

or a false trick query with

$z_n = 0$

or a false trick query with

![]() $t_n=0$

and

$t_n=0$

and

![]() $z_n=1$

. Our MH step allows the sampler to explore both options. The full joint distribution, from which all equations required for Gibbs sampling can be derived, can be found in Section 1 of the Supplementary Material.

$z_n=1$

. Our MH step allows the sampler to explore both options. The full joint distribution, from which all equations required for Gibbs sampling can be derived, can be found in Section 1 of the Supplementary Material.

3. Evaluation

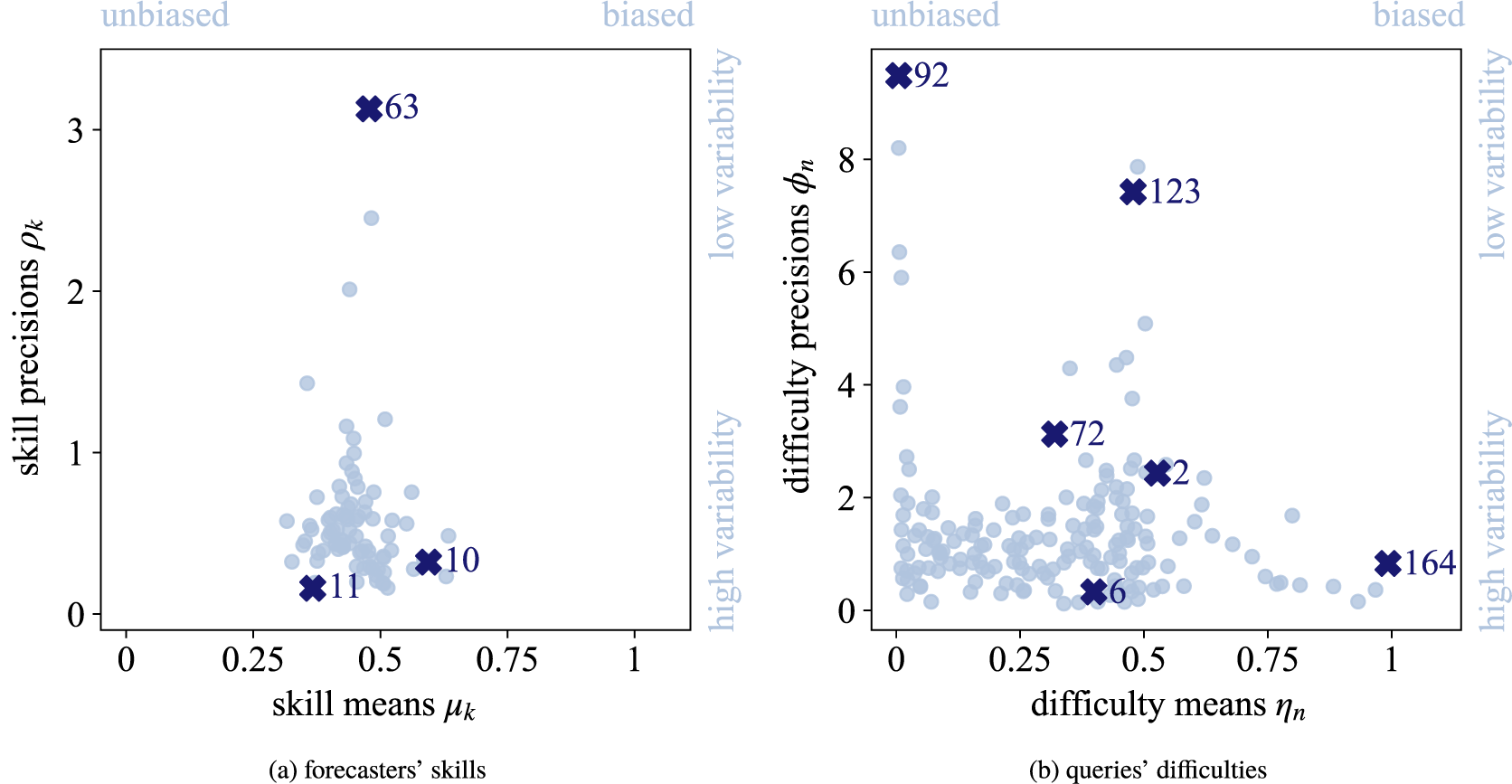

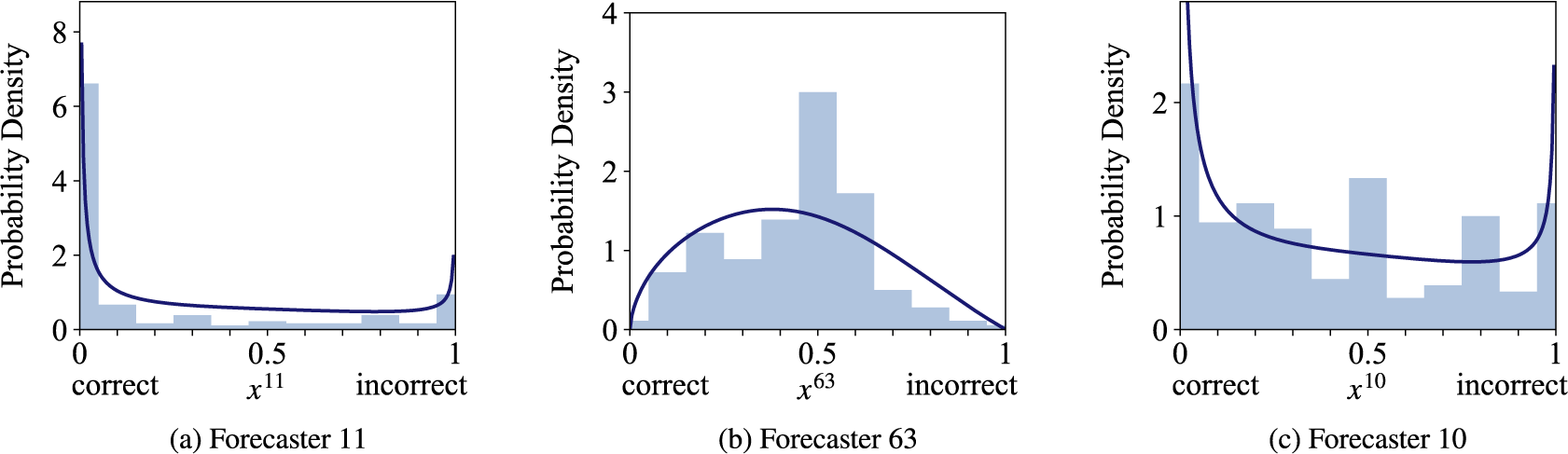

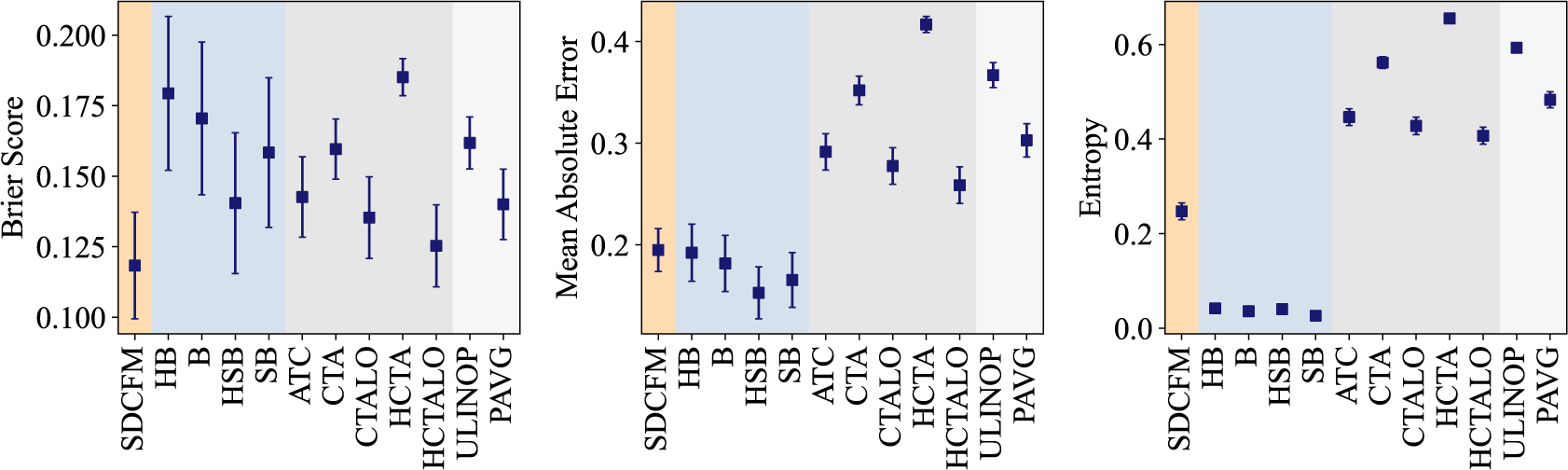

We evaluate the proposed Skill–Difficulty Correlated Fusion Model on the Knowledge Test Confidence (KTeC) data set (Section 3.1) using leave-one-out (LOO) cross-validation (Section 3.2). For exemplary forecasters and queries, we show how our model’s parameters can be interpreted and how the model fits the data (Section 3.3). In addition, we evaluate the fusion performance of the Skill–Difficulty Correlated Fusion Model in comparison to previously proposed Bayesian fusion models in terms of Brier score, mean absolute error, and entropy (Section 3.4).

3.1. Knowledge Test Confidence data set

The KTeC data setFootnote 1 (Trick et al., Reference Trick, Rothkopf and Jäkel2023) includes subjective probability estimates of 85 human forecasters on 180 queries. Each forecaster provided a forecast to all 180 queries, resulting in a total number of 15,300 subjective probability estimates.

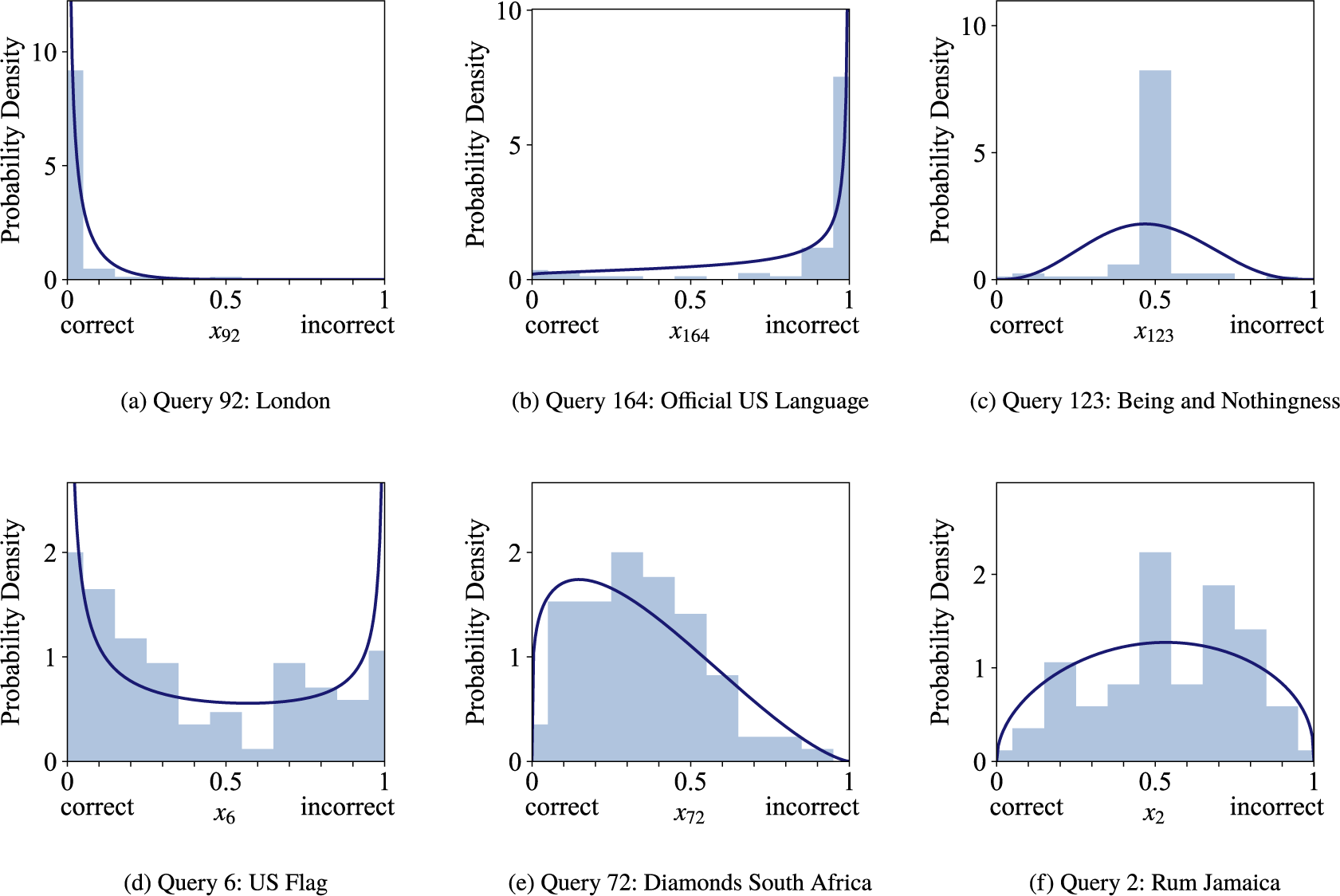

The queries are knowledge statements that are either false (

![]() $t_n=0$

) or true (

$t_n=0$

) or true (

![]() $t_n=1$

), while the number of false and true statements is balanced. There are easy queries known to the majority of forecasters, such as ‘London is the capital of England’ (

$t_n=1$

), while the number of false and true statements is balanced. There are easy queries known to the majority of forecasters, such as ‘London is the capital of England’ (

![]() $t_n=1$

), and hard queries. Hard queries are either unknown to most forecasters, for example, ‘Being and Nothingness was written in 1943’ (

$t_n=1$

), and hard queries. Hard queries are either unknown to most forecasters, for example, ‘Being and Nothingness was written in 1943’ (

![]() $t_n=1$

), or are trick queries that most forecasters judge incorrectly, for example, ‘The official language of the United States is English’ (

$t_n=1$

), or are trick queries that most forecasters judge incorrectly, for example, ‘The official language of the United States is English’ (

![]() $t_n=0$

at the time the data were collected, before March 1, 2025).

$t_n=0$

at the time the data were collected, before March 1, 2025).

The forecasters were students from the University of Osnabrück. Their probabilistic forecasts are their confidence judgments on the given statements. A confidence of 0 indicates that the forecaster is 100% certain that the statement is wrong, whereas a confidence of 1 indicates that the forecaster is 100% certain that the statement is correct. The forecasts could be provided in 11 discrete steps of 0.1 between 0 and 1. For the following evaluations, we preprocessed forecasts of 0 and 1 to 0.001 and 0.999 to avoid computational problems. Note that in previous work, we showed that this score correction has no significant influence on the fusion results (Trick et al., Reference Trick, Rothkopf and Jäkel2023). In the KTeC data set, the forecasts provided by different forecasters are correlated. For queries with truth value 0 the pairwise correlation between two forecasters is on average 0.293, for queries with truth value 1 it is on average 0.333. More details on the data set, for example, how the query statements were designed, can be found in the work of Trick et al. (Reference Trick, Rothkopf and Jäkel2023).

3.2. Cross-validation

We evaluate the Skill–Difficulty Correlated Fusion Model on the KTeC data set described above using LOO cross-validation. Accordingly, we split the data set, consisting of 180 queries, into 180 training and test sets. Each training set is composed of the forecasts of all 85 forecasters on 179 training queries, along with their known truth values. The forecasts on the one remaining fusion query, for which we do not know the truth value, build the respective test sets.

Inference for model training and testing, i.e., fusion, is realized simultaneously using Gibbs–MH sampling, implemented in Python. We ran 100 parallel chains with 500 samples and a burn-in of 500 samples each. To check for convergence, for all continuous parameters, we computed the convergence statistic

![]() $\hat {R}$

, also known as the Gelman–Rubin statistic. All chains converged, with a maximum

$\hat {R}$

, also known as the Gelman–Rubin statistic. All chains converged, with a maximum

![]() $\hat {R}$

of 1.17.

$\hat {R}$

of 1.17.

3.3. Parameter interpretation and model fit