1. Introduction

In the first half of the 20th century, a large-scale rabies epizootic swept across Europe, primarily transmitted by foxes. This transmission pattern differs markedly from that observed in many African and Asian regions, where domestic and stray dogs are the principal vectors [Reference Ruan25, Reference Zhang, Jin, Sun, Zhou and Ruan38, Reference Zinsstag, Dürr, Penny, Mindekem, Roth, Gonzalez, Naissengar and Hattendorf39]. The outbreak is believed to have originated near Gdansk in northern Poland around 1939, subsequently advancing westward at an average rate of approximately 20–60 kilometres per year [Reference Lloyd20, Reference Toma and Andral27, Reference van den Bosch, Metz and Diekmann28].

This phenomenon has attracted considerable research interest, leading to the development of several mathematical models aimed at understanding the dynamics of rabies transmission among foxes (e.g., [Reference Anderson, Jackson, May and Smith1, Reference Kallen, Arcuri and Murray14, Reference Liu19, Reference Macdonald21–Reference Ou and Wu23, Reference Thieme, Jäger, Rost and Tautu26, Reference van den Bosch, Metz and Diekmann28]). Drawing on established biological facts [Reference Kaplan16, Reference Macdonald21], the fox population can be divided into three distinct compartments: susceptible foxes, with population density $S$; infected but non-infectious foxes, with density $E$; and infectious (rabid) foxes, with density $I$. It is further assumed that both susceptible and infected foxes are territorial, occupying non-overlapping home ranges, whereas rabid foxes may become aggressive and disoriented, losing their sense of direction and territoriality, and wander randomly. These rabid foxes act as the primary vectors of transmission, spreading the disease through direct contact, typically via biting. Based on these biological insights, Murray, Stanley, and Brown [Reference Murray, Stanley and Brown22] proposed the following mathematical model:

\begin{equation}

\begin{cases}

E_{t}=\beta I S-\sigma E- \left[b+(a-b)\displaystyle\frac{N}{K}\right]E,\ \ &t \gt 0, \ -\infty \lt x \lt \infty,\\

I_{t}=D I_{xx}+\sigma E-\alpha I- \left[b+(a-b)\displaystyle\frac{N}{K}\right]I ,\ \ &t \gt 0, \ -\infty \lt x \lt \infty,\\

S_{t}=(a-b)S\left(1-\displaystyle\frac{N}{K}\right)-\beta I S,\ \ &t \gt 0, \ -\infty \lt x \lt \infty,

\end{cases}

\end{equation}

Here, $N = S + E + I$ denotes the total fox population; $D \gt 0$ is the diffusion rate of rabid foxes; $a \gt 0$ and $b \gt 0$ are, respectively, the per capita birth and natural death rates of all foxes; and $K \gt 0$ is the environmental carrying capacity. The parameter $\beta \gt 0$ represents the disease transmission rate, $\sigma \gt 0$ is the rate at which infected foxes progress to the infectious (rabid) stage, and $\alpha \gt 0$ denotes the disease-induced mortality rate of rabid individuals. The spatial variable $x$ represents one-dimensional position. The term $(a - b)N/K$ accounts for mortality resulting from competition for limited resources among all foxes. To ensure a viable population in the absence of disease, it is assumed that $a \gt b$.

Clearly, system (1) has at least two nonnegative equilibria: the trivial equilibrium $(0,0,0)$ and the disease-free equilibrium $(0,0,K)$. Moreover, (1) admits a unique positive constant equilibrium $(E^*,I^*,S^*)$ if and only if

\begin{equation}

\mathcal{R}_0:=\frac{\sigma \beta K}{(\sigma + a)(\alpha + a)} \gt 1.

\end{equation}

In the case $D=0$, system (1) becomes an ODE model, for which Anderson et al. [Reference Anderson, Jackson, May and Smith1] established the following result: introducing rabies into a stable population of healthy foxes leads to three possible dynamical outcomes:

(a) If $\mathcal{R}_0 \lt 1$, the disease eventually dies out.

(b) If $\mathcal{R}_0 \gt 1$, the population exhibits oscillations around $(E^*,I^*,S^*)$; moreover, when the above inequality holds but $\mathcal R_0$ exceeds $1$ only slightly, these oscillations are damped over time, and the solution converges to $(E^*,I^*,S^*)$.

(c) In contrast, if $\mathcal R_0$ is sufficiently large, the system approaches a limit cycle.

The number $\mathcal R_0$ is widely known as the ‘basic reproduction number’ of the ODE model.
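As a quick sanity check on the ODE model, the reaction terms of (1) with $D=0$ and the threshold quantity in (2) can be written out directly. The following is a minimal sketch only: the function names and the parameter values are hypothetical and chosen for illustration, not taken from the literature cited above.

```python
def reaction_terms(E, I, S, a, b, K, beta, sigma, alpha):
    """Right-hand sides of system (1) with D = 0, in the order (E_t, I_t, S_t)."""
    N = E + I + S
    death = b + (a - b) * N / K  # natural death plus competition-induced mortality
    dE = beta * I * S - sigma * E - death * E
    dI = sigma * E - alpha * I - death * I
    dS = (a - b) * S * (1.0 - N / K) - beta * I * S
    return dE, dI, dS


def basic_reproduction_number(a, K, beta, sigma, alpha):
    """R_0 as defined in (2)."""
    return sigma * beta * K / ((sigma + a) * (alpha + a))


# Hypothetical parameter values, used only to exercise the formulas.
params = dict(a=1.0, b=0.5, K=2.0, beta=80.0, sigma=13.0, alpha=73.0)
R0 = basic_reproduction_number(
    params["a"], params["K"], params["beta"], params["sigma"], params["alpha"]
)
```

Evaluating `reaction_terms` at $(0,0,0)$ and at $(0,0,K)$ returns zero in every component, in agreement with the two equilibria listed above; whether $\mathcal R_0$ exceeds $1$ depends on the chosen parameters.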

When $D \gt 0$, the propagation speed of the epizootic front was investigated in [Reference Murray, Stanley and Brown22] under certain parameter conditions, and the minimal wave speed was analytically derived from fundamental epidemiological and ecological parameters.

In addition, some studies have incorporated the diffusion of juvenile foxes into the model (see, e.g., Ou and Wu [Reference Ou and Wu23]), since juvenile foxes may leave their home range in autumn and disperse over long distances to establish new territories, potentially carrying rabies during such movements.

In general, the mathematical modelling of ecological processes remains highly challenging, largely due to the absence of well-established ‘first principles’ governing their evolutionary mechanisms. As a model describing the evolving rabid fox population with density $I(t, x)$, system (1) does not provide enough information on the spatial location of the infected region. For instance, although the initial rabid fox population $I(0, x)$ is naturally assumed to be compactly supported in space, the strong maximum principle implies that $I(t, x) \gt 0$ for all $x \in \mathbb{R}$ once $t \gt 0$.

To characterize the expanding spatial range of rabid foxes more accurately, in this paper we use an evolving one-dimensional interval $[g(t), h(t)]$ to represent this range, and modify (1) into the following system with moving boundaries:

\begin{equation}

\left\{\begin{array}{ll}

E_{t}=\beta I S-\sigma E- \left[b+(a-b)\displaystyle\frac{E+I+S}{K}\right]E,\ \ &t \gt 0, \ g(t) \lt x \lt h(t),\\

I_{t}=D I_{xx}+\sigma E-\alpha I- \left[b+(a-b)\displaystyle\frac{E+I+S}{K}\right]I ,\ \ &t \gt 0, \ g(t) \lt x \lt h(t),\\

S_{t}=(a-b)S\left(1-\displaystyle\frac{E+I+S}{K}\right)-\beta I S,\ \ &t \gt 0, \ -\infty \lt x \lt \infty,\\

E(t,x)=I(t,x)=0, & t\geq 0,\ x\not\in (g(t), h(t)), \\

h'(t)=-\mu I_{x}(t,h(t)) , \ &t \gt 0,\\

g'(t)=-\mu I_{x}(t,g(t)), \ &t \gt 0,\\

E(0,x)=E_0(x),\, I(0,x)=I_0(x), \ \ &-h_0\leq x \leq h_0,\\

S(0,x)=S_{0}(x),\, \ \ \ & -\infty \lt x \lt \infty,\\

h(0)=h_0,\,g(0)=-h_0, &

\end{array}\right.

\end{equation}

where $\mu$ and $h_0$ are positive constants. Here, $[g(t), h(t)]$ is the infected region at time $t$, in which the rabid foxes $I$ are diffusive and $S$ is infected by $I$ to become $E$. The expansion of $[g(t), h(t)]$ is driven solely by $I$. Both $E$ and $I$ are absent (identically 0) outside the infected region $[g(t), h(t)]$. On the other hand, $S$ is assumed to exist over the entire space $\mathbb{R}$.

The moving boundaries $x = h(t)$ and $x = g(t)$ are also known as free boundaries; together with $E, I, S$, they form part of the unknowns in (3). The fifth and sixth equations in (3), which govern the evolution of these free boundaries, coincide with the well-known Stefan condition. Such a boundary condition was applied to model the propagation of an invasive species by Du and Lin [Reference Du and Lin10] within the framework of a KPP-type scalar reaction-diffusion equation, and has since been extended to a broad range of problems with a single species (see, e.g., [Reference Du and Guo6–Reference Du and Liang9, Reference Du, Matsuzawa and Zhou12, Reference Kaneko, Matsuzawa and Yamada15, Reference Li, Liang and Shen17, Reference Peng and Zhao24, Reference Wang30]). A deduction of the Stefan condition from some plausible biological assumptions can be found in [Reference Bunting, Du and Krakowski2]. Related free boundary models for multi-species systems of Lotka–Volterra type can be found in [Reference Du and Lin11, Reference Guo and Wu13, Reference Wang29, Reference Wang and Zhang31–Reference Wang and Zhao33, Reference Wang, Qin and Wu37]; similar free boundary systems for epidemic models may be found in [Reference Lin and Zhu18, Reference Wang and Du34, Reference Wang, Nie and Du36] and the associated literature. See also [Reference Du4] for a review.

We note that system (3) differs significantly from the above-mentioned free boundary systems in that two equations in (3) have no diffusion term, and interact nontrivially with a single reaction diffusion equation with free boundaries, which are shared with one of the ODEs in the system. This unusual feature of (3) causes many technical difficulties in the mathematical treatment.

Throughout this paper, the initial functions $E_0(x)$, $I_0(x)$ and $S_0(x)$ in (3) are assumed to satisfy

\begin{equation}

\begin{cases}

E_0\in {\rm Lip}([-h_0,h_0]), E_0(-h_0)=E_0(h_0)=0 \,\text{and } E_0(x) \gt 0 \text{ in } (-h_0,h_0);\\

I_0\in C^{2}([-h_0,h_0]), I_0(\pm h_0)=0, I_0'(-h_0) \gt 0 \gt I_0'(h_0) \ {\rm and}\ I_0(x) \gt 0 \\

\quad {\rm in}\ (-h_0,h_0);\\

S_0\in {\rm Lip}(\mathbb{R}),

0 \lt S_0(x)\leq K\ {\rm in}\ (-\infty, \infty).

\end{cases}

\end{equation}

For convenience, we will also write

\begin{eqnarray*}

\begin{array}{ll}

&f_{1}(E,I,S):=\beta I S-\sigma E- \left[b+(a-b)\displaystyle\frac{E+I+S}{K}\right]E;\ \ \\

&f_{2}(E,I,S):=\sigma E-\alpha I- \left[b+(a-b)\displaystyle\frac{E+I+S}{K}\right]I;\ \ \\

&f_{3}(E,I,S):=(a-b)S\left(1-\displaystyle\frac{E+I+S}{K}\right)-\beta I S.\ \

\end{array}

\end{eqnarray*}

The local existence and uniqueness of solutions to system (3) are established in Section 2 by employing several novel techniques (including applying the Banach fixed point theorem in combination with a parameterized ODE analysis), and then the local solution is uniquely extended to all time $t \gt 0$ by deriving suitable a priori bounds for the solution; the main results are stated in Theorems 2.1 and 2.2.

The long-time dynamics of (3) is considered in Section 3, based on the comparison principle and the analysis of some associated eigenvalue problems. It is easily seen by the Hopf boundary lemma that $h'(t) \gt 0 \gt g'(t)$ for $t \gt 0$, and hence the following limits always exist:

\begin{equation*}h_\infty:=\lim_{t\to\infty} h(t)\in (h_0,\infty],~~ g_\infty:=\lim_{t\to\infty} g(t)\in [-\infty, -h_0).\end{equation*}

We have either

\begin{equation*}
h_\infty-g_\infty \lt \infty \quad\text{or}\quad h_\infty-g_\infty=\infty.
\end{equation*}

In the former case, we can show (see Theorem 3.1) that the density $I$ of rabid foxes tends to $0$ as $t\to\infty$. So the rabid foxes vanish eventually, and we will call this the vanishing case.

If $h_{\infty}-g_\infty =\infty$, a reasonable understanding of the long-time behaviour of (3) is gained when $\mathcal R_0 \gt 1$; in this case, we can show that the range of the rabid foxes spreads to the entire space $\mathbb{R}$, with density persisting weakly (see Theorem 3.4), namely

\begin{equation*}

(g_\infty, h_\infty)=(-\infty,\infty) \,\mbox{and } \limsup_{t\to\infty}\min_{x\in [-l, l]}I(t,x) \gt 0\,\mbox{for any } l \gt 0.

\end{equation*}

This will be called the spreading case in this paper.

Thus, in the parameter regime $\mathcal R_0 \gt 1$, the long-time dynamics of the model are governed by a spreading-vanishing dichotomy, according to whether $0 \lt h_\infty-g_\infty \lt \infty$ or $h_{\infty}-g_\infty =\infty$.

We believe that strong persistence of $I$ holds in the spreading case; namely, we believe the following conclusions should be true:

\begin{equation*}

(g_\infty, h_\infty)=(-\infty,\infty)\,\mbox{and } \liminf_{t\to\infty}\min_{x\in [-l, l]}I(t,x) \gt 0\,\mbox{for any } l \gt 0,

\end{equation*}

but we have been unable to prove it, mainly due to the lack of compactness of the solutions $\{(E(t,\cdot), S(t,\cdot)): t \gt 0\}$ in a suitable function space, caused by the insufficient regularity of the ODE solutions in the system. This question is left as an open problem at the end of the paper.

Some easy-to-check sufficient conditions for vanishing and spreading are obtained with the help of certain associated eigenvalue problems, and the results are summarized below (which follow directly from Theorems 3.2, 3.3, 3.4, 3.5, and 3.6):

Let $(E,I,S,g,h)$ be the solution of (3), and

\begin{equation*}

\mathcal{R}_0^*:=\displaystyle\frac{\beta\sigma K}{(\alpha+b)(\sigma+b)}.

\end{equation*}

Then we have the following conclusions:

(i) If $\mathcal R_0^*\leq 1$, then vanishing always happens.

(ii) If $\mathcal R_0^* \gt 1$ and $l_*$ is given by

\begin{equation*}
l_*:=l(b)=\displaystyle\frac{\pi}{2}\sqrt{\frac{D(\sigma+b)}{\sigma \beta K-(\alpha+b)(\sigma+b)}},
\end{equation*}

then vanishing happens if $h_0 \lt l_*$ and $\mu$ is sufficiently small (depending on $E_0, I_0, S_0$).

(iii) If $\mathcal R_0 \gt 1$ and $\tilde l_*$ is given by

\begin{equation*}
\tilde l_*:=l(a)=\displaystyle\frac{\pi}{2}\sqrt{\frac{D(\sigma+a)}{\sigma \beta K-(\alpha+a)(\sigma+a)}},
\end{equation*}

then spreading always occurs when $h_0\geq \tilde l_*$, and when $h_0 \lt \tilde l_*$, spreading occurs for all sufficiently large $\mu$ (depending on $E_0$, $I_0$ and $S_0$).
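The two critical half-lengths in (ii) and (iii) are values of the same function $l(\cdot)$, evaluated at $b$ and at $a$. The sketch below computes them; the function name and all numerical values are hypothetical and serve only as an illustration.

```python
import math


def critical_half_length(d, D, K, beta, sigma, alpha):
    """l(d) = (pi/2) * sqrt(D (sigma + d) / (sigma beta K - (alpha + d)(sigma + d)))."""
    gap = sigma * beta * K - (alpha + d) * (sigma + d)
    if gap <= 0:
        # The square root is real only when sigma*beta*K > (alpha + d)(sigma + d).
        raise ValueError("l(d) requires sigma*beta*K > (alpha + d)*(sigma + d)")
    return 0.5 * math.pi * math.sqrt(D * (sigma + d) / gap)


# Hypothetical parameter values with a > b, used only for illustration.
a, b = 1.0, 0.5
common = dict(D=200.0, K=2.0, beta=80.0, sigma=13.0, alpha=73.0)
l_star = critical_half_length(b, **common)   # l_* = l(b), used in (ii)
l_tilde = critical_half_length(a, **common)  # tilde l_* = l(a), used in (iii)
```

Since $a \gt b$, replacing $b$ by $a$ increases the numerator and decreases the denominator under the square root, so $l_* \lt \tilde l_*$ whenever both are defined. In particular, conclusions (ii) and (iii) as stated do not cover an initial half-length $h_0\in[l_*,\tilde l_*)$ combined with a small $\mu$.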

2. Existence and uniqueness of a global solution

In this section, we first establish the local existence and uniqueness of solutions to the system (3). Subsequently, by deriving suitable a priori estimates, we show that the local solution can be extended globally. For convenience, we denote

Theorem 2.1. For any given $(E_0, I_0, S_0)$ satisfying (4) and any $\gamma\in(0,1)$, there exists $T \gt 0$ depending on $\Pi$ and $\gamma$ such that problem (3) admits a unique solution $(E,I,S,g,h)$ for $t\in [0, T]$ with $g,h\in C^{\frac{3+ \gamma }{2}}((0,T])\cap C^1([0,T])$ and

\begin{align*}

&E\in C^{1}([0,T]; L^{\infty}(\mathbb{ R})),\ I\in C^{1+\frac{\gamma}{2}, 2+\gamma}(\Sigma_{T})\cap C^{(1+\gamma)/2, 1+\gamma}(\overline{\Sigma}_{T}),\\

&\quad S\in C^{1}([0,T]; L^{\infty}(\mathbb{R})),\ \end{align*}

where

\begin{equation*}

\Sigma_T=\Sigma_{T,g,h}:=\left\{(t,x)\in \mathbb{ R}^{2}:\ t\in(0,T],\ g(t)\leq x\leq h(t)\right\}.

\end{equation*}

Moreover, there exists $C \gt 0$ depending on $\Pi$ and $\gamma$, such that

\begin{eqnarray*}

\sup_{x\in \mathbb{ R}}\|(E(\cdot,x), S(\cdot,x))\|_{C^1([0,T])}+\|I\|_{C^{(1+\gamma)/2, 1+\gamma}(\overline{\Sigma}_{T})}+\|(g,h)\|_{C^{1+\gamma/2}([0, T])}\leq C.

\end{eqnarray*}

Proof. For clarity, we divide the rather involved proof into several steps. Some ideas from nonlocal diffusion models with free boundary (see, e.g., [Reference Cao, Du, Li and Li3]) will be adapted and used here.

Step 1. Some notations.

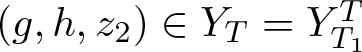

Let $\displaystyle T_1:= \frac{3h_0}{2(2+|g^{*}|+|h^{*}|)}$ with $h^{*}:=-\mu I_0'(h_0) \gt 0$, $g^{*}:=-\mu I_0'(-h_0) \lt 0$.

For $0 \lt T\leq T_1$, define

\begin{align*}

Y_{1,T}&:= \{g\in C^{1}([0, T]):\, g(0)=-h_0,\ g'(0)=g^*,\ g'(t)\leq \frac{g^*}{7}\,\text{for } t\in[0,T],\\ &\quad \|g'-g^*\|_{C([0,T])}\leq 1\},\\[1mm]

Y_{2,T}&:= \{h\in C^{1}([0, T]):\, h(0)=h_0,\ h'(0)=h^*,\ h'(t)\geq \frac{h^*}{7}\,\text{for } t\in[0,T],\\ &\quad \|h'-h^*\|_{C([0,T])}\leq 1\}.

\end{align*}

Step 2. Transformation of problem (3) for given $(g,h)\in Y_{1,T}\times Y_{2,T}$.

We consider the transformation $(t,y)\mapsto(t,x)$ defined by

\begin{equation*}

x=\Psi(t,y):=\frac{g(t)+h(t)+y(h(t)-g(t))}{2} \quad \text{for } y\in\mathbb{R}.

\end{equation*}

For any fixed $t\in[0,T]$, it is easily seen that $\Psi(t,\cdot)$ is a diffeomorphism on $\mathbb{R}$ due to $T\in (0, T_1]$ (which implies $h(t)-g(t)\geq h_0/2$ for $t\in[0,T]$).
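For fixed $t$, the map $\Psi(t,\cdot)$ is affine in $y$, so its inverse can be written down explicitly as soon as $h(t) \gt g(t)$. The sketch below illustrates this for frozen values of $g$ and $h$; the function names are hypothetical.

```python
def psi(y, g, h):
    """x = Psi(t, y) for frozen boundary positions g = g(t) < h = h(t)."""
    return 0.5 * (g + h + y * (h - g))


def psi_inverse(x, g, h):
    """y = (2x - g - h) / (h - g), the inverse of psi in the y variable."""
    if h <= g:
        raise ValueError("the inverse requires h > g")
    return (2.0 * x - g - h) / (h - g)
```

The endpoints $y=\pm 1$ are mapped to $x=h$ and $x=g$, so the moving interval $[g(t),h(t)]$ corresponds to the fixed interval $[-1,1]$ in the $y$ variable.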

By direct calculations, one has

\begin{equation}

\begin{cases}

\displaystyle\frac{\partial y}{\partial x}&=\displaystyle\frac{2}{h(t)-g(t)}:=\rho(t)=\rho_{g,h}(t), \qquad \displaystyle\frac{\partial^2 y}{\partial x^2}=0, \\

\displaystyle\frac{\partial y}{\partial t}&=\displaystyle\frac{-(h(t)-g(t))(h'(t)+g'(t))-(h'(t)-g'(t))(2x-g(t)-h(t))}{(h(t)-g(t))^2}\\

&=-\displaystyle\frac{h'(t)+g'(t)}{h(t)-g(t)}-\displaystyle\frac{h'(t)-g'(t)}{h(t)-g(t)}y:=-\zeta(t,y)=-\zeta_{g,h}(t,y).\\

\end{cases}

\end{equation}

Now we define

\begin{equation*}
z_1(t,y):=E(t,\Psi(t,y)),\quad z_2(t,y):=I(t,\Psi(t,y)),\quad z_3(t,y):=S(t,\Psi(t,y)).
\end{equation*}

Then system (3) for $0 \lt t\leq T$ can be equivalently reformulated as the following two subsystems:

\begin{equation}

\left\{\begin{array}{ll}

E_t=f_1(E,I,S),\ &0 \lt t\leq T,\ g(t) \lt x \lt h(t), \\

S_t=f_3(E,I,S),\ &0 \lt t\leq T,\ x\in \mathbb{R}, \\

E(t,x)=0,\ & 0 \lt t\leq T, \ x\not\in(g(t),h(t)),\\

E(0,x)=E_0(x),\ &-h_0 \lt x \lt h_0, \\

S(0,x)=S_0(x),\ &x\in \mathbb{R}\\

\end{array}\right.

\end{equation}

and

\begin{equation}

\left\{\begin{array}{ll}

z_{2t}-D\rho^{2}z_{2yy}-\zeta z_{2y}=f_2(z_1,z_2,z_3),\ & 0 \lt t\leq T,\ |y| \lt 1, \\

z_{2}(t,1)=z_{2}(t,-1)=0,\ &0 \lt t\leq T, \\

h'(t)=-\mu\rho z_{2y}(t,1),\ &0 \lt t\leq T, \\

g'(t)=-\mu\rho z_{2y}(t,-1),\ & 0 \lt t\leq T, \\

z_{2}(0,y)=I_{0}(h_{0}y)=:z_{20}(y), \ & |y| \lt 1,\\

g(0)=-h_0,\ h(0)=h_0.

\end{array}\right.

\end{equation}
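For the reader's convenience, the form of (7) can be checked with the chain rule. The computation below is a sketch that takes $z_2(t,y)=I(t,\Psi(t,y))$, which is the convention by which $I$ is recovered from $z_2$ in Step 4, and uses $\rho$ and $\zeta$ from (5):

\begin{align*}
I_x&=z_{2y}\,\frac{\partial y}{\partial x}=\rho z_{2y},\qquad I_{xx}=\rho^{2}z_{2yy},\\
I_t&=z_{2t}+z_{2y}\,\frac{\partial y}{\partial t}=z_{2t}-\zeta z_{2y}.
\end{align*}

Substituting these identities into $I_t=DI_{xx}+f_2$ gives $z_{2t}-D\rho^{2}z_{2yy}-\zeta z_{2y}=f_2$. Since $x=h(t)$ and $x=g(t)$ correspond to $y=1$ and $y=-1$, respectively, the Stefan conditions $h'(t)=-\mu I_x(t,h(t))$ and $g'(t)=-\mu I_x(t,g(t))$ become $h'(t)=-\mu\rho z_{2y}(t,1)$ and $g'(t)=-\mu\rho z_{2y}(t,-1)$.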

Step 3. An extension trick.

For $T\in (0, T_1]$ define $D_T=[0,T]\times [-1,1]$ and

\begin{align*}

Y_{3,T}:=\Big\{z_2\in C(D_{T}): & z_2\geq 0\,\text{in } D_{T},\, z_2(0,y)=z_{20}(y),\, z_2(t,\pm 1)=0, \\

& \,\sup\limits_{-1\leq y_1,y_2\leq 1,t\in[0,T]\atop y_1\neq y_2}\frac{|z_2(t,y_1)-z_2(t,y_2)|}{|y_1-y_2|}\leq B,\\

&\|z_2-z_{20}\|_{C(D_T)}\leq 1

\Big\},

\end{align*}

with $B \gt 0$ to be specified later. Clearly

\begin{equation*}

Y_T:=\prod_{i=1}^3 Y_{i,T}

\end{equation*}

is a complete metric space endowed with the following metric:

With $0 \lt T \lt T_1$, we define a subspace of $Y_{T_1}$, denoted by $Y_{T_1}^{T}:=\prod_{i=1}^3 Y_{i,T_1}^{T}$, with

\begin{align*}

Y^{T}_{1,T_1}&:=\{g\in C^{1}([0, T_1]):\ g|_{[0,T]}\in Y_{1,T},\\

&\quad g(t)=g(T)+g'(T)(t-T)\,\text{for }T\leq t\leq T_1\},\\[1mm]

Y^{T}_{2,T_1}&:=\{h\in C^{1}([0, T_1]):\ h|_{[0,T]}\in Y_{2,T}, \\

&\quad h(t)=h(T)+h'(T)(t-T)\,\text{for }T\leq t\leq T_1\},\\[1mm]

Y^{T}_{3,T_1}&:=\{z_2\in C(D_{T_1}): z_2|_{[0,T]}\in Y_{3,T},\ z_2(t,y)=z_2(T,y)\,\text{for }T\leq t\leq T_1 \}.

\end{align*}

It is clear that each element of $Y_{T}$ can be extended to be an element of $Y_{T_1}^T$. As a result, in what follows we will always identify $Y_T$ with $Y_{T_1}^T$. This extension trick will be used in our proof of local existence for (7).

Step 4. Solving (6) for any given $(g,h, z_2)\in Y_T=Y_{T_1}^T\subseteq Y_{T_1}$.

By the definition of $T_1$, we have

\begin{equation*}

\frac{7h_0}{2}\geq 2h_0+(2+h^*-g^*)T_1\geq h(t)-g(t)\geq 2h_0+(h^*-g^*-2)T_1\geq \frac{h_0}{2}\,\text{for }t\in[0,T_1],

\end{equation*}

which implies that the map $y\to x=\Psi(t,y)$ is a diffeomorphism on $\mathbb{R}$ for each $t\in[0,T_1]$. Therefore, the function $I(t,x):=z_2(t,y)$ is well-defined for all $(t,x)\in\Sigma_{T_1}$. We extend $I(t,x)$ by zero outside the interval $[g(t), h(t)]$ of $x$ for each $t \in [0, T_1]$, and denote the extended function by $\bar{I}(t,x)$.
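As an illustration of the straightening map and the zero extension, the following minimal Python sketch assumes the affine form $x=\Psi(t,y)=\frac12[(h(t)-g(t))y+h(t)+g(t)]$, which is the inverse of the formula for $\Psi^{-1}$ used later in Step 8. The function names `psi`, `psi_inv` and `i_bar`, as well as the sample profile, are hypothetical and not part of the paper.

```python
def psi(y, g, h):
    """Map y in [-1, 1] to x in [g, h]; g < h are the boundary positions at a fixed time."""
    return 0.5 * ((h - g) * y + h + g)


def psi_inv(x, g, h):
    """Inverse map: x in [g, h] back to y in [-1, 1]."""
    return (2.0 * x - g - h) / (h - g)


def i_bar(x, g, h, z2):
    """Zero extension of I(t, x) = z2(t, Psi^{-1}(t, x)) outside [g, h].

    z2 is a callable y -> z2(t, y) at the fixed time under consideration.
    """
    if x < g or x > h:
        return 0.0
    return z2(psi_inv(x, g, h))


if __name__ == "__main__":
    g, h = -1.5, 2.0                      # hypothetical boundary positions
    profile = lambda y: 1.0 - y * y       # hypothetical z2(t, .), zero at y = -1 and y = 1
    for x in (-2.0, -1.5, 0.25, 2.0, 3.0):
        print(x, i_bar(x, g, h, profile))
```

Because $h(t)-g(t)\geq h_0/2>0$ on $[0,T_1]$, the division in `psi_inv` is always well defined in the setting of this step.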

For any given $x\in[g(T_1),h(T_1)]$, let

\begin{eqnarray*}
\begin{array}{l}
\tilde{E}_0(x):=\left\{\begin{array}{ll}
E_0(x),\ &-h_0\leq x\leq h_0, \\
0,\ &x\not\in[-h_0,h_0],
\end{array}\right.
\\
t_{x}:=\left\{\begin{array}{ll}
t_{x}^{g},\ & \text{if }g(T_1)\leq x < -h_0\ {\rm and}\ x=g(t_{x}^{g}), \\
0,\ & \text{if }-h_0\leq x\leq h_0, \\
t_{x}^{h},\ &\text{if }h_0 < x\leq h(T_1)\ {\rm and}\ x=h(t_{x}^{h}).
\end{array}\right.
\end{array}
\end{eqnarray*}

Clearly, $t_{g(T_1)}=t_{h(T_1)}=T_1$. Moreover, we set $t_x=T_1$ for $x\not\in [g(T_1),h(T_1)]$.

We now consider the following ODE problems with parameter $x$:

\begin{equation}

\left\{\begin{array}{ll}

E_t=f_1(E,\bar{I}(t,x),S),\ E(t_x,x)=\tilde{E}_0(x),\, & t_x \lt t\leq T_1, x\in(g(T_1),h(T_1)), \\

E(t,x)=0, & 0\leq t\leq T_1,\ x\not\in[g(t),h(t)],\\

S_t=f_3(E,\bar{I}(t,x),S),\ S(0,x)=S_0(x),\,& 0 \lt t\leq T_1,\ x\in\mathbb{ R}.

\end{array}\right.

\end{equation}

Before starting to solve (8), let us note that, since $E(t,x)=\bar{I}(t,x)=0$ for $x\not\in[g(t),h(t)]$, for each $x\in \mathbb{ R}\setminus[-h_0,h_0]$ problem (8) reduces to the following single logistic equation for $t\in[0,t_x]$:

\begin{equation}

S_t=(a-b)S(1-\frac{S}{K})\,\text{for } t\in(0,t_x], \quad S(0,x)=S_0(x),

\end{equation}

which admits a unique solution $\hat{S}\in C^{1}([0,t_x])$ satisfying

\begin{equation}

0 \lt \hat{S}(t,x)\leq K\,\text{for }t\in[0,t_x].

\end{equation}
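For orientation, (9) can be integrated in closed form. Assuming $0<S_0(x)\leq K$ (an assumption stated here so that (10) can be read off directly; it is not restated in this part of the text), separation of variables gives

\begin{equation*}
\hat{S}(t,x)=\frac{K S_0(x)}{S_0(x)+(K-S_0(x))e^{-(a-b)t}}\quad\text{for } t\in[0,t_x].
\end{equation*}

The denominator is at least $S_0(x)>0$, so $\hat{S}>0$, and $\hat{S}\leq K$ is equivalent to $(K-S_0(x))e^{-(a-b)t}\geq 0$, which recovers the bound (10).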

\begin{equation}

0 \lt \hat{S}(t,x)\leq K\,\text{for }t\in[0,t_x].

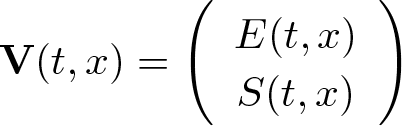

\end{equation}We are now ready to fully solve (8). Set

\begin{equation*}

\textbf{V}:=\left(\begin{array}{c}E\\S\end{array}\right)

\text{and }\textbf{F}(t,x,\textbf{V}):=\left(\begin{array}{c}

f_1(E,\bar{I}(t,x),S) \\

f_3(E,\bar{I}(t,x),S)

\end{array}\right).

\end{equation*}

Then the pair $(E,S)$ is a solution to (8) if and only if $\mathbf{V}$ is a solution to the following system with parameter $x\in\mathbb{ R}$:

\begin{equation}

\begin{cases}

\textbf{V}_t=\textbf{F}(t,x,\textbf{V}) &\text{for }t\in[t_x,T_1],\\

\textbf{V}(t,x)=\left(\begin{array}{c}

0 \\

\hat{S}(t,x)

\end{array}\right)

&\text{for }t\in[0,t_x].

\end{cases}

\end{equation}

Since $f_1$ and $f_3$ are smooth in $(E,\bar{I},S)$, and $\bar{I}$ is continuous and uniformly bounded, it is easy to verify that $\textbf{F}$ is Lipschitz continuous in $\textbf{V}\in[0,L_1]^2$, uniformly with respect to $(t,x)\in[0,T_1]\times\mathbb{ R}$, where

Hence, it follows from the standard existence and uniqueness theorem for ODEs (Picard–Lindelöf) that for each $x\in\mathbb{ R}$, (11) possesses a unique solution $\textbf{V}\in [C^1([t_x,T_x])]^2$ for some $T_x\in (t_x, T_1]$. Consequently, for each $x\in\mathbb{ R}$, (8) has a unique solution $(E,S)$ defined on $[0,T_x]$.

Next, we show that $(E,S)$ can be uniquely extended to $[0,T_1]$. It suffices to show that for each $x\in\mathbb{ R}$ and $T_a\in[T_x,T_1]$, if $(E,S)$ solves (8) for $t\in[0,T_a]$, then

For each $x\in\mathbb{ R}$, since $E(t,x)=0$ and $S(t,x)=\hat{S}(t,x)$ for $t\in[0,t_x]$, inequality (12) clearly holds for $t\in[0,t_x]$. Hence, it remains to prove (12) for $t\in[t_x,T_a]$.

Denote $X_g:=g(T_a)$ and $X_h:=h(T_a)$. If $x\in(-\infty,X_g]\cup[X_h,\infty)$, it is clear that $T_a\leq t_x$, and thus (12) already holds. If $x\in (X_g, X_h)$, we complete the proof according to two cases.

Case (a): $x\in(-h_0,h_0)$. For such $x$ we have $E_0(x),S_0(x) > 0$, and it follows by continuity that $E(t,x),S(t,x)\geq 0$ for all $t\in[0,\tau]$ with some small $\tau > 0$. Thus, we have

\begin{equation*}

S_t\leq (a-b)S\left(1-\displaystyle\frac{S}{K}\right) \text{for } t\in(0,\tau],

\end{equation*}

which combined with $0 < S(0,x) < K$ yields $0\leq S(t,x) < K$ for $t\in[0,\tau]$.

Define $Q:=E+S$; then $Q$ satisfies

\begin{equation*}\begin{cases}

Q_t=-(\sigma+b)E+(a-b)S-(a-b)\displaystyle\frac{\bar{I}+Q}{K}Q\leq (a-b)Q(1-\frac{Q}{K}), ~~~t\in[0,\tau],\\

Q(0,x)\leq L_1.

\end{cases}

\end{equation*}

By comparing $Q$ with $L_1$, we deduce that $Q(t,x)\leq L_1$ and thus $0\leq E(t,x)\leq L_1$ for $t\in[0,\tau]$. This establishes (12) for $t\in[0,\tau]$.
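The comparison argument can be checked numerically. The sketch below is only an illustration under stated assumptions: the reaction terms are taken as $f_1=\beta S\bar{I}-(\sigma+b+(a-b)N/K)E$ and $f_3=(a-b)(1-N/K)S-\beta S\bar{I}$ with $N=E+\bar{I}+S$, a form chosen here because it reproduces the identity for $Q_t$ above (the definitions of $f_1$ and $f_3$ appear earlier in the paper and are not restated in this part). All parameter values, the forcing `i_bar` and the forward Euler scheme are hypothetical choices.

```python
import math


def euler_es(e0, s0, i_bar, t_end, dt, a=1.0, b=0.5, sigma=13.0, beta=80.0, K=1.0):
    """Forward Euler for E_t = f1, S_t = f3 at one fixed x (assumed form of f1, f3).

    i_bar: callable t -> nonnegative forcing standing in for I-bar(t, x).
    Returns a list of (t, E, S) samples.
    """
    t, e, s = 0.0, e0, s0
    out = [(t, e, s)]
    n_steps = int(round(t_end / dt))
    for _ in range(n_steps):
        i = i_bar(t)
        n = e + i + s
        f1 = beta * s * i - (sigma + b + (a - b) * n / K) * e
        f3 = (a - b) * (1.0 - n / K) * s - beta * s * i
        e, s, t = e + dt * f1, s + dt * f3, t + dt
        out.append((t, e, s))
    return out


def check_bounds(samples, K=1.0):
    """True if E, S >= 0 and Q = E + S <= max(Q(0), K) at every sample."""
    q_max = max(samples[0][1] + samples[0][2], K)
    return all(e >= 0.0 and s >= 0.0 and e + s <= q_max + 1e-12 for _, e, s in samples)


if __name__ == "__main__":
    forcing = lambda t: 0.05 * math.exp(-t)   # hypothetical I-bar(t, x) >= 0
    samples = euler_es(e0=0.1, s0=0.6, i_bar=forcing, t_end=5.0, dt=1e-3)
    print(check_bounds(samples))
```

The bound $\max\{Q(0),K\}$ used in `check_bounds` is the discrete counterpart of comparing $Q$ with a constant supersolution of the logistic equation; it plays the role of $L_1$ only in this toy setting.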

We now claim that (12) holds for all $t\in[0,T_a]$. From the arguments above, one obtains that $E,S\leq L_1$ as long as $E,S\geq 0$. Therefore, it suffices to prove $E,S\geq 0$ for $t\in[0,T_a]$. Suppose on the contrary that this conclusion does not hold; then there exists a first time $t^*\in(0,T_a]$ such that $L_1\geq E(t,x), S(t,x)\geq 0$ for $t\in(0,t^*]$ and at least one of the following happens:

However, using (8) we see there are some continuous functions $C^x_1(t)$ and $C^x_2(t)$ such that

These inequalities imply, due to $E(0,x) > 0$ and $S(0,x) > 0$, that $E(t^*,x) > 0$ and $S(t^*,x) > 0$. This contradiction indicates that $E,S\geq 0$ for $t\in[0,T_a]$ and thus (12) holds.

Case (b): $x\in (X_g,-h_0]\cup [h_0,X_h)$. By (10), we see that $0 < S(t,x)\leq K$ for $t\in[0,t_x]$. Let $(E_\delta,S_\delta)$ be the solution of

\begin{equation}

\left\{\begin{array}{ll}

E_t=f_1(E,\bar{I},S),\ & t_x \lt t\leq T_a, \\

S_t=f_3(E,\bar{I},S),\ & t_x \lt t\leq T_a

\end{array}\right.

\end{equation}

with initial conditions

where $\delta > 0$ is a small constant. By the continuous dependence of solutions of ODEs on initial values, we know that $(E_{\delta}, S_\delta)$ is well-defined on $[t_x,T_a]$ for all small $\delta > 0$, and

\begin{equation*}E(t,x)=\lim_{\delta\to 0}E_\delta(t,x),\ S(t,x)=\lim_{\delta\to 0}S_\delta(t,x)\,\text{uniformly for } t\in[t_x,T_a].\end{equation*}

Using arguments similar to those of Case (a), we have $E_{\delta}(t,x), S_\delta(t,x) > 0$ for $t\in[t_x,T_a]$. Hence, $E(t,x),S(t,x)\geq 0$ for $t\in[t_x,T_a]$.

Consequently, for any given $(g,h, z_2)\in Y_T=Y_{T_1}^T\subseteq Y_{T_1}$ and fixed $x\in\mathbb{R}$, (8) admits a unique solution $(E(\cdot, x),S(\cdot, x))\in C^{1}([0,T_1])\times C^{1}([0,T_1])$, and

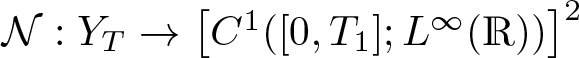

which induces a nonlinear mapping $\mathcal{N}:Y_T\to \left[C^1([0,T_1];L^\infty(\mathbb{ R}))\right]^2$ given by

Step 5: We show that $(E,S)=\mathcal{N}(g,h, z_2)\in {\rm Lip}([0,T_1]\times\mathbb{ R})$.

Since $\bar{I}(t,x)=E(t,x)=0$ for $t\in [0, T_1]$, $x\not\in [g(t),h(t)]$, it is easily seen that, for every $x\in\mathbb{ R}$, $\textbf{V}(t,x)=\left(\begin{array}{c} E(t,x) \\ S(t,x) \end{array}\right)$ satisfies

\begin{equation*}

\textbf{V}_t=\textbf{F}(t,x,\textbf{V}) \ \text{for } t\in[0,T], \quad \textbf{V}(0,x)=\textbf{V}_0(x):=\left(\begin{array}{c}

\tilde E_0(x) \\

S_0(x)

\end{array}\right),

\end{equation*}

which is equivalent to the integral equation

\begin{equation}

\textbf{V}(t,x)= \textbf{V}_0(x)+\int_0^t\textbf{F}(\tau,x,\textbf{V}(\tau,x))d\tau \ \text{for }t\in[0,T],\ x\in\mathbb{ R}.

\end{equation}

Since $f_1$ and $f_3$ are smooth, by (12) and the choice of $\bar{I}$, there exists a constant $N_1 > 0$ such that, for any $t\in[0,T_1]$ and $x_1,x_2\in\mathbb{ R}$,

\begin{equation}\begin{cases}

|\textbf{F}(t,x_1,\textbf{V}(t,x_1))-\textbf{F}(t,x_1,\textbf{V}(t,x_2))|\leq N_1|\textbf{V}(t,x_1)-\textbf{V}(t,x_2)|,\\

|\textbf{F}(t,x_1,\textbf{V}(t,x_2))- \textbf{F}(t,x_2,\textbf{V}(t,x_2))|\leq N_1|\bar{I}(t,x_1)-\bar{I}(t,x_2)|.

\end{cases}

\end{equation}

It then follows from (15) that

\begin{align*}
&|\textbf{V}(t,x_1)-\textbf{V}(t,x_2)|\\
\leq&\ |\textbf{V}_0(x_1)-\textbf{V}_0(x_2)|+\int_0^t|\textbf{F}(\tau,x_1,\textbf{V}(\tau,x_1))-\textbf{F}(\tau,x_2,\textbf{V}(\tau,x_2))|d\tau\\
\leq&\ |\textbf{V}_0(x_1)-\textbf{V}_0(x_2)|+N_1\int_0^t(|\bar{I}(\tau,x_1)-\bar{I}(\tau,x_2)|+|\textbf{V}(\tau,x_1)-\textbf{V}(\tau,x_2)|)d\tau\\
\leq&\ \left([\textbf{V}_0]_{{\rm Lip}(\mathbb{ R})}+N_1T_1\sup_{t\in[0,T_1]}[\bar{I}(t,\cdot)]_{{\rm Lip}(\mathbb{ R})}\right)|x_1-x_2|\\
&\quad +N_1\int_0^t|\textbf{V}(\tau,x_1)-\textbf{V}(\tau,x_2)|d\tau.
\end{align*}

Because

\begin{align*}
&\sup_{t\in[0,T_1]}[\bar{I}(t,\cdot)]_{{\rm Lip}(\mathbb{ R})}=\sup_{t\in[0,T_1]}[I(t,\cdot)]_{{\rm Lip}([g(t),h(t)])}\\
=&\sup_{t\in[0,T_1],x_1,x_2\in[g(t),h(t)]\atop x_1\neq x_2} \frac{|z_2(t,\Psi^{-1}(t,x_1))-z_2(t,\Psi^{-1}(t,x_2))|}{|x_1-x_2|}\\
\leq& \sup_{t\in[0,T_1],x_1,x_2\in[g(t),h(t)]\atop x_1\neq x_2}\left([z_2(t,\cdot)]_{{\rm Lip}([-1,1])}\frac{|\Psi^{-1}(t,x_1)-\Psi^{-1}(t,x_2)|}{|x_1-x_2|}\right)\leq \frac{4B}{h_0},
\end{align*}

it follows from Gronwall’s inequality that

\begin{align}

&|\textbf{V}(t,x_1)-\textbf{V}(t,x_2)|\leq (1+N_1T_1e^{N_1T_1})\left(\|\textbf{V}_0\|_{{\rm Lip}(\mathbb{ R})}+\frac{4N_1T_1B}{h_0}\right)|x_1-x_2| \nonumber\\

&\quad := K_1 |x_1-x_2|.

\end{align}
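To make the Gronwall step explicit (a sketch; the shorthand $u$ and $A$ is introduced only for this remark), write $u(t):=|\textbf{V}(t,x_1)-\textbf{V}(t,x_2)|$ and $A:=\big([\textbf{V}_0]_{{\rm Lip}(\mathbb{ R})}+\frac{4N_1T_1B}{h_0}\big)|x_1-x_2|$. The preceding estimates give $u(t)\leq A+N_1\int_0^t u(\tau)d\tau$ for $t\in[0,T_1]$, and hence

\begin{equation*}
u(t)\leq Ae^{N_1t}=A\Big(1+\int_0^{N_1t}e^{s}ds\Big)\leq A\left(1+N_1T_1e^{N_1T_1}\right)\quad\text{for } t\in[0,T_1],
\end{equation*}

which is (17) once $[\textbf{V}_0]_{{\rm Lip}(\mathbb{ R})}$ is bounded by $\|\textbf{V}_0\|_{{\rm Lip}(\mathbb{ R})}$.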

Similarly, there exists a constant $K_2 > 0$ depending on $L_1$ and $\Pi$ such that

As a result, we conclude that

\begin{align*}
&\|\textbf{V}\|_{{\rm Lip}([0,T_1]\times\mathbb{ R})}=\|\textbf{V}\|_{L^\infty([0,T_1]\times\mathbb{ R})}+[\textbf{V}]_{{\rm Lip}([0,T_1]\times \mathbb{ R})}\\
\leq&\ \|\textbf{V}\|_{L^\infty([0,T_1]\times\mathbb{ R})}+\sup_{t\in[0,T_1],x_1,x_2\in\mathbb{ R}\atop x_1\neq x_2}\frac{|\textbf{V}(t,x_1)-\textbf{V}(t,x_2)|}{|x_1-x_2|}\\
&\quad +\sup_{t,s\in[0,T_1],x_2\in\mathbb{ R}\atop t\neq s}\frac{|\textbf{V}(t,x_2)-\textbf{V}(s,x_2)|}{|t-s|}\\
\leq&\ L_1+K_1+2K_2 < \infty,
\end{align*}

which indicates that $E,S\in {\rm Lip}([0,T_1]\times\mathbb{ R}).$

Step 6: We solve a linear parabolic problem arising from (7).

For given $(g,h, z_2)\in Y_T=Y_{T_1}^T$, from Step 4 we obtain the solution

Recalling the notation $z_1(t,y)=E(t,x)$ and $z_3(t,y)=S(t,x)$ for $(t,x)\in\Sigma_{T_1}$, and our estimate

we now set out to solve the following linear parabolic initial boundary value problem:

\begin{equation}

\left\{\begin{array}{ll}

\tilde{z}_{2t}-D\rho^{2}\tilde{z}_{2yy}-D\zeta \tilde{z}_{2y}=f_2(z_1,z_2,z_3),\ &t \gt 0,\ y\in[-1,1], \\

\tilde{z}_{2}(t,\pm 1)=0,\ &t\geq 0, \\

\tilde{z}_{2}(0,y)=I_{0}(h_{0}y):=z_{20}(y), \ & y\in[-1,1].

\end{array}\right.

\end{equation}

By the expressions of $\rho$ and $\zeta$ given in (5), a direct calculation gives

\begin{equation}

\frac{D}{4h_0^2}\leq D\rho^2(g(t),h(t))\leq \frac{16D}{h_0^2}\,\mbox{for } (t,y)\in D_{T_1},

\end{equation}

\begin{equation}
\begin{aligned}
\|\zeta\|_{C(D_{T_1})}
\leq &\sup_{(t,y)\in D_{T_1}}\left|\frac{h'(t)+g'(t)}{h(t)-g(t)}\right|+ \sup_{(t,y)\in D_{T_1}}\left|\frac{(h'(t)-g'(t))y}{h(t)-g(t)}\right|\\
\leq &\ \frac{4(|g^*|+|h^*|+2)}{h_0}:=C_0.
\end{aligned}
\end{equation}

Moreover, for any $P_1=(s_1,y_1)$ and $P_2=(s_2,y_2)$ belonging to $D_{T_1}$, with parabolic distance $\delta(P_1,P_2)=\sqrt{(y_1-y_2)^2+|s_1-s_2|}$, we have

\begin{equation}\begin{aligned}
w(R):=&\ D\sup_{P_1,P_2\in D_{T_1} \atop \delta(P_1,P_2)\leq R}|\rho^2(s_1)-\rho^2(s_2)|\\
\leq &\ \frac{448D}{h_0^3}|h(s_2)-h(s_1)+g(s_1)-g(s_2)|\\
\leq &\ \frac{896D}{h_0^3}(2+|g^*|+|h^*|)R^2\to 0\ \text{as }R\to 0.
\end{aligned}
\end{equation}

It is easily seen that $\zeta$ and $f_2(z_1, z_2, z_3)$ in (18) are bounded in $L^\infty$. Hence, for any $(g,h,z_2)\in Y_T$, we can apply the standard $L^p$ theory and the Sobolev embedding theorem to conclude that (18) admits a unique solution $\tilde{z}_2$ with

\begin{equation}

\|\tilde{z}_{2}\|_{C^{(1+\gamma)/2, 1+\gamma}(D_{T_1})}\leq C_{T_1}\| \tilde{z}_{2}\|_{W_p^{1,2}(D_{T_1})}\leq C_1,

\end{equation}

where $p > 3/(2-\gamma)$, $C_1$ depends on $p$, $\|f_2(z_1,z_2,z_3)\|_{L^p(D_{T_1})}$, $\|I_0\|_{C^2([-h_0,h_0])}$, $C_0$, $h_0$, $D_{T_1}$ and $C_{T_1}$, and $C_{T_1}$ depends on $D_{T_1}$ and $\gamma$. Moreover, since $z_1\geq 0$, we see that 0 is a lower solution of (18), and by the strong parabolic maximum principle and the Hopf boundary lemma we have $\tilde{z}_2(t,y) > 0$ for $(t,y)\in(0,T_1]\times(-1,1)$ and $\pm \tilde{z}_{2y}(t,\pm 1) < 0$ for $t\in(0,T_1]$.

Step 7: A fixed point problem.

With $\tilde z_2$ obtained in Step 6, we set

\begin{equation}

\begin{cases}

\tilde{g}(t):=-h_0-\mu\displaystyle\int^{t}_{0}\rho(\tau)\tilde{z}_{2y}(\tau,-1)d\tau,\\ \tilde{h}(t):=h_0-\mu\displaystyle\int^{t}_{0}\rho(\tau)\tilde{z}_{2y}(\tau,1)d\tau \end{cases}\text{for }t\in[0,T_1].

\end{equation}

Then $\tilde{g}(0)=-h_0$, $\tilde{h}(0)=h_0$, $\tilde{g}'(0)=g^{*}$, $\tilde{h}'(0)=h^{*}$, $-\tilde{g}'(t),\tilde{h}'(t) > 0$ for $t\in[0,T_1]$ and

\begin{equation}

\|\tilde{h}\|_{C^{1+\gamma/2}([0, T_1])}+\|\tilde{g}\|_{C^{1+\gamma/2}([0, T_1])} \leq C_2,

\end{equation}

where $C_2$ depends on $C_1$.
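For readers who want to experiment with (23), here is a minimal sketch of how $\tilde{g}$ and $\tilde{h}$ could be computed from sampled boundary derivatives. It is an illustration only: the trapezoidal rule, the function name `update_boundaries` and the sample data are assumptions made here, not part of the proof.

```python
def update_boundaries(times, rho, zy_left, zy_right, h0, mu):
    """Discrete analogue of (23) using the cumulative trapezoidal rule.

    times    : increasing sample times with times[0] == 0
    rho      : samples of rho(tau) = 2 / (h(tau) - g(tau))
    zy_left  : samples of the y-derivative of z2-tilde at y = -1
    zy_right : samples of the y-derivative of z2-tilde at y = +1
    Returns (g_tilde, h_tilde) as lists aligned with `times`.
    """
    g_tilde, h_tilde = [-h0], [h0]
    for k in range(1, len(times)):
        dt = times[k] - times[k - 1]
        left = 0.5 * dt * (rho[k - 1] * zy_left[k - 1] + rho[k] * zy_left[k])
        right = 0.5 * dt * (rho[k - 1] * zy_right[k - 1] + rho[k] * zy_right[k])
        g_tilde.append(g_tilde[-1] - mu * left)
        h_tilde.append(h_tilde[-1] - mu * right)
    return g_tilde, h_tilde


if __name__ == "__main__":
    ts = [0.1 * k for k in range(6)]
    g_new, h_new = update_boundaries(
        ts, rho=[1.0] * 6, zy_left=[2.0] * 6, zy_right=[-2.0] * 6, h0=1.0, mu=0.5
    )
    print(g_new[-1], h_new[-1])
```

With a positive derivative at $y=-1$ and a negative one at $y=1$, as the Hopf lemma provides, the left boundary moves left and the right boundary moves right, matching the sign of $\tilde{g}'$ and $\tilde{h}'$ in the text.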

Now, we define a mapping $\mathfrak{F}:Y_{T}\rightarrow [C^{1}([0,T])]^{2}\times C(D_T)$ by

\begin{eqnarray*}

\mathfrak{F}(g,h, z_2)=(\tilde{g},\tilde{h}, \tilde{z}_2)|_{Y_T}.

\end{eqnarray*}

Clearly, if $(g,h,z_2)$ is a fixed point of $\mathfrak{F}$, then $(E,S,I)$ is a solution of system (3) with $(E,S):=\mathcal{N}(g,h,z_2)$, $I(t,x):=z_2(t,y)$, and $\mathcal{N}$ given by (14).

Step 8. We show that $\mathfrak{F}$ is a contraction mapping in $Y_T$ with $B:=C_1$ in the definition of $Y_{3,T}$, provided that $T > 0$ is small enough. (The extension trick from Step 3 was already used in Step 6, and it is needed here as well.)

Obviously,

\begin{equation*}

\sup\limits_{-1\leq y_1,y_2\leq 1,t\in[0,T_1]\atop y_1\neq y_2}\frac{|\tilde{z}_2(t,y_1)-\tilde{z}_2(t,y_2)|}{|y_1-y_2|}\leq \|\tilde{z}_{2y}\|_{L^\infty(D_{T_1})}\leq C_1=B.

\end{equation*}

\begin{equation*}

\sup\limits_{-1\leq y_1,y_2\leq 1,t\in[0,T_1]\atop y_1\neq y_2}\frac{|\tilde{z}_2(t,y_1)-\tilde{z}_2(t,y_2)|}{|y_1-y_2|}\leq \|\tilde{z}_{2y}\|_{L^\infty(D_{T_1})}\leq C_1=B.

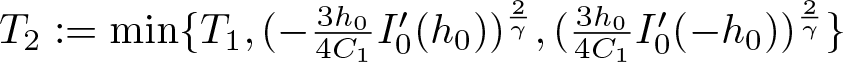

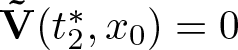

\end{equation*} Denote  $T_2:=\min\{T_1,(-\frac{3h_0}{4C_1}I_0'(h_0))^{\frac{2}{\gamma}},(\frac{3h_0}{4C_1}I_0'(-h_0))^{\frac{2}{\gamma}}\}$. It follows from (22) that

$T_2:=\min\{T_1,(-\frac{3h_0}{4C_1}I_0'(h_0))^{\frac{2}{\gamma}},(\frac{3h_0}{4C_1}I_0'(-h_0))^{\frac{2}{\gamma}}\}$. It follows from (22) that

\begin{eqnarray*}

|\tilde{z}_{2y}(t,1)-z_{20}'(1)|\leq C_1t^{\frac{\gamma}{2}}\leq-\frac{3h_0}{4}I_0'(h_0)\,\text{for }t\in[0,T_2].

\end{eqnarray*}

\begin{eqnarray*}

|\tilde{z}_{2y}(t,1)-z_{20}'(1)|\leq C_1t^{\frac{\gamma}{2}}\leq-\frac{3h_0}{4}I_0'(h_0)\,\text{for }t\in[0,T_2].

\end{eqnarray*} Recalling ![]() $z_{20}'(1)=I_0'(h_0)h_0$ and

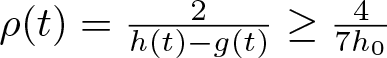

$z_{20}'(1)=I_0'(h_0)h_0$ and  $\rho(t)=\frac 2{h(t)-g(t)}\geq \frac{4}{7h_0}$, one has

$\rho(t)=\frac 2{h(t)-g(t)}\geq \frac{4}{7h_0}$, one has

\begin{eqnarray*}

\tilde{h}'(t)=-\mu\rho(t)\tilde{z}_{2y}(t,1)\geq -\frac{4\mu}{7h_0}\tilde{z}_{2y}(t,1)\geq-\frac{1}{7}\mu I_0'(h_0)=\frac{h^*}{7}\,\text{for }t\in[0,T_2].

\end{eqnarray*}

Similarly,

\begin{eqnarray*}

\tilde{g}'(t)=-\mu\rho(t)\tilde{z}_{2y}(t,-1)\leq \frac{g^*}{7}\,\text{for }t\in[0,T_2].

\end{eqnarray*}

Moreover, for any fixed

\begin{eqnarray*}

T\leq \min\{T_1, T_2,\,C_2^{-2/\gamma},\,C_1^{-2/(1+\gamma)}\},

\end{eqnarray*}

using (22) and (24), we obtain

\begin{align*}

&\|\tilde{z}_2-z_{20}\|_{L^{\infty}(D_{T})}\leq \|\tilde{z}_2\|_{C^{(1+\gamma)/2,0}(D_{T})}T^{(1+\gamma)/2}\leq \|\tilde{z}_2\|_{C^{(1+\gamma)/2,0}(D_{T_1})}T^{(1+\gamma)/2}\\

&\quad \leq C_1 T^{(1+\gamma)/2}\leq 1, \\[1mm]

&\|\tilde{g}'-g^{*}\|_{L^{\infty}([0,T])}\leq \|\tilde{g}'\|_{C^{\gamma/2}([0,T])}T^{\gamma/2}\leq \|\tilde{g}'\|_{C^{\gamma/2}([0,T_1])}T^{\gamma/2}\leq C_2 T^{\gamma/2}\leq 1,\\[1mm]

&\|\tilde{h}'-h^{*}\|_{L^{\infty}([0,T])}\leq \|\tilde{h}'\|_{C^{\gamma/2}([0,T])}T^{\gamma/2}\leq \|\tilde{h}'\|_{C^{\gamma/2}([0,T_1])}T^{\gamma/2}\leq C_2 T^{\gamma/2}\leq 1.

\end{align*}

Therefore, $\mathfrak{F}$ maps $Y_{T}$ into itself.
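The smallness conditions on $T$ can be bundled into a small helper. The sketch below is hypothetical (the function names and the sample constants are assumptions, as is the range $0<\gamma<1$); it evaluates the minimum appearing above and checks the two inequalities $C_1T^{(1+\gamma)/2}\leq 1$ and $C_2T^{\gamma/2}\leq 1$ that this choice is designed to guarantee.

```python
def admissible_T(T1, T2, C1, C2, gamma):
    """Largest T allowed by T <= min{T1, T2, C2^(-2/gamma), C1^(-2/(1+gamma))}."""
    if not (0.0 < gamma < 1.0) or min(T1, T2, C1, C2) <= 0.0:
        raise ValueError("expected 0 < gamma < 1 and positive T1, T2, C1, C2")
    return min(T1, T2, C2 ** (-2.0 / gamma), C1 ** (-2.0 / (1.0 + gamma)))


def smallness_holds(T, C1, C2, gamma, tol=1e-12):
    """Check C1 * T^((1+gamma)/2) <= 1 and C2 * T^(gamma/2) <= 1 up to rounding."""
    return (C1 * T ** ((1.0 + gamma) / 2.0) <= 1.0 + tol
            and C2 * T ** (gamma / 2.0) <= 1.0 + tol)


if __name__ == "__main__":
    T = admissible_T(T1=1.0, T2=0.5, C1=4.0, C2=3.0, gamma=0.5)  # hypothetical constants
    print(T, smallness_holds(T, C1=4.0, C2=3.0, gamma=0.5))
```

Any smaller positive $T$ also satisfies both inequalities, since the left-hand sides are increasing in $T$.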

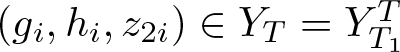

Next, we prove that $\mathfrak{F}$ is a contraction map on $Y_T$ for all small $T > 0$. Let $(g_i,h_i, z_{2i})\in Y_T=Y_{T_1}^T$, $(E_i,S_i)=\mathcal{N}(g_i,h_i, z_{2i})$, $\tilde{z}_{2i}$ be the solution of (18) for $i=1,2$, and

Then $W$ solves

\begin{equation}

\begin{cases}

W_t-D\rho_1^2W_{yy}-D\zeta_1W_y=\Phi, &t\in(0,T_1],y\in[-1,1],\\

W(t,-1)=W(t,1)=0, &t\in(0,T_1],\\

W(0,y)=0, &y\in[-1,1],

\end{cases}

\end{equation}

with

\begin{equation*}

\Phi:=D\tilde{z}_{22yy}(\rho_1^2-\rho_2^2)+D\tilde{z}_{22y}(\zeta_1-\zeta_2)+f_2(z_{11},z_{21},z_{31})-f_2(z_{12},z_{22},z_{32}),

\end{equation*}

where $\rho_i:=\rho_{g_i,h_i}$, $\zeta_i:=\zeta_{g_i,h_i}(t,y)$, $z_{1i}(t,y)=E_i(t,x)$, $z_{3i}(t,y)=S_i(t,x)$ with

\begin{equation*}
y=\Psi_i^{-1}(t,x)=\frac{2x-g_i(t)-h_i(t)}{h_i(t)-g_i(t)} \quad \text{for } x\in[g_i(t),h_i(t)]\text{ and }i=1,2.
\end{equation*}
By direct calculations, we have

\begin{equation}
\begin{aligned}
\|\rho^2_1-\rho^2_2\|_{C(D_{T_1})}&=\sup_{(t,y)\in D_{T_1}}|\rho^2_{g_1,h_1}(t)-\rho^2_{g_2,h_2}(t)|\\
&\leq \frac{2}{h_0}\sup_{(t,y)\in D_{T_1}}\left\lvert\frac{2}{h_1(t)-g_1(t)}-\frac{2}{h_2(t)-g_2(t)} \right\rvert\\
&\leq R_1(\|g_1-g_2\|_{C([0,T_1])}+\|h_1-h_2\|_{C([0,T_1])}),
\end{aligned}
\end{equation}
\begin{equation}
\begin{aligned}
\|\zeta_1-\zeta_2\|_{C(D_{T_1})}&=\sup_{(t,y)\in D_{T_1}}\left\lvert \frac{h_1'+g_1'}{h_1-g_1}-\frac{h_1'-g_1'}{h_1-g_1}y-\frac{h_2'+g_2'}{h_2-g_2}+\frac{h_2'-g_2'}{h_2-g_2}y\right\rvert\\
& \leq R_2(\|g_1-g_2\|_{C^1([0,T_1])}+\|h_1-h_2\|_{C^1([0,T_1])}),
\end{aligned}
\end{equation}
where $R_1$ and $R_2$ are constants depending on $h_0,g^*,h^*$ and $T_1$.
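
To make the first estimate concrete, the following sketch computes one admissible value of $R_1$. It assumes $\rho_{g,h}(t)=2/(h(t)-g(t))$, as suggested by the second line of that estimate, and $h_i(t)-g_i(t)\geq 2h_0$ on $[0,T_1]$, which holds when the fronts start from $\pm h_0$ and move outward. Both are assumptions made for this illustration.

\begin{align*}
\left\lvert\frac{2}{h_1-g_1}-\frac{2}{h_2-g_2}\right\rvert
&=\frac{2\,\lvert (h_2-h_1)-(g_2-g_1)\rvert}{(h_1-g_1)(h_2-g_2)}
\leq \frac{1}{2h_0^2}\big(\lvert g_1-g_2\rvert+\lvert h_1-h_2\rvert\big),\\
\|\rho_1^2-\rho_2^2\|_{C(D_{T_1})}
&\leq \frac{2}{h_0}\cdot\frac{1}{2h_0^2}\big(\|g_1-g_2\|_{C([0,T_1])}+\|h_1-h_2\|_{C([0,T_1])}\big).
\end{align*}

Under these assumptions $R_1=h_0^{-3}$ would suffice. The bound for $\zeta_1-\zeta_2$ follows the same pattern, with the derivatives $g_i'$ and $h_i'$ accounting for the $C^1$ norms on the right-hand side.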

We now estimate $\|z_{11}-z_{12}\|_{L^\infty(D_{T_1})}$ and $\|z_{31}-z_{32}\|_{L^\infty(D_{T_1})}$. Let $I_i(t,x):=z_{2i}(t,y)$ for $(t,y)\in D_{T_1}$, $\bar{I}_i(t,x)$ the zero extension of $I_i$ to $[0,T_1]\times \mathbb{R}$, and

\begin{align*}
\mathbf{V}_i&:=\left(\begin{array}{c}
E_i \\
S_i
\end{array}\right), \quad \mathbf{F}_i(t,x,\mathbf{V}_i)=\left(\begin{array}{c}
f_1(E_i,\bar{I}_i(t,x),S_i) \\
f_3(E_i,\bar{I}_i(t,x),S_i)
\end{array}\right)\\
&\quad \text{for }(t,x)\in[0,T_1]\times\mathbb{R}\text{ and }i=1,2.
\end{align*}
Then $\mathbf{V}_i$ $(i=1,2)$ solves

and thus ${\mathbf{\tilde{V}}}:=\mathbf{V}_1-\mathbf{V}_2$ satisfies the following equation:

Similar to (16), for any $(t,x)\in[0,T_1]\times\mathbb{R}$, we have

\begin{equation}
\begin{cases}
|\mathbf{F}_1(t,x,\mathbf{V}_1)-\mathbf{F}_1(t,x,\mathbf{V}_2)|\leq N_1|{\mathbf{\tilde{V}}}|;\\
|\mathbf{F}_1(t,x,\mathbf{V}_2)- \mathbf{F}_2(t,x,\mathbf{V}_2)|\leq N_1|\bar{I}_1(t,x)-\bar{I}_2(t,x)|.
\end{cases}
\end{equation}
It follows that, for any $0\leq \hat t\leq t\leq T_1$ and $x\in \mathbb{R}$,

\begin{equation*}
| {\mathbf{\tilde{V}}}(t,x)|\leq |{\mathbf{\tilde{V}}}(\hat t,x)|+ N_1\int_{\hat t}^t \Big(|{\mathbf{\tilde{V}}}(s,x)|+|\bar{I}_1(s,x)-\bar{I}_2(s,x)|\Big)\,ds.
\end{equation*}
We may then use the Gronwall inequality, similar to before, to obtain

\begin{equation}
|{\mathbf{\tilde{V}}}(t,x)|\leq e^{N_1(t-\hat t)}\left[|{\mathbf{\tilde{V}}}(\hat t,x)|+N_1\int_{\hat t}^t|\bar{I}_1(s,x)-\bar{I}_2(s,x)|\,ds\right] \quad\text{for }(t,x)\in[\hat t,T_1]\times\mathbb{R}.
\end{equation}
Denote

\begin{eqnarray*}
&&G_M(t):=\max\{g_1(t),g_2(t)\},\quad G_m(t):=\min\{g_1(t),g_2(t)\},\\
&&H_M(t):=\max\{h_1(t),h_2(t)\}, \quad H_m(t):=\min\{h_1(t),h_2(t)\}.
\end{eqnarray*}
For any $x_0\in(-\infty,-h_0)\cup(h_0,\infty)$, let $t_i^*$, $i=1,2$, be the positive constants defined by

\begin{equation*}
t_i^*:=\begin{cases}
t_g, &\text{if }x_0=g_i(t_g)\text{ and }x_0\in[g_i(T_1),-h_0),\\
t_h, &\text{if }x_0=h_i(t_h)\text{ and }x_0\in(h_0,h_i(T_1)],\\
T_1,&\text{if }x_0\not\in[g_i(T_1),h_i(T_1)].
\end{cases}
\end{equation*}
For any fixed $t_0\in(0,T_1]$, we divide the estimate of $|{\mathbf{\tilde{V}}}(t_0,x_0)|$ into several cases according to the position of $x_0\in\mathbb{R}$.
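
Before turning to the cases, the Gronwall step used above can be spelled out for fixed $x\in\mathbb{R}$ and $\hat t\in[0,T_1]$. In the sketch below, $u$, $\varphi$ and $\alpha$ are shorthand introduced only for this illustration, and $u$ is assumed to be continuous on $[\hat t,T_1]$.

\begin{align*}
&u(t):=|{\mathbf{\tilde{V}}}(t,x)|,\qquad \varphi(s):=|\bar{I}_1(s,x)-\bar{I}_2(s,x)|,\qquad \alpha(t):=u(\hat t)+N_1\int_{\hat t}^t\varphi(s)\,ds,\\
&u(t)\leq \alpha(t)+N_1\int_{\hat t}^t u(s)\,ds
\quad\Longrightarrow\quad
u(t)\leq \alpha(t)\,e^{N_1(t-\hat t)} \quad\text{for } t\in[\hat t,T_1].
\end{align*}

The implication is the integral form of the Gronwall inequality for a nondecreasing function $\alpha$, which applies here because $\varphi\geq 0$. Its right-hand side is the pointwise bound on $|{\mathbf{\tilde{V}}}(t,x)|$ obtained above.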

Case (i). $x_0\in[H_M(t_0),\infty)$. Clearly, $H_M(t)\leq H_M(t_0)\leq x_0$ for $0 \lt t\leq t_0$. Hence, $E_i(t,x_0)=\bar{I}_i(t,x_0)=0$ for $t\in[0,t_0]$ and $i=1,2$, and $S=S_i(t,x_0)$ $(i=1,2)$ is a solution to

\begin{equation}
S_t=(a-b)S\left(1-\frac{S}{K}\right)\quad\text{for } t\in(0,t_0], \quad S(0,x_0)=S_0(x_0).
\end{equation}
It follows that $S_1(t,x_0)=S_2(t,x_0)$ and hence ${\mathbf{\tilde{V}}}(t,x_0)=0$ for $t\in[0,t_0]$.
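
For reference, the logistic problem in Case (i) can be solved in closed form, which makes the identity $S_1(t,x_0)=S_2(t,x_0)$ explicit. The sketch assumes $a \gt b$, $K \gt 0$ and $S_0(x_0)\geq 0$; the abbreviation $r:=a-b$ is introduced here for brevity.

\begin{equation*}
S(t,x_0)=\frac{K\,S_0(x_0)\,e^{rt}}{K+S_0(x_0)\left(e^{rt}-1\right)},\qquad t\in[0,t_0].
\end{equation*}

The right-hand side depends only on $S_0(x_0)$ and the parameters $a$, $b$, $K$, not on $(g_i,h_i,z_{2i})$, so both $S_1(\cdot,x_0)$ and $S_2(\cdot,x_0)$ coincide with it by uniqueness for the initial value problem.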

Case (ii). $x_0\in[H_m(t_0),H_M(t_0))$. Without loss of generality, we assume that $h_1(t_0) \lt h_2(t_0)$. Then $H_m(t_0)=h_1(t_0)$, $H_M(t_0)=h_2(t_0)$, $0 \lt t_2^* \lt t_0$, and

Hence,

with $S(t,x_0)$ satisfying (30) for $t\in[0,t_2^*]$, and $E_2(t_2^*, x_0)=E_2(t_2^*, h_2(t_2^*))=0$. It follows that ${\mathbf{\tilde{V}}}(t_2^*,x_0)=0$. Combining this with (29) (with $\hat t=t_2^*$) and (31) we obtain

\begin{eqnarray*}
|{\mathbf{\tilde{V}}}(t_0,x_0)|&\leq& N_1e^{N_1T_1}\int_{t_2^*}^{t_0}I_2(s,x_0)\,ds\\
&\leq & N_1e^{N_1T_1}\|I_{2}(\cdot,x_0)\|_{L^\infty([0,T_1])}(t_0-t_2^*)\\
&\leq & N_1e^{N_1T_1}\|z_2\|_{L^\infty(D_{T_1})}\frac{7}{h^*}(h_2(t_0)-h_2(t_2^*))\\
&\leq & N_1e^{N_1T_1}(1+\|z_{20}\|_{L^\infty([-1,1])})\frac{7}{h^*}(h_2(t_0)-h_1(t_0))=:M_1(h_2(t_0)-h_1(t_0)).
\end{eqnarray*}

Case (iii). $x_0\in(h_0, H_m(t_0))$. Clearly, $t_1^*,t_2^* \lt t_0$. Without loss of generality, we assume that $t_2^*\leq t_1^*$. It follows that

Hence,

From (29) (with $\hat t=t_1^*$) and Case (ii), we see that

\begin{eqnarray*}
|{\mathbf{\tilde{V}}}(t_0,x_0)|&\leq& e^{N_1T_1}\left[|{\mathbf{\tilde{V}}}(t_1^*,x_0)|+N_1\int_{t_1^*}^{t_0}|I_1(s,x_0)-I_2(s,x_0)|\,ds\right]\\
&\leq & e^{N_1T_1}\left(M_1|h_2(t_1^*)-h_1(t_1^*)|+N_1T_1\|I_1(\cdot,x_0)-I_2(\cdot,x_0)\|_{L^\infty([0,T_1])}\right)\\
&\leq & M_2\left(\|h_2-h_1\|_{L^\infty([0,T_1])}+\|I_1(\cdot,x_0)-I_2(\cdot,x_0)\|_{L^\infty([0,T_1])}\right),
\end{eqnarray*}
where $M_2$ depends on $T_1$, $N_1$ and $M_1$.
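
One admissible choice of the constant $M_2$ can be read off from the second line of the estimate in Case (iii). The sketch uses only the elementary bound $|h_2(t_1^*)-h_1(t_1^*)|\leq\|h_2-h_1\|_{L^\infty([0,T_1])}$; the explicit formula is an illustration and not necessarily the value intended in the original argument.

\begin{equation*}
M_2:=e^{N_1T_1}\max\{M_1,\ N_1T_1\}.
\end{equation*}

With this choice, each of the two terms in the second line is bounded by $M_2$ times the corresponding norm, which gives the third line.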

Case (iv). $x_0\in[-h_0,h_0]$. In this case, it is clear that