A common and subjective task is classifying objects or subjects, hereafter called items, into predefined, mutually exclusive categories. Examples include content analysis that classifies subjective communications into content categories, meta-analysis that groups empirical studies based on underlying study settings, and medicine that requires appropriate diagnoses of patients. As subsequent analyses and conclusions critically depend on the obtained categorical data, validation is needed. It is, therefore, good practice to assign multiple independent raters and compute the percentage of cases in which these raters chose the same category and thus agreed, where a low percent agreement suggests discarding the data and improving the process. However, researchers also recognized early that this percent agreement is often substantially inflated: It counts not only genuine agreements but also chance agreements in which at least one rater was unsure and coincidentally guessed the same category as another rater, which becomes more likely when there are only a few categories to choose from. These concerns have resulted in chance-corrected agreement coefficients. We provide a review with novel interpretations and connections for so-called quadratic weights.

1 Chance-corrected agreement coefficients

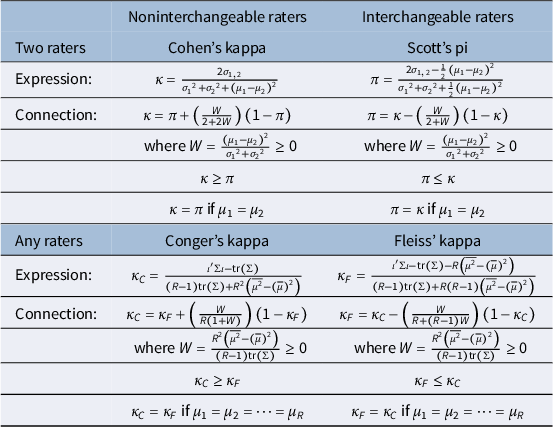

Cohen’s kappa and Scott’s pi are two classic agreement coefficients for two raters (Cohen, 1960; Scott, 1955). The chance correction in Cohen’s kappa assumes that raters use their own category proportions (computed from the data) when guessing an item’s category. In contrast, Scott’s pi assumes that the guessing probabilities are the same for both raters, obtained by averaging the rater-specific category proportions over the two raters. When extending beyond two raters (i.e., moving to multirater coefficients), Cohen’s kappa generalizes into Conger’s kappa (Conger, 1980), and Scott’s pi generalizes into Fleiss’ kappa (Fleiss, 1971). Both multirater coefficients compute the percentage of observed agreement across all items and all rater pairs. However, whereas Conger’s kappa computes the probability of chance agreement by averaging this probability across all rater pairs using rater-specific category proportions, Fleiss’ kappa uses the same (averaged) category proportions for all raters.
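The two chance corrections can be contrasted in a minimal Python sketch. It assumes that every rater classifies every item and represents the data as a list of items, each holding one category label per rater; the function names and toy ratings are hypothetical illustrations, not a reference implementation. With two raters, `conger_kappa` reduces to Cohen’s kappa and `fleiss_kappa` to Scott’s pi.

```python
from itertools import combinations


def rater_proportions(ratings, categories):
    """Category proportions per rater for an items x raters list of labels."""
    n_items, n_raters = len(ratings), len(ratings[0])
    return [
        {c: sum(item[r] == c for item in ratings) / n_items for c in categories}
        for r in range(n_raters)
    ]


def observed_agreement(ratings):
    """Fraction of agreeing rater pairs, averaged over all items and all rater pairs."""
    pairs = list(combinations(range(len(ratings[0])), 2))
    agree = sum(item[r] == item[s] for item in ratings for r, s in pairs)
    return agree / (len(ratings) * len(pairs))


def conger_kappa(ratings, categories):
    props = rater_proportions(ratings, categories)
    pairs = list(combinations(range(len(props)), 2))
    # Chance agreement averaged over rater pairs, each rater using its own proportions.
    p_e = sum(sum(props[r][c] * props[s][c] for c in categories) for r, s in pairs) / len(pairs)
    p_o = observed_agreement(ratings)
    return (p_o - p_e) / (1 - p_e)


def fleiss_kappa(ratings, categories):
    props = rater_proportions(ratings, categories)
    # One set of category proportions, averaged over all (interchangeable) raters.
    avg = {c: sum(p[c] for p in props) / len(props) for c in categories}
    p_e = sum(avg[c] ** 2 for c in categories)
    p_o = observed_agreement(ratings)
    return (p_o - p_e) / (1 - p_e)


two_raters = [[1, 1], [1, 1], [2, 2], [1, 2]]  # hypothetical toy data
three_raters = [[1, 1, 2], [2, 2, 2], [1, 2, 2], [1, 1, 1]]
print(round(conger_kappa(two_raters, [1, 2]), 4), round(fleiss_kappa(two_raters, [1, 2]), 4))
# prints: 0.5 0.4667
```

For the hypothetical three-rater data, the sketch gives 5/13 (about 0.385) for Conger’s kappa and 1/3 for Fleiss’ kappa; the only difference lies in which category proportions enter the chance agreement.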

Conceptually, the main difference between Scott’s pi and Fleiss’ kappa, on the one hand, and Cohen’s and Conger’s kappas, on the other hand, is that the former two coefficients consider raters to be interchangeable (by averaging the raters’ category proportions), whereas the latter two coefficients do not (Banerjee et al., 1999; Krippendorff, 2004). In addition to Scott’s pi and Fleiss’ kappa, coefficients with interchangeable raters include Krippendorff’s alpha (Krippendorff, 2018) and the recently introduced uniform prior coefficient (Van Oest, 2019; Van Oest & Girard, 2022). These two coefficients involve traditional and Bayesian small-sample corrections, respectively, and converge to Fleiss’ kappa in large samples. In the alternative class of noninterchangeable raters, Light’s and Hubert’s kappas complement those of Cohen and Conger (Hubert, 1977; Light, 1971). Light’s version is the average Cohen’s kappa across all rater pairs (Conger, 1980). In contrast, Hubert’s version considers simultaneous agreement among all raters without breaking them up into pairs. It coincides with Conger’s kappa if we measure the degree of simultaneous agreement by the fraction of rater pairs that agree. More generally, the weighted counterparts of these two coefficients coincide if the weight for simultaneous agreement is set to the corresponding average weight across all rater pairs, which is a well-known choice (Mielke et al., 2007, 2009; Schuster & Smith, 2005; Warrens, 2012a).
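Light’s kappa can be sketched in Python as the plain average of pairwise Cohen’s kappas. The sketch assumes complete data (every rater classifies every item); the helper names and toy ratings are hypothetical.

```python
from itertools import combinations


def cohen_kappa(x, y, categories):
    """Unweighted Cohen's kappa for two equally long sequences of category labels."""
    n = len(x)
    p_o = sum(a == b for a, b in zip(x, y)) / n
    p_e = sum((x.count(c) / n) * (y.count(c) / n) for c in categories)
    return (p_o - p_e) / (1 - p_e)


def light_kappa(ratings, categories):
    """Average of Cohen's kappa over all rater pairs (items x raters input)."""
    columns = list(zip(*ratings))  # one tuple of labels per rater
    kappas = [
        cohen_kappa(columns[r], columns[s], categories)
        for r, s in combinations(range(len(columns)), 2)
    ]
    return sum(kappas) / len(kappas)


three_raters = [[1, 1, 2], [2, 2, 2], [1, 2, 2], [1, 1, 1]]  # hypothetical toy data
print(round(light_kappa(three_raters, [1, 2]), 4))  # prints: 0.4
```

Here the three pairwise kappas are 0.5, 0.2, and 0.5. For the same toy ratings, Conger’s kappa, which averages the chance agreement rather than the pairwise kappas, works out to 5/13 (about 0.385), so the two noninterchangeable-rater coefficients generally differ.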

Coefficients with noninterchangeable raters are suitable when all raters classify all items and particularly natural when these raters are fixed (i.e., deliberately selected), such as specific experts, devices, or coding models that constitute the population of interest. In contrast, coefficients with interchangeable raters are needed when raters vary across items (Fleiss, 1971; Janson & Olsson, 2004; Landis & Koch, 1977a; Vanbelle, 2019). However, the preferred option is less clear-cut when all raters classify all items while being randomly selected to represent a larger population. To decide whether raters are interchangeable, Zwick (1988) proposed empirically investigating whether their category proportions differ beyond random noise. A well-known “paradox” of Cohen’s kappa, representing noninterchangeable raters, is that, for a given percentage of observed agreement, this kappa increases as the raters’ category proportions deviate more from each other, suggesting that rater bias gets rewarded (Feinstein & Cicchetti, 1990). However, a counterargument is that the involved ceteris paribus assumption is unrealistic: A change in classification outcomes that introduces additional rater bias typically also decreases the percentage of observed agreement and ultimately Cohen’s kappa, mitigating or even resolving the issue (Hoehler, 2000).
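The first paradox can be reproduced with two hypothetical 2 × 2 tables of counts (rows: rater 1, columns: rater 2). Both tables have 60% observed agreement, but in the second table the raters’ proportions for the first category differ more (0.6 versus 0.3 instead of 0.6 versus 0.7). The function name and counts are illustrative assumptions.

```python
def cohen_kappa_from_table(table):
    """Observed agreement and Cohen's kappa from a square table of counts."""
    n = sum(map(sum, table))
    k = len(table)
    p_o = sum(table[i][i] for i in range(k)) / n
    rows = [sum(table[i]) / n for i in range(k)]                       # rater 1 proportions
    cols = [sum(table[i][j] for i in range(k)) / n for j in range(k)]  # rater 2 proportions
    p_e = sum(r * c for r, c in zip(rows, cols))
    return p_o, (p_o - p_e) / (1 - p_e)


similar = [[45, 15], [25, 15]]    # proportions for category 1: 0.6 and 0.7
dissimilar = [[25, 35], [5, 35]]  # proportions for category 1: 0.6 and 0.3
for table in (similar, dissimilar):
    p_o, kappa = cohen_kappa_from_table(table)
    print(round(p_o, 2), round(kappa, 4))
# prints "0.6 0.1304" and then "0.6 0.2593"
```

With identical observed agreement, the table with more divergent rater proportions obtains the higher kappa, which is exactly the ceteris paribus comparison that the counterargument questions.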

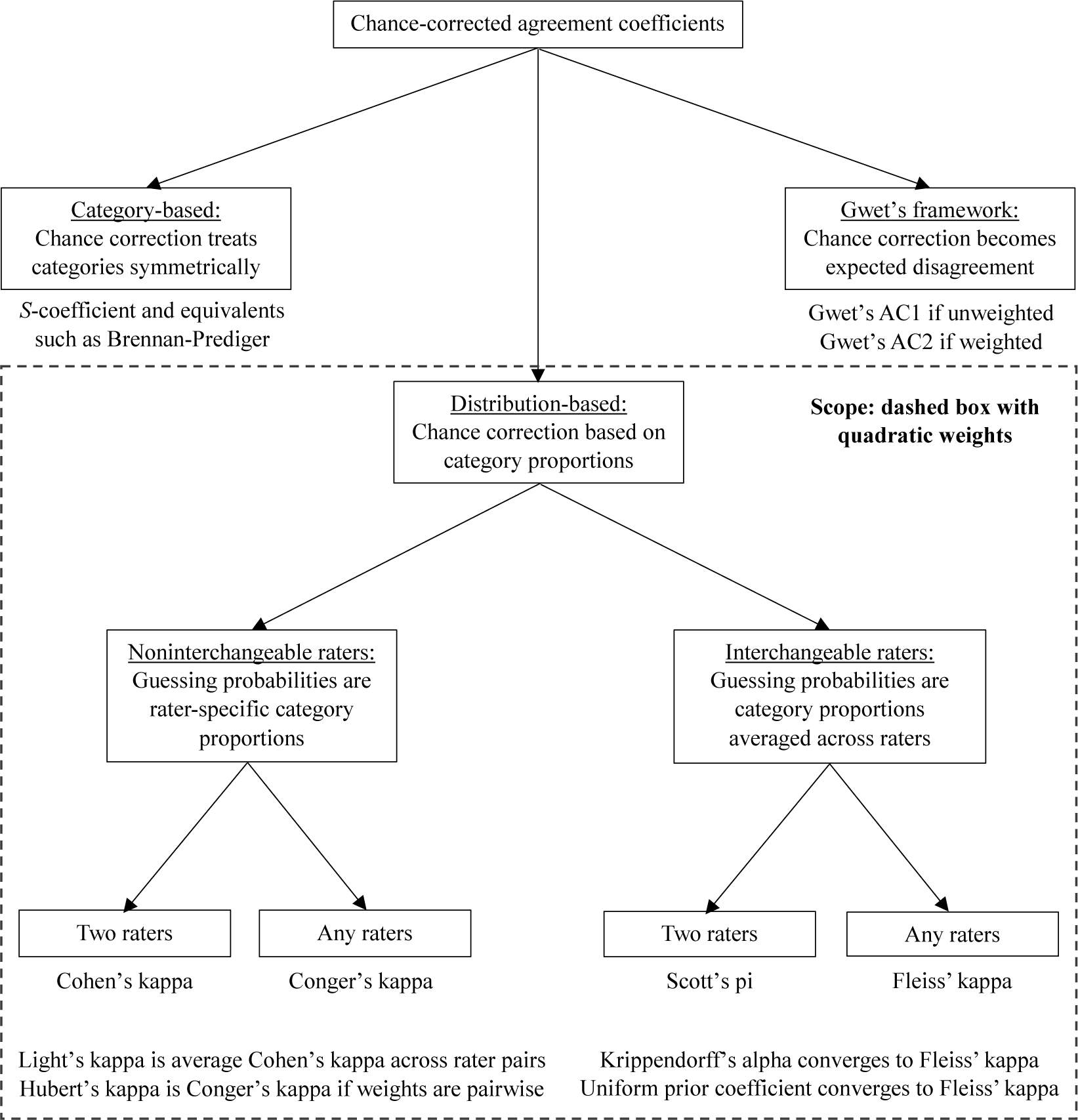

All agreement coefficients discussed so far are distribution-based: their chance correction uses the raters’ category proportions. An influential alternative class consists of category-based coefficients, whose chance correction uses only the number of categories and assumes equal category probabilities when raters guess an item’s category. The S-coefficient represents this second class (Bennett et al., 1954). Other researchers rediscovered this coefficient via alternative but ultimately equivalent approaches (Brennan & Prediger, 1981; Byrt et al., 1993; Holley & Guilford, 1964; Janson & Vegelius, 1979; Maxwell, 1977). A third class that has become important is Gwet’s framework, yielding the AC1 and AC2 coefficients (Gwet, 2008, 2014). Figure 1 summarizes all coefficients to guide stakeholders, such as applied researchers, psychometricians, statisticians, and other professionals. The scope of the present study is the distribution-based class, including Cohen’s and Fleiss’ kappas, the most frequently used and cited two-rater and multirater coefficients (e.g., Andres & Hernandez, 2020; Vanbelle, 2019).

Overview of chance-corrected agreement coefficients plus the scope of the present study.

A second well-known “paradox” of Cohen’s kappa (i.e., of distribution-based coefficients) is that a high observed agreement among raters may still result in a low coefficient value if the categories have substantially unequal proportions (Feinstein & Cicchetti, 1990). However, others argued that Cohen’s kappa does what it is supposed to do (Vach, 2005; Zwick, 1988). Typically, researchers want raters to agree not only on highly prevalent categories (e.g., not having a disease) but particularly on rare categories (e.g., having that disease). A situation in which raters usually agree on highly prevalent categories but never on rare categories may have serious consequences. Yet the percent observed agreement, the S-coefficient, and Gwet’s coefficient would all take high values in that situation. The S-coefficient linearly transforms the observed agreement using only the number of categories without other information (Zwick, 1988); Gwet’s coefficient compares the observed agreement with the corresponding expected disagreement, resulting in higher values as the category proportions become more unequal (Vach & Gerke, 2023).
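The prevalence paradox can be illustrated with a hypothetical 2 × 2 table in which the raters agree on 90 of 100 items, all in the prevalent category, and never agree on the rare category. The sketch computes the percent agreement, the S-coefficient (chance agreement $1/C$), Cohen’s kappa, and Gwet’s AC1 in its two-rater form, whose chance agreement is $\sum_c \pi_c(1-\pi_c)/(C-1)$ with $\pi_c$ the category proportions averaged over the two raters. The function name and counts are illustrative.

```python
def agreement_summary(table):
    """Percent agreement, S-coefficient, Cohen's kappa, and Gwet's AC1 for a square count table."""
    n = sum(map(sum, table))
    C = len(table)
    p_o = sum(table[i][i] for i in range(C)) / n
    rows = [sum(table[i]) / n for i in range(C)]
    cols = [sum(table[i][j] for i in range(C)) / n for j in range(C)]
    # S-coefficient: equal guessing probabilities 1/C for all categories.
    s = (p_o - 1 / C) / (1 - 1 / C)
    # Cohen's kappa: chance agreement from the rater-specific proportions.
    p_e = sum(r * c for r, c in zip(rows, cols))
    kappa = (p_o - p_e) / (1 - p_e)
    # Gwet's AC1 (two raters): chance agreement from the averaged proportions pi_c.
    pi = [(r + c) / 2 for r, c in zip(rows, cols)]
    p_e_ac1 = sum(q * (1 - q) for q in pi) / (C - 1)
    ac1 = (p_o - p_e_ac1) / (1 - p_e_ac1)
    return {"p_o": p_o, "S": s, "kappa": kappa, "AC1": ac1}


rare_category = [[90, 5], [5, 0]]  # hypothetical: agreement only on the prevalent category
print({name: round(value, 3) for name, value in agreement_summary(rare_category).items()})
```

For this table, the percent agreement is 0.90, the S-coefficient 0.80, and AC1 about 0.89, whereas Cohen’s kappa is slightly negative (about -0.05) because the raters never agree on the rare category.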

2 Incorporating weights for partial disagreements

All coefficients allow for generalization to ordinal (ordered) categories (Cohen, 1968; Gwet, 2014). For example, content analysis may require communication ratings on a five-point scale from very negative (1) to very positive (5). In such situations, some rater disagreements are worse than others, requiring a weighting scheme that specifies the penalty for each possible rater disagreement. Typically, exact agreement (i.e., both raters choose the same category) receives a disagreement weight of zero, and the maximum possible disagreement corresponds to a weight of one. All other disagreements receive either the maximum weight of one (reducing to the unweighted case of nominal categories) or a lower distance-based weight, reflecting partial disagreement. Two common weighting schemes are linear and quadratic (Cicchetti & Allison, 1971; Fleiss & Cohen, 1973). The former penalizes rater disagreements in proportion to the distance between the chosen categories; the latter penalizes based on the squared distance. A third, less common, weighting scheme uses radical weights, based on the square root of the distance (Gwet, 2014). Thus, linear, quadratic, and radical weights penalize disagreements by raising the involved distance (expressed as a fraction of the maximum distance) to the power 1, 2, or 0.5, respectively. Quadratic weights are the most lenient toward partial disagreements and usually yield the highest coefficient values (Van Oest, 2023; Warrens, 2012b, 2013), potentially explaining their popularity in empirical studies (Vanbelle, 2016). However, quadratic weights also offer valuable interpretations as correlation coefficients. All three weighting schemes assume equidistant ordinal categories, where adjacent categories are always exactly one point apart. Our results require this well-accepted assumption to hold.
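The three distance-based schemes differ only in the exponent applied to the relative distance. A minimal sketch, assuming categories are coded as the integers $1,\dots,C$; the function name is hypothetical:

```python
def disagreement_weight(c, c_tilde, n_categories, power):
    """Weight (|c - c_tilde| / (C - 1)) ** power for equidistant categories 1..C.

    power=1 gives linear, power=2 quadratic, and power=0.5 radical weights.
    """
    return (abs(c - c_tilde) / (n_categories - 1)) ** power


C = 5  # five-point scale, e.g., very negative (1) to very positive (5)
for name, power in [("linear", 1), ("quadratic", 2), ("radical", 0.5)]:
    # Weights when rater 1 chooses category 1 and rater 2 chooses 1, ..., 5.
    print(name, [round(disagreement_weight(1, t, C, power), 4) for t in range(1, C + 1)])
# linear [0.0, 0.25, 0.5, 0.75, 1.0]
# quadratic [0.0, 0.0625, 0.25, 0.5625, 1.0]
# radical [0.0, 0.5, 0.7071, 0.866, 1.0]
```

Because the relative distance lies between 0 and 1, a higher power shrinks every partial-disagreement weight, which is why quadratic weights are the most lenient of the three.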

Before turning to quadratic weights, we briefly review existing results for linear weights. First, Cohen’s linearly weighted kappa is a weighted average of kappas computed for all possible reductions of the considered ordinal scale (e.g., five-point) into binary scales by merging adjacent categories (Vanbelle & Albert, 2009; Warrens, 2011). Second, Cohen’s linearly weighted kappa is a weighted average of all linearly weighted kappas computed after merging any two adjacent categories in the ordinal scale, effectively reducing the number of categories by one (Warrens, 2012c). Third, these results generalize to any partition of the ordinal scale by merging adjacent categories: Cohen’s linearly weighted kappa is a weighted average of linearly weighted kappas computed for all possible scale partitions given the chosen number of categories. Furthermore, invariance to the number of categories immediately extends this result to the weighted average across all non-trivial partitions (excluding only the original scale and its reduction to a single category); the weights always correspond to the denominators of the involved kappas (Warrens, 2012c). Fourth, Kvålseth (2018) showed that Cohen’s linearly weighted kappa equals the corresponding unweighted kappa if cumulative probabilities replace the original probabilities when computing the latter. Fifth, Cohen’s linearly weighted kappa captures the first moment of the distribution of rating distances generated by the two raters: One minus kappa equals the expected observed rating distance under the bivariate distribution of ratings, expressed as a fraction of the rating distance expected by chance under independence (Vanbelle, 2016). These results for Cohen’s kappa with linear weights yield elegant decompositions and interpretations, but they do not lead to correlation coefficients.
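The first result can be checked numerically. The sketch below assumes a $C \times C$ table of joint proportions summing to one (rows: rater 1, columns: rater 2). It computes Cohen’s linearly weighted kappa directly and as the weighted average of the $C-1$ binary kappas obtained by splitting the scale between adjacent categories, weighting each binary kappa by its denominator $1-p_e$. The function names and the table are hypothetical.

```python
def linear_weighted_kappa(p):
    """Cohen's kappa with linear weights |i - j| / (C - 1) from joint proportions p."""
    C = len(p)
    rows = [sum(p[i]) for i in range(C)]
    cols = [sum(p[i][j] for i in range(C)) for j in range(C)]
    d_o = sum(abs(i - j) * p[i][j] for i in range(C) for j in range(C)) / (C - 1)
    d_e = sum(abs(i - j) * rows[i] * cols[j] for i in range(C) for j in range(C)) / (C - 1)
    return 1 - d_o / d_e


def averaged_binary_kappas(p):
    """Weighted average of the C - 1 kappas from splitting the scale at each cut point."""
    C = len(p)
    num = den = 0.0
    for k in range(1, C):  # binary scale: categories below index k versus the rest
        low, high = range(k), range(k, C)
        f1 = sum(p[i][j] for i in low for j in range(C))  # Pr(rater 1 in low part)
        f2 = sum(p[i][j] for i in range(C) for j in low)  # Pr(rater 2 in low part)
        p_o = sum(p[i][j] for i in low for j in low) + sum(p[i][j] for i in high for j in high)
        p_e = f1 * f2 + (1 - f1) * (1 - f2)
        if 1 - p_e > 1e-12:  # skip splits where the binary kappa is undefined
            kappa_k = (p_o - p_e) / (1 - p_e)
            num += (1 - p_e) * kappa_k  # weight: the binary kappa's denominator
            den += 1 - p_e
    return num / den


p = [[0.20, 0.05, 0.00],
     [0.05, 0.30, 0.10],
     [0.00, 0.10, 0.20]]  # hypothetical joint proportions
print(round(linear_weighted_kappa(p), 6), round(averaged_binary_kappas(p), 6))
```

Both values are about 0.6226 for this table. The identity holds because each linear distance $|c-\tilde{c}|$ counts exactly the cut points that separate the two ratings.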

In contrast, Cohen’s quadratically weighted kappa converges to two-factor intraclass correlation coefficients with random and fixed raters (i.e., the so-called ICC(2,1) and ICC(3,1) coefficients), where the items and raters are the two factors (Carrasco, 2010; Fleiss & Cohen, 1973; Janson & Olsson, 2001; Schuster, 2004; Schuster & Smith, 2005; Shrout & Fleiss, 1979; Warrens, 2014). Furthermore, this kappa coefficient equals Lin’s concordance correlation coefficient (Andres & Hernandez, 2020; Carrasco, 2010; King & Chinchilli, 2001; Kvålseth, 2018; Schuster, 2004). Lin’s concordance correlation possesses several attractive properties and measures agreement (not mere association) between two raters when the ratings are on a continuous scale (Barnhart et al., 2002; Lin, 1989). In addition, Cohen’s quadratically weighted kappa reduces to the Pearson product–moment correlation if the two raters produced ordinal ratings with the same mean and variance (Brusco et al., 2008; Cohen, 1968; Schuster, 2004). For Conger’s kappa, the multirater version of Cohen’s kappa, quadratic weights imply convergence to two-factor intraclass correlation coefficients with random and fixed raters, and equivalence to Lin’s generalized concordance correlation coefficient that allows for any number of raters (Andres & Hernandez, 2020; Barnhart et al., 2002; Carrasco & Jover, 2003; Lin, 1989, 2000; Schuster & Smith, 2005).

Despite these helpful interpretations, quadratic weights have also received criticism, such as making Cohen’s kappa sensitive to the number of included categories (Brenner & Kliebsch, 1996), making it behave like a coefficient of association instead of agreement (Graham & Jackson, 1993), and making it non-responsive to changes in the observed exact agreement on the middle category in situations with an odd number of categories combined with at least one rater having a symmetric distribution of category proportions (Warrens, 2012d).

Although interpretations are abundant for Cohen’s and Conger’s kappas, they are scarce for Fleiss’ kappa, the most frequently used and cited multirater agreement coefficient. Gwet (2014, p. 67) noted that “there is no known procedure that formally generalizes Scott’s coefficient to Fleiss’,” and Stoyan et al. (2018, p. 381) referred to “Fleiss’ $\kappa$, which is known to be difficult to interpret.” Still, one existing result is that Fleiss’ kappa converges to the one-factor intraclass correlation coefficient, where the items constitute the only factor due to interchangeable raters (Banerjee et al., 1999; Janson & Olsson, 2004; Landis & Koch, 1977a; Vanbelle, 2019). Furthermore, a model-based interpretation is that Fleiss’ kappa estimates the probability that both raters in a pair assign an item to its correct category without guessing (Moss, 2023; Van Oest, 2019; Van Oest & Girard, 2022). However, interpretations in terms of concordance and Pearson product–moment correlations remain absent.

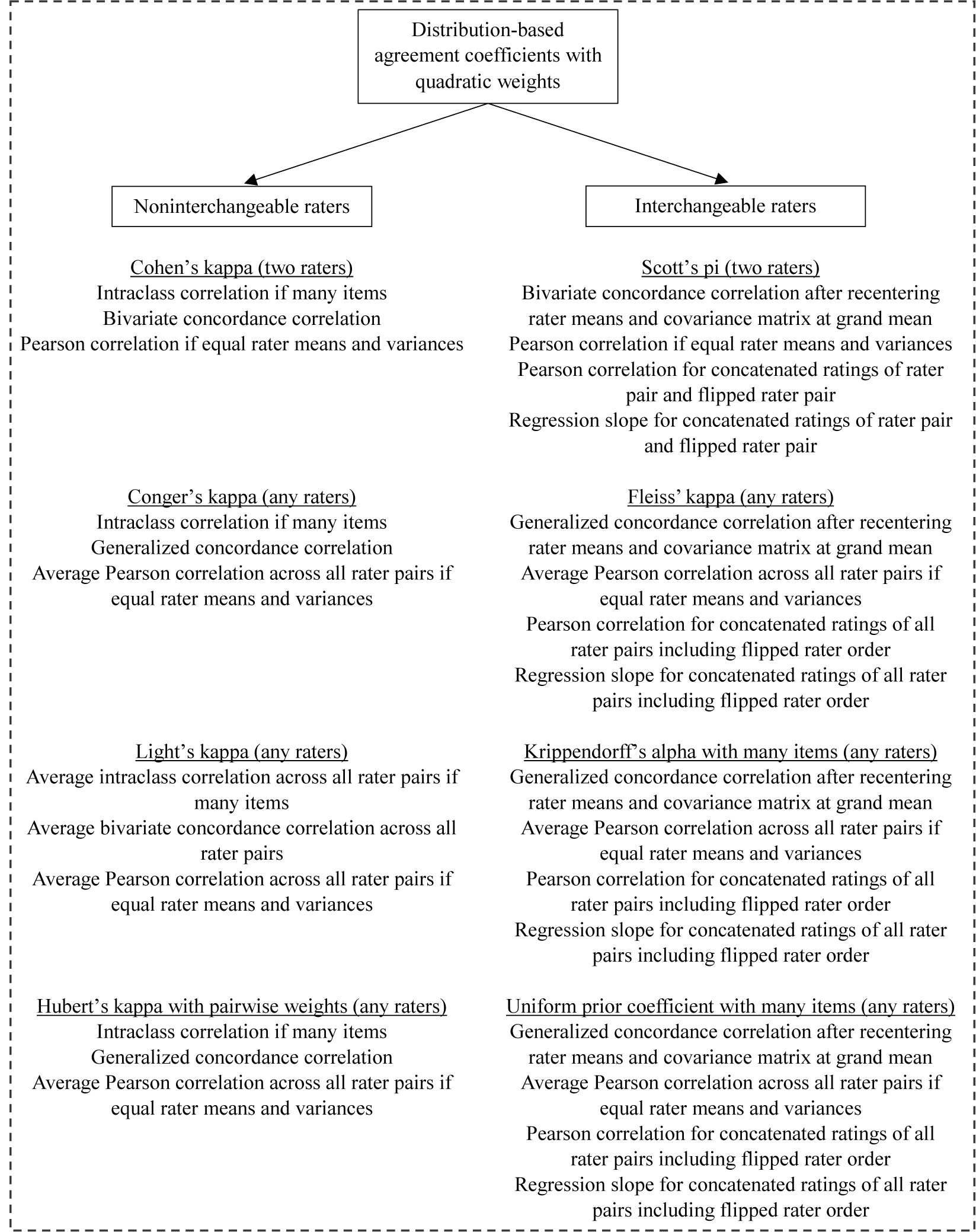

The present study addresses this literature gap and provides novel interpretations of Fleiss’ quadratically weighted kappa (and its two-rater version, Scott’s pi). We write it as Lin’s generalized concordance correlation coefficient after recentering the raters’ mean vector and covariance matrix at the grand mean to reflect interchangeable raters. Furthermore, Fleiss’ quadratically weighted kappa equals the Pearson product–moment correlation and the regression slope coefficient after concatenating the ratings from all different rater pairs (including reversed rater order) into two data columns. Next, we show a linear connection between Fleiss’ and Conger’s quadratically weighted kappas, entirely determined by the raters’ means and variances, and prove that Fleiss’ quadratically weighted kappa never exceeds Conger’s. The two coefficients coincide if the raters share the same mean rating without needing the same rating proportions or variance. All results extend to Scott’s pi and Cohen’s kappa for two raters with quadratic weights. Thus, we present new interpretations and connections for distribution-based coefficients (see the dashed box in Figure 1).
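For two raters, the two-column construction can be checked numerically on hypothetical ratings. The sketch stacks the rater pairs in both orders, so that the first column holds $(Y_1, Y_2)$ and the second $(Y_2, Y_1)$, and compares Scott’s quadratically weighted pi with the Pearson correlation and the least-squares slope of these columns. The function names and data are illustrative assumptions, and the multirater case is not covered by this sketch.

```python
from statistics import fmean


def scott_pi_quadratic(y1, y2, categories):
    """Scott's pi with quadratic weights from two raters' ratings (population moments)."""
    n = len(y1)
    d_o = fmean((a - b) ** 2 for a, b in zip(y1, y2))
    # Category proportions averaged over the two (interchangeable) raters.
    avg = {c: (y1.count(c) + y2.count(c)) / (2 * n) for c in categories}
    d_e = sum((c - t) ** 2 * avg[c] * avg[t] for c in categories for t in categories)
    return 1 - d_o / d_e


def pearson_and_slope(x, y):
    """Pearson correlation of x and y and the least-squares slope of y on x."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5, sxy / sxx


y1 = [1, 2, 2, 3, 4, 4, 5, 3]  # hypothetical ratings on a five-point scale
y2 = [1, 3, 2, 4, 4, 5, 5, 2]
col_a, col_b = y1 + y2, y2 + y1  # every rater pair in both orders
r, slope = pearson_and_slope(col_a, col_b)
print(round(scott_pi_quadratic(y1, y2, range(1, 6)), 6), round(r, 6), round(slope, 6))
```

All three numbers agree (about 0.8559 here). Stacking both orders gives the two columns the same mean and variance, so the correlation and the slope coincide.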

3 Two noninterchangeable raters: Cohen’s quadratically weighted kappa

To establish ideas, we reproduce the result that Cohen’s kappa with quadratic weights is equivalent to Lin’s bivariate concordance correlation and reduces to the Pearson product–moment correlation if the two raters share the same mean and variance. Cohen’s weighted kappa equals one minus the ratio of observed weighted disagreement, ${d}_o$, and expected-by-chance weighted disagreement, ${d}_e$ (Cohen, 1968). Expressed in population parameters with $C$ ordered categories, it is

$$\begin{align}\kappa =1-\frac{d_o}{d_e}=1-\frac{\sum_{c=1}^C{\sum}_{\tilde{c}=1}^Cv\left(c,\tilde{c}\right)\Pr \left({Y}_1=c,{Y}_2=\tilde{c}\right)}{\sum_{c=1}^C{\sum}_{\tilde{c}=1}^Cv\left(c,\tilde{c}\right)\Pr \left({Y}_1=c\right)\Pr \left({Y}_2=\tilde{c}\right)},\end{align}$$


where $v\left(c,\tilde{c}\right)$ denotes the disagreement weight when the first rater chooses category $c$ and the second rater chooses category $\tilde{c}$. Furthermore, ${Y}_1$ and ${Y}_2$ denote the raters’ category choices (i.e., ratings), with means ${\mu}_1$ and ${\mu}_2$, variances ${\sigma_1}^2$ and ${\sigma_2}^2$, correlation ${\rho}_{1,2}$, and covariance ${\sigma}_{1,2}={\rho}_{1,2}{\sigma}_1{\sigma}_2$. We use quadratic weights and substitute $v\left(c,\tilde{c}\right)={\left(c-\tilde{c}\right)}^2/{\left(C-1\right)}^2$, where the constant denominator ${\left(C-1\right)}^2$ cancels in the ratio. The numerator and denominator in (1) then correspond to the expectations of the weight $v\left({Y}_1,{Y}_2\right)$ under the joint distribution of ${Y}_1$ and ${Y}_2$ versus under independence:

$$\begin{align}\kappa =1-\frac{E\left[v\left({Y}_1,{Y}_2\right)\right]}{E\left[v\left({Y}_1,{Y}_2\right)|\mathrm{independence}\right]}=1-\frac{E\left[{\left({Y}_1-{Y}_2\right)}^2\right]}{E\left[{\left({Y}_1-{Y}_2\right)}^2|\mathrm{independence}\right]}.\end{align}$$


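Writing each expected squared difference as a variance plus a squared mean, that is, $E\left[{X}^2\right]=\mathrm{Var}\left[X\right]+{\left(E\left[X\right]\right)}^2$ for $X={Y}_1-{Y}_2$, and noting that the covariance term vanishes under independence, we can work out (2) as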

$$\begin{align}\kappa &=1-\frac{\mathrm{Var}\left[{Y}_1-{Y}_2\right]+{\left(E\left[{Y}_1-{Y}_2\right]\right)}^2}{\mathrm{Var}\left[{Y}_1-{Y}_2|\mathrm{independence}\right]+{\left(E\left[{Y}_1-{Y}_2|\mathrm{independence}\right]\right)}^2}\notag\\&=1-\frac{\left({\sigma_1}^2+{\sigma_2}^2-2{\sigma}_{1,2}\right)+{\left({\mu}_1-{\mu}_2\right)}^2}{\left({\sigma_1}^2+{\sigma_2}^2\right)+{\left({\mu}_1-{\mu}_2\right)}^2}=\frac{2{\sigma}_{1,2}}{{\sigma_1}^2+{\sigma_2}^2+{\left({\mu}_1-{\mu}_2\right)}^2},\end{align}$$


showing that Cohen’s quadratically weighted kappa coincides with Lin’s bivariate concordance correlation coefficient (Lin, 1989). Furthermore, (3) implies that Cohen’s quadratically weighted kappa reduces to the Pearson product–moment correlation ${\rho}_{1,2}$ if we impose equal means and variances, that is, ${\mu}_1={\mu}_2$ and ${\sigma_1}^2={\sigma_2}^2$, because (3) then simplifies to ${\sigma}_{1,2}/{\sigma_1}^2={\rho}_{1,2}$ (Cohen, 1968).
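Equations (1) and (3) can be compared numerically. The sketch below computes Cohen’s quadratically weighted kappa from a hypothetical table of joint proportions (rows: rater 1, columns: rater 2, categories coded $1,\dots,C$) and Lin’s concordance correlation from the corresponding means, variances, and covariance. It assumes population moments (dividing by the number of items rather than by that number minus one), which is what makes the two quantities identical rather than merely close; the function names are illustrative.

```python
def moments(p):
    """Means, variances, and covariance of ratings 1..C under joint proportions p."""
    C = len(p)
    cells = [(i + 1, j + 1, p[i][j]) for i in range(C) for j in range(C)]
    mu1 = sum(a * w for a, b, w in cells)
    mu2 = sum(b * w for a, b, w in cells)
    var1 = sum((a - mu1) ** 2 * w for a, b, w in cells)
    var2 = sum((b - mu2) ** 2 * w for a, b, w in cells)
    cov = sum((a - mu1) * (b - mu2) * w for a, b, w in cells)
    return mu1, mu2, var1, var2, cov


def cohen_quadratic_kappa(p):
    """Equation (1) with quadratic weights (c - c~)^2 / (C - 1)^2."""
    C = len(p)
    rows = [sum(p[i]) for i in range(C)]
    cols = [sum(p[i][j] for i in range(C)) for j in range(C)]
    d_o = sum((i - j) ** 2 / (C - 1) ** 2 * p[i][j] for i in range(C) for j in range(C))
    d_e = sum((i - j) ** 2 / (C - 1) ** 2 * rows[i] * cols[j] for i in range(C) for j in range(C))
    return 1 - d_o / d_e


def lin_ccc(mu1, mu2, var1, var2, cov):
    """Equation (3): Lin's bivariate concordance correlation coefficient."""
    return 2 * cov / (var1 + var2 + (mu1 - mu2) ** 2)


counts = [[10, 4, 1], [3, 12, 5], [0, 4, 11]]  # hypothetical counts, 50 items
n = sum(map(sum, counts))
p = [[x / n for x in row] for row in counts]
print(round(cohen_quadratic_kappa(p), 6), round(lin_ccc(*moments(p)), 6))
```

With a symmetric table, the two raters automatically share their mean and variance, and the same function then returns the Pearson correlation.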

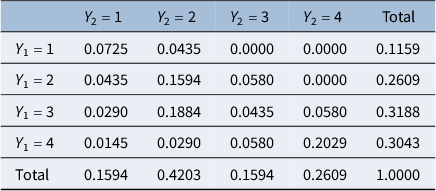

As an illustration, we consider the second contingency table in Table 1 of Landis and Koch (1977b, p. 161), which compared the multiple sclerosis diagnoses of 69 patients by two neurologists based on four likelihood categories, where “1” is highest and “4” is lowest. Table 1 shows the corresponding joint and marginal distributions.

Joint and marginal distributions of ratings in Landis and Koch’s multiple sclerosis example with two raters

Cohen’s quadratically weighted kappa equals 0.6256. Furthermore, the raters’ means, variances, and covariance become

and

${\sigma}_{1,2}=0.6780$, where we omit the tedious details of the covariance calculation that uses the joint distribution. Lin’s bivariate concordance correlation coefficient in (3) is $2{\sigma}_{1,2}/\left({\sigma_1}^2+{\sigma_2}^2+{\left({\mu}_1-{\mu}_2\right)}^2\right)=0.6256$, the same value as Cohen’s quadratically weighted kappa.

4 Two interchangeable raters: Scott’s quadratically weighted pi

We continue with Scott’s pi in the two-rater setting to show how the previous logic for Cohen’s quadratically weighted kappa extends to agreement coefficients with interchangeable raters. Later, we extend the results to Fleiss’ kappa with any number of interchangeable raters.

4.1 Bivariate concordance correlation after recentering at the raters’ grand mean

Expressed in population parameters with $C$ ordered categories, Scott’s pi is

$$\begin{align}\pi =1-\frac{d_o}{d_e}=1-\frac{\sum_{c=1}^C{\sum}_{\tilde{c}=1}^Cv\left(c,\tilde{c}\right)\Pr \left({Y}_1=c,{Y}_2=\tilde{c}\right)}{\sum_{c=1}^C{\sum}_{\tilde{c}=1}^Cv\left(c,\tilde{c}\right)\left(\frac{\Pr \left({Y}_1=c\right)+\Pr \left({Y}_2=c\right)}{2}\right)\left(\frac{\Pr \left({Y}_1=\tilde{c}\right)+\Pr \left({Y}_2=\tilde{c}\right)}{2}\right)},\end{align}$$


where averaging the marginal category proportions over the two interchangeable raters yields the denominator in (4). Next, we incorporate quadratic weights by substituting $v\left(c,\tilde{c}\right)={\left(c-\tilde{c}\right)}^2$ (omitting the constant factor ${\left(C-1\right)}^{-2}$, which cancels in the ratio), recognize the numerator in (4) as the expectation of the weight $v\left({Y}_1,{Y}_2\right)$ under the joint distribution of ${Y}_1$ and ${Y}_2$, and recognize independence in the denominator. This yields

$$\begin{align}\pi =1-\frac{E\left[{\left({Y}_1-{Y}_2\right)}^2\right]}{d_e}=1-\frac{\left({\sigma_1}^2+{\sigma_2}^2-2{\sigma}_{1,2}\right)+{\left({\mu}_1-{\mu}_2\right)}^2}{d_e},\end{align}$$


where

$$\begin{align}{d}_e&=\frac{1}{4}{\sum}_{c=1}^C{\sum}_{\tilde{c}=1}^C{\left(c-\tilde{c}\right)}^2\Pr \left({Y}_1=c\right)\Pr \left({Y}_1=\tilde{c}\right)\nonumber\\&\quad+\frac{1}{4}{\sum}_{c=1}^C{\sum}_{\tilde{c}=1}^C{\left(c-\tilde{c}\right)}^2\Pr \left({Y}_2=c\right)\Pr \left({Y}_2=\tilde{c}\right)\nonumber\\&\quad+\frac{1}{2}{\sum}_{c=1}^C{\sum}_{\tilde{c}=1}^C{\left(c-\tilde{c}\right)}^2\Pr \left({Y}_1=c\right)\Pr \left({Y}_2=\tilde{c}\right)\nonumber\\&=\frac{1}{4}E\left[{\left({Y}_1-{\tilde{Y}}_1\right)}^2|\mathrm{indep}.\right]+\frac{1}{4}E\left[{\left({Y}_2-{\tilde{Y}}_2\right)}^2|\mathrm{indep}.\right]+\frac{1}{2}E\left[{\left({Y}_1-{Y}_2\right)}^2|\mathrm{indep}.\right]\nonumber\\&=\frac{1}{4}\left(2{\sigma_1}^2+{\left({\mu}_1-{\mu}_1\right)}^2\right)+\frac{1}{4}\left(2{\sigma_2}^2+{\left({\mu}_2-{\mu}_2\right)}^2\right)+\frac{1}{2}\left({\sigma_1}^2+{\sigma_2}^2+{\left({\mu}_1-{\mu}_2\right)}^2\right)\nonumber\\&={\sigma_1}^2+{\sigma_2}^2+\frac{1}{2}{\left({\mu}_1-{\mu}_2\right)}^2,\end{align}$$


with ${\tilde{Y}}_1$ and ${\tilde{Y}}_2$ denoting independent draws from the same distributions as ${Y}_1$ and ${Y}_2$, respectively. Substituting (6) into (5) yields a new expression for Scott’s pi in terms of the first and second moments:

$$\begin{align}\pi =1-\frac{\left({\sigma_1}^2+{\sigma_2}^2-2{\sigma}_{1,2}\right)+{\left({\mu}_1-{\mu}_2\right)}^2}{{\sigma_1}^2+{\sigma_2}^2+\frac{1}{2}{\left({\mu}_1-{\mu}_2\right)}^2}=\frac{2{\sigma}_{1,2}-\frac{1}{2}{\left({\mu}_1-{\mu}_2\right)}^2}{{\sigma_1}^2+{\sigma_2}^2+\frac{1}{2}{\left({\mu}_1-{\mu}_2\right)}^2}.\end{align}$$


Furthermore, (7) implies that Scott’s pi with quadratic weights reduces to the Pearson product–moment correlation ${\rho}_{1,2}$ if we impose equal means and variances, that is, ${\mu}_1={\mu}_2$ and ${\sigma_1}^2={\sigma_2}^2$.
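As with Cohen’s kappa, the moment form (7) can be checked against the definition (4). In the sketch below, the table of counts is hypothetical, categories are coded $1,\dots,C$, the constant factor $(C-1)^{-2}$ is omitted because it cancels, and population moments are assumed; the function names are illustrative.

```python
def marginals(p):
    """Row (rater 1) and column (rater 2) proportions of a joint-proportion table."""
    C = len(p)
    rows = [sum(p[i]) for i in range(C)]
    cols = [sum(p[i][j] for i in range(C)) for j in range(C)]
    return rows, cols


def scott_quadratic_pi(p):
    """Equation (4) with quadratic weights and rater-averaged marginal proportions."""
    C = len(p)
    rows, cols = marginals(p)
    avg = [(r + c) / 2 for r, c in zip(rows, cols)]
    d_o = sum((i - j) ** 2 * p[i][j] for i in range(C) for j in range(C))
    d_e = sum((i - j) ** 2 * avg[i] * avg[j] for i in range(C) for j in range(C))
    return 1 - d_o / d_e


def scott_from_moments(p):
    """Equation (7): the same coefficient from the raters' means, variances, and covariance."""
    C = len(p)
    rows, cols = marginals(p)
    cats = range(1, C + 1)
    mu1 = sum(c * r for c, r in zip(cats, rows))
    mu2 = sum(c * q for c, q in zip(cats, cols))
    var1 = sum((c - mu1) ** 2 * r for c, r in zip(cats, rows))
    var2 = sum((c - mu2) ** 2 * q for c, q in zip(cats, cols))
    cov = sum((i + 1 - mu1) * (j + 1 - mu2) * p[i][j] for i in range(C) for j in range(C))
    half_gap = 0.5 * (mu1 - mu2) ** 2
    return (2 * cov - half_gap) / (var1 + var2 + half_gap)


counts = [[10, 4, 1], [3, 12, 5], [0, 4, 11]]  # hypothetical counts, 50 items
n = sum(map(sum, counts))
p = [[x / n for x in row] for row in counts]
print(round(scott_quadratic_pi(p), 6), round(scott_from_moments(p), 6))
```

The two printed values agree up to floating-point rounding.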

Comparing expressions (3) and (7) for Cohen’s quadratically weighted kappa and the corresponding Scott’s pi shows that these coefficients coincide if the two raters share the same mean, ${\mu}_1={\mu}_2$, because both expressions then reduce to $2{\sigma}_{1,2}/\left({\sigma_1}^2+{\sigma_2}^2\right)$. This requirement of equal rater means is substantially more relaxed than the typical requirement of raters having the same category proportions for the unweighted kappa and pi coefficients. For example, Banerjee et al. (1999, p. 5) noted for nominal categories that “if the two raters are interchangeable, in the sense that the marginal distributions are identical, then Cohen’s and Scott’s measures are equivalent.” In contrast, when the weights are quadratic, having the same mean is already sufficient for interchangeability, making these coefficients equivalent.
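A hypothetical table makes the point concrete: rater 1 uses the three categories in proportions (1/4, 1/2, 1/4) and rater 2 in proportions (1/2, 0, 1/2), so the marginal distributions differ while both means equal 2. The sketch computes one generic coefficient $1-d_o/d_e$ and switches between rater-specific (Cohen) and averaged (Scott) proportions; the function and weight names are illustrative.

```python
def agreement(table, weight, interchangeable):
    """Weighted coefficient 1 - d_o / d_e from a square table of counts.

    interchangeable=False gives Cohen's kappa; True gives Scott's pi.
    """
    n = sum(map(sum, table))
    C = len(table)
    p = [[x / n for x in row] for row in table]
    rows = [sum(p[i]) for i in range(C)]
    cols = [sum(p[i][j] for i in range(C)) for j in range(C)]
    if interchangeable:  # Scott: both raters share the averaged proportions
        rows = cols = [(r + c) / 2 for r, c in zip(rows, cols)]
    d_o = sum(weight(i, j) * p[i][j] for i in range(C) for j in range(C))
    d_e = sum(weight(i, j) * rows[i] * cols[j] for i in range(C) for j in range(C))
    return 1 - d_o / d_e


def nominal(i, j):
    return float(i != j)


def quadratic(i, j):
    return float((i - j) ** 2)


table = [[1, 0, 0], [1, 0, 1], [0, 0, 1]]  # hypothetical counts; both rater means equal 2
for name, weight in [("quadratic", quadratic), ("nominal", nominal)]:
    print(name, round(agreement(table, weight, False), 4), round(agreement(table, weight, True), 4))
# quadratic 0.6667 0.6667
# nominal 0.3333 0.2381
```

With quadratic weights, Cohen’s kappa and Scott’s pi coincide at 2/3 despite the different marginals, whereas the unweighted versions differ (1/3 versus 5/21).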

Next, we rewrite Scott’s pi in (7) as

$$\begin{align}\pi &=\frac{2\left({\sigma}_{1,2}-\frac{1}{4}{\mu_1}^2-\frac{1}{4}{\mu_2}^2+\frac{1}{2}{\mu}_1{\mu}_2\right)}{\left({\sigma_1}^2+\frac{3}{4}{\mu_1}^2-\frac{1}{4}{\mu_2}^2-\frac{1}{2}{\mu}_1{\mu}_2\right)+\left({\sigma_2}^2-\frac{1}{4}{\mu_1}^2+\frac{3}{4}{\mu_2}^2-\frac{1}{2}{\mu}_1{\mu}_2\right)}\nonumber\\&=\frac{2\left({\sigma}_{1,2}+{\mu}_1{\mu}_2-{\left(\frac{\mu_1+{\mu}_2}{2}\right)}^2\right)}{\left({\sigma_1}^2+{\mu_1}^2-{\left(\frac{\mu_1+{\mu}_2}{2}\right)}^2\right)+\left({\sigma_2}^2+{\mu_2}^2-{\left(\frac{\mu_1+{\mu}_2}{2}\right)}^2\right)}=\frac{2{\tilde{\sigma}}_{1,2}}{{{\tilde{\sigma}}_1}^2+{{\tilde{\sigma}}_2}^2},\end{align}$$


where ${\tilde{\sigma}}_{1,2}={\sigma}_{1,2}+{\mu}_1{\mu}_2-{\overline{\mu}}^2$ is the covariance after recentering the rater means, ${\mu}_1$ and ${\mu}_2$, at the grand mean, $\overline{\mu}=\left({\mu}_1+{\mu}_2\right)/2$, and ${{\tilde{\sigma}}_r}^2={\sigma_r}^2+{\mu_r}^2-{\overline{\mu}}^2$, $r=1,2$, are the corresponding recentered variances. Thus, we may interpret Cohen’s quadratically weighted kappa in (3) and Scott’s quadratically weighted pi in (7) as concordance correlation coefficients, where the interchangeable raters in Scott’s pi imply recentering the rater means and (co)variances at the grand mean.
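A quick numerical check of (8), as an R sketch: the 5 × 5 table is randomly generated (not data from the paper), categories are scored 1, ..., 5, and moments use the divide-by-n convention. It evaluates (7) and (8) side by side and also confirms that the recentered covariance is the cross-moment about the grand mean.

```r
set.seed(1)
tab <- matrix(sample(0:9, 25, replace = TRUE), nrow = 5)  # arbitrary 5 x 5 counts
p <- tab / sum(tab)
s <- seq_len(nrow(p))                                      # category scores 1, ..., 5

# Divide-by-n moments of rater 1 (rows) and rater 2 (columns)
mu1 <- sum(s * rowSums(p)); mu2 <- sum(s * colSums(p))
v1 <- sum(s^2 * rowSums(p)) - mu1^2
v2 <- sum(s^2 * colSums(p)) - mu2^2
cv <- sum(outer(s, s) * p) - mu1 * mu2

# Scott's quadratically weighted pi via (7)
d2 <- (mu1 - mu2)^2
pi_7 <- (2 * cv - d2 / 2) / (v1 + v2 + d2 / 2)

# Recentered (co)variances as defined after (8), then the concordance form (8)
mbar <- (mu1 + mu2) / 2
cv_t <- cv + mu1 * mu2 - mbar^2
v1_t <- v1 + mu1^2 - mbar^2
v2_t <- v2 + mu2^2 - mbar^2
pi_8 <- 2 * cv_t / (v1_t + v2_t)

# The recentered covariance equals the cross-moment about the grand mean,
# and v1_t + v2_t equals the summed second moments about the grand mean
cross_gm <- sum(outer(s - mbar, s - mbar) * p)
sq_gm <- sum((s - mbar)^2 * (rowSums(p) + colSums(p)))
c(pi_7 = pi_7, pi_8 = pi_8)
```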

4.2 Pearson correlation and regression slope after concatenating the ratings

Another interpretation of Scott’s quadratically weighted pi is that it equals the Pearson product–moment correlation when the order of the two raters is random, with each of the two possible rater pairs, (1,2) and (2,1), occurring with 50% probability. Since the rater order is random, the two raters in the pair become random and effectively interchangeable with the same mean and variance. Following earlier logic, using equal means and variances, and denoting the two random raters as ${J}_1$ and ${J}_2$, we indeed obtain that Scott’s pi is the corresponding Pearson correlation:

$$\begin{align}\pi &=1-\frac{E\left[{\left({Y}_{J_1}-{Y}_{J_2}\right)}^2\right]}{E\left[{\left({Y}_{J_1}-{Y}_{J_2}\right)}^2|\mathrm{independence}\right]}=1-\frac{\mathrm{Var}\left[{Y}_{J_1}\right]+\mathrm{Var}\left[{Y}_{J_2}\right]-2\;\mathrm{Cov}\left[{Y}_{J_1},{Y}_{J_2}\right]}{\mathrm{Var}\left[{Y}_{J_1}\right]+\mathrm{Var}\left[{Y}_{J_2}\right]}\nonumber\\&=1-\frac{2\;\mathrm{Var}\left[{Y}_{J_1}\right]-2\;\mathrm{Cov}\left[{Y}_{J_1},{Y}_{J_2}\right]}{2\;\mathrm{Var}\left[{Y}_{J_1}\right]}=\frac{\mathrm{Cov}\left[{Y}_{J_1},{Y}_{J_2}\right]}{\mathrm{Var}\left[{Y}_{J_1}\right]}=\frac{\mathrm{Cov}\left[{Y}_{J_1},{Y}_{J_2}\right]}{\sqrt{\mathrm{Var}\left[{Y}_{J_1}\right]\mathrm{Var}\left[{Y}_{J_2}\right]}}=\mathrm{Corr}\left[{Y}_{J_1},{Y}_{J_2}\right].\end{align}$$


In practice, researchers can compute Scott’s quadratically weighted pi by computing the Pearson correlation after concatenating the data, that is, combining the ratings from the two possible rater pairs, (1,2) and (2,1), in one extended data set with twice as many ratings. This procedure starts from the original contingency table, containing the rating frequencies of the two raters, and adds the transpose of this contingency table, making the combined contingency table symmetric. As the raters always become interchangeable after combining the two possible rater orders, it does not matter whether the same two raters rated all items or raters varied across items. However, the usual variance (and standard error) of this Pearson correlation is invalid because the ratings of the flipped rater pair are a direct consequence of the original ratings, violating the requirement that observations be drawn independently from the same data-generating process. Gwet (2014, p. 141) and Andres and Hernandez (2025) provided formulas to compute the correct variance of Scott’s pi for fixed raters. Furthermore, Gwet (2014) proposed an additive jackknife variance component if the raters are random (i.e., the participating raters are not of central interest and are instead drawn from a larger population they are supposed to represent).
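The concatenation can be sketched at the item level in R. The code below expands Landis and Koch’s table into one row per patient, stacks the rater orders (1,2) and (2,1), and applies base R’s `cor()`; the expansion via `expand.grid()` is our own choice for illustration. In line with the caveat above, any standard error or p-value that `cor.test()` would attach to these 138 stacked pairs should not be used.

```r
# Landis and Koch's multiple sclerosis table (rows: rater 1, columns: rater 2)
lk <- matrix(c(5,3,2,1,3,11,13,2,0,4,3,4,0,0,4,14), nrow = 4)
C <- nrow(lk)

# One row per item: expand.grid() varies r1 fastest, which matches the
# column-major order of as.vector(lk)
cells <- expand.grid(r1 = seq_len(C), r2 = seq_len(C))
items <- cells[rep(seq_len(nrow(cells)), times = as.vector(lk)), ]

# Concatenate the rater orders (1,2) and (2,1): 2 x 69 = 138 rating pairs
first  <- c(items$r1, items$r2)
second <- c(items$r2, items$r1)

# Pearson correlation of the stacked columns = Scott's quadratically weighted pi
pi_cor <- cor(first, second)
pi_cor   # 0.6181818
```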

Next, we note that (9) implies $\pi =\beta$, where $\beta$ is the slope in ${Y}_{J_2}=\alpha +\beta {Y}_{J_1}+\varepsilon$. The reason is that the slope coefficient in a simple linear regression equals the covariance between the independent variable and the dependent variable divided by the variance of the independent variable, that is, $\beta =\mathrm{Cov}\left[{Y}_{J_1},{Y}_{J_2}\right]/\mathrm{Var}\left[{Y}_{J_1}\right]$ (e.g., Wooldridge, 2012, p. 29). Thus, analogous to before, researchers can compute Scott’s quadratically weighted pi by estimating the slope coefficient in a simple linear regression for the concatenated ratings of rater pairs (1,2) and (2,1). However, standard regression theory no longer helps obtain the corresponding variance.
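The regression route can likewise be sketched in R without expanding the data: a weighted least-squares fit over the 16 cells of the combined table, using the combined frequencies as weights, gives the same coefficients as ordinary least squares on the 138 stacked rating pairs. This weighting shortcut is our implementation choice, and the standard errors printed by `summary(fit)` should be ignored for the reason just given.

```r
lk <- matrix(c(5,3,2,1,3,11,13,2,0,4,3,4,0,0,4,14), nrow = 4)
sym <- lk + t(lk)   # combined table for rater orders (1,2) and (2,1)

# One row per cell: x = category of rater J1, y = category of rater J2,
# w = combined frequency (column-major order matches expand.grid())
cells <- expand.grid(x = seq_len(nrow(sym)), y = seq_len(ncol(sym)))
cells$w <- as.vector(sym)

# Frequency-weighted least squares; zero-weight cells do not affect the fit
fit <- lm(y ~ x, data = cells, weights = w)
slope <- unname(coef(fit)["x"])
slope   # 0.6181818; the intercept equals (1 - slope) times the common mean
```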

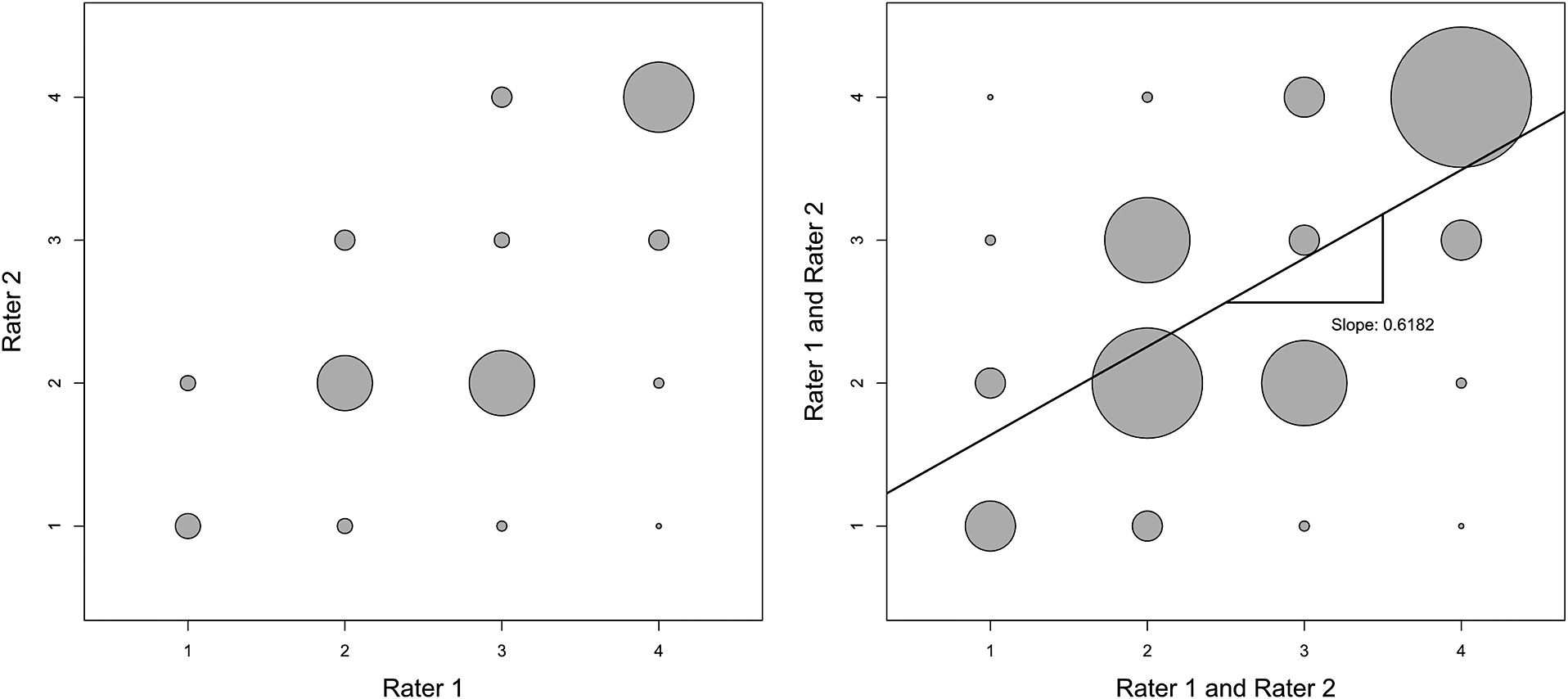

Moving from the left to the right panel in Figure 2 shows how the concatenation works in the multiple sclerosis example of Landis and Koch (1977b). For each cell, we sum the original rating frequency of the rater pair (1,2) and the diagonal-mirrored frequency of the flipped rater pair (2,1). Scott’s quadratically weighted pi, which coincides with Fleiss’ quadratically weighted kappa for two raters, equals the Pearson correlation and regression slope in the figure’s right panel.

After concatenation, Scott’s quadratically weighted pi equals the Pearson correlation and regression slope of 0.6182 in Landis and Koch’s two-rater multiple sclerosis example. Scatter plot of original frequencies (left) and combined frequencies with regression line (right).

4.3 Linear function of Cohen’s quadratically weighted kappa

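We write Scott’s quadratically weighted pi in (7) as a linear function of Cohen’s quadratically weighted kappa in (3), conditional on the rater means and variances (Appendix A):

$$\begin{align}\pi =\kappa -\left(\frac{W}{2+W}\right)\left(1-\kappa \right)=\frac{2\left(1+W\right)}{2+W}\kappa -\frac{W}{2+W},\end{align}$$

where

$$\begin{align}W=\frac{{\left({\mu}_1-{\mu}_2\right)}^2}{{\sigma_1}^2+{\sigma_2}^2}\end{align}$$

is the squared difference in rater means divided by the corresponding variance under independence, ${\sigma_1}^2+{\sigma_2}^2=\mathrm{Var}\left[{Y}_1-{Y}_2|\mathrm{independence}\right]$. Its sample counterpart is related to the Wald (or squared t) statistic to test for equal means while allowing for unequal variances. Because $W\ge 0$ and $\kappa \le 1$, the subtracted term in (10) is nonnegative, so Scott’s pi cannot exceed Cohen’s kappa, and the latter coefficient provides an upper bound for the former. Whereas Warrens (2010a) proved this result for nominal weights, we demonstrate it for quadratic weights.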

Although Scott’s quadratically weighted pi is a linear function of Cohen’s quadratically weighted kappa conditional on the rater means and variances, the relationship between the variance estimators of these coefficients remains complex. The reason is that both the coefficients and rater moments are functions of the data and interact nonlinearly in (10) and (11).
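Relationship (10), with $W$ from (11), can be checked on arbitrary tables. In the R sketch below, the function name `qw_pair` is hypothetical and the 500 tables are random Poisson counts rather than data from the paper; the check confirms that (7) and (10) agree and that Scott’s pi never exceeds Cohen’s kappa.

```r
# Cohen's kappa (3), Scott's pi (7), and the linear form (10) with W from (11),
# computed from a C x C table of counts (rows = rater 1, columns = rater 2)
qw_pair <- function(tab) {
  p <- tab / sum(tab); s <- seq_len(nrow(p))
  mu1 <- sum(s * rowSums(p)); mu2 <- sum(s * colSums(p))
  v1 <- sum(s^2 * rowSums(p)) - mu1^2
  v2 <- sum(s^2 * colSums(p)) - mu2^2
  cv <- sum(outer(s, s) * p) - mu1 * mu2
  d2 <- (mu1 - mu2)^2
  kappa <- 2 * cv / (v1 + v2 + d2)
  W <- d2 / (v1 + v2)
  c(kappa = kappa,
    scott = (2 * cv - d2 / 2) / (v1 + v2 + d2 / 2),
    linear = kappa - (W / (2 + W)) * (1 - kappa),
    W = W)
}

set.seed(3)
res <- replicate(500, qw_pair(matrix(rpois(25, 4), nrow = 5)))
max(abs(res["scott", ] - res["linear", ]))     # numerically zero
all(res["scott", ] <= res["kappa", ] + 1e-12)   # pi never exceeds kappa

# Landis and Koch's table: W is about 0.0403
qw_pair(matrix(c(5,3,2,1,3,11,13,2,0,4,3,4,0,0,4,14), nrow = 4))
```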

4.4 Numerical example

Continuing with the multiple sclerosis example of Landis and Koch (1977b, p. 161), we use (7) to compute Scott’s quadratically weighted pi as

$$\begin{align*}\pi =\frac{2\times 0.6780-\frac{1}{2}\times {\left(2.8116-2.5217\right)}^2}{0.9935+1.0901+\frac{1}{2}\times {\left(2.8116-2.5217\right)}^2}=\frac{1.3140}{2.1256}=0.6182.\end{align*}$$


Next, we use (8) to compute Scott’s pi as Lin’s concordance correlation coefficient after recentering at the raters’ grand mean, which is $\overline{\mu}=\left(2.8116+2.5217\right)/2=2.6667$:

$$\begin{align*}{\tilde{\sigma}}_{1,2}&={\sigma}_{1,2}+{\mu}_1{\mu}_2-{\overline{\mu}}^2=0.6780+2.8116\times 2.5217-{(2.6667)}^2=0.6570,\\{{\tilde{\sigma}}_1}^2&={\sigma_1}^2+{\mu_1}^2-{\overline{\mu}}^2=0.9935+{(2.8116)}^2-{(2.6667)}^2=1.7876,\\{{\tilde{\sigma}}_2}^2&={\sigma_2}^2+{\mu_2}^2-{\overline{\mu}}^2=1.0901+{(2.5217)}^2-{(2.6667)}^2=0.3381,\\\pi &=\frac{2{\tilde{\sigma}}_{1,2}}{{{\tilde{\sigma}}_1}^2+{{\tilde{\sigma}}_2}^2}=\frac{2\times 0.6570}{1.7876+0.3381}=\frac{1.3140}{2.1256}=0.6182,\end{align*}$$


yielding the same coefficient value.

We proceed by computing Scott’s quadratically weighted pi as the Pearson product–moment correlation and the regression slope after concatenating the ratings of the original rater pair (1,2) and flipped rater pair (2,1), that is, adding the transposed contingency table to the original one. The correlation and slope coefficients become the covariance divided by the variance (where the two data columns share the same mean and variance after concatenation). The R code below starts from Landis and Koch’s $4\times 4$ contingency table and avoids translating this contingency table into item-by-rater tables of ratings. It again yields a value of 0.6182:

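```r
# Landis and Koch's 4 x 4 table (rows: rater 1, columns: rater 2), filled column-wise
y <- matrix(c(5,3,2,1,3,11,13,2,0,4,3,4,0,0,4,14), nrow = 4)
# Add the transposed table (flipped rater pair (2,1)) and convert to proportions
y <- (y + t(y)) / (2 * sum(y))
cats <- seq_len(nrow(y))                  # category scores 1, ..., 4
m  <- sum(cats * rowSums(y))              # common mean of both columns
v  <- sum(cats^2 * rowSums(y)) - m^2      # common variance
cv <- sum(outer(cats, cats) * y) - m^2    # covariance
cv / v
#> [1] 0.6181818
```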

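Furthermore, Scott’s quadratically weighted pi is a linear function of Cohen’s quadratically weighted kappa (and vice versa), with the intercept and slope solely depending on the squared difference in means divided by the corresponding variance under independence:

$$\begin{align*}W=\frac{{\left({\mu}_1-{\mu}_2\right)}^2}{{\sigma_1}^2+{\sigma_2}^2}=\frac{{\left(2.8116-2.5217\right)}^2}{0.9935+1.0901}=\frac{0.0840}{2.0836}=0.0403.\end{align*}$$

Substituting this value and Cohen’s quadratically weighted kappa of 0.6256 into (10) yields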

$$\begin{align}\frac{W}{2+W}&=\frac{0.0403}{2.0403}=0.0198,\nonumber\\\pi& =0.6182=0.6256-0.0198\times \left(1-0.6256\right)=\kappa -\left(\frac{W}{2+W}\right)\left(1-\kappa \right).\end{align}$$


5 Any noninterchangeable raters: Conger’s quadratically weighted kappa

To explain how the previous ideas extend to multirater coefficients with more than two raters, we show that Conger’s quadratically weighted kappa equals Lin’s generalized concordance correlation coefficient and reduces to the average Pearson product–moment correlation across all rater pairs if raters share the same mean and variance. This proof is shorter and less complex than those found elsewhere in the literature (Andres & Hernandez, 2020; Schuster & Smith, 2005; Warrens, 2012a); the result for the average Pearson correlation is new. Later, we extend to the more complex analysis of Fleiss’ quadratically weighted kappa with interchangeable raters.

We denote Conger’s quadratically weighted kappa for $R$ raters as ${\kappa}_C$ to distinguish it from Cohen’s $\kappa$ for two raters. In Conger’s kappa, both the observed disagreement, ${d}_o$, and expected disagreement, ${d}_e$, are averages (or equivalently, sums) across all pairs of different raters $\left(r,\tilde{r}\right)$ with $\tilde{r}>r$ (Conger, 1980):

$$\begin{align}{\kappa}_C=1-\frac{d_o}{d_e}=1-\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left[{\sum}_{c=1}^C{\sum}_{\tilde{c}=1}^C{\left(c-\tilde{c}\right)}^2\Pr \left({Y}_r=c,{Y}_{\tilde{r}}=\tilde{c}\right)\right]}{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left[{\sum}_{c=1}^C{\sum}_{\tilde{c}=1}^C{\left(c-\tilde{c}\right)}^2\Pr \left({Y}_r=c\right)\Pr \left({Y}_{\tilde{r}}=\tilde{c}\right)\right]}.\end{align}$$


Recognizing the expectations in square brackets, with independence in the denominator, yields

$$\begin{align}{\kappa}_C&=1-\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}E\left[{\left({Y}_r-{Y}_{\tilde{r}}\right)}^2\right]}{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}E\left[{\left({Y}_r-{Y}_{\tilde{r}}\right)}^2|\mathrm{independence}\right]}\nonumber\\&=1-\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\mathrm{Var}\left[{Y}_r-{Y}_{\tilde{r}}\right]+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left(E\left[{Y}_r-{Y}_{\tilde{r}}\right]\right)}^2}{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\mathrm{Var}\left[{Y}_r-{Y}_{\tilde{r}}|\mathrm{independence}\right]+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left(E\left[{Y}_r-{Y}_{\tilde{r}}|\mathrm{independence}\right]\right)}^2}\nonumber\\&=1-\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2-2{\sigma}_{r,\tilde{r}}\right)+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2\right)+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}.\end{align}$$


Next, we use that ${\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2\right)=\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2$ (each rater appears in $R-1$ pairs) and simplify (14) to obtain Lin’s generalized concordance correlation coefficient, suitable for any number of raters (Andres & Hernandez, 2020; Barnhart et al., 2002; Carrasco & Jover, 2003; Lin, 1989, 2000):

$$\begin{align}{\kappa}_C=\frac{2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\sigma}_{r,\tilde{r}}}{\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}.\end{align}$$

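Because (13) and (15) are algebraically identical when all moments use the divide-by-n convention, a brute-force evaluation of (13) from the category proportions should match the closed form up to floating-point error. The R sketch below checks this on simulated ratings; the data-generating process (a latent item score plus a rater-specific shift and noise, cut into five categories) is an assumption made only for illustration.

```r
set.seed(4)
n <- 50; R <- 4; C <- 5
latent <- rnorm(n)                                       # item effect shared by raters
y <- sapply(1:R, function(r) {
  score <- latent + rnorm(n, mean = 0.2 * r, sd = 0.7)   # rater shift and noise
  as.integer(cut(score, breaks = c(-Inf, -1, -0.3, 0.3, 1, Inf)))  # categories 1..5
})

# (13) by brute force: pairwise sums of observed and chance-expected quadratic
# disagreement, the latter using each rater's own category proportions
wq <- outer(1:C, 1:C, "-")^2
marg <- sapply(1:R, function(r) tabulate(y[, r], nbins = C) / n)   # C x R
pairs <- combn(R, 2)
d_o <- sum(apply(pairs, 2, function(pr) mean((y[, pr[1]] - y[, pr[2]])^2)))
d_e <- sum(apply(pairs, 2, function(pr) sum(wq * outer(marg[, pr[1]], marg[, pr[2]]))))
kappa_13 <- 1 - d_o / d_e

# (15): Lin's generalized concordance correlation from divide-by-n moments
mu <- colMeans(y)
Sigma <- cov(y) * (n - 1) / n
kappa_15 <- 2 * sum(Sigma[t(pairs)]) /
  ((R - 1) * sum(diag(Sigma)) + sum((mu[pairs[1, ]] - mu[pairs[2, ]])^2))
c(kappa_13 = kappa_13, kappa_15 = kappa_15)
```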

We note that (15) reduces to Cohen’s quadratically weighted kappa and the bivariate concordance correlation coefficient in (3) if there are only two raters, $R=2$.

Conger’s kappa in (15) becomes the average Pearson product–moment correlation across all rater pairs if raters share the same mean and the same variance, ${\sigma}^2$:

$$\begin{align}{\kappa}_C\mid \mathrm{same}\kern0.17em \mathrm{mean}\kern0.17em \mathrm{and}\kern0.17em \mathrm{variance}=\frac{2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\rho}_{r,\tilde{r}}{\sigma}^2}{\left(R-1\right)\left(R{\sigma}^2\right)}=\frac{\sigma^2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\rho}_{r,\tilde{r}}}{\frac{\sigma^2R\left(R-1\right)}{2}}=\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\rho}_{r,\tilde{r}}}{\left(\begin{array}{c}R\\ {}2\end{array}\right)}.\end{align}$$

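A numerical illustration of (16), as an R sketch: standardizing simulated continuous scores gives all raters exactly the same mean and variance (continuous scores are used only to make this exact), and the concordance form (15) then collapses to the average pairwise Pearson correlation.

```r
set.seed(5)
n <- 200; R <- 3
x <- matrix(rnorm(n * R), n, R) + rnorm(n)   # shared item effect added to every rater
z <- scale(x)                                # equal means (0) and equal variances

mu <- colMeans(z)
Sigma <- cov(z) * (n - 1) / n                # divide-by-n moments
pairs <- combn(R, 2)
kappa_C <- 2 * sum(Sigma[t(pairs)]) /
  ((R - 1) * sum(diag(Sigma)) + sum((mu[pairs[1, ]] - mu[pairs[2, ]])^2))   # (15)

rho <- cor(z)
avg_rho <- mean(rho[upper.tri(rho)])         # average over the R(R-1)/2 rater pairs
c(kappa_C = kappa_C, avg_rho = avg_rho)
```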

Finally, we express (15) in matrix and vector notation for convenient coding in matrix programming languages:

$$\begin{align}{\kappa}_C=\frac{\iota^{\prime}\Sigma \iota -\mathrm{tr}\left(\Sigma \right)}{\left(R-1\right)\mathrm{tr}\left(\Sigma \right)+{R}^2\left(\overline{\mu^2}-{\left(\overline{\mu}\right)}^2\right)},\end{align}$$


where $^{\prime }$ denotes the transpose, $\iota$ is the $R$-dimensional vector of ones, $\Sigma =\left({\sigma}_{r,\tilde{r}}\right)$ is the covariance matrix, “tr” is the trace (i.e., sum of diagonal elements), $\overline{\mu}={\iota}^{\prime}\mu /R$, and $\overline{\mu^2}=\iota^{\prime }{\mu}^2/R$, with $\mu$ and ${\mu}^2$ being $R$-dimensional vectors containing the rater means and squared rater means. Comparing (17) with the matrix expressions in Almehrizi and Emam (2023) reveals identical numerators and related denominators. Their two-factor intraclass correlation coefficient for absolute agreement (labeled ${\rho}_{\left(A,1\right)}$) converges to Conger’s quadratically weighted kappa in (17), as their only deviating term converges to $\left(R-1\right)\mathrm{tr}\left(\Sigma \right)$. Furthermore, the term ${R}^2\left(\overline{\mu^2}-{\left(\overline{\mu}\right)}^2\right)$ is absent in their two-factor intraclass correlation coefficient for relative consistency (labeled ${\rho}_{\left(C,1\right)}$). Hence, this coefficient coincides with Conger’s kappa in (17) if all raters share the same mean.
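The matrix form (17) translates directly into code. The R sketch below, with hypothetical helper names `conger_matrix` and `conger_pairs` and randomly generated integer ratings, checks that (17) agrees with the pairwise form (15) and that dropping the mean term ${R}^2\left(\overline{\mu^2}-{\left(\overline{\mu}\right)}^2\right)$ changes nothing once all raters share the same mean. It mirrors the remark on ${\rho}_{\left(C,1\right)}$ but does not reproduce Almehrizi and Emam’s estimators.

```r
# Conger's quadratically weighted kappa from rater means and covariance matrix
conger_matrix <- function(mu, Sigma) {                        # matrix form (17)
  R <- length(mu); iota <- rep(1, R)
  num <- drop(t(iota) %*% Sigma %*% iota) - sum(diag(Sigma))
  num / ((R - 1) * sum(diag(Sigma)) + R^2 * (mean(mu^2) - mean(mu)^2))
}
conger_pairs <- function(mu, Sigma) {                         # pairwise form (15)
  R <- length(mu); pr <- combn(R, 2)
  2 * sum(Sigma[t(pr)]) /
    ((R - 1) * sum(diag(Sigma)) + sum((mu[pr[1, ]] - mu[pr[2, ]])^2))
}

set.seed(6)
n <- 40; R <- 3
base <- sample(1:5, n, replace = TRUE)
y <- cbind(base,
           pmin(base + sample(0:1, n, replace = TRUE), 5),    # rater 2 tends to rate higher
           pmax(base - sample(0:1, n, replace = TRUE), 1))    # rater 3 tends to rate lower
mu <- colMeans(y)
Sigma <- cov(y) * (n - 1) / n                                 # divide-by-n moments

c(matrix_17 = conger_matrix(mu, Sigma), pairs_15 = conger_pairs(mu, Sigma))

# Without the mean term, the ratio equals (17) evaluated with a common rater mean
no_mean <- (sum(Sigma) - sum(diag(Sigma))) / ((R - 1) * sum(diag(Sigma)))
common <- conger_matrix(rep(mean(mu), R), Sigma)
```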

As an illustration, we consider Table A.3 of Gwet (2014, p. 371), showing the five-point color intensity ratings of 29 stickleback fishes by four experienced raters. Based on maximum likelihood estimation, these raters have the following mean vector and covariance matrix:

$$\begin{align*}\mu &=\left(\begin{array}{c}2.8276\\ 2.6207\\ 2.7931\\ 2.8276\end{array}\right),\\\Sigma &=\left(\begin{array}{cccc}2.3496& 1.6932& 1.7574& 1.6254\\ 1.6932& 2.0285& 1.5767& 1.7967\\ 1.7574& 1.5767& 2.3020& 2.0678\\ 1.6254& 1.7967& 2.0678& 2.8323\end{array}\right),\end{align*}$$

implying that ${\iota}^{\prime}\Sigma \iota =30.5470$, $\mathrm{tr}\left(\Sigma \right)=9.5125$, $\overline{\mu}=2.7672$, and $\overline{\mu^2}=7.6650$. Plugging these numbers into (17) yields the value of Conger’s quadratically weighted kappa and the generalized concordance correlation coefficient, which is also the value reported in Gwet (2014, p. 158):

$$\begin{align*}{\kappa}_C=\frac{30.5470-9.5125}{3\times 9.5125+16\times \left(7.6650-{(2.7672)}^2\right)}=\frac{21.0345}{28.6552}=0.7341.\end{align*}$$

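The same plug-in calculation can be reproduced from the rounded moments shown above, without the raw ratings. This R sketch transcribes $\mu$ and $\Sigma$ as printed; because these inputs are rounded to four decimals, the result agrees with 0.7341 only up to rounding.

```r
# Rater means and ML covariance matrix as printed above (rounded to 4 decimals)
mu <- c(2.8276, 2.6207, 2.7931, 2.8276)
Sigma <- matrix(c(2.3496, 1.6932, 1.7574, 1.6254,
                  1.6932, 2.0285, 1.5767, 1.7967,
                  1.7574, 1.5767, 2.3020, 2.0678,
                  1.6254, 1.7967, 2.0678, 2.8323),
                nrow = 4, byrow = TRUE)
R <- length(mu)

num <- sum(Sigma) - sum(diag(Sigma))                                 # iota' Sigma iota - tr(Sigma)
den <- (R - 1) * sum(diag(Sigma)) + R^2 * (mean(mu^2) - mean(mu)^2)  # denominator of (17)
kappa_C <- num / den
round(kappa_C, 4)   # 0.7341
```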

With y denoting the 29 × 4 item-by-rater data matrix from Gwet (2014, p. 371), coding (17) in R is straightforward and yields the same coefficient value:

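```r
# y: 29 x 4 item-by-rater matrix of ratings (Gwet, 2014, p. 371)
R <- ncol(y)                                  # number of raters
mu <- colMeans(y)                             # rater means
Sigma <- cov(y) * ((nrow(y) - 1) / nrow(y))   # ML estimation (divide by n)
num <- sum(Sigma) - sum(diag(Sigma))          # iota' Sigma iota - tr(Sigma)
den <- (R - 1) * sum(diag(Sigma)) + (R^2) * (mean(mu^2) - (mean(mu))^2)
num / den
#> [1] 0.7340554
```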

In all remaining code, we refer to the data matrix as y and skip the calculations of $R$ (i.e., the number of raters), $\mu$, and $\Sigma$ because these calculations remain identical.

6 Any interchangeable raters: Fleiss’ quadratically weighted kappa

We turn to the coefficient of primary interest, Fleiss’ quadratically weighted kappa with any number of interchangeable raters, denoted as ${\kappa}_F$. These results are new.

6.1 Generalized concordance correlation after recentering at the raters’ grand mean

Fleiss’ kappa computes the observed disagreement, ${d}_o$, in the same way as Conger’s: It takes the corresponding average over all pairs of different raters. However, unlike Conger’s kappa, Fleiss’ chance correction aggregates the category proportions across raters and uses these average proportions to compute its expected disagreement, ${d}_e$ (Fleiss, 1971):

$$\begin{align}{\kappa}_F=1-\frac{d_o}{d_e}=1-\frac{\displaystyle\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left[{\sum}_{c=1}^C{\sum}_{\tilde{c}=1}^C{\left(c-\tilde{c}\right)}^2\Pr \left({Y}_r=c,{Y}_{\tilde{r}}=\tilde{c}\right)\right]}{\left(\begin{array}{c}R\\ {}2\end{array}\right)}}{\sum_{c=1}^C{\sum}_{\tilde{c}=1}^C{\left(c-\tilde{c}\right)}^2\left(\frac{\sum_{r=1}^R\Pr \left({Y}_r=c\right)}{R}\right)\left(\frac{\sum_{\tilde{r}=1}^R\Pr \left({Y}_{\tilde{r}}=\tilde{c}\right)}{R}\right)}.\end{align}$$


Using that ${R}^2/\left(\begin{array}{c}R\\ {}2\end{array}\right)=2R/\left(R-1\right)$ and recognizing the expectations, we can write (18) as

$$\begin{align}{\kappa}_F&=1-\frac{2R}{R-1}\times \frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}E\left[{\left({Y}_r-{Y}_{\tilde{r}}\right)}^2\right]}{\sum_{r=1}^R{\sum}_{\tilde{r}=1}^RE\left[{\left({Y}_r-{Y}_{\tilde{r}}\right)}^2|\mathrm{independence}\right]}\nonumber\\&=1-\frac{2R}{R-1}\times \frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\mathrm{Var}\left[{Y}_r-{Y}_{\tilde{r}}\right]+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left(E\left[{Y}_r-{Y}_{\tilde{r}}\right]\right)}^2}{\sum_{r=1}^R{\sum}_{\tilde{r}=1}^R\mathrm{Var}\left[{Y}_r-{Y}_{\tilde{r}}|\mathrm{indep}.\right]+{\sum}_{r=1}^R{\sum}_{\tilde{r}=1}^R{\left(E\left[{Y}_r-{Y}_{\tilde{r}}|\mathrm{indep}.\right]\right)}^2}\nonumber\\&=1-\frac{2R}{R-1}\times \frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2-2{\sigma}_{r,\tilde{r}}\right)+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{\sum_{r=1}^R{\sum}_{\tilde{r}=1}^R\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2\right)+{\sum}_{r=1}^R{\sum}_{\tilde{r}=1}^R{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}.\end{align}$$


Next, we break up the summations in the denominator into $r=\tilde{r}$, $r<\tilde{r}$, and symmetric $r>\tilde{r}$:

$$\begin{align}{\kappa}_F=1-\frac{R}{R-1}\times \frac{2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2-2{\sigma}_{r,\tilde{r}}\right)+2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{\left[2{\sum}_{r=1}^R{\sigma_r}^2+2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2\right)\right]+2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}.\end{align}$$


Finally, we divide the numerator and denominator by two, use that ${\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2\right)=\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2$ (each rater appears in $R-1$ of the pairs with $r<\tilde{r}$), and simplify to obtain

$$\begin{align}{\kappa}_F&=1-\frac{R}{R-1}\times \frac{\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2-2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\sigma}_{r,\tilde{r}}+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{R{\sum}_{r=1}^R{\sigma_r}^2+{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}\nonumber\\&=1-\frac{R\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2-2R{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\sigma}_{r,\tilde{r}}+R{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{R\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2+\left(R-1\right){\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}\nonumber\\&=\frac{2R{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\sigma}_{r,\tilde{r}}-{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{R\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2+\left(R-1\right){\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}\nonumber\\&=\frac{2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\sigma}_{r,\tilde{r}}-\frac{1}{R}{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2+\frac{R-1}{R}{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}. \end{align}$$
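As a sanity check on the algebra, the sketch below evaluates the starting expression (squared differences) and the final line of the derivation on the same kind of hypothetical data, and confirms the bookkeeping identity used along the way. Population moments (divisor $N$) are assumed throughout; `np.cov(..., bias=True)` uses that divisor.

```python
import numpy as np
from itertools import combinations

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)

N, R = Y.shape
mu = Y.mean(axis=0)
Sigma = np.cov(Y, rowvar=False, bias=True)   # covariance matrix with divisor N
var = np.diag(Sigma)
pairs = list(combinations(range(R), 2))      # all r < r~

# Starting point: observed squared differences over r < r~,
# and all (r, r~), including r == r~, under independence.
obs = sum(np.mean((Y[:, r] - Y[:, s]) ** 2) for r, s in pairs)
ind = sum(np.mean((Y[:, r][:, None] - Y[:, s][None, :]) ** 2)
          for r in range(R) for s in range(R))
kappa_start = 1 - 2 * R / (R - 1) * obs / ind

# Bookkeeping identity: each rater appears in R - 1 of the pairs r < r~.
pair_var_sum = sum(var[r] + var[s] for r, s in pairs)

# Final line: only pairwise covariances and squared mean differences remain.
cov_sum = sum(Sigma[r, s] for r, s in pairs)
msd = sum((mu[r] - mu[s]) ** 2 for r, s in pairs)
kappa_final = (2 * cov_sum - msd / R) / ((R - 1) * var.sum() + (R - 1) / R * msd)

print(kappa_start, kappa_final)
```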


Using the same matrix and vector notation as before, this expression for Fleiss’ quadratically weighted kappa becomes

$$\begin{align}{\kappa}_F=\frac{\iota^{\prime}\Sigma \iota -\mathrm{tr}\left(\Sigma \right)-R\left(\overline{\mu^2}-{\left(\overline{\mu}\right)}^2\right)}{\left(R-1\right)\mathrm{tr}\left(\Sigma \right)+R\left(R-1\right)\left(\overline{\mu^2}-{\left(\overline{\mu}\right)}^2\right)}.\end{align}$$
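The matrix form lends itself to a compact implementation. The sketch below codes (21) with population moments and, as a cross-check, a count-based formulation of weighted Fleiss’ kappa: observed weighted agreement over ordered pairs of distinct raters, chance agreement from pooled category proportions, and the quadratic weights $w_{kl}=1-(k-l)^2/(q-1)^2$. The count-based function is our own illustration under two assumptions, complete data (every rater scores every item) and category codes $1,\dots,q$; both function names and the data are hypothetical.

```python
import numpy as np

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)

def kappa_fleiss_quadratic(Y):
    """Formula (21), with population moments (divisor N)."""
    R = Y.shape[1]
    mu = Y.mean(axis=0)
    Sigma = np.cov(Y, rowvar=False, bias=True)
    iota = np.ones(R)
    spread = np.mean(mu ** 2) - np.mean(mu) ** 2   # mean of mu_r^2 minus squared mean of mu_r
    num = iota @ Sigma @ iota - np.trace(Sigma) - R * spread
    den = (R - 1) * np.trace(Sigma) + R * (R - 1) * spread
    return num / den

def kappa_fleiss_counts(Y, q):
    """Weighted Fleiss' kappa from category counts; assumes complete data and codes 1..q."""
    N, R = Y.shape
    k = np.arange(1, q + 1)
    w = 1 - (k[:, None] - k[None, :]) ** 2 / (q - 1) ** 2          # quadratic weights w_kl
    counts = np.stack([(Y == c).sum(axis=1) for c in k], axis=1)   # n_ik, shape (N, q)
    # Weighted agreement over ordered pairs of distinct raters; "- R" removes the
    # r == r~ terms, each of which contributes w_kk = 1 once per rating.
    pair_agree = np.einsum("ik,kl,il->i", counts, w, counts) - R
    p_a = np.mean(pair_agree / (R * (R - 1)))
    pi = counts.sum(axis=0) / (N * R)                               # pooled category proportions
    p_e = pi @ w @ pi
    return (p_a - p_e) / (1 - p_e)

print(kappa_fleiss_quadratic(Y), kappa_fleiss_counts(Y, q=5))
```

The two routes agree because, with quadratic weights, $1-p_a$ and $1-p_e$ are the observed and chance squared differences scaled by the same constant $(q-1)^2$.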


We note that (21) reduces to Scott’s quadratically weighted pi in (7) if there are only two raters, with $R=2$. Furthermore, it becomes the average Pearson product–moment correlation across all rater pairs if raters share the same mean and the same variance, ${\sigma}^2$:

$$\begin{align}{\kappa}_F\mid \mathrm{same}\kern0.17em \mathrm{mean}\kern0.17em \mathrm{and}\kern0.17em \mathrm{variance}=\frac{2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\rho}_{r,\tilde{r}}{\sigma}^2}{\left(R-1\right)\left(R{\sigma}^2\right)}=\frac{\sigma^2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\rho}_{r,\tilde{r}}}{\frac{\sigma^2R\left(R-1\right)}{2}}=\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\rho}_{r,\tilde{r}}}{\left(\begin{array}{c}R\\ {}2\end{array}\right)}.\end{align}$$
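Standardizing each rater’s column is one convenient way to impose equal means and equal variances exactly. The standardized values are no longer category codes, which does not matter here because (21) depends only on moments. Since Pearson correlations are invariant to this rescaling, (21) evaluated on the standardized data should reproduce the average pairwise correlation of the original ratings. The data and function name below are hypothetical.

```python
import numpy as np

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)
R = Y.shape[1]

def kappa_fleiss_quadratic(Y):
    """Formula (21), with population moments (divisor N)."""
    R = Y.shape[1]
    mu = Y.mean(axis=0)
    Sigma = np.cov(Y, rowvar=False, bias=True)
    spread = np.mean(mu ** 2) - np.mean(mu) ** 2
    return ((Sigma.sum() - np.trace(Sigma) - R * spread)
            / ((R - 1) * np.trace(Sigma) + R * (R - 1) * spread))

# Standardize each rater: all means become 0 and all variances 1 (divisor N).
Z = (Y - Y.mean(axis=0)) / Y.std(axis=0)

# Average Pearson correlation over the R(R-1)/2 rater pairs (upper triangle).
rho = np.corrcoef(Y, rowvar=False)
avg_rho = rho[np.triu_indices(R, k=1)].mean()

print(kappa_fleiss_quadratic(Z), avg_rho)
```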


Comparing expressions (15) and (21) for Conger’s and Fleiss’ quadratically weighted kappas shows that these coefficients coincide if all raters share the same mean, which is a much less stringent condition than all raters having identical category proportions (i.e., the same marginal distributions).
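The coincidence under equal means can be illustrated by shifting each rater’s ratings to the grand mean, which removes all mean differences but leaves the covariance matrix untouched. Conger’s quadratically weighted kappa is coded here as $(\iota'\Sigma\iota-\mathrm{tr}(\Sigma))/((R-1)\mathrm{tr}(\Sigma)+\sum_{r<\tilde{r}}(\mu_r-\mu_{\tilde{r}})^2)$. This is our assumed reading of (15), obtained by averaging the pairwise chance disagreement ${\sigma_r}^2+{\sigma_{\tilde{r}}}^2+(\mu_r-\mu_{\tilde{r}})^2$ over rater pairs; it is consistent with (25) and (26). The data and function names are hypothetical.

```python
import numpy as np
from itertools import combinations

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)

def moments(Y):
    """Rater means and covariance matrix with divisor N."""
    return Y.mean(axis=0), np.cov(Y, rowvar=False, bias=True)

def kappa_conger_quadratic(Y):
    """Assumed form of (15): pairwise chance disagreement uses rater-specific moments."""
    R = Y.shape[1]
    mu, Sigma = moments(Y)
    msd = sum((mu[r] - mu[s]) ** 2 for r, s in combinations(range(R), 2))
    return (Sigma.sum() - np.trace(Sigma)) / ((R - 1) * np.trace(Sigma) + msd)

def kappa_fleiss_quadratic(Y):
    """Formula (21)."""
    R = Y.shape[1]
    mu, Sigma = moments(Y)
    spread = np.mean(mu ** 2) - np.mean(mu) ** 2
    return ((Sigma.sum() - np.trace(Sigma) - R * spread)
            / ((R - 1) * np.trace(Sigma) + R * (R - 1) * spread))

# Shift every rater to the grand mean: same covariance matrix, equal means.
Y_equal = Y - Y.mean(axis=0) + Y.mean()

print(kappa_conger_quadratic(Y), kappa_fleiss_quadratic(Y))              # unequal means
print(kappa_conger_quadratic(Y_equal), kappa_fleiss_quadratic(Y_equal))  # equal means
```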

Next, we show that Fleiss’ quadratically weighted kappa in (21) equals Lin’s generalized concordance correlation coefficient after recentering the rater means and covariance matrix at the raters’ grand mean (Appendix B):

$$\begin{align}{\kappa}_F=\frac{2{\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\tilde{\sigma}}_{r,\tilde{r}}}{\left(R-1\right){\sum}_{r=1}^R{{\tilde{\sigma}}_r}^2}=\frac{\iota^{\prime}\tilde{\Sigma}\iota -\mathrm{tr}\left(\tilde{\Sigma}\right)}{\left(R-1\right)\mathrm{tr}\left(\tilde{\Sigma}\right)},\end{align}$$


where ${\tilde{\sigma}}_{r,\tilde{r}}={\sigma}_{r,\tilde{r}}+{\mu}_r{\mu}_{\tilde{r}}-{\overline{\mu}}^2$ denotes the covariances after recentering at $\overline{\mu}={\sum}_{r=1}^R{\mu}_r/R$ and ${{\tilde{\sigma}}_r}^2={\sigma_r}^2+{\mu_r}^2-{\overline{\mu}}^2$ denotes the recentered variances. Using the same matrix and vector notation as before, the recentered covariance matrix becomes $\tilde{\Sigma}=\Sigma +\mu {\mu}^{\prime }-{\overline{\mu}}^2\iota {\iota}^{\prime }$. This result generalizes an earlier result, obtained in (8) for Scott’s pi with two raters, to any number of raters.
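The recentered expression can be checked against (21). The sketch below builds $\tilde{\Sigma}$ as defined above, taking $\iota$ to be the vector of ones (our reading of the notation), and also confirms that each entry equals the raw cross-moment $E[Y_rY_{\tilde{r}}]$ minus ${\overline{\mu}}^2$, which follows from ${\sigma}_{r,\tilde{r}}+{\mu}_r{\mu}_{\tilde{r}}=E[Y_rY_{\tilde{r}}]$. Population moments and the data are assumptions for illustration.

```python
import numpy as np

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)

N, R = Y.shape
mu = Y.mean(axis=0)
mu_bar = mu.mean()
Sigma = np.cov(Y, rowvar=False, bias=True)   # divisor N
iota = np.ones(R)

# Recentered matrix as defined in the text: Sigma + mu mu' - mu_bar^2 iota iota'.
Sigma_tilde = Sigma + np.outer(mu, mu) - mu_bar ** 2 * np.outer(iota, iota)
# Same matrix from raw cross-moments E[Y_r Y_r~] minus the squared grand mean.
Sigma_tilde_raw = Y.T @ Y / N - mu_bar ** 2

tr_t = np.trace(Sigma_tilde)
kappa_recentered = (iota @ Sigma_tilde @ iota - tr_t) / ((R - 1) * tr_t)

# Formula (21) for comparison.
spread = np.mean(mu ** 2) - mu_bar ** 2
kappa_21 = ((iota @ Sigma @ iota - np.trace(Sigma) - R * spread)
            / ((R - 1) * np.trace(Sigma) + R * (R - 1) * spread))

print(kappa_recentered, kappa_21)
```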

6.2 Pearson correlation and regression slope after concatenating the ratings

Fleiss’ quadratically weighted kappa is the multirater generalization of Scott’s quadratically weighted pi. As Scott’s pi equals the Pearson product–moment correlation between the ratings from two random, different raters, Fleiss’ kappa has the same interpretation. Treating the two raters in the pair as random makes them interchangeable, with the same mean and variance, so the same proof as in (9) applies. In practice, researchers can compute Fleiss’ kappa with quadratic weights by appropriately concatenating the data and then computing the Pearson correlation from the concatenated ratings. The concatenation procedure generalizes the approach for Scott’s pi. It stacks the ratings from all possible rater pairs, taking each pair in both orders, into a new data set, where the first column holds the ratings of the first rater in the pair and the second column those of the second rater. For example, for three raters, the two data columns combine (i.e., concatenate) the ratings from the six rater pairs (1,2), (2,1), (1,3), (3,1), (2,3), and (3,2).
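The concatenation procedure can be sketched in a few lines. The example below stacks all ordered pairs of distinct raters (0-based indices) from hypothetical data and compares the resulting Pearson correlation with (21) evaluated with population moments. The divisor used inside `np.corrcoef` cancels in the ratio, so it does not affect the comparison.

```python
import numpy as np
from itertools import permutations

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)
R = Y.shape[1]

# All ordered pairs of distinct raters, e.g. (0, 1) and (1, 0).
pairs = list(permutations(range(R), 2))
first = np.concatenate([Y[:, r] for r, s in pairs])    # first rater in each pair
second = np.concatenate([Y[:, s] for r, s in pairs])   # second rater in each pair

kappa_concat = np.corrcoef(first, second)[0, 1]

# Formula (21) with population moments, for comparison.
mu = Y.mean(axis=0)
Sigma = np.cov(Y, rowvar=False, bias=True)
spread = np.mean(mu ** 2) - np.mean(mu) ** 2
kappa_21 = ((Sigma.sum() - np.trace(Sigma) - R * spread)
            / ((R - 1) * np.trace(Sigma) + R * (R - 1) * spread))

print(kappa_concat, kappa_21)
```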

Furthermore, analogous to before, researchers can compute the value of Fleiss’ quadratically weighted kappa by estimating the slope coefficient in a simple linear regression, where the two data columns with concatenated ratings serve as dependent and independent variables (or vice versa). However, standard statistical theory no longer yields valid variances, as the concatenated ratings are not independent draws from the data-generating process. For the correct variance of Fleiss’ kappa, we refer to other literature (Andres & Hernandez, 2025; Gwet, 2014; Schouten, 1980, 1982; Vanbelle, 2019). In addition, irrCAC is a high-quality R package for computing agreement coefficients and their variances.
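The regression route can be sketched with `np.polyfit`, which for degree 1 returns the slope followed by the intercept. Because both concatenated columns contain the same multiset of ratings, they share mean and variance, so the slope equals the Pearson correlation in either direction. The sketch reproduces only the point estimate; any standard errors an ordinary least-squares routine would report are not valid here, for the reason given above. Data are hypothetical.

```python
import numpy as np
from itertools import permutations

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)
R = Y.shape[1]

pairs = list(permutations(range(R), 2))
first = np.concatenate([Y[:, r] for r, s in pairs])
second = np.concatenate([Y[:, s] for r, s in pairs])

# OLS slopes with an intercept, in both directions.
slope_second_on_first = np.polyfit(first, second, 1)[0]
slope_first_on_second = np.polyfit(second, first, 1)[0]
pearson = np.corrcoef(first, second)[0, 1]

print(slope_second_on_first, slope_first_on_second, pearson)
```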

6.3 Linear function of Conger’s quadratically weighted kappa

Generalizing the result in (10) for two raters to any number of raters, we write Fleiss’ quadratically weighted kappa in (21) as a linear function of Conger’s quadratically weighted kappa in (15), conditional on the rater means and variances (Appendix C):

$$\begin{align}{\kappa}_F={\kappa}_C-\left(\frac{W}{R+\left(R-1\right)W}\right)\left(1-{\kappa}_C\right)\le {\kappa}_C,\end{align}$$


where

$$\begin{align}W=\frac{\sum_{r=1}^{R-1}{\sum}_{\tilde{r}>r}{\left({\mu}_r-{\mu}_{\tilde{r}}\right)}^2}{\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2}=\frac{R^2\left(\overline{\mu^2}-{\left(\overline{\mu}\right)}^2\right)}{\left(R-1\right)\mathrm{tr}\left(\Sigma \right)}\ge 0\end{align}$$


is the sum of squared differences in means across all rater pairs divided by the corresponding sum of variances under independence. We recall that ${\sum}_{r=1}^{R-1}{\sum}_{\tilde{r}>r}\left({\sigma_r}^2+{\sigma_{\tilde{r}}}^2\right)=\left(R-1\right){\sum}_{r=1}^R{\sigma_r}^2$. Equation (25) shows that Fleiss’ quadratically weighted kappa cannot exceed Conger’s, implying that the latter coefficient provides an upper bound for the former. Whereas Warrens (2010b) proved this result for nominal weights, we extend it to quadratic weights. Furthermore, (25) provides an alternative proof that Conger’s and Fleiss’ quadratically weighted kappas converge to each other as the number of raters, $R$, tends to infinity (Conger, 1980; Gwet, 2014), because the factor $W/\left(R+\left(R-1\right)W\right)$ in (25) is smaller than $1/\left(R-1\right)$ and thus vanishes.
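The linear relation (25), the two expressions for $W$ in (26), and the bound on the factor $W/(R+(R-1)W)$ can be checked on hypothetical data. As elsewhere in these sketches, Conger’s quadratically weighted kappa is coded under our assumption that (15) takes the form $(\iota'\Sigma\iota-\mathrm{tr}(\Sigma))/((R-1)\mathrm{tr}(\Sigma)+\sum_{r<\tilde{r}}(\mu_r-\mu_{\tilde{r}})^2)$, and population moments are used. A single data set illustrates, but does not prove, the inequality.

```python
import numpy as np
from itertools import combinations

# Hypothetical ratings on a 1..5 ordinal scale: rows are items, columns are raters.
Y = np.array([
    [1, 2, 1, 1],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 5, 4, 4],
    [5, 4, 5, 5],
    [2, 1, 2, 3],
    [3, 4, 3, 3],
    [1, 1, 2, 1],
], dtype=float)

N, R = Y.shape
mu = Y.mean(axis=0)
Sigma = np.cov(Y, rowvar=False, bias=True)   # divisor N
tr = np.trace(Sigma)
msd = sum((mu[r] - mu[s]) ** 2 for r, s in combinations(range(R), 2))

kappa_C = (Sigma.sum() - tr) / ((R - 1) * tr + msd)                         # assumed form of (15)
kappa_F = (Sigma.sum() - tr - msd / R) / ((R - 1) * tr + (R - 1) / R * msd)  # Fleiss, quadratic weights

W = msd / ((R - 1) * tr)                                           # first expression in (26)
W_alt = R ** 2 * (np.mean(mu ** 2) - np.mean(mu) ** 2) / ((R - 1) * tr)  # second expression
factor = W / (R + (R - 1) * W)
kappa_F_from_C = kappa_C - factor * (1 - kappa_C)                  # relation (25)

print(kappa_C, kappa_F, kappa_F_from_C, factor, 1 / (R - 1))
```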

Although Fleiss’ quadratically weighted kappa is a linear function of Conger’s quadratically weighted kappa conditional on the rater means and variances, the relationship between the variance estimators of these kappas remains complex. The reason is that both the kappas and rater moments are functions of the data and interact nonlinearly in (25) and (26).

6.4 Numerical example

Continuing with the four-rater stickleback fish color example of Gwet (2014, p. 371) and using our earlier calculations of ${\iota}^{\prime}\Sigma \iota =30.5470$,