1 Introduction

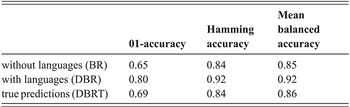

Early in the collaboration between the two authors of this Element, we identified a number of aspects that could be improved in the statistical analysis of linguistic data and World Englishes data in particular. These include the generalizability and interpretability of results, as well as model assessment. As a recent investigation has revealed (Buschfeld, Leuckert, et al. Reference Buschfeld, Leuckert, Weihs and Weilinghoff2024), these aspects are often neglected in World Englishes studies. We therefore developed a new approach for statistical modeling that meets these shortcomings by generating interpretable decision trees, which are characterized by best possible model accuracy and whose results are not only based on the data sample under investigation but generalizable to a broader population. This new approach is presented in this Element in some detail and is compared to models commonly used in linguistic studies, in particular with respect to the generalizability and interpretability of results, as well as model evaluation. All the methods presented in the following are available in the so-called PrInDT package (Prediction and Interpretation in Decision Trees) in the software R (Weihs & Buschfeld Reference Weihs and Buschfeld2025; R Core Team 2019).

In linguistics, statistical methods are widely used for analyzing data. The most prominent and frequently used textbooks on statistics for linguists are Rasinger (Reference Rasinger2013), which mainly relies on Excel, and Baayen (Reference Baayen2008), Field et al. (Reference Field, Miles and Field2012), Levshina (Reference Levshina2015), Schneider & Lauber (2020), Winter (Reference Winter2020), Gries (Reference Gries2021), and Sonderegger (Reference Sonderegger2023), which focus on R. Statistical models typically used in linguistic analyses include various kinds of linear regression models (e.g., fixed effects, mixed effects) and logistic regression for classification (i.e., for categorical dependent variables). Linguists have also repeatedly made use of random forests and conditional inference trees (ctrees) and sometimes of Bayesian variants of statistical models (cf. Section 3). In Buschfeld, Leuckert, et al. (Reference Buschfeld, Leuckert, Weihs and Weilinghoff2024), we have shown that the actual use of statistical methods varies between linguistic subdisciplines and that the World Englishes paradigm slightly lags behind other subdisciplines in terms of frequency and sophistication of statistical approaches used.

A problem often related to the choice of the correct method and its sophistication is either scarcity of linguistic data (i.e., very low token frequencies of the variables of interest) or a high imbalance between large-class and small-class variants of the variable under investigation. In particular, linguists investigating sociolinguistic or regional variation of language, of which World Englishes scholars form a major part, are often confronted with this problem. They are commonly most interested in linguistic forms that diverge from the traditional standard variant of a language, and these forms often come with low frequencies or at least much lower frequencies than their standard variants. For example, if a linguistic nonstandard strategy such as the omission of subject pronouns or verbal inflections is supposed to be characteristic of a variety of English, it is often still not used by the majority of the population, in the majority of speech events. It is normally used interchangeably with the standard variant, depending on the speaker and communicative situation. Some very specific local characteristics, such as past-tense marking via finish as a postverbal marker in Singaporean English (cf. Section 2.1.3), are so rare that those statistical procedures often used in sociolinguistics or World Englishes, for example “simple” ctrees, would only predict the larger class – that is, the more frequent, often standard, variant. However, it is particularly the smaller, nonstandard class variationist linguists are most interested in, which is why it is important to make use of a statistical approach that adequately considers the smaller class, too.

The approach we developed and present in this Element builds on decision trees, which work on the basis of a series of relatively simple “if-then” rules based on priorly defined predictors. They are, therefore, easier to interpret than, for example, linear models, which use linear combinations of the predictors for modeling. To better understand the differences between the approaches and thus our decision in favor of decision trees, we also introduce linear models but ultimately focus on a particular kind of decision tree, namely conditional inference trees. For such trees, it is relatively easy to control their size and therefore their complexity and interpretability (cf. Sections 3.2.3.3 and 4.1). After the size of the tree is adjusted according to the given needs, we optimize model accuracy (cf. Sections 4.1 and 4.3). For the resulting model, we discuss the predictive behavior and the linguistic interpretation of the predictions (cf. Section 5.1). This way, we place the generalizability of the trees (i.e., the transferability of the results from the sample to the wider population) at the center of our discussion (cf. Section 4.1).

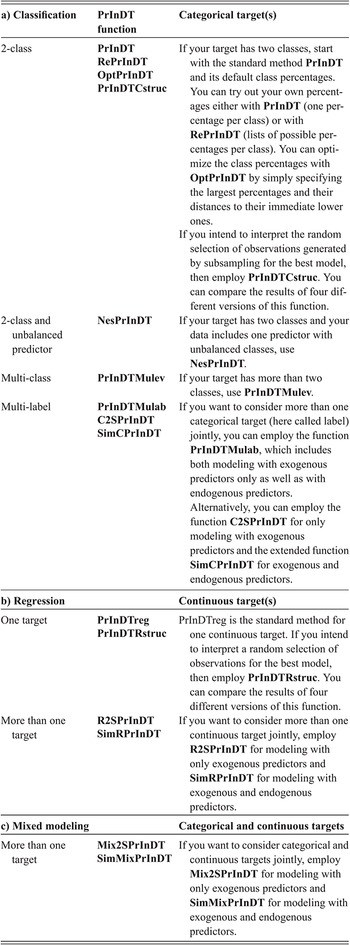

This Element does not aim to present novel linguistic studies or results, but instead focuses on PrInDT and its various applications. It draws on existing studies and data sets to illustrate the novel statistical methods and thus reads like a statistics manual more than a linguistic study. Following this introduction, Section 2 introduces the data sets and methods of the World Englishes case studies drawn upon for the various PrInDT applications. Section 3 describes and applies a number of standard statistical methods our PrInDT applications are based on, with which the reader should be familiar. This may, at times, be rather basic information for a statistical expert reader. However, we aim at a broad audience of readers and would like to give beginners in statistics a chance to follow our explanations and new approach, too. Ultimately, the new PrInDT methods are introduced in Section 4, before we apply them to World Englishes sample data in Section 5. For all analyses, we employ the software R and provide the relevant R-code. A brief, general introduction to R is presented in Section 3.1, while further explanations and applications can be found in the sections that follow. Section 6 summarizes the relevance of the new approach for the World Englishes paradigm, before we provide some overall conclusions in Section 7.

2 Introducing the Data Sets

Before we turn to the various applications of our approach (cf. Sections 4–6), we introduce the different data sets that have been used to develop the PrInDT approach, as well as some of its offspring versions and later advancements. The data were collected by the first author of the Element between 2014 and 2020. The PrInDT approach introduced in this Element was gradually developed, expanded, and modified between 2020 and 2025.

2.1 Data Sets I–IV: Investigating L1 Child Singaporean English

The first four data sets we introduce were collected for a large-scale research project investigating the acquisition of English as a first language (L1) in Singapore (Buschfeld Reference Buschfeld2020). Singaporean English (SingE) is one of the most extensively researched second-language (L2) varieties of English. However, for an ever-increasing number of children it is (one of) their home language(s). According to the Straits Times, one of the leading newspapers in Singapore, the number of students in primary school who speak mostly English at home has risen to around 70% in all three major ethnic groups in Singapore (i.e., the Chinese, Indian, and Malay; Chan Reference Chan2020). This is clearly confirmed by recent census data, as the 2020 Census of Population in Singapore reports that in the group of 5 to 14 year olds, 77.4% of the Chinese, 63% of the Malay, and 69.8% of the Indian segments of the population nowadays speak English as the most frequently used language at home (Department of Statistics Singapore 2020: 29).

To place L1 SingE on the map of L1 varieties of English, Buschfeld (Reference Buschfeld2020) has empirically and systematically investigated different linguistic characteristics of SingE, as well as the acquisitional route Singaporean children take in their linguistic development, and compared her findings to data collected from monolingual and bi-/multilingual children from England. In the following applications of the PrInDT approach, we make use of these data and investigate similar aspects of L1 SingE but base our investigation on a more sophisticated statistical approach.

The three features Buschfeld (Reference Buschfeld2020) investigated in some detail (i.e., subject-pronoun realization, past-tense marking, and vowel-length realization) were chosen since earlier research has identified local realizations of them in SingE. The variables thus offer standard and nonstandard variants, whose realization, we hypothesize, is determined by different extra- as well as intralinguistic predictors, most importantly age, ethnicity, and linguistic background (cf. Sections 2.1.1–2.1.4 for a detailed introduction of the predictors). In this respect, we expect to find clear differences between children from England and Singapore, as they acquire English under quite different circumstances (i.e., in a traditional, officially monolingual English-speaking country versus a former L2 English setting). In the latter context, features like the omission of subject pronouns, SingE past-tense marking strategies, and potential mergers of long and short vowels are the products of general mechanisms of language acquisition, such as simplification strategies and L1 transfer from the local languages of Singapore (i.e., Chinese and Indian languages and Malay). This means that second-language learners – and this is what the Singaporean population originally was – take over linguistic characteristics of their L1 to their L2 (here English), even if they do not match the target grammar. This is how new varieties of English are born, be they L2 or ultimately L1 varieties in contexts such as Singapore. We provide further details on the collected data sets and examples of the local SingE realizations in the following sections (Sections 2.1.1, 2.1.3, and 2.1.4).

2.1.1 Subject-Pronoun Realization

First, we introduce the so-called subpro data set for the investigation of the realization of subject pronouns. The data were collected in Singapore and England in 2014 and 2015 and sourced from video-recorded task-directed dialogue between researcher and child, consisting of several parts: a grammar-elicitation task, a story-retelling task, elicited narratives, and free interaction. The data set includes subject-pronoun realizations of 30 male and female Singaporean children of different ethnicities, aged 2;5 (2 years, 5 months) to 12;1 (all multilingual), and 21 male and female children from England, aged 2;1 to 10;9 (multi- and monolingual). The recorded material was orthographically transcribed and manually coded for the realization of subject pronouns (realized versus zero, i.e., nonrealized). In all, we have 6,146 tokens of the subject-pronoun variable, whose realization can be interpreted by means of a detailed set of extra- and intralinguistic predictors introduced later in this section. A total of 528 subject pronouns were omitted and will thus be referred to as zero pronouns (for details on the exact methods of elicitation and data processing, cf. Buschfeld Reference Buschfeld2020: 90–94, 110–117).

The following examples illustrate some SingE variants of nonrealized subject pronouns (Buschfeld Reference Buschfeld2020: 144):

1. Researcher: … What do you do with your friends? Do you play with them?

Child: [ØI] Play with them. Sometimes drawing.…

Child: Sometimes [ØWE] play some fun things.

2. Child: I think in MH370, I think they can find because [ØIT] is easy to go there.…

These and similar zero forms are variably used by children acquiring English as an L1 in Singapore. They are also typical of adult SingE and thus part of the input the children receive.

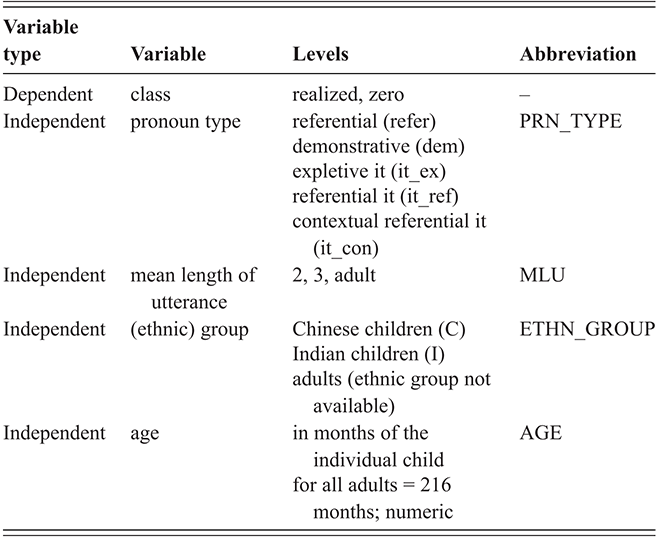

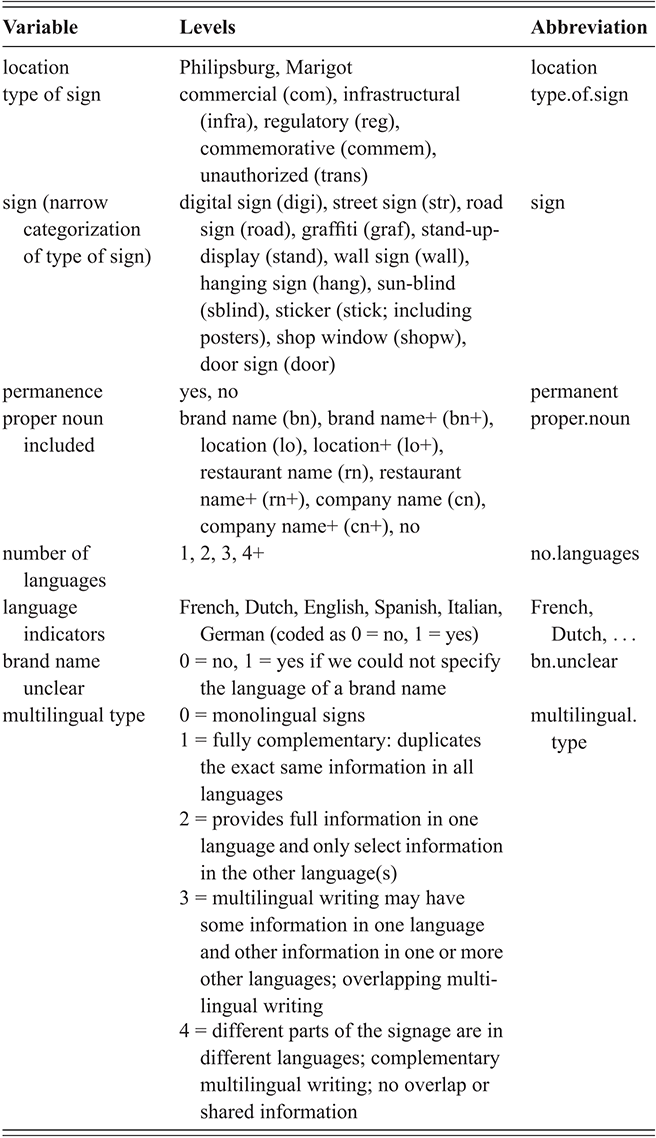

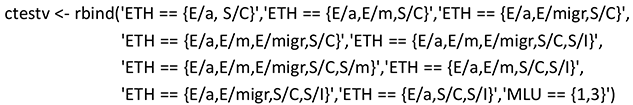

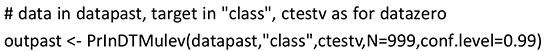

The aim of our analysis is to find prediction rules for the use of subject pronouns (realized versus zero) by means of extra- and intralinguistic variables. The extralinguistic variables considered as independent variables in the statistical analysis are ethnicity (ETH), age (AGE), sex (SEX), linguistic background (LiBa), and mean length of utterance (MLU). Mean length of utterance (MLU) is a measure that determines the syntactic complexity of utterances young children make and thus their grammatical development. It is an aggregated factor for which children were assigned to three groups according to the average grammatical complexity of 50 of their utterances. LiBa refers to the linguistic background of the children and splits the group into children who grow up with English only (mono) and multilingual children who acquire English and at least one further language (multi). The pronoun (PRN) is taken into account as an intralinguistic variable. Depending on the respective application, we work with pronoun categorization types of different granularity. We follow either the traditional distinction of three persons in singular and plural (i.e., I, you, he, she, it, we, you, they) – note, however, that plural tokens of you were not found in any of the data sets – or a distinction based on a more refined classification of it. In the most fine-grained classification of it (cf. Section 2.1.2), we differentiate the referential it (“This is my house. It is blue.”), expletive it (“It is raining.”), and a type Buschfeld (Reference Buschfeld2020: 115–116) has labeled “contextual referential it.” This notion roughly corresponds to what Halliday and Hasan (Reference Halliday and Hasan1976: 52–53) call “extended reference” or “text reference,” as some uses of it show “a greater degree of referentiality” than others (e.g., “It was a perfect day,” when the it refers to a priorly described event in general and not just a single noun phrase). Table 1 summarizes the variables, their levels, and the abbreviations used in the analysis of the subpro data.

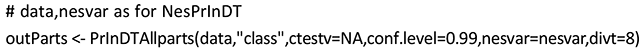

In Sections 3.2.1, 3.2.3.2, 3.2.3.3, and 3.2.4, we analyze these data by means of classical statistical methods. In Sections 5.1 and 5.6, we apply our PrInDT approach to the data set to compare and discuss its potential advantages over the traditional approaches. The underlying methods of the PrInDT approach are introduced in Sections 4.5.1 and 4.5.6.

2.1.2 Subject-Pronoun Realization with an Unbalanced Predictor

In a second study based on the data set introduced in Section 2.1.1, we look into the realization of subject pronouns (realized or zero) in both L1 SingE and the L2 variety to carve out potential differences in language use between L1 and L2 speakers of English in Singapore. We report and discuss the results of the study by Weihs and Buschfeld (Reference Weihs and Buschfeld2021b), with slight modifications only, to complete the picture of how the underlying idea of PrInDT can be applied to various linguistic contexts and objectives.

This study compares the data introduced in Section 2.1.1 to data from the spoken part of the Singapore component of the International Corpus of English (ICE-Singapore). The subcorpus from Buschfeld’s (Reference Buschfeld2020) study amounts to 36,000 words. The ICE-data come from the 90 transcripts of approximately 2,000 words each in the spoken component > dialogues > private > face-to-face-conversations section. They comprise an overall total of 202,000 words from 254 adults (ages 18 and over). Since the ICE-data dates back to the early 1990s, the results might also lend themselves to an apparent time analysis of potentially ongoing language change in SingE.

The data from both sets were manually coded for the realization of subject pronouns. The corresponding data set is called nessubpro. All in all, 3,225 tokens were extracted from the child corpus (2,899 realized, 326 zero) and 17,325 tokens from the adult corpus (16,543 realized, 782 zero). Therefore, the data constitute a high imbalance not only in token frequencies between the small and the large classes (zero versus realized) but also between the child and adult tokens. We introduce how our PrInDT approach deals with this double imbalance in data sets in Section 4.5.2.

The aim of our analysis is to find prediction rules for the use of subject pronouns (realized versus zero) by means of extra- and intralinguistic variables similar to those introduced in Table 1 but extended by the level adults and different types of it (cf. Section 2.1.1).Footnote 1 Table 2 summarizes the variables, their levels, and the abbreviations used in the analysis. The imbalance between the child and adult tokens cannot be explicitly considered by classical statistical classification methods. Therefore, we only apply our PrInDT approach (cf. Section 4.5.2) to these data (cf. Section 5.2).

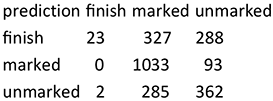

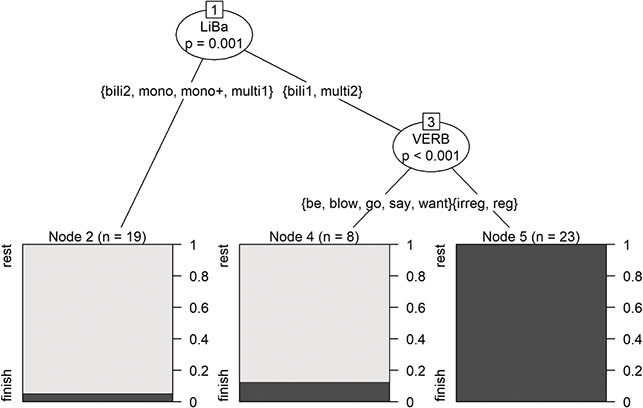

2.1.3 Past-Tense Marking

As a next sample study, we analyzed the child data introduced in Section 2.1.1 for the realization of past-tense morphology, again building and expanding on the study by Buschfeld (Reference Buschfeld2020). As part of the overall data set, the grammatical elicitation task TEGI, or Test of Early Grammatical ImpairmentFootnote 2 (Rice & Wexler Reference Rice and Wexler2001), is specifically designed to elicit past-tense endings in young children (for details on the exact methods of elicitation and data processing; cf. Buschfeld Reference Buschfeld2020: 90–94; 117–120). The data set is called past. Examples 1 and 2 illustrate SingE options to indicate past tense – that is, marked and unmarked verb forms as well as a very specific SingE variant, which marks verbs for completion of an action by means of finish as a postverbal marker (verb+finish).

1. Child: Then he wanted to climb a ladder to a chimney. Then the big bad wolf is in the pot. Then all the water splash and the carrot and the onion.

2. Child: He eat finish everything.

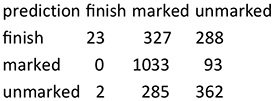

The forms in these examples are variably used by the Singaporean children and, again, are also typical of adult SingE and thus part of the input the children receive. The data include past-tense marking tokens by 29 male and female Singaporean children of different ethnicities, aged 2;5 to 12;1 (all bi- or multilingual) and 19 male and female children from England, aged 2;1 to 10;9 (bi-/multi- and monolingual). The aim of the analysis of these data is to find prediction rules for past-tense marking (marked, unmarked, and finish; i.e., the SingE variants) by means of extra- and intralinguistic predictors. The extralinguistic features considered as independent variables in the statistical analysis are, again, ethnicity (ETH), age (AGE), sex (SEX), linguistic background (LiBa), and mean length of utterance (MLU). The variables ETH, AGE, SEX, and MLU are defined as for the subpro data set in Section 2.1.1 (cf. Table 1). For LiBa, a finer distinction is used than in subpro. Here we distinguish the categories bili1, bili2, mono, mono+, multi1, and multi2, which are briefly explained as follows:

Monolingual (mono): Children are exposed to and use only one language before primary school.

Monolingual+ (mono+): Children who grow up monolingually and start learning a second language as late as the early school years.

Bilingual1 (bili1): Children are exposed to and use two languages from before the age of two.

Bilingual2 (bili2): Children are exposed to one language before the age of two and start acquiring/using the second language later than age two.

Multilingual1 (multi1): Children are exposed to and use three or more languages from before the age of two.

Multilingual2 (multi2): Children are exposed to and use two languages from before the age of two and start acquiring/using a third or fourth language later than age two.

For the past-tense study, we consider the variable VERB as an intralinguistic predictor. The levels of this variable are the nine most frequent verbs in the utterances of the children. For the remaining verbs, we consider the levels reg (regular) and irreg (irregular) only. As the nine most frequent verbs, we identified be, blow, come, do, find, go, make, say, and want, which mostly resulted from the specific elicitation tasks the children had completed. The PrInDT analyses of the data are presented in Sections 5.3 and 5.6; the underlying statistical methods are introduced in Sections 4.5.3 and 4.5.6.

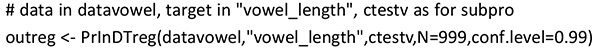

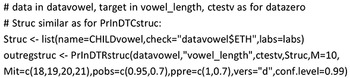

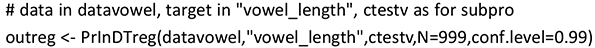

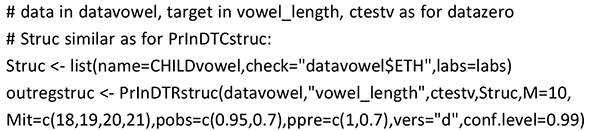

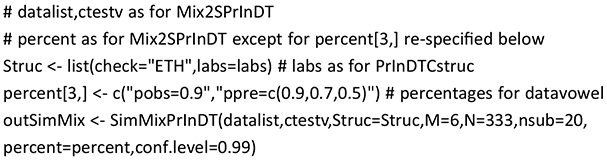

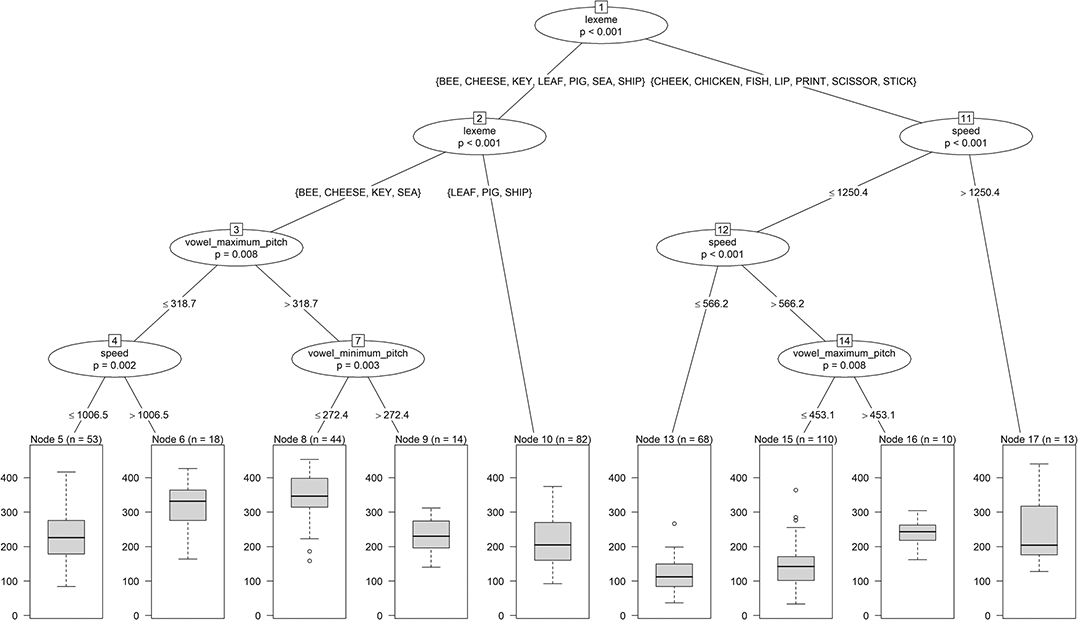

2.1.4 Vowel Length

Based on the data set called vowel, we study the realization of long and short vowel contrasts in L1 child SingE, again compared to the data collected in England and by means of extra- and intralinguistic predictors. Since earlier studies have reported various vowel mergers for L2 SingE (e.g., Wee Reference Wee, Schneider, Burridge, Kortmann, Mesthrie and Upton2004: 1024–1026), which are often not based on substantial, quantifiable empirical evidence but on auditory impression only, Buschfeld (Reference Buschfeld2020, chapter 8) measured quantity and quality contrasts between kit and fleece and foot and goose in her L1 child SingE data in Praat (Boersma & Weenink Reference Boersma and Weenink2018). She could not attest a clear vowel-length merger since phone duration was phonemic for all groups of children, even if contrasts between both pairs were slightly weaker in the Singaporean children than in the children from England (Buschfeld Reference Buschfeld2020: 253).

Again, we take her analysis as a starting point. The data is thus part of the corpus collected by Buschfeld (Reference Buschfeld2020) and was elicited by means of a self-developed picture naming task, which was geared toward triggering vowels in the lexical sets of kit-fleece and foot-goose (cf. Wells Reference Wells1982) in a playful way. The picture-naming task contained six pictures for each set, aiming to elicit [ɪ] and [i:] and [ʊ] and [u:] vowel tokens (cf. Buschfeld Reference Buschfeld2020). For our PrInDT application, we focus on vowel-length realizations in the kit-fleece productions of 22 male and female Singaporean children of different ethnicities, aged 2;6 to 12;1 (all multilingual) and 21 male and female children from England, aged 2;1 to 10;9 (multi- and monolingual). The exact vowel length of all kit and fleece tokens was measured in Praat (Boersma & Weenink Reference Boersma and Weenink2018). A total of 497 tokens, 225 of which were fleece tokens and 272 of which were kit tokens, are available for analysis. Due to the elicitation experiment, tokens are distributed on a limited set of lexemes (cf. Table 3; for details on the exact methods of elicitation and data processing, cf. Buschfeld Reference Buschfeld2020: 90–92, 117–120).

| Variable | Levels | Abbreviation |

|---|---|---|

| phone label | kit, fleece | phone_label |

| lexeme | for the fleece sound: bee, cheese, key, leaf, sea, cheek; for the kit sound: ship, chicken, fish, scissors, pig, lips, print, stick | lexeme |

| number of syllables | 1, 2 | syllables |

| word duration | ms | word_duration |

| speech rate | word_duration/syllables | speed |

| vowel minimum pitch | hertz | vowel_minimum_pitch |

| vowel maximum pitch | hertz | vowel_maximum_pitch |

| vowel intensity mean | db | vowel_intensity_mean |

| formant F1 at 50% of vowel length | hertz | f1_fifty |

| formant F2 at 50% of vowel length | hertz | f2_fifty |

| duration of phone left of the vowel | ms | phone_left_1_duration |

| duration of phone right of the vowel | ms | phone_right_1_duration |

| class of consonant left of the vowel | l, r, tʃ, voiced plosive (vd.p), voiceless fricative (vl.f), voiceless plosive (vl.p) | cons_class_l |

| class of consonant right of the vowel | ? (glottal stop), empty, nas (nasal), vd.f (voiced fricative), vd.p, vl.f, vl.p | cons_class_r |

Again, we apply different, more advanced statistical methods. In our main application of regression ctrees, the dependent variable is vowel length (in milliseconds, ms) of long- and short-vowel contrasts of kit versus fleece sounds, which we model by means of extra- and intralinguistic predictors. As extralinguistic predictors we consider SEX, ETH, AGE, LiBa, and MLU, as defined in Table 1. Additionally, we consider the country with values E = England and S = Singapore. As intralinguistic predictors, we employ the variables in Table 3.

In Sections 3.2.1, 3.2.2, 3.2.3.1, 3.2.3.3, and 3.2.4, we analyze these data by means of classical statistical methods. Our PrInDT analyses of the data are presented in Sections 5.4 and 5.6. The underlying statistical methods are introduced in Sections 4.5.4 and 4.5.6.

2.2 Linguistic Landscaping on St. Martin

Another study we build on for introducing our full set of PrInDT approaches is the recent investigation of linguistic landscapes on the Eastern Caribbean island of St. Martin (Buschfeld, Weihs, & Ronan Reference Buschfeld, Weihs and Ronan2024). What makes the island exceptional for such an analysis is its small size but high degree of multilingualism. Factors such as strong migration flows and tourism have promoted this development, which, at their core, go back to times of European colonization and the division of the island into a French- and a Dutch-ruled part (for further details on its historical and linguistic development, see Buschfeld, Weihs, & Ronan Reference Buschfeld, Weihs and Ronan2024).

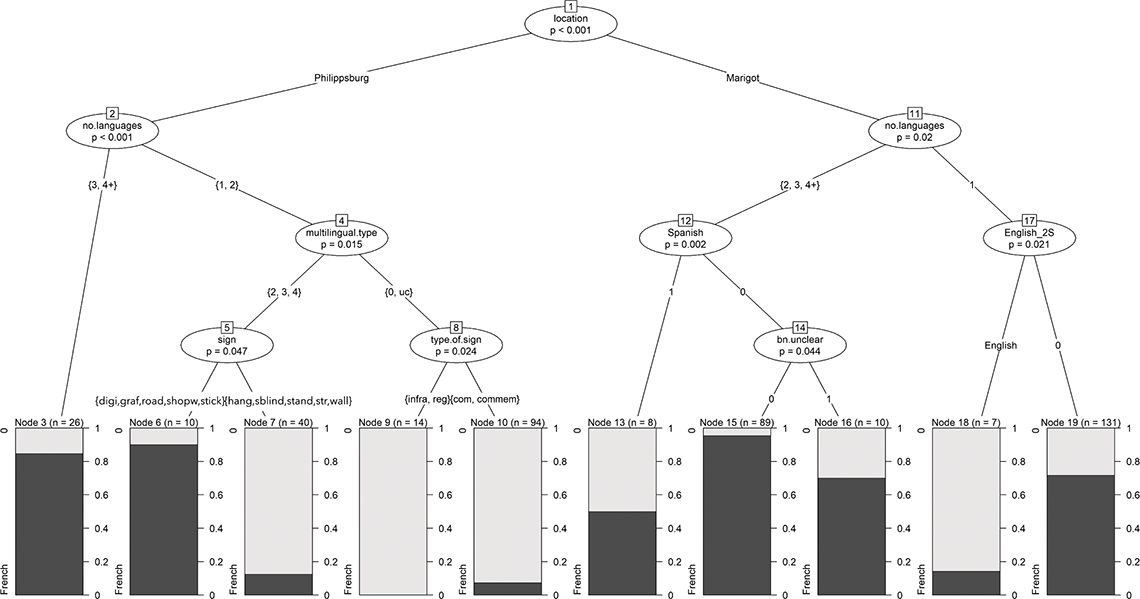

The data for this part of our PrInDT application were collected in Marigot, the capital of the northern, French side of St. Martin, and Philipsburg, the capital of the southern, Dutch part, in February 2020. On the one hand, we report and discuss the results of the study by Buschfeld, Weihs, and Ronan (Reference Buschfeld, Weihs and Ronan2024) with slight modifications only (cf. Section 5.5) to complete the picture of how the underlying idea of PrInDT can be applied to various linguistic contexts and objectives. On the other hand, we apply further PrInDT methods to the data set (cf. Section 5.6). The main aim is to unveil potential differences between the linguistic landscapes of the formerly French- and Dutch-colonized parts of the island. The data were collected in the commercial districts of the two cities. In both cities, pictures were taken of shop windows, street and road signs, memorials, graffiti, and any type of sign that would fall within one of the categories traditionally employed for the classification of signs – that is, infrastructural, regulatory, commemorative, commercial, and unauthorized (cf. Ziegler et al. Reference Ziegler, Schmitz, Uslucan, Pütz and Mundt2018).

The data set consists of 373 signs from Marigot and 372 signs from Philipsburg, and is thus fairly balanced in terms of the number of signs from each of the two locations. We analyzed each individual sign (i.e., “any piece of written text within a spatially definable frame”; Backhaus Reference Backhaus2006: 55), in terms of languages it displays, regardless of the extent of the linguistic sample (i.e., from one-word units to longer stretches of text). The individual signs were then coded according to the characteristics presented in Table 4. Additionally, the researcher (two levels) who collected the data as well as the coder (three levels) were included as possible predictors for our three target variables (i.e., the presence of French, Dutch, and English as the three official and most frequently used languages in the respective parts of the island).

Admittedly, the coding scheme is complex, but we cannot go into all details here (as is also true for the previously introduced data sets), due to spatial restrictions and a focus on the statistical applications to be discussed in subsequent sections of this Element (cf. Buschfeld, Weihs, & Ronan Reference Buschfeld, Weihs and Ronan2024 for further details on the landscaping study). The corresponding data set is called land. For PrInDT analyses of the data, see Sections 5.5 and 5.6. The underlying statistical methods are introduced in Sections 4.5.5 and 4.5.6.

3 Setting the Statistical Background

Before we turn toward introducing the PrInDT approach and its different subversions and presenting our sample analyses of the different data sets in Sections 4 and 5, we briefly introduce R and the statistical approaches and principles on which PrInDT and its various subversions are based, with reference to the data sets introduced in Section 2.

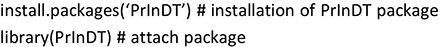

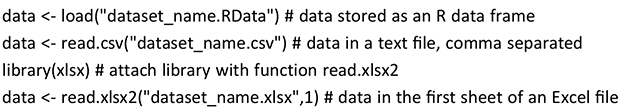

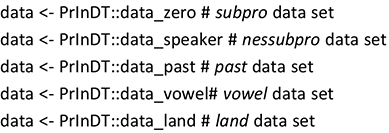

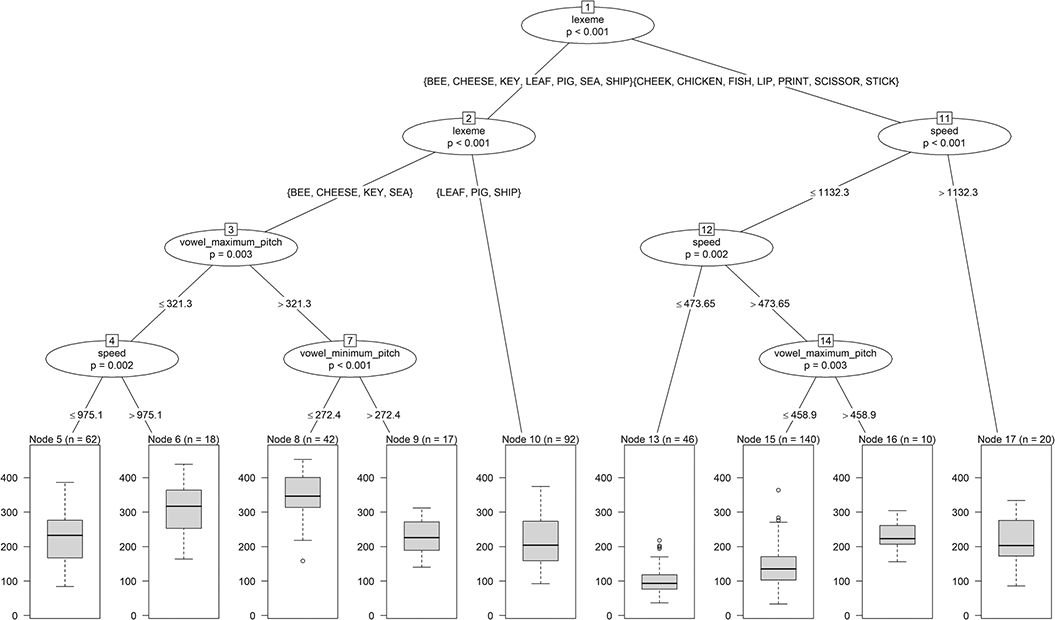

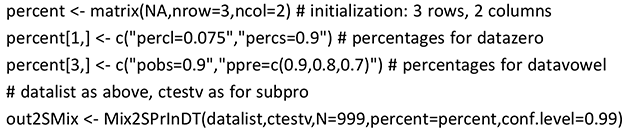

3.1 Steps before Using PrInDT in R: Input, Presentation, and Transformation of Data

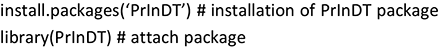

Before using the PrInDT package in R, it has to be installed and attached. Installation is necessary only once; attachment via the library function, however, is necessary in each new R session. In the following, we present the relevant R-code, which is accompanied by explanations wherever necessary, indicated by a hashtag (#).

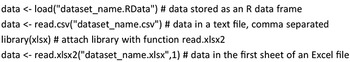

In a next step, the basic data have to be read in. Depending on the format of the underlying data set, this needs to be done in different ways. The data have to be assigned to a so-called data frame with a specified name; here we simply use data. The assignment operator is <-, as illustrated in the following examples.

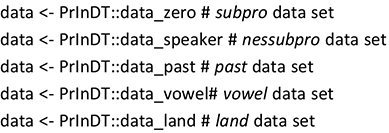

The PrInDT package contains random parts of the data sets introduced in Section 2, but not the full sets collected for the studies. Therefore, the R-code provided in Sections 3.2, 4, and 5 will not produce the reported results but only similar ones if applied to the data available in the package. The data in the PrInDT package can be loaded through the following codes:

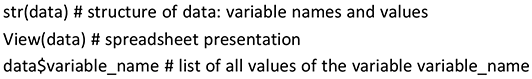

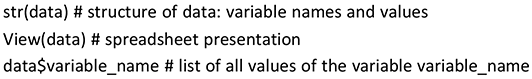

The data frame data consists of a matrix in which each row corresponds to an observation and each column to a variable. Therefore, the number of rows (first dimension) is equal to the number of observations, and the number of columns (second dimension) is equal to the number of variables. To check the content of data, different presentation methods may be applied, for example:

The $ sign separates the names of the data frame (data) and the respective variable (variable_name) in the data frame.

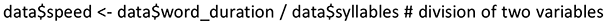

The functions of PrInDT are not programmed to automatically carry out any data transformations. If transformations of observed variables are employed as predictors in a PrInDT analysis, these must be carried out before the function call. In the vowel data set (cf. Section 2.1.4), for example, we need to define speed (per syllable) as the ratio of word duration and number of syllables, as done in the following call:

3.2 Statistical Methods

We now turn toward those statistical methods that our different PrInDT approaches are based on.

3.2.1 Descriptive Statistics

Descriptive statistics provides characteristics of the data, such as mean or median, tables, and graphs, for summarizing and illustrating distributions of observations of variables and their relationships. A multitude of methods exist in descriptive statistics. For univariate descriptive methods, the interested reader is referred to, for example, Levshina (Reference Levshina2015: 41, 69) and for bivariate methods to Levshina (Reference Levshina2015: 115).

3.2.2 Inferential Statistics

Inferential statistics provides tests for statistically validating hypotheses on distributions and on relationships between variables in a specific population. The main idea in statistical testing is that we consider a variable for which the distribution is assumed to be known except for some unknown property. For example, for the variable vowel_length in Section 2.1.4 a normal distribution might be assumed with an unknown mean. In such a situation, in statistics, a so-called hypothesis about the unknown mean is stated: “The mean μ is equal to a certain value μ0.” This hypothesis is then tested by assessing the distance between μ0 and the sample mean. The larger this distance, the less probable μ0 is. One of the most well-known statistical tests is the so-called (one-sample) t-test. In a t-test, the so-called t-statistic is calculated by

![]() with sd(

with sd(

![]() = standard deviation of the mean.

= standard deviation of the mean.

The probability of the calculated value of the t-statistic and of even more extreme values is called the “p-value.” If the p-value is very small (typically smaller than 0.05), we reject the hypothesis since the sample mean appears to be too improbable if the hypothesis is true.

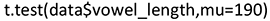

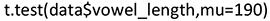

As an example, we consider the hypothesis that the population mean (called “true mean” in R) of vowel_length is equal to μ0 = 190:

Since the p-value is 0.027, the hypothesis is rejected. For vowel_length, the sample mean is 199.2779 and its so-called 95% confidence interval is [191.0651, 207.4907] (cf. the R-output). This confidence interval covers the true mean with 95% probability if the hypothesis is true. The hypothesized value 190 does not lie in this interval, which again supports that μ0 = 190 is rejected as potential population mean.

Such t-tests are also applied for testing the significance of an estimate of an unknown coefficient in, for example, a linear model (cf. Section 3.2.3.1). For conditional inference trees, we apply so-called two-sample tests that compare the distribution of a variable for different populations. This is discussed in Section 3.2.3.3.

3.2.3 Statistical Models: Regression and Classification

Statistical models represent the relationship between variables and combine both descriptive and inferential statistics. On the one hand, a statistical model attempts to represent the relationship between a so-called dependent variable (target) and one or more so-called independent variables (predictors) by means of a descriptive mathematical formula, for example, motivated by earlier theoretical assumptions or a prior analysis. On the other hand, it is an important goal of statistical modeling to test the so-called significance of the influence of potential predictors on the dependent variable. We distinguish two types of models: classification and regression models. For classification models, the dependent variable is categorical (i.e., represents categories, so-called classes); for regression models, the dependent variable is a continuous quantity.

Statistical models typically used in linguistic analyses include various kinds of linear regression models (fixed effects, mixed effects; cf. Section 3.2.3.1) and specific nonlinear models like logistic models in classification (i.e., for categorical dependent variables; cf. Section 3.2.3.2). These types of models rely on the idea of optimizing a fit criterion, namely minimizing the least squares error (for linear models) – that is, the sum of squared differences between observations and model values – or maximizing the probability that the model is true (for logistic models). For the following discussion of classification and regression modeling (Sections 3.2.3.1 and 3.2.3.2), we employ two of the data sets introduced in Section 2, namely the subpro data set for classification and the vowel data set for regression.

3.2.3.1 Linear Models

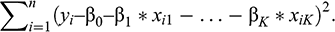

For linear modeling, we assume a linear relationship between a continuous target variable Y and K predictor variables Xj, j = 1, …, K. The idea is to look for the best model of the type

where i = 1, …, n is the observation number; β0, β1, …, βK are unknown model coefficients; and ε is a (normally distributed) model error. β0 is the model constant, called “intercept”; the other βs are the multipliers of the predictors, called “slopes.”

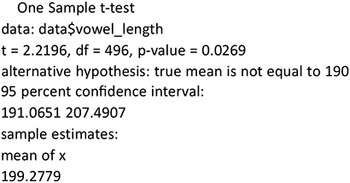

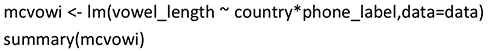

As an example, consider the data set vowel (cf. Section 2.1.4), in which vowel_length is the target variable and vowel_maximum_pitch and vowel_minimum_pitch are the predictor variables:

vowel_lengthi = β0 + β1*vowel_maximum_pitchi + β2*vowel_minimum_pitchi + εi.

In this model, the number of tokens (n) amounts to 497, and the number of predictors (K) is 2. To identify the best values for β0, β1, …, βK, the so-called least squares criterion is applied (i.e., we minimize the following squared distance between the target and the model):

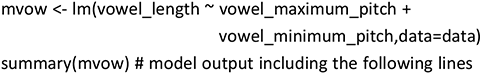

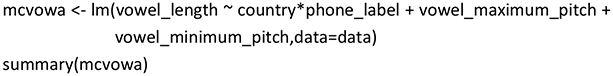

The values minimizing this criterion are the so-called least squares estimates. In R, linear modeling (lm) is applied by means of the following code:

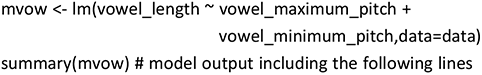

Estimate | Std. Error | t value | Pr(>|t|) | ||

(Intercept) | 187.72465 | 15.38522 | 12.202 | <2e–16 | *** |

vowel_maximum_pitch | 0.45828 | 0.04747 | 9.654 | <2e–16 | *** |

vowel_minimum_pitch | −0.57093 | 0.05992 | −9.528 | <2e–16 | *** |

The output of lm is assigned to the list mvow. The estimated coefficients (Estimate) and some indicators of their uncertainty (Standard Error, t-value, Pr(>|t|)) are part of the output of the summary statement. The column “Pr(>|t|)” gives the p-value of the t-test on the hypothesis “true value of the coefficient = 0.” This hypothesis should be rejected since otherwise the corresponding variable would probably not have any influence on the target, in our example on vowel length. Significance of rejection is typically defined by means of a threshold for the p-value. Such thresholds can be set differently. In our example, the threshold is set to 5%, which is often the case in linguistic modeling. This is indicated by “*” in the last column, “**” indicates that the p-value is smaller than 1%, and “***” indicates that the p-value is smaller than 0.1%.

In our model, all predictors are highly significant (i.e., their slopes are unequal to zero at the 0.1% level). Thus we can argue that the predictors have a significant influence on the target. The model can be interpreted as follows: vowel_length significantly depends linearly on both vowel_maximum_pitch and vowel_minimum_pitch. If vowel_maximum_pitch is increased by 10 Hz, then vowel_length, on average, increases by 4.6 ms (estimate for vowel_maximum_pitch = 0.458). If vowel_minimum_pitch is increased by 10 Hz, then vowel_length decreases by 5.7 ms (estimate = –0.571).

Unfortunately, this procedure is only well-defined for continuous predictors Xk. For categorical predictors, for each value except one, the so-called reference value, an additional constant is estimated, which is added to the constant of the reference value. Such a constant can be interpreted as a correction of the intercept corresponding to a value of the categorical variable, which is not the reference value. In R, the reference value can be set by the user. If set automatically, the alphabetically first value is selected.

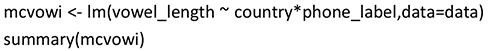

As an example, we consider the influence of two categorical variables, country (with two values E for England [= reference] and S for Singapore) and phone_label (with two values fleece [= reference] and kit) on vowel_length. Does vowel_length depend on country and phone_label? Are different increments necessary for the different values of these two variables?

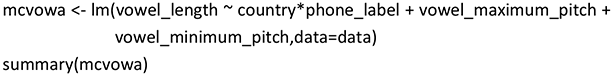

Sometimes a corrective constant depends on the values of other variables. In such cases, we talk about so-called interactions between variables. For example, for two categorical variables the estimate of the interaction of two specific values of the two variables corrects the estimated constants of the two values. This will also be illustrated by the example of vowel length influenced by the two variables country and phone_label. In R, interactions are coded by the notation X1:X2. The model term X1*X2 is a short notation for including the intercept, the individual effects of X1 and X2, and the interaction X1:X2 in the model. We employed the following R-code:

Estimate | Std. Error | t value | Pr(>|t|) | ||

(Intercept) | 266.916 | 8.048 | 33.164 | <2e–16 | *** |

countryS | −34.238 | 10.885 | −3.145 | 0.00176 | ** |

phone_labelkit | −109.734 | 10.734 | −10.223 | <2e–16 | *** |

countryS:phone_labelkit | 37.379 | 14.690 | 2.545 | 0.01125 | * |

This model can be interpreted as follows: vowel_length depends on both country (England versus Singapore) and phone label (fleece versus kit) in the following way: For Singaporean children (countryS), vowel_length is shorter than for children growing up in England (reference level) by (on average) 34 ms. For the label kit (phone_labelkit), vowel length is shorter by (on average) 110 ms than for the label fleece (reference level). However, the combination of country=Singapore and phone_label=kit (countryS:phone_labelkit) has an additional effect on vowel length of (on average) 37 ms. The overall effect of a specific combination of levels of the two predictors depends on the realized levels. For example, the combination (country=Singapore, phone_label=kit) has an overall effect on vowel length of the sum of all estimates (i.e., of [rounded] 267 – 34 – 110 + 37 = 160 ms), whereas, for example, the overall effect of the combination (country=England, phone_label=fleece) is represented by the intercept of 267 ms.

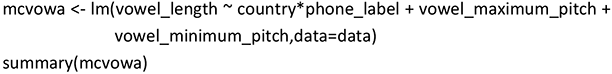

In a next step, we combine the two models mvow and mcvowi, as introduced earlier in this section, to a model that jointly represents dependencies on both continuous and categorical predictors:

Estimate | Std.Error | t value | Pr(>|t|) | ||

(Intercept) | 238.44426 | 15.12258 | 15.767 | <2e–16 | *** |

countryS | −32.32962 | 10.00153 | −3.232 | 0.00131 | ** |

phone_labelkit | −94.25573 | 10.03167 | −9.396 | <2e–16 | *** |

vowel_maximum_pitch | 0.37987 | 0.04306 | 8.821 | <2e–16 | *** |

vowel_minimum_pitch | −0.43172 | 0.05506 | −7.841 | 2.8e–14 | *** |

countryS:phone_labelkit | 35.86929 | 13.51012 | 2.655 | 0.00819 | ** |

As we can see, the estimated slopes are similar but not identical to the corresponding slopes in the models mvow and mcvowi. In particular, the effect sizes of the pitch variables considerably change by including country and phone_label in the model. Still, all effects are highly significant.

The effect of a variable that represents a random choice from a larger population, like the children in the vowel data set, is typically modeled by a so-called stochastic term (i.e., as a so-called random effect), resulting in a so-called mixed-effects linear model. In contrast, for predictors for which we only want to study values with limited and concrete representations, we look for so-called fixed effects. One example is the predictor country, for which we want the model to be valid only for England and Singapore. Mixed-effects linear models will be discussed further in Section 3.2.4.

3.2.3.2 Logistic Models

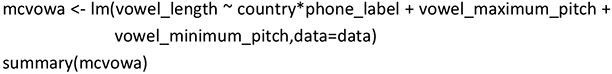

For classification problems, we will focus on binary target variables Y – that is, target variables with two categories, coded by the values 0 and 1. Still, logistic regression can also be applied if a target has more than two values (cf., e.g., Levshina Reference Levshina2015: 277). In so-called logistic regression, we do not model this target variable directly but the probability π1 of 1. To guarantee that this value lies between 0 and 1 (as a probability should), we use the model

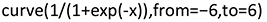

This so-called logistic model only takes values between 0 and 1. This is illustrated in Figure 1, which is generated by the following R-code:

Figure 1 Logistic model.

The estimation of the unknown parameters β0, β1, …, βK is called “logistic regression.” The corresponding R function is glm (generalized linear model) with the option family = binomial (with a binomial distribution being the sum of distributions with values 0 and 1 as for the classes in classification; for further details, see, e.g., Winter Reference Winter2020: 198). Categorical predictors are treated in the same way as linear regression (cf. Section 3.2.3.1). After estimation of the parameters, the probability π1 of 1 can be estimated for any values of the predictors. If it is larger than 0.5, class 1 is estimated; if not, class 0 is predicted.

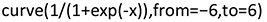

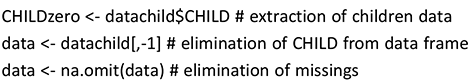

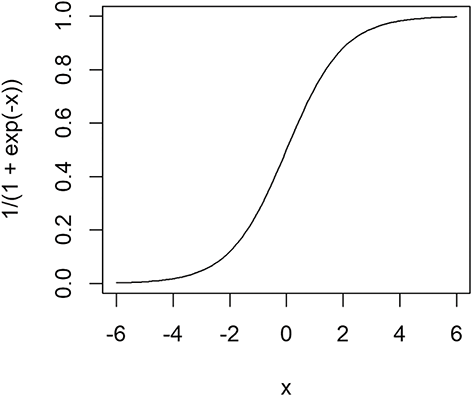

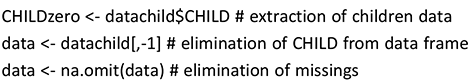

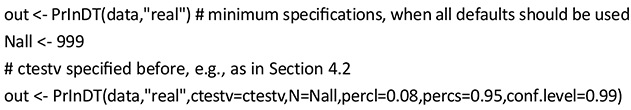

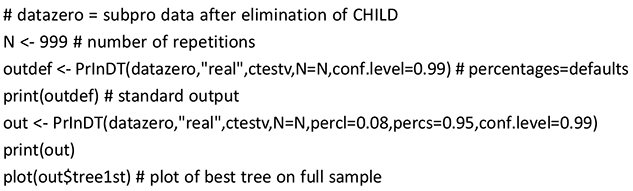

In the following code, we illustrate logistic regression by means of the subpro data set. In the R-code, we use the model specification “real ~ .,” which indicates that all variables in the data should be used as predictors except the target variable “real.” In R, elements of data frames are typically referenced by the index pair [i,j], meaning the ith observation of the jth variable. The expression [,-1] stands for the elimination of variable 1, which is the variable CHILD in our data. The full R-code appears as follows:

The results of our glm application can be found in Table 5. We can see that at a 5% level, only SEXmale, MLU2, MLU3, PRNit, PRNwe, and PRNyou_s are significant. The only continuous predictor in the model is AGE, and its estimated coefficient is close to zero and thus not significant. Moreover, the model includes constants for the categories of the discrete predictors, which are nonsignificant but cannot be eliminated since other constants of this categorical variable are (partly highly) significant. For example, for PRN, the dummies PRNit, PRNwe, and PRNyou_s are highly significant, but the dummies for the other pronouns I, she, and they are insignificant. The levels it, we, and you_s will also turn up as important predictors in the corresponding PrInDT trees (cf. Figure 5, Section 5.1).

3.2.3.3 Decision Trees

Decision trees follow quite a different approach. They aim to characterize the data structure by means of binary partition of the data space via “splits” of the data based on individual predictors. In each split, an “if” condition is specified that splits the data into two subparts: one where the condition holds true and one where it does not. For example, if Singaporean children are of Chinese origin, they tend to leave out subject pronouns; if they are not, they do not. This generates two subparts of the data: one for Singaporean children of Chinese origin, and one for children of various other origins. In decision trees, such rules are selected as follows. In classification (cf. Section 3.2.3), splits are chosen so that the frequencies of realizations of the levels that represent the target variable differ as much as possible in the generated subparts. In regression, splits are chosen so that the distribution of the continuous target variable is as different as possible in the subparts. In the subparts, follow-up splits again look for the best partitions. This leads to a series of so-called if-then rules.

In the so-called terminal nodes of a tree (i.e., in the nodes that are not split anymore), a decision is made about the prediction of the dependent variable, for example, whether under the conditions leading to the respective node zero subject pronouns are used or whether pronouns are realized. In a terminal node, the distribution of all values of the target variable, which are assigned to the node following the if-then rules, is displayed. For continuous targets, this distribution is illustrated by a box-plot (for an introduction to box-plots, see, e.g., Levshina Reference Levshina2015: 57); for binary targets the frequencies of the two classes are presented in different gray shades. As the predicted value generated by the terminal node, only one representative value is taken from these distributions – namely the most frequent class in classification and in regression the mean of all values of the target variable.

Up to this point, we have introduced the generation of decision trees from a solely descriptive perspective (i.e., without considering statistical inference). This is taken into account by so-called conditional inference trees (ctrees; Hothorn et al. Reference Hothorn, Hornik and Zeileis2006), in which the splits are chosen on the basis of p-values, which are generated to test the underlying hypothesis that the distribution of the target is equal in the generated subparts. The more significantly this hypothesis is rejected (i.e., the smaller the p-value), the more relevant the split. Therefore, the split with the smallest p-value is realized in the tree. The maximum p-value accepted for a split (i.e., the maximum significance level of the tree) is typically specified as 5% or 1%.

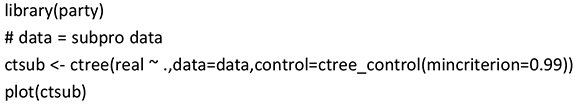

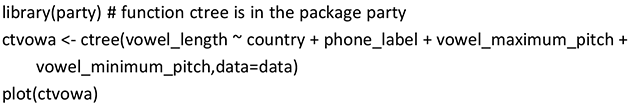

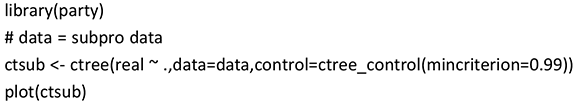

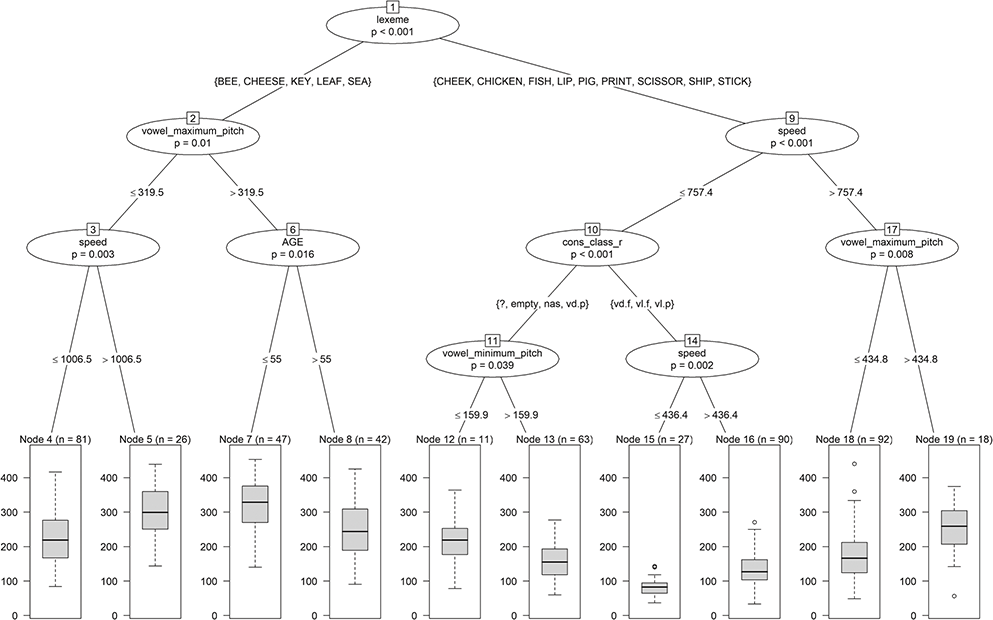

In the following code, we generate a ctree for the same set of predictors for which we generated mcvowa in Section 3.2.3.1 – that is, for the predictors country, phone_label, vowel_maximum_pitch, and vowel_minimum_pitch as potentially influencing the target vowel_length. The corresponding R-code reads as follows:

The plot of the tree in Figure 2 shows the decision variables in the nodes together with the p-value of the test on the equality of the distributions in the two subnodes. Which part of the data occurs on the left and on the right is indicated by the value(s) of the relevant decision variable on the lines connecting the nodes. For example, the first split at the top of the tree (i.e., phone_label) sorts fleece vowels into the left subnode and kit vowels into the right subnode. For continuous variables like vowel_maximum_pitch, splits are characterized by inequalities. For example, observations with vowel_maximum_pitch >319.5 Hz are part of the right split, while the rest of the values go into the left split (Node 2).

Figure 2 Tree for vowel length.

As an example for an if-then rule of the tree in Figure 2, we report the rule for Node 3. If phone_label=fleece and vowel_maximum_pitch ≤ 319.5 Hz, then vowel_length is predicted as the mean of the lengths of all vowels for which these two properties are true.

Another important property of a decision tree is that it automatically identifies interactions between predictors (cf. Section 3.2.3.1). For example, the complete left part of the tree is only valid if phone_label=fleece (i.e., the following splits depend on this first condition). The decisions in the left and the right part of the tree are typically different so that, in our example, the effects of vowel_maximum_pitch and vowel_minimum_pitch depend on the value of phone_label. Therefore, interactions are not explicitly but implicitly modeled in decision trees.

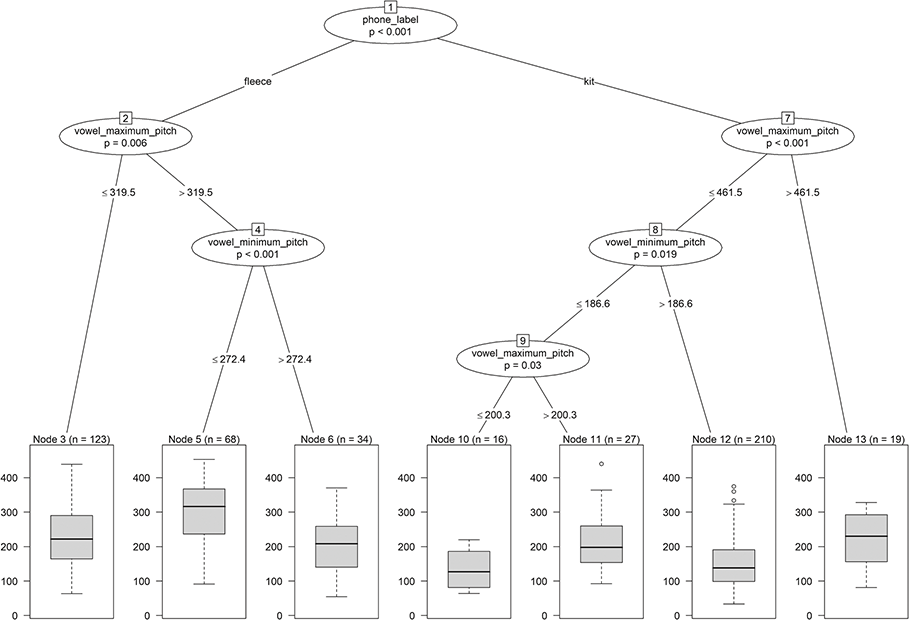

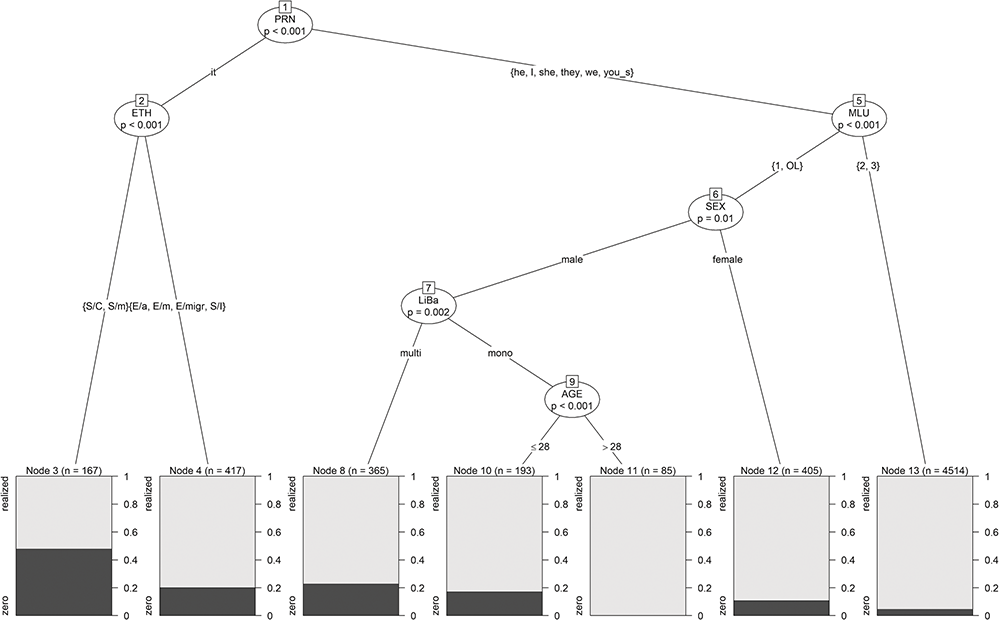

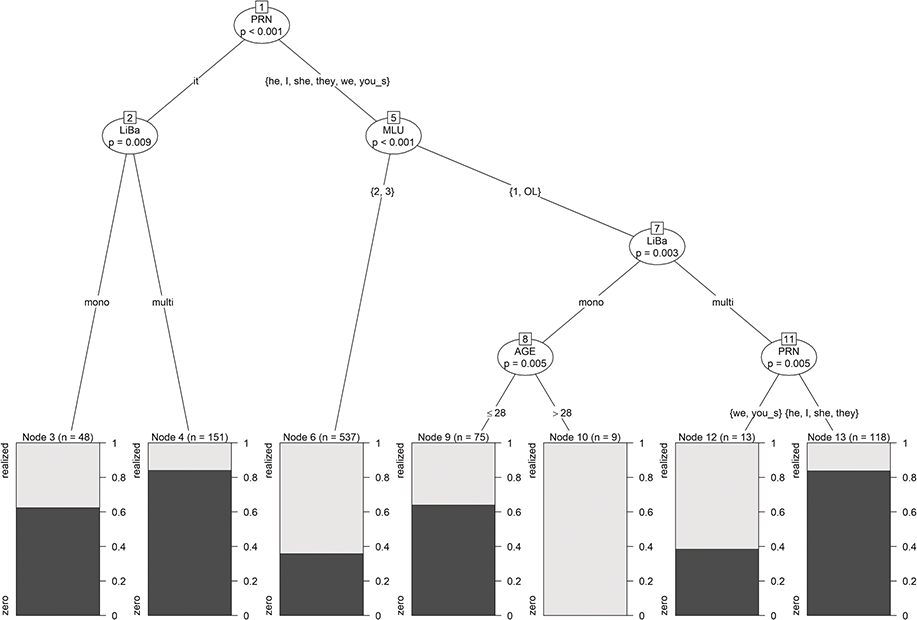

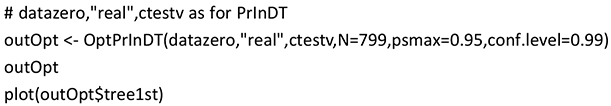

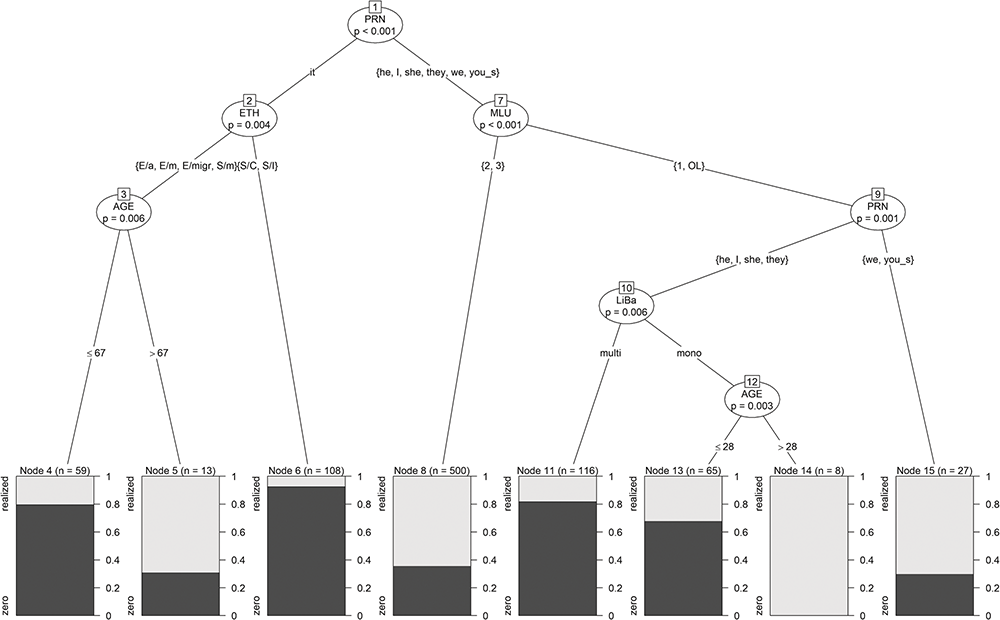

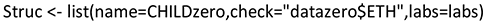

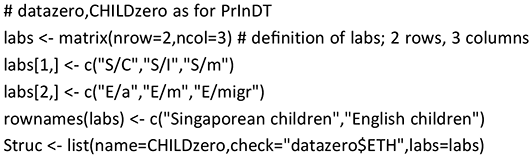

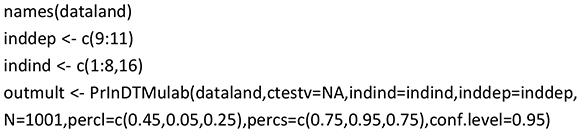

In a next step, we generate a decision tree for the classification problem for which we built a logistic regression model in Section 3.2.3.2 (i.e., for the realization of subject pronouns). The tree is based on the following R-code:

The control parameter mincriterion states that the maximum significance level in the splits should be 1 – 0.99 = 0.01. The standard value for this parameter would be 0.95. This would generate a much larger and more complex tree, which is why we lowered the significance level for ease of illustration and interpretability.

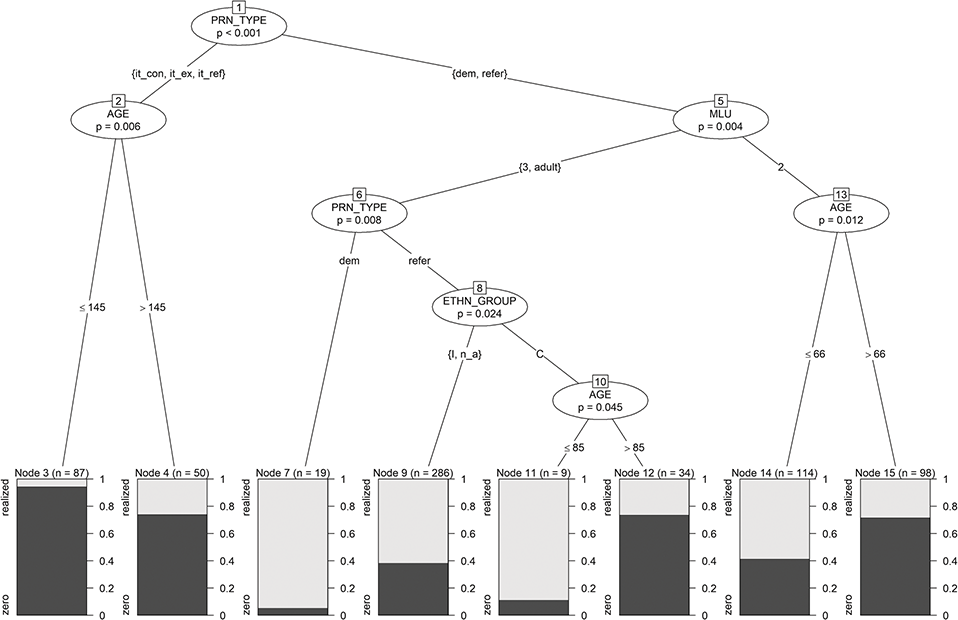

In the terminal nodes of the tree in Figure 3, the shares of the two classes zero (in dark gray) and realized (in light gray) are displayed. In addition to those predictors, identified as significant by the logistic regression model (cf. Section 3.2.3.2), the tree includes splits in the variables ETH and LiBa, which were only significant at the 10% level for logistic regression, and even a split in AGE, which was insignificant for logistic regression. This finding shows that trees identify other kinds of significant relationships. This is also discussed by Gries (Reference Gries2020). He points out that trees might even be unable to find the correct predictors-response relationship. The discrepancies in the findings of linear models and trees, however, are not too surprising and not necessarily due to one approach being superior over the other. They are rather due to different approaches focusing on different statistical relations and thus may produce slightly different findings. Basic linear modeling, for example, assesses the significance of the influences of given predictors (main effects), whereas decision trees mainly identify (significant) interactions between these predictors. In the best case, these two kinds of models complement each other (cf. Tagliamonte & Baayen Reference Tagliamonte and Baayen2012 for a corresponding discussion). For us, decision trees have the advantage that their “if-then” rules are much easier and more straightforward to interpret than the linear combinations of linear models, in particular for novice users of statistical methods.

Figure 3 Tree for subject-pronoun realization.

An often-used generalization of decision trees are random forests. They consist of many trees generated by resampling (i.e., by taking different random subsamples from the observed sample and estimating the tree based on these samples; cf. Section 3.2.6). This is a recognized and often used method to counteract overfitting (i.e., that the model fits the training data extremely well but does not match the behavior of the overall population). By leaving out parts of the observed data for model construction, the validity of the models can be evaluated on these left-out parts of the data (test sample). In this Element, we utilize the idea of random forests to identify the best model. The optimization criterion we employ is “accuracy on the full sample,” combining the accuracies on the training sample (fit) with the accuracy on the test sample, which represents the predictive power of the model. In our approach, we take into consideration Gries’ (Reference Gries2020) criticism that a summary of the trees of a random forest might be inadequate. Such a summary might consist of the most representative tree according to a distance measure between the trees (cf. Laabs et al. Reference Laabs, Westenberger and Inke2024; i.e. the tree which is closest to all other trees). Instead, we evaluate the individual trees of a forest to identify the best tree (cf. Section 3.2.6) – that is, we do not employ a summary of all trees of a forest, but use the forest only as material to identify the best tree by evaluating an accuracy measure.

3.2.4 Model Accuracy

There is no question that the aspects discussed in the preceding sections are all relevant for model selection. However, it ultimately strongly depends on your research questions and hypotheses which models are best suited for the respective analysis, but often more than one approach is suitable for the envisaged investigation. It may thus be sensible to not simply choose the first available approach or simply the approach most “hip” in the discipline but to compare different models, not only in terms of their results but, in particular, also their accuracies. While linguists with strong statistical expertise, of course, consider such aspects and model accuracy in their approaches, model validation still comes up short in many linguistic investigations (cf. Buschfeld, Leuckert, et al. Reference Buschfeld, Leuckert, Weihs and Weilinghoff2024). This is why our approach stresses the importance of evaluating model accuracy. In the following sections (Sections 3.2.4.1–3.2.4.2), we therefore introduce model accuracy for regression and classification models.

3.2.4.1 Regression Models

Linear and nonlinear regression models rely on the idea of optimizing a fit criterion, namely minimizing the least squares error (for linear models; i.e., the sum of squared differences between observations and model values) or maximizing the probability that the model is true (for logistic models). Therefore, such models are called “globally optimal” since they optimize criteria like the goodness of fit on the full data set. Accordingly, linear and nonlinear regression models come up with measures for the overall accuracy of the model that are related to the construction of the models. One of the most prominent measures is the

The more total variance of the target explained by the model (i.e., the closer R2 is to 1), the better the model fit.

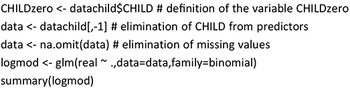

In the following code, we compare the R2 of the models we looked at in Section 3.2.3.1. For the model mvow, we get an R2 = 0.20, and for the model mcvowa, we get an R2 = 0.36. Therefore, the additional categorical predictors added to mcvowa increase the goodness of fit by 0.16. The R2s are automatically included in the output created by the following summary statement:

Aside from the estimations shown in Section 3.2.3.1, the summary statement creates the information “Multiple R-squared: 0.3645.”

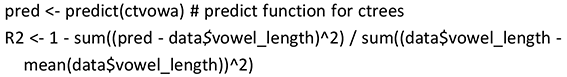

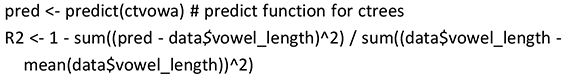

Let us finally compare the R2 of the regression tree ctvowa presented in Section 3.2.3.3 with the R2 for the regression model mcvowa. R2s of decision trees have to be calculated explicitly, which can be done by means of the following statement:

This leads to an R2 = 0.35, which is similar to the R2 = 0.36 for the corresponding linear regression model mcvowa.

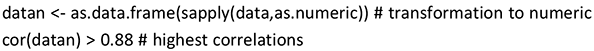

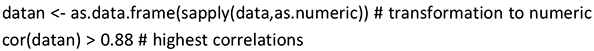

Overall, the model fit of all these regression models is not satisfactory since the R2s are so low. However, we could use the other possible predictors in the vowel data set to improve model quality. Unfortunately, if we use all available predictors, predictors with partial overlaps in their levels (e.g., MLU and AGE, both relating to the children’s linguistic development) influence each other in ways that make model estimation and interpretation difficult. Therefore, we decided to eliminate such correlated features (for a definition of a correlation matrix, cf. Levshina Reference Levshina2015: 134). To identify the highest correlations, we first transformed all factor levels into numerical values to be able to use “standard correlation” and then calculated the correlation matrix by means of the following R-code:

This identifies the correlations between MLU and AGE and between ETH and country as highest. Therefore, we decided to leave out MLU and ETH from further analyses.

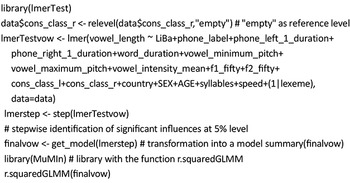

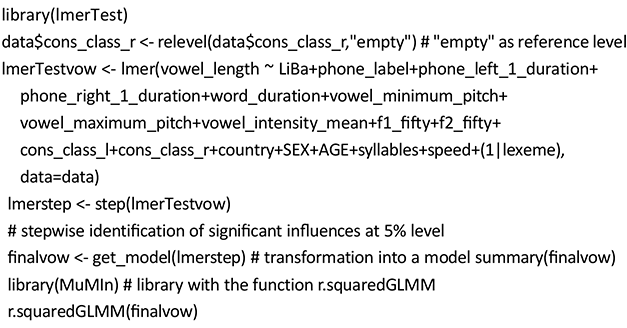

On this data set, we applied the mixed-effects models introduced in Section 3.2.3.1. We start with a model including the stochastic factor lexeme. To apply a stepwise function to identify the significant influences, we utilized the library lmerTest and the following R-code:

The parameter estimates can be found in Table 6. For this model, we have to install the package MuMin to generate the so-called marginal R2m for the model with fixed effects only and R2c, the so-called conditional R2, for both the fixed and the random effects together. This produces the goodness of fit measures R2m = 0.4110 and R2c = 0.6068.

Specifying a stochastic effect for the intercept by (1|lexeme) generates estimates of individual intercepts for each observed value of, in our case, lexeme. These estimates can be illustrated by means of coef(finalvow)$lexeme and can be used as intercepts for values of the lexeme used in the training set of the model. For new levels of the random effects, no such coefficient is available and only the fixed-effects part of the model can be used for prediction. On this basis, the relevant R2 for the goodness of prediction (cf. Section 3.2.6) is R2m = 0.41, which is not much higher than the R2m = 0.36 of mcvowa.

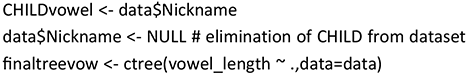

In what follows, we will compare the estimated model from linear mixed-effects regression with a regression tree estimated on the full sample concerning model structure and accuracy. In the estimation of this tree, we include lexeme as a fixed-effect factor and exclude CHILD, which we assume to be stochastic (for a more detailed discussion of stochastic factors in decision trees, cf. Section 4.5.1).

This leads to an R2 of 0.4745, which is higher than R2m = 0.4110 but lower than R2c = 0.6068 for the corresponding linear mixed-effects model finalvow, presented previously. As Figure 4 illustrates, lexeme initiates only the first (root) split in the tree model, separating long vowels (at the left) from shorter ones. Only “Cheek” appears to be misplaced when following standard pronunciation. Such splits are admittedly less detailed than estimating individual increments, as in the linear model. However, as can be seen, the tree identifies a (natural) grouping of the lexemes. Overall, the linear mixed-effects model and decision tree have quite a different structure and thus give very different insights, which might be interesting to compare and combine. The first model only estimates main effects, whereas the second model mainly estimates interactions. For example, cons_class_r and vowel_minimum_pitch only play a role if lexeme is not {Bee, Cheese, Key, Leaf, Sea}. For a comparison of these models with our optimized regression trees, see Section 5.4.2.

Figure 4 Tree for vowel length.

For the sake of completeness, we would like to mention that if we included the stochastic term (1|CHILD) in the estimation of the linear mixed-effects model, the goodness of fit measures would be R2m = 0.4047 and R2c = 0.6542 and the estimates of the fixed effects would be similar, but not identical, to the model without (1|CHILD).

In general, a plot like the one illustrated in Figure 4 is easy to interpret and normally suffices for a straightforward interpretation of decision trees. We ignore the bad fit here, as the focus is simply on the interpretability of the graph. The effects of the predictors of a (mixed-effects) linear model and their dependencies can be visualized by means of various plotting aids in R – for example, by means of the package sjPlot for Data Visualization for Statistics in Social Science (Lüdecke Reference Lüdecke2024).

For logistic regression models, too, a mixed-effects variant exists (cf., e.g., Winter Reference Winter2020: 267). Furthermore, scholars have worked with Bayesian generalizations of the function lmer. For reasons of restrictions in space, we will not further discuss the Bayesian approach here, in particular since it is not relevant for the presentation and discussion of our approach (but see, e.g., Levshina Reference Levshina, Schützler and Schlüter2022 for a comparison of Bayesian and frequentist models, and Winter & Bürkner Reference Winter and Paul-Christian2021 for an application of Bayesian mixed-effects models).

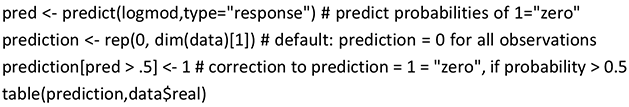

3.2.4.2 Classification Models

For classification models, various methods exist for measuring accuracy. In linguistic studies, overall accuracy is often used since it is a straightforward and easy-to-apply method, even for statistical novices. The underlying formula is simple and overall accuracy thus easy to calculate:

overall accuracy = (number of correct predictions) / (total number of observations).

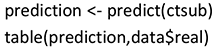

We will illustrate this with reference to the logistic regression model logmod (cf. Section 3.2.3.2), for which class coding is carried out alphabetically: 0 = realized, 1 = zero. To calculate the overall accuracy, a comparison of predictions and observed values of the target is needed. We consider the so-called confusion matrix for a detailed comparison of the observed classes and their predictions. In this matrix, each column sum equals the number of actual observations in the class indicated at the top (e.g., 5618 = 5600 + 18 observations for realized in the confusion matrix provided as follows). Each row presents the numbers of predictions of the class indicated in the left column for each class indicated in the top row. For example, the class zero is correctly predicted in 19 cases, but also incorrectly in 18 cases instead of the class realized.

prediction | realized | zero | |

0 | 5600 | 509 | # 0 stands for "realized" |

1 | 18 | 19 |

Therefore, the overall accuracy is 0.914 (= (5600 + 19) / (5618 + 528)). At first glance, this is a very good result. However, it is misleading since while the large class realized is nearly always correctly predicted, the smaller class zero is underpredicted (i.e. in only 19 of 528 cases is zero correctly predicted; cf. our discussion of this common linguistic problem in Section 1). Therefore, this model is inadequate for prediction.

Unfortunately, for unbalanced classification problems, decision trees often neglect the smaller class, too. This, again, is demonstrated by means of a confusion matrix for the tree ctsub, which corresponds to the logistic regression model logmod:

prediction | realized | zero |

realized | 5618 | 528 |

zero | 0 | 0 |

Since for the tree ctsub the smaller class zero is never predicted, this model is inadequate for prediction, too. As stated in the introduction to this Element (Section 1), this problem was one of the main reasons and motivations for the development of the PrInDT approach (cf. Section 4.1 for further discussion).

3.2.5 Model Interpretation

Another important aspect of statistical modeling is the interpretability of the resulting model. Sometimes statistical models may predict results that go against all earlier linguistic assumptions and theoretical approaches. This may imply two things: Either the model is accidentally wrong in whatever it predicts or earlier theoretical assumptions are. When starting the collaboration, we encountered such a case in the modeling of the Singapore data and thus decided to make interpretability a major aspect of our approach. As further elaborated on in Section 4.2, this procedure is also an interesting means of validating earlier theoretical assumptions, and our observations suggest that restricting trees in their possible combinations of values might be a valid option to avoid an accidental misinterpretation of data and results. A tree chosen for analysis must be fully interpretable in order for us to compare the model to earlier results from similar research questions or put to the test existing theoretical approaches (for details, cf. Section 4.2). In general, we would claim that interpretability is a prerequisite for the assessment of model validity (cf. Gries Reference Gries2020 for a discussion).

Unfortunately, interpretation is only easy and straightforward for comparatively small trees, since the interaction of many “if-then rules” makes trees rather difficult to process and understand. The size of the tree can easily be controlled for ctrees, since we can manually set the significance level, which indirectly determines the size of the tree. (The lower the significance level, the smaller the tree; cf. Section 3.2.3.3.) This is also true for linear models: The lower the maximally accepted significance level, the smaller the model.

Still, the question remains of which tree should be interpreted. Should one use the tree based on all observations? Or do “better” trees exist? We suggest that only models which are fully interpretable and come with high accuracy should be interpreted. High accuracy, however, is relative, and different scientific disciplines would set different standards here. This question has not yet been conclusively discussed by linguists. Winter (Reference Winter2020) and Sonderegger (Reference Sonderegger2023) turn toward this problem for regression models and suggest that an accuracy of 0.7 would be unusually high for a linguistic study (Winter Reference Winter2020: 77) and that stochastic effects often account for major parts of rather high accuracies (Sonderegger Reference Sonderegger2023: 278–279). In the social sciences, 0.5 seems to be treated as an acceptable level (Fernando Reference Fernando2024). We would agree that every model with an accuracy below 50% should be scrutinized. For classification models, experience has shown that accuracy rates of 70% and higher can be treated as very reliable and strong. The generation of models with high accuracy is at the core of our approach, which is why we will discuss strategies for optimizing the accuracy of ctrees in Section 4.3. Please note that we use the term accuracy in a broader sense than usual, since we aim for the optimization of a combination of goodness of fit and predictive power (cf. next section).

3.2.6 Real Prediction with Statistical Models: Resampling

Another shortcoming of many linguistic studies that make use of inferential statistical methods is that they do not consider real prediction (i.e., the transferability of the results beyond the observed sample to a wider population; cf. Buschfeld, Leuckert, et al. Reference Buschfeld, Leuckert, Weihs and Weilinghoff2024). For the prediction of observations of the target variable that were used for model construction, we hope for a result close to the observed value of the target since the model was constructed to represent these observations as accurately as possible. However, strictly speaking, it would be even more interesting to construct models that are valid also outside the observed sample – that is, which can be generalized to a wider population (e.g., Singapore children in general and not only those included in the sample). To get an idea about the predictive power of a model beyond the sample used for model construction, statisticians have used different kinds of so-called resampling methods for nearly 60 years (cf., e.g., Lachenbruch & Mickey Reference Lachenbruch and Mickey1968 for leave-one-out crossvalidation, Efron Reference Efron1979 for the bootstrap method, Politis et al. Reference Politis, Romano and Wolf1999 for subsampling).

In resampling, a random part of the observed sample is excluded from model construction, and for this so-called hold out the predictive power is tested by the comparison of the predicted and observed values of the target. By repeated resampling, it is ensured that each part of the overall sample is part of at least one hold out so that a measure of predictive power can be constructed based on the overall sample of observations.

In our PrInDT approach, we slightly adapt this procedure for the construction of optimal models. Typically, repeated resampling leads to many different models representing the relationship between target and predictors. The entirety of such models is traditionally referred as “ensemble.” In statistics, such ensembles are generally used for model assessment. In our approach, we employ ensembles to identify the best tree according to a problem-adequate accuracy criterion to be introduced in detail in Section 4.1.

Another important aspect to consider when looking for models with high predictive power is the danger of so-called overfitting of the training set (i.e., a too-perfect fit on the training set that makes the generalization of results to other parts of the population difficult). It is, therefore, of crucial importance to avoid overfitting (i.e., to generate models with high predictive power; cf. Section 4.3 for further comments on avoiding overfitting).

3.2.7 Two-Stage and Interdependent Models

Let us, finally, introduce two more advanced statistical modeling techniques used in linguistics, which motivate our approach. The approach called MuPDAR(F) (Multifactorial Prediction and Deviation Analysis using Regression/Random Forests), introduced by Gries (Reference Gries, Flach and Hilpert2022), distinguishes two kinds of speakers: the reference speakers (RSs), which are employed for constructing a first model, and the target speakers on which the first model is tested. MuPDAR(F) involves four steps:

i. A regression or classification model is estimated for the reference speakers (RSs).

ii. If this first model is good (enough), the target variable is predicted for the target speakers (TSs).

iii. The TSs’ actual target value is compared to the prediction of the first model.

iv. A second model is estimated with a response indicating the correctness of the prediction of the first model. The response may be either binary (prediction correctly or not) or numerical (probability of the correct TSs’ choice estimated by the first model in case of classification or by the difference of actual and predicted values in case of regression). The second model uses the same predictors as the first model but with the corresponding values of the TSs.

This kind of modeling includes two estimation stages (i and iv). This way, the properties of the TSs leading to deviation of the observed target values from the prediction by the first model can be confirmed by the second model. This is extremely valuable for analyses where two groups of speakers are compared, since differences between the behavior of the two groups can be revealed. Two-stage modeling is also one of the ideas of our PrInDT approach for estimating interdependent models (see Sections 4.5.6 and 5.6; for an application, see Section 5.4.2).

Studies investigating the interdependencies of particular features are extremely rare in linguistics. One exception is Larsson et al. (Reference Tove, Plonsky and Gregory2021), who discuss the great potential of interdependent models for corpus linguistics. However, in most studies of varieties of English, characteristics are investigated in isolation and either listed as sets of particular features in overview collections of World Englishes (e.g., Kortmann et al. Reference Kortmann, Lunkenheimer and Ehret2020 and the two edited volumes by Kortmann et al. Reference Kortmann, Burridge, Mesthri, Schneider and Upton2004 and Schneider et al. Reference Schneider, Burridge, Kortmann, Mesthrie and Upton2004) or as individual studies. The relationship between, say, the realization of local phonological features and morphosyntactic features (i.e., the question whether a speaker who makes strong use of local phonological features also strongly employs local grammatical characteristics) is underresearched so far. Interdependent models enable testing hypotheses of relationships between multiple dependent and independent variables. In their application, Larsson et al. (Reference Tove, Plonsky and Gregory2021) focused on whether the dependent variables for their research question are influenced solely by the independent variables or whether they also influence each other. This is a common question for studies with more than one dependent variable. A typical modeling approach is a combination of linear models, referred to as “structural equation models,” as in the R-package lavaan (Rosseel Reference Rosseel2012) used in Larsson et al (Reference Tove, Plonsky and Gregory2021). Our PrInDT approach offers an alternative for the investigation of interdependencies between classification and regression models by means of optimized decision trees. In particular, we consider whether the dependent variables are influenced solely by the independent variables or whether they also influence each other (cf. Section 4.5.6).

4 PrInDT: Prediction and Interpretation of Decision Trees

As discussed in the preceding sections, this Element of World Englishes aims to address the shortcomings of many current statistical approaches used in linguistics and, in particular, in World Englishes research (cf. Sections 1–3). We exclusively employ and discuss decision trees since they are easy and straightforward to interpret, in particular when compared to other models such as mixed-effects models. As already addressed in Section 3.2.3.3, decision trees are easy to plot and their graphic representations illustrate so-called if-then rules that are intuitive and straightforward to interpret. Understanding mixed-effects models requires various kinds of plots for the interpretation of effects and predictor dependencies (cf. Section 3.2.3.1). We further focus on ctrees (Hothorn et al. Reference Hothorn, Hornik and Zeileis2006) since the size of these trees can be easily controlled by the specification of a significance level (cf. Section 3.2.3.3). In our PrInDT approach (Weihs & Buschfeld Reference Weihs and Buschfeld2021a, Reference Weihs and Buschfeld2021b, Reference Weihs and Buschfeld2021c), we aim to identify the best ctree from a variety of potential trees generated by different resampling methods. As the accuracy criterion, we always employ the balanced accuracy for classification and R2 for regression on the full sample (cf. Section 4.1).

Looking through textbooks on statistics for linguists, it is striking that regression models are intensively discussed in all of them. Decision trees are commonly used, in particular in variationist linguistics (cf. Tagliamonte & Baayen Reference Tagliamonte and Baayen2012) and mentioned in the books by Levshina (Reference Levshina2015) and Gries (Reference Gries2021). However, how to identify optimal trees (i.e., the option of comparing individual trees and selecting the best one) is never really dealt with. Instead, ensembles like random forests are proposed for the assessment of variable importance at the expense of interpretability since many trees cannot really be interpreted together because the interaction of the full set of “if-then rules” in many different trees is very difficult to assess. Furthermore, we saw in preliminary pilot investigations that single trees might exist that have an even higher predictive power than their ensembles. This might be due to the fact that ensembles generally also include trees with low accuracy, which decrease the overall accuracy of the whole ensemble. Thus, in what follows, we will mainly focus on individual ctrees.

4.1 Model Selection: Accuracy Revisited

In linguistics, ctrees are commonly determined based on the full set of the observed data, and in classification their accuracy is estimated without taking into account the different sizes of the classes (cf. Section 3.2.4). To avoid models that underpredict the smaller class and improve the generalizability of trees (i.e., the validity of their results beyond the observed data and thus for a wider [speech] community), we have developed the PrInDT approach for both classification and regression models (cf. Section 3.2.3).

Model selection is an important task in statistics. The main discussion revolves around how to weigh complexity/interpretability of a model against its accuracy. A well-known simple measure for complexity is the length of a model (Rissanen Reference Rissanen1978) measured, for example, by the number of coefficients in a linear model or the number of nodes in a decision tree. The question is how large, and thus complex, we would want a model to become to improve its accuracy, at the expense of straightforward interpretation. This question relates to the notion of overfitting of models – that is, which fit too closely or even exactly to their training data, so that they cannot make accurate predictions on any data other than the training data.

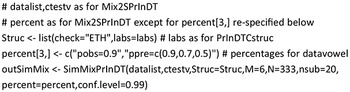

In our PrInDT approach, we decided to weigh accuracy and interpretability against each other and optimize the accuracy of models still keeping them small enough and thus easy to interpret. For ctrees, it is reasonably easy to restrict model size by means of an external parameter, namely the maximum significance level of a split (cf. Section 3.2.3.3). In most of our analyses, it appeared to be adequate to set this level to 1%. For models with this maximum significance level, we then aim for optimizing their accuracy. However, it needs to be kept in mind that for a higher maximum significance level (e.g., 5%), accuracies might be higher but may lead to overfitting. For a more detailed discussion of how to avoid overfitting, see Section 4.3.