Introduction

What you are about to read is the story of the attempt by physicists, economists, statisticians and mathematicians to explain how markets work based on concepts and tools taken from statistical mechanics. Shifting the focus of analysis from individuals to aggregates of individuals is the crux of this attempt.

It is well known that individual maximising behaviour – whether of utility or of profit – is the basis for theoretical construction, enabling both partial and general equilibrium conditions to be derived. The market works because it is driven by maximising behaviour.

Shifting the perspective from the individual to the aggregate means that the end point of this interpretation is no longer to argue that an equilibrium exists, but to show that the macroscopic stability of a combination of variables – prices, incomes, rents and so on – is possible. If classical mechanics supported the construction of the economics of individuals, statistical mechanics proves useful to the economics of aggregates.

The application of statistical mechanics in economics began as a conceptual construction and later became a mathematical-statistical one, with numerous logical-empirical implications. Its long and fascinating history initially involved isolated scholars conducting niche research, became the subject of broader debate in the second half of the twentieth century and finally gave rise to a new discipline – econophysics – at the beginning of this century. Econophysics runs in parallel to mainstream economics, and its conceptual assumptions are unlikely to bring the two disciplines together.

Econophysics is based on and has grown with statistical mechanics. Its seeds were sown by research carried out at the turn of the twentieth century, when statistical mechanics was becoming established and Vilfredo Pareto and Louis J. B. Bachelier independently published work on income distribution (Pareto, 1896–1897) and option pricing (Bachelier, 1900). Earlier still, sociophysics, the ‘average man’ of Adolphe Quetelet and the ‘social physics’ of Auguste Comte, prefigured econophysics, but it was the kinetic theory of gases, as set out by James Clerk Maxwell, Ludwig Eduard Boltzmann and subsequently Josiah Willard Gibbs, that led to statistical mechanics. The latter had been around for about a century when, in 1995, the physicist H. Eugene Stanley first introduced the term econophysics at a conference on socio-economics organised by physicists in Kolkata, India.

Economists greeted the probabilities and innovations provided by statistical physics cautiously, for many years clinging to classical mechanics. The reluctance persisted until a growing number of isolated researchers began to study broad aggregates, including monetary aggregates, the distribution of income and wealth and the prices of financial products. Markets were viewed as large ensembles (the term introduced by Gibbs) of agents and studied as entities in their own right, rather than on the basis of the behaviour of the individuals within them. Introducing his textbook on statistical mechanics, Sethna wrote: ‘Statistical mechanics allows us to solve en masse many problems that are impossible to solve individually’ (Sethna, 2021: 49). Anchoring economics in statistical mechanics makes it possible to tackle problems that economic individualism and microscopic analysis preclude.

The study of aggregates immediately required a form of representation that did not always coincide with a normal or Gaussian distribution. Other, often asymmetrical, distributions were introduced, to represent markets where mean and variance were unknown, based on the analogy between particles in a gaseous or liquid system and agents in a closed market. Energy exchanges between particles, as studied by kinetic theory, and the motion of molecules in a liquid system provided concepts that could be imported into economics and finance. And not only concepts but tools as well, taken from statistical mechanics and the study of particles, were applied to economic and financial phenomena.

The construction of economic and financial analysis from statistical mechanics was not merely a metaphorical exercise or a simple analogy from one discipline to another but involved a radical rethinking and reinterpretation of phenomena. Just as particles in a closed system reach a point of thermal equilibrium by exchanging energy in the Maxwell–Boltzmann ideal gas model, so agents in a market reach, through exchange, a distribution of wealth and income that can take a variety of forms, ranging from normal (Gaussian) to the more common skewed distributions. And the initial endowments are irrelevant.

Exchanges between agents, driven by talent, skill and information, are such that they lead to a probabilistic distribution with concentrations of wealth on the one hand and widespread poverty of income or wealth on the other. From a statistical mechanics perspective, it is superfluous to interpret the factor driving exchange; what matters is describing and comparing the actual results. Unlike other approaches, statistical mechanics assumes that market mechanisms determine distribution based on the repetition of transactions.

The final distribution does not result from the initial inequality but is the product of exchanges between agents enriching one side and impoverishing the other. The probability of an agent retaining the same initial endowment after a series of exchanges or investments is very low, and the probability that the endowment changes is very high. This result is what most distinguishes the application of statistical mechanics to finance and economics.

Statistical mechanics may have taken almost a century to gain acceptance in economics, but it was a century rich in conceptual insights and theoretical innovations. Econophysics, and with it the study of complexity, took up the legacy of this long journey, becoming by the end of the twentieth century an independent field of economic and financial research, inspired by statistical mechanics.

A single thread links the theoretical innovations of the early twentieth century with the econophysics of the latter part of the century. The focus on aggregates – ultimately attention to large markets, the replacement of the deterministic by the probabilistic view, the acceptance of random behaviour and recognition that certain phenomena are only visible at the macroscopic level – becomes the constant feature of a research programme painstakingly taking shape until it becomes econophysics (Garibaldi & Scalas, 2010).

The programme was based not only on concepts but also on shared analytical tools. In particular, the treatment of non-Gaussian and heavy-tailed distributions in price series, income and wealth distributions and other social phenomena represents the approach associated with econophysics (Shubik & Smith, 2009: 10). The focus is on non-Gaussian distributions because they can probabilistically express the inequality of interactions between individuals. Indeed, heavy-tailed distributions allow for extreme and exceptional events, and this compels us to change the commonly accepted view of economics as a science of averages underlying economic equilibrium. As Benoît Mandelbrot (2009: 59) argues, it is precisely the assertion that the normal or Gaussian distribution prevails that gives economics the precision that brings it closer to classical physics. To admit that economic phenomena correspond to other skewed distributions, which admit randomness and reduced precision, is to enter the realm of economics and finance reinterpreted by statistical physics.

The emergence of a new, broad field of research is neither sudden nor unexpected. It is the result of insights, years of trial and error and often sporadic research with no follow-up. However, even if these efforts seemed fruitless at first, they have gradually contributed to the emergence of a new field of research, helping to define its objectives and boundaries and to establish its internal coherence. Part I of this Element is devoted to a century of initially fragmented, isolated and generally neglected research that slowly became a research programme in econophysics. This only became apparent at the end of the twentieth century. The attention paid to the use of statistical mechanics in the 1930s was undoubtedly considered impromptu and extravagant. However, in a long-term perspective, the work has shown itself to embody courageous foresight.

The birth of a new scientific field is never deterministic. The sporadic reflections mentioned so far ultimately created a cultural context that was conducive to the emergence and recognition of the new discipline. Part II of our journey is devoted to the new field of econophysics, originating at the start of the millennium. Its history is as yet brief, only a quarter of a century, but its wealth of contents and ideas suggests that the discipline is developing fast. Econophysics is characterised by a scientific enthusiasm that has become contagious, leaving few indifferent to its future.

Part I A Long Road (1896–2000)

1 The Fundamentals

Statistical Mechanics and the Focus on Aggregates

The story begins at the turn of the twentieth century, when research by Maxwell and Boltzmann on the behaviour of gas molecules in a closed container coincided with the lengthy debate on probability that was animating the social and political spheres, where considerations of population and wealth distribution were familiar. Probability crossed over into the natural sciences, physics and the social disciplines, stimulating a conceptual and methodological transformation known across these fields as the probabilistic revolution (Krüger et al., 1990). In physics it led to the foundation of statistical mechanics (Ehrenfest & Ehrenfest, 1990) and elsewhere challenged the deterministic faith behind natural and social analysis, providing glimpses of new knowledge about the interaction of elements (whether particles or agents).

Two dates are symbolic of the transformation of physics: 1870, with the birth of statistical mechanics, a new theory or ‘new kind of knowledge’ in the words of James Clerk Maxwell (see Harman, 1998); and 1926, with the shift of attention to quantum mechanics, coinciding with Erwin Schrödinger’s wave function and its probabilistic interpretation by Max Born (see Krüger, 1990). It was precisely in the 1920s that the emergence of these new branches of knowledge also ‘contaminated’ political economy.

The behaviour of particle aggregates studied according to the kinetic theory of gases, the central concern of the earliest applications of statistical mechanics, aroused the curiosity of some economists and statisticians who sought analogies with the behaviour of individuals in contexts where large numbers are present and able to carry out transactions – of any kind – with each other. Even in general terms, the market lent itself to such thinking, bringing kinetic theory into the vocabulary of economists and statisticians, who decided to pursue the analogy, however episodically. And this despite the fact that the world of physics in the 1920s had turned its attention away from particle aggregates towards quantum mechanics.

The meeting of the social and exact sciences was facilitated by the approach of the early probability theorists in relation to the study of gas particles. Maxwell, Boltzmann and Gibbs affirmed the existence of a correspondence between experimental data and probability distributions, without claiming that these distributions corresponded precisely with the state of the system. In its early years, the kinetic theory of gases recognised a degree of inaccuracy in determining the velocities or positions of the particles under study: the accurate macroscopic representation of the system coexisted with an approximate microscopic representation. This finding was taken up by the economists who sketched the first kinetic models of prices and incomes. Previously, liquids had suggested analogies with monetary liquidity (see Morgan, 2012: 172); now, gases came to be used not only metaphorically but also as conceptual tools to study economic phenomena.

The so-called marginalist revolution in economics was in its infancy when kinetic theory suggested studying ensembles rather than individual units. However, earlier classical and mercantilist thinking dealt with macro, not micro, quantities and variables. And it is precisely because income, wealth and money, that is, stocks and quantities, are relevant independently of their distribution among individuals that they were the first objects to be studied through the application of kinetic theory. This is an important point indicating that the application of statistical mechanics to economics, and later to finance, was never intended to spread over the whole of economics, but focused on the macroscopic analysis of large aggregates.

One idea in the kinetic theory of gases that economists liked was the notion of constructing a macroscopic representation on the basis of the binary transactions taking place between agents (as between particles), according to the principle of the conservation of wealth and energy. Although the number of gas molecules in a closed container is far larger than the number of agents in the social sphere, if collisions recurring in a state of stationarity with unchanged energy produce stability or order, the same was thought to be possible with financial, economic or social variables.
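The mechanism described here, binary transactions that conserve total wealth, can be illustrated with a toy simulation. The sketch below is an assumption-laden illustration rather than a model taken from the literature discussed in this Element: the number of agents, the equal initial endowments, the rule that each pair pools its wealth and splits it at random, and the use of the Gini coefficient as a summary measure are all choices made here for the sake of the example.

```python
import random

def simulate_exchanges(n_agents=500, n_steps=100_000, initial_wealth=100.0, seed=0):
    """Random pairwise exchanges that conserve total wealth (illustrative rule)."""
    rng = random.Random(seed)
    wealth = [initial_wealth] * n_agents  # identical initial endowments
    for _ in range(n_steps):
        i, j = rng.sample(range(n_agents), 2)  # two distinct agents meet
        pooled = wealth[i] + wealth[j]
        share = rng.random()  # random split of the pooled wealth
        wealth[i] = share * pooled
        wealth[j] = pooled - wealth[i]  # the pair's total is unchanged
    return wealth

def gini(values):
    """Gini coefficient: 0 for perfect equality, close to 1 for extreme concentration."""
    xs = sorted(values)
    n = len(xs)
    weighted = sum((rank + 1) * x for rank, x in enumerate(xs))
    return 2 * weighted / (n * sum(xs)) - (n + 1) / n

wealth = simulate_exchanges()
unchanged = sum(1 for w in wealth if w == 100.0)

print("total wealth:", round(sum(wealth), 6))
print("Gini coefficient:", round(gini(wealth), 3))
print("agents still holding the initial endowment:", unchanged)
```

Although every agent starts with the same endowment and the total never changes, repeated exchanges alone produce a markedly unequal, stable distribution. Under this particular splitting rule the stationary distribution is approximately exponential, the analogue of the Boltzmann–Gibbs energy distribution; other exchange rules would yield other shapes.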

The shift of the (few) economists towards the macroscopic level, implicit in the analogy with statistical mechanics, was possible via a series of conceptual and methodological transformations during those years, in particular the spread of probabilities and their application to distribution curves. The economists who ventured into the terrain of statistical mechanics were among the first to advocate the use of probability in economics, although the probabilistic revolution did not occur until the 1930s (see Ménard, 1990; Morgan, 1990). As we shall see, probability distributions appeared in the study of the transition from micro- to macroeconomic conditions as early as the first years of the 1920s, shortly after Gibbs (1914) had demonstrated their relevance in the study of ensembles in thermal equilibrium.

The spread of probability influenced the use of important statistical concepts. As is well known, the great achievements of the statistical-probabilistic disciplines were obtained by assuming the principle of stochastic independence (and, conversely, dependence). Daniel Bernoulli, Pierre-Simon Laplace, Abraham de Moivre and Siméon-Denis Poisson made the principle of independence explicit, and it also features in the construction of Johann C. F. Gauss’s theory of errors. The principle was partially abandoned at the beginning of the twentieth century, due to Max Planck and Andrei Markov, who rejected independence in favour of stochastic dependence in the development of quantum physics.

Stochastic independence is relevant to economics because it allows data to be aggregated without the risk of bias due to correlations between individual variables. But it is precisely the interpretation of emergent properties, the result of correlations between variables, that forces us to replace independence with stochastic dependence. On the other hand, it is difficult to imagine the outcome of interactions between individuals acting in a market without some form of correlation or mutual distortion. And not only that. The asymmetric outcome of the distribution, which must be regarded as a collective phenomenon, can also be explained by such interactions.

An ensemble of variables must be represented by a distribution, be it normal or Gaussian, derived from the law of errors, one of the main legacies of the eighteenth century, or an exponential, gamma or power-law distribution and so on. What matters is the ability to represent the order of the particles as economic variables (incomes, prices, yields), including all the phenomena that could influence them. This is how phenomena observed in nature, including extreme phenomena, are represented.

The initial imports from statistical mechanics transformed classical physics. Not so economics, finance or the social sciences, where the concepts introduced at the beginning of the century stabilised and gained strength until the end of the century, when econophysics emerged as a new field of research.

In econophysics texts, the events most often cited as foundational for the discipline are the publication of the income distribution curve by Pareto and the use of Brownian motion in the study of financial option prices by Bachelier. Although not explicitly linked to contemporary developments in statistical mechanics, the two ideas, albeit diverse, were part of a wave of conceptual transformations. Pareto published his distribution curves in the Cours d’Économie Politique in 1896–1897 (previewed in an article in 1896) (Pareto, 1896–1897); Bachelier presented his thesis on financial speculation, Théorie de la Spéculation, which cited Brownian motion, in 1900 (Bachelier, 1900). In the history of ideas, the two theories had very different destinies: Pareto’s income curve immediately aroused curiosity and debate, while Bachelier’s thesis was not translated into English or republished until the 1960s, when it was rediscovered by Paul Samuelson.

Whatever the different publishing histories and reception of these two theories, econophysicists came to acknowledge them as fundamental to their discipline, essentially for two reasons. The first concerns the subject matter of both: the incomes received by an aggregate of individuals and the prices of a graded set of options exchanged by individuals, both being aggregates of variables. The fact that both the incomes received by a set of individuals and the prices of the options exchanged by individuals were regarded as random variables made it possible to adopt the approach of statistical mechanics and to represent these aggregates of variables probabilistically by means of distribution curves resulting from interactions between individuals. This will be the common thread running through all the attempts to apply statistical mechanics to economics and statistics in the twentieth century, right up to the creation of the discipline of econophysics.

Vilfredo Pareto’s Stylised Fact

Pareto was sceptical about the analogy with the kinetic theory of gases, and paid little attention to statistical equilibrium until his late works, yet his income distribution curve is one of the pillars of econophysics. Known for its negative slope, its heavy tail at the bottom and the concentration of the highest incomes in the hands of the few, the Pareto curve is familiar to statisticians, physicists and economists, as well as to a wider audience of non-specialists.

Most often compared to Léon Walras as the founder of general economic equilibrium and the animator of the Lausanne School, Pareto, an engineer by training, was not only an economist whose optimality and other important insights are remembered in the history of the discipline but also a political scientist, best known for his theory of the circulation of elites, and the author of a massive treatise on sociology (Pareto, 1916).

Something similar to the circulation of elites can be found in his income distribution, since he does not exclude circulation in both directions, towards higher and lower incomes, although these movements are concentrated in the middle of the curve and the middle-income brackets. The distributions at the top and in the heavy tail, that is, the highest and lowest (and more widespread) incomes, respectively, are more stable.

The income (and wealth) curve is a ‘stylised fact’ and as such represents a kind of empirical exception in Pareto’s thinking. Stylised fact or rule of thumb, he shows that the asymmetric distribution of income (and wealth) is repeated in countries and cities at different levels of development and over different time periods. Pareto presented his analysis first in an article in 1896 and then in Cours (Pareto, 1896–1897), where it immediately attracted international interest. The same interpretation of the income curve appears in later works (Pareto, 1906).

Interestingly, not all incomes are examined, but only those above the threshold (however low) of taxability. Lower incomes, often supplemented by subsidies and donations, are excluded because they lie outside the market and are not the result of commercial transactions.

Of Pareto’s three versions of the income curve, he preferred the first because of its greater coherence with the income data gathered. Let N be the number of income units above a certain income threshold x, A a positive scale parameter and α a parameter representing the slope of a curve expressed in terms of income frequencies. Pareto expresses the equation of the income curve as follows:

$N = \frac{A}{x^{\alpha}}$ (1896–1897, §958). A logarithmic representation of the curve is also provided and is useful to understand the decreasing distribution of income.


The second version of the curve corresponds to:

$N = \frac{A}{(x + a)^{\alpha}}$ (Pareto, 1896–1897: §961): a parameter a was added to restrict the range of incomes to above the survival threshold considered in the analysis. Clearly, increasing the constant a narrows the range of incomes considered, as if Pareto were primarily interested in studying the distribution of high incomes. The third equation (Pareto, 1896–1897: §958) approximates the income curve to a normal distribution by broadening the incomes, but was soon abandoned because of its discrepancy with the available data.


Pareto does not provide a version of the curve in terms of a probability distribution – with one exception at the end of the Cours (1896–1897: 416–419) – reiterating that the starting point can only be empirical. He concentrated instead on the logarithmic application. The slope of the curve depends on the much-debated value of the exponent α, which Pareto set at 1.5, considered to be sufficiently constant among different countries and historical periods. This value best fits the data observed by Pareto, giving us a universally valid rule of thumb. In the words of Mandelbrot (1960: 81), the negatively sloping logarithmic line represents the most significant Pareto law because the line refers to people with incomes only above the minimum level considered.
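The role of the logarithmic representation can be made concrete. Taking logarithms of a curve of the form N = A / x^α gives log N = log A − α log x, a straight line whose slope is −α. The following sketch, which uses synthetic rather than historical data, draws incomes from a Pareto law with α = 1.5 and recovers the exponent from the slope of that line. The sample size, the minimum income, the thresholds and the function names are assumptions made purely for illustration.

```python
import math
import random

def sample_incomes(n, alpha=1.5, x_min=1.0, seed=0):
    """Synthetic incomes whose share above x is (x_min / x) ** alpha."""
    rng = random.Random(seed)
    # Inverse-transform sampling; 1 - random() lies in (0, 1], so no division by zero.
    return [x_min * (1.0 - rng.random()) ** (-1.0 / alpha) for _ in range(n)]

def loglog_slope(incomes, thresholds):
    """Least-squares slope of log N against log x, where N counts incomes above x."""
    logs_x = [math.log(x) for x in thresholds]
    logs_n = [math.log(sum(1 for y in incomes if y > x)) for x in thresholds]
    mean_x = sum(logs_x) / len(logs_x)
    mean_n = sum(logs_n) / len(logs_n)
    cov = sum((u - mean_x) * (v - mean_n) for u, v in zip(logs_x, logs_n))
    var = sum((u - mean_x) ** 2 for u in logs_x)
    return cov / var

incomes = sample_incomes(20_000)
thresholds = [1.0, 2.0, 4.0, 8.0, 16.0]  # income levels at which N is counted
slope = loglog_slope(incomes, thresholds)

print("fitted slope of log N on log x:", round(slope, 3))
print("implied exponent alpha:", round(-slope, 3))
```

A simple least-squares fit is used here because it mirrors the straight-line reading of the logarithmic curve; it is not the only, nor necessarily the most efficient, way of estimating the exponent.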

The normal distribution was rejected not for logical but for empirical reasons since the observed data would not lead to a normal distribution. The error curve would not be applicable because the observed distribution has a cause and cannot be attributed to chance, as error theory suggests.

Individuals, even in a number of heterogeneous groups (based on income), can be represented according to a hyperbolic distribution of individual qualities or abilities (talent). The curve would thus represent a statistical equilibrium determined by the heterogeneity of individuals. Circulation, similar to the circulation of elites proposed by Pareto in the political sphere, is not excluded, but does not alter the complex configuration precisely because of the concentration in the middle range of incomes and of the curve.

The extremes – the highest and lowest incomes – remain stable, while shifts occur in the middle range of the income distribution. The negative slope of the curve is therefore solely due to the asymmetric distribution of individual talents. Heterogeneity forced Pareto to reject the assumptions of equiprobability: agents are not equiprobable in terms of income possibilities, nor does the imposition of an equal distribution help. Indeed, ‘human nature’ as the cause of the distribution of wealth (Pareto, 1896–1897: §957) means that what Pareto calls the economic organism, that is, the institutional structure, plays no role. The distribution of wealth, and hence of income, is the result of (family, market, price-mediated) transactions between individuals.

This is confirmed by a clear multifractal view of income distribution, which can be seen when he writes that when one draws the distribution lines for countries of different sizes, ‘it looks as if one were drawing a large number of crystals of the same chemical substance. There are large crystals, medium crystals and small crystals, but they all have the same shape’ (Pareto, 1896–1897: §958). This is a mercantile, non-institutional view of income distribution.

Pareto did not consider kinetic theory and probability when formulating his income theory, but the conclusions on the role of trade and markets are close to those adopted subsequently by econophysics. The distinction between income groups set out in the section added to the Cours is used in the Treatise to focus on a society organised into social groups, and this led Pareto to consider a possible analogy with kinetic theory, which is probabilistic by definition. Pareto introduced the analogy with statistical equilibrium from the kinetic theory of gases (1916: §2074) by considering how the actions of individuals compensate each other, resulting in oscillating states leading to a general equilibrium and a stable trend.

The analogy with gas theory is based on the observation that society comprises units (molecules) that are more heterogeneous than those that make up the economy (Pareto, 1916: §2079), also recognising the advantages of forming groups of individuals with similar incomes, interests and sentiments (Pareto, 1922: §1124). By comparing the group to a centre of energy, Pareto seems to refer to the future quantum theory.

Bachelier’s Random Walk

Identifying a criterion, if not a law, for the context-independent pricing of shares, Bachelier’s Théorie de la Spéculation (1900) is rightly regarded as a founding text of financial economics and econophysics. The randomness characterising the formation of option prices in Bachelier’s financial market, seemingly far removed from talent and other factors underlying income distribution, actually shares with Pareto the naturalness of the mechanism that would regulate the market. The main difference lies in the distribution of the variables, which is almost hyperbolic in the case of Pareto’s income and Gaussian in the case of Bachelier’s prices. The small option price movements observed over a short period are independent of the previous and current prices of the financial asset in question and are independent of the price movements of other options (Brownian motion). Precisely because price fluctuations depend on chance and are stochastically independent, and taking into account the central limit theorem, they can be traced back to an error curve with a Gaussian distribution. Further differences lie in the conceptualisation of motion and, above all, in the presence of short, stochastically independent movements.

Although the end point is a normal distribution, Bachelier profoundly innovated the approach to financial markets. The conceptual scheme required by the application of Brownian motion is very different from that of classical mechanics or dynamics. The particle under examination has a motion expressed not in differential but in probabilistic terms. By analogy, the price of an option has a probability distribution. The problem, therefore, is to identify the type of distribution that characterises a memoryless particle driven by a fluctuating force (i.e., white noise, or an osmotic force).

The equation representing a diffusion process expresses the probability, p, that the option is quoted at x′ at time t1 and at price x at time t1 + Δt, as follows:

![]() (Bachelier, 1900: 137), where


![]() (with H = constant) is the positive mathematical expectation of x at t = 1, here taken as a constant. Mathematical expectations of prices (Bachelier, 1900: 147), all of which depend on the square root of time, are statistically indistinguishable after a few steps, so it can be said that each price behaves like the others. The same result was clearly established by Einstein in 1905, although Einstein was unaware of Bachelier when he pointed out to scientists trying to measure the velocity of a microscopic particle that the average velocity in an interval t is inversely proportional to $\sqrt{t}$.


Later, Norbert Wiener fully defined Brownian motion as a two-dimensional process taking into account the position and velocity of the particle (Wiener, 1921; Genthon, 2020). Ideally, if the particle is moved by a fluctuating force, it assumes values entirely irrespective of its past history. Brownian motion represented in this way can therefore be considered Markovian. And Markovian, independent of past values, are the prices of Bachelier’s options. Equally, if we look not at the single motion of a particle but at a series of motions, we obtain a random walk where the independence of each step is balanced by the sum of the previous steps to arrive at the current position.
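The dependence on the square root of time can be checked numerically with the simplest possible random walk. The sketch below is only an illustration under stated assumptions: steps of +1 or −1 with equal probability stand in for small independent price movements, and the number of walks and the two horizons are arbitrary choices, none of which come from Bachelier’s text.

```python
import random
import statistics

def final_positions(n_steps, n_walks=2000, seed=0):
    """End points of n_walks independent walks, each made of n_steps moves of +1 or -1."""
    rng = random.Random(seed)
    return [sum(rng.choice((-1, 1)) for _ in range(n_steps)) for _ in range(n_walks)]

# Dispersion of the end point for two horizons, the second four times the first.
spread_100 = statistics.pstdev(final_positions(100))
spread_400 = statistics.pstdev(final_positions(400))

print("spread after 100 steps:", round(spread_100, 2))
print("spread after 400 steps:", round(spread_400, 2))
print("ratio of the two spreads:", round(spread_400 / spread_100, 2))
```

Each step is independent of those before it, yet the position is their running sum. Quadrupling the horizon should therefore roughly double the spread of end points, not quadruple it, which is the square-root scaling in its discrete form.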

In The Random Character of Stock Market Prices (1964), a volume that contains the first English translation of Bachelier’s Théorie de la Spéculation (Theory of Speculation), Paul Cootner points out that the density function assumed by Bachelier is continuously differentiable with respect to t, that it is possible to find first and partial derivatives with respect to prices, and that mean and variance are finite. Thus, in Bachelier’s world of option prices, infinitely divisible distributions – starting with the Gaussian – are considered.

In the same 1964 volume, Matthew F. M. Osborne points out that Brownian motion does not necessarily imply the absence of an underlying rational structure. While not denying the premise that the most likely value of the expected change in the logarithm of the price of a randomly chosen common stock at a random time is zero, Osborne argues that, under certain conditions and at certain times, it is possible to find a sample of stocks for which the expected change is slightly different from zero. Osborne’s conclusion that the stock market is a gigantic decision-making phenomenon (Osborne, 1964: 295), to which scale invariance can be applied, foreshadows the macroscopic approach of econophysics.

The random variables left over from Bachelier’s model express the joint risk of buying and selling shares. Because of their independence and their Gaussian shape, these variables make it possible, for a given time horizon t, to calculate a return as the result of many independent shocks which, according to the central limit theorem, give rise to a Gaussian distribution of returns. The next step is to transform a discontinuous probability into a continuous and therefore differentiable distribution, an operation that Bachelier was unable to carry out because he did not have the mathematical tools subsequently developed by Andrej N. Kolmogorov in 1931. However, the attempt to transform apparently random price movements into a normal distribution was clear.
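The central limit argument in this paragraph can be illustrated with a minimal simulation. This is a sketch under stated assumptions, not Bachelier’s own construction: the shocks are drawn from a uniform rather than a Gaussian distribution (to show that the Gaussian shape emerges from the summation itself), and the function names, sample sizes and seed are purely illustrative.

```python
import random
import statistics

def simulate_returns(n_returns=5000, shocks_per_return=200, seed=0):
    """Build each return as the sum of many independent, bounded shocks.

    Assumption: shocks are uniform on [-1, 1], not Gaussian, so any
    Gaussian shape in the result comes from the summation alone.
    """
    rng = random.Random(seed)
    return [
        sum(rng.uniform(-1.0, 1.0) for _ in range(shocks_per_return))
        for _ in range(n_returns)
    ]

def share_within(returns, k):
    """Fraction of returns lying within k standard deviations of the mean."""
    mu = statistics.fmean(returns)
    sigma = statistics.pstdev(returns)
    return sum(abs(r - mu) <= k * sigma for r in returns) / len(returns)

returns = simulate_returns()
one_sigma = share_within(returns, 1)
two_sigma = share_within(returns, 2)

# A Gaussian distribution puts about 68.3% and 95.4% of its mass
# within one and two standard deviations respectively.
print(f"within 1 sd: {one_sigma:.3f} (Gaussian benchmark 0.683)")
print(f"within 2 sd: {two_sigma:.3f} (Gaussian benchmark 0.954)")
```

The simulated shares should lie close to the Gaussian benchmarks even though no individual shock is Gaussian, which is the sense in which many independent shocks ‘give rise to’ a normal distribution of returns.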

The adoption of the Gaussian distribution was a hallmark of theoretical research in finance. It was widely used because of the simplifications it allows – such as in the Black–Scholes option price calculation – which are more applicable to finance than to economics.

However, Brownian motion has another characteristic placing Bachelier’s theory at the origin of econophysics. Measuring single particle properties allows these properties to be extended to several particles to determine a diffusion coefficient expressing volatility. In statistical physics, the average statistical properties of a single particle are equivalent to the statistical properties of an ensemble of particles (see Schweitzer, Reference Schweitzer2003: 40–41). As Mandelbrot (Reference Mandelbrot2009) has pointed out, the main point of applying Brownian motion to option pricing is that price increments are statistically independent and Gaussian, implying that the price itself is a continuous function of time with a stochastic component describing random fluctuations.

Lévy Flights

In the 1920s and 1930s, the mathematician Paul Lévy (Reference Lévy1925, 1937–1954, 1948–1965) investigated a class of random functions now called Lévy processes, which generalise Brownian motion and are characterised by steps that are stationary, statistically independent and stably distributed. The steps differ in length, however: those of Brownian motion are notoriously short, whereas those of Lévy flights can be much longer, creating real discontinuities. Although there is no evidence that Lévy was familiar with Pareto’s insights, Lévy flights bring Brownian motion closer to Pareto’s heavy tails, where the variance is infinite.
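The contrast between short Brownian steps and occasional very long Lévy steps can be made concrete with a small sketch. It rests on assumptions that are not in the text: Pareto-distributed step lengths with a random sign serve as a simple heavy-tailed stand-in for a stable law, the tail exponent 1.5 is chosen only for illustration, and all function names are hypothetical.

```python
import random

def largest_step_share(steps):
    """Share of the total distance travelled that is due to the single longest step."""
    sizes = [abs(s) for s in steps]
    return max(sizes) / sum(sizes)

def gaussian_steps(n, rng):
    """Brownian-like steps: Gaussian, so very long steps are practically absent."""
    return [rng.gauss(0.0, 1.0) for _ in range(n)]

def heavy_tailed_steps(n, alpha, rng):
    """Levy-flight-like steps (assumption): Pareto length (minimum 1), random direction."""
    return [rng.choice((-1.0, 1.0)) * rng.paretovariate(alpha) for _ in range(n)]

rng = random.Random(42)
n = 10_000
gauss_share = largest_step_share(gaussian_steps(n, rng))
levy_share = largest_step_share(heavy_tailed_steps(n, 1.5, rng))

print(f"largest Gaussian step as share of path: {gauss_share:.5f}")
print(f"largest heavy-tailed step as share of path: {levy_share:.5f}")
```

In the Gaussian walk no single step matters, whereas in the heavy-tailed walk one step can account for a visible fraction of the whole path, which is what the ‘real discontinuities’ of a Lévy flight amount to.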

Later, in his 1960 article ‘The Pareto-Lévy law and the distribution of income’, Mandelbrot (Reference Mandelbrot1960) links Pareto to Lévy in an effort to convince his readers of the usefulness of stable non-Gaussian distributions. Clearly, stability depends on the heavy-tailed portion, where the link to Lévy is evident. So Lévy can be used to add stability to the Pareto distribution.

Micro More Than Macro Insights: Quantum Mechanics

Statistical mechanics undoubtedly provides the main foundation of econophysics: concepts such as particle interactions, variations in velocity and heat transfer are central to the kinetic theory of gases, which has been adopted and adapted in economics and finance. By contrast, quantum mechanics – although often cited in modern econophysics – plays a less clearly defined role. Certainly, quantum mechanics provides a more thorough analysis of uncertainty in models based on statistical mechanics, bringing the analysis back to molecules (agents). In any case, the frequency with which it appears in econophysics papers is surprising. The elements of early quantum mechanics that are useful for understanding recent developments in econophysics are detailed in the following paragraphs.

With contributions from Max Planck, Niels H. D. Bohr, Werner K. Heisenberg, Erwin R. J. A. Schrödinger and others, quantum mechanics developed alongside statistical mechanics, introducing two essential elements into the origins of econophysics. The first concerned the nature of energy, no longer regarded as a continuous quantity that can be expressed statistically by the particles comprising the system being analysed. Energy became discrete quantities assigned to the analysed units on the basis of a probabilistic measurement. Although discrete, as a stochastic quantity (Mirowski, Reference Mirowski1989: 86) energy could not be measured with absolute precision. In fact, the concept of a wave function was used to describe the probability of finding a particle or quantity of energy in a particular state.

The second element concerned stochastic independence, which became dependence: molecules (agents) cannot choose their cell (income) without taking into account what happens to other molecules (agents). Thus, among other things, wave functions replaced collisions between particles and entanglement (connections) replaced interactions. Essentially, while gas particles are distinguishable from each other and thus metaphorically equated with agents, quanta, as granules of energy, are not distinguishable from each other (Costantini, Reference Costantini2004: 193). Probability is still central. The challenge that quantum mechanics poses to economics and finance seems to involve a re-conceptualisation of the market, characterised no longer by certain but by probable relationships.

In quantum mechanics, the search for the causes of a phenomenon is no longer crucial, because if no cause is apparent, it is simply postulated that there is no cause. This means that the laws of physics are indeterministic and can only be expressed in terms of probabilities (Jaynes, Reference Jaynes2003: 328). Unlike in statistical mechanics, where the causes of a phenomenon may be identified intuitively, in quantum mechanics ignorance of causes is translated into probabilities or, rather, probabilities overcome the problem of causes by elevating chance to a central role.

When moving from statistical to quantum mechanics, the analysis of aggregates or ensembles becomes more complex. This increased complexity stems from Heisenberg’s uncertainty principle: because the position and momentum of a quantum system cannot be determined simultaneously, such a system cannot be represented as a point in phase space. Furthermore, probability densities can take on different configurations and moments, so a second level of probability is required to account for the fact that the system can have a variety of quantum states, that is, positions, velocities, directions and other properties. This explains why ensembles in quantum mechanics are called mixed states, a kind of statistical mixture of different quantum states. Mixed states are immersed in uncertainty and are characterised by decisions made with incomplete information. To understand this characteristic of quantum mechanics, recall that the state of an ensemble corresponds to the set of values its properties take. One such property could be the list of molecules that make up the ensemble, giving the microscopic state of the ensemble. Examining this ensemble in isolation, we can measure and observe it in its deterministic evolution, as in kinetic theory. Unlike in classical mechanics, quantum states can overlap.

When we examine a quantum state, correlations between different parts of a quantum system become observable. This phenomenon is known as entanglement. In mixed states, however, a given subsystem or particle may belong to several quantum states simultaneously. As a result, the entanglement between two states gives rise to intrinsic uncertainties that are characteristic of the states themselves, rather than reflecting limitations in our knowledge of them. While the mixing of different states is an uncommon feature of classical mechanics, the entanglement of parts of states is specific to quantum mechanics. In a debtor–creditor relationship, a change in the state of one party immediately affects the other. Debt, like the price of an option, is a number, and the relationship of that number to a commodity. If in statistical mechanics a molecule can be compared to an agent, in quantum mechanics the analogue of the particle is a number describing a relationship. Reality becomes a numerical or mathematical reality, or rather a reality described by mathematically calculated probability amplitudes. But it is precisely the emphasis on numbers, which express discrete amounts of energy (quanta), that makes quantum mechanics more suitable for microscopic than for macroscopic analysis.

2 Early Attempts to Use Statistical Mechanics in Economics

Dealing with ‘Living Energy’

The curve put forward by Pareto immediately led Italian mathematicians, statisticians and economists to transform the asymmetric Pareto distribution into a probability distribution. In particular, in the 1920s, the mathematician Francesco P. Cantelli used the second version of Pareto’s equation to show that it could be expressed according to a frequentist interpretation (see Tusset, Reference Tusset2018). In Sulla deduzione di legge di frequenza da considerazioni di probabilità (On the Deduction of Frequency Law from Probability Considerations) in 1921, Cantelli compared the distribution of income to the distribution of velocities of gas molecules (Reference Cantelli1921: 89), the first explicit comparison of economic analysis to statistical mechanics, after the indirect analogy in Bachelier’s theory of speculation. The comparison was with a column of gas molecules in a closed container with total energy (kinetic and potential) taken as given. However, moving to the economic sphere, the problem arises of how to identify a quantity, functioning as a constant, that corresponds to the energy associated with the volume of gas.

This is the problem of André-Marie Ampère’s vis viva, which becomes living energy with the mathematician Guido Castelnuovo (Reference Castelnuovo1919). In his 1921 article, Cantelli (Reference Cantelli1921) considers the total utility derived from disposable income as a given, while the utility of individual agents varies as a result of exchanges. Utility is therefore like energy circulating in the system. Given the constraint adopted, Cantelli shows that the most likely distribution of income (source of utility) corresponds to the Pareto income equation

![]()

However, Cantelli does not identify the factors leading to this income distribution other than exchange (the market) itself, and ends up seeing chance as the determining factor. The attainment of an income, x, depends on a complicated interweaving of causes (activity, skill, competition, character of the individual, constitution, etc.), so that it seems to depend on chance (Cantelli, Reference Cantelli1921: 90). Although criticised (e.g. because measuring the total utility of income did not seem possible), Cantelli’s attempt was used in research on the probabilistic distribution of income in Italy and elsewhere between the two world wars.

Cantelli returned to the subject in 1929, assuming that the stock to be distributed was the number of working hours and that workers could choose how much to work, thereby determining their income. The stability of the distribution was guaranteed by the fact that wages were ultimately determined by the workers themselves. In this way, Cantelli sought to overcome the abstract nature of utility, replacing it with the total stock of working hours which corresponded to the stock of income. Recently, drawing on Cantelli’s 1929 article (Reference Cantelli1929), Jess Benhabib and Alberto Bisin (Reference Benhabib and Bisin2018: 1264, footnote 9) have argued that the relevant variable to consider is neither utility nor hours worked but, rather, total talent, which they regard as being distributed according to a multinomial probability across equiprobable income groups. In Cantelli’s thinking, the choice of hours was a simplified representation of the exchange mechanisms leading to the Pareto distribution.

Cantelli prompted the reflections of the economist and statistician Felice Vinci, who reformulated the second Pareto equation in terms of the density of the distribution curve (Vinci, Reference Vinci1921: 368). The probability of falling into an income segment, Vinci wrote, depended on two factors: personal qualities (talent) and numerous other definable institutional elements. Talent, useful for the acquisition of income, appeared to be concentrated in the higher income population. Institutional factors appeared as barriers to entry increasing with income. Translated into parameters, these factors enabled Vinci (Reference Vinci1921, Reference Vinci1924) to approximate an exponential distribution expressed by a Pearson-type V curve, otherwise known as an inverse gamma distribution, characterised by the presence of a heavy tail.

But Vinci’s conclusion was clear: the distribution of income depends on such a complex of causes that it seems to rely on chance (1921: 369). Chance here is not an imponderable but, rather, the outcome of many – perhaps an excess of – random and chaotic factors. A similar conclusion can be found in the work of Duncan K. Foley, who argues that the statistical equilibrium underlying income distribution is a chaotic process that tends to explore all possible patterns of income transactions (Foley, Reference Foley, Petri and Hahn2003: 102).

Another important contribution of the time was provided by the mathematician Luigi Amoroso (Reference Amoroso1925). Based on the second Pareto equation revisited by Cantelli and Vinci’s inverse gamma function, he focused on the parameterisation needed to include what Pareto had excluded, that is, lower income brackets. The result is a unimodal, rather than a (Pareto) zero-modal, curve. This gives a gamma distribution that can be written as the following density function:

![]() , where f(x) is the number of agents with an income ranging between x and x + dx, and

![]() ; α is a positive integer; x0 is a positive or nil integer; γ is a positive integer; and s is a positive integer such that p + s > 0. The Amoroso curve, still used today, is a generalisation of which the Pareto curve is a special case. If p = 1, Amoroso’s distribution is the same as Pareto’s, with zero-modal characteristics. As the value of p increases to 2, 3, 4, …, the peak makes its appearance, and the distribution takes on the well-known spinning top shape. According to the value and sign of the parameters, the gamma distribution lies between a normal and a Pareto distribution.

Remembered today in econophysics for his gamma distribution, Amoroso also applied kinetic gas theory to monetary aggregates. In 1924, he published a short, little-known article, ‘La cinematica in un mercato chiuso’ (‘Kinematics in a closed market’), which presented a monetary model he called ‘kinetic’ (Amoroso, Reference Amoroso and Almagià1924: 142), based on the familiar exchange equation MV = PQ. Amoroso argued that the equation of exchange is formally identical to the characteristic equation of gases, provided that the following correspondences hold: the quantity of money in circulation (M) corresponds to absolute temperature; the volume of exchange (Q) to the volume of the gas; the price level (P) to gas pressure; and the velocity of circulation of money (V) to the universal (Boltzmann) constant (Amoroso, Reference Amoroso and Almagià1924: 144). The price level, the gas pressure, is the critical variable that must be controlled by the other variables: money in circulation (by reduction) and the volume of transactions (by expansion).
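The correspondence Amoroso describes can be written out side by side. The following is only a sketch of the analogy as summarised above, not Amoroso’s own notation: the gas law is taken in the form valid for a fixed amount of gas, and the symbols p (pressure), \mathcal{V} (gas volume), k (constant) and T (absolute temperature) are introduced here for illustration.

```latex
\begin{align*}
  \underbrace{p}_{\text{pressure}}\,
  \underbrace{\mathcal{V}}_{\text{volume}}
    &= \underbrace{k}_{\text{constant}}\,
       \underbrace{T}_{\text{temperature}}
    && \text{(characteristic equation of gases)} \\[4pt]
  \underbrace{P}_{\text{price level}}\,
  \underbrace{Q}_{\text{volume of exchange}}
    &= \underbrace{V}_{\text{velocity of circulation}}\,
       \underbrace{M}_{\text{quantity of money}}
    && \text{(equation of exchange)} \\[4pt]
  P &= \frac{V\,M}{Q}
    && \text{(price level, with } V \text{ held constant)}
\end{align*}
```

Read in this way, with V held constant, the price level falls when M is reduced or when Q expands, just as gas pressure falls when temperature drops or volume increases.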

Amoroso confines himself to this analogy without further elaboration. It was the first time an analogy had been drawn between stocks of money and gaseous quantities.

Amoroso’s variable velocity of circulation of money was taken up in 1932 in an article written in Hungarian by Andrew Pikler and translated as ‘A short groundwork of a kinetic theory of money’. Pikler interpreted the equation of exchange by analogy with the kinetic theory of gases, hence based on a plurality of stocks of money, each characterised by its own velocity of circulation (see Petracca, Reference Petracca2019). The analogy with molecules, each with its own velocity, is obvious. As Enrico Petracca notes, the article was reviewed in Italy (Del Vecchio) and cited in France (Moret). Pikler subsequently (Reference Pikler1951) extended the analogy between the theory of money and kinetic theory, adding elements of quantum mechanics.

Interpreting the various monetary stocks – M1, M2, M3 and so on – discussed by Amoroso and Pikler as monetary budgets helps to clarify the kinetic interpretation of household budgets proposed by Johannes Lisman in 1949 in Econometrics, Statistics and Thermodynamics. In this case, gas budgets were associated with ‘closed’ postal cheques in the transfer system. Exchanges were equated with collisions, and postal deposits with collisions with the container walls. Lisman is interesting for two reasons. First, because he expressed total energy as the sum of the deposits used for cheques – that is, by equating the (postal) money stock with energy – whereas in physics energy is defined as a quadratic function of particle velocities, a feature that has no counterpart in the definition of money. Second, he shifted to a macro-analysis of the money stock, following Pikler (quoted explicitly), and applied Boltzmann’s H-theorem to show the link between the micro-analysis of postal cheques and the macro-analysis. Lisman ended up ruling out the analogy due to the impossibility of expressing postal cheque transactions in terms of probability distributions. While collisions between particles are reversible, there is no such reversibility in financial exchanges: we can reverse exchange transactions, but not at an unchanged price (Lisman, Reference Lisman1949: 88).

The analogy between thermodynamic and monetary systems was also explored by Meghnad Saha, an Indian nuclear physicist whose textbook Treatise on Heat (1931), co-authored with Biswambhar Nath Srivastava, suggested that students apply kinetic theory to the market in order to explain the asymmetric distribution of income and wealth (see Chakrabarti, Reference Chakrabarti2018). The money market seems to fit the analogy because traders can neither create nor destroy money, which is conserved, analogous to kinetic energy in a closed gas system.

In the 1940s and 1950s the attempt to import statistical mechanics (kinetic gas theory) into economics was complemented by quantum mechanics. In 1943, Harro Bernardelli, an Austrian economist and refugee in Rangoon (Myanmar), argued for the substantial stability of Pareto’s asymmetric income distribution in an article, ‘The stability of income distribution’, published in Sankhyā: The Indian Journal of Statistics. Bernardelli turned to quantum mechanics to study the process of transition to a stable equilibrium, borrowing the expansion theorem from Paul Dirac (Reference Dirac1935). By employing Hamiltonian functions – which represent the total energy of a system and reintroduce irreversibility – Bernardelli sought to understand how initial conditions shape outcomes. The remaining challenge is to justify the emergence of a skewed distribution given a fixed total amount of wealth or income, which in this framework corresponds to total energy. To this end, the system is decomposed into income groups, treated as eigenstates, whose interactions are analysed using concepts drawn from quantum theory.

The probability of moving between income groups depends on the educational, cultural and institutional factors discussed earlier, which tend to operate slowly and therefore do not lead to rapid changes in the overall income distribution. Bernardelli allowed for movement between groups, an exchange of energy, but in such a way that the composition of and within groups remains stable, so that, as he stated in 1943, each income group reproduces in miniature the original composition of the whole society (Bernardelli, Reference Bernardelli1943: 357). He concluded that the rate of change in income is proportional to the deviation of that income from the equilibrium represented by the final distribution, which is by definition stable. Energy, however, only changes form, and thus does not bring about real social change.

Some of Bernardelli’s insights were developed in depth in David Champernowne’s A Model of Income Distribution (Reference Champernowne1953), which uses an economic model to argue that the forces that determine the distribution of income in any community are so varied and complex, and interact and fluctuate so constantly, that any theoretical model must be either unrealistically simplified or hopelessly complicated (Reference Champernowne1953: 319). Hence his construction of the Pareto curve as an asymptotic curve at both the top and the bottom, a trend that leaves open the possibility of random changes. This conclusion led him to advise caution when predicting the effects of income policies (Champernowne, Reference Champernowne1953: 351), as Pareto had done many years before (Pareto, Reference Pareto, Montesano, Zanni, Bruni, Chipman and McLure1906: 204).

Maria Castellani, an Italian mathematician, student of Cantelli and assistant to Castelnuovo before emigrating to the United States in the 1940s, published an article in 1950 entitled ‘On multinomial distributions with limited freedom: A stochastic genesis of Pareto’s and Pearson’s curves’, in which she moved from kinetic to quantum approaches. She called the quantum unit an ‘energy interval’ corresponding to an income group.

Castellani (Reference Castellani1950) explained that each energy interval or income group was characterised by two independent forces, both functions of time. The two forces could vary, but the sum of the different energy intervals always yielded a constant, corresponding to a state of conservation of energy. Castellani did not describe the nature of the two forces that explain the Pareto or Pearson distributions. But individual talent, a single force in the case of Pareto, and talent plus institutions in the case of Pearson, perfectly represent the two independent forces. The distribution of energy between the two factors is uncertain and varies as a function of distance from the starting point, while the total remains constant.

As this brief and incomplete review shows, a number of elements recur in these models, all of which aim for a macroscopic or macroeconomic representation of phenomena. In particular, the principle of energy conservation recurs, accompanied by the search for a similar economic principle, the identification of which seems relevant because it is a constraint. Once the factor assumed as a constant is identified, the relevance of Pareto’s second equation is verified in order to interpret the distribution. The constant treated as a constraint does not account for the distributional inequality that emerges from interactions among multiple factors, a process sometimes summarised by the term ‘chance’ (Cantelli, Reference Cantelli1921; Vinci, Reference Vinci1921).

Majorana

The first phase of the application of statistical mechanics to economics and finance cannot be concluded without mentioning what an eminent physicist wrote on the encounter between the natural and social sciences. In the 1930s, Ettore Majorana wrote an essay entitled ‘Il valore delle leggi statistiche nella fisica e nelle scienze sociali’ (‘The value of statistical laws in physics and in the social sciences’), which was published in Scientia in 1942, after Majorana’s disappearance, and translated into English only in 2006 (see Bassani and the Council of the Italian Physical Society (CIPS), 2006).

Majorana joins with Kolmogorov in the probabilistic revolution by identifying the statistical field – and probability – as the area where the natural and social sciences could converge on common methods and approaches. However, he was addressing his fellow physicists rather than social scientists, emphasising the lack of objectivity in phenomena and asserting the statistical nature of elementary processes (Bassani and the CIPS, Reference Bassani2006: 250).

Majorana argued that the introduction of a new type of statistical law in physics – or, more generally, of probabilistic laws often embedded in statistical ones – would require physicists to reconsider the foundations of the analogy with social statistical laws established earlier. The statistical character of social laws, which derives from the way in which the conditions of phenomena are defined, allows an innumerable range of concrete possibilities and probabilities to be considered as the core of the analogy between physical events and social facts (Bassani and the CIPS, Reference Bassani2006: 258). Although Majorana’s position was forward-looking, it remained a minority view, grounded in the belief that the natural and social sciences could speak a common language, identified by him as the language of statistics, in which probability plays a fundamental role.

3 A New Discipline on the Way

Attempts to introduce elements of statistical mechanics into economic analysis in the first half of the twentieth century appeared to be rather isolated, the result of mostly individual interest, with almost no impact on the economic debate. The Pareto income distribution itself was set aside, except partially in Italy, where it continued to prompt interest and debate. Only in the second half of the century did the application of statistical mechanics to economics arouse the interest of economists and beyond, with the extension of the Pareto curve to areas other than income/wealth and the rediscovery of Bachelier’s ideas. Intriguingly, Pareto and Bachelier seem to meet in the application of statistical mechanics to economics.

Random Economies

Interest in ‘random economies with many interactive agents’, to quote an article by Hans Föllmer from 1974, became widespread in the mid 1960s, especially among economists. Föllmer, a mathematician, first introduced the Ising model, based on ferromagnetic spin pairs, allowing the social interactions of economic agents to be modelled in relation to their preferences in a context of interdependence (Föllmer, Reference Föllmer1974). He showed that in the presence of even short-ranging interactions, microeconomic characteristics can no longer determine the macroeconomic phase (see Chen & Li, Reference Chen and Li2012). Hence, interest turned to agents whose preferences and endowments are random, and the probabilities governing this randomness are determined by the environment, be it a market for real goods or financial products. Returning to Pareto’s skewed distribution, we are in the middle of the curve, not on the heavy tails, where agents interact, determine and actually swap positions. In this part of the distribution, Brownian motion can be applied.

The approach is well represented in a collection of essays published in 1964 by Paul Cootner, The Random Character of Stock Market Prices, which included an English version of Bachelier’s thesis under the title Theory of Speculation. Following Bachelier, the aim was to analyse the pricing of financial assets or, better still, to find a theory enabling predictions to be made about the return on investment in shares.

Despite mathematical improvements, Bachelier’s view that a rational interpretation of option and stock prices can be constructed from random behaviour was maintained, once some limitations of his analysis had been eliminated; the most important of these, from an economic point of view, was the possibility of negative stock prices.

The assumptions introduced correspond to the standard economic interpretation of Brownian motion: prices are memoryless and mutually independent, and both their mean and their variance are finite. Extreme events are therefore excluded. A ‘random walk’ is assumed, which explains the movement of stock prices by their similarity to a series of random factors. The principle of stochastic independence and the absence of direct interaction between particles/agents complete a theoretical construction aimed at arguing the independence of an option price from other prices, a prerequisite for the set of prices to determine a normal distribution. This assumption differs from the interaction-based mechanism at the origin of the skewed distribution mentioned earlier.

For the economists who contributed to the book, an important theoretical corollary was undoubtedly the non-relationship of financial asset prices to past prices. The idea is of a market in which past prices play no role. This aspect, determined by analogy with Brownian motion, leads to an almost atemporal representation of the economic process, which is obviously an important step because atemporality does not arise from the difficulty of constructing a dynamic theory but, rather, is assumed as a fundamental aspect of price theory. Later, it became clear that the assumption of independent prices conflicted with expectations of price volatilities based on past volatilities.

In terms of distribution, the comparison was between the normal distributions with equally distributed left and right errors resulting from the application of Brownian motion to stock prices, and the Paretian distribution with heavy-tailed peaks. The question that remained open, then, was not the existence of random economies but the formation of prices in random economies: in a normal distribution, the central limit theorem allows prices to be independent after all. This is not so in a skewed distribution with heavy, potentially asymmetric tails, accompanied by peaks and extremes.

Mandelbrot and the Paretian Universe

It is this market, with its unique peaks and events, that Mandelbrot examines with an analytically rich and complex theory of incomes, prices and fractals, only hinted at here. What is interesting for our purposes is Mandelbrot’s methodological approach: he tries to show that the analogy with statistical physics must not be abandoned if the weaknesses that plague existing theories are to be avoided. The way to do this, he argues, is not to seek the economic equivalent of statistical thermodynamics but to generalise the statistical methods of thermodynamics to the economic concept of income (Mandelbrot, Reference Mandelbrot1960: 85). However, to better understand Mandelbrot’s view of statistical physics, it is worth recalling that he considered Pareto’s statistical law, which did not originate in physics, as central to both economics and physics (Mandelbrot, Reference Mandelbrot1963a: 421). Mandelbrot suggested ‘imitating’ the principles of physics to interpret economic phenomena (Reference Mandelbrot1963a: 426). Hence, the economic variables did not need to be compressed into a framework of statistical thermodynamics; rather, the statistical methods of thermodynamics needed to be generalised to cover economic concepts such as income.

Mandelbrot’s methodological position had an empirical origin. The data show that the Pareto income curve declines much more slowly than a normal physical law, with the consequence that traditional interpretations based on Bernoulli or Weber–Fechner can be applied to low and middle incomes but not to the heavy tail (Mandelbrot, Reference Mandelbrot1960). Focusing on this tail, Mandelbrot explains even the extreme phenomena that can occur as a consequence of random interaction, starting from Pareto reinterpreted through Lévy.

In Mandelbrot’s thinking, the two fields at the origin of econophysics – income distribution and Brownian motion in finance – converge into a single field of research that could be called Paretian process analysis. Process, because the Paretian tail characterises not only incomes and prices but also the distribution of firms by size and cities by size. And because the process is interpreted in its various domains on the basis of microfoundations, Mandelbrot refers to this method as ‘invariant laws’ (Reference Mandelbrot1963a: 423), a term he borrowed from physics and adapted for use in economics. Invariance refers mainly to the aggregation of variables such as income or firms of different sizes, which Mandelbrot regards as possible even where the variables to be aggregated are non-Gaussian. However, in the presence of Gaussian distributions, random variables are taken into account and not excluded.

Mandelbrot points out that the family of distributions identified by Paul Lévy in the 1920s (see Lévy, Reference Lévy1948–1965), which remain stable under the addition of random variables, includes non-Gaussian distributions (Mandelbrot, Reference Mandelbrot1960: 86). These confirm the ‘weak Pareto law’ (see the first Pareto equation earlier), 0 < α < 2, characterised by a heavy tail, demonstrating that extreme events, however rare, follow a certain law and are significant. In particular, Mandelbrot defined ‘Pareto–Lévy distributions’ as ‘positive’, stable distributions with 1 < α < 2 (Reference Mandelbrot1960: 87). When α ≥ 2, the curve approaches a Gaussian distribution (Mandelbrot, Reference Mandelbrot1963c). Whereas the stability of the Gaussian distribution rests on finite values of the first two moments, mean and variance, one consequence of 1 < α < 2 is that the second moment becomes infinite, while the first remains finite. The focus is on the length of the tail, rather than the extreme skewness of the curve, because this is the most significant aspect of Pareto’s law, or the Paretian process, to use his words (Mandelbrot, Reference Mandelbrot1963a: 422). According to Mandelbrot, an increase in the number of random variables leads to an extension of the heavy tail. The idea is to start the analysis with the lowest incomes and then gradually add the highest incomes distributed in the heavy tail.
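The statement about the moments for 1 < α < 2 can be checked directly. The derivation below is only a sketch: it assumes the tail form P(X > x) = (x/x_0)^{-α} for x ≥ x_0 > 0 and treats this asymptotic law as exact. The scale x_0 is notation introduced here for illustration and is not taken from Mandelbrot.

```latex
\begin{align*}
f(x) &= \alpha\, x_0^{\alpha}\, x^{-\alpha-1}, \qquad x \ge x_0, \\
E[X] &= \int_{x_0}^{\infty} \alpha\, x_0^{\alpha}\, x^{-\alpha}\, dx
      = \frac{\alpha\, x_0}{\alpha - 1} < \infty
      \quad \text{for } \alpha > 1, \\
E[X^2] &= \int_{x_0}^{\infty} \alpha\, x_0^{\alpha}\, x^{1-\alpha}\, dx
      = \infty
      \quad \text{for } \alpha \le 2 .
\end{align*}
```

Under these assumptions the mean exists while the variance diverges exactly in the range 1 < α < 2 discussed in the text.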

What led Mandelbrot to comment on the Brownian motion introduced by Bachelier was again an empirical finding. Prices tend to have peaks that can scarcely be expressed in the normal distribution assumed by Brownian motion. The tails of the distributions of price changes are so extraordinarily long that the variance of the samples is typically erratic (Mandelbrot, Reference Mandelbrot1963b: 394–395). The market emerging from Mandelbrot’s analysis has an instability not encountered in Cootner or in other economists. Consequently, the Brownian motion of Bachelier becomes a special case of the application of Pareto–Lévy processes. Mandelbrot (Reference Mandelbrot1960) explicitly referred to the Pareto distribution as an alternative to the Gaussian distribution of Brownian motion, which, in the version of Pareto himself, with α parameter below 2, has variance that can be infinite.
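The erratic behaviour of the sample variance can be illustrated numerically. The following Python sketch is not drawn from Mandelbrot's work. It assumes an exact Pareto law with α = 1.5 (an illustrative value below 2) and compares the sample variance at growing sample sizes with that of a Gaussian sample. The function names `draw` and `running_variance` are hypothetical.

```python
import random
import statistics


def draw(kind, n, alpha=1.5, seed=0):
    """Draw n samples: 'pareto' uses tail index alpha, anything else is standard normal."""
    rng = random.Random(seed)
    if kind == "pareto":
        # paretovariate returns values >= 1 with P(X > x) = x ** -alpha
        return [rng.paretovariate(alpha) for _ in range(n)]
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


def running_variance(samples, checkpoints):
    """Sample variance of the first n observations for each n in checkpoints."""
    return [statistics.variance(samples[:n]) for n in checkpoints]


if __name__ == "__main__":
    checkpoints = [100, 1_000, 10_000, 100_000]
    for kind in ("gauss", "pareto"):
        samples = draw(kind, checkpoints[-1])
        variances = running_variance(samples, checkpoints)
        print(kind, [round(v, 2) for v in variances])
```

In the Gaussian case the sample variance should settle near 1 as the sample grows, whereas in the Paretian case it is expected to jump whenever a new extreme observation arrives, without converging.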

With Mandelbrot, the thinking of Pareto and Bachelier comes together in the application of Lévy’s processes, raising the problem of infinite variance that is a challenge for the analysis of financial markets. After some repetition, the small random movements of variables (Brownian motion) predict a martingale environment, that is, expectations reflect current values. However, in the presence of heavy tails, where the frequencies of neighbouring values become significant, Lévy flights can develop, that is, large deviations from the mean that are essentially unpredictable and whose frequency (periodic crises) can be loosely assumed.

Despite resistance from some economists, heavy tails entered the toolkit of the discipline, until new empirical research appeared to demonstrate that the distributions of returns in financial markets tend to be Gaussian on time scales of over one month (Sornette, Reference Sornette2014). In the final decades of the twentieth century, research was moving back towards normal distributions. Nonetheless, with regard to the introduction of statistical mechanics into economics, Mandelbrot showed that the skewed distribution resulting from the interaction of agents (the market) can lead to peaks and crises, that is, extreme events.

Mandelbrot built on Pareto’s insights not only by introducing the scaling properties of physical, economic and social phenomena (such as coastlines, firms and cities) but above all by interpreting the relationships among Gaussian, log-normal and power-law distributions. In so doing, he highlighted both their potential and their limitations, extending their relevance beyond their traditional uses in finance, economics and physics (see Mirowski, Reference Mirowski1990). To fully understand their application, randomness is considered not to be binary – either non-random or random – but gradual – mild, slow and wild. Markets should be studied from the heavy tails.

Steindl’s Random Processes

In the early 1960s, the Austrian economist Josef Steindl was similarly interested in tails, albeit not as heavy as in Mandelbrot. Steindl’s original contribution, inspired by Pareto, consisted in the study of firm size in relation to growth.

He classified companies in different sectors and countries according to their business and number of employees. The result is a skewed distribution with a slope coefficient (the Pareto α) between 1.5 and 2. According to Steindl, the long tail of the Pareto distribution represents companies – defined by assets and number of employees, not by number of manufacturing plants – with sizes that do not cluster around an average value. A log-normal distribution, such as Robert Gibrat’s (Reference Gibrat1931), would fit well in the middle, excluding the extremes. However, while allowing for heavy tails with extreme values, Steindl makes it clear that the variance of the random variables under consideration is not infinite. Steindl (Reference Steindl1965: 19) seems to be telling us that economists allow for random but finite variables. Beyond the technical aspects, what is significant here is the meaning to be attached to the extreme value.

Steindl’s idea can be seen as an attempt to explain the random process leading to Pareto’s asymmetric income distribution from the size of profits (part of the income distribution) via the size of firms. Although Steindl considers the Pareto distribution to be the most realistic for the representation of the transformations (birth/death) characterising firms, he does not neglect the growth processes represented by log-normal distributions, such as those of Robert Gibrat and Jacobus Kapteyn. He refers to the law of proportional effects, originating with Gibrat (Reference Gibrat1931), and, more interestingly, to the random walk (and Brownian movement) as an analogy to firms, which therefore move towards a log-normal and, in particular, stable distribution (Steindl, Reference Steindl1965: 31). The process has a time dimension, which must not be too long, because the passage of time increases the variance of the Brownian motion.
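The link between proportional growth, the random walk and the log-normal distribution can be made concrete with a small simulation. The Python sketch below is an illustration, not Steindl's or Gibrat's own procedure. It assumes that every firm starts at size 1 and receives independent growth shocks drawn uniformly from ±10 per cent; the shock distribution, the parameters and the function names are all hypothetical choices.

```python
import math
import random


def simulate_sizes(n_firms, n_periods, max_shock=0.1, seed=0):
    """Grow each firm from size 1 by independent proportional shocks."""
    rng = random.Random(seed)
    sizes = []
    for _ in range(n_firms):
        size = 1.0
        for _ in range(n_periods):
            # Growth rate is independent of current size (proportional effect)
            size *= 1.0 + rng.uniform(-max_shock, max_shock)
        sizes.append(size)
    return sizes


def variance(values):
    """Population variance."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def skewness(values):
    """Third standardised moment."""
    mean = sum(values) / len(values)
    sd = math.sqrt(variance(values))
    return sum(((v - mean) / sd) ** 3 for v in values) / len(values)


if __name__ == "__main__":
    for periods in (10, 40, 160):
        sizes = simulate_sizes(2_000, periods)
        logs = [math.log(s) for s in sizes]
        # periods, variance of log size, skewness of size, skewness of log size
        print(periods, round(variance(logs), 3),
              round(skewness(sizes), 2), round(skewness(logs), 2))
```

Because the logarithm of size is a sum of independent shocks, it behaves like a random walk: the logarithm should look roughly symmetric while size itself is skewed, and the variance of log size should grow with the number of periods, which is the time dependence noted in the text.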

Steindl reinterprets Pareto’s α as the outcome of opposing growth rates: the rate of birth and death of firms, the rate of appearance and disappearance of wealth holders. The equilibrium between opposing forces determines the Pareto coefficient, as Pareto himself had noted in the Treatise on General Sociology (Reference Pareto and Pareto1916: §2074), where he states that the oscillating states of individuals can generate a general equilibrium.

The Law of Chaos

Economics and statistical mechanics meet again in Laws of Chaos: A Probability Approach to Political Economy, published by Emmanuel Farjoun and Moshé Machover in 1983. Statistical mechanics is taken as a paradigm providing a probabilistic reinterpretation of the Marxian view of economics, starting with the definition of profit as a random variable. The application to production of the relationship between the microscopic view of particle behaviour and the macroscopic phenomenon of the set of particles makes this text one of the first to adopt the concepts of statistical mechanics to analyse production economics.

Farjoun and Machover (Reference Farjoun and Machover1983) base their view of a probabilistic political economy on an analogy with the kinetic theory of gases. From Maxwell–Boltzmann they take the idea that a system can reach a state of equilibrium without individual equilibria. The exchange of kinetic energy between particles is such that there is a re-equilibrium in the distribution of kinetic energy, which corresponds to an equilibrium in the velocity of the particles themselves. It can therefore be assumed that the system as a whole is stable despite micro-instability.
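The idea of macroscopic stability without individual equilibria can be illustrated with a toy random-exchange model. The Python sketch below is not Farjoun and Machover's model. It assumes a generic setting in which randomly chosen pairs of particles pool a conserved quantity (called energy here) and split it at random; all names and parameters are hypothetical.

```python
import random


def exchange(energies, n_steps, seed=0):
    """Randomly reshuffle a conserved quantity between randomly chosen pairs."""
    rng = random.Random(seed)
    e = list(energies)
    n = len(e)
    for _ in range(n_steps):
        i, j = rng.randrange(n), rng.randrange(n)
        if i == j:
            continue
        pooled = e[i] + e[j]
        e[i] = rng.random() * pooled  # random split of the pair's total
        e[j] = pooled - e[i]          # the pair's total is conserved
    return e


def share_below_mean(values):
    """Fraction of particles holding less than the average amount."""
    mean = sum(values) / len(values)
    return sum(v < mean for v in values) / len(values)


if __name__ == "__main__":
    start = [1.0] * 1_000
    for steps in (0, 50_000, 100_000, 200_000):
        end = exchange(start, steps)
        # steps, total energy, share of particles below the mean
        print(steps, round(sum(end), 6), round(share_below_mean(end), 3))
```

Individual holdings never stop changing, yet the total is conserved and an aggregate statistic such as the share of particles below the mean should stay roughly constant once the system has mixed. For an exponential distribution that share is 1 − 1/e, about 0.63.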

Karl Marx’s and David Ricardo’s labour theory of value is reinterpreted with the tools of statistical mechanics, namely, aggregates of agents, workers and capitalists. Farjoun and Machover (Reference Farjoun and Machover1983) focus on the formation of prices, expressed in relation to the hours of labour demanded. Prices are expressed as a Gaussian distribution characterised by a mean and a standard deviation from the mean.

Attention then shifts from prices to rates of profit, bearing in mind that, according to the kinetic analogy, there is no single rate of profit but a distribution of rates. From an economic point of view, it is interesting to analyse the spread of the profit rate, which Farjoun and Machover consider to be endogenous to the capitalist system. Technological innovations, organisational innovations, material markets and so on come to the fore. The result is a representation of a system that guarantees stable profits over time, given the complexity and randomness of micro-canonical relations.

Farjoun and Machover’s work is important in this history not simply because it extended the analogy between economics and statistical mechanics but also because of the application to production. Although the term econophysics obviously does not appear in their text, the book is nevertheless an example of classical econophysics, where analytical tools borrowed from physics are used to analyse economic variables. Among these, labour, conventionally regarded as the source of value, occupies a prominent position. Economics, while concerned with the study of social processes and structures, is actually concerned with how social labour is organised and performed and, ultimately, how the product of labour is distributed and put to different uses (Reference Farjoun and Machover1983: 85). Classical econophysics is developed further in Cockshott et al. (Reference Cockshott, Cottrell, Michaelson, Wright and Yakovenko2009).

This does not reduce the importance of other variables such as prices, which, according to the authors, are linked to the labour content of the goods produced. The problem lies in the method used to derive prices, which assumes a uniform rate of profit when the rate is in fact probabilistic. The main thrust of the argument is to replace variables expressed by uniform values with probabilistic distributions, and this is not merely a methodological step.

The authors question the Marxian-inspired tendency of labour content to decline in the long run, bringing to the fore wages and prices, expressed here as random variables. What appears through the lens of probability and statistical mechanics is an interesting representation of the (capitalist) market. Prices are in equilibrium, but constantly changing as the quantities traded change. Similarly, the price of labour, the main cost of production, can change, but with a different distribution from that of product prices. The result is changing profits, which can also be interpreted in terms of a probability distribution. It should be noted, however, that this distribution is not a power or a Pareto law; rather, it is Gaussian.

Santa Fe Complexity

Econophysics is often associated not with a term that identifies a specific field of research, as is the case with income distribution or financial volatility, but with an approach to, or projection of, economic and financial phenomena: namely, ‘complexity’. In this regard, the Santa Fe Institute is significant as the likely birthplace of complexity as a methodological approach. Operational since 1984, the Santa Fe Institute considers itself a research network on complex systems, whose results and research had a major influence on the birth of econophysics and continue to drive its development. Perhaps nowhere else have economists and physicists worked together on what can be called, abstractly, the ‘complexity approach’ (see Zurek, Reference Zurek1989; Casdagli & Eubank, Reference Casdagli and Eubank1990; Weigend & Gershenfeld, Reference Weigend and Gershenfeld1994). It includes the Santa Fe artificial market and the minority game, models useful for understanding the behaviour of agents not guided by perfect rationality but acting inductively, adapting their behaviour to past experience.

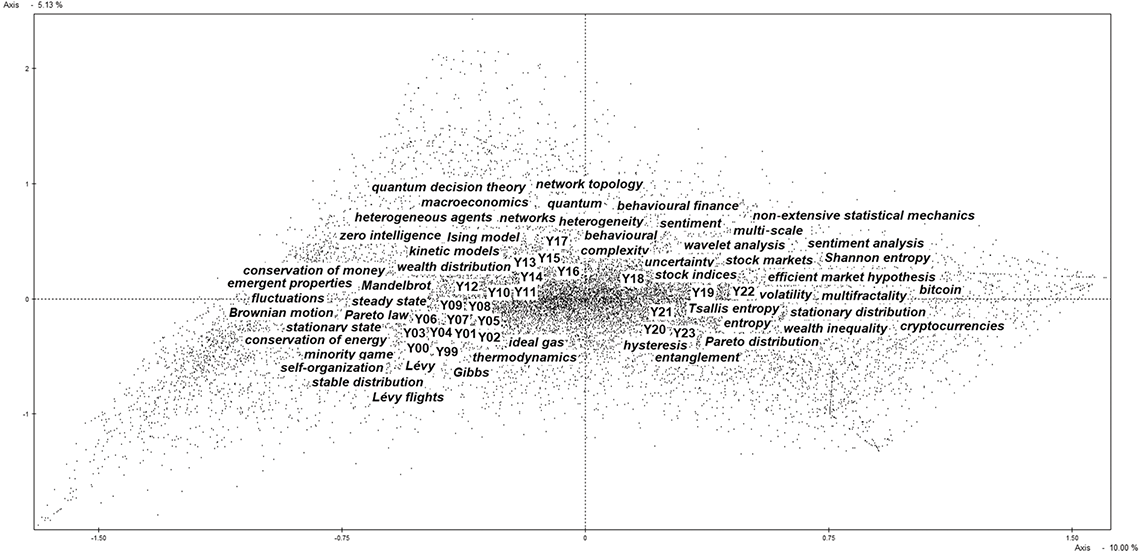

The institute studies the behaviour of agents within a statistical mechanics framework, but shifts the focus from how agents act to why they do so. As we shall see, its analysis parallels and often overlaps with the focus on the macroscopic level. Indeed, although econophysics is primarily concerned with the macro level, the complexity of the relationship between macro and micro levels is crucial. Market behaviour is the result of choices made by agents, so assumptions such as ‘zero intelligence’, ‘bounded rationality’ and ‘perfect rationality’, considered in a complexity-based approach, are essential.