35.1 Introduction

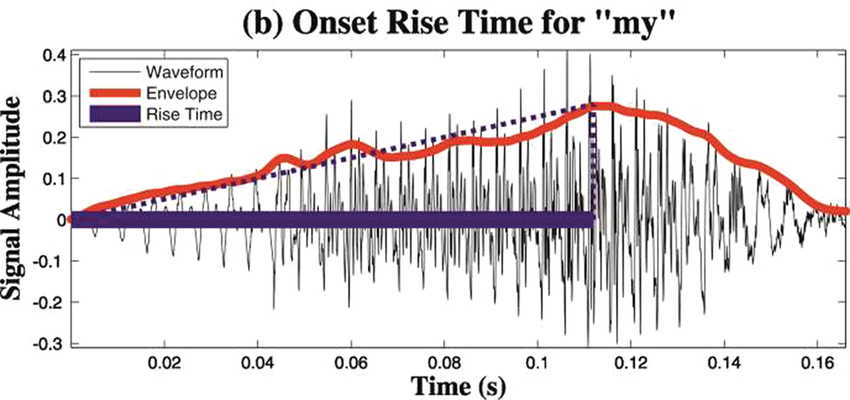

The importance of rhythm for language processing is a core theme throughout this volume. Both the acoustic structure of the speech signal and the physiology of speech perception and production are intimately tied to rhythm, and so the proposal that rhythm is also at the centre of language acquisition is perhaps unsurprising. Rhythm can be understood as a kind of hidden glue that underpins the acoustic structure of human languages and the representation of sensory structure by human brains. Indeed, almost four decades ago, Mehler et al. (Reference Mehler, Jusczyk and Lambertz1988) proposed that sensitivity to rhythm was a universal precursor to language acquisition by young infants. Here, I adapt temporal sampling (TS) theory (Goswami, Reference Goswami2011) to provide a rhythm-based framework for explaining language acquisition by infants (see also Goswami, Reference Goswami2019, Reference Goswami2020, Reference Goswami2022a). TS theory was originally proposed as a causal framework for integrating the acoustic and motor difficulties with rhythm found in children with dyslexia with an oscillatory model of sensory encoding. The acoustic rhythmic difficulties in dyslexia were indexed primarily by sensory difficulties in discriminating changes in speech energy called amplitude rise times (ARTs, depicted in Figure 35.1), and by perceptual difficulties in discriminating syllable stress patterns and musical rhythm patterns, along with problems in tapping in time with a regular metronome beat (e.g., Thomson and Goswami, Reference Thomson and Goswami2008; Huss et al., Reference Huss, Verney, Fosker, Mead and Goswami2011; Goswami et al., Reference Goswami and Leong2013). The rhythmic neural encoding aspects of TS theory were focused on low-frequency oscillations in the delta (0.5–4 Hz) and theta (4–8 Hz) neurophysiological bands, thought to play a core role in rhythmic entrainment (Ghitza and Greenberg, Reference Ghitza and Greenberg2009; see Chapter 3). These features were integrated into a sensory/neural explanatory system for understanding the phonological difficulties that characterise children with dyslexia, and that also characterise many children with what was previously termed specific language impairment (SLI – now termed developmental language disorder, DLD). Since 2011, TS theory has been developed further in the light of experimental data. Following an outline of TS theory drawing on sensory/neural data from adults and children, two longitudinal studies testing the theory with infant participants will be described in detail.

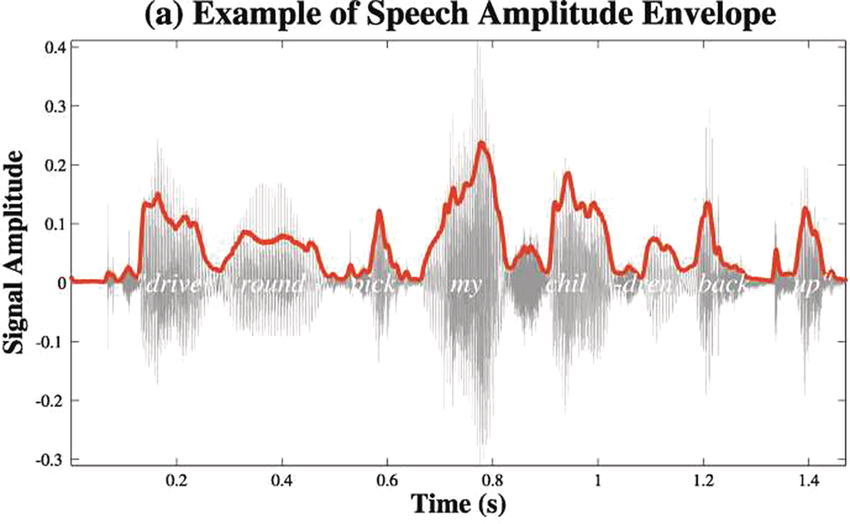

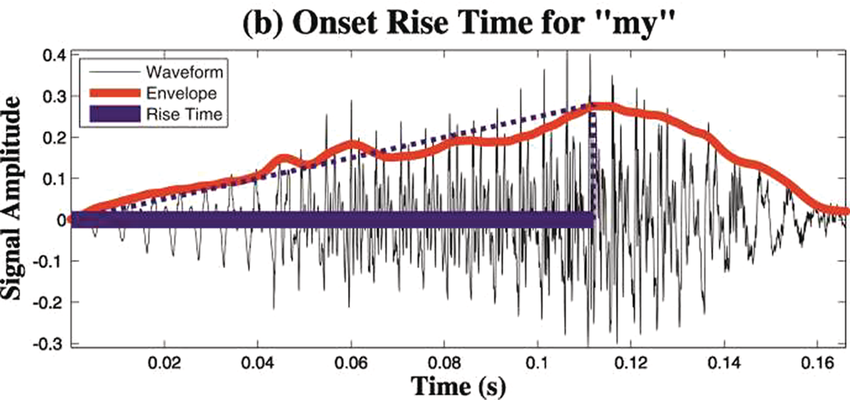

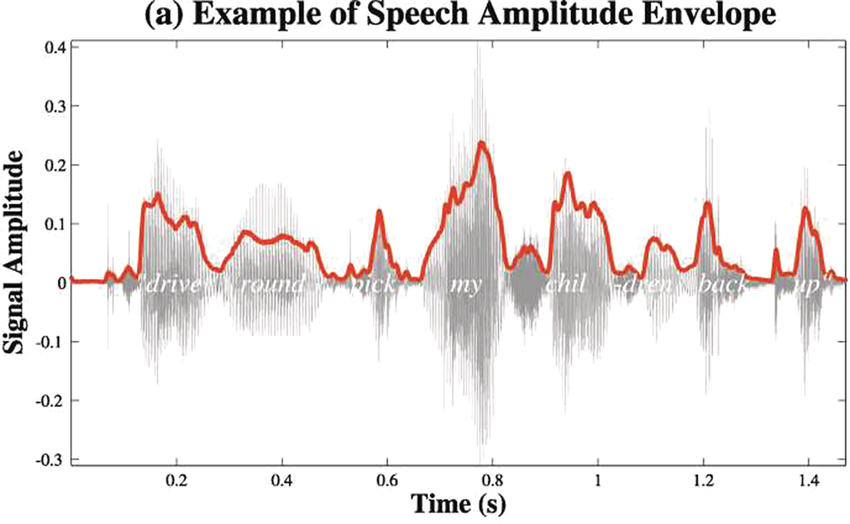

A schematic depiction of ARTs.

Panel 1A depicts the amplitude envelope for the sentence ‘drive round, pick my children back up’, and Panel 1B shows the rise time for the syllable ‘my’, taken from the longer sentence.

Figure 35.1(a) Long description

The horizontal axis represents time ranging from 0.2 to 1.4 seconds. The vertical axis represents signal amplitude, which ranges from minus 0.3 through 0.4. The waveform of speech reads, drive round, pick my children back up. A solid line outlines the waveform. The values are estimated.

Figure 35.1(b) Long description

The horizontal axis represents time ranging from 0.02 through 0.16 seconds. The vertical axis represents signal amplitude, which ranges from minus 0.3 through 0.4. This graph displays a waveform which originates at (0.00, 0) and terminates at (0.16, 0). A solid line for the envelope overlaps the waveform and originates at (0.00, 0) and terminates at (0.16, 0). A dotted line indicates the rising amplitude of the onset. A vertical horizontal line also overlaps the waveform to indicate computation of the rise time, originates at (0.00, 0) and terminates at (0.7, 0). The values are estimated.

35.2 Temporal Sampling Theory

Temporal ‘sampling’ refers to the fact that the brain depends on networks of neurons that oscillate between excitation and inhibition to encode sensory information (‘brain waves’). Temporal coding is an important aspect of information coding in the brain (Buzsáki and Draghun, Reference Buzsáki and Draguhn2004), and for encoding the complex speech signal, the synchronous activity of oscillating networks of neurons at different timescales (frequency bands, primarily delta, 0.5–4 Hz, theta, 4–8 Hz, beta, 15–25 Hz, and gamma, 30–80 Hz) is thought to be critical (Giraud and Poeppel, Reference Giraud and Poeppel2012; see also Obleser et al., Reference Obleser, Herrmann and Henry2012). Neurophysiology shows that these frequency bands are ubiquitous throughout the brain and encode many aspects of sensory information (Buzsáki, Reference Buzsáki2006). The acoustic speech signal can be modelled as the summation of a number of frequency bands fluctuating in intensity (amplitude) over time (the amplitude envelope, AE; Drullman, Reference Drullman, Greenberg and Ainsworth2006). The auditory system codes amplitude modulations (AMs) in natural sounds across different frequency channels and at different timescales (Joris et al., Reference Joris, Schreiner and Rees2004; Turner, Reference Turner2010), and there is systematic AM structure in the AE of all natural sounds, not just speech (Turner, Reference Turner2010; see Daikoku and Goswami, Reference Daikoku and Goswami2022, for AM structure of music, birdsong, wind, and rain). The AE of both child-directed speech (CDS) such as nursery rhymes and infant-directed speech (IDS) or BabyTalk can be analysed in terms of its constituent temporal modulation frequencies (Leong and Goswami, Reference Leong and Goswami2015; Leong et al., Reference Leong, Kalashnikova, Burnham and Goswami2017). This computational modelling shows that the dominant modulation frequency bands for these highly rhythmic speech registers cluster into three AM bands, with centre frequencies around ~2 Hz, ~5 Hz, and ~20 Hz, thereby approximately matching the electrophsyiological bands of delta, theta, and beta/low gamma. These insights concerning the AM structure of CDS and IDS fit well with adult multi-time resolution speech processing models (MTRMs) (see Poeppel, Reference Poeppel2003; Poeppel et al., Reference Poeppel, Idsardi and van Wassenhove2008; also see Chapters 3 and 5), models that played a core role in the development of TS theory (see Goswami, Reference Goswami2011).

35.2.1 TS Theory: Sensory Data

Adult MTRMs proposed that the different temporal integration windows characterised by the different oscillatory networks yielded packets of information at different linguistic grain sizes that were matched to stored representations of words in the mental lexicon (Poeppel et al., Reference Poeppel, Idsardi and van Wassenhove2008). Adult MTRMs focused on the linguistic units of the syllable and the phoneme, thought to be captured by temporal integration windows in the theta and gamma bands, respectively. The infant and child speech modelling studies discussed in Section 35.2 showed that the stressed syllable is also an important linguistic grain size, and is captured by temporal integration windows in the delta band (Leong and Goswami, Reference Leong and Goswami2015). Leong and Goswami’s speech modelling revealed that if one cycle of AM at each temporal rate (delta (~2 Hz), theta (~5 Hz), and beta/low gamma (~20 Hz)) in English nursery rhymes is assumed to match one level of linguistic structure (stressed syllable, syllable, onset-rime), then the AM structure in the AE of CDS provides sufficient sensory information for the brain to extract phonological units of different sizes with over 80–90% efficiency (the spectral-amplitude modulation hierarchy model, S-AMPH, Leong and Goswami, Reference Leong and Goswami2015). This suggests that applying an MTRM approach to the simplified rhythmic genres of IDS and CDS, which underpin language acquisition across cultures, could enable ‘acoustic-emergent’ phonology. When brain rhythms and speech rhythms at these different rates (delta, theta, beta/low gamma) are in temporal alignment, then an emergent phonological system can be extracted from the speech signal by automatic acoustic statistical learning processes (see Goswami, Reference Goswami2022a, for detail; statistical learning is required as speech–brain alignment is only probabilistically accurate in yielding phonological units). This automatic learning can, in principle, enable perceptual organisation of the acoustic speech stream into syllable stress patterns (prosodic feet), syllables, and onset-rime units (m-ash, cr-ash, spl-ash). Further, automatic learning of higher-level acoustic prosodic hierarchies that are also present in the sensory signal could enable the perceptual organisation of acoustic information relevant to extracting syntax and grammar, such as prosodic and intonational phrasing (the ‘prosodic phrasing’ hypothesis; see Cumming et al., Reference Cumming, Wilson and Goswami2015). Perceptual detection of prosodic hierarchies also depends on accurate ART perception (Cumming et al., Reference Cumming, Wilson and Goswami2015).

In TS theory, rhythm patterns are hypothesised to be core to these organisational processes. The S-AMPH computational modelling work based on nursery rhymes further revealed that each AM band is in temporal synchronicity with its adjacent band, via phase relations. Phase relations are temporal relations between different cycles of rhythmic timing, such as slower versus faster bands of AM. In IDS and CDS, these phase relations are governed by the delta AM band (Leong et al., Reference Leong, Stone, Turner and Goswami2014). In particular, phase relations between the delta (~2 Hz) and theta (~5 Hz) AM bands in nursery rhymes are critical to perceiving their ‘beat’ structure or metrical structure (e.g., trochaic versus iambic; Leong et al., Reference Leong, Stone, Turner and Goswami2014, Leong and Goswami, Reference Leong and Goswami2015). Delta–theta AM phase alignment is also core to perceiving rhythm in music (Daikoku and Goswami, Reference Daikoku and Goswami2022). Indeed, the finding that the delta AM band sits at the top of the phase hierarchy in CDS and IDS is thought-provoking, as its centre rate of ~2 Hz matches the dominant beat rate of music (120 bpm) (Fraisse, Reference Fraisse and Deutsch1982; see also Chapters 26 and 27). Temporal analyses of the lullabies sung by mothers to their infants across cultures reveals a cross-cultural convergence on this beat rate of ~2 Hz (Trehub and Trainor, Reference Trehub, Trainor, Rovee-Collier, Lipsitt and Hayne1998). Accordingly, AM-based modelling of the speech signal suggests that cultural practices such as BabyTalk and lullabies may facilitate the automatic extraction of phonology and possibly syntax via the AM phase hierarchy, by statistical sensory learning beginning in the cradle. Infant auditory statistical learning is already known to be highly efficient (e.g., Saffran, Reference Saffran2001), and while IDS and CDS are simplified speech registers, they nevertheless encompass more rhythmic categories than trochaic versus iambic, for example anapest rhythms.

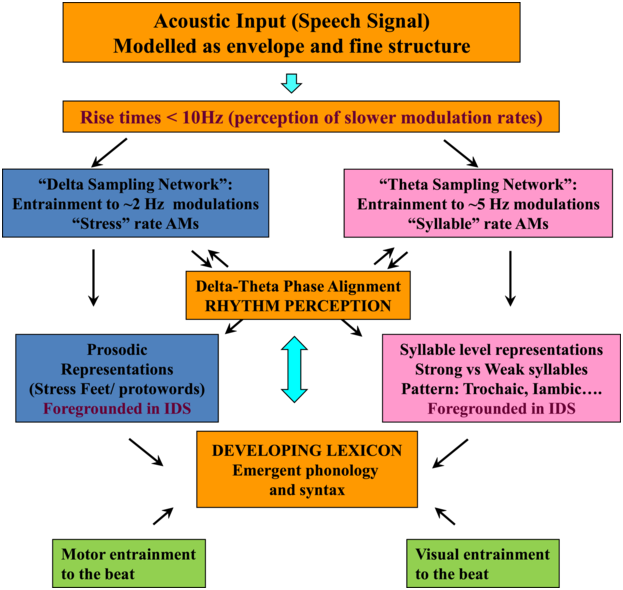

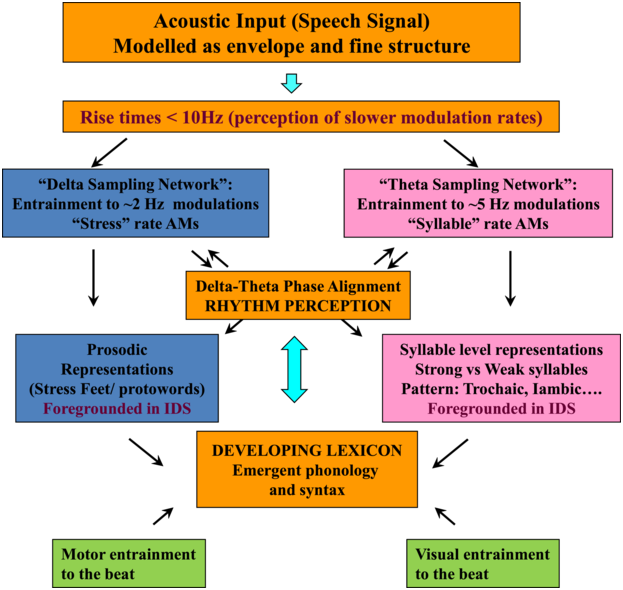

TS theory proposes that this automatic statistical learning depends intimately on ART discrimination, as different ARTs are associated with different temporal modulation rates. TS theory (Goswami, Reference Goswami2011) was in part developed because psychoacoustic studies had repeatedly demonstrated impaired ART discrimination by children with dyslexia, across languages (to date English, French, Finnish, Dutch, Spanish, Chinese, and Hungarian, summarised in Goswami, Reference Goswami2015). Difficulties in discriminating ARTs (which index the rate of change of AMs) could be expected to cause impairments in distinguishing the different modulation frequency ranges in speech, which would result in inefficient phase-locking to these frequency ranges by neuronal oscillatory networks (Goswami, Reference Goswami2011). Adult neuroscience studies were able to demonstrate that ARTs (called ‘speech edges’ in that literature) played a key mechanistic role in the alignment of brain rhythms and speech rhythms (Gross et al., Reference Gross, Hoogenboom and Thut2013; Doelling et al., Reference Doelling, Arnal, Ghitza and Poeppel2014; Chapter 8). For example, ARTs in the theta band provide sensory landmarks that automatically trigger brain rhythms and speech rhythms into temporal alignment, via phase-resetting ongoing neural activity (Doelling et al., Reference Doelling, Arnal, Ghitza and Poeppel2014). Further, the oscillatory networks that are responsive to speech inputs are also hierarchically organised, with delta at the top (Gross et al., Reference Gross, Hoogenboom and Thut2013). Accordingly, prior research with children and adults shows that there is a mechanistic neural hierarchy of oscillations, an AM phase hierarchy in IDS and CDS, and a linguistic hierarchy of phonological units that all match in terms of their intrinsic relational structure. This provides a sensory/neural framework for language acquisition via automatic statistical learning of this relational AM structure, which is depicted in simplified form in Figure 35.2.

TS theory and infant language acquisition.

The schematic depiction of TS theory emphasises the core role of ART and rhythm processing via automatic neural entrainment to AMs at ~2 Hz (delta) and ~5 Hz (theta) rates, sensory-neural processes that support the developing lexicon. Although only briefly discussed in this chapter, individual differences in rhythmic entrainment to visual speech and motor entrainment may also have an impact on the developing lexicon.

Figure 35.2 Long description

The flowchart starts with the acoustic input, which is followed by delta sampling network and theta sampling network, a box for delta theta phase alignment helping to determine prosodic representations or syllable level representations and a box indicating the developing lexicon. Motor entertainment to the beat and visual entertainment to the beat also contribute to developing the lexicon.

35.2.2 TS Theory: Neural Data

Children with dyslexia have phonological impairments at many levels of the linguistic hierarchy, showing difficulties in identifying and manipulating phonological units such as stressed syllables, syllables, onset-rimes, and phonemes in oral tasks (Goswami, Reference Goswami and Skeide2022b, for a recent review). If automatic statistical learning of AM phase relations supports acoustic-emergent phonology, and given the well-established sensory discrimination difficulties with ARTs found in children with dyslexia, it is logical to expect atypical oscillatory responses to natural speech in these children. The first studies investigating the neural aspects of TS theory in children predicted atypical responding in both the delta and theta bands. These early studies used a rhythmic speech paradigm, in which a ‘talking head’ repeated the syllable ‘ba’ at 2 Hz while an electroencephalogram (EEG) was recorded (Power et al., Reference Power, Mead, Barnes and Goswami2012, Reference Power, Mead, Barnes and Goswami2013). Children were listening for oddball syllables that were slightly out of time with the 2 Hz rhythm, and pressed a button when this occurred. Even when children with dyslexia were equated to control children without dyslexia for their performance in the button-press task, their brains were out of time regarding the delta-band response (but not the theta-band response; Power et al., Reference Power, Mead, Barnes and Goswami2013). The key finding was that the oscillatory response in the delta band had a different preferred phase (preferred phase refers to the point in time when most neurons are discharging their electrical potentials). Brain excitation in the delta band was maximal at a different point in time for the group of children with dyslexia compared to control children. By TS theory, this would suggest that the peak delta-band neural response for dyslexic children was aligned with less informative points in the speech stimulus, a phase difference that might be expected to impair the neural representation of phonological information.

Subsequent dyslexia studies testing TS theory in English indicated that the dyslexic brain encoded a less accurate representation of delta-band envelope information (Power et al., Reference Power, Colling, Mead, Barnes and Goswami2016; Keshavarzi et al., Reference Keshavarzi, Mandke and Macfarlane2022; Mandke et al., Reference Mandke, Flanagan and Macfarlane2022). These investigations recorded either EEG or MEG (magnetoencephalography) during speech listening tasks, and either used a method called the multivariate temporal response function (mTRF) to estimate different features of the heard speech such as the speech AE from the EEG responses (generating a correlational r value; Di Liberto et al., Reference Di Liberto, O’Sullivan and Lalor2015; Crosse et al., Reference Crosse, di Liberto, Bednar and Lalor2016), or other methods such as phase-locking values (PLVs) in MEG to estimate the cortical encoding of speech in different frequency bands. These developmental studies showed that neural representation in the delta band was less accurate (exhibiting a significantly lower mTRF r value) in a sentence-listening task for children with dyslexia compared to both age-matched control children and younger reading-level-matched control children using EEG (Power et al., Reference Power, Colling, Mead, Barnes and Goswami2016). It was also less accurate in a story-listening task compared to age-matched control children in neuroimaging studies using MEG (exhibiting a significantly lower PLV; Mandke et al., Reference Mandke, Flanagan and Macfarlane2022) and EEG (exhibiting a significantly lower mTRF r value; Keshavarzi et al., Reference Keshavarzi, Mandke and Macfarlane2022). In the English EEG studies, neural accuracy was significantly related to measures of phoneme awareness and non-word reading, and in the MEG study, to vocabulary development. Neuroimaging studies in other languages have also found impaired low-frequency envelope encoding for children with dyslexia (in both the delta and theta bands; see Destoky et al., Reference Destoky, Bertels and Niesen2020, Reference Destoky, Bertels and Niesen2022, French) and atypical speech–brain synchronisation in the delta band (see Molinaro et al., Reference Molinaro, Lizarazu, Lallier, Bourguignon and Carreiras2016, Spanish). Importantly, speech reconstruction studies with infants, children, and adults (in which EEG recorded during speech listening is used to reconstruct low-frequency speech information <8 Hz using mTRFs) show that delta-band information yields phonetic information as well as prosodic (syllable stress) information (Di Liberto et al., Reference Di Liberto, O’Sullivan and Lalor2015, Reference Di Liberto, Peter and Kalashnikova2018, Reference Di Liberto, Attaheri and Cantisani2023). Accordingly, this review of neural data supporting TS theory indicates that low-frequency envelope information is encoded less accurately by children with dyslexia, and that this impaired encoding affects the quality of their phonological development at many levels of the linguistic hierarchy, including the phoneme level.

35.2.3 Implications for a TS Theory of Language Acquisition

The sensory and neural data with children gathered to test TS theory have a number of implications for language acquisition. Regarding sensory factors, if ART sensitivity could be measured in infancy, it would be expected to be a significant predictor of linguistic development. As infants get older and become able to participate in metrical rhythm tasks, individual differences in rhythm perception and production might also be expected to relate to ART discrimination and to predict linguistic development. These sensory predictions of TS theory were investigated in the SEEDS project. Regarding neural factors, both the accuracy of continuous speech encoding in the delta and theta bands as measured by mTRFs and the timing of oscillatory responses (such as preferred phase) in the delta band to rhythmic inputs might be expected to predict later language outcomes. These predictions of TS theory were investigated in the Cambridge UK BabyRhythm project. Both were longitudinal infant projects, one with an at-risk sample (SEEDS) and one with a typically developing sample (BabyRhythm), and an overview of their findings is presented here.

35.3 SEEDS: Infants at Family Risk for Dyslexia

The SEEDS (of Literacy) project recruited a group of infants at family risk for dyslexia (FR group) and a group of infants not at family risk for dyslexia (NFR group) in Australia, and followed their development from the age of five months. One aim of SEEDS was to investigate whether infant measures of auditory sensitivity to rhythm would be related to subsequent linguistic and phonological skills and, eventually, to reading achievement (Kalashnikova et al., Reference Kalashnikova, Goswami and Burnham2018, Reference Kalashnikova, Goswami and Burnham2019, Reference Kalashnikova, Burnham and Goswami2020a, Reference Kalashnikova, Burnham and Goswami2020b, Reference Kalashnikova, Burnham and Goswami2020c, Reference Kalashnikova, Burnham and Goswami2021). Children were assigned to the FR and NFR groups at the beginning of the project based on a parent having a pre-existing dyslexia diagnosis and/or their performance on a comprehensive parental screening battery that included language, reading, and cognitive tasks. This targeted sample proved difficult to recruit; hence, most publications are based on samples of 50 infants or fewer.

An infant behavioural procedure for assessing sensitivity to ART was developed based on the psychoacoustic stimuli used to measure ART discrimination in younger children (Corriveau et al., Reference Corriveau, Goswami and Thomson2010). An infant version of a two-alternative forced choice (2AFC) adaptive threshold procedure was created based on looking preference. Infants sat on their parent’s lap facing three monitors in a sound-attenuated infant-testing booth. Once infants fixated the central monitor, the images of a chequerboard appeared on the right and left screens. Infants’ fixations to the monitor on one side produced a repeating auditory stimulus with a fixed rise time (e.g., 15 ms, 15 ms, 15 ms, 15 ms …), while fixations to the monitor on the other side produced an auditory stimulus with alternating rise times (15 ms, 300 ms, 15 ms, 300 ms …). Greater fixation to the alternating stimulus was taken as a measure of discrimination, and step size was reduced accordingly (e.g., 15 ms, 270 ms, 15 ms, 270 ms …), enabling a threshold (a just noticeable difference in ART) to be established (see Kalashnikova et al., Reference Kalashnikova, Goswami and Burnham2018, for a full description). When the FR and NFR groups were aged 10 months, they already showed a significant difference in ART discrimination in this task (Kalashnikova et al., Reference Kalashnikova, Goswami and Burnham2018), as would be expected by TS theory.

Early auditory rhythm sensitivity as indexed by the ART measure administered at 10 months was a significant predictor of subsequent vocabulary development (Kalashnikova et al., Reference Kalashnikova, Goswami and Burnham2019). When they were aged 36 months, the SEEDS cohort were given a standardised vocabulary measure from the Stanford-Binet (Roid, Reference Roid2003). They were also given two experimental measures of phonological development at 42 months. These were a mispronunciation detection task (e.g., ‘abble’ for apple) and a non-word repetition task (the children orally copied items such as ‘sep’, ‘gattom’, and ‘katapet’). A linear mixed-effects analysis showed that ART discrimination at age 10 months was a predictor of vocabulary at 36 months of age, but not (contrary to hypothesis) of phonological development at 42 months (Kalashnikova et al., Reference Kalashnikova, Goswami and Burnham2019). The phonological tasks did not yet show group differences, possibly due to reduced sensitivity at this age. By contrast, infants who were less sensitive to ART had smaller vocabularies, and reduced vocabulary development is known to be an early hallmark of risk for dyslexia. Prospective longitudinal studies of children at family (genetic) risk of dyslexia frequently report deficits in expressive vocabulary (e.g., at 17 months; Koster et al., Reference Koster, Been and Krikhaar2005), and van Viersen et al. (Reference van Viersen, de Bree and Verdam2017) showed that children at risk for dyslexia exhibit delayed growth patterns of both receptive and expressive vocabulary sizes from 17 to 35 months. In the latter study, these early vocabulary scores reliably discriminated between at-risk children who later did and did not develop dyslexia.

One explanation for reduced vocabulary development in FR samples could be that reduced sensitivity to ARTs impairs the learning of new words. This would be predicted by TS theory if infants with poorer rise time sensitivity process the speech signal less effectively. Prior research with children with dyslexia has shown a significant relationship between ART discrimination and the ability to learn novel words (Thomson and Goswami, Reference Thomson and Goswami2010). Thomson and Goswami reported that children with dyslexia were worse at learning novel words in a paired associate learning (PAL) task compared to both age-matched and younger reading-level-matched control children. Within the dyslexic group, children with better ART sensitivity performed better in the PAL task. To test the possibility that reduced ART sensitivity impairs novel word learning, a ‘fast mapping’ task was administered when the SEEDS cohort were aged 19 months (Kalashnikova et al., Reference Kalashnikova, Burnham and Goswami2020a). Two non-word items, ‘lif’ and ‘wug’, were presented repeatedly along with two novel visual objects in a habituation paradigm, and word learning was tested in a subsequent preferential looking paradigm. Both groups of infants showed similar patterns of habituation, and similar engagement and attention during the learning component of the task. However, while the NFR infants showed increased looking time to the correct novel referent at test, the FR infants did not. The FR infants were hence less successful at learning new words early in the language acquisition process, as would be expected on the basis of TS theory.

Of course, it could be argued that the FR infants were simply poorer at PAL. This explanation was ruled out by a subsequent study of PAL when the SEEDS sample were aged 48 and 54 months (Kalashnikova et al., Reference Kalashnikova, Burnham and Goswami2020b). In the later PAL task, the SEEDS children were required to learn associations between four novel words and four novel objects, with learning assessed by (1) finding the correct object in response to its label, and (2) producing the novel word when shown the corresponding object. Their letter knowledge was also assessed as a measure of pre-reading skill at both ages, and vocabulary was measured again at 48 months. Kalashnikova et al. (Reference Kalashnikova, Burnham and Goswami2020b) found no deficit in PAL for the FR children on either measure of learning, and no relationship between PAL and letter knowledge. However, the FR children still had significantly smaller vocabularies than the NFR children. Accordingly, linguistic development is still compromised, even though the difficulties in novel word learning present at 19 months for the FR children could no longer be demonstrated using the later PAL task at 48 and 54 months. Further analyses revealed that it was earlier performance in the non-word repetition task given at 42 months that predicted PAL. This suggests developmental continuity between early auditory difficulties, subsequent early word-learning difficulties, individual differences in later phonological sensitivity, and subsequent vocabulary learning.

By four years of age (48 months), the SEEDS sample were able to complete some of the measures of rhythmic sensitivity used in the TS studies of children with dyslexia. These measures comprised a rhythm discrimination task based on musical beats (following Huss et al., Reference Huss, Verney, Fosker, Mead and Goswami2011, hereafter musical beat perception) and a measure of rhythm production based on tapping to a 2 Hz metronome beat (following Thomson and Goswami, Reference Thomson and Goswami2008; see Kalashnikova et al., Reference Kalashnikova, Burnham and Goswami2021). By TS theory, both measures of rhythmic processing should be related to phonological development and should be impaired in the FR group. The non-word repetition data gathered at 42 months was thus studied in relation to rhythm discrimination and rhythm production at 48 months. When aged four years, the FR group showed significantly poorer rhythm discrimination (significantly lower ‘d’ scores) in the musical beat perception task compared to the NFR group (Kalashnikova et al., Reference Kalashnikova, Burnham and Goswami2021). There was no group difference in rhythm production (tapping accuracy), although the FR group displayed more variable performance (Kalashnikova et al., Reference Kalashnikova, Burnham and Goswami2021; Figure 35.2). As noted above, when aged four years the SEEDS sample also received measures of letter knowledge and vocabulary. The FR and NFR children showed significant group differences in all three linguistic tasks (non-word repetition, letter knowledge, and vocabulary development), with the FR children showing consistently worse performance. Correlation analyses showed that tapping variability was significantly related to performance on all three linguistic tasks, whereas variability in musical beat perception was not. A mediation analysis showed that metronome tapping was a significant predictor of non-word repetition, and non-word repetition was a significant predictor of letter knowledge (Kalashnikova et al., Reference Kalashnikova, Burnham and Goswami2021). Accordingly, the link between rhythm and pre-literacy skills (letter knowledge) is mediated by phonological processing (non-word repetition). Again, these longitudinal relations are consistent with TS theory. In future SEEDS analyses, we plan to investigate developmental continuities between ART discrimination, phonological development, and early literacy.

35.4 Cambridge UK BabyRhythm Project: Typically Developing Infants

In order to test neural predictions of TS theory with infants, large samples are required. EEG data collected from infants is quite noisy, and there can be substantial loss of data. The Cambridge UK BabyRhythm project was able to recruit 122 typically developing infants, who were followed from the age of two months to 42 months with very little drop-out. Infants attended for eight EEG sessions at the ages of two, four, five, six, seven, nine, and 11 months, and language outcomes were measured at 12, 15, 18, 24, 30, and 42 months. To date, only language analyses up to 24 months are available (see also Chapter 36). Based on TS theory, three tasks were selected for the EEG recordings: a nursery rhyme task and two rhythmic tasks. Infants watched videos of a ‘talking head’ singing nursery rhymes in IDS, of a ‘talking head’ saying ‘ta’ repeatedly at 2 Hz in IDS, and of a visual presentation of a ball dropping on to a drum accompanied by a metronome beat at 2 Hz. These three audiovisual tasks enabled us to assess cortical tracking of natural speech in the delta and theta bands by applying mTRF analyses to the nursery rhyme EEG, and to generate measures of preferred phase using the EEG recorded to both speech (‘ta’) and non-speech (drumbeat) rhythmic inputs.

These neural measures were used to predict a range of language outcomes. Although many different experimental measures of phonology and grammar were employed, along with standardised measures such as the UK version of the CDI (communicative development inventory; Alcock et al., Reference Alcock, Meints and Rowland2020), the Covid-19 pandemic had a very disruptive effect on data collection, and only some of the language outcome measures could be used for the neural prediction analyses. The most robust measures were the UK-CDI, in which parents estimated their infants’ word comprehension and production, an infant-led vocabulary measure called the CCT (computerised comprehension task; Friend and Keplinger, Reference Friend and Keplinger2003), in which infants selected a named object on a tablet screen to indicate comprehension, and a non-word repetition task introduced as a game about naming toys. The vocabulary measures were administered from 12 months of age, and the non-word repetition task from 18 months of age (see Rocha et al., Reference Rocha, Ní Choisdealbha and Attaheri2024, for full analyses).

On the basis of prior TS studies with children with dyslexia, it was expected that the accuracy of encoding of envelope information in the delta band along with individual differences in preferred phase in the delta band could be predictors of language outcomes. Regarding the accuracy of low-frequency envelope encoding, the EEG recorded in response to the sung nursery rhymes presented at the four-, seven-, and 11-month laboratory sessions was analysed using the mTRF method. These longitudinal analyses compared the accuracy of the speech envelope information encoded in the delta (0.5–4 Hz), theta (4–8 Hz), and alpha (8–12 Hz, a control band) bands at each age. Encoding accuracy was estimated for the first 60 infants in the UK BabyRhythm sample (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Di Liberto2022), and is currently being replicated with the remaining infants (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Rocha2024). In Attaheri et al. (Reference Attaheri, Ní Choisdealbha and Di Liberto2022), delta-band encoding was significantly stronger (significantly larger r values) than theta-band encoding at four months, and continued to be stronger at all subsequent ages, with no evidence for significant alpha-band encoding at any age. Other neural factors related to speech encoding by adults, such as phase-amplitude coupling (PAC) measures, were also present from the earliest measurement point (four months). Adult speech-encoding studies have highlighted theta–gamma PAC as particularly relevant for speech processing (Hyafil et al., Reference Hyafil, Fontolan, Kabdebon, Gutkin and Giraud2015). Our infant data showed that delta-led PAC was equally important as theta-led PAC during the nursery rhyme listening task, with delta–beta, delta–gamma, theta–beta, and theta–gamma PAC all significant at all ages (four, seven, and 11 months) (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Di Liberto2022). In ongoing analyses (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Rocha2024), language data collected between 12 and 24 months is being related to the neural data. Currently, only theta–gamma PAC appears to be a significant source of individual differences. Stronger theta–gamma PAC is related to better language outcomes, while delta-related PAC is not (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Rocha2024). The other neural predictor of better language outcomes as measured by the mTRF analyses was the accuracy of delta-band encoding at 11 months (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Rocha2024). Worse language outcomes were related to the theta–delta power ratio (higher ratios were associated with worse outcomes), and to the overall amount of theta power (higher theta power spectral density was associated with worse language outcomes; Attaheri et al., Reference Attaheri, Ní Choisdealbha and Rocha2024). Accordingly, both delta-band and theta-band oscillatory responses and their dynamic interactions are important for language acquisition, as would be expected on the basis of TS theory.

Given the earlier data collected with children with dyslexia (Power et al., Reference Power, Mead, Barnes and Goswami2013), the preferred phase of entrainment in the delta band to rhythmic speech should also relate to individual differences in language outcomes. Preferred phase analyses were conducted for the two rhythmic tasks (‘ta’ and drumbeat), which were administered at two, six, and nine months in the auditory-only or auditory-visual (AV) domain, and at five and eight months as visual-only (VO) speech. Phase analyses revealed that infants already exhibited phase consistency to rhythmic syllables and drumbeats at two months of age, when most participants were sleeping and hence experienced auditory-only input (Ní Choisdealbha et al., Reference Ní Choisdealbha, Attaheri and Rocha2023). Individual phase angles in the syllable task at two months were a significant predictor of later vocabulary outcomes as measured by the CCT at 18 months. By the time the infants were aged nine months, phase angles in the syllable task were associated with the number of parent-estimated known words at 24 months on the UK-CDI, for both comprehension and production. Regarding the drumbeat task, phase angles at nine months were predictive of non-word repetition skills at the age of 24 months, an early measure of phonological sensitivity (Ní Choisdealbha et al., Reference Ní Choisdealbha, Attaheri and Rocha2023).

For VO speech, similar results were obtained (Ní Choisdealbha et al., Reference Ní Choisdealbha, Attaheri and Rocha2024). At five months, infants already exhibited an increased cortical response to the stimuli at the stimulus frequency (thereby showing cortical tracking at 2 Hz), but phase consistency was variable. At eight months, the infants showed significant phase consistency to VO speech, and oscillatory responses were moving towards a common preferred phase angle. Individual differences in phase angle at eight months were significantly related to later vocabulary at 24 months. Taken together, the analyses reported by Ní Choisdealbha et al. show that individual differences in the phase angle of the neural response to rhythmic speech stimuli are predictive of later language outcomes, for auditory-only, VO, and AV speech. Accordingly, key neural parameters of TS theory explain some of the individual variation in children’s later language outcomes.

Ideally, the Cambridge UK BabyRhythm study would have explored whether there was an association between individual differences in ART sensitivity and these different neural outcome measures. However, the 2AFC behavioural paradigm from the SEEDS study did not yield reliable data with the UK BabyRhythm infants. As the infants were still wearing the EEG cap when they received the 2AFC paradigm, instead of using behavioural thresholds we used their ERPs as an index of ART sensitivity (Ní Choisdealbha et al., Reference Ní Choisdealbha, Attaheri and Rocha2022). The ERP data revealed that the infants showed robust mismatch responses to all the steps on the thresholding staircase, including the ones that we had expected to be below threshold on the basis of the SEEDS data. Even the smallest just noticeable difference of 161 ms in rise time (15 ms versus 176 ms) was discriminated by 85% of the sample at seven months of age, and there was no consistent pattern to the presence or absence of a mismatch response across the 10 values used for the staircase. This finding shows that infants are able to discriminate ARTs with high accuracy, revealing the readiness of the infant brain to process speech rhythm. The ART data also demonstrate the value of neural measures in assessing the parameters of TS theory. Future studies will need to present more fine-grained differences between ART stimuli in order to find individual infant thresholds and relate them to language outcomes.

35.5 Conclusions

Applying TS theory to infant language acquisition appears to be a promising avenue for future research. Referring back to Figure 35.2, the SEEDS and the Cambridge UK BabyRhythm projects have provided evidence for a number of factors theorised to be critical to language acquisition. The UK BabyRhythm project has shown that the infant brain is highly sensitive to ART differences (Ní Choisdealbha et al., Reference Ní Choisdealbha, Attaheri and Rocha2022), and entrains to delta-band and theta-band speech envelope information from four months of age (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Di Liberto2022, Reference Attaheri, Ní Choisdealbha and Rocha2024). Hence, the delta and theta sampling networks shown in Figure 35.2 are gaining information about AMs at the ‘stress’ and ‘syllable’ rates from early in language learning. Computational speech analyses of IDS collected in the SEEDS project showed that delta–theta phase alignment of AMs is foregrounded in BabyTalk (Leong et al., Reference Leong, Kalashnikova, Burnham and Goswami2017), and although phase-phase analyses have yet to be completed, the UK BabyRhythm neural data showed that phase-amplitude mechanisms are already in play during speech processing (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Di Liberto2022). Hence, phase-phase mechanisms may also be online. The effects of individual differences in these sensory/neural variables on the developing lexicon were documented by both projects. The SEEDS data showed that infant rise time sensitivity at 10 months predicted vocabulary development at three years, with behavioural rhythm measures at four years subsequently predicting pre-literacy variables, mediated by phonological sensitivity (non-word repetition). The UK BabyRhythm data suggested that the accuracy of delta-band cortical tracking of speech envelope information at 11 months predicted later vocabulary, as did theta–gamma PAC (Attaheri et al., Reference Attaheri, Ní Choisdealbha and Rocha2024), while individual differences in neural phase angle to rhythmic speech and non-speech stimuli also predicted both later vocabulary and non-word repetition (Ní Choisdealbha et al., Reference Ní Choisdealbha, Attaheri and Rocha2023). This suggests that both neural phase alignment in the delta band and the accuracy of speech envelope encoding are critical for later language outcomes (see also Rios-Lopez et al., Reference Rios-Lopez, Molinaro, Bourguignon and Lallier2022). Accordingly, data from both infant projects converge in showing that the sensory/neural factors foregrounded by TS theory make important contributions to later language outcomes for English-learning infants.

Summary

This chapter presents a theoretical overview of how rhythm may be important for language development, using the framework of TS theory. Sensory and neural data for TS theory from children are reviewed, and the TS-proposed causal sensory/neural mechanisms are assessed by utilising recent infant longitudinal data.

Implications

Amplitude ‘rise time’ discrimination and neural entrainment to rhythmic acoustic signals are physical characteristics of the nervous system and not under conscious control, yet they govern in part the efficiency of language acquisition. Future projects in other languages are now required to test the language universality of TS theory and these sensory/neural factors.

Gains

Deeper understanding of the sensory and neural mechanisms that govern individual differences in language acquisition may open the door to novel interventions, possibly based on rhythm, that may enhance language development.